Multi-Temporal Passive and Active Remote Sensing for Agricultural Mapping and Acreage Estimation in Context of Small Farm Holds in Ethiopia

Abstract

1. Introduction

2. Materials and Methods

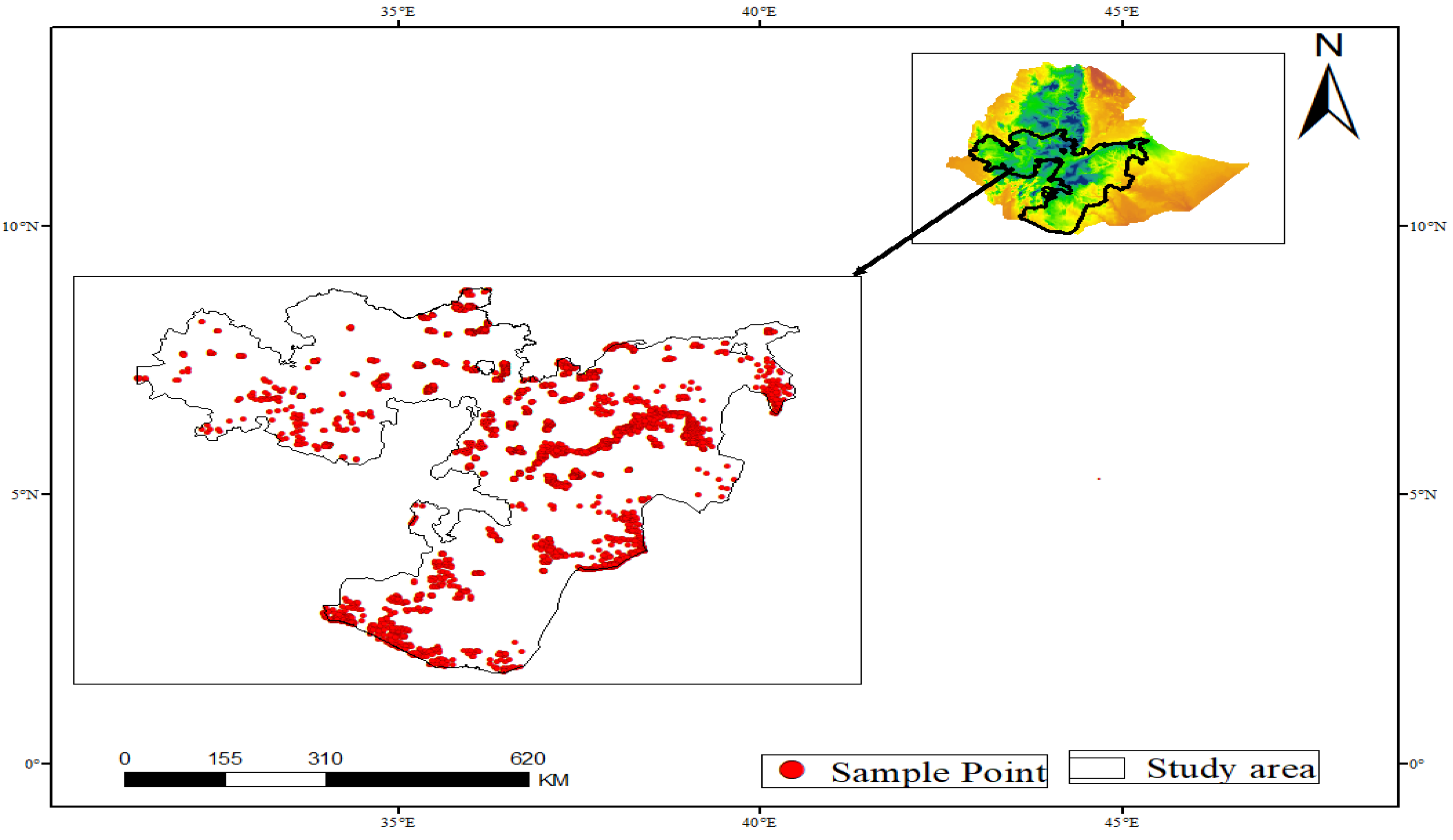

2.1. Study Area

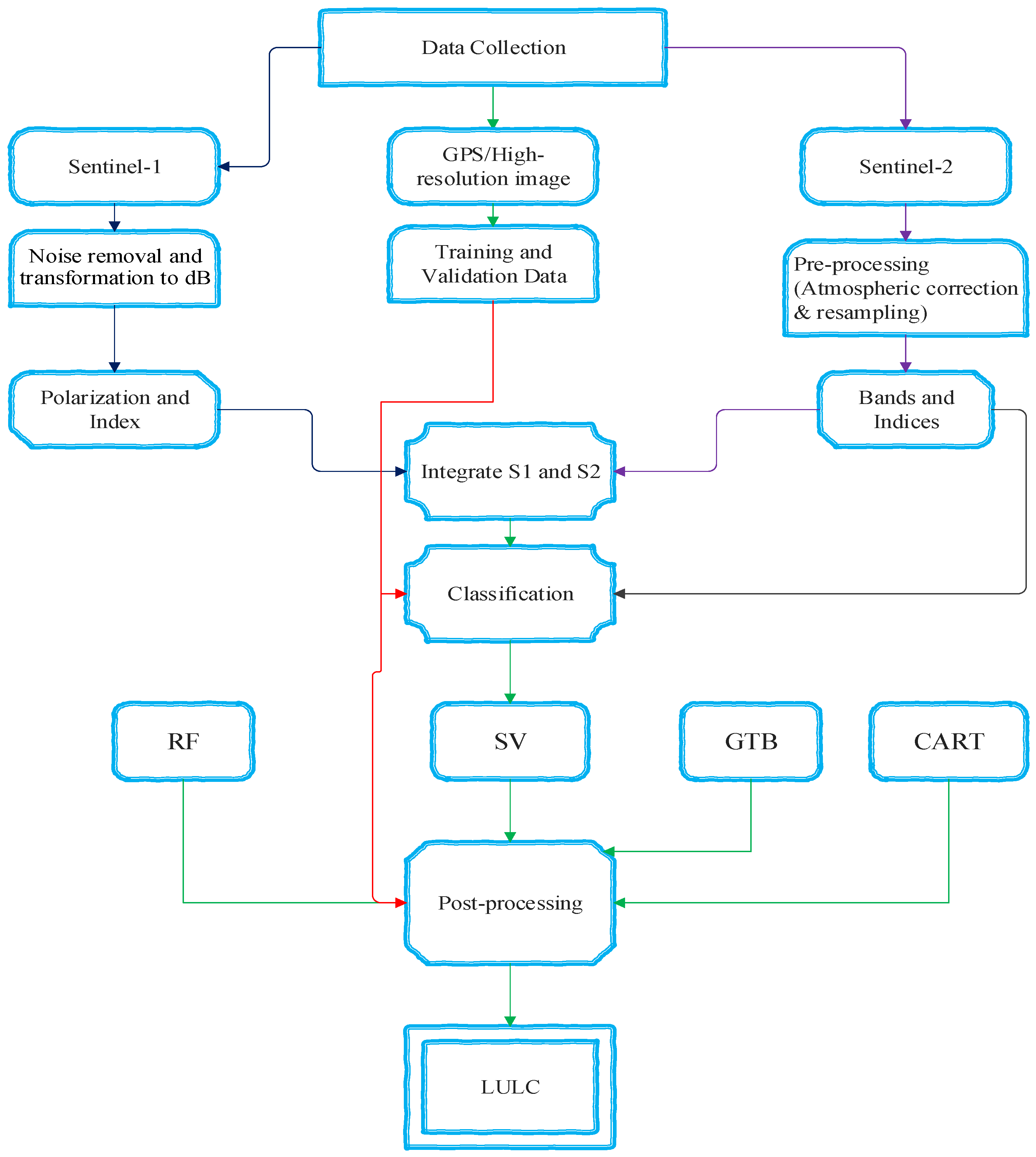

2.2. Methodology

2.2.1. Data Acquisition and Pre-Processing

Satellite Dataset

Reference and Ground Truth Dataset

2.3. Image Processing

2.3.1. Band Selection

2.3.2. Spectral Indices for LULC Detection

2.4. Classification

2.5. Accuracy Assessments

3. Results

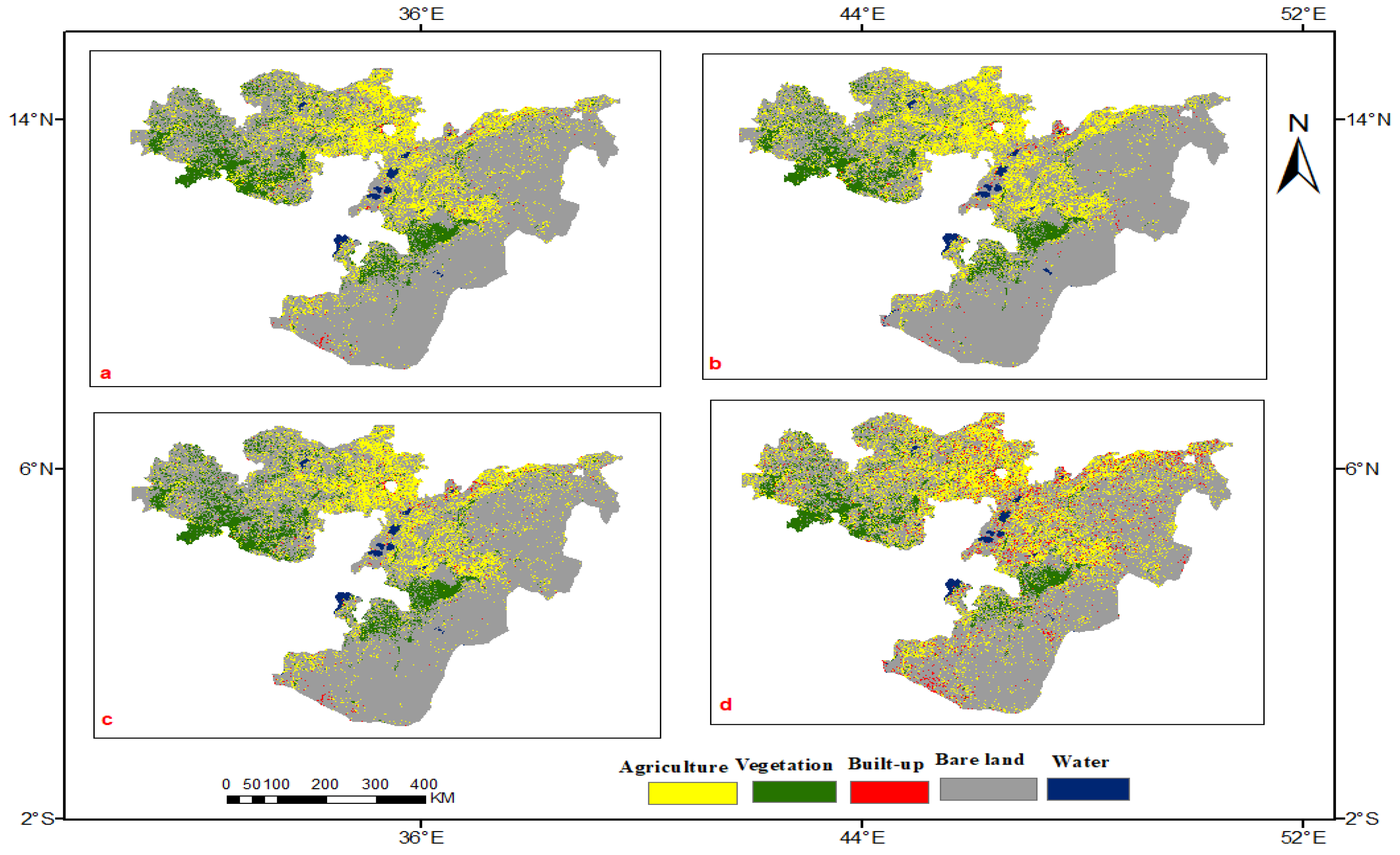

3.1. Land Use Land Cover Maps

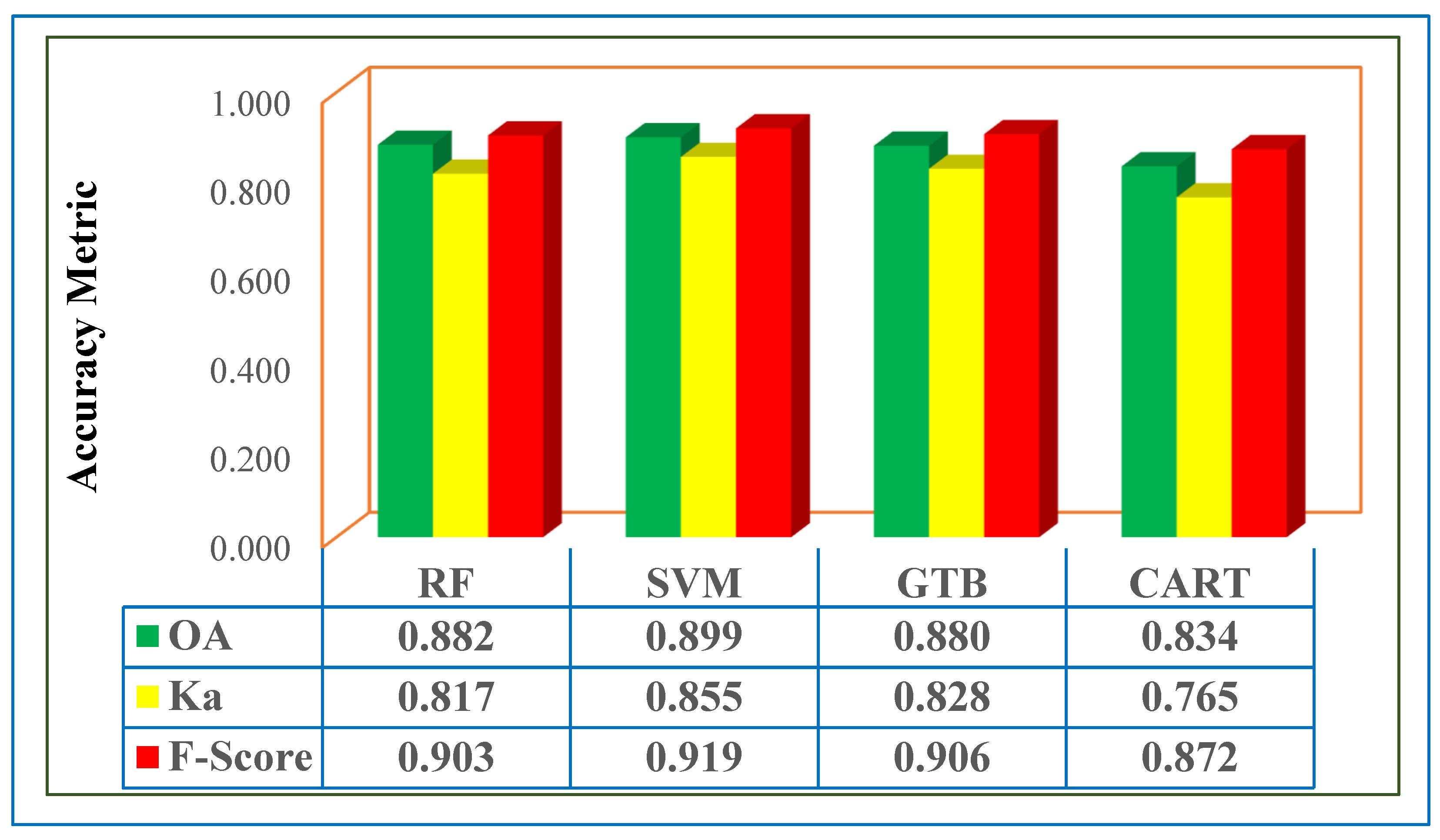

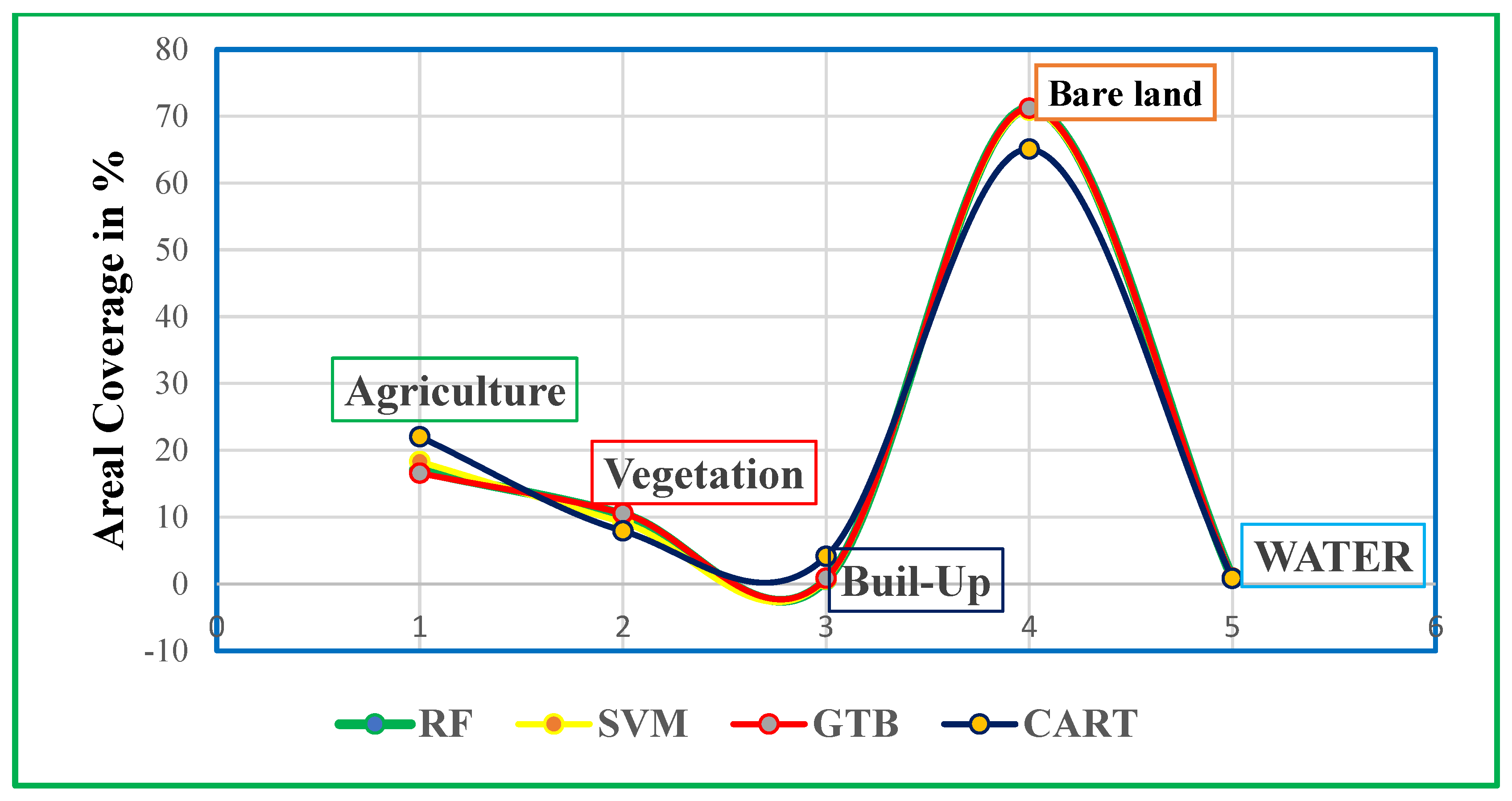

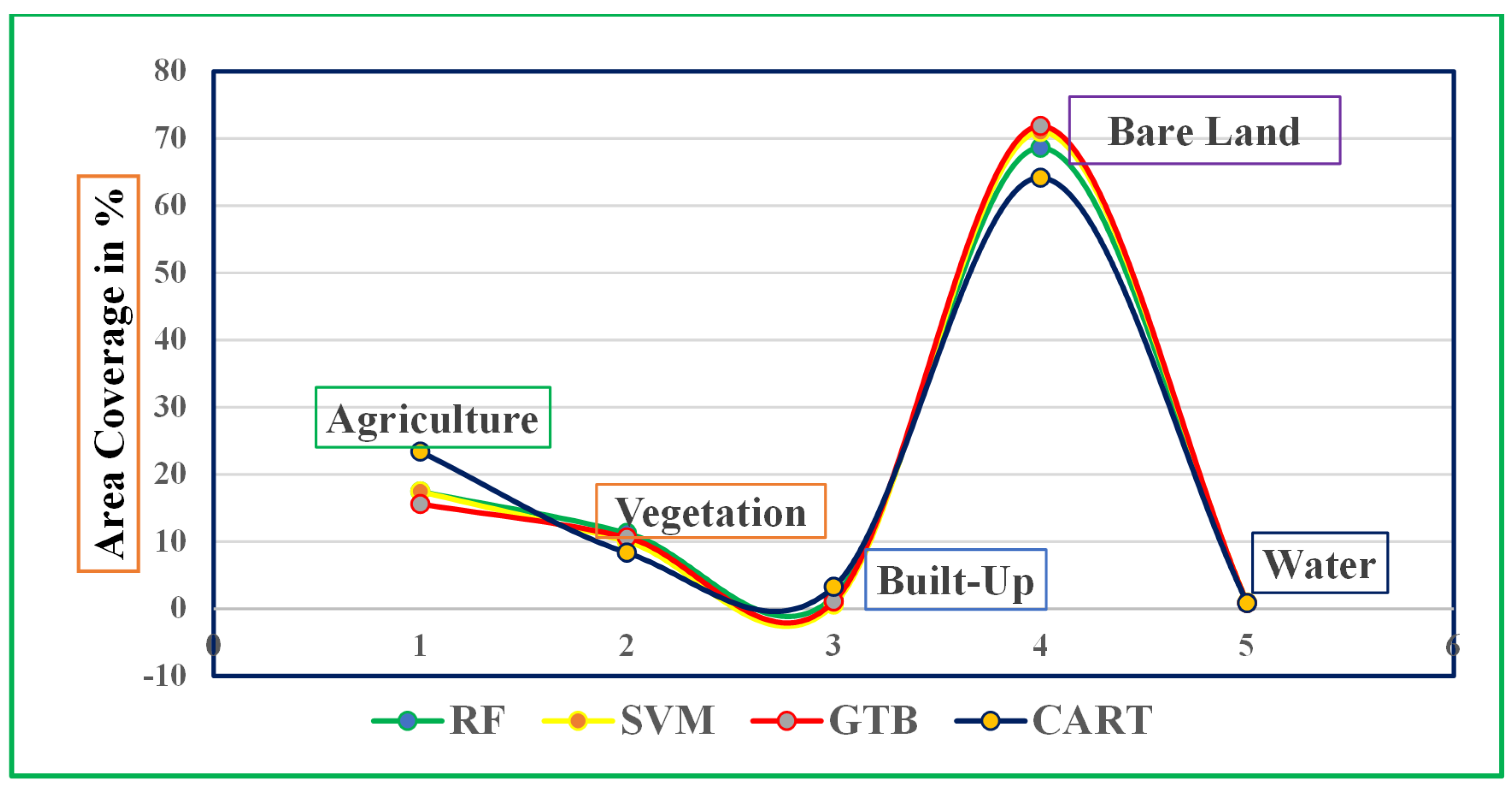

3.2. Quantitative Evaluation

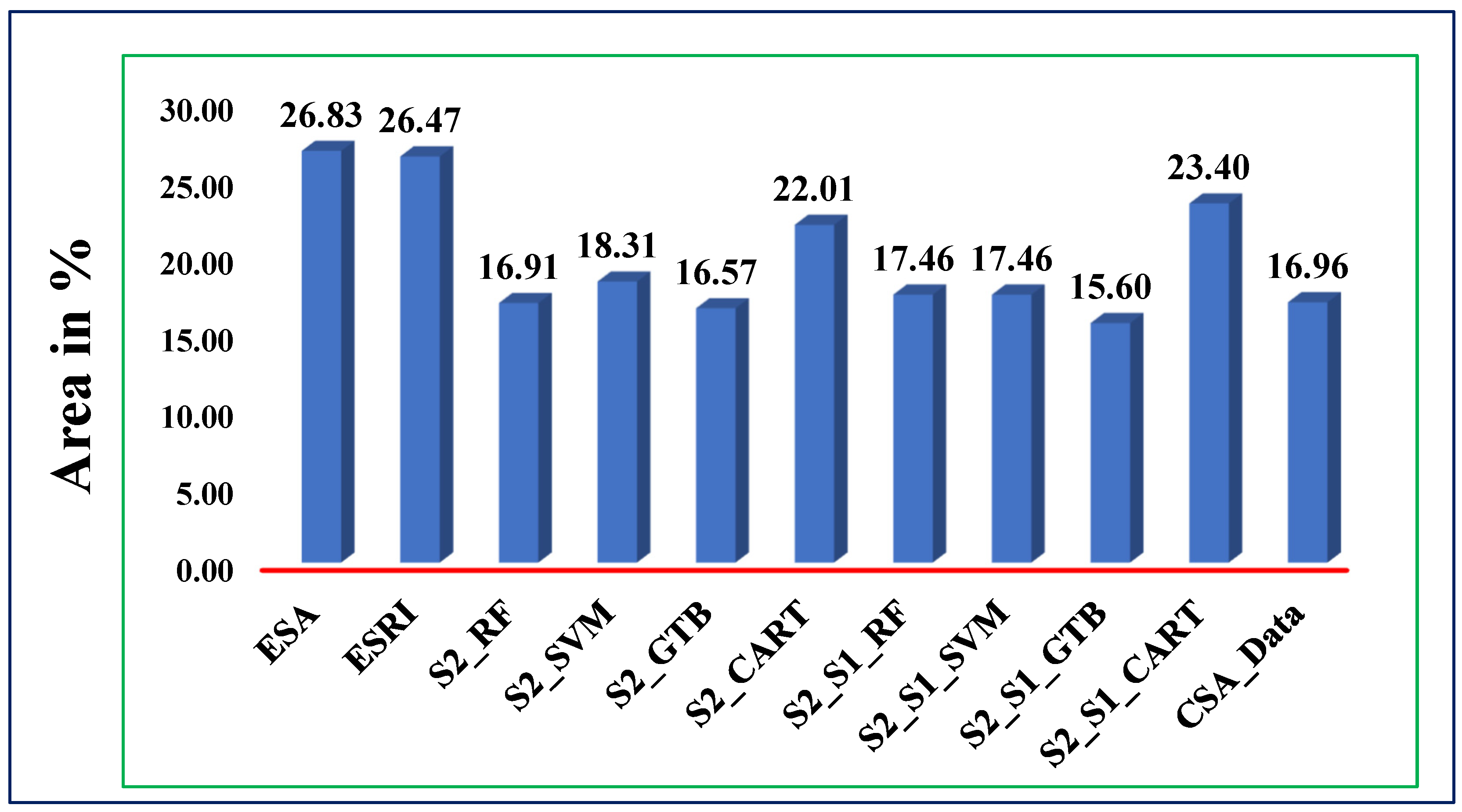

3.3. Acreage Estimation and Implications for Small Farm Holdings

4. Discussion

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Akinyemi, F.O.; Ifejika Speranza, C. Agricultural landscape change impact on the quality of land: An African continent-wide assessment in gained and displaced agricultural lands. Int. J. Appl. Earth Obs. Geoinf. 2022, 106, 102644. [Google Scholar] [CrossRef]

- Baptista, D.; Miguel Salgado, F.; Fayad, M.; Kemoe, D.; Lanci, L.; Mitra, L.S.; Muehlschlegel, P.; Okou, T.S.; Spray, C.; Tuitoek, K.J.; et al. Climate change and SSA’s intensified food insecurity. Int. Monet. Fund 2022, 2022, 1–48. [Google Scholar]

- Choi, Y.W.; Eltahir, E.A.B. Near-term climate change impacts on food crops productivity in East Africa. Theor. Appl. Climatol. 2023, 152, 843–860. [Google Scholar] [CrossRef]

- Mechiche-Alami, A.; Abdi, A.M. Agricultural productivity in relation to climate and cropland management in West Africa. Sci. Rep. 2020, 10, 3393. [Google Scholar] [CrossRef]

- Giller, K.E.; Delaune, T.; Silva, J.V.; van Wijk, M.; Hammond, J.; Descheemaeker, K.; van de Ven, G.; Schut, A.G.T.; Taulya, G.; Chikowo, R.; et al. Small farms and development in sub-Saharan Africa: Farming for food, for income or for lack of better options? Food Secur. 2021, 13, 1431–1454. [Google Scholar] [CrossRef]

- Jayne, T.S.; Wineman, A.; Chamberlin, J.; Muyanga, M.; Yeboah, F.K. Changing Farm Size Distributions and Agricultural Transformation in Sub-Saharan Africa. Annu. Rev. Resour. Econ. 2022, 14, 109–130. [Google Scholar] [CrossRef]

- Peterson, S.; Husak, G. Crop Area Mapping in Southern and Central Malawi With Google Earth Engine. Front. Clim. 2021, 3, 693653. [Google Scholar] [CrossRef]

- Yigezu Wendimu, G. The challenges and prospects of Ethiopian agriculture. Cogent Food Agric. 2021, 7, 1923619. [Google Scholar] [CrossRef]

- Zerssa, G.; Feyssa, D.; Kim, D.G.; Eichler-Löbermann, B. Challenges of smallholder farming in Ethiopia and opportunities by adopting climate-smart agriculture. Agriculture 2021, 11, 192. [Google Scholar] [CrossRef]

- Headey, D.; Dereje, M.; Taffesse, A.S. Land constraints and agricultural intensification in Ethiopia: A village-level analysis of high-potential areas. Food Policy 2014, 48, 129–141. [Google Scholar] [CrossRef]

- Seyoum Taffesse, A.; Dorosh, P.; Gemessa, S.A. Crop production in Ethiopia: Regional patterns and trends. Food Agric. Ethiop. Prog. Policy Chall. 2013, 9780812208, 53–83. [Google Scholar] [CrossRef]

- Mashaba-Munghemezulu, Z.; Chirima, G.J.; Munghemezulu, C. Mapping smallholder maize farms using multi-temporal sentinel-1 data in support of the sustainable development goals. Remote Sens. 2021, 13, 1666. [Google Scholar] [CrossRef]

- Duncan, J.M.A.; Dash, J.; Atkinson, P.M. The potential of satellite-observed crop phenology to enhance yield gap assessments in smallholder landscapes. Front. Environ. Sci. 2015, 3, 56. [Google Scholar] [CrossRef]

- Bégué, A.; Arvor, D.; Lelong, C.; Vintrou, E. Agricultural Systems Studies using Remote Sensing to cite this version: HAL Id: Hal-02098284. Hal 2019. Available online: https://hal.science/hal-02098284 (accessed on 29 January 2024).

- Neigh, C.S.R.; Carroll, M.L.; Wooten, M.R.; McCarty, J.L.; Powell, B.F.; Husak, G.J.; Enenkel, M.; Hain, C.R. Smallholder crop area mapped with wall-to-wall WorldView sub-meter panchromatic image texture: A test case for Tigray, Ethiopia. Remote Sens. Environ. 2018, 212, 8–20. [Google Scholar] [CrossRef]

- Warner, J.M.; Mann, M.L. Agricultural Impacts of the 2015/2016 Drought in Ethiopia Using High-Resolution Data Fusion Methodologies. Handb. Clim. Chang. Resil. 2019, 2, 869–894. [Google Scholar] [CrossRef]

- Abdul-Jabbar, T.S.; Ziboon, A.T.; Albayati, M.M. Crop yield estimation using different remote sensing data: Literature review. IOP Conf. Ser. Earth Environ. Sci. 2023, 1129, 012004. [Google Scholar] [CrossRef]

- Hudait, M.; Patel, P.P. Crop-type mapping and acreage estimation in smallholding plots using Sentinel-2 images and machine learning algorithms: Some comparisons. Egypt. J. Remote Sens. Space Sci. 2022, 25, 147–156. [Google Scholar] [CrossRef]

- Saini, R.; Ghosh, S.K. Crop classification in a heterogeneous agricultural environment using ensemble classifiers and single-date Sentinel-2A imagery. Geocarto Int. 2021, 36, 2141–2159. [Google Scholar] [CrossRef]

- Waldner, F.; Hansen, M.C.; Potapov, P.V.; Löw, F.; Newby, T.; Ferreira, S.; Defourny, P. National-scale cropland mapping based on spectral-temporal features and outdated land cover information. PLoS ONE 2017, 12, e0181911. [Google Scholar] [CrossRef]

- Sun, C.; Bian, Y.; Zhou, T.; Pan, J. Using of multi-source and multi-temporal remote sensing data improves crop-type mapping in the subtropical agriculture region. Sensors 2019, 19, 2401. [Google Scholar] [CrossRef] [PubMed]

- Xie, G.; Niculescu, S. Mapping Crop Types Using Sentinel-2 Data Machine Learning and Monitoring Crop Phenology with Sentinel-1 Backscatter Time Series in Pays de Brest, Brittany, France. Remote Sens. 2022, 14, 4437. [Google Scholar] [CrossRef]

- Santaga, F.S.; Agnelli, A.; Leccese, A.; Vizzari, M. Using sentinel-2 for simplifying soil sampling and mapping: Two case studies in Umbria, Italy. Remote Sens. 2021, 13, 3379. [Google Scholar] [CrossRef]

- Sarteshnizi, R.E.; Vayghan, S.S.; Jazirian, I. Estimation of Soil Moisture Using Sentinel-1 and Sentinel-2 Images. ISPRS Ann. Photogramm. Remote Sens. Spat. Inf. Sci. 2023, 10, 137–142. [Google Scholar] [CrossRef]

- Rukhovich, D.I.; Koroleva, P.V.; Rukhovich, A.D.; Komissarov, M.A. Updating of the Archival Large-Scale Soil Map Based on the Multitemporal Spectral Characteristics of the Bare Soil Surface Landsat Scenes. Remote Sens. 2023, 15, 4491. [Google Scholar] [CrossRef]

- Ofori-Ampofo, S.; Pelletier, C.; Lang, S. Crop type mapping from optical and radar time series using attention-based deep learning. Remote Sens. 2021, 13, 4668. [Google Scholar] [CrossRef]

- Chang, Z.; Li, H.; Chen, D.; Liu, Y.; Zou, C.; Chen, J.; Han, W.; Liu, S.; Zhang, N. Crop Type Identification Using High-Resolution Remote Sensing Images Based on an Improved DeepLabV3+ Network. Remote Sens. 2023, 15, 5088. [Google Scholar] [CrossRef]

- Hosseini, M.; Becker-Reshef, I.; Sahajpal, R.; Fontana, L.; Lafluf, P.; Leale, G.; Puricelli, E.; Varela, M.; Justice, C. Crop yield prediction using integration of polarimteric synthetic aperture radar and optical data. In Proceedings of the 2020 IEEE India Geoscience and Remote Sensing Symposium (InGARSS), Ahmedabad, India, 1–4 December 2020; pp. 17–20. [Google Scholar] [CrossRef]

- Ali, A.M.; Abouelghar, M.; Belal, A.A.; Saleh, N.; Yones, M.; Selim, A.I.; Amin, M.E.S.; Elwesemy, A.; Kucher, D.E.; Maginan, S.; et al. Crop Yield Prediction Using Multi Sensors Remote Sensing (Review Article). Egypt. J. Remote Sens. Sp. Sci. 2022, 25, 711–716. [Google Scholar] [CrossRef]

- Ranjan, A.K.; Parida, B.R. Predicting paddy yield at spatial scale using optical and Synthetic Aperture Radar (SAR) based satellite data in conjunction with field-based Crop Cutting Experiment (CCE) data. Int. J. Remote Sens. 2021, 42, 2046–2071. [Google Scholar] [CrossRef]

- Borra, S.; Thanki, R.; Dey, N. Satellite Image Analysis: Clustering and Classification; Springer: Singapore, 2019; ISBN 978-981-13-6423-5. [Google Scholar]

- Tarasenkov, M.V.; Belov, V.V.; Engel, M.V.; Zimovaya, A.V.; Zonov, M.N.; Bogdanova, A.S. Algorithm for the Reconstruction of the Ground Surface Reflectance in the Visible and Near IR Ranges from MODIS Satellite Data with Allowance for the Influence of Ground Surface Inhomogeneity on the Adjacency Effect and of Multiple Radiation Reflection. Remote Sens. 2023, 15, 2655. [Google Scholar] [CrossRef]

- Ustin, S.L.; Middleton, E.M. Current and near-term advances in Earth observation for ecological applications. Ecol. Process. 2021, 10, 1. [Google Scholar] [CrossRef]

- Yu, X.; Lu, D.; Jiang, X.; Li, G.; Chen, Y.; Li, D.; Chen, E. Examining the roles of spectral, spatial, and topographic features in improving land-cover and forest classifications in a subtropical region. Remote Sens. 2020, 12, 2907. [Google Scholar] [CrossRef]

- Zhao, P.; Lu, D.; Wang, G.; Wu, C.; Huang, Y.; Yu, S. Examining spectral reflectance saturation in landsat imagery and corresponding solutions to improve forest aboveground biomass estimation. Remote Sens. 2016, 8, 469. [Google Scholar] [CrossRef]

- Persello, C.; Tolpekin, V.A.; Bergado, J.R.; de By, R.A. Delineation of agricultural fields in smallholder farms from satellite images using fully convolutional networks and combinatorial grouping. Remote Sens. Environ. 2019, 231, 111253. [Google Scholar] [CrossRef] [PubMed]

- Cucho-Padin, G.; Loayza, H.; Palacios, S.; Balcazar, M.; Carbajal, M.; Quiroz, R. Development of low-cost remote sensing tools and methods for supporting smallholder agriculture. Appl. Geomat. 2020, 12, 247–263. [Google Scholar] [CrossRef]

- Sishodia, R.P.; Ray, R.L.; Singh, S.K. Applications of remote sensing in precision agriculture: A review. Remote Sens. 2020, 12, 3136. [Google Scholar] [CrossRef]

- Waleed, M.; Mubeen, M.; Ahmad, A.; Habib-ur-Rahman, M.; Amin, A.; Farid, H.U.; Hussain, S.; Ali, M.; Qaisrani, S.A.; Nasim, W.; et al. Evaluating the efficiency of coarser to finer resolution multispectral satellites in mapping paddy rice fields using GEE implementation. Sci. Rep. 2022, 12, 13210. [Google Scholar] [CrossRef]

- Chaves, M.E.D.; Picoli, M.C.A.; Sanches, I.D. Recent applications of Landsat 8/OLI and Sentinel-2/MSI for land use and land cover mapping: A systematic review. Remote Sens. 2020, 12, 3062. [Google Scholar] [CrossRef]

- Segarra, J.; Buchaillot, M.L.; Araus, J.L.; Kefauver, S.C. Remote sensing for precision agriculture: Sentinel-2 improved features and applications. Agronomy 2020, 10, 641. [Google Scholar] [CrossRef]

- Ma, L.; Liu, Y.; Zhang, X.; Ye, Y.; Yin, G.; Johnson, B.A. Deep learning in remote sensing applications: A meta-analysis and review. ISPRS J. Photogramm. Remote Sens. 2019, 152, 166–177. [Google Scholar] [CrossRef]

- Orynbaikyzy, A.; Gessner, U.; Mack, B.; Conrad, C. Crop type classification using fusion of sentinel-1 and sentinel-2 data: Assessing the impact of feature selection, optical data availability, and parcel sizes on the accuracies. Remote Sens. 2020, 12, 2779. [Google Scholar] [CrossRef]

- Van Tricht, K.; Gobin, A.; Gilliams, S.; Piccard, I. Synergistic use of radar sentinel-1 and optical sentinel-2 imagery for crop mapping: A case study for Belgium. Remote Sens. 2018, 10, 1642. [Google Scholar] [CrossRef]

- Niculescu, S.; Lardeux, C.; Hanganu, J. Synergy between Sentinel-1 radar time series and Sentinel-2 optical for the mapping of restored areas in Danube delta. Proc. ICA 2018, 1, 82. [Google Scholar] [CrossRef]

- Huang, D.; Tang, Y.; Wang, Q. An Image Fusion Method of SAR and Multispectral Images Based on Non-Subsampled Shearlet Transform and Activity Measure. Sensors 2022, 22, 7055. [Google Scholar] [CrossRef]

- Ienco, D.; Interdonato, R.; Gaetano, R.; Ho Tong Minh, D. Combining Sentinel-1 and Sentinel-2 Satellite Image Time Series for land cover mapping via a multi-source deep learning architecture. ISPRS J. Photogramm. Remote Sens. 2019, 158, 11–22. [Google Scholar] [CrossRef]

- Gargiulo, M.; Dell’aglio, D.A.G.; Iodice, A.; Riccio, D.; Ruello, G. Integration of sentinel-1 and sentinel-2 data for land cover mapping using w-net. Sensors 2020, 20, 2969. [Google Scholar] [CrossRef] [PubMed]

- Chapa, F.; Hariharan, S.; Hack, J. A new approach to high-resolution urban land use classification using open access software and true color satellite images. Sustainability 2019, 11, 5266. [Google Scholar] [CrossRef]

- Géant, C.B.; Gustave, M.N.; Schmitz, S. Mapping small inland wetlands in the South-Kivu province by integrating optical and SAR data with statistical models for accurate distribution assessment. Sci. Rep. 2023, 13, 17626. [Google Scholar] [CrossRef] [PubMed]

- Joshi, N.; Baumann, M.; Ehammer, A.; Fensholt, R.; Grogan, K.; Hostert, P.; Jepsen, M.R.; Kuemmerle, T.; Meyfroidt, P.; Mitchard, E.T.A.; et al. A review of the application of optical and radar remote sensing data fusion to land use mapping and monitoring. Remote Sens. 2016, 8, 70. [Google Scholar] [CrossRef]

- Qin, R.; Liu, T. A Review of Landcover Classification with Very-High Resolution Remotely Sensed Optical Images—Analysis Unit, Model Scalability and Transferability. Remote Sens. 2022, 14, 646. [Google Scholar] [CrossRef]

- Latif, R.M.A.; He, J.; Umer, M. Mapping Cropland Extent in Pakistan Using Machine Learning Algorithms on Google Earth Engine Cloud Computing Framework. ISPRS Int. J. Geo-Inf. 2023, 12, 81. [Google Scholar] [CrossRef]

- Pech-May, F.; Aquino-Santos, R.; Rios-Toledo, G.; Posadas-Durán, J.P.F. Mapping of Land Cover with Optical Images, Supervised Algorithms, and Google Earth Engine. Sensors 2022, 22, 4729. [Google Scholar] [CrossRef]

- Bolfe, É.L.; Parreiras, T.C.; da Silva, L.A.P.; Sano, E.E.; Bettiol, G.M.; Victoria, D.d.C.; Sanches, I.D.; Vicente, L.E. Mapping Agricultural Intensification in the Brazilian Savanna: A Machine Learning Approach Using Harmonized Data from Landsat Sentinel-2. ISPRS Int. J. Geo-Inf. 2023, 12, 263. [Google Scholar] [CrossRef]

- Akhavan, Z.; Hasanlou, M.; Hosseini, M. A Comparison of Tree-Based Regression Models for Soil Moisture Estimation Using Sar Data. ISPRS Ann. Photogramm. Remote Sens. Spat. Inf. Sci. 2023, 10, 37–42. [Google Scholar] [CrossRef]

- Tufail, R.; Ahmad, A.; Javed, M.A.; Ahmad, S.R. A machine learning approach for accurate crop type mapping using combined SAR and optical time series data. Adv. Space Res. 2022, 69, 331–346. [Google Scholar] [CrossRef]

- Akbari, E.; Boloorani, A.D.; Samany, N.N.; Hamzeh, S.; Soufizadeh, S.; Pignatti, S. Crop mapping using random forest and particle swarm optimization based on multi-temporal sentinel-2. Remote Sens. 2020, 12, 1449. [Google Scholar] [CrossRef]

- Kok, Z.H.; Mohamed Shariff, A.R.; Alfatni, M.S.M.; Khairunniza-Bejo, S. Support Vector Machine in Precision Agriculture: A review. Comput. Electron. Agric. 2021, 191, 106546. [Google Scholar] [CrossRef]

- Zheng, B.; Myint, S.W.; Thenkabail, P.S.; Aggarwal, R.M. A support vector machine to identify irrigated crop types using time-series Landsat NDVI data. Int. J. Appl. Earth Obs. Geoinf. 2015, 34, 103–112. [Google Scholar] [CrossRef]

- Camargo, F.F.; Sano, E.E.; Almeida, C.M.; Mura, J.C.; Almeida, T. A comparative assessment of machine-learning techniques for land use and land cover classification of the Brazilian tropical savanna using ALOS-2/PALSAR-2 polarimetric images. Remote Sens. 2019, 11, 1600. [Google Scholar] [CrossRef]

- Jamali, A. Evaluation and comparison of eight machine learning models in land use/land cover mapping using Landsat 8 OLI: A case study of the northern region of Iran. SN Appl. Sci. 2019, 1, 1448. [Google Scholar] [CrossRef]

- Mahmoud, R.; Hassanin, M.; Al Feel, H.; Badry, R.M. Machine Learning-Based Land Use and Land Cover Mapping Using Multi-Spectral Satellite Imagery: A Case Study in Egypt. Sustainability 2023, 15, 9467. [Google Scholar] [CrossRef]

- Oo, T.K.; Arunrat, N.; Sereenonchai, S.; Ussawarujikulchai, A.; Chareonwong, U.; Nutmagul, W. Comparing Four Machine Learning Algorithms for Land Cover Classification in Gold Mining: A Case Study of Kyaukpahto Gold Mine, Northern Myanmar. Sustainability 2022, 14, 10754. [Google Scholar] [CrossRef]

- Razafinimaro, A.; Hajalalaina, A.R.; Rakotonirainy, H.; Zafimarina, R. Land cover classification based optical satellite images using machine learning algorithms. Int. J. Adv. Intell. Inform. 2022, 8, 362–380. [Google Scholar] [CrossRef]

- Adugna, T.; Xu, W.; Fan, J. Comparison of Random Forest and Support Vector Machine Classifiers for Regional Land Cover Mapping Using Coarse Resolution FY-3C Images. Remote Sens. 2022, 14, 574. [Google Scholar] [CrossRef]

- Dash, P.; Sanders, S.L.; Parajuli, P.; Ouyang, Y. Improving the Accuracy of Land Use and Land Cover Classification of Landsat Data in an Agricultural Watershed. Remote Sens. 2023, 15, 4020. [Google Scholar] [CrossRef]

- Burke, M.; Lobell, D.B. Satellite-based assessment of yield variation and its determinants in smallholder African systems. Proc. Natl. Acad. Sci. USA 2017, 114, 2189–2194. [Google Scholar] [CrossRef] [PubMed]

- Kpienbaareh, D.; Sun, X.; Wang, J.; Luginaah, I.; Kerr, R.B.; Lupafya, E.; Dakishoni, L. Crop type and land cover mapping in northern malawi using the integration of sentinel-1, sentinel-2, and planetscope satellite data. Remote Sens. 2021, 13, 700. [Google Scholar] [CrossRef]

- Solórzano, J.V.; Mas, J.F.; Gao, Y.; Gallardo-Cruz, J.A. Land use land cover classification with U-net: Advantages of combining sentinel-1 and sentinel-2 imagery. Remote Sens. 2021, 13, 3600. [Google Scholar] [CrossRef]

- Li, M.; Stein, A. Mapping land use from high resolution satellite images by exploiting the spatial arrangement of land cover objects. Remote Sens. 2020, 12, 4158. [Google Scholar] [CrossRef]

- Whyte, A.; Ferentinos, K.P.; Petropoulos, G.P. A new synergistic approach for monitoring wetlands using Sentinels -1 and 2 data with object-based machine learning algorithms. Environ. Model. Softw. 2018, 104, 40–54. [Google Scholar] [CrossRef]

- Zhang, L.; Hu, Q.; Tang, Z. Using Sentinel-2 Imagery and Machine Learning Algorithms to Assess the Inundation Status of Nebraska Conservation Easements during 2018–2021. Remote Sens. 2022, 14, 4382. [Google Scholar] [CrossRef]

- Ashton, R.A.; Kefyalew, T.; Tesfaye, G.; Pullan, R.L.; Yadeta, D.; Reithinger, R.; Kolaczinski, J.H.; Brooker, S. School-based surveys of malaria in Oromia Regional State, Ethiopia: A rapid survey method for malaria in low transmission settings. Malar. J. 2011, 10, 25. [Google Scholar] [CrossRef][Green Version]

- Adugna, A. Demography and Health Aynalem Adugna July, 2014. 2014. Available online: http://www.ethiodemographyandhealth.org/ (accessed on 29 January 2024).

- Iiyama, M.; Derero, A.; Kelemu, K.; Muthuri, C.; Kinuthia, R.; Ayenkulu, E.; Kiptot, E.; Hadgu, K.; Mowo, J.; Sinclair, F.L. Understanding patterns of tree adoption on farms in semi-arid and sub-humid Ethiopia. Agrofor. Syst. 2017, 91, 271–293. [Google Scholar] [CrossRef]

- Tilahun, M.; Tefesa, M.; Girma, T.; Milkiyas, M.; Tamirat, H. Climate Change Indicators Trace for Identification of Climate Change Climatology & Weather Forecasting Climate Change Indicators Trace for Identification of Climate Change Vulnerability in Salale Zone, Oromia Region, Ethiopia. J. Climatol. Weather. Forecast. 2021, 9, 298. [Google Scholar]

- Brychkova, G.; Kekae, K.; McKeown, P.C.; Hanson, J.; Jones, C.S.; Thornton, P.; Spillane, C. Climate change and land-use change impacts on future availability of forage grass species for Ethiopian dairy systems. Sci. Rep. 2022, 12, 20512. [Google Scholar] [CrossRef] [PubMed]

- Central Statistical Agency (CSA). The Federa Democratic Republic of Ethiopia Report on Area and Production of Majr Crops. Addis Ababa Ethiop. 2020. [Google Scholar]

- Li, H.; Wang, C.; Zhong, C.; Zhang, Z.; Liu, Q. Mapping typical urban LULC from landsat imagery without training samples or self-defined parameters. Remote Sens. 2017, 9, 700. [Google Scholar] [CrossRef]

- Dobrinić, D.; Gašparović, M.; Medak, D. Sentinel-1 and 2 time-series for vegetation mapping using random forest classification: A case study of northern croatia. Remote Sens. 2021, 13, 2321. [Google Scholar] [CrossRef]

- Orieschnig, C.A.; Belaud, G.; Venot, J.P.; Massuel, S.; Ogilvie, A. Input imagery, classifiers, and cloud computing: Insights from multi-temporal LULC mapping in the Cambodian Mekong Delta. Eur. J. Remote Sens. 2021, 54, 398–416. [Google Scholar] [CrossRef]

- Saad El Imanni, H.; El Harti, A.; Hssaisoune, M.; Velastegui-Montoya, A.; Elbouzidi, A.; Addi, M.; El Iysaouy, L.; El Hachimi, J. Rapid and Automated Approach for Early Crop Mapping Using Sentinel-1 and Sentinel-2 on Google Earth Engine; A Case of a Highly Heterogeneous and Fragmented Agricultural Region. J. Imaging 2022, 8, 316. [Google Scholar] [CrossRef]

- Felegari, S.; Sharifi, A.; Moravej, K.; Amin, M.; Golchin, A.; Muzirafuti, A.; Tariq, A.; Zhao, N. Integration of sentinel 1 and sentinel 2 satellite images for crop mapping. Appl. Sci. 2021, 11, 10104. [Google Scholar] [CrossRef]

- Drusch, M.; Del Bello, U.; Carlier, S.; Colin, O.; Fernandez, V.; Gascon, F.; Hoersch, B.; Isola, C.; Laberinti, P.; Martimort, P.; et al. Sentinel-2: ESA’s Optical High-Resolution Mission for GMES Operational Services. Remote Sens. Environ. 2012, 120, 25–36. [Google Scholar] [CrossRef]

- Gascon, F.; Bouzinac, C.; Thépaut, O.; Jung, M.; Francesconi, B.; Louis, J.; Lonjou, V.; Lafrance, B.; Massera, S.; Gaudel-Vacaresse, A.; et al. Copernicus Sentinel-2A calibration and products validation status. Remote Sens. 2017, 9, 584. [Google Scholar] [CrossRef]

- Djamai, N.; Fernandes, R. Comparison of SNAP-derived Sentinel-2A L2A product to ESA product over Europe. Remote Sens. 2018, 10, 926. [Google Scholar] [CrossRef]

- Torres, R.; Snoeij, P.; Geudtner, D.; Bibby, D.; Davidson, M.; Attema, E.; Potin, P.; Rommen, B.; Floury, N.; Brown, M.; et al. GMES Sentinel-1 mission. Remote Sens. Environ. 2012, 120, 9–24. [Google Scholar] [CrossRef]

- Filipponi, F.; Smiraglia, D.; Agrillo, E. Earth Observation for Phenological Metrics (EO4PM): Temporal Discriminant to Characterize Forest Ecosystems. Remote Sens. 2022, 14, 721. [Google Scholar] [CrossRef]

- Schmidt, K.; Schwerdt, M.; Hajduch, G.; Vincent, P.; Recchia, A.; Pinheiro, M. Radiometric Re-Compensation of Sentinel-1 SAR Data Products for Artificial Biases due to Antenna Pattern Changes. Remote Sens. 2023, 15, 1377. [Google Scholar] [CrossRef]

- Mullissa, A.; Vollrath, A.; Odongo-Braun, C.; Slagter, B.; Balling, J.; Gou, Y.; Gorelick, N.; Reiche, J. Sentinel-1 sar backscatter analysis ready data preparation in google earth engine. Remote Sens. 2021, 13, 1954. [Google Scholar] [CrossRef]

- Laine, J. Crop Identification with Sentinel-2 Satellite Imagery in Finland. Master’s Thesis, Aalto University, Espoo, Finland, 2018; pp. 1–84. [Google Scholar]

- Sun, G.; Li, Z.; Zhang, A.; Wang, X.; Ding, S.; Jia, X.; Li, J.; Liu, Q. High-resolution and Multitemporal Impervious Surface Mapping in the Lancang-Mekong Basin with Google Earth Engine. Earth Syst. Sci. Data Discuss. 2022, 1–29. [Google Scholar] [CrossRef]

- Huang, C.; Zhang, C.; He, Y.; Liu, Q.; Li, H.; Su, F.; Liu, G.; Bridhikitti, A. Land cover mapping in cloud-prone tropical areas using Sentinel-2 data: Integrating spectral features with Ndvi temporal dynamics. Remote Sens. 2020, 12, 1163. [Google Scholar] [CrossRef]

- Yi, Z.; Jia, L.; Chen, Q. Crop classification using multi-temporal sentinel-2 data in the Shiyang river basin of China. Remote Sens. 2020, 12, 4052. [Google Scholar] [CrossRef]

- Paszkuta, M. Impact of cloud cover on local remote sensing—Piaśnica River case study. Oceanol. Hydrobiol. Stud. 2022, 51, 283–297. [Google Scholar] [CrossRef]

- Potapov, P.; Hansen, M.C.; Pickens, A.; Hernandez-Serna, A.; Tyukavina, A.; Turubanova, S.; Zalles, V.; Li, X.; Khan, A.; Stolle, F.; et al. The Global 2000-2020 Land Cover and Land Use Change Dataset Derived From the Landsat Archive: First Results. Front. Remote Sens. 2022, 3, 856903. [Google Scholar] [CrossRef]

- Prudente, V.H.R.; Martins, V.S.; Vieira, D.C.; Silva, N.R.d.F.E.; Adami, M.; Sanches, I.D.A. Limitations of cloud cover for optical remote sensing of agricultural areas across South America. Remote Sens. Appl. Soc. Environ. 2020, 20, 100414. [Google Scholar] [CrossRef]

- Whitcraft, A.K.; Vermote, E.F.; Becker-Reshef, I.; Justice, C.O. Cloud cover throughout the agricultural growing season: Impacts on passive optical earth observations. Remote Sens. Environ. 2015, 156, 438–447. [Google Scholar] [CrossRef]

- Lopes, M.; Frison, P.L.; Crowson, M.; Warren-Thomas, E.; Hariyadi, B.; Kartika, W.D.; Agus, F.; Hamer, K.C.; Stringer, L.; Hill, J.K.; et al. Improving the accuracy of land cover classification in cloud persistent areas using optical and radar satellite image time series. Methods Ecol. Evol. 2020, 11, 532–541. [Google Scholar] [CrossRef]

- Sebastianelli, A.; Nowakowski, A.; Puglisi, E.; Del Rosso, M.P.; Mifdal, J.; Pirri, F.; Mathieu, P.P.; Ullo, S.L. Spatio-Temporal SAR-Optical Data Fusion for Cloud Removal via a Deep Hierarchical Model. arXiv 2021, arXiv:2106.12226. [Google Scholar]

- Xiong, Q.; Li, G.; Yao, X.; Zhang, X. SAR-to-Optical Image Translation and Cloud Removal Based on Conditional Generative Adversarial Networks: Literature Survey, Taxonomy, Evaluation Indicators, Limits and Future Directions. Remote Sens. 2023, 15, 1137. [Google Scholar] [CrossRef]

- Holtgrave, A.K.; Röder, N.; Ackermann, A.; Erasmi, S.; Kleinschmit, B. Comparing Sentinel-1 and -2 data and indices for agricultural land use monitoring. Remote Sens. 2020, 12, 2919. [Google Scholar] [CrossRef]

- Çolak, E.; Chandra, M.; Sunar, F. The use of sentinel 1/2 vegetation indexes with gee time series data in detecting land cover changes in the sinop nuclear power plant construction site. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. ISPRS Arch. 2021, 43, 701–706. [Google Scholar] [CrossRef]

- Qin, Q.; Xu, D.; Hou, L.; Shen, B.; Xin, X. Comparing vegetation indices from Sentinel-2 and Landsat 8 under different vegetation gradients based on a controlled grazing experiment. Ecol. Indic. 2021, 133, 108363. [Google Scholar] [CrossRef]

- Wardlow, B.D.; Egbert, S.L.; Kastens, J.H. Analysis of time-series MODIS 250 m vegetation index data for crop classification in the U.S. Central Great Plains. Remote Sens. Environ. 2007, 108, 290–310. [Google Scholar] [CrossRef]

- Huete, A.; Didan, K.; Miura, T.; Rodriguez, E.P.; Gao, X.; Ferreira, L.G. Overview of the radiometric and biophysical performance of the MODIS vegetation indices. Remote Sens. Environ. 2002, 83, 195–213. [Google Scholar] [CrossRef]

- Frampton, W.J.; Dash, J.; Watmough, G.; Milton, E.J. Evaluating the capabilities of Sentinel-2 for quantitative estimation of biophysical variables in vegetation. ISPRS J. Photogramm. Remote Sens. 2013, 82, 83–92. [Google Scholar] [CrossRef]

- Allawai, M.F.; Ahmed, B.A. Using Remote Sensing and GIS in Measuring Vegetation Cover Change from Satellite Imagery in Mosul City, North of Iraq. IOP Conf. Ser. Mater. Sci. Eng. 2020, 757, 012062. [Google Scholar] [CrossRef]

- Rouibah, K.; Belabbas, M. Applying multi-index approach from sentinel-2 imagery to extract urban areas in dry season (Semi-arid land in north east algeria). Rev. Teledetec. 2020, 2020, 89–101. [Google Scholar] [CrossRef]

- Kapil; Pal, M. Comparison of landsat 8 and sentinel 2 data for accurate mapping of built-up area and bare soil. In Proceedings of the 38th Asian Conference on Remote Sensing, New Delhi, India, 23–27 October 2017; pp. 2–5. [Google Scholar]

- McFeeters, S.K. NDWI by McFEETERS. Remote Sens. Environ. 1996, 25, 687–711. [Google Scholar]

- Xu, H. Modification of normalised difference water index (NDWI) to enhance open water features in remotely sensed imagery. Int. J. Remote Sens. 2006, 27, 3025–3033. [Google Scholar] [CrossRef]

- Du, Y.; Zhang, Y.; Ling, F.; Wang, Q.; Li, W.; Li, X. Water bodies’ mapping from Sentinel-2 imagery with Modified Normalized Difference Water Index at 10-m spatial resolution produced by sharpening the swir band. Remote Sens. 2016, 8, 354. [Google Scholar] [CrossRef]

- Lastovicka, J.; Svec, P.; Paluba, D.; Kobliuk, N.; Svoboda, J.; Hladky, R.; Stych, P. Sentinel-2 data in an evaluation of the impact of the disturbances on forest vegetation. Remote Sens. 2020, 12, 1914. [Google Scholar] [CrossRef]

- Agapiou, A. Estimating proportion of vegetation cover at the vicinity of archaeological sites using sentinel-1 and-2 data, supplemented by crowdsourced openstreetmap geodata. Appl. Sci. 2020, 10, 4764. [Google Scholar] [CrossRef]

- McCarty, D.A.; Kim, H.W.; Lee, H.K. Evaluation of light gradient boosted machine learning technique in large scale land use and land cover classification. Environments 2020, 7, 84. [Google Scholar] [CrossRef]

- Gu, G.; Wu, B.; Zhang, W.; Lu, R.; Feng, X.; Liao, W.; Pang, C.; Lu, S. Comparing machine learning methods for predicting land development intensity. PLoS ONE 2023, 18, e0282476. [Google Scholar] [CrossRef]

- Sahin, E.K. Assessing the predictive capability of ensemble tree methods for landslide susceptibility mapping using XGBoost, gradient boosting machine, and random forest. SN Appl. Sci. 2020, 2, 1308. [Google Scholar] [CrossRef]

- Saini, R.; Ghosh, S.K. Crop classsification on singled. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci 2018, XLII, 20–23. [Google Scholar]

- Abdi, A.M. Land cover and land use classification performance of machine learning algorithms in a boreal landscape using Sentinel-2 data. GIScience Remote Sens. 2020, 57, 1–20. [Google Scholar] [CrossRef]

- Alzahrani, A.; Kanan, A. Machine Learning Approaches for Developing Land Cover Mapping. Appl. Bionics Biomech. 2022, 2022, 5190193. [Google Scholar] [CrossRef]

- Yuh, Y.G.; Tracz, W.; Matthews, H.D.; Turner, S.E. Application of machine learning approaches for land cover monitoring in northern Cameroon. Ecol. Inform. 2023, 74, 101955. [Google Scholar] [CrossRef]

- Ramachandra, T.V.; Mondal, T.; Setturu, B. Relative performance evaluation of machine learning algorithms for land use classification using multispectral moderate resolution data. SN Appl. Sci. 2023, 5, 274. [Google Scholar] [CrossRef]

- Zhang, C.; Liu, Y.; Tie, N. Forest Land Resource Information Acquisition with Sentinel-2 Image Utilizing Support Vector Machine, K-Nearest Neighbor, Random Forest, Decision Trees and Multi-Layer Perceptron. Forests 2023, 14, 254. [Google Scholar] [CrossRef]

- Nguyen, H.T.T.; Doan, T.M.; Radeloff, V. Applying Random Forest classification to map Land use/Land cover using Landsat 8 OLI. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. ISPRS Arch. 2018, 42, 363–367. [Google Scholar] [CrossRef]

- Wei, X.; Zhang, W.; Zhang, Z.; Huang, H.; Meng, L. Urban land use land cover classification based on GF-6 satellite imagery and multi-feature optimization. Geocarto Int. 2023, 38, 2236579. [Google Scholar] [CrossRef]

- De Sousa, C.; Fatoyinbo, L.; Neigh, C.; Boucka, F.; Angoue, V.; Larsen, T. Cloud-computing and machine learning in support of country-level land cover and ecosystem extent mapping in Liberia and Gabon. PLoS ONE 2020, 15, e0227438. [Google Scholar] [CrossRef]

- Maxwell, A.E.; Strager, M.P.; Warner, T.A.; Ramezan, C.A.; Morgan, A.N.; Pauley, C.E. Large-area, high spatial resolution land cover mapping using random forests, GEOBIA, and NAIP orthophotography: Findings and recommendations. Remote Sens. 2019, 11, 1409. [Google Scholar] [CrossRef]

- Noi Phan, T.; Kuch, V.; Lehnert, L.W. Land cover classification using google earth engine and random forest classifier-the role of image composition. Remote Sens. 2020, 12, 2411. [Google Scholar] [CrossRef]

- Aryal, J.; Sitaula, C.; Frery, A.C. Land use and land cover (LULC) performance modeling using machine learning algorithms: A case study of the city of Melbourne, Australia. Sci. Rep. 2023, 13, 13510. [Google Scholar] [CrossRef] [PubMed]

- Rodriguez-Galiano, V.F.; Ghimire, B.; Rogan, J.; Chica-Olmo, M.; Rigol-Sanchez, J.P. An assessment of the effectiveness of a random forest classifier for land-cover classification. ISPRS J. Photogramm. Remote Sens. 2012, 67, 93–104. [Google Scholar] [CrossRef]

- Rash, A.; Mustafa, Y.; Hamad, R. Quantitative assessment of Land use/land cover changes in a developing region using machine learning algorithms: A case study in the Kurdistan Region, Iraq. Heliyon 2023, 9, e21253. [Google Scholar] [CrossRef] [PubMed]

- Aziz, G.; Minallah, N.; Saeed, A.; Frnda, J.; Khan, W. Remote sensing based forest cover classification using machine learning. Sci. Rep. 2024, 14, 69. [Google Scholar] [CrossRef] [PubMed]

- Palanisamy, P.A.; Jain, K.; Bonafoni, S. Machine Learning Classifier Evaluation for Different Input Combinations: A Case Study with Landsat 9 and Sentinel-2 Data. Remote Sens. 2023, 15, 3241. [Google Scholar] [CrossRef]

- Friedman, J.H. Stochastic gradient boosting. Comput. Stat. Data Anal. 2002, 38, 367–378. [Google Scholar] [CrossRef]

- Alodah, I.; Neville, J. Combining Gradient Boosting Machines with Collective Inference to Predict Continuous Values. arXiv 2016, arXiv:1607.00110. [Google Scholar]

- Handoko, J.; Herwindiati, D.E.; Hendryli, J. Gradient Boosting Tree for Land Use Change Detection Using Landsat 7 and 8 Imageries: A Case Study of Bogor Area as Water Buffer Zone of Jakarta. IOP Conf. Ser. Earth Environ. Sci. 2020, 581, 012045. [Google Scholar] [CrossRef]

- Ouma, Y.; Nkwae, B.; Moalafhi, D.; Odirile, P.; Parida, B.; Anderson, G.; Qi, J. Comparison of Machine Learning Classifiers for Multitemporal and Multisensor Mapping of Urban Lulc Features. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. ISPRS Arch. 2022, 43, 681–689. [Google Scholar] [CrossRef]

- Mustapha, M.; Zineddine, M. Assessing the Impact of Climate Change On Seasonal Variation In Agricultural Land Use Using Sentinel-2 and Machine Learning. Environ. Sci. Proc. 2023, 1, 1–7. [Google Scholar]

- Ustuner, M.; Sanli, F.B.; Dixon, B. Application of support vector machines for landuse classification using high-resolution rapideye images: A sensitivity analysis. Eur. J. Remote Sens. 2015, 48, 403–422. [Google Scholar] [CrossRef]

- Tamirat, H.; Argaw, M.; Tekalign, M. Support vector machine-based spatiotemporal land use land cover change analysis in a complex urban and rural landscape of Akaki river catchment, a Suburb of Addis Ababa, Ethiopia. Heliyon 2023, 9, e22510. [Google Scholar] [CrossRef]

- Martínez Prentice, R.; Villoslada Peciña, M.; Ward, R.D.; Bergamo, T.F.; Joyce, C.B.; Sepp, K. Machine learning classification and accuracy assessment from high-resolution images of coastal wetlands. Remote Sens. 2021, 13, 3669. [Google Scholar] [CrossRef]

- Maxwell, A.E.; Warner, T.A.; Fang, F. Implementation of machine-learning classification in remote sensing: An applied review. Int. J. Remote Sens. 2018, 39, 2784–2817. [Google Scholar] [CrossRef]

- Shetty, S.; Gupta, P.K.; Belgiu, M.; Srivastav, S.K. Assessing the effect of training sampling design on the performance of machine learning classifiers for land cover mapping using multi-temporal remote sensing data and google earth engine. Remote Sens. 2021, 13, 1433. [Google Scholar] [CrossRef]

- Sheykhmousa, M.; Mahdianpari, M.; Ghanbari, H.; Mohammadimanesh, F.; Ghamisi, P.; Homayouni, S. Support Vector Machine Versus Random Forest for Remote Sensing Image Classification: A Meta-Analysis and Systematic Review. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2020, 13, 6308–6325. [Google Scholar] [CrossRef]

- Dabija, A.; Kluczek, M.; Zagajewski, B.; Raczko, E.; Kycko, M.; Al-Sulttani, A.H.; Tardà, A.; Pineda, L.; Corbera, J. Comparison of support vector machines and random forests for corine land cover mapping. Remote Sens. 2021, 13, 777. [Google Scholar] [CrossRef]

- Bahari, N.I.S.; Ahmad, A.; Aboobaider, B.M. Application of support vector machine for classification of multispectral data. IOP Conf. Ser. Earth Environ. Sci. 2014, 20, 012038. [Google Scholar] [CrossRef]

- Moisen, G.G. Classification and Regression Trees. Encycl. Ecol. 2008, 5, 582–588. [Google Scholar] [CrossRef]

- Yan, X.; Li, J.; Smith, A.R.; Yang, D.; Ma, T.; Su, Y.T.; Shao, J. Evaluation of machine learning methods and multi-source remote sensing data combinations to construct forest above-ground biomass models. Int. J. Digit. Earth 2023, 16, 4471–4491. [Google Scholar] [CrossRef]

- Bittencourt, H.R.; Clarke, R.T. Use of Classification and Regression Trees (CART) to Classify Remotely-Sensed Digital Images. Int. Geosci. Remote Sens. Symp. 2003, 6, 3751–3753. [Google Scholar] [CrossRef]

- Praticò, S.; Solano, F.; Di Fazio, S.; Modica, G. Machine learning classification of mediterranean forest habitats in google earth engine based on seasonal sentinel-2 time-series and input image composition optimisation. Remote Sens. 2021, 13, 586. [Google Scholar] [CrossRef]

- Maindonald, J. Statistical Learning from a Regression Perspective; Springer: Cham, Switzerland, 2009; Volume 29, ISBN 9783030401887. [Google Scholar]

- Loukika, K.N.; Keesara, V.R.; Sridhar, V. Analysis of land use and land cover using machine learning algorithms on google earth engine for Munneru river basin, India. Sustainability 2021, 13, 13758. [Google Scholar] [CrossRef]

- Fonte, C.C.; See, L.; Laso-Bayas, J.C.; Lesiv, M.; Fritz, S. Assessing the accuracy of land use land cover (lulc) maps using class proportions in the reference data. ISPRS Ann. Photogramm. Remote Sens. Spat. Inf. Sci. 2020, 5, 669–674. [Google Scholar] [CrossRef]

- Modica, G.; De Luca, G.; Messina, G.; Praticò, S. Comparison and assessment of different object-based classifications using machine learning algorithms and UAVs multispectral imagery: A case study in a citrus orchard and an onion crop. Eur. J. Remote Sens. 2021, 54, 431–460. [Google Scholar] [CrossRef]

- Tariq, A.; Jiango, Y.; Lu, L.; Jamil, A.; Al-Ashkar, I.; Kamran, M.; El Sabagh, A. Integrated use of Sentinel-1 and Sentinel-2 data and open-source machine learning algorithms for burnt and unburnt scars. Geomat. Nat. Hazards Risk 2023, 14, 2190856. [Google Scholar] [CrossRef]

- Elsaid Adlan Abdelkareem, O.; S Eltahir, M.E.; ELNOUR Adam, H.; Rahamtallah Abualgasim, M.; Esaid Adlan Abdelkareem, O.; Mohamed Ahmed Elamin, H.; Elyas Siddig Eltahir, M.; Elnour Adam, H.; Eltom Elhaja, M.; Majdeldin Rahamtalla, A.; et al. Accuracy Assessment of Land Use Land Cover in Umabdalla Natural Reserved Forest. Int. J. Agric. Environ. Sci. 2018, 3, 5–9. [Google Scholar]

- Basheer, S.; Wang, X.; Farooque, A.A.; Nawaz, R.A.; Liu, K.; Adekanmbi, T.; Liu, S. Comparison of Land Use Land Cover Classifiers Using Different Satellite Imagery and Machine Learning Techniques. Remote Sens. 2022, 14, 4978. [Google Scholar] [CrossRef]

- Talukdar, S.; Singha, P.; Mahato, S.; Shahfahad; Pal, S.; Liou, Y.A.; Rahman, A. Land-use land-cover classification by machine learning classifiers for satellite observations-A review. Remote Sens. 2020, 12, 1135. [Google Scholar] [CrossRef]

- Ouattara, B.; Forkuor, G.; Zoungrana, B.J.B.; Dimobe, K.; Danumah, J.; Saley, B.; Tondoh, J.E. Crops monitoring and yield estimation using sentinel products in semi-arid smallholder irrigation schemes. Int. J. Remote Sens. 2020, 41, 6527–6549. [Google Scholar] [CrossRef]

- Baber, S. The Impact of Radiometric Calibration Error on Earth Observation-Supported Decision Making. Bachelor’s Thesis, Massachusetts Institute of Technology, Cambridge, MA, USA, 2021. [Google Scholar]

- Marshall, M.; Crommelinck, S.; Kohli, D.; Perger, C.; Yang, M.Y.; Ghosh, A.; Fritz, S.; de Bie, K.; Nelson, A. Crowd-driven and automated mapping of field boundaries in highly fragmented agricultural landscapes of Ethiopia with very high spatial resolution imagery. Remote Sens. 2019, 11, 2082. [Google Scholar] [CrossRef]

- Liu, C.-A.; Chen, Z.-X.; Shao, Y.; Chen, J.-S.; Hasi, T.; Pan, H.-Z. Research advances of SAR remote sensing for agriculture applications: A review. J. Integr. Agric. 2019, 18, 506–525. [Google Scholar] [CrossRef]

- Gbodjo, Y.J.E.; Ienco, D.; Leroux, L. Benchmarking statistical modelling approaches with multi-source remote sensing data for millet yield monitoring: A case study of the groundnut basin in central Senegal. Int. J. Remote Sens. 2021, 42, 9277–9300. [Google Scholar] [CrossRef]

- Li, E.; Samat, A.; Liu, W.; Lin, C.; Bai, X. High-resolution imagery classification based on different levels of information. Remote Sens. 2019, 11, 2916. [Google Scholar] [CrossRef]

- Zhang, H.; He, J.; Chen, S.; Zhan, Y.; Bai, Y.; Qin, Y. Comparing Three Methods of Selecting Training Samples in Supervised Classification of Multispectral Remote Sensing Images. Sensors 2023, 23, 8530. [Google Scholar] [CrossRef]

- Van-Tuam, N.; Rachid, N.; Van-Anh, L.L.C. Application of GIS and Remote Sensing for predicting Land-use change in the French Jura Mountains with the LCM Model. In Proceedings of the 34th Asian Conference on Remote Sensing, Bali, Indonesia, 20–24 October 2013; pp. 95–102. [Google Scholar]

- Gondwe, J.F.; Lin, S.; Munthali, R.M. Analysis of Land Use and Land Cover Changes in Urban Areas Using Remote Sensing: Case of Blantyre City. Discret. Dyn. Nat. Soc. 2021, 2021, 8011565. [Google Scholar] [CrossRef]

- Khan, A.; Govil, H.; Kumar, G.; Dave, R. Synergistic use of Sentinel-1 and Sentinel-2 for improved LULC mapping with special reference to bad land class: A case study for Yamuna River floodplain, India. Spat. Inf. Res. 2020, 28, 669–681. [Google Scholar] [CrossRef]

- Slagter, B.; Tsendbazar, N.E.; Vollrath, A.; Reiche, J. Mapping wetland characteristics using temporally dense Sentinel-1 and Sentinel-2 data: A case study in the St. Lucia wetlands, South Africa. Int. J. Appl. Earth Obs. Geoinf. 2020, 86, 102009. [Google Scholar] [CrossRef]

- Chabalala, Y.; Adam, E.; Ali, K.A. Machine Learning Classification of Fused Sentinel-1 and Sentinel-2 Image Data towards Mapping Fruit Plantations in Highly Heterogenous Landscapes. Remote Sens. 2022, 14, 2621. [Google Scholar] [CrossRef]

- Tavares, P.A.; Beltrão, N.E.S.; Guimarães, U.S.; Teodoro, A.C. Integration of sentinel-1 and sentinel-2 for classification and LULC mapping in the urban area of Belém, eastern Brazilian Amazon. Sensors 2019, 19, 1140. [Google Scholar] [CrossRef] [PubMed]

- Fernandez, H.S.; de Oliveira, F.H.; Gerente, J.; Junior, F.C.G.; Providelo, L.A.; Marchiori, G.; Liu, Y. Sentinel-1 and Sentinel-2 data fusion by Principal Components Analysis applied to the vegetation classification around power transmission lines. Aust. J. Basic Appl. Sci. 2022, 16, 1–14. [Google Scholar]

- De Luca, G.; Silva, M.N.J.; Di Fazio, S.; Modica, G. Integrated use of Sentinel-1 and Sentinel-2 data and open-source machine learning algorithms for land cover mapping in a Mediterranean region. Eur. J. Remote Sens. 2022, 55, 52–70. [Google Scholar] [CrossRef]

- Aryal, K.; Apan, A.; Maraseni, T. Comparing global and local land cover maps for ecosystem management in the Himalayas. Remote Sens. Appl. Soc. Environ. 2023, 30, 100952. [Google Scholar] [CrossRef]

- Duarte, D.; Fonte, C.; Costa, H.; Caetano, M. Thematic Comparison between ESA WorldCover 2020 Land Cover Product and a National Land Use Land Cover Map. Land 2023, 12, 490. [Google Scholar] [CrossRef]

- Venter, Z.S.; Barton, D.N.; Chakraborty, T.; Simensen, T.; Singh, G. Global 10 m Land Use Land Cover Datasets: A Comparison of Dynamic World, World Cover and Esri Land Cover. Remote Sens. 2022, 14, 4101. [Google Scholar] [CrossRef]

- Carletto, C.; Jolliffe, D.; Banerjee, R. From Tragedy to Renaissance: Improving Agricultural Data for Better Policies. J. Dev. Stud. 2015, 51, 133–148. [Google Scholar] [CrossRef]

- Khechba, K.; Laamrani, A.; Dhiba, D.; Misbah, K.; Chehbouni, A. Monitoring and analyzing yield gap in africa through soil attribute best management using remote sensing approaches: A review. Remote Sens. 2021, 13, 4602. [Google Scholar] [CrossRef]

- Masiza, W.; Chirima, J.G.; Hamandawana, H.; Pillay, R. Enhanced mapping of a smallholder crop farming landscape through image fusion and model stacking. Int. J. Remote Sens. 2020, 41, 8736–8753. [Google Scholar] [CrossRef]

- Tseng, G.; Nakalembe, C.; Kerner, H.; Becker-Reshef, I. Annual and in-season mapping of cropland at field scale with sparse labels. Clim. Chang. AI 2020, 1–6. [Google Scholar]

- Misra, G.; Cawkwell, F.; Wingler, A. Status of phenological research using sentinel-2 data: A review. Remote Sens. 2020, 12, 2760. [Google Scholar] [CrossRef]

- Tran, K.H.; Zhang, X.; Ye, Y.; Shen, Y.; Gao, S.; Liu, Y.; Richardson, A. HP-LSP: A reference of land surface phenology from fused Harmonized Landsat and Sentinel-2 with PhenoCam data. Sci. Data 2023, 10, 691. [Google Scholar] [CrossRef] [PubMed]

- Zhang, P.; Hu, S.; Li, W.; Zhang, C.; Cheng, P. Improving parcel-level mapping of smallholder crops from vhsr imagery: An ensemble machine-learning-based framework. Remote Sens. 2021, 13, 2146. [Google Scholar] [CrossRef]

- Hirayama, H.; Sharma, R.C.; Tomita, M.; Hara, K. Evaluating multiple classifier system for the reduction of salt-and-pepper noise in the classification of very-high-resolution satellite images. Int. J. Remote Sens. 2019, 40, 2542–2557. [Google Scholar] [CrossRef]

- Nguyen, T.T.H.; Chau, T.N.Q.; Pham, T.A.; Tran, T.X.P.; Phan, T.H.; Pham, T.M.T. Mapping Land use/land cover using a combination of Radar Sentinel-1A and Sentinel-2A optical images. IOP Conf. Ser. Earth Environ. Sci. 2021, 652, 012021. [Google Scholar] [CrossRef]

- Petrushevsky, N.; Manzoni, M.; Guarnieri, A.M. High-resolution urban mapping by fusion of sar and optical data. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. ISPRS Arch. 2021, 43, 273–278. [Google Scholar] [CrossRef]

- Zeng, J.; Tan, M.L.; Tew, Y.L.; Zhang, F.; Wang, T.; Samat, N.; Tangang, F.; Yusop, Z. Optimization of Open-Access Optical and Radar Satellite Data in Google Earth Engine for Oil Palm Mapping in the Muda River Basin, Malaysia. Agriculture 2022, 12, 1435. [Google Scholar] [CrossRef]

- Spracklen, B.; Spracklen, D.V. Synergistic Use of Sentinel-1 and Sentinel-2 to Map Natural Forest and Acacia Plantation and Stand Ages in North-Central Vietnam. Remote Sens. 2021, 13, 185. [Google Scholar] [CrossRef]

- Guo, L.; Zhao, S.; Gao, J.; Zhang, H.; Zou, Y.; Xiao, X. A Novel Workflow for Crop Type Mapping with a Time Series of Synthetic Aperture Radar and Optical Images in the Google Earth Engine. Remote Sens. 2022, 14, 5458. [Google Scholar] [CrossRef]

- Nicolau, A.P.; Flores-Anderson, A.; Griffin, R.; Herndon, K.; Meyer, F.J. Assessing SAR C-band data to effectively distinguish modified land uses in a heavily disturbed Amazon forest. Int. J. Appl. Earth Obs. Geoinf. 2021, 94, 102214. [Google Scholar] [CrossRef]

- Clerici, N.; Valbuena Calderón, C.A.; Posada, J.M. Fusion of sentinel-1a and sentinel-2A data for land cover mapping: A case study in the lower Magdalena region, Colombia. J. Maps 2017, 13, 718–726. [Google Scholar] [CrossRef]

- Tamiminia, H.; Salehi, B.; Mahdianpari, M.; Quackenbush, L.; Adeli, S.; Brisco, B. Google Earth Engine for geo-big data applications: A meta-analysis and systematic review. ISPRS J. Photogramm. Remote Sens. 2020, 164, 152–170. [Google Scholar] [CrossRef]

- Aquilino, M.; Tarantino, C.; Adamo, M.; Barbanente, A.; Blonda, P. Earth observation for the implementation of sustainable development goal 11 indicators at local scale: Monitoring of the migrant population distribution. Remote Sens. 2020, 12, 950. [Google Scholar] [CrossRef]

- Rogan, J.; Franklin, J.; Stow, D.; Miller, J.; Woodcock, C.; Roberts, D. Mapping land-cover modifications over large areas: A comparison of machine learning algorithms. Remote Sens. Environ. 2008, 112, 2272–2283. [Google Scholar] [CrossRef]

- Olofsson, P.; Foody, G.M.; Stehman, S.V.; Woodcock, C.E. Making better use of accuracy data in land change studies: Estimating accuracy and area and quantifying uncertainty using stratified estimation. Remote Sens. Environ. 2013, 129, 122–131. [Google Scholar] [CrossRef]

- Cheng, K.S.; Ling, J.Y.; Lin, T.W.; Liu, Y.T.; Shen, Y.C.; Kono, Y. Quantifying Uncertainty in Land-Use/Land-Cover Classification Accuracy: A Stochastic Simulation Approach. Front. Environ. Sci. 2021, 9, 46. [Google Scholar] [CrossRef]

- FAO. Map Accuracy Assessment and Area Estimation: A Practical Guide; Food and Agriculture Organization of the United Nations: Rome, Italy, 2016; Volume 69. [Google Scholar]

- Maxwell, A.E.; Warner, T.A. Thematic classification accuracy assessment with inherently uncertain boundaries: An argument for center-weighted accuracy assessment metrics. Remote Sens. 2020, 12, 1905. [Google Scholar] [CrossRef]

| Index | Formula | Reference | Equation |
|---|---|---|---|
| NDVI | | [105] | (1) |
| EVI | | [106,107] | (2) |
| GNDVI | | [108,109] | (3) |
| BSI | | [110,111] | (4) |
| NDWI | | [112] | (5) |
| MNDWI | | [113,114] | (6) |
| TCG | | [115] | (7) |
| TCW | | [115] | (8) |
| Ratio | | [103] | (9) |
| mRVI | | [116] | (10) |
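
Several indices in the table above (NDVI, GNDVI and the water indices) belong to the normalized-difference family. The following is a minimal sketch, assuming the widely used definitions NDVI = (NIR − Red)/(NIR + Red) and GNDVI = (NIR − Green)/(NIR + Green); the exact band combinations used in this study are those of its Equations (1) and (3), which are not reproduced here. The function name and the reflectance values are hypothetical.

```python
def normalized_difference(band_a: float, band_b: float) -> float:
    """Return (band_a - band_b) / (band_a + band_b), or 0.0 when the sum is zero."""
    total = band_a + band_b
    if total == 0:
        return 0.0
    return (band_a - band_b) / total


# Hypothetical surface reflectances for one vegetated pixel (not from the study).
pixel = {"green": 0.08, "red": 0.05, "nir": 0.45}

# Assumed textbook definitions, see the lead-in above.
ndvi = normalized_difference(pixel["nir"], pixel["red"])
gndvi = normalized_difference(pixel["nir"], pixel["green"])

print(f"NDVI = {ndvi:.2f}, GNDVI = {gndvi:.2f}")
```

The same helper would apply to any other two-band normalized difference once the band pairing is taken from the corresponding equation.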

| Classifier | Class | UA | PA | F-Score | FM |
|---|---|---|---|---|---|
| SVM | Agriculture | 0.88 | 0.76 | 0.81 | 0.71 |
| | Vegetation | 1.00 | 0.98 | 0.99 | 0.98 |
| | Built-up | 0.96 | 0.98 | 0.97 | 0.94 |
| | Bare land | 0.86 | 0.92 | 0.89 | 0.80 |
| | Water | 0.95 | 0.92 | 0.94 | 0.87 |
| RF | Agriculture | 0.83 | 0.79 | 0.81 | 0.70 |
| | Vegetation | 1.00 | 0.98 | 0.99 | 0.98 |
| | Built-up | 0.93 | 0.91 | 0.92 | 0.85 |
| | Bare land | 0.84 | 0.88 | 0.86 | 0.75 |
| | Water | 0.97 | 0.91 | 0.94 | 0.88 |
| GTB | Agriculture | 0.83 | 0.80 | 0.82 | 0.71 |
| | Vegetation | 0.99 | 0.98 | 0.98 | 0.97 |
| | Built-up | 0.93 | 0.92 | 0.93 | 0.86 |
| | Bare land | 0.85 | 0.89 | 0.87 | 0.77 |
| | Water | 0.97 | 0.90 | 0.93 | 0.87 |
| CART | Agriculture | 0.75 | 0.78 | 0.77 | 0.64 |
| | Vegetation | 0.99 | 0.98 | 0.98 | 0.96 |
| | Built-up | 0.86 | 0.89 | 0.88 | 0.77 |
| | Bare land | 0.83 | 0.81 | 0.82 | 0.70 |
| | Water | 0.95 | 0.88 | 0.92 | 0.83 |

| Classifier | Class | UA | PA | F-Score | FM |
|---|---|---|---|---|---|
| SVM | Agriculture | 0.92 | 0.87 | 0.89 | 0.82 |
| | Vegetation | 0.99 | 0.98 | 0.98 | 0.97 |
| | Built-up | 0.99 | 0.98 | 0.98 | 0.97 |
| | Bare land | 0.91 | 0.95 | 0.93 | 0.87 |
| | Water | 0.93 | 0.91 | 0.92 | 0.86 |
| RF | Agriculture | 0.91 | 0.89 | 0.89 | 0.82 |
| | Vegetation | 0.99 | 0.99 | 0.99 | 0.97 |
| | Built-up | 0.96 | 0.94 | 0.95 | 0.90 |
| | Bare land | 0.88 | 0.91 | 0.89 | 0.81 |
| | Water | 0.98 | 0.94 | 0.97 | 0.92 |
| GTB | Agriculture | 0.89 | 0.87 | 0.88 | 0.80 |
| | Vegetation | 0.99 | 0.98 | 0.98 | 0.97 |
| | Built-up | 0.96 | 0.94 | 0.94 | 0.90 |
| | Bare land | 0.89 | 0.91 | 0.90 | 0.82 |
| | Water | 0.82 | 0.87 | 0.92 | 0.75 |
| CART | Agriculture | 0.83 | 0.86 | 0.84 | 0.74 |
| | Vegetation | 1.00 | 0.96 | 0.98 | 0.96 |
| | Built-up | 0.95 | 0.91 | 0.93 | 0.86 |
| | Bare land | 0.83 | 0.85 | 0.84 | 0.73 |
| | Water | 0.98 | 0.88 | 0.93 | 0.86 |
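
The F-Score column in the per-class tables is conventionally the harmonic mean of user's accuracy (UA) and producer's accuracy (PA). The sketch below assumes that standard definition, which is not spelled out alongside the tables, and recomputes the score for three rows copied from the table directly above. The function name is illustrative.

```python
def f_score(ua: float, pa: float) -> float:
    """Harmonic mean of user's accuracy (UA) and producer's accuracy (PA)."""
    if ua + pa == 0:
        return 0.0
    return 2 * ua * pa / (ua + pa)


# (UA, PA, reported F-Score) copied from three rows of the table directly above.
rows = {
    ("SVM", "Agriculture"): (0.92, 0.87, 0.89),
    ("SVM", "Bare land"): (0.91, 0.95, 0.93),
    ("CART", "Agriculture"): (0.83, 0.86, 0.84),
}

for (classifier, land_class), (ua, pa, reported) in rows.items():
    computed = f_score(ua, pa)
    print(f"{classifier:5s} {land_class:12s} computed={computed:.2f} reported={reported:.2f}")
```

Because the published UA and PA values are rounded to two decimals, a recomputed score can differ slightly from the reported one for some rows.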

| Class | Dataset | MLAs | UA | PA | Area (km²) | ±95% CI | OA (%) |
|---|---|---|---|---|---|---|---|
| Agriculture | S2_S1 | SVM | 0.92 | 0.77 | 67,801.48 | 4119.42 | 92 |
| | | RF | 0.91 | 0.74 | 68,705.93 | 4942.889 | 90 |
| | | GTB | 0.89 | 0.74 | 60,662.78 | 4147.145 | 90 |
| | | CART | 0.83 | 0.76 | 81,658.95 | 5507.65 | 85 |
| | S2 | SVM | 0.88 | 0.65 | 79,785.45 | 5281.748 | 88 |
| | | RF | 0.83 | 0.65 | 70,127.21 | 5124.638 | 86 |
| | | GTB | 0.83 | 0.66 | 68,027.59 | 4991.566 | 86 |
| | | CART | 0.75 | 0.69 | 76,942.89 | 5342.114 | 83 |
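
One way to compare the uncertainty of the agricultural area estimates in the table above is to express each ±95% CI as a percentage of its estimated area. This is a minimal sketch, assuming the CI column is a half-width in the same unit as the area column; the function name is illustrative and the values are copied from the table.

```python
def relative_ci_percent(area: float, ci_half_width: float) -> float:
    """Express a confidence-interval half-width as a percentage of the estimated area."""
    if area <= 0:
        raise ValueError("area must be positive")
    return 100.0 * ci_half_width / area


# (dataset, classifier) -> (estimated area, +/-95% CI), copied from the table above.
estimates = {
    ("S2_S1", "SVM"): (67801.48, 4119.42),
    ("S2_S1", "RF"): (68705.93, 4942.889),
    ("S2_S1", "GTB"): (60662.78, 4147.145),
    ("S2_S1", "CART"): (81658.95, 5507.65),
    ("S2", "SVM"): (79785.45, 5281.748),
    ("S2", "RF"): (70127.21, 5124.638),
    ("S2", "GTB"): (68027.59, 4991.566),
    ("S2", "CART"): (76942.89, 5342.114),
}

relative = {key: relative_ci_percent(area, ci) for key, (area, ci) in estimates.items()}

for (dataset, classifier), pct in relative.items():
    print(f"{dataset:6s} {classifier:5s} +/-{pct:.1f}% of estimated area")
```

A relative measure of this kind makes estimates of different magnitude directly comparable, which the absolute CI values alone do not.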

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Mengesha, T.E.; Desta, L.T.; Gamba, P.; Ayehu, G.T. Multi-Temporal Passive and Active Remote Sensing for Agricultural Mapping and Acreage Estimation in Context of Small Farm Holds in Ethiopia. Land 2024, 13, 335. https://doi.org/10.3390/land13030335
