Automated Extraction of Urban Water Bodies from ZY‐3 Multi‐Spectral Imagery

Abstract

1. Introduction

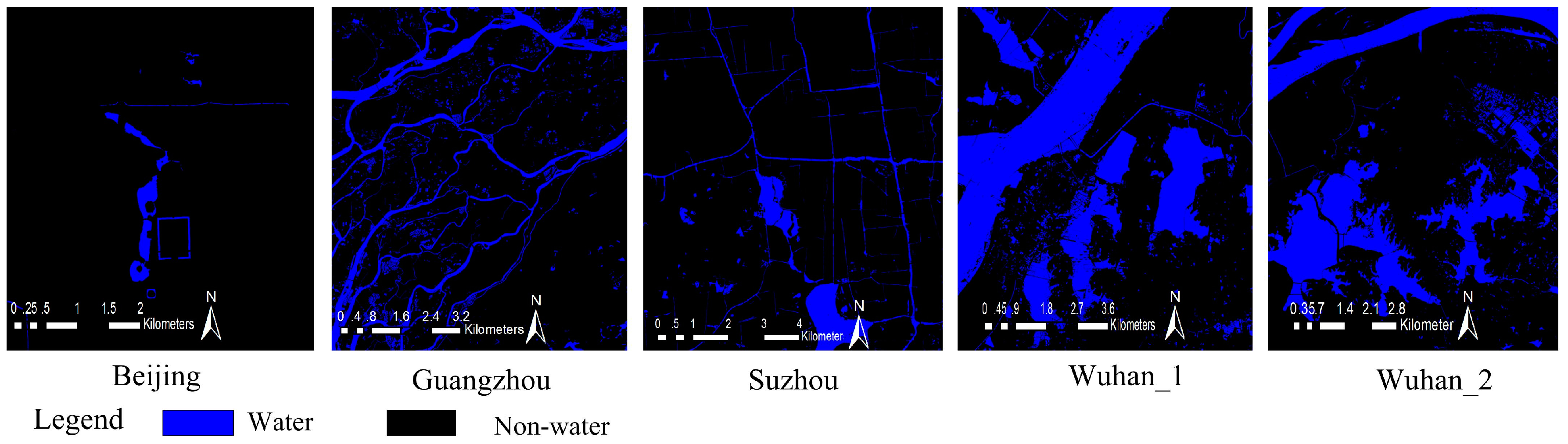

2. Study Areas and Data

2.1. Study Areas

2.2. Experimental ZY-3 Imagery and Its Corresponding Reference Imagery

- The delineation precision is within three pixels for fuzzy water-body boundaries and within one pixel for clear water-body boundaries.

- Water bodies of one pixel or smaller are not delineated.

- Higher-resolution Google Maps imagery is used as a reference to distinguish water bodies from building shadows, and to separate apparent water bodies from non-water surfaces.

- The urban water system is treated as essentially interconnected, except where rivers are intercepted by bridges.

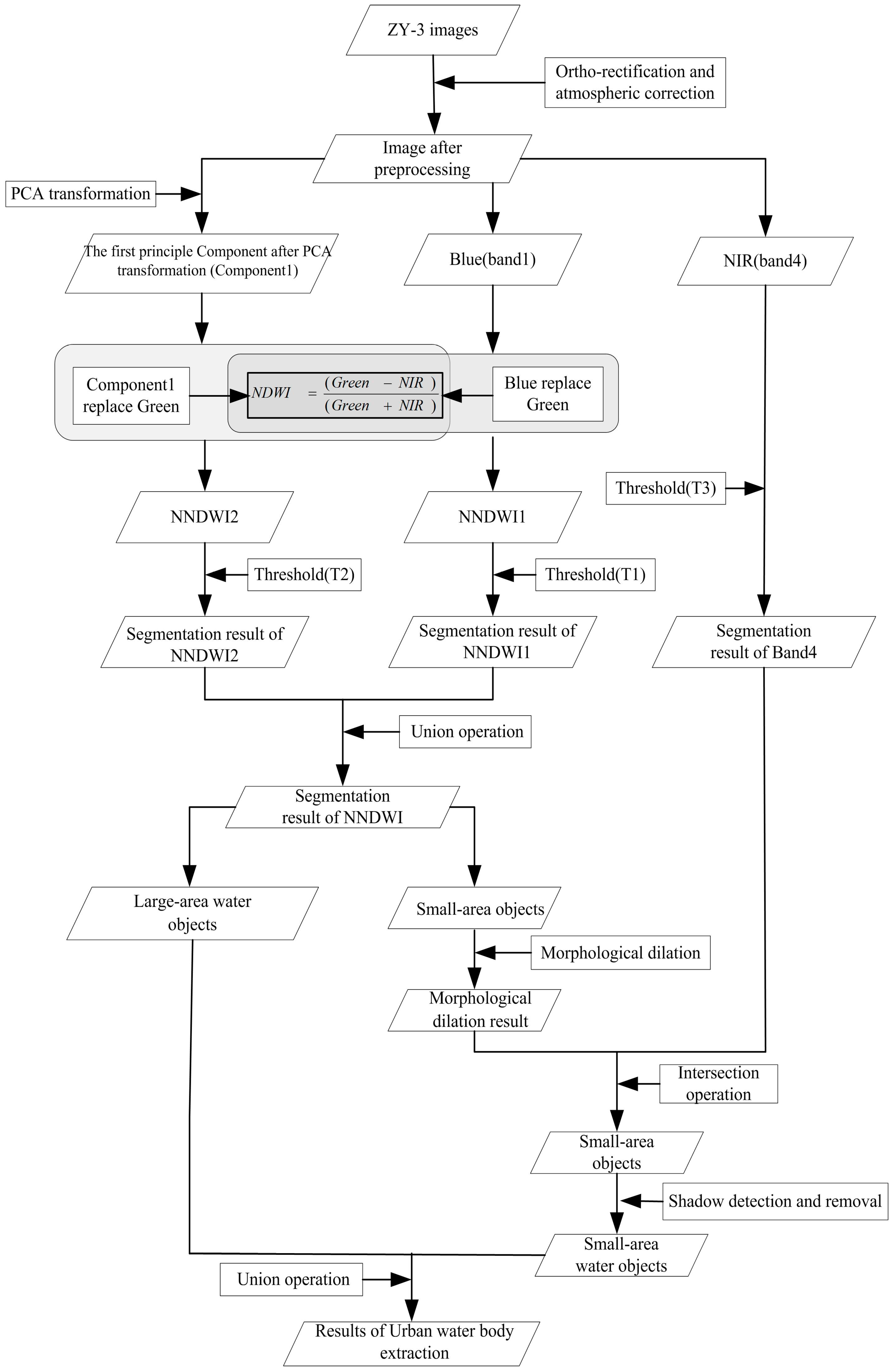

3. Method

3.1. Satellite Image Preprocessing

3.2. Normalized Difference Water Index (NDWI)

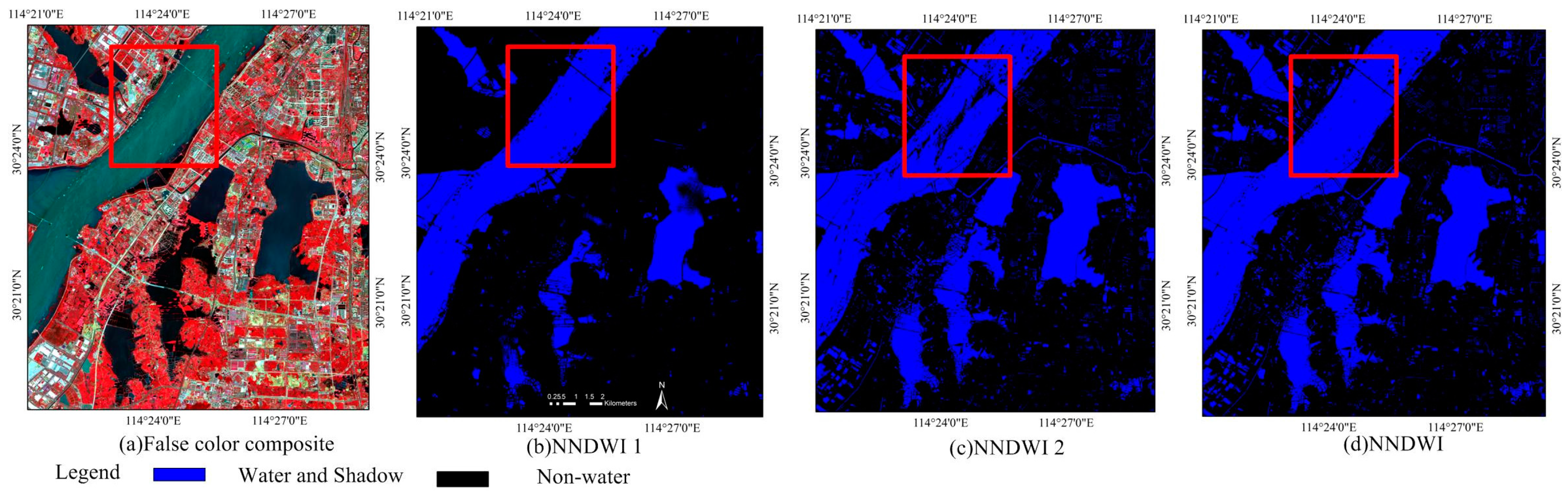

3.3. New Normalized Difference Water Indexes (NNDWI)

- Use the ZY-3 Blue band (Band1) to replace the green band in Equation (1) to obtain NNDWI1, i.e.,

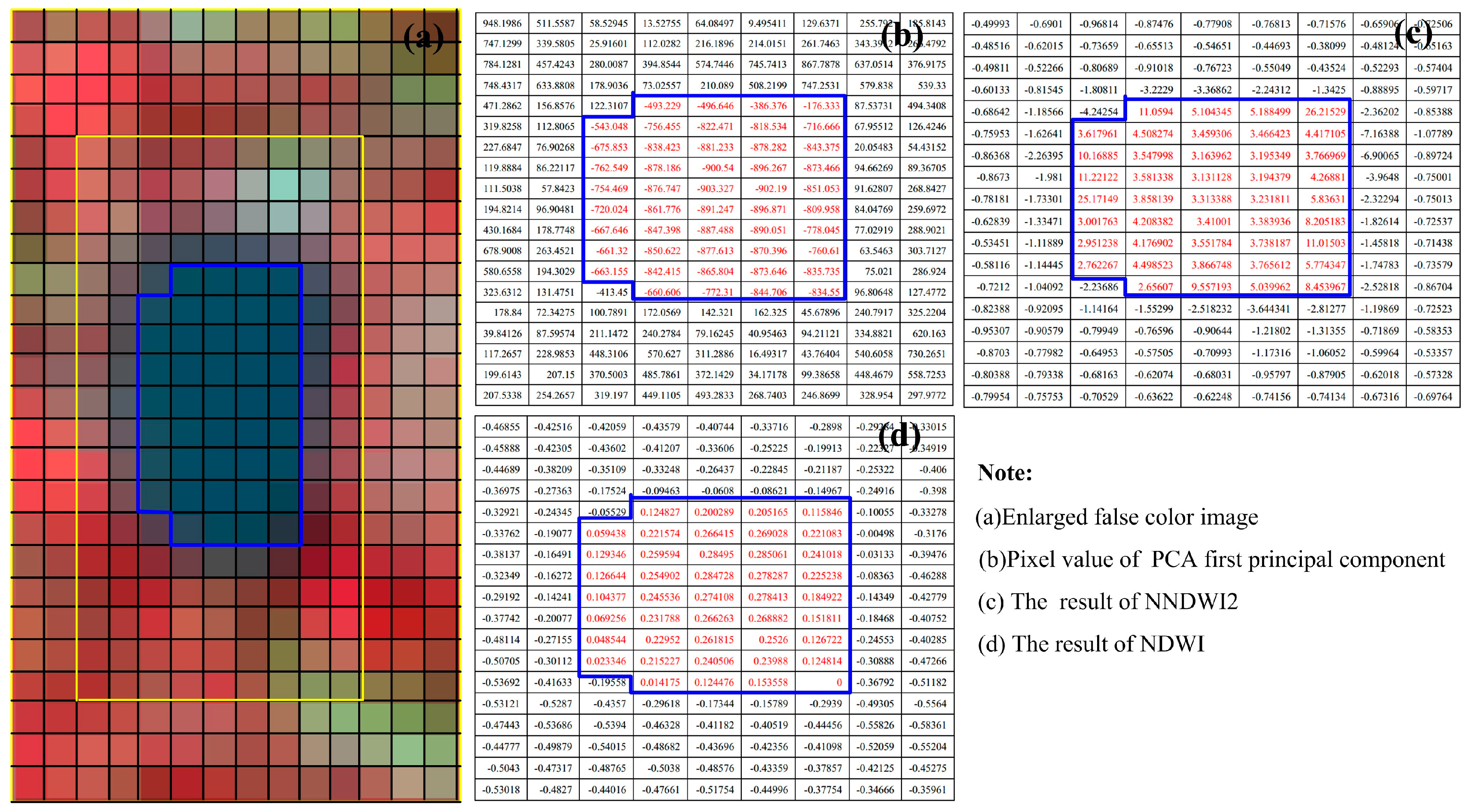

- Apply a Principal Component Analysis (PCA) transformation [41] to the four bands of the ZY-3 imagery, and replace the green band in Equation (1) with the first principal component to obtain NNDWI2, where Component1 denotes the first principal component after the PCA transformation.

PCA is a dimension-reduction method [41]. Mathematically, it finds the set of basis vectors that most efficiently expresses the relations among the data. Geometrically, it rotates the original coordinate axes into a new orthogonal set so that the data points have maximum dispersion (variance) along the new axes. Applied to image analysis, it seeks as few basis images as possible that preserve the maximum information of the original images, which achieves feature extraction.

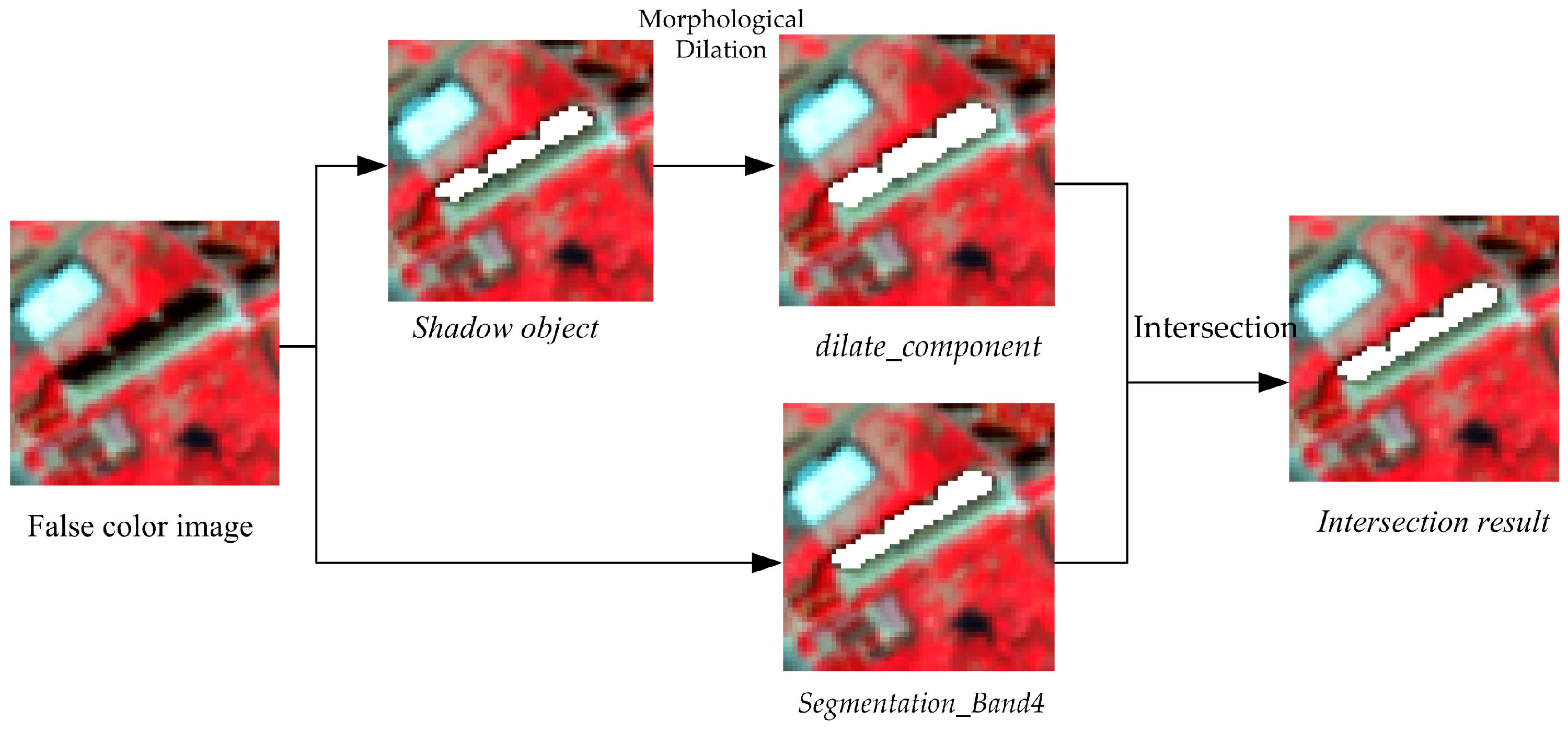

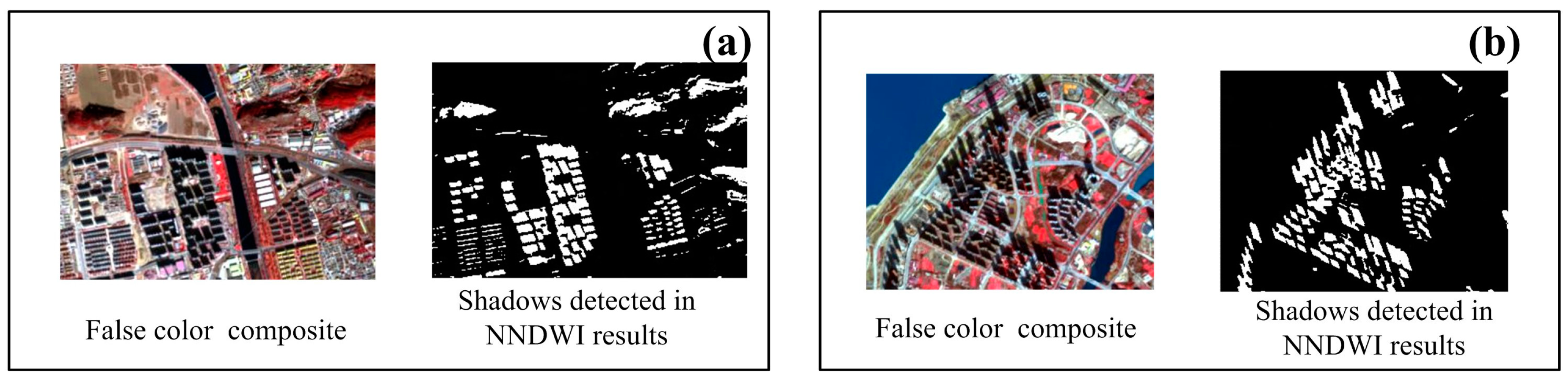

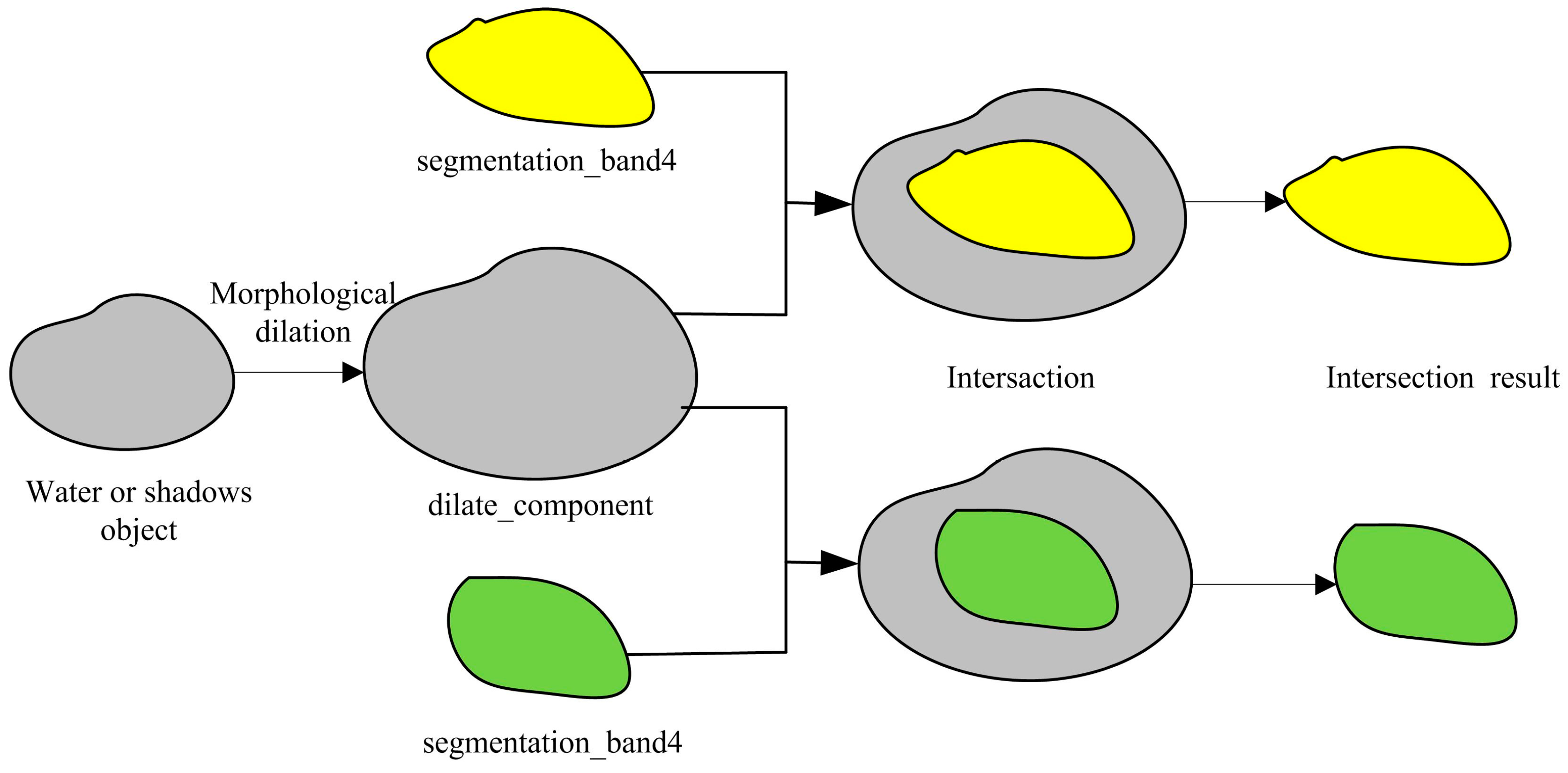
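The three indices of this section can be sketched in plain Python as below. This is a minimal illustration under stated assumptions, not the authors' code: Equation (1) is taken to be the standard McFeeters NDWI, (Green − NIR)/(Green + NIR), with ZY-3 Band1 = blue, Band2 = green and Band4 = NIR; pixels are flat lists of reflectance values; and all function names are hypothetical. The first principal component is found by power iteration on the 4 × 4 band covariance matrix, and the PC1 score of each pixel is the projection of its un-centred band vector, an assumption because the scaling of Component1 is not stated here.

```python
import math


def normalized_difference(a, b):
    """Per-pixel (a - b) / (a + b); pixels with a + b == 0 are set to 0.0."""
    return [(x - y) / (x + y) if (x + y) != 0 else 0.0 for x, y in zip(a, b)]


def ndwi(green, nir):
    # Assumed form of Equation (1): McFeeters NDWI with Band2 (green) and Band4 (NIR).
    return normalized_difference(green, nir)


def nndwi1(blue, nir):
    # NNDWI1: the blue band (Band1) takes the place of the green band.
    return normalized_difference(blue, nir)


def first_principal_component(bands, iterations=200):
    """Leading eigenvector of the band covariance matrix and the PC1 score of each pixel.

    bands: k equal-length lists (one per band, at least two pixels each).
    Scores project the raw, un-centred pixel vectors (an illustrative assumption).
    """
    k, n = len(bands), len(bands[0])
    if n < 2:
        raise ValueError("need at least two pixels to estimate a covariance")
    means = [sum(band) / n for band in bands]
    cov = [
        [sum((bands[i][p] - means[i]) * (bands[j][p] - means[j]) for p in range(n)) / (n - 1)
         for j in range(k)]
        for i in range(k)
    ]
    v = [1.0 / math.sqrt(k)] * k  # start vector for power iteration
    for _ in range(iterations):
        w = [sum(cov[i][j] * v[j] for j in range(k)) for i in range(k)]
        norm = math.sqrt(sum(x * x for x in w))
        if norm == 0.0:
            break  # cov·v is zero; keep the current vector
        v = [x / norm for x in w]
    if sum(v) < 0:  # eigenvector sign is arbitrary; orient it toward overall brightness
        v = [-x for x in v]
    scores = [sum(v[i] * bands[i][p] for i in range(k)) for p in range(n)]
    return v, scores


def nndwi2(bands, nir):
    # NNDWI2: the first principal component takes the place of the green band.
    _, pc1 = first_principal_component(bands)
    return normalized_difference(pc1, nir)


# Toy reflectances: two water-like pixels followed by two vegetation-like pixels.
band1 = [0.08, 0.07, 0.04, 0.05]
band2 = [0.07, 0.06, 0.08, 0.09]
band3 = [0.05, 0.04, 0.05, 0.06]
band4 = [0.02, 0.01, 0.40, 0.35]
print(ndwi(band2, band4))
print(nndwi1(band1, band4))
print(nndwi2([band1, band2, band3, band4], band4))
```

Power iteration only keeps the sketch dependency-free; with NumPy, `numpy.linalg.eigh` applied to the covariance matrix yields the same leading eigenvector up to sign.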

3.4. Shadow Detection Based on Object Oriented Technology

3.4.1. Shadow Objects

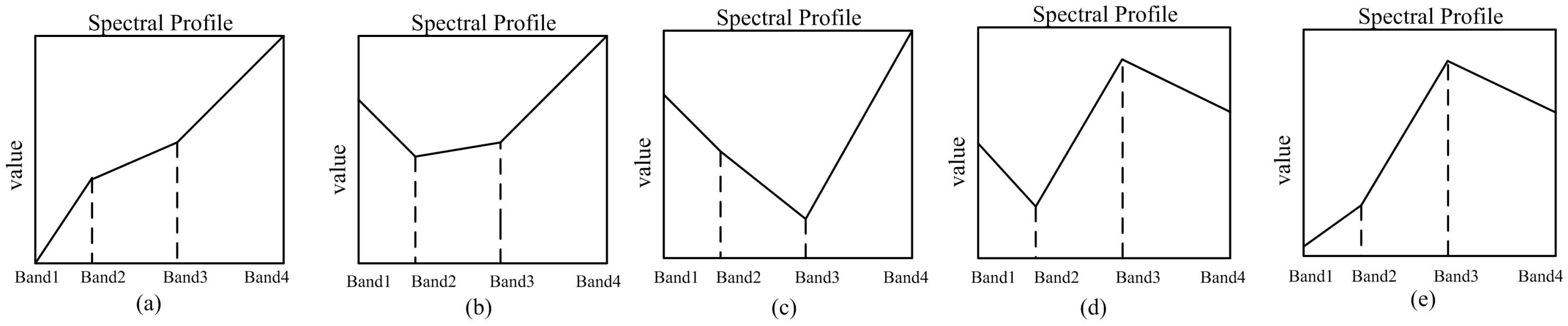

3.4.2. The Shadow Objects Description (The Description of Spectral Feature Relations between Water-Body Pixels and Shadow-Area Pixels)

3.4.3. The Shadow Objects Detection Method

3.5. Urban Water Extraction and Its Accuracy Evaluation

4. Experimental Results and Analysis

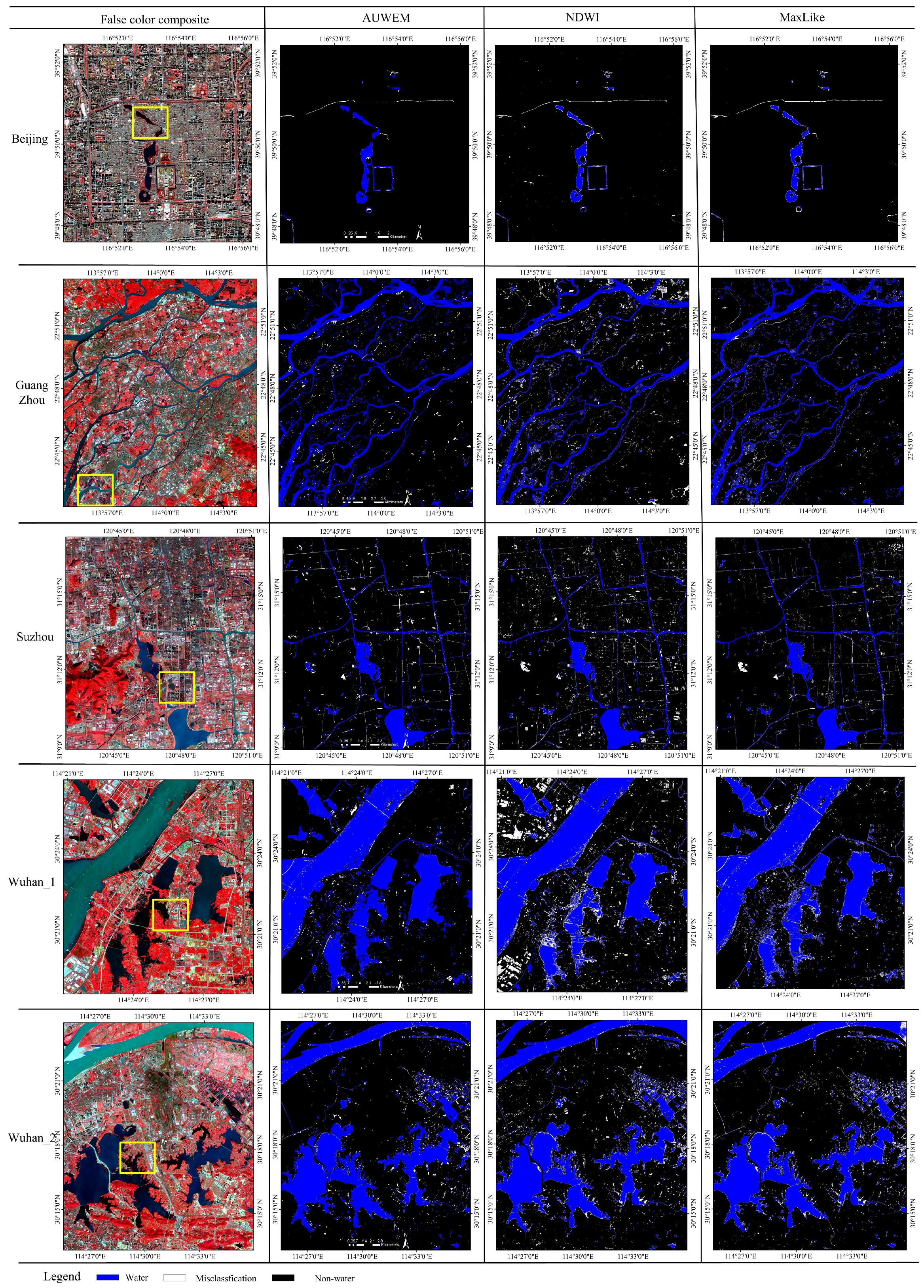

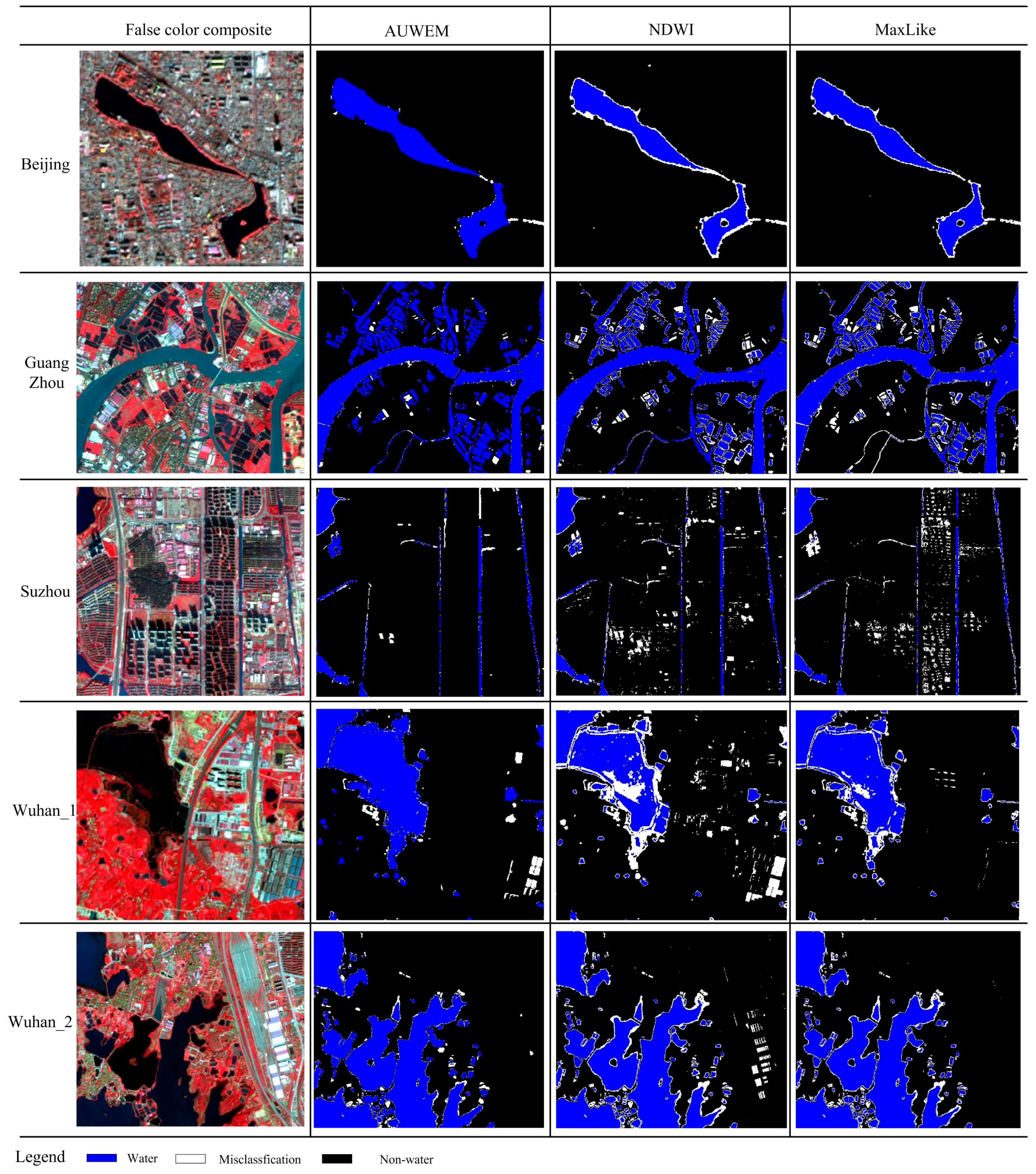

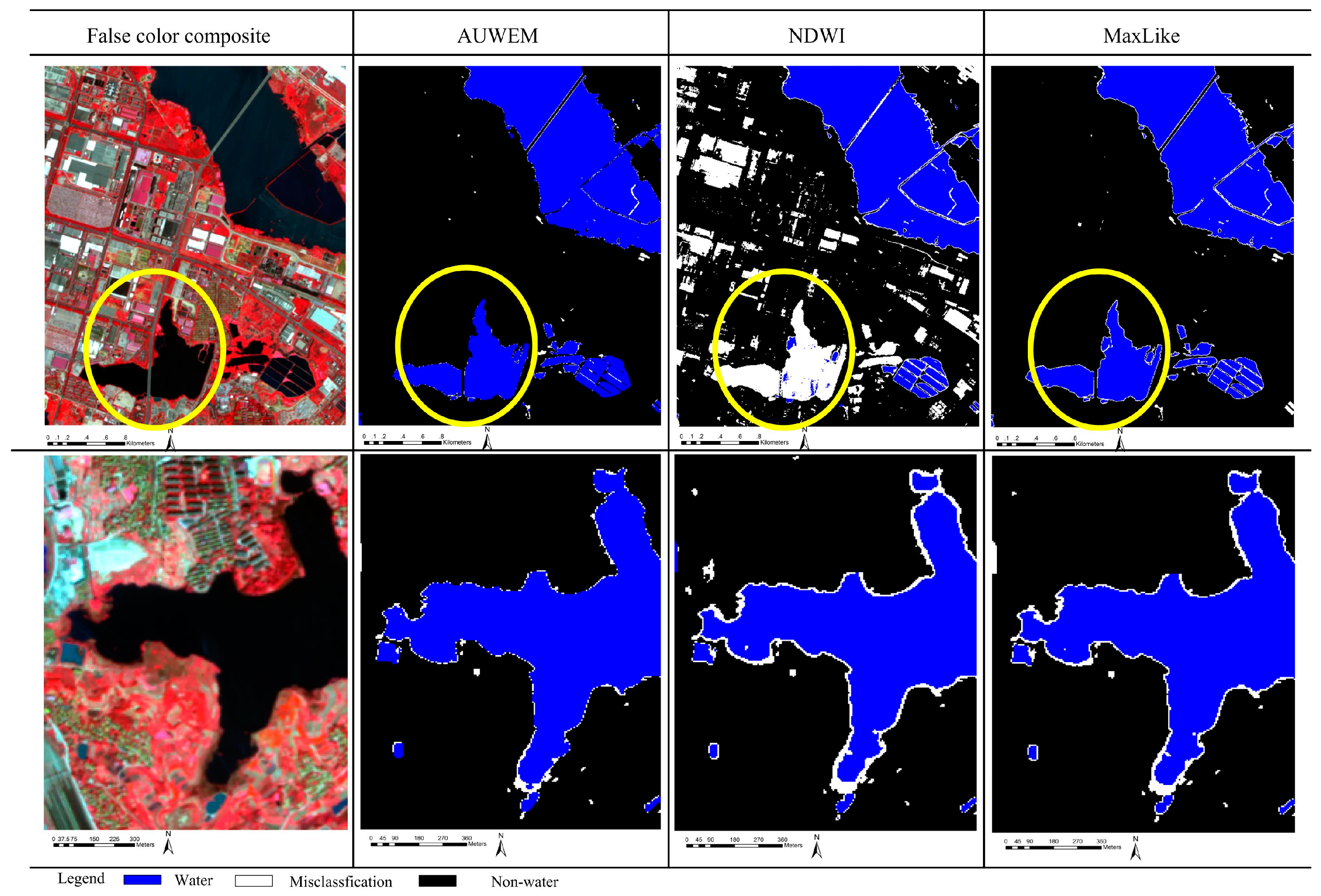

4.1. Water Extraction Maps

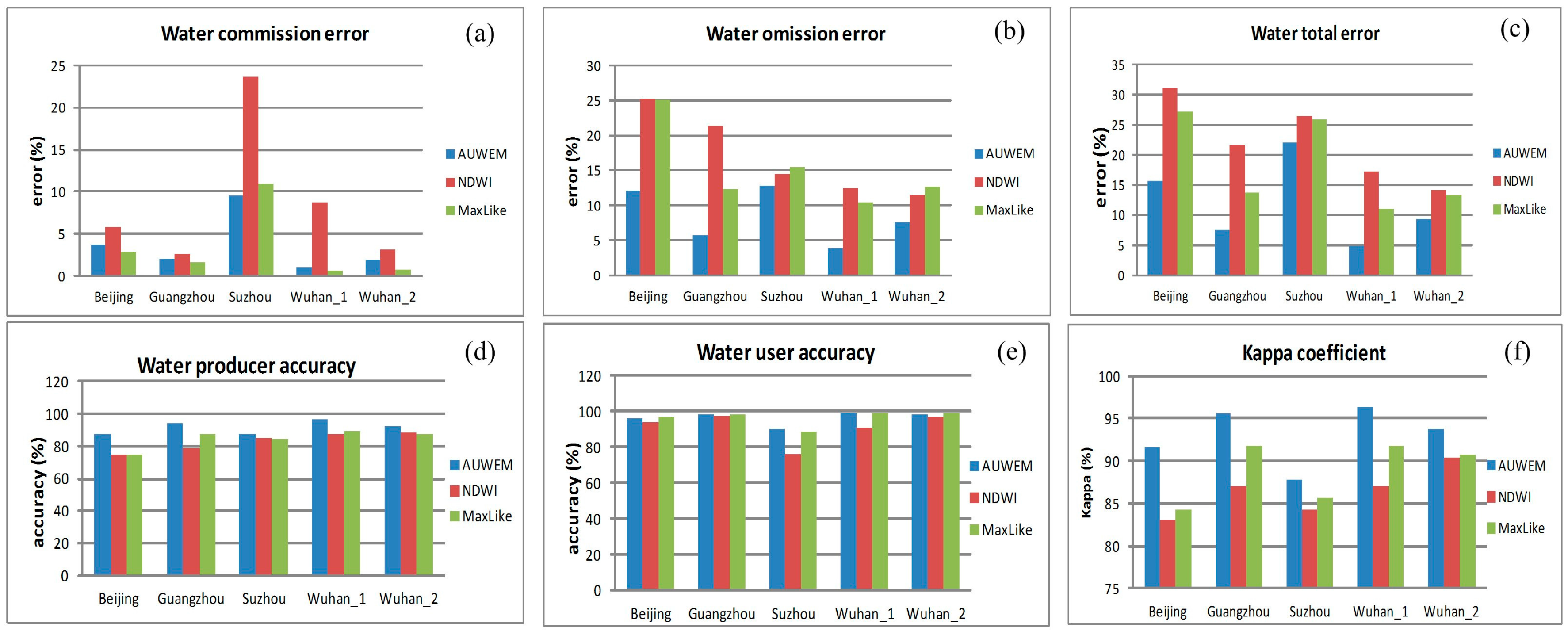

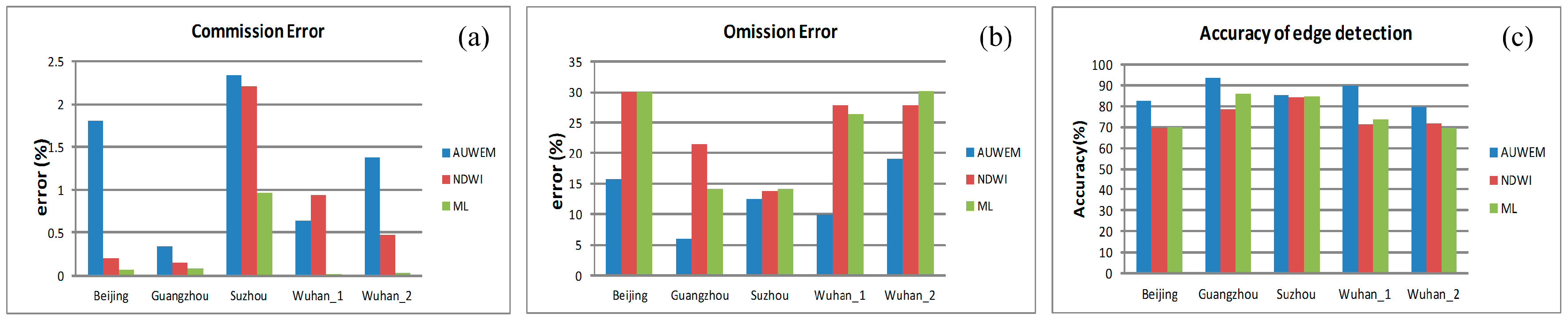

4.2. Water Extraction Accuracy

4.3. An Analysis of Water-Edge Pixel Extraction Accuracy

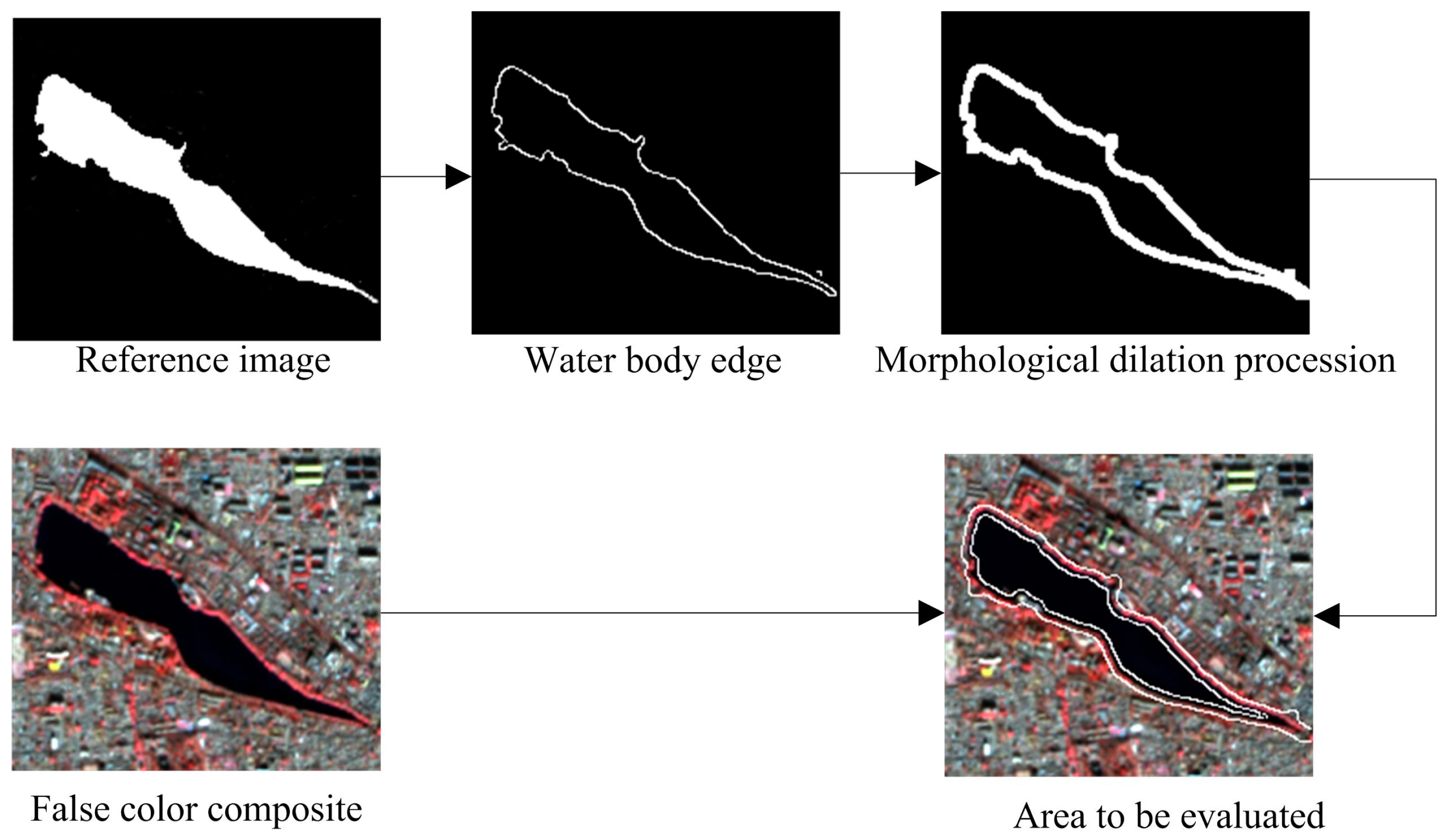

- Extract the water edge from the reference image with the Canny operator.

- Apply morphological dilation to the extracted edge to build a buffer zone of radius four pixels centred on the edge.

- Classify the pixels in the buffer zone. Let N be the total number of pixels in the buffer zone, N_R the number of correctly classified pixels, N_o the number of omitted pixels, and N_c the number of commission-error pixels. Then

$$A = \frac{N_R}{N} \times 100\%, \qquad E_o = \frac{N_o}{N} \times 100\%, \qquad E_c = \frac{N_c}{N} \times 100\%,$$

where A + E_o + E_c = 100%. A is the proportion of correctly classified edge pixels (edge-detection accuracy), E_o is the proportion of omitted edge pixels (omission error), and E_c is the proportion of commission-error edge pixels (commission error).

The results of this approach support comparative rather than absolute conclusions. Because the reference images were delineated manually, they are limited by visual interpretation, so the statistics only approximate each algorithm's edge-extraction accuracy. The procedure for obtaining the water-edge area used in the evaluation is shown in Figure 10.
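The buffer-based edge evaluation can be sketched on small binary masks (1 = water, 0 = non-water). Two simplifications are assumptions for illustration: the Canny operator is replaced by a plain boundary test (a water pixel with at least one 4-connected non-water neighbour), which is adequate for a binary reference mask, and the dilation uses a disk of radius 4 (dx² + dy² ≤ 16) as the structuring element. The function name is hypothetical.

```python
def edge_buffer_accuracy(reference, predicted, radius=4):
    """Return (A, Eo, Ec) in percent inside a buffer around the reference water edge.

    reference, predicted: equal-sized 2-D lists of 0/1, where 1 = water.
    """
    rows, cols = len(reference), len(reference[0])

    def is_edge(r, c):
        # Stand-in for the Canny operator on a binary mask:
        # a water pixel with at least one 4-connected non-water neighbour.
        if reference[r][c] != 1:
            return False
        for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
            rr, cc = r + dr, c + dc
            if 0 <= rr < rows and 0 <= cc < cols and reference[rr][cc] == 0:
                return True
        return False

    edges = [(r, c) for r in range(rows) for c in range(cols) if is_edge(r, c)]
    if not edges:
        raise ValueError("reference mask contains no water edge")

    # Morphological dilation of the edge set with a disk of the given radius.
    disk = [(dr, dc) for dr in range(-radius, radius + 1)
            for dc in range(-radius, radius + 1) if dr * dr + dc * dc <= radius * radius]
    buffer = set()
    for r, c in edges:
        for dr, dc in disk:
            rr, cc = r + dr, c + dc
            if 0 <= rr < rows and 0 <= cc < cols:
                buffer.add((rr, cc))

    # Every buffer pixel is correct, omitted (water missed) or a commission error.
    n = len(buffer)
    n_r = sum(1 for r, c in buffer if predicted[r][c] == reference[r][c])
    n_o = sum(1 for r, c in buffer if reference[r][c] == 1 and predicted[r][c] == 0)
    n_c = sum(1 for r, c in buffer if reference[r][c] == 0 and predicted[r][c] == 1)
    return 100.0 * n_r / n, 100.0 * n_o / n, 100.0 * n_c / n


# A 4 x 4 water square in a 12 x 12 scene, and a prediction that misses its right column.
ref = [[1 if 4 <= r <= 7 and 4 <= c <= 7 else 0 for c in range(12)] for r in range(12)]
pred = [[1 if 4 <= r <= 7 and 4 <= c <= 6 else 0 for c in range(12)] for r in range(12)]
print(edge_buffer_accuracy(ref, pred))
```

A square (Chebyshev) structuring element would be an equally plausible reading of "a radius of four pixels"; only the `disk` list would change.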

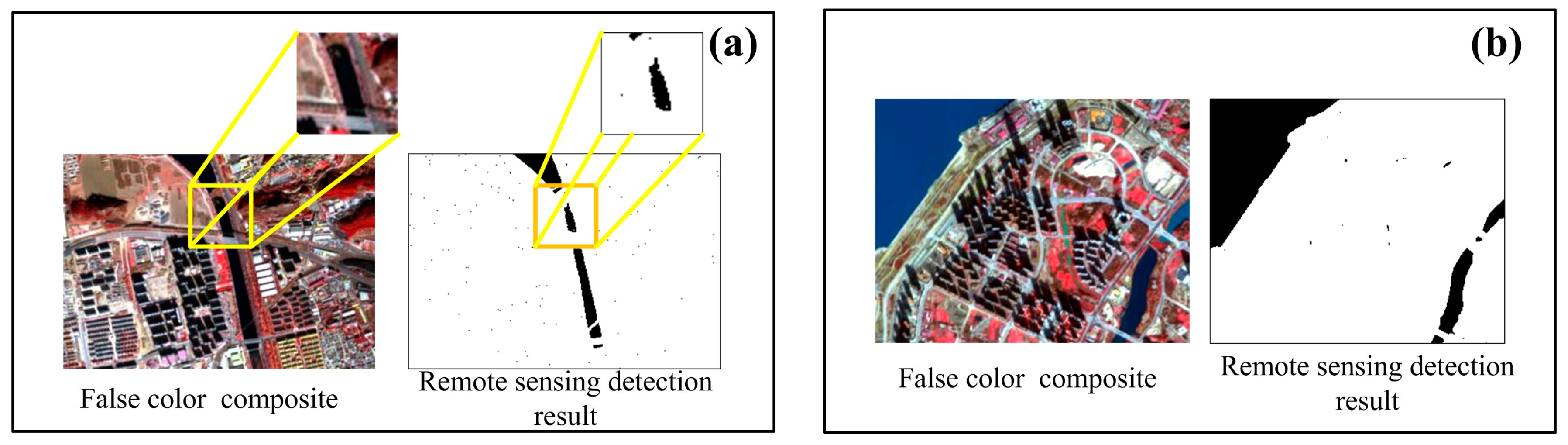

5. Discussion

5.1. Effect of PCA Transformation

5.2. Effect of Intersection

5.3. Shadow Detection Ability of the Shadow Object Description Method

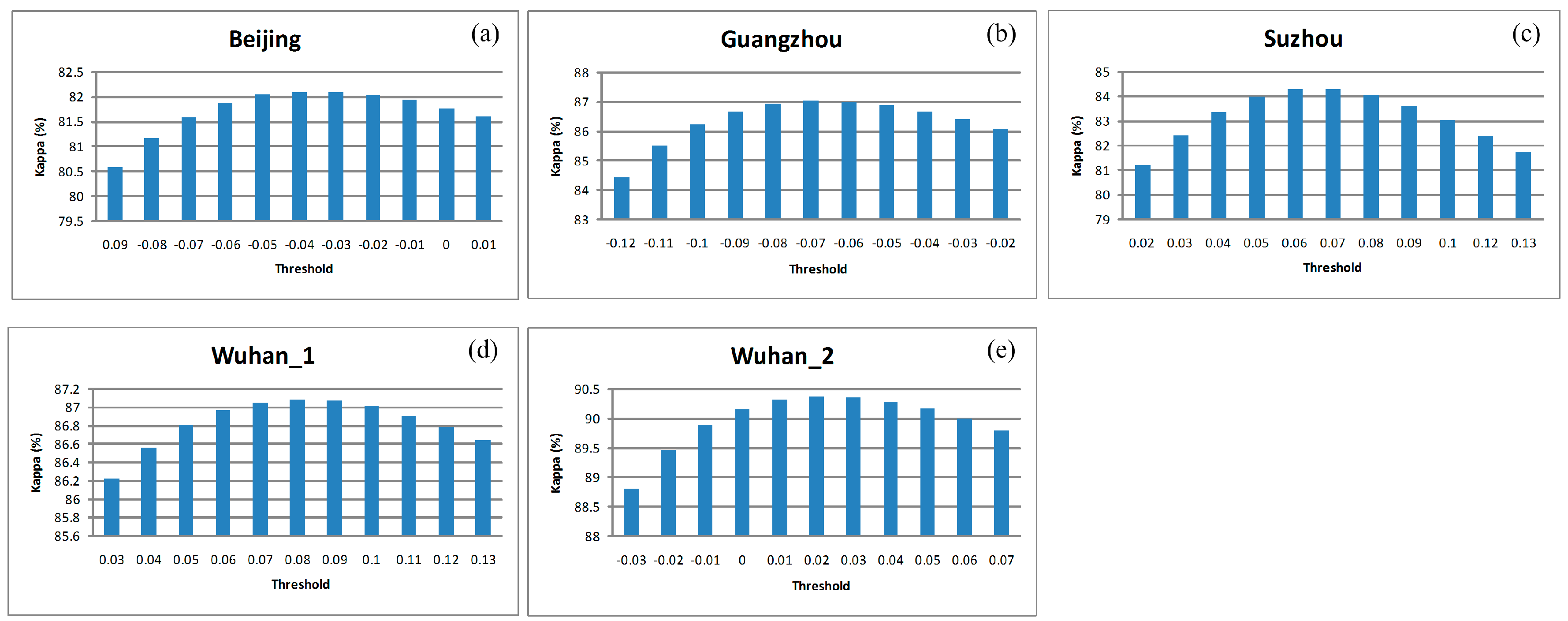

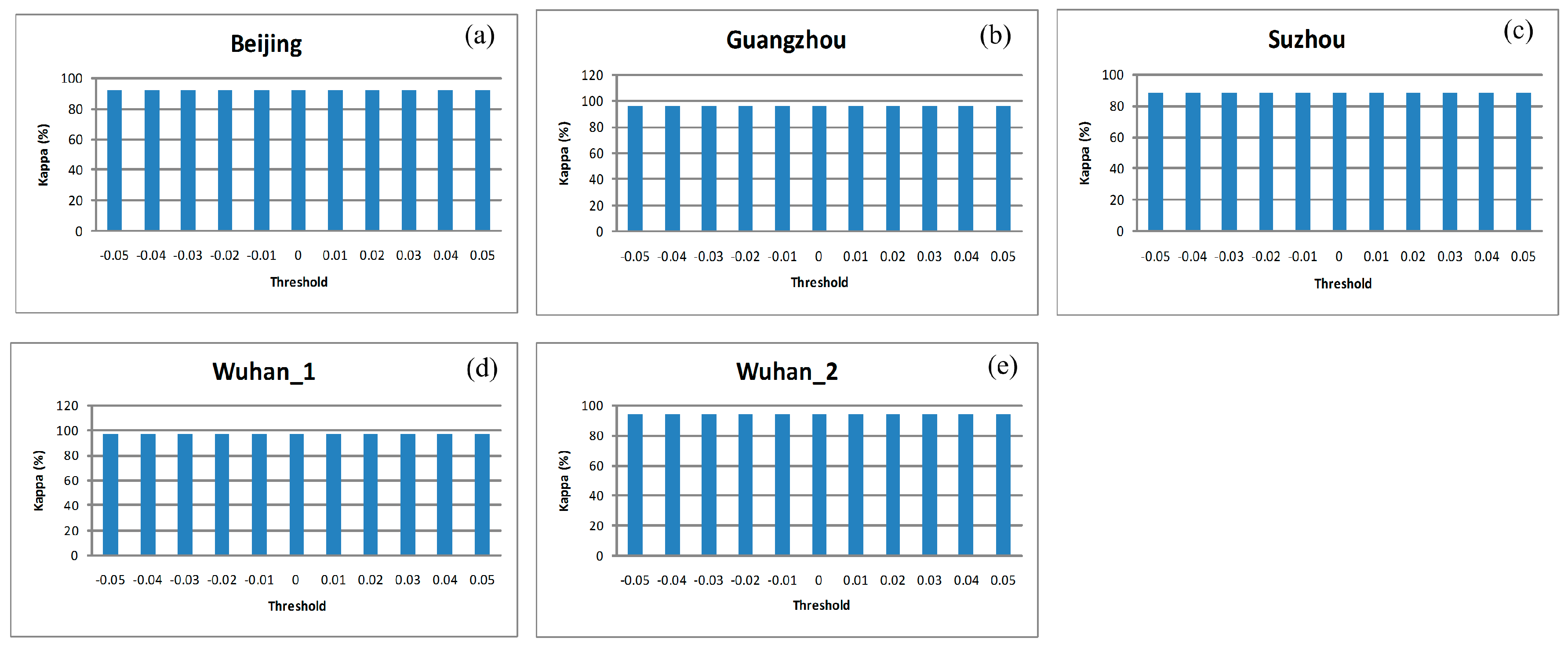

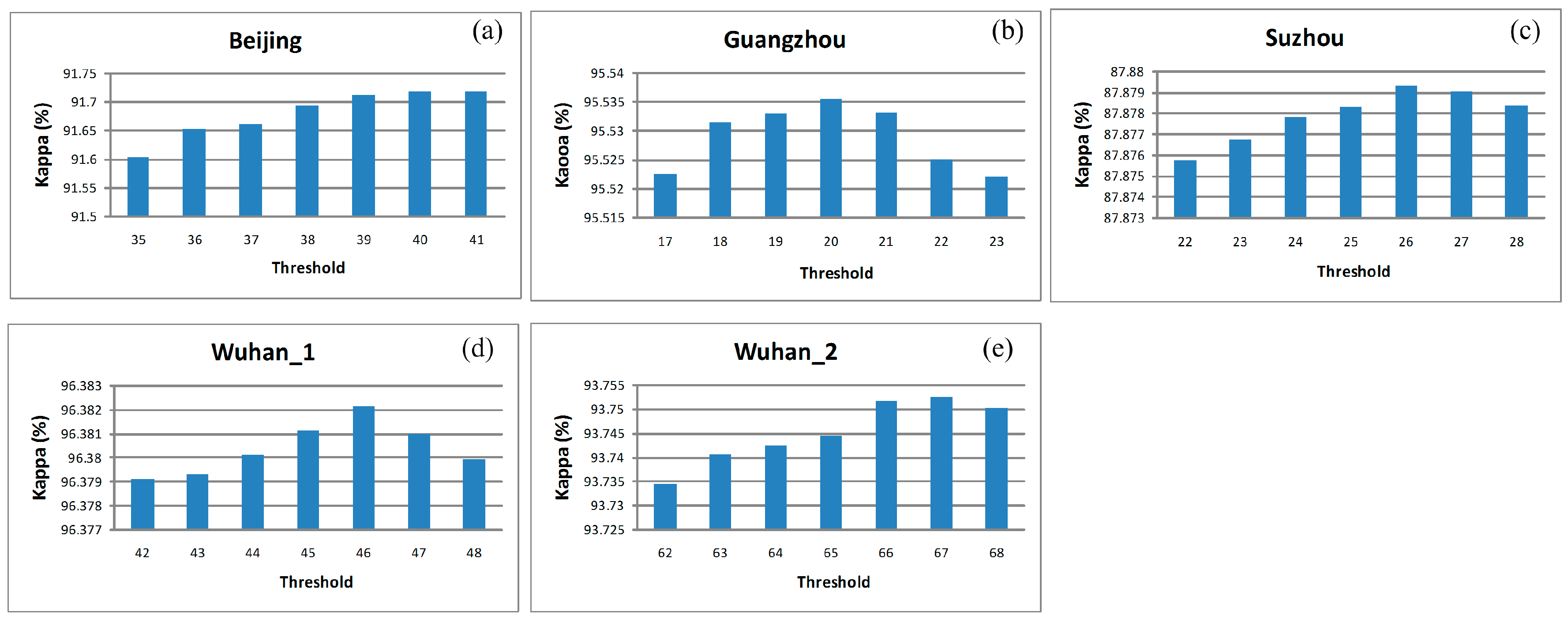

5.4. Threshold Setting and Stability of Algorithm in Correlation Computation

5.5. Summary

6. Conclusions

Acknowledgments

Author Contributions

Conflicts of Interest

Appendix A

| Ground Truth \ Classified (Pixels) | Water | No_Water | Total | Producer Accuracy (%) | Omission Error (%) |
|---|---|---|---|---|---|
| Water | 34,961 | 11,657 | 46,618 | 74.9946 | 25.0054 |
| No_Water | 1061 | 2,244,771 | 2,245,832 | 99.9528 | 0.0472 |
| Total | 36,022 | 2,256,428 | 2,292,450 | | |
| User Accuracy (%) | 97.0546 | 99.4834 | | | |
| Commission Error (%) | 2.9454 | 0.5166 | | | |

Overall Accuracy = 99.4452%; Kappa Coefficient = 84.3326%

| Ground Truth \ Classified (Pixels) | Water | No_Water | Total | Producer Accuracy (%) | Omission Error (%) |
|---|---|---|---|---|---|
| Water | 34,827 | 11,791 | 46,618 | 74.7072 | 25.2928 |
| No_Water | 2125 | 2,243,707 | 2,245,832 | 99.9054 | 0.0946 |
| Total | 36,952 | 2,255,498 | 2,292,450 | | |
| User Accuracy (%) | 94.2493 | 99.4772 | | | |
| Commission Error (%) | 5.7507 | 0.5228 | | | |

Overall Accuracy = 99.3930%; Kappa Coefficient = 83.0431%

| Ground Truth \ Classified (Pixels) | Water | No_Water | Total | Producer Accuracy (%) | Omission Error (%) |
|---|---|---|---|---|---|
| Water | 40,929 | 5689 | 46,618 | 87.7966 | 12.2034 |
| No_Water | 1571 | 2,244,261 | 2,245,832 | 99.9300 | 0.0700 |
| Total | 42,500 | 2,249,950 | 2,292,450 | | |
| User Accuracy (%) | 96.3035 | 99.7471 | | | |
| Commission Error (%) | 3.6965 | 0.2529 | | | |

Overall Accuracy = 99.6833%; Kappa Coefficient = 91.6924%
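The accuracy measures in these appendix tables can be recomputed from the pixel counts. The sketch below assumes, as the row and column totals indicate, that rows are ground-truth classes and columns are classified classes: producer accuracy divides each diagonal count by its row total, user accuracy by its column total, and Cohen's kappa compares the observed agreement p_o with the chance agreement p_e. The function name is hypothetical. Applied to the counts of the first table in Appendix A, it agrees with the reported producer accuracy, user accuracy, overall accuracy and kappa to within rounding.

```python
def accuracy_report(matrix):
    """Accuracy measures (in percent) for a square confusion matrix.

    matrix[i][j]: pixels whose ground-truth class is i and whose classified class is j
    (index 0 = Water, 1 = No_Water for the tables in these appendices).
    """
    k = len(matrix)
    n = sum(sum(row) for row in matrix)
    row_tot = [sum(matrix[i]) for i in range(k)]                      # ground-truth totals
    col_tot = [sum(matrix[i][j] for i in range(k)) for j in range(k)]  # classified totals
    producer = [100.0 * matrix[i][i] / row_tot[i] for i in range(k)]
    user = [100.0 * matrix[j][j] / col_tot[j] for j in range(k)]
    p_o = sum(matrix[i][i] for i in range(k)) / n                      # observed agreement
    p_e = sum(row_tot[i] * col_tot[i] for i in range(k)) / (n * n)     # chance agreement
    kappa = (p_o - p_e) / (1.0 - p_e)
    return {
        "producer": producer,
        "omission": [100.0 - p for p in producer],
        "user": user,
        "commission": [100.0 - u for u in user],
        "overall": 100.0 * p_o,
        "kappa": 100.0 * kappa,
    }


report = accuracy_report([[34961, 11657], [1061, 2244771]])
for name, value in report.items():
    print(name, value)
```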

Appendix B

| Ground Truth \ Classified (Pixels) | Water | No_Water | Total | Producer Accuracy (%) | Omission Error (%) |
|---|---|---|---|---|---|
| Water | 1,212,617 | 169,976 | 1,382,593 | 87.7060 | 12.2940 |
| No_Water | 19,157 | 8,988,885 | 9,008,042 | 99.7873 | 0.2127 |
| Total | 1,231,774 | 9,158,861 | 10,390,635 | | |
| User Accuracy (%) | 98.4448 | 98.1441 | | | |
| Commission Error (%) | 1.5552 | 1.8559 | | | |

Overall Accuracy = 98.1798%; Kappa Coefficient = 91.7285%

| Ground Truth \ Classified (Pixels) | Water | No_Water | Total | Producer Accuracy (%) | Omission Error (%) |
|---|---|---|---|---|---|
| Water | 1,087,494 | 295,099 | 1,382,593 | 78.6561 | 21.3439 |
| No_Water | 29,105 | 8,978,937 | 9,008,042 | 99.6769 | 0.3231 |
| Total | 1,116,599 | 9,274,036 | 10,390,635 | | |
| User Accuracy (%) | 97.3934 | 96.8180 | | | |
| Commission Error (%) | 2.6066 | 3.1820 | | | |

Overall Accuracy = 96.8798%; Kappa Coefficient = 85.2771%

| Ground Truth \ Classified (Pixels) | Water | No_Water | Total | Producer Accuracy (%) | Omission Error (%) |
|---|---|---|---|---|---|
| Water | 1,304,001 | 78,592 | 1,382,593 | 94.3156 | 5.6844 |
| No_Water | 26,733 | 8,981,309 | 9,008,042 | 99.7032 | 0.2968 |
| Total | 1,330,734 | 9,059,901 | 10,390,635 | | |
| User Accuracy (%) | 97.9911 | 99.1325 | | | |
| Commission Error (%) | 2.0089 | 0.8675 | | | |

Overall Accuracy = 98.9863%; Kappa Coefficient = 95.5355%

Appendix C

| Ground Truth \ Classified (Pixels) | Water | No_Water | Total | Producer Accuracy (%) | Omission Error (%) |
|---|---|---|---|---|---|
| Water | 415,717 | 76,225 | 491,942 | 84.5053 | 15.4947 |
| No_Water | 50,948 | 5,673,154 | 5,724,102 | 99.1099 | 0.8901 |
| Total | 466,665 | 5,749,379 | 6,216,044 | | |
| User Accuracy (%) | 89.0825 | 98.6742 | | | |
| Commission Error (%) | 10.9175 | 1.3258 | | | |

Overall Accuracy = 97.9541%; Kappa Coefficient = 85.6260%

| Ground Truth \ Classified (Pixels) | Water | No_Water | Total | Producer Accuracy (%) | Omission Error (%) |
|---|---|---|---|---|---|
| Water | 420,726 | 71,216 | 491,942 | 85.5235 | 14.4765 |
| No_Water | 130,884 | 5,593,218 | 5,724,102 | 97.7135 | 2.2865 |
| Total | 551,610 | 5,664,434 | 6,216,044 | | |
| User Accuracy (%) | 76.2724 | 98.7428 | | | |
| Commission Error (%) | 23.7276 | 1.2572 | | | |

Overall Accuracy = 96.7487%; Kappa Coefficient = 78.8652%

| Ground Truth \ Classified (Pixels) | Water | No_Water | Total | Producer Accuracy (%) | Omission Error (%) |
|---|---|---|---|---|---|
| Water | 429,101 | 62,841 | 491,942 | 87.2259 | 12.7741 |
| No_Water | 45,182 | 5,678,920 | 5,724,102 | 99.2107 | 0.7893 |
| Total | 474,283 | 5,741,761 | 6,216,044 | | |
| User Accuracy (%) | 90.4736 | 98.9055 | | | |
| Commission Error (%) | 9.5264 | 1.0945 | | | |

Overall Accuracy = 98.2622%; Kappa Coefficient = 87.8783%

Appendix D

| Ground Truth \ Classified (Pixels) | Water | No_Water | Total | Producer Accuracy (%) | Omission Error (%) |
|---|---|---|---|---|---|
| Water | 1,562,974 | 182,267 | 1,745,241 | 89.5563 | 10.4437 |
| No_Water | 9274 | 3,905,130 | 3,914,404 | 99.7631 | 0.2369 |
| Total | 1,572,248 | 4,087,397 | 5,659,645 | | |
| User Accuracy (%) | 99.4101 | 95.5408 | | | |
| Commission Error (%) | 0.5899 | 4.4592 | | | |

Overall Accuracy = 96.6157%; Kappa Coefficient = 91.8418%

| Ground Truth \ Classified (Pixels) | Water | No_Water | Total | Producer Accuracy (%) | Omission Error (%) |
|---|---|---|---|---|---|
| Water | 1,526,202 | 219,039 | 1,745,241 | 87.4494 | 12.5506 |
| No_Water | 146,867 | 3,767,537 | 3,914,404 | 96.2480 | 3.7520 |
| Total | 1,673,069 | 3,986,576 | 5,659,645 | | |
| User Accuracy (%) | 91.2217 | 94.5056 | | | |
| Commission Error (%) | 8.7783 | 5.4944 | | | |

Overall Accuracy = 93.5348%; Kappa Coefficient = 84.6675%

| Ground Truth \ Classified (Pixels) | Water | No_Water | Total | Producer Accuracy (%) | Omission Error (%) |
|---|---|---|---|---|---|
| Water | 1,676,387 | 68,854 | 1,745,241 | 96.0548 | 3.9452 |
| No_Water | 17,803 | 3,896,601 | 3,914,404 | 99.5452 | 0.4548 |
| Total | 1,694,190 | 3,965,455 | 5,659,645 | | |
| User Accuracy (%) | 98.9492 | 98.2637 | | | |
| Commission Error (%) | 1.0508 | 1.7363 | | | |

Overall Accuracy = 98.4689%; Kappa Coefficient = 96.3811%

Appendix E

| Ground Truth \ Classified (Pixels) | Water | No_Water | Total | Producer Accuracy (%) | Omission Error (%) |
|---|---|---|---|---|---|
| Water | 2,084,870 | 303,303 | 2,388,173 | 87.2998 | 12.7002 |
| No_Water | 17,198 | 7,422,653 | 7,439,851 | 99.7688 | 0.2312 |
| Total | 2,102,068 | 7,725,956 | 9,828,024 | | |
| User Accuracy (%) | 99.1819 | 96.0742 | | | |
| Commission Error (%) | 0.8181 | 3.9258 | | | |

Overall Accuracy = 96.7389%; Kappa Coefficient = 90.7601%

| Ground Truth \ Classified (Pixels) | Water | No_Water | Total | Producer Accuracy (%) | Omission Error (%) |
|---|---|---|---|---|---|
| Water | 2,114,412 | 273,761 | 2,388,173 | 88.5368 | 11.4632 |
| No_Water | 68,478 | 7,371,373 | 7,439,851 | 99.0796 | 0.9204 |
| Total | 2,182,890 | 7,645,134 | 9,828,024 | | |
| User Accuracy (%) | 96.8630 | 96.4191 | | | |
| Commission Error (%) | 3.1370 | 3.5809 | | | |

Overall Accuracy = 96.5177%; Kappa Coefficient = 90.2501%

| Ground Truth \ Classified (Pixels) | Water | No_Water | Total | Producer Accuracy (%) | Omission Error (%) |
|---|---|---|---|---|---|
| Water | 2,207,784 | 180,389 | 2,388,173 | 92.4466 | 7.5534 |
| No_Water | 41,320 | 7,398,531 | 7,439,851 | 99.4446 | 0.5554 |
| Total | 2,249,104 | 7,578,920 | 9,828,024 | | |
| User Accuracy (%) | 98.1628 | 97.6199 | | | |
| Commission Error (%) | 1.8372 | 2.3801 | | | |

Overall Accuracy = 97.7441%; Kappa Coefficient = 93.7445%

References

1. Stabler, L.B. Management regimes affect woody plant productivity and water use efficiency in an urban desert ecosystem. Urban Ecosyst. 2008, 11, 197–211.

2. McFeeters, S.K. Using the Normalized Difference Water Index (NDWI) within a Geographic Information System to Detect Swimming Pools for Mosquito Abatement: A Practical Approach. Remote Sens. 2013, 5, 3544–3561.

3. Zhai, W.; Huang, C. Fast building damage mapping using a single post-earthquake PolSAR image: A case study of the 2010 Yushu earthquake. Earth Planets Space 2016, 68, 1–12.

4. Raju, P.L.N.; Sarma, K.K.; Barman, D.; Handique, B.K.; Chutia, D.; Kundu, S.S.; Das, R.; Chakraborty, K.; Das, R.; Goswami, J.; et al. Operational remote sensing services in north eastern region of India for natural resources management, early warning for disaster risk reduction and dissemination of information and services. ISPRS Int. Arch. Photogramm. Remote Sens. Spat. Inform. Sci. 2016, XLI-B4, 767–775.

5. Muriithi, F.K. Land use and land cover (LULC) changes in semi-arid sub-watersheds of Laikipia and Athi River basins, Kenya, as influenced by expanding intensive commercial horticulture. Remote Sens. Appl. Soc. Environ. 2016, 3, 73–88.

6. Byun, Y.; Han, Y.; Chae, T. Image fusion-based change detection for flood extent extraction using bi-temporal very high-resolution satellite images. Remote Sens. 2015, 7, 10347–10363.

7. Kang, L.; Zhang, S.; Ding, Y.; He, X. Extraction and preference ordering of multireservoir water supply rules in dry years. Water 2016, 8, 28.

8. Katz, D. Undermining demand management with supply management: Moral hazard in Israeli water policies. Water 2016, 8, 159.

9. Deus, D.; Gloaguen, R. Remote sensing analysis of lake dynamics in semi-arid regions: Implication for water resource management. Lake Manyara, east African Rift, northern Tanzania. Water 2013, 5, 698–727.

10. Zhai, K.; Wu, X.; Qin, Y.; Du, P. Comparison of surface water extraction performances of different classic water indices using OLI and TM imageries in different situations. Geo-Spat. Inform. Sci. 2015, 18, 32–42.

11. Gautam, V.K.; Gaurav, P.K.; Murugan, P.; Annadurai, M. Assessment of surface water dynamics in Bangalore using WRI, NDWI, MNDWI, supervised classification and K-T transformation. Aquat. Procedia 2015, 4, 739–746.

12. Bryant, R.G.; Rainey, M.P. Investigation of flood inundation on playas within the Zone of Chotts, using a time-series of AVHRR. Remote Sens. Environ. 2002, 82, 360–375.

13. Sun, F.; Sun, W.; Chen, J.; Gong, P. Comparison and improvement of methods for identifying water bodies in remotely sensed imagery. Int. J. Remote Sens. 2012, 33, 6854–6875.

14. McFeeters, S.K. The use of the Normalized Difference Water Index (NDWI) in the delineation of open water features. Int. J. Remote Sens. 1996, 17, 1425–1432.

15. Xu, H. Modification of normalized difference water index (NDWI) to enhance open water features in remotely sensed imagery. Int. J. Remote Sens. 2006, 27, 3025–3033.

16. Feyisa, G.L.; Meilby, H.; Fensholt, R.; Proud, S.R. Automated Water Extraction Index: A new technique for surface water mapping using Landsat imagery. Remote Sens. Environ. 2014, 140, 23–35.

17. Rogers, A.S.; Kearney, M.S. Reducing signature variability in unmixing coastal marsh Thematic Mapper scenes using spectral indices. Int. J. Remote Sens. 2004, 25, 2317–2335.

18. Lira, J. Segmentation and morphology of open water bodies from multispectral images. Int. J. Remote Sens. 2006, 27, 4015–4038.

19. Lv, W.; Yu, Q.; Yu, W. Water Extraction in SAR Images Using GLCM and Support Vector Machine. In Proceedings of the IEEE 10th International Conference on Signal Processing, Beijing, China, 24–28 October 2010.

20. Wendleder, A.; Breunig, M.; Martin, K.; Wessel, B.; Roth, A. Water body detection from TanDEM-X data: Concept and first evaluation of an accurate water indication mask. Soc. Sci. Electron. Publ. 2011, 25, 3779–3782.

21. Wendleder, A.; Wessel, B.; Roth, A.; Breunig, M.; Martin, K.; Wagenbrenner, S. TanDEM-X water indication mask: Generation and first evaluation results. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2012, 6, 1–9.

22. Wang, Y.; Ruan, R.; She, Y.; Yan, M. Extraction of water information based on RADARSAT SAR and Landsat ETM+. Procedia Environ. Sci. 2011, 10, 2301–2306.

23. Wang, K.; Trinder, J.C. Applied Watershed Segmentation Algorithm for Water Body Extraction in Airborne SAR Image. In Proceedings of the European Conference on Synthetic Aperture Radar, Aachen, Germany, 2–4 June 2014.

24. Ahtonen, P.; Hallikainen, M. Automatic Detection of Water Bodies from Spaceborne SAR Images. In Proceedings of the 2005 IEEE International Geoscience and Remote Sensing Symposium (IGARSS '05), Seoul, Korea, 25–29 July 2005.

25. Li, B.; Zhang, H.; Xu, F. Water Extraction in High Resolution Remote Sensing Image Based on Hierarchical Spectrum and Shape Features. In Proceedings of the 35th International Symposium on Remote Sensing of Environment (ISRSE35), Beijing, China, 22–26 April 2013.

26. Deng, Y.; Zhang, H.; Wang, C.; Liu, M. Object-Oriented Water Extraction of PolSAR Image Based on Target Decomposition. In Proceedings of the 2015 IEEE 5th Asia-Pacific Conference on Synthetic Aperture Radar (APSAR), Singapore, 1–4 September 2015.

27. Jiang, H.; Feng, M.; Zhu, Y.; Lu, N.; Huang, J.; Xiao, T. An automated method for extracting rivers and lakes from Landsat imagery. Remote Sens. 2014, 6, 5067–5089.

28. Steele, M.K.; Heffernan, J.B. Morphological characteristics of urban water bodies: Mechanisms of change and implications for ecosystem function. Ecol. Appl. 2014, 24, 1070–1084.

29. Yu, X.; Li, B.; Shao, J.; Zhou, J.; Duan, H. Land Cover Change Detection of Ezhou Huarong District Based on Multi-Temporal ZY-3 Satellite Images. In Proceedings of the 2015 IEEE 23rd International Conference on Geoinformatics, Wuhan, China, 19–21 June 2015.

30. Dong, Y.; Chen, W.; Chang, H.; Zhang, Y.; Feng, R.; Meng, L. Assessment of orthoimage and DEM derived from ZY-3 stereo image in Northeastern China. Surv. Rev. 2015, 48, 247–257.

31. Yao, F.; Wang, C.; Dong, D.; Luo, J.; Shen, Z.; Yang, K. High-resolution mapping of urban surface water using ZY-3 multi-spectral imagery. Remote Sens. 2015, 7, 12336–12355.

32. Su, N.; Zhang, Y.; Tian, S.; Yan, Y.; Miao, X. Shadow detection and removal for occluded object information recovery in urban high-resolution panchromatic satellite images. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2016, 9, 1–15.

33. Li, Y.; Gong, P.; Sasagawa, T. Integrated shadow removal based on photogrammetry and image analysis. Int. J. Remote Sens. 2005, 26, 3911–3929.

34. Zhou, W.; Huang, G.; Troy, A.; Cadenasso, M.L. Object-based land cover classification of shaded areas in high spatial resolution imagery of urban areas: A comparison study. Remote Sens. Environ. 2009, 113, 1769–1777.

35. Xie, J.; Zhang, L.; You, J.; Shiu, S. Effective texture classification by texton encoding induced statistical features. Pattern Recognit. 2014, 48, 447–457.

36. Lizarazo, I. SVM-based segmentation and classification of remotely sensed data. Int. J. Remote Sens. 2008, 29, 7277–7283.

37. Huang, X.; Zhang, L. Morphological building/shadow index for building extraction from high-resolution imagery over urban areas. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2012, 5, 161–172.

38. Xie, C.; Huang, X.; Zeng, W.; Fang, X. A novel water index for urban high-resolution eight-band WorldView-2 imagery. Int. J. Digit. Earth 2016, 9, 925–941.

39. Sivanpillai, R.; Miller, S.N. Improvements in mapping water bodies using ASTER data. Ecol. Inform. 2010, 5, 73–78.

40. Nazeer, M.; Nichol, J.E.; Yung, Y.K. Evaluation of atmospheric correction models and Landsat surface reflectance product in an urban coastal environment. Int. J. Remote Sens. 2014, 35, 6271–6291.

41. Pechenizkiy, M.; Tsymbal, A.; Puuronen, S. PCA-based feature transformation for classification: Issues in medical diagnostics. Proc. IEEE Symp. Comput. Based Med. Syst. 2004, 1, 535–540.

42. Heijmans, H.J.A.M.; Ronse, C. The algebraic basis of mathematical morphology I. Dilations and erosions. Comput. Vis. Graph. Image Process. 1990, 50, 245–295.

| City’s Name and Location | Area Coverage (Pixels) | Water Body Type | Topography | Climate | Color Infrared Composite (4/3/2 Band Combination) |
|---|---|---|---|---|---|
| Beijing (39.9° N, 116.3° E) | 1479 × 1550 (77.1 km²) | Rivers, polluted lakes, clear lake | Plain | Warm temperate semi-humid continental monsoon climate | |
| Guangzhou (23° N, 113.6° E) | 2973 × 3495 (349.5 km²) | Rivers, ponds, polluted lakes, clear lake | Basin, plain | Typical south subtropical monsoon climate | |
| Suzhou (31.2° N, 120.5° E) | 2351 × 2644 (209.1 km²) | Rivers, ponds, polluted lakes, clear lake | Basin, plain, hills | Subtropical humid monsoon climate | |
| Wuhan_1 (30.5° N, 114.3° E) | 2245 × 2521 (190.4 km²) | Rivers, ponds, large polluted lakes, large clear lakes | Basin, plain, hills | Subtropical humid monsoon climate | |
| Wuhan_2 (30.5° N, 114.3° E) | 2894 × 3396 (330.6 km²) | Rivers, ponds, large polluted lakes, large clear lakes | Basin, plain, hills | Subtropical humid monsoon climate | |
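The Area Coverage column can be checked from the pixel dimensions. A minimal sketch, assuming each area equals width × height × (5.8 m)², i.e. the ZY-3 nadir multispectral resolution with no resampling; the helper name `coverage_km2` is hypothetical.

```python
def coverage_km2(width_px, height_px, pixel_size_m=5.8):
    """Ground area, in square kilometres, of an image with square pixels of pixel_size_m metres."""
    return width_px * height_px * pixel_size_m ** 2 / 1e6


sites = {"Beijing": (1479, 1550), "Suzhou": (2351, 2644),
         "Wuhan_1": (2245, 2521), "Wuhan_2": (2894, 3396)}
for name, (w, h) in sites.items():
    print(name, round(coverage_km2(w, h), 1))
```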

| Item | Contents |
|---|---|
| Camera model | Nadir panchromatic; forward- and backward-view panchromatic; nadir multispectral |
| Resolution | Nadir panchromatic: 2.1 m; forward- and backward-view (22°) panchromatic: 3.6 m; nadir multispectral: 5.8 m |
| Wavelength | Panchromatic: 450–800 nm; multispectral: Band1 (450–520 nm), Band2 (520–590 nm), Band3 (630–690 nm), Band4 (770–890 nm) |
| Swath width | Nadir panchromatic: 50 km, single scene 2500 km²; nadir multispectral: 52 km, single scene 2704 km² |
| Revisit cycle | 5 days |
| Daily image acquisition | Panchromatic: nearly 1,000,000 km²/day; fusion: nearly 1,000,000 km²/day |

| Test Site | ZY-3 Scene Acquisition Date | Path | Row |
|---|---|---|---|
| Beijing | 28 November 2013 | 002 | 125 |
| Guangzhou | 20 October 2013 | 895 | 167 |
| Suzhou | 17 December 2015 | 882 | 147 |
| Wuhan_1 | 24 July 2016 | 001 | 149 |
| Wuhan_2 | 28 March 2016 | 897 | 148 |

| Method (Threshold) | Beijing | Guangzhou | Suzhou | Wuhan_1 | Wuhan_2 |
|---|---|---|---|---|---|
| AUWEM | T1 = 0, T2 = 0, T3 = 38 | T1 = 0, T2 = 0, T3 = 20 | T1 = 0, T2 = 0, T3 = 25 | T1 = 0, T2 = 0, T3 = 45 | T1 = 0, T2 = 0, T3 = 65 |
| NDWI | T = −0.04 | T = −0.07 | T = 0.07 | T = 0.08 | T = 0.02 |
| MaxLike | - | - | - | - | - |

| Classification Algorithm (Kappa, %) | Beijing (1479 × 1550) | Guangzhou (2973 × 3495) | Suzhou (2351 × 2644) | Wuhan_1 (2245 × 2521) | Wuhan_2 (2894 × 3396) |
|---|---|---|---|---|---|
| AUWEM | 91.6924 | 95.5355 | 87.8783 | 96.3811 | 93.7445 |
| NDWI | 83.0431 | 85.2771 | 78.8652 | 84.6675 | 90.2501 |
| MaxLike | 84.3326 | 91.7285 | 85.6260 | 91.8418 | 90.7601 |

| Site | Method | Commission Error (%) | Omission Error (%) | A (%) |
|---|---|---|---|---|
| Beijing | AUWEM | 1.8032 | 15.8446 | 82.3522 |
| | NDWI | 0.2042 | 29.9648 | 69.8310 |
| | MaxLike | 0.0738 | 29.9747 | 69.9515 |
| Guangzhou | AUWEM | 0.3417 | 5.8892 | 93.7691 |
| | NDWI | 0.1438 | 21.4114 | 78.4448 |
| | MaxLike | 0.0833 | 14.1019 | 85.8149 |
| Suzhou | AUWEM | 2.3455 | 12.5791 | 85.0755 |
| | NDWI | 2.2140 | 13.6943 | 84.0917 |
| | MaxLike | 0.9649 | 14.2155 | 84.8196 |
| Wuhan_1 | AUWEM | 0.6422 | 9.8925 | 89.4653 |
| | NDWI | 0.9452 | 27.8494 | 71.2054 |
| | MaxLike | 0.0211 | 26.3919 | 73.5870 |
| Wuhan_2 | AUWEM | 1.3827 | 19.0375 | 79.5798 |
| | NDWI | 0.4743 | 27.8402 | 71.6855 |
| | MaxLike | 0.0335 | 30.1691 | 69.7974 |

| Image Name | Image Size | Nb | Na | Na − Nb |
|---|---|---|---|---|
| a | 361 × 361 | 11,883 | 15,888 | 4005 |
| b | 327 × 335 | 12,336 | 17,630 | 5294 |
| c | 299 × 319 | 9923 | 12,218 | 2295 |
| d | 677 × 762 | 76,932 | 57,389 | −19,543 |

© 2017 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license ( http://creativecommons.org/licenses/by/4.0/).

Yang, Fan; Guo, Jianhua; Tan, Hai; Wang, Jingxue. Automated Extraction of Urban Water Bodies from ZY‐3 Multi‐Spectral Imagery. Water 2017, 9(2), 144. https://doi.org/10.3390/w9020144