Application of Closed-Circuit Television Image Segmentation for Irrigation Channel Water Level Measurement

Abstract

1. Introduction

2. Materials and Methods

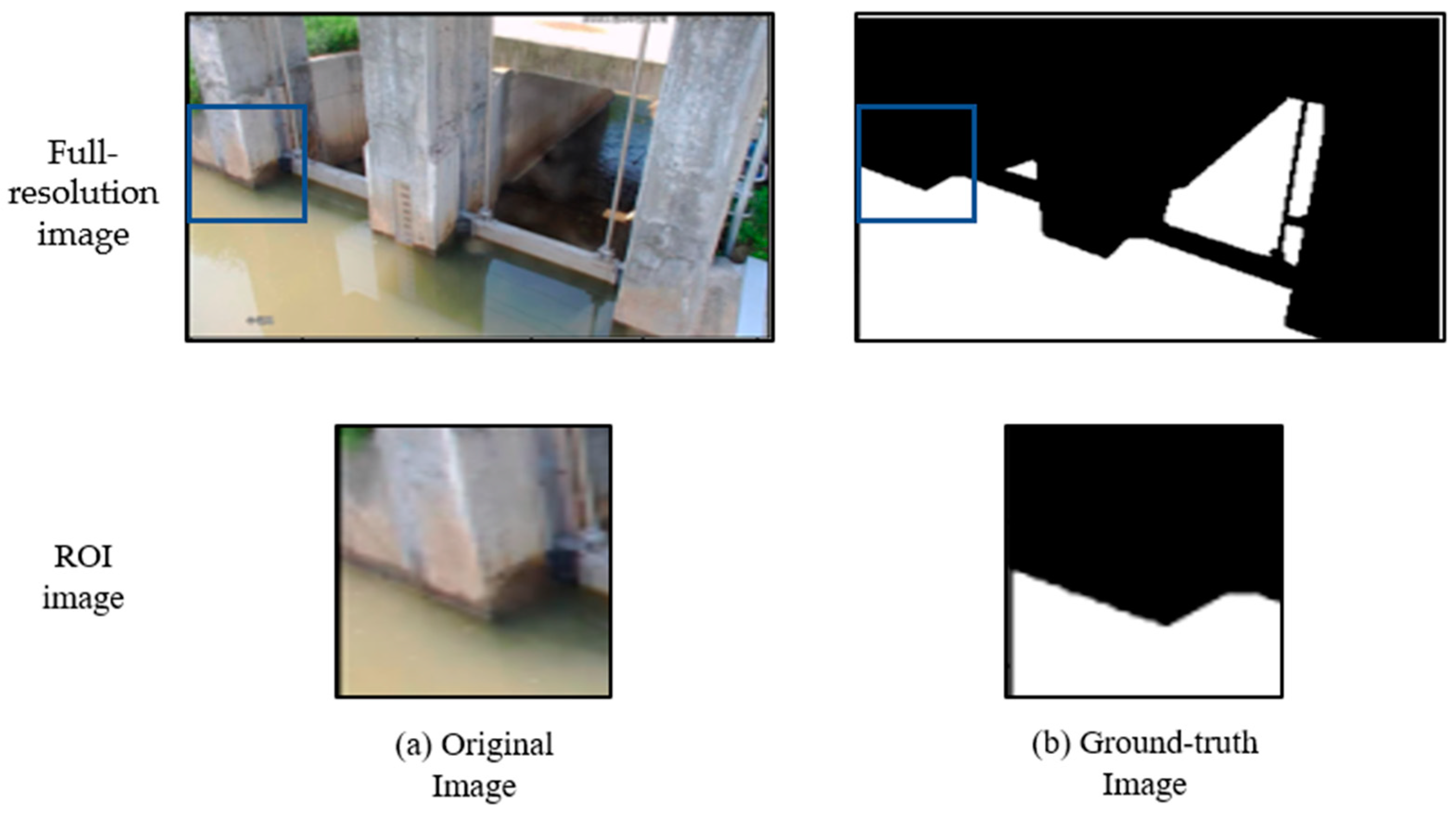

2.1. Dataset

2.2. Hardware and Software

2.3. Segmentation Model Construction (U-Net and Link-Net)

2.4. Water Level Estimation

2.5. Performance Evaluation

3. Results and Discussion

3.1. Semantic Segmentation

3.1.1. Optimal Epoch Decision

3.1.2. Segmented Results with 313 Test Datasets

3.2. Water Level Estimation

3.2.1. Full-Resolution Image and Linear Line for Conversion

3.2.2. ROI Image and Linear Line for Conversion

3.2.3. ROI Image and Quadratic Line for Conversion

3.2.4. Overall Comparisons for Three Approaches

4. Discussion

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References


| Image Type | File Format | Resolution (Full-Resolution) | Resolution (ROI) | Train Images | Validation Images | Test Images | Water Level Range (m) |
|---|---|---|---|---|---|---|---|
| Raw image | PNG | 1280 × 720 × 3 | 256 × 256 × 3 | 1125 | 126 | 313 | 0.63~1.10 |
| Mask image | TIFF | 1280 × 720 × 1 | 256 × 256 × 1 | 1125 | 126 | 313 | 0.63~1.10 |
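The image counts above (1125 training, 126 validation and 313 test images, 1564 in total) are arithmetically consistent with a two-stage random split: 20% of all images held out for testing, then 10% of the remainder held out for validation, with held-out sizes rounded up. The sketch below reproduces those counts under that assumption; the split procedure, the seed and the helper name `two_stage_split` are hypothetical and not the authors' documented method.

```python
import math
import random


def two_stage_split(n_images, test_frac=0.2, val_frac=0.1, seed=42):
    """Hypothetical helper: shuffle image indices, then split into train/val/test.

    Held-out sizes are rounded up (ceil), which is an assumption that happens
    to reproduce the counts in the dataset table.
    """
    indices = list(range(n_images))
    random.Random(seed).shuffle(indices)

    # Stage 1: hold out the test set from all images.
    n_test = math.ceil(n_images * test_frac)
    test, rest = indices[:n_test], indices[n_test:]

    # Stage 2: hold out the validation set from the remaining images.
    n_val = math.ceil(len(rest) * val_frac)
    val, train = rest[:n_val], rest[n_val:]
    return train, val, test


train, val, test = two_stage_split(1125 + 126 + 313)
print(len(train), len(val), len(test))  # 1125 126 313
```

Because each raw image is paired with one mask, the same index lists would select both the PNG frames and their TIFF masks.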

| Encoder Stage | ResNet-18 | ResNet-50 | VGGNet-16 | VGGNet-19 |
|---|---|---|---|---|
| Conv1 | × 1 | × 1 | × 2 | × 2 |
| Conv2 | × 2 | × 3 | × 2 | × 2 |
| Conv3 | × 2 | × 4 | × 3 | × 4 |
| Conv4 | × 2 | × 6 | × 3 | × 4 |
| Conv5 | × 2 | × 3 | × 3 | × 4 |

Encoder block architecture (U-Net and Link-Net): Convolution → BatchNorm → ReLU activation → Zero padding
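The stage multipliers in the encoder table also account for the backbone names. Assuming the standard designs (a ResNet-18 basic block holds two convolutions, a ResNet-50 bottleneck block holds three, each VGGNet multiplier counts one plain convolution, and the original ImageNet classifiers add one fully connected layer to ResNet and three to VGGNet), the weighted layer counts below recover 18, 50, 16 and 19. The classifier layers are normally discarded when a backbone serves as a segmentation encoder; the dictionaries and function name are illustrative.

```python
# Stage multipliers copied from the encoder table (Conv1 to Conv5).
ENCODER_STAGES = {
    "ResNet-18": [1, 2, 2, 2, 2],
    "ResNet-50": [1, 3, 4, 6, 3],
    "VGGNet-16": [2, 2, 3, 3, 3],
    "VGGNet-19": [2, 2, 4, 4, 4],
}

# Assumed from the standard architectures, not from the table:
# convolutions per repeated unit in Conv2 to Conv5 (basic block = 2,
# bottleneck block = 3, VGG unit = 1 plain convolution) and the number of
# fully connected layers in the original ImageNet classifier head.
CONVS_PER_UNIT = {"ResNet-18": 2, "ResNet-50": 3, "VGGNet-16": 1, "VGGNet-19": 1}
FC_LAYERS = {"ResNet-18": 1, "ResNet-50": 1, "VGGNet-16": 3, "VGGNet-19": 3}


def weighted_depth(name: str) -> int:
    """Count convolution plus fully connected layers for one backbone.

    Conv1 is counted as plain convolutions (the 7x7 stem for ResNets,
    a run of 3x3 convolutions for VGGNets); 1x1 projection shortcuts in
    ResNets are excluded, following the usual naming convention.
    """
    conv1, *later_stages = ENCODER_STAGES[name]
    conv_layers = conv1 + CONVS_PER_UNIT[name] * sum(later_stages)
    return conv_layers + FC_LAYERS[name]


for backbone in ENCODER_STAGES:
    print(backbone, weighted_depth(backbone))
# ResNet-18 18 / ResNet-50 50 / VGGNet-16 16 / VGGNet-19 19
```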

| Decoder Stage | U-Net (ResNet-18, ResNet-50, VGGNet-16, VGGNet-19) | Link-Net (ResNet-18) | Link-Net (ResNet-50) | Link-Net (VGGNet-16 and VGGNet-19) |
|---|---|---|---|---|
| Conv1 | × 2 | × 1, × 1, × 1 | × 1, × 1, × 1 | × 1, × 1, × 1 |
| Conv2 | × 2 | × 1, × 1, × 1 | × 1, × 1, × 1 | × 1, × 1, × 1 |
| Conv3 | × 2 | × 1, × 1, × 1 | × 1, × 1, × 1 | × 1, × 1, × 1 |
| Conv4 | × 2 | × 1, × 1, × 1 | × 1, × 1, × 1 | × 1, × 1, × 1 |
| Conv5 | × 2 | × 1, × 1, × 1 | × 1, × 1, × 1 | × 1, × 1, × 1 |

Decoder block architecture (U-Net): Up-sampling → Concatenation → × 2

Decoder block architecture (Link-Net): × 1 → Up-sampling → × 2 → Add
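The two decoder block architectures differ mainly in how the skip connection merges encoder and decoder features: U-Net concatenates them, so channel counts add up, while Link-Net adds them element-wise, so the shapes must match and the channel count stays the same. The shape-only sketch below illustrates that difference; the feature-map shapes and the helper names `upsample2x`, `unet_merge` and `linknet_merge` are hypothetical.

```python
from typing import Tuple

Shape = Tuple[int, int, int]  # (height, width, channels), channels-last


def upsample2x(shape: Shape) -> Shape:
    """Double the spatial size, as an up-sampling layer would."""
    h, w, c = shape
    return (2 * h, 2 * w, c)


def unet_merge(decoder: Shape, skip: Shape) -> Shape:
    """U-Net style: concatenate along channels; spatial sizes must agree."""
    if decoder[:2] != skip[:2]:
        raise ValueError("spatial sizes differ")
    return (decoder[0], decoder[1], decoder[2] + skip[2])


def linknet_merge(decoder: Shape, skip: Shape) -> Shape:
    """Link-Net style: element-wise addition; full shapes must agree."""
    if decoder != skip:
        raise ValueError("shapes differ")
    return decoder


deep = (16, 16, 256)  # hypothetical deeper decoder feature map
skip = (32, 32, 256)  # hypothetical encoder feature map at the next stage
up = upsample2x(deep)
print(unet_merge(up, skip))     # (32, 32, 512)
print(linknet_merge(up, skip))  # (32, 32, 256)
```

Because addition does not widen the feature maps, the convolutions after a Link-Net merge see fewer input channels, which is one plausible contributor to Link-Net's smaller parameter counts.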

| Model | Optimizer | Batch Size | Loss Function | Evaluation Metric |
|---|---|---|---|---|
| U-Net | Adam | 8 | Binary cross-entropy | F1 score |
| Link-Net | Adam | 8 | Binary cross-entropy | F1 score |
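Binary cross-entropy, the loss listed for both models, averages the per-pixel log loss between the 0/1 water mask and the predicted water probability. The plain-Python sketch below illustrates the formula only; it is not the framework implementation used in the study, and the clipping constant `eps` and the assumption that a final partial batch is kept when counting steps per epoch are choices made here.

```python
import math


def binary_cross_entropy(y_true, y_prob, eps=1e-7):
    """Mean binary cross-entropy over pixels.

    y_true: 0/1 labels (1 = water); y_prob: predicted water probabilities.
    Probabilities are clipped to [eps, 1 - eps] to avoid log(0).
    """
    if len(y_true) != len(y_prob):
        raise ValueError("length mismatch")
    total = 0.0
    for t, p in zip(y_true, y_prob):
        p = min(max(p, eps), 1.0 - eps)
        total += -(t * math.log(p) + (1 - t) * math.log(1.0 - p))
    return total / len(y_true)


# Mini-batches per epoch for 1125 training images at batch size 8,
# assuming the last partial batch is kept as one step.
steps_per_epoch = math.ceil(1125 / 8)
print(steps_per_epoch)  # 141
print(binary_cross_entropy([1, 0, 1, 0], [0.9, 0.1, 0.8, 0.3]))
```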

| Metric | Description | Formula | Value Range | Unit |
|---|---|---|---|---|
| True Positive | Number of correctly identified water pixels | TP | 0~No. of pixels | ea |
| True Negative | Number of correctly identified non-water pixels | TN | 0~No. of pixels | ea |
| False Positive | Number of pixels incorrectly identified as water | FP | 0~No. of pixels | ea |
| False Negative | Number of pixels incorrectly identified as non-water | FN | 0~No. of pixels | ea |
| Precision (P) | Proportion of pixels detected as water that are actually water | $P = \frac{TP}{TP + FP}$ | 0~1 | - |
| Recall (R) | Proportion of ground-truth water pixels that are detected | $R = \frac{TP}{TP + FN}$ | 0~1 | - |
| F1 Score | Harmonic mean of precision and recall | $F1 = \frac{2PR}{P + R}$ | 0~1 | - |
| Water level values | Observed water level at time i | | 0~water level | m |
| Water level values | Estimated water level at time i | | 0~water level | m |
| Water level values | Average of observed water levels | | 0~water level | m |
| R² | Coefficient of determination | | 0~1 | - |
| MAE | Mean absolute error | | 0~∞ | m |
| RMSE | Root mean squared error | | 0~∞ | m |
| ME | Maximum error | - | 0~∞ | m |
| NE>0.05 | Number of gross errors (absolute error > 0.05 m) | - | 0~313 | ea |
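The pixel-level and water-level metrics defined above can be computed as in the following sketch: precision, recall and F1 from TP, FP and FN, then MAE, RMSE, maximum error and gross-error counts (NE) from the per-image absolute errors. The function names, the flat 0/1 mask representation and the sample values are hypothetical.

```python
import math


def pixel_scores(mask_true, mask_pred):
    """Precision, recall and F1 for two flat 0/1 masks (1 = water)."""
    pairs = list(zip(mask_true, mask_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


def level_errors(observed, estimated, thresholds=(0.05, 0.03, 0.02, 0.01)):
    """MAE, RMSE and maximum error (m), plus counts of errors above each threshold."""
    errors = [abs(o - e) for o, e in zip(observed, estimated)]
    n = len(errors)
    mae = sum(errors) / n
    rmse = math.sqrt(sum(err ** 2 for err in errors) / n)
    max_error = max(errors)
    gross = {t: sum(1 for err in errors if err > t) for t in thresholds}
    return mae, rmse, max_error, gross


# Hypothetical 6-pixel masks and four image-level water levels (m).
print(pixel_scores([1, 1, 1, 0, 0, 0], [1, 1, 0, 1, 0, 0]))
print(level_errors([0.70, 0.80, 0.90, 1.00], [0.715, 0.775, 0.96, 1.004]))
```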

| Quantity | Segmentation Model | ResNet-18 | ResNet-50 | VGGNet-16 | VGGNet-19 |
|---|---|---|---|---|---|
| Number of parameters | U-Net | 14,340,570 | 32,561,114 | 23,752,273 | 29,061,969 |
| Number of parameters | Link-Net | 11,521,690 | 28,783,386 | 20,325,137 | 25,634,833 |
| Time to train (h) | U-Net | 146 | 261 | 221 | 248 |
| Time to train (h) | Link-Net | 144 | 256 | 226 | 252 |
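The reported counts can be used to quantify how much lighter Link-Net is than U-Net for each backbone. The arithmetic below uses only the table's values: Link-Net has about 2.8 million fewer parameters with ResNet-18 (19.7%) and 3.4 to 3.8 million fewer with the other backbones. The difference is identical for VGGNet-16 and VGGNet-19 (3,427,136), which is consistent with both backbones sharing the same decoder configuration and differing only in encoder depth.

```python
# Parameter counts copied from the table above (backbone -> count).
PARAMS = {
    "U-Net": {"ResNet-18": 14_340_570, "ResNet-50": 32_561_114,
              "VGGNet-16": 23_752_273, "VGGNet-19": 29_061_969},
    "Link-Net": {"ResNet-18": 11_521_690, "ResNet-50": 28_783_386,
                 "VGGNet-16": 20_325_137, "VGGNet-19": 25_634_833},
}

savings = {}
for backbone, unet_count in PARAMS["U-Net"].items():
    # Absolute and relative reduction when swapping U-Net for Link-Net.
    diff = unet_count - PARAMS["Link-Net"][backbone]
    savings[backbone] = diff
    print(f"{backbone}: {diff:,} fewer parameters ({100 * diff / unet_count:.1f}%)")
```

Training times, by contrast, stay within 5 h of each other for the two architectures on every backbone.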

| Metric | Segmentation Model | ResNet-18 | ResNet-50 | VGGNet-16 | VGGNet-19 |
|---|---|---|---|---|---|
| Train loss | U-Net | 0.00242 | 0.00293 | 0.00238 | 0.00389 |
| Train loss | Link-Net | 0.00116 | 0.00357 | 0.00205 | 0.00270 |
| Validation loss | U-Net | 0.00517 | 0.00553 | 0.00866 | 0.00865 |
| Validation loss | Link-Net | 0.00572 | 0.00521 | 0.00771 | 0.00969 |
| Epoch | U-Net | 57 | 55 | 57 | 53 |
| Epoch | Link-Net | 76 | 72 | 72 | 77 |
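The Epoch row gives the epoch selected for each model and backbone, but the table does not state the selection rule. A common choice, assumed in the sketch below, is to keep the epoch with the lowest validation loss, optionally stopping early after `patience` epochs without improvement. The function name and the loss curve are hypothetical.

```python
def best_epoch(val_losses, patience=None):
    """Return the 1-based epoch with the lowest validation loss.

    If patience is given, scanning stops once the loss has failed to
    improve for `patience` consecutive epochs (early stopping).
    """
    best_loss = float("inf")
    best = 0
    waited = 0
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best_loss:
            best_loss, best, waited = loss, epoch, 0
        else:
            waited += 1
            if patience is not None and waited >= patience:
                break
    return best


# Hypothetical validation-loss curve with a temporary plateau.
curve = [0.05, 0.02, 0.01, 0.008, 0.009, 0.0095, 0.007, 0.0075, 0.008, 0.0085]
print(best_epoch(curve))              # 7
print(best_epoch(curve, patience=2))  # 4
```

The second call shows the trade-off of a short patience: training stops at a plateau and misses the later, lower minimum.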

| Dataset | Image and Conversion Line | R² | MAE (m) | RMSE (m) | Maximum Error (m) | NE>0.05 | NE>0.03 | NE>0.02 | NE>0.01 |
|---|---|---|---|---|---|---|---|---|---|
| Randomly selected | Full-resolution with linear | 0.84 | 0.03 | 0.06 | 0.25 | 36 | 67 | 141 | 286 |
| Randomly selected | ROI with linear | 0.94 | 0.05 | 0.06 | 0.13 | 1 | 208 | 222 | 236 |
| Randomly selected | ROI with quadratic | 0.99 | 0.01 | 0.01 | 0.06 | 1 | 7 | 33 | 136 |
| Constant 10 min interval | Full-resolution with linear | 0.05 | 0.04 | 0.05 | 0.10 | 39 | 83 | 102 | 111 |
| Constant 10 min interval | ROI with linear | 0.86 | 0.05 | 0.05 | 0.08 | 59 | 114 | 129 | 135 |
| Constant 10 min interval | ROI with quadratic | 0.86 | 0.01 | 0.01 | 0.04 | 0 | 9 | 29 | 65 |
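The three approaches differ in the image used (full-resolution or ROI) and in the conversion curve fitted between a segmentation-derived quantity and the observed water level. The sketch below fits first- and second-degree polynomials by ordinary least squares and compares their RMSE. It assumes a single scalar input per image (here a hypothetical fraction of water pixels in the ROI) and uses noise-free synthetic levels; the paper's exact input variable and fitting routine are not reproduced.

```python
import math


def polyfit(x, y, degree):
    """Least-squares polynomial fit; returns coefficients [c0, c1, ...].

    Solves the normal equations (A^T A) c = A^T y by Gaussian elimination
    with partial pivoting; adequate for low degrees such as 1 or 2.
    """
    m = degree + 1
    ata = [[sum(xi ** (i + j) for xi in x) for j in range(m)] for i in range(m)]
    aty = [sum(yi * xi ** i for xi, yi in zip(x, y)) for i in range(m)]
    aug = [row[:] + [rhs] for row, rhs in zip(ata, aty)]  # augmented matrix
    for col in range(m):
        pivot = max(range(col, m), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, m):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, m + 1):
                aug[r][c] -= factor * aug[col][c]
    coeffs = [0.0] * m
    for i in reversed(range(m)):  # back substitution
        s = aug[i][m] - sum(aug[i][j] * coeffs[j] for j in range(i + 1, m))
        coeffs[i] = s / aug[i][i]
    return coeffs


def predict(coeffs, xi):
    """Evaluate c0 + c1*x + c2*x^2 + ..."""
    return sum(c * xi ** k for k, c in enumerate(coeffs))


def rmse(coeffs, x, y):
    """Root mean squared error of the fitted curve on (x, y)."""
    return math.sqrt(sum((predict(coeffs, xi) - yi) ** 2 for xi, yi in zip(x, y)) / len(x))


# Synthetic data: hypothetical water-pixel fraction vs. water level (m).
x = [i / 10 for i in range(1, 10)]
y = [0.6 + 0.3 * xi + 0.2 * xi ** 2 for xi in x]
for degree in (1, 2):
    coeffs = polyfit(x, y, degree)
    print(degree, [round(c, 4) for c in coeffs], f"RMSE={rmse(coeffs, x, y):.4f} m")
```

When the relation between the segmented quantity and the water level is curved (for example through channel geometry or camera perspective), a quadratic conversion can absorb that curvature where a straight line cannot; this is one plausible reading of the lower errors in the ROI-with-quadratic rows, not a conclusion the sketch establishes.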


© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Kim, K.; Choi, J.-Y. Application of Closed-Circuit Television Image Segmentation for Irrigation Channel Water Level Measurement. Water 2023, 15, 3308. https://doi.org/10.3390/w15183308
