A Review of Neural Networks for Air Temperature Forecasting

Abstract

1. Introduction

2. ANN Inputs

3. Artificial Neural Networks (ANNs)

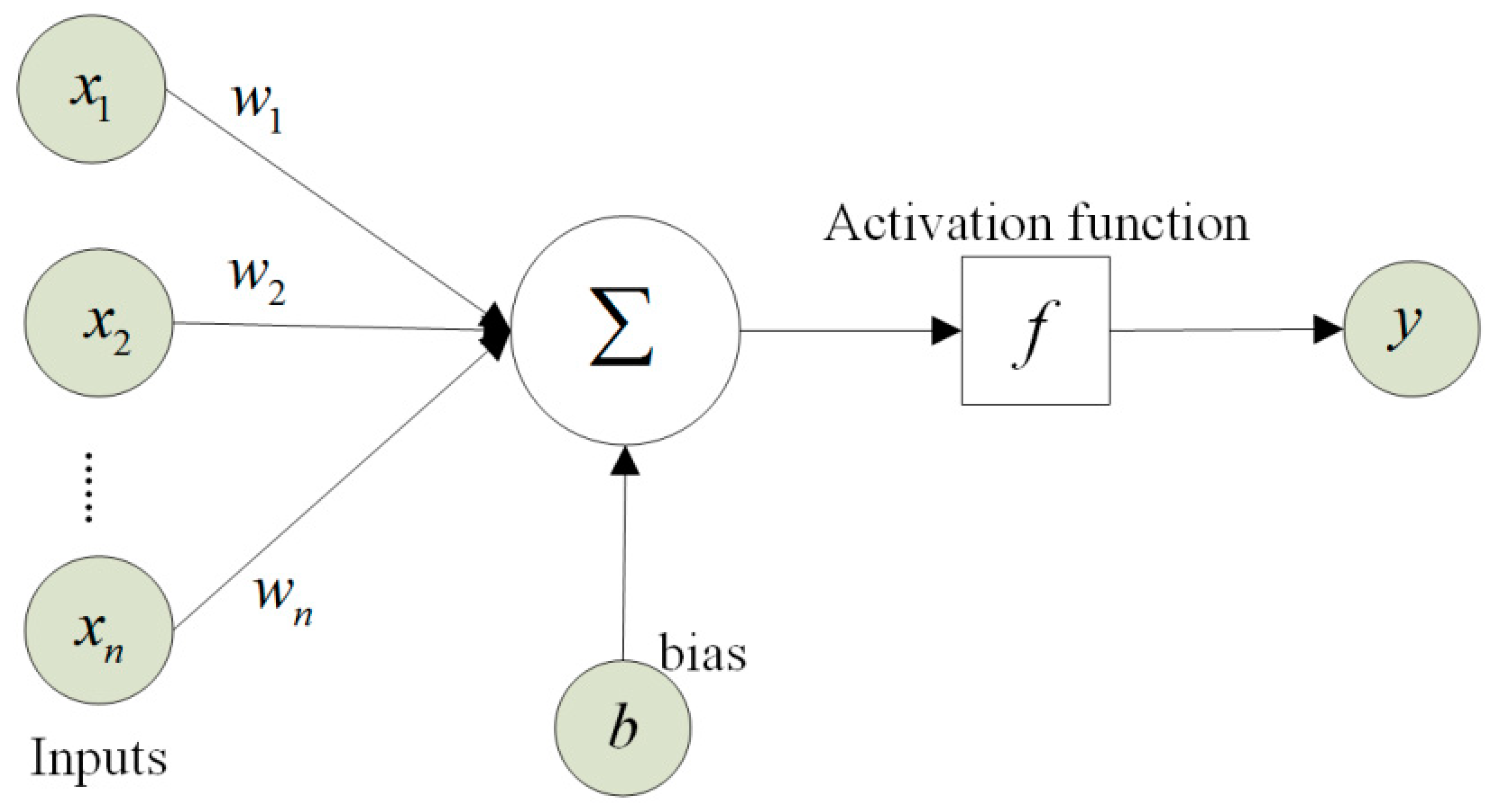

3.1. Multilayer Perceptron (MLP)

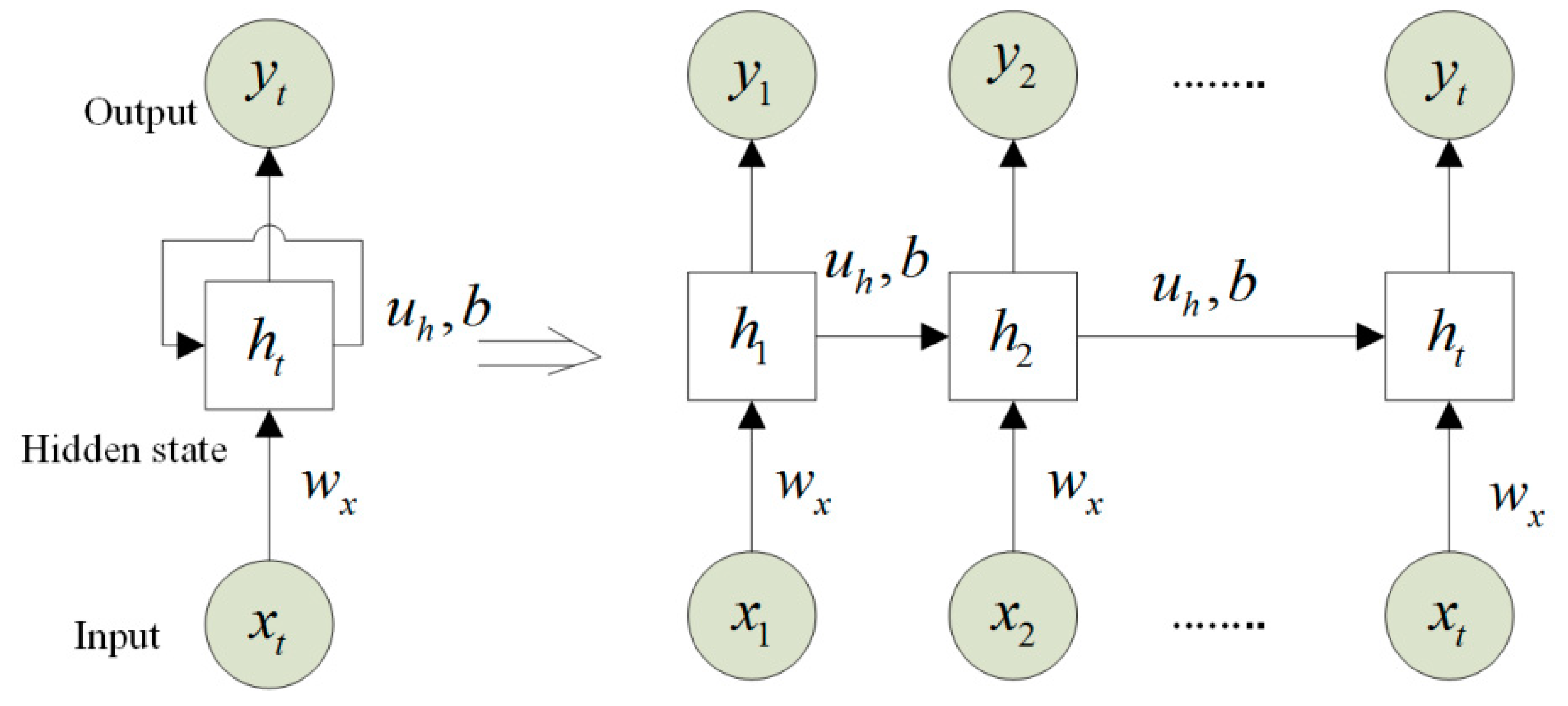

3.2. Recurrent Neural Network (RNN)

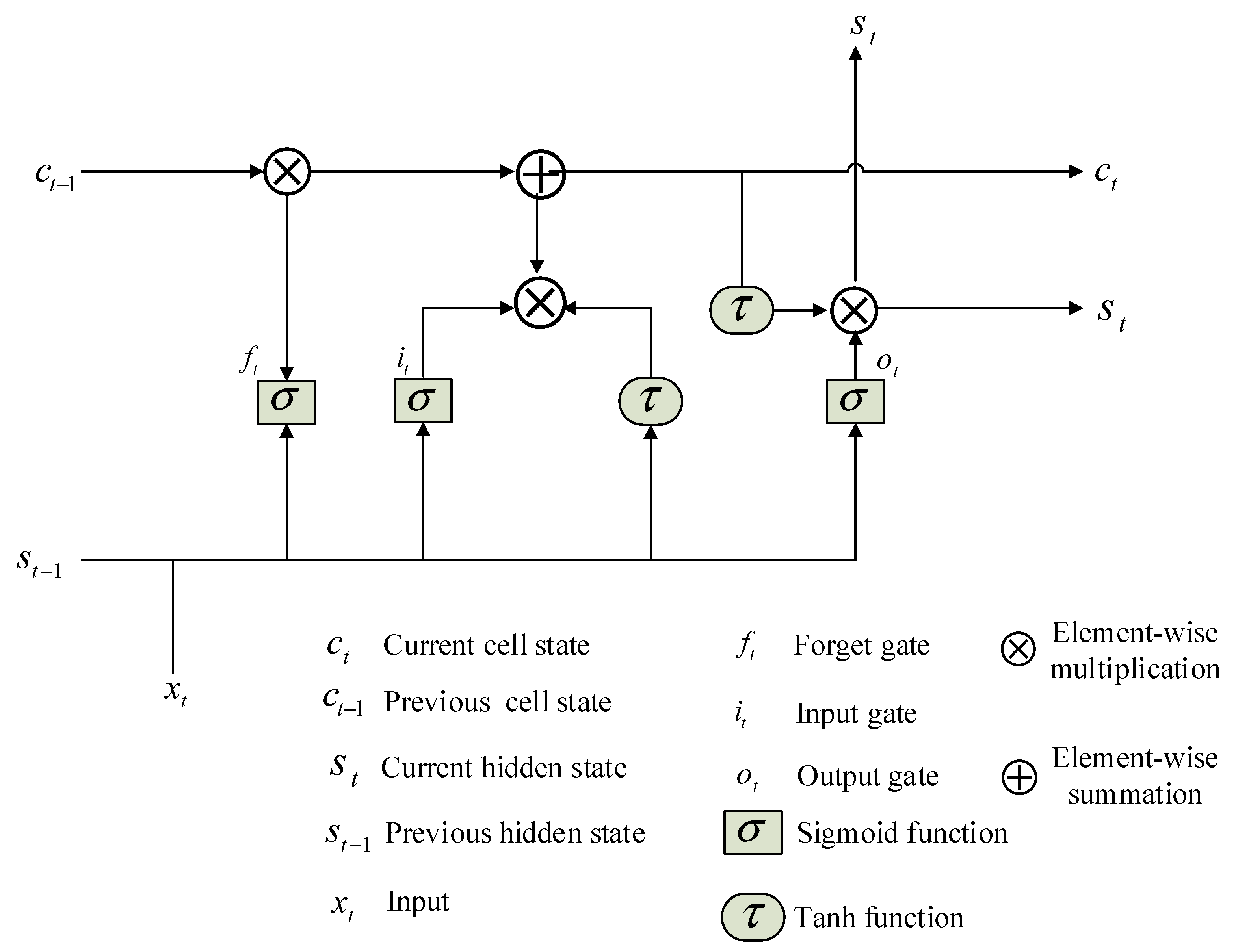

3.3. Long Short-Term Memory (LSTM)

4. Related Work

4.1. Univariate Models

4.2. Multivariate Models

5. Discussion

6. Conclusions and Future Research Work

- Many optimization algorithms (e.g., particle swarm optimization (PSO), harmony search, and genetic programming) have not yet been combined with neural networks for air temperature forecasting. Such meta-learning approaches can be used in future work to forecast air temperature more accurately. Because a heuristic algorithm can optimize the hyperparameters of an ANN, combining the two can strengthen the robustness of the model.

- The effect of relevant meteorological variables (e.g., maximum, minimum, and mean temperature, rainfall, and relative humidity) and geographical variables (e.g., latitude, longitude, and elevation) on the accuracy of air temperature prediction should be analyzed. Feature selection techniques, such as recursive feature elimination, random forest, and the correlation coefficient, should then be employed to select the most useful input variables for air temperature forecasting.

- The performance of ANN-based models should be compared with that of other soft computing and statistical approaches, such as support vector machines (SVMs), the autoregressive moving average (ARMA) model, and extreme learning machines, to determine the best approach for forecasting air temperature under different hydrologic conditions and over different time horizons.

- Long-term air temperature prediction plays an important role in human life and in sectors such as energy consumption and agriculture. Hence, it should be investigated in more depth in future studies using RNN and LSTM models. Their performance should also be compared with that of medium- and long-range models, such as the European Centre for Medium-Range Weather Forecasts (ECMWF) model and global weather forecast models [57].
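The correlation-coefficient screening mentioned in the feature selection recommendation above can be sketched in a few lines. This is a minimal illustration, not a method taken from any of the reviewed studies: the function names `pearson` and `rank_features`, the 0.5 threshold, and the toy data are all assumptions made for the example.

```python
import math
from typing import Dict, List, Sequence, Tuple


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equal-length series."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("series must have the same length (at least 2)")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0  # a constant series carries no linear information
    return cov / math.sqrt(var_x * var_y)


def rank_features(
    candidates: Dict[str, Sequence[float]],
    target: Sequence[float],
    threshold: float = 0.5,  # hypothetical cut-off, chosen only for this example
) -> List[Tuple[str, float]]:
    """Keep candidates with |r| >= threshold, strongest correlation first."""
    scored = [(name, pearson(values, target)) for name, values in candidates.items()]
    kept = [(name, r) for name, r in scored if abs(r) >= threshold]
    return sorted(kept, key=lambda item: abs(item[1]), reverse=True)


# Toy data invented for illustration (not from any reviewed study).
target = [10.0, 12.0, 14.0, 16.0, 18.0]  # air temperature to be predicted
candidates = {
    "prev_day_temp": [9.0, 11.0, 13.0, 15.0, 17.0],
    "relative_humidity": [80.0, 70.0, 65.0, 50.0, 45.0],
    "elevation": [100.0, 100.0, 100.0, 100.0, 100.0],  # constant at one station
}
selected = rank_features(candidates, target)
for name, r in selected:
    print(f"{name}: r = {r:+.3f}")
```

A linear correlation filter of this kind only detects linear dependence, so it is best read as a first screening step; recursive feature elimination or random forest importance, which the recommendation also names, can capture nonlinear effects that this sketch would miss.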

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Pachauri, R.K.; Allen, M.R.; Barros, V.R.; Broome, J.; Cramer, W.; Christ, R.; Church, J.A.; Clarke, L.; Dahe, Q.; Dasgupta, P.; et al. Climate Change 2014: Synthesis Report. Contribution of Working Groups I, II and III to the Fifth Assessment Report of the Intergovernmental Panel on Climate Change. Available online: https://epic.awi.de/id/eprint/37530/ (accessed on 11 February 2021).

- Tajfar, E.; Bateni, S.M.; Lakshmi, V.; Ek, M. Estimation of surface heat fluxes via variational assimilation of land surface temperature, air temperature and specific humidity into a coupled land surface-atmospheric boundary layer model. J. Hydrol. 2020, 583, 124577.

- Tajfar, E.; Bateni, S.M.; Margulis, S.A.; Gentine, P.; Auligne, T. Estimation of turbulent heat fluxes via assimilation of air temperature and specific humidity into an atmospheric boundary layer model. J. Hydrometeorol. 2020, 21, 205–225.

- Valipour, M.; Bateni, S.M.; Gholami Sefidkouhi, M.A.; Raeini-Sarjaz, M.; Singh, V.P. Complexity of Forces Driving Trend of Reference Evapotranspiration and Signals of Climate Change. Atmosphere (Basel) 2020, 11, 1081.

- Lan, L.; Lian, Z.; Pan, L. The effects of air temperature on office workers’ well-being, workload and productivity-evaluated with subjective ratings. Appl. Ergon. 2010, 42, 29–36.

- Schulte, P.A.; Bhattacharya, A.; Butler, C.R.; Chun, H.K.; Jacklitsch, B.; Jacobs, T.; Kiefer, M.; Lincoln, J.; Pendergrass, S.; Shire, J.; et al. Advancing the framework for considering the effects of climate change on worker safety and health. J. Occup. Environ. Hyg. 2016, 13, 847–865.

- Sharma, N.; Sharma, P.; Irwin, D.; Shenoy, P. Predicting solar generation from weather forecasts using machine learning. In Proceedings of the 2011 IEEE International Conference on Smart Grid Communications, Brussels, Belgium, 17–20 October 2011; pp. 528–533.

- Sardans, J.; Peñuelas, J.; Estiarte, M. Warming and drought alter soil phosphatase activity and soil P availability in a Mediterranean shrubland. Plant Soil 2006, 289, 227–238.

- Green, M.A. General temperature dependence of solar cell performance and implications for device modelling. Prog. Photovoltaics Res. Appl. 2003, 11, 333–340.

- Tang, C.; Crosby, B.T.; Wheaton, J.M.; Piechota, T.C. Assessing streamflow sensitivity to temperature increases in the Salmon River Basin, Idaho. Glob. Planet. Change 2012, 88–89, 32–44.

- Jovic, S.; Nedeljkovic, B.; Golubovic, Z.; Kostic, N. Evolutionary algorithm for reference evapotranspiration analysis. Comput. Electron. Agric. 2018, 150, 1–4.

- Marzo, A.; Trigo, M.; Alonso-Montesinos, J.; Martínez-Durbán, M.; López, G.; Ferrada, P.; Fuentealba, E.; Cortés, M.; Batlles, F.J. Daily global solar radiation estimation in desert areas using daily extreme temperatures and extraterrestrial radiation. Renew. Energy 2017, 113, 303–311.

- Smith, D.M.; Cusack, S.; Colman, A.W.; Folland, C.K.; Harris, G.R.; Murphy, J.M. Improved Surface Temperature Prediction for the Coming Decade from a Global Climate Model. Science 2007, 317, 796–799.

- Yang, T.; Sun, F.; Gentine, P.; Liu, W.; Wang, H.; Yin, J.; Du, M.; Liu, C. Evaluation and machine learning improvement of global hydrological model-based flood simulations. Environ. Res. Lett. 2019, 14.

- Lee, J.; Kim, C.G.; Lee, J.E.; Kim, N.W.; Kim, H. Application of artificial neural networks to rainfall forecasting in the Geum River Basin, Korea. Water (Switzerland) 2018, 10, 1448.

- Zou, Q.; Xiong, Q.; Li, Q.; Yi, H.; Yu, Y.; Wu, C. A water quality prediction method based on the multi-time scale bidirectional long short-term memory network. Environ. Sci. Pollut. Res. 2020, 27, 16853–16864.

- Altan Dombayci, Ö.; Gölcü, M. Daily means ambient temperature prediction using artificial neural network method: A case study of Turkey. Renew. Energy 2009, 34, 1158–1161.

- Cifuentes, J.; Marulanda, G.; Bello, A.; Reneses, J. Air temperature forecasting using machine learning techniques: A review. Energies 2020, 13, 4215.

- Chattopadhyay, S.; Jhajharia, D.; Chattopadhyay, G. Univariate modelling of monthly maximum temperature time series over northeast India: Neural network versus Yule-Walker equation based approach. Meteorol. Appl. 2011, 18, 70–82.

- Ustaoglu, B.; Cigizoglu, H.K.; Karaca, M. Forecast of daily mean, maximum and minimum temperature time series by three artificial neural network methods. Meteorol. Appl. 2008, 15, 431–445.

- Fahimi Nezhad, E.; Fallah Ghalhari, G.; Bayatani, F. Forecasting Maximum Seasonal Temperature Using Artificial Neural Networks “Tehran Case Study”. Asia-Pacific J. Atmos. Sci. 2019, 55, 145–153.

- Smith, B.A.; McClendon, R.W.; Hoogenboom, G. Improving Air Temperature Prediction with Artificial Neural Networks. Int. J. Comput. Inf. Eng. 2007, 1, 3159.

- Zhang, Z.; Dong, Y.; Yuan, Y. Temperature Forecasting via Convolutional Recurrent Neural Networks Based on Time-Series Data. Complexity 2020, 2020.

- Kreuzer, D.; Munz, M.; Schlüter, S. Short-term temperature forecasts using a convolutional neural network: An application to different weather stations in Germany. Mach. Learn. with Appl. 2020, 2, 100007.

- Lee, S.; Lee, Y.S.; Son, Y. Forecasting daily temperatures with different time interval data using deep neural networks. Appl. Sci. 2020, 10, 1609.

- Salcedo-Sanz, S.; Deo, R.C.; Carro-Calvo, L.; Saavedra-Moreno, B. Monthly prediction of air temperature in Australia and New Zealand with machine learning algorithms. Theor. Appl. Climatol. 2016, 125, 13–25.

- Rajendra, P.; Murthy, K.V.N.; Subbarao, A.; Boadh, R. Use of ANN models in the prediction of meteorological data. Model. Earth Syst. Environ. 2019, 5, 1051–1058.

- Bilgili, M.; Sahin, B. Prediction of long-term monthly temperature and rainfall in Turkey. Energy Sources, Part A Recover. Util. Environ. Eff. 2010, 32, 60–71.

- De Jesús, O.; Hagan, M.T. Backpropagation Through Time for a General Class of Recurrent Network. In Proceedings of the International Joint Conference on Neural Networks (Cat. No.01CH37222), Washington, DC, USA, 15–19 July 2001; pp. 2638–2643.

- Hochreiter, S. The vanishing gradient problem during learning. Int. J. Uncertainty, Fuzziness Knowledge-Based Syst. 1998, 6, 107–116.

- Hochreiter, S.; Schmidhuber, J. Long Short-Term Memory. Neural Comput. 1997, 9, 1735–1780.

- Tran, T.K.T.; Lee, T.; Shin, J.-Y.; Kim, J.-S.; Kamruzzaman, M. Deep Learning-Based Maximum Temperature Forecasting Assisted with Meta-Learning for Hyperparameter Optimization. Atmosphere (Basel) 2020, 11, 487.

- Abhishek, K.; Singh, M.P.; Ghosh, S.; Anand, A. Weather Forecasting Model using Artificial Neural Network. Procedia Technol. 2012, 4, 311–318.

- Kumar, P.; Kashyap, P.; Ali, J. Temperature Forecasting using Artificial Neural Networks (ANN). J. Hill Agric. 2013.

- Tran, T.T.K.; Lee, T.; Kim, J.S. Increasing neurons or deepening layers in forecasting maximum temperature time series? Atmosphere (Basel) 2020, 11, 1072.

- Li, C.; Zhang, Y.; Zhao, G. Deep Learning with Long Short-Term Memory Networks for Air Temperature Predictions. In Proceedings of the 2019 International Conference on Artificial Intelligence and Advanced Manufacturing (AIAM), Dublin, Ireland, 16–18 October 2019; pp. 243–249.

- Afzali, M.; Afzali, A.; Zahedi, G. The Potential of Artificial Neural Network Technique in Daily and Monthly Ambient Air Temperature Prediction. Int. J. Environ. Sci. Dev. 2012, 3, 33–38.

- De, S.S.; Debnath, A. Artificial Neural Network Based Prediction of Maximum and Minimum Temperature in the Summer Monsoon Months over India. Appl. Phys. Res. 2009, 1, 37–44.

- Smith, B.A.; Hoogenboom, G.; McClendon, R.W. Artificial neural networks for automated year-round temperature prediction. Comput. Electron. Agric. 2009, 68, 52–61.

- Kisi, O.; Shiri, J. Prediction of long-term monthly air temperature using geographical inputs. Int. J. Climatol. 2014, 34, 179–186.

- Kisi, O.; Sanikhani, H. Modelling long-term monthly temperatures by several data-driven methods using geographical inputs. Int. J. Climatol. 2015, 35, 3834–3846.

- Şahin, M. Modelling of air temperature using remote sensing and artificial neural network in Turkey. Adv. Sp. Res. 2012, 50, 973–985.

- Akram, M.; El, C. Sequence to Sequence Weather Forecasting with Long Short-Term Memory Recurrent Neural Networks. Int. J. Comput. Appl. 2016, 143, 7–11.

- Jallal, M.A.; Chabaa, S.; El Yassini, A.; Zeroual, A.; Ibnyaich, S. Air temperature forecasting using artificial neural networks with delayed exogenous input. In Proceedings of the 2019 International Conference on Wireless Technologies, Embedded and Intelligent Systems (WITS), Fez, Morocco, 3–4 April 2019; pp. 1–6.

- Park, I.; Kim, H.S.; Lee, J.; Kim, J.H.; Song, C.H.; Kim, H. Temperature Prediction Using the Missing Data Refinement Model Based on a Long Short-Term Memory Neural Network. Atmosphere (Basel) 2019, 10, 718.

- Huang, Y.; Zhao, H.; Huang, X. A Prediction Scheme for Daily Maximum and Minimum Temperature Forecasts Using Recurrent Neural Network and Rough set. In IOP Conference Series: Earth and Environmental Science; IOP Publishing: Bristol, UK, 2019; Volume 237.

- Sundaram, M.; Prakash, M.; Surenther, I.; Balaji, N.V.; Kannimuthu, S. Weather Forecasting using Machine Learning Techniques. Test Eng. Manag. 2020, 83, 15264–15273.

- Roy, D.S. Forecasting the Air Temperature at a Weather Station Using Deep Neural Networks. Procedia Comput. Sci. 2020, 178, 38–46.

- Kabir, S.; Pender, G.; Patidar, S. Investigating capabilities of machine learning techniques in forecasting stream flow. In Institution of Civil Engineers-Water Management; Thomas Telford Ltd.: London, UK, 2020; Volume 173, pp. 69–86.

- Yuan, X.; Chen, C.; Lei, X.; Yuan, Y.; Muhammad Adnan, R. Monthly runoff forecasting based on LSTM–ALO model. Stoch. Environ. Res. Risk Assess. 2018, 32, 2199–2212.

- Abbot, J.; Marohasy, J. Application of artificial neural networks to rainfall forecasting in Queensland, Australia. Adv. Atmos. Sci. 2012, 29, 717–730.

- Mekanik, F.; Imteaz, M.A.; Gato-Trinidad, S.; Elmahdi, A. Multiple regression and Artificial Neural Network for long-term rainfall forecasting using large scale climate modes. J. Hydrol. 2013, 503, 11–21.

- Bessafi, M.; Lasserre-Bigorry, A.; Neumann, C.J.; Pignolet-Tardan, F.; Payet, D.; Lee-Ching-Ken, M. Statistical prediction of tropical cyclone motion: An analog-CLIPER approach. Weather Forecast. 2002, 17, 821–831.

- Hrnjica, B.; Bonacci, O. Lake Level Prediction using Feed Forward and Recurrent Neural Networks. Water Resour. Manag. 2019, 33, 2471–2484.

- Liu, P.; Wang, J.; Sangaiah, A.; Xie, Y.; Yin, X. Analysis and Prediction of Water Quality Using LSTM Deep Neural Networks in IoT Environment. Sustainability 2019, 11, 2058.

- Azad, A.; Pirayesh, J.; Farzin, S.; Malekani, L.; Moradinasab, S.; Kisi, O. Application of heuristic algorithms in improving performance of soft computing models for prediction of min, mean and max air temperatures. Eng. J. 2019, 23, 83–98.

- Frnda, J.; Durica, M.; Nedoma, J.; Zabka, S.; Martinek, R.; Kostelansky, M. A weather forecast model accuracy analysis and ecmwf enhancement proposal by neural network. Sensors (Switzerland) 2019, 19, 5144.

| Reference | Input | Data Period | Region | Type of Model | Configuration of Hidden Layer | Output |
|---|---|---|---|---|---|---|
| Ustaoglu et al. [20] | Past seven daily mean, maximum, and minimum air temperatures | 1989–2003 | Turkey | ANN: (1) feed-forward back propagation (FFBP), (2) radial basis function (RBF), and (3) generalized regression neural network (GRNN) | FFBP (7,5,1); RBF (Tmean, Tmin: 7,0.99,1; Tmax: 7,0.55,1); GRNN (Tmean: 7,0.05,1; Tmax: 7,0.08,1; Tmin: 7,0.07,1) | Daily mean, maximum, and minimum temperature |
| Chattopadhyay et al. [19] | Previous maximum temperature values | 1901–2003 | India | Multilayer perceptron (MLP), generalized feed-forward neural network (GFFNN), and modular neural network (MNN) | Number of hidden nodes determined by the number of adjustable parameters | Mean monthly maximum temperature |
| Abhishek et al. [33] | Historical 10-year data of a particular day | 1999–2009 | Canada | Feed-forward ANN | 5-hidden-layer network with 10 or 16 neurons/layer | Daily maximum temperature |
| Kumar et al. [34] | Six previous weekly mean temperatures | 2002–2011 | India | Feed-forward ANN | 2 hidden layers with 5 neurons/layer | One-week-ahead mean temperature |
| Tran et al. [32] | Past daily maximum temperature (from 7–36) | 1976–2015 | South Korea | Traditional ANN, RNN, LSTM | Hidden nodes: 1–20; hidden layers: 1–3 | Daily maximum temperature for 1–15 days in advance |
| Tran and Lee [35] | Six previous daily maximum temperatures | 1976–2015 | South Korea | Traditional ANN | Hidden layers: 1, 3, 5; parameters: 49, 113, 169, 353, 1001 | One-day-ahead maximum temperature |
| Zhang et al. [23] | Four past temperature data map series | 1952–2018 | China | Convolutional recurrent neural network (CRNN) | 3 convolutional layers followed by an LSTM and a dense layer | Four future temperature data map series |
| Li et al. [36] | Historical time series of temperature | 2009–2018 | China | Stacked LSTM | 3 LSTM layers (20, 10, and 4 hidden cells) followed by a fully connected layer (4 neurons) | One half-hour ahead temperature |
| Li et al. [36] | Six past observations | 2009–2018 | China | DNN | Three hidden layers with 12, 8, and 4 neurons | One half-hour ahead temperature |
| Afzali et al. [37] | Daily and monthly mean, minimum, and maximum ambient air temperature | 1961–2004 | Iran | Feed-forward ANN | One hidden layer with 15 neurons | Maximum, minimum, and mean ambient air temperature one day and one month ahead |
| De and Debnath [38] | December to May maximum and minimum temperature | 1901–2003 | India | Feed-forward NN | One hidden layer with 2 neurons | Maximum and minimum temperature in the monsoon months (June–August) |
| Smith et al. [22] | Up to prior 24 h: temperature, wind speed, rainfall, relative humidity, solar radiation, time-of-day, day-of-year | 2000–2005 | Georgia, USA | Ward-style ANN | Hidden layer: 3 parallel slabs; hidden nodes: 2–75 per slab | 1 h to 12 h air temperature |
| Smith et al. [39] | Up to prior 24 h: temperature, wind speed, rainfall, relative humidity, solar radiation, time-of-day, day-of-year, and the hourly rate of change in each of these weather variables over the last 24 h | 1997–2005 | Georgia, USA | Ward-style ANN | Hidden layer: 3 parallel slabs; hidden nodes: 40 per slab | 1 h to 12 h air temperature |
| Altan Dombayci and Gölcü [17] | Month, day, and mean temperature of the previous day | 2003–2006 | Turkey | ANN (Levenberg–Marquardt (LM) feed-forward backpropagation algorithms) | One hidden layer with 6 neurons | Daily mean ambient temperature |
| Bilgili and Sahin [28] | Latitude, longitude, altitude, and month | 1975–2006 | Turkey | MLP | One hidden layer with 32 neurons | Monthly temperature |
| Kisi and Shiri [40] | Latitude, longitude, altitude, and month | 1956–2010 | Iran | Feed-forward ANN | One hidden layer with 4 neurons | Monthly temperature |
| Kisi and Sanikhani [41] | Month number, station latitude, longitude, and altitude | 1986–2000 | Iran | Feed-forward ANN | One hidden layer | Monthly air temperature |
| Şahin [42] | City, month, altitude, latitude, longitude, monthly mean land surface temperature | 1995–2005 | Turkey | Feed-forward ANN | One hidden layer with 14 neurons; one hidden layer with 24 neurons | Monthly mean air temperature |
| Salcedo-Sanz et al. [26] | Temperature in the previous month, Southern Oscillation Index (SOI), Indian Ocean Dipole (IOD), and Pacific Decadal Oscillation (PDO) | 1900–2010 for urban and 1910–2010 for rural stations in Australia; 1930–2010 for stations in New Zealand | Australia and New Zealand | MLP | NA | Monthly mean air temperature |
| Akram and El [43] | 24 (or 72) temperature values | 2000–2015 | Morocco | LSTM | Two LSTM layers with a fully connected hidden layer of 100 neurons in between | 24 and 72 h |
| Jallal et al. [44] | 3 previous values of air temperature (t-1, t-2, t-3) and global solar radiation (GSR) (t, t-1, t-2, t-3, t-4) | 2014 | Morocco | MLP | 2 hidden layers with 5 and 8 neurons | Half-hourly air temperature (t) |
| Park et al. [45] | 24 h weather data: hourly wind speed, wind direction, and humidity | November 1981–December 2017 | South Korea | LSTM | 4 LSTM layers with 384 hidden nodes | Temperature up to 14 days in advance |
| Huang et al. [46] | 8–9 temperature factors from 50 CLIPER predictors | January 2015–June 2018 | 14 stations in Guangxi, China | Recurrent neural network (RNN) | One hidden layer with 10 neurons | 24 h daily maximum and minimum temperature |
| Sundaram et al. [47] | Air temperature, pressure, relative humidity, mean wind direction, total cloud cover, horizontal visibility, dew point temperature | 2006–2018 | India | MLP | Five hidden layers with 16, 32, 16, 5, and 1 neurons | Temperature for eight weeks |
| Roy [48] | Past seven days of average wind speed, precipitation, snowfall, snow depth, average temperature, maximum temperature, and minimum temperature | 1/1/2009 to 1/1/2019 | John F. Kennedy International Airport, NY, USA | MLP, LSTM, CNN+LSTM | MLP: 2 layers with 16 neurons per layer; LSTM: 16 hidden neurons followed by a dense layer; CNN+LSTM: one convolutional layer (32 filters with a kernel size of 5) followed by an LSTM cell containing 16 neurons and a dense layer | Average temperature for the next day and 10 days ahead |
| Kreuzer et al. [24] | Air temperature, relative humidity, relative air pressure, sea level air pressure, cloudiness, wind speed, wind direction, precipitation, month, hour of day | 2009–2018 | Germany | Univariate LSTM, multivariate LSTM, ConvLSTM | Univariate and multivariate LSTM: 1 hidden layer with 32 hidden neurons; ConvLSTM: 6 convolutional layers + 2 LSTM + 2 dense layers | 24 h air temperature |
| Lee et al. [25] | Air temperature, precipitation, humidity, vapor pressure, dew point temperature, air pressure, sea level pressure, hours of sunshine, solar radiation, total cloud cover, middle- and low-level cloud cover, ground surface temperature, wind speed and direction | 2009–2018 | South Korea | MLP, LSTM, CNN | MLP: 6 hidden layers; LSTM (daily input): 2 hidden LSTM + 3 dense layers; LSTM (hourly input): 2 hidden LSTM + 6 dense layers; CNN: 5 convolutional layers + 2 dense layers | Daily average, minimum, and maximum temperature |
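Many of the univariate rows in the table describe their inputs as a fixed number of past observations, for example the past seven daily temperatures used by Ustaoglu et al. [20]. The sketch below shows one common way to turn a temperature series into such lagged input/target pairs, together with a helper that counts the weights and biases of a fully connected network. The function names `make_lagged_samples` and `count_mlp_parameters` are hypothetical, and the reviewed studies may have prepared their data differently.

```python
from typing import List, Sequence, Tuple


def make_lagged_samples(
    series: Sequence[float], n_lags: int, horizon: int = 1
) -> Tuple[List[List[float]], List[float]]:
    """Build (inputs, targets) where each input holds n_lags past values
    and the target lies `horizon` steps after the last input value."""
    if n_lags < 1 or horizon < 1:
        raise ValueError("n_lags and horizon must be positive")
    inputs: List[List[float]] = []
    targets: List[float] = []
    last_start = len(series) - n_lags - horizon
    # If the series is too short, the loop does not run and both lists stay empty.
    for start in range(last_start + 1):
        inputs.append(list(series[start:start + n_lags]))
        targets.append(series[start + n_lags + horizon - 1])
    return inputs, targets


def count_mlp_parameters(layer_sizes: Sequence[int]) -> int:
    """Count weights plus biases of a fully connected feed-forward network,
    given layer widths such as [7, 5, 1] (input, hidden, output)."""
    return sum(
        n_in * n_out + n_out
        for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:])
    )


# Toy series invented for illustration: ten consecutive daily temperatures.
daily_temps = [11.2, 12.0, 12.5, 13.1, 12.8, 13.6, 14.0, 14.3, 13.9, 14.8]

# Seven past days as input, next day as target.
x, y = make_lagged_samples(daily_temps, n_lags=7, horizon=1)
print(len(x), "samples; first target:", y[0])

# A (7,5,1) network has 7*5 + 5 hidden parameters and 5*1 + 1 output parameters.
print("parameters in a 7-5-1 MLP:", count_mlp_parameters([7, 5, 1]))
```

With `n_lags=6`, a single hidden layer of 6 neurons, and one output, the same helper gives 49 parameters, which equals the smallest parameter count listed for Tran and Lee [35]; the table does not state the layer widths, so this match is only an observation about the arithmetic.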

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Tran, T.T.K.; Bateni, S.M.; Ki, S.J.; Vosoughifar, H. A Review of Neural Networks for Air Temperature Forecasting. Water 2021, 13, 1294. https://doi.org/10.3390/w13091294
