Uncertainty Quantification in Machine Learning Modeling for Multi-Step Time Series Forecasting: Example of Recurrent Neural Networks in Discharge Simulations

Abstract

1. Introduction

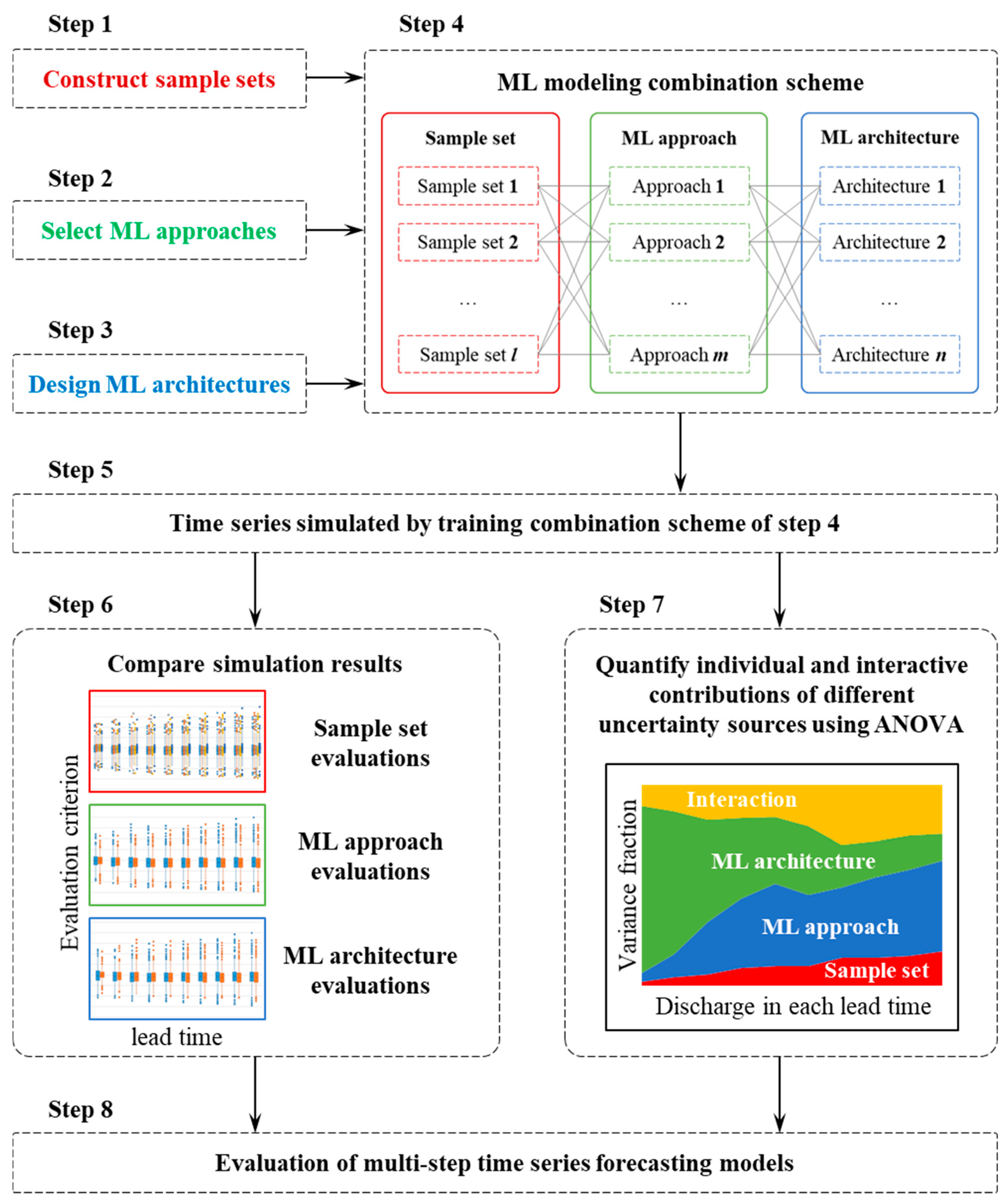

2. Methodology

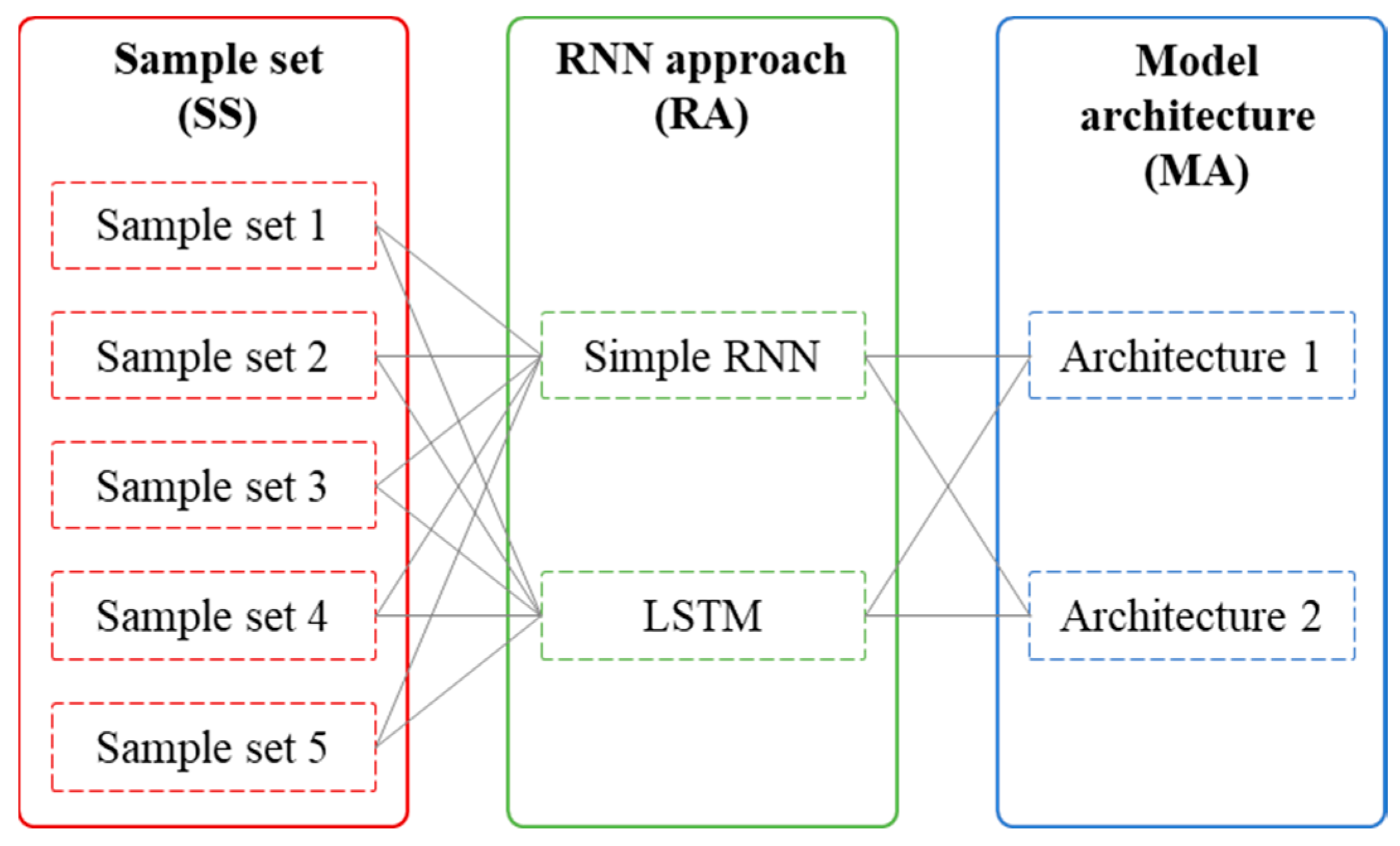

2.1. The Proposed Framework

2.2. RNN Approaches

2.2.1. Simple RNN

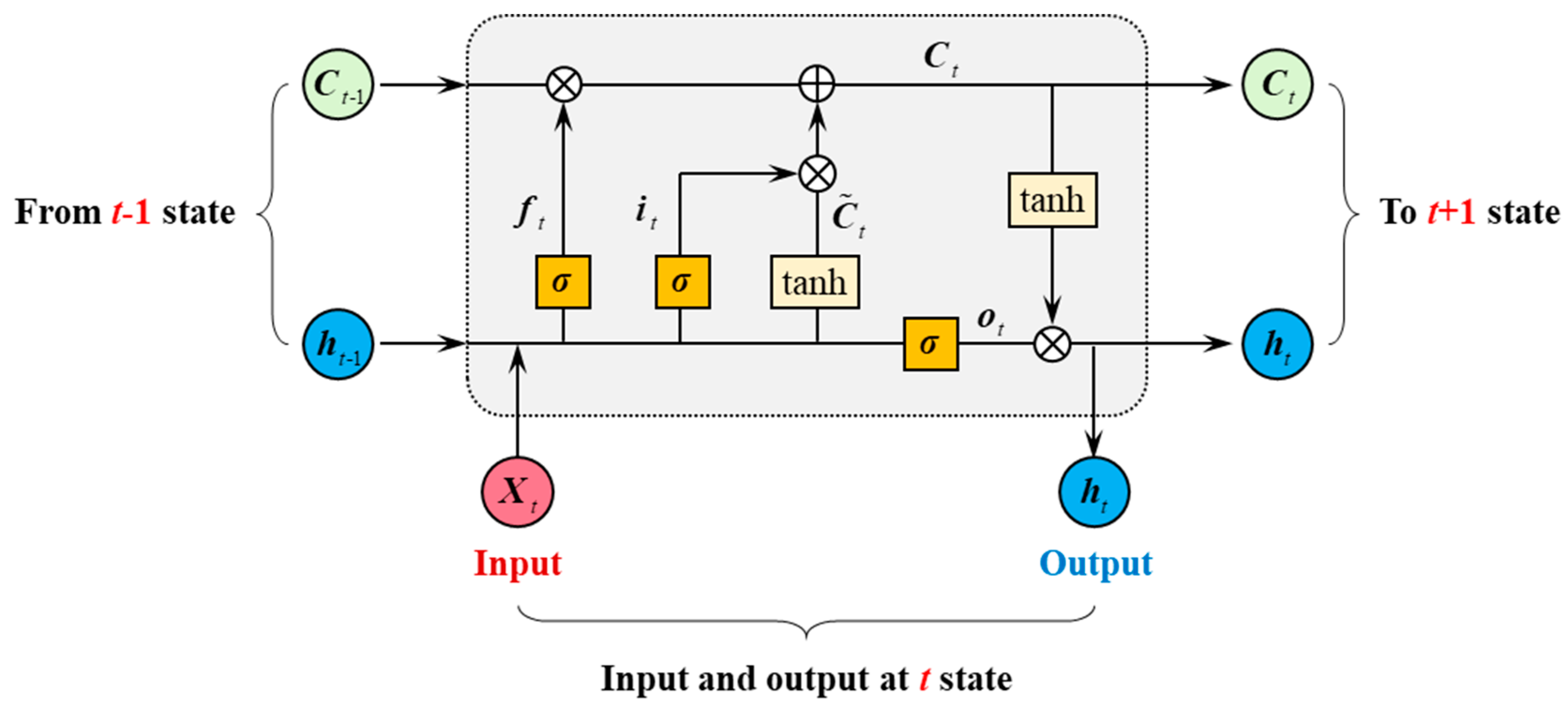

2.2.2. LSTM Network

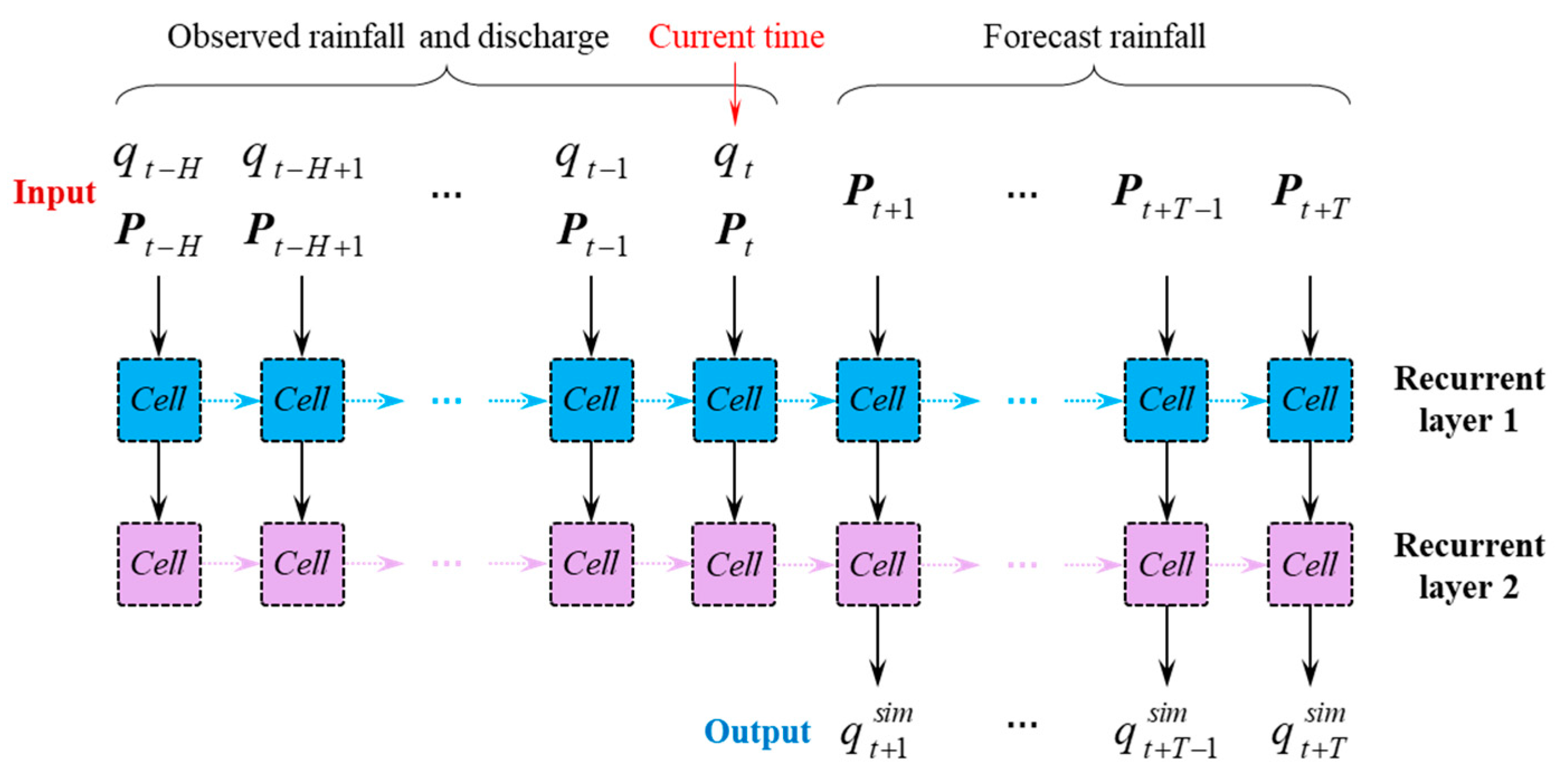

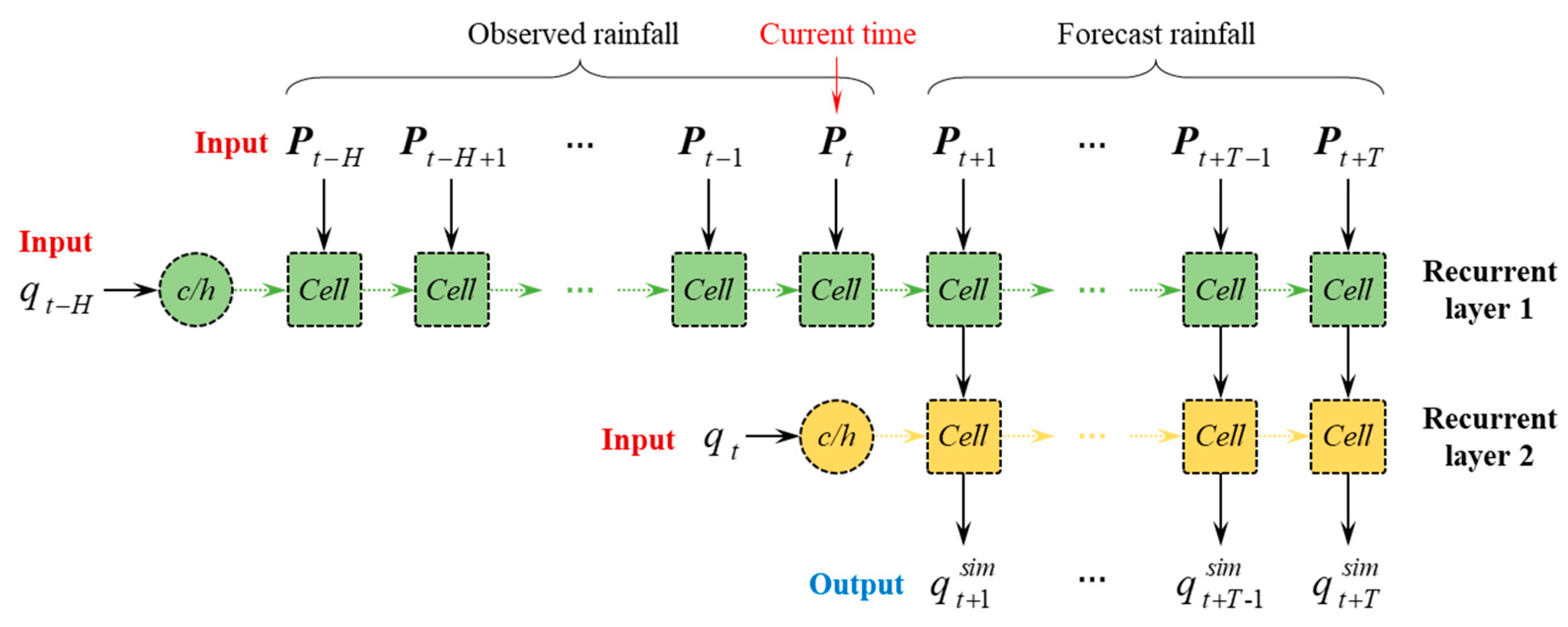

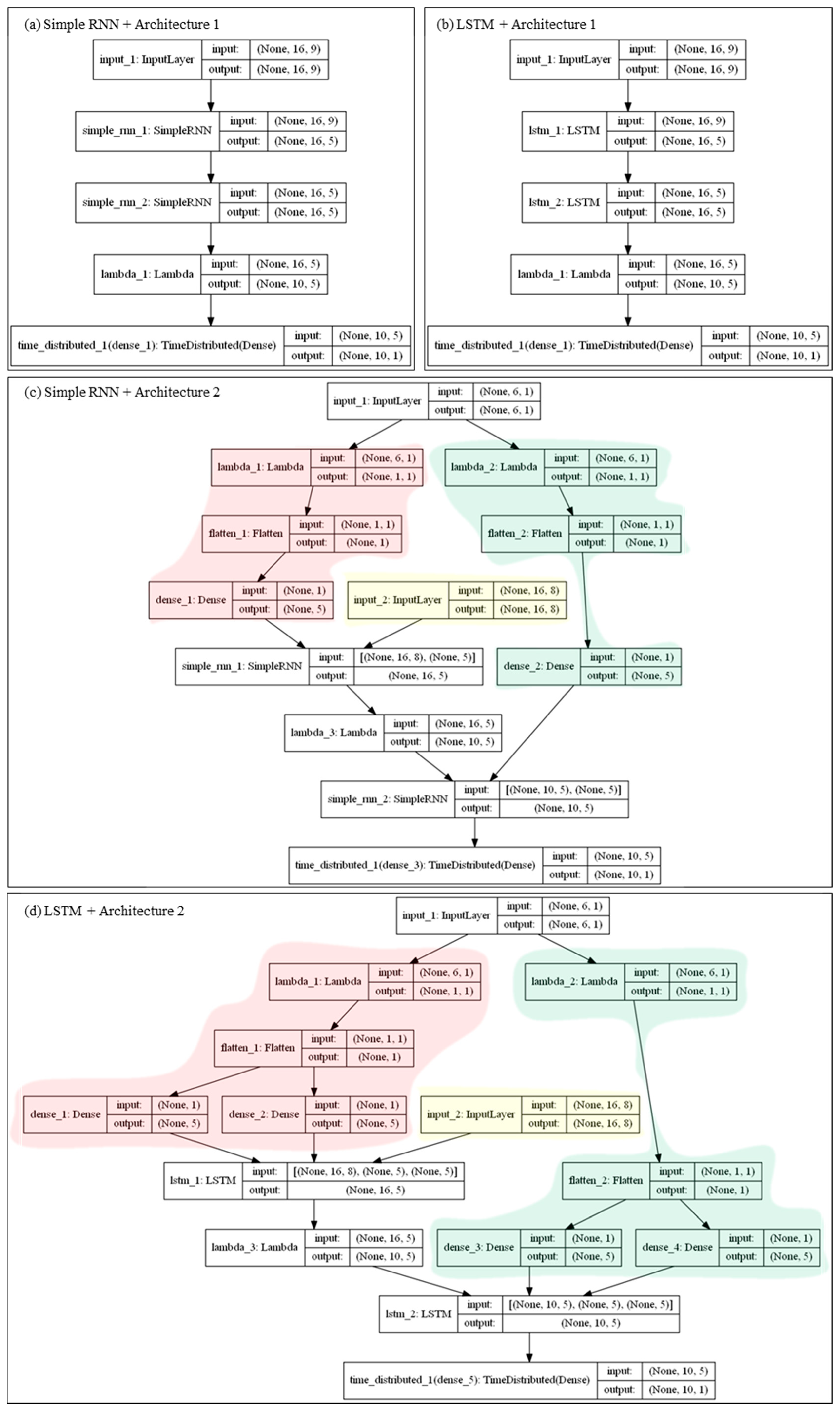

2.3. Model Architecture Design

2.3.1. Model Architecture 1

2.3.2. Model Architecture 2

2.4. Criteria for Accuracy Assessment

2.5. Variance Decomposition

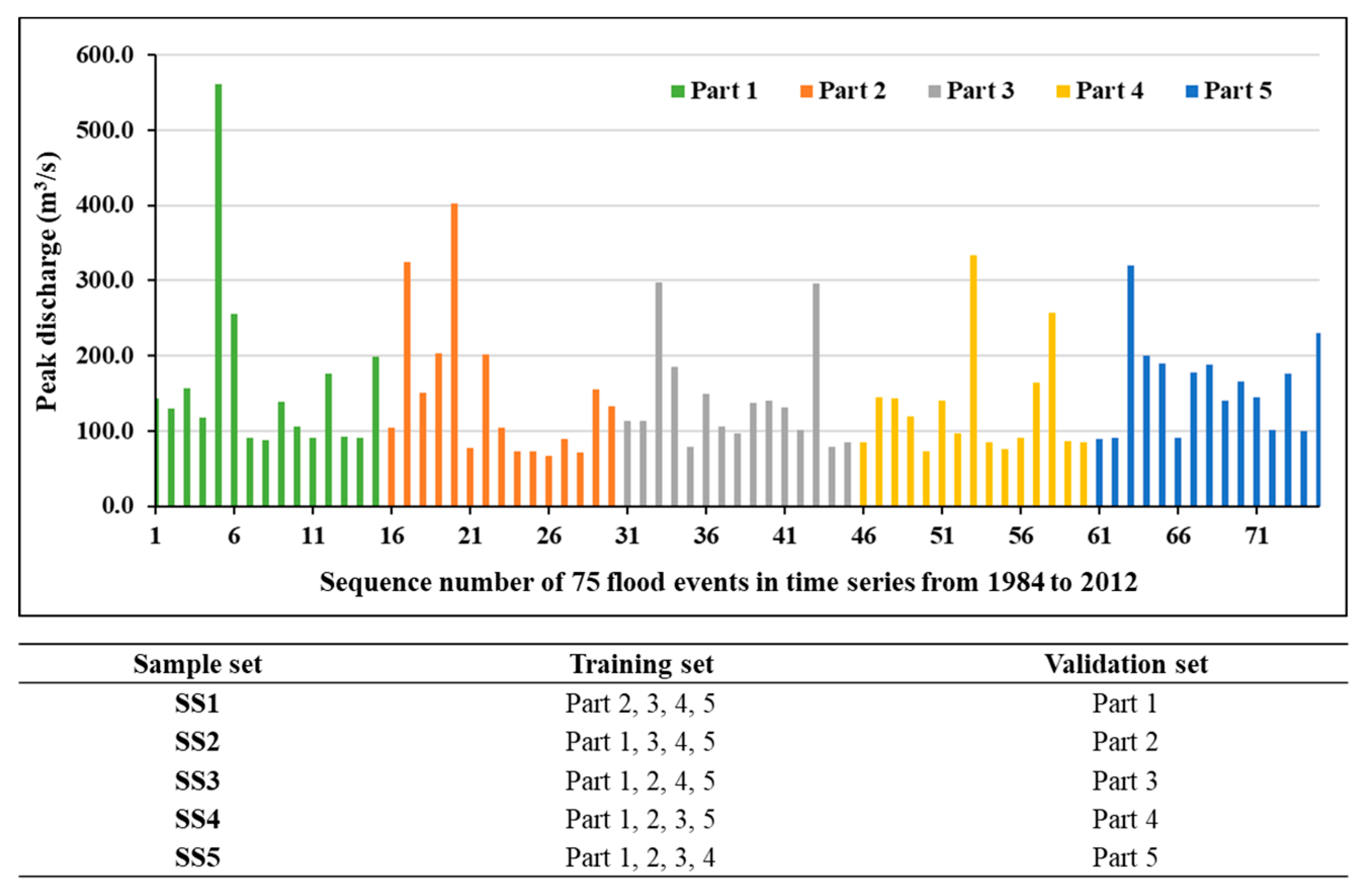

2.5.1. Subsampling Approach

2.5.2. ANOVA Approach

3. Case Study

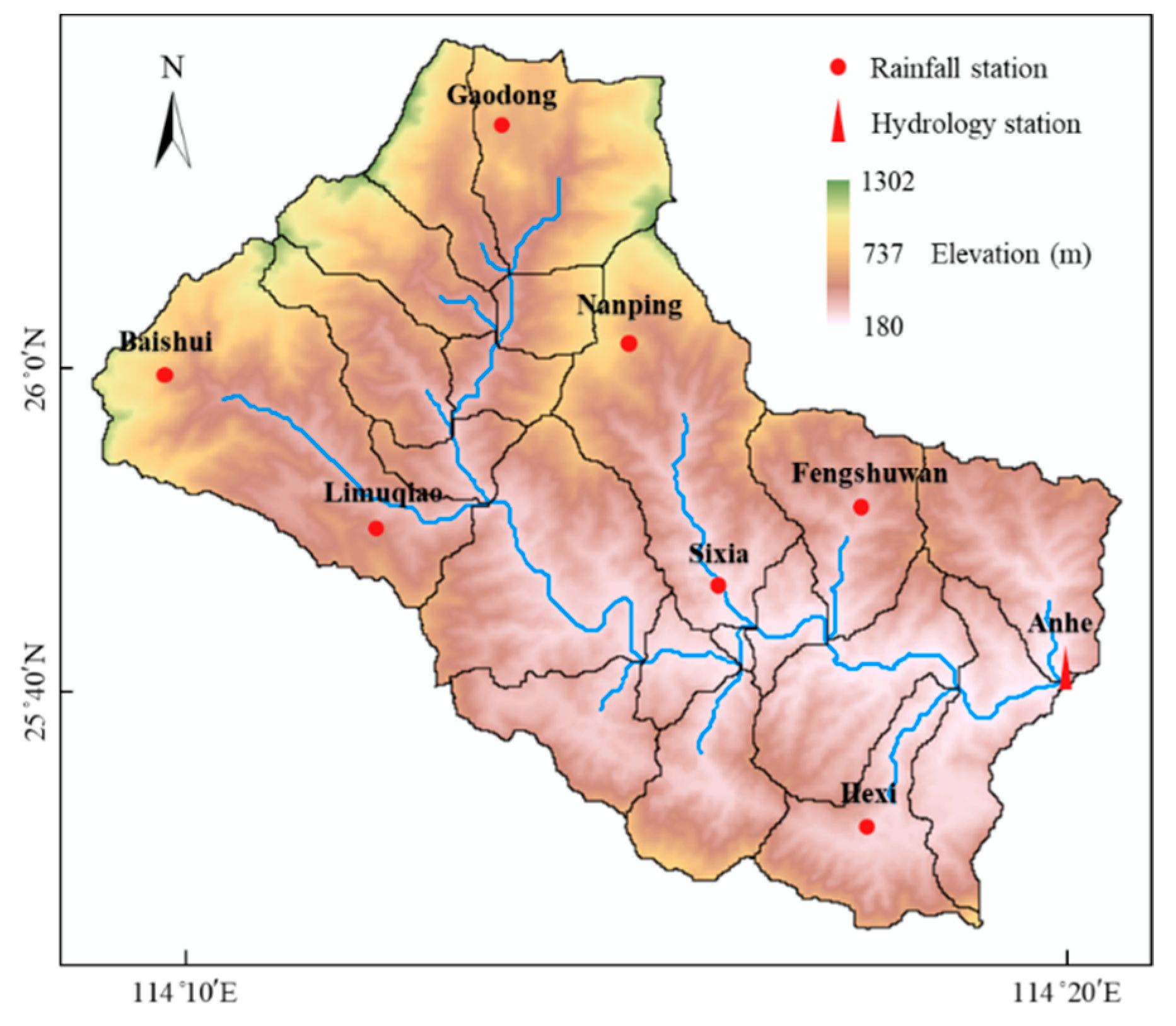

3.1. Study Area

3.2. Model Training

4. Results and Discussion

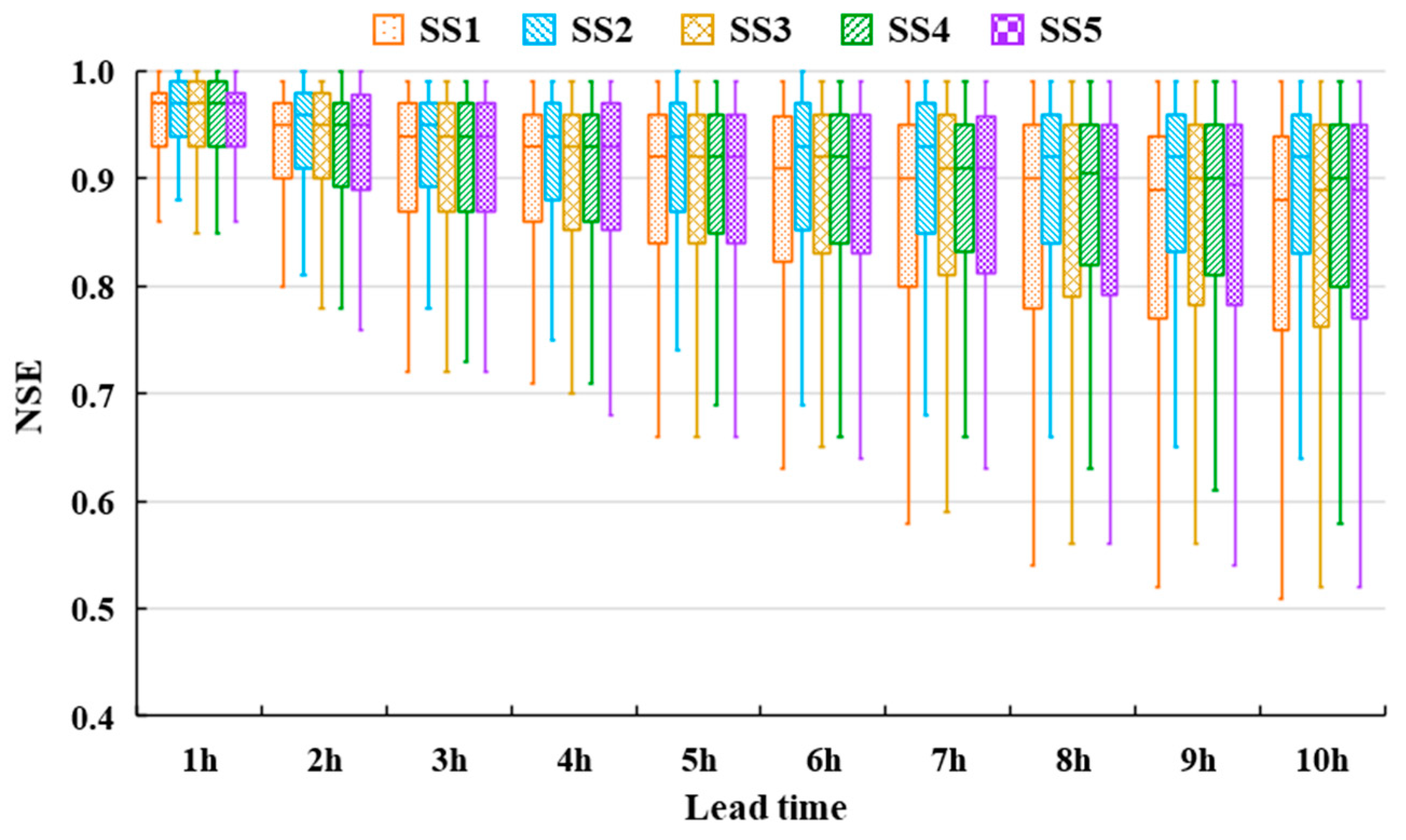

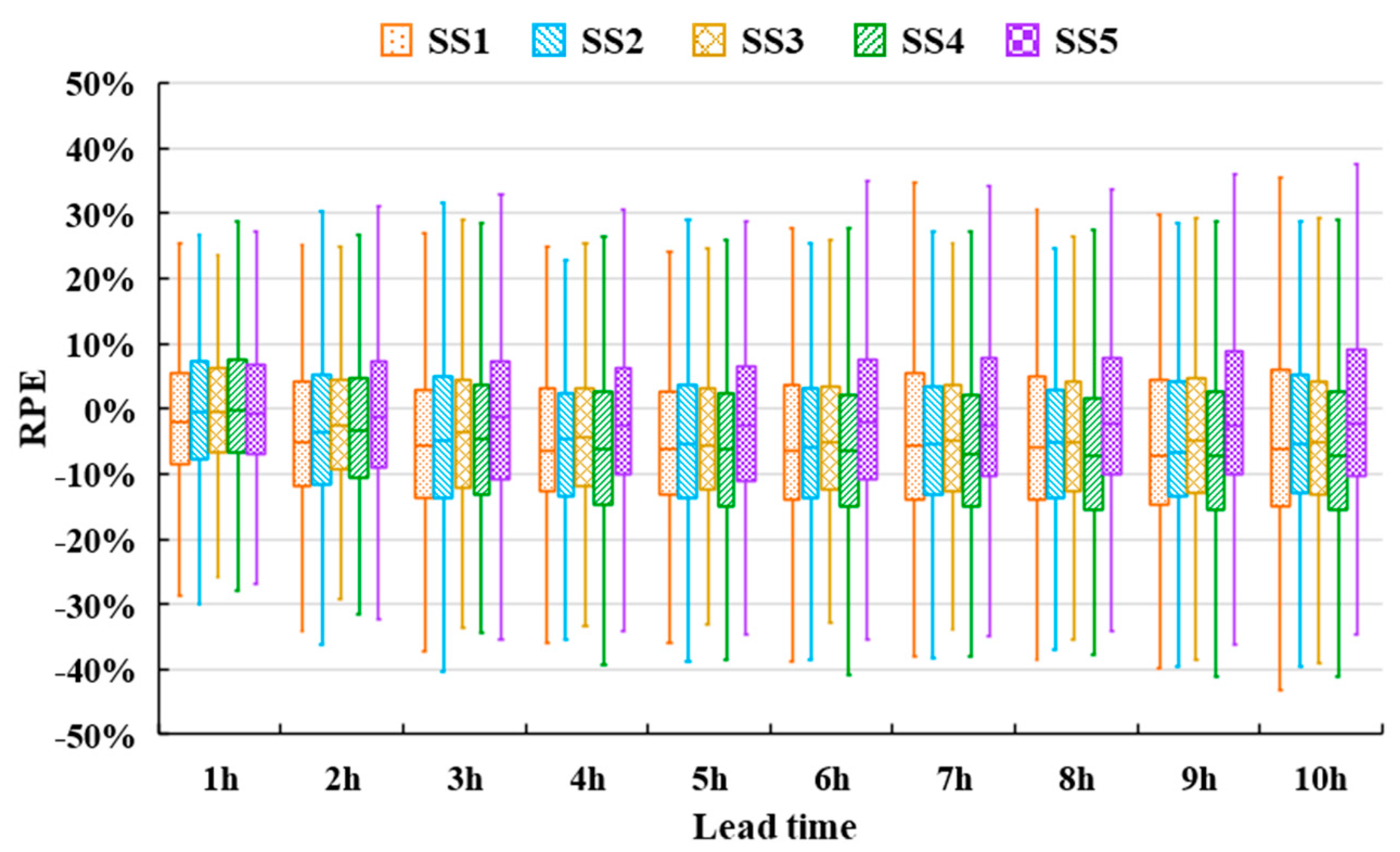

4.1. Sample Set Evaluations

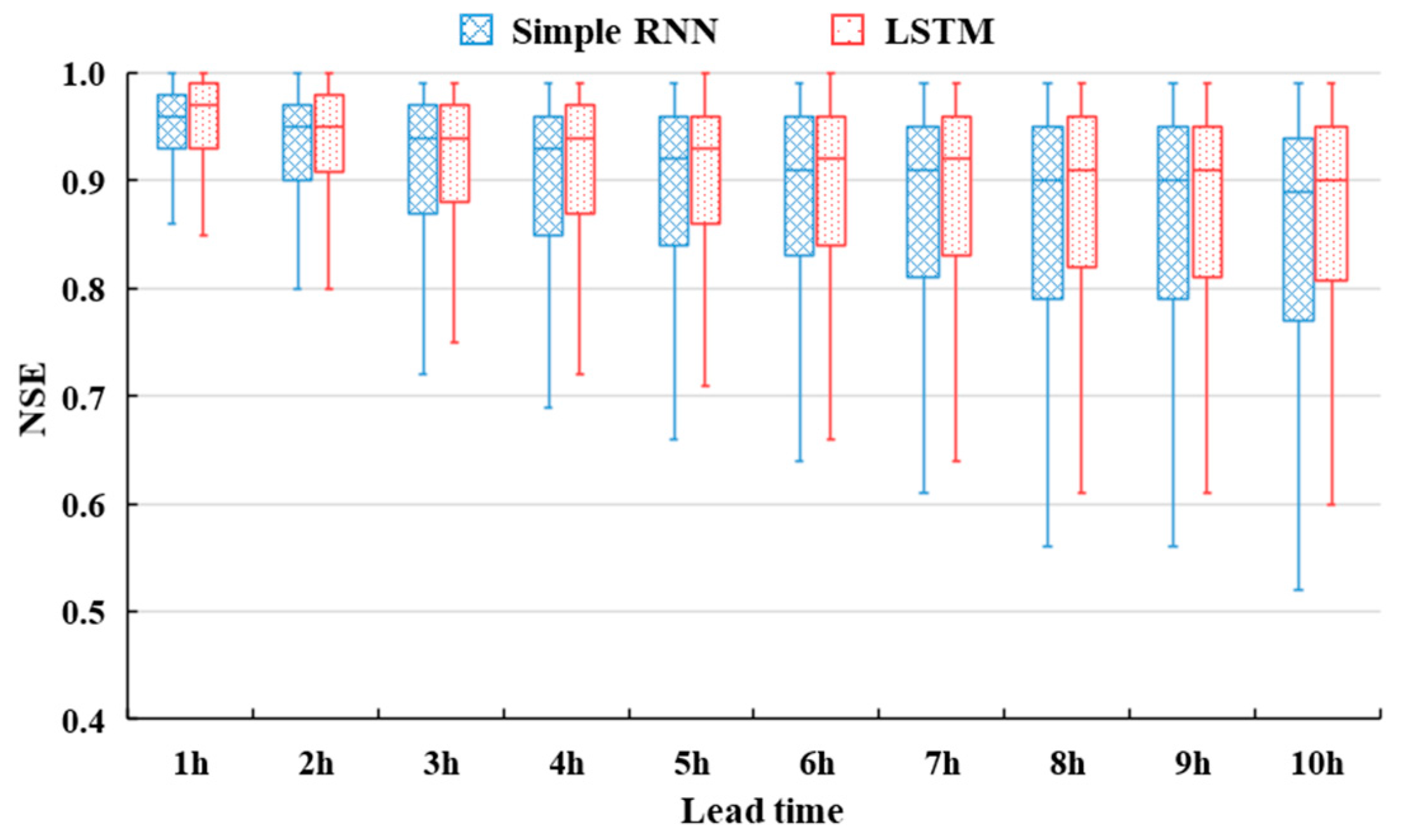

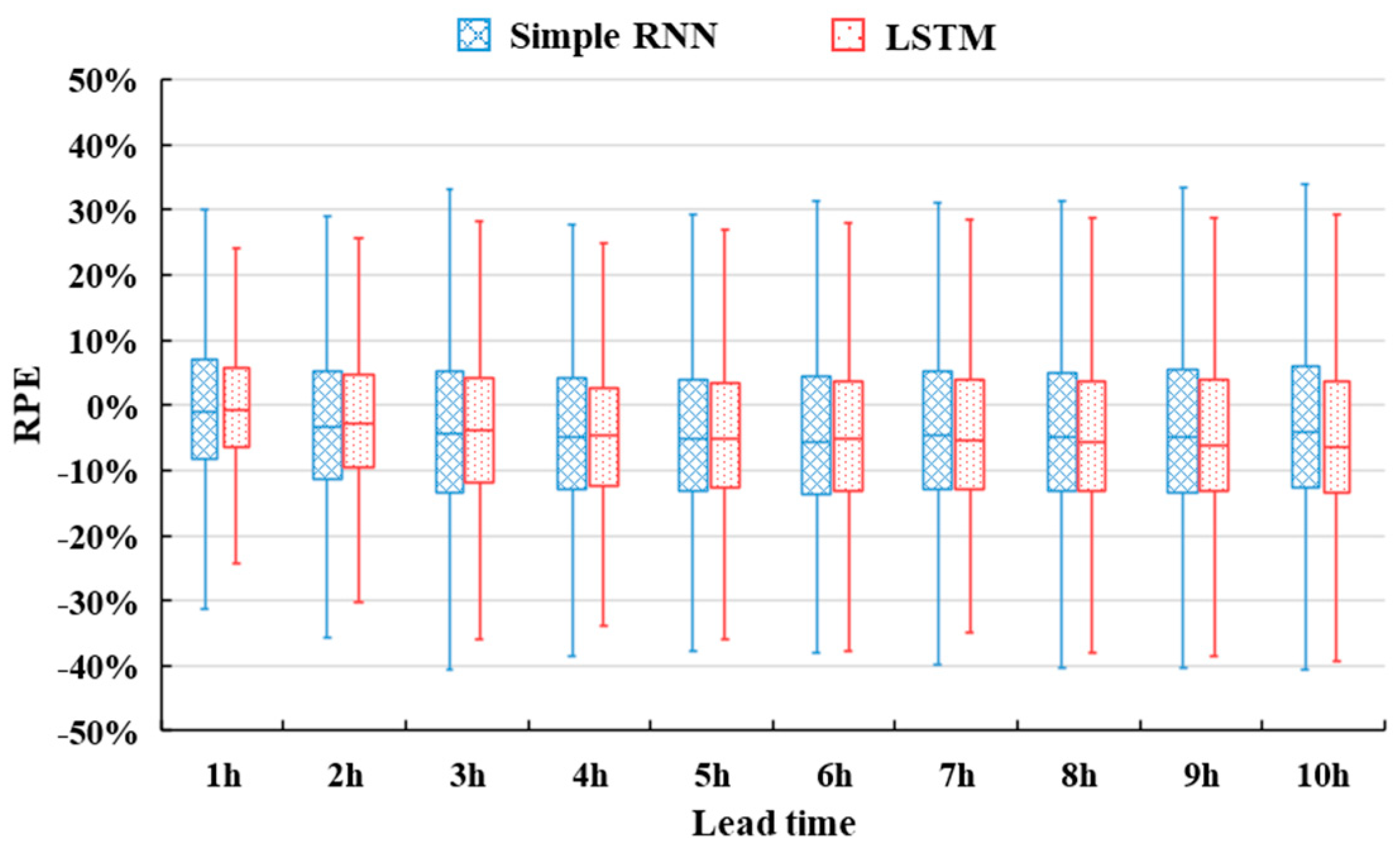

4.2. RNN Approach Evaluations

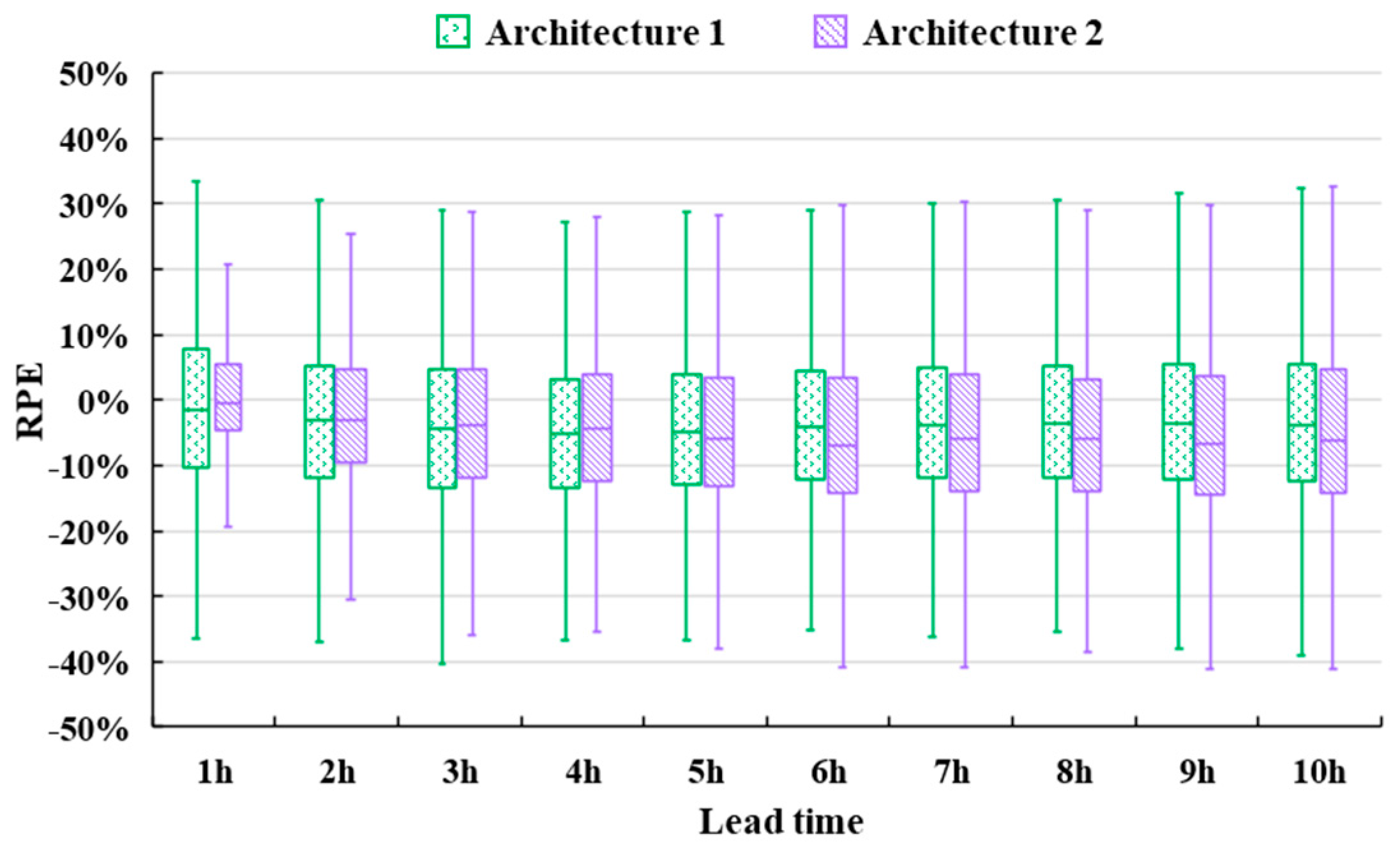

4.3. Model Architecture Evaluations

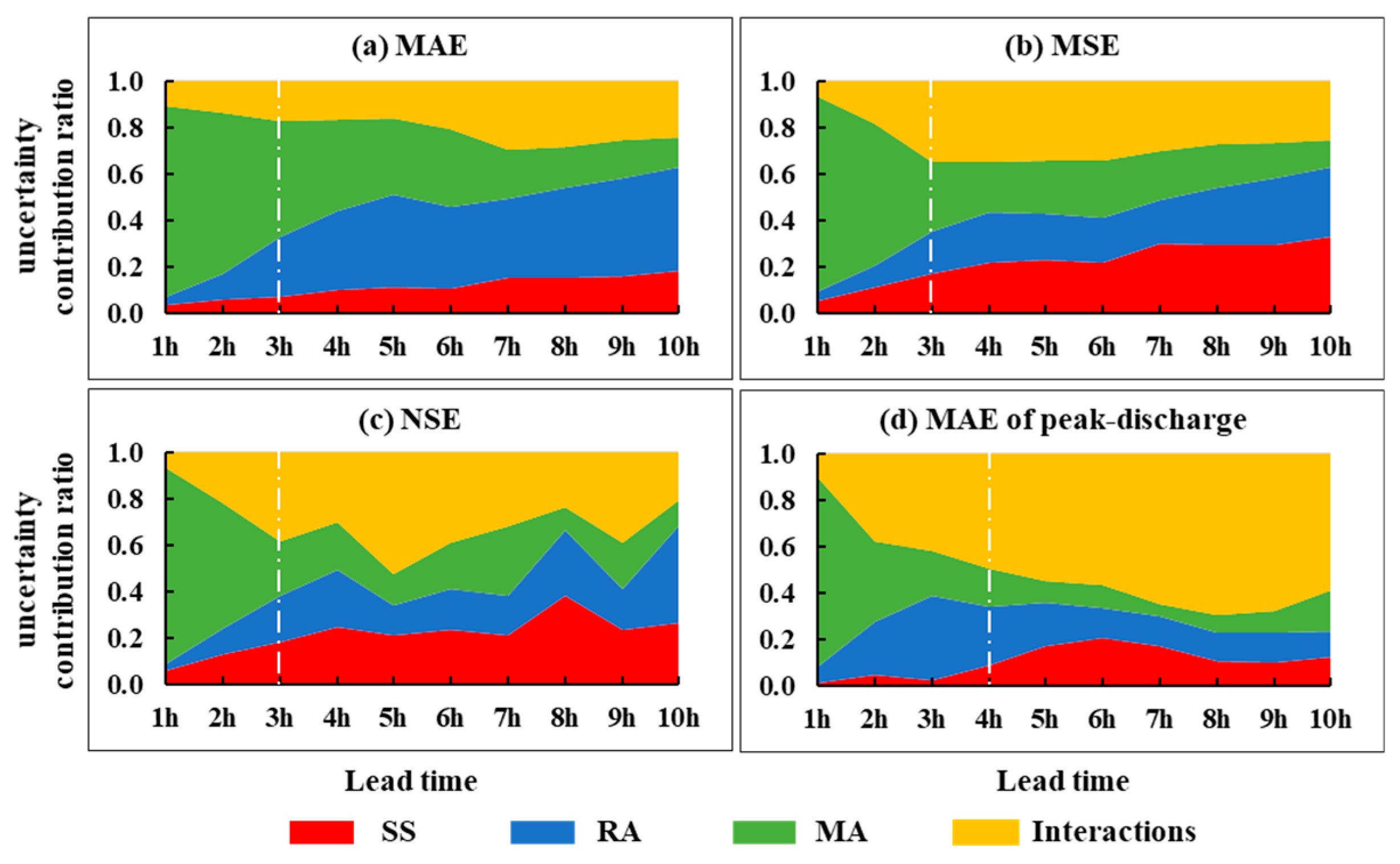

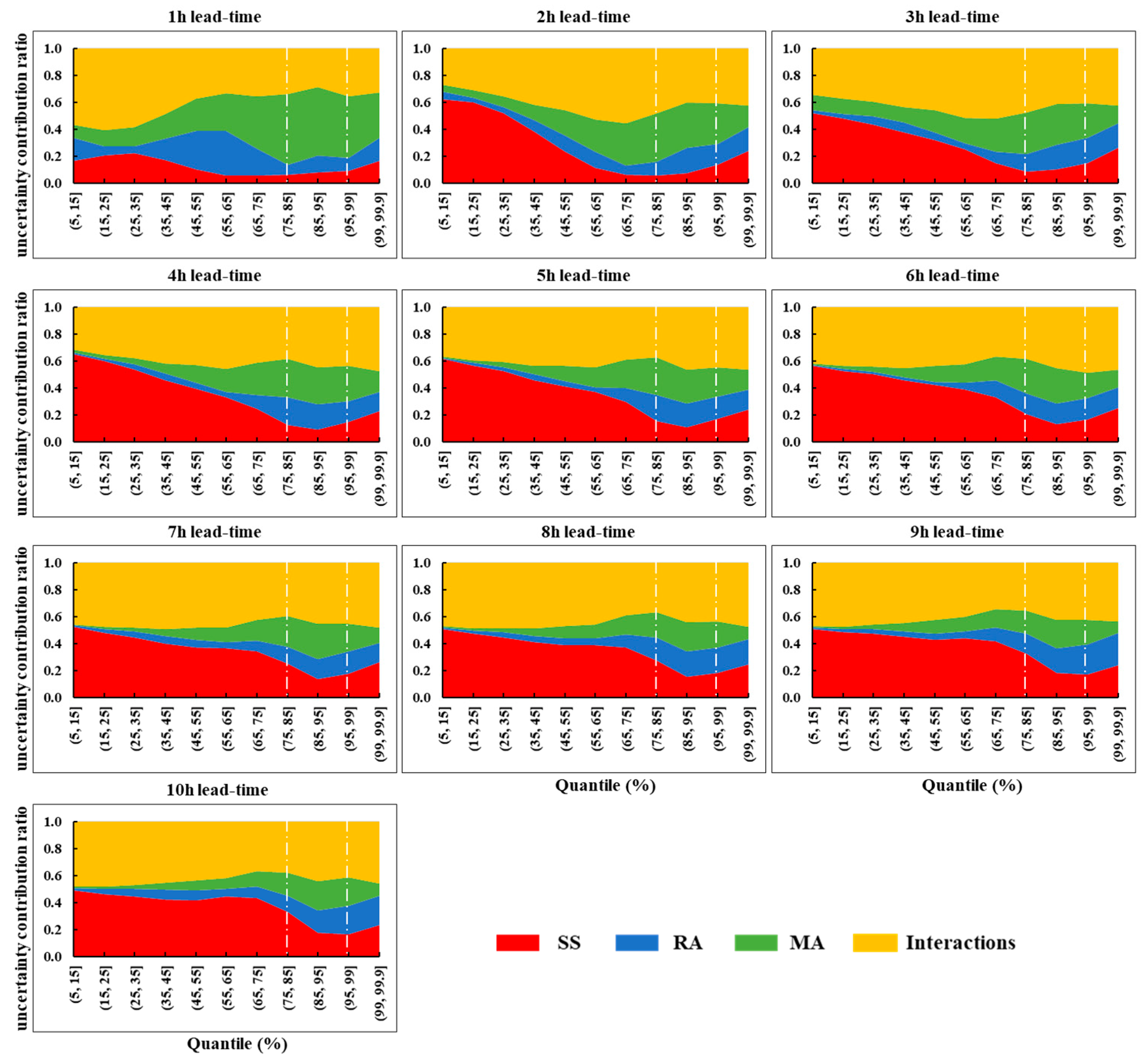

4.4. Uncertainty Source Quantification

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Song, T.; Ding, W.; Liu, H.; Wu, J.; Zhou, H.; Chu, J. Uncertainty Quantification in Machine Learning Modeling for Multi-Step Time Series Forecasting: Example of Recurrent Neural Networks in Discharge Simulations. Water 2020, 12, 912. https://doi.org/10.3390/w12030912
