Author Contributions

Conceptualization, S.S. and Y.A.-G.; Methodology, S.S., Y.A.-G., and G.G.-M.; Software, S.S.; Validation, S.S., Y.A.-G., and G.G.-M.; Formal Analysis, S.S., Y.A.-G. and G.G.-M.; Investigation, S.S., Y.A.-G., G.G.-M., A.R.-C., and J.M.M.-M.; Resources, S.S. and Y.A.-G.; Writing—Original Draft Preparation, S.S. and G.G.-M.; Writing—Review and Editing, G.G.-M., A.R.-C., and J.M.M.-M.; Supervision, Y.A.-G., A.R.-C., and J.M.M.-M.; Project Administration, Y.A.-G., A.R.-C., and J.M.M.-M.; Funding Acquisition, Y.A.-G., G.G.-M.

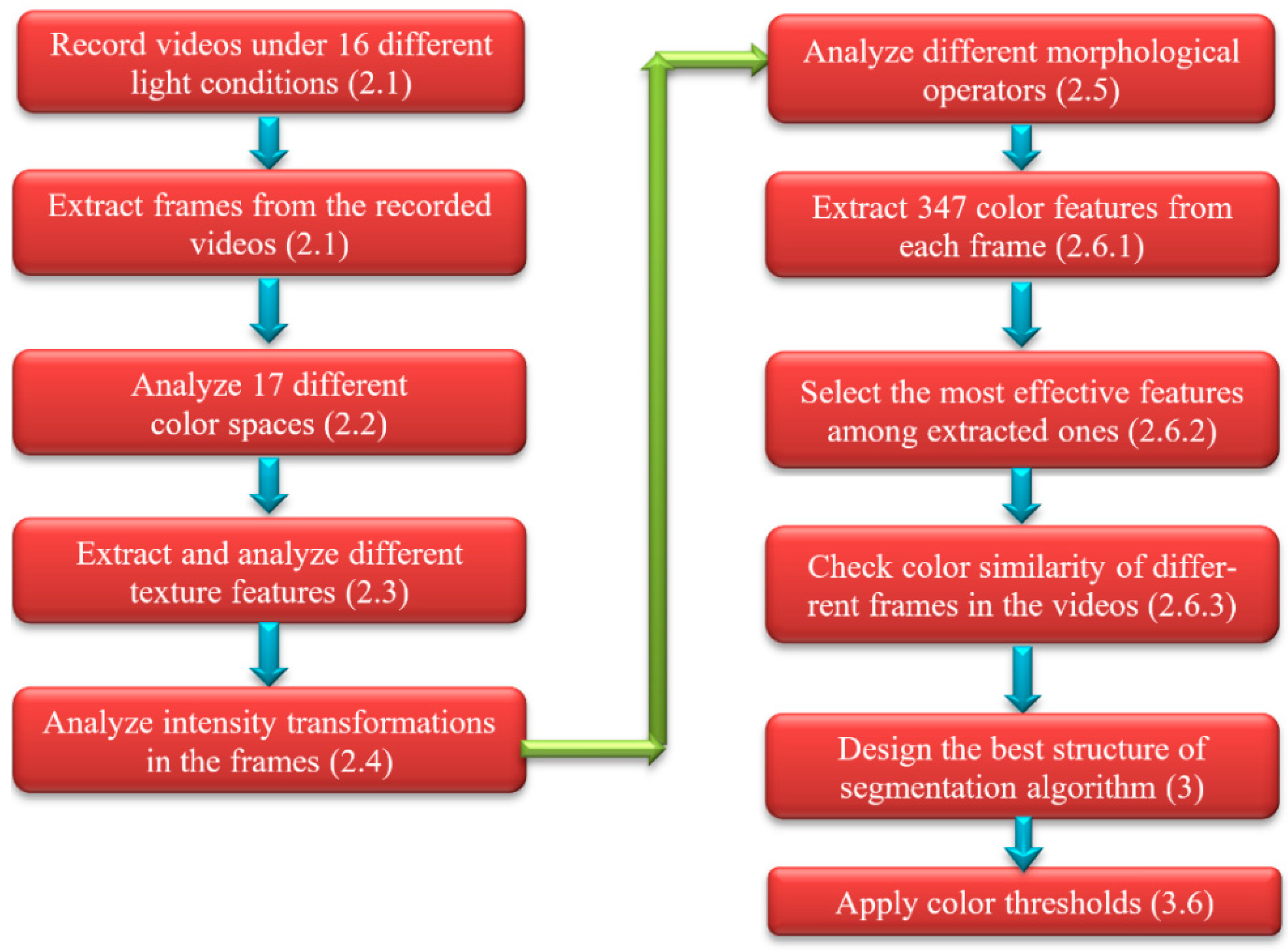

Figure 1.

Steps in the development of the complete color segmentation algorithm for apples. The section or subsection in which each step is described is given in parentheses.

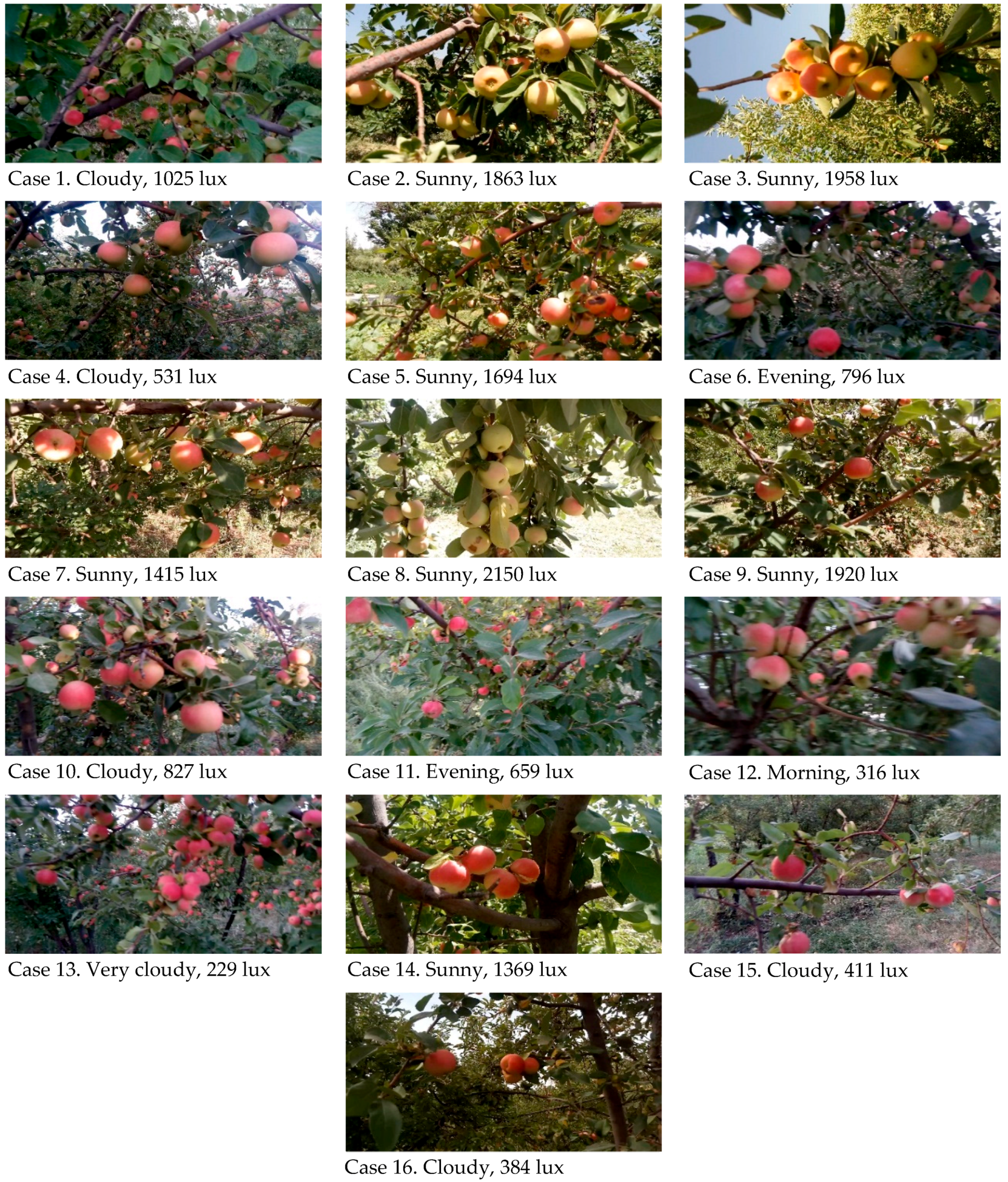

Figure 2.

Sample frames of videos taken under different lighting conditions. For each case, the weather condition and the measured light intensity (in lux) are indicated.

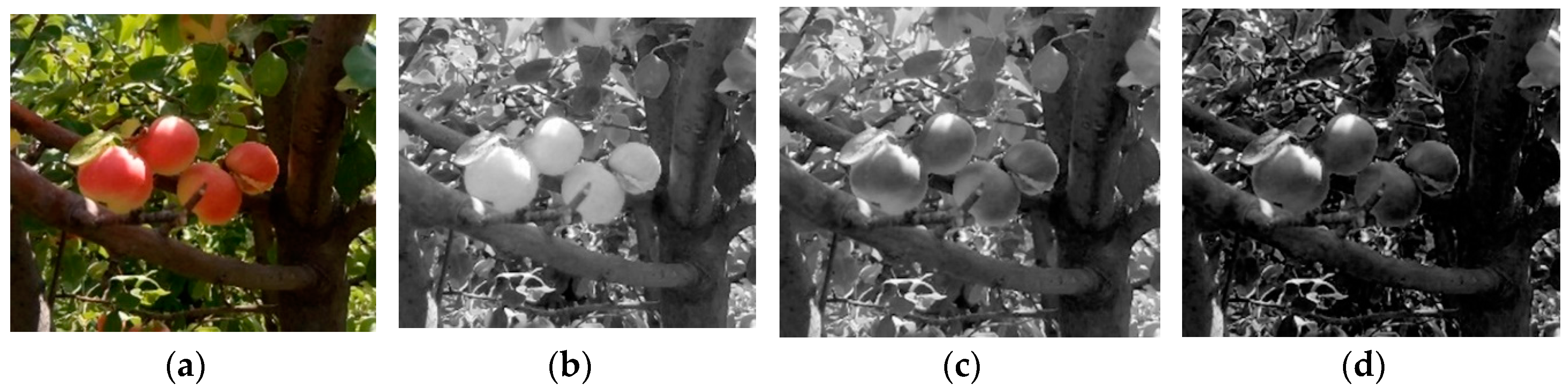

Figure 3.

Sample apple tree image and RGB channels. (a) Original RGB image. (b) R channel. (c) G channel. (d) B channel.

Figure 4.

Sample apple tree image under six different color spaces. (a) RGB color space. (b) HSV color space. (c) YCbCr color space. (d) CMY color space. (e) HSL color space. (f) L*u*v* color space. For visualization purposes, the three channels of each space are mapped to the R, G, and B channels.

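
As an illustration of the conversions behind Figure 4, the following Python sketch converts a single 8-bit RGB pixel to HSV, HSL, CMY, and YCbCr. It assumes the standard library `colorsys` conversions for HSV and HSL, CMY as the complement of normalized RGB, and full-range ITU-R BT.601 (JPEG) coefficients for YCbCr; the exact conversion variants used to produce the figure are not stated. L*u*v* is omitted because it additionally requires a reference white point. The function name `convert_pixel` is hypothetical.

```python
import colorsys


def convert_pixel(r: int, g: int, b: int) -> dict:
    """Convert one 8-bit RGB pixel to HSV, HSL, CMY, and YCbCr."""
    # colorsys works on floats in [0, 1]
    rf, gf, bf = r / 255.0, g / 255.0, b / 255.0

    h, s, v = colorsys.rgb_to_hsv(rf, gf, bf)
    # colorsys returns (H, L, S); reorder to the (H, S, L) convention
    h_hls, l_hls, s_hls = colorsys.rgb_to_hls(rf, gf, bf)

    # CMY is the complement of normalized RGB
    cmy = (1.0 - rf, 1.0 - gf, 1.0 - bf)

    # Full-range YCbCr with ITU-R BT.601 (JPEG) coefficients, 0-255 scale
    y = 0.299 * r + 0.587 * g + 0.114 * b
    cb = 128.0 - 0.168736 * r - 0.331264 * g + 0.5 * b
    cr = 128.0 + 0.5 * r - 0.418688 * g - 0.081312 * b

    return {
        "HSV": (h, s, v),
        "HSL": (h_hls, s_hls, l_hls),
        "CMY": cmy,
        "YCbCr": (y, cb, cr),
    }


# Example: a reddish, apple-like pixel
print(convert_pixel(200, 40, 30))
```

Applying the same function to every pixel and stacking the three outputs as R, G, and B gives a false-color view like the panels of Figure 4.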

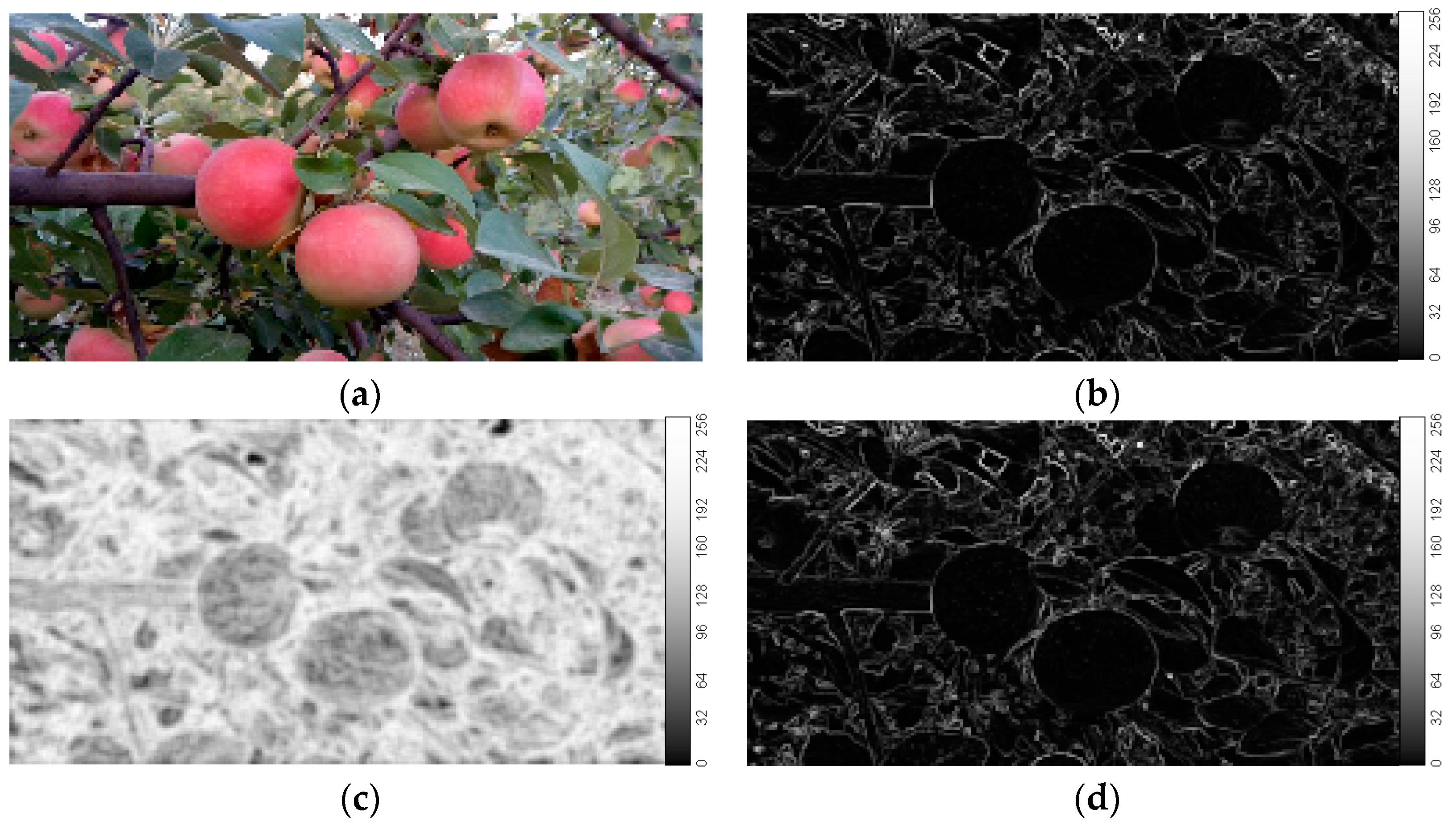

Figure 5.

Sample results of three texture features on an image of apples. (a) Original color image. (b) Local range feature. (c) Local entropy feature. (d) Local standard deviation feature.

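
The three texture features in Figure 5 are neighborhood statistics of a gray-level image. The NumPy sketch below computes them over a square window around each pixel. The 3 × 3 window, the symmetric (mirrored) border padding, and the use of the population standard deviation are assumptions for illustration, not parameters reported for this work; the helper names are hypothetical.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def _windows(gray: np.ndarray, size: int) -> np.ndarray:
    """Return an (H, W, size, size) view of the neighborhood of each pixel.

    `size` should be odd so that the output has the same shape as `gray`.
    """
    pad = size // 2
    padded = np.pad(gray, pad, mode="symmetric")  # mirror the borders
    return sliding_window_view(padded, (size, size))


def local_range(gray: np.ndarray, size: int = 3) -> np.ndarray:
    w = _windows(gray, size)
    # max >= min, so this subtraction cannot underflow even for uint8
    return w.max(axis=(-2, -1)) - w.min(axis=(-2, -1))


def local_std(gray: np.ndarray, size: int = 3) -> np.ndarray:
    w = _windows(gray.astype(np.float64), size)
    return w.std(axis=(-2, -1))  # population standard deviation


def local_entropy(gray: np.ndarray, size: int = 3) -> np.ndarray:
    w = _windows(gray, size)
    out = np.zeros(gray.shape, dtype=np.float64)
    for i in range(gray.shape[0]):
        for j in range(gray.shape[1]):
            _, counts = np.unique(w[i, j], return_counts=True)
            p = counts / counts.sum()
            out[i, j] = -np.sum(p * np.log2(p))  # Shannon entropy in bits
    return out
```

A flat region yields zero for all three features, while apple edges and leaf clutter produce large local range, standard deviation, and entropy, which is what makes these maps useful for separating textured background from smoother fruit surfaces.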

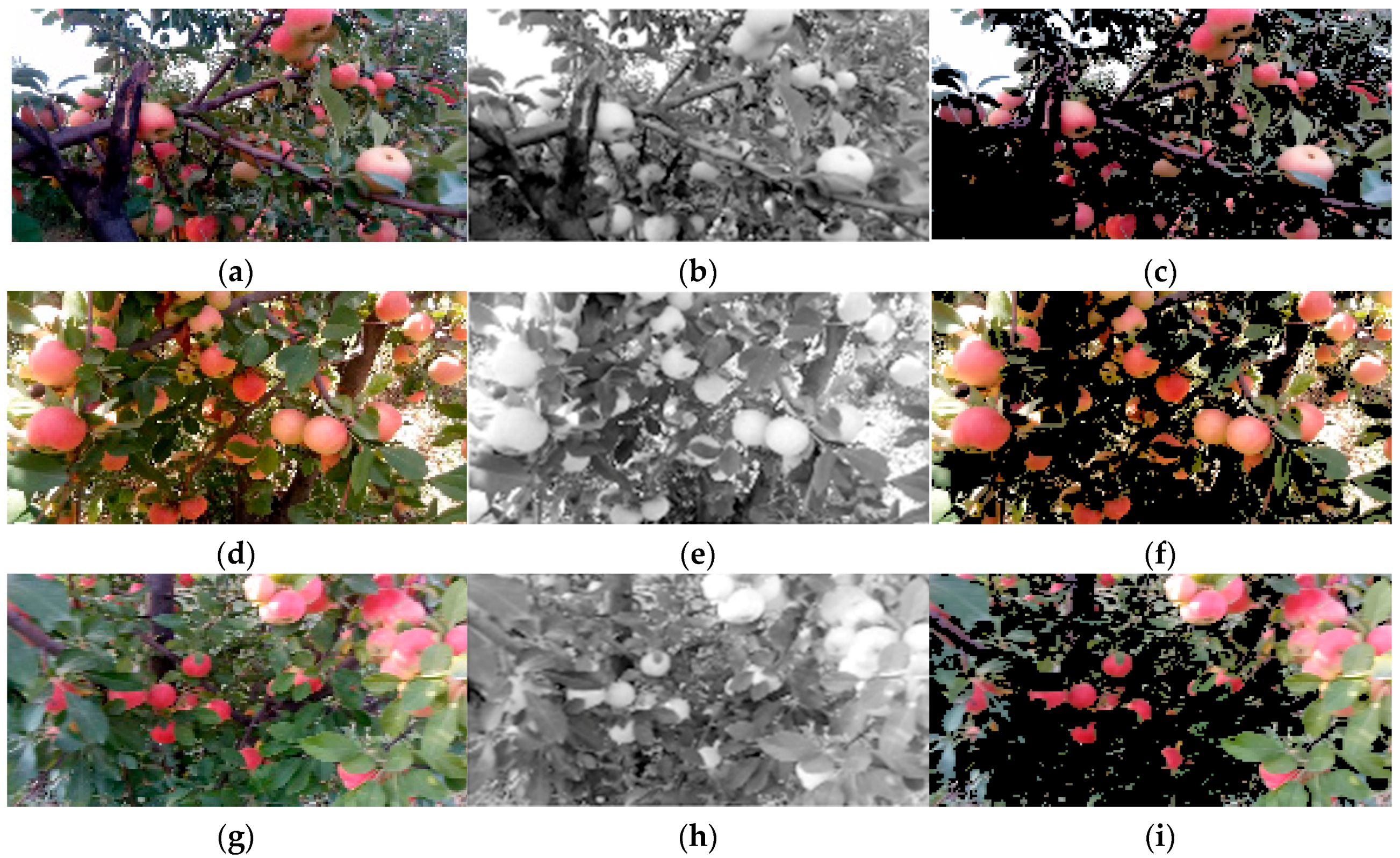

Figure 6.

Sample results of applying intensity segmentation to apple images. (a,d,g) Three color frames from the videos captured at 796, 1920, and 659 lux. (b,e,h) Intensity images obtained by selecting the R channel. (c,f,i) Segmented images after thresholding.

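
A minimal sketch of the intensity step illustrated in Figure 6: the R channel is taken as the intensity image, and pixels above a threshold are kept. The threshold value is not given here, so when none is supplied the sketch falls back on Otsu's method. Otsu's method and the function names are illustrative substitutions, not necessarily the authors' choice.

```python
import numpy as np


def otsu_threshold(channel: np.ndarray) -> int:
    """Otsu's threshold for an 8-bit single-channel image."""
    hist = np.bincount(channel.ravel(), minlength=256).astype(np.float64)
    prob = hist / hist.sum()
    levels = np.arange(256)
    omega = np.cumsum(prob)          # weight of the class at or below t
    mu = np.cumsum(prob * levels)    # cumulative mean up to t
    mu_total = mu[-1]
    with np.errstate(divide="ignore", invalid="ignore"):
        between = (mu_total * omega - mu) ** 2 / (omega * (1.0 - omega))
    between[~np.isfinite(between)] = 0.0  # empty classes contribute nothing
    return int(np.argmax(between))        # maximizes between-class variance


def segment_by_red(rgb: np.ndarray, threshold=None) -> np.ndarray:
    """Boolean mask of pixels whose R value exceeds the threshold."""
    red = rgb[..., 0]  # intensity image = R channel
    if threshold is None:
        threshold = otsu_threshold(red)  # illustrative substitute
    return red > threshold
```

A fixed threshold tuned per lighting condition could be passed instead; Otsu's method is used here only so that the sketch runs without an unreported parameter.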

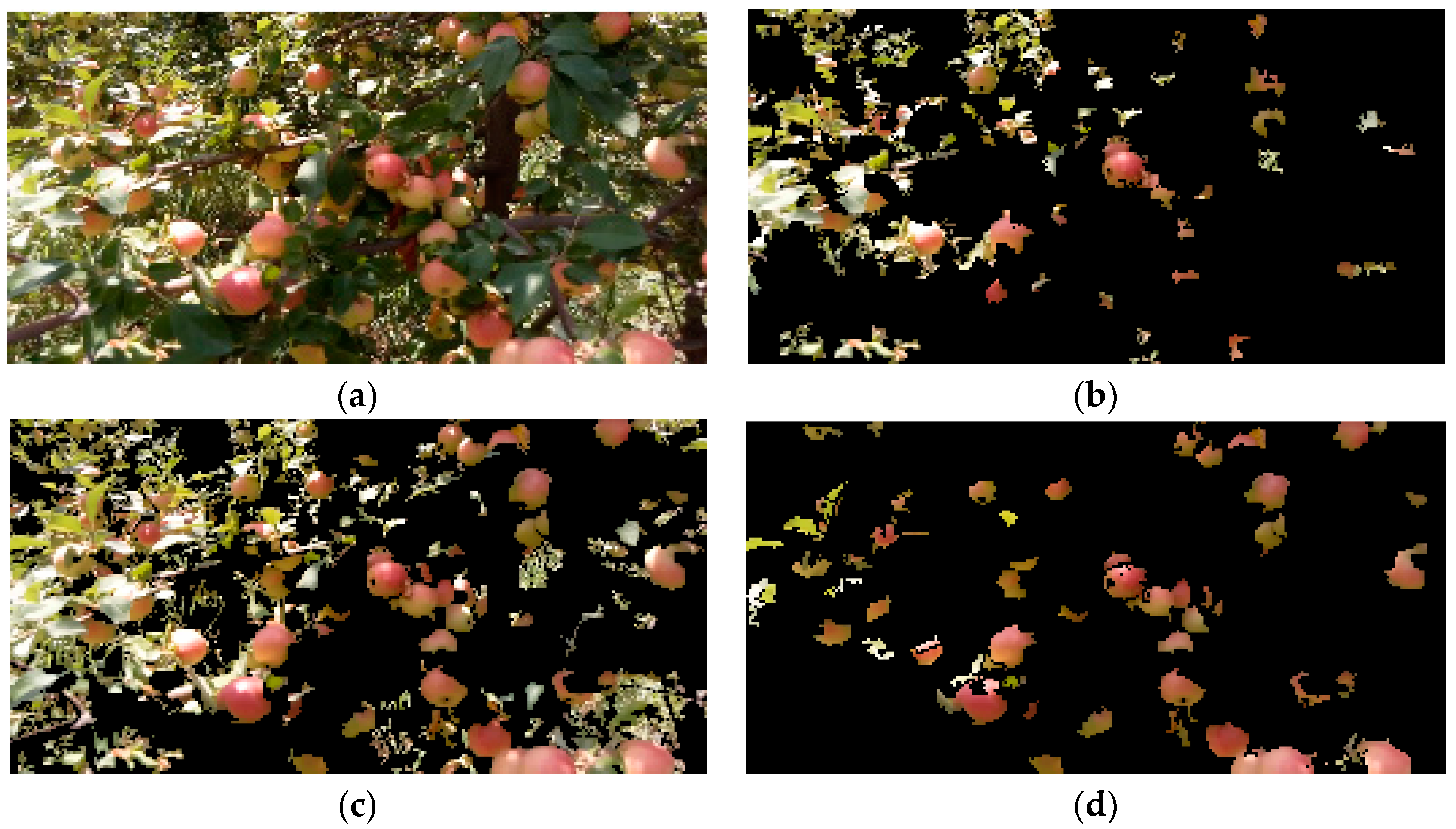

Figure 7.

Effect of the order of the color, texture, and intensity steps on the segmentation algorithm. (a) Original color image of the apples. (b) Segmentation in texture, color, and intensity order. (c) Segmentation in intensity, texture, and color order. (d) Segmentation in color, texture, and intensity order.

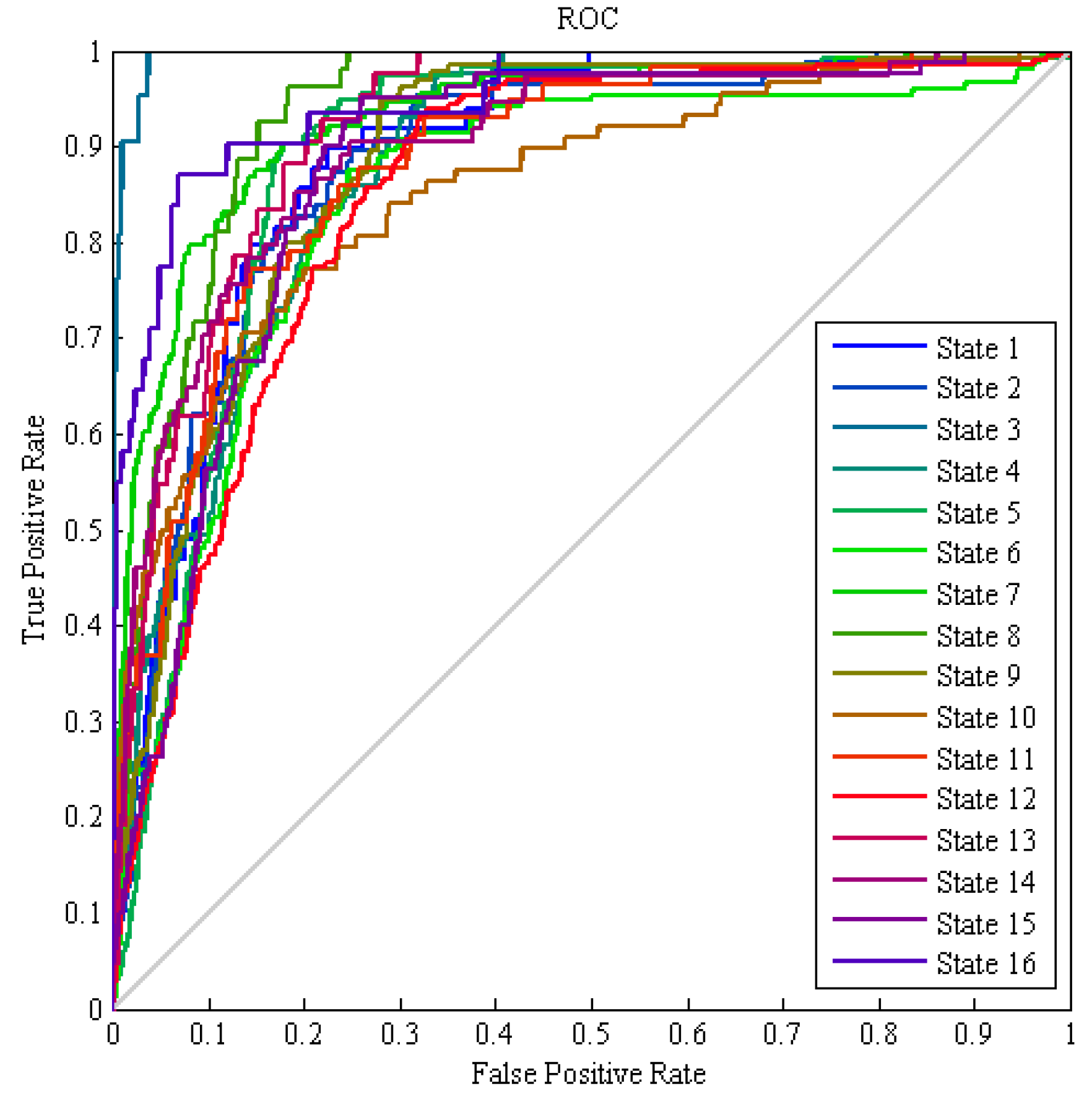

Figure 8.

Receiver operating characteristic (ROC) graph of the hybrid ANN-CA classification of frames for the 16 different classes, using color features.

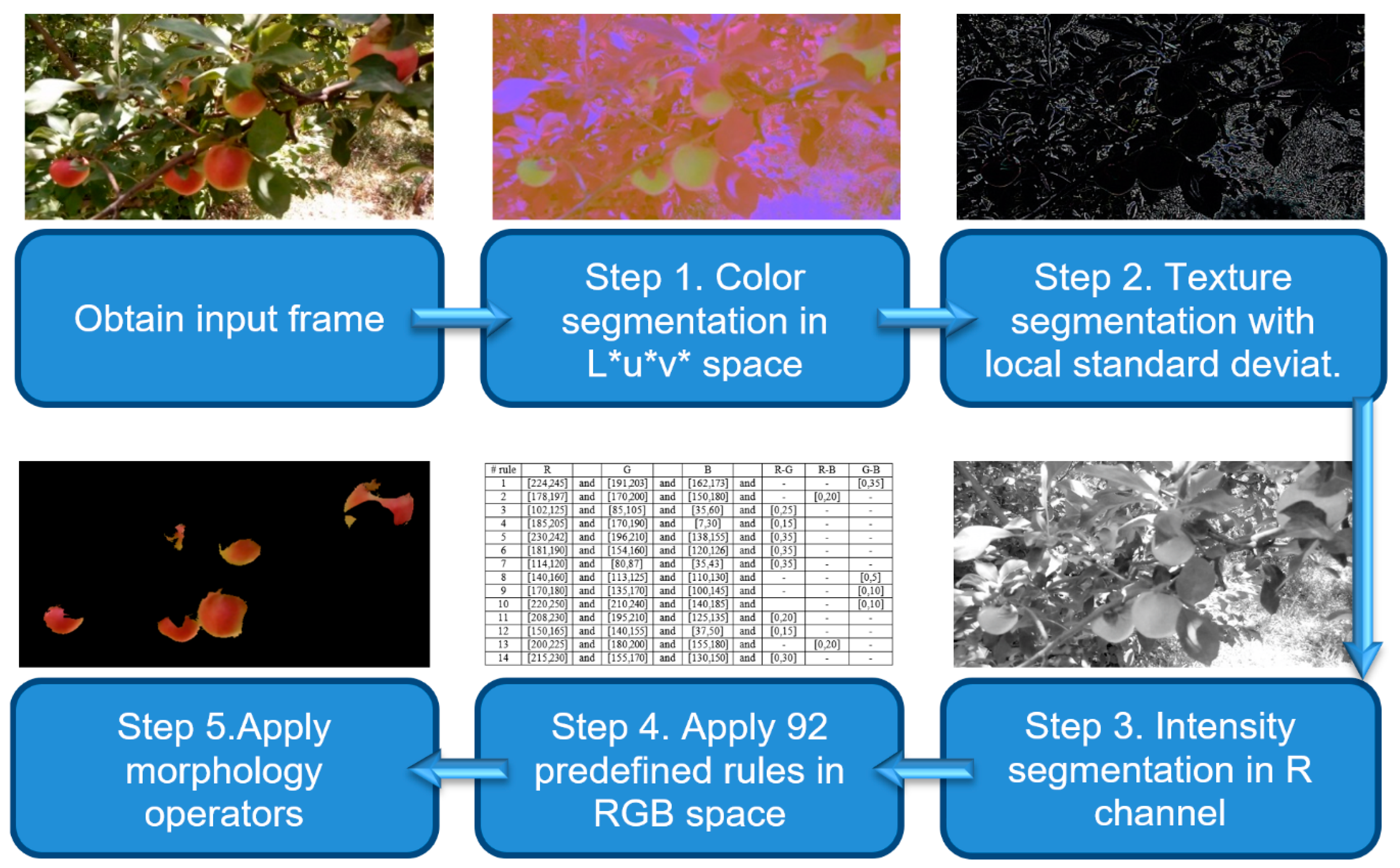

Figure 9.

Flowchart of the proposed apple segmentation algorithm.

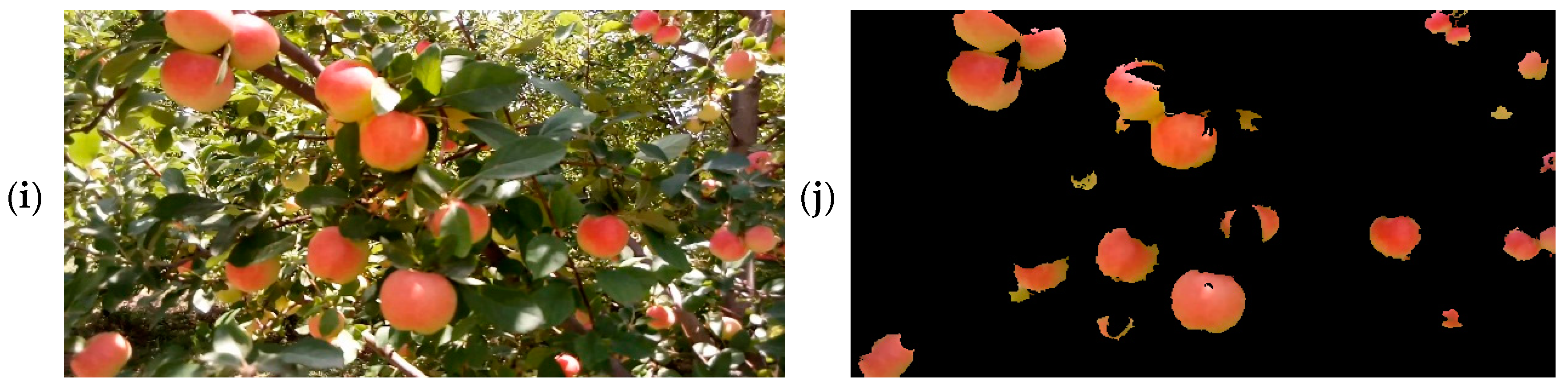

Figure 10.

Final segmentation results for five sample frames captured under different light intensities. (a,c,e,g,i) Original color images. (b,d,f,h,j) Corresponding segmented images, with the background in black.

Table 1.

Characteristics of the videos captured under 16 different light conditions. The videos were obtained on different days. In the videos of evening and morning, the sky was clear.

| Case Number | Capture Date | Weather Condition | Light Intensity (lux) | Time of Day | Video Length (mm:ss) | Total Number of Frames | Train/Test + Evaluation Frames |
|---|---|---|---|---|---|---|---|
| 1 | 16 July 2017 | Cloudy | 1025 | 13:25 | 05:05 | 1830 | 1281/549 |
| 2 | 17 July 2017 | Sunny | 1863 | 11:10 | 10:04 | 3624 | 2537/1087 |
| 3 | 20 July 2017 | Sunny | 1958 | 14:30 | 01:03 | 378 | 265/113 |
| 4 | 23 July 2017 | Cloudy | 531 | 15:35 | 07:25 | 2670 | 1869/801 |
| 5 | 25 July 2017 | Sunny | 1694 | 16:36 | 10:17 | 3702 | 2592/1110 |
| 6 | 29 July 2017 | Evening | 796 | 18:05 | 12:09 | 4374 | 3062/1312 |
| 7 | 1 August 2017 | Sunny | 1415 | 10:15 | 11:05 | 3990 | 2793/1197 |
| 8 | 3 August 2017 | Sunny | 2150 | 13:15 | 03:14 | 1164 | 815/349 |
| 9 | 5 August 2017 | Sunny | 1920 | 12:00 | 12:25 | 4470 | 3129/1341 |
| 10 | 9 August 2017 | Cloudy | 827 | 14:10 | 07:23 | 2658 | 1861/797 |
| 11 | 10 August 2017 | Evening | 659 | 19:15 | 06:00 | 2160 | 1512/648 |
| 12 | 13 August 2017 | Morning | 316 | 07:25 | 18:04 | 6504 | 4553/1952 |
| 13 | 15 August 2017 | Very cloudy | 229 | 20:05 | 00:53 | 318 | 223/95 |
| 14 | 18 August 2017 | Sunny | 1369 | 09:05 | 06:34 | 2364 | 1655/709 |
| 15 | 20 August 2017 | Cloudy | 411 | 16:25 | 07:46 | 2796 | 1957/838 |
| 16 | 22 August 2017 | Cloudy | 384 | 17:15 | 02:32 | 912 | 639/274 |
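
Table 1 does not state the frame rate. Dividing the total number of frames by the video length, read as minutes:seconds, gives exactly 6 frames per second for every case, and the first number in the last column is about 70% of each video's frames. The short Python check below reproduces this arithmetic for three rows; `duration_seconds` and `ROWS` are hypothetical names used only for illustration.

```python
def duration_seconds(mm_ss: str) -> int:
    """Parse a 'minutes:seconds' string such as '05:05' into seconds."""
    minutes, seconds = mm_ss.split(":")
    return int(minutes) * 60 + int(seconds)


# Case number -> (video length, total frames, training frames), from Table 1
ROWS = {
    1: ("05:05", 1830, 1281),
    3: ("01:03", 378, 265),
    12: ("18:04", 6504, 4553),
}

for case, (length, frames, train) in ROWS.items():
    fps = frames / duration_seconds(length)  # implied frame rate
    share = train / frames                   # fraction used for training
    print(f"Case {case}: {fps:.1f} frames/s, training share {share:.3f}")
```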

Table 2.

Color features extracted for each pixel, related to vegetation indices. Rn, Gn, and Bn refer to normalized red, green, and blue, respectively.

| Extracted Feature | Formula for Calculating the Feature |
|---|---|
| Normalized first component of RGB | Rn = R/(R + G + B) |
| Normalized second component of RGB | Gn = G/(R + G + B) |
| Normalized third component of RGB | Bn = B/(R + G + B) |
| Gray channel | gray = 0.2989 × R + 0.5870 × G + 0.1140 × B |
| Excess green [20] | EXG = 2 × Gn − Rn − Bn |
| Excess red [21] | EXR = 1.4 × Rn − Gn |
| Color index of vegetation extraction [3] | CIVE = 0.441 × Rn − 0.811 × Gn + 0.385 × Bn + 18.78 |
| Excess green minus excess red [22] | EXGR = EXG − EXR |
| Normalized difference index [23] | NDI = (Gn − Rn)/(Gn + Rn) |
| Green minus blue index [20] | GB = Gn − Bn |
| Red-blue contrast [24] | RBI = (Gn − Bn)/(Gn + Bn) |
| Green-red index [24] | ERI = (Rn − Gn) × (Rn − Bn) |
| Excess green index [24] | EGI = (Gn − Rn) × (Gn − Bn) |
| Excess blue index [24] | EBI = (Bn − Gn) × (Bn − Rn) |
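
The features in Table 2 are simple per-pixel arithmetic on the normalized components. The Python sketch below evaluates them for one pixel. It writes the red weight of the gray channel as 0.2989 (the usual luminance weight, which makes the three weights sum to 1), and it returns NaN for the ratio indices NDI and RBI when their denominator is zero; both choices, and the function names, are assumptions of the sketch.

```python
import math


def _ratio(num: float, den: float) -> float:
    """Division that yields NaN instead of raising when den == 0."""
    return num / den if den != 0 else math.nan


def color_features(r: float, g: float, b: float) -> dict:
    """Table 2 color features for one RGB pixel (R, G, B on a 0-255 scale)."""
    total = r + g + b
    if total == 0:
        raise ValueError("normalized components are undefined for a black pixel")
    rn, gn, bn = r / total, g / total, b / total
    exg = 2 * gn - rn - bn
    exr = 1.4 * rn - gn
    return {
        "Rn": rn,
        "Gn": gn,
        "Bn": bn,
        "gray": 0.2989 * r + 0.5870 * g + 0.1140 * b,
        "EXG": exg,
        "EXR": exr,
        "CIVE": 0.441 * rn - 0.811 * gn + 0.385 * bn + 18.78,
        "EXGR": exg - exr,
        "NDI": _ratio(gn - rn, gn + rn),
        "GB": gn - bn,
        "RBI": _ratio(gn - bn, gn + bn),
        "ERI": (rn - gn) * (rn - bn),
        "EGI": (gn - rn) * (gn - bn),
        "EBI": (bn - gn) * (bn - rn),
    }


print(color_features(100, 150, 50)["EXG"])  # green-dominant pixel
```

Because Rn + Gn + Bn = 1, the normalized components remove overall brightness, which is the motivation for computing the vegetation indices from them rather than from raw R, G, and B.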

Table 3.

Parameters used in the multilayer perceptron neural network for the selection of the most effective color features.

| Parameter | Value |
|---|---|
| Number of layers | 2 |
| Number of neurons | First layer: 8; second layer: 12 |
| Transfer functions | First layer: hyperbolic tangent sigmoid; second layer: hyperbolic tangent sigmoid |
| Backpropagation network training function | Scaled conjugate gradient |
| Backpropagation weight/bias learning function | Hebb with decay weight learning |

Table 4.

Values of the multilayer perceptron artificial neural network parameters adjusted by the cultural algorithm (ANN-CA method).

| Parameter | Value |
|---|---|
| Number of hidden layers | 3 |
| Number of neurons | First layer: 25; second layer: 25; third layer: 25 |
| Transfer functions | First layer: hyperbolic tangent sigmoid; second layer: hyperbolic tangent sigmoid; third layer: hyperbolic tangent sigmoid |
| Backpropagation network training function | Levenberg–Marquardt |
| Backpropagation weight/bias learning function | LVQ1 weight learning |
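
Tables 3 and 4 describe fully connected networks whose layers use the hyperbolic tangent sigmoid transfer function, tansig(n) = 2/(1 + e^(−2n)) − 1, which is identical to tanh(n). The NumPy sketch below builds only the forward pass for both topologies. It treats the listed layers as hidden layers and assumes 14 inputs (one per Table 2 feature), a linear output layer with 2 or 16 outputs, and random initialization; none of these is stated in the tables. The training and learning functions listed (scaled conjugate gradient, Levenberg–Marquardt, Hebb, LVQ1) are not reproduced, and `init_mlp` and `forward` are hypothetical names.

```python
import numpy as np


def init_mlp(n_inputs, hidden_sizes, n_outputs, seed=0):
    """Random weights and zero biases for a fully connected network."""
    rng = np.random.default_rng(seed)
    sizes = [n_inputs, *hidden_sizes, n_outputs]
    return [
        (rng.normal(0.0, 1.0 / np.sqrt(fan_in), size=(fan_in, fan_out)), np.zeros(fan_out))
        for fan_in, fan_out in zip(sizes[:-1], sizes[1:])
    ]


def forward(params, x):
    """Forward pass: tanh (tansig) hidden layers, linear output layer (assumed)."""
    h = x
    for w, b in params[:-1]:
        h = np.tanh(h @ w + b)
    w, b = params[-1]
    return h @ w + b


# Hypothetical sizes: 14 input color features, 2 or 16 output classes
table3_net = init_mlp(14, (8, 12), 2)
table4_net = init_mlp(14, (25, 25, 25), 16)
x = np.random.default_rng(1).random((5, 14))  # 5 dummy feature vectors
print(forward(table3_net, x).shape, forward(table4_net, x).shape)
```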

Table 5.

Confusion matrix of the classification of 43,914 frames into the 16 classes corresponding to the different lighting conditions, using color features and the hybrid ANN-CA method. Rows: real classes. Columns: predicted classes. The color sharing of a class is defined as the percentage of frames of that class that are classified into a different class.

| Real/Predicted | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 | 12 | 13 | 14 | 15 | 16 | Color Sharing by Class (%) | Total Color Sharing (%) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | 330 | 0 | 0 | 200 | 0 | 520 | 0 | 0 | 0 | 90 | 0 | 440 | 0 | 0 | 250 | 0 | 81.97 | 58.96 |
| 2 | 0 | 180 | 70 | 0 | 1330 | 0 | 90 | 104 | 1320 | 0 | 0 | 10 | 0 | 290 | 200 | 30 | 93.93 | |
| 3 | 18 | 0 | 262 | 6 | 0 | 0 | 10 | 0 | 10 | 24 | 0 | 42 | 0 | 0 | 6 | 0 | 30.69 | |
| 4 | 110 | 0 | 0 | 510 | 0 | 830 | 0 | 0 | 0 | 300 | 0 | 710 | 0 | 0 | 210 | 0 | 80.90 | |
| 5 | 0 | 232 | 150 | 0 | 1710 | 0 | 50 | 30 | 990 | 0 | 0 | 0 | 0 | 520 | 0 | 20 | 53.81 | |
| 6 | 80 | 0 | 0 | 160 | 0 | 2310 | 10 | 0 | 0 | 290 | 0 | 1300 | 0 | 0 | 224 | 0 | 47.19 | |
| 7 | 0 | 50 | 20 | 30 | 160 | 130 | 2750 | 80 | 140 | 80 | 0 | 360 | 0 | 20 | 130 | 40 | 31.08 | |
| 8 | 0 | 126 | 0 | 0 | 24 | 0 | 44 | 258 | 618 | 0 | 0 | 12 | 0 | 82 | 0 | 0 | 75.29 | |
| 9 | 0 | 230 | 80 | 0 | 1270 | 0 | 170 | 380 | 1930 | 0 | 0 | 0 | 0 | 330 | 30 | 50 | 56.82 | |
| 10 | 0 | 0 | 0 | 78 | 0 | 470 | 180 | 0 | 40 | 1030 | 0 | 740 | 0 | 0 | 120 | 0 | 61.25 | |
| 11 | 0 | 0 | 10 | 20 | 0 | 120 | 1090 | 0 | 70 | 90 | 0 | 550 | 0 | 20 | 190 | 0 | 100 | |
| 12 | 220 | 0 | 30 | 70 | 0 | 770 | 300 | 30 | 0 | 180 | 0 | 4330 | 0 | 0 | 574 | 0 | 33.27 | |
| 13 | 30 | 0 | 12 | 0 | 0 | 41 | 8 | 0 | 0 | 65 | 0 | 132 | 6 | 0 | 24 | 0 | 98.11 | |
| 14 | 0 | 30 | 10 | 0 | 710 | 0 | 20 | 80 | 580 | 0 | 0 | 24 | 0 | 890 | 0 | 20 | 62.35 | |
| 15 | 30 | 0 | 10 | 90 | 0 | 760 | 20 | 0 | 0 | 90 | 0 | 860 | 0 | 0 | 936 | 0 | 66.52 | |
| 16 | 0 | 10 | 0 | 0 | 40 | 0 | 110 | 30 | 72 | 0 | 0 | 30 | 10 | 30 | 90 | 490 | 44.05 | |
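
The color sharing of a class follows directly from its row in Table 5: the frames of that class predicted as any other class, divided by the row total. A minimal Python sketch, with a hypothetical function name, using the row for class 3 (116 of its 378 frames fall outside the diagonal):

```python
def color_sharing(row: list[int], class_index: int) -> float:
    """Percentage of a class's frames that were predicted as any other class."""
    total = sum(row)
    return 100.0 * (total - row[class_index]) / total


# Row 3 of Table 5 (real class 3); the diagonal entry sits at index 2
row_3 = [18, 0, 262, 6, 0, 0, 10, 0, 10, 24, 0, 42, 0, 0, 6, 0]
print(f"{color_sharing(row_3, 2):.2f}")  # -> 30.69
```

A high value therefore means that frames of that lighting condition are hard to tell apart by color from frames of other conditions; class 11, with no frames on the diagonal, reaches 100%.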

Table 6.

Thresholds defined in RGB color space for the segmentation of background pixels.

| Rule Number | R | | G | | B | | R-G | R-B | G-B |
|---|---|---|---|---|---|---|---|---|---|
| 1 | [224,245] | and | [191,203] | and | [162,173] | and | - | - | [0,35] |
| 2 | [178,197] | and | [170,200] | and | [150,180] | and | - | [0,20] | - |
| 3 | [102,125] | and | [85,105] | and | [35,60] | and | [0,25] | - | - |
| 4 | [185,205] | and | [170,190] | and | [7,30] | and | [0,15] | - | - |
| 5 | [230,242] | and | [196,210] | and | [138,155] | and | [0,35] | - | - |
| 6 | [181,190] | and | [154,160] | and | [120,126] | and | [0,35] | - | - |
| 7 | [114,120] | and | [80,87] | and | [35,43] | and | [0,35] | - | - |
| 8 | [140,160] | and | [113,125] | and | [110,130] | and | - | - | [0,5] |
| 9 | [170,180] | and | [135,170] | and | [100,145] | and | - | - | [0,10] |
| 10 | [220,250] | and | [210,240] | and | [140,185] | and | - | - | [0,10] |
| 11 | [208,230] | and | [195,210] | and | [125,135] | and | [0,20] | - | - |
| 12 | [150,165] | and | [140,155] | and | [37,50] | and | [0,15] | - | - |
| 13 | [200,225] | and | [180,200] | and | [155,180] | and | - | [0,20] | - |
| 14 | [215,230] | and | [155,170] | and | [130,150] | and | [0,30] | - | - |
| 15 | [185,195] | and | [170,185] | and | [120,130] | and | [0,20] | - | - |
| 16 | [142,167] | and | [120,139] | and | [67,97] | and | [0,30] | - | - |
| 17 | [170,185] | and | [155,170] | and | [13,30] | and | [0,20] | - | - |
| 18 | [200,222] | and | [175,202] | and | [110,125] | and | [0,35] | - | - |
| 19 | [198,225] | and | [186,210] | and | [50,76] | and | - | [0,20] | - |
| 20 | [178,182] | and | [164,167] | and | [126,133] | and | [0,18] | - | - |
| 21 | [98,116] | and | [70,98] | and | [50,83] | and | [0,30] | - | - |
| 22 | [129,140] | and | [110,120] | and | [109,120] | and | - | - | [0,10] |
| 23 | [239,255] | and | [230,255] | and | [55,170] | and | - | [0,25] | - |
| 24 | [180,255] | and | [180,255] | and | [180,255] | and | - | - | - |
| 25 | [160,200] | and | [149,180] | and | [30,60] | and | [0,20] | - | - |
| 26 | [200,220] | and | [190,210] | and | [120,145] | and | [0,15] | - | - |
| 27 | [125,140] | and | [117,130] | and | [25,42] | and | - | [0,15] | - |
| 28 | [170,190] | and | [140,155] | and | [130,140] | and | - | - | [0,20] |
| 29 | [178,185] | and | [125,140] | and | [105,125] | and | - | - | [0,25] |
| 30 | [195,220] | and | [185,210] | and | [135,155] | and | [0,15] | - | - |
| 31 | [220,235] | and | [195,215] | and | [150,180] | and | [0,30] | - | - |
| 32 | [92,102] | and | [82,94] | and | [20,35] | and | [0,15] | - | - |
| 33 | [100,110] | and | [75,90] | and | [68,82] | and | - | - | [0,15] |
| 34 | [220,230] | and | [193,207] | and | [125,145] | and | [0,30] | - | - |
| 35 | [212,230] | and | [202,220] | and | [20,38] | and | [0,15] | - | - |
| 36 | [105,108] | and | [74,77] | and | [64,66] | and | - | - | [0,15] |
| 37 | [96,100] | and | [74,78] | and | [66,70] | and | [0,10] | - | - |
| 38 | [95,127] | and | [91,110] | and | [85,90] | and | [0,35] | - | - |
| 39 | [150,155] | and | [136,140] | and | [119,122] | and | - | - | [0,20] |
| 40 | [146,166] | and | [133,150] | and | [49,54] | and | [0,20] | - | - |
| 41 | [97,110] | and | [84,95] | and | [30,45] | and | [0,15] | - | - |
| 42 | [120,135] | and | [100,115] | and | [55,68] | and | [0,25] | - | - |
| 43 | [120,155] | and | [95,120] | and | [80,100] | and | - | - | [0,25] |
| 44 | [195,210] | and | [160,185] | and | [125,150] | and | [50,70] | - | - |
| 45 | [110,115] | and | [75,78] | and | [57,78] | and | - | - | [0,20] |
| 46 | [115,130] | and | [100,115] | and | [49,55] | and | [0,20] | - | - |
| 47 | [132,142] | and | [78,85] | and | [52,58] | and | - | - | [0,30] |
| 48 | [92,130] | and | [63,95] | and | [12,35] | and | [0,35] | - | - |
| 49 | [220,225] | and | [226,242] | and | [102,110] | and | - | [0,20] | - |
| 50 | [127,142] | and | [96,110] | and | [68,77] | and | [0,35] | - | - |
| 51 | [120,140] | and | [85,110] | and | [50,67] | and | - | [0,40] | - |
| 52 | [100,160] | and | [100,160] | and | [10,50] | and | [0,10] | - | - |
| 53 | [189,200] | and | [171,191] | and | [60,9] | and | [0,15] | - | - |
| 54 | [105,120] | and | [85,100] | and | [48,60] | and | [0,25] | - | - |
| 55 | [225,235] | and | [170,180] | and | [135,145] | and | - | - | [0,35] |
| 56 | [215,220] | and | [195,205] | and | [150,160] | and | [0,25] | - | - |
| 57 | [190,202] | and | [179,190] | and | [48,53] | and | [0,20] | - | - |
| 58 | [109,122] | and | [68,90] | and | [42,58] | and | - | - | [0,35] |
| 59 | [109,120] | and | [65,80] | and | [69,75] | and | - | [0,35] | - |
| 60 | [129,140] | and | [89,95] | and | [64,75] | and | - | - | [0,27] |
| 61 | [191,195] | and | [170,178] | and | [128,132] | and | [0,25] | - | - |
| 62 | [155,170] | and | [132,152] | and | [100,125] | and | [0,35] | - | - |
| 63 | [95,105] | and | [57,72] | and | [27,42] | and | - | - | [0,35] |
| 64 | [110,135] | and | [90,120] | and | [85,110] | and | - | - | [0,15] |
| 65 | [250,255] | and | [182,188] | and | [146,149] | and | - | - | [0,40] |
| 66 | [215,220] | and | [183,190] | and | [135,140] | and | [0,35] | - | - |
| 67 | [113,122] | and | [87,93] | and | [81,87] | and | - | - | [0,10] |
| 68 | [227,242] | and | [211,225] | and | [72,82] | and | [0,20] | - | - |
| 69 | [164,172] | and | [150,159] | and | [95,105] | and | [0,20] | - | - |
| 70 | [139,145] | and | [111,120] | and | [70,81] | and | [0,35] | - | - |
| 71 | [142,152] | and | [118,127] | and | [90,107] | and | - | - | [0,30] |
| 72 | - | - | - | - | - | - | [0,10] | - | - |
| 73 | [122,131] | and | [105,110] | and | [56,60] | and | [0,22] | - | - |
| 74 | [230,245] | and | [165,175] | and | [120,130] | and | - | - | [35,50] |
| 75 | [225,235] | and | [170,180] | and | [135,145] | and | - | [0,40] | - |
| 76 | [90,110] | and | [55,80] | and | [40,62] | and | - | - | [0,20] |
| 77 | [195,207] | and | [179,182] | and | [25,33] | and | [0,27] | - | - |
| 78 | [244,248] | and | [232,236] | and | [84,88] | and | [0,15] | - | - |
| 79 | [181,189] | and | [169,178] | and | [113,121] | and | [0,15] | - | - |
| 80 | [190,210] | and | [180,205] | and | [110,125] | and | [0,20] | - | - |
| 81 | [190,210] | and | [160,180] | and | [140,170] | and | - | - | [0,20] |
| 82 | [212,220] | and | [193,205] | and | [125,148] | and | [0,25] | - | - |
| 83 | [98,108] | and | [82,90] | and | [22,38] | and | [0,25] | - | - |
| 84 | [202,227] | and | [185,215] | and | [35,40] | and | [0,20] | - | - |
| 85 | [215,237] | and | [215,227] | and | [45,65] | and | - | [0,15] | - |
| 86 | [170,205] | and | [165,185] | and | [87,100] | and | [0,20] | - | - |
| 87 | [125,160] | and | [110,145] | and | [55,90] | and | [0,20] | - | - |
| 88 | [222,230] | and | [161,169] | and | [130,137] | and | - | - | [0,35] |
| 89 | [155,185] | and | [130,165] | and | [110,150] | and | - | - | [0,20] |
| 90 | [195,215] | and | [165,195] | and | [120,140] | and | [0,35] | - | - |
| 91 | [120,128] | and | [110,118] | and | [40,55] | and | [0,15] | - | - |
| 92 | [95,115] | and | [49,70] | and | [25,50] | and | - | - | [0,25] |
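
One way to apply the rules in Table 6 is sketched below in Python. Within a rule, every listed interval must contain the corresponding value (the "and" columns), and "-" means that no condition is imposed. The sketch further assumes that intervals are inclusive, that the differences are signed (for example R − G, not |R − G|), and that a pixel is labeled background when any rule matches; only rules 1, 2, and 72 are transcribed, for brevity, and the function names are hypothetical.

```python
# Hypothetical transcription of three rows of Table 6; "-" cells are omitted.
RULES = [
    {"R": (224, 245), "G": (191, 203), "B": (162, 173), "G-B": (0, 35)},  # rule 1
    {"R": (178, 197), "G": (170, 200), "B": (150, 180), "R-B": (0, 20)},  # rule 2
    {"R-G": (0, 10)},                                                      # rule 72
]


def _quantities(r: int, g: int, b: int) -> dict:
    # Cast to int so that uint8 inputs cannot wrap around when subtracted
    r, g, b = int(r), int(g), int(b)
    return {"R": r, "G": g, "B": b, "R-G": r - g, "R-B": r - b, "G-B": g - b}


def matches(rule: dict, r: int, g: int, b: int) -> bool:
    """True when every condition of the rule holds (inclusive intervals)."""
    q = _quantities(r, g, b)
    return all(lo <= q[key] <= hi for key, (lo, hi) in rule.items())


def is_background(r: int, g: int, b: int, rules=RULES) -> bool:
    """Assumed combination: a pixel is background if any rule matches."""
    return any(matches(rule, r, g, b) for rule in rules)


print(is_background(230, 195, 165))  # -> True (satisfies rule 1)
print(is_background(200, 50, 40))    # -> False (red, apple-like pixel)
```

Storing each rule as a dictionary keeps the "-" cells implicit, so a rule such as 72, which constrains only R − G, needs no special handling.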

Table 7.

Confusion matrix and accuracy of the proposed apple segmentation algorithm on the test set.

| Predicted/Real Class | Apple | Background | All Data | Classification Error by Class (%) | Classification Accuracy (%) |
|---|---|---|---|---|---|
| Apple | 91,406 | 798 | 92,204 | 0.865 | 99.12 |
| Background | 1052 | 117,496 | 118,548 | 0.887 | |
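
In Tables 7 to 9 the row totals equal the All Data column, so each row is a real class and each column a predicted class. Under that reading, the per-class error is the off-diagonal share of a row and the accuracy is the diagonal sum divided by all 210,752 test pixels. The Python sketch below, with a hypothetical function name, computes both from the Table 7 counts.

```python
def summarize(matrix: list[list[int]]) -> tuple[list[float], float]:
    """Per-class error (%) and overall accuracy (%) of a confusion matrix.

    matrix[i][j] counts pixels of real class i predicted as class j.
    """
    n = len(matrix)
    errors = [100.0 * (sum(row) - row[i]) / sum(row) for i, row in enumerate(matrix)]
    total = sum(sum(row) for row in matrix)
    correct = sum(matrix[i][i] for i in range(n))
    return errors, 100.0 * correct / total


# Table 7 counts: rows Apple, Background; columns Apple, Background
table_7 = [[91_406, 798], [1_052, 117_496]]
errors, accuracy = summarize(table_7)
print([f"{e:.3f}" for e in errors], f"{accuracy:.2f}")
```

The same function applied to the counts in Tables 8 and 9 gives their reported accuracies of 95.23% and 96.80%.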

Table 8.

Confusion matrix and accuracy of the segmentation algorithm using neural networks on the test set.

| Predicted/Real Class | Apple | Background | All Data | Classification Error by Class (%) | Classification Accuracy (%) |
|---|---|---|---|---|---|
| Apple | 87,090 | 5114 | 92,204 | 5.55 | 95.23 |
| Background | 4932 | 113,616 | 118,548 | 4.16 | |

Table 9.

Confusion matrix and accuracy of the segmentation algorithm using color histogram models on the test set.

| Predicted/Real Class | Apple | Background | All Data | Classification Error by Class (%) | Classification Accuracy (%) |
|---|---|---|---|---|---|
| Apple | 88,103 | 4101 | 92,204 | 4.45 | 96.80 |
| Background | 2645 | 115,903 | 118,548 | 2.23 | |

Table 10.

Measures of sensitivity (Sens.), specificity (Spec.) and accuracy (Accur.) of the proposed segmentation algorithm and the two methods used for comparison: neural networks and color histogram models.

| Class | Proposed Method Sens. (%) | Proposed Method Spec. (%) | Proposed Method Accur. (%) | Neural Networks Sens. (%) | Neural Networks Spec. (%) | Neural Networks Accur. (%) | Color Histograms Sens. (%) | Color Histograms Spec. (%) | Color Histograms Accur. (%) |
|---|---|---|---|---|---|---|---|---|---|
| Apple | 99.13 | 98.86 | 99.12 | 94.45 | 94.64 | 95.23 | 95.55 | 97.09 | 96.80 |
| Background | 99.11 | 99.33 | | 95.84 | 95.69 | | 97.77 | 96.58 | |
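
The Sens. and Spec. values in Table 10 can be recomputed from the confusion matrices in Tables 7 to 9. Taking rows as real classes, Sens. for a class is its diagonal count divided by its row total, and the reported Spec. values are reproduced by dividing the diagonal count by the column total (for Apple in Table 7, 91,406/92,458 ≈ 98.86%), a ratio that is usually called precision. The Python sketch below follows that reading; the function name is hypothetical.

```python
def per_class_metrics(matrix: list[list[int]]) -> list[tuple[float, float]]:
    """(Sens., Spec.) in percent for each class, following Table 10.

    Rows are real classes, columns predicted classes. Sens. divides the
    diagonal count by the row total; Spec. as reported in Table 10 matches
    the diagonal count divided by the column total (i.e., precision).
    """
    n = len(matrix)
    column_totals = [sum(matrix[i][j] for i in range(n)) for j in range(n)]
    metrics = []
    for k in range(n):
        hits = matrix[k][k]
        metrics.append((100.0 * hits / sum(matrix[k]), 100.0 * hits / column_totals[k]))
    return metrics


proposed = [[91_406, 798], [1_052, 117_496]]  # Table 7
for name, (sens, spec) in zip(("Apple", "Background"), per_class_metrics(proposed)):
    print(f"{name}: Sens. {sens:.2f}%, Spec. {spec:.2f}%")
```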

Table 11.

Comparison of the segmentation accuracy of the proposed algorithm with that of other recent research works.

| Method | Number of Test Samples | Accuracy Rate (%) |
|---|---|---|
| Proposed method | 210,752 | 99.12 |
| ANN method | 210,752 | 95.23 |
| Color histograms method | 210,752 | 96.80 |
| Tang et al. [30] | 100 | 92.5 |
| Aquino et al. [31] | 152 | 95.72 |