1. Introduction

As an essential oil crop, rapeseed has become the main source of edible vegetable oil [1]. The harvest period has a significant impact on the quality and yield of rapeseed, and a suitable harvest period can guide the accurate management, decision-making and marketing of rapeseed [2]. Rapeseed harvesting methods fall into two types: manual harvesting and mechanized harvesting [3]. In manual harvesting, cutting after ripening, drying, threshing, cleaning and the remaining steps are all completed by hand, resulting in low harvesting efficiency and high production costs. Mechanized harvesting of rapeseed includes segmented harvesting and combined harvesting. Segmented harvesting is similar to manual harvesting: rapeseed is cut at the early yellow-ripening stage, then dried and threshed. Combined harvesting uses a combine harvester to cut, thresh and clean rapeseed in a single operation, greatly improving harvesting efficiency [4]. As of 2022, manual and mechanized harvesting each accounted for about 50% of rapeseed harvesting in China. Regardless of the harvesting method, harvesting too early or too late has a large influence on the yield and quality of rapeseed. At present, harvest time is mainly judged by visually observing the color changes of the pods and stalks, which is not only subjective but also difficult to replicate. To guarantee the quality and yield of the harvest, an intelligent method for determining the optimal harvest time of rapeseed is urgently needed.

In recent years, the rapid development of computer vision and machine learning has provided new ways to monitor crop growth. At present, hyperspectral analysis is widely used to predict crop maturity. For example, Zhao et al. [5] used the visible/near-infrared spectra of apples at different maturities, extracted spectral feature variables using random forests, and established a fast, non-destructive discrimination model for the apple harvest period using extreme learning machine (ELM) and support vector regression (SVR) models. Xie et al. acquired hyperspectral images of round-leaf spinach, screened six wavelength combinations using a genetic algorithm with a grouping elite strategy, and then established a deep-learning model for recognizing spinach freshness [6]. Jing et al. [7] used UAVs to acquire multispectral images of soybean, predicted soybean maturity time from 130 features in five bands of the multispectral images using partial least squares regression, and demonstrated the practicability of multispectral images for predicting soybean maturity. Xu et al. developed a wheat yield monitoring method using UAV hyperspectral remote-sensing images and multi-year field growth data of winter wheat, obtaining R² values of 0.55, 0.64 and 0.66 at the booting, flowering and filling stages, respectively [8]. Garcia-Martinez et al. analyzed different multispectral and red-green-blue (RGB) vegetation indices and digital estimates of vegetation coverage and density, and estimated corn yield using a neural network model [9]. Hyperspectral analysis offers high modeling accuracy, but its equipment is expensive and cumbersome to use, which makes the technology difficult to promote in rapeseed production, where small and medium-sized farms dominate.

In recent years, with advances in image sensor technology, more and more researchers have used RGB images taken by smartphones or UAVs to diagnose crops quickly and non-destructively. Laura Z. et al. captured rapeseed images with multi-camera combinations and estimated rapeseed yield parameters with a neural network from single-vine images combined with whole rapeseed images [10]. Trevisan et al. used UAV images and developed a complementary convolutional neural network to predict soybean maturity, with a root mean square error of less than two days on the validation set [11]. Ji et al. used UAV images to monitor plant height over the whole growth period of broad bean and estimated broad bean yield from plant heights at multiple time points using a machine learning algorithm, with R² reaching 0.98 [12]. Ortenzi et al. used UAVs to collect RGB images of olive tree canopies and achieved early olive yield estimation from the extracted canopy radius, with a prediction error of less than 18%; this study provided a digital method for the efficient management of olive plantations [13]. Fathipoor H. established a partial least squares regression (PLSR) model to predict forage yield from plant height and vegetation indices derived from UAV RGB images during the forage growth stage, with R² reaching 0.85 [14]. In summary, RGB-based monitoring has significant advantages in low cost and portability. However, RGB images are sensitive to lighting and carry relatively few features, so professional feature-extraction methods and complex machine learning methods are required to build accurate prediction models.

Simple, easy-to-use intelligent harvest decision-making solutions are urgently needed in rapeseed production, and combining image feature extraction with machine learning is expected to meet this need. Most existing image feature extraction methods rely on manual extraction, using the texture, color and combined features of the image as input features for machine learning [15]. Manual extraction is affected by lighting conditions, camera sensors, plant surface characteristics and varieties, making it difficult to establish a stable feature library. Rapidly developing convolutional neural networks (CNNs) can automatically learn and extract the most representative features from data during training with classification as the goal, and are gradually replacing manual feature extraction [16,17]. However, rapeseed ripens continuously during the pod stage, and its image characteristics change gradually with the ripening process: the stalk gradually turns yellow-green and then white, starting from near the root, while the pod gradually turns from green to yellow and gray. Although a CNN can extract complex and effective features, it does not model temporal continuity and is therefore not well suited to a task with such a long dynamic cycle as rapeseed maturity monitoring. Long short-term memory (LSTM) networks have shown superior performance in dynamic system analysis in many fields [18,19]. CNNs have significant advantages in exploring spatial features, while LSTMs can reveal phenological features; integrating the two can further improve the flexibility and reliability of prediction models based on multi-source production data [20]. For example, Sun et al. proposed a soybean yield prediction model based on a convolutional neural network-long short-term memory network (CNN-LSTM). Using weather data, surface temperature and surface reflectance as inputs and historical soybean yield data as labels, they combined the data and converted them into histogram-based tensors for model training. The model outperformed CNN or LSTM models alone. However, this method takes only objective climate data as input and does not involve the characteristics of the soybean growth process [7].

To realize intelligent prediction of the rapeseed harvest period, this paper proposes a CNN-LSTM deep learning network that analyzes field images of rapeseed at the pod stage to establish a yield classification prediction model. First, a CNN was used to automatically extract features from HSV color-space images of the rapeseed canopy, in which maturity-related changes are pronounced; these features were combined with stalk color features screened by random forest to form a multi-source canopy-stalk feature input that fully exploits the characteristics of maturing rapeseed. Then, an LSTM was used to handle the temporal nature of the rapeseed ripening process. Experiments showed that the accuracy of rapeseed yield classification prediction reached 96%. With the presented method, RGB images taken by mobile phones or drones alone are sufficient to accurately determine whether rapeseed has reached the optimal harvest period, providing an intelligent method for rapeseed production.

2. Materials and Methods

2.1. Experimental Materials and Data Acquisition

The rapeseed varieties were Zhongshuang 6, Dadi 55 and Huayouza 62, which were planted in Huanggang Modern Agricultural Science and Technology Park, Huanggang City, Hubei Province, in 2020 and harvested in May 2021. The experimental field was under rice rotation, and nitrogen fertilizer was applied in a 5:2:3 ratio as base, seedling and bolting fertilizer. P2O5 and K2O were each applied at 150 kg/ha in the different treatments. All phosphate fertilizer was applied as base fertilizer, and potassium fertilizer was split equally between base fertilizer and bolting fertilizer. Seedlings were raised and then transplanted, with sowing on September 24 and transplanting on October 28; the planting density was 450,000 plants/ha. The irrigation ditch between two adjacent plots was 0.2 m wide, and a 1 m wide protective strip was built around the whole site to reduce edge effects. Experimental data were collected continuously from 1 May to 15 May 2021, with 80 plants of each variety sampled every day. Data collection comprised three steps: image capture, rapeseed harvesting, and threshing and yield measurement.

2.1.1. Experimental Image Acquisition

For the rapeseed harvested on each day, the rear cameras of smartphones such as the iPhone 8 Plus and Xiaomi 10 were used to take images of the canopy and stalk (automatic mode, 12-megapixel resolution). The shooting distance was about 0.3 m from the canopy and 0.2 m from the stalk. Every day between 17:00 and 19:00, without direct sunlight, 10–20 images each were taken of the canopy and the stalk. A total of 820 images of the three varieties were collected, as shown in Table 1. Specific conditions of the shooting environment included: (1) avoiding rainy days and the day after rain, and avoiding strong reflections caused by direct sunlight on sunny days; and (2) framing canopy images to contain only pods and stalk images to contain only stalks, as far as possible.

2.1.2. Yield Data Acquisition

After the images were taken, 60 rapeseed plants were harvested manually. The plants harvested each day were recorded, numbered and dried. Once the dried rapeseed met the national procurement standard (moisture content below 8%), it was threshed and weighed manually to obtain the daily yield data.

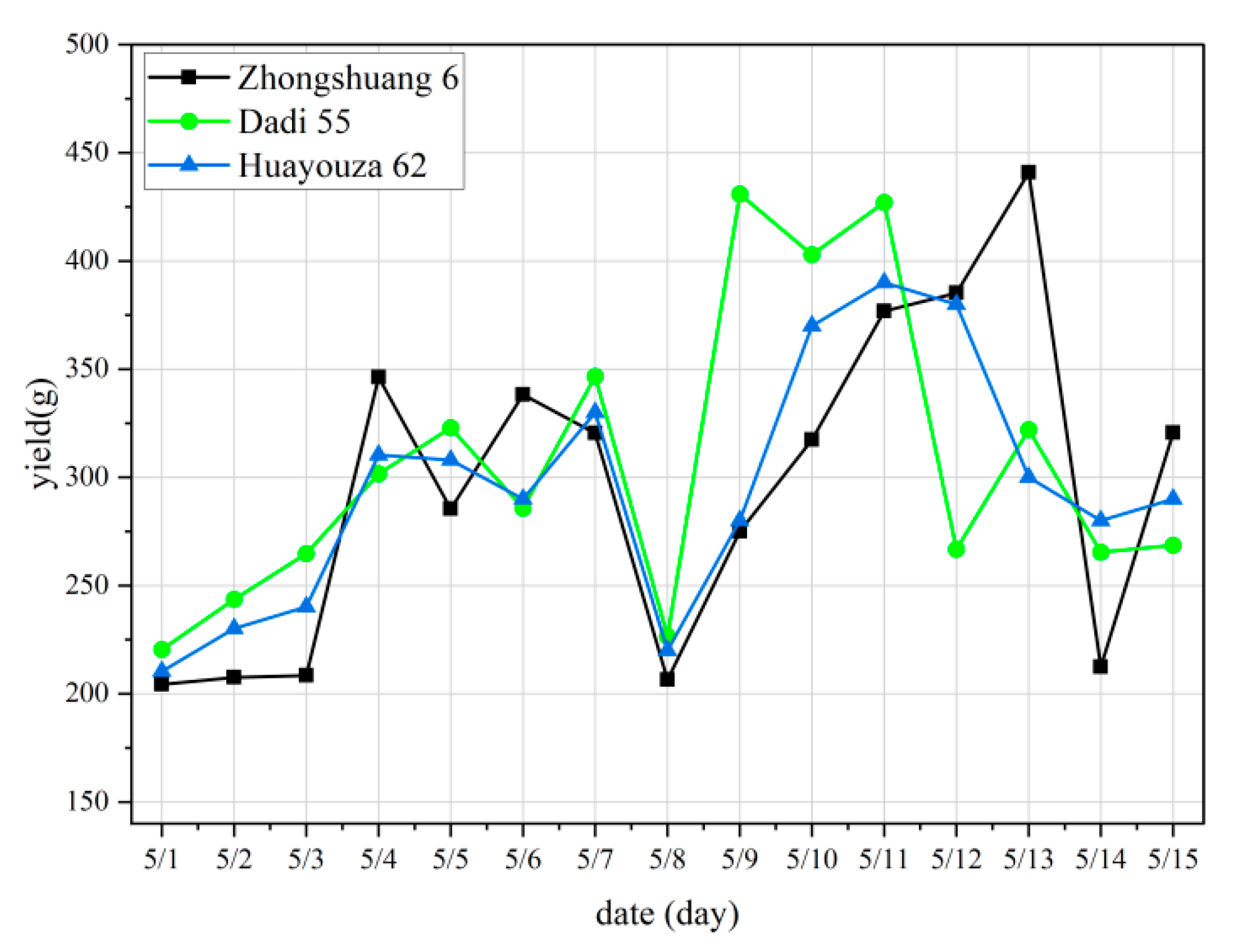

Figure 1 shows the daily yield curves of the three varieties.

The planting density of the experimental field was 450,000 plants/hm². We sampled 60 rapeseed plants each time, and the acquired yield data were converted into customary yield data according to Formula (1):

where Cy is the customary yield data and Wy is the daily yield data we acquired.
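A minimal sketch of the conversion from sampled mass to customary yield, assuming Wy is the total dried seed mass in grams of the 60 sampled plants and Cy is expressed in kg/hm². Both the units and the function name `customary_yield` are illustrative assumptions; the sketch simply scales the mean per-plant mass by the planting density.

```python
PLANTING_DENSITY = 450_000  # plants per hm^2 (Section 2.1)
SAMPLED_PLANTS = 60         # plants harvested per sampling day (Section 2.1.2)


def customary_yield(wy_grams: float,
                    density: float = PLANTING_DENSITY,
                    n_plants: int = SAMPLED_PLANTS) -> float:
    """Hypothetical form of Formula (1): grams per sample -> kg per hm^2.

    Assumes Wy is the total seed mass (g) of the sampled plants; the units
    of Cy used in the paper may differ.
    """
    per_plant_g = wy_grams / n_plants          # mean seed mass per plant (g)
    return per_plant_g * density / 1000.0      # g/hm^2 -> kg/hm^2


if __name__ == "__main__":
    # 600 g from 60 plants = 10 g/plant -> 4500 kg/hm^2 under these assumptions
    print(customary_yield(600.0))
```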

2.2. The Classification Processing of Yield Data

Nutrient deficiency, soil, climate, cultivation methods and other factors can reduce yield to varying degrees [21]. The maximum theoretical yield of a rapeseed variety is obtained by breeding experts in experimental fields and serves only as an ideal reference for establishing yield levels. In this study, the maximum yield was provided by the rapeseed research team of the School of Plant Science and Technology, Huazhong Agricultural University. The maturity process of rapeseed can be divided into three stages: green maturity (the pods are green), yellow maturity (10% of the pods begin to turn yellow) and full maturity (most pods turn yellow) [22]. Based on the ratio of effective yield to maximum theoretical yield, the absolute yield prediction problem is transformed into a yield classification problem. Following the suggestions of rapeseed planting experts, and consistent with actual agricultural production, the effective yield is divided into three levels: 90–100% of the maximum theoretical yield is the first class, 70–90% the second class, and less than 70% the third class.
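A minimal sketch of the three-class mapping described above. How the shared endpoints (exactly 90% and exactly 70%) are assigned is an assumption, since the stated intervals overlap at their boundaries; ratios above 100% are treated as first class.

```python
def yield_level(effective_yield: float, max_theoretical_yield: float) -> int:
    """Map an effective yield to yield classes 1, 2 or 3.

    Assumed boundary handling: exactly 90% -> class 1, exactly 70% -> class 2.
    """
    if max_theoretical_yield <= 0:
        raise ValueError("max_theoretical_yield must be positive")
    ratio = effective_yield / max_theoretical_yield
    if ratio >= 0.9:
        return 1   # 90-100%: first class
    if ratio >= 0.7:
        return 2   # 70-90%: second class
    return 3       # below 70%: third class


if __name__ == "__main__":
    print([yield_level(y, 100.0) for y in (95.0, 90.0, 80.0, 70.0, 50.0)])
```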

Table 2 shows the preprocessed yield data of the three varieties over 15 consecutive days, that is, the yield level corresponding to each variety's yield during monitoring. As can be seen from Table 2, the yield data of 5/8 contain an abnormally low value (rain on 5/8 caused pod loss and affected the yield data of 5/9). Even disregarding this abnormal value, the actual yield of the three varieties during the monitoring period does not follow a simple trend of rising and then falling, and the day of highest yield also differs among the three varieties. For data without clear patterns like these, it is difficult to establish a high-precision prediction model using traditional machine learning methods.

2.3. Image Dataset Augmentation

To improve the generalization ability and robustness of the trained model while expanding the image database of rapeseed at the mature stage, the collected experimental images were augmented. Augmentation consisted of randomly rotating the original images by some angle, translating them by some distance, scaling them and adding Gaussian noise, none of which affects the image class. After augmentation, each of the three varieties had a fixed set of 250 canopy images and 250 stalk images, that is, 500 images per variety and 1500 images in total, for subsequent network training and testing.
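A minimal NumPy sketch of the four augmentations named above (rotation, translation, scaling and additive Gaussian noise). The parameter ranges (±30°, ±10% shift, 0.9–1.1 scale, noise standard deviation 5) and the helper names `random_augment` and `augment_to` are illustrative assumptions, not values reported in the paper; `augment_to` tops up a set of images to a fixed count such as 250.

```python
import numpy as np


def random_augment(img: np.ndarray, rng: np.random.Generator,
                   max_angle: float = 30.0, max_shift: float = 0.1,
                   scale_range: tuple = (0.9, 1.1),
                   noise_std: float = 5.0) -> np.ndarray:
    """Randomly rotate, translate and scale an image, then add Gaussian noise.

    Works on HxW or HxWxC uint8 arrays using nearest-neighbour inverse mapping;
    output pixels that map outside the source image are filled with 0.
    """
    h, w = img.shape[:2]
    angle = np.deg2rad(rng.uniform(-max_angle, max_angle))
    scale = rng.uniform(*scale_range)
    ty, tx = rng.uniform(-max_shift, max_shift, size=2) * np.array([h, w])
    cy, cx = (h - 1) / 2.0, (w - 1) / 2.0

    # Output pixel grid, relative to the image centre with the shift removed.
    yy, xx = np.mgrid[0:h, 0:w].astype(np.float64)
    y0, x0 = yy - cy - ty, xx - cx - tx

    # Inverse of "scale, then rotate": rotate by -angle and divide by scale.
    cos_a, sin_a = np.cos(angle), np.sin(angle)
    src_x = np.rint((cos_a * x0 + sin_a * y0) / scale + cx).astype(int)
    src_y = np.rint((-sin_a * x0 + cos_a * y0) / scale + cy).astype(int)

    valid = (src_x >= 0) & (src_x < w) & (src_y >= 0) & (src_y < h)
    out = np.zeros(img.shape, dtype=np.float64)
    out[valid] = img[src_y[valid], src_x[valid]]

    out += rng.normal(0.0, noise_std, size=out.shape)  # additive Gaussian noise
    return np.clip(out, 0, 255).astype(np.uint8)


def augment_to(images, target, rng):
    """Keep the originals and append random variants until `target` images exist."""
    augmented = list(images)
    i = 0
    while len(augmented) < target:
        augmented.append(random_augment(images[i % len(images)], rng))
        i += 1
    return augmented[:target]


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    originals = [rng.integers(0, 256, size=(64, 64, 3), dtype=np.uint8) for _ in range(3)]
    print(len(augment_to(originals, 250, rng)))
```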

2.4. Image Segmentation and Feature Extraction of Rapeseed Field Image

2.4.1. Rapeseed Field Image Segmentation Using U-Net Network

To eliminate interference from soil, weeds and other background elements in the prediction model, the pods were segmented from the complex field background for subsequent processing. Deep learning methods are widely used for image segmentation, including in agriculture [23]. U-Net adopts a symmetric U-shaped structure [24] and concatenates features along the channel dimension to form thicker feature maps. In addition, U-Net performs well on small datasets and recognizes fine edges well, making it suitable for extracting slender targets such as rapeseed pods and stalks. U-Net consists of three parts. Labeled images and the original images were fed into the first four layers. In the downsampling part, the images were resized to four different sizes and high-dimensional feature maps were extracted by convolution. In the concatenation part, the feature maps of each layer were used to link the different modules; these modules are described in the lower chart of Figure 2b. In the upsampling part, the convolved high-dimensional feature maps were pooled and superposed with the feature map from each corresponding layer, and finally passed to a fully connected layer for pixel-wise discrimination. A total of 750 canopy images and 750 stalk images from the experimental image set were divided into training, validation and test sets in a 60%:20%:20% ratio. The network structure and training process of U-Net are shown in Figure 2.
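The channel-wise concatenation at a U-Net skip connection and the 60/20/20 data split can be illustrated with NumPy. The fixed max pooling and nearest-neighbour upsampling below only show how feature-map shapes line up; a real U-Net learns convolutions at every level, so this is a shape sketch under that simplification, and all function names are hypothetical.

```python
import numpy as np


def max_pool2x2(x: np.ndarray) -> np.ndarray:
    """2x2 max pooling on a (C, H, W) feature map with even H and W (encoder step)."""
    c, h, w = x.shape
    return x.reshape(c, h // 2, 2, w // 2, 2).max(axis=(2, 4))


def upsample2x(x: np.ndarray) -> np.ndarray:
    """Nearest-neighbour 2x upsampling of a (C, H, W) map (stand-in for up-convolution)."""
    return x.repeat(2, axis=1).repeat(2, axis=2)


def skip_concat(decoder_map: np.ndarray, encoder_map: np.ndarray) -> np.ndarray:
    """Skip connection: stack decoder and encoder maps along the channel axis."""
    return np.concatenate([decoder_map, encoder_map], axis=0)


def split_indices(n: int, rng: np.random.Generator, ratios=(0.6, 0.2, 0.2)):
    """Shuffle indices 0..n-1 and split them into train/validation/test subsets."""
    idx = rng.permutation(n)
    n_train = int(round(ratios[0] * n))
    n_val = int(round(ratios[1] * n))
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    enc = rng.standard_normal((16, 64, 64))       # encoder feature map
    dec = upsample2x(max_pool2x2(enc))            # down, then back up
    print(skip_concat(dec, enc).shape)            # channels doubled: (32, 64, 64)
    train, val, test = split_indices(750, rng)
    print(len(train), len(val), len(test))        # 450 150 150
```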

2.4.2. Silique Image Feature Extraction Based on CNN

The color change of the silique layer is closely related to maturity, so accurately extracting effective canopy features is crucial for the accuracy of subsequent classification and recognition. Compared with the RGB color space, the HSV model separates hue, saturation and brightness, reducing the influence of lighting on silique image features. Figure 3 shows RGB images of siliques at different stages of maturity together with the H channel of the HSV model. Compared with the RGB image, the H channel shows much more pronounced changes: as the silique matures, its color changes from fully green to yellow-green, and then to fully yellow and black. Therefore, the H component of the HSV color space was used to establish the optimal harvest time prediction model.
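The H channel can be computed directly from RGB pixels. The sketch below assumes 8-bit RGB input and returns hue in degrees; achromatic pixels are assigned hue 0 by convention. Note that OpenCV's `cv2.cvtColor(img, cv2.COLOR_RGB2HSV)` stores 8-bit hue in the range 0–179 (degrees halved), so hue values from different tools must be rescaled before being compared.

```python
import numpy as np


def rgb_to_hue_degrees(rgb: np.ndarray) -> np.ndarray:
    """Vectorised hue (H of HSV) in degrees [0, 360) for an (..., 3) uint8 RGB array."""
    rgb = rgb.astype(np.float64) / 255.0
    r, g, b = rgb[..., 0], rgb[..., 1], rgb[..., 2]
    maxc = rgb.max(axis=-1)
    delta = maxc - rgb.min(axis=-1)
    safe = np.where(delta == 0, 1.0, delta)  # avoid division by zero

    chromatic = delta > 0
    is_r = chromatic & (maxc == r)           # red is the largest component
    is_g = chromatic & (maxc == g) & ~is_r   # green is the largest component
    is_b = chromatic & ~is_r & ~is_g         # blue is the largest component

    hue = np.zeros_like(maxc)
    hue[is_r] = ((g - b)[is_r] / safe[is_r]) % 6.0
    hue[is_g] = (b - r)[is_g] / safe[is_g] + 2.0
    hue[is_b] = (r - g)[is_b] / safe[is_b] + 4.0
    return hue * 60.0


if __name__ == "__main__":
    pixels = np.array([[[255, 0, 0], [0, 255, 0], [0, 0, 255],
                        [255, 255, 0], [128, 128, 128]]], dtype=np.uint8)
    # red, green, blue, yellow, gray -> hues 0, 120, 240, 60, 0 degrees
    print(rgb_to_hue_degrees(pixels))
```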

To better exploit the spatial, color and texture characteristics of the rapeseed canopy, high-dimensional features were extracted with the CNN module from canopy images taken over 15 consecutive days. First, the CNN was pre-trained on the H-component images. The pixels $X_{i,j}$ of the image (the element in the $i$-th row and $j$-th column) were convolved with $m$ convolution kernels of size $n \times n$. After convolution, the value of each pixel in the feature map was calculated as shown in Equation (2):

$$a_{i,j} = f\left(\sum_{m}\sum_{n} W_{m,n}\,X_{i+m,\,j+n} + W_b\right) \qquad (2)$$

In Equation (2), $W_{m,n}$ represents the weight in row $m$, column $n$ of the convolution kernel (here $m$ and $n$ index the kernel rows and columns), $W_b$ represents the bias term, $a_{i,j}$ represents the element in row $i$, column $j$ of the feature map, and $f$ is the activation function.

After convolution, the m feature maps were average-pooled, that is, each n × n region of a feature map was replaced by its average value, further reducing the image size while retaining important information. After the last pooling layer, the results were arranged into a row vector x_i to form a fully connected layer. Finally, the extracted high-dimensional features were input into the LSTM for training to establish a classification model.
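Equation (2) and the average-pooling step can be illustrated with a single-layer NumPy sketch. This is not the Inception V3 network named in the Conclusions; the kernel values, the ReLU activation and the 2 × 2 pooling window are assumptions chosen to give a small worked example, and the function names are hypothetical.

```python
import numpy as np


def relu(x: np.ndarray) -> np.ndarray:
    return np.maximum(x, 0.0)


def conv2d_valid(x, kernel, bias, activation=relu):
    """Equation (2) for one kernel: a_ij = f(sum_mn W_mn * X_{i+m, j+n} + W_b), no padding."""
    kh, kw = kernel.shape
    out_h, out_w = x.shape[0] - kh + 1, x.shape[1] - kw + 1
    out = np.empty((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            out[i, j] = np.sum(kernel * x[i:i + kh, j:j + kw]) + bias
    return activation(out)


def avg_pool(fmap, size):
    """Non-overlapping size x size average pooling (trailing rows/cols are dropped)."""
    h2, w2 = fmap.shape[0] // size, fmap.shape[1] // size
    cropped = fmap[:h2 * size, :w2 * size]
    return cropped.reshape(h2, size, w2, size).mean(axis=(1, 3))


def extract_features(h_channel, kernels, biases, pool=2):
    """Convolve with each kernel, average-pool, and flatten into one feature vector."""
    maps = [avg_pool(conv2d_valid(h_channel, k, b), pool) for k, b in zip(kernels, biases)]
    return np.concatenate([m.ravel() for m in maps])


if __name__ == "__main__":
    x = np.arange(16, dtype=np.float64).reshape(4, 4)   # toy 4x4 H-channel patch
    feats = extract_features(x, [np.ones((2, 2)), np.zeros((2, 2))], [0.0, 1.0])
    print(feats)  # values 20.0 and 1.0
```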

2.4.3. Stalk Image Feature Extraction Based on Random Forest

In agricultural production, the yellow-green ratio of stalks is typically used as an important indicator of whether rapeseed is mature. To describe the color changes of rapeseed stalks during ripening more comprehensively and accurately, 31 color features of the stalks were extracted from RGB, HSV, YCbCr and other color spaces [25]. Among them, seven vegetation-index color features based on the RGB color model, such as ExR, were adopted, as shown in Table 3. For the HSV and YCbCr color spaces, the mean, standard deviation, skewness and kurtosis of each of the three channels were selected.
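A sketch of how a few of the stalk color features could be computed. Table 3 is not reproduced here, so the index definitions are assumptions based on common usage: ExR = 1.4r − g on chromatic coordinates, NDI = (G − R)/(G + R), and full-range ITU-R BT.601 (JPEG) YCbCr. Kurtosis is taken as the non-excess fourth standardized moment.

```python
import numpy as np


def chromatic_coords(rgb):
    """Normalise R, G, B so they sum to 1 per pixel (black pixels stay 0)."""
    rgb = rgb.astype(np.float64)
    total = rgb.sum(axis=-1, keepdims=True)
    total[total == 0] = 1.0
    return rgb / total


def exr(rgb):
    """Mean excess-red index, ExR = 1.4 r - g, over all pixels."""
    c = chromatic_coords(rgb)
    return float(np.mean(1.4 * c[..., 0] - c[..., 1]))


def ndi(rgb):
    """Mean normalised difference index, (G - R) / (G + R), over all pixels."""
    rgb = rgb.astype(np.float64)
    r, g = rgb[..., 0], rgb[..., 1]
    denom = np.where(g + r == 0, 1.0, g + r)
    return float(np.mean((g - r) / denom))


def rgb_to_ycbcr(rgb):
    """Full-range ITU-R BT.601 (JPEG) RGB -> YCbCr."""
    rgb = rgb.astype(np.float64)
    r, g, b = rgb[..., 0], rgb[..., 1], rgb[..., 2]
    y = 0.299 * r + 0.587 * g + 0.114 * b
    cb = 128.0 - 0.168736 * r - 0.331264 * g + 0.5 * b
    cr = 128.0 + 0.5 * r - 0.418688 * g - 0.081312 * b
    return np.stack([y, cb, cr], axis=-1)


def channel_stats(channel):
    """Mean, standard deviation, skewness and (non-excess) kurtosis of one channel."""
    x = np.asarray(channel, dtype=np.float64).ravel()
    mu, sigma = float(x.mean()), float(x.std())
    if sigma == 0.0:
        return mu, 0.0, 0.0, 0.0
    z = (x - mu) / sigma
    return mu, sigma, float(np.mean(z ** 3)), float(np.mean(z ** 4))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    stalk = rng.integers(0, 256, size=(32, 32, 3), dtype=np.uint8)
    ycc = rgb_to_ycbcr(stalk)
    features = [exr(stalk), ndi(stalk)]
    for k in range(3):                      # Y, Cb, Cr statistics
        features.extend(channel_stats(ycc[..., k]))
    print(len(features))                    # 2 indices + 3 channels x 4 statistics
```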

To address the large number and redundancy of the original features, the stalk color features were screened to improve their effectiveness. Random forests can effectively screen stalk color features by ranking feature importance internally [26]. Samples described by the 31 color features were drawn randomly with replacement to form a training set; repeating this N times yields N training sets and N decision trees. The samples not drawn (out-of-bag data) were used as test data to calculate the prediction error of each decision tree, called the out-of-bag error $\mathrm{errOOB1}$. Random noise was then added to feature q in the out-of-bag data and the out-of-bag error was calculated again, recorded as $\mathrm{errOOB2}$. Over the N trees, the importance of feature q was calculated as shown in Equation (3) [27]:

$$E(q) = \frac{1}{N}\sum_{i=1}^{N}\left(\mathrm{errOOB2}_i - \mathrm{errOOB1}_i\right) \qquad (3)$$

where E(q) is the feature importance, N is the number of classification decision trees, q is the feature currently being evaluated, and the subscript i denotes the i-th tree. If the out-of-bag accuracy decreases significantly after random noise is added (that is, errOOB2 increases), feature q has a large impact on the prediction results and is therefore of high importance. The top six stalk color features obtained from the random forest were the mean of channel A, the mean of channel B, the mean of channel Cb, ExR, NDI, and the mean of channel Y. These six features are the key image features of the stalk.
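Equation (3) can be sketched in NumPy as follows. Two assumptions apply: each tree is represented by a hypothetical `(predict_fn, X_oob, y_oob)` triple, and feature q is perturbed by permuting its out-of-bag column (Breiman's permutation variant) rather than by adding noise. For a fitted scikit-learn model, `sklearn.inspection.permutation_importance` provides a comparable importance measure computed on a held-out set.

```python
import numpy as np


def oob_error(predict, X, y):
    """Misclassification rate of one tree on its out-of-bag samples."""
    return float(np.mean(predict(X) != y))


def feature_importance(trees, q, rng):
    """Equation (3): mean over trees of (errOOB2 - errOOB1) for feature q."""
    diffs = []
    for predict, X_oob, y_oob in trees:
        err1 = oob_error(predict, X_oob, y_oob)              # errOOB1
        X_perturbed = X_oob.copy()
        X_perturbed[:, q] = rng.permutation(X_perturbed[:, q])
        err2 = oob_error(predict, X_perturbed, y_oob)        # errOOB2
        diffs.append(err2 - err1)
    return float(np.mean(diffs))


def top_k_features(trees, n_features, k, rng):
    """Rank all features by Equation (3) and return the k most important indices."""
    scores = np.array([feature_importance(trees, q, rng) for q in range(n_features)])
    return np.argsort(scores)[::-1][:k], scores


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    X = rng.uniform(size=(200, 3))
    y = (X[:, 0] > 0.5).astype(int)          # only feature 0 carries information

    def stump(X):                            # toy "tree" that uses feature 0
        return (X[:, 0] > 0.5).astype(int)

    trees = [(stump, X, y)] * 5
    top, scores = top_k_features(trees, n_features=3, k=2, rng=rng)
    print(top[0], scores)                    # feature 0 ranks first
```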

2.5. Prediction Model of Yield Level Based on CNN-LSTM

LSTM is a special type of recurrent neural network (RNN) designed mainly to address vanishing and exploding gradients when training on long sequences. Unlike ordinary feedforward neural networks, it can process sequential data. In the continuous time series of rapeseed maturation, the maturity on one day is closely related to, and affects, the maturity on the next day. In addition, rapeseed in the field matures unevenly, and the effective yield fluctuates within a small range, so using only the features of individual images without considering the relationships between images would lead to wrong predictions. The transfer of the hidden state h between LSTM cells describes exactly this relationship between images. For continuously collected image datasets, CNN-LSTM can not only fully mine the feature information carried by each individual image, but also exploit the continuity between features to mine the temporal information carried across images, maximizing discrimination accuracy. Therefore, a CNN-LSTM neural network architecture was used in this paper to establish the prediction model of rapeseed harvest time.

The recognition process of the CNN-LSTM framework is shown in Figure 4. The inputs of this framework are the silique features extracted by the CNN and the stalk features screened by random forest. The initial state h0 is a set of manually set initial parameters; it starts at zero and changes to other values as the network is trained. Because yield is divided into three levels, the CNN used for feature extraction has three output classes, and the final recognition result likewise has three classes. High-dimensional features are extracted from the last fully connected layer of the CNN and used as one-dimensional feature vectors of size … × 1, where t is the number of images in each time step. Each gate unit contains an m × n matrix, where m represents the canopy pod features extracted by the CNN together with the stalk features obtained by machine learning screening, and n represents all time steps. Cross-entropy is used as the loss function to adjust the network parameters according to classification performance on the dataset. After training, the final hidden-layer state represents the important encoded feature information of each period. This one-dimensional feature vector is connected to a fully connected layer and then to a softmax layer for classification.
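A forward-pass sketch of the classification head described above, in NumPy: each day's CNN silique features are concatenated with the stalk features, a single-layer LSTM carries the state from day to day starting from h0 = 0, and the final hidden state passes through a fully connected layer and softmax over the three yield levels. The weight shapes, gate ordering, feature sizes and function names are assumptions for illustration; the actual structural parameters are those in Table 4, and training by back-propagating the cross-entropy loss is omitted.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def lstm_last_hidden(xs, W, U, b):
    """Run a single-layer LSTM over the sequence xs and return the final hidden state.

    W: (4H, D) input weights, U: (4H, H) recurrent weights, b: (4H,) bias,
    with gate blocks ordered input, forget, output, candidate.
    """
    hidden = U.shape[1]
    h = np.zeros(hidden)   # initial state h0 = 0
    c = np.zeros(hidden)   # initial cell state
    for x in xs:
        z = W @ x + U @ h + b
        i = sigmoid(z[:hidden])
        f = sigmoid(z[hidden:2 * hidden])
        o = sigmoid(z[2 * hidden:3 * hidden])
        g = np.tanh(z[3 * hidden:])
        c = f * c + i * g          # forget part of the old memory, add the new candidate
        h = o * np.tanh(c)         # state passed on to the next day's image
    return h


def softmax(z):
    e = np.exp(z - z.max())
    return e / e.sum()


def predict_yield_level(cnn_feats, stalk_feats, W, U, b, W_fc, b_fc):
    """Fuse per-day CNN and stalk features, run the LSTM, return class probabilities."""
    xs = [np.concatenate([p, s]) for p, s in zip(cnn_feats, stalk_feats)]
    h_final = lstm_last_hidden(xs, W, U, b)
    return softmax(W_fc @ h_final + b_fc)   # fully connected layer + softmax


def cross_entropy(probs, label):
    """Loss for one sequence whose true yield-level index is `label`."""
    return float(-np.log(probs[label]))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    days, d_cnn, d_stalk, hidden = 10, 32, 6, 16
    D = d_cnn + d_stalk
    W = rng.normal(0, 0.1, (4 * hidden, D))
    U = rng.normal(0, 0.1, (4 * hidden, hidden))
    b = np.zeros(4 * hidden)
    W_fc, b_fc = rng.normal(0, 0.1, (3, hidden)), np.zeros(3)
    probs = predict_yield_level(rng.normal(size=(days, d_cnn)),
                                rng.normal(size=(days, d_stalk)), W, U, b, W_fc, b_fc)
    print(probs, cross_entropy(probs, 0))
```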

The LSTM structural parameters are shown in Table 4. The hyperparameters were determined from experimental results on large datasets.

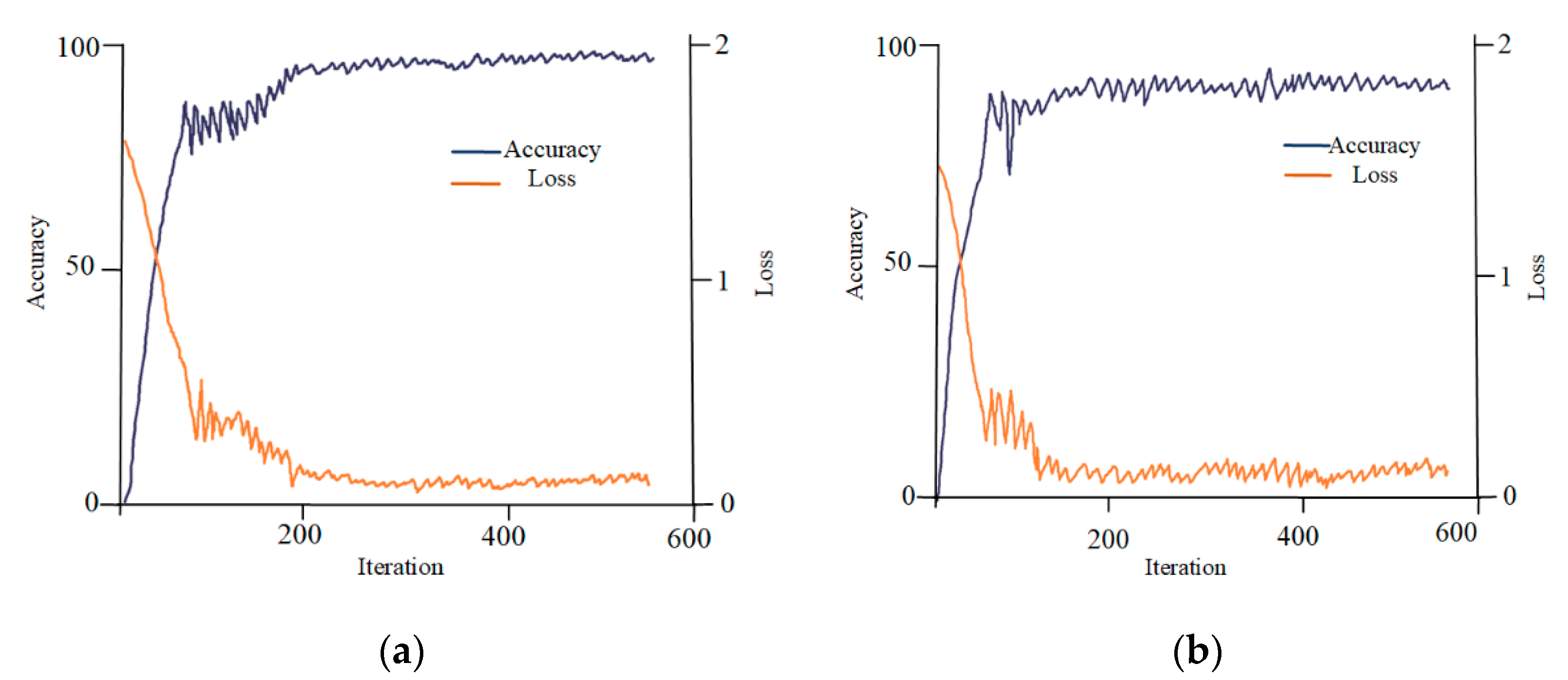

4. Conclusions

In this paper, a prediction model of the optimal harvest time of rapeseed based on the CNN-LSTM deep learning framework was proposed. Using rapeseed canopy and stalk images at the pod stage, high-dimensional features of the canopy images were extracted with a CNN, and the color features of rapeseed stalks were screened with random forests, forming joint silique-stalk features. Based on these joint features, an Inception V3-LSTM model for predicting rapeseed yield grade was established using an LSTM recurrent neural network. The experimental results showed a classification accuracy of 94% on the validation set for the three rapeseed varieties. Replacing traditional manual feature extraction with CNN-based image feature extraction not only greatly reduces unnecessary workload but also helps avoid the impact of redundant features on the recognition rate, and it is robust for images with less pronounced color features. In addition, following the experience of manually judging rapeseed maturity, stalk color features were added, which improved the classification recognition rate by 6%. Using the Timeline tool of TensorFlow, the execution time of the algorithm was measured on 12-megapixel images of rapeseed canopy and stalk; averaged over 20 runs, it was 0.54 s.

We present a precise prediction method for three rapeseed varieties. For a new rapeseed variety, the presented method can be used to predict harvest time through the following steps: (1) breeding experts provide the maximum theoretical yield of the new variety, and yield is divided into three levels according to the intervals 90–100%, 70–90% and <70%; (2) image and yield data are collected from the yellow maturity stage onward: canopy and stalk images are taken for 8–12 consecutive days, and 60 plants are harvested to measure yield; (3) the presented method is used to process the collected images and yield data to obtain the yield level prediction model; and (4) steps (2) and (3) need only be carried out in the first year, after which the model can be applied in subsequent years. After the crop enters the yellow maturity stage, workers can monitor changes in rapeseed yield by taking canopy and stalk images and processing them with the model. If the predicted yield level is 90–100%, harvesting can begin. Under conditions that cause serious yield reduction, the prediction will remain at a low level even at full maturity, and the crop should then be harvested immediately.
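The field decision in step (4) reduces to a small rule. The function name, its inputs and the return strings are hypothetical; `predicted_level` is assumed to be the class (1, 2 or 3) output by the CNN-LSTM model for the latest images.

```python
def harvest_advice(predicted_level: int, full_maturity_reached: bool) -> str:
    """Hypothetical decision rule for step (4).

    predicted_level: 1 (90-100%), 2 (70-90%) or 3 (<70%) from the CNN-LSTM model.
    """
    if predicted_level == 1:
        return "harvest"          # first-class yield reached
    if full_maturity_reached:
        return "harvest"          # serious yield reduction: level stays low, harvest now
    return "keep monitoring"      # capture new canopy and stalk images on the next day


if __name__ == "__main__":
    print(harvest_advice(2, False), harvest_advice(1, False), harvest_advice(3, True))
```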

The method proposed in this paper automates feature extraction from canopy and stalk images, so prediction models for more varieties and larger datasets can be constructed efficiently. The method therefore has good adaptability and practicality. Furthermore, the data and model in this article will be deployed to a cloud database and made freely available to users, who can use mobile terminals to judge the optimal harvest time of rapeseed conveniently and quickly, effectively improving the intelligence of rapeseed production and reducing yield loss.