Abstract

The SUREVEG project focuses on improving biodiversity and soil fertility in organic agriculture through strip-cropping systems. To offset the additional labour these systems require, a robotic tool is proposed. Within the project, a modular proof of concept (POC) version will be produced that combines detection technologies with actuation on a single-plant level in the form of a robotic arm. This article focuses on the detection of crop characteristics through point clouds obtained with two lidars. Separation into soil and plants was successfully achieved without additional data from other sensor types, by calculating weighted sums, resulting in a dynamically obtained threshold criterion. This method was able to extract the vegetation from the point cloud in strips with varying vegetation coverage and sizes. The resulting vegetation clouds were compared to drone imagery and matched the green areas in those images to a satisfactory level. By dividing the remaining clouds of overlapping plants by means of the nominal planting distance, the number of plants, their volumes, and thereby the expected yields per row could be determined.

1. Introduction

Through the modernization of agricultural machinery and the accompanying development of common agricultural practices in recent decades, the focus has mainly been on productivity, neglecting the natural balances of the environment. Luckily, the market for organic agriculture is growing [1], driving conventional farmers to change their businesses. In Europe specifically, the growth in demand alongside recent legislative initiatives has increased the organically cultivated area by around 12 percent per year in the 2000s [2]. Organic farming optimizes nutrients in the soil and benefits biodiversity, while reducing water pollution, among other things [3]. The SUREVEG project [4] focuses on the effects of different organic fertilizer strategies in strip-cropping systems to increase biodiversity [5], improve soil fertility, and reduce the use of natural resources [6,7]. The project’s field trials are spread out all over Europe to account for the variation in the environmental effects of agricultural practices within and between European Union (EU) countries [8]. Using a multi-cropping system enhances resilience [5], system sustainability, local nutrient recycling, and soil carbon storage, all while increasing production [9]. The resulting increase in management complexity calls for a robotic proof-of-concept to apply said fertilizer strategies.

Conventional agriculture is based on an industrial farming concept, growing single species in large fields, and applying fertilisers and pesticides at homogeneous doses to ensure maximum yields. Organic farming proposes a different paradigm, using little to no chemicals on smaller-scale farms. Naturally, this goes hand in hand with a higher labour cost. Precision agriculture technologies such as GPS positioning, variable rate dosing systems, and sensors to gather information on plant, soil, and environment have been proposed as the natural evolution of industrial farming towards a future with large-scale automated (but still industrialised) agriculture. By applying automation technologies to organic farming, however, the technological gap between current labour-intensive organic agriculture practices and increasing consumer demand can be closed, while pursuing maximum environmental preservation.

Advancements in robotics nowadays have found applications in nearly all industries, to which agriculture is no exception [10]. Agricultural machinery is getting more and more advanced and the first autonomous machines (robots) have already entered the commercial market [11,12]. To minimize the amount of soil compaction the ideal solution is a gantry system carrying the sensors and actuators [13]. Regardless of the type of supporting structure, a perception system to register the current application area is key, here referring to the state of the (individual) plants, while not excluding possible expansion to soil characteristics or any other parameter of interest. As summarized in References [14,15] there are many (visual) ways of plant detection being investigated. In this paper, the optical sensing is performed using a Light Detection and Ranging sensor, also known as lidar, following References [16,17,18], among others.

To counteract the previously mentioned increase in complexity of management demands of a multi-crop system, one of the main objectives of the SUREVEG project is the development of automated machinery for the management of strip-cropping systems. The development of the proposed robotic tool will be based on a modular gantry system, following Reference [19]. Within the project framework, a modular proof-of-concept version will be produced, combining sensing technologies with actuation in the form of a robotic arm. This proof-of-concept will focus on fertilization needs, which are to be identified in real-time and actuated upon on a single-plant scale. Within this conceptual framework, first sensing approaches consist of lidar measurements of various strip-cropping fields, to be able to compare crop architecture in-strip to its monoculture counterpart. The hypothesis of this work is that the 3D point clouds obtained from a set of lidars mounted in front of a GPS guided tractor can be separated into plant and soil clouds using a customized algorithm based on a weighted sum, with the objective of calculating plant volumes and volume consistency within rows, as well as between rows, and even fields. By automatically extracting the 3D model of each plant within a field the crop volume can be estimated to address their nutritional needs on a single-plant scale, hereby facilitating the change from conventional farming to organic strip-cropping within a precision farming framework.

This paper is structured as follows: in Section 2 the experimental fields, hardware, and algorithms are all introduced in detail. This is followed by the results in Section 3, which has been divided into subsections containing the specific results that correspond to each of the processing steps explained previously. In Section 4, these results are compared to similar studies and placed into context, highlighting the differences and similarities. To conclude, Section 5 summarises the main findings.

2. Materials and Methods

2.1. Experimental Fields

The SUREVEG project relies heavily on the field studies of all its partners, which are located throughout Europe. The organic strip-cropping fields used for this study are located in Wageningen, the Netherlands, on the campus of Wageningen University & Research (WUR) (51°59′27.4″ N, 5°39′36.0″ E). The large-scale, long-term field trial established in 2018 follows a complete randomised block design with three replicates of 4 treatments with 5 crops (2 year grass ley, cabbage, leek, potato, wheat). The crops in this experiment were selected based on market share and soil type. Comparisons to large-scale references were obtained via an unbalanced incomplete block design, where each large-scale reference is accompanied by one of the treatments.

Lidar measurements of different crops were performed in August 2018. The data sets used for this work all belong to strips of cabbages of a single variety, that is, Brassica oleracea L. var. Capitata, ’Rivera’ commercial cultivar. Of the 12 data sets used in this work, 8 were strips of 3 m wide alternated with wheat strips of equal width, each containing 4 equally spaced rows. The remaining 4 data sets originate from a nearby monoculture field exclusively carrying this variety of cabbages at an equal row spacing. With respect to the 9 standard growth stages as defined in Reference [20], the majority of the cabbages were in stage 6. In 2018 in particular, the meteorological circumstances leading up to these measurements were rather extreme, as shown in Reference [21] among others, negatively impacting development and yields as demonstrated in, for example, References [22,23]. Due to the heterogeneity of the development throughout the research fields, the crops were harvested in two sessions, with nearly a month in between the two, as listed in Table 1.

Table 1.

Timeline of the measured crop, indicated in Days After Transplant (DAT).

2.2. Hardware

The two optical sensors employed in this study are lidars of the type LMS111 produced by SICK AG, set to 50 Hz and a resolution of 0.5 degrees. Both were attached to the front three-point linkage of a tractor, kindly provided by the staff of WUR, by means of aluminum Bosch Rexroth profiles, as shown in Figure 1, roughly 1 m above the soil. The wheelbase of the tractor covers exactly two of the four rows per strip, allowing it to pass in between the individual crop rows. Furthermore, the tractor has GPS-guidance, which was set to a fixed speed of 2 km/h for these measurements. This speed is assumed to have remained constant and, together with the time difference between the scans, serves as the basis for calculating the displacement between two consecutive scan lines. The lasers were directly connected to a laptop, which registered the data using the manufacturer’s proprietary software.
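As an illustration of how a constant driving speed and scan timestamps can place each scan line along the row, the following minimal sketch converts one scan of the downward-looking lidar into 3D points. It is not the processing code used in this study. The function name `scan_to_points`, the angle convention (0 degrees pointing straight down), the start angle, and the mounting height of exactly 1 m are all assumptions made for the example.

```python
import math

SPEED_M_S = 2.0 / 3.6  # 2 km/h from the text, assumed constant


def scan_to_points(ranges_m, timestamp_s, t0_s,
                   start_angle_deg=-45.0, step_deg=0.5, mount_height_m=1.0):
    """Convert one scan line into (x, y, z) points in metres.

    x: along-track position, from speed times elapsed time since t0_s
    y: lateral position, z: height above the nominal soil level
    Assumed convention: beam angle 0 points straight down (hypothetical).
    """
    x = SPEED_M_S * (timestamp_s - t0_s)
    points = []
    for i, r in enumerate(ranges_m):
        theta = math.radians(start_angle_deg + i * step_deg)
        y = r * math.sin(theta)
        z = mount_height_m - r * math.cos(theta)
        points.append((x, y, z))
    return points
```

Because the along-track position is derived from the timestamp rather than from a scan counter, a lost scan simply leaves a gap in the cloud instead of shifting all subsequent scan lines.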

Figure 1.

The sensor setup mounted to the tractor’s three-point linkage. Here shown while scanning a different crop.

2.3. Point Cloud Consolidation

Following several previous studies, for example, References [16,24], the orientation of the two lidars was set to downward-looking and push-broom respectively. The mounting structure maintained both the inclination and separation distance fixed for all measurements, allowing deduction of the exact transformation values from the data-set to merge both clouds. The software used to read the sensor measurements was the manufacturer’s proprietary program, SOPAS Engineering Tool, as commonly used, for example, in References [25,26,27,28] among others.
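Since the mounting structure fixes the inclination and separation of the two lidars, merging the clouds amounts to applying one constant rigid transformation to the points of the inclined lidar. The sketch below shows this idea under stated assumptions: the rotation is taken about the lateral (y) axis, and the names `to_common_frame` and `merge_clouds` as well as any numerical pitch or offset values are hypothetical, since the actual transformation values are not given in the text.

```python
import math


def to_common_frame(points, pitch_deg, offset):
    """Rotate (x, y, z) points about the lateral y axis, then translate.

    pitch_deg and offset are placeholders for the fixed mounting geometry.
    """
    c = math.cos(math.radians(pitch_deg))
    s = math.sin(math.radians(pitch_deg))
    ox, oy, oz = offset
    return [(c * x + s * z + ox, y + oy, -s * x + c * z + oz)
            for x, y, z in points]


def merge_clouds(vertical_points, inclined_points, pitch_deg, offset):
    """Express the inclined-lidar points in the vertical-lidar frame and concatenate."""
    return list(vertical_points) + to_common_frame(inclined_points, pitch_deg, offset)
```

The same pitch and offset would be reused for every strip, which matches the statement that the mounting geometry was identical for all measurements.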

2.4. Soil Identification

To identify the points belonging to soil, both an altitude threshold and a plane identifier were found to give insufficient results. Because this is an open field with elevated planting rows, the soil points as identified with the naked eye already showed a relatively large variability. In combination with the relatively low crops, this meant a more elaborate method had to be developed. In this paper, a weighted sum is proposed to allow for soil extraction from isolated point clouds without using additional sensors. A weighted sum, comparable to a cost function or loss function, can be stipulated in any way, rewarding desirable attributes or penalizing undesirable ones, which makes it useful for a wide range of applications across all industries. In the application presented here, only the location data of the point cloud are considered, omitting the remission values included in the measurement.

2.4.1. Weighted Sum

In any field, containing any crop, soil points can be characterised as having a low elevation, while the points in their immediate surroundings are of similar altitude. Using a weighted sum results in a unique value for each point within the point cloud that quantifies a point and its neighbours. The proximity of each neighbour is taken into account through the inverse of its distance to the point being evaluated, such that the final value depends most on the closest points. To speed up calculations, only the points within a radius of 150 mm were taken into account. In the end, each point has a value that is higher the more neighbours it has, and the higher and closer those neighbours are. This is summarised in Equation (1), where $J_k$ is the weighted sum of the point in question $k$, and $i$ is the index of each of the $N$ points within the aforementioned radius. The height $h_i$ of each neighbouring point $i$ and its distance $d_{ik}$ to point $k$ make up its contribution to the final value $J_k$:

$$J_k = \sum_{i=1}^{N} \frac{h_i}{d_{ik}} \qquad (1)$$

This is calculated until all points have been evaluated as a point $k$, meaning each point also contributes to the weighted sum of each of its neighbours.
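The following minimal sketch illustrates such a weighted sum. It assumes the plain inverse-distance form suggested by the description, in which each neighbour within the 150 mm radius contributes its height divided by its distance to the evaluated point, and it assumes that the distance is the 3D Euclidean distance; neither detail is confirmed by the text. The function name `weighted_sums` is hypothetical, and the brute-force loop is only meant for small examples.

```python
import math


def weighted_sums(points, radius=150.0):
    """Return one weighted sum per (x, y, h) point, with units in mm.

    Assumed form: J_k = sum of h_i / d_ik over all neighbours i of k
    with 0 < d_ik <= radius (3D Euclidean distance, an assumption).
    """
    sums = []
    for k, pk in enumerate(points):
        j = 0.0
        for i, pi in enumerate(points):
            if i == k:
                continue
            d = math.dist(pk, pi)
            if 0.0 < d <= radius:
                j += pi[2] / d  # height of the neighbour over its distance
        sums.append(j)
    return sums
```

For a full strip with very many points, the quadratic loop would be too slow, and a spatial index (for example a k-d tree) would typically be used to find the neighbours within the radius.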

2.4.2. Cut-Off Value of J

After assigning a value J to each of the points in the merged cloud, that is, the combination of all data points of a single strip measurement provided by both lasers, the full set of values gives new insight into the variation of weighted sums within that specific measurement. More specifically, sorting these values from smallest to largest reveals a linear slope at the lower end of the spectrum, which appears in each of the measured strips. The number of values in the sorted set that follow the linear estimation is assumed to relate to the amount of exposed soil, while the exact values within that linear section relate to the definition of heights used (i.e., what height is defined as 0) and to differences in soil profiles.

Choosing two percentiles on the lower end of the spectrum to define the linear estimation L avoids influence of any plant points on the estimation, even for strips with more plant coverage (less exposed soil points). Subtracting the resulting linear extrapolation L from the actual values leads to a clear cut between near-zero values prescribing the soil versus higher values for the plants. There are many ways in which this difference can be located mathematically. Here a difference of more than 50,000 is employed to identify the threshold value in between soil and plants. This threshold is established empirically based on the data-sets available and might not be applicable to any future measurements.
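The cut-off procedure can be sketched as follows. The two percentiles are not specified in the text, so the values of 5% and 20% used here are purely hypothetical placeholders, as is the function name `soil_threshold`; only the difference limit of 50,000 is taken from the text.

```python
def soil_threshold(j_values, p_low=0.05, p_high=0.20, limit=50_000.0):
    """Return the weighted-sum value that separates soil from plants.

    A straight line is drawn through the sorted values at two low
    percentiles (hypothetical defaults) and extrapolated over the whole
    sorted set. The first sorted value that exceeds the line by more
    than `limit` is returned; None means no such value exists.
    """
    s = sorted(j_values)
    n = len(s)
    i0 = int(p_low * (n - 1))
    i1 = int(p_high * (n - 1))
    if i1 == i0:
        raise ValueError("not enough points to define the linear estimation")
    slope = (s[i1] - s[i0]) / (i1 - i0)
    for idx, value in enumerate(s):
        line = s[i0] + slope * (idx - i0)
        if value - line > limit:
            return value
    return None
```

Points with a weighted sum below the returned value would then be labelled soil, and the remaining points vegetation.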

2.5. Plant Grouping

Depending on the size of the measured plants, the extraction of plant count can be attempted in various manners. As the previous step extracted all plant data from the point cloud, the resulting points appear in groups, which for fields with a lower coverage ratio do not overlap. In this situation, any segmentation method would identify the separate plants, for example, based on Euclidean distance. Here, if the Euclidean distance between two points is smaller than a given threshold they both belong to the same cluster. All points at a larger distance are assumed to belong to a different cluster, hereby creating clusters where the smallest Euclidean distance between any member of cluster A and any member of cluster B is equal to or larger than said threshold distance.
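A minimal sketch of this distance-based grouping is given below. It grows each cluster by repeatedly adding every unlabelled point that lies closer than the threshold to a point already in the cluster. The default of 75 mm is the value reported in Section 3.4; the function name `euclidean_clusters` and the brute-force neighbour search are assumptions made for illustration only.

```python
import math


def euclidean_clusters(points, threshold=75.0):
    """Label points so that any two points closer than `threshold` share a cluster.

    Returns a list of integer cluster labels, one per input point.
    """
    labels = [-1] * len(points)
    cluster = 0
    for seed in range(len(points)):
        if labels[seed] != -1:
            continue  # already assigned to an earlier cluster
        labels[seed] = cluster
        stack = [seed]
        while stack:
            a = stack.pop()
            for b in range(len(points)):
                if labels[b] == -1 and math.dist(points[a], points[b]) < threshold:
                    labels[b] = cluster
                    stack.append(b)
        cluster += 1
    return labels
```

Note that two points further apart than the threshold can still end up in the same cluster when a chain of intermediate points connects them, which is exactly why overlapping plants merge into one cluster.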

For overlapping vegetation, this method would result in a single cluster that covers the entire length of the field. A second segmentation step could include more advanced methods such as region growing. For now, the second step uses seeding distances to overlay a grid on the obtained clusters. Any cluster spanning two or more nominal seeding points is cut at the imaginary boundary between adjacent grid segments. Due to seeding irregularity and differences in emergence, this method needs to be replaced in further research.
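The grid-based second step can be sketched as below, under the assumption that the nominal seeding points lie at `origin + n * spacing` along the row and that the cut is placed halfway between two consecutive seeding points. The function name `split_by_seeding_grid` is hypothetical, and the spacing has to be supplied by the user because the nominal planting distance is not stated in the text.

```python
import math


def split_by_seeding_grid(cluster_points, spacing, origin=0.0):
    """Split one cluster into parts, one per nominal seeding cell.

    The first coordinate of each point is taken as the along-row position.
    Cell boundaries are assumed to lie halfway between seeding points.
    """
    cells = {}
    for p in cluster_points:
        cell = math.floor((p[0] - origin) / spacing + 0.5)
        cells.setdefault(cell, []).append(p)
    return [cells[c] for c in sorted(cells)]
```

A cluster that falls entirely within one cell is returned unchanged as a single part, so the function can be applied to every cluster regardless of its length.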

3. Results

3.1. Successful Measurement Registration Rate

Data losses on either the sending (lidars) or receiving (laptop) end are known to occur when working at high measurement frequencies. Using the software of the sensor manufacturer, the percentages of messages correctly received from each of the lasers are listed in Table 2. On average, lidar 1 successfully registered 89% of its scans, versus 87% for lidar 2, without any significant outliers in any of the measured passages. The difference between the two lasers has not been investigated in this preliminary study.

Table 2.

Percentages of scans that were registered correctly by each of the lasers in each of the scanned strips.

3.2. Point Cloud Accuracy

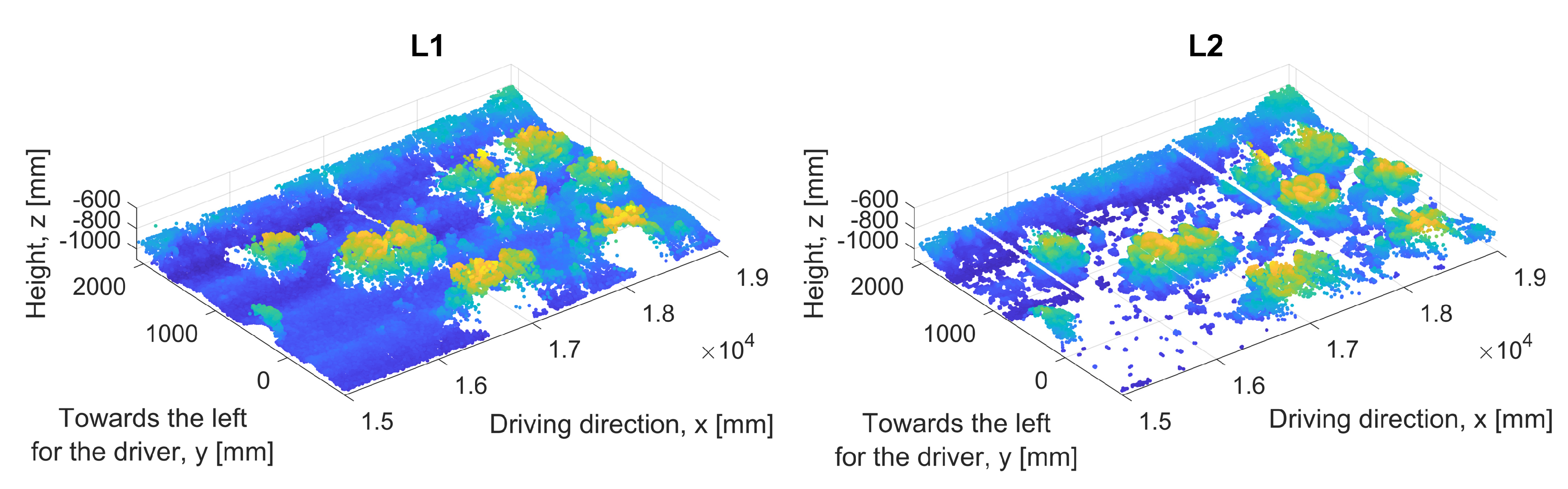

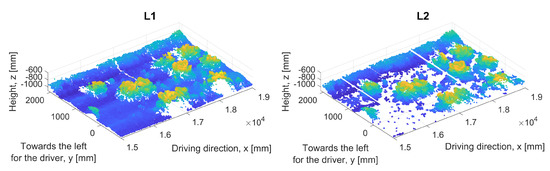

In theory, the configuration described in Section 2.2 yields a new scan every 1.1 cm in the direction of driving, and a lateral resolution at soil height of 0.9 cm for the vertically mounted lidar. The higher plant parts are closer to the sensor than the soil and are therefore inherently sampled at a higher resolution. An example of the obtained point cloud is shown in Figure 2, where the lost measurements are visible as white lines at a constant x coordinate. Also note that the bare soil is picked up less by the inclined scanner. In this preliminary study, the subsequent translation to match the coordinate frames of both lasers was deduced manually, and is consistent for all measurements. Finally, the merged point cloud is translated to align the mark with the center of the strip.
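The two theoretical figures can be checked with a short calculation from the settings in Section 2.2 (2 km/h, 50 Hz, 0.5 degrees, roughly 1 m mounting height). The sketch below assumes a mounting height of exactly 1 m and evaluates the lateral spacing directly beneath the sensor; the function names are hypothetical.

```python
import math


def along_track_spacing_cm(speed_kmh=2.0, scan_rate_hz=50.0):
    """Distance travelled between two consecutive scans, in cm."""
    return speed_kmh / 3.6 / scan_rate_hz * 100.0


def lateral_spacing_cm(height_m=1.0, step_deg=0.5):
    """Spacing between neighbouring beams on the soil directly below the sensor, in cm."""
    return height_m * math.tan(math.radians(step_deg)) * 100.0


print(round(along_track_spacing_cm(), 1))  # 1.1
print(round(lateral_spacing_cm(), 1))      # 0.9
```

Towards the edges of the scan the beams hit the soil at a larger distance and a shallower angle, so the lateral spacing there is larger than this value.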

Figure 2.

Example of the sensor output for the two lidars, including two areas of typical data losses recognisable by the white lines at a constant value of x. Furthermore, the inclined lidar has difficulties with detecting bare soil, which is clearly visible in the front-most corner of this sample area.

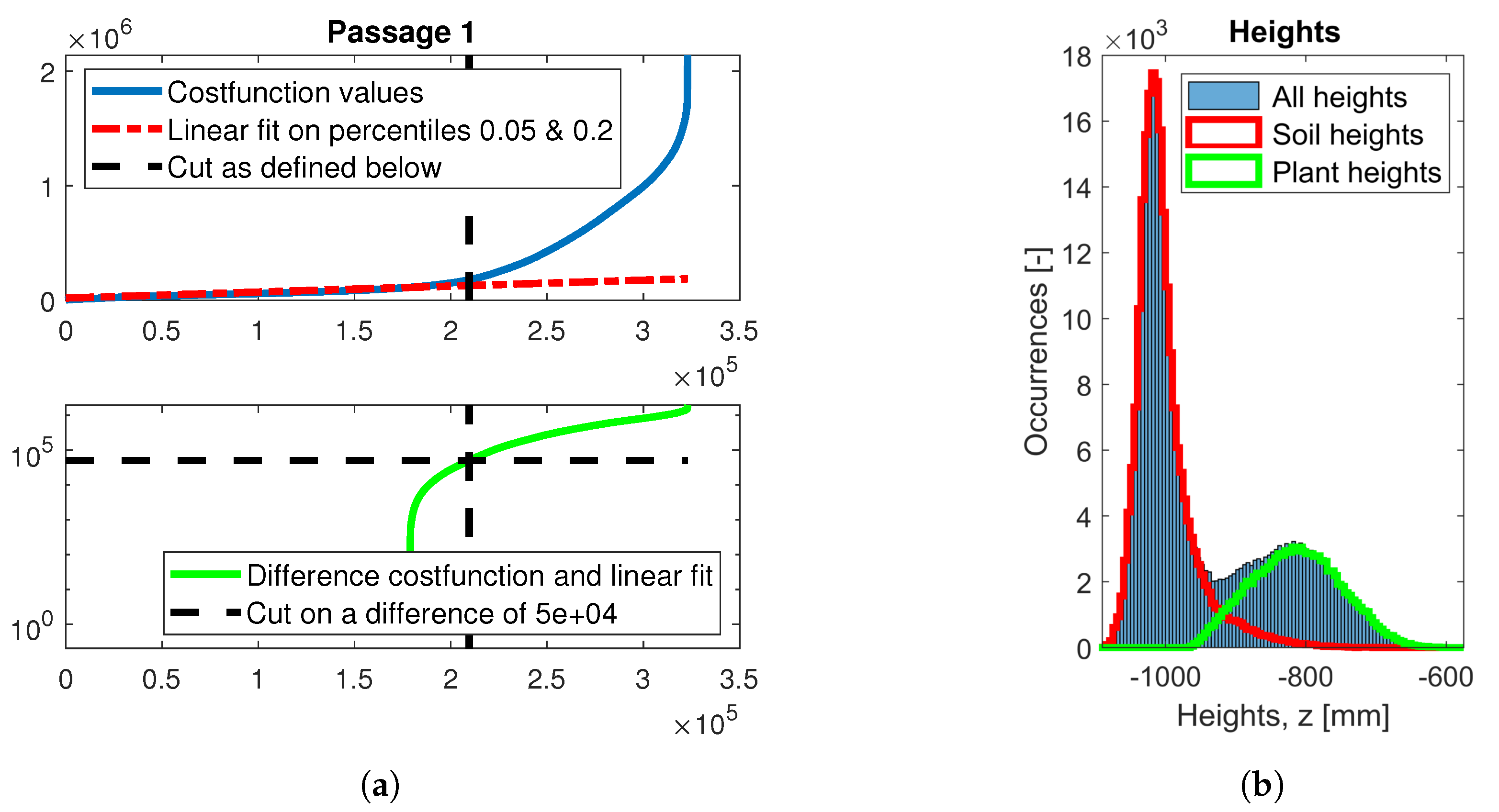

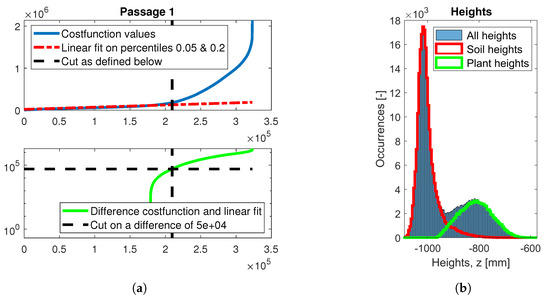

3.3. Weighted Sum Values

Each point in the merged point cloud is subjected to the procedure described in Section 2.4. The resulting values for one of the measured strips are shown in Figure 3a. Here, the points are sorted based on their weighted sum (solid blue), and compared to a linear approximation (dashed red). Subtracting the approximation from the sorted values results in the lower curve shown in solid green. The cut-off process is visualized by the fixed horizontal line at the difference limit of 50,000 from Section 2.4.2 (dashed black). At the point where that fixed limit crosses the green curve, a vertical line spanning both graphs (dashed black) visualizes the translation to the corresponding weighted sum value J. This value is the threshold of J used for all points within this strip to distinguish between soil and plants. When comparing the height data of the points to the left of that vertical separator, that is, all soil points, with the points to the right of the vertical line, that is, plant points, a near-perfect Gaussian distribution can be recognised in the height distribution histogram shown in Figure 3b.

Figure 3.

After calculating the weighted sum of each point in the strip, the cloud can be divided into plants and soil as summarized graphically in (a), to obtain the division shown in (b).

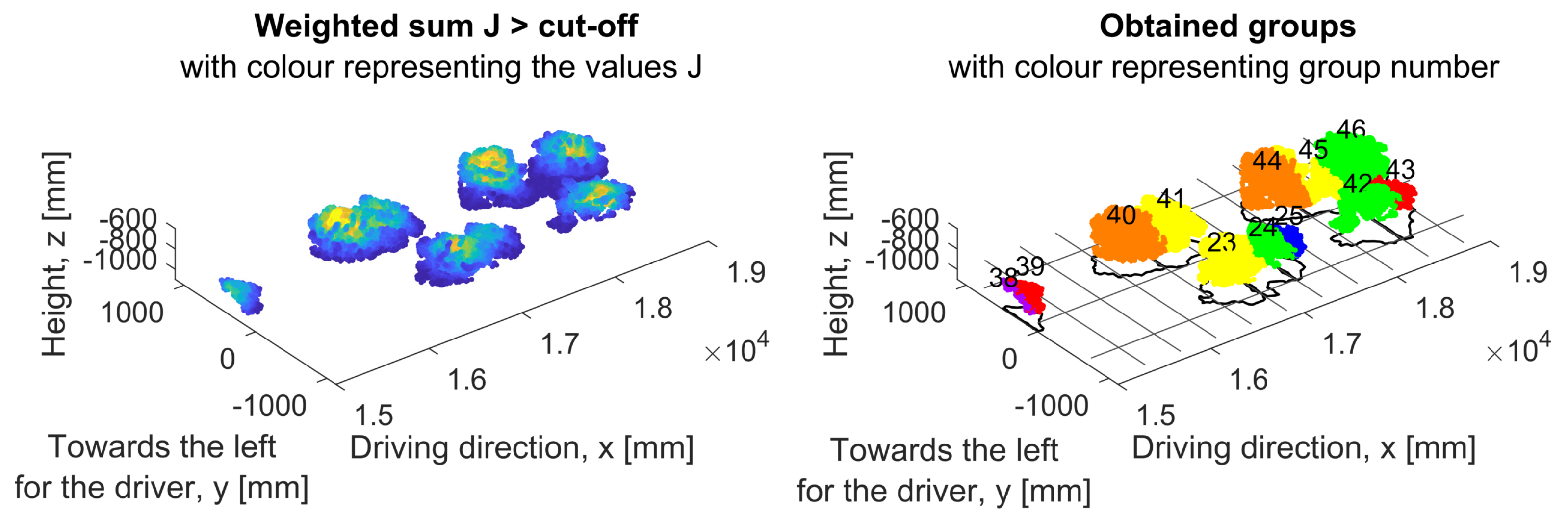

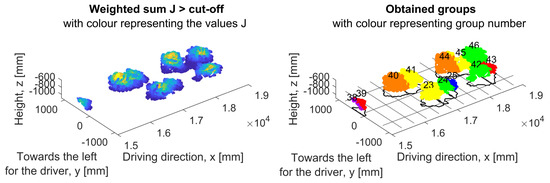

3.4. Grouping

In an attempt to quantify the number of cabbages per row, first a Euclidean distance of 75 mm or more defines a new cluster. Secondly, a raster representing the seeding points is used to further divide any clusters covering more than one nominal seeding location. The result of both steps is shown in Figure 4 for the same sample area shown in previous figures, alongside the intermediate result of only the soil identification. At this time the clustering has not been investigated further, as this is a pilot study.

Figure 4.

The cabbage strip shown in Figure 2 after merging the two point clouds, and subsequently calculating the weighted sum, to categorize each point as either soil or plant. On the left, all plant points of the central two rows of this strip. On the right, the result of the two-step grouping described in Section 2.5. Note that, for example, clusters 40 and 41 have been identified satisfactorily, while the points in cluster 45 should actually be assigned to both of the adjacent clusters.

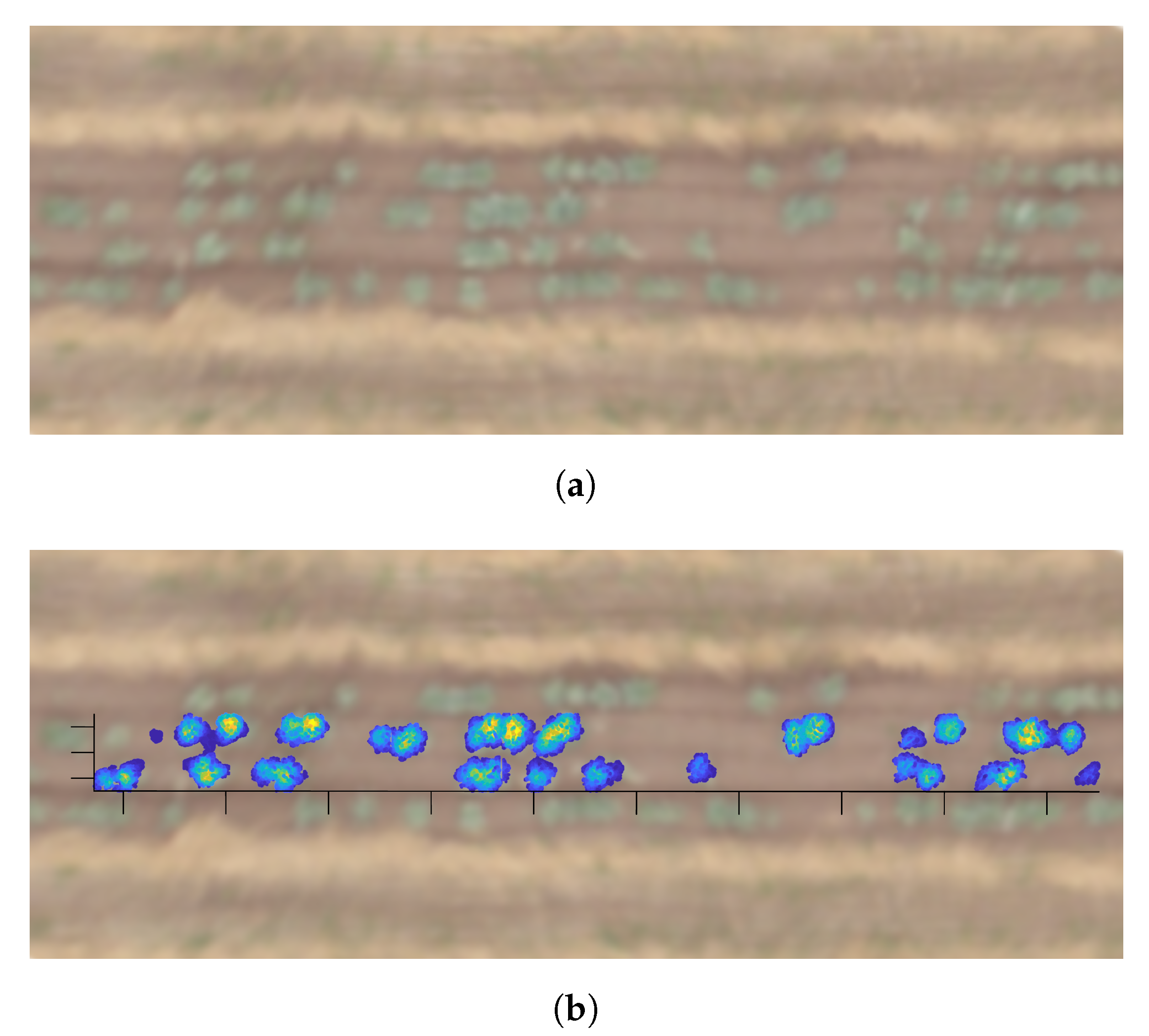

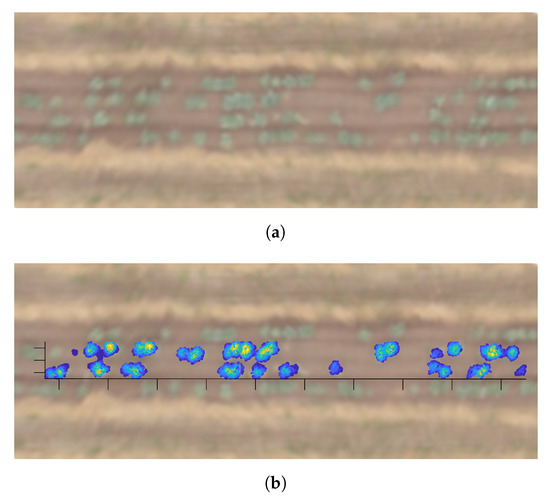

3.5. Comparison to Drone Images

When compared to the final yield, the point clouds that qualified as vegetation based on their weighted sum values unfortunately did not show the expected correlation, due to the exceptional climatic circumstances of that year, as described in Section 2.1. To verify the results, however, drone imagery of that same week was available. In Figure 5, a small part of the drone mosaic is included, once with and once without an overlay of the weighted sum values present in that specific strip. Although the resolution of the imagery leaves something to be desired, the vegetation is clearly distinguishable. The overlay matches the green areas to a satisfactory level.

Figure 5.

A part of the drone image zoomed into one of the measured strips, shown both without (a) and with (b) an overlay of the points identified by the algorithm as vegetation. The quality of the image is not optimal but the correct identification of the vegetation in the central row is visible. In (b) the colours correspond to the value of the weighted sum in each of the points.

4. Discussion

Canopy estimation through lidars mounted on inter-row terrestrial vehicles has been carried out frequently in various types of orchards. The approaches to volume estimation vary. In Reference [17], for example, point clouds are divided into slices of 10 cm along the direction of the plant rows, which are subsequently divided into vertical slices of the same width; the outermost measured points in each of these square cuboids define the third and final dimension for the volume estimation of that area. Others combine multiple orchard passages, in which each plant row is scanned from both sides at least once, into a single coordinate system, as done in Reference [29]. This allows for more advanced tree estimations such as convex hull calculations, as explained in Reference [30]. Combinations of slices and more advanced methods are included in Reference [27]. Variations of the slicing approach can also be found for other crop types, such as tomatoes in greenhouses [18]. Orchard canopy is usually scanned laterally or even upward, depending on the mounting height of the sensor. Due to the size of this type of vegetation, each row needs to be scanned from both sides in order to obtain a complete overview of its volume. In some cases, the orchard layout allows for individual tree scanning, enabling other procedures such as imaging from 8 directions, as described in Reference [31]. The advantages of a setup like the one used in this work are that a single pass is sufficient for registration of the crop volume, without the need to combine these already large data-sets into even larger ones, and that crop volume relates directly to marketable yield for these types of crops.

For cereals, which have a field layout more similar to that of the work presented here, 3D modelling through lidars is also gaining traction. In maize [16], for example, the issue addressed in this paper was solved using a RANSAC algorithm, which fits a plane through the lowermost 3D points and defines any deviation from that plane as vegetation. This approach was also attempted on the data set used in this work, but due to soil profiles and ridging in the direction of the crop rows it did not yield satisfactory results. Furthermore, the lowermost crop points in leafy vegetables are vital for a correct estimation of crop volume and therefore for yield estimation. In wheat, the reflectance value of the lidar was successfully applied to distinguish vegetation from soil in a similar setting [32]. This was attempted here as well but did not yield satisfactory results for the crop type used here.

The use of a weighted sum that assigns a quantifiable value that can be statistically evaluated contributes to the more accurate separation between soil and vegetation that is especially needed in lower crops. Even though the RANSAC algorithm has been applied successfully in other lower vegetation with similar dimensions like cotton [33], the method presented in this paper improves that process, at the cost of needing higher computational power. The volume of the resulting vegetation point cloud obtained in Reference [33] is estimated using a Trapezoidal rule based algorithm, which might be a computationally cheaper alternative to the highly accurate convex hull calculations used in orchard scans, thereby providing a trade-off in computational power.

In this preliminary work, separation into soil and plants was successfully achieved using only the aforementioned weighted sums, without the use of any additional data from other sensor types and without relying on reflectance values. This results in a dynamically obtained threshold criterion that is able to extract the vegetation from the point cloud in varying situations in terms of vegetation coverage and sizes (strip-cropping or monoculture, early stages to final development stages, etc.), where conventional separation methods have failed. In other words, the hypothesis that a weighted-sum-based algorithm has the potential to be superior to conventional soil separation methods has been supported by this preliminary study. Comparison between the resulting vegetation clouds and drone imagery showed a satisfactory match with the green areas in the central rows of each strip.

5. Conclusions

Lidar sensors have been used in canopy scanning in multiple research efforts, both in orchards and in arable crops. In uneven soils, common methods for soil-vegetation separation leave something to be desired, as they do not account for the lowermost part of the crop, which is crucial for accurate volume estimation. For leafy vegetables like the cabbages used in this work, the yield has a direct relation to the volume of the plant, which is why tracking its development in volume gives great insight into the yield progress of each individual plant.

The developed methods show that automatic volume calculation, and thereby yield calculation, per row is feasible without misaccounting for the aforementioned lower crop architecture, as is the case for other commonly used methods. The developed method does not rely on external sensor readings, such as colour, that have to be matched up with the point cloud. For earlier development stages, the automatic extraction of individual plants is demonstrated here as well, which allows for individual fertilization correction on a single-plant scale, coinciding with one of the main goals of the SUREVEG project. For larger plants that already show overlap, the automatic division will have to be developed further to correctly allocate the measured points to each of the plants.

In future research, repeated measurements of the same crop at regular intervals will allow for development tracking and detection of slight deviations on a single-plant scale, in order to adjust fertilizer dosages with great precision in the development stages where their impact is highest. Future research will also involve applying these methods to other (leafy) vegetables, as well as combining multiple scans to visualize individual development curves of each crop in a (strip-cropping) field.

Author Contributions

Investigation, methodology, software and writing, A.K.; Conceptualization, supervision, and funding acquisition, D.v.A. and C.V.; Resources, J.J.R. All authors have read and agreed to the published version of the manuscript.

Funding

Financial support for this project is provided by funding bodies within the H2020 ERA-net project, CORE Organic Cofund, and with cofunds from the European Commission.

Acknowledgments

Special thanks to Pilar Barreiro for suggestions and support, and Annet Westhoek, Peter van der Zee, and the research team of Farming Systems Ecology at Wageningen University and Research for their help on site.

Conflicts of Interest

The authors declare no conflict of interest. The funders had no role in the design of the study; in the collection, analyses, or interpretation of data; in the writing of the manuscript, or in the decision to publish the results.

References

- Golijan, J.; Popović, A. Basic characteristics of the organic agriculture market. In Proceedings of the Fifth International Conference on Competitiveness of Agro-Food and Environmental Economy, Bucharest, Romania, 10–11 November 2016; pp. 239–248.

- Willer, H.; Meredith, S.; Moeskops, B.; Busacca, E. Organic in Europe: Prospects and Developments; Technical Report; Research Institute of Organic Agriculture (FiBL), Frick, and IFOAM-Organics International: Bonn, Germany, 2018.

- Kukreja, R.; Meredith, S. Resource Efficiency and Organic Farming: Facing Up to the Challenge; Technical Report; IFOAM EU Group: Brussels, Belgium, 2011.

- CORE Organic Cofund. SureVeg. 2018. Available online: http://projects.au.dk/coreorganiccofund/research-projects/sureveg/ (accessed on 3 April 2019).

- Wojtkowski, P.A. Biodiversity. In Agroecological Economics; Elsevier: Amsterdam, The Netherlands, 2008; pp. 73–96.

- Exner, D.; Davidson, D.; Ghaffarzadeh, M.; Cruse, R. Yields and returns from strip intercropping on six Iowa farms. Am. J. Altern. Agric. 1999, 14, 69.

- Bouws, H.; Finckh, M.R. Effects of strip intercropping of potatoes with non-hosts on late blight severity and tuber yield in organic production. Plant Pathol. 2008, 57, 916–927.

- Fanelli, R.M. The (un)sustainability of the land use practices and agricultural production in EU countries. Int. J. Environ. Stud. 2019, 76, 273–294.

- Wang, Q.; Li, Y.; Alva, A. Cropping Systems to Improve Carbon Sequestration for Mitigation of Climate Change. J. Environ. Prot. 2010, 1, 207–215.

- Blackmore, S.; Stout, B.; MaoHua, W.; Runov, B.; Stafford, J.V. Robotic agriculture—The future of agricultural mechanisation? In Proceedings of the 5th European Conference on Precision Agriculture, Uppsala, Sweden, 9–12 June 2005.

- Slaughter, D.C.; Giles, D.K.; Downey, D. Autonomous robotic weed control systems: A review. Comput. Electron. Agric. 2007, 61, 63–78.

- Reina, G.; Milella, A.; Rouveure, R.; Nielsen, M.; Worst, R.; Blas, M.R. Ambient awareness for agricultural robotic vehicles. Biosyst. Eng. 2016, 146, 114–132.

- Taylor, J. Development and Benefits of Vehicle Gantries and Controlled-Traffic Systems. In Developments in Agricultural Engineering; Elsevier: Amsterdam, The Netherlands, 1994; Volume 11, pp. 521–537.

- Li, L.; Zhang, Q.; Huang, D. A Review of Imaging Techniques for Plant Phenotyping. Sensors 2014, 14, 20078–20111.

- Bégué, A.; Arvor, D.; Bellon, B.; Betbeder, J.; de Abelleyra, D.; Ferraz, R.; Lebourgeois, V.; Lelong, C.; Simões, M.; Verón, S.; et al. Remote Sensing and Cropping Practices: A Review. Remote Sens. 2018, 10, 99.

- Garrido, M.; Paraforos, D.S.; Reiser, D.; Arellano, M.V.; Griepentrog, H.W.; Valero, C.; Baghdadi, N.; Thenkabail, P.S. 3D Maize Plant Reconstruction Based on Georeferenced Overlapping LiDAR Point Clouds. Remote Sens. 2015, 7, 17077–17096.

- Escolà, A.; Sebé, F.; Miquel Pascual, B.; Eduard Gregorio, B.; Rosell-Polo, J.R. Mobile terrestrial laser scanner applications in precision fruticulture/horticulture and tools to extract information from canopy point clouds. Precis. Agric. 2017, 18, 111–132.

- Llop, J.; Gil, E.; Llorens, J.; Miranda-Fuentes, A.; Gallart, M. Testing the Suitability of a Terrestrial 2D LiDAR Scanner for Canopy Characterization of Greenhouse Tomato Crops. Sensors 2016, 16, 1435.

- Schäfer, D.W. Gantry technology in organic crop production. In Proceedings of the NJF’s 22nd Congress “Nordic Agriculture in Global Perspective”, Turku, Finland, 1–4 July 2003; p. 212.

- Andaloro, J.T.; Rose, K.B.; Shelton, A.M.; Hoy, C.W.; Becker, R.F. Cabbage growth stages. N. Y. Food Life Sci. Bull. 1983, 101, 1–4.

- Vogel, M.M.; Zscheischler, J.; Wartenburger, R.; Dee, D.; Seneviratne, S.I. Concurrent 2018 Hot Extremes Across Northern Hemisphere Due to Human-Induced Climate Change. Earth’s Future 2019, 7, 692–703.

- Zhao, C.; Liu, B.; Piao, S.; Wang, X.; Lobell, D.B.; Huang, Y.; Huang, M.; Yao, Y.; Bassu, S.; Ciais, P.; et al. Temperature increase reduces global yields of major crops in four independent estimates. Proc. Natl. Acad. Sci. USA 2017, 114, 9326–9331.

- Battisti, D.S.; Naylor, R.L. Historical Warnings of Future Food Insecurity with Unprecedented Seasonal Heat. Science 2009, 323, 240–244.

- Martínez-Guanter, J.; Garrido-Izard, M.; Valero, C.; Slaughter, D.C.; Pérez-Ruiz, M. Optical Sensing to Determine Tomato Plant Spacing for Precise Agrochemical Application: Two Scenarios. Sensors 2017, 17, 1096.

- Andújar, D.; Moreno, H.; Bengochea-Guevara, J.M.; de Castro, A.; Ribeiro, A. Aerial imagery or on-ground detection? An economic analysis for vineyard crops. Comput. Electron. Agric. 2019, 157, 351–358.

- Foldager, F.F.; Pedersen, J.M.; Skov, E.H.; Evgrafova, A.; Green, O. Lidar-based 3d scans of soil surfaces and furrows in two soil types. Sensors 2019, 19, 661.

- Bietresato, M.; Carabin, G.; Vidoni, R.; Gasparetto, A.; Mazzetto, F. Evaluation of a LiDAR-based 3D-stereoscopic vision system for crop-monitoring applications. Comput. Electron. Agric. 2016, 124, 1–13.

- Andújar, D.; Moreno, H.; Valero, C.; Gerhards, R.; Griepentrog, H.W. Weed-crop discrimination using LiDAR measurements. In Precision Agriculture 2013 Proceedings of the 9th European Conference on Precision Agriculture, Catalonia, Spain, 7–11 July 2013; Wageningen Academic Publishers: Wageningen, The Netherlands, 2013; pp. 541–545.

- Rosell, J.R.; Llorens, J.; Sanz, R.; Arnó, J.; Ribes-Dasi, M.; Masip, J.; Escolà, A.; Camp, F.; Solanelles, F.; Gràcia, F.; et al. Obtaining the three-dimensional structure of tree orchards from remote 2D terrestrial LIDAR scanning. Agric. For. Meteorol. 2009, 149, 1505–1515.

- Auat Cheein, F.A.; Guivant, J. SLAM-based incremental convex hull processing approach for treetop volume estimation. Comput. Electron. Agric. 2014, 102, 19–30.

- Miranda-Fuentes, A.; Llorens, J.; Gamarra-Diezma, J.; Gil-Ribes, J.; Gil, E. Towards an Optimized Method of Olive Tree Crown Volume Measurement. Sensors 2015, 15, 3671–3687.

- Jimenez-Berni, J.A.; Deery, D.M.; Rozas-Larraondo, P.; Condon, A.T.G.; Rebetzke, G.J.; James, R.A.; Bovill, W.D.; Furbank, R.T.; Sirault, X.R. High throughput determination of plant height, ground cover, and above-ground biomass in wheat with LiDAR. Front. Plant Sci. 2018, 9.

- Sun, S.; Li, C.; Paterson, A.H.; Jiang, Y.; Xu, R.; Robertson, J.S.; Snider, J.L.; Chee, P.W. In-field high throughput phenotyping and cotton plant growth analysis using LiDAR. Front. Plant Sci. 2018, 9.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).