1. Introduction

The concept of a digital twin of a core sample allowing for numerical analysis of its physical and lithological properties arose several years ago [

1,

2]. Digital Rock (DR) physics workflow consists of imaging and digitizing the pore space and substances of a natural rock sample and subsequent numerical simulation of various physical processes in this digital model. It has a number of benefits with respect to traditional lab core analysis. DR technology is becoming more and more attractive nowadays due to development of image acquisition and processing methods. There are many industrial applications of DR [

3]: estimation of fluid transport properties such as absolute and relative permeabilities [

4,

5,

6]; assessment of enhanced oil recovery (EOR) methods [

7,

8]; calculation of elastic moduli and electrical conductivity [

9]; and modelling of nuclear magnetic resonance (NMR) response [

10].

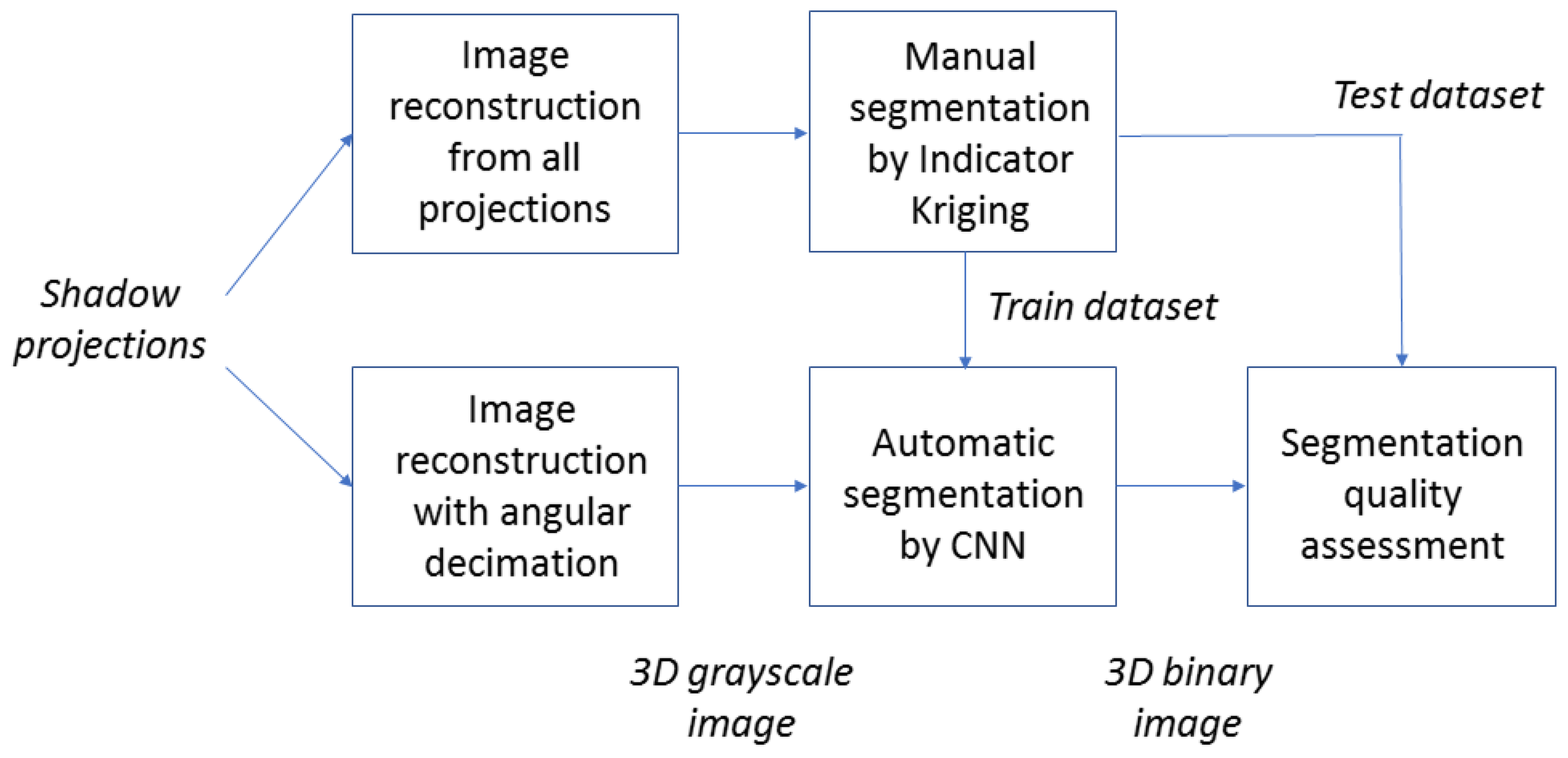

Figure 1 shows a typical DR workflow [

11]. The first step is image acquisition by X-ray microcomputed tomography (microCT) [

12]. The microCT system acquires a series of shadow projections over 180

or 360

rock sample rotation. A special software program reconstructs a 3D grayscale image from those projections by a back-projection method [

13]. Quality of 3D image depends on the number of projections used in the reconstruction procedure. One of the key steps in the workflow is a digital model construction by segmenting a 3D image. In an ideal case, multi-class segmentation is required to distinguish different mineral phases, organic substances, and pores. For the sake of simplicity, binary segmentation is often considered: each voxel is treated as belonging to a solid matrix or pore space. The resulting digital 3D model is applied for numerical simulation of properties of interest.

Even though image segmentation has been a field of active research for many decades, it remains one of the most difficult steps in various image processing systems. In the DR workflow, inadequate segmentation yields vastly differing results in a fluid transport and geophysical modelling. It is worth noting that some level of uncertainty about a segmentation outcome is inevitable for X-ray microCT images due to many factors, in particular, partial volume effect [

14]. According to our own experiments and outcomes claimed in [

15,

16], the Indicator Kriging (IK) algorithm [

17] provides reasonable segmentation outcomes for the majority of X-ray microtomographic images and, in many cases, outperforms other segmentation techniques. However, IK requires operator participation. Parameters of IK such as two thresholds and radius of Kriging window should be selected manually for each image under processing. Usually, an operator makes several segmentation attempts to select suitable parameters. This is a long and subjective procedure even for experienced persons. Ultimately, it is desirable to have a fully automatic image processing pipeline including segmentation. It can provide improved reproducibility and more objective simulation outcomes due to excluding subjective assessments of an operator. Modern machine learning (ML) based image segmentation methods look like a perfect candidate for the role.

Nowadays, ML and especially deep learning approaches are experiencing explosive growth. The main advantage of a deep learning is the ability to learn complex patterns and features from the data itself. Segmentation by means of Convolutional Neural Networks (CNN) provides state-of-the-art results for many tasks [

18]. CNN is one of the most promising approaches for automatic segmentation of microCT images. Our aim is to train CNN-based models once and to apply these models many times in the automatic mode for the segmentation of various rock samples.

One of the key challenges for the use of supervised machine learning for microCT images of rock samples is the lack of ground truth (GT). Manual pixel-perfect labelling of a large enough 3D dataset would be way too time-consuming, while utilizing significantly higher-resolution images, such as Scanning Electron Microscopy (SEM), possesses its own challenges [

19,

20,

21]. Moreover, gathering a representative enough collection of all the practically important diverse sample types is a demanding task by itself.

In this paper, we propose an approach for generation of labelled datasets and compare segmentation results by various deep neural networks, highlighting most promising approaches and discussing potential pitfalls for automatic processing of 3D images of rock samples. The remainder of this paper is organized as follows.

Section 2 contains a review of the existing approaches for segmentation of rock samples by supervised machine learning techniques. Next, we present in detail our approach for generation of training and validation datasets (

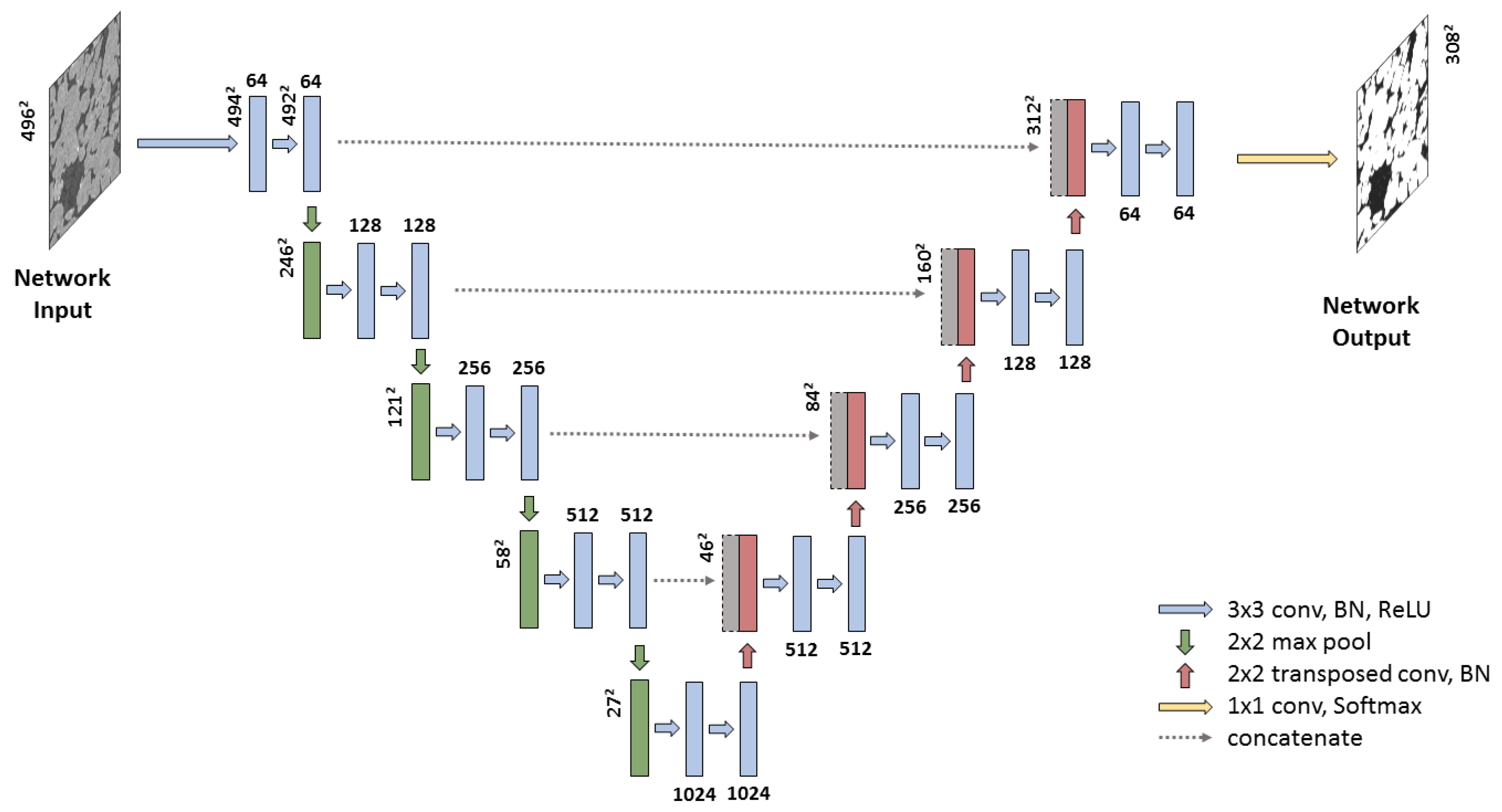

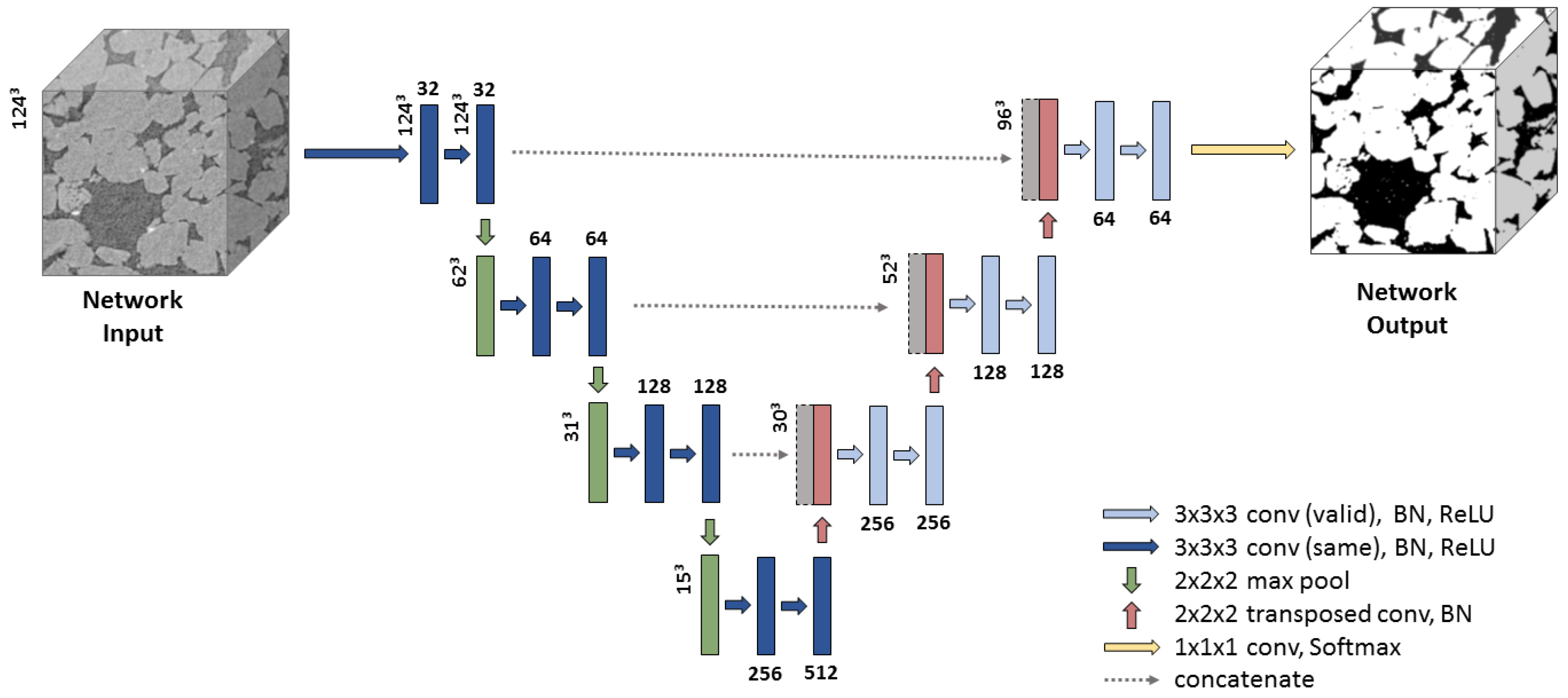

Section 3), pre-processing of microCT images and training of 2D slice-wise SegNet, 2D and 3D U-net for segmentation (

Section 4).

Section 5 presents the experimental results for deep neural networks under investigation as well as comparison with conventional segmentation methods: IK and thresholding. Finally, the conclusions and discussions of further research directions are contained in

Section 6.

2. Related Works

Training, tuning, and evaluation of segmentation algorithms for microCT images of porous media are challenging due to the lack of GT. Traditionally, samples with known spatial structure or synthetic images are applied as GT. In [

16], the researchers used porosity measurements for glass beads to quantitatively compare segmentation methods. In [

15], the authors simulated 2D and 3D soil images.

In this section, we focus on the review of existing applications of machine-learning techniques for handling microCT rock samples. We pay special attention to the training, testing, and validation sets construction. Data from the training set is applied for training of the ML model, data from the testing set is used for model hyperparameters adjustment, and data from the validation set is employed for the final estimation of the model performance. Sometimes, the same data are used as both testing and validation datasets.

ML-based methods of processing of microCT rock images can be divided into two groups: end-to-end regression providing direct prediction of the physical properties, such as porosity and permeability; image segmentation by solving of the classification problem with subsequent physical properties’ evaluation. We are interested in the second one because it is more interpretable and physically correct. Nevertheless, below, we mention several end-to-end methods for direct prediction of rock properties.

The authors in [

22] depict the application of three unsupervised and four supervised ML methods for segmentation of three microCT images: andesite and two sandstones. Similar investigation for andesite can be found in [

23]. These papers claim that the datasets they use contain up to 42 million of voxels, but each rock sample is analysed separately from all other rock types. Unfortunately, the way of splitting on training and testing datasets is described rather vaguely. According to the example, provided in the figures and in the text, all these voxels are from a single 1800 × 1800 × 10 box-shaped Region of Interest (ROI). This might not be an issue for the neural network they use (single hidden layer with just 10 neurons) but might lead to severe overfitting for larger neural networks, since even the first and the last z-slices are quite similar. Moreover, one of the difficulties, associated with cone-beam 3D microCT images we deal with, is that their local properties (e.g., sharpness) are not completely uniform—the z-slice from centre of the image is generally sharper than those from the most upper or lower part of the image. To achieve better results, one must use a dataset representing all such parts. The porosity of segmented images is compared with laboratory measurements by a gas pycnometer. Such method of estimation of segmentation quality was criticized, for example, in [

11] because microCT images are limited in spatial resolution by the order of microns without any chance to reproduce pores of nanometer scale. Thus, for almost any natural rock sample, digital porosity would be lower than those measured in the lab by gas. In addition, these images are typically taken on much smaller samples than ones used for laboratory measurements, which makes direct comparison of porosity values unfair.

In [

24], end-to-end CNN for prediction of pores media characteristics from images is described. Three samples of different sandstones are considered. The images of the samples are segmented by thresholding with automatic threshold selection by Otsu [

25]; then, pore networks are extracted [

26]. The characteristics of pores media, namely porosity, coordination number, and average pore size, are calculated from the pore networks. CNN is trained to predict the sample characteristics mentioned, based solely on 2D (128 × 128) grayscale image fragments. The dataset containing 7680 slices from images of three samples is split into training and testing ones. There are no details about the splitting procedure. We surmise that slices from each of the three samples were both in training and testing sets, and it might lead to an overestimation of the quality of the regression model.

The authors in [

27] describe the application of several ML-based approaches including 2D CNN inspired by VGG (Visual Geometry Group) [

28] and 3D CNN inspired by VoxNet [

29] for prediction of permeability. The dataset is based solely on a single 400 × 400 × 400 image of sandstone. In one of the experiments, it was cut into 9261 intersecting 100 × 100 × 100 volumes. Not surprisingly, for such idealistic conditions, methods considered in the paper demonstrate good prediction accuracy. It would be interesting to assess their performance on unseen data.

The authors in [

30] compare three methods for segmentation of synthetic microCT data. A real image of a single sandstone sample is segmented into void and solid phases via ZEISS ZEN Intellesis (Carl Zeiss Microscopy GmbH: Jena, Germany) [

30]—graphical interface for interactive training of machine learning classifiers provided by scikit-learn [

31] backend. For the generation of synthetic images, this segmented volume is forward-projected into the projection domain, then shadow projections are blurred, and Gaussian noise is added to them; finally, a new volumetric image is reconstructed form these processed shadow projections. A similar method for GT generation is described in [

11]. Obviously, such approaches produce significantly more natural distortions, compared to adding Gaussian noise directly into the microCT image. However, a microCT device demonstrates many other distortions: X-ray tube instabilities, sample position mechanical volatility, detector dead pixels, etc. Modern reconstruction methods go to great lengths to compensate them. For example, the authors in [

32] describes a method for software shadow projections post-alignment. However, even these methods are not perfect. Creating a completely synthetic dataset that would accurately reproduce all these effects is a difficult task. Moreover, even an imaginary perfect microCT device would experience phase-contrast effects that are usually visible as oversharpened edges in microCT image [

33]. Basic forward and backward-projection methods ignore this effect as well.

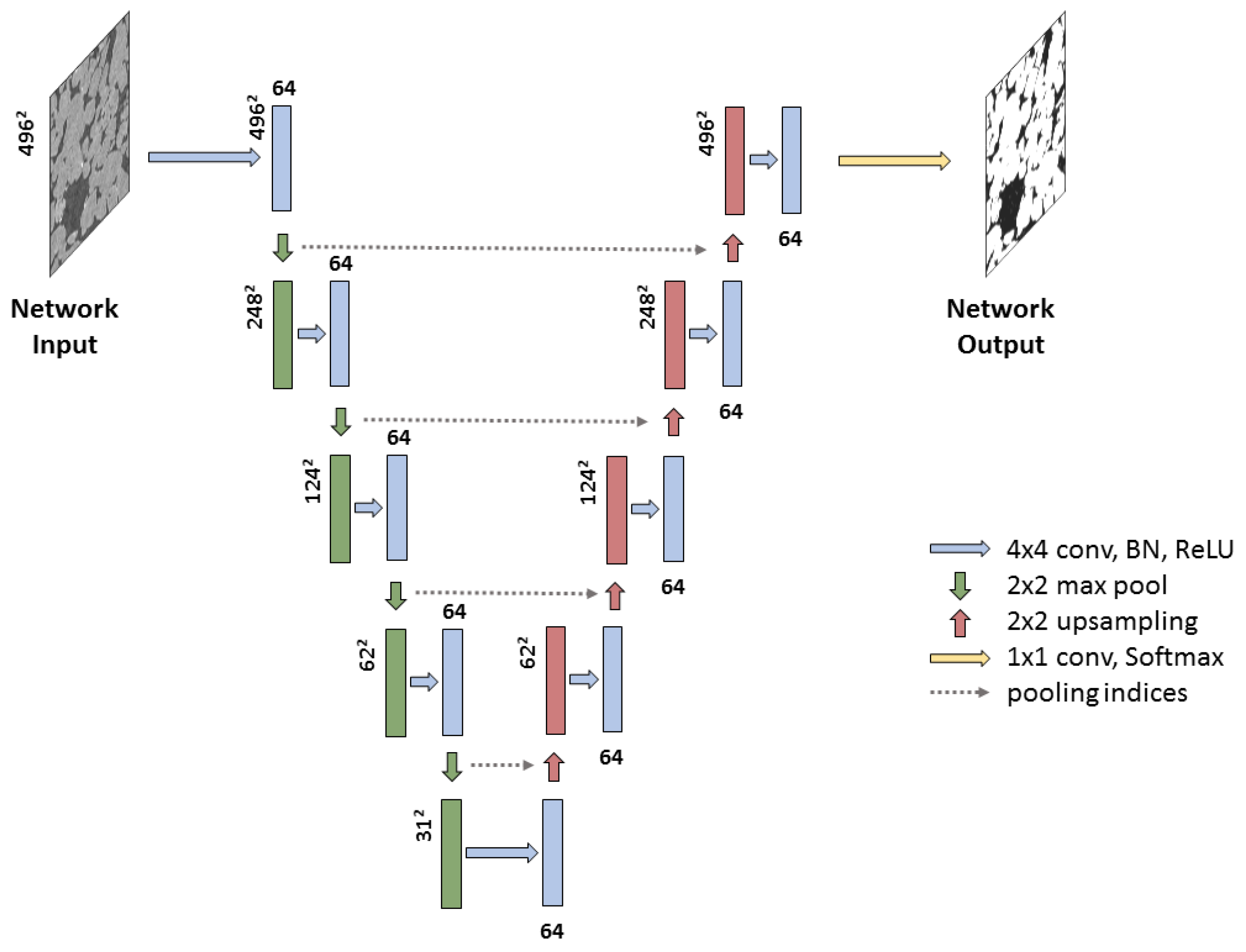

Multi-phase segmentation of microCT image of sandstone by CNN is presented in [

34]. Two versions of SegNet [

35,

36] in 2D slice-wise mode were explored. Just 20 slices with size 256 × 256 were annotated in semiautomatic manner. Most of the five phases are clearly distinguishable by their grayscale levels, which greatly simplifies the task. The additional phase related to “other minerals (mainly clays)” is not far from quartz by its greyscale level but features a dramatically different texture. Dataset was split into training, testing, and validation set. To cope with the limited number of annotated data available, data augmentation by cross-correlation simulation algorithm was applied.

In general, we conclude that existing works related to microCT images for Digital Rock workflow only deal with relatively small datasets. In addition, as a rule, data from the same sample are used for training, testing, and validation. Although this allows for performing a segmentation of the sample in question, it is difficult to say something about the generalization capability. The trained network might either perfectly work or completely fail on an image of another sample, which is different by mineralogy, image acquisition parameters, intensity distribution, etc.

3. Datasets

We propose the following relatively fast and simple approach for dataset generation. Our aim is to achieve the maximum possible quality, even if the experiment will last several times longer than a standard one. It should be noted that enormous increase of imaging experiment time does not guarantee perfect quality by itself. Possible instabilities of microCT system can lead to significant degradation of overall image quality for long scanning. First, an X-ray microCT experiment with a reasonably large number of shadow projections is conducted. High-quality 3D grayscale image is reconstructed using all shadow projections. Next, we run the same reconstruction process using only each forth projection to produce the 3D grayscale image with reduced quality. Such approach is named angular decimation. Finally, we perform IK-based segmentation of the high-quality grayscale image and obtain the binary image, which we consider as GT for image with reduced quality. IK parameters are thoughtfully selected by an operator in an interactive manner. The dataset is split into training and validation sets.

Figure 2 demonstrates the scheme of our approach for datasets generation and investigation of microCT images’ segmentation methods.

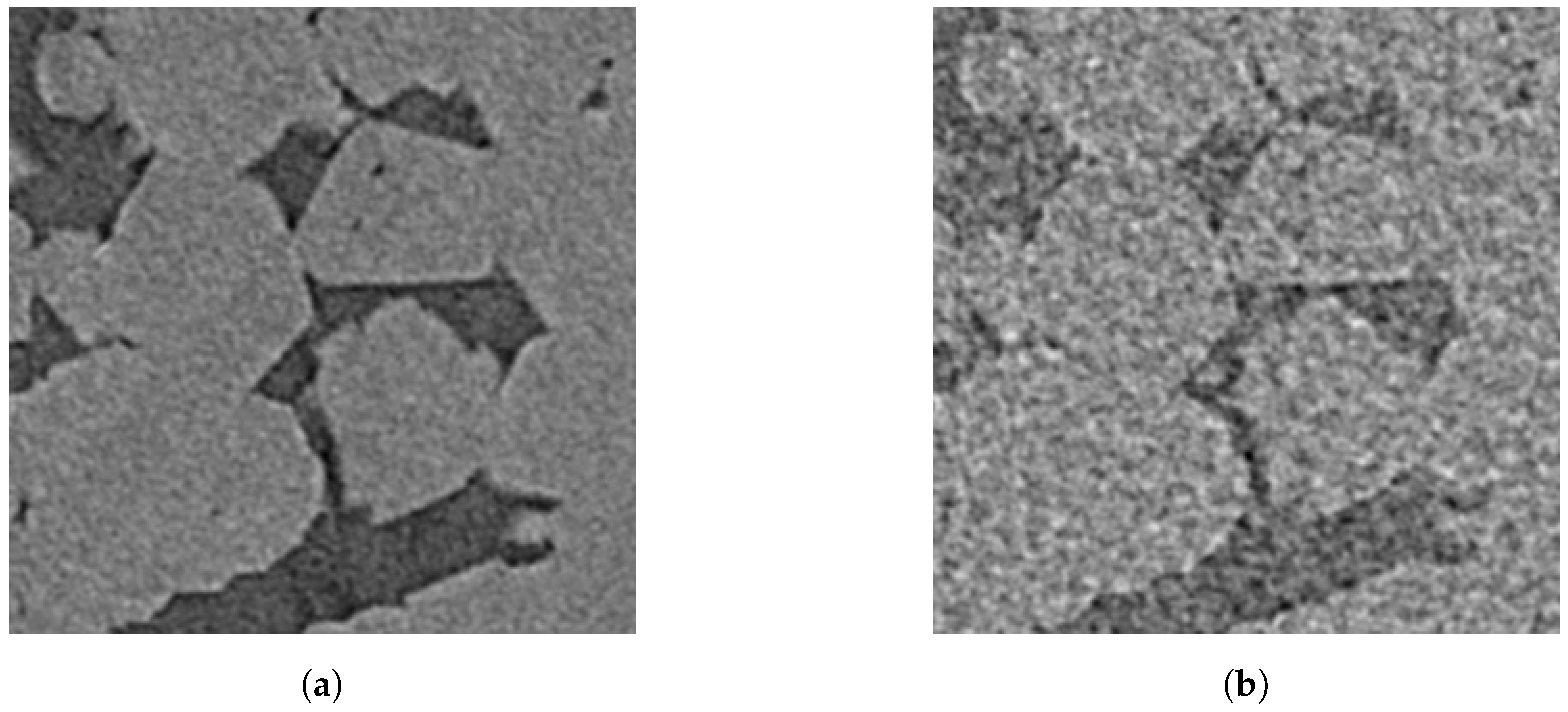

One can compare the quality of corresponding image fragments reconstructed from all shadow projections (see

Figure 3a) and reconstructed from four times less shadow projections (see

Figure 3b). The usage of the reduced number of shadow projections can be considered as a mimicking of a faster way of scanning. This decimated set of the shadow projections is highly similar to independent four times faster scan. In contrast to unnatural noise patterns and hardly-realistic ring artefacts demonstrated in [

11], our images reconstructed with four times angular decimation demonstrate no unnatural features.

Our assumption is the following: the best technique performs segmentation of the worsened copy as much as possible closer to segmentation outcomes for high-quality ones. For our experiments, we selected eight samples of sand and sandstones, which can be scanned with the best quality to mitigate dependence from the segmentation method for GT obtaining. Although we apply our own modification of the IK algorithm for the segmentation of high-quality images, any reasonable segmentation technique could be used for the task. We also use IK for comparison with CNN-based segmentations. We argue that IK has no intrinsic advantages over other techniques under investigation because the statistical distributions of intensities in the local vicinity notably differ for an image with reduced quality and a high-quality one.

We scanned the samples using SkyScan 1172 microCT system (Bruker MicroCT: Kontich, Belgium) with the following parameters: X-ray high voltage—100 kV, X-ray tube current—100 A; voxel size—2.3 m; exposure time—1767 ms, averaging of 10 frames. The imaging was performed in 180 mode, and about 2000 shadow projections were employed for reconstruction of a high-quality image.

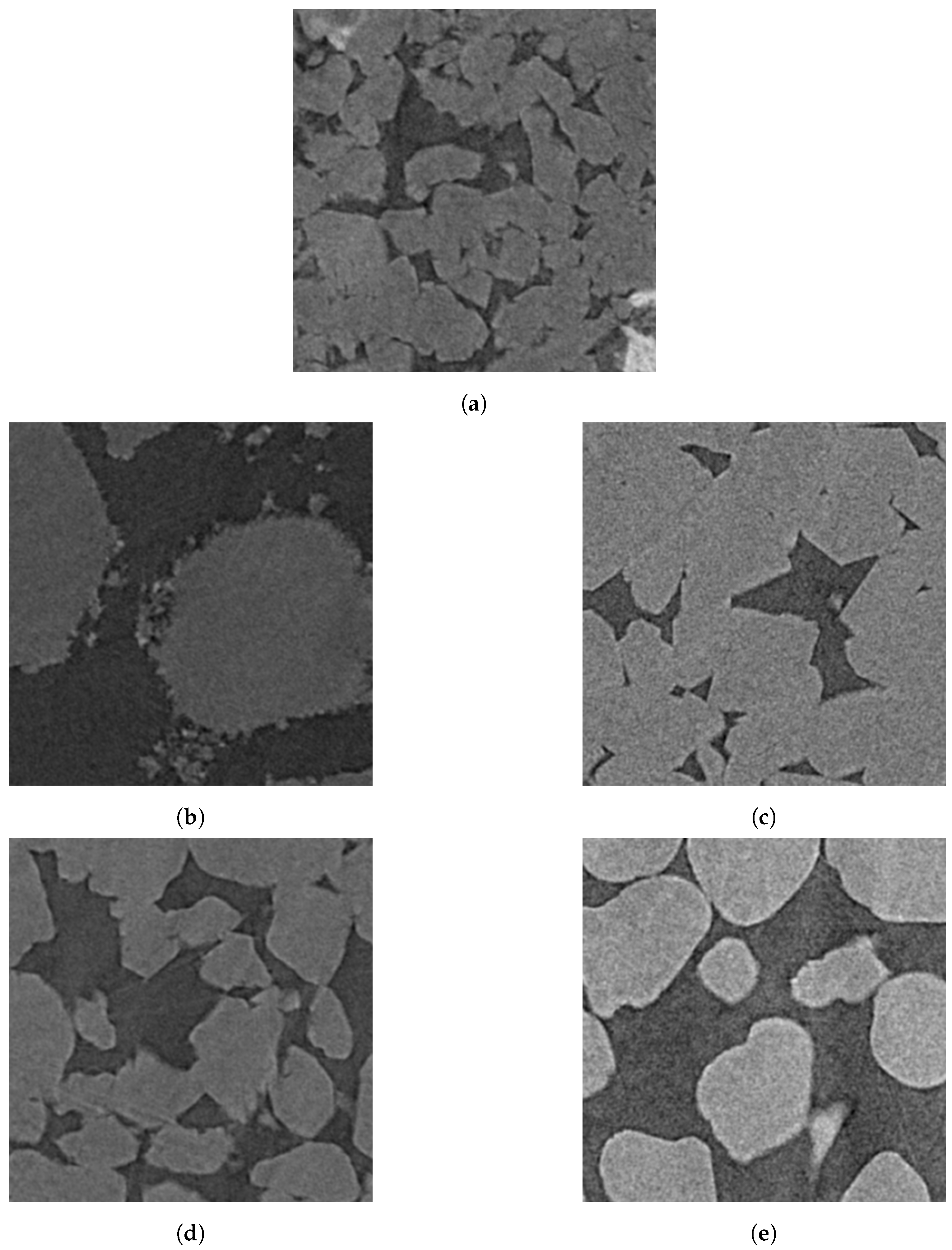

The samples belong to five different types:

BB: Buff Berea sandstone has small semi-rounded non-uniform grains (see fragment of high-quality image in

Figure 4a).

GRV: Gravelite sandstone has large angular non-uniform grains (see a fragment of a high-quality image in

Figure 4b).

FB: Fontainebleau sandstone has large angular uniform grains and almost no microporosity (see fragment of high-quality image in

Figure 4c). We used two samples of such sandstone denoted as FB1 and FB2.

BHI: Bentheimer sandstone has middle, sub-angular non-uniform grains with 10–15% inclusions of other substances such as spar and clay (see fragments of high-quality images in

Figure 4d). We used three samples of such sandstone denoted as BHI1, BHI2 and BHI3.

UFS: Unifrac sand has large rounded uniform particles and no microporosity (see fragments of high-quality images in

Figure 4e).

In this paper, we use the microporosity term for the designation of fine structures relating to pores and grains having a size close to scanning resolution.

Each 3D image has a size of

voxels with 8 bits per voxel bit depth. It can be represented as a set of

matrices

and

. Ground truth segmentation can be represented as a set of

matrices

and

.

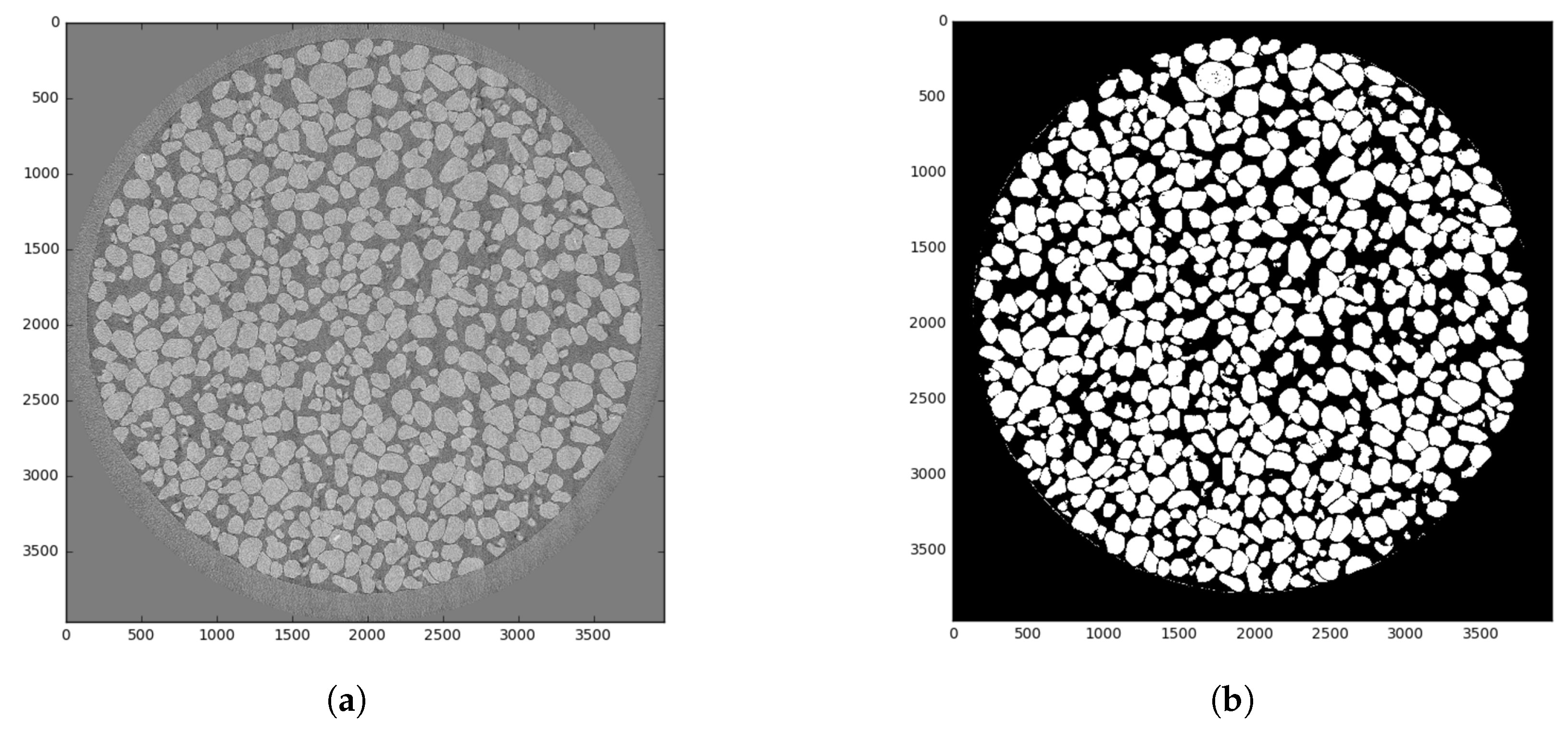

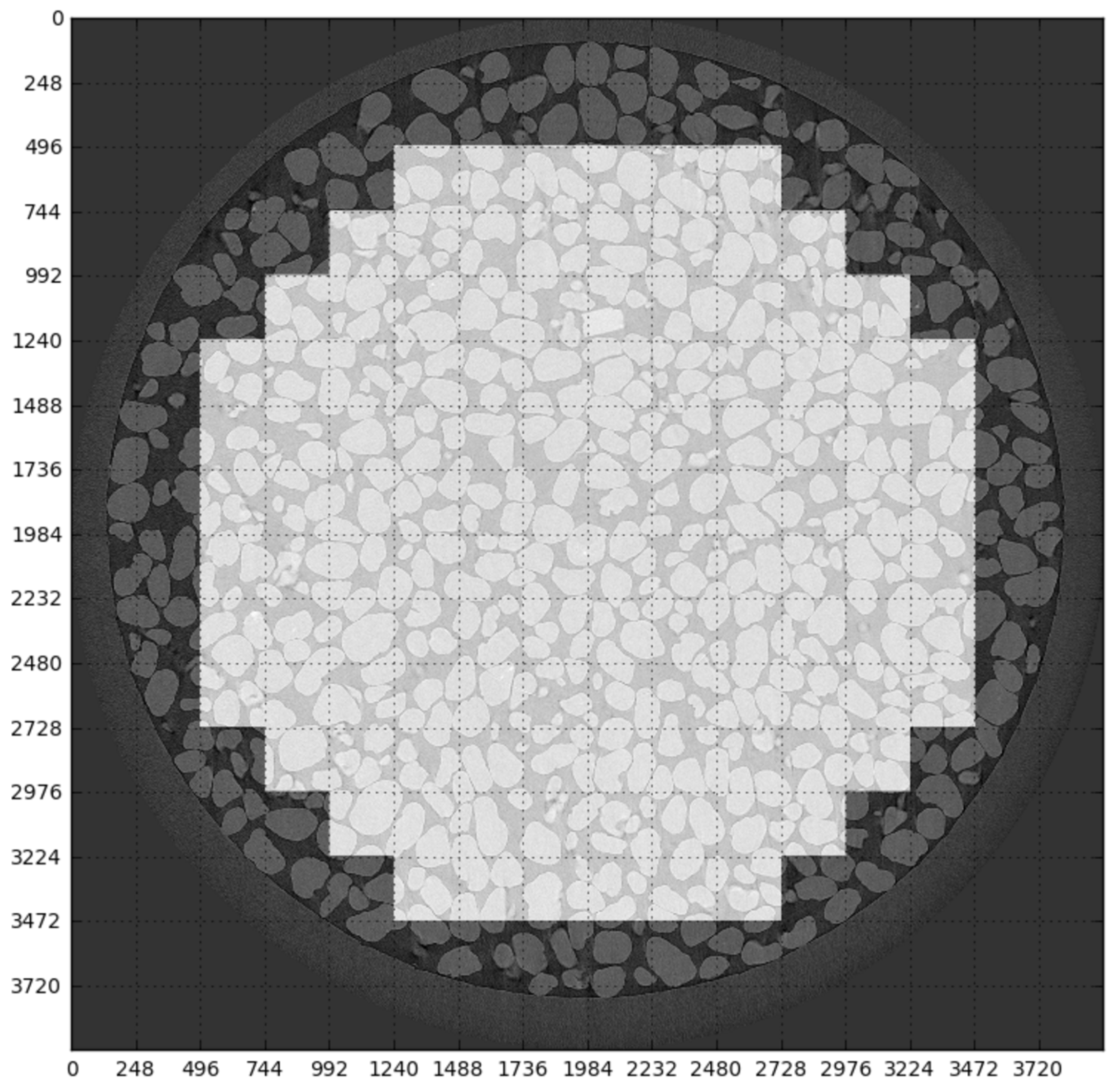

Figure 5a shows an example of a slice for a UFS sample, and

Figure 5b demonstrates corresponding GT. Brighter pixels refer to a more solid matter. For the segmented image, white pixels stand for quartz, and black pixels are for voids. We only consider the inner part of the image belonging to a sample. Thus, we process for each 3D image about 1600 slices containing a totally

voxels per image.

As was mentioned above, existing studies of applications of machine learning techniques for segmentation only use from one to three samples of rocks. It leads to an overestimation of segmentation quality and does not allow assessment of the generalization capability of trained classifiers. We have no aim just to demonstrate that CNN is able to provide high segmentation outcomes for the single sample, in the case of the network being trained on the same sample. Our intention is the investigation of how CNN-based segmentation operates for unseen data:

On the other hand, we aim to maintain a reasonably limited rock types variability. We do not include carbonate rocks, X-ray contrasted samples, etc.

We split our dataset into training and validation sets in five different ways (see

Table 1). We do not fine-tune hyperparameters individually for each experiment, so we do not use the testing dataset.

Dataset 1 is aimed at the estimation of segmentation quality of sandstones by a neural network trained on a single UFS sample.

Datasets 2 and

3 have one additional sample in the training set.

Dataset 2 includes GRV, which has maximal visual difference with other images, in the training set.

Dataset 3 includes FB1 to training set, and it has GRV and FB2 (second sample of Fontainebleau sandstone) in the validation set.

Dataset 4 includes UFC, GRV, and FB1 in the training set. Finally,

Dataset 5 has five samples in the training set and FB2, BHI3, and BB in validation set. In general, we expect an improvement of the performance of neural networks with enrichment of the training dataset with the new data.

As mentioned above, existing investigations of the application of machine learning methods for the segmentation of images of rock samples used relatively small training and testing sets. Our datasets significantly outperform them in both the amount and diversity of data.

Table 2 contains sizes of training and validation sets in 3D images, 2D slices, and voxels.

5. Results and Discussion

For evaluating the quality of the segmentation inside ROI, we decided to pick the most application-neutral metrics,

Accuracy expressed in percentages:

where

are indices of pixel;

ROI is the region of interest depicted in

Figure 6;

l is the number of slices;

is the matrix of predicted labels;

S is the matrix of ground truth labels. The

Accuracy is calculated for each sample separately.

Our aim is developing a solution for automatic segmentation without operator participation. Currently, in our Digital Rock workflow, IK operates under operator control. The image segmentation algorithm IK is based on two concepts [

17]. The first concept is the

kriging (or Gaussian process regression) interpolation method, which is widely used in geostatistics [

54]. Kriging provides optimal (in sense of minimum variance) estimation of the unknown value at some spatial point based on the values observed in the neighbourhood points weighted according to spatial covariance. In general, the idea is associated with distance-weighting algorithms. The size of the neighbourhood is chosen by an operator. The second concept is the

indicator, which represents the value to be interpolated, and is based on an a priori assignment of grayscale values to classes, for example, pore and solid substances.

Thus, the IK method requires three parameters set by an operator for the segmenting of each image. Firstly, the window radius controls the neighbourhood size. Increasing window radius usually results in a smoother and less noisy outcome. Secondly, the two thresholds are used to create indicator functions for the pore and substance classes. Ultimately, they are associated with probabilities that a voxel with a given intensity level belongs to a specific class if ignoring all the information about its neighbours. The simplest interpretation is that all pixels above the substance threshold should go to the substance class, regardless of their neighbourhood and vice versa all below the pore threshold go to pore class. Selecting adequate thresholds is especially challenging for noisy images when the noise level exceeds the difference between the grey levels associated with the two classes.

Even for an experienced person, it is extremely difficult to select these three parameters for providing suitable segmentation results from the first attempt. In practice, operator iteratively makes several segmentation attempts aiming to find better parameters based on visual analysis of results. For considered images, such iterative parameter selection by operator requires about an hour. Despite several attempts, segmentation results remain rather subjective, and their repeatability and reproducibility are not high.

Table 3 contains

Accuracy of segmentation via IK by three operators. These results were obtained for a BB image reconstructed with four times angular decimation. Each operator made five attempts. In these attempts, one can see a huge spread in segmentation quality. Although theoretically achievable

Accuracy by IK for this image equals 96.2, no one from the operator did not get this optimal result, average

Accuracy in operators’ attempts is much lower than the highest possible. For the other seven images, we could not organize identical manual segmentation experiments via IK by experienced operators. Instead of that, we determined optimal IK parameters by means of

Accuracy maximization via a simplex search optimizer [

55].

In spite of its simplicity, there are examples of successful applications of thresholding for segmentation of microCT images [

2,

56]. Thresholding is applicable in the case of high contrast between voxels of voids and solid substances, which leads to the bi-modal histogram. In the thresholding algorithm, the voxels with a value lower than or equal to the threshold are marked as pore and voxels with a value higher than the threshold are marketed as a solid. In practice, the threshold is set by an operator or automatically [

25]. We consider thresholding as one more non-machine-learning method for comparison. We determined an optimal threshold by

Accuracy maximization via the exhaustive search.

Table 4 contains

Accuracy of segmentation of images reconstructed with four time angular decimation for both non-machine-learning methods. In this table, IK and thresholding are thought to be the same as perfect manual parameter selection. In the most cases, interactive parameters selection by an operator would provide worse segmentation quality. Thus, one should understand

Accuracy of these optimal IK and thresholding as an upper estimation of their performance.

From

Table 4, we can see, as it was expected, IK provides much better results in comparison with thresholding. For IK, variation of

Accuracy among different samples is very low, and IK performs equally for diverse images. For thresholding,

Accuracy on the UFS sample is significantly lower in comparison with other images.

For CNN-based segmentation methods, we present results in two ways:

Table 5,

Table 6 and

Table 7 contain

Accuracy for SegNet, 2D U-net, and 3D U-net accordingly depending on dataset, and we discuss the performance of each network independently from each other;

Table 8,

Table 9,

Table 10,

Table 11 and

Table 12 contain

Accuracy for five datasets depending on the segmentation method, and we compare deep neural networks with each other as well as against IK and thresholding.

Table 5 contains

Accuracy of segmentation by 2D SegNet depending on the dataset. The hyphen symbol means that the sample is in the training set. One might expect that adding more data to the training set should improve the segmentation quality. This should be clearly seen when the data related to the same rock type as in validation set is added to the training set. Sometimes, training set enlargement can lead to an insignificant decrease of

Accuracy due to occasional stuff. For FB2 sample,

Accuracy goes down as we add a GRV rock sample together with FB1 to the training set, although the addition of only FB1 leads to performance increases. Some effects do not satisfy our expectations. For BHI3,

Accuracy decreases after adding BHI1 and BHI2 to training set. Moreover,

Accuracy for BHI3 differs drastically from the metrics for BHI1 and BHI2. It is surprising because we do not see visual differences between those three images. In general, even without comparison with other segmentation methods, SegNet demonstrated unsatisfactory outcomes.

Table 6 contains

Accuracy of segmentation by 2D U-net depending on the dataset. Obtained outcomes satisfy our expectations. Segmentation quality grows with the size of training set.

Accuracy for FB2 increases when we include FB1 sample to the training set. The same happens for BHI3 after the adding BHI1 and BHI2 to the training set. There is no big variety in quality metrics for different samples, except

Dataset 1 with the smallest training set.

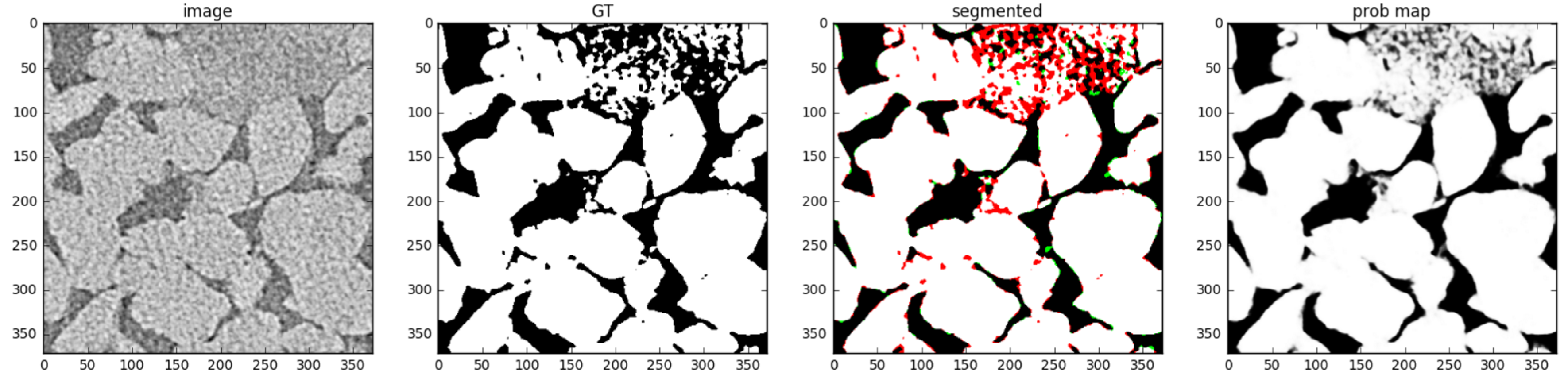

Figure 10 and

Figure 11 demonstrate how training set enrichment affects 2D U-net segmentation quality for the most difficult clayey regions with microporosity. These figures show GT, segmentation outcomes with an indication of false positive and false negative errors, and visualization of network output, which can be treated as a posteriori probability. Regions with microporosity proved to be the most challenging for segmentation by the networks. In BHI1 image (see

Figure 4e), the entire microporosity region was segmented erroneously by a network trained on

Dataset 1 that only contains a rounded and non-microporous UFS sand sample (see

Figure 4e) in the training set. The probability map looks much like a binary image; most pixels are close to either zero or one. The microporosity region is not only incorrectly classified, but the network is pretty sure that this is a large almost clean pore. When we enrich our training set with GRV and FB1 sandstones, which have some regions with microporosity, the situation becomes better (see

Figure 11). Once again, the majority of the segmentation errors are located in the microporosity region; however, the probability map demonstrates shades of grey there, and some of pixels are predicted properly. Indeed, these pixels are difficult to predict.

Segmentation outcomes by 3D U-net are presented in

Table 7. As well as for 2D U-net, we do not see any contradictions in the results by 3D U-net.

Let’s compare various segmentation methods (see

Table 8,

Table 9,

Table 10,

Table 11 and

Table 12). SegNet performance is clearly inferior to the other methods.

Accuracy of thresholding is not high even for an optimal threshold. Formally, IK with optimal parameters has the highest segmentation quality. In practice, IK with parameters selected by an operator can have a lower performance (see

Table 3). Taking into account the performance of IK “in wild”, 2D and 3D U-net provide comparable results, sometimes these networks outperform IK. The undoubted advantage of U-net-based methods is operator-free segmentation mode. Once trained, we are able to use the model without adjustment of any parameters. 2D and 3D U-nets provide perfect repeatability and reproducibility segmentation results. The application of these deep networks excludes the influence of the subjective judgments of an operator.

Since a 2D segmentation technique is unable to use all available local information, our intuition suggests that we should use 3D receptive fields. However, 2D and 3D U-nets performance is approximately equal. This could be related to by the restrictions, imposed on the 3D model, to limit the computational cost: the smaller number of layers and feature maps, padding, etc. This phenomenon can be explained by the small depth of 3D model and relatively small size of 3D cube used for training and inference; recall that we cannot expand our network due to GPU memory limits. The authors in [

43] compare 2D and 3D U-net for segmentation of cardiac MRI images and make a similar explanation to the fact that the 3D network does not outperform the slice-wise one. We expect that further thoughtful architectural and hyperparameter optimizations would eventually allow the 3D version to outperform the 2D one.

In addition, our study suggests that the optimal choice of the quality metrics remains an open issue. Pixel-wise Accuracy, being deliberately general and neutral toward different applications, has limited discrimination power for estimation of segmentation quality of images of rock samples. Experiments with images having microporosity shows big regions of solid matrix and voids providing the main contribution to the measure, whereas small structural features occurring has a subtle influence. However, such microstructural features can have significant importance for further simulation, e.g., of flow processes. It is preferable to elaborate quality metrics based on physical meanings of pore space topology and roughness of surface for the problem solved.

All experiments were performed on a system with four GPUs Tesla K40m made by nVidia (Santa Clara, CA). GPU provides 2880 stream cores, 12 GB of memory, and 4.29 Tflops of peak single precision performance. All CNNs were implemented via TensorFlow [

57]. Each of the CNN-based models was independently trained on each of the five datasets. Typically, we did training at night. Training time for every model was about 12 hours. There is no significant difference in training time for models under consideration. Thresholding is the fastest segmentation method, and several minutes are required for processing of microCT images; however, segmentation quality is unacceptable. Segmentation time for both U-nets is about several hours per image. Taking into account several attempts of an operator to find suitable IK parameters, such segmentation time is comparable with the current segmentation procedure via IK. Using special hardware accelerators can speedup segmentation via CNN [

58].