4.2.1. Effectiveness of the Model

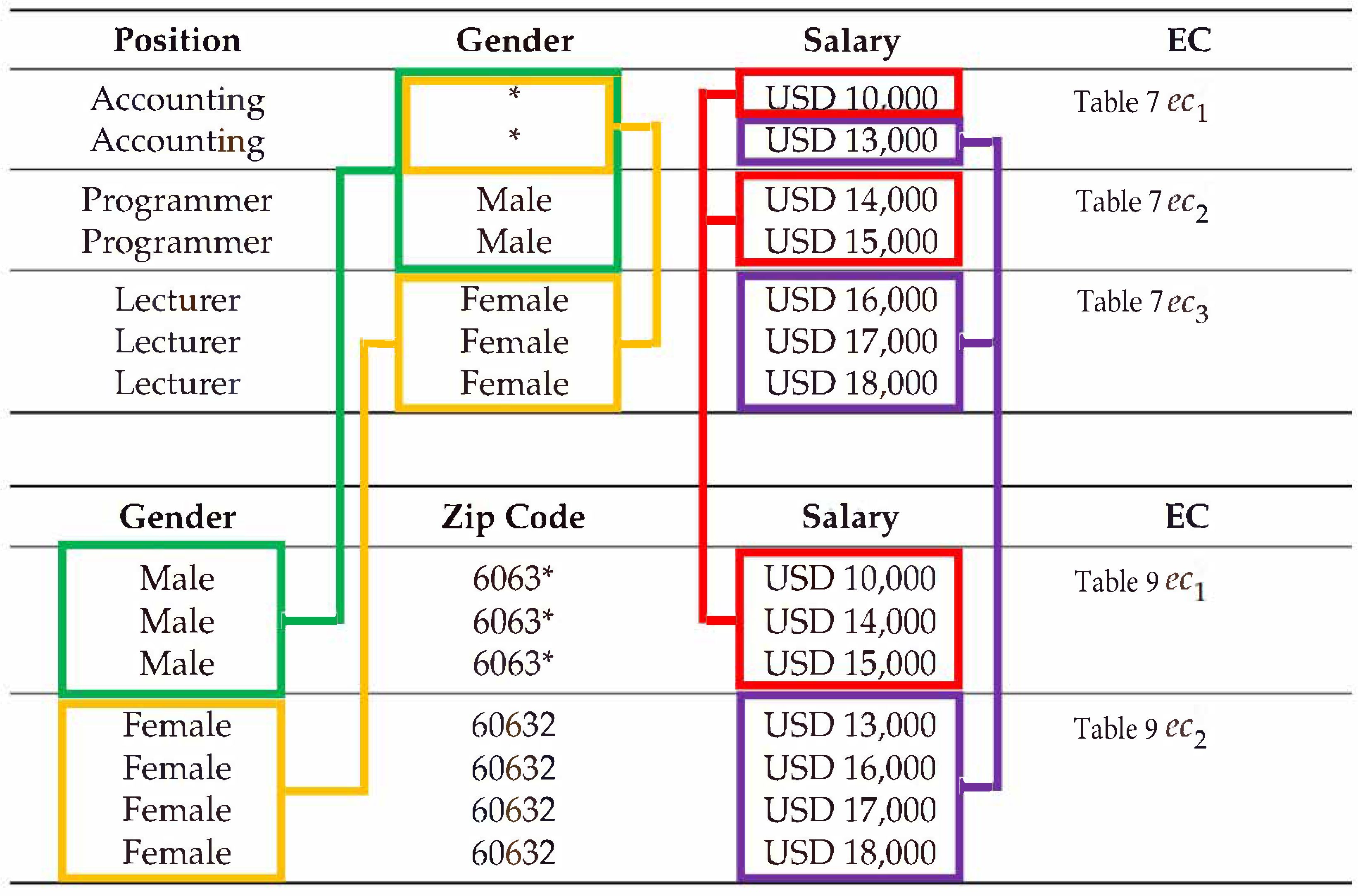

In the first experiment, we evaluate the effect of the number of quasi-identifier attributes on the data utility of the datasets constructed by the proposed model and the compared models such that they are based on and penalty costs. For the experiment, the value of l is fixed at 2 for the proposed model and l-Diversity. For -Privacy, the values of L, K, and C are set to the number of quasi-identifier attributes, l, and , respectively. Furthermore, all sensitive values available in the experimental datasets are protected sensitive values. Only - is set as a sensitive attribute. The number of quasi-identifier attributes varies from 1 to 6. The process of varying the number of quasi-identifier attributes is as follows.

Initially, only - is a quasi-identifier attribute.

The second experimental dataset contains only - and as quasi-identifier attributes.

-, , and - are the quasi-identifier attributes in the third experimental dataset.

The fourth experimental dataset has the quasi-identifier attributes -, , -, and .

The quasi-identifier attributes in the fifth experimental dataset are -, , -, , and .

In the final experimental dataset, , , -, , , and - are all set as quasi-identifier attributes.

As shown in Figure 5 and Figure 6, when the number of quasi-identifier attributes increases, the and penalty costs of all experimental datasets also increase. Moreover, l-Diversity and -Privacy are equally effective and perform better than the proposed model, although the difference is small. The and penalty costs rise because adding quasi-identifier attributes increases the size of the equivalence classes, and larger equivalence classes generally lead to more suppressed or generalized values in the datasets. l-Diversity and -Privacy are equally effective at maintaining the data utility of the experimental datasets in every experiment because, when the experimental datasets do not allow for the retrieval of missing values and all sensitive values are protected sensitive values, the released version of a dataset based on l-Diversity does not differ from that based on -Privacy. The proposed model is less effective than the other models because, in addition to the datasets needing to satisfy the privacy preservation constraints, the compared results between datasets and their corresponding released versions must also satisfy the privacy preservation constraints. Consequently, datasets that satisfy the privacy preservation constraint of the proposed model are not susceptible to privacy violation from data comparison attacks, whereas datasets constructed from l-Diversity and -Privacy are still susceptible to such attacks.

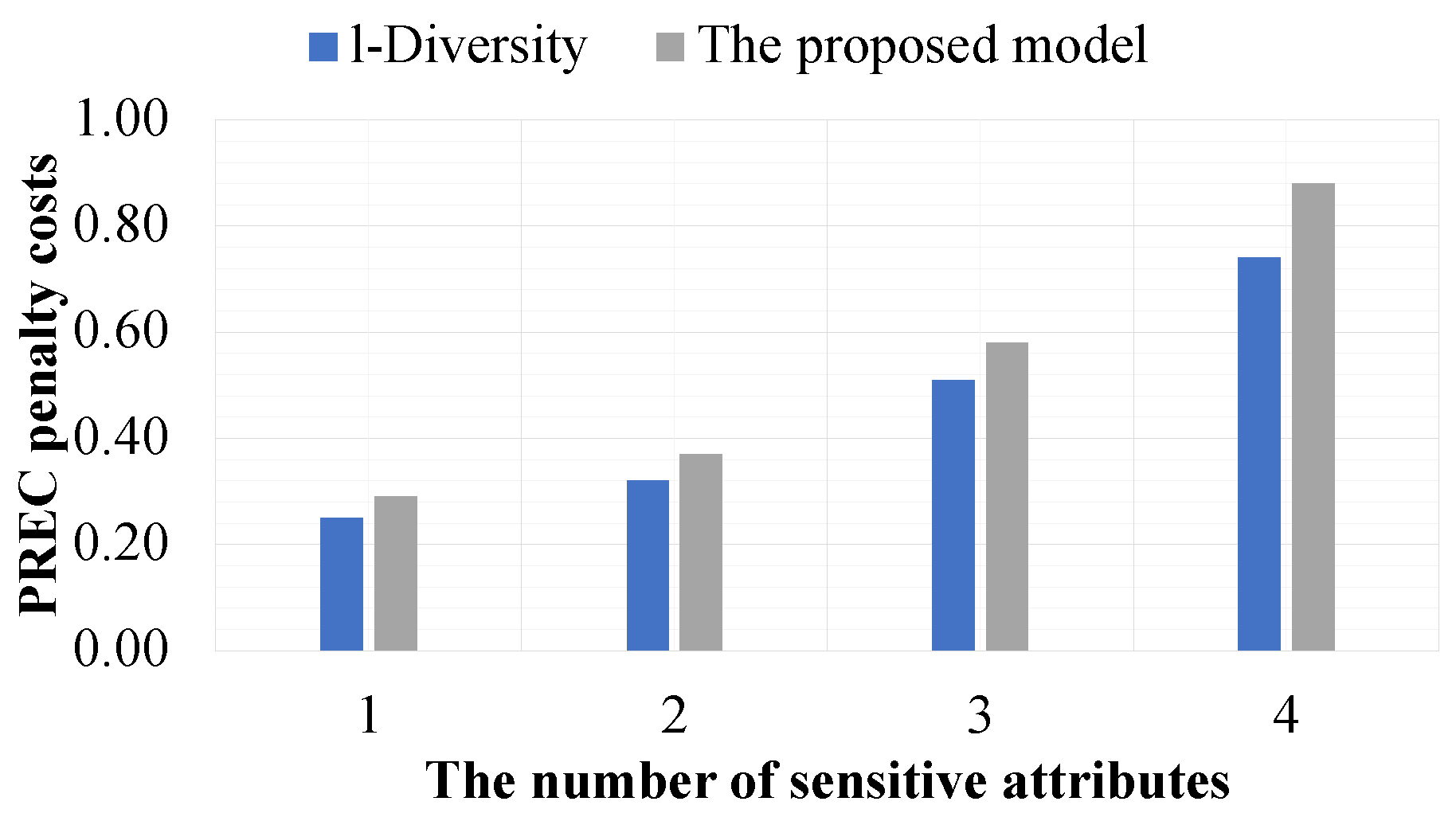

In the second experiment, we evaluate the effect of the number of sensitive attributes on the data utility of datasets constructed by the proposed model and the compared models such that they are based on and . The proposed model is compared only with l-Diversity because -Privacy cannot address privacy violation issues in datasets with multiple sensitive attributes. The value of l is fixed at 2, all quasi-identifier attributes are available in the experimental datasets, and the number of sensitive attributes varies from 1 to 4. The process of varying the number of sensitive attributes is as follows.

The first experimental dataset only has - as a sensitive attribute.

The second experimental dataset only contains - and as sensitive attributes.

-, , and are the sensitive attributes in the third experimental dataset.

In the final experimental dataset, -, , , and -- are all set as sensitive attributes.

Figure 7 and Figure 8 show that when the number of sensitive attributes increases, the and penalty costs of all experimental datasets also increase. This is because the size of the equivalence classes increases with the number of sensitive attributes. Moreover, l-Diversity is more effective than the proposed model, although the difference is small. The proposed model is less effective than l-Diversity because, in addition to the datasets needing to satisfy the privacy preservation constraints, the compared results between datasets and their corresponding released versions must also satisfy the privacy preservation constraints, and this additional constraint is not considered by l-Diversity. For this reason, although the proposed model is less effective than l-Diversity, it is more secure in terms of privacy preservation.

In the third experiment, we evaluate the effect of the value of l on the data utility of datasets constructed by the proposed model and the other models such that they are based on and . In this experiment, only - is set as a sensitive attribute, and all quasi-identifier attributes are available in the experimental datasets. The value of l is varied from 2 to 10 for the proposed model and l-Diversity. In -Privacy, the values of L, K, and C are set to the number of quasi-identifier attributes, l, and , respectively. Furthermore, all sensitive values available in the experimental datasets are protected sensitive values.

Figure 9 and Figure 10 show that when the value of l increases, the and penalty costs of all experimental datasets also increase. This is because the size of the equivalence classes increases with the value of l. Moreover, the compared models are more effective than the proposed model, although the difference is small. The proposed model is less effective than the compared models because, in addition to the datasets needing to satisfy the privacy preservation constraints, the compared results between the datasets and their corresponding released versions must also satisfy the privacy preservation constraints, and this additional constraint is not considered by the compared models.

Figure 5, Figure 6, Figure 7, Figure 8, Figure 9 and Figure 10 show that the number of sensitive attributes and the value of l have a greater effect on the data utility in the datasets than the number of quasi-identifier attributes; this is because the privacy preservation constraint of the proposed model and of the compared models is based on the number of distinct sensitive values.
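The dependence on the number of distinct sensitive values can be illustrated with a minimal sketch. It checks only the distinct-values condition (each equivalence class must contain at least l distinct sensitive values) and does not model the additional comparison constraint of the proposed model. The attribute names `age`, `zip`, and `disease` and the toy records are hypothetical and are not taken from the experimental datasets.

```python
from collections import defaultdict


def min_distinct_sensitive(records, qi_attrs, sensitive_attr):
    """Smallest number of distinct sensitive values found in any equivalence class.

    An equivalence class is the set of records that share the same
    (generalized) values on all quasi-identifier attributes.
    """
    classes = defaultdict(set)
    for row in records:
        key = tuple(row[a] for a in qi_attrs)
        classes[key].add(row[sensitive_attr])
    return min((len(values) for values in classes.values()), default=0)


def satisfies_distinct_l(records, qi_attrs, sensitive_attr, l):
    """True if every equivalence class holds at least l distinct sensitive values."""
    return min_distinct_sensitive(records, qi_attrs, sensitive_attr) >= l


# Hypothetical toy data: two small equivalence classes.
fine = [
    {"age": "2*", "zip": "130**", "disease": "flu"},
    {"age": "2*", "zip": "130**", "disease": "cancer"},
    {"age": "3*", "zip": "148**", "disease": "flu"},
    {"age": "3*", "zip": "148**", "disease": "flu"},
]

# The same records generalized further, so that they form one larger class.
coarse = [{**row, "age": "*", "zip": "1****"} for row in fine]

print(satisfies_distinct_l(fine, ["age", "zip"], "disease", 2))
print(satisfies_distinct_l(coarse, ["age", "zip"], "disease", 2))
```

In this toy case the finer grouping fails for l = 2 because one class holds a single sensitive value, and the check passes only after the classes are merged by further generalization. This is consistent with the observation that a larger l leads to larger equivalence classes and therefore to more generalized values.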

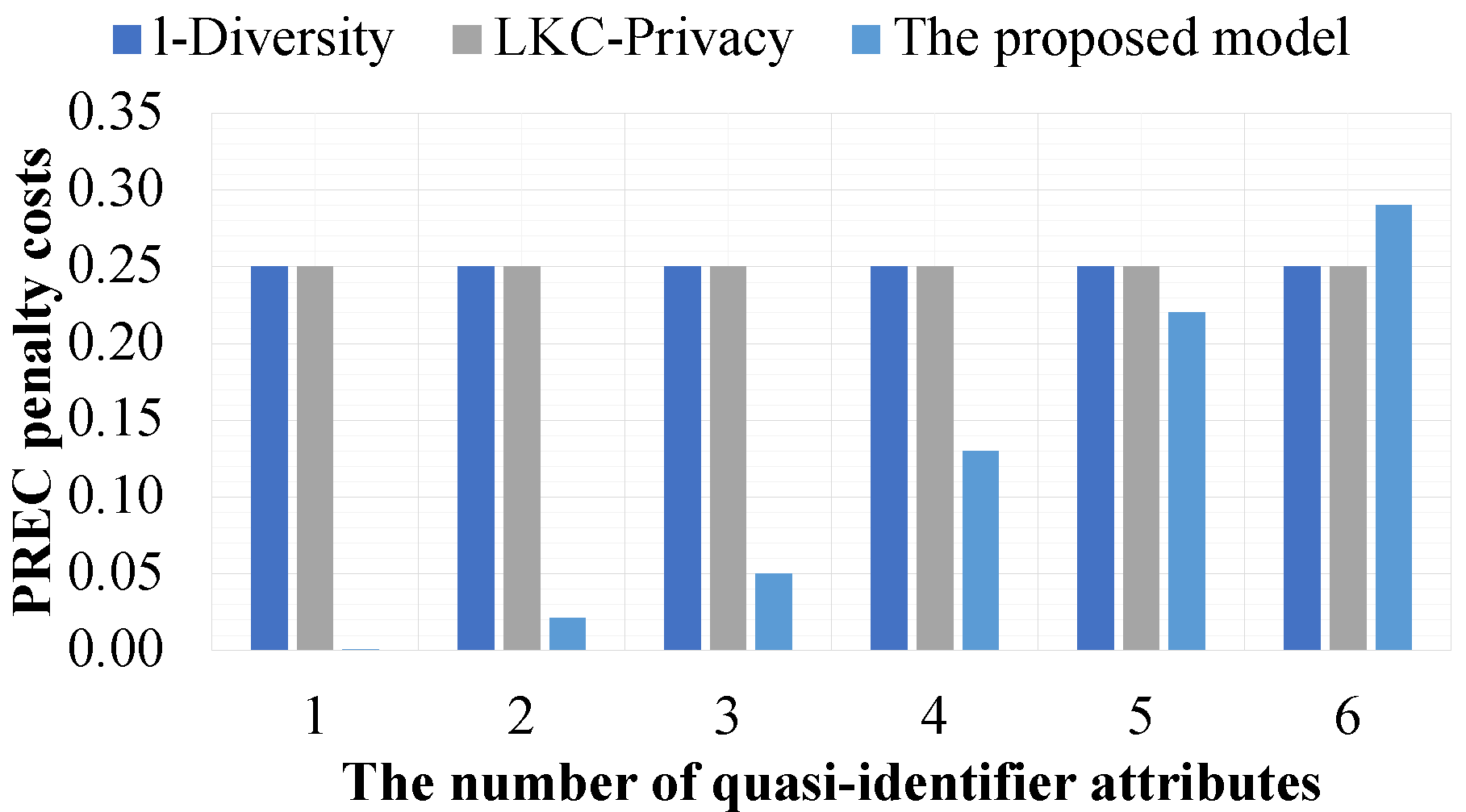

In the fourth experiment, we evaluate the effect of a limited number of quasi-identifier attributes on the data utility of datasets constructed by the proposed model and the compared models such that they are based on and . We assume that the data holder needs to limit the number of quasi-identifier attributes for data release from one to six attributes. The value of l is fixed at 2 for the proposed model and l-Diversity. In -Privacy, the values of L, K, and C are set to the number of quasi-identifier attributes, l, and , respectively. Furthermore, all sensitive values available in the experimental datasets are protected sensitive values. Only - is set as a sensitive attribute. All quasi-identifier attributes are available in the experimental datasets.

Figure 11 and Figure 12 show that the proposed model is more effective than the compared models in all experiments in which at most five quasi-identifier attributes are released. This is because the proposed model supports separation of the quasi-identifier attributes to preserve the privacy of data in datasets, while the compared models do not consider this property in their privacy preservation constraints and must therefore consider all quasi-identifier attributes in every experiment. However, when the experimental dataset has six quasi-identifier attributes, the proposed model is less effective than the compared models. This is because the experimental datasets are the same size, and in addition to datasets needing to satisfy the privacy preservation constraints, the compared results between datasets and their corresponding released versions must also satisfy the privacy preservation constraint of the proposed model.

In the fifth experiment, we evaluate the effect of a limited number of sensitive attributes on the data utility of datasets constructed by the proposed model and the compared models such that they are based on and . The proposed model is compared only with l-Diversity because -Privacy cannot address privacy violation issues in datasets that have multiple sensitive attributes. We assume that the data holder needs to limit the number of sensitive attributes for data release from one to four attributes. The value of l is fixed at 2. All quasi-identifier and sensitive attributes are available in the experimental datasets.

Figure 13 and Figure 14 show that the proposed model is more effective than the compared models in all experiments in which at most three sensitive attributes are released. This is because the proposed model supports separation of the sensitive attributes to preserve the privacy of data in datasets, while the compared models do not consider this property in their privacy preservation constraints and must therefore consider all sensitive attributes in every experiment. However, when the experimental dataset has four sensitive attributes, the proposed model is less effective than the compared models. This is because the experimental datasets are the same size, and in addition to datasets needing to satisfy the privacy preservation constraints, the compared results between datasets and their corresponding released versions must also satisfy the privacy preservation constraint of the proposed model.

Figure 11, Figure 12, Figure 13 and Figure 14 clearly indicate that the proposed model is more secure in terms of privacy preservation and better in terms of maintaining the data utility of datasets compared to the other models.

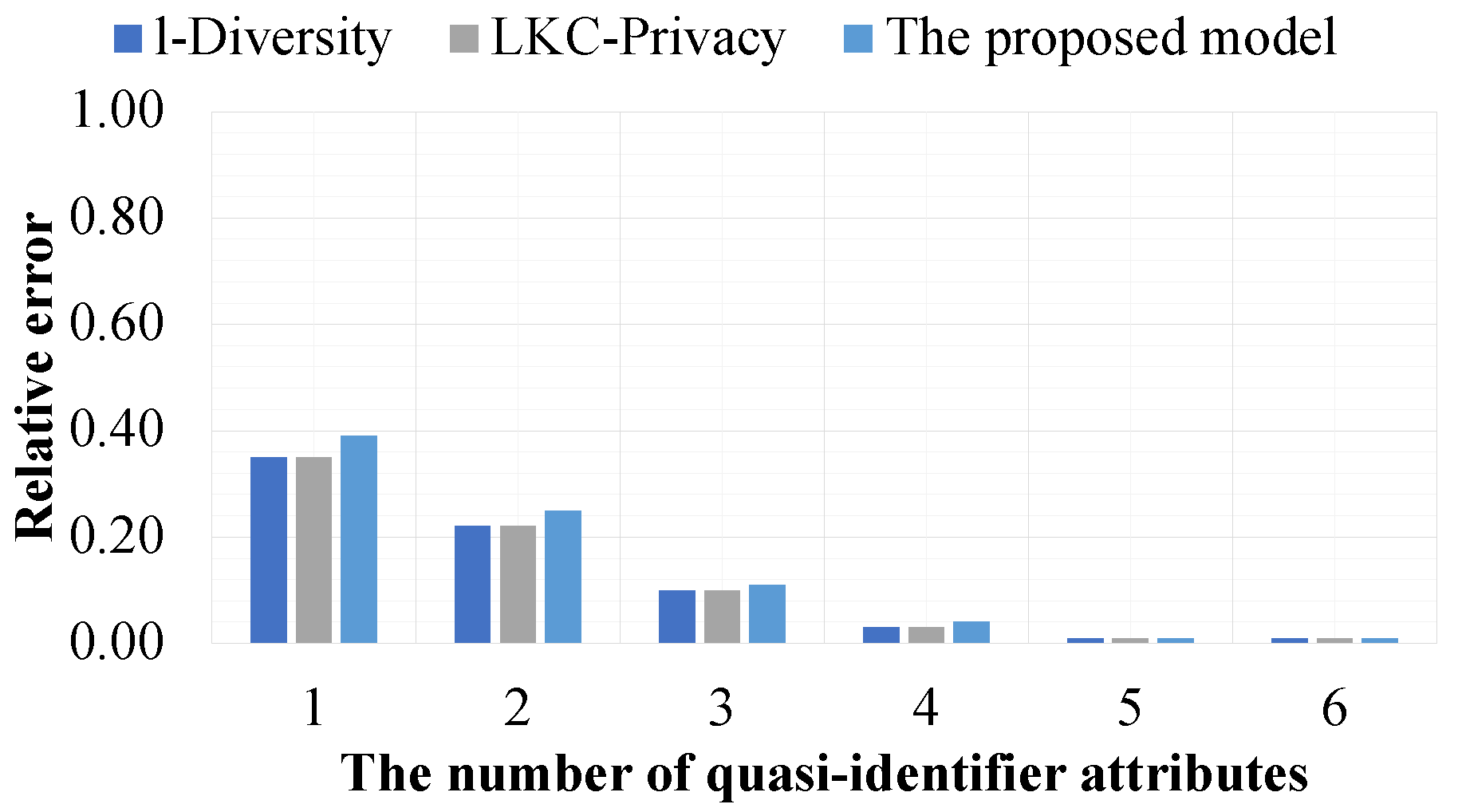

In the sixth experiment, we evaluate the data utility of datasets that satisfy the privacy preservation constraint of the proposed model, l-Diversity, and -Privacy such that they are based on the query function in conjunction with the or query operator and the range of queries, and they are evaluated by the relative error metric presented in Section 3.2.3. In this experiment, the value of l is fixed at 2 for the proposed model and l-Diversity. With -Privacy, the values of L, K, and C are set to the number of quasi-identifier attributes, l, and , respectively. Furthermore, all sensitive values available in the experimental datasets are protected sensitive values. Only - is a sensitive attribute, and all quasi-identifier attributes are available in the experimental datasets. The experimental results shown in Figure 15 and Figure 16 are presented as the mean of the average results of 15 randomized queries in the form of Query 1, and those shown in Figure 17 are presented as the mean of the average results of 15 randomized queries in the form of Query 2.

The elements of these queries are defined as follows:

are the specified quasi-identifier attributes , , -, , , and -.

are the specified values for querying the data from the datasets.

is the lower bound for querying the data from the datasets.

is the upper bound for querying the data from the datasets.
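To make the evaluation procedure concrete, the following minimal sketch computes a relative error for range queries and averages it over several queries. It rests on assumptions that are not taken from this section: the query is treated as a count-style aggregate over a single numeric attribute, the relative error is taken to be |original − anonymized| / original (the definition in Section 3.2.3 is not reproduced here), and generalized values are modeled as integer intervals whose contribution to a query is estimated by uniform overlap. All function names and data are hypothetical.

```python
def count_original(values, lower, upper):
    """Number of original values that fall inside [lower, upper]."""
    return sum(1 for v in values if lower <= v <= upper)


def count_generalized(intervals, lower, upper):
    """Estimated count over generalized integer intervals (lo, hi).

    Assumption: a record generalized to (lo, hi) is equally likely to hold
    any integer in that interval, so it contributes the fraction of the
    interval that overlaps the query range.
    """
    total = 0.0
    for lo, hi in intervals:
        overlap = min(hi, upper) - max(lo, lower) + 1
        if overlap > 0:
            total += overlap / (hi - lo + 1)
    return total


def relative_error(original_count, anonymized_count):
    """Assumed metric: |original - anonymized| / original."""
    if original_count == 0:
        raise ValueError("relative error is undefined for an empty original result")
    return abs(original_count - anonymized_count) / original_count


def mean_relative_error(values, intervals, queries):
    """Average relative error over a list of (lower, upper) range queries."""
    errors = [
        relative_error(count_original(values, lb, ub),
                       count_generalized(intervals, lb, ub))
        for lb, ub in queries
    ]
    return sum(errors) / len(errors)


# Hypothetical original values and their generalized counterparts.
ages = [21, 24, 27, 33, 36, 38]
generalized = [(20, 29)] * 3 + [(30, 39)] * 3

# A point query (range 0), a medium range, and a range covering all values.
queries = [(24, 24), (25, 34), (20, 39)]
for lb, ub in queries:
    err = relative_error(count_original(ages, lb, ub),
                         count_generalized(generalized, lb, ub))
    print((lb, ub), round(err, 3))
print(round(mean_relative_error(ages, generalized, queries), 3))
```

Under these assumptions, the point query has the largest error and the query covering the full range has none, because a wider range is more likely to contain whole generalized intervals.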

In Figure 15, we show the data utility of query results affected by the query operation. The experimental results show that the number of query-condition attributes is inversely related to the relative error of the query results; i.e., a larger number of query-condition attributes leads to higher data utility of the query results. This is because a larger number of query-condition attributes gives all experimental models more options for generalizing the data in datasets, thus resulting in fewer errors.

Figure 16 shows the effect of using the query operation on the query results. When the number of query-condition attributes increases, the relative errors of the query results also increase. This is because all experimental models have limitations regarding the values satisfied in data queries.

Figure 17 shows the effect of using the range of queries on the query results. Note that a query range condition of 0 means that the query matches the exact value only. The trend of the experimental results in Figure 17 is similar to that of the experimental results shown in Figure 15 for the same reason, i.e., a wide range of query conditions often leads to more options for generalizing the data in datasets, thus resulting in fewer errors.