A Systematic Review on Social Robots in Public Spaces: Threat Landscape and Attack Surface

Abstract

1. Introduction

- A transdisciplinary perspective of threat actors, threat landscape, and attack surface of social robots in public spaces.

- A set of comprehensive taxonomies for threat actors and threat landscape.

- A comprehensive attack surface for social robots in public spaces.

- A description of four potential attack scenarios for stakeholders in the field of social robots in public spaces.

2. Background and Related Works

2.1. Key Concepts and Definitions

2.1.1. Social Robots and Their Sensors

2.1.2. Public Space

2.1.3. Assets and Vulnerabilities

2.1.4. Threats and Threat Landscape

2.1.5. Attacks and Attack Surface

2.1.6. Cybersecurity, Safety, and Privacy

2.2. Related Works

2.2.1. Cybersecurity Threat Actors

2.2.2. Safety and Social Errors in HRI

2.2.3. Social Robot Security

2.2.4. Cybersecurity Threat Landscape

2.2.5. Summary of Related Works

- (i) The public space in which social robots will operate is dynamic and subject to various human and natural factors.

- (ii) Social robots will operate with little or no supervision and very close to users (including threat actors), which will increase the probability of attack success.

- (iii) The enabling communication technologies for social robots in public spaces are heterogeneous, which further increases their attack surface, as the whole system is more complicated than the sum of its constituent parts.

- (iv) The definition of the threat landscape for social robots in public spaces should include the perspective of all stakeholders and not just the cybersecurity discipline.

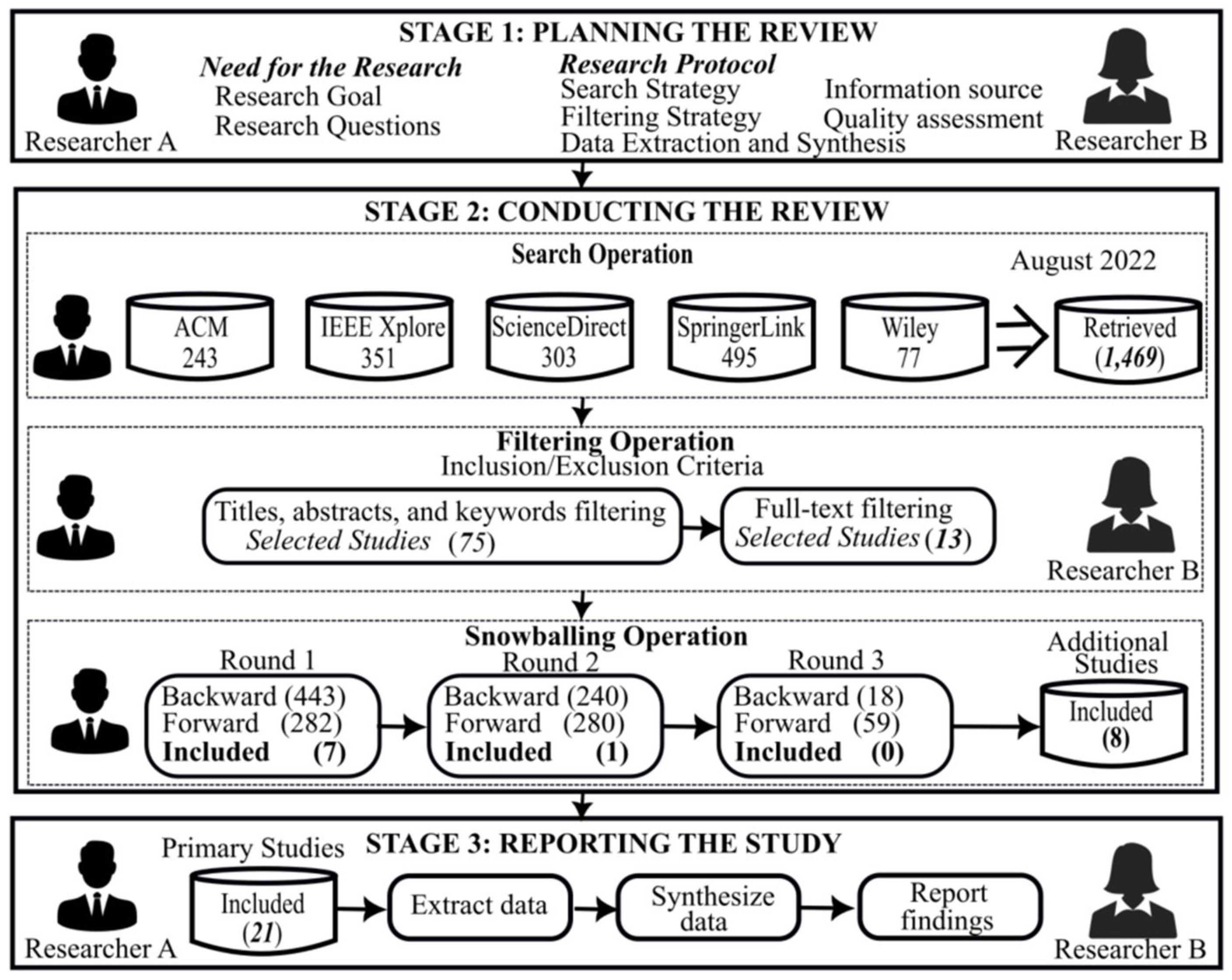

3. Methodology

3.1. Planning the Review

3.1.1. The Need for the Study

- RQ1.1: What is the research trend of empirical studies on social robots in public spaces?

- RQ1.2: What is the citation landscape of the primary studies in this area?

- RQ1.3: What are the reported research methods for these empirical studies?

- RQ2.1: Who are the reported potential threat actors for social robots in public spaces?

- RQ2.2: What are the reported threat actors’ motives for attack?

- RQ3.1: What are the reported assets (sub-components) of social robots in public spaces?

- RQ3.2: What are the reported threats?

- RQ3.3: What are the reported vulnerabilities?

- RQ4.1: What are the reported attacks on social robots in public spaces?

- RQ4.2: What are the reported attack scenarios?

- RQ4.3: What are the reported attack impacts?

- RQ4.4: What are the reported attack mitigations?

3.1.2. Developing and Evaluating the Research Protocol

- Domain (I1): The main field must be socially interactive robots in public spaces. The study must explicitly discuss threats, attacks, or vulnerabilities of social robots/humanoids. Public space in this context refers to any location, indoor or outdoor, accessible to members of the public. Access, not ownership, is the key criterion for our usage of public space in this study. Also considered as public spaces for this study are smart homes for elderly care, museums, playgrounds, libraries, medical centers, or rehabilitation homes that may not be easily accessible to all members of the public but allow visitors periodically.

- Methods (I2): Empirical studies using quantitative, qualitative, or mixed methodologies. Such studies could be interviews, case studies, or experiments observed in the field, laboratory, or public settings. We focused on empirical studies to ensure that our findings were backed by empirical evidence, not philosophical opinions.

- Type (I3): Study types were to include journal articles, conference papers, book chapters, or magazine articles. These studies must be peer-reviewed.

- Language (I4): English language only.

- Irrelevant studies (E1): Studies outside the specified domain above. We also classified non-empirical studies that are related to the above domain as irrelevant to this research.

- Secondary studies (E2): All secondary studies in the form of reviews, surveys, and SLRs.

- Language (E3): Studies that are not in the English language.

- Duplicate studies (E4): Studies duplicated in more than one digital library or extended papers.

- Inaccessible studies (E5): All studies with access restrictions.

- Short papers (E6): Work-in-progress and doctoral symposium presentations that are fewer than four pages.

- Front and back matter (E7): Search results containing all front matter like abstract pages, title pages, table of contents, and so on; we also excluded all back matter like index, bibliography, etc.

- Papers that are not peer-reviewed (E8): All studies that did not undergo peer review during snowballing.
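
The inclusion and exclusion criteria above amount to a screening function applied to each search result. The following is a minimal sketch only: the `Candidate` record and its boolean field names are hypothetical (the review does not describe its screening tooling), and each field simply mirrors one of the criteria I1 to I4 and E1 to E8.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """Hypothetical screening record; field names are illustrative only."""
    in_domain: bool = True              # I1: social robots in public spaces
    empirical: bool = True              # I2: quantitative, qualitative, or mixed
    peer_reviewed: bool = True          # I3 / E8
    english: bool = True                # I4 / E3
    secondary: bool = False             # E2: review, survey, or SLR
    duplicate: bool = False             # E4
    accessible: bool = True             # E5
    short_paper: bool = False           # E6
    front_or_back_matter: bool = False  # E7


def exclusion_codes(c: Candidate) -> list[str]:
    """Return every exclusion criterion (E1 to E8) that the record triggers."""
    checks = [
        ("E1", not (c.in_domain and c.empirical)),  # out of domain or non-empirical
        ("E2", c.secondary),
        ("E3", not c.english),
        ("E4", c.duplicate),
        ("E5", not c.accessible),
        ("E6", c.short_paper),
        ("E7", c.front_or_back_matter),
        ("E8", not c.peer_reviewed),
    ]
    return [code for code, triggered in checks if triggered]


def is_included(c: Candidate) -> bool:
    """A record is kept only when no exclusion criterion applies."""
    return not exclusion_codes(c)
```

Returning the list of triggered codes, instead of a single yes/no decision, keeps a record of why each study was dropped, which is the kind of information a filtering report usually needs.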

3.2. Conducting the Review

3.2.1. Searching

3.2.2. Filtering

3.2.3. Snowballing

3.2.4. Quality Assessment

3.2.5. Data Extraction and Synthesis

4. Results

4.1. Research Trends for Social Robots in Public Spaces

4.1.1. What Is the Research Trend of Empirical Studies on Social Robots in Public Spaces?

4.1.2. What Is the Citation Landscape of Studies on Social Robots in Public Spaces?

4.1.3. What Research Methods Are Employed in Studies on Social Robots in Public Spaces?

4.2. Social Robot Threat Actors and Their Motives for Attack

4.2.1. Who Are the Reported Potential Threat Actors for Social Robots in Public Spaces?

4.2.2. What Are the Reported Threat Actors’ Motives for Attack?

4.3. Identifying Assets, Threats, and Vulnerabilities of Social Robots in Public Spaces

4.3.1. What Are the Reported Assets (Sub-Components) of Social Robots in Public Spaces?

4.3.2. What Are the Reported Threats?

4.3.3. What Are the Reported Vulnerabilities?

4.4. Attack Surface of Social Robots in Public Spaces

4.4.1. What Are the Reported Attacks on Social Robots in Public Spaces?

- Engaging in deceptive interactions. It includes spoofing and manipulating human behaviors in social engineering. Variants of spoofing attacks include content, identity, resource location, and action spoofing. Not all these variants of spoofing attacks were identified in this review. Identity spoofing attacks include address resolution protocol (ARP) spoofing (PS01), signature spoofing (cold boot attack, PS13), DNS spoofing (PSA04), and phishing (PS05, PS11, and PSA03). PS01 reported one account of an action spoofing attack (clickjacking attack). Five studies (PS01, PS05, PS07, PS11, and PSA05) reported attacks related to manipulating human behavior, i.e., pretexting, influencing perception, target influence via framing, influence via incentives, and influence via psychological principles.

- Abusing existing functionalities. It includes interface manipulation, flooding, excessive allocation, resource leakage exposure, functionality abuse, communication channel manipulation, sustained client engagement, protocol manipulation, and functionality bypass attacks. Interface manipulation attacks exploiting unused ports were reported in PS01. Flooding attacks resulting in distributed denial of service (DDoS) were also reported (PS10, PS13, and PSA03). Likewise, resource leakage exposure attacks resulting from CPU in-use memory leaks (PS13) and communication channel manipulation attacks in the form of man-in-the-middle attacks were reported (PS01, PS10, PS13, and PSA03).

- Manipulating data structures. It includes manipulating buffer, shared resource, pointer, and input data. Buffer overflow and input data modification attacks were reported in PS13 and PS09, respectively.

- Manipulating system resources. It includes attacks on software/hardware integrity, infrastructure, file, configuration, obstruction, modification during manufacture/distribution, malicious logic insertion, and contamination of resources. Examples of attacks identified in this category include physical hacking (PS05, PS09, PS10, and PS11), embedded (PDF) file manipulation (PSA04), and obstruction attacks (jamming, blocking, and physical destruction; PS02, PS10, PS13, and PSA03).

- Injecting unexpected items. It comprises injection and code execution attacks. Injection attack variants include parameter, resource, code, command, hardware fault, traffic, and object injection. Examples of reported attacks in the category include code injection attacks (PS01, PS04, PS13) and code execution attacks (PS01, PSA03, and PSA04).

- Employing probabilistic techniques. It employs brute force and fuzzing. Brute force attacks on passwords and encryption keys were reported in PS01, PS13, and PSA01. Attacks exploiting unchanged default administrator usernames/passwords and dictionary brute force password attacks were also noted in PS01.

- Manipulating timing and state. This class of attacks includes forced deadlock, leveraging race conditions, and manipulating the state. Forced deadlock attacks can result in denial of service (PS10, PS13, PSA03), while leveraging race conditions can result in a time-of-check to time-of-use (TOCTOU) attack (PS13). Manipulating states can result in various types of malicious code execution attacks (PS01, PSA03, PSA04).

- Collecting and analyzing information. It includes excavation, interception, footprinting, fingerprinting, reverse engineering, protocol analysis, and information elicitation. Data excavation entails extracting data from both active and decommissioned devices and users. Interception involves sniffing and eavesdropping attacks (PS10 and PS13). Footprinting is directed at system resources/services, while fingerprinting can involve active and passive data collection about the system to detect its characteristics (PSA03). Reverse engineering uses white and black box approaches to analyze the system’s hardware components. Protocol analysis is directed at cryptographic protocols to detect encryption keys. Information elicitation is a social engineering attack directed at privileged users to obtain sensitive information (PS05 and PS11). In this review, some studies reported collecting and analyzing information attacks as surveillance (PS05, PS07, and PSA06).

- Subverting access control. It includes exploits of trusted identifiers (PSA03), authentication abuse, authentication bypass (PSA01, PS09, PS13, and PSA03), exploit of trust in client (PS06), privilege escalation (PS01, PS09, and PS13), bypassing physical security (PS01), physical theft (PS05), and use of known domain credentials (PS01).
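
The nine attack categories above can be treated as a mapping from each mechanism of attack to the primary studies cited for it. The sketch below transcribes only the study IDs named in the list above; the variable and function names are illustrative and not part of the review.

```python
# Study IDs transcribed from the nine attack categories listed above.
ATTACK_STUDIES: dict[str, set[str]] = {
    "Engaging in deceptive interactions": {
        "PS01", "PS05", "PS07", "PS11", "PS13", "PSA03", "PSA04", "PSA05"},
    "Abusing existing functionalities": {"PS01", "PS10", "PS13", "PSA03"},
    "Manipulating data structures": {"PS09", "PS13"},
    "Manipulating system resources": {
        "PS02", "PS05", "PS09", "PS10", "PS11", "PS13", "PSA03", "PSA04"},
    "Injecting unexpected items": {"PS01", "PS04", "PS13", "PSA03", "PSA04"},
    "Employing probabilistic techniques": {"PS01", "PS13", "PSA01"},
    "Manipulating timing and state": {
        "PS01", "PS10", "PS13", "PSA03", "PSA04"},
    "Collecting and analyzing information": {
        "PS05", "PS07", "PS10", "PS11", "PS13", "PSA03", "PSA06"},
    "Subverting access control": {
        "PS01", "PS05", "PS06", "PS09", "PS13", "PSA01", "PSA03"},
}


def studies_per_mechanism() -> dict[str, int]:
    """Number of distinct primary studies cited under each mechanism."""
    return {name: len(ids) for name, ids in ATTACK_STUDIES.items()}


def mechanisms_per_study() -> dict[str, int]:
    """Number of mechanisms under which each primary study is cited."""
    counts: dict[str, int] = {}
    for ids in ATTACK_STUDIES.values():
        for study in ids:
            counts[study] = counts.get(study, 0) + 1
    return counts
```

Inverting the mapping with `mechanisms_per_study()` shows how concentrated the evidence is: a study that appears under many mechanisms carries a large share of the reported attack surface.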

4.4.2. What Are the Reported Attack Scenarios for Social Robots in Public Spaces?

4.4.3. What Are the Reported Attack Impacts of Social Robots in Public Spaces?

4.4.4. What Are the Reported Attack Mitigations on Social Robots in Public Spaces?

5. Discussion

5.1. Implications of Our Findings

5.1.1. Research Trends of Social Robots in Public Spaces

5.1.2. Threat Actors of Social Robots in Public Spaces

5.1.3. Identifying Assets, Threats, and Vulnerabilities of Social Robots in Public Spaces

5.1.4. Attack Surface of Social Robots in Public Spaces

5.2. Limitations and Threats to Validity

5.2.1. Construct Validity

5.2.2. Internal Validity

5.2.3. External Validity

5.3. Recommendations and Future Works

- Academic researchers and industrial practitioners should carry out further empirical research to test and validate the threats proposed in this study. This will provide valuable insights into the security of human–social robot interactions in public spaces.

- Stakeholders in social robots in public spaces should develop a security framework to guide social robot manufacturers, designers, developers, business organizations, and users on the best practices in this field. This transdisciplinary task will require input from all stakeholders.

- Regulatory authorities should develop a regulatory standard and certification for social robot stakeholders. The existing ISO 13482:2014 on robots, robotic devices, and safety requirements for personal care robots does not fully address the needs of social robots in public spaces [37]. Such standards will enable users and non-experts to measure and ascertain the security level of social robots.

- Social robot designers and developers should incorporate fail-safe, privacy-by-design, and security-by-design concepts in social robot component development. Such design could incorporate self-repair (or defective component isolation) capabilities for social robots in public spaces.

- Entrepreneurs and start-ups should be encouraged by stakeholders in the areas of cloud computing and AI services. This will foster development and easy adoption of the technology.

6. Taxonomy for Threat Actors and Threat Landscape for Social Robots in Public Spaces

6.1. Taxonomy for Threat Actors of Social Robots in Public Spaces

- Internal threat actors include employees, authorized users, and other organization staff with internal access. The insider threats posed by these actors could be intentional or non-intentional (mistakes, recklessness, or inadequate training). A particular example of an insider threat is an authorized user under duress (e.g., a user whose loved ones are kidnapped and who is compelled to compromise the system). Other people with inside access who are potential threat actors could be vendors, cleaning staff, maintenance staff, delivery agents, sales agents, and staff from other departments, among others.

- External threat actors are those outside the organization, often without authorized access to the social robot system or services. These actors could be physically present within public spaces or in remote locations. Examples of external threat actors include hackers, cyber criminals, state actors, competitors, organized crime, cyber terrorists, and corporate spies, among others.

- Supply chain threat actors create, develop, design, test, validate, distribute, or maintain social robots. Actors that previously or currently had access to any sub-component of the social robot system, who can create a backdoor on hardware, software, storage, AI, and communication, fit into this group. A direct attack on any of these actors could have a strong implication for the security of social robots. This supply chain taxonomy is aligned with ENISA’s threat landscape for supply chain attacks [135].

- Public space threat actors could be humans (internal or external) or disasters (natural or man-made) [136] that are within the physical vicinity of the social robot. Physical proximity to the social robot is a key consideration for this group of actors. Disaster threat actors in the public space could be fire, flood, earthquake, tsunami, volcanic eruptions, tornadoes, and wars, among others. In contrast, human threat actors in a public space context could be malicious humans (e.g., users, end users, bystanders, passers-by, thieves, vandals, and saboteurs) within a public space of the social robot. It is possible for a threat actor to belong to more than one group in this taxonomy.
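
Because a threat actor may belong to more than one group, the four-group taxonomy above behaves like a set of combinable flags and not like mutually exclusive classes. A minimal sketch, assuming a hypothetical `ActorGroup` type (the group assignments in the usage example are illustrative, not taken from the primary studies):

```python
from enum import Flag, auto


class ActorGroup(Flag):
    """The four threat actor groups of the taxonomy, combinable with `|`."""
    INTERNAL = auto()
    EXTERNAL = auto()
    SUPPLY_CHAIN = auto()
    PUBLIC_SPACE = auto()


def groups_of(actor: ActorGroup) -> list[str]:
    """List the names of every group the actor belongs to."""
    return [group.name for group in ActorGroup if group in actor]


# Illustrative assignments: a vandal near the robot is both external and
# a public space actor; maintenance staff working on site are both
# internal and public space actors.
vandal = ActorGroup.EXTERNAL | ActorGroup.PUBLIC_SPACE
maintenance_staff = ActorGroup.INTERNAL | ActorGroup.PUBLIC_SPACE
```

Calling `groups_of(vandal)` lists both memberships, which reflects the point that physical proximity is an additional dimension on top of the internal/external distinction.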

6.2. Taxonomy for Threat Landscape of Social Robots in Public Spaces

- Physical threats are safety-related threats to social robots and humans. Social robot abuse, vandalism, sabotage, and theft are some physical threats directed at social robots in public spaces. Conversely, social robots operating in public spaces pose the threat of physical/psychological harm to humans and of damage to property (assets) and the environment.

- Social threat. Potential threats to personal space and legal/regulatory and social norm violations fall under the social threat landscape.

- Public space threats. Strict regulations on sensitive data collection exist in the public space domain. As with the case of threat actors, we considered disaster and human threats affecting public space.

- Cybersecurity threats can originate from different sources. Therefore, a taxonomy based on Mitre’s CAPEC domain of attacks [70] is proposed. Moreover, it complements the proposal of Yaacoub et al. [71] on robotics cybersecurity, as it incorporates specific aspects of threats to social robots in public spaces.

- Software threats are threats to operating systems, applications (including third-party apps), utilities, and firmware (embedded software). Tuma et al. presented a systematic review of software system threats in [140].

- Supply chain threats, like threat actors, could arise from any of the following: supply chains, hardware, software, communication, cloud services, and AI services. A comprehensive analysis of supply chain threats is presented by ENISA in [135].

- Human threats, also known as social engineering, include phishing, baiting, pretexting, shoulder surfing, impersonation, dumpster diving, and so on. A comprehensive systemization of knowledge on human threats is presented by Das et al. in [141].

- Communication network threats address two specific features of the communication systems of social robots in public spaces: wireless and mobility. According to [142,143], the wireless communication networks expose them to (i) accidental association, (ii) malicious association, (iii) ad hoc networks, (iv) non-traditional networks, (v) identity theft, (vi) adversary-in-the-middle, (vii) denial of service, and (viii) network injection threats. According to [143], the mobile nature of social robots’ interactions in public space imposes the following threats: (i) inadequate physical security control, (ii) use of untrusted networks/apps/contents/services, and (iii) insecure interaction with other systems.

- Cloud services, according to NIST SP 800-144 [144], introduce the following nine threats: (i) governance, (ii) compliance, (iii) trust, (iv) architecture, (v) identity and access management, (vi) software isolation, (vii) data protection, (viii) availability, and (ix) incident response threats.

- AI service threats, according to the AI threat landscape of Microsoft Corporation [60], lead to intentional and unintentional failures. Intentional AI failure threats include (i) perturbation attacks, (ii) poisoning attacks, (iii) model inversion, (iv) backdoor models, (v) model stealing, (vi) membership inference, (vii) reprogramming of the model, (viii) adversarial examples in public spaces, and (ix) malicious model providers recovering training data. Unintentional threats include (i) reward hacking, (ii) side effects, (iii) distributional shifts, (iv) natural adversarial examples, (v) common corruption, and (vi) incomplete testing of ML.

7. Conclusions

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Appendix A

| Sensor Type | Sensor | Description with Robot Application Examples |

|---|---|---|

| Internal | Potentiometer | An internal position sensor, e.g., a rotary potentiometer, is used for shaft rotation measurement. Masahiko et al. used a rotary potentiometer to measure the musculoskeletal stiffness of a humanoid robot [145]. |

| | Optical encoder | A shaft connected to a circular disc containing one or more tracks of alternating transparent and opaque areas is used to measure rotational motion. Lang et al. employed an optical encoder and other sensors for object pose estimation and localization in their mobile robot [146]. |

| | Tachometer | It provides velocity feedback by measuring the motor rotating speed within the robot. Wang et al. employed a tachometer in a camera wheel robot design [147]. |

| | Inertial Measurement Unit (IMU) | A module containing three accelerometers, three gyroscopes, and three magnetometers responsible for robot gait/stability control [148]. Ding et al. employed an intelligent IMU for the gait event detection of a robot [149]. |

| | Accelerometer | It measures the change in speed of a robot. It can also be used in gait selection. Kunal et al. used this sensor in the implementation of a 5 DoF robotic arm [150]. |

| | Gyroscope | An angular motion detector or indicator. It measures the rate of rotation. Kim et al. employed a gyroscope to design and control a sphere robot [151]. |

| Range | Ultrasonic sensor | It uses sound waves to measure the distance between the sensor and an object. It is used for navigation and obstacle avoidance [152]. Liu et al. employed this sensor in their mobile robot localization and navigation design [153]. |

| | RGB Depth Cameras | It consists of an RGB camera, a depth sensor, and a multiarray microphone that produces both RGB and depth video streams [40]. It is used in face recognition, face modeling, gesture recognition, activity recognition, and navigation. Bagate et al. employed this sensor in human activity recognition [154]. |

| | Time-of-flight (ToF) cameras and other range sensors | ToF sensors use a single light pulse to measure the time it takes to travel from the sensor to the object. Other range-sensing approaches include stereo cameras, interferometry, ToF in the frequency domain, and hyper-depth cameras [40]. |

| | LiDAR | Light Imaging Detection and Ranging employs a laser scanning approach to generate a high-quality 3D image of the environment. Its application is limited by reflection from glass surfaces or water. Sushrutha et al. employed LiDAR for low-drift state estimation of humanoid robots [155]. |

| | RADAR | Radio detection and ranging uses radio waves to determine the distance and angle of an object relative to a source. Modern CMOS mmWave radar sensors have mmWave detection capabilities. Guo et al. developed an auditory sensor for social robots using radar [156]. |

| Touch | Tactile sensor | Tactile sensors are used for grasping, object manipulation, and detection. It involves the measurement of pressure, temperature, texture, and acceleration. Human safety among robots will require the complete coverage of robots with a tactile sensor. Different types of tactile sensor designs include piezoresistive, capacitive, piezoelectric, optical, and magnetic sensors [157]. Avelino et al. employed tactile sensors in the natural handshake design of social robots [158]. Sun et al. developed a humanlike skin for robots [159]. This also covers research on robot pains and reflexes [160]. |

| Audio | Microphone | An audio sensor for detecting sounds. Virtually all humanoid robots have an inbuilt microphone or microphone array [161]. |

| Smell | Electronic nose | A device for gas and chemical detection similar to the human nose [162]. Eamsa-ard et al. developed an electronic nose for smell detection and tracking in humanoids [163]. |

| Taste | Electronic tongue | A device with a lipid/polymer membrane that can evaluate taste objectively. Yoshimatsu et al. developed a taste sensor that can detect non-charged bitter substances [164]. |

| Vision | Visible Spectrum camera | Visible light spectrum cameras are used for the day vision of the robot. They are passive sensors that do not generate their own energy. Guan et al. employed this type of camera for mobile robot localization tasks [165]. |

| | Infrared camera | Infrared cameras are suitable for night vision (absence of light) using thermal imaging. Milella et al. employed an infrared camera for robotic ground mapping and estimation beyond visible light [41]. |

| | VCSEL | Vertical-cavity surface-emitting laser (VCSEL) is a special laser with emission perpendicular to its top surface instead of its edge. It is used in 3D facial recognition and imaging due to its lower cost, scalability, and stability [166]. Bajpai et al. employed VCSEL in their console design for humanoids capable of real-time dynamics measurements at different speeds [167]. |

| Position | GPS | A Global Positioning System is used for robot navigation and localization. Zhang et al. employed GPS in the path-planning design of firefighting robots [168]. |

| | Magnetic sensors | Magnetic sensors are used for monitoring the robot’s motor movement and position. Qin et al. employed a magnetic sensor array to design real-time robot gesture interaction [169]. |

Appendix B

| PS | Ref | Citations | Backwards | Forward | Included | ID |

|---|---|---|---|---|---|---|

| ROUND 1 | | | | | | |

| PS01 | 27 | 34 | 0 | 1 | 1 | PSA01 |

| PS02 | 36 | 50 | 1 | 0 | 1 | PSA02 |

| PS03 | 53 | 3 | 0 | 0 | 0 | |

| PS04 | 9 | 5 | 0 | 0 | 0 | |

| PS05 | 36 | 12 | 0 | 0 | 0 | |

| PS06 | 31 | 3 | 0 | 0 | 0 | |

| PS07 | 32 | 31 | 0 | 0 | 0 | |

| PS08 | 21 | 14 | 0 | 0 | 0 | |

| PS09 | 29 | 57 | 0 | 2 | 2 | PSA03–PSA04 |

| PS10 | 13 | 5 | 0 | 0 | 0 | |

| PS11 | 39 | 5 | 1 | 0 | 1 | PSA05 |

| PS12 | 71 | 61 | 1 | 0 | 1 | PSA06 |

| PS13 | 46 | 2 | 0 | 0 | 0 | |

| ROUND 2 | | | | | | |

| PSA01 | 23 | 4 | 0 | 0 | 0 | |

| PSA02 | 25 | 169 | 0 | 1 | 1 | PSB01 |

| PSA03 | 36 | 1 | 0 | 0 | 0 | |

| PSA04 | 16 | 16 | 0 | 0 | 0 | |

| PSA05 | 44 | 0 | 0 | 0 | 0 | |

| PSA06 | 55 | 57 | 0 | 0 | 0 | |

| PSA07 | 41 | 34 | 0 | 0 | 0 | |

| ROUND 3 | | | | | | |

| PSB01 | 18 | 59 | 0 | 0 | 0 | |

Appendix C

| # | ID | QAS1 | QAS2 | QAS3 | QAS4 | QAS5 | QAS6 | QAS7 | QAS8 | QAS9 | QAS10 | QAS11 | QAS12 | QAS13 | QAS14 | QAS15 | Total (%) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 1 | PS01 | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 0.5 | 1 | 0 | 1 | 90 |

| 2 | PS02 | 1 | 1 | 1 | 0.5 | 1 | 1 | 1 | 1 | 1 | 1 | 0.5 | 0.5 | 1 | 0 | 1 | 83.3 |

| 3 | PS03 | 1 | 0.5 | 1 | 0.5 | 1 | 1 | 1 | 1 | 1 | 1 | 0.5 | 0.5 | 1 | 0 | 1 | 80 |

| 4 | PS04 | 1 | 1 | 1 | 0.5 | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 0.5 | 1 | 0 | 1 | 86.7 |

| 5 | PS05 | 1 | 0.5 | 1 | 0.5 | 1 | 1 | 1 | 1 | 1 | 1 | 0.5 | 0.5 | 0.5 | 0 | 1 | 76.7 |

| 6 | PS06 | 1 | 1 | 1 | 0.5 | 1 | 1 | 0.5 | 1 | 0.5 | 0.5 | 1 | 0.5 | 0.5 | 0 | 1 | 73.3 |

| 7 | PS07 | 1 | 0.5 | 0.5 | 0.5 | 0.5 | 1 | 1 | 1 | 0.5 | 1 | 0.5 | 0.5 | 1 | 0 | 1 | 70 |

| 8 | PS08 | 1 | 0.5 | 1 | 0.5 | 0.5 | 1 | 1 | 1 | 0.5 | 1 | 0.5 | 0.5 | 1 | 0 | 1 | 73.3 |

| 9 | PS09 | 1 | 1 | 1 | 0.5 | 1 | 0.5 | 1 | 1 | 1 | 1 | 0.5 | 0.5 | 0.5 | 0 | 1 | 76.7 |

| 10 | PS10 | 1 | 1 | 1 | 0.5 | 1 | 1 | 0.5 | 1 | 1 | 1 | 0.5 | 0.5 | 0.5 | 0 | 1 | 76.7 |

| 11 | PS11 | 1 | 0.5 | 1 | 0.5 | 1 | 1 | 1 | 0.5 | 1 | 1 | 0.5 | 0.5 | 0.5 | 0 | 1 | 73.3 |

| 12 | PS12 | 1 | 1 | 1 | 1 | 0.5 | 1 | 1 | 1 | 1 | 1 | 0.5 | 0.5 | 0.5 | 1 | 1 | 86.7 |

| 13 | PS13 | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 0 | 1 | 93.3 |

| 14 | PSA01 | 1 | 1 | 1 | 0.5 | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 0.5 | 0.5 | 0 | 1 | 83.3 |

| 15 | PSA02 | 1 | 0.5 | 1 | 0.5 | 0.5 | 1 | 1 | 1 | 1 | 1 | 0.5 | 0.5 | 0.5 | 0 | 1 | 73.3 |

| 16 | PSA03 | 1 | 1 | 0.5 | 0.5 | 1 | 1 | 0.5 | 1 | 1 | 1 | 1 | 0.5 | 0.5 | 0 | 1 | 76.7 |

| 17 | PSA04 | 1 | 0.5 | 1 | 0.5 | 1 | 1 | 1 | 1 | 1 | 0.5 | 0.5 | 0.5 | 0.5 | 0 | 1 | 73.3 |

| 18 | PSA05 | 1 | 0.5 | 0.5 | 0.5 | 0.5 | 1 | 1 | 1 | 1 | 1 | 0.5 | 0.5 | 0.5 | 0 | 1 | 70 |

| 19 | PSA06 | 1 | 1 | 1 | 0.5 | 0.5 | 1 | 1 | 1 | 1 | 1 | 0.5 | 0.5 | 0.5 | 0 | 1 | 76.7 |

| 20 | PSA07 | 1 | 1 | 1 | 0.5 | 1 | 1 | 1 | 1 | 1 | 1 | 0.5 | 0.5 | 0.5 | 0 | 1 | 80 |

| 21 | PSB01 | 1 | 0.5 | 1 | 0.5 | 0.5 | 1 | 1 | 1 | 1 | 1 | 0.5 | 0.5 | 0.5 | 0 | 1 | 73.3 |
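
The Total (%) column in the quality assessment table is consistent with summing the fifteen QAS scores (each 0, 0.5, or 1) and dividing by the maximum of 15. This rule is inferred from the rows of the table and is not stated in this section; the function name below is illustrative.

```python
def quality_percent(scores: list[float]) -> float:
    """Sum of QAS scores as a percentage of the maximum (1 point each)."""
    return round(100 * sum(scores) / len(scores), 1)


# Rows transcribed from the table above (QAS1 to QAS15).
ps01 = [1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 0.5, 1, 0, 1]
ps10 = [1, 1, 1, 0.5, 1, 1, 0.5, 1, 1, 1, 0.5, 0.5, 0.5, 0, 1]
ps13 = [1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 0, 1]

for study, row in (("PS01", ps01), ("PS10", ps10), ("PS13", ps13)):
    print(study, quality_percent(row))
```

Recomputing the totals this way is a quick check against transcription slips in the percentage column.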

| # | ID | Year | Country of Research | Affiliation |

|---|---|---|---|---|

| 1 | PS08 | 2014 | Brunei | Academia |

| 2 | PSA07 | 2021 | Canada | Academia |

| 3 | PS12 | 2021 | China | Academia |

| 4 | PSA04 | 2020 | China | Mixed |

| 5 | PS09 | 2019 | China, Saudi Arabia, Egypt | Academia |

| 6 | PSA06 | 2018 | China, USA | Academia |

| 7 | PS03 | 2021 | Denmark, Netherlands | Mixed |

| 8 | PSA01 | 2019 | Germany, Luxembourg | Academia |

| 9 | PSA03 | 2019 | India, Oceania | Academia |

| 10 | PS04 | 2019 | Italy | Academia |

| 11 | PS11 | 2020 | Italy | Mixed |

| 12 | PS13 | 2020 | Italy | Academia |

| 13 | PSA05 | 2018 | Italy | Academia |

| 14 | PSA02 | 2015 | Japan | Mixed |

| 15 | PSB01 | 2020 | Japan | Mixed |

| 16 | PS06 | 2020 | Portugal | Academia |

| 17 | PS10 | 2019 | Romania, Luxembourg, Denmark, France | Mixed |

| 18 | PS01 | 2018 | Sweden, Denmark | Academia |

| 19 | PS02 | 2018 | USA | Academia |

| 20 | PS05 | 2017 | USA | Academia |

| 21 | PS07 | 2016 | USA, China | Academia |
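
The publication years in the table above give a direct view of the research trend asked about in RQ1.1. A minimal sketch that tallies the 21 primary studies per year, using only the ID and Year columns as transcribed (the names `STUDY_YEARS` and `studies_per_year` are illustrative):

```python
from collections import Counter

# ID -> publication year, transcribed from the table above.
STUDY_YEARS = {
    "PS08": 2014, "PSA07": 2021, "PS12": 2021, "PSA04": 2020,
    "PS09": 2019, "PSA06": 2018, "PS03": 2021, "PSA01": 2019,
    "PSA03": 2019, "PS04": 2019, "PS11": 2020, "PS13": 2020,
    "PSA05": 2018, "PSA02": 2015, "PSB01": 2020, "PS06": 2020,
    "PS10": 2019, "PS01": 2018, "PS02": 2018, "PS05": 2017,
    "PS07": 2016,
}


def studies_per_year() -> dict[int, int]:
    """Count primary studies per publication year, in ascending year order."""
    return dict(sorted(Counter(STUDY_YEARS.values()).items()))


for year, count in studies_per_year().items():
    print(year, "#" * count)
```

The printed bars are a rough text histogram; any plotting library could replace the final loop.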

| Research Method | Research Types | IDs |

|---|---|---|

| Mixed | Experiment and use case | PS10 |

| | Experiment and Observation | PSA02, PS11, PSB01 |

| | Experiment, Questionnaire, and Observation | PSA05 |

| | Observation (Crowdsourced study) and Survey | PSA07 |

| Qualitative | Focus Group | PS05 |

| | Observation (Between-subject study) | PS02 |

| Quantitative | Experiment and Questionnaire | PS03 |

| | Experiment and Online survey | PS07 |

| | Experiment | PS01, PS04, PS06, PS08, PS09, PS12, PS13, PSA01, PSA03, PSA04, PSA06 |

Appendix D

| Actors | Description | IDs |

|---|---|---|

| Academic research groups | A team of researchers, usually from the same faculty, who are experts in the same field and are collaborating on the problem or topic. | PSA01 |

| Adversaries | A person, group, organization, or government that engages in a harmful activity or intends to do so. | PSA04 |

| Centralized trusted entities | A third party to whom an entity has entrusted the faithful performance of specific services. An entity has the option to represent itself as a trustworthy party. | PS06 |

| Children | A person who has not reached puberty or the legal age of maturity. | PS02, PSA02, PSA05 |

| Competitors | An individual, group, or business that competes with others. | PSA03 |

| Cyber criminals | An individual, group, or business that is involved in Internet-based criminal conduct or illegal activities. | PSA03 |

| End users | A user who is trusted and has the authority to use the services of a social robot. | PS10 |

| External attackers | Any external party acting to compromise a social robot or try to take advantage of a social robot’s known vulnerabilities. | PS13, PSA01 |

| Human actors | A person or group responsible for harmful, prohibited, or anti-social behavior. | PSA07 |

| Illegal users | A person or group that is not allowed by law to use the services of a social robot. | PSA06 |

| Insiders | A person or thing inside the security perimeter who has permission to use system resources but who misuses them against the wishes of those who granted that permission. | PS01, PS13, PSA03 |

| Invader | A person or group that uses force or craft to take control of another person’s space or resources without the owner’s consent. | PSA06 |

| Malevolent user | A user who is creating harm or evil or wishing to do so. | PS13 |

| Malicious attacker | A person, group, organization, or government that intends either to harm a social robot or to steal personal data from it. | PS01, PSA01, PSA03 |

| Malicious user | A person, group, organization, or government with access rights that intends either to harm a social robot or to steal personal data from it. | PS09, PS13 |

| Motivated attacker | An attacker with a specific motive. | PS01 |

| Non-government gangs | A gang of criminals that collaborates. | PSA01 |

| Others (Any attacker) | Any other type of attacker. | PS01 |

| Privileged user | A user who has been granted permission (and is therefore trusted) to carry out security-related tasks that regular users are not permitted to do. | PS13 |

| Remote operator | An operator interacting outside of a security perimeter with a social robot. | PSA04 |

| Root user | A trusted user who has the authority to do security-related tasks that are not appropriate for regular users. | PS13 |

| Skilled individual | Someone possessing the skills required to do a task or job effectively. | PSA01 |

| Social engineers | Someone who uses others’ confidence and trust to manipulate them into disclosing private information, granting unwanted access, or committing fraud. | PS11, PSA05 |

| Social robots | An intelligent robot capable of human social interaction while observing social norms. | PS03, PS08 |

| State level intelligence | A government group responsible for acquiring foreign or local information. | PSA01 |

| Unauthorized party | A person or group that is not given logical or physical access to the social robot. | PS10 |

| Disgruntled staff | A staff member who is displeased, irritated, or disappointed about something. | PSA03 |

| Spy | A person who covertly gathers and disseminates information about the social robot or its users. | PS07, PS13, PSA03 |

| Wannabes | A person who is unsuccessfully attempting to become famous. | PS13 |

| Description of Cyber-Attack Motive | IDs |

|---|---|

| Information gathering (Reconnaissance) | |

| Using port scanning to detect Pepper’s vulnerabilities | PS01 |

| Conducting surveillance on users through a social robot | PS05 |

| Retrieving users’ identity information through a recovery attack | PS09 |

| Gathering users’ data through sniffing attacks on communication between robots and cloud | PS10 |

| Using social robots for social engineering attacks to retrieve users’ background data | PS11 |

| Conducting cyber-espionage and surveillance on users through social robotic platforms | PS13 |

| Accessing the sensitive user data that social robots’ sensors collect in large volumes during interaction | PSA05 |

| Exploiting human overtrust in social robots during a social engineering attack on users | PSA05 |

| Gaining/Escalating privileges | |

| Gaining and escalating privileges due to unsecured plain-text communication between social robots and cloud | PS01 |

| Gaining access control privileges at the edge cloud due to inadequate security | PS09 |

| Gaining privileges through a man-in-the-middle attack on social robots | PS10 |

| Gaining and escalating super privileges through insider attack | PS13 |

| Modifying data | |

| Modifying Pepper’s root password | PS01 |

| Maliciously falsifying social robot cloud data | PS09 |

| Tampering with a social robot’s data-in-use in DRAM through privilege escalation in an insider attack | PS13 |

| Pepper social robot being accessed and misused by adversaries through insecure cloud-based interaction | PSA01 |

| Instances of cyber criminals exploiting robotic platforms to modify data | PSA03 |

| Read (Take/Steal) data | |

| Stealing Pepper’s credentials and data | PS01 |

| Leakage of users’ identity data in the cloud | PS09 |

| Data theft and tampering resulting from root users’ privilege escalation | PS13 |

| Access to Pepper through its connection to the Internet and cloud services | PSA01 |

| Cyber criminals exploiting robotic platforms and stealing data | PSA03 |

| Execute unauthorized commands | |

| Executing arbitrary malicious code in Pepper through a MIME-sniffing attack | PS01 |

| Injecting malevolent data and commands to the social robot while exploiting ROS vulnerabilities | PS13 |

| Adversaries using malicious PDF attachments as an efficient weapon for executing code in social robots | PSA04 |

| Deny/Disrupt services or operation | |

| Publishing huge amounts of data to realize a DoS attack after a successful insider attack | PS13 |

| Flooding the communication network with heavy traffic to cause a DDoS attack | PSA03 |

| Damage/Destroy assets or properties | |

| Damage to assets and reputation of an organization or individual | PS04 |

| Causing damage in users’ homes | PS13 |

| Physical damage to museum’s valuable artifacts and other assets | PSA01 |

| IDs | Reported Attack | Attack Category |

|---|---|---|

| PS01 | ARP Spoofing | Cyber |

| PS10 | Botnet Attack | Cyber |

| PS13 | Buffer overflow | Cyber |

| PS01 | Clickjacking attacks | Cyber |

| PS13 | Code injection attack | Cyber |

| PS13 | Code-reuse attack | Cyber |

| PS13 | Cold boot attack | Cyber |

| PS06 | Collision resistance attack | Cyber |

| PS05 | Damage to property | Environment |

| PS09 | Data leakage | Cyber |

| PS09 | Data modification | Cyber |

| PS10 | Data sniffing attack | Cyber |

| PS04 | Data Theft | Cyber |

| PS10 | DDoS attack | Cyber |

| PS11 | Deception | Social |

| PSA03 | Denial of Service (DoS) attack | Cyber |

| PS13 | DoS | Cyber |

| PS13 | Eavesdropping | Cyber |

| PSA06 | Embarrassment and privacy violation | Physical |

| PSA04 | Embedded file attack | Cyber |

| PS11 | Espionage (recording video, taking pictures and conducting searches on users) | Cyber |

| PS11 | Exploiting human emotion | Social, Cyber |

| PSA05 | Exploiting human trust towards social robots | Physical |

| PSA04 | Form submission and URI attacks | Cyber |

| PSA01 | GPS sensor attacks | Cyber |

| PS10 | Hacking of Control Software | Cyber |

| PS05 | Hacking | Cyber |

| PS09 | Hacking | Cyber |

| PS11 | Hacking | Cyber |

| PS08 | Harm to humans resulting from robot failure | Physical |

| PSA05 | Human factor attacks | Physical |

| PS12 | Illegal authorization attacks | Cyber |

| PS07 | Information theft (Espionage) | Cyber |

| PS05 | Information theft | Cyber |

| PS03 | Invading personal space | Social |

| PS13 | Lago attack | Cyber |

| PS01 | Malicious code execution | Cyber |

| PSA03 | Malicious code execution | Cyber |

| PSA04 | Malicious code execution | Cyber |

| PSA03 | Malware attack | Cyber |

| PS01 | Malware attack | Cyber |

| PS07 | Malware attack | Cyber |

| PSA04 | Malware attack | Cyber |

| PS01 | Man-in-the-Middle Attack | Cyber |

| PS10 | Man-in-the-Middle Attack | Cyber |

| PS13 | Man-in-the-Middle Attack | Cyber |

| PSA03 | Man-in-the-Middle Attack | Cyber |

| PS11 | Manipulation tactics | Cyber, Social |

| PS01 | Meltdown and Spectre attacks | Cyber |

| PS01 | MIME-sniffing attack | Cyber |

| PS13 | Modifying Linux base attack | Cyber |

| PSA04 | PDF file attacks | Cyber |

| PS11 | Personal information extraction | Cyber |

| PS05 | Personal space violation | Social |

| PS05 | Phishing attacks (accounts and medical records) | Cyber |

| PS11 | Phishing attacks | Cyber |

| PSA02 | Physical (Harm to robot) | Physical |

| PSA02 | Physical (Obstructing robot path) | Physical |

| PSA07 | Physical (Obstructing robot path) | Physical |

| PSB01 | Physical (Obstructing robot path) | Physical |

| PSA02 | Physical (Psychological effects on humans) | Physical |

| PS02 | Physical abuse (Physical violence towards robot) | Physical |

| PS13 | Physical attacks that unpackage the CPU or any programming bug | Cyber |

| PSA01 | Physical damage to properties/environment | Environment |

| PS01 | Physical harm to human | Physical |

| PS13 | Physical harm to human | Physical |

| PSA01 | Physical harm to human | Physical |

| PSA03 | Physical harm to human | Physical |

| PSA01 | Psychological harm to human | Physical |

| PS04 | Remote Code Execution | Cyber |

| PSA03 | Remote Control Without Authentication | Cyber |

| PS01 | Remote Control Without Authentication | Cyber |

| PS13 | Replay | Cyber |

| PSA07 | Robot bullying | Physical |

| PSA07 | Robot mistreatment | Physical |

| PSA07 | Robot vandalism | Physical |

| PSA07 | Sabotaging robot tasks | Physical |

| PS13 | Side-channel attack | Cyber |

| PS13 | Sniffing (bus-sniffing attack) | Cyber |

| PS07 | Social engineering | Cyber |

| PS13 | Spectre attack | Cyber |

| PS04 | Spoofing attack on user information | Cyber |

| PS01 | SSH dictionary brute-force attack | Cyber |

| PS13 | Stealing security certificates | Cyber |

| PS07 | Surveillance | Cyber, Social |

| PSA06 | Surveillance | Cyber, Social |

| PS05 | Surveillance | Cyber |

| PS11 | Theft (Stealing) | Physical, Social |

| PS05 | Theft | Physical |

| PS02 | Traffic analysis attack | Cyber |

| PS11 | Trust violation | Social |

| PS05 | User preference violation through targeted marketing | Cyber |

| PS02 | Verbal abuse towards a robot | Physical |

| PSA07 | Verbal violence towards robots | Physical |

| PS01 | XSS (Cross-site scripting) | Cyber |
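
Because several rows in the table above assign more than one attack category to a single reported attack (for example, "Cyber, Social"), any per-category tally has to split that column first. The following is a minimal sketch of such a tally and is not part of the reviewed studies: the rows are a small sample copied from the table, and the names `ROWS` and `tally_categories` are hypothetical, introduced only for illustration.

```python
from collections import Counter

# A small sample of rows copied from the table above:
# (study ID, reported attack, attack category).
ROWS = [
    ("PS01", "ARP Spoofing", "Cyber"),
    ("PS05", "Damage to property", "Environment"),
    ("PS11", "Exploiting human emotion", "Social, Cyber"),
    ("PS03", "Invading personal space", "Social"),
    ("PS02", "Physical abuse (Physical violence towards robot)", "Physical"),
    ("PS11", "Theft (Stealing)", "Physical, Social"),
    ("PS07", "Surveillance", "Cyber, Social"),
]


def tally_categories(rows):
    """Count how many reported attacks fall under each attack category.

    A row with several comma-separated categories contributes once to
    each of them.
    """
    counts = Counter()
    for _study_id, _attack, categories in rows:
        for category in categories.split(","):
            category = category.strip()
            if category:  # ignore empty fragments, e.g. after a trailing comma
                counts[category] += 1
    return counts


if __name__ == "__main__":
    for category, count in sorted(tally_categories(ROWS).items()):
        print(f"{category}: {count}")
```

Counting a multi-category attack once per category is a design choice in this sketch, so the per-category totals can exceed the number of rows.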

| IDs | Attack | Attack Mitigation |

|---|---|---|

| PS01 | Port Scanning attack | Use of Portspoof software and running of automated assessment tools |

| PS01 | Security patches and updates-related attacks | Software security analysis and updates |

| PS01 | Attacks related to insecure HTTP communication channels | Communication over secure channels using HTTPS |

| PS01 | Insecure-password-management-related attacks | Smarter access control mechanisms using blockchain smart contracts |

| PS01 | Brute-force password attacks | IP blacklisting and setting upper bound for simultaneous connections |

| PS01 | Unverified inputs resulting in malicious code execution | Adequate input validation |

| PS01 | Man-in-the-Middle attacks | Secure cryptographic certificate handling |

| PS01 | Remote control without authentication | Secure API design |

| PS02 | Robot physical abuse by humans | Bystander intervention and possible social robot shutdown during abuse |

| PS03 | Personal space invasion by social robots | Use of audible sound when making an approach |

| PS04 | Anomalous behaviors in robots resulting from cyber-attacks | Intrusion detection and protection mechanism using system logs |

| PS04 | Attacks resulting from insecure policies in robot development | Awareness and enforcing strong security policies |

| PS06 | Data privacy violation in social robots | The use of BlockRobot with DAP based on EOS Blockchain |

| PS07 | Recording of users’ sensitive data (nakedness) | Automatic nakedness detection and prevention in smart homes using CNN |

| PS08 | Physical harm to humans due to failure | Dynamic Social Zone (DSZ) framework |

| PS09 | Attacks resulting from identity authentication limitations | A secure identity authentication mechanism |

| PS09 | Access-control-limitations-related attacks | A polynomial-based access control system |

| PS09 | Attacks exploiting communication payload size and delay | An efficient security policy |

| PS10 | Cyber-attacks on socially assistive robots | Secure IoT platform |

| PS12 | Face visual privacy violation | Facial privacy generative adversarial network (FPGAN) model |

| PS13 | Attacks by an insider with root privileges | Hardware-assisted trusted execution environment (HTEE) |

| PS13 | Return-oriented programming (ROP) attacks | Isolation feature of hardware-assisted trusted execution environment (HTEE) |

| PSA01 | Robot software development/testing vulnerabilities | Robot application security process platform in collaboration with security engineer |

| PSA02 | Social robot abuse by children | A planning technique for avoiding abuse by children |

| PSA03 | Cyber and physical attacks on robotic platforms | A platform for monitoring and detection of attacks |

| PSA03 | Public-space-factors-related attacks | Platform with alerts for fire, safety, water, power, and local news |

| PSA04 | Malicious code execution from PDF file access | Mobile malicious PDF detector (MMPD) algorithm |

| PSA06 | Recording of privacy-sensitive images of users | A Real-time Object Detection Algorithm based on Feature YOLO (RODA-FY) |

| PSB01 | Robot abuse by children | Recommended early stopping of abusive behaviors and preventing children from imitating abusive behaviors |
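
To make one of the mitigations listed above more concrete, the sketch below illustrates the idea behind the PS01 entry for brute-force password attacks: IP blacklisting combined with an upper bound on simultaneous connections. It is a toy model under stated assumptions, not the implementation reported in PS01. The class name `ConnectionGuard`, its methods, and both threshold values are hypothetical.

```python
from collections import defaultdict


class ConnectionGuard:
    """Toy gatekeeper: blacklist IPs after repeated failed logins and
    cap the number of simultaneously open connections."""

    def __init__(self, max_failures=3, max_connections=2):
        # Both thresholds are illustrative assumptions, not values from PS01.
        self.max_failures = max_failures
        self.max_connections = max_connections
        self.failures = defaultdict(int)  # failed logins per IP
        self.open_connections = 0         # currently admitted connections
        self.blacklist = set()            # IPs that are refused outright

    def try_connect(self, ip):
        """Admit the connection and return True, or refuse and return False."""
        if ip in self.blacklist:
            return False
        if self.open_connections >= self.max_connections:
            return False
        self.open_connections += 1
        return True

    def disconnect(self):
        """Free one connection slot."""
        self.open_connections = max(0, self.open_connections - 1)

    def record_failed_login(self, ip):
        """Count a failed login and blacklist the IP once the limit is reached."""
        self.failures[ip] += 1
        if self.failures[ip] >= self.max_failures:
            self.blacklist.add(ip)
```

In this sketch, an address that reaches `max_failures` failed logins is refused on every later `try_connect`, while the connection cap limits how many guesses can run in parallel. A real deployment would also need blacklist expiry and persistent storage, which are omitted here.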

References

- Sheridan, T.B. A Review of Recent Research in Social Robotics. Curr. Opin. Psychol. 2020, 36, 7–12. [Google Scholar] [CrossRef] [PubMed]

- Martinez-Martin, E.; Costa, A. Assistive Technology for Elderly Care: An Overview. IEEE Access Pract. Innov. Open Solut. 2021, 9, 92420–92430. [Google Scholar] [CrossRef]

- Portugal, D.; Alvito, P.; Christodoulou, E.; Samaras, G.; Dias, J. A Study on the Deployment of a Service Robot in an Elderly Care Center. Int. J. Soc. Robot. 2019, 11, 317–341. [Google Scholar] [CrossRef]

- Kyrarini, M.; Lygerakis, F.; Rajavenkatanarayanan, A.; Sevastopoulos, C.; Nambiappan, H.R.; Chaitanya, K.K.; Babu, A.R.; Mathew, J.; Makedon, F. A Survey of Robots in Healthcare. Technologies 2021, 9, 8. [Google Scholar] [CrossRef]

- Logan, D.E.; Breazeal, C.; Goodwin, M.S.; Jeong, S.; O’Connell, B.; Smith-Freedman, D.; Heathers, J.; Weinstock, P. Social Robots for Hospitalized Children. Pediatrics 2019, 144, e20181511. [Google Scholar] [CrossRef]

- Scoglio, A.A.; Reilly, E.D.; Gorman, J.A.; Drebing, C.E. Use of Social Robots in Mental Health and Well-Being Research: Systematic Review. J. Med. Internet Res. 2019, 21, e13322. [Google Scholar] [CrossRef]

- Gerłowska, J.; Furtak-Niczyporuk, M.; Rejdak, K. Robotic Assistance for People with Dementia: A Viable Option for the Future? Expert Rev. Med. Devices 2020, 17, 507–518. [Google Scholar] [CrossRef]

- Ghafurian, M.; Hoey, J.; Dautenhahn, K. Social Robots for the Care of Persons with Dementia: A Systematic Review. ACM Trans. Hum.-Robot Interact. 2021, 10, 1–31. [Google Scholar] [CrossRef]

- Woods, D.; Yuan, F.; Jao, Y.-L.; Zhao, X. Social Robots for Older Adults with Dementia: A Narrative Review on Challenges & Future Directions. In Proceedings of the Social Robotics, Singapore, 2 November 2021; Li, H., Ge, S.S., Wu, Y., Wykowska, A., He, H., Liu, X., Li, D., Perez-Osorio, J., Eds.; Springer International Publishing: Cham, Germany, 2021; pp. 411–420. [Google Scholar]

- Alam, A. Social Robots in Education for Long-Term Human-Robot Interaction: Socially Supportive Behaviour of Robotic Tutor for Creating Robo-Tangible Learning Environment in a Guided Discovery Learning Interaction. ECS Trans. 2022, 107, 12389. [Google Scholar] [CrossRef]

- Belpaeme, T.; Kennedy, J.; Ramachandran, A.; Scassellati, B.; Tanaka, F. Social Robots for Education: A Review. Sci. Robot. 2018, 3, eaat5954. [Google Scholar] [CrossRef]

- Lytridis, C.; Bazinas, C.; Sidiropoulos, G.; Papakostas, G.A.; Kaburlasos, V.G.; Nikopoulou, V.-A.; Holeva, V.; Evangeliou, A. Distance Special Education Delivery by Social Robots. Electronics 2020, 9, 1034. [Google Scholar] [CrossRef]

- Rosenberg-Kima, R.B.; Koren, Y.; Gordon, G. Robot-Supported Collaborative Learning (RSCL): Social Robots as Teaching Assistants for Higher Education Small Group Facilitation. Front. Robot. AI 2020, 6, 148. [Google Scholar] [CrossRef]

- Belpaeme, T.; Vogt, P.; van den Berghe, R.; Bergmann, K.; Göksun, T.; de Haas, M.; Kanero, J.; Kennedy, J.; Küntay, A.C.; Oudgenoeg-Paz, O.; et al. Guidelines for Designing Social Robots as Second Language Tutors. Int. J. Soc. Robot. 2018, 10, 325–341. [Google Scholar] [CrossRef] [PubMed]

- Engwall, O.; Lopes, J.; Åhlund, A. Robot Interaction Styles for Conversation Practice in Second Language Learning. Int. J. Soc. Robot. 2021, 13, 251–276. [Google Scholar] [CrossRef]

- Kanero, J.; Geçkin, V.; Oranç, C.; Mamus, E.; Küntay, A.C.; Göksun, T. Social Robots for Early Language Learning: Current Evidence and Future Directions. Child Dev. Perspect. 2018, 12, 146–151. [Google Scholar] [CrossRef]

- van den Berghe, R.; Verhagen, J.; Oudgenoeg-Paz, O.; van der Ven, S.; Leseman, P. Social Robots for Language Learning: A Review. Rev. Educ. Res. 2019, 89, 259–295. [Google Scholar] [CrossRef]

- Aaltonen, I.; Arvola, A.; Heikkilä, P.; Lammi, H. Hello Pepper, May I Tickle You? Children’s and Adults’ Responses to an Entertainment Robot at a Shopping Mall. In Proceedings of the Companion of the 2017 ACM/IEEE International Conference on Human-Robot Interaction, Vienna, Austria, 6–9 March 2017; Association for Computing Machinery: New York, NY, USA, 2017; pp. 53–54. [Google Scholar]

- Čaić, M.; Mahr, D.; Oderkerken-Schröder, G. Value of Social Robots in Services: Social Cognition Perspective. J. Serv. Mark. 2019, 33, 463–478. [Google Scholar] [CrossRef]

- Yeoman, I.; Mars, M. Robots, Men and Sex Tourism. Futures 2012, 44, 365–371. [Google Scholar] [CrossRef]

- Pinillos, R.; Marcos, S.; Feliz, R.; Zalama, E.; Gómez-García-Bermejo, J. Long-Term Assessment of a Service Robot in a Hotel Environment. Robot. Auton. Syst. 2016, 79, 40–57. [Google Scholar] [CrossRef]

- Ivanov, S.; Seyitoğlu, F.; Markova, M. Hotel Managers’ Perceptions towards the Use of Robots: A Mixed-Methods Approach. Inf. Technol. Tour. 2020, 22, 505–535. [Google Scholar] [CrossRef]

- Mubin, O.; Ahmad, M.I.; Kaur, S.; Shi, W.; Khan, A. Social Robots in Public Spaces: A Meta-Review. In Proceedings of the Social Robotics, Qingdao, China, 27 November 2018; Ge, S.S., Cabibihan, J.-J., Salichs, M.A., Broadbent, E., He, H., Wagner, A.R., Castro-González, Á., Eds.; Springer International Publishing: Cham, Germany, 2018; pp. 213–220. [Google Scholar]

- Thunberg, S.; Ziemke, T. Are People Ready for Social Robots in Public Spaces? In Proceedings of the Companion of the 2020 ACM/IEEE International Conference on Human-Robot Interaction, Cambridge, UK, 23–26 March 2020; Association for Computing Machinery: New York, NY, USA, 2020; pp. 482–484. [Google Scholar]

- Mintrom, M.; Sumartojo, S.; Kulić, D.; Tian, L.; Carreno-Medrano, P.; Allen, A. Robots in Public Spaces: Implications for Policy Design. Policy Des. Pract. 2022, 5, 123–139. [Google Scholar] [CrossRef]

- Radanliev, P.; De Roure, D.; Walton, R.; Van Kleek, M.; Montalvo, R.M.; Santos, O.; Maddox, L.; Cannady, S. COVID-19 What Have We Learned? The Rise of Social Machines and Connected Devices in Pandemic Management Following the Concepts of Predictive, Preventive and Personalized Medicine. EPMA J. 2020, 11, 311–332. [Google Scholar] [CrossRef] [PubMed]

- Shen, Y.; Guo, D.; Long, F.; Mateos, L.A.; Ding, H.; Xiu, Z.; Hellman, R.B.; King, A.; Chen, S.; Zhang, C.; et al. Robots Under COVID-19 Pandemic: A Comprehensive Survey. IEEE Access 2021, 9, 1590–1615. [Google Scholar] [CrossRef] [PubMed]

- Research and Markets Global Social Robots Market—Growth, Trends, COVID-19 Impact, and Forecasts (2022–2027). Available online: https://www.researchandmarkets.com/reports/5120156/global-social-robots-market-growth-trends (accessed on 16 October 2022).

- European Partnership on Artificial Intelligence, Data and Robotics AI Data Robotics Partnership EU. Available online: https://ai-data-robotics-partnership.eu/ (accessed on 15 October 2022).

- United Nations ESCAP Ageing Societies. Available online: https://www.unescap.org/our-work/social-development/ageing-societies (accessed on 16 October 2022).

- WHO Ageing and Health. Available online: https://www.who.int/news-room/fact-sheets/detail/ageing-and-health (accessed on 16 October 2022).

- Stone, R.; Harahan, M.F. Improving The Long-Term Care Workforce Serving Older Adults. Health Aff. 2010, 29, 109–115. [Google Scholar] [CrossRef]

- Fosch Villaronga, E.; Golia, A.J. Robots, Standards and the Law: Rivalries between Private Standards and Public Policymaking for Robot Governance. Comput. Law Secur. Rev. 2019, 35, 129–144. [Google Scholar] [CrossRef]

- Fosch-Villaronga, E.; Mahler, T. Cybersecurity, Safety and Robots: Strengthening the Link between Cybersecurity and Safety in the Context of Care Robots. Comput. Law Secur. Rev. 2021, 41, 105528. [Google Scholar] [CrossRef]

- Fosch-Villaronga, E.; Lutz, C.; Tamò-Larrieux, A. Gathering Expert Opinions for Social Robots’ Ethical, Legal, and Societal Concerns: Findings from Four International Workshops. Int. J. Soc. Robot. 2020, 12, 441–458. [Google Scholar] [CrossRef]

- Chatterjee, S.; Chaudhuri, R.; Vrontis, D. Usage Intention of Social Robots for Domestic Purpose: From Security, Privacy, and Legal Perspectives. Inf. Syst. Front. 2021, 1–16. [Google Scholar] [CrossRef]

- Salvini, P.; Paez-Granados, D.; Billard, A. On the Safety of Mobile Robots Serving in Public Spaces: Identifying Gaps in EN ISO 13482:2014 and Calling for a New Standard. ACM Trans. Hum.-Robot Interact. 2021, 10, 1–27. [Google Scholar] [CrossRef]

- Ahmad Yousef, K.M.; AlMajali, A.; Ghalyon, S.A.; Dweik, W.; Mohd, B.J. Analyzing Cyber-Physical Threats on Robotic Platforms. Sensors 2018, 18, 1643. [Google Scholar] [CrossRef]

- Özdol, B.; Köseler, E.; Alçiçek, E.; Cesur, S.E.; Aydemir, P.J.; Bahtiyar, Ş. A Survey on Security Attacks with Remote Ground Robots. El-Cezeri 2021, 8, 1286–1308. [Google Scholar] [CrossRef]

- Choi, J. Range Sensors: Ultrasonic Sensors, Kinect, and LiDAR. In Humanoid Robotics: A Reference; Goswami, A., Vadakkepat, P., Eds.; Springer: Dordrecht, The Netherlands, 2019; pp. 2521–2538. ISBN 978-94-007-6045-5. [Google Scholar]

- Milella, A.; Reina, G.; Nielsen, M. A Multi-Sensor Robotic Platform for Ground Mapping and Estimation beyond the Visible Spectrum. Precis. Agric. 2019, 20, 423–444. [Google Scholar] [CrossRef]

- Nandhini, C.; Murmu, A.; Doriya, R. Study and Analysis of Cloud-Based Robotics Framework. In Proceedings of the 2017 International Conference on Current Trends in Computer, Electrical, Electronics and Communication (CTCEEC), Mysore, India, 8–9 September 2017; pp. 800–8111. [Google Scholar]

- Sun, Y. Cloud Edge Computing for Socialization Robot Based on Intelligent Data Envelopment. Comput. Electr. Eng. 2021, 92, 107136. [Google Scholar] [CrossRef]

- Jawhar, I.; Mohamed, N.; Al-Jaroodi, J. Secure Communication in Multi-Robot Systems. In Proceedings of the 2020 IEEE Systems Security Symposium (SSS), Crystal City, VA, USA, 1 July–1 August 2020; pp. 1–8. [Google Scholar]

- Bures, M.; Klima, M.; Rechtberger, V.; Ahmed, B.S.; Hindy, H.; Bellekens, X. Review of Specific Features and Challenges in the Current Internet of Things Systems Impacting Their Security and Reliability. In Proceedings of the Trends and Applications in Information Systems and Technologies, Terceira Island, Azores, Portugal, 30 March–2 April 2021; Rocha, Á., Adeli, H., Dzemyda, G., Moreira, F., Ramalho Correia, A.M., Eds.; Springer International Publishing: Cham, Germany, 2021; pp. 546–556. [Google Scholar]

- Mahmoud, R.; Yousuf, T.; Aloul, F.; Zualkernan, I. Internet of Things (IoT) Security: Current Status, Challenges and Prospective Measures. In Proceedings of the 2015 10th International Conference for Internet Technology and Secured Transactions (ICITST), London, UK, 14–16 December 2015; pp. 336–341. [Google Scholar]

- Morales, C.G.; Carter, E.J.; Tan, X.Z.; Steinfeld, A. Interaction Needs and Opportunities for Failing Robots. In Proceedings of the 2019 on Designing Interactive Systems Conference, San Diego, CA, USA, 23–28 June 2019; Association for Computing Machinery: New York, NY, USA, 2019; pp. 659–670. [Google Scholar]

- Sailio, M.; Latvala, O.-M.; Szanto, A. Cyber Threat Actors for the Factory of the Future. Appl. Sci. 2020, 10, 4334. [Google Scholar] [CrossRef]

- Liu, Y.-C.; Bianchin, G.; Pasqualetti, F. Secure Trajectory Planning against Undetectable Spoofing Attacks. Automatica 2020, 112, 108655. [Google Scholar] [CrossRef]

- Tsiostas, D.; Kittes, G.; Chouliaras, N.; Kantzavelou, I.; Maglaras, L.; Douligeris, C.; Vlachos, V. The Insider Threat: Reasons, Effects and Mitigation Techniques. In Proceedings of the 24th Pan-Hellenic Conference on Informatics, Athens, Greece, 20–22 November 2020; Association for Computing Machinery: New York, NY, USA, 2020; pp. 340–345. [Google Scholar]

- Kaloudi, N.; Li, J. The AI-Based Cyber Threat Landscape: A Survey. ACM Comput. Surv. 2020, 53, 1–34. [Google Scholar] [CrossRef]

- Boada, J.P.; Maestre, B.R.; Genís, C.T. The Ethical Issues of Social Assistive Robotics: A Critical Literature Review. Technol. Soc. 2021, 67, 101726. [Google Scholar] [CrossRef]

- Sarrica, M.; Brondi, S.; Fortunati, L. How Many Facets Does a “Social Robot” Have? A Review of Scientific and Popular Definitions Online. Inf. Technol. People 2019, 33, 1–21. [Google Scholar] [CrossRef]

- Henschel, A.; Laban, G.; Cross, E.S. What Makes a Robot Social? A Review of Social Robots from Science Fiction to a Home or Hospital Near You. Curr. Robot. Rep. 2021, 2, 9–19. [Google Scholar] [CrossRef]

- Woo, H.; LeTendre, G.K.; Pham-Shouse, T.; Xiong, Y. The Use of Social Robots in Classrooms: A Review of Field-Based Studies. Educ. Res. Rev. 2021, 33, 100388. [Google Scholar] [CrossRef]

- Papadopoulos, I.; Lazzarino, R.; Miah, S.; Weaver, T.; Thomas, B.; Koulouglioti, C. A Systematic Review of the Literature Regarding Socially Assistive Robots in Pre-Tertiary Education. Comput. Educ. 2020, 155, 103924. [Google Scholar] [CrossRef]

- Donnermann, M.; Schaper, P.; Lugrin, B. Social Robots in Applied Settings: A Long-Term Study on Adaptive Robotic Tutors in Higher Education. Front. Robot. AI 2022, 9, 831633. [Google Scholar] [CrossRef] [PubMed]

- Cooper, S.; Di Fava, A.; Villacañas, Ó.; Silva, T.; Fernandez-Carbajales, V.; Unzueta, L.; Serras, M.; Marchionni, L.; Ferro, F. Social Robotic Application to Support Active and Healthy Ageing. In Proceedings of the 2021 30th IEEE International Conference on Robot & Human Interactive Communication (RO-MAN), Vancouver, BC, Canada, 8–12 August 2021; pp. 1074–1080. [Google Scholar]

- Mavroeidis, V.; Hohimer, R.; Casey, T.; Jesang, A. Threat Actor Type Inference and Characterization within Cyber Threat Intelligence. In Proceedings of the 2021 13th International Conference on Cyber Conflict (CyCon), Tallinn, Estonia, 25–28 May 2021; pp. 327–352. [Google Scholar]

- Siva Kumar, R.S.; O’Brien, D.; Albert, K.; Viljoen, S.; Snover, J. Failure Modes in Machine Learning Systems. Available online: https://arxiv.org/abs/1911.11034 (accessed on 5 December 2022).

- Giaretta, A.; De Donno, M.; Dragoni, N. Adding Salt to Pepper: A Structured Security Assessment over a Humanoid Robot. In Proceedings of the 13th International Conference on Availability, Reliability and Security, Hamburg Germany, 27–30 August 2018; Association for Computing Machinery: New York, NY, USA, 2018. [Google Scholar]

- Srinivas Aditya, U.S.P.; Singh, R.; Singh, P.K.; Kalla, A. A Survey on Blockchain in Robotics: Issues, Opportunities, Challenges and Future Directions. J. Netw. Comput. Appl. 2021, 196, 103245. [Google Scholar] [CrossRef]

- Dario, P.; Laschi, C.; Guglielmelli, E. Sensors and Actuators for “humanoid” Robots. Adv. Robot. 1996, 11, 567–584. [Google Scholar] [CrossRef]

- Woodford, C. Robots. Available online: http://www.explainthatstuff.com/robots.html (accessed on 4 November 2022).

- Tonkin, M.V. Socially Responsible Design for Social Robots in Public Spaces. Ph.D. Thesis, University of Technology Sydney, Sydney, Australia, 2021. [Google Scholar]

- Fortunati, L.; Cavallo, F.; Sarrica, M. The Role of Social Robots in Public Space. In Proceedings of the Ambient Assisted Living, London, UK, 25 March 2019; Casiddu, N., Porfirione, C., Monteriù, A., Cavallo, F., Eds.; Springer International Publishing: Cham, Germany, 2019; pp. 171–186. [Google Scholar]

- Altman, I.; Zube, E.H. Public Places and Spaces; Springer Science & Business Media: Berlin, Germany, 2012; ISBN 978-1-4684-5601-1. [Google Scholar]

- Ross, R.; McEvilley, M.; Carrier Oren, J. System Security Engineering: Considerations for a Multidisciplinary Approach in the Engineering of Trustworthy Secure Systems; NIST: Gaithersburg, MD, USA, 2016; p. 260. [Google Scholar]

- Newhouse, W.; Johnson, B.; Kinling, S.; Kuruvilla, J.; Mulugeta, B.; Sandlin, K. Multifactor Authentication for E-Commerce Risk-Based, FIDO Universal Second Factor Implementations for Purchasers; NIST Special Publication 1800-17; NIST: Gaithersburg, MD, USA, 2019. [Google Scholar]

- MITRE Common Attack Pattern Enumeration and Classification (CAPEC): Domains of Attack (Version 3.7). Available online: https://capec.mitre.org/data/definitions/3000.html (accessed on 19 September 2022).

- Yaacoub, J.-P.A.; Noura, H.N.; Salman, O.; Chehab, A. Robotics Cyber Security: Vulnerabilities, Attacks, Countermeasures, and Recommendations. Int. J. Inf. Secur. 2022, 21, 115–158. [Google Scholar] [CrossRef]

- DeMarinis, N.; Tellex, S.; Kemerlis, V.P.; Konidaris, G.; Fonseca, R. Scanning the Internet for ROS: A View of Security in Robotics Research. In Proceedings of the 2019 International Conference on Robotics and Automation (ICRA), Montreal, QC, Canada, 20–24 May 2019; pp. 8514–8521. [Google Scholar]

- Montasari, R.; Hill, R.; Parkinson, S.; Daneshkhah, A.; Hosseinian-Far, A. Hardware-Based Cyber Threats: Attack Vectors and Defence Techniques. Int. J. Electron. Secur. Digit. Forensics 2020, 12, 397–411. [Google Scholar] [CrossRef]

- NIST. Minimum Security Requirements for Federal Information and Information Systems; NIST: Gaithersburg, MD, USA, 2006. [Google Scholar]

- Barnard-Wills, D.; Marinos, L.; Portesi, S. Threat Landscape and Good Practice Guide for Smart Home and Converged Media; European Union Agency for Network and Information Security: Athens, Greece, 2014; p. 62. ISBN 978-92-9204-096-3. [Google Scholar]

- Dautenhahn, K. Methodology & Themes of Human-Robot Interaction: A Growing Research Field. Int. J. Adv. Robot. Syst. 2007, 4, 15. [Google Scholar] [CrossRef]

- Baxter, P.; Kennedy, J.; Senft, E.; Lemaignan, S.; Belpaeme, T. From Characterising Three Years of HRI to Methodology and Reporting Recommendations. In Proceedings of the 2016 11th ACM/IEEE International Conference on Human-Robot Interaction (HRI), Christchurch, New Zealand, 7–10 March 2016; pp. 391–398. [Google Scholar]

- Höflich, J.R. Relationships to Social Robots: Towards a Triadic Analysis of Media-Oriented Behavior. Intervalla 2013, 1, 35–48. [Google Scholar]

- Mayoral-Vilches, V. Robot Cybersecurity, a Review. Int. J. Cyber Forensics Adv. Threats Investig. 2021; in press. [Google Scholar]

- Nieles, M.; Dempsey, K.; Pillitteri, V. An Introduction to Information Security; NIST Special Publication 800-12; NIST: Gaithersburg, MD, USA, 2017; Revision 1. [Google Scholar]

- Alzubaidi, M.; Anbar, M.; Hanshi, S.M. Neighbor-Passive Monitoring Technique for Detecting Sinkhole Attacks in RPL Networks. In Proceedings of the 2017 International Conference on Computer Science and Artificial Intelligence, Jakarta, Indonesia, 5–7 December 2017; Association for Computing Machinery: New York, NY, USA, 2017; pp. 173–182. [Google Scholar]

- Shoukry, Y.; Martin, P.; Yona, Y.; Diggavi, S.; Srivastava, M. PyCRA: Physical Challenge-Response Authentication for Active Sensors under Spoofing Attacks. In Proceedings of the 22nd ACM SIGSAC Conference on Computer and Communications Security, Denver, CO, USA, 12–16 October 2015; Association for Computing Machinery: New York, NY, USA, 2015; pp. 1004–1015. [Google Scholar]

- Ross, R.; Pillitteri, V.; Dempsey, K.; Riddle; Guissanie, G. Protecting Controlled Unclassified Information in Nonfederal Systems and Organizations; NIST: Gaithersburg, MD, USA, 2020. [Google Scholar]

- UK NCSS UK. National Cyber Security Strategy 2016–2021. 2016; p. 80. Available online: https://assets.publishing.service.gov.uk/government/uploads/system/uploads/attachment_data/file/567242/national_cyber_security_strategy_2016.pdf (accessed on 4 November 2022).

- Ross, R.; Pillitteri, V.; Graubart, R.; Bodeau, D.; Mcquaid, R. Developing Cyber-Resilient Systems: A Systems Security Engineering Approach; NIST Special Publication 800-160; NIST: Gaithersburg, MD, USA, 2021. [Google Scholar]

- Lasota, P.A.; Song, T.; Shah, J.A. A Survey of Methods for Safe Human-Robot Interaction; Now Publishers: Delft, The Netherlands, 2017; ISBN 978-1-68083-279-2. [Google Scholar]

- Garfinkel, S.L. De-Identification of Personal Information; National Institute of Standards and Technology: Gaithersburg, MD, USA, 2015; p. NIST IR 8053. [Google Scholar]

- Casey, T. Threat Agent Library Helps Identify Information Security Risks. Intel Inf. Technol. 2007, 2, 1–12. [Google Scholar] [CrossRef]

- Zacharaki, A.; Kostavelis, I.; Gasteratos, A.; Dokas, I. Safety Bounds in Human Robot Interaction: A Survey. Saf. Sci. 2020, 127, 104667. [Google Scholar] [CrossRef]

- Tian, L.; Oviatt, S. A Taxonomy of Social Errors in Human-Robot Interaction. J. Hum.-Robot Interact. 2021, 10, 1–32. [Google Scholar] [CrossRef]

- Honig, S.; Oron-Gilad, T. Understanding and Resolving Failures in Human-Robot Interaction: Literature Review and Model Development. Front. Psychol. 2018, 9, 861. [Google Scholar] [CrossRef] [PubMed]

- Cornelius, G.; Caire, P.; Hochgeschwender, N.; Olivares-Mendez, M.A.; Esteves-Verissimo, P.; Völp, M.; Voos, H. A Perspective of Security for Mobile Service Robots. In Proceedings of the ROBOT 2017: Third Iberian Robotics Conference, Seville, Spain, 22–24 November 2017; Ollero, A., Sanfeliu, A., Montano, L., Lau, N., Cardeira, C., Eds.; Springer International Publishing: Cham, Germany, 2018; pp. 88–100. [Google Scholar]

- Cerrudo, C.; Apa, L. Hacking Robots before Skynet; IOActive: Seattle, WA, USA, 2017; pp. 1–17.

- European Union Agency for Cybersecurity. ENISA Threat Landscape 2021: April 2020 to Mid July 2021; Publications Office: Luxembourg, Luxembourg, 2021; ISBN 978-92-9204-536-4.

- European Union Agency for Cybersecurity. ENISA Cybersecurity Threat Landscape Methodology; Publications Office: Luxembourg, Luxembourg, 2022.

- Choo, K.-K.R. The Cyber Threat Landscape: Challenges and Future Research Directions. Comput. Secur. 2011, 30, 719–731.

- Kitchenham, B.; Brereton, P. A Systematic Review of Systematic Review Process Research in Software Engineering. Inf. Softw. Technol. 2013, 55, 2049–2075.

- Humayun, M.; Niazi, M.; Jhanjhi, N.; Alshayeb, M.; Mahmood, S. Cyber Security Threats and Vulnerabilities: A Systematic Mapping Study. Arab. J. Sci. Eng. 2020, 45, 3171–3189.

- Oruma, S.O.; Sánchez-Gordón, M.; Colomo-Palacios, R.; Gkioulos, V.; Hansen, J. Supplementary Materials to “A Systematic Review of Social Robots in Public Spaces: Threat Landscape and Attack Surface”—Mendeley Data. Mendeley Data 2022.

- Fong, T.; Nourbakhsh, I.; Dautenhahn, K. A Survey of Socially Interactive Robots. Robot. Auton. Syst. 2003, 42, 143–166.

- Mazzeo, G.; Staffa, M. TROS: Protecting Humanoids ROS from Privileged Attackers. Int. J. Soc. Robot. 2020, 12, 827–841.

- Felizardo, K.R.; Mendes, E.; Kalinowski, M.; Souza, É.F.; Vijaykumar, N.L. Using Forward Snowballing to Update Systematic Reviews in Software Engineering. In Proceedings of the 10th ACM/IEEE International Symposium on Empirical Software Engineering and Measurement, Ciudad Real, Spain, 8–9 September 2016; Association for Computing Machinery: New York, NY, USA, 2016; pp. 1–6.

- Brščić, D.; Kidokoro, H.; Suehiro, Y.; Kanda, T. Escaping from Children’s Abuse of Social Robots. In Proceedings of the Tenth Annual ACM/IEEE International Conference on Human-Robot Interaction, Portland, OR, USA, 2–5 March 2015; Association for Computing Machinery: New York, NY, USA, 2015; pp. 59–66.

- Lin, J.; Li, Y.; Yang, G. FPGAN: Face de-Identification Method with Generative Adversarial Networks for Social Robots. Neural Netw. 2021, 133, 132–147.

- Zhang, Y.; Qian, Y.; Wu, D.; Hossain, M.S.; Ghoneim, A.; Chen, M. Emotion-Aware Multimedia Systems Security. IEEE Trans. Multimed. 2019, 21, 617–624.

- Aroyo, A.M.; Rea, F.; Sandini, G.; Sciutti, A. Trust and Social Engineering in Human Robot Interaction: Will a Robot Make You Disclose Sensitive Information, Conform to Its Recommendations or Gamble? IEEE Robot. Autom. Lett. 2018, 3, 3701–3708.

- Tan, X.Z.; Vázquez, M.; Carter, E.J.; Morales, C.G.; Steinfeld, A. Inducing Bystander Interventions During Robot Abuse with Social Mechanisms. In Proceedings of the 2018 ACM/IEEE International Conference on Human-Robot Interaction, Chicago, IL, USA, 5–8 March 2018; Association for Computing Machinery: New York, NY, USA, 2018; pp. 169–177.

- Yang, G.; Yang, J.; Sheng, W.; Junior, F.E.F.; Li, S. Convolutional Neural Network-Based Embarrassing Situation Detection under Camera for Social Robot in Smart Homes. Sensors 2018, 18, 1530.

- Fernandes, F.E.; Yang, G.; Do, H.M.; Sheng, W. Detection of Privacy-Sensitive Situations for Social Robots in Smart Homes. In Proceedings of the 2016 IEEE International Conference on Automation Science and Engineering (CASE), Fort Worth, TX, USA, 21–25 August 2016; pp. 727–732.

- Bhardwaj, A.; Avasthi, V.; Goundar, S. Cyber Security Attacks on Robotic Platforms. Netw. Secur. 2019, 2019, 13–19.

- Truong, X.-T.; Yoong, V.N.; Ngo, T.-D. Dynamic Social Zone for Human Safety in Human-Robot Shared Workspaces. In Proceedings of the 2014 11th International Conference on Ubiquitous Robots and Ambient Intelligence (URAI), Kuala Lumpur, Malaysia, 12–15 November 2014; pp. 391–396.

- Krupp, M.M.; Rueben, M.; Grimm, C.M.; Smart, W.D. A Focus Group Study of Privacy Concerns about Telepresence Robots. In Proceedings of the 2017 26th IEEE International Symposium on Robot and Human Interactive Communication (RO-MAN), Lisbon, Portugal, 28 August–1 September 2017; pp. 1451–1458.

- Yamada, S.; Kanda, T.; Tomita, K. An Escalating Model of Children’s Robot Abuse. In Proceedings of the 2020 15th ACM/IEEE International Conference on Human-Robot Interaction (HRI), Cambridge, UK, 23–26 March 2020; pp. 191–199.

- Olivato, M.; Cotugno, O.; Brigato, L.; Bloisi, D.; Farinelli, A.; Iocchi, L. A Comparative Analysis on the Use of Autoencoders for Robot Security Anomaly Detection. In Proceedings of the 2019 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Macau, China, 3–8 November 2019; pp. 984–989.

- Vulpe, A.; Paikan, A.; Craciunescu, R.; Ziafati, P.; Kyriazakos, S.; Hemmer, A.; Badonnel, R. IoT Security Approaches in Social Robots for Ambient Assisted Living Scenarios. In Proceedings of the 2019 22nd International Symposium on Wireless Personal Multimedia Communications (WPMC), Lisbon, Portugal, 24–27 November 2019; pp. 1–6.

- Abate, A.F.; Bisogni, C.; Cascone, L.; Castiglione, A.; Costabile, G.; Mercuri, I. Social Robot Interactions for Social Engineering: Opportunities and Open Issues. In Proceedings of the 2020 IEEE Intl Conf on Dependable, Autonomic and Secure Computing, Intl Conf on Pervasive Intelligence and Computing, Intl Conf on Cloud and Big Data Computing, Intl Conf on Cyber Science and Technology Congress (DASC/PiCom/CBDCom/CyberSciTech), Online, 17–22 August 2020; pp. 539–547.

- Hochgeschwender, N.; Cornelius, G.; Voos, H. Arguing Security of Autonomous Robots. In Proceedings of the 2019 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Macau, China, 3–8 November 2019; pp. 7791–7797.

- Joosse, M.; Lohse, M.; van Berkel, N.; Sardar, A.; Evers, V. Making Appearances: How Robots Should Approach People. ACM Trans. Hum.-Robot Interact. 2021, 10, 1–24.

- Vasylkovskyi, V.; Guerreiro, S.; Sequeira, J.S. BlockRobot: Increasing Privacy in Human Robot Interaction by Using Blockchain. In Proceedings of the 2020 IEEE International Conference on Blockchain (Blockchain), Virtual Event, 2–6 November 2020; pp. 106–115.

- Sanoubari, E.; Young, J.; Houston, A.; Dautenhahn, K. Can Robots Be Bullied? A Crowdsourced Feasibility Study for Using Social Robots in Anti-Bullying Interventions. In Proceedings of the 2021 30th IEEE International Conference on Robot & Human Interactive Communication (RO-MAN), Vancouver, BC, Canada, 8–12 August 2021; pp. 931–938.

- Cui, Y.; Sun, Y.; Luo, J.; Huang, Y.; Zhou, Y.; Li, X. MMPD: A Novel Malicious PDF File Detector for Mobile Robots. IEEE Sens. J. 2020, 1, 17583–17592.

- Garousi, V.; Fernandes, J.M. Highly-Cited Papers in Software Engineering: The Top-100. Inf. Softw. Technol. 2016, 71, 108–128.

- Lockheed Martin. Cyber Kill Chain®. Available online: https://www.lockheedmartin.com/en-us/capabilities/cyber/cyber-kill-chain.html (accessed on 6 June 2022).

- MITRE. CAPEC: Mechanisms of Attack. Available online: https://capec.mitre.org/data/definitions/1000.html (accessed on 29 September 2022).

- IGI Global. What Is Attack Scenario. Available online: https://www.igi-global.com/dictionary/attack-scenario/59726 (accessed on 2 October 2022).

- ENISA. Cybersecurity Challenges in the Uptake of Artificial Intelligence in Autonomous Driving. Available online: https://www.enisa.europa.eu/news/enisa-news/cybersecurity-challenges-in-the-uptake-of-artificial-intelligence-in-autonomous-driving (accessed on 1 October 2022).

- NIST. NIST Cybersecurity Framework Version 1.1. Available online: https://www.nist.gov/news-events/news/2018/04/nist-releases-version-11-its-popular-cybersecurity-framework (accessed on 6 June 2022).

- Agrafiotis, I.; Nurse, J.R.C.; Goldsmith, M.; Creese, S.; Upton, D. A Taxonomy of Cyber-Harms: Defining the Impacts of Cyber-Attacks and Understanding How They Propagate. J. Cybersecurity 2018, 4, tyy006.

- Collins, E.C. Drawing Parallels in Human–Other Interactions: A Trans-Disciplinary Approach to Developing Human–Robot Interaction Methodologies. Philos. Trans. R. Soc. B Biol. Sci. 2019, 374, 20180433.

- Moon, M. SoftBank Reportedly Stopped the Production of Its Pepper Robots Last Year: The Robot Suffered from Weak Demand According to Reuters and Nikkei. Available online: https://www.engadget.com/softbank-stopped-production-pepper-robots-032616568.html (accessed on 8 October 2022).

- Nocentini, O.; Fiorini, L.; Acerbi, G.; Sorrentino, A.; Mancioppi, G.; Cavallo, F. A Survey of Behavioral Models for Social Robots. Robotics 2019, 8, 54.

- Johal, W. Research Trends in Social Robots for Learning. Curr. Robot. Rep. 2020, 1, 75–83.

- Kirschgens, L.A.; Ugarte, I.Z.; Uriarte, E.G.; Rosas, A.M.; Vilches, V.M. Robot Hazards: From Safety to Security. arXiv 2021, arXiv:1806.06681.

- Wohlin, C.; Runeson, P.; Höst, M.; Ohlsson, M.C.; Regnell, B.; Wesslén, A. Experimentation in Software Engineering; Springer: Berlin/Heidelberg, Germany, 2012; ISBN 978-3-642-29043-5.

- ENISA. Threat Landscape for Supply Chain Attacks. Available online: https://www.enisa.europa.eu/publications/threat-landscape-for-supply-chain-attacks (accessed on 6 June 2022).

- Mohamed Shaluf, I. Disaster Types. Disaster Prev. Manag. Int. J. 2007, 16, 704–717.

- Jbair, M.; Ahmad, B.; Maple, C.; Harrison, R. Threat Modelling for Industrial Cyber Physical Systems in the Era of Smart Manufacturing. Comput. Ind. 2022, 137, 103611.

- Li, H.; Liu, Q.; Zhang, J. A Survey of Hardware Trojan Threat and Defense. Integration 2016, 55, 426–437.

- Sidhu, S.; Mohd, B.J.; Hayajneh, T. Hardware Security in IoT Devices with Emphasis on Hardware Trojans. J. Sens. Actuator Netw. 2019, 8, 42.

- Tuma, K.; Calikli, G.; Scandariato, R. Threat Analysis of Software Systems: A Systematic Literature Review. J. Syst. Softw. 2018, 144, 275–294.

- Das, A.; Baki, S.; El Aassal, A.; Verma, R.; Dunbar, A. SoK: A Comprehensive Reexamination of Phishing Research From the Security Perspective. IEEE Commun. Surv. Tutor. 2020, 22, 671–708.

- Choi, M.; Robles, R.J.; Hong, C.; Kim, T. Wireless Network Security: Vulnerabilities, Threats and Countermeasures. Int. J. Multimed. Ubiquitous Eng. 2008, 3, 10.

- Stallings, W.; Brown, L. Computer Security: Principles and Practice, 4th ed.; Pearson: New York, NY, USA, 2018; ISBN 978-0-13-479410-5.

- Jansen, W.; Grance, T. Guidelines on Security and Privacy in Public Cloud Computing; NIST: Gaithersburg, MD, USA, 2011; p. 80.

- Osada, M.; Ito, N.; Nakanishi, Y.; Inaba, M. Stiffness Readout in Musculo-Skeletal Humanoid Robot by Using Rotary Potentiometer. In Proceedings of the 2010 IEEE SENSORS, Waikoloa, HI, USA, 1–4 November 2010; pp. 2329–2333.

- Lang, H.; Wang, Y.; de Silva, C.W. Mobile Robot Localization and Object Pose Estimation Using Optical Encoder, Vision and Laser Sensors. In Proceedings of the 2008 IEEE International Conference on Automation and Logistics, Qingdao, China, 1–3 September 2008; pp. 617–622.

- Wang, Z.; Zhang, J. Calibration Method of Internal and External Parameters of Camera Wheel Tachometer Based on TagSLAM Framework. In Proceedings of the International Conference on Signal Processing and Communication Technology (SPCT 2021), Harbin, China, 23–25 December 2021; SPIE: Bellingham, WA, USA, 2022; Volume 12178, pp. 413–417.

- Huang, Q.; Zhang, S. Applications of IMU in Humanoid Robot. In Humanoid Robotics: A Reference; Goswami, A., Vadakkepat, P., Eds.; Springer: Dordrecht, The Netherlands, 2017; pp. 1–23. ISBN 978-94-007-7194-9.

- Ding, S.; Ouyang, X.; Liu, T.; Li, Z.; Yang, H. Gait Event Detection of a Lower Extremity Exoskeleton Robot by an Intelligent IMU. IEEE Sens. J. 2018, 18, 9728–9735.

- Kunal, K.; Arfianto, A.Z.; Poetro, J.E.; Waseel, F.; Atmoko, R.A. Accelerometer Implementation as Feedback on 5 Degree of Freedom Arm Robot. J. Robot. Control JRC 2020, 1, 31–34.