1. Introduction

With the continuous improvement in the availability and quality of high-resolution remote sensing imagery [1,2], together with the rapid development of deep learning techniques, remote sensing object detection (RSOD) models have been increasingly deployed in a wide range of real-world remote sensing applications, such as urban governance [3], disaster assessment [4,5], and ecological monitoring [6,7]. In these operational and mission-critical scenarios, high-performance RSOD models are not only essential for intelligent interpretation but also represent valuable digital assets with strong commercial value and intellectual property (IP) attributes. Such models typically rely on large-scale annotated datasets, substantial computational resources, and long-term iterative optimization [8]. However, in practical application pipelines, particularly during model delivery, sharing, and cloud- and device-side deployment, RSOD models are vulnerable to piracy, unauthorized duplication, and illegal resale [9,10]. These threats can severely compromise the economic interests and IP rights of model owners and further impede the secure and sustainable development of operational remote sensing systems. Therefore, there is an urgent need for an effective and practical IP protection method specifically designed for RSOD models.

In recent years, embedding watermark information [12,13,14] into deep neural networks (DNNs) has emerged as an important and effective solution to various security threats to models [11]. The core idea of model watermarking is to embed copyright information associated with the model owner’s identity into the model while preserving its original task performance as much as possible. Such information may include secret keys, specific trigger behaviors, or verifiable structural characteristics. Even if the model is later copied, distributed, or tampered with during deployment and usage, the copyright owner can still detect or recover the embedded watermark through predesigned verification or extraction mechanisms. This enables reliable ownership claims and provides forensic evidence for identifying infringement.

Existing watermarking techniques for DNN models can generally be categorized into two main types: black-box watermarking and white-box watermarking. Black-box watermarking methods [15,16,17,18,19,20,21,22] typically construct a set of specially designed trigger inputs during the embedding stage. The model is trained to produce predefined abnormal output patterns when queried with these inputs. During ownership verification, the copyright owner feeds verification samples to a suspicious model or service. The watermark is deemed present if the output behavior matches the predefined watermark patterns. However, such methods rely on interactive black-box queries, which makes the ownership verification process prone to disputes. For example, it may be questioned whether the verifier deliberately selected special input samples, or whether the accused party applied additional post-processing to the outputs. As a result, the evidence chain provided by black-box watermarking may lack sufficient transparency and judicial credibility.

In contrast, white-box watermarking methods [8,23,24,25,26,27,28] embed watermark information directly into a model’s internal components and bind it to internal information, such as parameters or intermediate representations. The model’s internal information may be exposed, or third-party forensic authentication and arbitration platforms may be required for evidence collection. In either case, a subset of internal information containing the watermark can be extracted from the suspicious model. Ownership can then be verified using a fixed and reproducible watermark extraction and verification algorithm. Because the watermark evidence is derived directly from the model’s internal information, verification is independent of external interactions or query interfaces. White-box verification therefore offers stronger interpretability, stability, and credibility than black-box watermarking. In summary, high-value RSOD models are vulnerable to illegal copying and misuse. Developing white-box watermarking methods based on internal model information is therefore of great practical significance for both real-world deployment and judicial forensics.

White-box watermarking provides more interpretable and trustworthy ownership evidence by leveraging a model’s internal information. Nevertheless, existing methods vary widely in their embedding locations and implementation forms [23,26,27]. Among them, weight-based white-box watermarking is particularly practical for RSOD models: it requires no architectural modification, has low embedding overhead, and supports efficient extraction and verification. However, existing weight-based methods still have evident limitations in robustness and stealthiness. Under common perturbations encountered when deploying RSOD models, the watermark is prone to extraction failure or increased exposure risk, which limits its effectiveness.

Specifically, methods based on parameter projection [27] or sign-based alignment [8,29] typically encode each watermark bit in one of two ways: as the sign of a weight projection along a specific direction, or as the outcome of a threshold decision. Such encodings are highly sensitive to numerical perturbations, which can easily cause the underlying sign relationships to drift and thereby reduce verification reliability. Methods based on statistical distribution constraints [25,30] add regularization terms that force certain weights to follow predefined distributional patterns, which improves watermark extractability. However, such constraints may introduce detectable anomalies that compromise stealthiness. They may also conflict with the primary task objective, leading to performance degradation. In addition, some methods rely on specific architectural designs [31]. These methods offer limited cross-architecture transferability and adapt poorly to mainstream CNN-based RSOD models, which hinders their general applicability. Therefore, a key challenge remains: designing a white-box watermarking mechanism that simultaneously achieves robustness, stealthiness, and transferability while preserving detection performance.

To address this challenge, we propose a white-box watermarking method to protect the IP of RSOD models, which enhances robustness to common model perturbations and attacks while maintaining watermark imperceptibility. Specifically, we first evaluate the sensitivity of model parameters by analyzing the gradients of the detection loss, and we adaptively select weights that have minimal impact on the primary task performance as watermark carriers. Then, we employ a margin-based parameter-ranking encoding mechanism to embed watermark information by enforcing the relative ordering relationships between paired parameters. In addition, to further improve robustness against attacks, we introduce an attack-simulation training strategy that incorporates common perturbations into the optimization process. Finally, we introduce a regularization term based on the statistical properties of the weights to align the parameter distribution of the watermark-bearing layers with that of the original model, thereby further enhancing the stealthiness of the watermark.

The main contributions are summarized as follows:

We propose a novel white-box watermarking method that achieves both robustness and stealthiness for protecting the IP of RSOD models.

We design a margin-based parameter-ranking watermark embedding scheme, which encodes watermark bits by enforcing stable relative ordering constraints between paired model parameters, thereby enabling a high watermark verification success rate.

We introduce an attack-simulation-driven training strategy to improve robustness against watermark removal attacks, along with a statistical-distribution-constrained stealthiness loss to minimize detectable parameter perturbations.

Extensive experiments on multiple RSOD detectors and benchmark datasets demonstrate that the proposed method achieves a watermark success rate of 100% under the evaluation settings, with negligible impact on detection accuracy, and exhibits strong robustness and stealthiness against various watermark removal attacks.

The remainder of this paper is organized as follows. Section 2 reviews related work on black-box and white-box model watermarking. Section 3 introduces the preliminaries and problem formulation. The proposed white-box watermarking method for RSOD models is presented in Section 4. Section 5 reports extensive experimental evaluations, including threshold determination, fidelity, effectiveness, robustness, stealthiness, and ablation studies. Finally, Section 6 concludes this paper and outlines future research directions.

3. Preliminary and Problem Formulation

In this section, we first introduce the overall workflow of white-box watermarking, then present the metrics used to evaluate watermark performance, and finally provide a formal description of the problem.

3.1. The Main Pipeline of White-Box Watermarking

White-box watermarking aims to embed identifiable ownership information into a neural network model, enabling reliable verification when the model’s internal parameters or intermediate representations are accessible. In general, the white-box watermarking pipeline consists of three stages: watermark message construction, watermark embedding, and watermark verification.

In the watermark message construction phase, a watermark message is first sampled from the message space M and then encoded into a structured watermark representation, which is subsequently embedded into the model’s carrier weights.

In the watermark embedding phase, let $f_{\theta}$ denote the original neural network model parameterized by weights $\theta$. Let $\mathcal{D} = \{(x_i, y_i)\}_{i=1}^{N}$ be a training dataset. Here $x_i$ and $y_i$ denote the input and the corresponding label of the $i$-th training example, and $N$ is the number of training samples. The model is typically learned by minimizing the task loss $\mathcal{L}_{\text{task}}(\theta; \mathcal{D})$. The objective of watermark embedding is to obtain a watermarked model $f_{\theta_w}$ such that (i) it preserves the original task performance and (ii) it embeds the watermark message $m$ in a verifiable manner. To achieve this, watermark embedding is commonly formulated as a joint optimization problem:

$$\theta_w = \arg\min_{\theta} \; \mathcal{L}_{\text{task}}(\theta; \mathcal{D}) + \lambda \, \mathcal{L}_{\text{wm}}(\theta, m),$$

where $\lambda > 0$ controls the trade-off between task fidelity and watermark strength, and $\mathcal{L}_{\text{wm}}$ denotes the watermark embedding loss. After optimization, the resulting watermarked model $f_{\theta_w}$ is distributed or deployed.

In the watermark verification phase, the model owner aims to determine whether a suspected model $f_{\theta_s}$ contains the embedded watermark. First, the owner applies an extraction function $\mathcal{E}(\cdot)$ to recover the watermark message from the suspected model:

$$\hat{m} = \mathcal{E}(\theta_s).$$

Next, the extracted watermark $\hat{m}$ is compared with the original watermark $m$ using a similarity measure $S(\cdot, \cdot)$. If the similarity score $S(\hat{m}, m)$ reaches a predefined threshold $\tau$, the watermark is regarded as successfully verified:

$$S(\hat{m}, m) \geq \tau.$$

3.2. Watermark Performance Metrics

To comprehensively evaluate the performance of white-box watermarking, it is necessary to assess not only whether the watermark can be successfully verified but also whether the protected model remains useful and secure under various conditions. In this work, we evaluate watermarking performance from four key perspectives, including fidelity, effectiveness, stealthiness, and robustness:

Fidelity. It evaluates whether watermark embedding maintains the model’s original performance.

Effectiveness. This metric evaluates whether the watermark can be verified with high confidence when the suspect model is derived from the protected model.

Stealthiness. It measures whether the watermark is difficult for an adversary to detect or distinguish from normal model behavior.

Robustness. It characterizes the watermark’s resistance to adversarial attempts to remove or invalidate it.

3.3. Problem Formulation

In this work, we aim to design a white-box watermarking scheme for RSOD models. The model owner is allowed to access the internal parameters of a suspected model and seeks to establish verifiable ownership through an embedded watermark.

Let $f_{\theta}$ denote an RSOD model parameterized by weights $\theta$. The owner specifies a watermark message $m$ and embeds it into the model parameters. A fundamental design question arises at the outset: where should the watermark be embedded? The owner must select a subset of model parameters (or intermediate representations) as the watermark carrier, denoted by $\mathcal{W}$. The carrier selection strategy should provide sufficient capacity for encoding $m$. It should also avoid fragile or highly sensitive components, whose modification may noticeably degrade accuracy or facilitate watermark removal. Moreover, the embedding and verification procedures should be efficient and reliable. The owner should be able to extract or validate the watermark deterministically, while the watermark should not be easy to detect and erase.

Therefore, our objective is to train a watermarked model $f_{\theta_w}$ that simultaneously satisfies the following requirements.

(1) Fidelity: watermark embedding should not significantly degrade model utility. That is, the performance drop $\mathcal{P}(f_{\theta}) - \mathcal{P}(f_{\theta_w})$ should be small, where $\mathcal{P}(\cdot)$ denotes detection performance (e.g., mAP).

(2) Effectiveness: the watermark should be verified with high confidence on the watermarked model while remaining unlikely to be triggered in non-watermarked models.

(3) Stealthiness: the watermark should not introduce abnormal patterns that an adversary can easily detect or localize. In other words, the watermarked parameters should remain statistically indistinguishable from normal training variations.

(4) Robustness: the watermark should remain verifiable on the transformed model $f_{T(\theta_w)}$ for any $T$ in a set $\mathcal{T}$ of common attacks or transformations, such as fine-tuning [32] and quantization attacks [33].

4. The Proposed Method

4.1. Method Overview

The overview of the proposed method is illustrated in

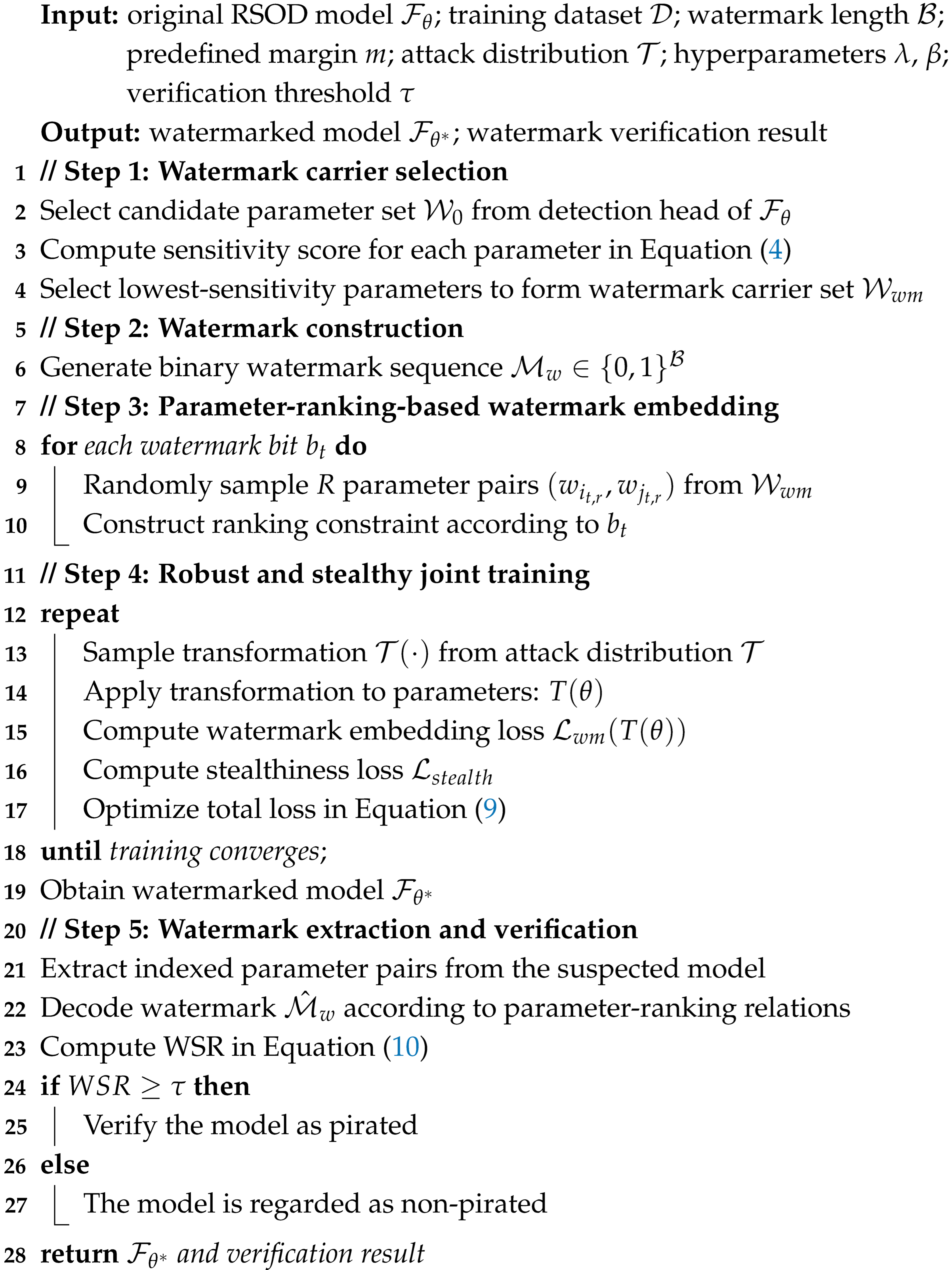

Figure 1 and Algorithm 1. The overall framework consists of three stages: watermark carrier analysis and selection, watermark embedding and optimization, and watermark extraction and verification. First, we analyze the sensitivity of model parameters to the detection task by computing gradients of the detection loss, and we adaptively select low-impact parameters as watermark carriers to preserve detection performance. Then, watermark bits are embedded using a parameter-ranking-based encoding mechanism that enforces relative magnitude relationships between selected parameter pairs. To improve robustness and stealthiness in practical scenarios, we further incorporate attack-simulation training during embedding and apply distribution constraints to reduce detectable statistical artifacts. Finally, the watermark is extracted using the same parameter-indexing rules, and ownership is verified by measuring the consistency between the extracted watermark and the original one.

Algorithm 1. Overall procedure of robust and stealthy watermark embedding and verification.

4.2. Sensitivity-Based Watermark Carrier Selection

In this section, we analyze and select the carrier parameters for watermark embedding. To avoid noticeable degradation of inference performance, the watermark carriers are preferentially selected from weights with relatively limited impact on detection performance. Different functional modules in RSOD models play distinct roles in feature modeling and prediction. Existing studies [34,35] have shown that feature extraction modules [36], such as the backbone, are primarily responsible for learning high-level semantic and spatial representations. As a result, perturbations to their parameters tend to cause larger changes in the overall feature distribution and detection performance. In contrast, the detection head [37] mainly performs task-specific mapping and prediction based on already extracted features. Detection accuracy is relatively insensitive to mild perturbations or localized adjustments of head parameters [38]. Therefore, to minimize the impact of watermark embedding on primary task performance, we select a subset of detection-head weights to construct the initial candidate parameter set $\mathcal{W}_c$.

Subsequently, we adopt a gradient-based sensitivity analysis to further filter the candidates. It quantifies how slight variations in each candidate parameter $w_j \in \mathcal{W}_c$ affect inference performance. The analysis characterizes the impact of parameter variations on the primary training objective, and thereby indirectly reflects each parameter's contribution to inference performance. Let $\mathcal{L}_{\text{det}}$ denote the primary training objective of the RSOD model. The sensitivity of $w_j$ is measured through the gradient $g_j = \partial \mathcal{L}_{\text{det}} / \partial w_j$. Only 10% of the training samples are used in this computation. The gradient $g_j$ is estimated statistically through forward and backward passes over several mini-batches. The sensitivity score of each parameter is the average squared gradient across mini-batches:

$$\gamma_j = \mathbb{E}_{\mathcal{B}}\left[\left(\frac{\partial \mathcal{L}_{\text{det}}(\mathcal{B})}{\partial w_j}\right)^{2}\right] \approx \frac{1}{K}\sum_{k=1}^{K}\left(\frac{\partial \mathcal{L}_{\text{det}}(\mathcal{B}_k)}{\partial w_j}\right)^{2},$$

where the expectation $\mathbb{E}_{\mathcal{B}}$ over mini-batches $\mathcal{B}$ is approximated by averaging the squared gradients over $K$ mini-batches $\mathcal{B}_1, \dots, \mathcal{B}_K$. A larger $\gamma_j$ indicates that small changes in $w_j$ lead to larger variations in the detection loss and inference performance. A smaller $\gamma_j$ implies that the parameter has a relatively limited impact on model performance.

After the sensitivity evaluation, the candidate parameters are ranked by their sensitivity scores. The lowest-sensitivity parameters, in a quantity sufficient for watermark embedding, form the final watermark carrier parameter set $\mathcal{W}$.
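The scoring and selection step can be sketched in plain Python as below. The sketch assumes the per-mini-batch gradients $\partial \mathcal{L}_{\text{det}}/\partial w_j$ for the candidate set have already been computed and are passed in as lists. In a real detector they would come from backward passes on the 10% subset. The names `sensitivity_scores` and `select_carriers` and the carrier count `k` are hypothetical.

```python
from typing import List, Sequence


def sensitivity_scores(batch_grads: Sequence[Sequence[float]]) -> List[float]:
    """Mean squared gradient per candidate parameter.

    batch_grads[k][j] holds dL_det/dw_j measured on mini-batch k
    (hypothetical input layout: one row per mini-batch).
    """
    num_batches = len(batch_grads)
    totals = [0.0] * len(batch_grads[0])
    for grads in batch_grads:
        for j, g in enumerate(grads):
            totals[j] += g * g  # accumulate squared gradient
    # Average over mini-batches approximates E_B[(dL_det/dw_j)^2].
    return [t / num_batches for t in totals]


def select_carriers(scores: Sequence[float], k: int) -> List[int]:
    """Indices of the k least sensitive parameters, lowest score first."""
    order = sorted(range(len(scores)), key=lambda j: scores[j])
    return order[:k]


if __name__ == "__main__":
    grads = [[0.5, -0.01, 0.2, 0.0],
             [0.4, 0.02, -0.3, 0.01]]
    scores = sensitivity_scores(grads)
    print(select_carriers(scores, 2))  # [3, 1]
```

Ranking by the mean of squared gradients, rather than the mean gradient, prevents positive and negative gradients from cancelling across mini-batches.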

4.3. Watermark Embedding and Optimization

In this section, we first construct the watermark information $m$ to be embedded. Then, $m$ is embedded into the carrier set $\mathcal{W}$ through a watermark embedding loss based on parameter-ranking relationships. Finally, we design an attack-simulation training strategy and a distribution-constrained stealthiness enhancement scheme to further strengthen robustness and stealthiness. Both are optimized jointly during watermark embedding.

4.3.1. Watermark Information Construction

To ensure the uniqueness and unpredictability of the watermark information, we adopt a watermark construction strategy consistent with existing studies [8,27]. Specifically, a fixed-length pseudorandom binary sequence is generated using the SHA-256 hash function [39] and used as the watermark information to be embedded. The generated watermark satisfies $m = (b_1, \dots, b_n) \in \{0, 1\}^{n}$, where $n$ denotes the length of the watermark in bits.
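The construction can be sketched with Python's standard `hashlib` module. The paper does not specify how the hash is seeded. The owner secret string below is therefore an assumption, as is the counter-based extension used to obtain more than 256 bits. `make_watermark` is a hypothetical helper.

```python
import hashlib
from typing import List


def make_watermark(secret: str, n_bits: int) -> List[int]:
    """Derive an n-bit watermark m in {0,1}^n from an owner secret via SHA-256.

    Assumption: digests of "secret|0", "secret|1", ... are concatenated
    until n_bits bits are available (counter-mode extension).
    """
    bits: List[int] = []
    counter = 0
    while len(bits) < n_bits:
        digest = hashlib.sha256(f"{secret}|{counter}".encode("utf-8")).digest()
        for byte in digest:
            for shift in range(7, -1, -1):  # most significant bit first
                bits.append((byte >> shift) & 1)
        counter += 1
    return bits[:n_bits]


if __name__ == "__main__":
    m = make_watermark("owner-id:example", 64)
    print(len(m), m[:16])
```

Because the bits are a deterministic function of the secret, the owner can regenerate $m$ at verification time without storing the bit string itself.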

4.3.2. Parameter-Ranking-Based Watermark Embedding

Unlike watermark embedding methods based on parameter projection or sign constraints, we propose a parameter-ranking-based embedding strategy. Watermark information is encoded by enforcing relative ordering relationships between pairs of model parameters. Specifically, for each watermark bit $b_t$ in $m$, we randomly sample $R$ pairs of parameters from $\mathcal{W}$, denoted as $\{(w_{p_{t,r}}, w_{q_{t,r}})\}_{r=1}^{R}$. Here $p_{t,r}$ and $q_{t,r}$ are two distinct indices randomly sampled from the parameter index set. Together they identify a pair of model parameters involved in the ranking constraint.

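To embed the watermark information into the carrier parameters, we define the following watermark embedding loss:

$$\mathcal{L}_{\text{wm}} = \frac{1}{nR}\sum_{t=1}^{n}\sum_{r=1}^{R}\log\Big(1 + \exp\big(\delta - s_t\,(w_{p_{t,r}} - w_{q_{t,r}})\big)\Big),$$

where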

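$\exp(\cdot)$ denotes the exponential function, $\delta > 0$ is the ranking margin, and the sign variable $s_t$ is determined by the watermark bit $b_t$ as

$$s_t = \begin{cases} +1, & b_t = 1, \\ -1, & b_t = 0. \end{cases}$$

Specifically, when $b_t = 1$, the parameter difference is encouraged to satisfy $w_{p_{t,r}} - w_{q_{t,r}} \geq \delta$. When $b_t = 0$, the desired constraint becomes $w_{p_{t,r}} - w_{q_{t,r}} \leq -\delta$.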

The watermark embedding loss $\mathcal{L}_{\text{wm}}$ is minimized jointly with the detection objective. This guides the model parameters to satisfy ranking constraints consistent with the embedded watermark bits while preserving the original detection performance, yielding stable and reliable watermark embedding.
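The pairwise ranking loss and its effect on a toy parameter vector can be sketched in plain Python. The sketch assumes the softplus margin form $\log(1+\exp(\delta - s_t d_{t,r}))$, averaged over all $nR$ pairs, with $d_{t,r} = w_{p_{t,r}} - w_{q_{t,r}}$. The helper names, the hand-derived gradient, and the toy values are hypothetical. In a real detector the loss would be added to the training objective and differentiated by the framework's autograd.

```python
import math
from typing import List, Sequence, Tuple

Pairs = Sequence[Sequence[Tuple[int, int]]]  # pairs[t] = R index pairs (p, q) for bit t


def softplus(z: float) -> float:
    # Numerically stable log(1 + exp(z)).
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))


def sigmoid(z: float) -> float:
    # Numerically stable 1 / (1 + exp(-z)); equals d softplus(z) / dz.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def sign_of(bit: int) -> int:
    return 1 if bit == 1 else -1  # s_t = +1 for b_t = 1, -1 for b_t = 0


def ranking_loss(w: Sequence[float], bits: Sequence[int], pairs: Pairs, margin: float) -> float:
    terms = [softplus(margin - sign_of(b) * (w[p] - w[q]))
             for b, bit_pairs in zip(bits, pairs) for p, q in bit_pairs]
    return sum(terms) / len(terms)


def gradient_step(w: Sequence[float], bits: Sequence[int], pairs: Pairs,
                  margin: float, lr: float) -> List[float]:
    """One plain gradient-descent step on the ranking loss alone."""
    grads = [0.0] * len(w)
    count = sum(len(bp) for bp in pairs)
    for b, bit_pairs in zip(bits, pairs):
        s = sign_of(b)
        for p, q in bit_pairs:
            g = sigmoid(margin - s * (w[p] - w[q])) / count
            grads[p] -= s * g  # dL/dw_p = -s * sigmoid(z) / (nR)
            grads[q] += s * g  # dL/dw_q = +s * sigmoid(z) / (nR)
    return [wi - lr * gi for wi, gi in zip(w, grads)]


if __name__ == "__main__":
    w = [0.0, 0.0, 0.0, 0.0]
    bits, pairs, margin = [1, 0], [[(0, 1)], [(2, 3)]], 0.1
    before = ranking_loss(w, bits, pairs, margin)
    for _ in range(100):
        w = gradient_step(w, bits, pairs, margin, lr=0.5)
    print(before, ranking_loss(w, bits, pairs, margin))
    print(w[0] - w[1] > margin, w[2] - w[3] < -margin)  # True True
```

Each pair only pushes its two parameters apart in the direction dictated by $s_t$. A bit therefore survives as long as the relative order of its pairs is preserved, regardless of the absolute parameter values.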

4.3.3. Attack-Simulation-Driven Robust Training

To enhance the robustness of the embedded watermark against perturbations that may occur during model distribution, we propose an attack-simulation-driven robust training strategy. Potential attack processes are explicitly incorporated into the training optimization. Specifically, we define a set of common parameter transformations $\mathcal{T}$ and an attack distribution $\mathcal{P}_{\mathcal{T}}$ over it, and randomly apply these transformations to the model parameters during training. In our work, $\mathcal{T}$ mainly includes quantization attacks $T_{\text{q}}$ and fine-tuning attacks $T_{\text{ft}}$.

During each training iteration, a transformation $T$ is first sampled from the attack distribution $\mathcal{P}_{\mathcal{T}}$. It is then applied to the current model parameters to generate a perturbed parameter set $\tilde{\theta} = T(\theta)$. The watermark embedding loss is evaluated on the transformed model, so the optimization explicitly accounts for the effects of parameter perturbations. In this way, the watermark constraints are encouraged to hold not only for the original parameters but also for their attacked versions.

Building on this, and inspired by Expectation over Transformation (EOT) [40], we formulate the watermark embedding objective as an expected robustness optimization problem under the attack distribution:

$$\mathcal{L}_{\text{rob}} = \mathbb{E}_{T \sim \mathcal{P}_{\mathcal{T}}}\left[\mathcal{L}_{\text{wm}}\big(T(\theta)\big)\right].$$

By explicitly incorporating attack simulation during training, the model is guided to preserve the extractability and verifiability of the embedded watermark even after undergoing various forms of parameter perturbations.
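A Monte Carlo estimate of this expectation can be sketched as follows. The sketch makes several assumptions. It uses a softplus margin form for $\mathcal{L}_{\text{wm}}$ and samples uniformly over three transforms. The first is symmetric per-tensor INT8 quantize-dequantize. The second is an FP16 round trip through the standard `struct` half-precision format `'e'`. The third adds small Gaussian noise as a cheap stand-in for fine-tuning; this is not equivalent to the paper's simulated fine-tuning, which actually retrains the model. All function names are hypothetical.

```python
import math
import random
import struct
from typing import Callable, List, Sequence, Tuple

Pairs = Sequence[Sequence[Tuple[int, int]]]


def int8_roundtrip(w: Sequence[float]) -> List[float]:
    # Symmetric per-tensor INT8 quantization followed by dequantization.
    max_abs = max(abs(x) for x in w)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    return [max(-127, min(127, round(x / scale))) * scale for x in w]


def fp16_roundtrip(w: Sequence[float]) -> List[float]:
    # Cast to IEEE half precision ('e' format) and back to a Python float.
    return [struct.unpack("<e", struct.pack("<e", x))[0] for x in w]


def make_noise(rng: random.Random, std: float) -> Callable[[Sequence[float]], List[float]]:
    # Crude stand-in for fine-tuning drift (assumption, not real fine-tuning).
    return lambda w: [x + rng.gauss(0.0, std) for x in w]


def softplus(z: float) -> float:
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))


def wm_loss(w: Sequence[float], bits: Sequence[int], pairs: Pairs, margin: float) -> float:
    terms = [softplus(margin - (1 if b == 1 else -1) * (w[p] - w[q]))
             for b, bit_pairs in zip(bits, pairs) for p, q in bit_pairs]
    return sum(terms) / len(terms)


def eot_loss(w: Sequence[float], bits: Sequence[int], pairs: Pairs, margin: float,
             num_samples: int = 32, seed: int = 0) -> float:
    rng = random.Random(seed)
    transforms = [int8_roundtrip, fp16_roundtrip, make_noise(rng, 0.01)]
    total = 0.0
    for _ in range(num_samples):
        t = rng.choice(transforms)  # T ~ P_T (uniform here, by assumption)
        total += wm_loss(t(w), bits, pairs, margin)
    return total / num_samples  # Monte Carlo estimate of E_T[L_wm(T(theta))]


if __name__ == "__main__":
    bits, pairs = [1, 0], [[(0, 1)], [(2, 3)]]
    print(eot_loss([1.0, -1.0, -1.0, 1.0], bits, pairs, 0.1))
    print(eot_loss([0.0, 0.0, 0.0, 0.0], bits, pairs, 0.1))
```

Parameter pairs that already satisfy the margin with room to spare keep a low loss under every sampled transform. Pairs sitting near zero difference are penalized. This is the behavior the expected objective is meant to reward.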

4.3.4. Distribution-Constrained Stealthiness Enhancement Strategy

In this section, to prevent the embedded watermark from being detected and subsequently removed through statistical analysis, we further introduce a distribution-constrained stealthiness regularization term during training to mitigate the impact of watermark embedding on the statistical properties of model parameters.

Specifically, the parameter distribution of the original, non-watermarked model serves as a reference, and the parameters of the watermarked model are constrained to remain statistically consistent with it. Let $\theta^{o}_{l}$ denote the parameters of the $l$-th layer in the original model, and let

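$\theta^{w}_{l}$ represent the corresponding parameters in the watermarked model. By matching the mean and variance of the parameter distributions at each layer, we construct a stealthiness loss defined as:

$$\mathcal{L}_{\text{st}} = \sum_{l \in \mathcal{S}} \left[\big(\mu(\theta^{w}_{l}) - \mu(\theta^{o}_{l})\big)^{2} + \big(\sigma(\theta^{w}_{l}) - \sigma(\theta^{o}_{l})\big)^{2}\right],$$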

where $\mu(\cdot)$ and $\sigma(\cdot)$ denote the mean and standard deviation of the parameter distribution, respectively, and $\mathcal{S}$ denotes the set of carrier parameter layers subject to the stealthiness constraint.
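The arithmetic of this regularizer can be sketched in plain Python. A PyTorch implementation would compute the same terms with tensor mean and standard deviation so that they remain differentiable. Three choices in the sketch are assumptions: the population standard deviation, an unweighted sum over layers, and squared gaps. Layer names such as `head.reg` are hypothetical.

```python
import statistics
from typing import Dict, Sequence


def stealth_loss(original: Dict[str, Sequence[float]],
                 watermarked: Dict[str, Sequence[float]],
                 layers: Sequence[str]) -> float:
    """Sum over layers in S of squared gaps in mean and (population) std."""
    loss = 0.0
    for name in layers:
        w_o, w_w = original[name], watermarked[name]
        loss += (statistics.fmean(w_w) - statistics.fmean(w_o)) ** 2
        loss += (statistics.pstdev(w_w) - statistics.pstdev(w_o)) ** 2
    return loss


if __name__ == "__main__":
    orig = {"head.reg": [0.10, -0.20, 0.05, 0.00]}
    wm = {"head.reg": [0.12, -0.22, 0.05, 0.00]}  # slightly widened distribution
    print(stealth_loss(orig, wm, ["head.reg"]))
```

Matching only the first two moments is cheap and keeps the histogram of a watermark-bearing layer close to that of the clean layer. It does not, by itself, constrain higher-order statistics.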

4.3.5. Total Training Loss Function

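By jointly considering watermark robustness and stealthiness constraints, the final training objective is formulated as:

$$\mathcal{L}_{\text{total}} = \mathcal{L}_{\text{det}} + \lambda_{1}\,\mathcal{L}_{\text{rob}} + \lambda_{2}\,\mathcal{L}_{\text{st}},$$

where $\mathcal{L}_{\text{det}}$ is the detection loss, $\mathcal{L}_{\text{rob}}$ is the expected watermark loss under simulated attacks (Section 4.3.3), and $\mathcal{L}_{\text{st}}$ is the stealthiness loss (Section 4.3.4). The hyperparameter $\lambda_{1}$ balances watermark robustness against detection performance. The hyperparameter $\lambda_{2}$ controls the weight of the stealthiness regularization term, trading off watermark embedding strength against parameter distribution consistency.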

Through joint optimization of the above objective, the model is able to embed watermarks that are both robust and stealthy while preserving the original detection performance.

4.4. Watermark Extraction and Verification

In this section, watermark extraction and verification are performed on a suspected model to determine whether it contains the embedded copyright watermark, thereby enabling reliable ownership verification. The overall verification procedure consists of two stages: watermark extraction and watermark verification.

Watermark extraction: The objective of the watermark extraction stage is to recover the potentially embedded watermark bit sequence from the suspected model. Candidate parameter pairs that may carry watermark information are extracted from the parameter set $\theta_s$ of the suspected model $f_{\theta_s}$. The extraction follows the same parameter-indexing rules used during embedding (Section 4.3.2). The extracted parameter pairs are denoted as $\{(w^{s}_{p_{t,r}}, w^{s}_{q_{t,r}})\}$, where the indices $(p_{t,r}, q_{t,r})$ are identical to those used during embedding. Accordingly, the watermark-related carrier parameter subset of $\theta_s$ can be expressed as $\mathcal{W}_s = \{w^{s}_{p_{t,r}}, w^{s}_{q_{t,r}} \mid t = 1, \dots, n;\ r = 1, \dots, R\}$.

The watermark information is then decoded using the same parameter-ranking-based encoding scheme. The parameter difference for the $r$-th pair of the $t$-th watermark bit is $d^{s}_{t,r} = w^{s}_{p_{t,r}} - w^{s}_{q_{t,r}}$. Based on the sign of this difference, a local decision bit is obtained as $\hat{b}_{t,r} = \mathbb{1}(d^{s}_{t,r} > 0)$, where $\mathbb{1}(\cdot)$ denotes the indicator function. Since each $b_t$ is embedded using $R$ parameter pairs, $R$ local decisions are obtained during decoding. These local decisions are aggregated by majority voting to produce the final decoded watermark bit: $\hat{b}_t = \mathbb{1}\big(\sum_{r=1}^{R}\hat{b}_{t,r} > R/2\big)$.

Finally, the decoded watermark sequence of $f_{\theta_s}$ is constructed as $\hat{m} = (\hat{b}_1, \dots, \hat{b}_n)$.
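The decoding rule can be sketched in plain Python. Each stored index pair votes according to the sign of its weight difference in the suspect model, and each bit is decided by majority vote. The text only says the local decisions are "aggregated", so majority voting, and decoding ties to 0 when $R$ is even, are assumptions. `decode_watermark` is a hypothetical name.

```python
from typing import List, Sequence, Tuple

Pairs = Sequence[Sequence[Tuple[int, int]]]  # pairs[t] = R index pairs (p, q) for bit t


def decode_watermark(w_suspect: Sequence[float], pairs: Pairs) -> List[int]:
    """Recover the decoded watermark from a suspect model's carrier weights."""
    decoded = []
    for bit_pairs in pairs:
        # Local decision: a pair votes 1 when w[p] - w[q] > 0.
        votes = sum(1 for p, q in bit_pairs if w_suspect[p] - w_suspect[q] > 0)
        # Majority vote over the R local decisions (ties decode to 0).
        decoded.append(1 if votes > len(bit_pairs) / 2 else 0)
    return decoded


if __name__ == "__main__":
    w = [0.3, 0.1, -0.2, 0.4, 0.05, 0.0]
    pairs = [[(0, 1), (3, 2), (4, 5)], [(1, 0), (2, 3), (5, 4)]]
    print(decode_watermark(w, pairs))  # [1, 0]
```

With $R > 1$ pairs per bit, a perturbation that flips a minority of pairs still leaves the decoded bit unchanged.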

Watermark verification: After $\hat{m}$ is obtained from $f_{\theta_s}$, it is compared with the original watermark $m$, which the copyright owner stores securely, to determine whether a valid watermark is present.

To quantitatively evaluate the success of watermark verification, we introduce the Watermark Success Rate (WSR), defined as:

$$\mathrm{WSR} = \frac{1}{n}\sum_{t=1}^{n}\mathbb{1}\big(\hat{b}_t = b_t\big) \times 100\%.$$

The WSR measures the bit-level consistency between the decoded and original watermarks and ranges from 0 to 100%. A higher WSR indicates a more reliable verification result, with WSR = 100% implying that the watermark is perfectly recovered.

In addition, in practical verification scenarios, use of the model after deployment may introduce certain perturbations. A small number of bit errors between the decoded and original watermarks should therefore be tolerated. For this reason, a decision threshold $\tau$ is introduced. If $\mathrm{WSR} \geq \tau$, $f_{\theta_s}$ is determined to be a pirated model; otherwise, it is regarded as a non-pirated model.
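The WSR computation and the threshold decision can be sketched as follows. The threshold value passed in is purely illustrative, and the helper names are hypothetical.

```python
from typing import Sequence


def wsr(decoded: Sequence[int], original: Sequence[int]) -> float:
    """Watermark Success Rate in percent: share of bit positions that match."""
    if len(decoded) != len(original):
        raise ValueError("watermark lengths differ")
    matches = sum(1 for a, b in zip(decoded, original) if a == b)
    return 100.0 * matches / len(original)


def is_pirated(decoded: Sequence[int], original: Sequence[int], tau: float) -> bool:
    """Ownership decision: the suspect model is flagged when WSR >= tau (percent)."""
    return wsr(decoded, original) >= tau


if __name__ == "__main__":
    m = [1, 0, 0, 1]
    m_hat = [1, 0, 1, 1]
    print(wsr(m_hat, m), is_pirated(m_hat, m, tau=75.0))  # 75.0 True
```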

5. Experiment and Result Analysis

5.1. Experimental Setup

Models and datasets: In this study, two representative object detection frameworks widely adopted in RSOD were used for experimental evaluation. The first is the two-stage detector Faster R-CNN [41], equipped with a ResNet50 backbone and the feature pyramid network (FPN) [35]. The second is the one-stage detector YOLOv5 [42], which employs CSPDarknet53 as its feature extraction network. Experiments were conducted on three benchmark RSOD datasets commonly used for performance assessment in remote sensing scenarios: NWPU VHR-10 [43], RSOD24 [44], and LEVIR [45].

Evaluation metrics: To quantitatively evaluate the effectiveness of the proposed approach, two evaluation criteria are adopted: mean Average Precision (mAP) and WSR. The mAP serves as an indicator of the model’s performance on the primary object detection task and is obtained by computing the mean of Average Precision (AP) values across all categories. AP provides a comprehensive assessment of detection performance by jointly considering precision and recall in object localization and classification. Higher AP values reflect superior detection capability, indicating improved accuracy and completeness in identifying target objects. The WSR is used to measure the accuracy of watermark extraction by evaluating the correspondence between the embedded and extracted watermark bits. A higher WSR indicates a greater degree of bit-level consistency, leading to more reliable copyright verification.
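For one class, precision and recall and the resulting AP can be sketched in plain Python. The exact AP protocol used in the experiments is not stated here; this includes the IoU threshold and whether VOC- or COCO-style interpolation is used. The sketch therefore uses all-point interpolation and assumes that true-positive flags were already assigned by an IoU-based matching step, which is not shown. The function names are hypothetical.

```python
from typing import List, Sequence, Tuple


def precision_recall(detections: Sequence[Tuple[float, bool]],
                     num_gt: int) -> Tuple[List[float], List[float]]:
    """Cumulative recall/precision for one class, ranked by confidence.

    detections: (confidence, is_true_positive) pairs; TP flags are assumed
    to come from a prior IoU-based matching step.
    """
    ranked = sorted(detections, key=lambda d: d[0], reverse=True)
    tp = fp = 0
    recalls: List[float] = []
    precisions: List[float] = []
    for _, is_tp in ranked:
        if is_tp:
            tp += 1
        else:
            fp += 1
        precisions.append(tp / (tp + fp))
        recalls.append(tp / num_gt)
    return recalls, precisions


def average_precision(recalls: Sequence[float], precisions: Sequence[float]) -> float:
    """All-point interpolated AP: area under the monotone precision envelope."""
    r = [0.0] + list(recalls) + [1.0]
    p = [0.0] + list(precisions) + [0.0]
    for i in range(len(p) - 2, -1, -1):
        p[i] = max(p[i], p[i + 1])  # make precision non-increasing in recall
    return sum((r[i] - r[i - 1]) * p[i] for i in range(1, len(r)))


def mean_ap(per_class_ap: Sequence[float]) -> float:
    return sum(per_class_ap) / len(per_class_ap)


if __name__ == "__main__":
    rec, prec = precision_recall([(0.9, True), (0.8, False), (0.7, True)], num_gt=2)
    print(average_precision(rec, prec))  # approximately 0.8333
```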

Training configuration and details: All experiments were conducted on a workstation running the Windows operating system, equipped with an Intel i7-14700KF CPU and an NVIDIA GeForce RTX 4090 GPU. The proposed watermark embedding framework was implemented using the PyTorch 2.9.0 deep learning library. During training, the model parameters were optimized following the standard training pipeline of the corresponding detection frameworks. In addition, the initial watermark carriers were selected from the localization branch of the detection head. Furthermore, the margin parameter used in the ranking-based watermark embedding constraint was set to .

For the attack-simulation-driven robust training strategy, the simulated fine-tuning attack was implemented by temporarily fine-tuning the model on the original training dataset for 150 epochs with a learning rate of 0.01. The simulated quantization attack was implemented by temporarily quantizing the model parameters to lower numerical precision (e.g., FP16 or INT8) and then dequantizing them back to floating-point representation before the forward pass.

Compared methods: For a thorough and fair evaluation, we compared against several representative state-of-the-art white-box watermarking methods: Chen [8], Uchida [27], Tyagi [46], RIGA [28], DeepIPR [23], and Zhang [24]. Notably, the methods in [23,24,27,28,46] were originally designed for image classification tasks. For a fair evaluation in the RSOD context, we reimplemented these approaches and adapted them to RSOD models.

5.2. Threshold Setting

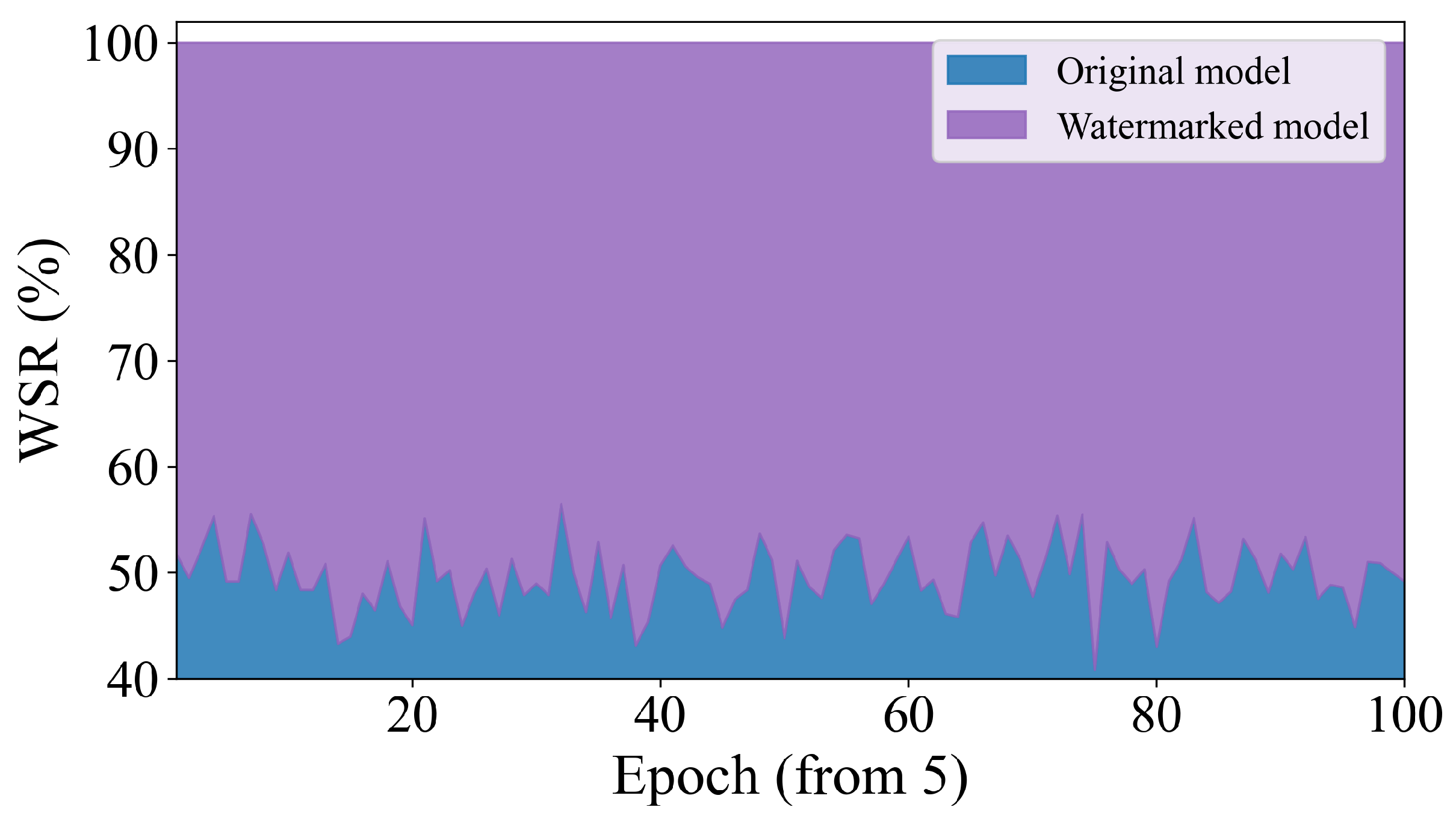

The threshold $\tau$ determines whether the extracted watermark can be reliably identified for copyright verification: a watermark is considered successfully detected if its WSR reaches $\tau$. To establish an appropriate value for $\tau$, we design a comparative experiment that analyzes watermark extraction behavior on both original and watermarked models.

Specifically, we apply the watermark extraction algorithm to the same indexed positions of the model weights, which serve as the designated watermark carrier as detailed in Section 4.2. No watermark is embedded in the original model, but the extraction procedure is still applied to the corresponding weights, and the resulting bit sequence is compared with the predefined watermark. For the watermarked model, the same procedure is applied to the embedded weights, and the extracted bits are compared with the embedded watermark to obtain the corresponding WSR. To ensure statistical reliability, the experiment is conducted over multiple checkpoints. Starting from the fifth training epoch, watermark extraction is performed 100 consecutive times on the original model, yielding 100 WSR measurements. The same procedure is applied to the watermarked model under identical conditions, also yielding 100 WSR values.

The experimental results are illustrated in Figure 2. The WSR values obtained from the original model fluctuate around 50%, which is consistent with the expected behavior of random bit matching. In contrast, the WSR values of the watermarked model consistently remain at 100%, demonstrating stable and accurate watermark extraction. This clear separation between the two distributions leaves a substantial margin for threshold selection. Based on these observations, we set the threshold $\tau$ in this work. This value effectively distinguishes watermarked models from original models, minimizing false positives while ensuring robust watermark verification.
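The roughly 50% WSR of unwatermarked models can be related to a simple model. Suppose each extracted bit of a non-watermarked model matches the owner's bit independently with probability 0.5. The number of matching bits then follows a Binomial($n$, 0.5) distribution, and the chance that such a model reaches a threshold $\tau$ is the binomial upper tail. The independence assumption, the helper name, and any $n$ or $\tau$ plugged in are illustrative; they are not the paper's settings.

```python
import math


def random_match_probability(n_bits: int, tau_percent: float) -> float:
    """P(WSR >= tau) when each of n_bits matches independently with prob 0.5."""
    k_min = math.ceil(n_bits * tau_percent / 100.0)  # fewest matching bits that pass
    tail = sum(math.comb(n_bits, k) for k in range(k_min, n_bits + 1))
    return tail / 2 ** n_bits


if __name__ == "__main__":
    for tau in (75.0, 90.0, 100.0):
        print(tau, random_match_probability(64, tau))
```

Under this model, even a moderately long watermark makes an accidental match above a high threshold vanishingly unlikely. This is consistent with the wide gap between the two WSR distributions.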

5.3. Fidelity Evaluation

The fidelity evaluation examines whether the proposed watermarking scheme preserves the original detection performance of RSOD models after watermark embedding. To this end, we compare the detection accuracy of the original models and their watermarked counterparts. The comparison uses two representative detectors, Faster R-CNN and YOLOv5, on three benchmark RSOD datasets: NWPU VHR-10, RSOD24, and LEVIR. The results in Table 1 show that watermark embedding has a negligible impact on detection performance across all model–dataset combinations. For Faster R-CNN, the mAP changes are −0.07, +0.08, and −0.05 percentage points on NWPU VHR-10, RSOD24, and LEVIR, respectively. For YOLOv5, the corresponding changes are −0.02, +0.03, and −0.04 percentage points. All fluctuations are within 0.1 percentage points, indicating that the proposed watermarking strategy does not meaningfully affect detection accuracy. In addition, Figure 3 visualizes the inference results of the original and watermarked models on the same input samples. The visualization provides qualitative evidence that watermark embedding does not alter detection behavior. Overall, the results demonstrate that the proposed method achieves high fidelity, embedding watermarks while maintaining the original performance of RSOD models.

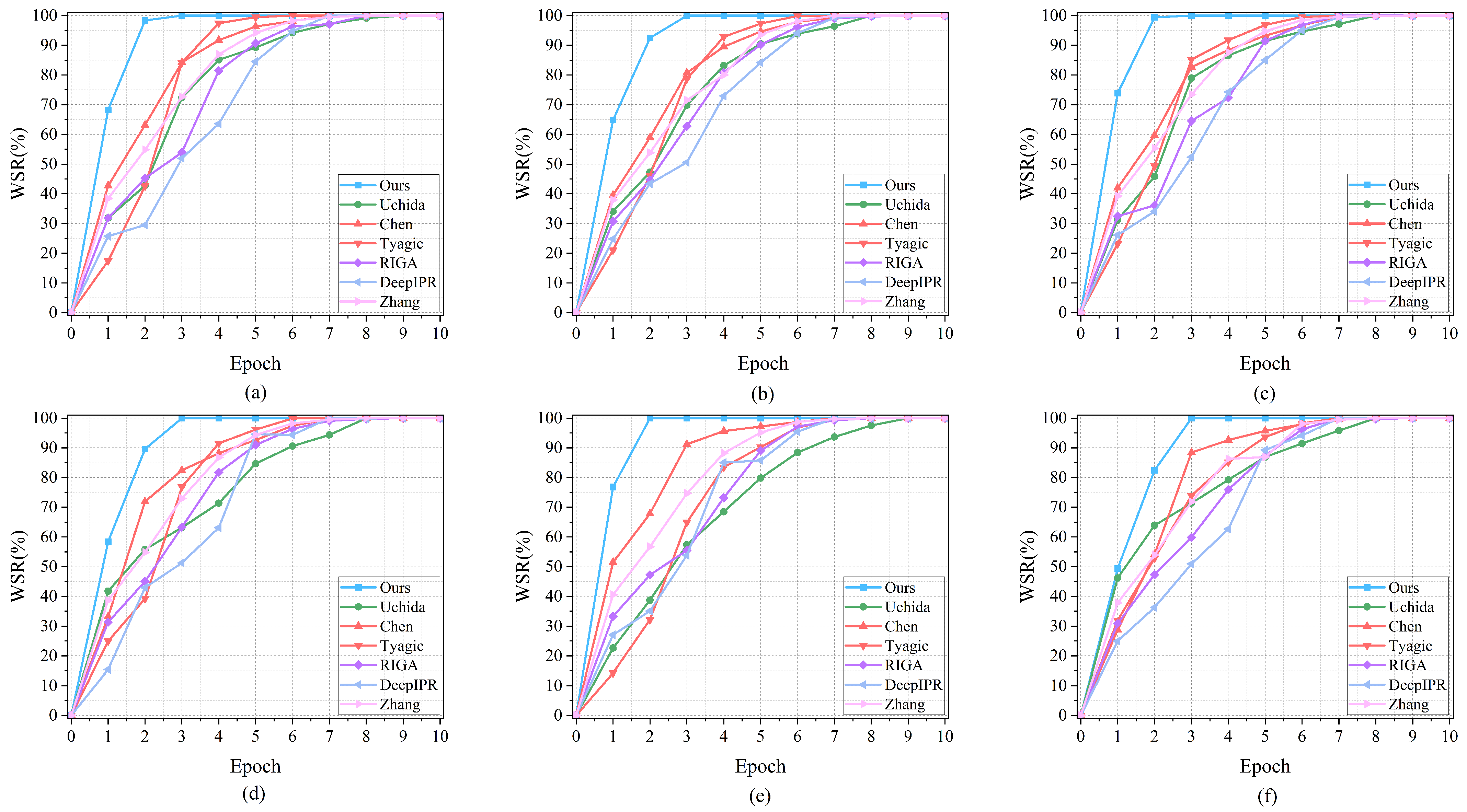

5.4. Effectiveness

In this section, we evaluate the effectiveness of the proposed watermarking method during training, with a particular focus on how quickly a reliable and verifiable watermark can be established. Comparative experiments are conducted on the Faster R-CNN and YOLOv5 detectors across three RSOD benchmark datasets, namely, NWPU VHR-10, RSOD24, and LEVIR. Six representative white-box watermarking methods are selected as baselines, and the evolution of the WSR over training epochs is analyzed. As shown in Figure 4, although all compared methods eventually achieve a WSR of 100%, the proposed method consistently converges faster, reaching and maintaining the maximum WSR in earlier training epochs. In contrast, the baseline methods require more training iterations to reach the same level of watermark detectability. Notably, the WSR of the proposed method exceeds the predefined threshold

at an earlier stage of training, so that ownership can be verified from earlier epochs onward. Establishing a reliable and verifiable watermark this early is particularly desirable in practical RSOD model protection and ownership verification scenarios.
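Reading the earliest verifiable epoch off a logged WSR curve is a small bookkeeping step. The helper below is a hypothetical sketch. It returns the first epoch from which the WSR stays at or above a threshold for the rest of the log, following the "reaching and maintaining" criterion described above. The example curves and the threshold are made up for illustration and are not the data behind Figure 4.

```python
from typing import Optional, Sequence


def first_verifiable_epoch(wsr_per_epoch: Sequence[float], threshold: float,
                           start_epoch: int = 1) -> Optional[int]:
    """First epoch from which the WSR stays >= threshold until the end of the log.

    Returns None if the curve never settles at or above the threshold.
    """
    first = None
    for offset, value in enumerate(wsr_per_epoch):
        if value >= threshold:
            if first is None:
                first = start_epoch + offset  # candidate start of a stable run
        else:
            first = None  # fell below the threshold again, so reset the candidate
    return first


if __name__ == "__main__":
    # Illustrative curves only (not taken from Figure 4); epochs are 1-indexed.
    fast = [0.55, 0.80, 0.97, 1.0, 1.0, 1.0]
    slow = [0.50, 0.60, 0.72, 0.91, 0.86, 1.0]
    tau = 0.9  # hypothetical threshold
    print(first_verifiable_epoch(fast, tau))  # 3
    print(first_verifiable_epoch(slow, tau))  # 6
```

The helper requires the WSR to remain above the threshold rather than merely touch it. Under this rule, a curve that briefly crosses and then dips, like `slow` at epoch 4, is not counted until it settles.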

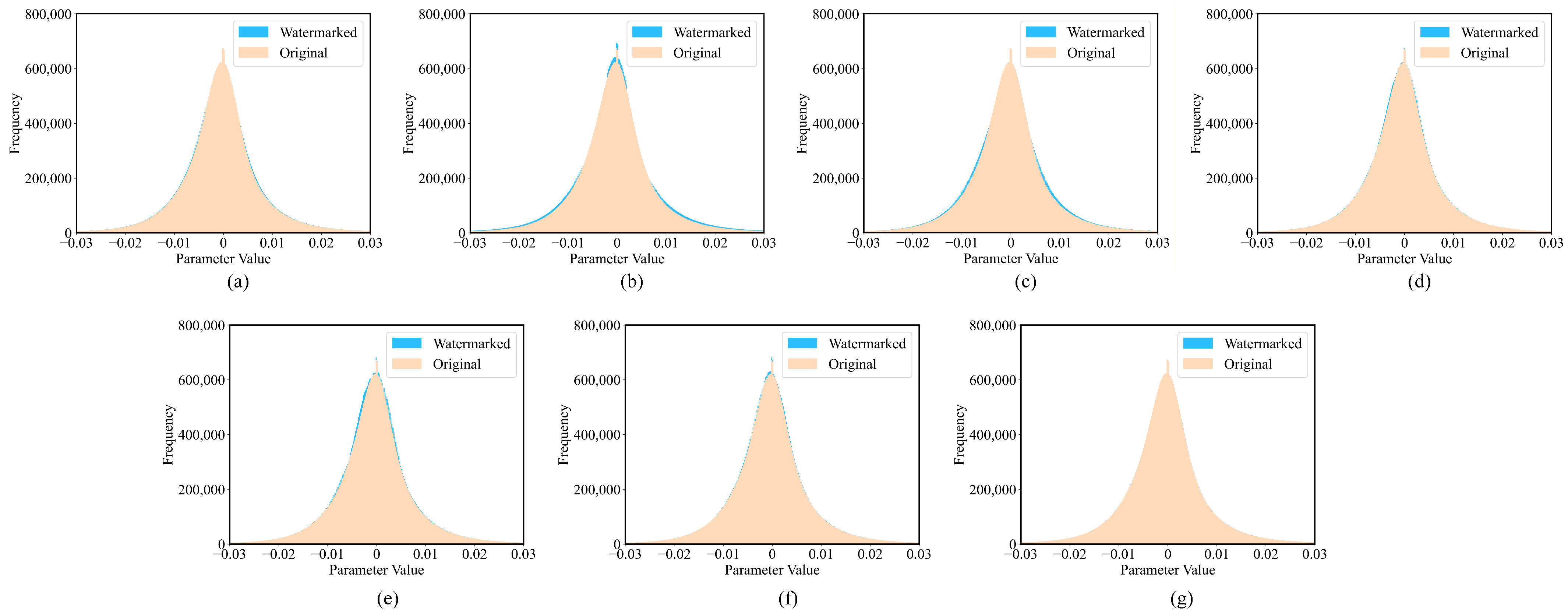

5.5. Stealthiness

In this subsection, we evaluate the stealthiness of the proposed watermarking method, i.e., whether watermark embedding introduces artifacts in the model parameters that could reveal the presence of the watermark. To this end, we compare the parameter value distributions of the watermark-bearing layers between each watermarked model and the corresponding original model. Specifically, for each method, we collect the weights from the layers used for watermark embedding and visualize their empirical distributions (frequency vs. parameter value). If watermark embedding produces abnormal parameter patterns, the distributions of the watermarked and original models are expected to diverge; otherwise, they should remain highly overlapped.
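The comparison above is made visually. One simple way to put a number on "highly overlapped" is the overlap coefficient of the two histograms, computed on a shared bin grid. This metric, the default bin count, and the synthetic weights in the example are our own assumptions for illustration. The evaluation in this section relies on the plotted distributions.

```python
import numpy as np


def histogram_overlap(original: np.ndarray, watermarked: np.ndarray, bins: int = 100) -> float:
    """Overlap coefficient in [0, 1] between two empirical weight distributions.

    Both weight sets are binned on the same grid; the result is the sum over bins of
    min(p, q), where p and q are the normalised bin frequencies. A value of 1.0 means
    the histograms coincide; values well below 1 indicate a visible distribution shift.
    """
    a = np.ravel(original).astype(np.float64)
    b = np.ravel(watermarked).astype(np.float64)
    lo = min(a.min(), b.min())
    hi = max(a.max(), b.max())
    if hi <= lo:  # all values identical: use a unit-width range so edges increase
        hi = lo + 1.0
    edges = np.linspace(lo, hi, bins + 1)
    p, _ = np.histogram(a, bins=edges)
    q, _ = np.histogram(b, bins=edges)
    p = p / p.sum()
    q = q / q.sum()
    return float(np.minimum(p, q).sum())


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    clean = rng.normal(0.0, 0.02, 10_000)                # stand-in for original layer weights
    subtle = clean + rng.normal(0.0, 0.001, clean.size)  # small, distribution-preserving change
    shifted = clean + 0.05                               # obvious distribution shift
    print(histogram_overlap(clean, subtle))   # close to 1
    print(histogram_overlap(clean, shifted))  # far below 1
```

A histogram-based score is sensitive to the bin count. A two-sample test such as Kolmogorov–Smirnov would be a bin-free alternative if a single statistic is preferred.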

The experimental results are shown in Figure 5, where Figure 5a–f correspond to Chen, Uchida, Tyagi, RIGA, DeepIPR, and Zhang, respectively, and Figure 5g corresponds to the proposed method. As illustrated, the baseline methods exhibit varying degrees of distribution discrepancy between the watermarked and original models, indicating that watermark embedding may alter the weight statistics and potentially leave detectable signatures. In contrast, our method shows a high degree of overlap between the two distributions, suggesting that the statistical characteristics of the watermark-bearing parameters are well preserved. Overall, these observations indicate that the proposed stealthiness enhancement strategy effectively maintains distributional consistency between the watermarked parameters and those of the original model, thereby reducing detectable watermark traces and improving the imperceptibility of watermark embedding.

5.6. Robustness

In this section, we evaluate the robustness of the proposed method against two common attack operations that may undermine ownership verification: fine-tuning and quantization.

5.6.1. Robustness Against Fine-Tuning

To evaluate robustness against fine-tuning attacks, we simulate a practical post-deployment scenario in which an adversary continues training a stolen (watermarked) RSOD model on the target task to adapt it to new data distributions or to intentionally weaken the embedded watermark. Specifically, we take the watermarked models produced by different methods and perform additional fine-tuning for 100 epochs while periodically extracting the watermark and recording the WSR throughout the fine-tuning process. Experiments are conducted on two representative detectors, Faster R-CNN and YOLOv5, across the considered RSOD datasets, and the WSR evolution during fine-tuning is summarized in Figure 6.

The results show that the proposed method consistently maintains a high WSR throughout the fine-tuning process, remaining stable at 100% across all settings, which indicates strong resistance to parameter variations introduced by continued optimization. In contrast, the baseline methods exhibit clear degradation trends: their WSR values gradually decrease as fine-tuning progresses, and the decline becomes more evident at later epochs, suggesting that their watermark representations are more sensitive to weight updates. Overall, these results demonstrate that the proposed watermarking strategy offers strong robustness against fine-tuning-based watermark removal, enabling reliable ownership verification even when the suspect model has undergone substantial post-training adaptation.
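The proposed encoding, as summarized in Section 6, enforces a margin-based relative ordering between paired parameters. The toy sketch below offers a rough intuition for why such an encoding can survive continued training. It embeds bits as pair orderings with a margin. It then perturbs all weights with a small Gaussian random walk as a crude stand-in for fine-tuning updates. Finally, it logs the WSR every 10 epochs over 100 epochs, loosely mirroring the protocol above. All of the following are assumptions: the read-out rule (bit 1 if `w[i] > w[j]`), the projection-based embedding, the margin, the drift magnitude, the logging interval, and the function names. Real fine-tuning updates are gradient-driven rather than random, and the paper embeds the watermark through training rather than by projection.

```python
from typing import Callable, List, Tuple

import numpy as np


def extract_bits(w: np.ndarray, pairs: np.ndarray) -> np.ndarray:
    """Assumed read-out rule: bit k is 1 if w[i_k] > w[j_k], else 0."""
    return (w[pairs[:, 0]] > w[pairs[:, 1]]).astype(np.int8)


def embed_by_projection(w: np.ndarray, pairs: np.ndarray, bits: np.ndarray,
                        margin: float) -> np.ndarray:
    """Shortcut stand-in for watermark training: move each pair apart symmetrically
    until its ordering encodes the bit with a gap of at least `margin`.
    Assumes every parameter index appears in at most one pair."""
    out = w.copy()
    i, j = pairs[:, 0], pairs[:, 1]
    sign = np.where(bits == 1, 1.0, -1.0)
    gap = sign * (out[i] - out[j])        # positive when the ordering already matches
    need = np.maximum(0.0, margin - gap)  # shortfall with respect to the margin
    out[i] += sign * need / 2
    out[j] -= sign * need / 2
    return out


def track_wsr(w: np.ndarray, pairs: np.ndarray, bits: np.ndarray,
              update_step: Callable[[np.ndarray], np.ndarray],
              epochs: int = 100, every: int = 10) -> List[Tuple[int, float]]:
    """Apply `update_step` once per epoch and log (epoch, WSR) every `every` epochs."""
    log = []
    for epoch in range(1, epochs + 1):
        w = update_step(w)
        if epoch % every == 0:
            log.append((epoch, float(np.mean(extract_bits(w, pairs) == bits))))
    return log


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    n_bits = 128
    w0 = rng.normal(0.0, 0.02, 10_000)                       # synthetic layer weights
    pairs = rng.permutation(w0.size)[: 2 * n_bits].reshape(n_bits, 2)
    bits = rng.integers(0, 2, n_bits).astype(np.int8)
    w_wm = embed_by_projection(w0, pairs, bits, margin=0.02)  # illustrative margin

    def drift(w: np.ndarray) -> np.ndarray:
        # Crude stand-in for one epoch of fine-tuning: independent Gaussian drift.
        return w + rng.normal(0.0, 1e-4, w.size)

    for epoch, value in track_wsr(w_wm, pairs, bits, drift):
        print(f"epoch {epoch:3d}  WSR = {value:.3f}")
```

In this toy run, every logged WSR stays at 1.000. This is because the accumulated drift (a standard deviation of about 1e-3 per weight after 100 epochs) is far smaller than the 0.02 margin. Raising the per-epoch drift until it is comparable to the margin makes pair orderings start to flip, and the WSR drops.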

5.6.2. Robustness Against Quantization

To evaluate robustness against quantization attacks, we apply post-training quantization to the watermarked RSOD models and examine whether the embedded watermark remains verifiable under reduced numerical precision. Specifically, three precision settings are considered: fp32 (full precision), fp16, and int8. Experiments are conducted on two representative detectors (Faster R-CNN and YOLOv5) and two RSOD benchmark datasets (NWPU VHR-10 and RSOD24). For each method, both mAP and WSR are reported after quantization, as summarized in Table 2. Overall, quantization causes only minor fluctuations in mAP for all methods, indicating that detection accuracy is largely preserved across different precision levels. However, watermark verification performance differs clearly across methods: the baseline methods generally suffer noticeable WSR degradation under fp16 and int8 quantization, suggesting that their watermark representations are sensitive to precision reduction. In contrast, the proposed method consistently maintains WSR = 100% across all tested detectors, datasets, and quantization settings. These results demonstrate that the proposed watermarking scheme is highly robust against quantization-induced numerical perturbations, enabling reliable ownership verification in practical deployment scenarios involving model compression.

5.7. Ablation Study on Watermark Length

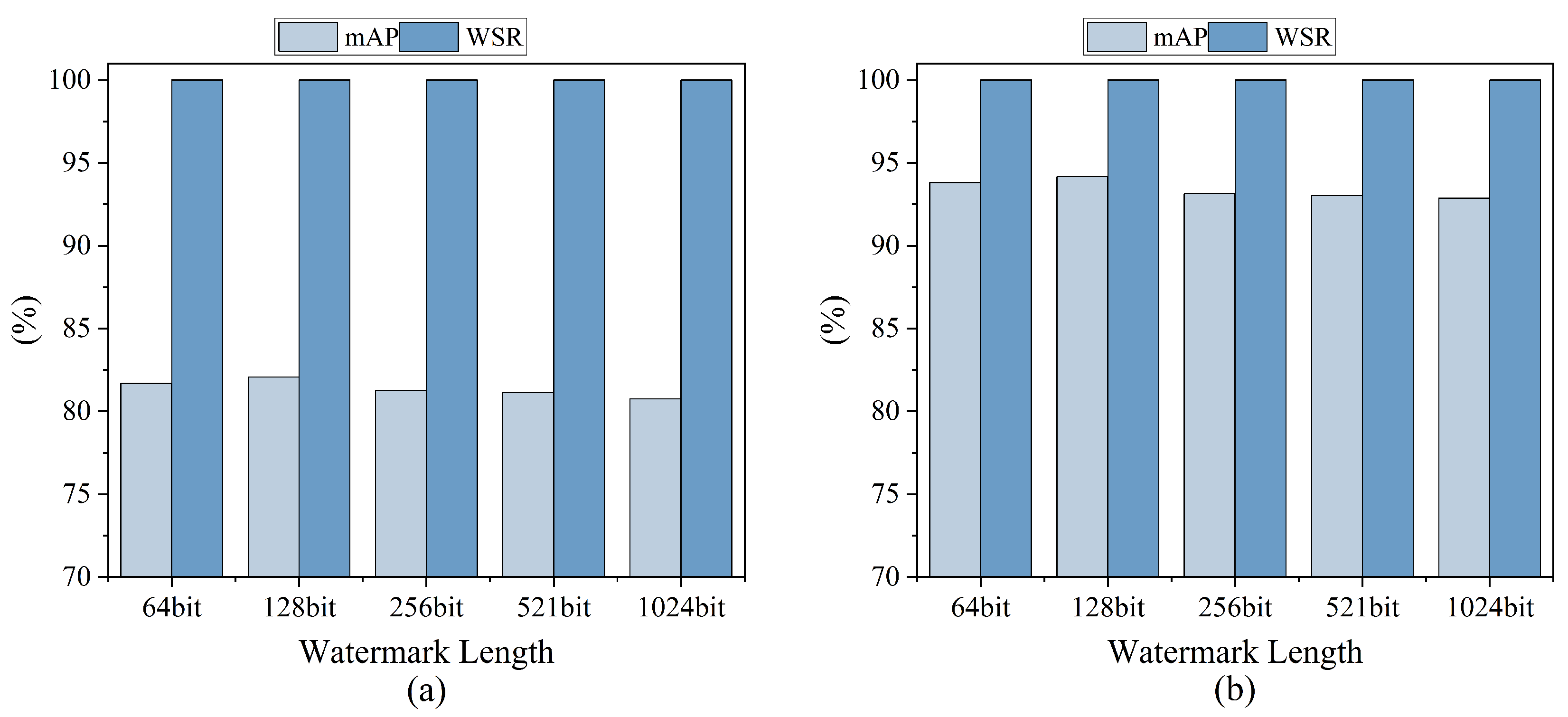

To investigate the influence of watermark length on both detection performance and watermark verifiability, we conduct an ablation study on the NWPU VHR-10 dataset using two representative detectors, Faster R-CNN and YOLOv5, while keeping all other training and embedding settings unchanged. Specifically, the watermark length is set to 64, 128, 256, 512, and 1024 bits, and for each configuration we report the mAP together with the WSR, as shown in Figure 7. The results indicate that the proposed method consistently achieves WSR = 100% across all tested watermark lengths for both detectors, demonstrating that watermark verification remains reliable even as the watermark capacity increases. Meanwhile, the mAP values exhibit only minor fluctuations under different watermark lengths, suggesting that the proposed embedding scheme maintains high fidelity. Notably, the highest detection accuracy is obtained when the watermark length is set to 128 bits for both Faster R-CNN and YOLOv5. Therefore, we adopt 128-bit watermarks as the default setting in this paper, as this length provides the best trade-off between detection performance and watermark capacity while preserving perfect watermark verification.

6. Conclusions and Future Works

In this work, we proposed a robust and stealthy white-box watermarking framework for protecting the IP of RSOD models. By analyzing parameter sensitivity through gradient information, the proposed method adaptively selects watermark carriers that have minimal impact on the primary detection task. A margin-based parameter-ranking mechanism is then employed to encode watermark information by enforcing stable relative ordering relationships between paired parameters. To further enhance robustness under practical deployment conditions, an attack-simulation training strategy is introduced to improve resistance to common perturbations, while a distribution-alignment regularization term is incorporated to preserve the statistical characteristics of the original model and enhance watermark stealthiness. Extensive experimental results on multiple detectors and RSOD benchmark datasets demonstrate that the proposed method consistently achieves a WSR of 100% under the evaluation settings while introducing negligible degradation in detection performance and maintaining strong robustness and imperceptibility.
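The sketch below makes two of the components summarized above more concrete. The first is gradient-based carrier selection, which takes the parameters with the smallest mean absolute gradient as a proxy for low task sensitivity. The second is a hinge-style ranking loss that pushes each pair apart by at least a margin in the direction of its bit. It is a NumPy toy under stated assumptions. The recorded gradients are synthetic, and the watermark loss is optimised alone by plain gradient descent. The task loss, the attack-simulation training, and the distribution-alignment term are omitted. All function names are hypothetical. It illustrates the general technique, not the authors' exact formulation.

```python
import numpy as np


def select_carriers(grad_history: np.ndarray, n_bits: int) -> np.ndarray:
    """Pick the 2*n_bits least gradient-sensitive parameters and pair them up.

    grad_history has shape (steps, n_params); a low mean |gradient| is used as an
    assumed proxy for low impact on the detection task.
    """
    sensitivity = np.mean(np.abs(grad_history), axis=0)
    chosen = np.argsort(sensitivity, kind="stable")[: 2 * n_bits]
    return chosen.reshape(n_bits, 2)


def ranking_hinge_loss(w: np.ndarray, pairs: np.ndarray, bits: np.ndarray, margin: float):
    """Loss sum_k max(0, margin - s_k * (w[i_k] - w[j_k])), s_k = +1 for bit 1 and -1
    for bit 0, together with its (sub)gradient with respect to w."""
    i, j = pairs[:, 0], pairs[:, 1]
    s = np.where(bits == 1, 1.0, -1.0)
    slack = margin - s * (w[i] - w[j])
    active = (slack > 0).astype(w.dtype)  # only pairs violating the margin contribute
    loss = float(np.sum(slack * active))
    grad = np.zeros_like(w)
    np.add.at(grad, i, -s * active)
    np.add.at(grad, j, s * active)
    return loss, grad


def extract_bits(w: np.ndarray, pairs: np.ndarray) -> np.ndarray:
    """Assumed read-out rule: bit k is 1 if w[i_k] > w[j_k], else 0."""
    return (w[pairs[:, 0]] > w[pairs[:, 1]]).astype(np.int8)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    n_params, n_bits, margin, lr = 5_000, 64, 0.01, 1e-3
    # Synthetic gradient log: each parameter gets its own sensitivity scale.
    grads = rng.normal(0.0, 1.0, (20, n_params)) * rng.uniform(0.1, 1.0, n_params)
    w = rng.normal(0.0, 0.02, n_params)
    pairs = select_carriers(grads, n_bits)
    bits = rng.integers(0, 2, n_bits).astype(np.int8)

    for step in range(500):
        loss, g = ranking_hinge_loss(w, pairs, bits, margin)
        if loss == 0.0:  # every pair now satisfies the margin
            break
        w -= lr * g
    print("steps:", step, "WSR:", np.mean(extract_bits(w, pairs) == bits))
```

Once every pair's gap is at least the margin, the hinge loss is exactly zero and the read-out matches all bits. In a full training setup, this term would be added to the detection loss with a weighting factor rather than optimised alone.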

Future work will focus on improving the generality and security of the proposed framework. In particular, we will investigate its transferability and extend it to other vision tasks (e.g., semantic segmentation and change detection). Moreover, we will evaluate the method under more stringent threat models, including cross-architecture transfer and adaptive removal attacks, to further strengthen robustness in realistic adversarial scenarios. In addition, we will explore more advanced stealthiness evaluation strategies, such as classifier-based detection approaches and adversarial detection scenarios, to further examine whether the embedded watermark can be detected by sophisticated statistical or learning-based detectors. These directions are expected to advance the development of reliable and practically deployable model watermarking techniques.