3.1. Feature Extraction

The DINOv3 ViT-B/16 distilled model is used as an image encoder for feature extraction. DINOv3, a self-supervised vision transformer, is pretrained on large-scale datasets to learn discriminative representations without explicit labels, making it particularly suitable for remote sensing images where annotated data may be limited. The graphical representation of this model is illustrated in Figure 1.

DINOv3 is trained in a student-teacher framework. Two distinct augmented views of a single image are generated and passed to two encoders, the student and the teacher. Each encoder splits its input into non-overlapping patches of 16 × 16 pixels and prepends a special learnable class token (CLS); for a 224 × 224 input, this results in a sequence of 196 patch tokens plus one class token, yielding a total sequence length of 197. Each token is embedded in a 768-dimensional vector space. The ViT-B configuration consists of 12 transformer encoder layers, with 12 attention heads per layer, an embedding dimension of 768, and a feed-forward network (FFN) inner dimension of 3072. This setup enables the extraction of hierarchical features that capture both local textures (e.g., building structures) and global contexts (e.g., land use patterns) in remote sensing scenes using pretrained DINOv3.

During pretraining, the encoder outputs are fed into a projection head to obtain logits, and the SoftMax function is applied to produce probability distributions. The student is trained to match the prediction of the teacher by minimizing the cross-entropy between the two distributions, with a stop-gradient on the teacher so that no gradient flows into it. Only the parameters of the student are updated by gradient descent, while the teacher provides a stable target. This approach is known as knowledge distillation. Its usual purpose is to transfer knowledge from a powerful source (the teacher) to a smaller model (the student), making inference more efficient. Here, however, the student and the teacher share the same architecture, and the model must be trained without labels, which is a challenge in itself.
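The token count quoted above follows directly from the patch size. The short sketch below reproduces the arithmetic, assuming a 224 × 224 input and ignoring any additional register tokens; the function name `vit_sequence_length` is hypothetical and used only for illustration.

```python
def vit_sequence_length(image_size, patch_size):
    """Return (number of patch tokens, sequence length including the CLS token)."""
    if image_size % patch_size != 0:
        raise ValueError("image_size must be divisible by patch_size")
    patches_per_side = image_size // patch_size
    num_patches = patches_per_side ** 2
    # One extra position for the learnable class token (assumption: no register tokens).
    return num_patches, num_patches + 1


num_patches, seq_len = vit_sequence_length(image_size=224, patch_size=16)
print(num_patches, seq_len)
```

With a patch size of 16, each side of a 224-pixel image yields 14 patches, so the encoder sees 14 × 14 patch tokens plus the class token.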

To overcome this issue, the output dimension of the projection head is set to a large number, i.e., 65,000. This enables the model to discover and represent a wide range of visual concepts without requiring them to be defined in advance. Moreover, whereas conventional knowledge distillation relies on a teacher that has already been trained, here the teacher is built from the student itself: its weights are updated as an exponential moving average (EMA) of the student's weights over time. As a result, the teacher changes gradually, providing a stable and consistent target for the student to learn from. After each training step, the parameters of the teacher model are updated using the formula:

$$\theta_t \leftarrow \lambda\,\theta_t + (1-\lambda)\,\theta_s$$

where $\theta_t$ and $\theta_s$ denote the teacher and student parameters, respectively. The value $\lambda$ controls the update rate of the teacher model; a larger value means slower updates. Initially, $\lambda$ is set slightly below 1, and it gradually increases over the course of training.

This approach alone is insufficient for problems like remote sensing images, where images exhibit different categories of features. With only this method, a single dimension could come to dominate the output distribution, leading to uninformative, collapsed representations. To overcome this, centering is introduced. It prevents collapse by keeping the average output of the teacher model balanced across all output dimensions. Instead of computing the center vector for each batch independently, a moving average of the center is maintained over time, using the equation:

$$c \leftarrow m\,c + (1-m)\,\frac{1}{B}\sum_{i=1}^{B} g_{\theta_t}(x_i)$$

where $c$ is the center vector, $m$ is the momentum of the moving average, $B$ is the batch size, and $g_{\theta_t}(x_i)$ is the teacher output for the $i$-th image in the batch. The center is subtracted from the teacher logits before the SoftMax function is applied.

With centering alone, however, the output can instead collapse to a uniform distribution. To address this, sharpening of the distribution is enforced by lowering the temperature parameter in the SoftMax function:

$$P(x)^{(i)} = \frac{\exp\left(g_{\theta}(x)^{(i)}/T\right)}{\sum_{k=1}^{K}\exp\left(g_{\theta}(x)^{(k)}/T\right)}$$

where $P$ is the probability distribution, $T$ is the temperature, $g_{\theta}(x)^{(i)}$ is the $i$-th logit, and $K$ is the output dimension. As the temperature $T$ is lowered, the probability distribution produced by the SoftMax function becomes more peaked, concentrating more mass on the largest logit. Conversely, as the temperature is increased, the output probabilities become less sharp and are more evenly distributed across all classes. The temperature of the student model is fixed at 0.1, whereas the temperature of the teacher model starts at a lower value and is increased linearly during training, while remaining below that of the student. Due to this difference in temperature, the probability distribution of the teacher model is sharper than that of the student model. This makes the prediction of the teacher model more confident, with a higher probability mass on the most likely class, and gives a strong signal to the student model, encouraging it to match the confident prediction of the teacher model.
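The three stabilizing mechanisms described above (the EMA teacher, centering, and temperature sharpening) can be sketched in a few lines of plain Python. This is a minimal illustration on toy lists rather than the actual DINOv3 training code; the function names, the teacher temperature of 0.04, and the momentum values are assumptions chosen for the example, while the student temperature of 0.1 is taken from the text.

```python
import math


def softmax(logits, temperature):
    """Temperature-scaled softmax; a lower temperature gives a sharper output."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def ema_update(teacher, student, lam):
    """theta_t <- lam * theta_t + (1 - lam) * theta_s, applied element-wise."""
    return [lam * t + (1.0 - lam) * s for t, s in zip(teacher, student)]


def update_center(center, teacher_logits_batch, momentum):
    """c <- m * c + (1 - m) * mean of the teacher outputs over the batch."""
    batch_size = len(teacher_logits_batch)
    batch_mean = [sum(col) / batch_size for col in zip(*teacher_logits_batch)]
    return [momentum * c + (1.0 - momentum) * b for c, b in zip(center, batch_mean)]


def teacher_probs(logits, center, temperature):
    """Center the teacher logits, then sharpen them with a low temperature."""
    return softmax([z - c for z, c in zip(logits, center)], temperature)


def cross_entropy(p_teacher, p_student):
    """Loss minimized with respect to the student only (teacher is a fixed target)."""
    return -sum(pt * math.log(ps) for pt, ps in zip(p_teacher, p_student))


logits = [2.0, 1.0, 0.5]                 # toy projection-head output
center = [0.0, 0.0, 0.0]
p_teacher = teacher_probs(logits, center, temperature=0.04)  # illustrative value
p_student = softmax(logits, temperature=0.1)                 # value from the text
print(max(p_teacher) > max(p_student))
print(cross_entropy(p_teacher, p_student))
```

Because the teacher uses the lower temperature, its distribution places more mass on the largest logit than the student's distribution does for the same input.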

Instead of using only two views, multiple cropped views of the image are created. Both global and local views are generated; the teacher model observes only global views, while the student model receives both local and global views as input. By using this strategy, the model learns to associate detailed information from small local patches with the broader context provided by the global views. As a result, the model becomes better at recognizing objects and patterns even when only a small portion of the image is visible. This method is known as self-distillation with no labels (DINO).
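The multi-crop strategy determines which (teacher view, student view) pairs contribute to the loss. The sketch below enumerates those pairs under two assumptions that are not stated in the text: pairs in which both networks see the same view are skipped, as in the original DINO formulation, and the counts of two global and eight local crops are illustrative. The function name `distillation_pairs` is hypothetical.

```python
def distillation_pairs(num_global, num_local):
    """List (teacher_view, student_view) index pairs used in the loss.

    Views 0 .. num_global - 1 are global crops; the remaining ones are local crops.
    """
    num_views = num_global + num_local
    return [
        (t, s)
        for t in range(num_global)   # the teacher sees global views only
        for s in range(num_views)    # the student sees every view
        if s != t                    # assumption: skip identical-view pairs
    ]


pairs = distillation_pairs(num_global=2, num_local=8)
print(len(pairs))
```

The cross-entropy between teacher and student outputs would then be averaged over these pairs.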

DINOv3 introduces a special technique, called Gram anchoring, to improve the quality of dense visual features. Over long training runs, patch features become less focused and the similarity maps become noisier, so the model tends to make worse predictions on dense tasks. To address this issue, DINOv3 uses an earlier version of the teacher model to regularize correlations across all patch pairs. Cosine similarities are computed between all patch token embeddings, forming what is called the Gram matrix, and an additional loss function encourages the Gram matrix of the student model to closely match that of this earlier teacher. This regularization preserves the spatial relationships between local patches while still allowing the student features to be refined during training.
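A Gram-matrix loss of this kind can be illustrated with a small pure-Python sketch. It builds the matrix of pairwise cosine similarities for two sets of patch embeddings and penalizes their squared difference. The averaging over matrix entries and the function names are assumptions made for this example and may differ from the exact normalization used in DINOv3.

```python
import math


def l2_normalize(vector):
    """Scale a vector to unit length (zero vectors are left unchanged)."""
    norm = math.sqrt(sum(x * x for x in vector)) or 1.0
    return [x / norm for x in vector]


def gram_matrix(patch_features):
    """Pairwise cosine similarities between patch embeddings."""
    unit = [l2_normalize(v) for v in patch_features]
    return [[sum(a * b for a, b in zip(u, w)) for w in unit] for u in unit]


def gram_loss(student_patches, teacher_patches):
    """Mean squared difference between the student and teacher Gram matrices."""
    gram_s = gram_matrix(student_patches)
    gram_t = gram_matrix(teacher_patches)
    n = len(gram_s)
    return sum(
        (gram_s[i][j] - gram_t[i][j]) ** 2 for i in range(n) for j in range(n)
    ) / (n * n)


teacher = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]   # three toy patch embeddings
student = [[1.0, 0.2], [0.1, 1.0], [1.0, 0.8]]
print(gram_loss(student, teacher))
```

Because the loss acts on similarities between patches rather than on the features themselves, the student features can still change as long as their pairwise structure is preserved.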

The main parameters used in DINOv3 for feature extraction are presented in Table 1, whereas the architecture of DINOv3 is illustrated in Figure 2. During training of the captioning model, the DINOv3 backbone served as a fixed feature extractor, with its parameters frozen. This strategy preserves the robustness of the self-supervised visual representations while ensuring stable optimization given the limited size of remote sensing captioning datasets.

3.2. Feature Aggregation and Caption Generation

Although DINOv3 captures global relationships through attention, its patch embeddings remain highly redundant and do not explicitly model local spatial continuity across neighboring patches. Remote sensing images often contain structured, quasi-linear patterns, such as road segments, river flows, coastlines, and agricultural boundaries, that benefit from a lightweight inductive bias favoring smooth transitions across neighboring patches. To introduce such structural guidance, a small LSTM aggregation module is incorporated.

The LSTM processes the patch tokens in a fixed spatial order (a flattened raster sequence), enabling it to aggregate locally coherent features while remaining compatible with transformer-based processing. Rather than treating patches as a true temporal sequence, the LSTM acts as a contextual smoother and a redundancy reducer, producing refined features that are better aligned with the decoder’s cross-attention. This improves the coherence of generated captions—particularly for spatially continuous objects—without significantly increasing the model complexity.

The aggregation input consists of the batch of patch tokens from the DINOv3 encoder, shaped as [Batch Size, 197, 768]. An LSTM cell with input and hidden dimensions of 768 processes each token sequentially. At each step t, the hidden state is updated based on the current token and previous states, forming the aggregated feature for that position. The output is a refined feature map of shape [Batch Size, 197, 768], which serves as the key and value inputs to the decoder’s cross-attention layers. This LSTM-based fusion enhances the model’s ability to handle the sequential nature of spatial features in remote sensing images, such as linear arrangements in roads or rivers, thereby improving the coherence of generated captions.

The aggregated features are fed into a transformer decoder to generate natural-language captions in an auto-regressive manner. The decoder follows a standard architecture with three layers, each comprising masked multi-head self-attention, multi-head cross-attention, and a feed-forward network. The word embedding and decoder dimensions are both set to 512, with eight attention heads and a query/key/value dimension of 64 (computed as 512/8). The FFN inner dimension is set to 768 to align with the encoder’s output.

Input caption tokens (from ground-truth captions during training or previously generated tokens during inference) are embedded into 512-dimensional vectors and augmented with positional encoding. Masked self-attention ensures causality by allowing the model to attend only to preceding tokens. Cross-attention uses queries from the self-attention output (512-d) and keys/values from the aggregated visual features (768-d), enabling effective alignment between textual descriptions and visual elements. The final output of the decoder is projected through a linear layer of dimension (512, V), where V denotes the caption vocabulary size derived from the datasets. During training, cross-entropy loss with teacher forcing is employed, while during inference, beam search is used for caption generation. The overall process, from feature extraction to caption generation, is illustrated in Figure 3.

The integration of DINOv3 with the Transformer fusion module is expected to provide three main improvements:

Enhanced spatial discriminability through patch-level self-supervised features.

Improved semantic consistency due to domain-agnostic representation learning.

Stronger global–local context modeling via Transformer-based attention.

Although pure Transformer decoders have demonstrated strong performance in large-scale vision-language tasks, they often require extensive labeled data to avoid overfitting. In remote sensing captioning, where datasets are relatively small, we adopt a hybrid Transformer–LSTM decoder. The Transformer component captures rich global visual context, while the LSTM enforces sequential linguistic consistency during caption generation. This combination promotes stable training and semantically coherent captions by leveraging the strengths of both architectures.

Although the proposed framework follows a standard encoder–decoder paradigm, it is distinguished by its use of DINOv3, a label-free, self-distilled Vision Transformer that learns semantically rich and domain-agnostic representations well suited to remote sensing imagery. Unlike CLIP or supervised ViT backbones, DINOv3 does not rely on image-to-text alignment or large-scale labeled natural image datasets, mitigating domain shift and annotation scarcity. Furthermore, architectural advances in DINOv3, such as Gram anchoring and register tokens, enhance spatial coherence and the stability of dense features, which are critical for describing fine-grained remote sensing structures. Finally, the hybrid Transformer–LSTM decoder is designed to leverage the structured patch embeddings produced by DINOv3, promoting spatial continuity and improving semantic alignment in the generated captions.

3.3. Experimentation

3.3.1. Dataset Details

To evaluate the proposed remote sensing image captioning framework across both standard scene captioning benchmarks and complex multi-modal geospatial datasets, experiments were conducted on five public datasets. These datasets are organized into two categories based on their annotation style and application focus.

Category I Standard Remote Sensing Datasets: Two widely used remote sensing datasets, namely UCM-Captions and RSICD, were employed in this study. The UCM-Captions dataset is relatively small, whereas RSICD is the largest in this domain. RSICD contains 10,921 remote sensing images covering 30 common scene categories, such as airports, mountains, and farmland. Each image has a resolution of 224 × 224 pixels and is annotated with five descriptive sentences. In total, the dataset includes 54,605 sentences, of which 24,333 are unique.

Figure 4 presents sample images from both datasets along with their corresponding descriptions. Following the standard data split protocol used in prior work, 8000/1000/1921 images were used for training, validation, and testing on RSICD, respectively, and 1680/210/210 for UCM-Captions, ensuring direct comparability with published results.

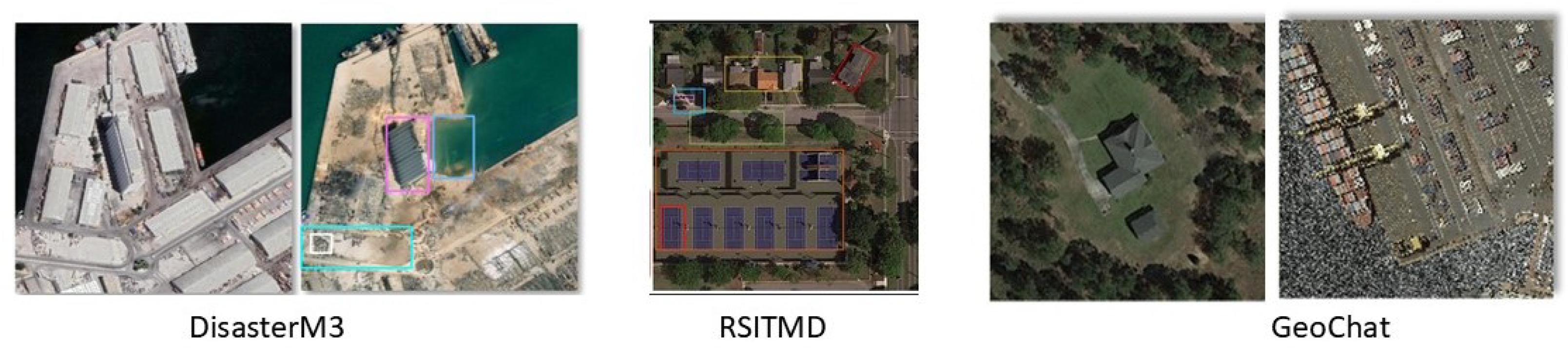

Category II Advanced Domain-Specific Datasets: This category includes RSITMD, DisasterM3, and GeoChat, which introduce richer semantic content, multi-modal reasoning requirements, and domain-specific captioning challenges beyond conventional scene descriptions. Sample images from these three datasets are shown in Figure 5.

RSITMD (Remote Sensing Image–Text Multi-Modal Dataset) contains 4743 high-resolution remote sensing images, each paired with five textual descriptions.

DisasterM3 is designed for disaster monitoring and emergency response and features satellite images depicting events such as floods, earthquakes, wildfires, and infrastructure damage.

GeoChat is a vision–language dataset aimed at geospatial reasoning and conversational understanding. It pairs satellite images with descriptive and dialogue-style captions that encode geographic context, spatial relationships, and location-aware semantics.

3.3.2. Evaluation Metrics

Model performance is evaluated based on the similarity between candidate and reference sentences. Commonly used automatic evaluation metrics include BLEU [39], METEOR [40], ROUGE-L, and CIDEr [41]. These metrics measure the similarity between candidate sentences and reference sentences from different perspectives. Higher metric values indicate better caption generation performance.
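To make the notion of candidate-reference similarity concrete, the sketch below computes the clipped (modified) n-gram precision that BLEU is built on. It is a simplified illustration only: the full BLEU score additionally combines several n-gram orders and applies a brevity penalty, and the function names and example sentences are hypothetical.

```python
from collections import Counter


def ngrams(tokens, n):
    """Return all contiguous n-grams of a token list as tuples."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def modified_precision(candidate, references, n):
    """Clipped n-gram precision of a candidate against one or more references."""
    cand_counts = Counter(ngrams(candidate, n))
    if not cand_counts:
        return 0.0
    # For each n-gram, the largest count observed in any single reference.
    max_ref = Counter()
    for ref in references:
        for gram, count in Counter(ngrams(ref, n)).items():
            max_ref[gram] = max(max_ref[gram], count)
    clipped = sum(min(count, max_ref[gram]) for gram, count in cand_counts.items())
    return clipped / sum(cand_counts.values())


candidate = "a plane parked at the airport".split()
references = ["a plane is parked at the airport".split()]
print(modified_precision(candidate, references, 1))
print(modified_precision(candidate, references, 2))
```

Clipping prevents a candidate from being rewarded for repeating a word more often than it appears in any reference.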

3.3.3. Experimentation and Parameter Setting

Training was conducted on a high-performance computing system equipped with two NVIDIA Tesla V100 GPUs, each with 32 GB of memory. The use of dual GPUs enabled parallel processing and significantly reduced training time. The model was implemented using PyTorch (version 2.1.0), leveraging GPU acceleration and automatic differentiation. Training was performed for a maximum of 100 epochs, with early stopping applied if no improvement in validation performance was observed for 10 consecutive epochs. The batch size was set to 32 to ensure efficient utilization of the GPU while maintaining stable gradient updates.
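The stopping rule described above can be sketched as a small helper. The class and function names are hypothetical, and the sketch assumes that the monitored validation quantity is a score for which higher is better (for example a captioning metric); the text does not state which quantity was monitored.

```python
class EarlyStopping:
    """Stop when the validation score has not improved for `patience` epochs."""

    def __init__(self, patience=10):
        self.patience = patience
        self.best = float("-inf")
        self.bad_epochs = 0

    def step(self, score):
        """Record one epoch's score; return True when training should stop."""
        if score > self.best:
            self.best = score
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


def epochs_run(val_scores, patience=10, max_epochs=100):
    """Return how many epochs are executed for a given sequence of scores."""
    stopper = EarlyStopping(patience)
    for epoch, score in enumerate(val_scores[:max_epochs], start=1):
        if stopper.step(score):
            return epoch
    return min(len(val_scores), max_epochs)


# Toy run: the score improves for two epochs and then plateaus.
print(epochs_run([0.1, 0.2] + [0.2] * 50))
```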

Gradient clipping with a maximum norm of 5.0 was applied to prevent exploding gradients, which can occur in deep networks with recurrent components such as LSTMs. Both the encoder and decoder were optimized using the Adam optimizer with a learning rate of 0.0001. Doubly stochastic attention regularization was applied with a parameter of 1.0 to encourage uniform attention distribution across all image regions, thereby improving caption quality. Training and validation statistics, including loss values and evaluation metrics, were recorded every 25 batches to monitor convergence and detect potential issues such as overfitting or underfitting. The word embeddings were fine-tuned to capture the domain-specific vocabulary of the RSICD dataset.
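Two of the settings above lend themselves to a short illustration: clipping the gradient to a maximum norm of 5.0 and the doubly stochastic attention penalty with a weight of 1.0. The sketch below uses plain Python lists in place of tensors. The penalty follows the commonly used form in which the attention each image region receives, summed over decoding steps, is pushed toward 1; which attention weights were regularized in the proposed model is not specified in the text, so this is an assumption, as are the function names.

```python
import math


def clip_grad_norm(grads, max_norm=5.0):
    """Rescale a flat gradient vector so that its L2 norm is at most max_norm."""
    total_norm = math.sqrt(sum(g * g for g in grads))
    scale = min(1.0, max_norm / (total_norm + 1e-6))
    return [g * scale for g in grads], total_norm


def doubly_stochastic_penalty(alphas, weight=1.0):
    """weight * sum_i (1 - sum_t alphas[t][i])^2.

    alphas[t][i] is the attention placed on image region i at decoding step t.
    """
    num_regions = len(alphas[0])
    totals = [sum(step[i] for step in alphas) for i in range(num_regions)]
    return weight * sum((1.0 - total) ** 2 for total in totals)


clipped, norm_before = clip_grad_norm([6.0, 8.0], max_norm=5.0)
print(norm_before, clipped)

balanced = [[0.5, 0.5], [0.5, 0.5]]   # each region receives total attention 1
peaked = [[1.0, 0.0], [1.0, 0.0]]     # one region receives all attention
print(doubly_stochastic_penalty(balanced), doubly_stochastic_penalty(peaked))
```

The penalty is zero when every region receives the same total attention over the caption and grows when attention concentrates on a few regions.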