1. Introduction

The lunar south polar region has attracted intense scientific interest due to its unique geological context and its potential to host water ice. It lies along the rim of the South Pole–Aitken (SPA) basin, the Moon’s oldest and largest impact structure [1], which is believed to have excavated lower-crustal or upper-mantle materials, providing a unique window into the composition and evolution of the lunar interior [2,3,4]. Furthermore, the Moon’s slight axial tilt (∼1.54°) results in permanently shadowed regions (PSRs) within polar craters [5]. These PSRs receive no direct sunlight and act as “cold traps” ( K), capable of preserving water ice and other volatiles over geologic timescales [6]. This has been supported by observations ranging from neutron absorption to ultraviolet reflectance [7,8,9,10,11,12,13,14].

Therefore, exploration of the south polar region offers both practical resources and fundamental scientific insights, driving numerous recent missions (e.g., NASA Artemis and Chang’e-7) to focus on this target.

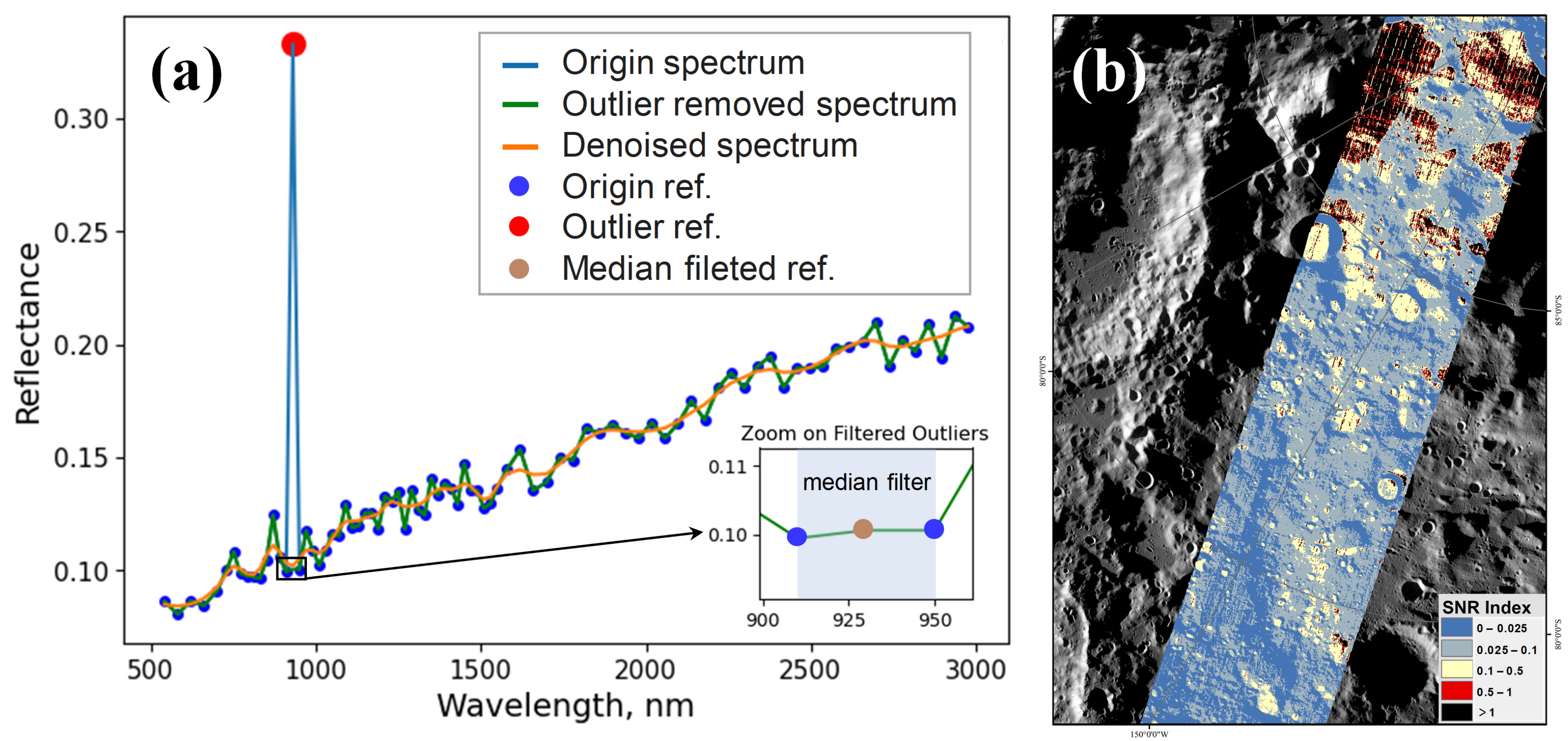

Hyperspectral sensors can provide continuous visible to near-infrared (VIS-NIR) spectral measurements to characterize polar volatiles and ancient crustal materials. The Moon Mineralogy Mapper (M3), a hyperspectral imaging spectrometer onboard India’s Chandrayaan-1, acquired VIS-NIR reflectance measurements spanning nearly the entire lunar surface, including the polar regions [15]. Its comprehensive spectral coverage enables reliable identification of key lunar minerals, including pyroxene, plagioclase, olivine, and spinel [16,17], based on their diagnostic absorption features (Figure 1a). M3 additionally covers critical OH/H₂O absorption bands around 1300, 1500, and [18,19], which are essential for discriminating hydration signatures from lunar regolith responses (Figure 1b). Collectively, these capabilities establish M3 data as an indispensable dataset for investigating both lunar mineralogy and potential water ice deposits.

However, low solar elevation angles in the polar region generate widespread topographic shadows in M3 imagery, whose spectral signatures derive primarily from secondary scattered light rather than direct illumination. The low intensity of this secondary illumination significantly degrades the signal-to-noise ratio (SNR) in these regions. Consequently, mineralogical mapping derived from M3 data has been confined to latitudes within 0∼ N/S [21]. For higher-latitude regions, conservative approaches have been adopted that either exclude low-SNR areas entirely or apply strict filters to remove degraded spectra. Specifically, the spectral SNR index (SNRI) has been used to filter out unreliable M3 measurements [22,23], and the integral of the squared second derivative (ISSD) has been proposed to identify noise- and shadow-affected pixels.
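To make the ISSD criterion concrete, the following NumPy sketch computes an ISSD-style score per spectrum: the second derivative is estimated with finite differences (`np.gradient`) on the wavelength grid, squared, and integrated with the trapezoidal rule. The discretization, the function name `issd`, and the illustrative 83-band wavelength grid are assumptions of this sketch, not the exact formulation of the cited studies. Noisy, shadow-affected spectra yield large scores, whereas smooth continua score near zero.

```python
import numpy as np


def issd(wavelengths: np.ndarray, spectra: np.ndarray) -> np.ndarray:
    """Integral of the squared second derivative of each spectrum.

    wavelengths: (B,) band centres, strictly increasing (may be non-uniform).
    spectra: (..., B) reflectance spectra. Returns an array of shape (...).
    """
    # Finite-difference estimates of the first and second derivatives.
    d1 = np.gradient(spectra, wavelengths, axis=-1)
    d2 = np.gradient(d1, wavelengths, axis=-1)
    # Trapezoidal rule for the integral of d2**2 over wavelength.
    sq = d2 ** 2
    widths = np.diff(wavelengths)
    return np.sum(0.5 * (sq[..., 1:] + sq[..., :-1]) * widths, axis=-1)


wl = np.linspace(460.0, 2980.0, 83)          # illustrative 83-band grid (nm)
smooth = 0.05 + 2e-5 * wl                      # featureless, linear continuum
rng = np.random.default_rng(0)
noisy = smooth + rng.normal(0.0, 0.01, wl.size)
scores = issd(wl, np.stack([smooth, noisy]))  # the noisy spectrum scores far higher
```

A pixel would then be flagged when its score exceeds a threshold; that threshold is scene-dependent and would have to be chosen for the data at hand.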

Moreover, the proportion of low-SNR pixels increases markedly toward high latitudes. A previous study showed that over one-quarter of the regions above S lack high-quality observations even before excluding high-incidence-angle anomalies [24]. This inherent degradation severely limits the utility of M3 for polar scientific investigations. Therefore, effective methods to recover these low-SNR data are essential for exploiting the full potential of M3 observations.

Research on the enhancement or restoration of lunar hyperspectral image (HSI) data, especially low-SNR polar data, remains limited. While terrestrial HSI shadow compensation and denoising strategies provide a practical foundation, applying them to the lunar environment is challenging because the illumination physics differ fundamentally. Current terrestrial compensation methods generally fall into two categories. Physical model-based approaches [25,26], which rely on explicit reflectance models with prior knowledge of scene geometry and sensor characteristics, are hampered in lunar polar environments because scattered light from crater walls and adjacent topographic highs violates the direct-illumination assumptions of photometric models. Limited contextual information and sensor constraints further hinder direct spectral recovery. Spectral unmixing-based approaches [27,28] reconstruct shadowed spectra by estimating endmember abundances from adjacent illuminated pixels; however, their linear mixing models cannot account for the nonlinear photon interactions caused by multiple scattering and indirect illumination. The relatively homogeneous lunar regolith further diminishes endmember separability. To address these limitations, deep learning has emerged as a promising alternative.

As a data-driven approach, deep learning is especially well suited to capturing the complex spatial–spectral interactions in degraded HSI data [29]. Among deep learning models, convolutional neural networks (CNNs) have become the prevailing paradigm for terrestrial hyperspectral restoration, owing to their inherent ability to learn hierarchical spatial–spectral features automatically and to model complex nonlinear relationships directly from data [30,31]. One of the first CNN architectures for HSI denoising was proposed by Xie et al. [32], followed by an autoencoder-based shadow-compensation approach that learns relighting functions from paired shadowed and illuminated spectra under diverse atmospheric conditions [33]. A residual-learning-based denoising CNN (DnCNN), which reformulates denoising as estimating noise residuals rather than directly reconstructing clean images, further established the utility of CNNs in HSI restoration [34]. Subsequent developments have further improved HSI denoising performance. HSID-CNN [35] learns nonlinear mappings via feature fusion across spectral bands and spatial neighborhoods, while HSI-SDeCNN [36] exploits 3D spatial–spectral patches from target and neighboring bands. These designs achieve more robust noise suppression and restoration than traditional band-wise filters or purely 2D spatial denoisers [36], highlighting the importance of joint spectral–spatial processing. Nevertheless, most CNN-based models lack explicit attention mechanisms to adaptively weight spatial–spectral features, limiting their performance in photon-starved imaging scenarios (i.e., conditions in which too few signal photons are recorded because of extremely low illumination).

Recently, Transformer architectures have been introduced to HSI restoration tasks for their ability to capture long-range, global dependencies through self-attention. Liang et al. [37] proposed a spatial–spectral Transformer for HSI denoising that leverages self-attention to capture long-range spatial and spectral correlations. Lai et al. [38] developed a Transformer-based framework that addresses a range of HSI restoration tasks, including denoising, inpainting, and super-resolution. These approaches highlight the advantages of Transformers in capturing global information. However, applying Transformers directly to typical terrestrial HSIs incurs substantial computational complexity and parameter overhead [39]. Moreover, they are prone to overfitting in data-scarce scenarios [40,41]. This substantial data requirement is a fundamental limitation for lunar HSI compensation, where paired, high-quality observational data are inherently scarce. Transformers may also struggle to preserve subtle local spectral variations, which are crucial for lunar mineral identification [42,43], potentially compromising the fidelity of restored signals.

Beyond CNNs and Transformers, diffusion models have recently emerged as a distinct restoration paradigm for hyperspectral and optical remote sensing. Built on denoising diffusion probabilistic models (DDPMs) [44], these approaches employ an iterative denoising–sampling process to reconstruct high-quality images from degraded observations. In hyperspectral restoration, diffusion priors have been actively explored: DDS2M [45] and HIR-Diff [46] introduce self-supervised and unsupervised priors for spatial–spectral modeling, while HSR-Diff [47] leverages conditional diffusion for spectrally aware super-resolution. However, despite their impressive generative capabilities, these approaches incur substantial computational costs and significant inference latency due to their iterative nature [48]. Critically, they are data-hungry and, owing to their inherently stochastic sampling, struggle to guarantee deterministic preservation of subtle spectral signatures and fine-grained spatial–spectral coherence, which are essential for reliable quantitative analysis.

More fundamentally, deep learning methods for terrestrial HSI denoising and shadow compensation cannot be transferred directly to lunar applications because of the unique environment of the lunar polar regions. Terrestrial HSI restoration models are primarily designed to handle idealized noise patterns, such as Gaussian, impulse, or stripe noise, under relatively consistent illumination and diverse surface compositions [49]. In particular, many CNN-based denoising networks implicitly assume an additive Gaussian noise model. In contrast, lunar polar HSIs, especially under low illumination, exhibit fundamentally different degradation characteristics. Photon starvation induces signal-dependent noise that is better described by Poisson statistics than by additive Gaussian perturbations [50]. Moreover, complex topographic shadowing leads to extreme illumination variability, and the relatively homogeneous regolith reduces spectral contrast [51]. Under such weak illumination, the measured HSI signals can approach the sensor noise floor, making restoration an ill-posed inverse problem in which small modeling mismatches may induce disproportionate spectral distortions. This domain gap further constrains the direct transferability of recent Transformer- and diffusion-based restoration models. Despite their powerful global modeling capability, Transformer architectures often rely on terrestrial datasets for pre-training; consequently, the learned spectral–spatial dependencies may not generalize to lunar mineral surfaces with fundamentally different spectral properties. Likewise, diffusion models learn data priors from terrestrial training distributions and can therefore be prone to producing Earth-like textures or artifacts when applied to lunar polar scenes. Addressing such signal-dependent, spatially varying noise and subtle spectral signatures requires adaptive modules, such as attention mechanisms, that selectively enhance diagnostic information. Together, these challenges underscore the need for lunar-specific restoration frameworks and motivate the design of tailored deep learning models.

As the first attempt to address lunar HSI degradation, we previously proposed a CycleGAN-based compensation network tailored to M3 low-SNR spectra [24]. This paired CycleGAN formulated compensation as a spectral translation between low- and high-SNR domains, using adversarial learning to model their nonlinear relationship. Although it substantially improved the spectral fidelity of low-signal pixels, it operated solely in the spectral domain without exploiting spatial context, resulting in spatial inconsistency across neighboring pixels and speckle-like artifacts in homogeneous regions. These limitations expose the methodological boundary of spectral-only methods: they cannot preserve the spatial coherence critical for faithful hyperspectral reconstruction. Therefore, an integrated approach that jointly exploits inter-band spectral dependencies and local spatial context is imperative for robust lunar HSI enhancement.

In this study, a novel spatial–spectral fusion 3D signal compensation (SSF-3DSC) network is proposed to overcome the limitations of spectral-only approaches. To the best of our knowledge, this is the first deep residual network specifically designed for lunar hyperspectral data. SSF-3DSC integrates spectral and spatial information by processing the entire 3D HSI cube (h × w × B), thereby avoiding pixel- or spectrum-wise isolation. Its architecture consists of three components: (1) a spectral compensation module (SCM) that restores individual spectral signatures; (2) a multi-scale spatial attention (MSA) module that emphasizes salient features across scales; and (3) a cascaded 3D residual convolutional module (C3D-RCM) that performs the final spatial–spectral fusion and reconstruction. By formulating compensation as a 3D cube reconstruction task, SSF-3DSC simultaneously restores spectral fidelity and enforces spatial coherence, thereby mitigating the spatial artifacts inherent to spectral-only methods. Its architecture and staged training strategy are tailored to the key challenges of lunar compensation: limited training data and complex, non-Gaussian degradations induced by photon starvation. Comprehensive experiments demonstrate that this spatial–spectral approach yields coherent and reliable reflectance reconstructions in low-signal polar regions. The main contributions of this work are summarized as follows:

- (1) Spatial–Spectral Cooperative Restoration Architecture: An end-to-end architecture is developed to simultaneously restore spatial and spectral information in low-SNR lunar regions, thereby addressing the trade-off between spectral fidelity and spatial consistency in low-illumination HSIs over homogeneous, regolith-covered surfaces.

- (2) Dual-Branch Noise Compensation: A dual-branch module is proposed to decouple degradation characteristics by separating the optimization pathways for spatial and spectral reconstruction, with a joint spatial–spectral loss ensuring balanced fidelity across both domains.

- (3) Cross-Scale Residual Feature Fusion: A cross-scale residual feature fusion strategy is proposed to enhance multi-scale feature representation by integrating pixel-wise spectral features with spatial context across scales, thereby improving the extraction and integration of spatial–spectral details.

- (4) Superior Performance and Extensive Scientific Applicability: This work provides the first validation of deep learning-based methods for restoring spatial–spectral features in low-SNR lunar polar HSIs, demonstrating their feasibility for both quantitative enhancement and regional-scale scientific applications.

The rest of this paper is organized as follows. Section 2 introduces the M3 dataset and preprocessing. Section 3 presents the proposed SSF-3DSC framework. Section 4 reports experimental evaluations, including comparisons, ablation studies, and regional applications. Section 6 concludes the paper and discusses future research directions. An overview of the complete workflow is shown in Figure 2.

3. Methodology

Signal compensation for lunar HSI can be conceptualized as a domain-to-domain translation problem. The fundamental challenge is to reconstruct high-fidelity data cubes from observations degraded by suboptimal illumination while preserving spatial–spectral integrity and accuracy. To this end, the compensation process is modeled as a learned transformation $\mathcal{F}: \mathcal{X}_L \rightarrow \mathcal{X}_H$ that maps a hyperspectral cube $\mathbf{X}_l$ from the low-SNR domain $\mathcal{X}_L$ to its high-fidelity counterpart $\mathbf{X}_h$ in the high-SNR domain $\mathcal{X}_H$. This relationship is expressed as follows:

$$\hat{\mathbf{X}}_h = \mathcal{F}(\mathbf{X}_l) \approx \mathbf{X}_h.$$

Through the learning of $\mathcal{F}$, the network is trained to restore the information content of low-quality input cubes $\mathbf{X}_l$, producing spatially coherent and spectrally accurate outputs $\hat{\mathbf{X}}_h$.

Building on this formulation, this section presents the proposed SSF-3DSC framework. Section 3.1 outlines the network architecture, Section 3.2 introduces the staged training strategy, Section 3.3 describes the loss functions, Section 3.4 summarizes implementation details, and Section 3.5 details the metrics for compensation evaluation.

3.1. Spatial–Spectral Fusion 3D Signal Compensation (SSF-3DSC) Framework Architecture

A novel deep learning network is proposed to increase the SNR of hyperspectral imagery of the lunar surface acquired under low-illumination conditions. The training samples are fed into the proposed network, whose architecture consists of three main components: a spectral compensation module (SCM), a multi-scale spatial attention module (MSA), and a cascaded 3D residual convolutional module (C3D-RCM). Together, these modules learn a robust compensation mapping that reconstructs a high-SNR output. A binary validity mask is applied throughout the framework to exclude null pixels from intermediate operations and loss computations, ensuring that only reliable data contribute to the compensation process. Specifically, the validity mask is constructed from the preprocessed M3 cube by assigning 1 to pixels with finite, physically meaningful reflectance values and 0 to invalid or no-data pixels; it is then applied consistently to gate forward propagation and to exclude invalid pixels from all loss computations.
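A minimal NumPy sketch of the mask construction and of its use in gating inputs and losses. It assumes a reflectance cube of shape (h, w, B), a per-pixel mask shared across bands, and a pixel counted as invalid if any band is non-finite or lies outside a hypothetical "physically meaningful" range `[lower, upper]`; the bounds and the helper names `validity_mask`, `apply_mask`, and `masked_mse` are illustrative, not part of the M3 processing pipeline.

```python
import numpy as np


def validity_mask(cube, lower=0.0, upper=1.0):
    """(h, w) mask: 1 where every band is finite and within [lower, upper].

    The default bounds are hypothetical placeholders for "physically meaningful".
    """
    finite = np.isfinite(cube)
    with np.errstate(invalid="ignore"):           # NaN comparisons simply yield False
        in_range = (cube >= lower) & (cube <= upper)
    return np.all(finite & in_range, axis=-1).astype(np.float32)


def apply_mask(cube, mask):
    """Zero invalid pixels so NaNs or no-data values cannot propagate forward."""
    return np.where(mask[..., None] > 0, cube, 0.0)


def masked_mse(pred, target, mask):
    """Mean squared error over valid pixels (and all their bands) only."""
    m = mask[..., None]
    diff = np.where(m > 0, pred - target, 0.0)
    n_valid = m.sum() * pred.shape[-1]
    return float((diff ** 2).sum() / max(n_valid, 1))


cube = np.full((2, 3, 4), 0.2)
cube[0, 0, 1] = np.nan        # non-finite band -> invalid pixel
cube[1, 2, 3] = -0.5          # out-of-range band -> invalid pixel
mask = validity_mask(cube)
masked = apply_mask(cube, mask)
```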

Figure 7 shows the specific structure of the proposed SSF-3DSC framework.

3.1.1. Spectral Compensation Module (SCM)

The spectral compensation module (SCM) enhances the spectral features of HSI cubes, which are critical for lunar surface analysis because they characterize surface composition. The SCM is implemented as a fully connected neural network (FCNN) and serves as the first-stage spectral compensator in our framework. To compensate the spectra of all pixels, this module flattens the input cube along its spatial dimensions (h and w), treating each pixel’s spectrum as an independent sample. This yields a set of (batch size × h × w) spectral vectors, each with 83 bands, which are processed by the FCNN.

Previous results have shown that the FCNN architecture, with its global receptive field, is effective in capturing long-range spectral correlations [67]. This FCNN-based design mirrors the generator network proposed by Ni et al. [24], which demonstrated robust performance in extracting and reconstructing spectral features within a CycleGAN framework for low-signal spectra compensation in the lunar south polar region. The overall architecture of the SCM is displayed in Figure 8, and the details of the spectral feature extraction block are presented in Table 1. Each layer except the output layer employs a leaky rectified linear unit (LReLU) activation function to introduce nonlinearity [49], while the output layer uses a sigmoid activation to constrain the compensated spectra within a normalized range. After the FCNN has processed the spectral features, the module reshapes the output back to the original 3D cube (i.e., it restores the flattened spectral vectors to their original spatial–spectral arrangement). The complete spectral compensation process can be expressed as follows:

$$\mathbf{X}_{\mathrm{SCM}} = \mathrm{Reshape}_{(N,\,h,\,w,\,B)}\Big(\sigma\big(\mathrm{FCNN}\big(\mathrm{Flatten}_{(h,w)}(\tilde{\mathbf{X}}_l)\big)\big)\Big),$$

where $\mathrm{Flatten}_{(h,w)}(\cdot)$ denotes flattening only along the spatial dimensions $(h, w)$ while preserving the channel dimension $B$; $\mathrm{FCNN}(\cdot)$ denotes the fully connected layers up to the pre-activation of the output layer; $\mathrm{Reshape}(\cdot)$ denotes reshaping into the specified dimensions; $\tilde{\mathbf{X}}_l$ represents the masked low-SNR input; $\sigma(\cdot)$ denotes the sigmoid activation function; $N$ is the batch size; and $\mathbf{X}_{\mathrm{SCM}}$ is the spectrally compensated output. By operating purely on spectral signatures, the SCM corrects band-wise intensity deviations and improves spectral fidelity before any spatial processing is applied.
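The flatten, FCNN, and reshape sequence can be sketched in NumPy as follows. The hidden widths (128, 64), the LReLU slope of 0.2, and the random weights are placeholders, since the actual layer configuration is given in Table 1; a channel-last (N, h, w, B) layout is also assumed (a channel-first tensor would need a permute before flattening). The point is the data layout: the spatial dimensions are folded into the batch, each 83-band spectrum passes through the fully connected layers independently, and the result is reshaped back into the cube.

```python
import numpy as np


def leaky_relu(x, slope=0.2):                     # slope is a placeholder value
    return np.where(x > 0, x, slope * x)


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def scm_forward(x_masked, weights):
    """x_masked: (N, h, w, B) masked low-SNR cube; weights: list of (W, b) pairs."""
    n, h, w, b = x_masked.shape
    z = x_masked.reshape(n * h * w, b)            # Flatten over (h, w); keep the B bands
    for W, bias in weights[:-1]:
        z = leaky_relu(z @ W + bias)              # hidden layers with LReLU
    W_out, b_out = weights[-1]
    z = sigmoid(z @ W_out + b_out)                # output layer bounded to (0, 1)
    return z.reshape(n, h, w, b)                  # Reshape back to the cube


rng = np.random.default_rng(0)
B = 83
sizes = [B, 128, 64, B]                           # hypothetical layer widths
weights = [(rng.normal(0.0, 0.1, (i, o)), np.zeros(o)) for i, o in zip(sizes[:-1], sizes[1:])]
x = rng.uniform(0.0, 0.3, size=(2, 4, 5, B))
y = scm_forward(x, weights)
```

Because every spectrum is processed on its own, running a single pixel through the module gives the same result as running it inside the full cube, which is exactly why spectral-only compensation cannot enforce spatial coherence.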

3.1.2. Multi-Scale Spatial Attention Module (MSA)

While the SCM focuses on spectral reconstruction, it may introduce spatial artifacts such as speckle noise. The multi-scale spatial attention module (MSA) is designed to recover and refine spatial features that may be degraded in low-signal HSIs, thereby mitigating artifacts introduced during spectral reconstruction. Inspired by the spatial attention of CBAM [68], the proposed MSA incorporates parallel multi-scale convolutional kernels directly into the generation of the spatial attention map. The resulting multi-scale features are then fused via a convolutional projection into a unified attention map, enabling scale-aware saliency. By combining multi-scale convolutions with spatial attention, the module extracts spatial patterns at varying scales and mitigates speckle noise and spatial inconsistencies.

MSA first performs channel-wise average and max pooling along the spectral dimension of the masked input cube (Figure 9), yielding two complementary single-channel spatial maps: (i) the average-pooled map encodes global illumination and background context; (ii) the max-pooled map highlights salient high-contrast structures and edge features. Each map is then processed by three parallel convolutional layers with different kernel sizes to capture multi-scale spatial features. The resulting feature maps (three from average pooling and three from max pooling), together with the two original pooled maps, are concatenated along the channel dimension, forming an 8-channel feature map.

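A fusion convolution, followed by batch normalization and a sigmoid activation, transforms this concatenated feature map into a spatial attention map $\mathbf{A}$. The values of $\mathbf{A}$ lie between 0 and 1, with higher values assigning greater weight to locations prioritized during compensation. The computation is expressed as follows:

$$\mathbf{A} = \sigma\Big(\mathrm{BN}\big(\mathrm{Conv}\big(\mathrm{Concat}\big[\mathbf{F}_{\mathrm{avg}}^{\mathrm{ms}}, \mathbf{F}_{\mathrm{max}}^{\mathrm{ms}}\big]\big)\big)\Big),$$

where $\mathbf{F}_{\mathrm{avg}}^{\mathrm{ms}}$ and $\mathbf{F}_{\mathrm{max}}^{\mathrm{ms}}$ denote the multi-scale features from average and max pooling, respectively; $\mathrm{Concat}[\cdot]$ represents channel-wise concatenation; $\mathrm{Conv}(\cdot)$ is the fusion convolution; $\mathrm{BN}(\cdot)$ is batch normalization; and $\sigma(\cdot)$ is the sigmoid function.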

In essence, the MSA module serves as a spatial regularizer by learning a dynamic attention map. This map acts as a sophisticated weighting mask that selectively enhances salient spatial details (e.g., edges and textures) while suppressing noise and spatial distortions across the image, ensuring these crucial details are preserved and refined in the final output.
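A NumPy sketch of the attention-map computation described above. Several choices are assumptions for illustration: kernel sizes of 3, 5, and 7; random kernels and fusion weights; a 1 × 1 fusion over the 8-channel stack; and batch normalization in training mode with unit scale and zero shift (i.e., standardization with batch statistics). It shows how the two pooled maps and their six multi-scale responses form the 8-channel stack that is reduced to a single attention map in (0, 1).

```python
import numpy as np


def conv2d_same(img, kernel):
    """Single-channel 2D cross-correlation with zero padding. img: (N, H, W)."""
    k = kernel.shape[0]
    p = k // 2
    padded = np.pad(img, ((0, 0), (p, p), (p, p)))
    out = np.zeros_like(img, dtype=float)
    H, W = img.shape[1:]
    for i in range(k):
        for j in range(k):
            out += kernel[i, j] * padded[:, i:i + H, j:j + W]
    return out


def msa_attention(cube, kernels, fuse_w, fuse_b=0.0, eps=1e-5):
    """cube: (N, H, W, B) masked input; returns an (N, H, W) attention map."""
    avg_map = cube.mean(axis=-1)                  # global illumination/background context
    max_map = cube.max(axis=-1)                   # salient high-contrast structures
    feats = [avg_map, max_map]                    # the two original pooled maps
    for pooled in (avg_map, max_map):
        feats += [conv2d_same(pooled, k) for k in kernels]  # multi-scale responses
    stack = np.stack(feats, axis=-1)              # (N, H, W, 8)
    z = stack @ fuse_w + fuse_b                   # assumed 1x1 fusion to one channel
    z = (z - z.mean()) / np.sqrt(z.var() + eps)   # batch norm with batch statistics
    return 1.0 / (1.0 + np.exp(-z))               # sigmoid -> values in (0, 1)


rng = np.random.default_rng(1)
kernels = [rng.normal(0.0, 0.1, (k, k)) for k in (3, 5, 7)]  # hypothetical sizes
fuse_w = rng.normal(0.0, 0.5, 8)
cube = rng.uniform(0.0, 0.2, (2, 16, 16, 83))
attn = msa_attention(cube, kernels, fuse_w)
```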

3.1.3. Cascaded 3D Residual Convolutional Module (C3D-RCM)

The C3D-RCM integrates the spectral and spatial features extracted by the two preceding modules, performing comprehensive spatial–spectral feature fusion. Constructed upon a 3D ResNet backbone [69], this module uses a cascaded multi-scale convolutional design to aggregate cross-band and cross-pixel dependencies, thereby optimizing the fusion of fine spectral details with broad spatial structures. First, the masked attention map $\tilde{\mathbf{A}}$ is applied to the SCM output $\mathbf{X}_{\mathrm{SCM}}$ via element-wise multiplication:

$$\mathbf{X}_{\mathrm{fus}} = \tilde{\mathbf{A}} \odot \mathbf{X}_{\mathrm{SCM}},$$

where $\mathbf{X}_{\mathrm{fus}}$ denotes the fused feature map (i.e., the spatial–spectral feature map in Figure 7), and the single-channel map $\tilde{\mathbf{A}}$ is broadcast across all $B$ bands. This attention mechanism emphasizes informative regions while attenuating less relevant or noisy ones, optimizing the feature representation for subsequent processing. Next, the C3D-RCM maps the band axis of the fused feature cube to the volumetric depth (i.e., $D = B$) and initializes the feature-channel dimension to $C = 1$; it then employs a two-stage processing pipeline based on 3D residual convolutions to jointly model spatial–spectral correlations. As shown in Figure 10, the feature-extraction stage employs a cascade of 3D residual convolutional (3D-RC) blocks that increase the feature-channel depth from 1 to 32, 64, and 128, increasing the capacity to aggregate inter-band and inter-pixel dependencies while preserving the input resolution. The subsequent feature-refinement stage progressively reduces the channel depth back to 1, consolidating the learned high-dimensional representations into a single-channel compensated cube while enforcing spatial coherence and spectral fidelity. Each 3D-RC block comprises two 3D convolutions with ReLU activations and an identity shortcut to stabilize training (Figure 7). These 3D filters enable joint spatial–spectral processing by exploiting correlations across the spatial and spectral dimensions simultaneously [70].

The final output $\hat{\mathbf{X}}_h$ is computed by adding the output of the C3D-RCM to the masked low-SNR input, following the residual learning paradigm:

$$\hat{\mathbf{X}}_h = \tilde{\mathbf{X}}_l + \mathcal{R}(\mathbf{X}_{\mathrm{fus}}),$$

where $\mathcal{R}(\cdot)$ denotes the C3D-RCM applied to the fused feature map $\mathbf{X}_{\mathrm{fus}}$, and $\tilde{\mathbf{X}}_l$ is the masked low-SNR input. Because of this residual connection, the network learns to predict the difference (residual) between the low-SNR input and the desired high-SNR output rather than predicting the high-SNR cube directly. This strategy is common in image denoising, where the model estimates the noise component, which is then removed from the input to obtain the clean result [71]. By learning the SNR improvement as a residual mapping, the C3D-RCM facilitates faster convergence and helps avoid over-smoothing, so that the fine details present in the high-SNR target are recovered in the final output.
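The fusion step, the band-to-depth mapping, and the residual output can be sketched in NumPy as follows. Several details are assumptions not fixed by the text: 3 × 3 × 3 kernels; a 1 × 1 × 1 projection on the shortcut whenever a block changes the channel count (a pure identity shortcut requires equal input and output widths); no ReLU after the last block, so that the predicted residual can be negative; bias-free convolutions with random weights; and small demonstration widths (4, 8, 4, 1) in place of the 32/64/128 widths described above. The batch dimension is omitted for brevity.

```python
import numpy as np


def conv3d_same(x, w):
    """Zero-padded 3D cross-correlation. x: (C_in, D, H, W); w: (C_out, C_in, k, k, k)."""
    k = w.shape[-1]
    p = k // 2
    _, d, h, wd = x.shape
    xp = np.pad(x, ((0, 0), (p, p), (p, p), (p, p)))
    out = np.zeros((w.shape[0], d, h, wd))
    for a in range(k):
        for b in range(k):
            for c in range(k):
                patch = xp[:, a:a + d, b:b + h, c:c + wd]
                out += np.einsum("oi,idhw->odhw", w[:, :, a, b, c], patch)
    return out


def rc_block(x, c_out, rng, k=3, final_relu=True):
    """Residual block: conv -> ReLU -> conv, plus shortcut (random weights for illustration)."""
    c_in = x.shape[0]
    w1 = rng.normal(0.0, 0.1, (c_out, c_in, k, k, k))
    w2 = rng.normal(0.0, 0.1, (c_out, c_out, k, k, k))
    y = conv3d_same(np.maximum(conv3d_same(x, w1), 0.0), w2)
    if c_in == c_out:
        shortcut = x                                   # identity shortcut
    else:
        proj = rng.normal(0.0, 0.1, (c_out, c_in, 1, 1, 1))
        shortcut = conv3d_same(x, proj)                # assumed 1x1x1 projection
    y = y + shortcut
    return np.maximum(y, 0.0) if final_relu else y


def c3d_rcm(x_fus, widths, rng):
    """Map (h, w, B) to (C=1, D=B, h, w), run the block cascade, and map back."""
    v = np.transpose(x_fus, (2, 0, 1))[None]          # C = 1, D = B
    for i, c_out in enumerate(widths):
        last = i == len(widths) - 1
        v = rc_block(v, c_out, rng, final_relu=not last)  # keep the residual signed
    return np.transpose(v[0], (1, 2, 0))              # back to (h, w, B)


rng = np.random.default_rng(0)
h, w, B = 6, 6, 10
x_low = rng.uniform(0.0, 0.2, (h, w, B))     # masked low-SNR input (stand-in)
x_scm = rng.uniform(0.0, 0.2, (h, w, B))     # SCM output (stand-in)
attn = rng.uniform(0.0, 1.0, (h, w))         # masked attention map (stand-in)
x_fus = attn[..., None] * x_scm              # element-wise, broadcast over bands
x_hat = x_low + c3d_rcm(x_fus, (4, 8, 4, 1), rng)  # residual learning
```

With bias-free convolutions, as here, a zero fused cube produces a zero residual and leaves the input unchanged; this identity starting point is what residual learning builds on.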

3.2. Staged Training Strategy

Given the complexity of the multi-module architecture and to mitigate the risk of any single component overpowering others during initial training, a phased training strategy is adopted to incrementally build the network’s capability:

Stage 1: Spectral Pre-training. The SCM is trained alone while the parameters of the MSA and C3D-RCM are kept frozen. In this stage, the network learns to perform accurate spectral compensation on low-SNR cubes, refining each pixel’s spectral signature to match high-SNR characteristics using the high-SNR target as a reference. Isolating the spectral sub-network first ensures that the SCM provides a solid foundation of spectral fidelity before spatial learning is introduced.

Stage 2: Spectral–Spatial Joint Training. The MSA is unfrozen and jointly optimized with the pre-trained SCM, while the C3D-RCM remains frozen. In this stage, the model learns to restore spatial features and suppress artifacts through the MSA on top of the spectral corrections from Stage 1. Jointly optimizing the SCM and MSA allows the network to balance spectral reconstruction with spatial denoising, yielding an output that is both spectrally accurate and spatially clean.

Stage 3: End-to-End Fine-Tuning. The C3D-RCM is unfrozen, and the entire architecture is trained end-to-end. In this final stage, all modules (SCM, MSA, and C3D-RCM) are optimized together so that the 3D residual convolutional layers can adjust the combined spatial–spectral features and refine the residual mapping. This end-to-end fine-tuning allows the full model to converge to a solution that coherently integrates spectral and spatial enhancements.
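The freezing schedule, together with the loss terms active in each stage (Section 3.3), can be summarized as a small lookup table. The Python sketch below is hypothetical bookkeeping rather than the authors' training code; in PyTorch the same effect is typically obtained by toggling `requires_grad` on each module's parameters.

```python
MODULES = ("SCM", "MSA", "C3D-RCM")

# Hypothetical summary of Sections 3.2 and 3.3: trainable modules and active losses.
STAGES = {
    1: {"trainable": {"SCM"}, "losses": ("MSAM", "RE", "TV")},
    2: {"trainable": {"SCM", "MSA"},
        "losses": ("MSAM", "RE", "TV", "MS-SSIM", "Laplacian")},
    3: {"trainable": {"SCM", "MSA", "C3D-RCM"},
        "losses": ("MSAM", "RE", "TV", "MS-SSIM", "Laplacian", "LogCosh")},
}


def frozen_modules(stage):
    """Modules whose parameters receive no gradient updates in the given stage."""
    return [m for m in MODULES if m not in STAGES[stage]["trainable"]]


def total_loss(stage, loss_values, weights):
    """Weighted sum of the loss terms active in `stage`."""
    return sum(weights[name] * loss_values[name] for name in STAGES[stage]["losses"])
```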

3.3. Loss Function

The ill-posed, low-signal lunar environment and the inherent spatial–spectral coupling, combined with staged modular training, necessitate specialized composite losses. A dedicated loss was therefore devised for each training stage, with terms chosen to align with the objectives of the SCM, MSA, and C3D-RCM, emphasizing spectral fidelity, spatial coherence, and overall reconstruction quality across the three-stage training process. A masking mechanism is applied to all loss computations to restrict calculations to valid pixels. The following subsections detail the loss functions employed in each stage and their contributions to the training objective.

3.3.1. Stage 1: Spectral Feature Extraction

In the first training stage, the model is optimized with loss terms that emphasize spectral accuracy. A loss based on the mean spectral angle mapper (MSAM) preserves spectral shape by measuring the angle between the reconstructed and reference spectral vectors [72]. This loss is defined as the mean spectral angle (in radians) over all valid pixels, penalizing spectral distortions irrespective of intensity. A reconstruction error (RE) term is added for pixel-wise fidelity; it is typically implemented as the mean squared error between the denoised image and the ground truth [73]. Minimizing RE keeps absolute differences in reflectance small, improving spectral amplitude fidelity. To encourage smooth compensated spectra and suppress fluctuations, a first-order total variation (TV) loss [24] is included; it penalizes abrupt jumps between adjacent spectral bands, thereby promoting continuity and robustness in the reconstructed spectral profiles. The three loss functions are expressed as follows:

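$$\mathcal{L}_{\mathrm{MSAM}} = \mathbb{E}_{\mathbf{X}_h \sim p_{\mathrm{data}},\,\mathbf{X}_l \sim p_{\mathrm{data}}}\left[\arccos\frac{\langle \mathcal{F}(\mathbf{x}^l), \mathbf{x}^h\rangle}{\|\mathcal{F}(\mathbf{x}^l)\|_2\,\|\mathbf{x}^h\|_2}\right],$$

$$\mathcal{L}_{\mathrm{RE}} = \mathbb{E}_{\mathbf{X}_h \sim p_{\mathrm{data}},\,\mathbf{X}_l \sim p_{\mathrm{data}}}\left[\frac{1}{B}\big\|\mathcal{F}(\mathbf{x}^l) - \mathbf{x}^h\big\|_2^2\right],$$

$$\mathcal{L}_{\mathrm{TV}} = \mathbb{E}_{\mathbf{X}_l \sim p_{\mathrm{data}}}\left[\frac{1}{B-1}\sum_{j=1}^{B-1}\big|\mathcal{F}(\mathbf{x}^l)_{j+1} - \mathcal{F}(\mathbf{x}^l)_{j}\big|\right],$$

where $\mathbf{x}^h$ and $\mathbf{x}^l$ represent the flattened spectra of the image cubes $\mathbf{X}_h$ and $\mathbf{X}_l$, respectively; $j$ denotes the spectral band index; and $B$ is the total number of spectral bands in the sample cube. $\mathcal{F}$ denotes the proposed compensation network, and $\|\cdot\|_2$ denotes the $\ell_2$ norm. $\mathbb{E}_{\mathbf{X}_h \sim p_{\mathrm{data}}}$ and $\mathbb{E}_{\mathbf{X}_l \sim p_{\mathrm{data}}}$ indicate expectations over the distributions of high- and low-signal cubes, computed as masked mini-batch means over valid pixels for all losses, where $p_{\mathrm{data}}$ represents the underlying data distribution.

Consequently, the combined loss for Stage 1 is formulated as follows:

$$\mathcal{L}_{\mathrm{Stage1}} = \lambda_1\mathcal{L}_{\mathrm{MSAM}} + \lambda_2\mathcal{L}_{\mathrm{RE}} + \lambda_3\mathcal{L}_{\mathrm{TV}},$$

where $\lambda_1$, $\lambda_2$, and $\lambda_3$ are positive scalar weights that control the contribution of their respective loss terms.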
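The three Stage 1 terms can be sketched in NumPy as masked means over valid pixels. The sketch assumes spectra arranged as an (N, B) array of flattened pixels with a length-N validity mask; guarding the denominator with a small epsilon, clipping the cosine before `arccos`, using absolute band-to-band differences for the TV term, and the unit weights in `stage1_loss` are choices of this sketch, not values from this work.

```python
import numpy as np


def stage1_losses(pred, ref, mask, eps=1e-12):
    """Masked MSAM, RE, and first-order spectral TV.

    pred, ref: (N, B) compensated and high-SNR spectra (flattened pixels).
    mask: (N,) validity mask, 1 for valid pixels.
    """
    valid = np.asarray(mask).astype(bool)
    p, r = pred[valid], ref[valid]
    # MSAM: spectral angle in radians, averaged over valid pixels (scale-invariant).
    denom = np.maximum(np.linalg.norm(p, axis=1) * np.linalg.norm(r, axis=1), eps)
    cos = np.clip((p * r).sum(axis=1) / denom, -1.0, 1.0)
    msam = float(np.arccos(cos).mean())
    # RE: mean squared error over valid pixels and bands.
    re = float(((p - r) ** 2).mean())
    # TV: mean absolute difference between adjacent bands of the compensated spectra.
    tv = float(np.abs(np.diff(p, axis=1)).mean())
    return msam, re, tv


def stage1_loss(pred, ref, mask, lam=(1.0, 1.0, 1.0)):
    """Weighted Stage 1 objective; the unit weights are placeholders."""
    msam, re, tv = stage1_losses(pred, ref, mask)
    return lam[0] * msam + lam[1] * re + lam[2] * tv
```

Scaling a spectrum leaves the spectral angle unchanged but increases RE, which is why the two terms are complementary.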

3.3.2. Stage 2: Spatial Feature Restoration

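The second stage adds loss functions aimed at preserving spatial structure on top of the Stage 1 loss $\mathcal{L}_{\mathrm{Stage1}}$. A multi-scale structural similarity index (MS-SSIM) loss is incorporated to enhance structural similarity across different scales [74]. MS-SSIM is a full-reference image quality metric that evaluates the similarity between two images in terms of structure, luminance, and contrast at multiple resolutions, making it effective for capturing both local and global perceptual quality. Here, 1 − MS-SSIM is used as the loss term, so a higher MS-SSIM index yields a lower loss, encouraging the network to produce outputs whose structural characteristics closely match the ground truth at both coarse and fine scales:

$$\mathcal{L}_{\text{MS-SSIM}} = 1 - \prod_{\ell=1}^{M}\mathrm{SSIM}_\ell\big(\hat{\mathbf{X}}_h, \mathbf{X}_h\big),$$

$$\mathrm{SSIM}_\ell\big(\hat{\mathbf{X}}_h, \mathbf{X}_h\big) = \frac{(2\mu_{\hat{x}}\mu_{x} + C_1)(2\sigma_{\hat{x}x} + C_2)}{(\mu_{\hat{x}}^2 + \mu_{x}^2 + C_1)(\sigma_{\hat{x}}^2 + \sigma_{x}^2 + C_2)},$$

where $M$ denotes the number of spatial scales; $\mathrm{SSIM}_\ell$ represents the SSIM value at the $\ell$-th scale; $\hat{x}$ and $x$ denote corresponding local windows of $\hat{\mathbf{X}}_h$ and $\mathbf{X}_h$ at that scale; and $\mu$, $\sigma^2$, and $\sigma_{\hat{x}x}$ are the local mean, variance, and cross-covariance statistics, respectively, calculated between the compensated output $\hat{\mathbf{X}}_h$ and the reference high-signal hyperspectral image cube $\mathbf{X}_h$. The stability constants $C_1$ and $C_2$ are defined as $C_1 = (K_1 R)^2$ and $C_2 = (K_2 R)^2$ [75], where the parameters $K_1$ and $K_2$ and the dynamic range $R$ are chosen to be consistent with the physical properties of reflectance (radiance).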
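A simplified NumPy sketch of the 1 − MS-SSIM loss for a single-band image. Several choices are assumptions: a uniform 7 × 7 window instead of a Gaussian one, 2 × 2 average pooling between scales, the commonly used defaults K1 = 0.01 and K2 = 0.03, a dynamic range of 1 for normalized reflectance, and an unweighted product of the per-scale SSIM values. Applying it band by band (and averaging) would extend it to a hyperspectral cube.

```python
import numpy as np


def box_mean(img, k):
    """Mean over every k x k window ('valid' positions) via a 2D cumulative sum."""
    c = np.cumsum(np.cumsum(np.pad(img, ((1, 0), (1, 0))), axis=0), axis=1)
    s = c[k:, k:] - c[:-k, k:] - c[k:, :-k] + c[:-k, :-k]
    return s / (k * k)


def ssim(x, y, k=7, data_range=1.0, k1=0.01, k2=0.03):
    c1, c2 = (k1 * data_range) ** 2, (k2 * data_range) ** 2
    mx, my = box_mean(x, k), box_mean(y, k)
    vx = box_mean(x * x, k) - mx ** 2              # local variance
    vy = box_mean(y * y, k) - my ** 2
    cxy = box_mean(x * y, k) - mx * my             # local cross-covariance
    s = ((2 * mx * my + c1) * (2 * cxy + c2)) / ((mx ** 2 + my ** 2 + c1) * (vx + vy + c2))
    return s.mean()


def downsample2(img):
    """2 x 2 average pooling (odd trailing rows/columns are dropped)."""
    h, w = (img.shape[0] // 2) * 2, (img.shape[1] // 2) * 2
    return img[:h, :w].reshape(h // 2, 2, w // 2, 2).mean(axis=(1, 3))


def ms_ssim_loss(x, y, scales=3):
    prod = 1.0
    for level in range(scales):
        prod *= ssim(x, y)
        if level < scales - 1:
            x, y = downsample2(x), downsample2(y)
    return 1.0 - prod


rng = np.random.default_rng(0)
clean = rng.uniform(0.0, 1.0, (32, 32))
noisy = np.clip(clean + rng.normal(0.0, 0.2, clean.shape), 0.0, 1.0)
loss_same, loss_noisy = ms_ssim_loss(clean, clean), ms_ssim_loss(clean, noisy)
```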

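To preserve high-frequency details, a Laplacian pyramid loss is incorporated, which captures spatial details across multiple scales and thereby enhances texture and edge preservation at various resolution levels. This loss is computed by decomposing images into a Laplacian pyramid and summing the differences at each level [76], thereby penalizing errors in both low-frequency (blurry) content and high-frequency textures:

$$\mathcal{L}_{\mathrm{Lap}} = \sum_{l=1}^{L}\frac{1}{N_l}\left\|\mathbf{P}_l^{h} - \mathbf{P}_l^{c}\right\|_1, \qquad \mathbf{P}_l = \begin{cases}\mathbf{G}_l - \mathcal{G}(\mathbf{G}_l), & l < L,\\ \mathbf{G}_L, & l = L,\end{cases} \qquad \mathbf{G}_{l+1} = \downarrow_2\!\big(\mathcal{G}(\mathbf{G}_l)\big),$$

where $\mathbf{P}_l^{h}$ and $\mathbf{P}_l^{c}$ denote the Laplacian pyramid components of the high-SNR and compensated images; $N_l$ is the total number of valid pixels in layer $l$, while $L$ represents the total number of pyramid layers. The input cubes at each layer are denoted by $\mathbf{G}_l$ (with $\mathbf{G}_1$ the full-resolution cube), $\downarrow_2$ denotes 2× spatial downsampling, and $\mathcal{G}(\cdot)$ represents the Gaussian blur operation [77].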
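A NumPy sketch of a Laplacian pyramid loss for a single-band image, under these assumptions: a separable 5-tap binomial kernel [1, 4, 6, 4, 1]/16 as the Gaussian blur, reflect padding, 2× decimation between levels, the band-pass component taken as the difference between a level and its blurred copy, the coarsest Gaussian level kept as the final low-pass component, and the mean absolute difference per level. Masking of invalid pixels is omitted for brevity.

```python
import numpy as np

KERNEL = np.array([1, 4, 6, 4, 1], dtype=float) / 16.0  # assumed Gaussian approximation


def blur(img):
    """Separable 5-tap blur with reflect padding; output has the input's shape."""
    p = np.pad(img, 2, mode="reflect")
    rows = sum(KERNEL[i] * p[i:i + img.shape[0], :] for i in range(5))
    return sum(KERNEL[j] * rows[:, j:j + img.shape[1]] for j in range(5))


def laplacian_pyramid(img, levels):
    bands, g = [], img
    for _ in range(levels - 1):
        gb = blur(g)
        bands.append(g - gb)          # band-pass detail at this level
        g = gb[::2, ::2]              # next Gaussian level (2x decimation)
    bands.append(g)                   # coarsest low-pass component
    return bands


def laplacian_loss(pred, ref, levels=3):
    """Sum over levels of the mean absolute difference between pyramid components."""
    return sum(np.abs(a - b).mean()
               for a, b in zip(laplacian_pyramid(pred, levels), laplacian_pyramid(ref, levels)))


rng = np.random.default_rng(0)
img = rng.uniform(0.0, 1.0, (32, 32))
pyr = laplacian_pyramid(img, 3)
```

A uniform brightness offset changes only the coarsest (low-pass) component, so the loss still registers low-frequency errors, while texture differences show up in the band-pass levels.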

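Consequently, the total loss in Stage 2 becomes the following:

$$\mathcal{L}_{\mathrm{Stage2}} = \mathcal{L}_{\mathrm{Stage1}} + \lambda_4\mathcal{L}_{\text{MS-SSIM}} + \lambda_5\mathcal{L}_{\mathrm{Lap}},$$

where $\mathcal{L}_{\mathrm{Stage1}}$ is the Stage 1 loss and $\lambda_4$ and $\lambda_5$ are positive scalar weights balancing the new terms. The MS-SSIM loss $\mathcal{L}_{\text{MS-SSIM}}$ guides the network to restore spatial structures and textures (improving visual similarity), while the Laplacian pyramid loss $\mathcal{L}_{\mathrm{Lap}}$ ensures that both global structure and fine details are recovered at multiple scales.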

3.3.3. Stage 3: End-to-End Training

In the final stage, the entire network is fine-tuned with an aggregated loss to balance the spectral and spatial objectives. A Log-Cosh loss term is introduced to stabilize training and attenuate outliers. The Log-Cosh loss is defined as follows:

The Log-Cosh function behaves similarly to mean squared error (MSE, i.e.,

loss) for small residuals, yielding smooth gradients, while approximating mean absolute error (MAE, i.e.,

loss) for larger residuals, thus providing robustness against outliers [

78]. Unlike MAE, which is non-differentiable at zero, and MSE, which is overly sensitive to extreme values, Log-Cosh has twice-differentiability across its domain, ensuring optimization stability. This smooth gradient property facilitates better coordination among multiple loss functions during the end-to-end training phase.

By incorporating Log-Cosh into the overall objective, extreme errors are further suppressed, yielding a more balanced trade-off between spectral fidelity and spatial sharpness. The end-to-end training loss is a weighted sum of all prior loss terms (MSAM, RE, TV, MS-SSIM, and Laplacian) plus the Log-Cosh term:

where

is a positive scalar weight that modulates the contribution of the Log-Cosh term.

3.4. Training Details

The SSF-3DSC framework was implemented using PyTorch (v2.5.1). Training was conducted with a batch size of 32 on an NVIDIA A40 GPU, with each training stage running for 1000 iterations.

The Adam optimizer was employed to minimize the loss function (Equation (17)), configured with an initial learning rate of

, weight decay of

, and momentum parameters of

,

, and

. To ensure stable convergence, a cosine-annealing learning rate scheduler (CosineAnnealingLR) was utilized, which progressively reduces the learning rate from an initial value of

to a minimum threshold of

over 1000 epochs. Unlike step-decay schedules, whose abrupt drops in learning rate can destabilize training, this smooth decay supports stable convergence while allowing effective parameter fine-tuning in later training phases.
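CosineAnnealingLR follows the closed-form schedule η_t = η_min + (η_max − η_min)(1 + cos(π t / T_max)) / 2. Because the initial and minimum learning rates are not legible in this text, the values below are placeholders rather than the paper's settings, and the function name is hypothetical. In PyTorch the same schedule would come from `torch.optim.lr_scheduler.CosineAnnealingLR(optimizer, T_max=1000, eta_min=...)`.

```python
import math


def cosine_annealing_lr(step, eta_max, eta_min, t_max):
    """Closed-form cosine annealing from eta_max (step 0) to eta_min (step t_max)."""
    return eta_min + (eta_max - eta_min) * (1.0 + math.cos(math.pi * step / t_max)) / 2.0


# Placeholder values (hypothetical; not the paper's actual learning rates).
ETA_MAX, ETA_MIN, T_MAX = 1e-3, 1e-6, 1000
schedule = [cosine_annealing_lr(t, ETA_MAX, ETA_MIN, T_MAX) for t in range(T_MAX + 1)]

# Unlike a step schedule, consecutive updates change the rate only slightly.
largest_jump = max(abs(a - b) for a, b in zip(schedule, schedule[1:]))
```

The largest per-step change is bounded by (η_max − η_min)·π / (2 T_max), so with T_max = 1000 the rate never changes by more than about 0.16% of η_max between steps.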

During training, we sequentially unfroze specific modules (SCM, MSA, and C3D-RCM) to facilitate progressive learning. To address multi-objective optimization across the training stages, the weighting parameters were re-initialized with a scale-normalization heuristic similar to GradNorm [79]: a short warm-up run first recorded the mean magnitude of each potential loss term, including those not yet active. The coefficients

were then set so that all weighted losses were of the same order of magnitude. These statistics were continuously updated during subsequent training, allowing the recorded values to be reused for Stages 2 and 3 without additional warm-up and ensuring that newly introduced objectives (i.e., MS-SSIM, Laplacian, and Log-Cosh) received sufficient gradient signal without overwhelming previously optimized terms. The coefficients used to compute running averages of the gradient were all set to 0.5, and the weighting parameters for each loss component are listed in Table 2.
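The weight-initialization heuristic can be sketched as follows. This is an assumed reading of the description, not the authors' code: the mean magnitude of each loss over the warm-up run is recorded, each weight is set to a target magnitude divided by that mean so every weighted term is of order one, and the record is refreshed with an exponential moving average, here assuming the 0.5 coefficient mentioned above applies to it. All names and the sample magnitudes are hypothetical.

```python
def init_loss_weights(warmup_history, target=1.0):
    """Scale normalization: weight = target / mean |loss| over the warm-up run."""
    weights = {}
    for name, values in warmup_history.items():
        mean_mag = sum(abs(v) for v in values) / len(values)
        weights[name] = target / mean_mag  # weighted loss is ~target in magnitude
    return weights


def update_running_mean(running, new_values, beta=0.5):
    """Exponential moving average of loss magnitudes (beta = 0.5 is assumed)."""
    return {name: beta * running[name] + (1.0 - beta) * abs(new_values[name])
            for name in running}


# Hypothetical warm-up magnitudes spanning several orders of magnitude,
# including terms that only become active in Stages 2 and 3.
warmup = {
    "msam": [0.12, 0.10, 0.11],
    "re": [3.0, 2.8, 3.2],
    "tv": [0.004, 0.005, 0.006],
    "ms_ssim": [0.30, 0.28, 0.32],
    "laplacian": [0.05, 0.04, 0.06],
    "log_cosh": [0.9, 1.1, 1.0],
}
weights = init_loss_weights(warmup)
```

Because terms that are not yet active are still measured during warm-up, their weights are already available when those terms are switched on in later stages.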

3.5. Evaluation Metrics

To comprehensively assess the spectral compensation performance, we employ a suite of established evaluation metrics commonly used in hyperspectral imaging analysis. The metrics are categorized into spatial-domain and spectral-domain measures to provide both perceptual and quantitative assessments. Reference-based metrics are computed with respect to high-signal observations serving as the radiometric benchmark, derived from overlapping acquisitions under favorable illumination and geometrically co-registered to ensure pixel-level correspondence with the compensated low-signal test data.

3.5.1. Spatial-Domain Metrics

Peak Signal-to-Noise Ratio (PSNR)

PSNR quantifies reconstruction fidelity as the ratio of the maximum possible signal power to the power of the reconstruction error (mean squared error). PSNR is computed over the whole image and expressed in decibels (dB); higher values indicate better reconstruction fidelity. An improvement of 1–2 dB in PSNR typically corresponds to a visually discernible enhancement [80].
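For reference, PSNR = 10 log10(MAX² / MSE). The sketch below computes it for flattened pixel sequences; taking MAX as the maximum of the reference data (rather than a fixed nominal peak) is an assumption, since the normalization applied to M3 reflectance is not stated here. In HSI work, MPSNR is commonly the average of per-band PSNR values; whether the paper averages over bands or over test samples is not specified in this section.

```python
import math


def psnr(reference, estimate, peak=None):
    """PSNR in dB between two equal-length sequences of pixel values."""
    if peak is None:
        peak = max(reference)  # assumption: data-dependent peak value
    mse = sum((r - e) ** 2 for r, e in zip(reference, estimate)) / len(reference)
    if mse == 0:
        return math.inf
    return 10.0 * math.log10(peak ** 2 / mse)


def mean_psnr(ref_bands, est_bands, peak=None):
    """Average of per-band PSNR values (one common definition of MPSNR)."""
    values = [psnr(r, e, peak) for r, e in zip(ref_bands, est_bands)]
    return sum(values) / len(values)
```

Because PSNR is logarithmic in MSE, a 1 dB gain corresponds to roughly a 21% reduction in MSE (10^(−0.1) ≈ 0.79), and a 2 dB gain to roughly 37%.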

Feature Similarity Index Measure (FSIM)

FSIM evaluates the preservation of perceptually significant spatial features, such as structural information and edge integrity. Computed over the entire image, FSIM quantifies the perceptual similarity between reference and processed images by combining phase congruency and gradient magnitude [81]. FSIM values range from 0 to 1, with higher values indicating better preservation of salient features and edge structures.

3.5.2. Spectral-Domain Metrics

Mean Relative Absolute Error (MRAE)

This metric quantifies spectral deviation by averaging, over all pixels and bands, the absolute difference between reconstructed and reference values divided by the reference value. The result is a dimensionless indicator of spectral distortion, with lower values signifying higher fidelity and 0 indicating perfect recovery. Because each error is normalized by the true signal intensity, MRAE is particularly sensitive to errors in low-intensity spectral regions [82], which are critical for lunar polar exploration.
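MRAE, as described above, can be sketched over flattened pixel-band entries. Two assumptions are made: a small epsilon guards against division by zero in fully dark reference pixels (not mentioned in the text), and the result is scaled by 100, assuming that the values reported later (e.g., 17.54) are percentages, which this section does not state explicitly.

```python
def mrae(reference, estimate, eps=1e-8, as_percent=True):
    """Mean relative absolute error over all pixel/band entries.

    reference, estimate: equal-length flat sequences (pixels x bands flattened).
    eps (assumption) avoids division by zero for zero-valued reference entries.
    as_percent (assumption) scales the result by 100.
    """
    total = sum(abs(e - r) / (abs(r) + eps) for r, e in zip(reference, estimate))
    value = total / len(reference)
    return 100.0 * value if as_percent else value
```

Because each error is divided by the reference value, an absolute error of 0.01 in a pixel with reflectance 0.01 counts ten times more than the same error in a pixel with reflectance 0.1, which is why MRAE emphasizes low-signal regions.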

Erreur Relative Globale Adimensionnelle de Synthèse (ERGAS)

ERGAS is a dimensionless, image-level error metric that quantifies the overall spectral quality of the reconstructed image, with heightened sensitivity to band-to-band spectral distortions. It aggregates the root mean square error (RMSE) of each spectral band, normalized by that band's mean reference value, across all bands. Lower ERGAS values indicate reduced spectral distortion, with 0 representing perfect reconstruction. ERGAS is widely adopted in HSI applications because it is sensitive to spectral artifacts and subtle spectral variations that spatial-domain metrics may overlook [83].
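A sketch of the standard ERGAS definition, ERGAS = 100 · (h/l) · sqrt((1/B) Σ_b (RMSE_b / μ_b)²), where μ_b is the mean reference value of band b and B is the number of bands. The resolution ratio h/l comes from pan-sharpening; since signal compensation does not change spatial resolution, it is set to 1 here, which is an assumption about how the metric was adapted.

```python
import math


def ergas(ref_bands, est_bands, ratio=1.0):
    """ERGAS over B bands; each band is a flat sequence of pixel values.

    ratio: h/l resolution ratio from pan-sharpening (assumed 1 for compensation).
    """
    terms = []
    for ref, est in zip(ref_bands, est_bands):
        rmse = math.sqrt(sum((r - e) ** 2 for r, e in zip(ref, est)) / len(ref))
        mu = sum(ref) / len(ref)  # mean reference value of this band
        terms.append((rmse / mu) ** 2)
    return 100.0 * ratio * math.sqrt(sum(terms) / len(terms))
```

Because each band's RMSE is normalized by its mean, an RMSE of 0.1 in a band with mean 1.0 weighs the same as an RMSE of 0.2 in a band with mean 2.0.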

4. Experimental Results

To validate the effectiveness of the proposed framework, we conducted comprehensive experiments comprising comparative analysis and real-data evaluations. To the best of our knowledge, this is the first study to explicitly integrate spatial–spectral information for M3 HSI signal compensation in low-illumination lunar polar regions. In the absence of dedicated fusion architectures tailored for this task, we adopt several representative terrestrial HSI restoration networks as architectural baselines to investigate how conventional spatial–spectral modeling units perform under lunar polar degradation conditions.

We benchmarked SSF-3DSC against five representative deep-learning baselines: three CNN-based restoration backbones widely used for terrestrial hyperspectral denoising (3D-DnCNN [34], HSID-CNN [35], and HSI-SDeCNN [36]); a diffusion-based generative model representing recent probabilistic restoration approaches (conditional DDPM, cDDPM [44]); and paired-CycleGAN [24], the first deep-learning method for spectral-domain compensation in lunar HSIs, included to examine the contribution of spatial information under low-illumination conditions.

To ensure a fair comparison, all baselines were re-implemented and trained from scratch under identical conditions, including the same paired lunar dataset, data splits, preprocessing procedures, and optimization settings (e.g., optimizer configuration and learning-rate schedules). Because a validated degradation model for lunar polar HSIs is not yet available, we refrain from adopting standard terrestrial simulation protocols for either training or performance evaluation. Unlike terrestrial denoising benchmarks that assume idealized noise models under stable illumination, M3 observations in shadowed polar regions are governed by photon-starved, illumination-dependent, and inherently non-linear degradations induced by extreme illumination variability and topographic occlusion. Consequently, conventional synthetic-noise simulations are not physically faithful to this context. Therefore, we benchmarked all methods on a domain-faithful paired dataset derived from real lunar observations, ensuring that both training and assessment reflect the operational constraints of lunar polar exploration. Performance was evaluated using quantitative metrics, visual comparisons, and spectral profile analyses, with detailed results presented in the following subsections.

4.1. Performance Evaluation of Spatial–Spectral Quality Metrics

Performance was evaluated on the test set using four metrics: mean PSNR (MPSNR), mean FSIM (MFSIM), MRAE, and ERGAS. Higher MPSNR and MFSIM values indicate better spatial similarity, whereas lower MRAE and ERGAS values signify higher spectral fidelity. These baselines were selected to cover representative restoration paradigms, including widely adopted supervised CNN denoisers (3D-DnCNN, HSID-CNN, and HSI-SDeCNN) and a diffusion-based probabilistic model (cDDPM), thereby facilitating a systematic evaluation of how standard terrestrial priors generalize to photon-starved M3 polar observations under paired real-observation supervision.

Table 3 lists the compensation results of different networks on the test set, where the best performance for each metric is marked in bold, and the second-best is underlined.

The results in Table 3 demonstrate that our proposed framework outperforms the 3D input-based networks [34,35,36], the spectral dimension-exclusive compensation approach [24], and the diffusion-based cDDPM baseline [44] across all evaluation metrics. Specifically, our proposed network achieves the highest MPSNR (27.68 dB) and MFSIM (0.9452), improvements of 1.85 dB and 0.0654, respectively, over the strongest baseline (3D-DnCNN). In contrast, HSI-SDeCNN and HSID-CNN lag behind in spatial performance, with MPSNRs of 24.38 dB and 22.86 dB and MFSIMs of 0.8024 and 0.8038, respectively. Paired-CycleGAN, trained exclusively on one-dimensional spectral data, exhibits limited spatial fidelity (MPSNR = 22.99 dB, MFSIM = 0.7937) because its input contains no spatial information. These notably low spatial quality metrics underscore the critical importance of incorporating spatial contextual features in HSI compensation tasks. The cDDPM performs worst among all methods, with extremely low spatial scores (MPSNR = 6.54 dB, MFSIM = 0.659), indicating that the diffusion prior fails to recover meaningful spatial structures under the photon-starved, data-limited conditions of the lunar polar regions.

Concurrently, the proposed framework excels in spectral fidelity restoration. SSF-3DSC achieves the lowest (best) ERGAS of 24.42 and MRAE of 17.54, reducing relative spectral distortion by over 15% compared with 3D-DnCNN (ERGAS = 28.78; MRAE = 21.58) and by more than 40% compared with HSID-CNN and HSI-SDeCNN. The diffusion-based cDDPM exhibits the most severe spectral distortion, yielding the highest ERGAS (168.43) and MRAE (148.23) among all evaluated methods. Notably, paired-CycleGAN, a network optimized exclusively for spectral fidelity, yields the second-best spectral restoration performance (ERGAS = 27.10; MRAE = 20.67). The advantage of SSF-3DSC over paired-CycleGAN can be attributed to the spectral contextual information from neighboring pixels that SSF-3DSC explicitly incorporates through its spatial–spectral modeling architecture. Compared with approaches that use only spectral information, the mutual spatial constraints from adjacent pixels enable SSF-3DSC to mitigate both global spectral distortion (quantified by ERGAS) and pixel-wise relative amplitude errors (measured by MRAE).
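The relative reductions quoted above follow directly from the reported values. The short check below recomputes them for 3D-DnCNN and also recovers the 3D-DnCNN MPSNR and MFSIM implied by the stated gains; the HSID-CNN and HSI-SDeCNN spectral values are given only in Table 3 and are not repeated here.

```python
def relative_reduction(baseline, proposed):
    """Fractional reduction of an error metric (lower is better)."""
    return (baseline - proposed) / baseline


# Values reported in this section (SSF-3DSC vs. 3D-DnCNN).
ergas_gain = relative_reduction(28.78, 24.42)  # ERGAS
mrae_gain = relative_reduction(21.58, 17.54)   # MRAE
dncnn_mpsnr = 27.68 - 1.85                     # implied 3D-DnCNN MPSNR (dB)
dncnn_mfsim = 0.9452 - 0.0654                  # implied 3D-DnCNN MFSIM

print(f"ERGAS reduction: {ergas_gain:.1%}, MRAE reduction: {mrae_gain:.1%}")
print(f"3D-DnCNN MPSNR ~ {dncnn_mpsnr:.2f} dB, MFSIM ~ {dncnn_mfsim:.4f}")
```

Both spectral reductions (about 15.1% for ERGAS and 18.7% for MRAE) exceed 15%, consistent with the text, and the implied 3D-DnCNN scores are 25.83 dB and 0.8798.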

The synergistic performance achieved across spatial–spectral quality metrics demonstrates that our framework effectively balances spatial consistency with spectral fidelity, comprehensively validating the effectiveness of our proposed network architecture for low-signal compensation in M3 data.

4.2. Performance Evaluation of Visual Quality

To provide an intuitive visualization of the spatial effects achieved by the various network architectures for lunar polar signal compensation, we integrated the spectra over the full wavelength range to generate grayscale images (Figure 11). Supplementary Figure S1 provides enlarged views of representative regions to highlight fine-scale detail preservation. Unlike false-color composites built from selected bands, these grayscale images avoid the subjective bias associated with arbitrary band selection. A unified contrast stretch was applied to both the compensated results and the original images so that the full-band energy level of each pixel after compensation can be assessed qualitatively. The eight cases shown in Figure 11 span a broad range of lunar surface conditions, from deep-shadow, low-SNR areas and illumination boundaries to texture-rich terrains and heterogeneous regions with varying contrast, enabling visual assessment under diverse spatial and illumination conditions.
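The display procedure can be sketched as follows, under assumptions not stated in the text: "spectral integration" is taken as a plain sum of reflectance over all bands (a trapezoidal integral over wavelength would differ only by band-spacing weights), and the "unified contrast stretch" as a single linear stretch whose limits are percentiles pooled over all images being compared. The default percentiles and all function names are illustrative, not the authors' settings.

```python
def integrate_bands(cube):
    """Full-band integration: cube is [band][pixel]; returns one value per pixel."""
    return [sum(values) for values in zip(*cube)]


def percentile(values, q):
    """Simple nearest-index percentile (q in [0, 100]) of a list of numbers."""
    ordered = sorted(values)
    idx = round(q / 100.0 * (len(ordered) - 1))
    return ordered[idx]


def unified_stretch(images, low_q=2.0, high_q=98.0):
    """One linear stretch with limits shared by all images, clipped to [0, 1]."""
    pooled = [v for img in images for v in img]  # pool pixels from every image
    lo, hi = percentile(pooled, low_q), percentile(pooled, high_q)
    scale = 1.0 / (hi - lo) if hi > lo else 0.0
    return [[min(max((v - lo) * scale, 0.0), 1.0) for v in img] for img in images]
```

With a separate stretch per image, a dark low-SNR scene would be brightened to the same range as its reference, hiding exactly the brightness differences the comparison is meant to reveal; shared limits preserve them.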

Figure 11a shows the low-SNR images, while Figure 11b shows the paired high-signal images. Figure 11c–h displays the images obtained after applying the different compensation methods. To facilitate systematic visual assessment, we annotate representative topographic features using colored bounding boxes. White boxes delineate salient geomorphological ROIs in the paired dataset. Colored boxes provide a qualitative guide to restoration quality: red indicates failure (no recognizable features), yellow indicates partial restoration (coarse structures without fine-scale texture), and green indicates successful restoration with preserved fine-grained morphology. This color coding serves solely as a visual guide and does not constitute a quantitative categorization; visual impressions should be interpreted together with the objective metrics in Table 3 for a complete evaluation.

Visual inspection reveals that 3D-DnCNN and the proposed method outperform all other evaluated networks in overall compensation quality. HSI-SDeCNN reconstructs only conspicuous brightness modulations associated with prominent topographic variations (e.g., in Cases 4 and 5) and fails to reproduce fine-scale topographic details, particularly the subtle terrain undulations in Case 2. In comparison, 3D-DnCNN restores more detailed topographic relief (particularly in Cases 4, 5, and 6), but it still struggles to reconstruct fine textural details and other smaller-scale morphological features (Cases 1 and 3). Paired-CycleGAN, trained exclusively on one-dimensional spectral data without spatial context, produces results with scattered spatial noise artifacts that obscure most characteristic topographic features. Despite these artifacts, paired-CycleGAN approximates the overall image brightness (grayscale intensity) of the references more closely than the three CNN-based networks, indicating better fidelity in restoring the integrated spectral intensity per pixel, consistent with its demonstrated effectiveness in mineral-detection applications [24]. The diffusion-based cDDPM baseline performs worst: pervasive high-frequency granular noise nearly precludes the reconstruction of any recognizable topographic feature. This result suggests that the learned diffusion prior struggles to model the signal-dependent degradation patterns characteristic of photon-starved and data-scarce lunar polar observations. In contrast, the proposed method yields results that are notably consistent with the reference data in multi-scale topographic features, textural details, and global brightness. For instance, it reconstructs fine-scale features, including the wrinkle ridges in Case 6, the slope in the lower-left portion of Case 1, and detailed topographic variations in Case 4. We attribute this restoration capability to the MSA module’s integration of multi-scale spatial features, coupled with the joint optimization of the MS-SSIM and Laplacian-pyramid losses. Although the proposed method compensates the signal effectively, the cascaded 3D convolutions and the MS-SSIM/Log-Cosh losses introduce a mild spatial smoothing effect. This tendency is mitigated by the residual formulation of C3D-RCM and the Laplacian-pyramid objective, which guide the network to suppress noise rather than blur structural features, thereby preserving meso-scale topographic boundaries (e.g., crater rims, ridges, and slopes) at the M3 OP2C resolution. Compared with the other evaluated approaches, the proposed method better preserves fine-scale morphological details and achieves superior overall reconstruction quality.

Overall, the superior visual fidelity achieved by SSF-3DSC highlights its potential to improve the interpretability of HSI data acquired under the low-illumination conditions of the lunar poles.

4.3. Performance Evaluation of Spectral Profiles

The fidelity of spectral signatures is paramount for the robust interpretation of HSI, particularly in lunar missions targeting objectives such as quantitative mineralogical mapping, hydroxyl/water-ice abundance estimation, and the elucidation of geological evolution. Accurate spectral compensation is the cornerstone for discriminating the physicochemical properties of diverse lunar surface materials under low-illumination conditions, where attenuated signals compromise the spectral characteristics of shadowed terrain and PSRs near the lunar poles.

To evaluate the spectral restoration capability of various network architectures, a comparative analysis of representative pixel spectral profiles was conducted.

Figure 12 presents the band-wise reflectance profiles at pixel (29, 9) of representative test samples for HSI-SDeCNN, HSID-CNN, 3D-DnCNN, paired-CycleGAN, and our proposed SSF-3DSC. The vertical axis shows reflectance, and the horizontal axis shows the band index of the M3 spectral channels. Because of its substantial spectral distortion, the cDDPM baseline is excluded from the plots so that it does not obscure the other profiles; its inability to reconstruct reliable spectral signatures has already been demonstrated by the quantitative evaluation in Section 4.1. As illustrated, the spectral profile compensated by the proposed network (blue dashed line) shows the highest fidelity to the high-signal reference (red solid line). In contrast, all competing networks introduce varying degrees of spectral distortion, including over- or underestimation of overall reflectance levels and artificial fluctuations in the spectral profiles. Specifically, HSID-CNN (green dashed line) exhibits pronounced spectral oscillations and spurious absorption bands; HSI-SDeCNN (brown dashed line) and 3D-DnCNN (purple dashed line) show substantial deviations in spectral morphology and absorption band positions relative to the reference spectrum (e.g., Cases 2, 4, and 8), while also introducing systematic reflectance bias through over- or underestimation (e.g., Cases 1, 3, and 4). Although paired-CycleGAN (orange dashed line) retains varying degrees of reflectance bias (Cases 1, 2, and 7), it clearly outperforms the aforementioned networks by more accurately restoring global spectral morphology and local absorption band centers, making it the closest competitor to SSF-3DSC. This reflectance bias likely stems from its single-pixel processing, which forgoes the stabilizing influence of spatial regularization. In contrast, SSF-3DSC exploits spatial context from neighboring pixels to impose structural constraints that suppress fluctuations in reflectance levels. The superior spectral fidelity of SSF-3DSC relative to the purely spectral paired-CycleGAN underscores the critical role of spatial context in robust hyperspectral signal reconstruction.

Combined with the spatial assessments in Section 4.2, these results further substantiate our network’s comprehensive signal-compensation capability in the lunar polar setting, which is essential for reliable lunar surface characterization under challenging illumination conditions and in low-signal regions. The compared baselines are strong and widely adopted restoration backbones, so our results should be interpreted through the lens of domain specificity. The observed performance gap most likely reflects domain-specific degradations: lunar polar observations exhibit distinct, illumination-driven, and signal-dependent effects. By explicitly incorporating spatial–spectral fusion mechanisms tailored to this setting, SSF-3DSC is better suited to lunar signal compensation than general-purpose restoration networks, yielding more reliable compensation under lunar polar conditions.

4.4. Ablation Study

To validate the contribution of each component of our proposed architecture to signal-compensation performance, we conducted a comprehensive ablation study examining the individual and combined effects of these components. Because the MS-SSIM, Laplacian-pyramid, and Log-Cosh losses are progressively incorporated into Stages 2 and 3 with distinct roles (Section 3.3.2 and Section 3.3.3), our ablation analysis focuses primarily on the architectural modules and the staged-training strategy, while the effects of these loss terms are interpreted through their stage-wise objectives: structural similarity, multi-scale detail preservation, and robust end-to-end optimization, respectively. As detailed in Section 3.1, our architecture consists of three components (SCM, MSA, and C3D-RCM), which are designed, respectively, to capture spectral-dimensional representations, to capture multi-scale spatial representations, and to perform spatial–spectral feature extraction and reconstruction of HSI cubes. Furthermore, end-to-end training was included in the ablation framework to assess the efficacy of our staged training strategy. The effectiveness of both the architectural components and the training methodology was evaluated using visual assessment and quantitative performance metrics.

Table 4 summarizes the quantitative performance of the ablation experiments across architectural module and training-strategy configurations, while Figure 13 and Figure 14 illustrate the corresponding spatial–spectral reconstruction effects. The complete architecture incorporating all three modules (SCM, MSA, and C3D-RCM) with staged training achieves the best performance in both visual quality (Figure 13h) and all evaluation metrics (last column of Table 4). Specifically, this configuration attains the highest PSNR and FSIM and the lowest ERGAS and MRAE, while appearing most similar to the ground truth (Figure 13b vs. Figure 13h). The ablation study shows that removing any individual module consistently degrades model performance. In particular, eliminating the SCM severely degrades spectral fidelity, with ERGAS deteriorating to 34.07 and MRAE increasing to 25.99, demonstrating the fundamental importance of the SCM for spectral compensation and restoration. The spatial domain also exhibits substantial detail loss (Figure 13c). Similarly, though to a lesser extent, excluding the MSA component moderately reduces spatial reconstruction quality (PSNR = 26.63 dB, FSIM = 0.9291), demonstrating the importance of multi-scale attention mechanisms for effectively capturing hierarchical spatial features. Although this configuration achieves the second-best spatial visual performance, Figure 13d appears blurrier than both the proposed method and the ground truth, a degradation closely associated with the loss of the multi-scale spatial attention features provided by the MSA module. Removing the C3D-RCM module causes a slight performance degradation (PSNR = 27.02 dB, FSIM = 0.9410, ERGAS = 25.98, MRAE = 18.45); because this module is primarily responsible for reconstructing both spectral features and multi-scale spatial features, the spatial degradation manifests as increased residual spatial artifacts (noisy pixels, as shown in Figure 13e). Finally, the training strategy itself plays a crucial role. With all three modules in place, switching from staged training to end-to-end training causes dramatic deterioration in all quality metrics and yields the poorest spatial consistency among all ablation configurations (Figure 13g). This substantial gap validates the effectiveness of the progressive learning strategy in optimizing the multi-module architecture for complex restoration tasks involving low-illumination lunar HSIs.

These results highlight the complementary nature of our proposed modules and training strategy: their full integration outperforms every subset combination. This synergy indicates that each module addresses a distinct aspect (spectral characteristics, multi-scale spatial details, or feature reconstruction), collectively enabling more accurate and robust signal compensation. Note that when a specific module was ablated, any loss terms designed exclusively for or tightly coupled with that module were also removed. Isolating the effect of removing these loss terms from the effect of removing the module itself is beyond the scope of this study.

5. Discussion

The preceding sections have rigorously assessed the performance of the proposed network through quantitative metrics, visual inspection, spectral-profile analysis, and ablation studies. This discussion now emphasizes the framework’s practical applicability at regional scales, including the examination of spatial consistency and mineralogical fidelity in previously unsampled test regions, as well as the broader scientific implications, particularly the substantial expansion of usable data coverage in the scientifically critical lunar polar regions.

5.1. Evaluation Based on Spatial Consistency

Recent lunar exploration missions, such as NASA’s Artemis program [84,85,86] and China’s CE-7 mission [87,88,89], have increased scientific interest in potential landing zones within the lunar south polar region. Among these scientifically significant areas, de Gerlache Rim 1 and Connecting Ridge Extension were initially identified by NASA in 2022 as 2 of the 13 candidate landing regions for the Artemis III mission [66]. As described in Section 2.3, these regions were designated as independent test zones because of their scientific importance and the availability of both high- and low-signal M3 HSI coverage, providing an ideal testbed for evaluating the spatial consistency of the proposed signal compensation method at regional scales.

To demonstrate the regional-scale effectiveness of our approach, Figure 15 compares low-SNR M3 images acquired under challenging low-illumination conditions with our model’s signal-compensated outputs and the corresponding high-SNR reference imagery for the two candidate landing regions. The rectangular frames delineate the boundaries of de Gerlache Rim 1 and the Connecting Ridge Extension, where coverage corresponds to overlapping zones of co-registered low- and high-SNR observations; the blue-filled areas mark regions without paired low- and high-SNR coverage. For regional signal compensation, an overlapping patch-based processing strategy was employed to mitigate edge artifacts. The target regions were partitioned into 32 × 32 pixel patches with a three-pixel overlap, and each patch was processed independently by our network. The processed patches were then reassembled using gradient-based weighted blending, implemented as normalized weighted averaging within the overlapping zones, to ensure spatial continuity.
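The patch-based reassembly can be sketched for a single 2D band as follows. Assumptions: "gradient-based" weighting is read as linear weight ramps across the three-pixel overlap (weights stay positive everywhere, so the normalization never divides by zero), the last patch in each direction is shifted to end flush with the region border, and `model` is a hypothetical stand-in for the trained network. With an identity model, the blended output reproduces the input up to floating-point rounding, a useful sanity check for seam-free stitching.

```python
def tile_starts(length, patch=32, overlap=3):
    """Start offsets with stride patch - overlap; the last tile ends at the border."""
    if length <= patch:
        return [0]
    starts = list(range(0, length - patch, patch - overlap))
    starts.append(length - patch)
    return starts


def ramp_weights(patch=32, overlap=3):
    """1D weights rising linearly over `overlap` pixels at each patch edge."""
    return [min(i + 1, patch - i, overlap + 1) / (overlap + 1) for i in range(patch)]


def compensate_region(image, model, patch=32, overlap=3):
    """Process overlapping patches independently and blend by normalized weights."""
    h, w = len(image), len(image[0])
    assert h >= patch and w >= patch, "sketch assumes the region spans one patch"
    acc = [[0.0] * w for _ in range(h)]
    wsum = [[0.0] * w for _ in range(h)]
    ramp = ramp_weights(patch, overlap)
    for r0 in tile_starts(h, patch, overlap):
        for c0 in tile_starts(w, patch, overlap):
            tile = [row[c0:c0 + patch] for row in image[r0:r0 + patch]]
            out = model(tile)  # hypothetical network call on one patch
            for i in range(patch):
                for j in range(patch):
                    wt = ramp[i] * ramp[j]
                    acc[r0 + i][c0 + j] += wt * out[i][j]
                    wsum[r0 + i][c0 + j] += wt
    # Normalized weighted average; every pixel is covered by at least one tile.
    return [[a / s for a, s in zip(ra, rs)] for ra, rs in zip(acc, wsum)]
```

Ramping the weights toward each patch edge means that, inside an overlap, the pixel nearer a patch's interior dominates, which suppresses the seams that plain averaging or hard cropping can leave.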

Figure 15b presents the regional-scale signal compensation results, revealing substantial improvements in image quality and topographic clarity. The compensated imagery exhibits strong visual correlation with the high-SNR reference data (Figure 15c), and the restored topographic features agree closely with the underlying LRO LOLA digital terrain model (DTM). This stands in stark contrast to the original low-SNR observations (Figure 15a), where adverse illumination conditions have severely attenuated signal quality, rendering topographic features nearly indiscernible.

Specifically, the rim and interior walls of de Gerlache crater show close correspondence between the compensated results (Figure 15b) and the reference imagery (Figure 15c), with the compensated crater rim profile closely matching the underlying DTM topography. Furthermore, our compensation results capture the detailed topographic relief at the base of the Connecting Ridge Extension; these geological features are almost entirely obscured in the original low-SNR data. Because the displayed images are spectrally integrated and share a uniform contrast stretch, the brightness similarity between our compensated results and the ground-truth observations further supports the model’s fidelity in restoring the integrated spectral signal intensity.

Nevertheless, despite the generally high compensation quality achieved across this region, a notable exception occurs in the pitted terrain northwest of Spudis crater, in the upper-right portion of the Connecting Ridge Extension candidate landing site. This region presents uniquely challenging conditions: the compensated imagery displays persistent ambiguity and blurring artifacts that suggest fundamental limitations in signal recovery. This area is the most poorly illuminated region in the original low-SNR imagery and exhibits significant noise artifacts that persist even in its paired high-SNR counterpart. These observations suggest that, owing to its low-lying topography and the inherent challenges of M3’s polar observation conditions, this region yielded no high-quality observations throughout the entire OP2C period. Consequently, even the data designated as ‘high-SNR’ for this locale appear compromised, and the corresponding low-SNR pixels likely approach the critical lower boundary of the SNRI threshold used for HSI quality evaluation. Subsequent research should therefore examine how SNRI threshold selection influences the reliability and robustness of spectral compensation methods.

In summary, the evaluation of spatial consistency validates the efficacy of our proposed architecture for regional-scale lunar HSI compensation. The compensated results exhibit considerable visual correlation with high-SNR reference imagery, effectively restoring obscured geological features in challenging low-illumination conditions. Despite its limitations in instances of extreme signal degradation, the model demonstrates overall robustness in generating spatially coherent and topographically reliable imagery from compromised lunar HSIs.

5.2. Evaluation Based on Mineral Abundance Inversion

To validate the spectral fidelity of our SSF-3DSC framework in preserving mineralogical spectral signatures, we conducted a mineralogical inversion analysis in the Shackleton Crater region. This region is an ideal testing ground for spectral compensation, given its status as a high-priority scientific target with dual significance: PSRs that are candidate sites for water ice deposits [90] and exposures of ancient crustal material. The crater’s complex topography produces diverse illumination conditions, from direct solar illumination on the rims to scattered light in shadowed areas, enabling comprehensive validation of our compensation methodology.

Reflectance anomalies observed on the western wall of Shackleton Crater are typically associated with purest anorthosite (PAN), a lunar crustal rock composed of >98% plagioclase [91,92]. Plagioclase is highly reflective in the visible–NIR (especially around 1050 nm) and exhibits a characteristic Fe²⁺ absorption band near 1250 nm [93]. Consequently, plagioclase-rich (anorthositic) regions show elevated reflectance at 1050 nm and stronger absorption at 1249 nm, so the 1050/1249 nm reflectance ratio serves as a robust proxy for plagioclase abundance [21]: higher ratio values indicate a deeper 1250 nm absorption relative to the 1050 nm reflectance, a hallmark of plagioclase.
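As a minimal sketch of this band-ratio computation, the Python function below derives a 1050/1249 nm ratio map from a reflectance cube. The (rows, cols, bands) layout, the nearest-band selection, the toy band centres, and the small-denominator guard are illustrative assumptions, not the processing chain used to produce Figure 16.

```python
import numpy as np


def nearest_band(wavelengths_nm, target_nm):
    """Index of the band whose centre wavelength is closest to target_nm."""
    wavelengths_nm = np.asarray(wavelengths_nm, dtype=float)
    return int(np.argmin(np.abs(wavelengths_nm - target_nm)))


def plagioclase_ratio(cube, wavelengths_nm, num_nm=1050.0, den_nm=1249.0, eps=1e-6):
    """1050/1249 nm reflectance ratio for a (rows, cols, bands) cube.

    Higher values indicate deeper ~1250 nm absorption relative to 1050 nm,
    i.e. a relatively plagioclase-rich surface.
    """
    cube = np.asarray(cube, dtype=float)
    r_num = cube[..., nearest_band(wavelengths_nm, num_nm)]
    r_den = cube[..., nearest_band(wavelengths_nm, den_nm)]
    # Avoid dividing by (near-)zero reflectance; such pixels become NaN.
    ratio = r_num / np.maximum(r_den, eps)
    return np.where(r_den > eps, ratio, np.nan)


# Toy example with hypothetical band centres (nm) and reflectances.
wl = np.array([1010.0, 1050.0, 1090.0, 1249.0, 1290.0])
cube = np.full((1, 2, wl.size), 0.2)
cube[0, 0, 1] = 0.30  # 1050 nm reflectance of pixel (0, 0)
cube[0, 0, 3] = 0.25  # 1249 nm reflectance of pixel (0, 0)
cube[0, 1, 3] = 0.0   # unusable denominator at pixel (0, 1)
ratio = plagioclase_ratio(cube, wl)
print(ratio)  # pixel (0, 0) is about 1.2, pixel (0, 1) is NaN
```

Selecting the nearest band keeps the sketch independent of the exact channel centres of a given M3 product; a real workflow would use the instrument’s own wavelength table.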

Figure 16 presents a comparative analysis of plagioclase abundance maps: (a) raw low-SNR M3 images (0.1 < SNRI < 0.5), (b) the corresponding SSF-3DSC-compensated results, and (c) a reference mineralogical ratio map based on SELENE/Kaguya multiband imager (MI) observations (adapted from Haruyama et al. [91]). This Kaguya MI ratio map, acquired under direct solar illumination of Shackleton’s upper inner wall, provides a high-fidelity external benchmark for plagioclase distribution under optimal observational conditions. In all maps, a uniform color scale is applied in which warm (orange/red) tones mark high 1050/1249 nm ratio values (high plagioclase content) and cool (green/blue) tones mark low ratios (mafic-rich or plagioclase-poor terrain).

The SSF-3DSC compensated ratio map (Figure 16b) effectively reproduces the spatial mineralogical patterns of the MI reference (Figure 16c). In both datasets, the highest ratio values occur on the western inner wall of Shackleton Crater, corresponding to PAN exposures previously identified through LROC NAC observations [91]. This agreement extends throughout the crater region: the northwestern outer rim shows elevated plagioclase abundance (orange-yellow hues), while the outer regions of Shackleton Crater maintain consistently lower values (green). The compensated map preserves the same lateral variations as the MI reference, including the relative contrast between adjacent geological units. These results confirm that our compensation approach not only restores the overall signal but also retains the fine-scale spectral relationships essential for mineral mapping.

Conversely, the ratio map derived from the raw low-SNR imagery (Figure 16a) is dominated by noise and artifacts, with anomalous values and substantial spatial irregularities throughout the crater region. Instead of delineating clear geological units, it displays a chaotic distribution of randomly high and low ratio pixels, an expected outcome given the poor quality of low-illumination data. This chaotic pattern, together with geologically unrealistic abundance estimates (ratios exceeding 1.08 outside the crater rim), makes direct mineralogical interpretation of such signal-degraded data unreliable. The fragmentation follows directly from the poor data quality: without compensation, shadowed spectra produce spurious band depths that overwhelm the true signal.
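The mechanism behind these spurious values can be illustrated with a short Monte Carlo sketch, assuming additive Gaussian noise of fixed amplitude on both bands and a noise-free ratio of 1.2; the reflectance levels and noise amplitude are hypothetical. Dimming both bands by the same factor leaves the true ratio unchanged, but the scatter of the measured ratio grows sharply because the noisy 1249 nm denominator approaches zero.

```python
import numpy as np

rng = np.random.default_rng(0)


def ratio_spread(scale, noise_sigma=0.005, n=10_000):
    """Standard deviation of a noisy 1050/1249 nm ratio.

    Noise-free reflectances are 0.30 and 0.25 (true ratio 1.2). `scale`
    dims both bands equally, mimicking weaker illumination, while the
    additive Gaussian noise amplitude stays fixed (a simplifying assumption).
    """
    r1050 = 0.30 * scale + rng.normal(0.0, noise_sigma, n)
    r1249 = 0.25 * scale + rng.normal(0.0, noise_sigma, n)
    return float(np.std(r1050 / r1249))


bright = ratio_spread(1.0)   # well-illuminated rim
dim = ratio_spread(0.05)     # heavily shadowed wall, same true ratio
print(f"ratio std: bright={bright:.3f}, dim={dim:.3f}")
```

With these toy settings the dim case scatters many times more widely than the well-lit case, which is qualitatively the salt-and-pepper behaviour visible in uncompensated shadowed data.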

Despite the overall consistency of the compensation results, sparse anomalous pixels persist within the delineated region. These outliers, visible as scattered blue pixels in the central part of Figure 16b, lie beyond the western crater rim and contrast with the elevated plagioclase signatures of their geological surroundings. Such localized discrepancies probably arise from the fundamental challenge of processing extremely low-SNR data, where signal attenuation in certain pixels exceeds the compensation capacity of the current framework. Although these sparse anomalies are an inherent limitation of the method, they remain sufficiently isolated to preserve the integrity of the broader mineralogical interpretation. This finding underscores a practical principle: confidence in compensated data should scale with spatial coherence. Isolated pixels warrant careful scrutiny, whereas regionally consistent patterns provide more reliable mineralogical insights.
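One way to operationalize this principle is to flag pixels that disagree with their local neighbourhood. The sketch below is a hypothetical post-processing step, not part of SSF-3DSC: it marks a pixel when its ratio deviates from the 3 × 3 local median by more than k times the local median absolute deviation (MAD). The window size, k, and the MAD floor are assumptions that would need tuning on real maps.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def flag_isolated_pixels(ratio_map, window=3, k=3.0, mad_floor=1e-6):
    """Boolean mask of pixels that disagree with their local neighbourhood.

    A pixel is flagged when |value - local median| > k * local MAD, where the
    median and median absolute deviation (MAD) are taken over a window x window
    neighbourhood. Borders are handled by replicating edge pixels.
    """
    ratio_map = np.asarray(ratio_map, dtype=float)
    pad = window // 2
    padded = np.pad(ratio_map, pad, mode="edge")
    windows = sliding_window_view(padded, (window, window))  # (rows, cols, w, w)
    local_med = np.median(windows, axis=(-2, -1))
    local_mad = np.median(np.abs(windows - local_med[..., None, None]), axis=(-2, -1))
    # The floor keeps perfectly uniform neighbourhoods from yielding a zero threshold.
    return np.abs(ratio_map - local_med) > k * np.maximum(local_mad, mad_floor)


# Toy map: uniform plagioclase-rich ratio with one isolated low pixel.
ratio_map = np.full((5, 5), 1.10)
ratio_map[2, 2] = 0.90
flags = flag_isolated_pixels(ratio_map)
print(np.argwhere(flags))  # [[2 2]]
```

A median/MAD rule is used here because, unlike a mean/standard-deviation rule, a single outlier barely shifts the local statistics it is compared against.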

5.3. Usable-Coverage Expansion and Outlook

The analyses presented in the previous sections collectively demonstrate that our proposed deep learning framework significantly enhances the restoration quality and spectral fidelity of M3 HSIs acquired under challenging illumination conditions in the lunar polar regions. To quantify the practical impact of our approach, we assess the distribution of signal quality across the lunar south polar region by assigning each pixel the best (lowest) SNRI among repeated M3 observations.

Table 5 presents the proportional distribution of pixels across SNRI ranges within two latitudinal zones. High-quality observations (SNRI ≤ 0.1) constitute 90% of pixels poleward of 70°S, but this proportion drops sharply to 75% poleward of 80°S. More critically, moderately degraded pixels (0.1 < SNRI < 0.5), previously deemed unusable for quantitative analysis, represent 6.47% and 15.10% of the respective zones. These pixels, concentrated in topographically shadowed areas, contain crucial mineralogical information about the Moon’s polar geology that would remain inaccessible without spectral compensation.
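The statistics summarized in Table 5 can be reproduced in outline with the sketch below, assuming a stack of per-observation SNRI maps co-registered on a common polar grid (NaN where a pass has no coverage) and a matching latitude grid. The bin edges follow the SNRI ranges quoted in the text; assigning SNRI = 0.5 to the middle bin is an arbitrary choice, and the toy arrays are hypothetical.

```python
import numpy as np

# Bin edges follow the SNRI ranges quoted in the text; 0.5 itself falls in the
# middle bin because np.digitize(..., right=True) uses half-open (a, b] bins.
SNRI_EDGES = [0.1, 0.5]
SNRI_LABELS = ("SNRI <= 0.1", "0.1 < SNRI <= 0.5", "SNRI > 0.5")


def best_snri(snri_stack):
    """Per-pixel lowest (least noisy) SNRI over repeated observations.

    snri_stack: (n_obs, rows, cols), NaN where a pass did not cover a pixel.
    np.fmin ignores NaN, so a pixel is NaN only if no pass covered it.
    """
    return np.fmin.reduce(snri_stack, axis=0)


def snri_fractions(snri_map, lat_deg, lat_limit):
    """Fraction of covered pixels poleward of lat_limit (deg, negative = south) per bin."""
    sel = (lat_deg <= lat_limit) & np.isfinite(snri_map)
    idx = np.digitize(snri_map[sel], SNRI_EDGES, right=True)
    counts = np.bincount(idx, minlength=len(SNRI_LABELS))
    return counts / max(int(sel.sum()), 1)


# Toy data: two repeated passes over a 2 x 3 grid (values are hypothetical).
nan = np.nan
stack = np.array([
    [[0.05, 0.30, 0.80], [0.20, nan, 0.60]],
    [[0.50, 0.08, 0.70], [0.40, nan, 0.55]],
])
lat = np.array([[-75.0, -75.0, -75.0], [-85.0, -85.0, -85.0]])
best = best_snri(stack)
for limit in (-70.0, -80.0):
    print(limit, dict(zip(SNRI_LABELS, snri_fractions(best, lat, limit).round(3))))
```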

Building on this validated compensation capability, we systematically processed all pixels within the 0.1–0.5 SNRI range across the lunar south polar region (poleward of 70°S). This operational implementation expands the usable M3 coverage from pixels with SNRI ≤ 0.1 to those with SNRI < 0.5. The application aims not only to demonstrate the regional-scale restoration capability of our model but also to explore its utility in a real-world scientific context: a preliminary mineral abundance inversion in this newly accessible data regime.
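The coverage-expansion step can be sketched as a masked merge, assuming a scene-level `compensate` callable that stands in for the trained network (it receives the whole cube, as a spatial–spectral model would). Pixels with SNRI ≤ 0.1 keep their original spectra, pixels with 0.1 < SNRI < 0.5 take the compensated spectra, and all others are left as NaN. The function and argument names are hypothetical.

```python
import numpy as np


def expand_coverage(cube, snri, compensate, low=0.1, high=0.5):
    """Merge raw and compensated spectra according to per-pixel SNRI.

    cube: (rows, cols, bands) reflectance; snri: (rows, cols).
    compensate: hypothetical callable mapping a full cube to a compensated cube
    of the same shape (a stand-in for a trained spatial-spectral network).
    Returns the merged cube plus raw and expanded usability masks.
    """
    cube = np.asarray(cube, dtype=float)
    usable_raw = snri <= low                    # already high quality
    to_fix = (snri > low) & (snri < high)       # moderately degraded
    restored = compensate(cube)
    merged = np.where(usable_raw[..., None], cube, np.nan)
    merged = np.where(to_fix[..., None], restored, merged)
    return merged, usable_raw, usable_raw | to_fix


# Toy scene; the "model" just doubles reflectance as a placeholder.
snri = np.array([[0.05, 0.30], [0.70, 0.20]])
cube = np.ones((2, 2, 3))
merged, raw_ok, expanded_ok = expand_coverage(cube, snri, lambda c: 2.0 * c)
print(f"usable coverage: {raw_ok.mean():.0%} -> {expanded_ok.mean():.0%}")  # 25% -> 75%
```

Keeping the raw spectra wherever SNRI is already low means the network only ever alters pixels inside the range it was trained on, which matches the caution about extrapolating beyond 0.1 < SNRI < 0.5.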

This regional-scale analysis shows that the compensation approach substantially increases usable coverage in high-latitude scenes by incorporating moderate-SNR (SNRI: 0.1–0.5) observations, particularly within 80°S–90°S shadowed terrains, thereby providing a more data-rich basis for subsequent polar studies. By broadening the analyzable pixel set, the expanded dataset may support more reliable, fine-scale mapping in shadowed regions when combined with complementary observations (e.g., higher-resolution imagery or multi-sensor data). While detailed mineralogical interpretation of these newly accessible patches lies outside the present scope, the demonstrated coverage gain provides a practical foundation for future work, potentially facilitating more robust mineralogical mapping and resource assessments in high-latitude terrains.

5.4. Methodological Limitations and Future Directions

Comprehensive evaluation through quantitative metrics, visual assessments, and spectral-profile analyses (Section 4 and Section 5) demonstrates the effectiveness of SSF-3DSC. Despite these improvements, several limitations warrant attention.

First, because our paired-learning approach is trained on data within a fixed SNR range, the network cannot be expected to deliver compensation quality exceeding that of its reference observations; this is an inherent methodological boundary rather than a technical limitation addressable through architectural improvements. Therefore, the method should not be generalized to extremely low-SNR, deep-PSR spectra (typically SNRI > 0.5) or to application-level inferences derived from them (including volatile-related interpretation), where diagnostic absorption signatures may fall below the noise floor and lie outside the learned mapping. Second, isolated anomalous pixels persist sporadically within well-compensated regions, particularly in areas of extreme signal attenuation, so careful contextual inspection is required for detailed mineralogical interpretation. Third, the SNRI threshold, which sets the boundaries for compensation, directly affects both the coverage and the fidelity of recoverable data. Because higher SNRI typically corresponds to increasingly noise-dominated spectra, for which restoration reliability may degrade rapidly, results beyond our validated range (0.1 < SNRI < 0.5) should be interpreted with caution. However, an optimal, universally applicable threshold remains undetermined. Finally, because the model was trained exclusively on M3 OP2C data from the lunar south polar region, its generalization to highly heterogeneous terrains, the north polar region, and other hyperspectral sensors remains to be validated through systematic evaluation.

Future work should focus on refining SNRI thresholding for dataset construction and on characterizing the model’s performance across a continuum of SNR levels to establish clear reliability bounds. Furthermore, extending SSF-3DSC to the lunar north pole and to future hyperspectral instruments targeting shadowed craters would test the method’s generalizability and advance polar investigations, supporting more reliable water ice detection and resource prospecting. In addition, multi-sensor data fusion (e.g., combining optical HSI with thermal or SAR observations) is expected to further enhance the robustness of compensation in challenging illumination regimes. Methodologically, more advanced attention-guided spatial–spectral fusion backbones may yield additional gains in module synergy and overall performance.