1. Introduction

Mapping avalanche activities is a crucial component for aiding forecasting and risk mitigation in mountainous regions [

1]. Every year, more than 100 avalanche-related fatalities are reported across Europe, and numerous infrastructures, roads, and buildings are damaged by this phenomenon [

2]. In-field measurements are the most reliable option to quantitatively assess the area covered by an avalanche, but these are expensive, limited by accessibility, risky for observers, and unsuitable for broad and continuous monitoring [

3]. Remote sensing has therefore emerged as a valuable alternative, enabling safe, large-scale, and frequent monitoring of avalanche activity through the systematic acquisition of high-quality satellite imagery, particularly at high latitudes [

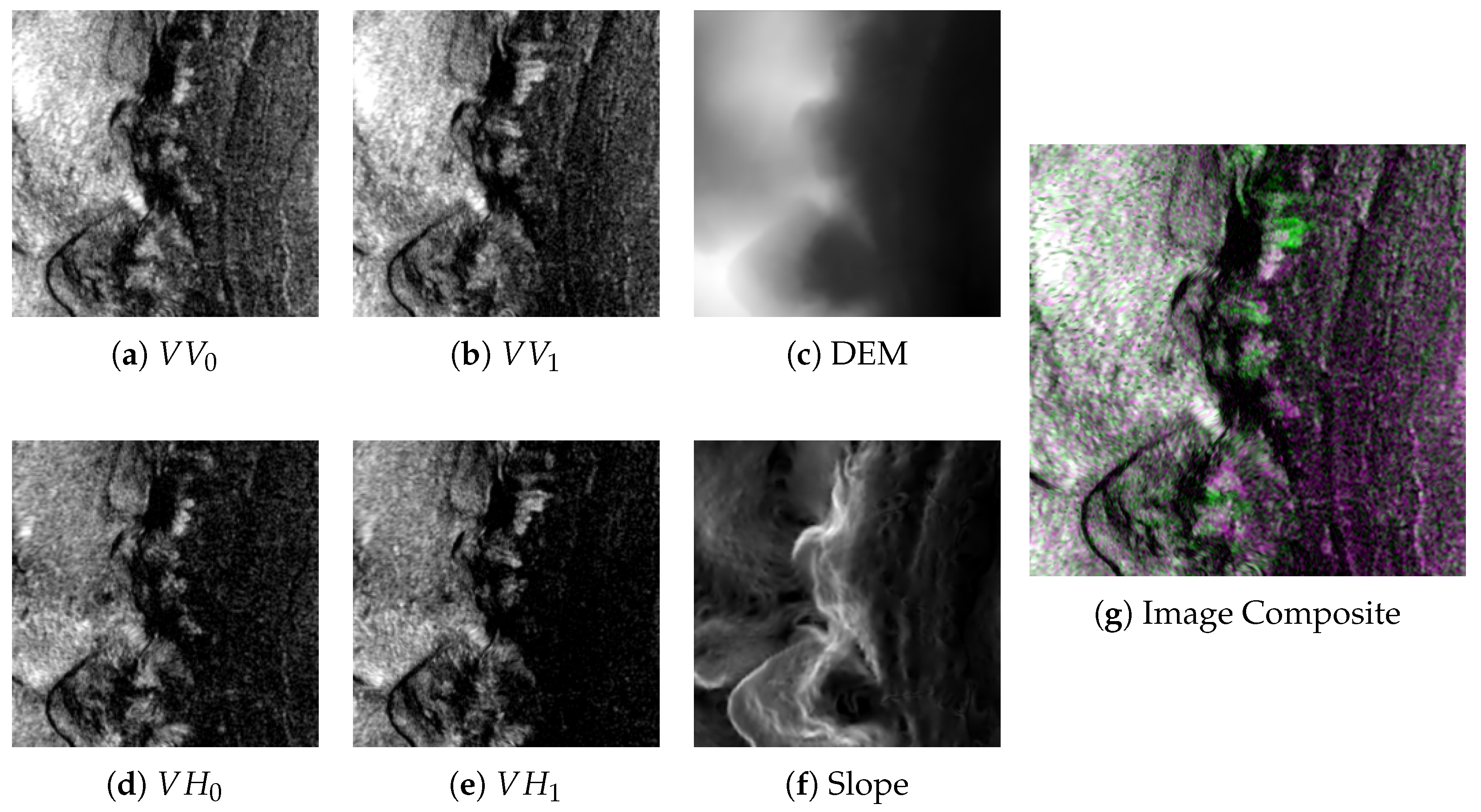

4]. Examples of SAR backscatter images and the corresponding expert masks are shown in

Figure 1.

Among the available remote sensing modalities, Synthetic Aperture Radar (SAR) data is especially well suited for avalanche detection, as it is independent from weather conditions and exhibits clear scattering patterns of snow debris [

5]. However, the manual identification of snow avalanches in SAR images is complex, time-consuming, and requires expert knowledge [

6]. To overcome these limitations, several automated approaches for continuous avalanche monitoring have been proposed in recent years [

7]. In particular, deep learning-based image segmentation methods currently represent the most promising solutions. Nevertheless, existing models still suffer from a high rate of false positives and fail to reach the same level of accuracy as human experts [

8]. Therefore, manual annotation of SAR images still represents the gold standard in the field [

4].

One of the major obstacles limiting further improvements in deep learning methods is the scarcity of labeled data, which is necessary to train more accurate models. Manual annotations are not only costly to produce but also prone to errors. Smaller avalanches are often overlooked, while imprecise drawing of the mask contours introduces label noise that negatively influences the performance of segmentation models [2,9]. These inaccuracies arise from a combination of annotator subjectivity, speckle noise in the SAR images, and ambiguity in interpreting the actual contours of the debris.

The goal of this study is to develop a tool that facilitates the annotation of snow avalanches and improves the quality of the segmentation masks in SAR imagery. To this end, we adapt the Segment Anything Model (SAM) [10] to the task of avalanche annotation and evaluate its integration into a semi-automatic annotation workflow. The SAM is a computer vision foundation model able to identify and segment any object in natural images with remarkable accuracy, requiring minimal user inputs in the form of prompts, namely simple clicks or bounding boxes (BBs) drawn around the objects of interest. Rather than relying on explicit class information, the SAM leverages prompt-based guidance to localize the target object, which makes it highly flexible and transferable to downstream tasks with minimal retraining. However, images from the SAR domain significantly differ from the RGB images on which the SAM was originally trained, preventing its straightforward application for avalanche segmentation in SAR images. Recent studies have demonstrated that adapting the SAM to specialized imaging modalities can substantially reduce annotation effort while maintaining high segmentation quality [11,12,13,14]. These findings motivate the exploration of the SAM as a semi-automated annotation tool for snow avalanches in SAR data.

The main contribution of our work is to extend the SAM to our use case by addressing the following key challenges:

Domain adaptation: Given the limited amount of training data, effective domain adaptation must be achieved by fine-tuning only a small subset of the SAM’s parameters.

Input adaptation: The standard SAM architecture can only process three-channel inputs, whereas raw SAR images consist of a different number of channels.

Improved prompt robustness: The SAM struggles with small targets and with imprecise prompts, e.g., a bounding box (BB) much larger than the object of interest. Identifying prompt strategies that improve robustness, especially for segmenting small avalanches, is therefore critical.

Training optimization: Given that even the lightest SAM variant exceeds 90 million parameters, the fine-tuning procedure must be carefully designed to be computationally feasible on commercial hardware.

To address these challenges, we (1) employ adapters [15] in combination with decoder fine-tuning for tackling domain shifts, (2) introduce a multiple encoder method inspired by [16] to process the six channels of SAR images, (3) propose a specific prompt strategy based on BBs, and (4) introduce a custom algorithm for training the SAM.

The experimental results demonstrate that our approach successfully adapts the SAM to the SAR domain, achieving competitive or superior performance compared with existing methods in the literature. Moreover, the proposed prompt strategy reduces the sensitivity to prompt precision, enabling performance comparable to prompt-free segmentation approaches when using a full-image (minimum precision) prompt. Finally, the integration of our method into a semi-automatic annotation tool significantly improves annotation efficiency, demonstrating its practical value for generating a large-scale inventory of snow avalanches.

1.1. Related Work

In this subsection, we provide a few essential notions on SAR remote sensing (Section 1.1.1), we mention the most effective solutions for segmenting avalanches in SAR images (Section 1.1.2), and then we introduce the SAM and SAM adaptation (Section 1.1.3 and Section 1.1.4, respectively).

1.1.1. Synthetic Aperture Radar Images for Avalanche Mapping

SAR images are obtained from the backscattered energy of the microwave signals emitted by the radar itself. Unlike optical sensors that are passive and capture reflected sunlight, SAR is an active technology that operates independently of sunlight or cloud cover. At typical operating frequencies (e.g., X, C, or L band), SAR sensors are highly sensitive to surface properties, such as roughness and moisture. Therefore, debris deposited by snow avalanches can be distinguished from the surrounding undisturbed snow because of their different roughness and structural properties, which result in increased backscatter and enable their detection in SAR images [2,9].

SAR sensors operate using different polarization modes, which describe the orientation of the transmitted and received electromagnetic waves. These are generally categorized into co-polarized signals (VV or HH), where the transmit and receive orientations are the same, and cross-polarized signals (VH or HV), where they are orthogonal. When full polarimetric measurements are available (all co- and cross-polarized channels), target decomposition methods can be used to interpret dominant scattering mechanisms [17,18,19]. Sentinel-1, however, provides only dual polarized data (either VV/VH or HH/HV); thus, standard decompositions are not directly applicable, and dual polarization variants are typically more approximate [20,21]. Therefore, in this work, we directly use VV and VH backscattering:

Human experts annotate avalanche debris by looking at RGB composites obtained by combining two co-registered SAR images taken at consecutive times $t_0$ and $t_1$. The time offset between two different passes can vary between 6 and 12 days. The most common RGB composites are given by

or created through specific algorithms like those described in Appendix B.1. Unfortunately, SAR images are affected by speckle noise, which negatively influences their use for many tasks. Speckle noise can be reduced during image preprocessing, e.g., by applying a Lee filter [22]. Modern noise removal approaches consist of pretraining deep learning models in a self-supervised way to reduce the impact of speckle noise on the final performance [23,24].

Useful auxiliary inputs that aid the human annotator in performing manual segmentation are the Digital Elevation Map (DEM) and the Meteorological Fields (Met) data. DEM images associate with each pixel a real value representing the elevation above sea level, expressed in meters. In the context of snow avalanche detection, DEM data can inform the model about areas where avalanches cannot occur, i.e., flat surfaces far from mountain slopes. The DEM can be used to derive the Slope Angle (SA), a topographical feature that can be used to identify release zones, i.e., those regions where avalanches can release debris. In particular, avalanche debris can be found in the proximity of slopes whose inclination ranges between 30° and 50° [9]. The SA is defined as follows:

$$\mathrm{SA}(p) = \arctan\left(\lVert \nabla \mathrm{DEM}(p) \rVert\right),$$

where $\mathrm{DEM}(p)$ is the elevation associated with pixel $p$ and $\nabla$ represents the gradient.
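The derivation of the slope angle from a DEM can be illustrated with a minimal NumPy sketch. It assumes that the SA is the arctangent of the gradient magnitude and that the gradient is estimated with finite differences on a 10 m grid; the function names `slope_angle_degrees` and `release_zone_mask` are hypothetical and not part of the original pipeline.

```python
import numpy as np

def slope_angle_degrees(dem: np.ndarray, pixel_size_m: float = 10.0) -> np.ndarray:
    """Slope angle in degrees for a 2D elevation grid expressed in metres."""
    # np.gradient returns the derivatives along rows (y) and columns (x);
    # passing the pixel size turns them into metres of rise per metre of run.
    dz_dy, dz_dx = np.gradient(dem.astype(np.float64), pixel_size_m)
    gradient_norm = np.hypot(dz_dx, dz_dy)
    return np.degrees(np.arctan(gradient_norm))

def release_zone_mask(slope_deg: np.ndarray, low: float = 30.0, high: float = 50.0) -> np.ndarray:
    """Boolean mask of pixels whose inclination lies in [low, high] degrees."""
    return (slope_deg >= low) & (slope_deg <= high)

# Synthetic inclined plane that rises 10 m for every 10 m pixel along x.
dem = np.tile(np.arange(5, dtype=float) * 10.0, (5, 1))
slope = slope_angle_degrees(dem)
candidates = release_zone_mask(slope)
```

On this synthetic plane every pixel has the same inclination, so the mask selects the whole grid, whereas a flat DEM would yield an empty mask.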

The relevance of Met data for avalanche detection has been highlighted in previous work [2,25,26], but its impact on performing automatic segmentation is still to be determined. The Met data consists of a time series associated with each SAR image, which spans the entire duration between the two satellite acquisitions.

1.1.2. Automated Avalanche Detection with Deep Learning

Automatic detection of snow avalanches with deep learning is still a relatively new field. Deep learning approaches must reach a certain degree of reliability before being deployed in avalanche warning services to assess the avalanche danger and support decision making in specific communities [2]. The most prominent deep learning model for avalanche segmentation, proposed by Bianchi et al. [9], is based on a U-Net with an encoder-decoder structure and skip connections, illustrated in Figure 2, which takes as input SAR images, the terrain slope, and other topographic features. While the model achieved performance superior to existing approaches in automated avalanche detection, it still produces several detections not corresponding to annotations in the test set. Although most of them were false alarms, some of the false positives were actual avalanches missed by the expert during labeling, highlighting a limitation in the manual annotation process.

1.1.3. Segment Anything Model

The SAM [10] is a computer vision foundation model that can identify and segment almost any object in natural RGB images, achieving remarkable accuracy. The SAM does not leverage any class information but instead relies on a minimal user prompt to isolate objects from their background, thus generating binary masks. This makes the SAM adaptable to many downstream tasks with minimal to no retraining, often through prompt engineering (given the right prompt, the model generalizes well even for unseen objects and different domains). Prompts can be points, BBs, masks, and text. BBs in particular are represented by a tuple $(x_1, y_1, x_2, y_2)$ that corresponds to the top-left and bottom-right corners.

The original SAM was trained using a data engine technique composed of three subsequent phases: assisted manual, semi-automatic, and fully automatic. The procedure progressively reduces the presence of a human in the loop, leading to the creation of the SA-1B dataset [10], the largest dataset currently available for segmentation on natural images.

The architecture of the SAM, depicted in Figure 3, is composed of the following:

Image Encoder: This is a classical pretrained Vision Transformer (ViT) [27] which takes as input 1024 × 1024 RGB images and outputs 256 × 64 × 64 embeddings.

Prompt Encoder: Prompts can be either sparse (points, boxes, and text) or dense (segmentation masks). Sparse prompts are mapped to 256-dimensional embeddings and used later for computing cross-attention with the image embedding in the decoder. Dense prompts are processed with convolutional layers and directly mapped to the image embedding.

Mask Decoder: The decoder takes as input the image embedding and the encoded sparse prompts and outputs a probability map. The decoder architecture updates both the image and prompt embeddings by relying on self-attention applied to the prompt embedding and on bidirectional cross-attention between the image features and the prompt embeddings.

There are three main versions of the SAM, which differ in their number of parameters:

ViT-B: 91 million parameters;

ViT-L: 308 million parameters;

ViT-H: 636 million parameters.

The choice between these versions is driven by the target latency, hardware requirements, and training set size in the case of SAM retraining.

1.1.4. Adapting the SAM

The literature presents several solutions for adapting the SAM to different domains. Med-SAM consists of a successful retraining of the SAM on medical images, resulting in an effective tool for assisting doctors and medical experts [11]. In Med-SAM, both the SAM encoder and the decoder are fine-tuned on a very large amount of labeled medical images. This retraining strategy is not viable in our specific case due to the shortage of annotated images and computational constraints.

Another successful approach to adapting the SAM consists of substituting the decoder. This strategy allowed applying the SAM to the SAR domain and enabled the construction of SAMRS, the largest dataset for semantic segmentation in remote sensing [13]. Substituting the decoder, usually paired with encoder adaptation, enables multi-class segmentation but gives up the possibility of using prompts [13,14,15,28]. It is possible to modify the decoder of the SAM to perform multi-class segmentation while still allowing prompts by changing the convolutional layers of the decoder. However, this modification also requires fundamental changes to the prompt handling, and to our knowledge, it has not been applied in practice. In our study, we did not explore substitution or major modifications to the decoder for two reasons:

Avalanche segmentation can be cast as a binary segmentation problem, where avalanches play the role of the foreground class.

We wanted to preserve prompts, which are fundamental for semi-automatic segmentation.

Since the SAM image encoder is characterized by a large number of parameters and layers, it represents a significant computational bottleneck. As a consequence, full fine-tuning of the SAM is often an impractical solution for domain adaptation.

The recent literature focuses on Parameter-Efficient Fine-Tuning (PEFT) strategies to adapt the SAM to a new domain. Many methods have been proposed in this direction, and the most popular ones are Low-Rank Adaptation (LoRA) [29], adapters [15], and Auto-SAM [30]. The latter adapts the SAM to a new domain through the introduction of a parallel network and belongs to another family of methods that try to improve SAM performance on new domains by adding prompts or modifying existing ones [12,14,16,30].

2. Materials and Methods

Section 2.1 describes the avalanche dataset, the available modalities (multi-temporal SAR channels, DEM, and derived SA), and the preprocessing needed to meet the SAM’s fixed input resolution. Section 2.2 details how we adapt the SAM to the SAR domain by training lightweight adapters in the ViT image encoder and fine-tuning the mask decoder. Section 2.3 describes our BB-based prompting strategy, including prompt generation from masks and augmentation to handle imprecise user inputs. Section 2.4 presents a compute-efficient training scheme that reuses image embeddings for all prompts associated with the same image. Section 2.5 introduces our multi-encoder architecture to leverage a larger number of input channels, based on supervised embedding alignment and fusion. Section 2.6 then combines these elements into the final three-phase training procedure and reports the main optimization settings. Finally, Section 2.7 presents the web-based tool used to assess the method in a human-in-the-loop annotation workflow.

2.1. Dataset

The dataset consisted of 2681 labeled samples acquired from various regions in Norway. Each sample maintained a ground sampling distance of 10 m × 10 m per pixel, with image resolutions ranging from 355 × 363 to 512 × 512 pixels. Given the 10 m pixel spacing, deposits smaller than 1–5 pixels may not be consistently detectable; therefore, the dataset and reported performance primarily reflect avalanches that are resolvable at this scale.

Each observation comprises three distinct data modalities: SAR, DEM, and Met. Our preliminary results showed that providing Met data to the segmentation model did not improve the performance, despite the relevance of this data in avalanche detection (see Appendix D). Therefore, Met data are not discussed further in the following.

The SAR data is represented by the two SAR images with both the VV and VH channels, collected at time steps $t_0$ and $t_1$. In our dataset, the two images were taken either 6 or 12 days apart, and the image values are represented as a normalized radar cross-section (sigma nought) expressed in decibels (dB). To create an RGB image composite, each SAR channel must also be rescaled from the original dB scale to [0, 1]. For this dataset, the images used for manual labeling were created through Algorithm A1. An example of such an RGB composite is presented in Figure 4. We defer the algorithm and the details on manual labeling to Appendix B.1.

The values of the single-channel DEM images in our dataset ranged from 19.11 m to 2274.41 m above sea level, with a mean value of 675.82 m and a standard deviation of 380.62 m. The images had to be rescaled to make them compatible with RGB standard values ([0, 255] integer or [0, 1] float). The DEM had a spatial resolution of 10 m and was resampled to the same grid as the SAR images. We also processed the DEM images to derive the SA values, expressed in angular degrees.

To satisfy the fixed input requirement of the SAM image encoder (1024 × 1024 pixels), each sample was resized such that its longest dimension matched the target resolution. For samples with smaller aspect ratios, the remaining area was zero-padded, ensuring the preservation of the original spatial proportions and preventing geometric distortion of the SAR and DEM features. Additionally, each RGB image was normalized to a zero mean and unit standard deviation before being fed to the encoder.
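The resizing, normalization, and padding steps can be sketched as follows. This is a minimal NumPy illustration under stated assumptions: nearest-neighbour interpolation, per-channel normalization applied before padding, and zero-padding placed at the bottom and right edges are choices made for the example, and the helper names are hypothetical.

```python
import numpy as np

TARGET = 1024  # fixed input side of the SAM image encoder

def resize_longest_side(image: np.ndarray, target: int = TARGET) -> np.ndarray:
    """Nearest-neighbour resize of an (H, W, C) array so that max(H, W) == target."""
    h, w = image.shape[:2]
    scale = target / max(h, w)
    new_h, new_w = int(round(h * scale)), int(round(w * scale))
    # Map every output pixel back to its nearest source pixel.
    rows = np.minimum((np.arange(new_h) / scale).astype(int), h - 1)
    cols = np.minimum((np.arange(new_w) / scale).astype(int), w - 1)
    return image[rows][:, cols]

def normalize(image: np.ndarray, eps: float = 1e-8) -> np.ndarray:
    """Per-channel normalization to zero mean and unit standard deviation."""
    mean = image.mean(axis=(0, 1), keepdims=True)
    std = image.std(axis=(0, 1), keepdims=True)
    return (image - mean) / (std + eps)

def pad_to_square(image: np.ndarray, target: int = TARGET) -> np.ndarray:
    """Zero-pad the bottom and right edges so that the output is target x target."""
    h, w = image.shape[:2]
    padded = np.zeros((target, target) + image.shape[2:], dtype=image.dtype)
    padded[:h, :w] = image
    return padded

def preprocess(image: np.ndarray, target: int = TARGET) -> np.ndarray:
    return pad_to_square(normalize(resize_longest_side(image, target)), target)

# Synthetic sample with the smallest resolution reported for the dataset.
rng = np.random.default_rng(0)
sample = rng.normal(size=(355, 363, 3))
encoder_input = preprocess(sample)
```

Because the scale factor is derived from the longest side only, the aspect ratio of the sample is preserved and the shorter side is completed with zeros.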

2.2. Domain Adaptation

As previously discussed, the SAM was originally trained on RGB images from the natural image domain, which substantially differed from the SAR and DEM data in our dataset. Adapting the SAM to avalanche segmentation requires a fine-tuning step in which a subset of the model parameters is retrained. Training the entire model would lead to severe risks of overfitting, given the limited size of our dataset and the large number of model parameters (the smallest version of the SAM used in our experiments was based on ViT-B and contained over 91 M parameters). On top of that, fine-tuning the SAM requires great computational effort. In the following, we separately discuss how we adapted the encoder and decoder components of the SAM.

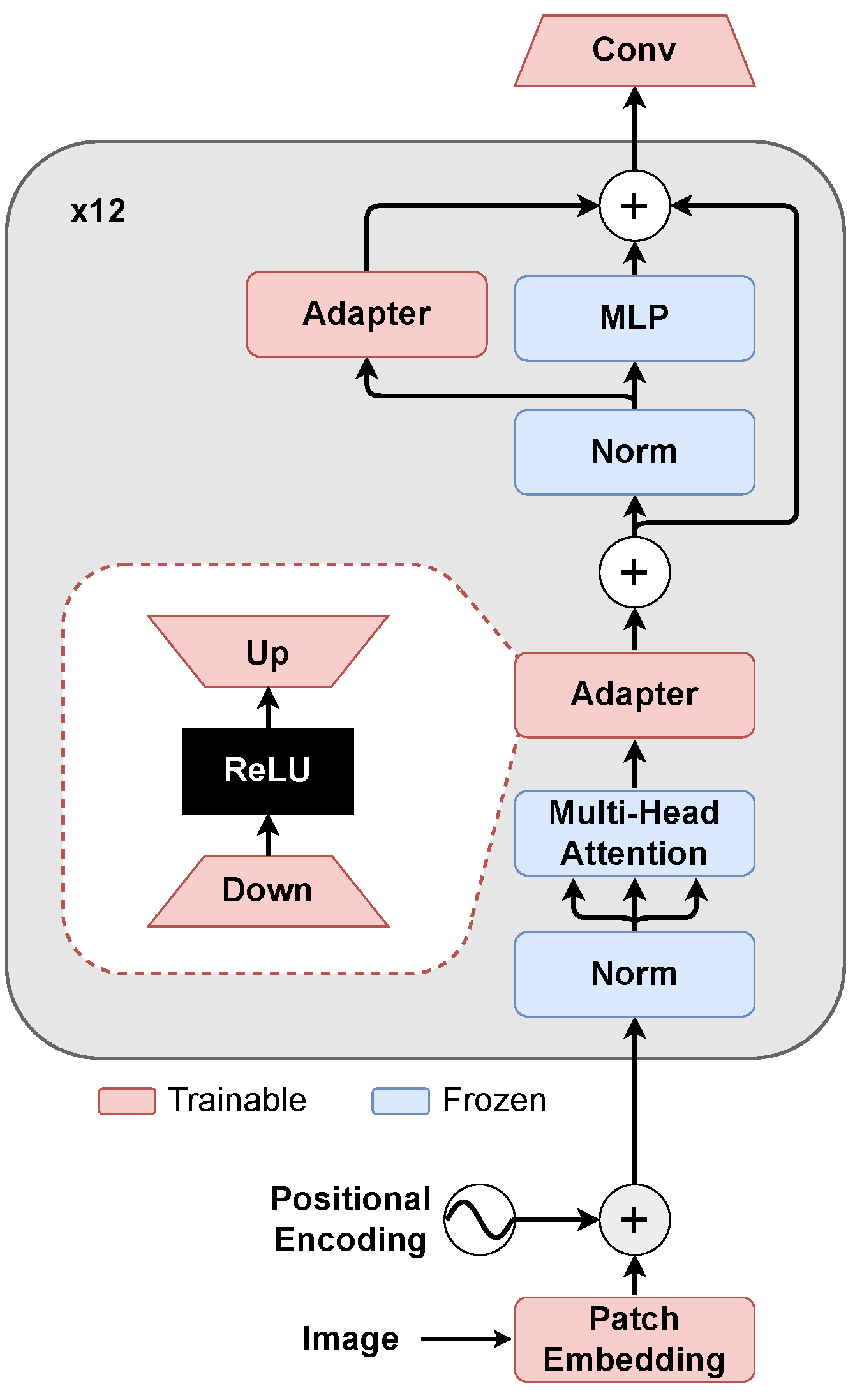

2.2.1. Image Encoder

In the SAM, most of the parameters are concentrated in the image encoder, which in the model based on ViT-B comprises approximately 86 M parameters and represents the major bottleneck to performing both training and inference. As discussed in Section 1.1.4, several methods have been proposed in the literature to adapt the SAM’s image encoder and, in general, large foundation models to different domains. In this work, we experimented with Auto-SAM [30], LoRA [29], and adapters [15]. Among these, we found that the adapters yielded the best performance in terms of the Intersection over Union (IoU) metric on the avalanche class, which served as our main validation metric. Further details are deferred to Appendix F.

Adapters are trainable components that are placed in the transformer block of the ViT between the multi-head attention and the residual connection and in parallel with the Multi-Layer Perceptron (MLP) layer, as shown in Figure 5. These modules transform the intermediate hidden states $x$ of the ViT as follows:

$$\mathrm{Adapter}(x) = \mathrm{Up}\left(\mathrm{ReLU}\left(\mathrm{Down}(x)\right)\right),$$

where ReLU is the standard activation function and Up and Down represent two fully connected layers that perform upscaling and downscaling, respectively.

Adding adapters to every transformer block of the ViT-B introduced 7 M parameters in the encoder, i.e., less than 10% of the encoder parameters, reducing the number of trainable parameters by over 90% with respect to full fine-tuning. We did not find a benefit in using dropout and set the MLP ratio, i.e., the ratio between the number of output and input neurons of the Down layer, to 0.25.

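Following the standard implementation in the PyTorch (v2.5.1) Linear layer, the weights and biases in the Up and Down layers of the adapters were initialized from a uniform distribution $\mathcal{U}(-\sqrt{k}, \sqrt{k})$, where the parameter $k$ is defined as follows:

$$k = \frac{1}{\text{in\_features}},$$

with in_features being the number of input neurons of the layer.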

This is a rather generic and uninformative weight initialization, which usually works best when there are many training samples available to learn the best weight configuration. However, the preliminary results showed that initialization schemes more tailored to our use case, including pretraining the adapters with self-supervised objectives such as missing data imputation and speckle denoising, did not convey significant improvements in our experiments. Additional details on pretraining and weight initialization are discussed in

Appendix C.
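The adapter design and its parameter budget can be illustrated with a small NumPy sketch. It assumes that an adapter computes Up(ReLU(Down(x))) with an MLP ratio of 0.25, that both layers follow the uniform initialization of the PyTorch Linear layer, and that each of the 12 ViT-B blocks (hidden size 768) hosts two adapters; the last point is an assumption inferred from the placement described above, and the class `Adapter` is purely illustrative.

```python
import math
import numpy as np

def linear_init(in_features: int, out_features: int, rng: np.random.Generator):
    """Weights and bias drawn from U(-sqrt(k), sqrt(k)) with k = 1 / in_features."""
    bound = math.sqrt(1.0 / in_features)
    weight = rng.uniform(-bound, bound, size=(out_features, in_features))
    bias = rng.uniform(-bound, bound, size=out_features)
    return weight, bias

class Adapter:
    """Bottleneck module: Down projection, ReLU, Up projection."""

    def __init__(self, dim: int, mlp_ratio: float = 0.25, seed: int = 0):
        rng = np.random.default_rng(seed)
        hidden = int(dim * mlp_ratio)
        self.down_w, self.down_b = linear_init(dim, hidden, rng)
        self.up_w, self.up_b = linear_init(hidden, dim, rng)

    def __call__(self, x: np.ndarray) -> np.ndarray:
        hidden = np.maximum(x @ self.down_w.T + self.down_b, 0.0)  # Down + ReLU
        return hidden @ self.up_w.T + self.up_b                    # Up

    def num_parameters(self) -> int:
        return sum(p.size for p in (self.down_w, self.down_b, self.up_w, self.up_b))

EMBED_DIM = 768          # hidden size of ViT-B
NUM_BLOCKS = 12          # transformer blocks in ViT-B
ADAPTERS_PER_BLOCK = 2   # assumption: one after attention, one parallel to the MLP

adapter = Adapter(EMBED_DIM)
tokens = np.zeros((4, EMBED_DIM))
output = adapter(tokens)
total_parameters = adapter.num_parameters() * NUM_BLOCKS * ADAPTERS_PER_BLOCK
```

Under these assumptions the total is about 7.1 million trainable parameters, which is consistent with the 7 M figure reported for the ViT-B encoder.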

2.2.2. Decoder

Since the decoder already outputs a binary mask, which in our case served as the avalanche class, we did not have to change the architecture of the decoder. Therefore, we simply fine-tuned the decoder as illustrated in previous work [13,14,15]. Plain fine-tuning without modifying the decoder architecture allowed us to preserve the prompt for the semi-automatic annotation, which is the main use case for our model.

2.3. Robustness to Inaccurate Prompts

We adopted BBs as prompts for the SAM, as they are intuitive, easy to provide, and widely recognized as the most effective prompting strategy for semi-automatic annotation, particularly in the context of SAR imagery [13]. We created BBs from the segmentation masks as follows: (1) by computing for each avalanche the minimum enclosing rectangle; (2) by enlarging the BBs through an ad hoc augmentation strategy; and (3) by merging BBs that intersect to increase efficiency and simulate a more realistic human input. All three steps are illustrated in Figure 6.

Since the SAM does not explicitly leverage any class information, the prompt alone determines the target object to be segmented. In the context of avalanche segmentation, we noticed that inaccurate BBs led to a drop in segmentation performance, suggesting that inaccurate localization hampers the model’s ability to correctly identify avalanche debris. In an operational setting, however, some degree of imprecision in human-provided prompts is unavoidable. To improve SAM robustness when inaccurate BBs are prompted, our prompt strategy enlarges the BB by displacing each of its four coordinates with a random value drawn from a uniform distribution $\mathcal{U}(0, k)$, where $k$ denotes the maximum number of offset pixels. During training, we used a mixed prompt strategy in which 80% of the prompts were accurate boxes, 10% were inaccurate boxes, and in the remaining 10% of the cases, the BBs were replaced with full-image BBs. To perform model selection in the validation stage, we only used accurate boxes and, instead of drawing the displacement from the uniform distribution, we used a fixed value of 20. We observed that the introduction of the inaccurate and full-image BBs during training made the model more robust to imprecise prompts without affecting the usage of accurate prompts in validation.
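The three steps of prompt generation and the mixed training strategy can be sketched as follows. This is an illustrative example rather than the original implementation: it assumes one binary mask per avalanche, integer offsets drawn uniformly in [0, k], and clipping to the image borders; the offsets `k_accurate` and `k_inaccurate` and all function names are hypothetical placeholders.

```python
import random
import numpy as np

def mask_to_box(mask: np.ndarray):
    """Minimum enclosing rectangle (x1, y1, x2, y2) of a binary mask."""
    ys, xs = np.nonzero(mask)
    return int(xs.min()), int(ys.min()), int(xs.max()), int(ys.max())

def enlarge_box(box, k, height, width, rng, fixed=False):
    """Push each side outwards by an offset in [0, k] (or exactly k), clipped to the image."""
    x1, y1, x2, y2 = box
    d = [k if fixed else rng.randint(0, k) for _ in range(4)]
    return (max(x1 - d[0], 0), max(y1 - d[1], 0),
            min(x2 + d[2], width - 1), min(y2 + d[3], height - 1))

def boxes_intersect(a, b):
    return a[0] <= b[2] and b[0] <= a[2] and a[1] <= b[3] and b[1] <= a[3]

def merge_boxes(boxes):
    """Replace intersecting boxes by their union until no two boxes overlap."""
    boxes = list(boxes)
    merged = True
    while merged:
        merged = False
        for i in range(len(boxes)):
            for j in range(i + 1, len(boxes)):
                if boxes_intersect(boxes[i], boxes[j]):
                    a, b = boxes[i], boxes[j]
                    boxes[i] = (min(a[0], b[0]), min(a[1], b[1]),
                                max(a[2], b[2]), max(a[3], b[3]))
                    del boxes[j]
                    merged = True
                    break
            if merged:
                break
    return boxes

def sample_training_box(box, height, width, rng, k_accurate=20, k_inaccurate=100):
    """Mixed strategy: 80% accurate, 10% inaccurate, 10% full-image prompts."""
    u = rng.random()
    if u < 0.8:
        return enlarge_box(box, k_accurate, height, width, rng)
    if u < 0.9:
        return enlarge_box(box, k_inaccurate, height, width, rng)
    return (0, 0, width - 1, height - 1)

mask = np.zeros((10, 10), dtype=np.uint8)
mask[2:5, 3:7] = 1
tight_box = mask_to_box(mask)
validation_box = enlarge_box(tight_box, 2, 10, 10, random.Random(0), fixed=True)
training_box = sample_training_box(tight_box, 10, 10, random.Random(0))
merged = merge_boxes([(0, 0, 4, 4), (3, 3, 6, 6), (8, 8, 9, 9)])
```

Whatever branch is sampled, the resulting box always contains the tight box, so the target avalanche is never cropped by the augmentation.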

On top of improving robustness to inaccurate prompts, we found that our strategy yields two additional benefits. The first is that the proposed augmentation strategy trains the model to perform full-image segmentation, enabling prompt-free inference. The second benefit is that training with imprecise and full-image prompts improves the segmentation performance over small avalanches. This is a particularly important result since the predictions from the baseline model exhibit a positive correlation between the avalanche size and the IoU, indicating that smaller avalanches are more challenging to segment. The performance degradation on small targets can be attributed to different factors:

Bias in the dataset: Small avalanches are more difficult to segment accurately for human annotators and are also more affected by image noise, making ground truth labels less reliable.

Architectural: ViT, which serves as the backbone of SAM, lacks skip connections that would preserve fine-grained spatial details from the early layers. Additionally, the fixed receptive field is not well suited for detecting objects at different scales.

The original SAM paper found that eliminating all connected components with areas smaller than 100 pixels significantly improved IoU performance, highlighting a fundamental limitation of the SAM in segmenting small objects. In Appendix E, we discuss additional details and attempts at improving the detection performance on small avalanches.

2.4. Resource Optimization

As discussed in Section 2.2, we employed the ViT-B variant of the SAM, which contains 91 million parameters, 86 million of which reside in the image encoder and constitute the primary computational bottleneck during fine-tuning. In the SAM, the image encoder and the prompt encoder operate independently; the image embedding depends only on the input image, while the prompt embedding depends exclusively on the provided prompt (e.g., the BB). This architectural property becomes particularly relevant in our setting, where multiple BB prompts are associated with different avalanches appearing in the same image. We note that this differs from the more common setting, where a natural image contains a single object of interest.

Processing each image-prompt pair independently would result in redundant and computationally expensive recomputation of the same image embedding. To avoid this redundancy, we computed the image embedding only once per image and reused it for all associated prompts. Algorithm 1 details the data preparation procedure that enables the resource optimization. The key idea is to replicate the image embedding (Line 6 of Algorithm 1) so that it can be paired with each prompt and processed in parallel by the decoder. This allows all prompts associated with the same image to be evaluated simultaneously, significantly improving training efficiency. All the repeated image embeddings are then concatenated to form a single expanded batch (Line 7), which is fed to the decoder together with the corresponding concatenated prompt embeddings (Line 8).

Depending on the number of prompts, the proposed parallelization could occupy a massive amount of memory, but even if each image must be processed individually, the overall compute time is reduced. Indeed, handling prompts at run time efficiently allowed us to train simultaneously on all the avalanches of the same image and reduced the training time by approximately

without impacting the number of epochs needed to reach convergence in training.

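| Algorithm 1 Data preparation for resource optimization. | |
| --- | --- |
| Input: Image embeddings $E = \{e_i\}_{i=1}^{B}$, where $e_i$ is the embedding of the $i$th image and $B$ is the batch size; prompt embeddings $P = \{p_i\}_{i=1}^{B}$, where $p_i$ contains the embeddings of the prompts of the $i$th image and $L_i$ is the number of prompts for the $i$th image | |
| Output: Expanded image embeddings $\tilde{E}$ and concatenated prompt embeddings $\tilde{P}$ | |
| 1: function PrepareDecoderInput($E$, $P$) | |
| 2: $N \leftarrow \sum_{i=1}^{B} L_i$ | ▹ Total number of prompts |
| 3: $\tilde{E} \leftarrow [\,]$ | |
| 4: $\tilde{P} \leftarrow [\,]$ | |
| 5: for $i = 1, \dots, B$ do | |
| 6: $\tilde{e}_i \leftarrow \mathrm{Repeat}(e_i, L_i)$ | ▹ New shape: $L_i \times 256 \times 64 \times 64$ |
| 7: $\tilde{E} \leftarrow \mathrm{Concat}(\tilde{E}, \tilde{e}_i)$ | |
| 8: $\tilde{P} \leftarrow \mathrm{Concat}(\tilde{P}, p_i)$ | |
| 9: end for | |
| 10: return $\tilde{E}, \tilde{P}$ | ▹ Final shapes: $N \times 256 \times 64 \times 64$ for $\tilde{E}$ and $N$ prompt embeddings in $\tilde{P}$ |
| 11: end function | |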
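The data preparation of Algorithm 1 can be sketched in a few lines of NumPy. The example assumes that image embeddings are stored as a (B, C, H, W) array and that the prompts of each image are stacked along the first axis; the function name and the small tensor sizes are chosen only for illustration.

```python
import numpy as np

def prepare_decoder_input(image_embeddings, prompt_embeddings):
    """
    image_embeddings: array of shape (B, C, H, W), one embedding per image.
    prompt_embeddings: list of B arrays; the i-th has shape (L_i, ...).
    Returns the expanded image embeddings and the concatenated prompts,
    both with N = sum(L_i) entries along the first axis.
    """
    expanded, prompts = [], []
    for e_i, p_i in zip(image_embeddings, prompt_embeddings):
        n_prompts = p_i.shape[0]
        # Repeat the single image embedding once per prompt: (L_i, C, H, W).
        expanded.append(np.repeat(e_i[None], n_prompts, axis=0))
        prompts.append(p_i)
    return np.concatenate(expanded, axis=0), np.concatenate(prompts, axis=0)

# Two images with three and one prompts, using tiny embeddings for readability.
rng = np.random.default_rng(0)
images = rng.normal(size=(2, 4, 3, 3))
prompts = [rng.normal(size=(3, 2, 4)), rng.normal(size=(1, 2, 4))]
expanded_images, all_prompts = prepare_decoder_input(images, prompts)
```

The image encoder is therefore run once per image, and only the much cheaper decoder sees the expanded batch; in a tensor framework, a broadcasting view could likely replace the explicit copy made by `np.repeat`.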

2.5. Input Adaptation

The SAM image encoder was pretrained on RGB images, and it expects a three-channel input. Our avalanche dataset, however, provides six co-registered channels: $\mathrm{VV}_0$, $\mathrm{VV}_1$, $\mathrm{VH}_0$, $\mathrm{VH}_1$, DEM, and SA. To exploit all available information without altering the pretrained encoder architecture, we adopted a multi-encoder strategy inspired by SAM with Multiple Modalities (SAMM) [16].

Concretely, we used two SAM image encoders that process complementary triplets, namely a primary encoder fed with $[\mathrm{VV}_0, \mathrm{VV}_1, \mathrm{DEM}]$ and a secondary encoder fed with $[\mathrm{VH}_0, \mathrm{VH}_1, \mathrm{SA}]$, with all channels normalized to a zero mean and unit standard deviation. Both encoders share the same backbone architecture and are adapted with the same PEFT mechanism described in Section 2.2. For a batch of size $B$, the two image encoders produce embeddings $E_p, E_s \in \mathbb{R}^{B \times 256 \times 64 \times 64}$.

A key requirement of this design is that the embeddings produced by the two encoders are compatible with a single mask decoder so that they can be fused and decoded consistently. In the following, we describe our task-aware alignment strategy and the fusion mechanism used to combine the aligned embeddings.

2.5.1. Embedding Alignment

In SAMM, the auxiliary encoder is aligned to a frozen primary encoder by minimizing a distance metric between their embeddings (e.g., Mean Squared Error (MSE)), an unsupervised objective often referred to as embedding unification. While this facilitates combining modalities, it also encourages the secondary encoder to reproduce information already present in the primary representation. This is not necessarily optimal for segmentation, where the goal is to extract complementary features that improve the final mask prediction.

We instead aligned the secondary encoder to the task-specific representation learned by the primary model. After adapting the primary model to SAR avalanche segmentation (Section 2.2), we froze its mask decoder and trained the secondary encoder using the supervised segmentation loss computed on the decoder output. Because the decoder parameters are fixed, the secondary encoder must generate embeddings that lie in the same space expected by the decoder, enabling subsequent fusion while preserving complementary information from the secondary modality. In our experiments, this supervised alignment strategy yielded the best performance among the considered input adaptation variants (Appendix B).

2.5.2. Embedding Fusion

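Once both encoders produced aligned embeddings, we fused them at the embedding level. As a simple baseline, we used a global convex combination:

$$E_f = \alpha E_p + (1 - \alpha) E_s, \quad \alpha \in [0, 1],$$

where $E_p$ and $E_s$ denote the embeddings of the primary and secondary encoders and $E_f$ is the fused embedding.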

With , this baseline is already an improvement over training on a single modality, confirming that the supervised alignment enables complementary information to be exploited.

To allow the relative contribution of each modality to vary spatially, we introduced a Selective Fusion Gate (SFG) (Figure 7). The SFG predicts an element-wise weight tensor $G$ from the concatenation of the primary embedding $E_p$ and the secondary embedding $E_s$ and computes the fused embedding as follows:

$$E_f = G \odot E_p + (1 - G) \odot E_s,$$

where ⊙ denotes element-wise multiplication.
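Both fusion schemes can be illustrated with a short NumPy sketch. The internal structure of the gate is not specified here, so the example assumes a single 1 × 1 convolution followed by a sigmoid, which keeps every weight in (0, 1); the function names and the parameters `weight` and `bias` are hypothetical.

```python
import numpy as np

def convex_fusion(e_p: np.ndarray, e_s: np.ndarray, alpha: float) -> np.ndarray:
    """Global convex combination of two aligned embeddings."""
    return alpha * e_p + (1.0 - alpha) * e_s

def sigmoid(x: np.ndarray) -> np.ndarray:
    return 1.0 / (1.0 + np.exp(-x))

def selective_fusion_gate(e_p, e_s, weight, bias):
    """
    e_p, e_s: embeddings of shape (B, C, H, W).
    weight:   (C, 2C) kernel of an assumed 1x1 convolution; bias: (C,).
    The gate is predicted from the channel-wise concatenation of both
    embeddings and weighs them element by element.
    """
    stacked = np.concatenate([e_p, e_s], axis=1)                      # (B, 2C, H, W)
    logits = np.einsum("oc,bchw->bohw", weight, stacked) + bias[None, :, None, None]
    gate = sigmoid(logits)                                            # values in (0, 1)
    return gate * e_p + (1.0 - gate) * e_s

rng = np.random.default_rng(0)
e_p = rng.normal(size=(2, 8, 4, 4))
e_s = rng.normal(size=(2, 8, 4, 4))
gate_weight = rng.normal(scale=0.1, size=(8, 16))
gate_bias = np.zeros(8)
fused_global = convex_fusion(e_p, e_s, alpha=0.5)
fused_gated = selective_fusion_gate(e_p, e_s, gate_weight, gate_bias)
```

With zero weights and biases the gate equals 0.5 everywhere, so the gated fusion reduces to the global convex combination with equal weights; training the gate lets this balance vary across channels and spatial locations.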

We note that other input adaptation strategies, including channel selection and patch-embedding modifications, were also investigated but did not yield comparable performance improvements. Further details can be found in Appendix B.

2.6. Training Procedure

The overall training procedure consists of three sequential phases illustrated in

Figure 8 and detailed below:

Phase 1: Primary modality adaptation. The primary model is trained on [VV_0, VV_1, DEM]. As discussed in Section 1.1.1, the VV polarization is the most informative SAR source for avalanche mapping and is used to create RGB composites for manual annotation. We therefore used VV_0 and VV_1 as the primary SAR inputs and complemented them with the DEM. The model used at this stage leverages the approaches discussed in Section 2.2 (adapter-based encoder tuning and decoder fine-tuning), Section 2.3 (prompt-robust training), and Section 2.4 (resource optimization). This supervised training stage is necessary to account for the domain shift from natural RGB images to SAR.

Phase 2: Secondary modality alignment. A secondary model is trained to extract image embeddings from the remaining three input channels ([VH_0, VH_1, SA]) in a supervised manner. As described in Section 2.5.1, we enforced alignment to the same embedding space through the frozen decoder of the primary model trained in Phase 1 (Figure 8). Freezing the decoder reduces the standalone performance of the secondary model, since only the adapters are trained, but it facilitates the later combination of the embeddings, which is the main goal. Moreover, the secondary modality is intended to complement the primary one, which justifies reusing the decoder of the primary model.

Phase 3: Embedding fusion. In the final phase, an SFG is trained to combine the embeddings produced by the two encoders. Once again, we performed supervised training and leveraged the frozen decoder of the primary model trained in Phase 1 (Figure 8).

Experiments were carried out using the AdamW optimizer [

31] with

as well as early stopping (patience = 30 epochs) and a ReduceLROnPlateau scheduler (factor =

, patience = 10) monitoring the validation IoU for both Phase 1 and Phase 2. For Phase 3, we reduced the early-stopping patience to 10 and the ReduceLROnPlateau patience to 4, again monitoring the validation IoU.
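The interplay between plateau-based learning-rate decay and early stopping can be sketched with a small, framework-free simulation. The patience values follow the text, whereas the initial learning rate `lr` and the decay `factor` are hypothetical placeholders. The logic is a simplification of what a library scheduler does (no improvement threshold, no cooldown), and `run_schedule` is an illustrative name.

```python
def run_schedule(val_ious, lr=1e-4, factor=0.5, lr_patience=10, stop_patience=30):
    """Simulate LR decay on plateau and early stopping on a validation IoU trace.

    lr and factor are hypothetical placeholders; higher IoU is better.
    Returns (stop_epoch, best_iou, final_lr).
    """
    best = float("-inf")
    since_best = 0  # epochs since the best IoU (early-stopping counter)
    since_lr = 0    # epochs without improvement since the last LR change
    for epoch, iou in enumerate(val_ious):
        if iou > best:
            best, since_best, since_lr = iou, 0, 0
        else:
            since_best += 1
            since_lr += 1
        if since_lr > lr_patience:       # plateau detected: decay the LR
            lr *= factor
            since_lr = 0
        if since_best >= stop_patience:  # no improvement for too long: stop
            return epoch, best, lr
    return len(val_ious) - 1, best, lr


# Toy trace: the IoU improves for five epochs and then stays flat.
trace = [0.1, 0.2, 0.3, 0.4, 0.5] + [0.5] * 40
stop_epoch, best_iou, final_lr = run_schedule(trace)  # Phase 1/2 patience values
stop_epoch_p3, _, _ = run_schedule(trace, lr_patience=4, stop_patience=10)  # Phase 3 values
```

On this toy trace, the shorter patience values used in Phase 3 end training considerably earlier than the Phase 1 and Phase 2 settings.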

In the preprocessing step (see Figure 8), we applied image augmentations to reduce overfitting and improve generalization. In particular, we applied translations, rotations (360°), flips, Gaussian noise (

), and random masking. Gaussian noise was introduced to mitigate the impact of speckle noise on the segmentation performance. After image augmentation, we computed the prompts for the images in the current batch as explained in Section 2.3. To address class imbalance, we used the Dice loss, as it performed well and correlates directly with the IoU metric. Since the mask decoder generates a continuous probability map, a binarization step is required. We applied a global threshold of 0.5, which yielded the best performance in our empirical evaluations, to produce the final segmentation mask. All models were trained on an NVIDIA RTX 6000 Ada GPU (NVIDIA Corporation, Santa Clara, CA, USA).
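As an illustration of the loss and the binarization step, the following NumPy sketch implements a soft Dice loss and a global threshold. The smoothing constant `eps`, the `>=` boundary convention, and the function names are assumptions made for this example and may differ from the actual implementation.

```python
import numpy as np


def dice_loss(prob, target, eps=1e-6):
    """Soft Dice loss: 1 - 2*sum(p*g) / (sum(p) + sum(g)).

    eps is an assumed smoothing term that avoids division by zero.
    """
    prob = np.asarray(prob, dtype=float)
    target = np.asarray(target, dtype=float)
    intersection = np.sum(prob * target)
    return 1.0 - (2.0 * intersection + eps) / (np.sum(prob) + np.sum(target) + eps)


def binarize(prob, threshold=0.5):
    """Turn a probability map into a binary mask with a global threshold."""
    return np.asarray(prob) >= threshold


# Toy 2x2 example: the top row is avalanche debris, the bottom row is background.
prob = np.array([[0.9, 0.6], [0.4, 0.1]])
target = np.array([[1, 1], [0, 0]])
loss = dice_loss(prob, target)
mask = binarize(prob)
```

Because the Dice loss normalizes the overlap by the total foreground mass, a few avalanche pixels in a mostly empty image still contribute a meaningful gradient, which is why it is a common choice under class imbalance.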

2.7. Segmentation Tool

We developed a web-based tool for semi-automated segmentation in collaboration with a geoscientist responsible for the annotations of the SAR images in the dataset. The tool is designed to support efficient human-in-the-loop annotation and provides the following core functionalities:

Data loading: The tool loads the files to annotate (the SAR image and the DEM) at two different time instants.

Data visualization: The interface allows visualizing both the RGB composites (obtained from the SAR images as described in Algorithm A1) and the DEM, displayed by simulating a light source to create shadows and highlights (hillshade format), which transforms raw elevation data into a 3D-like representation of the terrain.

Semi-automatic segmentation: The tool allows the annotator to draw a BB on the SAR image around an area with snow avalanche debris. The input data and the prompts are fed to the adapted SAM, which returns a probability map. The annotator can then adjust the mask by setting the threshold applied to the probability map; binarizing the map at the selected threshold yields the segmentation mask.

Mask editing: This enables manual correction and refinement of the mask generated by the deep learning model.

The same web tool can also operate in a fully manual mode, where the annotator draws the avalanche outlines freehand.
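The threshold-based refinement in the semi-automatic mode can be sketched as follows. This is a toy illustration in which the probability values and the helper names `mask_at_threshold` and `sweep` are invented for the example; it only shows how the mask shrinks as the annotator raises the threshold.

```python
import numpy as np


def mask_at_threshold(prob_map, threshold):
    """Binary mask obtained by thresholding a probability map (>= is assumed)."""
    return prob_map >= threshold


def sweep(prob_map, thresholds):
    """Number of mask pixels for each candidate threshold."""
    return {t: int(mask_at_threshold(prob_map, t).sum()) for t in thresholds}


# Hypothetical 3x3 probability map, standing in for the model output inside a BB.
prob_map = np.array([
    [0.10, 0.40, 0.20],
    [0.60, 0.90, 0.55],
    [0.30, 0.80, 0.35],
])
areas = sweep(prob_map, [0.3, 0.5, 0.7])
```

A lower threshold yields a larger, more inclusive mask, while a higher threshold keeps only the most confident pixels, so the threshold acts as a single control for trading recall against precision before any manual editing.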

The web interface of the segmentation tool is shown in Figure 9.

3. Results

In Section 3.1, we first conduct an ablation study to assess the benefits provided by each component of our adapted SAM architecture with respect to baseline methods. Then, in Section 3.2, we assess the adapted SAM in a fully automatic segmentation setting and compare it to popular architectures for image segmentation. Finally, in Section 3.3, we quantitatively assess the practical benefits of our semi-automatic segmentation tool in a real-world annotation pipeline. Qualitative results for the ablation study are provided in Figure 10. Unless otherwise stated, all models were trained on the avalanche detection dataset using VV and VH SAR channels along with a DEM and SA as inputs. Performance was evaluated in terms of the IoU, precision, and recall, which are defined in Appendix A.
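For readers without access to the appendix, the sketch below computes the three metrics with their standard pixel-wise definitions, which we assume match those used in the evaluation. Returning 0.0 when a denominator is zero is a convention chosen for this example only.

```python
import numpy as np


def _ratio(numerator, denominator):
    """Safe division; returns 0.0 for an empty denominator (assumed convention)."""
    return float(numerator) / float(denominator) if denominator else 0.0


def segmentation_metrics(pred, target):
    """Pixel-wise IoU, precision, and recall for two binary masks."""
    pred = np.asarray(pred, dtype=bool)
    target = np.asarray(target, dtype=bool)
    tp = int(np.sum(pred & target))    # avalanche pixels correctly detected
    fp = int(np.sum(pred & ~target))   # background predicted as avalanche
    fn = int(np.sum(~pred & target))   # avalanche pixels that were missed
    return {
        "iou": _ratio(tp, tp + fp + fn),
        "precision": _ratio(tp, tp + fp),
        "recall": _ratio(tp, tp + fn),
    }


# Toy masks: 2 true positives, 1 false positive, 1 false negative.
pred = [[1, 1, 0], [0, 1, 0]]
target = [[1, 0, 0], [0, 1, 1]]
metrics = segmentation_metrics(pred, target)
```

In this toy case the IoU is lower than both precision and recall, because it penalizes false positives and false negatives at the same time.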

3.1. Ablation Study

We compared the effectiveness of the adapted SAM against the following:

The zero-shot version of the SAM, taking as input an RGB image created through Algorithm A1;

The SAM model from Phase 1 (SAM with adapters, with fine-tuning of the decoder with VV channels and the DEM as input);

The SAMM method [16] with the six-channel input.

We used the same test set with pre-calculated accurate boxes as prompts. The results are reported in Table 1. Our approach obtained superior performance in almost all metrics, with improvements in the IoU and recall, which are the most important metrics in avalanche detection. In particular, our model achieved the highest IoU among all the methods we tested.

3.2. Fully Automatic Segmentation

We evaluated the capabilities of our adapted SAM in a fully automated segmentation setting. In this experiment, we simulated a prompt-free setting by providing a single full-image bounding box (covering the entire image domain) as the prompt for our model. It is important to note that this represents a secondary case study, as the primary focus of this work is the semi-automatic, prompt-based annotation tool.
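A full-image prompt of this kind can be sketched in a few lines. The box convention `(x_min, y_min, x_max, y_max)` with an exclusive upper bound, as well as the helper names, are assumptions made for this illustration and are not taken from the actual implementation.

```python
def full_image_box(height, width):
    """Box (x_min, y_min, x_max, y_max) covering the whole image (assumed convention)."""
    return (0, 0, width, height)


def box_coverage(box, height, width):
    """Fraction of the image area covered by a box."""
    x_min, y_min, x_max, y_max = box
    return ((x_max - x_min) * (y_max - y_min)) / float(height * width)


# Hypothetical 512x512 tile: the prompt carries no localization information.
prompt = full_image_box(512, 512)
coverage = box_coverage(prompt, 512, 512)
```

Since such a box covers the entire image, the model has to localize the avalanche debris on its own, which is what makes this setting comparable to prompt-free segmentation.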

We compared our model against three standard, fully automated segmentation baselines trained and fine-tuned on the same multi-channel avalanche dataset: SegFormer-B1 (13.7 M parameters) [32], U-Net (14.7 M parameters) [33], and DeepLabV3+ (26.7 M parameters) [34] equipped with a ResNet-50 backbone pretrained on Sentinel-1 imagery [35]. Notably, U-Net and SegFormer are the deep learning models used in previous work to perform fully automated segmentation of avalanches from SAR images [8, 36].

Table 2 shows that our approach achieved an IoU and a recall comparable to those of the baselines; high recall is critical to minimize the risk of undetected events. We note that this is a non-trivial result, as the SAM is a prompt-based model that is not designed to operate with imprecise or full-image bounding-box prompts. By contrast, our training strategy explicitly exposes the model to inaccurate and full-image prompts, enabling it to perform well in this challenging setting. These results highlight the effectiveness of the proposed prompt augmentation strategy (Section 2.3) and indicate the potential to perform fully automated and minimal-prompt segmentation with foundation models in future applications.

Further improving the performance of our adapted SAM as a fully automated segmentation model would likely require dedicated retraining (e.g., prioritizing full-image prompts by increasing their share during training). We also note that, in this case, the benefit of using a foundation model like the SAM could be offset by the latency incurred when the image embedding cannot be precomputed. This trade-off depends on the specific requirements of the application and must be analyzed on a case-by-case basis, depending on the most important performance measure (inference time, precision, IoU, or recall).

3.3. Semi-Automatic Segmentation Tool

To evaluate how much the proposed SAM-based semi-automated segmentation tool (Section 2.7) speeds up the annotation procedure in an operational pipeline, we compared the time required to annotate images in the semi-automatic and fully manual modes within the web tool we developed. First, we asked an expert geoscientist to generate high-quality annotations for 50 SAR images from the test set. Manual segmentation took the expert between 1 and 3 min, while annotation in the semi-automatic mode took about 5–30 s, indicating a substantial speedup.

To evaluate whether the improvement was statistically significant, we conducted a matched-pair analysis on 25 images, i.e., we tested the significance of the difference in the time needed to segment the same image manually or with the semi-automatic annotation tool. This experiment yielded a 60.28% speedup (using median values), confirmed as highly significant by a p-value of 10⁻⁵ from the paired one-tailed t-test. These outcomes are consistent with similar domain adaptation studies (e.g., Med-SAM [11]), confirming SAM’s effectiveness in creating segmentation labels in different domains.
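The matched-pair analysis can be illustrated with the standard library alone. The timings below are invented toy values, not the measurements from this study, and the definition of the speedup as the relative reduction of the median annotation time is an assumption about how the reported percentage was obtained.

```python
import math
import statistics


def median_speedup(manual, assisted):
    """Relative reduction of the median annotation time (assumed definition)."""
    m = statistics.median(manual)
    a = statistics.median(assisted)
    return (m - a) / m


def paired_t_statistic(manual, assisted):
    """t statistic and degrees of freedom for paired differences (manual - assisted)."""
    diffs = [m - a for m, a in zip(manual, assisted)]
    n = len(diffs)
    mean_diff = statistics.fmean(diffs)
    std_diff = statistics.stdev(diffs)  # sample standard deviation
    return mean_diff / (std_diff / math.sqrt(n)), n - 1


# Toy annotation times in seconds for the same five images (illustrative only).
manual_s = [100, 120, 90, 150, 110]
assisted_s = [40, 50, 30, 70, 45]
speedup = median_speedup(manual_s, assisted_s)
t_stat, dof = paired_t_statistic(manual_s, assisted_s)
```

The one-tailed p-value is the upper-tail probability of a Student t distribution with `dof` degrees of freedom evaluated at `t_stat`; in practice, a library routine such as SciPy's `scipy.stats.ttest_rel` with `alternative="greater"` returns it directly.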

4. Discussion

This study investigated the adaptation of the Segment Anything Model (SAM) framework to snow avalanche segmentation in Synthetic Aperture Radar (SAR) imagery, with the dual objectives of improving segmentation quality and reducing the effort required for manual annotation. By combining parameter-efficient domain adaptation, prompt-robust training, multi-channel input handling, and compute-aware training, we showed that foundation models can be effectively transferred to this highly specialized remote sensing task.

4.1. Summary of Contributions

The primary contribution of this work is an end-to-end methodology to adapt the SAM to SAR avalanche data while preserving its prompt-based interaction. Among the investigated domain adaptation approaches, adapters proved to be the most effective, enabling efficient fine-tuning by reducing the number of trainable encoder parameters by more than 90%. In addition, the proposed training strategy improved robustness to imprecise prompts, which is essential in realistic human-in-the-loop annotation scenarios. To overcome the limitation of the SAM to three input channels (Section 2.5 and Appendix B), we introduced a multi-encoder architecture based on supervised embedding alignment and fusion, designed to extract complementary information from secondary input channels. Overall, our final model achieved an IoU of 0.5981 using accurate BB prompts, representing a 5% improvement over the baseline methods. When used for fully automatic segmentation, our adapted SAM model achieved performance comparable to popular image segmentation architectures, namely U-Net [33] and SegFormer [32], trained end-to-end on the same training set.

The second major contribution is the development of a semi-automatic avalanche annotation tool that, under the hood, runs the proposed SAM-based segmentation model. The segmentation tool offers multiple interaction modes, including drawing a BB prompt, threshold-based refinement of the returned probability map, and manual mask editing. We measured a speedup of 60.28% compared with the manual annotation process, directly addressing the main bottleneck for scaling up avalanche inventories. This tool has significant implications for operational avalanche monitoring systems, where timely and accurate detection is critical for public safety. Indeed, facilitating the annotation procedure could enable avalanche forecasting centers to process significantly larger volumes of SAR imagery, potentially improving the temporal and spatial coverage of avalanche monitoring programs. This scalability is particularly relevant given the increasing availability of SAR data from missions such as Sentinel-1, which provides regular coverage of mountainous regions regardless of weather conditions.

More broadly, increasing the amount of high-quality labels enables training more accurate and reliable models for snow avalanche detection, since the lack of large and diverse datasets remains the main bottleneck for automated snow avalanche mapping. In the long term, the proposed tool can support a positive feedback loop in which improved models reduce annotation effort and facilitate further dataset expansion.

4.2. Challenges and Limitations

One of the main technical challenges faced during this work was the long training time, which we addressed with the proposed efficient training algorithm. Looking ahead, the most important performance bottleneck concerns the detection of small avalanches; aside from the inclusion of imprecise prompts, the other solutions we tested did not consistently improve segmentation performance (Appendix E).

Another limitation is that the dataset includes only acquisitions from Norway. The generalization of our model to other geographic areas with different snow conditions and terrain characteristics is not guaranteed and requires additional validation. Nevertheless, we argue that the proposed methodology is general, and the same architecture could be kept as is and retrained on data from different regions.

We also acknowledge that the simpler adapter model with three input channels created through Algorithm A1 represents a strong baseline in terms of the IoU. Nevertheless, the proposed multi-encoder procedure provides a principled way to incorporate additional channels when they are available and informative for the downstream task.

It is also worth noting that the goal of this work was not to explicitly address speckle noise through dedicated denoising architectures. Instead, we focused on building a practical system that leverages the SAM for semi-automatic avalanche annotation, improving robustness and annotation efficiency despite the presence of speckle noise. The investigation of task-specific, speckle-aware network designs is, therefore, considered outside the scope of this study.

Finally, our results focused on avalanches whose deposits are resolvable at Sentinel-1’s 10 m scale; small events may be under-detected.

5. Conclusions

Snow avalanche mapping in SAR imagery is inherently challenging due to speckle noise, acquisition timing, and inter-annotator variability. Our results show that a promptable foundation model, once properly adapted, can act as an effective assistant for this task; it produces accurate masks from simple BBs and, when integrated in an operational pipeline, substantially reduces the annotation time. This creates a practical opportunity to scale up high-quality avalanche inventories, which can in turn improve both prompt-based and fully automatic detection systems.

Beyond avalanche mapping, the proposed methodology for multi-modal input handling and prompt-based training is applicable to other SAR-based detection tasks, including flood detection [37] and oil spill detection [36].

Future research should prioritize the following key directions:

Expand training data through larger annotation campaigns supported by the tool, with quality control protocols that improve contour consistency across annotators.

Investigate multi-scale decoding and training strategies that increase sensitivity to small targets while controlling false positives.

Validate and retrain on acquisitions from other regions and seasons, and explore additional inputs when available (e.g., meteorological products or higher-resolution topographic descriptors).

Conduct field evaluations with forecasting centers to assess usability, latency, and reliability in real annotation workflows.