1. Introduction

With the rapid development of hyperspectral imaging technology, hyperspectral sensors can capture narrow and continuous spectral information from ground objects, which not only improves ground observation accuracy but also broadens the application potential of hyperspectral remote sensing images (HSIs) in fields such as agriculture, medical analysis, and environmental monitoring [1]. However, owing to the limited spatial resolution of sensors, “mixed pixels” are common in HSIs: a single pixel often contains mixed spectral information from several distinct materials. This phenomenon severely limits the accuracy of HSI-based tasks such as clustering [2], fine classification [3], and target detection [4]. Therefore, hyperspectral unmixing (HU), whose main purpose is to identify pure materials (i.e., endmembers) and their corresponding proportions (i.e., abundances) [5] in mixed pixels, has become an important step in HSI processing.

To date, researchers have proposed many methods for solving the HU problem. These methods fall into two main categories according to the mixing model they assume: linear mixture models (LMMs) [6] and nonlinear mixture models (NLMMs) [7]. An LMM assumes that the mixing of ground materials occurs at a macroscopic scale, where each incident solar photon interacts with only a single type of material. In contrast, an NLMM assumes that incident light interacts with several ground objects before it is reflected to the sensor. Owing to their simple form, ease of use, and clear physical meaning, LMMs remain among the most widely used models in HU research.
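As a minimal sketch of the linear mixing assumption described above, the following NumPy snippet synthesizes pixels as non-negative, sum-to-one combinations of endmember spectra. The function name `linear_mixture`, the matrix shapes, and the toy data are illustrative assumptions and are not taken from the paper.

```python
import numpy as np

def linear_mixture(endmembers, abundances, noise_std=0.0, seed=0):
    """Synthesize pixels under the LMM: X = A S + N.

    endmembers: (bands, k) matrix A, one spectral signature per column.
    abundances: (k, pixels) matrix S, each column non-negative and summing to one.
    """
    rng = np.random.default_rng(seed)
    clean = endmembers @ abundances
    # Additive Gaussian noise is an assumption made for this illustration.
    return clean + noise_std * rng.standard_normal(clean.shape)

# Hypothetical toy data: 3 endmembers, 5 bands, 4 pixels.
rng = np.random.default_rng(1)
A = rng.random((5, 3))
S = rng.dirichlet(np.ones(3), size=4).T  # columns lie on the probability simplex
X = linear_mixture(A, S)                 # noise-free mixed pixels
```

Unmixing is the inverse task: given `X`, recover both `A` and `S`.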

In recent years, many LMM-based methods have been proposed; these approaches can be classified into geometric, statistical, sparse regression, tensor, and neural network (NN) methods. Among them, the sparse regression (SR) method [8] is a semisupervised method based on the theory of compressed sensing. It assumes that the observed hyperspectral data can be represented by a linear combination of atoms in a known spectral library, thereby transforming the unmixing problem into that of finding the optimal endmember subset in the spectral library for modeling the hyperspectral data. Since the SR method requires neither endmember extraction nor estimation of the number of endmembers and can efficiently utilize the rich atomic information contained in the spectral library, it has received extensive attention in practical applications [9]. Ref. [10] first integrated SR into HU (SUnSAL) and used $\ell_1$ regularization to constrain the abundance matrix, obtaining accurate abundance estimates. On this basis, a total variation (TV) regularization term was incorporated into SUnSAL, resulting in piecewise smooth abundance maps and significantly improving the robustness of the constructed model [11]. Inspired by this, many SR methods with manually designed abundance regularizers have emerged and produced good results [12,13,14]. However, these methods suffer from high spectral library coherence and high computational costs. Ref. [15] proposed a two-stage optimization algorithm, NeSU-LP, that combines adaptive spectral library pruning with the Nesterov acceleration strategy, significantly improving unmixing efficiency. Overall, although the SR method avoids the complexity of endmember extraction, its performance is limited by the large scale and high coherence of the employed spectral library.

Geometric methods are based on convex geometry theory, which holds that the vertices of the simplex formed by hyperspectral data in the given feature space are the endmembers. Therefore, endmember extraction is transformed into the identification of the vertices of the simplex. This category mainly includes pure pixel methods and nonpure pixel methods. A pure pixel algorithm assumes that each endmember has at least one pure pixel in the associated hyperspectral image. Typical algorithms include the pixel purity index (PPI) [16], the N-finder algorithm (N-FINDR) [17], and vertex component analysis (VCA) [18]. Nonpure pixel methods are based on the minimum simplex theory [19] and generate endmembers by estimating the vertices of the simplex; thus, they can handle highly mixed data.

Statistical methods are rooted in probability theory and mathematical statistics, and they have the advantage of not relying on the pure pixel assumption. Among them, non-negative matrix factorization (NMF) is a typical representative [20]. NMF decomposes a non-negative data matrix into the product of two non-negative low-rank matrices that correspond to the basis matrix and the coefficient matrix [21,22,23]. However, owing to the nonconvexity of its objective function, the algorithm easily falls into local minima during optimization. To address this issue, researchers have integrated many prior constraints into NMF to enhance the physical interpretability and robustness of the model; these constraints include abundance sparsity constraints [24], piecewise smoothness constraints [25], and manifold regularization [26]. On the basis of the sparse prior, ref. [27] proposed sparsity-constrained NMF, which enhances the abundance sparsity of the model through $\ell_{1/2}$ regularization, and showed that $\ell_{1/2}$ regularization is easier to solve than $\ell_0$ regularization and yields sparser solutions than $\ell_1$ regularization, making it a better alternative to $\ell_1$ regularization. In addition, Yuan and Li et al. proposed a region-space adaptive total variation (RSATV) model [28] to address the tendency of TV regularization to introduce false edges in flat image regions. By combining regional k-means clustering and dual filtering mechanisms, the spatial consistency and robustness of the constructed abundance map can be improved while preserving edge information. Furthermore, Qu and Bao [29] proposed a multiprior set constraint-based NMF unmixing method, which integrates the minimum volume constraint (MVC), a reweighted norm, and TV regularization into the NMF framework and imposes constraints on both the endmember and abundance matrices, thereby overcoming the limitations of traditional single-matrix constraint methods.

Since NMF usually unfolds three-dimensional data into a two-dimensional matrix when processing HSIs, this unfolding inevitably loses the original spatial structure information. To solve this problem, researchers have proposed non-negative tensor factorization (NTF). NTF methods are based mainly on tensor algebra theory and can be roughly classified into four categories according to their decomposition strategies. First, canonical polyadic decomposition (CPD) [30], which represents a tensor as the sum of several rank-one tensors, is suitable for depicting simple structures. Second, Tucker decomposition [31], which uses a core tensor and multiple factor matrices to achieve low-rank approximation, has stronger representational capabilities. Third, block tensor decomposition (BTD) [32] retains the local structure of the target tensor while enhancing the interpretability of the model. Fourth, matrix-vector NTF (MV-NTF) [33] effectively improves the computational efficiency and adaptability of the model by combining tensor decomposition and matrix decomposition strategies. Compared with matrix decomposition, NTF can more effectively preserve the intrinsic spatial structure of HSIs. However, how to reasonably determine the rank of the target tensor remains an important challenge for this method.

With the rapid development of deep learning, especially the outstanding achievements of convolutional NNs in tasks such as hyperspectral image classification and HU [34,35], researchers have begun to integrate multilayer deep structures into the NMF framework, aiming to construct deep NMF (DNMF) unmixing methods with multiple levels and high representational capabilities. Ref. [36] proposed multilayer NMF (MLNMF), which iteratively decomposes the target observation matrix into multiple layers to extract features at more levels. However, MLNMF is essentially only a series of sequential single-layer decompositions; it lacks cross-layer information feedback and fusion and therefore does not fully exploit the hierarchical modeling advantages of deep structures. To enhance the modeling of data structures in HU, ref. [37] proposed a DNMF method incorporating an $\ell_{1/2}$ sparsity constraint and TV regularization, referred to as SDNMF-TV. By leveraging a hierarchical pretraining and fine-tuning strategy, this method effectively captures the layered structural information of hyperspectral data, thereby improving the estimation accuracy of both endmembers and abundances. Building upon this, ref. [38] addressed the performance degradation of deep NMF methods under noise interference by introducing an $\ell_{2,1}$-norm constraint. The resulting robust DNMF model is significantly more resilient to outlier noise and achieves better unmixing performance. Moreover, the self-supervised robust deep matrix decomposition model (SSRDMF) jointly optimizes the endmember and abundance estimation processes through an encoder–decoder architecture in combination with sparse noise modeling and self-supervised constraints, significantly enhancing the robustness of the unmixing model against Gaussian noise and sparse noise and further improving its unmixing performance [39]. In summary, the DNMF method offers significant advantages in HU accuracy and noise robustness because of its hierarchical modeling capabilities, adaptive optimization mechanism, and regularization strategy.

On the basis of the above analysis, considering the strengths of DNMF in hierarchical feature learning and drawing on ideas from deep learning, this paper proposes an adversarial graph regularization and Gram sparsity-constrained DNMF method for spectral unmixing. Specifically, our method improves the existing model from three perspectives, namely spatial structure preservation, sparse representation, and robustness, by introducing graph structure priors and sparsity mechanisms (as shown in Figure 1). First, we utilize manifold graph learning and adversarial learning concepts to design an adversarial graph regularization method composed of a local similarity graph and a heterogeneous repulsion graph, which enhances the continuity within classes and the separability between classes during abundance estimation, thereby improving the ability of the model to identify complex spatial structures and class boundaries. Second, to effectively characterize the sparsity of the abundance components contained in multilayer structures, we introduce a novel sparsity constraint based on inner product penalties, which guides the generation of unmixing results by penalizing the Gram matrix of abundances, enhancing the interpretability and discrimination of the model. Finally, the designed adversarial graph regularization scheme and Gram sparsity constraint are integrated into the deep robust NMF model to further increase its robustness and accuracy. Additionally, during the iterative process of deep robust NMF, we introduce a truncated activation function, which truncates the low-amplitude terms in the hierarchical iterative matrix to further promote zero values in the abundance matrix, thereby enhancing the robustness of the model to noise and outliers while improving its computational efficiency and interpretability.

The main contributions of this study are summarized as follows.

- (1) A robust DNMF framework is proposed, integrating adversarial graph regularization, a Gram sparsity constraint, and a reconstruction strategy that incorporates an improved ReLU function and the $\ell_{2,1}$-norm, thereby enhancing the expressive capacity, sparsity, nonlinearity modeling, and decomposition robustness of the multilayer unmixing model when dealing with complex hyperspectral data.

- (2) An adversarial graph regularization method that considers a local similarity graph and a heterogeneous repulsion graph is designed; this approach dynamically balances the intraclass smoothness and interclass distinctiveness of the abundance matrix. Moreover, a sparsity constraint based on the Gram inner product is introduced to guide the generation of abundance components in each layer, further enhancing the sparse representation ability of the model and improving the interpretability of multilayer factor decomposition.

- (3) The proposed model is solved using the alternating direction method of multipliers (ADMM), which is adopted as a standard numerical optimization technique to efficiently decompose and solve the resulting constrained optimization problem.

The remainder of this article is organized as follows. Section 2 introduces the relevant basic models. Section 3 describes the proposed model and its optimization algorithm. Section 4 describes and analyzes the results obtained on synthetic and real datasets. Section 5 provides some conclusions and directions for future research.

3. Proposed Model

In this section, we first present the mathematical formulation of DNMF-AG. We then discuss the optimization procedure and the time complexity of the algorithm, and finally describe the implementation details of the model.

3.1. Robust DNMF with Truncated Activation Functions

The traditional NMF method relies on linear decomposition and non-negativity constraints to perform matrix decomposition on data. However, in practical HU tasks, the abundance matrix is often disturbed by noise and outliers, causing some elements to deviate from their physically feasible domain, which in turn affects the unmixing accuracy and the stability of the constructed model. To address this issue, truncated activation functions (such as variants of the ReLU) are introduced in this paper; these functions map negative values or minimal values to zero through truncation, thereby achieving a nonlinear mapping that enhances the expressive power of the model while suppressing the interference of outliers during the optimization process. Additionally, this strategy can improve the stability and physical feasibility of abundance estimation while maintaining data sparsity. Its mathematical formalization is as follows:

where the element-wise truncated nonlinear activation function is employed to suppress unreasonably small values in the abundance matrix caused by noise or outliers. For each element of the abundance matrix, with t denoting the truncation threshold, the truncated activation function is defined as:
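The behavior described in words above can be sketched as follows. This is only an illustration under stated assumptions: the name `truncated_relu`, the strict inequality, and the choice to pass surviving entries through unchanged are assumptions, since the exact definition is not reproduced here.

```python
import numpy as np

def truncated_relu(S, t):
    """Element-wise truncation: keep entries above the threshold t, zero the rest.

    Negative entries and small positive entries (<= t) are both mapped to zero,
    which matches the verbal description of the truncated activation.
    """
    S = np.asarray(S, dtype=float)
    return np.where(S > t, S, 0.0)

# Hypothetical abundance values, including a small and a negative entry.
S = np.array([[0.62, 0.004, -0.01],
              [0.38, 0.996, 0.0]])
S_trunc = truncated_relu(S, t=0.01)
```

With `t = 0` the function reduces to the ordinary ReLU; a positive `t` additionally removes low-amplitude entries, which is what promotes exact zeros in the abundance matrix.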

The traditional NMF method usually adopts a loss function based on the Frobenius norm. However, the Frobenius norm is unstable in the presence of noise and outliers. The fundamental reason is that the error term is expressed as the sum of the squared Euclidean norms of the residuals (12). When extreme values exist, the squared terms significantly amplify the error, causing a few outliers to disproportionately affect the decomposition results and thereby reducing the stability and unmixing accuracy of the model. To overcome this problem, ref. [40] proposed a robust NMF method that redefines the optimization objective with an $\ell_{2,1}$ norm, effectively weakening the interference caused by outliers.

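In terms of norm selection, the $\ell_{2,1}$ norm and the Frobenius norm differ significantly. For a given matrix $\mathbf{E} \in \mathbb{R}^{m \times n}$ with columns $\mathbf{e}_j$, the $\ell_{2,1}$ norm is defined as $\|\mathbf{E}\|_{2,1} = \sum_{j=1}^{n} \|\mathbf{e}_j\|_2 = \sum_{j=1}^{n} \sqrt{\sum_{i=1}^{m} e_{ij}^2}$, whereas the Frobenius norm is defined as $\|\mathbf{E}\|_F = \sqrt{\sum_{i=1}^{m} \sum_{j=1}^{n} e_{ij}^2}$. Since the $\ell_{2,1}$ norm does not square the per-pixel residual norms, it is more effective than the Frobenius norm when handling noisy data.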
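To make the difference concrete, the sketch below compares the two losses on a small residual matrix with one outlier column. It assumes the standard column-wise definition of the $\ell_{2,1}$ norm (sum of the Euclidean norms of the columns); the helper names and the toy residual are illustrative.

```python
import numpy as np

def l21_norm(E):
    """Sum of the Euclidean norms of the columns of E."""
    return float(np.linalg.norm(E, axis=0).sum())

def frobenius_norm(E):
    """Square root of the sum of all squared entries of E."""
    return float(np.linalg.norm(E))

# Residual matrix: three ordinary pixels (norm 1) and one outlier pixel (norm 10).
E = np.array([[1.0, 0.0, 1.0, 6.0],
              [0.0, 1.0, 0.0, 8.0]])

col_norms = np.linalg.norm(E, axis=0)
# Share of the total loss contributed by the outlier column.
share_l21 = col_norms[-1] / l21_norm(E)                 # 10 / 13
share_fro = col_norms[-1] ** 2 / frobenius_norm(E) ** 2  # 100 / 103
```

The outlier accounts for roughly 77% of the $\ell_{2,1}$ loss but about 97% of the squared Frobenius loss, which illustrates why the latter is dominated by a few extreme residuals.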

Inspired by the theoretical advantages of truncated activation functions and the $\ell_{2,1}$ norm in terms of improving the performance of NMF, we formulate the DNMF problem as follows:

3.2. Adversarial Graph Regularization

Graph theory [41] and manifold learning theory [42] indicate that constructing a nearest-neighbor graph can effectively preserve the local geometric structure of data. However, existing graph regularization methods (such as the Laplacian regularization term) often rely too heavily on the local manifold assumption, focusing only on the similarity between samples and neglecting their differences. This limitation leads to overly strong continuity in the abundance matrix space, thereby damaging the detailed information contained within it. To address this issue, a dual-graph adversarial learning mechanism (Figure 1) is proposed in this paper; this mechanism optimizes the intraclass compactness and interclass separability of the target abundance matrix by dynamically balancing the reward graph and the penalty graph, overcoming the shortcomings of traditional graph regularization methods.

The reward graph aims to enhance the consistency of the abundance distribution of the pixels within a local neighborhood. Specifically, given the data matrix, if one pixel is among the K-nearest neighbors (KNN) of another, the two pixels are connected by a similarity edge whose weight is given by:

where the neighbor set contains the KNN of the given pixel and the temperature parameter controls the rate of similarity decay. The corresponding graph Laplacian is $\mathbf{L} = \mathbf{D} - \mathbf{W}$, where $\mathbf{W}$ is the weight matrix, $\mathbf{D}$ is the degree matrix, and $D_{ii} = \sum_{j} W_{ij}$. The reward graph restricts the abundance vectors of pixels belonging to the same class to be as close as possible in the manifold space, which suppresses the loose intraclass distribution caused by noise or gradual changes in the mixing ratio.
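A possible construction of such a reward graph is sketched below. The heat-kernel weight `exp(-d^2 / sigma)`, the symmetrization step, and the names `knn_reward_graph`, `k`, and `sigma` are assumptions made for illustration; only the KNN criterion, the temperature-controlled decay, and the relation between the Laplacian and the degree matrix come from the text.

```python
import numpy as np

def knn_reward_graph(X, k, sigma):
    """Build a KNN similarity graph over the pixels (columns) of X.

    Returns the weight matrix W, the degree matrix D, and the Laplacian L = D - W.
    """
    n = X.shape[1]
    sq = np.sum(X ** 2, axis=0)
    # Pairwise squared Euclidean distances, clipped to guard against round-off.
    d2 = np.maximum(sq[:, None] + sq[None, :] - 2.0 * (X.T @ X), 0.0)
    np.fill_diagonal(d2, np.inf)            # a pixel is not its own neighbor
    idx = np.argsort(d2, axis=1)[:, :k]     # indices of the k nearest neighbors
    rows = np.repeat(np.arange(n), k)
    cols = idx.ravel()
    W = np.zeros((n, n))
    W[rows, cols] = np.exp(-d2[rows, cols] / sigma)  # assumed heat-kernel weight
    W = np.maximum(W, W.T)                  # symmetrize (an assumption)
    D = np.diag(W.sum(axis=1))
    return W, D, D - W
```

For a symmetric `W`, the quadratic form `trace(S @ L @ S.T)` equals half the weighted sum of squared distances between the abundance vectors of connected pixels, so minimizing it pulls neighboring pixels together in abundance space.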

A penalty graph is used to enhance the differences between non-neighboring pixels, preventing heterogeneous samples from overlapping in the abundance space. If one pixel is not among the KNNs of another, a connecting edge is constructed between them and assigned a weight:

Here, the corresponding Laplacian matrix and its associated regularization term enhance interclass distinguishability by maximizing the abundance differences between non-neighboring samples.

It is worth noting that the parameter K is a crucial one in the construction of the reward graph and the penalty graph. It defines the local neighborhood range of each pixel, which directly affects the connectivity and discriminability of the graph structure. In the reward graph, K determines the connection strength between pixels of the same type. If it is too small, it will lead to insufficient intra-class information, affecting the consistency modeling of abundance distribution. In the penalty graph, the setting of K affects the degree of separation between different types of samples. If it is too large, it may introduce a large number of non-similar neighbors, causing the graph structure to be overly smoothed and weakening the discriminability between classes. Therefore, the reasonable setting of K requires a balance between intra-class compactness and inter-class separability.

By jointly optimizing the Laplacian constraints of the reward graph and the penalty graph, the objective function is defined as follows in each layer of the DNMF model:

where the two weighting parameters control the balance between intraclass compactness and interclass separability.

3.3. Gram Sparsity Constraint

From a statistical perspective, each pixel in a hyperspectral image is typically composed of only a few endmembers, so the column vectors of the abundance matrix naturally exhibit sparsity. Traditional element-wise sparsity constraints based on the $\ell_0$ or $\ell_1$ norm can directly control the sparsity of individual elements; however, minimizing the $\ell_0$ norm is an NP-hard problem, and the $\ell_1$ norm often provides limited sparsity in practical unmixing applications. Moreover, these methods generally overlook the redundancy and overlap between column vectors, making it difficult to effectively distinguish the endmember compositions of different pixels. To address this limitation, this study introduces an inner-product-based sparsity regularization method within the proposed DNMF framework, namely the Gram sparsity constraint. This constraint suppresses high correlations between columns, thereby highlighting the primary endmember contributions of each pixel and indirectly enhancing sparsity while reducing the influence of noise on unmixing. In particular, the constraint penalizes the off-diagonal elements of the Gram matrix, preventing the same endmember from exhibiting similar distributions across different pixel columns, thus reducing inter-column redundancy and avoiding potential mixing ambiguities during the unmixing process [43].

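Consider the abundance matrix $\mathbf{S}_i$ at the $i$-th layer, whose columns are the abundance vectors of the individual pixels. The corresponding Gram matrix $\mathbf{G}_i$ can be expressed as follows:

$$\mathbf{G}_i = \mathbf{S}_i^{\top}\mathbf{S}_i, \qquad g_{pq} = \langle \mathbf{s}_p, \mathbf{s}_q \rangle,$$

where $g_{pq}$ represents the inner product of the abundance vectors of columns $p$ and $q$. The off-diagonal elements $g_{pq}$ ($p \neq q$) of the Gram matrix reflect the cooperative or competitive relationships among different endmembers during the mixing process. The expression $\sum_{p \neq q} g_{pq}$ corresponds to the sum of the off-diagonal elements in the Gram matrix $\mathbf{G}_i$. In simple terms,

$$\sum_{p \neq q} g_{pq} = \operatorname{Tr}\!\left(\mathbf{S}_i(\mathbf{1} - \mathbf{I})\mathbf{S}_i^{\top}\right),$$

where $\mathbf{1}$ represents a matrix of all ones and $\mathbf{I}$ is the identity matrix. Because abundances are non-negative, the inner product $\langle \mathbf{s}_p, \mathbf{s}_q \rangle$ approaches zero only when, for every endmember, the corresponding entry of $\mathbf{s}_p$ or $\mathbf{s}_q$ approaches zero. Therefore, this optimization process can induce sparsity in the abundance matrix along the column dimension, suppressing the global participation of redundant endmembers.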
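The off-diagonal sum described above can be evaluated without forming the full pixel-by-pixel Gram matrix, as the following sketch shows. It assumes the column convention stated in the text (each column of the abundance matrix is one pixel); the function name `gram_offdiag_sum` is hypothetical.

```python
import numpy as np

def gram_offdiag_sum(S):
    """Sum of the off-diagonal entries of G = S^T S, with pixels as columns of S.

    The sum of all entries of G equals ||S 1||^2, and the trace of G equals
    ||S||_F^2, so the n x n Gram matrix never has to be built explicitly.
    """
    S = np.asarray(S, dtype=float)
    row_sums = S.sum(axis=1)                 # S @ 1, one value per endmember
    return float(row_sums @ row_sums - np.sum(S * S))

# Two pixels made of different endmembers do not overlap: penalty 0.
disjoint = np.array([[1.0, 0.0],
                     [0.0, 1.0]])
# Two pixels made of the same endmember overlap fully: penalty 2.
overlapping = np.array([[1.0, 1.0],
                        [0.0, 0.0]])
penalties = (gram_offdiag_sum(disjoint), gram_offdiag_sum(overlapping))
```

The cost is linear in the number of pixels, which matters because hyperspectral scenes typically contain far more pixels than endmembers.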

3.4. Proposed DNMF-AG Model

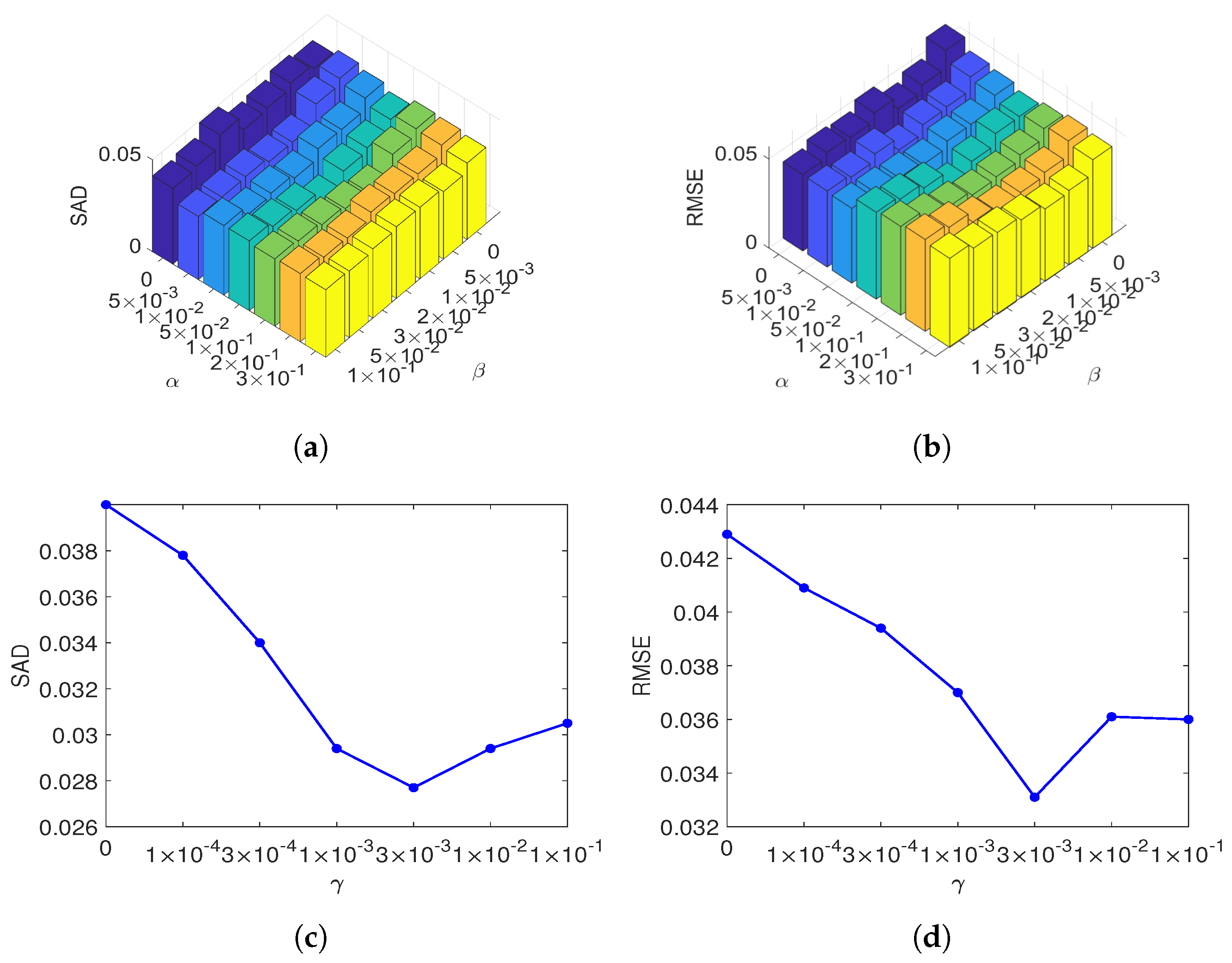

Considering the previous discussion, DNMF-AG consists of three main parts: (1) robust DNMF with a truncated activation function; (2) adversarial graph regularization; and (3) Gram sparsity regularization. On this basis, the optimization problem of the proposed model is formulated as follows:

where the first term is the robust DNMF reconstruction error with the truncated activation, and the second and third terms are the adversarial graph and Gram sparsity regularizers, respectively. Here, l is the number of layers, and the remaining symbols denote the improved nonlinear activation and the weighting parameters of the reward graph, penalty graph, and Gram sparsity terms.

3.5. Optimization

To efficiently solve optimization problem (21), this paper adopts the Lagrange multiplier method as a standard numerical optimization tool. Using this method, the constrained optimization problem (21) is transformed into an equivalent unconstrained formulation, which facilitates the subsequent numerical solution.

where

and

and

are the Lagrange multipliers for

and

, respectively.

(1) Calculate :

In subproblem , the following two situations may occur.

Based on the KKT condition

and assuming

, the update rule for

is:

Case 2: . We assume that and are ignored in the function , we obtain:

According to the KKT condition, the update rule for

is as follows:

(2) Calculate : In subproblem , the following two situations may occur:

According to the KKT condition, the update rule for

is as follows:

Case 2: For , assuming and ignoring irrelevant terms in , we have:

The following can then be obtained:

According to the KKT condition, the update rule for

is as follows:

The algorithm process can be seen in Algorithm 1.

3.6. Complexity Analysis

This section analyzes the computational complexity of the proposed method, which consists of hierarchical pretraining and global fine-tuning.

During the hierarchical pretraining stage, the classic NMF algorithm is adopted to initialize the decomposition layers at each level. Therefore, the computational complexity of this step can be expressed as

, where

represents the size of each layer, and

represents the number of iterations required by the NMF process. In the fine-tuning stage, each layer involves three main tasks: (1) constructing auxiliary matrices

and

, (2) updating the matrix

, and (3) updating the matrix

. Constructing

and

requires at most

computational complexity. Moreover, the computational complexities for updating matrices

and

are

and

, respectively, where

is the maximum number of iterations involved in the fine-tuning stage of the proposed method. The comprehensive analysis indicates that when the size of each layer

is less than

and

, the overall computational complexity of a single layer can be approximated as

. Therefore, by summing the costs of both the hierarchical pretraining and global fine-tuning stages across all layers, the total computational complexity of the proposed method is approximately

, where

l is the number of layers.

Algorithm 1: Algorithm of the proposed method.

- 1: Input: A hyperspectral image ; the number of layers l; the size of each layer ; the parameters .
- 2: Pretraining all layers:
- 3: for do
- 4: .
- 5: Set .
- 6: end for
- 7: Fine-tuning all layers:
- 8: repeat
- 9: for all layers do
- 10: Define , and set .
- 11: Define .
- 12: Update by using the rules in (25) and (28).
- 13: Update by using the rules in (31) and (34).
- 14: end for
- 15: until convergence is achieved
- 16: Output: Set and .
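The layer-wise pretraining stage of Algorithm 1 can be sketched as below. This is a simplified stand-in rather than the proposed method: plain Frobenius-norm NMF with Lee-Seung multiplicative updates and random initialization replaces the robust updates and the VCA/FCLS initialization, and the fine-tuning stage is omitted. All function names and the layer sizes are illustrative assumptions.

```python
import numpy as np

def nmf(X, rank, iters=200, seed=0, eps=1e-9):
    """Classic NMF, X ~ A S, via multiplicative updates (Frobenius loss)."""
    rng = np.random.default_rng(seed)
    A = rng.random((X.shape[0], rank)) + eps
    S = rng.random((rank, X.shape[1])) + eps
    for _ in range(iters):
        S *= (A.T @ X) / (A.T @ A @ S + eps)
        A *= (X @ S.T) / (A @ S @ S.T + eps)
    return A, S

def pretrain_deep_nmf(X, layer_sizes, iters=200):
    """Factorize X ~ A_1 A_2 ... A_l S_l one layer at a time.

    The abundance matrix produced by one layer is the input of the next layer.
    """
    factors, S = [], X
    for i, rank in enumerate(layer_sizes):
        A, S = nmf(S, rank, iters=iters, seed=i)
        factors.append(A)
    return factors, S

def reconstruct(factors, S):
    """Multiply the factors back together: A_1 (A_2 (... (A_l S_l)))."""
    out = S
    for A in reversed(factors):
        out = A @ out
    return out
```

A call such as `pretrain_deep_nmf(X, [6, 3])` returns two non-negative factor matrices and the final-layer abundance matrix; in a full implementation these would serve as the starting point for the fine-tuning loop.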

3.7. Implementation Details

To effectively implement the proposed algorithm, several issues need to be considered.

The first issue concerns the initialization of the endmember and abundance matrices. In our implementation, vertex component analysis (VCA) is used only to initialize the endmember matrix, and the fully constrained least-squares (FCLS) algorithm is employed to initialize the abundance matrix; the subsequent decomposition follows the standard layer-wise structure of deep non-negative matrix factorization, without introducing any additional initialization strategies.

The second issue is how to ensure that the two LMM constraints, namely abundance non-negativity and the abundance sum-to-one constraint (ASC), are satisfied during the unmixing process. Because the multiplicative update rules (MUR) preserve non-negativity, the non-negativity constraint is satisfied as long as the initial factor matrices are non-negative. To enforce the ASC, in each iteration we replace the corresponding matrices with their augmented forms:

where the weighting parameter controls the strength of the ASC constraint in the objective function. Larger values improve ASC accuracy but slow convergence; therefore, this parameter is set to balance accuracy and convergence speed.
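One common way to realize such an augmentation, shown here as an assumption rather than as the exact form used in the paper, is to append a constant row to both the data matrix and the endmember matrix. The names `augment_for_asc` and `delta` are hypothetical.

```python
import numpy as np

def augment_for_asc(X, A, delta):
    """Append the row delta * 1^T to the data matrix X and the endmember matrix A.

    Fitting X_aug ~ A_aug S then penalizes deviations of the column sums of S
    from one, with delta controlling how strongly the ASC is enforced.
    """
    X_aug = np.vstack([X, delta * np.ones((1, X.shape[1]))])
    A_aug = np.vstack([A, delta * np.ones((1, A.shape[1]))])
    return X_aug, A_aug

# Hypothetical toy data whose abundances already satisfy the ASC.
rng = np.random.default_rng(0)
A = rng.random((6, 3))
S = rng.dirichlet(np.ones(3), size=5).T
X = A @ S
X_aug, A_aug = augment_for_asc(X, A, delta=10.0)
```

When the columns of `S` sum to one, the extra row of `A_aug @ S` equals `delta` everywhere and therefore matches the extra row of `X_aug` exactly; any deviation from the ASC shows up as a residual scaled by `delta`.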

The third issue is choosing the number of layers in DNMF. Too few layers may fail to capture the complexity of the data, while too many can cause overfitting or higher computational cost. We conducted experiments on simulated datasets to evaluate different layer numbers and determined the optimal decomposition depth for subsequent experiments.

The fourth consideration is the use of the truncated activation function. This function enhances the sparsity of the abundance matrix and improves the interpretability of the unmixing results. However, different truncation thresholds can impact sparsity, accuracy, and computational efficiency. Based on ablation experiments on SC1, and taking SAD, RMSE, and runtime into account, a threshold of was selected as the final value.

Finally, two stopping criteria are defined. The first is an error threshold: if the reconstruction error does not exceed this threshold for ten consecutive iterations, the iteration process is terminated. We set this threshold as . The second criterion is the maximum number of iterations. In this model, we set the maximum number of iterations to 3000 to ensure full algorithmic convergence.