SC-SM CAM: An Efficient Visual Interpretation of CNN for SAR Images Target Recognition

Abstract

1. Introduction

2. Related Work

2.1. Optical-Based CAM

2.2. Self-Matching CAM

2.3. Group-Self-Matching CAM

3. Methodology

3.1. Motivation

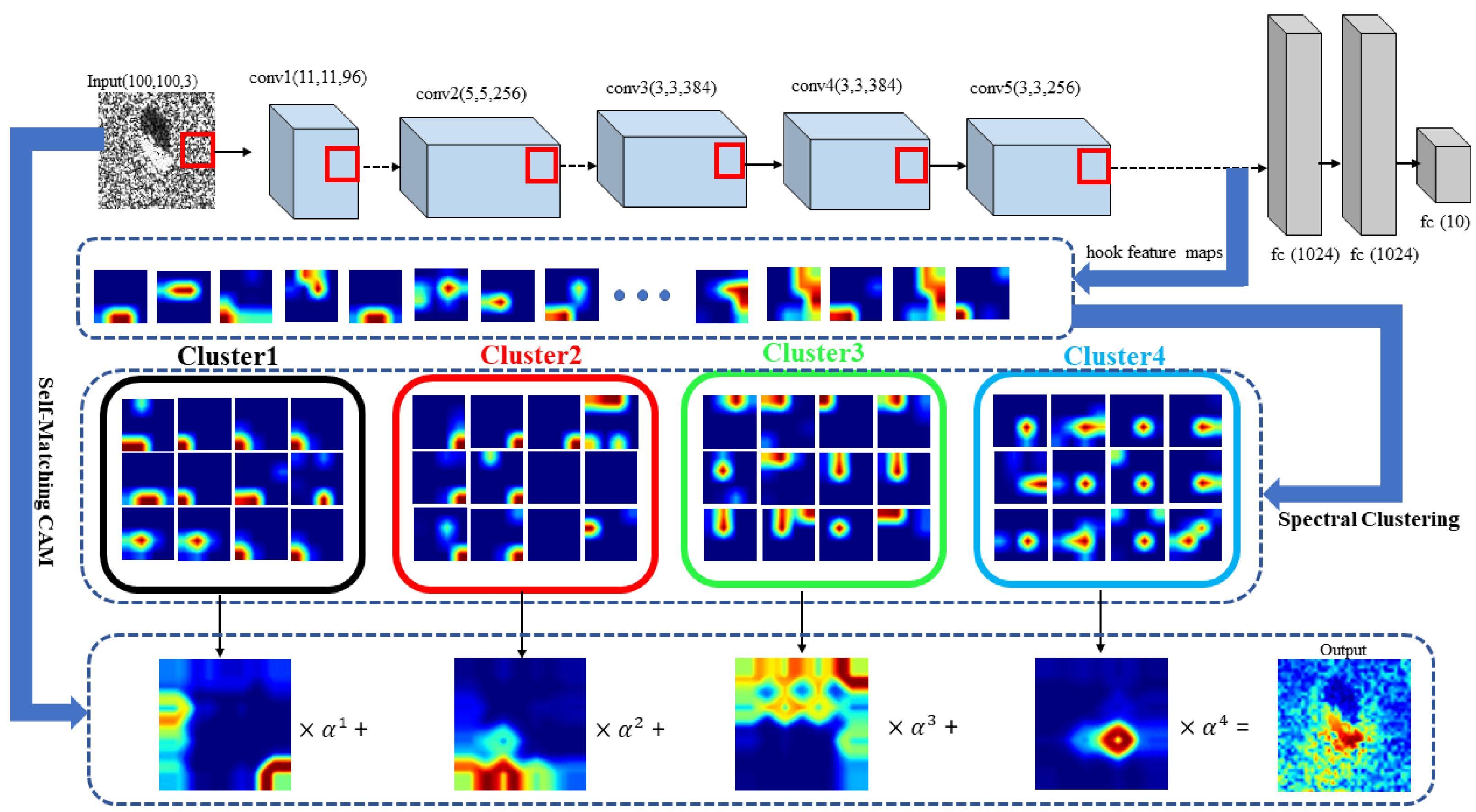

3.2. SC-SM CAM

| Algorithm 1: SC-SM CAM |
|---|
| Input: SAR image , model , spectral clustering |
| : |
| Initialization: |
| (), k-th feature map |
| n in [1,…,m] |
| # obtain the weights: |
| ← , () |
| # generate final heatmap: |
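Algorithm 1 survives above only in outline: the feature maps are grouped by spectral clustering, one weight is obtained per group, and the weighted groups are combined into the final heatmap. The Python/NumPy sketch below illustrates that pipeline under stated assumptions and is not the authors' implementation. The Gaussian affinity with a median-squared-distance bandwidth, the symmetric normalized Laplacian, the farthest-point k-means initialization, nearest-neighbour upsampling, and the function names `spectral_group`, `upsample_nearest` and `grouped_heatmap` are all hypothetical choices. Because the weighting rule of Algorithm 1 cannot be recovered from the text, the per-group weights are simply passed in; `n_eigvecs` plays the role of the number of eigenvectors varied in Section 4.5.

```python
import numpy as np


def spectral_group(feature_maps, n_groups, n_eigvecs=None, n_iter=100):
    """Assign each of K feature maps (array K x h x w) to one of n_groups.

    Assumed choices (not from the paper): Gaussian affinity on flattened,
    L2-normalized maps, bandwidth = median squared distance, symmetric
    normalized Laplacian, k-means on row-normalized eigenvectors.
    """
    K = feature_maps.shape[0]
    X = feature_maps.reshape(K, -1).astype(float)
    X /= np.linalg.norm(X, axis=1, keepdims=True) + 1e-12

    # Pairwise squared Euclidean distances -> Gaussian affinity matrix
    sq = (X ** 2).sum(axis=1)
    d2 = np.maximum(sq[:, None] + sq[None, :] - 2.0 * X @ X.T, 0.0)
    pos = d2[d2 > 0]
    sigma2 = np.median(pos) if pos.size else 1.0
    W = np.exp(-d2 / (2.0 * sigma2))
    np.fill_diagonal(W, 0.0)

    # Symmetric normalized Laplacian L = I - D^{-1/2} W D^{-1/2}
    d_is = 1.0 / np.sqrt(W.sum(axis=1) + 1e-12)
    L = np.eye(K) - d_is[:, None] * W * d_is[None, :]

    # eigh sorts eigenvalues ascending: keep the n_eigvecs smallest
    n_eigvecs = n_groups if n_eigvecs is None else n_eigvecs
    _, vecs = np.linalg.eigh(L)
    U = vecs[:, :n_eigvecs]
    U = U / (np.linalg.norm(U, axis=1, keepdims=True) + 1e-12)

    # k-means on the spectral embedding with farthest-point initialization
    centers = [U[0]]
    for _ in range(1, n_groups):
        dist = np.min([((U - c) ** 2).sum(axis=1) for c in centers], axis=0)
        centers.append(U[np.argmax(dist)])
    centers = np.array(centers)

    def assign(c):
        return np.argmin(((U[:, None] - c[None]) ** 2).sum(-1), axis=1)

    labels = assign(centers)
    for _ in range(n_iter):
        new = np.array([U[labels == g].mean(axis=0) if np.any(labels == g)
                        else centers[g] for g in range(n_groups)])
        if np.allclose(new, centers):
            break
        centers = new
        labels = assign(centers)
    return labels


def upsample_nearest(m, out_size):
    """Nearest-neighbour resize of a 2-D map to out_size = (H, W)."""
    H, W = out_size
    rows = np.arange(H) * m.shape[0] // H
    cols = np.arange(W) * m.shape[1] // W
    return m[np.ix_(rows, cols)]


def grouped_heatmap(feature_maps, labels, group_weights, out_size):
    """Weighted sum of per-group maps, then ReLU and scaling to [0, 1].

    group_weights (one scalar per group) are hypothetical inputs here.
    """
    heat = np.zeros(out_size)
    for g, w_g in enumerate(group_weights):
        if np.any(labels == g):
            group_map = feature_maps[labels == g].sum(axis=0)
            heat += w_g * upsample_nearest(group_map, out_size)
    heat = np.maximum(heat, 0.0)
    return heat / (heat.max() + 1e-12)


# Toy usage: eight synthetic 7x7 "feature maps" with two response patterns
rng = np.random.default_rng(0)
maps = rng.uniform(0.0, 0.05, size=(8, 7, 7))
maps[:4, :3, :3] += 1.0   # four maps respond to the top-left corner
maps[4:, 4:, 4:] += 1.0   # four maps respond to the bottom-right corner
labels = spectral_group(maps, n_groups=2)
weights = np.where(np.arange(2) == labels[0], 1.0, 0.0)  # hypothetical weights
heat = grouped_heatmap(maps, labels, weights, out_size=(14, 14))
```

Keeping only the leading eigenvectors lets k-means run in a low-dimensional space, while the full `eigh` call on the K×K Laplacian is the part whose cost grows with the number of channels.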

4. Experimental Results

4.1. Experiment Setup

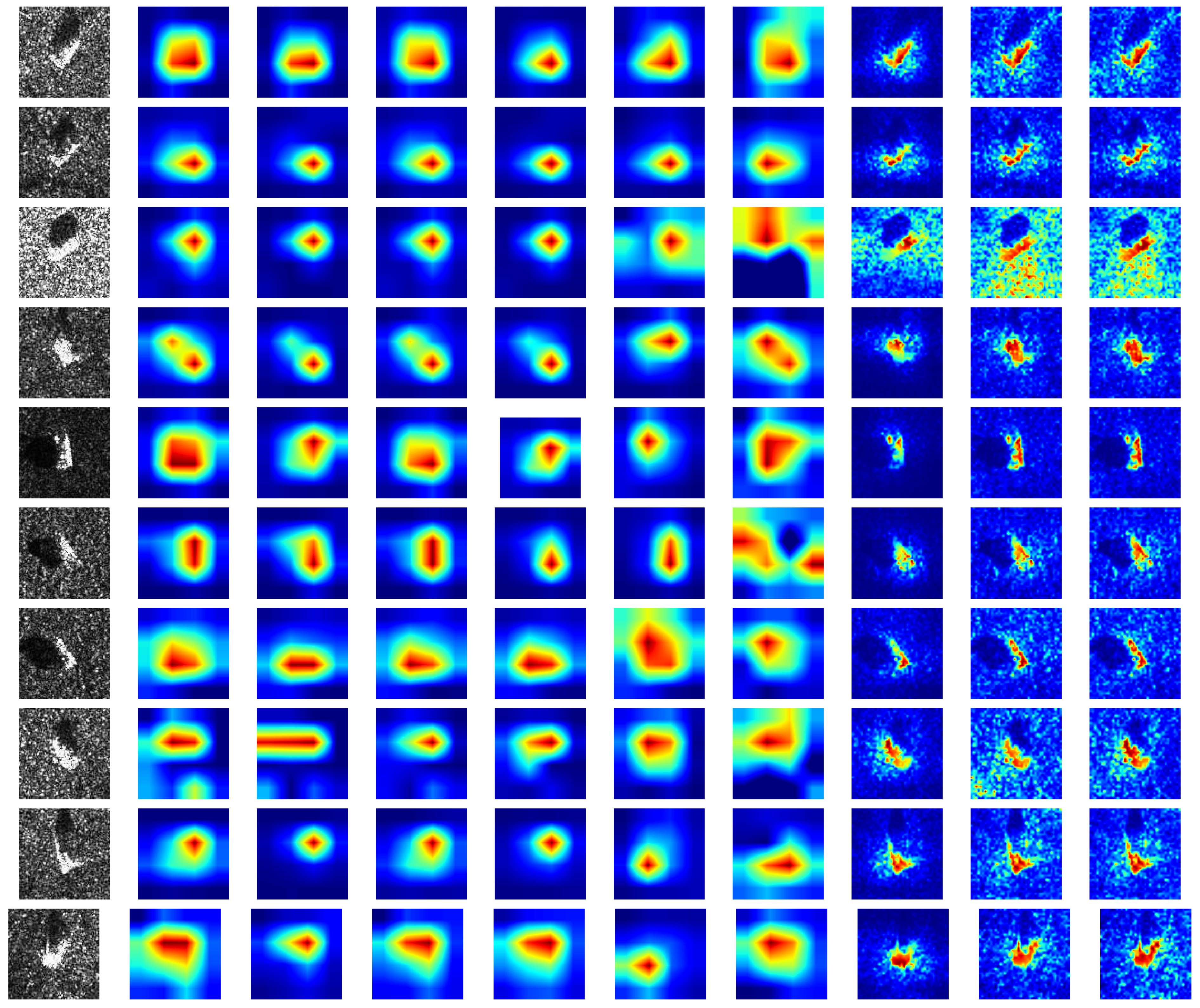

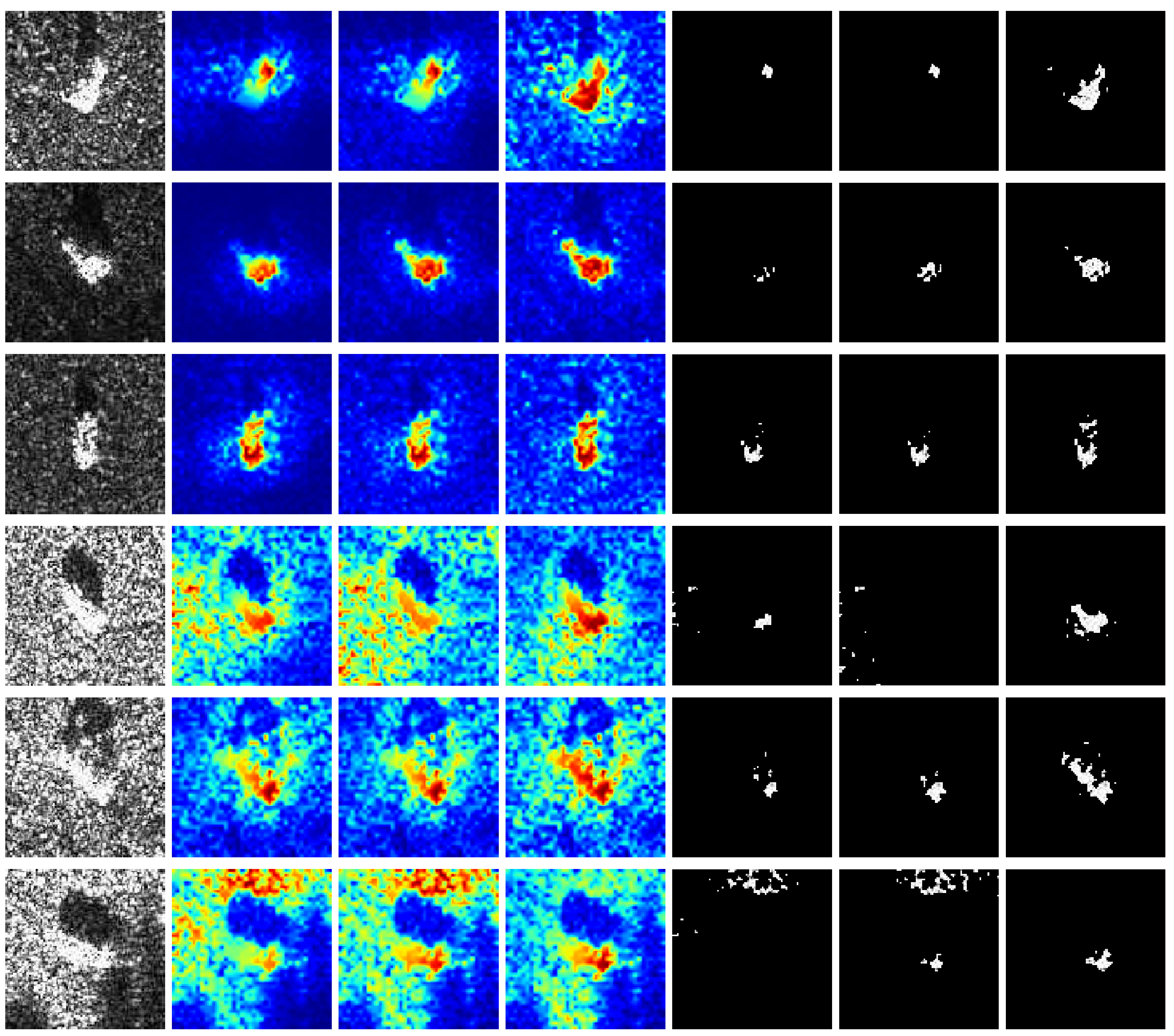

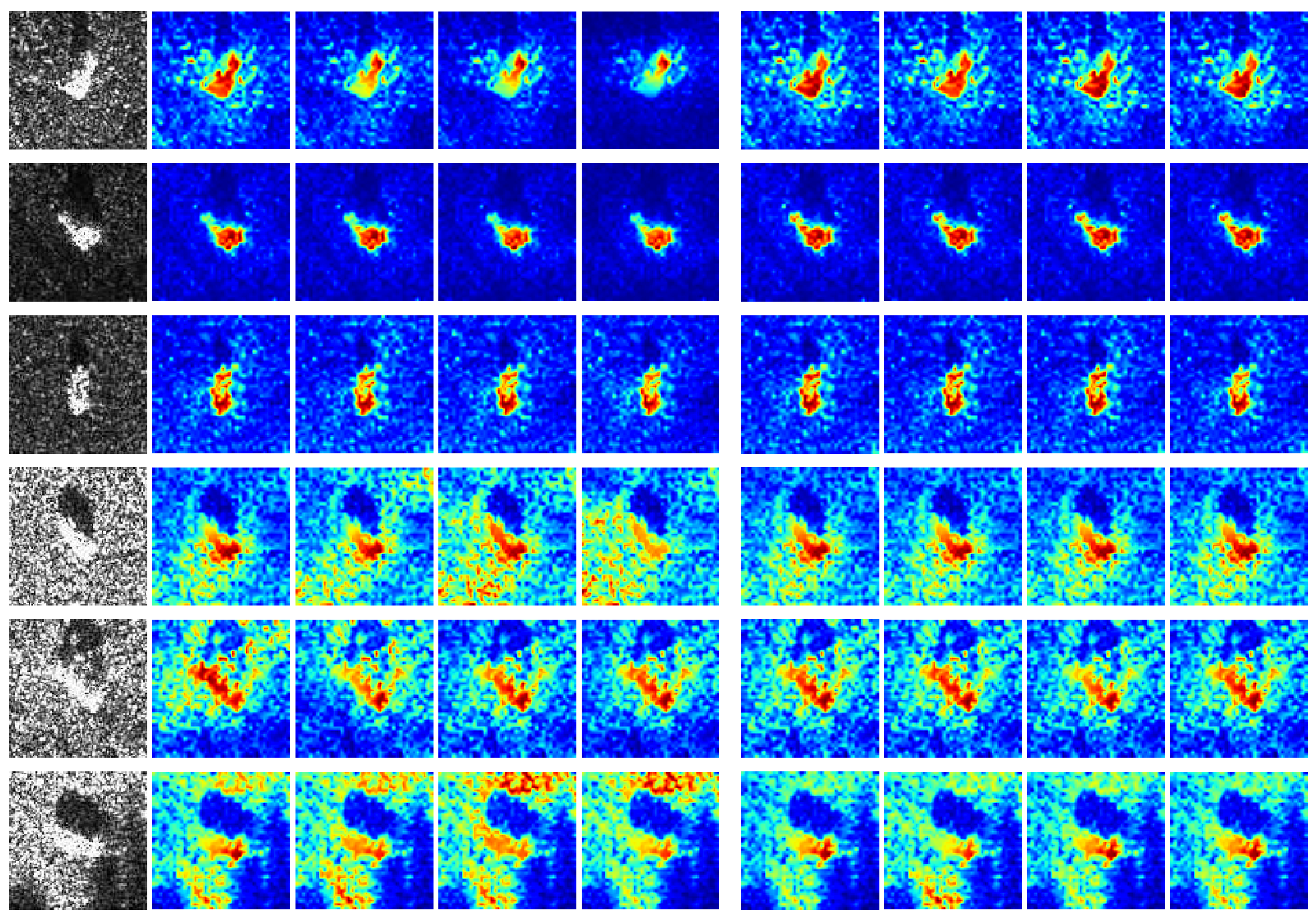

4.2. Class Discriminative Visualization

4.3. Insertion Check

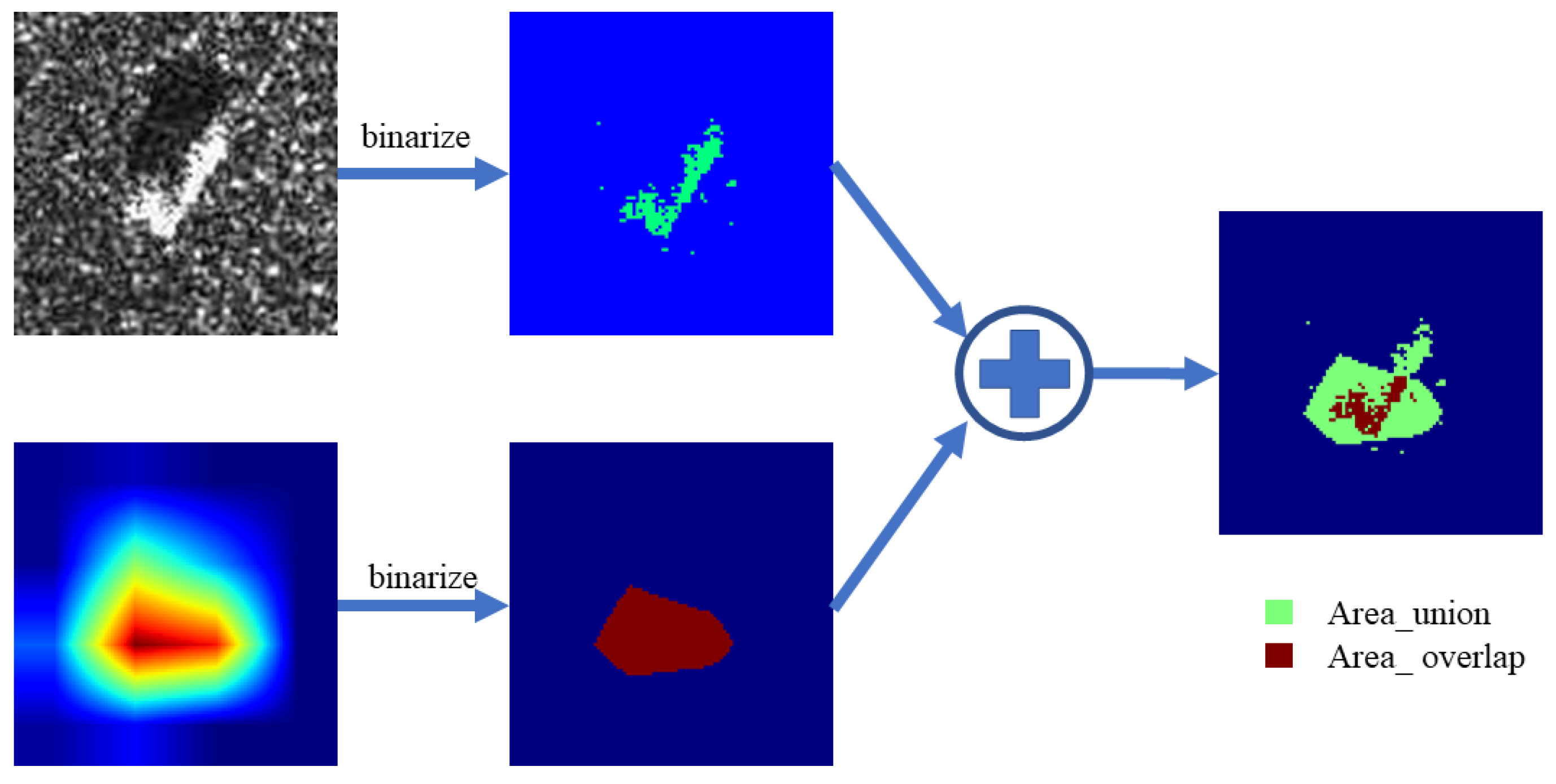

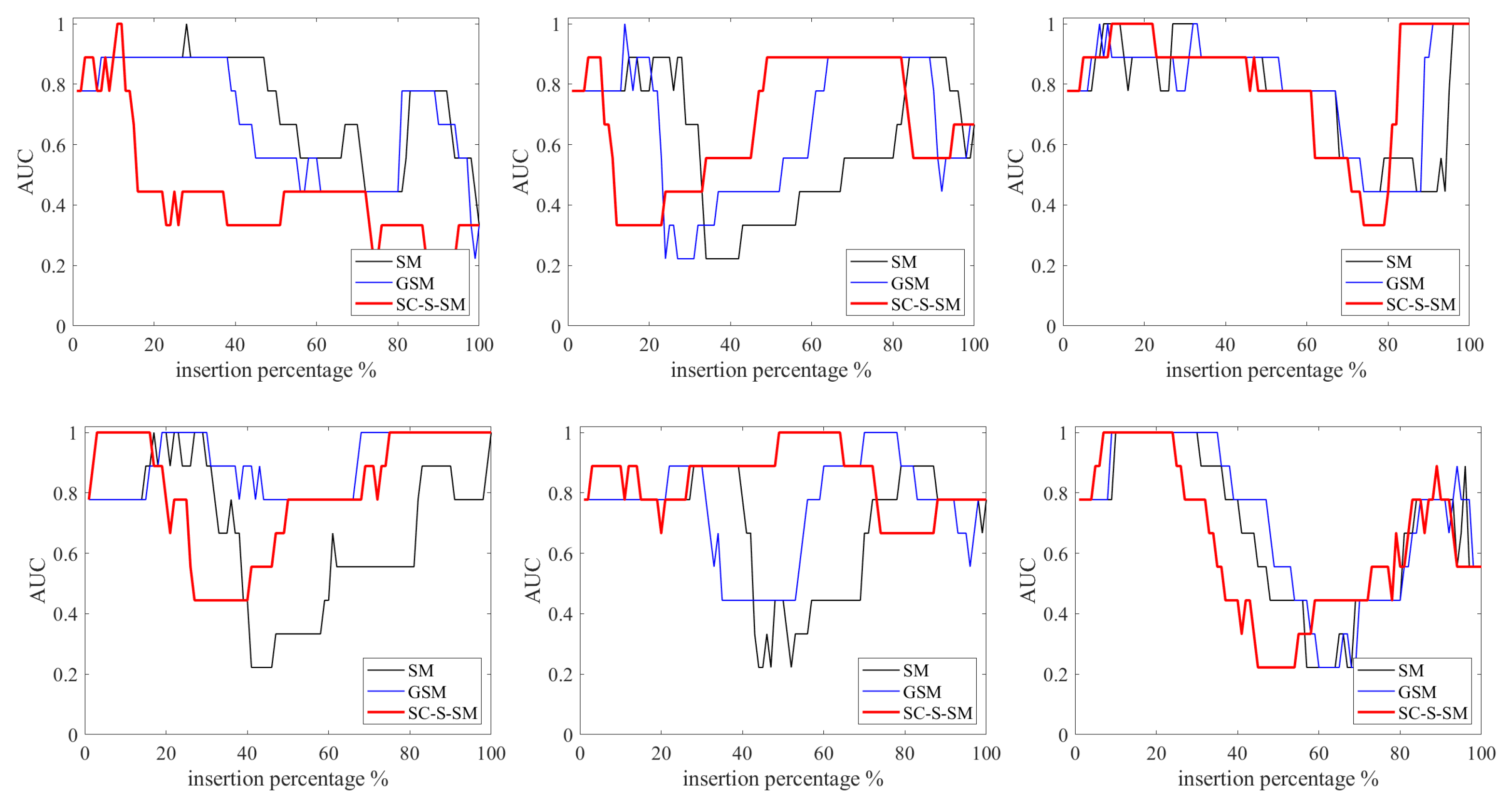
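Only the heading and figures of Section 4.3 survive here. Assuming the insertion check follows the insertion metric of Petsiuk et al. (RISE, listed in the References), it can be sketched as follows: pixels are copied from the SAR image onto a blank canvas in decreasing order of heatmap value, the class score is recorded after each step, and the area under the score-versus-inserted-fraction curve summarizes how quickly the highlighted pixels restore the prediction. The zero baseline, the number of steps, the trapezoidal rule, the function name `insertion_auc`, and the `score_fn` callable standing in for the CNN are all assumptions, not details taken from the paper.

```python
import numpy as np


def insertion_auc(image, saliency, score_fn, n_steps=20, baseline=None):
    """Insertion-curve AUC for a single-channel image (assumed protocol).

    score_fn: hypothetical callable mapping an image to a class score.
    """
    if baseline is None:
        baseline = np.zeros_like(image, dtype=float)  # assumed zero baseline
    flat = image.astype(float).ravel()
    canvas = baseline.astype(float).ravel().copy()
    # Pixel indices ordered from most to least salient
    order = np.argsort(saliency.ravel(), kind="stable")[::-1]
    n = order.size
    fracs = [0.0]
    scores = [float(score_fn(canvas.reshape(image.shape)))]
    for step in range(1, n_steps + 1):
        k = int(round(step * n / n_steps))
        canvas[order[:k]] = flat[order[:k]]  # insert the top-k pixels
        fracs.append(k / n)
        scores.append(float(score_fn(canvas.reshape(image.shape))))
    fracs, scores = np.asarray(fracs), np.asarray(scores)
    # Trapezoidal area under the insertion curve
    auc = float(np.sum(np.diff(fracs) * (scores[1:] + scores[:-1]) / 2.0))
    return auc, fracs, scores


def toy_score(x):
    """Toy stand-in for the CNN: mean intensity of the top-left 2x2 block."""
    return float(x[:2, :2].mean())


img = np.zeros((4, 4))
img[:2, :2] = 1.0  # toy "target" in the top-left corner
good_auc, _, _ = insertion_auc(img, img, toy_score, n_steps=4)        # 0.875
bad_auc, _, _ = insertion_auc(img, 1.0 - img, toy_score, n_steps=4)  # 0.125
```

A heatmap that ranks the target pixels first raises the curve earlier and therefore scores a larger area, which is the property such a check rewards.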

4.4. Ablation Study

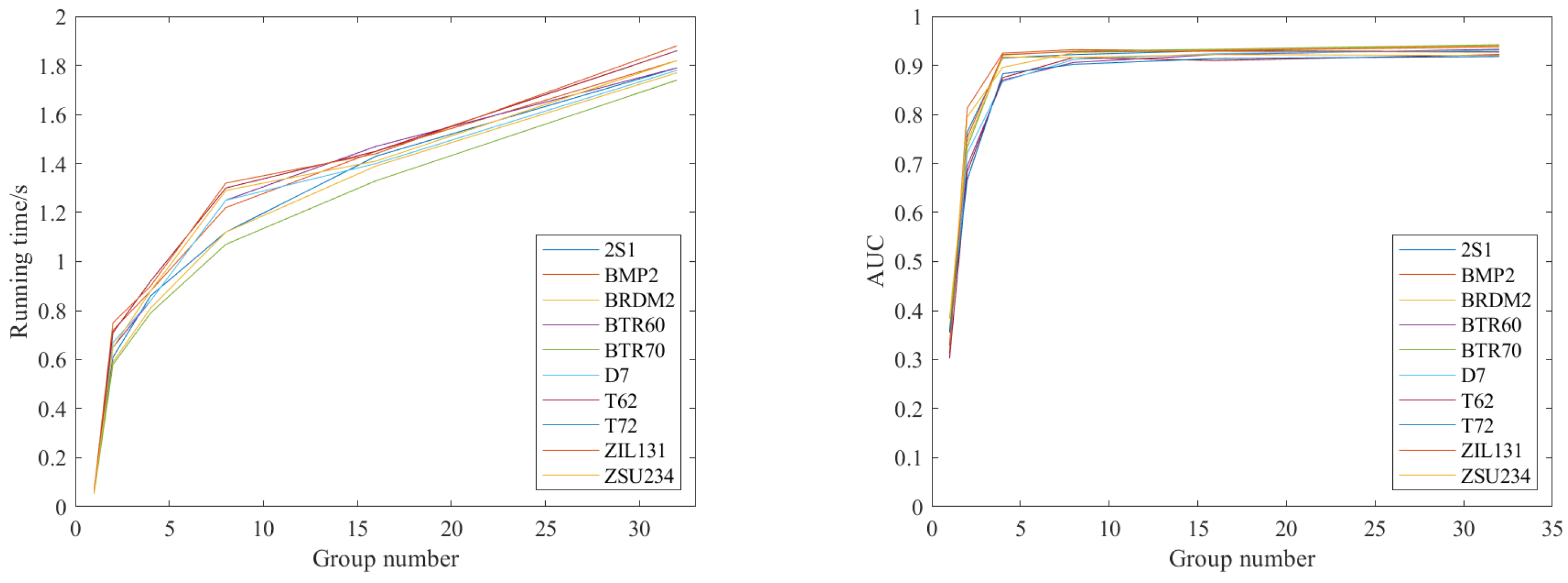

4.5. Computing Efficiency

5. Discussion

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Pallotta, L.; Clemente, C.; Maio, A.D.; Soraghan, J.J. Detecting Covariance Symmetries in Polarimetric SAR Images. IEEE Trans. Geosci. Remote Sens. 2017, 55, 80–95.

- Wang, Z.; Wang, S.; Xu, C.; Li, C.; Yue, B.; Liang, X. SAR Images Super-resolution via Cartoon-texture Image Decomposition and Jointly Optimized Regressors. In Proceedings of the 2017 International Geoscience and Remote Sensing Symposium, Fort Worth, TX, USA, 23–28 July 2017; pp. 1668–1671.

- Li, W.; Zou, B.; Zhang, L. Ship Detection in a Large Scene SAR Image Using Image Uniformity Description Factor. In Proceedings of the 2017 SAR in Big Data Era: Models, Methods and Applications, Beijing, China, 13–14 November 2017; pp. 1–5.

- Yuan, Y.; Wu, Y.; Fu, Y.; Wu, Y.; Zhang, L.; Jiang, Y. An Advanced SAR Image Despeckling Method by Bernoulli-Sampling-Based Self-Supervised Deep Learning. Remote Sens. 2021, 13, 3636.

- Wang, Y.; Zhang, Y.; Qu, H.; Tian, Q. Target Detection and Recognition Based on Convolutional Neural Network for SAR Image. In Proceedings of the 2018 11th International Congress on Image and Signal Processing, Biomedical Engineering and Informatics, Beijing, China, 13–15 October 2018; pp. 1–5.

- Ding, B.; Wen, G.; Huang, X.; Ma, C.; Yang, X. Data Augmentation by Multilevel Reconstruction Using Attributed Scattering Center for SAR Target Recognition. IEEE Geosci. Remote Sens. Lett. 2017, 14, 979–983.

- Xiong, K.; Zhao, G.; Wang, Y.; Shi, G. SPB-Net: A Deep Network for SAR Imaging and Despeckling with Downsampled Data. IEEE Trans. Geosci. Remote Sens. 2020.

- Luo, Y.; An, D.; Wang, W.; Huang, X. Improved ROEWA SAR Image Edge Detector Based on Curvilinear Structures Extraction. IEEE Geosci. Remote Sens. Lett. 2020, 17, 631–635.

- Zhang, L.; Liu, Y. Remote Sensing Image Generation Based on Attention Mechanism and VAE-MSGAN for ROI Extraction. IEEE Geosci. Remote Sens. Lett. 2021.

- Min, R.; Lan, H.; Cao, Z.J.; Cui, Z.Y. A Gradually Distilled CNN for SAR Target Recognition. IEEE Access 2019, 7, 42190–42200.

- Zhou, F.; Wang, L.; Bai, X.R.; Hui, Y.; Zhou, Z. SAR ATR of Ground Vehicles Based on LM-BN-CNN. IEEE Trans. Geosci. Remote Sens. 2018, 56, 7282–7293.

- Yu, J.; Zhou, G.; Zhou, S. A Lightweight Fully Convolutional Neural Network for SAR Automatic Target Recognition. Remote Sens. 2021, 13, 3029.

- Dong, Y.P.; Su, H.; Wu, B.Y. Efficient Decision-based Black-box Adversarial Attacks on Face Recognition. In Proceedings of the 2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, CA, USA, 15–20 June 2019.

- Mopuri, K.R.; Garg, U.; Babu, R.V. CNN Fixations: An Unraveling Approach to Visualize the Discriminative Image Regions. IEEE Trans. Image Process. 2017, 28, 2116–2125.

- Montavon, G.; Binder, A.; Lapuschkin, S.; Samek, W.; Müller, K.R. Layer-Wise Relevance Propagation: An Overview. In Explainable AI: Interpreting, Explaining and Visualizing Deep Learning; Samek, W., Montavon, G., Vedaldi, A., Hansen, L., Müller, K.R., Eds.; Springer: Cham, Switzerland, 2019; pp. 14–15.

- Giacalone, J.; Bourgeois, L.; Ancora, A. Challenges in aggregation of heterogeneous sensors for Autonomous Driving Systems. In Proceedings of the 2019 IEEE Sensors Applications Symposium, Sophia Antipolis, France, 11–13 March 2019; pp. 1–5.

- Zhu, C.; Chen, Z.; Zhao, R.; Wang, J.; Yan, R. Decoupled Feature-Temporal CNN: Explaining Deep Learning-Based Machine Health Monitoring. IEEE Trans. Instrum. Meas. 2021, 70, 1–13.

- Petsiuk, V.; Das, A.; Saenko, K. RISE: Randomized input sampling for explanation of black-box models. In Proceedings of the British Machine Vision Conference 2018, Newcastle, UK, 3–6 September 2018.

- Amin, M.G.; Erol, B. Understanding deep neural networks performance for radar-based human motion recognition. In Proceedings of the 2018 IEEE Radar Conference, Oklahoma City, OK, USA, 23–27 April 2018; pp. 1461–1465.

- Kapishnikov, A.; Bolukbasi, T.; Viégas, F.; Terry, M. XRAI: Better attributions through regions. In Proceedings of the 2019 IEEE/CVF International Conference on Computer Vision (ICCV), Seoul, Korea, 27 October–2 November 2019; pp. 4947–4956.

- Bach, S.; Binder, A.; Montavon, G.; Klauschen, F.; Müller, K.R. On Pixel-Wise Explanations for Non-Linear Classifier Decisions by Layer-Wise Relevance Propagation. PLoS ONE 2015, 10, e0130140.

- Zhou, B.; Khosla, A.; Lapedriza, A.; Oliva, A.; Torralba, A. Learning Deep Features for Discriminative Localization. In Proceedings of the 2016 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 26 June–1 July 2016.

- Selvaraju, R.R.; Cogswell, M.; Das, A.; Vedantam, R.; Parikh, D.; Batra, D. Grad-CAM: Visual Explanations from Deep Networks via Gradient-based Localization. arXiv 2016, arXiv:1610.02391v4.

- Chattopadhay, A.; Sarkar, A.; Howlader, P.; Balasubramanian, V.N. Grad-CAM++: Improved Visual Explanations for Deep Convolutional Networks. arXiv 2018, arXiv:1710.11063v34.

- Fu, R.G.; Hu, Q.Y.; Dong, X.H.; Guo, Y.L.; Gao, Y.H.; Li, B. Axiom-based Grad-CAM: Towards Accurate Visualization and Explanation of CNNs. In Proceedings of the 2020 31st British Machine Vision Conference (BMVC), Manchester, UK, 7–10 September 2020.

- Desai, S.; Ramaswamy, H.G. Ablation-CAM: Visual Explanations for Deep Convolutional Network via Gradient-free Localization. In Proceedings of the 2020 IEEE Winter Conference on Applications of Computer Vision (WACV), Snowmass, CO, USA, 1–5 March 2020.

- Wang, H.F.; Wang, Z.F.; Du, M.N. Score-CAM: Score-Weighted Visual Explanations for Convolutional Neural Networks. In Proceedings of the 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), Seattle, WA, USA, 14–19 June 2020.

- Feng, Z.; Zhu, M.; Stanković, L.; Ji, H. Self-Matching CAM: A Novel Accurate Visual Explanation of CNNs for SAR Image Interpretation. Remote Sens. 2021, 13, 1772.

- Zhang, Q.; Rao, L.; Yang, Y. Group-CAM: Group Score-Weighted Visual Explanations for Deep Convolutional Networks. arXiv 2021, arXiv:2103.13859.

- Huang, D.; Wang, C.; Wu, J.; Lai, J.; Kwoh, C. Ultra-Scalable Spectral Clustering and Ensemble Clustering. IEEE Trans. Knowl. Data Eng. 2020, 32, 1212–1226.

- Wei, Y.; Niu, C.; Wang, H.; Liu, D. The Hyperspectral Image Clustering Based on Spatial Information and Spectral Clustering. In Proceedings of the 2019 IEEE 4th International Conference on Signal and Image Processing (ICSIP), Wuxi, China, 19–21 July 2019; pp. 127–131.

- Zhu, W.; Nie, F.; Li, X. Fast Spectral Clustering with Efficient Large Graph Construction. In Proceedings of the 2017 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), New Orleans, LA, USA, 5–9 March 2017; pp. 2492–2496.

- Stanković, L.J.; Mandic, D.; Daković, M.; Brajović, M.; Scalzo-Dees, B.; Li, S.; Constantinides, A.G. Data Analytics on Graphs—Part III: Machine Learning on Graphs, from Graph Topology to Applications. Found. Trends Mach. Learn. 2020, 13, 332–530.

- Huo, J.; Gao, Y.; Shi, Y.; Yin, H. Cross-Modal Metric Learning for AUC Optimization. IEEE Trans. Neural Netw. Learn. Syst. 2017, 29, 4844–4856.

- Gultekin, S.; Saha, A.; Ratnaparkhi, A.; Paisley, J. MBA: Mini-Batch AUC Optimization. IEEE Trans. Neural Netw. Learn. Syst. 2020, 31, 5561–5574.

| Grad | Grad++ | XGrad | Ablation | Score | Group | SM | G-SM | SC-SM |
|---|---|---|---|---|---|---|---|---|

| Method | Self-Matching CAM | G-SM CAM | SC-SM CAM |
|---|---|---|---|
| = 5% | 0.033 | 0.057 | 0.593 |
| = 10% | 0.127 | 0.125 | 0.667 |
| = 15% | 0.379 | 0.516 | |
| = 20% | 0.415 | 0.579 | 0.815 |
| = 30% | 0.815 | | |
| = 40% | 0.516 | 0.629 | 0.593 |
| = 50% | 0.417 | 0.530 | 0.412 |
| = 60% | 0.379 | 0.406 | 0.267 |
| = 70% | 0.415 | 0.493 | 0.680 |
| = 80% | 0.595 | 0.639 | 0.886 |

| Method | Running Time (s) |
|---|---|
| Self-Matching CAM | |
| G-SM CAM (G = 4) | |
| SC-SM CAM (G = 4) | |

| Number of Eigenvectors | Running Time (s) |
|---|---|
| 4 | |
| 10 | |
| 50 | |
| 100 | |
| 256 | |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Feng, Z.; Ji, H.; Stanković, L.; Fan, J.; Zhu, M. SC-SM CAM: An Efficient Visual Interpretation of CNN for SAR Images Target Recognition. Remote Sens. 2021, 13, 4139. https://doi.org/10.3390/rs13204139
