Applying Deep Learning to Automate UAV-Based Detection of Scatterable Landmines

Abstract

1. Introduction

1.1. Landmine Overview

1.2. Convolutional Neural Network (CNN) Overview

1.3. Region of Interest

2. Materials and Methods

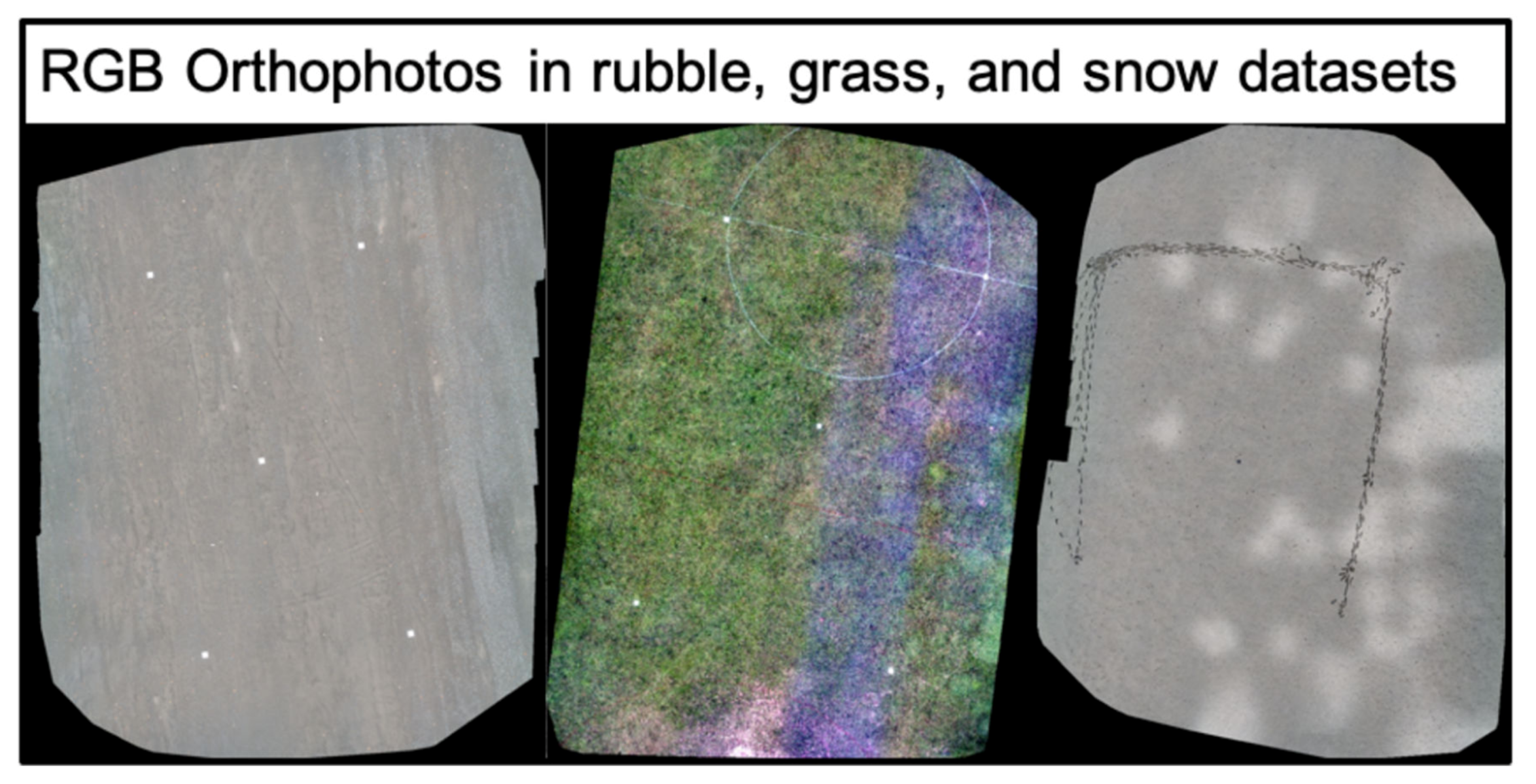

2.1. Proxy Environments

2.2. Instrumentation

2.3. Data Acquisition

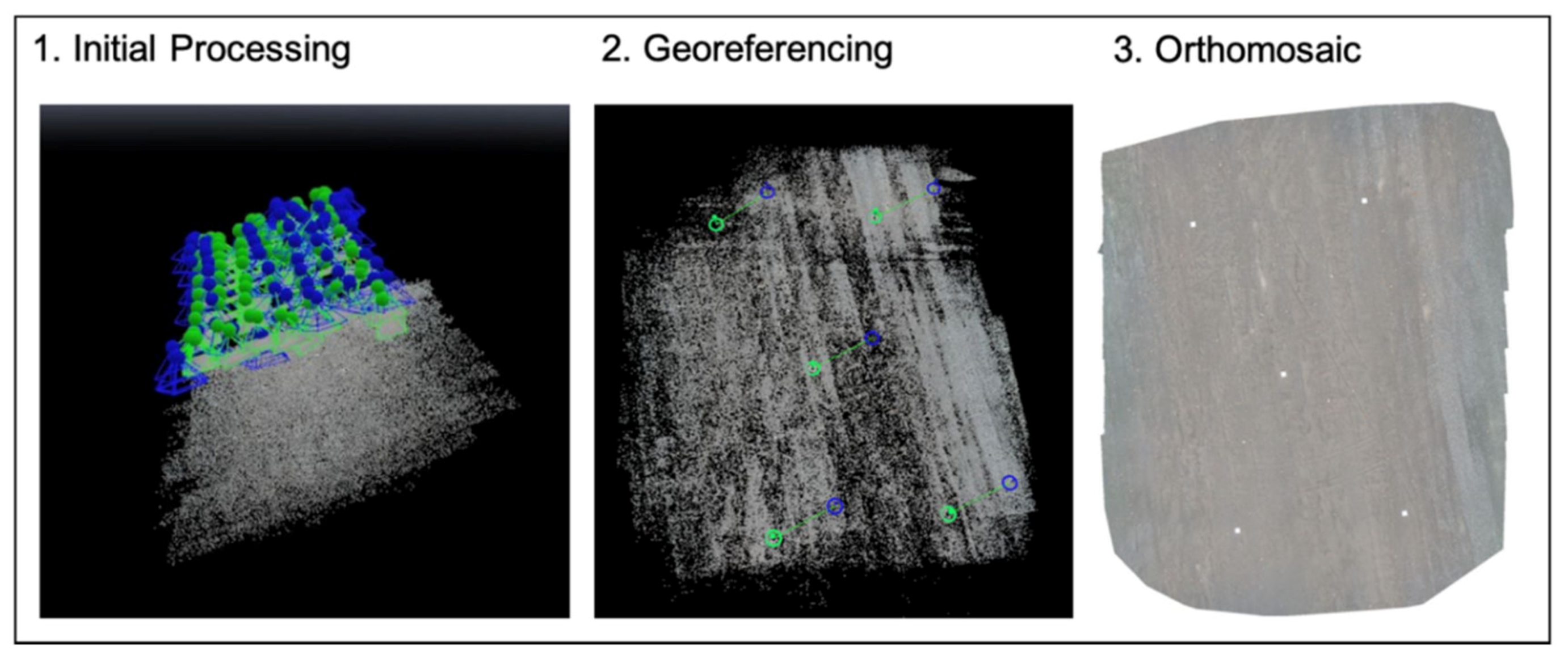

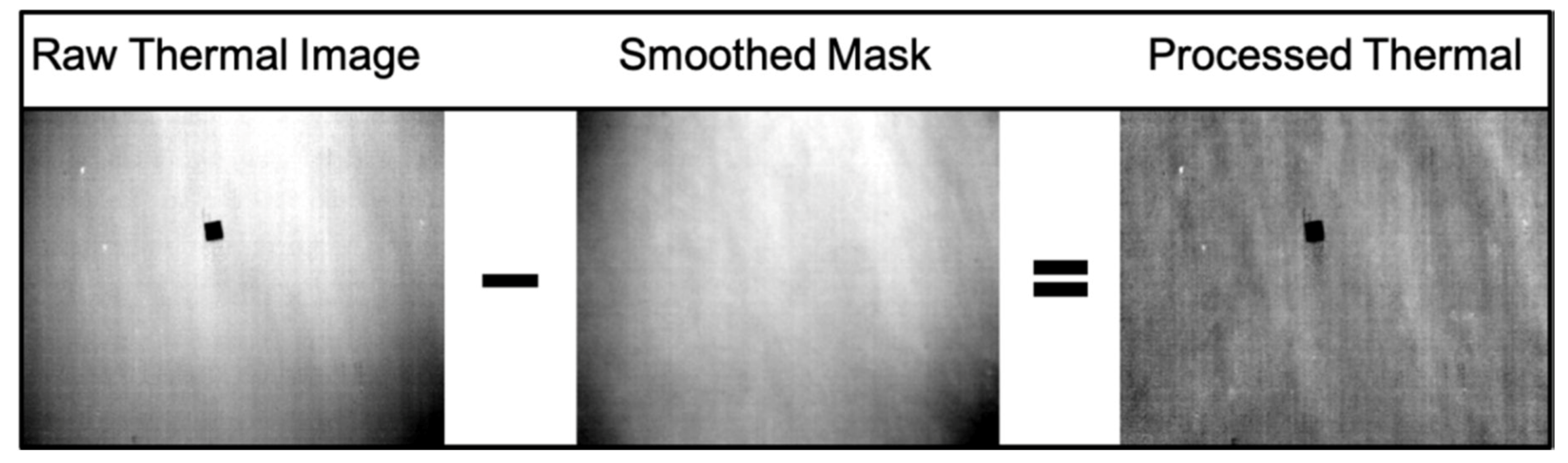

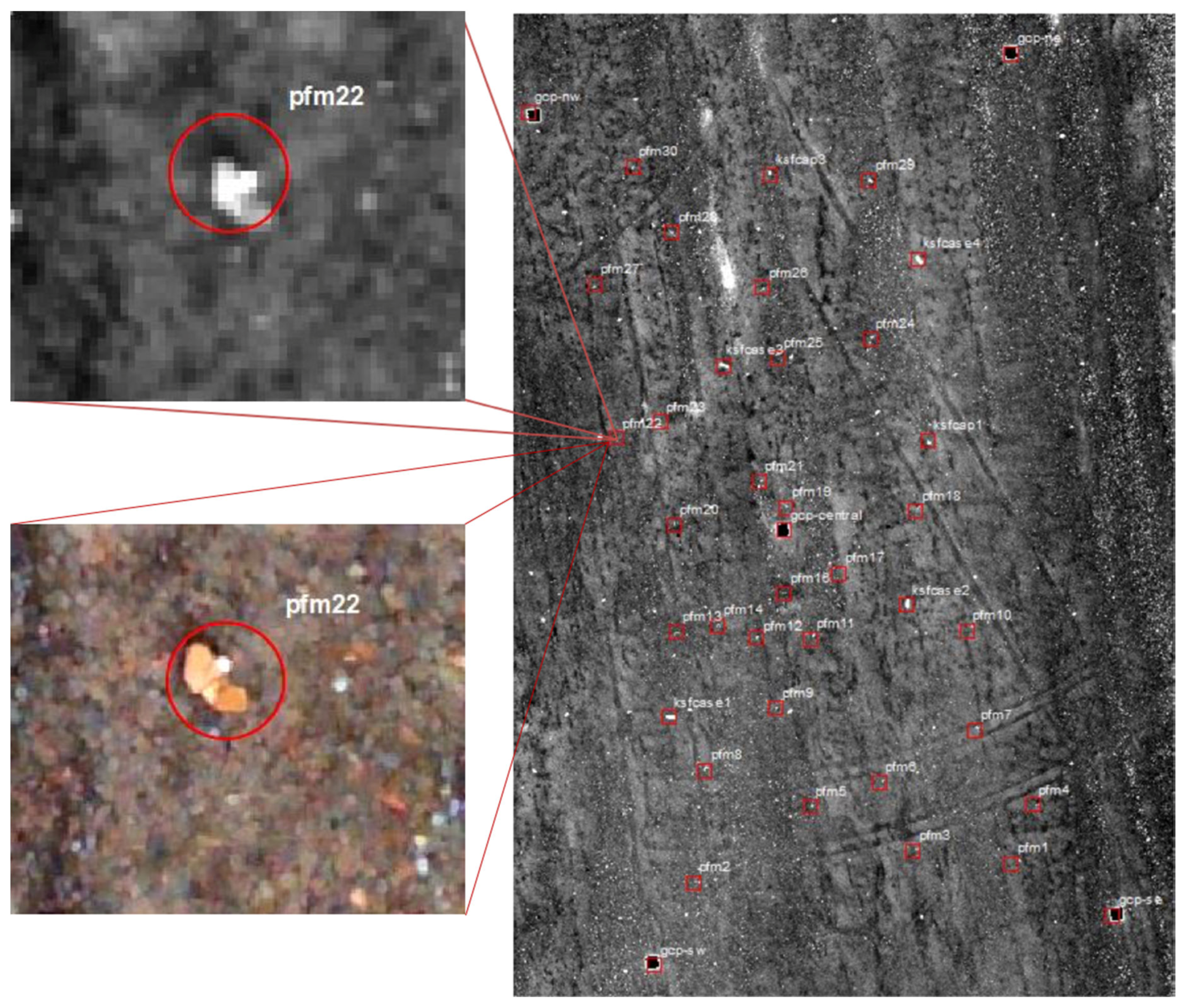

2.4. Image Processing

2.5. CNN Methods

3. Results

Multispectral & Orthophoto Results

4. Discussion

5. Conclusions and Future Work

Author Contributions

Funding

Acknowledgments

Conflicts of Interest


| Sensor | Spectral Band | Pixel Size | Resolution | Focal Length | Frame Rate | Image Format |
|---|---|---|---|---|---|---|
| FLIR Vue Pro R | Thermal infrared: 7.5–13.5 μm | NA | 640 × 512 pixels | 13 mm | 30 Hz (NTSC); 25 Hz (PAL) | TIFF, 14-bit raw sensor data |
| Parrot Sequoia RGB | Visible light: 380–700 nm | 1.34 μm | 4608 × 3456 pixels | 4.88 mm | Minimum value: 1 fps | JPG |
| Parrot Sequoia 4× monochrome sensors | Green: 530–570 nm; Red: 640–680 nm; Red Edge: 730–740 nm; Near Infrared: 770–810 nm | 3.75 μm | 1280 × 960 pixels | 3.98 mm | Minimum value: 0.5 fps | TIFF, RAW 10-bit files |
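
As a rough illustration of how the pixel size and focal length columns relate to ground resolution, the sketch below computes ground sample distance (GSD) with the standard pinhole relation GSD = pixel size × altitude / focal length. The 10 m flight altitude is a hypothetical value chosen only for this example and does not come from the table; the function name `ground_sample_distance_m` is likewise illustrative. The FLIR Vue Pro R is left out because its pixel size is listed as NA.

```python
def ground_sample_distance_m(pixel_size_m: float,
                             focal_length_m: float,
                             altitude_m: float) -> float:
    """Return the ground footprint of one pixel, in metres (pinhole model)."""
    if focal_length_m <= 0:
        raise ValueError("focal length must be positive")
    return pixel_size_m * altitude_m / focal_length_m


# Pixel size and focal length are taken from the sensor table above.
SENSORS = {
    "Parrot Sequoia RGB": (1.34e-6, 4.88e-3),
    "Parrot Sequoia monochrome": (3.75e-6, 3.98e-3),
}

# ASSUMPTION: hypothetical flight altitude, not a value from the source.
ASSUMED_ALTITUDE_M = 10.0

for name, (pixel_size, focal_length) in SENSORS.items():
    gsd = ground_sample_distance_m(pixel_size, focal_length, ASSUMED_ALTITUDE_M)
    print(f"{name}: {gsd * 100:.3f} cm/pixel at {ASSUMED_ALTITUDE_M:.0f} m")
```

GSD scales linearly with altitude, so the same function can be reused for any other flight height.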

| Train Data | Train Time (min) | Test Data | Test Time (s) | AP for PFM-1 | AP for KSF-Casing | Mean AP |
|---|---|---|---|---|---|---|
| Six flights, grass & rubble (Fall 2019) | 37 | One flight, rubble (Fall 2017) | 1.87 | 0.7030 | 0.7273 | 0.7152 |
| Random 70% of seven total flights | 29 | Random 30% of seven total flights | 5.47 | 0.9983 | 0.9879 | 0.9931 |
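
The Mean AP column appears to be the unweighted mean of the two per-class average precision (AP) values. The short sketch below recomputes it from the rounded figures in the table as a consistency check. The dictionary keys and the helper name `mean_average_precision` are illustrative and not taken from the authors' code, and the tolerance only allows for rounding in the published four-decimal values.

```python
def mean_average_precision(per_class_ap: dict[str, float]) -> float:
    """Unweighted mean of per-class average precision values."""
    if not per_class_ap:
        raise ValueError("need at least one class")
    return sum(per_class_ap.values()) / len(per_class_ap)


# Per-class AP and reported Mean AP, copied from the results table above.
EXPERIMENTS = {
    "train Fall 2019 flights, test Fall 2017 flight": (
        {"PFM-1": 0.7030, "KSF-Casing": 0.7273}, 0.7152),
    "random 70/30 split of seven flights": (
        {"PFM-1": 0.9983, "KSF-Casing": 0.9879}, 0.9931),
}

for label, (ap_by_class, reported) in EXPERIMENTS.items():
    recomputed = mean_average_precision(ap_by_class)
    # Table values are rounded, so compare with a small tolerance.
    consistent = abs(recomputed - reported) < 1e-4
    print(f"{label}: recomputed={recomputed:.5f}, "
          f"reported={reported:.4f}, consistent={consistent}")
```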

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Baur, J.; Steinberg, G.; Nikulin, A.; Chiu, K.; de Smet, T.S. Applying Deep Learning to Automate UAV-Based Detection of Scatterable Landmines. Remote Sens. 2020, 12, 859. https://doi.org/10.3390/rs12050859
