Semiautomated Mapping of Benthic Habitats and Seagrass Species Using a Convolutional Neural Network Framework in Shallow Water Environments

Abstract

1. Introduction

2. Materials and Methods

2.1. Study Areas

2.2. Field Data Collection

2.3. Satellite Data

2.4. Methodology

2.4.1. Benthic Habitat and Seagrass Detection

- For the Shiraho area, a human annotator individually labeled 3000 underwater images into 7 benthic cover categories: T. hemprichii seagrass, soft sand, hard sediments (pebbles, cobbles, and boulders), brown algae, other algae, corals (Acropora and Porites), and blue corals (H. coerulea).

- For Fukido cove, 1500 underwater images were likewise labeled into 4 seagrass categories: E. acoroides, tall T. hemprichii, short T. hemprichii, and sparse seagrass areas.

- All of these labeled, georeferenced images were used as inputs to the pre-trained VGG16 CNN and the BOF approach to create the descriptors used in the semiautomatic recognition process.

- The attributes extracted from the fully connected layer (FC6) of the VGG16 CNN and those produced by the BOF approach were used as inputs for training the SVM classifier; the outputs were image labels.

- The SVM classifier was validated on independent, randomly sampled images: 75% were used for training and 25% for testing.

- Additional images were then categorized with the validated SVM classifier, and each result was checked individually.
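The data preparation behind the steps above can be sketched roughly as follows. This is a minimal illustration, not the authors' implementation: it assumes that the BOF and VGG16 FC6 descriptors are fused by simple concatenation (the text does not state how they are combined), it uses short random vectors as stand-ins for real descriptors, and the helper names `combine_descriptors` and `split_train_test` are hypothetical. The SVM training itself is left out.

```python
import random

def combine_descriptors(bof_vec, cnn_vec):
    """Concatenate a BOF histogram with a CNN feature vector (assumed fusion rule)."""
    return list(bof_vec) + list(cnn_vec)

def split_train_test(samples, train_fraction=0.75, seed=0):
    """Shuffle samples reproducibly, then split them into training and testing sets."""
    shuffled = list(samples)
    random.Random(seed).shuffle(shuffled)
    cut = int(round(train_fraction * len(shuffled)))
    return shuffled[:cut], shuffled[cut:]

# Toy stand-ins for the 3000 labeled Shiraho images. Real FC6 vectors from
# VGG16 have 4096 values; short random vectors keep the sketch fast.
rng = random.Random(42)
labels = ["seagrass", "soft sand", "hard sediments", "brown algae",
          "other algae", "corals", "blue corals"]
samples = []
for image_id in range(3000):
    bof = [rng.random() for _ in range(5)]   # placeholder BOF histogram
    fc6 = [rng.random() for _ in range(8)]   # placeholder FC6 activations
    samples.append((combine_descriptors(bof, fc6), rng.choice(labels)))

train, test = split_train_test(samples)
print(len(train), len(test))  # 2250 750
```

The fixed seed makes the split reproducible, so the same held-out images can be reused when comparing descriptor sets.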

2.4.2. Benthic Habitat and Seagrass Mapping

- Image patches measuring 2 pixels in both the horizontal and vertical directions were extracted around each correctly categorized image location.

- Each image patch was 2 × 2 × 3 pixels; 1500 image patches were extracted from the QuickBird imagery for benthic habitat mapping, and another 1500 from the GeoEye-1 imagery for seagrass mapping.

- These image patches were used as inputs for training and evaluating CNNs with a simple architecture for benthic habitat and seagrass mapping; they were divided into 75% training images and 25% testing images.

- Benthic habitat and seagrass mapping was performed by the trained CNNs using high-resolution satellite images.
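The patch extraction step might look like the sketch below. It rests on several assumptions that the text does not confirm: the third patch dimension is taken to be three image bands, the image location is treated as the top-left pixel of the 2 × 2 window (an even-sized window has no central pixel), and locations too close to the image edge are skipped. The function name `extract_patch` and the toy image are hypothetical.

```python
def extract_patch(image, row, col, size=2):
    """Return a size x size x bands patch whose top-left pixel is (row, col).

    Returns None when the patch would extend past the image edge.
    """
    n_rows, n_cols = len(image), len(image[0])
    if row < 0 or col < 0 or row + size > n_rows or col + size > n_cols:
        return None
    return [[list(image[r][c]) for c in range(col, col + size)]
            for r in range(row, row + size)]

# Toy 4 x 5 image with 3 bands; each pixel stores (row, col, row + col).
image = [[(r, c, r + c) for c in range(5)] for r in range(4)]

# Hypothetical (row, col) pixel positions of categorized field images.
locations = [(0, 0), (1, 3), (3, 4)]

patches = [p for p in (extract_patch(image, r, c) for r, c in locations)
           if p is not None]
shape = (len(patches[0]), len(patches[0][0]), len(patches[0][0][0]))
print(len(patches), shape)  # 2 (2, 2, 3)
```

The third location is dropped because its window would run off the bottom-right corner of the toy image.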

3. Results

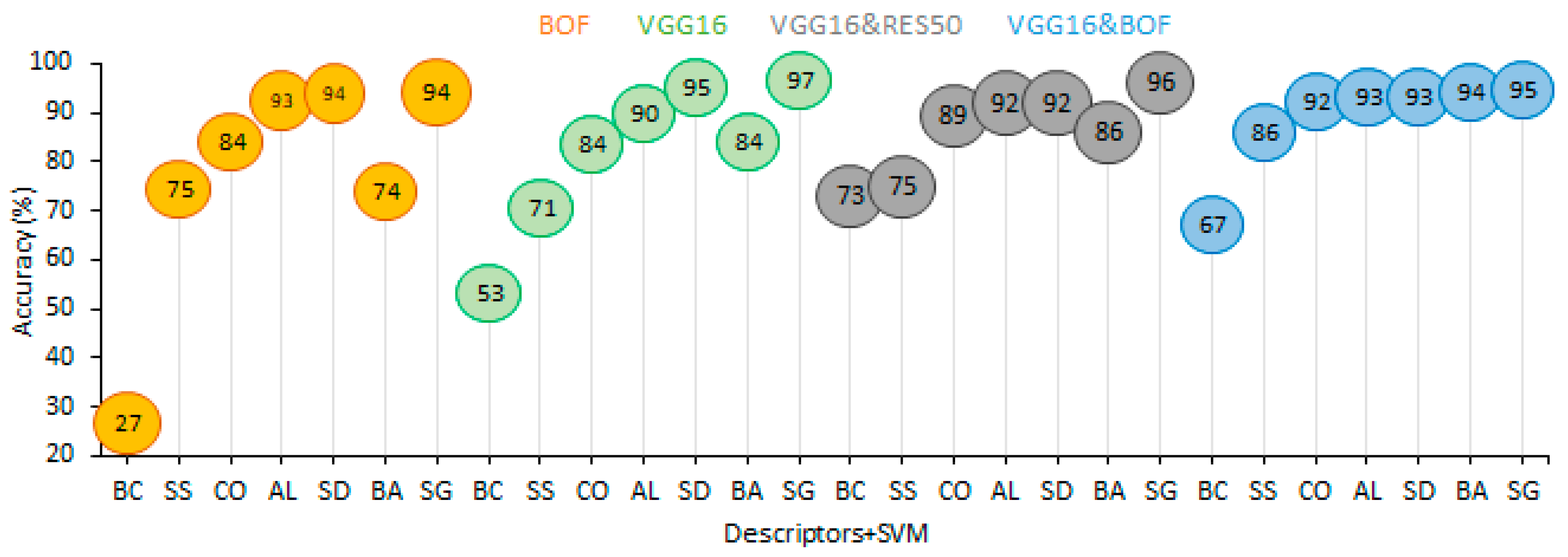

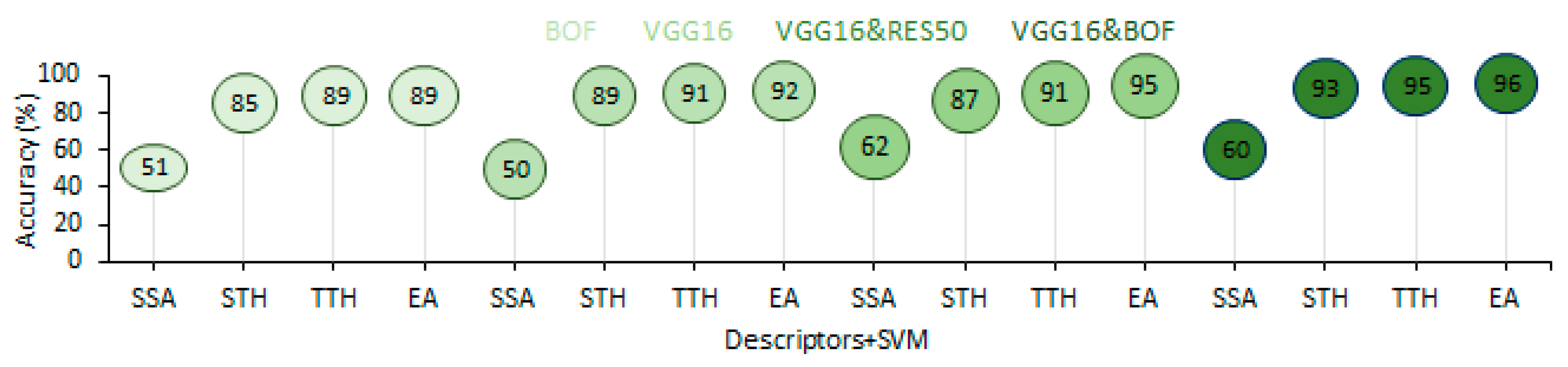

3.1. Benthic Habitat and Seagrass Detection

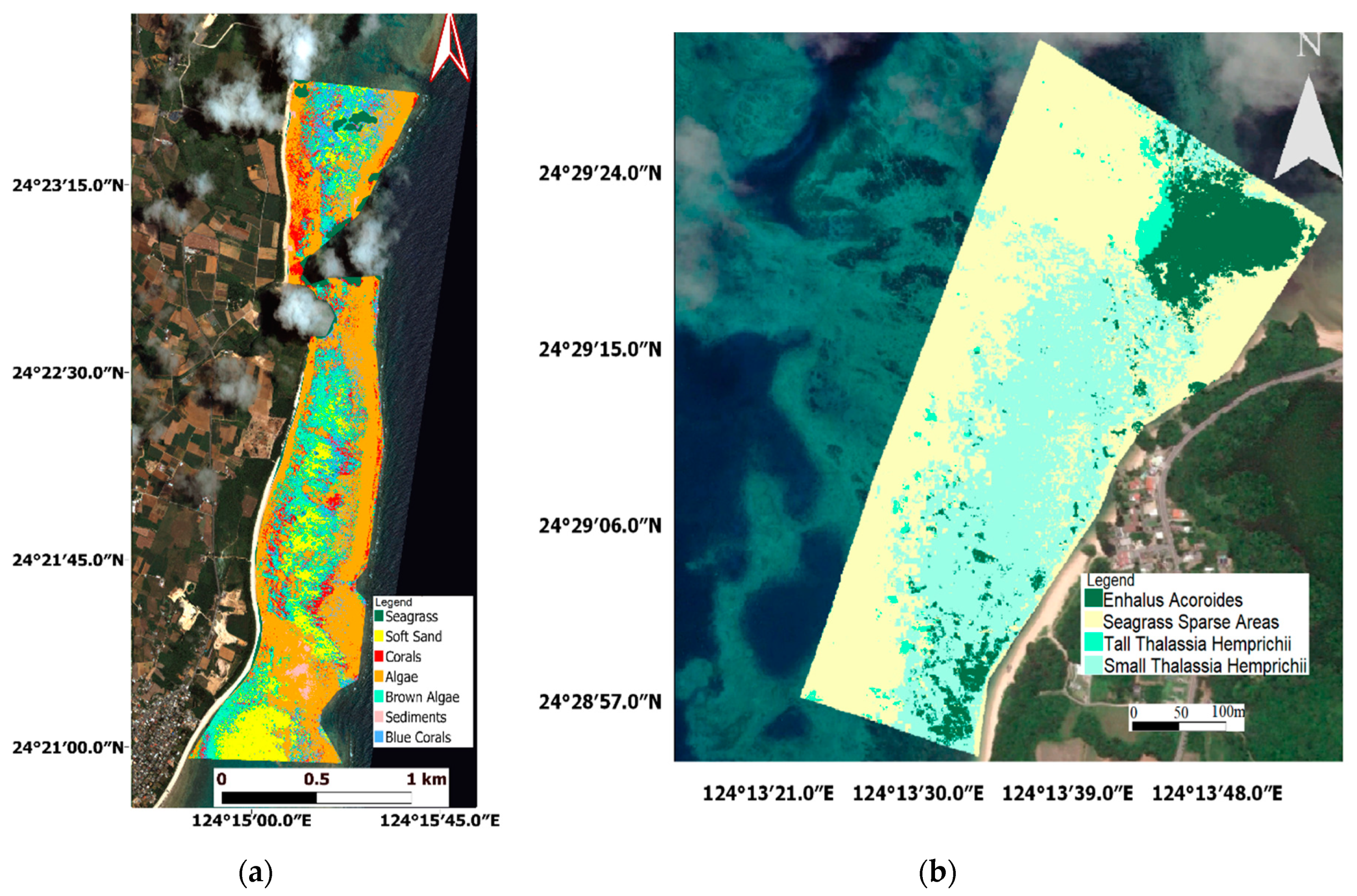

3.2. Benthic Habitat and Seagrass Mapping

4. Discussion

5. Conclusions

Author Contributions

Funding

Conflicts of Interest


| Methodology | BOF | VGG16 | VGG16&RES50 | BOF&VGG16 |
|---|---|---|---|---|
| OA (%) | 85.2 | 87.5 | 89.9 | 91.5 |
| Kappa | 0.82 | 0.85 | 0.88 | 0.90 |

| Predicted Class (rows) / Validated Class (columns) | AL | BA | CO | BC | SD | SS | SG | Row Total | UA |
|---|---|---|---|---|---|---|---|---|---|
| AL | 128 | 4 | 2 | 1 | 1 | 0 | 1 | 137 | 0.93 |
| BA | 3 | 47 | 0 | 0 | 0 | 0 | 0 | 50 | 0.94 |
| CO | 4 | 0 | 131 | 4 | 3 | 0 | 0 | 142 | 0.92 |
| BC | 4 | 2 | 6 | 31 | 2 | 0 | 1 | 46 | 0.67 |
| SD | 4 | 0 | 1 | 1 | 128 | 2 | 1 | 137 | 0.93 |
| SS | 0 | 0 | 0 | 0 | 7 | 43 | 0 | 50 | 0.86 |
| SG | 6 | 0 | 0 | 0 | 4 | 0 | 178 | 188 | 0.95 |
| Column Total | 149 | 53 | 140 | 37 | 145 | 45 | 181 | OA = 91.5% | |
| PA | 0.86 | 0.89 | 0.94 | 0.84 | 0.88 | 0.96 | 0.98 | Kappa = 0.90 | |
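The summary statistics in the confusion matrix above can be reproduced from its cell counts. The sketch below uses the standard definitions, which are assumed to be the ones the table follows: overall accuracy (OA) is the diagonal sum over the grand total, user's accuracy (UA) is each diagonal cell over its row total, producer's accuracy (PA) is each diagonal cell over its column total, and Cohen's kappa compares OA with the agreement expected by chance. The function name `accuracy_metrics` is hypothetical.

```python
def accuracy_metrics(matrix):
    """Compute OA, Cohen's kappa, UA, and PA from a square confusion matrix.

    Rows are predicted classes and columns are validated classes.
    """
    n = len(matrix)
    total = sum(sum(row) for row in matrix)
    row_totals = [sum(row) for row in matrix]
    col_totals = [sum(matrix[i][j] for i in range(n)) for j in range(n)]
    diagonal = [matrix[i][i] for i in range(n)]

    oa = sum(diagonal) / total
    # Chance agreement: sum over classes of (row total * column total) / N^2
    expected = sum(r * c for r, c in zip(row_totals, col_totals)) / total ** 2
    kappa = (oa - expected) / (1 - expected)
    ua = [d / r for d, r in zip(diagonal, row_totals)]
    pa = [d / c for d, c in zip(diagonal, col_totals)]
    return oa, kappa, ua, pa

# Cell counts copied from the table above (class order: AL, BA, CO, BC, SD, SS, SG).
matrix = [
    [128, 4, 2, 1, 1, 0, 1],
    [3, 47, 0, 0, 0, 0, 0],
    [4, 0, 131, 4, 3, 0, 0],
    [4, 2, 6, 31, 2, 0, 1],
    [4, 0, 1, 1, 128, 2, 1],
    [0, 0, 0, 0, 7, 43, 0],
    [6, 0, 0, 0, 4, 0, 178],
]

oa, kappa, ua, pa = accuracy_metrics(matrix)
print(round(oa * 100, 1), round(kappa, 2))  # 91.5 0.9
```

Rounded to two decimals, the UA and PA values returned here match the table's last column and last row.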

| Methodology | BOF | VGG16 | VGG16&RES50 | BOF&VGG16 |
|---|---|---|---|---|
| OA (%) | 84.0 | 86.0 | 87.7 | 90.4 |
| Kappa | 0.77 | 0.80 | 0.83 | 0.86 |

| Predicted Class (rows) / Validated Class (columns) | EA | TTH | STH | SSA | Row Total | UA |
|---|---|---|---|---|---|---|
| EA | 85 | 0 | 2 | 2 | 89 | 0.96 |
| TTH | 0 | 131 | 3 | 4 | 138 | 0.95 |
| STH | 4 | 4 | 98 | 0 | 106 | 0.93 |
| SSA | 0 | 1 | 16 | 25 | 42 | 0.60 |
| Column Total | 89 | 136 | 119 | 31 | OA = 90.4% | |
| PA | 0.96 | 0.87 | 0.82 | 0.81 | Kappa = 0.86 | |

| Predicted Class (rows) / Validated Class (columns) | SG | SS | AL | CO | BC | BA | SD | Row Total | UA |
|---|---|---|---|---|---|---|---|---|---|
| SG | 37 | 0 | 2 | 0 | 13 | 0 | 1 | 53 | 0.70 |
| SS | 0 | 52 | 2 | 0 | 0 | 0 | 0 | 54 | 0.96 |
| AL | 0 | 5 | 49 | 0 | 0 | 0 | 0 | 54 | 0.91 |
| CO | 0 | 0 | 0 | 48 | 3 | 2 | 0 | 53 | 0.91 |
| BC | 3 | 0 | 0 | 2 | 49 | 0 | 0 | 54 | 0.91 |
| BA | 0 | 0 | 0 | 1 | 1 | 50 | 1 | 53 | 0.94 |
| SD | 0 | 0 | 0 | 0 | 2 | 0 | 52 | 54 | 0.96 |
| Column Total | 40 | 57 | 53 | 51 | 68 | 52 | 54 | OA = 89.9% | |
| PA | 0.93 | 0.91 | 0.92 | 0.94 | 0.72 | 0.96 | 0.96 | Kappa = 0.88 | |

| Predicted Class (rows) / Validated Class (columns) | TTH | SSA | EA | STH | Row Total | UA |
|---|---|---|---|---|---|---|
| TTH | 84 | 3 | 0 | 7 | 94 | 0.89 |
| SSA | 0 | 80 | 10 | 3 | 93 | 0.86 |
| EA | 0 | 2 | 88 | 4 | 94 | 0.94 |
| STH | 4 | 0 | 0 | 90 | 94 | 0.96 |
| Column Total | 88 | 85 | 91 | 111 | OA = 91.2% | |
| PA | 0.95 | 0.94 | 0.97 | 0.81 | Kappa = 0.88 | |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Mohamed, H.; Nadaoka, K.; Nakamura, T. Semiautomated Mapping of Benthic Habitats and Seagrass Species Using a Convolutional Neural Network Framework in Shallow Water Environments. Remote Sens. 2020, 12, 4002. https://doi.org/10.3390/rs12234002
