1. Introduction

The incorporation of Building Information Modelling (BIM) models into the standard design and construction workflow has become widespread in the AEC industry. This offers a broad range of opportunities. One of them is monitoring the achieved building progress over a specific time span. Although the principles of progress monitoring already exist for a long time [

1], the current shift to digital models opens up new possibilities. Furthermore, instead of relying on current error-prone estimations for progress determination either based on visual inspections, material usage or sparse minimalistic measurements, it has become possible to determine the current building state and by extent the progress in construction performances to much more accurate levels by relying on remote sensing methods such as laser scanning and photogrammetry [

2,

3]. These techniques offer the advantage that large parts of the construction site can be reconstructed in a relatively short time span. The obtainable accuracies of those techniques have been rising since their inception [

4] up to acceptable levels for progress and construction monitoring purposes. As a result, progress has become more accurately quantifiable. Moreover, also the three-dimensional reconstruction of the current state can be compared with the designed and desired state of the building at that moment, leading to the detection of planning irregularities. Notwithstanding the broad plethora of opportunities such approaches offer, a contradicting paradigm is still present in the rather conservative construction industry: although digital BIM models are available and various options exist to create accurate reconstructions of the built elements, construction site and progress monitoring currently still mainly rely on visual inspections and assumptions. To overcome this challenge, it is paramount that a streamlined photogrammetric workflow is available that converts the recordings to accurate full-scale models. To reach this goal, we propose a new approach relying on a reference module that is used repeatedly as the backbone for the registration of subsequent datasets (

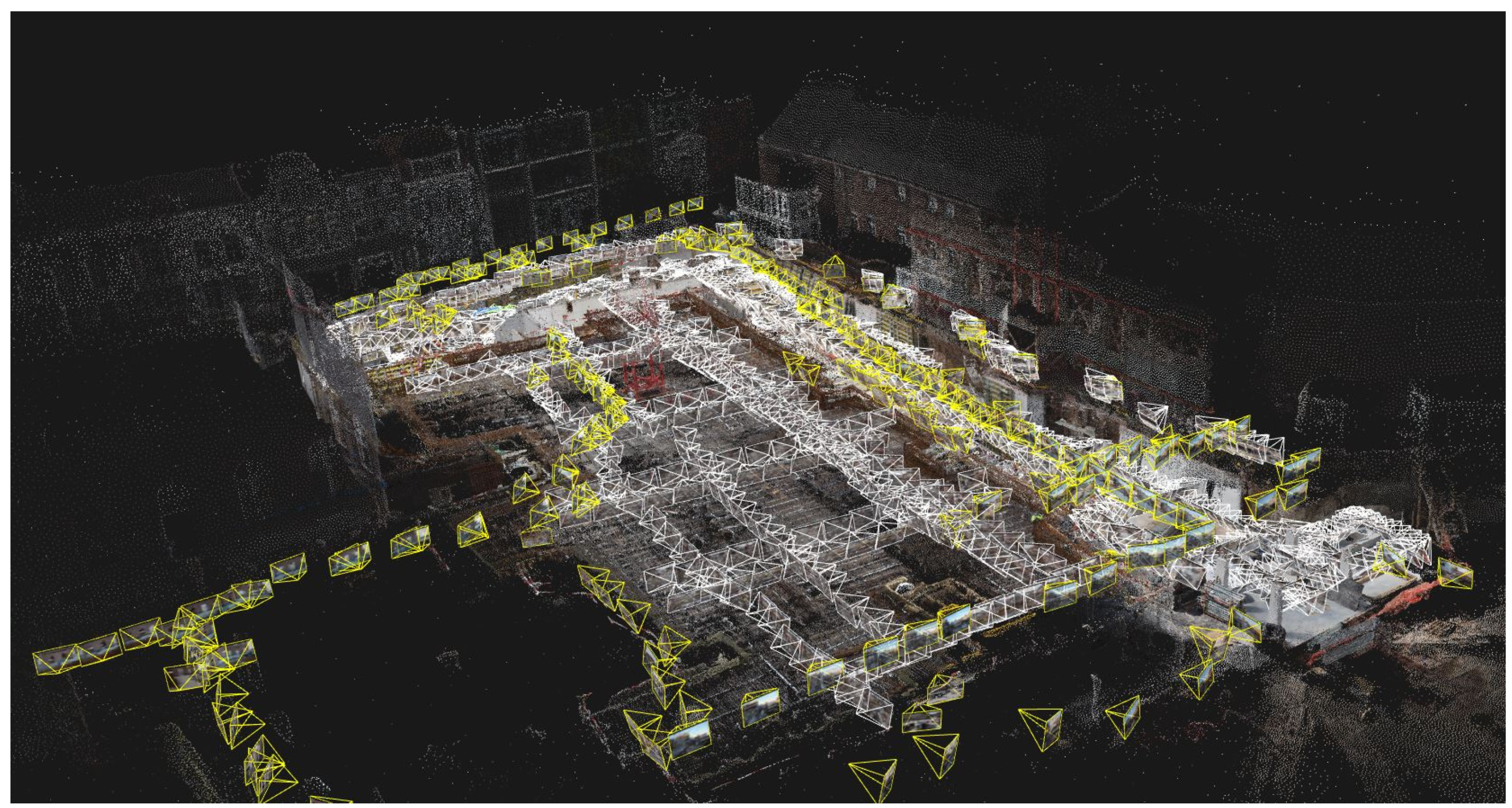

Figure 1). This way, after creating the reference module, the periodic establishment of photogrammetric reconstructions of the site is simplified and streamlined.

This workflow would especially benefit the construction industry, which is currently plagued by high failure costs, as described in numerous works over time [

5,

6,

7,

8]. Apart from planning flaws and design updates, errors originating from the building process itself also form a substantial part of these costs. Therefore, it is vital to mitigate their occurrence by better monitoring the construction process. This can be done by exploiting all available info that the different datasets (i.e., as-design BIM model and recorded datasets) contain. It is essential that they all share the same coordinate system, hence avoiding the appearance of non-existent errors due to misregistrations between datasets (

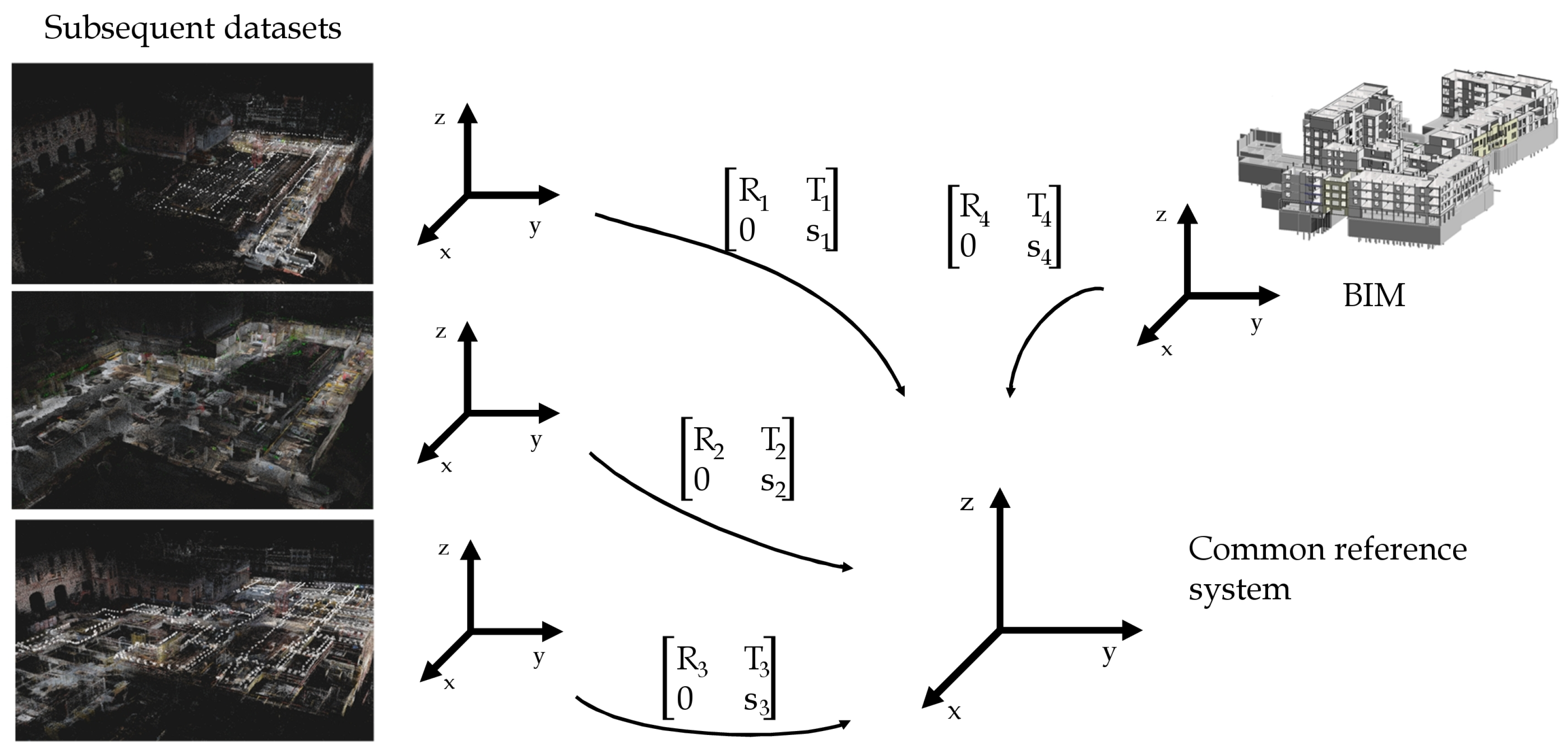

Figure 2). Severe drawbacks are still present in current procedures when it comes to geo-registration as it mainly relies on manual procedures such as indicating the set of GCPs in various images. Therefore the proposed reference module approach eliminates such tedious and error-prone manual procedure in the creation of subsequent datasets.

A third aspect motivating an updated framework is the rapid occlusion of GCPs. Typically, these points serve to establish the correct reference system. As construction works proceed, however, GCPs frequently become occluded from a site perspective. In such cases, when following current procedures, one can either abandon the occluded point if sufficient others remain visible, or alter the recording strategy such that the point can be correctly reconstructed and thus fulfil its ground control role. This practice quickly requires extra time (both for recording and processing) and does not contribute to a high level of accuracy. In contrast, our framework approaches this challenge from a different perspective by using pre-registered reference images as ground control rather than individual points.

The remainder of this work is structured as follows. In Section 2, related work on registering single and multiple datasets in the construction industry is discussed. Subsequently, the methodology of the proposed geo-registration framework is presented in Section 3. In Section 4, the experiments performed on a real-world construction site are presented. The conducted experiments and the proposed approach are discussed in Section 5. Finally, conclusions are drawn in Section 6.

2. Related Work

From a construction monitoring perspective it is vital to produce consecutive datasets of the construction site. Especially for progress monitoring purposes, it is crucial that these different datasets share a common reference system [9]. This ensures that comparative progress analyses can be executed swiftly. Currently, two main trends can be distinguished for achieving a uniform coordinate system among all datasets. These are also shown in Table 1, which lists the major works on geo-referencing consecutive datasets alongside a summary of the main drawbacks of each approach.

A first method is to directly geo-reference each recorded dataset. By relying on external measurements, the location of the control points is determined in the desired coordinate system. These then serve as input for processing the recorded data into a geo-referenced dataset. Bosché et al. [10] present their work on automating the retrieval of objects represented by 3D CAD models in laser scanning data. They rely on geo-registration to estimate the a priori positions of the scans. As a result, the approximate location of the search object is known, which simplifies the retrieval process. Similarly, Zhang et al. [11] and Tuttas et al. [12] use control points with coordinates known in a predefined reference system to process the laser scans and recorded imagery, respectively, for progress determination. In the work of Golparvar-Fard et al. [24], the aim of a common reference framework is approached slightly differently. Instead of relying on Structure-from-Motion (SfM) to reconstruct the site and derive the achieved progress, they use a set of fixed cameras to assess the current building state. By determining their pose once, according to the coordinate system of the 4D model, and considering it as known in subsequent analyses, it is ensured that the consecutive image datasets use a uniform coordinate system.

A second and more frequent practice for ensuring a common reference system among all datasets relies on co-registration. Instead of geo-referencing every dataset, only the first one is registered according to the desired coordinate system. All subsequently processed datasets are then co-registered to the first dataset based on correspondences. These provide the necessary information to determine the transformation parameters (i.e., translation, rotation and, in the case of photogrammetry, scale) that align the new dataset to the reference one. Although co-registration can be executed completely manually, such as in [25], most works rely on a more automated workflow. Typically, the procedure consists of two major stages, namely a coarse registration followed by a fine registration. Over the past decades this framework has undergone numerous optimisations and has been automated in several respects. Mostly, the fine registration phase relies on an Iterative Closest Point (ICP) algorithm, which was first proposed almost simultaneously by Besl and McKay [26] and Chen and Medioni [27]. In the following years numerous works focussed on optimising the fine registration, such as presented in [10,13,14,15,16,18].
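As a rough illustration of how translation, rotation and scale can be derived from point correspondences, the following sketch estimates a similarity transform in closed form (the least-squares solution commonly attributed to Umeyama). It is not taken from any of the cited works; the function name `estimate_similarity` and the one-point-per-row array layout are assumptions made for this example.

```python
import numpy as np

def estimate_similarity(src, dst):
    """Least-squares scale s, rotation R and translation t so that
    dst ~ s * R @ src + t (closed-form similarity estimation).
    src, dst: (N, 3) arrays of corresponding points (assumed layout)."""
    src = np.asarray(src, dtype=float)
    dst = np.asarray(dst, dtype=float)
    mu_src, mu_dst = src.mean(axis=0), dst.mean(axis=0)
    src_c, dst_c = src - mu_src, dst - mu_dst

    # Cross-covariance between the centred point sets.
    cov = dst_c.T @ src_c / len(src)
    U, S, Vt = np.linalg.svd(cov)

    # Flip the last axis if needed so that R is a proper rotation.
    D = np.eye(3)
    if np.linalg.det(U) * np.linalg.det(Vt) < 0:
        D[2, 2] = -1.0

    R = U @ D @ Vt
    var_src = (src_c ** 2).sum() / len(src)
    s = np.trace(np.diag(S) @ D) / var_src
    t = mu_dst - s * R @ mu_src
    return s, R, t
```

With at least three non-collinear correspondences, `s, R, t = estimate_similarity(new_points, reference_points)` gives the parameters that map the new dataset onto the reference one; for laser scans, where scale is already metric, `s` would be expected to be close to 1.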

Until recently, the coarse alignment was mainly executed manually by indicating corresponding points in both datasets [17]. A main drawback, however, is that the ICP algorithm or its derivatives can only handle the data correctly if the distance between the corresponding points falls within its working range [18]. This means that correct results are only obtained if the coarse registration was executed well enough. As a result, much research has been conducted more recently to automate the coarse registration and make it more robust and accurate. In the works of Rusu et al., point feature histograms are used for an initial alignment of the point clouds [20,21]. Bueno et al. [22] and Kim et al. [2,19,23] also present work on creating a well-performing coarse registration algorithm. Moreover, in [19], Kim et al. present a fully automated workflow that further optimises the coarse registration result using an ICP algorithm in combination with the Levenberg–Marquardt algorithm originally presented by Fitzgibbon et al. [28].
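To make the dependence of the fine registration on a good coarse alignment more tangible, the sketch below shows a minimal point-to-point ICP loop. It is a simplified illustration, not the algorithm of any cited work: nearest neighbours are found by brute force, no outlier rejection is applied, and the function names `best_rigid_transform` and `icp` are hypothetical.

```python
import numpy as np

def best_rigid_transform(src, dst):
    """Rotation R and translation t minimising ||R @ src_i + t - dst_i||
    for paired points (SVD-based Kabsch solution)."""
    mu_src, mu_dst = src.mean(axis=0), dst.mean(axis=0)
    H = (src - mu_src).T @ (dst - mu_dst)
    U, _, Vt = np.linalg.svd(H)
    # Correct a possible reflection so that det(R) = +1.
    D = np.diag([1.0, 1.0, np.sign(np.linalg.det(Vt.T @ U.T))])
    R = Vt.T @ D @ U.T
    t = mu_dst - R @ mu_src
    return R, t

def icp(source, target, iterations=20, tol=1e-10):
    """Align source to target; returns accumulated R, t and the final RMS distance."""
    src = np.asarray(source, dtype=float).copy()
    target = np.asarray(target, dtype=float)
    R_total, t_total = np.eye(3), np.zeros(3)
    prev_err = np.inf
    for _ in range(iterations):
        # Pair every source point with its closest target point (brute force).
        d2 = ((src[:, None, :] - target[None, :, :]) ** 2).sum(axis=2)
        nearest = d2.argmin(axis=1)
        R, t = best_rigid_transform(src, target[nearest])
        src = src @ R.T + t
        # Accumulate the incremental transform.
        R_total, t_total = R @ R_total, R @ t_total + t
        err = np.sqrt(((src - target[nearest]) ** 2).sum(axis=1).mean())
        if abs(prev_err - err) < tol:
            break
        prev_err = err
    return R_total, t_total, err
```

Because the pairing step simply picks the closest point, a poor initial alignment produces wrong pairs and the loop can settle in a wrong solution, which is the working-range limitation described above.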

In our work, a different approach is taken that incorporates aspects of both aforementioned trends for geo-registration. A first dataset, serving as reference, is geo-registered by indicating GCPs. Instead of geo-registering or co-registering the subsequent datasets, the pre-registered reference images are actively used in the alignment of the subsequent imagery. These reference pictures hence not only serve as geo-locational anchors, they also fulfil a function similar to the co-registration approach insofar as the new imagery is registered to the reference pictures based on correspondences. The proposed approach differs from co-registration approaches such as in the works of Golparvar-Fard [3,29], in which the subsequent dataset is first processed on its own and only then co-registered with the reference dataset. This implies that the processed subsequent dataset is treated as a rigid and indivisible object to transform. Any drift and alignment issues that occurred are hence preserved in the final transformed dataset. In contrast, our work makes active use of the excellent quality of the reference dataset by letting it serve as a crucial backbone for the registration of the new imagery.

To the best of the authors' knowledge, the only work presenting an approach similar to ours is the paper of Aicardi et al. [30]. However, their work focusses on aerial nadir images, obtained via an Unmanned Aerial Vehicle (UAV), which typically are oriented very similarly. Furthermore, the flights were conducted at relatively large heights, providing an excellent overview of the site. In contrast, in our experiments, terrestrial images are used that typically face all possible directions. These pose a much harder challenge for successful registration as only parts of the reference surroundings are visible. Furthermore, as construction works evolve, it is often the case that several enclosed parts of the site can only be linked via the reference framework. In our experiments the reference module is actively used such that the new imagery can be aligned, whereas in [30] it only serves as a geo-registration instrument.

In all previously discussed works, control points are required somewhere in the process. Therefore, several efforts have also been undertaken to automate the manual GCP indication process. Typically this is done via coded targets, such as described in [31,32,33]. Based on predefined patterns it is possible to detect which specific target is displayed. However, the largest drawback of this approach is the necessity to materialise the GCPs. As the GCPs are only used once in our approach, it can be questioned whether it is worth the extra effort to materialise the points such that they can be retrieved automatically. Although we did not use this workflow in our experiments, it remains perfectly possible to do so.

3. Methodology

For construction site and especially progress monitoring purposes in the AEC industry, the establishment of a common reference frame for different datasets is paramount. In this light, our work proposes a method to assess the possibilities of employing a reference module, functioning as a geo-registering tool, in the creation of multiple subsequent reconstructions of the site (

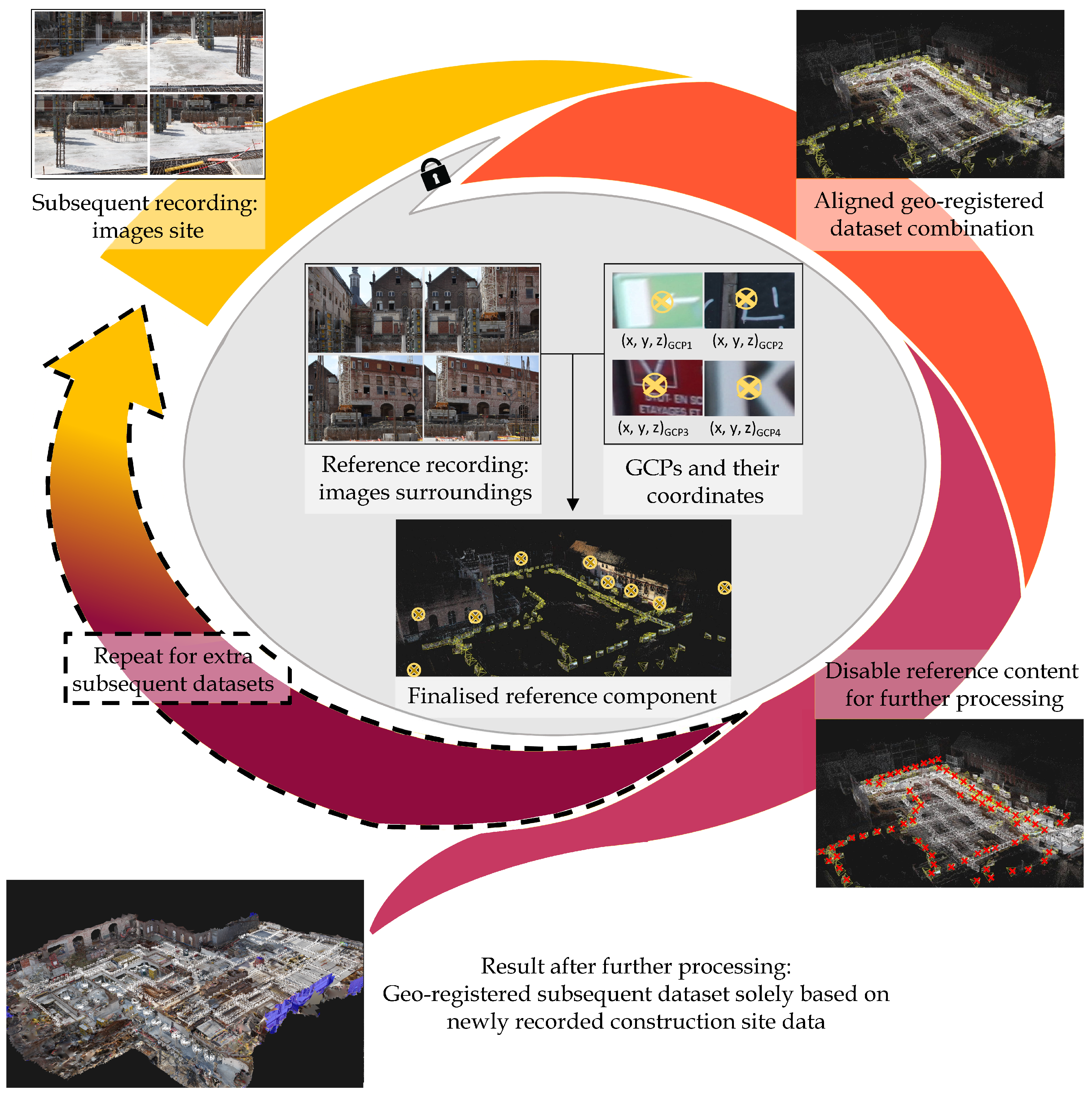

Figure 3). In the following subsections the stages of the procedure are described in depth.

3.1. Reference Module

The first stage consists of establishing the reference module that is used repeatedly in the creation of subsequent datasets (Figure 3, inner part). The main idea is to establish a referencing tool which ensures that new, subsequent datasets are correctly placed in the adopted common reference system. After creating this reference module, which already incorporates the chosen coordinate system, it is possible to tie the subsequent datasets to it, forcing them to inherit that coordinate system. Because of its central and crucial role, the reference module requires extra time and effort during its establishment. Its quality is ensured by creating the module with more data than strictly necessary. Two important data sources must be part of the reference module: on the one hand, aligned images of the construction site’s surroundings, and on the other hand, GCPs that provide the necessary coordinate system information for the reference project.

In contrast to subsequent datasets, the images in the reference module do not focus on the construction site, but rather portray the surroundings of the site. The underlying reason is that it is assumed that the surrounding environment does not change over the relatively short time span of the construction works. Even in the case of alterations, it is highly unlikely that all surrounding structures are renewed simultaneously. Therefore these surroundings fit perfectly as a safeguard for reference system control. The first stage in the establishment of the reference module consists of recording imagery of the scenery surrounding the construction site. It is required that the dataset is both accurate as well as detailed and therefore it is advisable that two capturing series are executed: the first one focusses on the detail and the GCPs, while the second series focusses on portraying larger parts of the environment, hence safeguarding the overall accuracy. Even though some areas of the surroundings, such as areas with no clear distinctive points, might not add much value with respect to their future reference purpose, it still is important to record these. The reason is that we want the reference project to form a closed loop, hence safeguarding the desired high quality by mitigating possible occurring drift. Furthermore, to enhance the quality even further, a multiple of the strictly necessary number of images is taken. By capturing the site’s environment profoundly, extra constraints are added such that the network of images is strengthened, both internally in the reference network and later on when registering subsequent recordings. This also ensures that the GCPs are depicted in a multitude of images, again contributing to the reference module quality.

To establish the correct coordinate system of the reference module project, the common procedure of GCP indication is used. An abundant set of distinctive points is determined for ground control. Similar to the abundant imagery, the redundant GCPs result in a strengthened control network. Furthermore, the additional time investment needed to add points beyond the strictly necessary minimum is relatively low compared to the increase in overall accuracy. As is commonly the case, the control points are precisely measured by total station to ensure high quality. Depending on the type of project, a specific transformation is then applied to this point set to transform the coordinates into the desired reference system. When the data are processed, the points then ensure that the project inherits this coordinate system.

The two aforementioned sets of data, i.e., the imagery and GCPs, are photogrammetrically processed. However, as subsequent projects only require the reference images and their poses, a fully reconstructed 3D model is not needed. The processing can be limited to the first photogrammetric step, namely the alignment of the images taking into account the GCPs. To maintain the high quality, these points are indicated in a redundant number of the images that portray them. The resulting finalised reference module consists of an accurately created and highly constrained network of environment images, hence providing the necessary information for processing subsequent datasets into the correct reference system.

3.2. Subsequent Datasets

In the second phase of the framework, one or multiple subsequent datasets are processed with the aid of the reference module (Figure 3, outer part). As previously stated, the new datasets only contain images focussing on the actual construction site. Nevertheless, the site’s environment is depicted in considerable parts of the new images, which ensures the vital connection between the reference module and the imagery of the subsequent dataset.

In the next step, namely the alignment of the new pictures, the reference component plays its major role. From now on, the module is considered an accurate representation of the site’s surroundings. Therefore, to preserve quality, this component is locked in all its facets: all camera parameters, locations and orientations are considered as known and are thus set to non-adjustable. Subsequently, the new images are added to the reference imagery, after which the alignment is initiated. This results in an aligned and geo-registered dataset combination. This procedure ensures that the resulting combined dataset, and thus also the new images, inherit the desired coordinate system present in the reference module. Furthermore, because the reference images are locked in this processing stage, they serve as anchors to which the new images are tied. In essence this boils down to adding several million potential control points to the project, since every distinctive feature in the reference images that has a corresponding point in the new imagery can exert a control function. This significantly increases the number of control points compared to the sparse set of such points in individual GCP indication approaches. The reference module hence forms an important body of control which adds strong, accurate constraints to the alignment of the new images and consequently preserves the metric quality in the new dataset.
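The anchoring effect of locked reference data can be illustrated with a deliberately simplified stand-in: if 3D tie points from the locked reference module are treated as known, a new image can be oriented directly in the reference coordinate system from its 2D observations of those points. The sketch below does this with a plain Direct Linear Transform (DLT). This is an assumption-laden illustration and not the procedure of any photogrammetric package, which would instead run a bundle adjustment with the reference cameras held fixed; the names `resect_camera_dlt` and `project` are hypothetical, and coordinate normalisation is omitted for brevity.

```python
import numpy as np

def resect_camera_dlt(points_3d, points_2d):
    """Estimate a 3x4 projection matrix P (up to scale) from at least six
    non-coplanar 3D points with known coordinates and their image observations."""
    rows = []
    for (X, Y, Z), (u, v) in zip(points_3d, points_2d):
        Xh = [X, Y, Z, 1.0]
        # Each correspondence contributes two linear equations in the entries of P.
        rows.append([*Xh, 0.0, 0.0, 0.0, 0.0, *(-u * c for c in Xh)])
        rows.append([0.0, 0.0, 0.0, 0.0, *Xh, *(-v * c for c in Xh)])
    A = np.asarray(rows, dtype=float)
    # The solution is the right singular vector of the smallest singular value.
    _, _, Vt = np.linalg.svd(A)
    return Vt[-1].reshape(3, 4)

def project(P, points_3d):
    """Project (N, 3) points with projection matrix P to (N, 2) pixel coordinates."""
    pts = np.asarray(points_3d, dtype=float)
    Xh = np.hstack([pts, np.ones((len(pts), 1))])
    proj = Xh @ P.T
    return proj[:, :2] / proj[:, 2:3]
```

Since the 3D points are expressed in the reference coordinate system, the recovered camera is expressed in that system as well, which mirrors how the new images inherit the coordinate system of the locked module.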

For the other processing stages, the reference imagery no longer contributes to the project quality and processing as it only contains info on the environment of the site. Therefore, these images are disabled in the dense matching and all subsequent steps in the photogrammetric process.

In conclusion, the proposed workflow delivers subsequent datasets that are solely based on newly recorded imagery of the constructions on the site. However, the result inherits the desired coordinate system and also the qualitative metrical aspects of the reference module. Apart from the creation of the reference module, this means that similar results, namely geo-registered datasets of subsequent recordings, can be obtained without the need for tedious and error-prone GCP indication. Moreover, the reference module is not restricted to single use as it can be employed repeatedly for extra subsequent datasets.

4. Experiments

To assess the validity of the proposed framework, the method was tested thoroughly. The experiments took place at a construction site in Ghent, Belgium, where three large apartment buildings and an underground parking lot were under construction. The conducted experiments are discussed below, following the stages of the methodology.

4.1. Reference Module

The reference images were recorded at the start-up of the construction site, which measures approximately 80 by 80 m. This ensured that the surroundings of the site were clearly photographed without any obstructing building materials or equipment, and allowed the environment to be recorded in high detail in 296 images, taken with a Canon EOS 5D Mark II. The capturing workflow was executed in two recording series. In the first series, we focussed on the overall overview. The surrounding scenery was photographed from a relatively large distance of approximately 30 m to ensure a good and accurate superstructure project. Subsequently, the focus shifted to capturing the details lacking in the former recording series by photographing the environment from a closer distance. This ensured that, in the following step, the GCPs could be indicated in high detail and hence with better accuracy. Moreover, the facades of the neighbouring buildings were photographed from different angles such that each of the GCPs could be indicated in multiple images, again increasing the reference module’s accuracy. The mix of close-range images and images taken from a greater distance forms the ideal input for the reference project to be both accurate and detailed.

After recording the reference imagery, appropriate GCPs such as those in Figure 4 were selected. Typically, the points were distinctive features to facilitate their indication in the pictures. Moreover, since materialising the points is not required, care was taken that the final targets were optimally spread over the scene. This resulted in a total of 23 points, varying in height and evenly distributed over the surrounding structures and facades, to constitute the control network. The point coordinates were determined to an accuracy of approximately 2 mm by reflectorless Leica Viva TS15 total station measurements. As the site was still in its start-up phase, it was possible to measure all the points from a single total station setup. In other, more complex or further advanced projects, however, it might be required to post-process the measurements from multiple setups. This would slightly worsen the reference module quality. However, current methods also suffer from a comparably worse GCP location quality when measurements are conducted from multiple total station locations. In this work, the end product is always presented as a geo-registered final project; in our experiments, however, we limited ourselves to the coordinate system of the BIM model as a common reference frame. In contrast to our case, and depending on the goals of the consecutive reconstructions of the site, geo-referenced data might be required. In such a case, the procedure remains exactly the same: a transformation is calculated such that the GCP locations are represented in the preferred coordinate system. In our case this was the local BIM model coordinate system, while in other cases it might be a national or international reference system.

The combination of the two former datasets, i.e., the imagery of the surroundings and the GCP set, was processed in the next stage. In a photogrammetric software package, RealityCapture in our case, the selected control points were indicated in the available images. The major future role of the reference module necessitated that this process was executed as meticulously as possible. Therefore, we indicated the control points in all images depicting them. Consequently, the 23 GCPs were indicated 340 times in total. Again, bearing in mind the importance of this module for the processing of subsequent datasets, the processing had to be executed with optimal settings. Therefore, the parameters in the software package were adjusted to the highest possible quality. The image downscaling parameter, for instance, was set to 1 such that all available information could contribute to the overall quality of the reference module.

As previously stated in the methodology, it sufficed to limit the processing to the alignment of the imagery. For future use in the creation of subsequent datasets, the reference module was exported as a RealityCapture alignment component, containing reference images and their poses in the chosen coordinate system, GCPs and the calculated sparse point cloud. Depending on the used software, this approach might slightly differ.

4.2. Subsequent Datasets

Similar to the creation of the reference dataset, the first stage of the subsequent dataset creation consists of recording images. In contrast to the capturing workflow for the former dataset, however, the focus now lies entirely on the elements under construction on the site. For our experiments we recorded the construction site five weeks after recording the reference dataset and then once more two weeks later. This allowed us to analyse and compare the different datasets thoroughly. The results are presented in Section 5. The two subsequent datasets consisted of 919 and 715 images, respectively. The shift in focus to accurately capturing the construction elements thus results in many more images compared to the recordings of the surroundings. Traditionally, the next step would be to open a new project and start indicating the GCPs in these images. In contrast, in our case, it suffices to import the reference module. As mentioned, depending on the software package used, the exact workflow of this step might differ slightly. The underlying idea is that, for subsequent datasets, one can create a project with the new imagery as well as the pre-processed reference module in its entirety, with all the calculated links and constraints preserved. In our case, the new site images were imported into a new project, after which the reference module, in the form of the alignment component, was also imported.

Subsequently, the alignment was initialised. However, as the reference module was already accurately processed, all parameters of the reference images, such as location, orientation and internal camera parameters, were considered as known and hence were locked. This ensures that the reference component is treated as a rigid body to which the new images are tied. In addition to preserving the metric quality, this also transferred the coordinate system to the site image dataset.

For the further processing stages of the subsequent datasets, up to the final textured mesh product, the reference content does not contribute substantially, which allows the data to be disabled during the rest of the process. The finalised subsequent projects hence only contain data based on the new imagery of the site. Nevertheless, the dataset is placed in the correct reference system and its metric quality is ensured through the use of the reference module.

5. Discussion

The main objective of our proposed framework is to limit the tedious and error-prone indication of GCPs to a bare minimum. As described in the experiments, our proposed method delivers the required results. Yet, to truly evaluate the proposed workflow and especially the achieved level of quality, an in-depth assessment of the obtained results is paramount. Therefore, the two dataset types and the different processing methods are analysed in Section 5.1 and Section 5.2. General remarks on the proposed framework and its advantages over other methods are then discussed in Section 5.3.

5.1. Quality Assessment—Reference Module

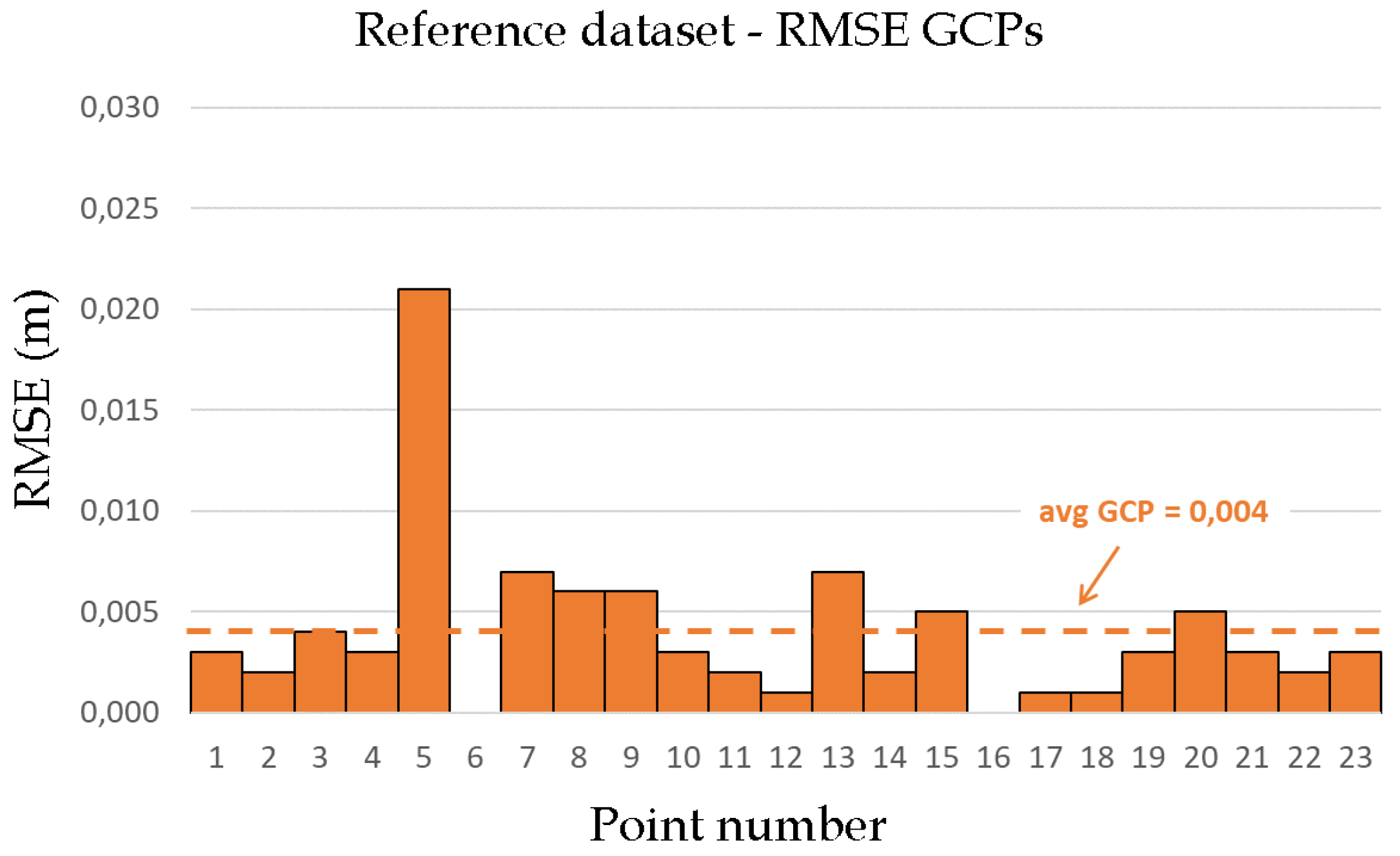

The metrical quality of the reference module forms the absolute centrepiece of our framework. Therefore it is paramount to assess the obtainable accuracy that is later transferred to the multiple subsequent datasets. In a first stage the reference dataset was processed with all 23 points as ground control entities.

Figure 5 shows the root mean square errors (RMSEs) between the actual locations, determined via transforming the total station measured point set, and the locations of the points after alignment. On average a satisfactory RMSE of 4 mm (with a standard deviation of 4 mm) was obtained. Furthermore, it should be taken into account that the GCP locations are only known with a 2 mm accuracy. There exists one main outlier (GCP 5, which is also shown in

Figure 4 (left)) with an RMSE of 21 mm. However, the far distance of the point from the site and hence sharp indication angles in the different pictures, explain this large RMSE. The high quality is also shown in

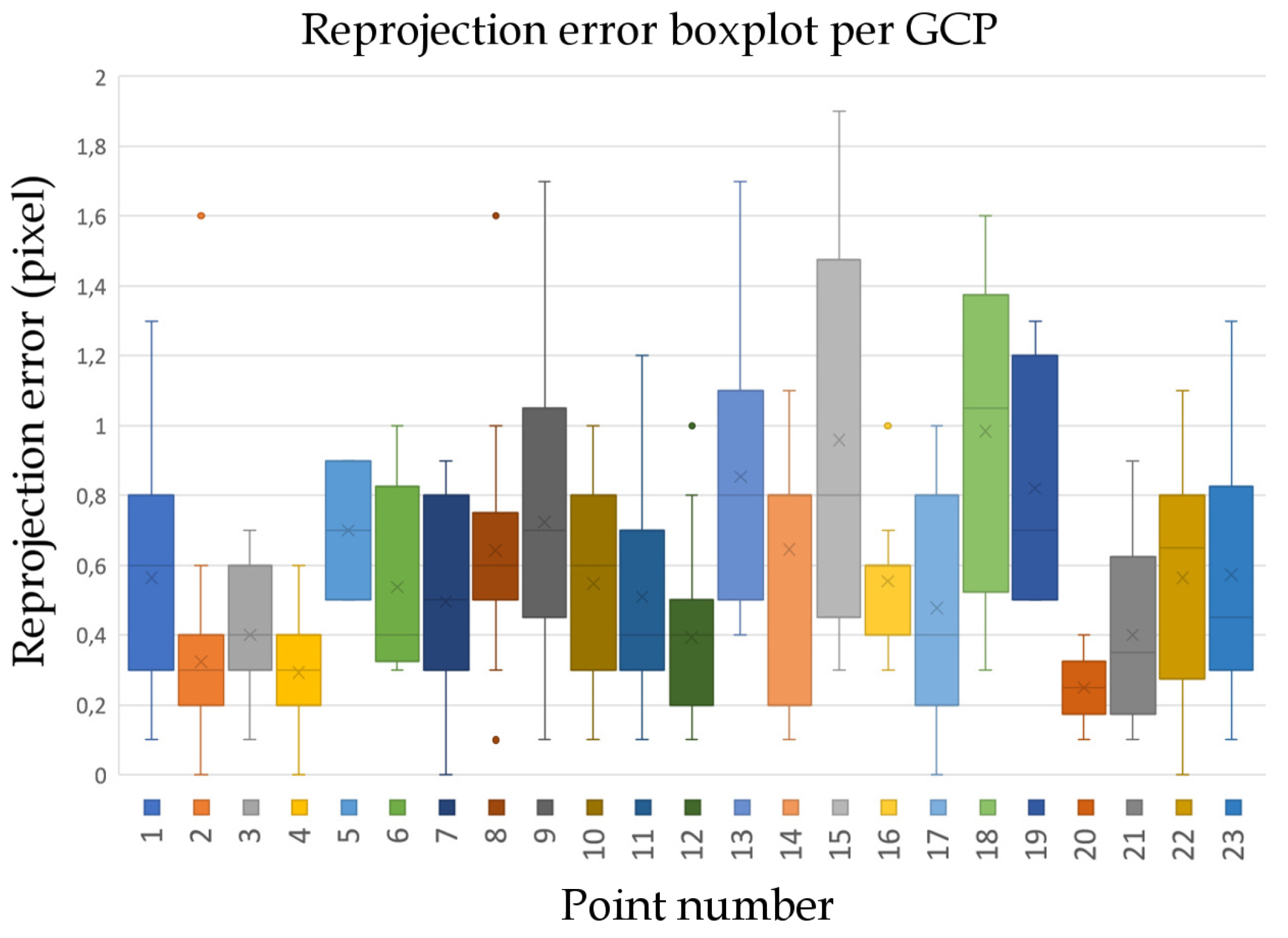

Figure 6, where the reprojection error is given for each of the GCPs. We obtained a median of 0.5 pixels and a standard deviation of 0.36 pixel as reprojection error.
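The kind of summary reported above can be sketched as follows. This is only an illustration under stated assumptions: the per-point error is taken as the 3D Euclidean distance between the surveyed and the reconstructed location, the spread is computed as a sample standard deviation, and the function names are hypothetical. The paper does not specify these details, so the software used may compute its values differently.

```python
import math
import statistics

def point_errors(surveyed, reconstructed):
    """Per-point 3D distance between surveyed and reconstructed coordinates
    (same units as the input; interpretation of 'error' is an assumption)."""
    return [math.dist(s, r) for s, r in zip(surveyed, reconstructed)]

def summarise(errors):
    """Mean, sample standard deviation, overall RMSE and largest error."""
    return {
        "mean": statistics.fmean(errors),
        "std": statistics.stdev(errors),
        "rmse": math.sqrt(statistics.fmean(e * e for e in errors)),
        "max": max(errors),
    }
```

Reporting the largest error alongside the mean makes a single distant point, such as the outlier discussed above, visible instead of hiding it in the average.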

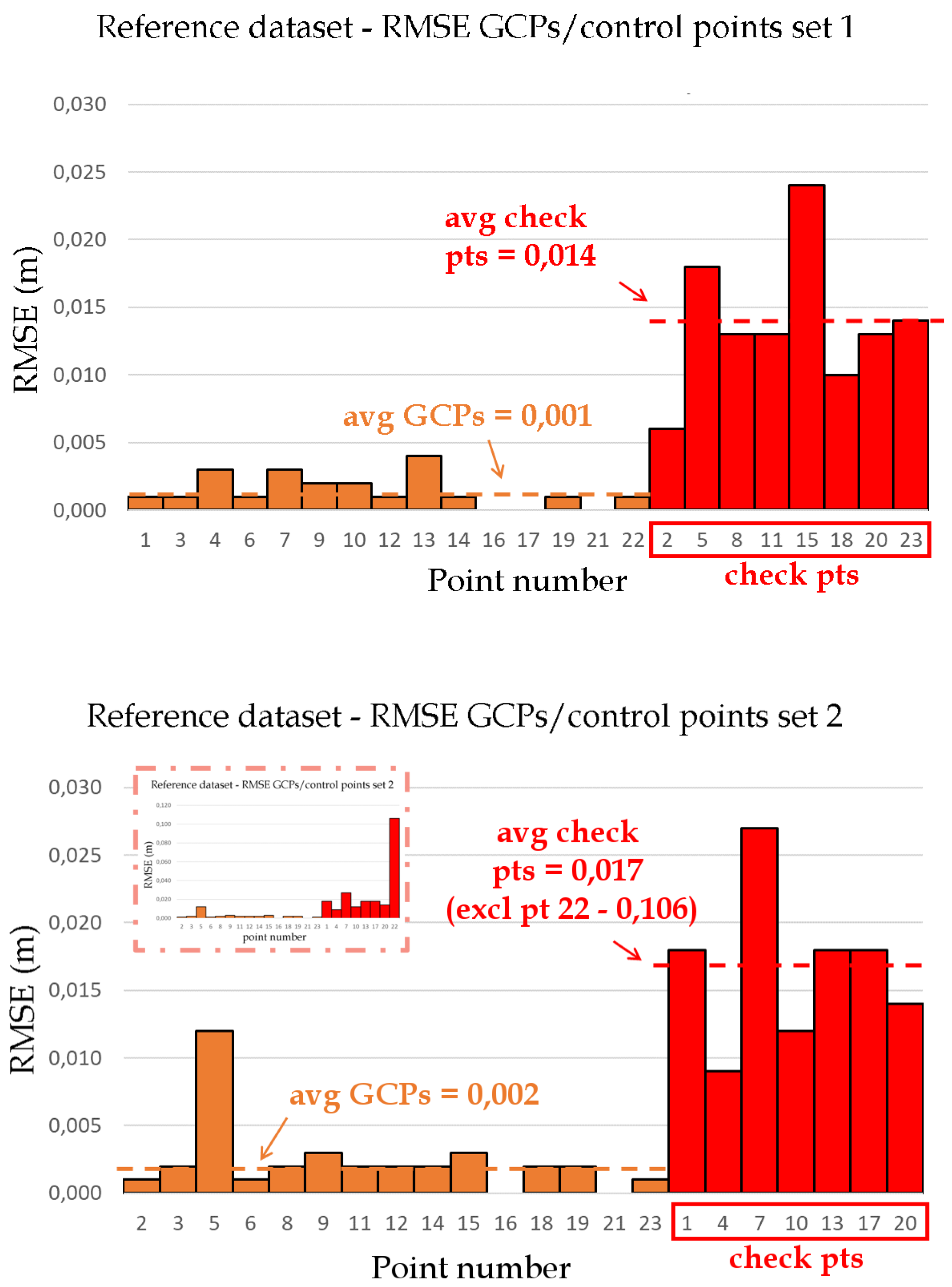

A procedure that better indicates the real quality, however, is to process the reference dataset with only a subset of the targets as GCPs and the others as check points. If all GCPs are used, the obtained accuracy might be overestimated. Photogrammetric software assumes that the coordinates of every GCP are known up to a certain accuracy, which defines their internal relative accuracy. However, the overall weight of the GCPs with respect to the other constraints (from the tie points) cannot be set in most photogrammetric software. As a result the GCP weight is typically relatively high. This might deform the result in such a way that the model approaches the GCP coordinates as closely as possible. Therefore, two additional analyses were executed that only use a subset of the points as GCPs. The other points, called check points, do not have any ground control function and are processed just like any other point. As a result, the check point RMSEs approximate the obtainable accuracy of an arbitrary point in the reference dataset much more closely than the RMSEs achieved when using all GCPs. The analysis was executed twice with two different GCP subsets, each consisting of 15 of the total 23 points. The remaining 8 points then serve as check points to assess the accuracy actually achieved. The results are shown in Figure 7. The RMSE on the GCPs decreases to 1 and 2 mm on average, respectively. As these errors are misleading, the more representative RMSEs to be considered are those of the check points, which are 14 and 28 mm (with standard deviations of 5 and 32 mm, respectively). However, as can be seen in Figure 7 (right), one clear outlier is present in the second dataset. This point also lies relatively far from the construction site. Consequently, when the constraint at that location is removed and the dataset is adjusted to fit the GCPs located in a more central area, the location of this point is extrapolated, which yields such a large RMSE value. Moreover, if this point is used for ground control, as in the first analysis, no such extreme RMSE values occur. Therefore we consider this point to be an outlier. When it is excluded, the check points in the second dataset achieve an average RMSE of 17 mm (with a standard deviation of 6 mm), which is more comparable to the RMSE in the first dataset.
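The reasoning behind the control/check split can be sketched with a much simpler model than a photogrammetric adjustment. In the example below, a 3D affine map is fitted by least squares to the control subset only and residuals are then evaluated separately on control and check points. The affine fit is merely a stand-in chosen for brevity, and the function names `fit_affine`, `apply_affine` and `holdout_residuals` are hypothetical.

```python
import numpy as np

def fit_affine(src, dst):
    """Least-squares 3D affine map so that dst ~ [src, 1] @ M, with M of shape (4, 3)."""
    A = np.hstack([src, np.ones((len(src), 1))])
    M, *_ = np.linalg.lstsq(A, dst, rcond=None)
    return M

def apply_affine(M, pts):
    """Apply the affine map M to (N, 3) points."""
    pts = np.asarray(pts, dtype=float)
    return np.hstack([pts, np.ones((len(pts), 1))]) @ M

def holdout_residuals(model_pts, surveyed_pts, control_idx):
    """Fit on the control points only; return residuals of control and check points."""
    model_pts = np.asarray(model_pts, dtype=float)
    surveyed_pts = np.asarray(surveyed_pts, dtype=float)
    control_idx = np.asarray(control_idx, dtype=int)
    is_check = np.ones(len(model_pts), dtype=bool)
    is_check[control_idx] = False
    M = fit_affine(model_pts[control_idx], surveyed_pts[control_idx])
    residuals = np.linalg.norm(apply_affine(M, model_pts) - surveyed_pts, axis=1)
    return residuals[control_idx], residuals[is_check]
```

With noisy measurements, the control residuals tend to be smaller than the check residuals because the fit absorbs part of the noise at the control points, and a check point far outside the area covered by the control points is extrapolated, which matches the behaviour of the outlier described above.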

5.2. Quality Assessment—Subsequent Datasets

The procedure used to determine the achieved accuracy of the reference module is also applied to the subsequent datasets. This also enables a comparison of the results of the reference dataset and a subsequent dataset. In the following assessments, the proposed approach is compared with the approach relying on manual GCP indications. In Section 2, related works using co-registration approaches are discussed. However, in that case too, at least one of the datasets is geo-referenced, most likely relying on the common manual GCP indication procedure. Therefore the following assessments show the validity of our method compared to the various other methods.

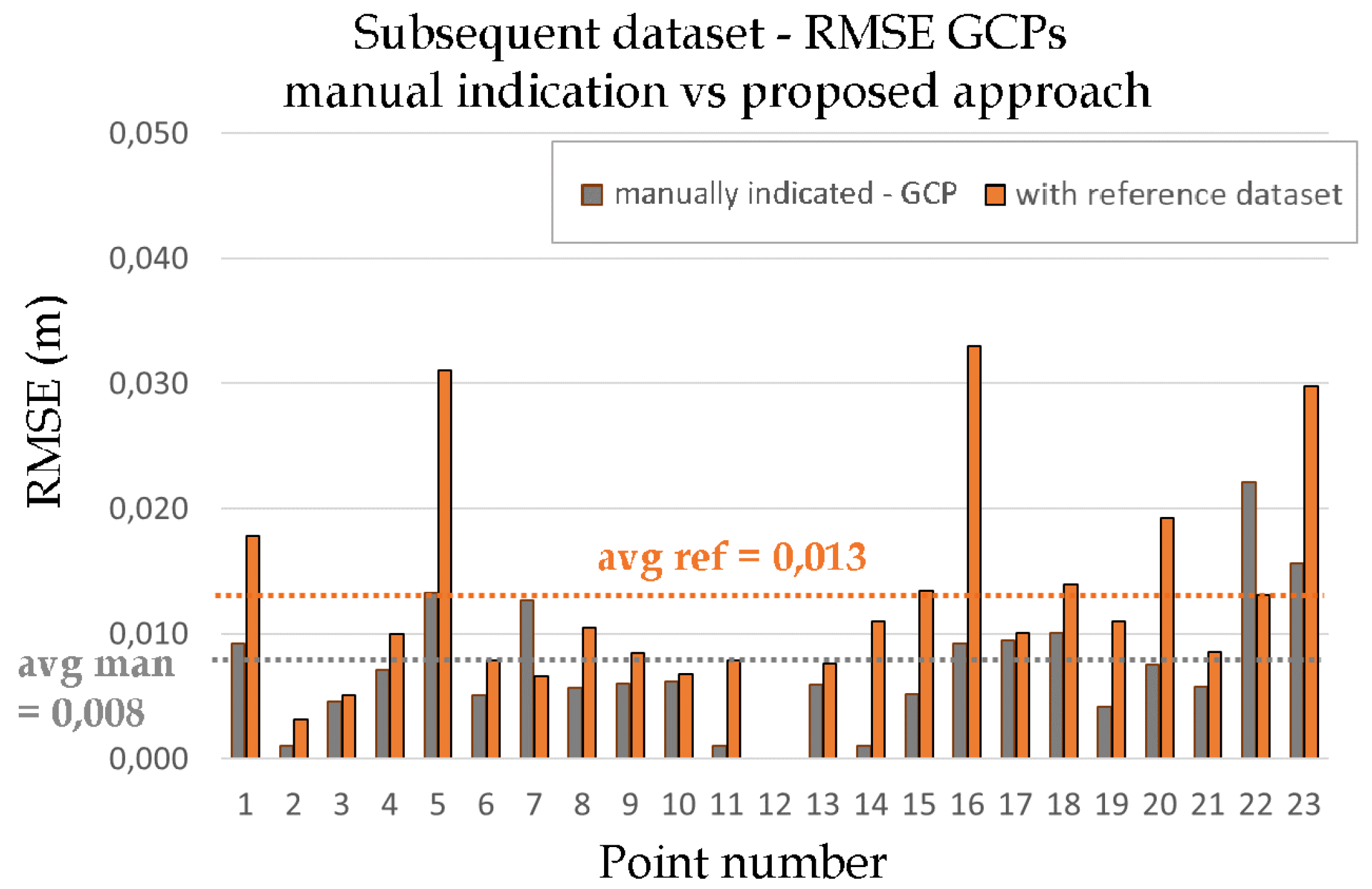

In the first stage, it is assessed how well our method performs regarding coordinate system accuracy. The subsequent imagery set is processed as described in the methodology. After the alignment is finalised, all GCPs are indicated in 5 of the new images such that the current location of each point can be determined accurately. These results are then compared to the locations measured with the total station (

Figure 8). On average an RMSE of 13 mm is obtained with a standard deviation of approximately 8 mm. This value represents the achievable accuracy of our method.

Referring back to the main objective, namely finding a more user-friendly and less error-prone workflow to geo-reference consecutive datasets, it is also important to compare our obtained results with the results that are achievable by following the traditional method. In that case, for every dataset, the points are indicated in the images after which the dataset is aligned and processed further. For the following analyses we processed one subsequent dataset following the manual indication approach. To evaluate the results as objectively as possible we reused the indicated check points from the previous step (the points indicated in 5 of the new images) but now as GCPs for the alignment. The achieved result is shown in

Figure 9 (grey), together with the result from the previous step, namely the result obtained when following the workflow proposed in this work (orange). As can be noted, the manual procedure outperforms our method (8 mm RMSE vs. 13 mm). It should be taken into consideration, however, that all 23 GCPs were indicated in 5 images each, resulting in a total of 115 target indications. In more realistic cases, the number of GCPs for such a project is lower, which may worsen the results.

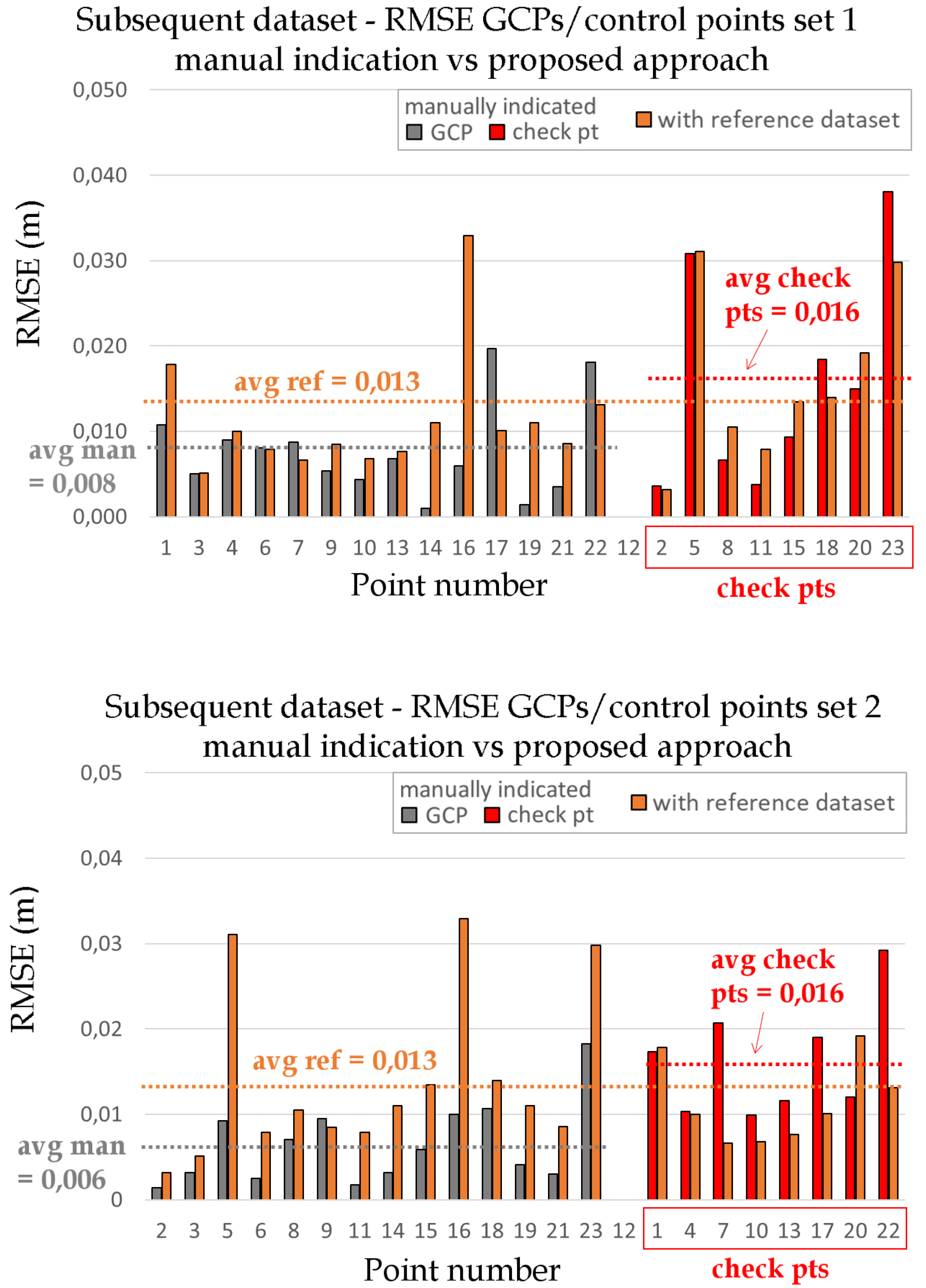

Moreover, similarly to the quality assessment of the reference module, a better quality indicator for the achieved accuracy is obtained when only a subset of indicated points is used for ground control. Therefore, the subsequent dataset was processed with the same two subsets of GCPs of

Figure 7. The results are shown in

Figure 10. It shows the RMSE of the GCPs in grey and of the check points in red. Additionally, the result of our proposed workflow is again included (orange). The red RMSEs represent the achieved accuracy when manually indicating the GCPs in the subsequent datasets. In both cases, the RMSE is 16 mm (with standard deviations of 13 and 7 mm, respectively). The results of this second and more objective analysis show that the method proposed in this work (13 mm RMSE) slightly outperforms the current common approach that relies on manual GCP indication (16 mm RMSE).

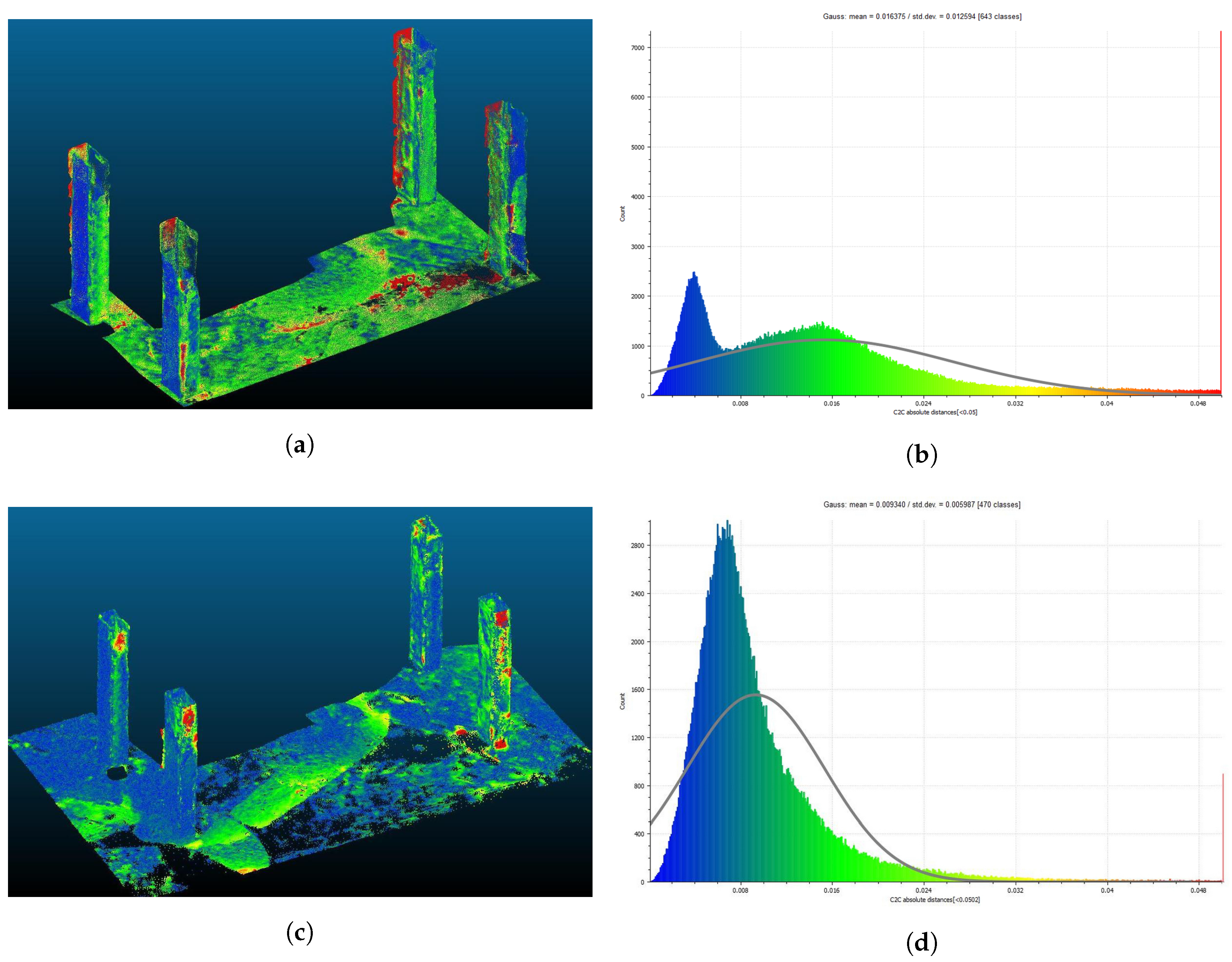

To further assess the obtained results, a comparison was made between the two subsequent datasets both processed with the reference module as well as with manually indicated GCPs (

Figure 11). A set of unaltered, finished columns is compared for both datasets. Although in theory the average error should be zero, it is almost inevitable that the two datasets are registered in a slightly different way, which results in discrepancies. When processing with the traditional method, the mean disparity is approximately 16 mm with a standard deviation of 13 mm. In contrast, when processing with the reference module, the mean disparity is 9 mm with a standard deviation of 6 mm. This experiment shows that our proposed method is able to outperform the traditional workflow. Furthermore, the calculated discrepancies fall within the expectations set by the previous experiments (on average 16 mm RMSE on the check points of a subsequent dataset processed with manually indicated GCPs, and 13 mm when processing with the presented method). This analysis again shows the validity of the proposed approach.
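A disparity between two registered datasets, such as the one reported above, could be computed along the following lines. This is a sketch under assumptions: the text does not state which distance measure was used, so a simple cloud-to-cloud nearest-neighbour distance is taken here, and the function names and the tiny point sets (in mm) are hypothetical.

```python
import math
from statistics import mean, stdev

def cloud_to_cloud_distances(cloud_a, cloud_b):
    """Distance from every point in cloud_a to its nearest neighbour in cloud_b.

    Brute force, O(len(cloud_a) * len(cloud_b)); real point clouds would
    need a spatial index such as a k-d tree.
    """
    return [min(math.dist(p, q) for q in cloud_b) for p in cloud_a]

def disparity_summary(cloud_a, cloud_b):
    """Mean and sample standard deviation of the nearest-neighbour distances."""
    distances = cloud_to_cloud_distances(cloud_a, cloud_b)
    return mean(distances), stdev(distances)

# Hypothetical samples of one unaltered column in two epochs (mm).
epoch_1 = [(0, 0, 0), (0, 0, 1000), (0, 0, 2000)]
epoch_2 = [(3, 4, 0), (0, 0, 1013), (6, 8, 2000)]

mean_disparity, std_disparity = disparity_summary(epoch_1, epoch_2)
print("mean disparity: %.1f mm, std: %.1f mm" % (mean_disparity, std_disparity))
```

Because only unaltered elements are compared, any non-zero distance reflects registration differences between the two datasets rather than construction progress.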

5.3. General Discussion

The creation of a reference framework happens only once. This yields the benefit that the materialisation of GCPs becomes obsolete as their future use is dropped. In the traditional procedure, materialised targets are strongly advised for simplifying the indication process and ensuring that each point can be indicated at the exact same spot throughout the different datasets. In our case however, such materialisation has become irrelevant as GCPs are only used once for the constitution of the reference module. After that, when processing subsequent datasets, the reference framework is formed by the aligned imagery and the visual information they contain rather than the specific set of points serving for ground control. Moreover, this yields the additional advantage that formerly unusable points become available. Previously, points were required to be materialised, which is impossible at unreachable locations. In contrast, for the proposed framework materialisation is obsolete, hence providing the opportunity to choose any distinctive point as GCP. This adds to the achieved accuracy level as the spread of the control points can be taken much more into consideration.

The proposed framework relies heavily on a correctly registered reference module. Therefore, its creation requires the utmost attention. If anomalies were to arise in this module, they would automatically be transferred to all subsequent datasets, possibly resulting in severe deformations. On the other hand, because the reference component is created only once, considerably more time and effort can be invested in it. Moreover, because point materialisation is no longer strictly required, a larger set of points can be determined and indicated in the same time span. Despite the required extra effort, the overall time gains (for the creation of subsequent datasets of the construction site) largely exceed the additional time needed for the reference module. The larger the number of subsequent datasets, the larger the time benefit becomes. Furthermore, the complexity of subsequent recordings also decreases, as the focus lies solely on capturing the construction elements themselves. This means that, while capturing, no extra time is spent on ensuring that the GCPs are not occluded and are visible in enough images from different angles.

One of the critical aspects of our method is the overlap between site images and images of the site’s surroundings. Nevertheless, in our experiments this did not pose a problem. Despite focussing only on the actual site and its elements under construction, a substantial number of the images portray large parts of the site’s environment. In the two mentioned subsequent datasets, approximately 19% and 28% of all points, respectively, contained information on the surrounding scenery rather than the site itself. It can be assumed that this is mostly due to the terrestrial perspective of the recordings. As a result, ties are formed at all borders of the project between the new imagery and the reference body, strengthening the overall network and accuracy. This also yields the additional benefit that GCPs need not be visible in the pictures, since any visible part of the neighbouring structures serves as a valuable reference.

Other advantages over current point indication processes also emerge. In manual and, to a lesser extent, (semi-)automatic indication approaches relying on coded target recognition, each individual indication yields a minimal but unpredictable and random error. This implies that every subsequent dataset is processed slightly differently from the previous one. When creating the reference module, similar errors occur in our case due to the manual GCP indications. However, because the exact same module is reused over and over in our approach, every subsequent dataset is geo-referenced equally wrong or right. As a result, when comparing different subsequent datasets, for example for progress monitoring purposes, the absolute error becomes irrelevant. This is supported by the former analysis where two subsequent datasets were compared (

Figure 11). The achieved comparative results hence are more accurate and trustworthy.

An interesting future assessment will be how well the method performs accuracy-wise when the construction works reach a more advanced stage of completion. As the works advance, it is assumed that the site’s environment becomes less visible. Nevertheless, in our opinion this will not cause problems, as even small visible parts of the surroundings still outnumber the sparse set of GCPs used in traditional methods. Furthermore, the same difficulties that occlusions pose are also present in projects processed with the traditional methods. In such cases, the accuracy is equally if not more threatened, as it is more likely that individual GCPs become invisible. The recording workflow must then be altered such that GCPs become visible in more images, or new GCPs must be created. In the proposed approach too, in case the accuracies drop below a satisfactory level or very few parts of the surroundings are visible, it remains possible to alter the recording workflow by adding some extra images that focus on the surroundings and have correspondences with the images that focus on the site. Nevertheless, it is assumed that alterations to the capturing workflow are required less frequently than in current processing methods that rely on visible GCPs. In more severe cases, when large parts of the surroundings become permanently occluded, it remains possible to carefully extend the reference dataset with additional imagery to constitute a new reference framework.

6. Conclusions

Frequent recordings are indispensable for monitoring construction sites and the achieved building progress. A framework is required that enables quick yet accurate recording and processing of imagery of the site. Moreover, the resulting three-dimensional reconstructions ideally share a common coordinate system to enable fast and easy comparative analyses. To this end, a new method is presented in this work. The repetitive indication of GCPs in multiple subsequent datasets, a process known to be tedious and error-prone, is abandoned. Instead, GCPs are indicated only once, namely at the creation of a reference module. This module is established with imagery focussing only on the environment of the scene rather than the site itself. Large parts of the surroundings are also visible in the imagery of subsequent datasets, even though that imagery focusses only on the elements under construction. This overlap forms the crucial link between the datasets, making it possible to use the pre-processed reference module as a valuable starting point for the alignment of the new imagery. Because the reference content is neglected in further processing stages, the same final result is obtained as if the subsequent dataset were processed without the reference module. Nevertheless, the two crucial properties of the reference module are transferred, namely the high accuracy and the incorporated reference system. In our experiments, substantial time gains were noticed for the overall process of creating multiple consecutive datasets, even though the creation of the reference module required extra time and effort. Furthermore, the conducted quality assessments have shown the validity of the proposed method, which slightly outperforms the current manual procedure (an average disparity of 9 mm versus 16 mm for unaltered parts of the site). In conclusion, it can be stated that the proposed method is favourable over current procedures for the creation of consecutive datasets.