1. Introduction

Remote sensing can provide valuable information over large areas [1] on ecosystem structure and function, which influence biodiversity [2]. Aside from area-based approaches [3], many studies have highlighted the fundamental role of species identification at the single tree level in forest inventory and management [4,5,6] as well as in biodiversity research [7]. Therefore, to keep this information up to date, effective methods that accurately classify tree species need to be developed.

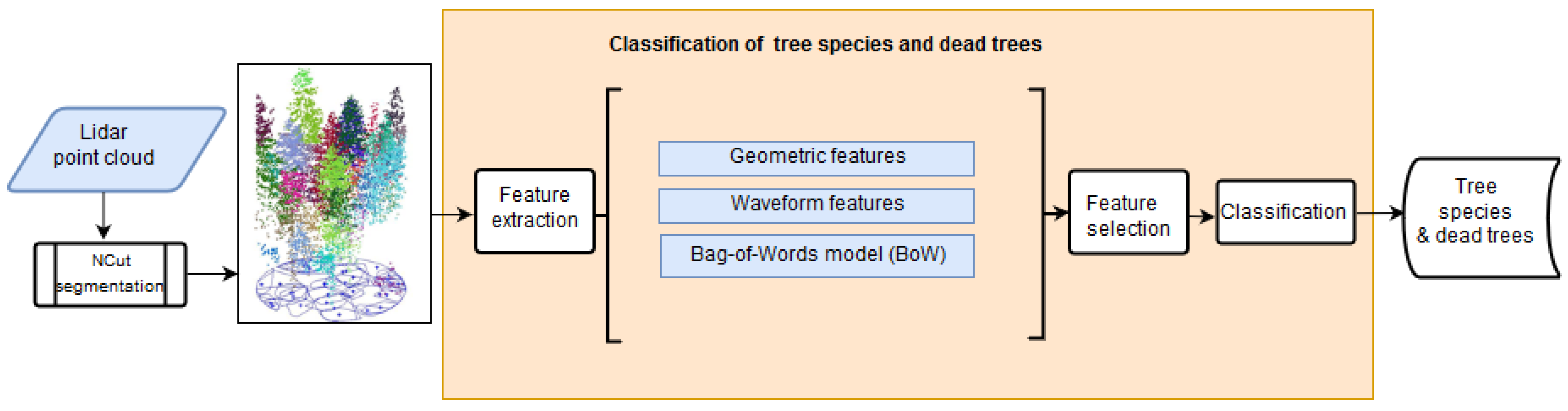

Single trees can first be detected using individual tree segmentation approaches and then assigned to species by a classification strategy. Recent methods for single tree detection have used a 3D approach instead of the canopy height model (CHM) alone to reduce over- and under-segmentation [8]. The detection rates for single trees can be improved significantly by applying the normalized cut spectral clustering method to a (super)voxel representation of the forest structure [9,10] and by introducing a classifier-based adaptive stopping criterion [11]. Moreover, to segment individual trees, Strîmbu and Strîmbu [12] proposed an approach that captures the topological structure of the forest in hierarchical data structures and quantifies the relationships between tree crown components in a weighted graph. Overall, accurate single tree segmentation is an important prerequisite for high-quality species determination at the individual tree level.
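To make the normalized cut idea concrete, the following is a minimal sketch of a single Shi-Malik normalized cut bipartition, assuming voxel centroids as graph nodes and a Gaussian similarity on their Euclidean distance only. The function name `normalized_cut_bipartition` and the kernel width `sigma` are hypothetical; the cited methods [9,10] use richer similarity measures, recursive partitioning, and stopping criteria that are not reproduced here.

```python
import numpy as np


def normalized_cut_bipartition(points, sigma=1.0):
    """Split points into two groups via the normalized cut spectral relaxation.

    points: (n, 3) array, e.g. voxel centroids (hypothetical input).
    sigma: width of the Gaussian similarity kernel (assumed parameter).
    Returns a boolean mask marking one of the two segments.
    """
    points = np.asarray(points, dtype=float)
    # Pairwise squared Euclidean distances between centroids
    diff = points[:, None, :] - points[None, :, :]
    sq_dist = np.sum(diff ** 2, axis=-1)
    # Dense Gaussian affinity matrix W without self-loops (small inputs only)
    W = np.exp(-sq_dist / (2.0 * sigma ** 2))
    np.fill_diagonal(W, 0.0)
    d = W.sum(axis=1)
    d_inv_sqrt = 1.0 / np.sqrt(d)
    # Symmetric normalized Laplacian: L_sym = I - D^-1/2 W D^-1/2
    L_sym = np.eye(len(points)) - d_inv_sqrt[:, None] * W * d_inv_sqrt[None, :]
    # eigh returns eigenvalues in ascending order; column 1 is the second smallest
    _, eigvecs = np.linalg.eigh(L_sym)
    z = eigvecs[:, 1]
    # Map back to the generalized problem (D - W) y = lambda D y
    y = d_inv_sqrt * z
    # Threshold at zero to obtain the two segments
    return y > 0
```

The dense affinity matrix limits this sketch to small inputs; practical implementations operate on sparse neighborhood graphs.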

For decades, optical imagery, which remotely measures the spectral reflectance of an object, has been used as a standard source for discriminating tree species [6,13]. Optical aerial and spaceborne instruments can record the spectral signatures of tree species not only in the visible spectral range (RGB), but also in the near-infrared (NIR), short-wave infrared (SWIR), and even thermal infrared. Depending on the spectral resolution of the sensor, the radiation is measured in a few or in many bands: multispectral sensors typically provide up to 10 spectral bands, whereas hyperspectral sensors have hundreds of bands [14]. Recently, spaceborne optical sensors with high spatial and temporal resolution have also been successfully applied to tree species classification. Moreover, dense image matching has become a mature technique for reconstructing objects at the pixel level from a series of highly overlapping images with subpixel accuracy [15]. In forestry applications, this computer vision method generates dense point clouds of canopy surfaces that can later be used for tree species classification, either at the tree level or with an area-based approach [16]. Moreover, multispectral and hyperspectral sensors can be combined with airborne laser scanning (ALS) to enrich the limited radiometric information of ALS. Ullah et al. [17] demonstrated that the estimation of forest structural parameters at the stand and forest compartment level can be improved by using point clouds generated from aerial imagery. Nevalainen et al. [18] combined an RGB camera and a frame-format hyperspectral camera mounted on a multicopter drone. They showed that the single tree detection rate depends strongly on the forest stand characteristics and ranges between 40% and 95%. Moreover, the classification of four tree species achieved an overall accuracy of 95% and an F-score of 0.93. Similarly, Maschler et al. [19] demonstrated the feasibility of classifying 13 tree species (8 broadleaf, 5 coniferous) with an overall accuracy of 89.4% by combining ALS-based tree segments with hyperspectral data acquired by a Hyspex VNIR 1600 push broom instrument. Finally, Grabska et al. [20] mapped nine tree species using Sentinel-2 time series; using only two images from different seasons yielded an overall accuracy above 90%. However, the main drawback of these passive sensors for forest applications is their limited penetration of the canopy surface; hence, the forest structure beneath the canopy cannot be fully captured in 3D.
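The accuracy measures quoted throughout this section (overall accuracy, F-score, and completeness) can all be derived from a confusion matrix. A minimal sketch, assuming rows hold reference classes and columns hold predicted classes, and that every class occurs in both; `accuracy_metrics` is a hypothetical helper name. Completeness corresponds to recall (producer's accuracy), correctness to precision (user's accuracy), and the F-score is their harmonic mean.

```python
import numpy as np


def accuracy_metrics(confusion):
    """Overall accuracy plus per-class completeness, correctness and F-score.

    confusion[i, j] = number of reference-class-i trees predicted as class j
    (rows = reference, columns = prediction; an assumed convention).
    """
    cm = np.asarray(confusion, dtype=float)
    tp = np.diag(cm)                    # correctly classified trees per class
    overall_accuracy = tp.sum() / cm.sum()
    completeness = tp / cm.sum(axis=1)  # recall / producer's accuracy
    correctness = tp / cm.sum(axis=0)   # precision / user's accuracy
    f_score = 2 * completeness * correctness / (completeness + correctness)
    return overall_accuracy, completeness, correctness, f_score
```

For example, `accuracy_metrics([[8, 2], [1, 9]])` gives an overall accuracy of 0.85 and per-class F-scores of 16/19 and 18/21.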

Over the past decade, ALS point clouds have become an important data source for classifying tree species. Several studies have proposed using structural features from ALS point clouds, such as crown shapes, height distribution percentiles, and proportions of first/single returns, for distinguishing between tree species [21,22,23,24]. Separating trees by height is important for single tree classification, especially in forests where tree height distributions differ between species [21,25]. After the advent of full waveform ALS systems, several studies reported accuracy improvements from waveform features that exploit detailed information on the backscattered pulse, such as intensity and pulse width [26,27]. Höfle et al. [28] used calibrated waveforms from ALS data to distinguish between European larch (Larix decidua), English oak (Quercus robur), durmast oak (Quercus petraea), and European beech (Fagus sylvatica), and found that echo width could separate larch from broadleaf trees. However, oak and beech showed similar responses in terms of backscatter cross-section and echo width. Reitberger et al. [9] found that radiometric information derived from full waveform ALS, such as intensity and pulse width, provides a strong basis for distinguishing between broadleaf and coniferous trees. Heinzel and Koch [29] explored a set of waveform-based features for classifying four groups of tree species in a mixed temperate forest and achieved an overall accuracy of 78%. Yao et al. [30] found that single wavelength ALS data (1550 nm) could be used to classify coniferous and broadleaf trees in the Bavarian Forest National Park with a maximum overall accuracy of 90%. However, Shi et al. [31] found that the classification accuracy decreases by 30% when detailed mapping of six tree species is attempted in the same study area. Further, Hovi et al. [27] systematically analyzed the identification potential of ALS point cloud features by investigating the sources of within-species variation. They achieved an overall accuracy of 75% for the identification of the three main tree species in Finland using ALS waveform features. Overall, given the limitations of optical imagery, single wavelength (full waveform) ALS point clouds are superior data sources for classifying tree species [32]. However, due to the lack of spectral information, detailed tree species identification has yet to reach sufficiently high accuracies (up to 90%) [33,34].
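The structural and waveform features named above can be illustrated per tree segment. This is a sketch under stated assumptions: point heights are already normalized above ground, the inputs carry LAS-style return number and number-of-returns fields, and a pulse (echo) width is available per point from waveform decomposition. The function `segment_features` and its feature names are hypothetical and do not reproduce the feature sets of the cited studies.

```python
import numpy as np


def segment_features(heights, return_number, num_returns, intensity,
                     pulse_width, percentiles=(10, 50, 90)):
    """Per-tree structural and waveform features (hypothetical feature set).

    All inputs are 1-D arrays over the points of one tree segment; heights
    are assumed to be normalized above ground.
    """
    h = np.asarray(heights, dtype=float)
    rn = np.asarray(return_number)
    nr = np.asarray(num_returns)
    feats = {}
    # Height distribution percentiles relative to tree height (max point height)
    tree_height = h.max()
    for p in percentiles:
        feats[f"h_p{p}_rel"] = np.percentile(h, p) / tree_height
    # Proportion of first returns and of single returns (one echo per pulse)
    feats["prop_first"] = float(np.mean(rn == 1))
    feats["prop_single"] = float(np.mean(nr == 1))
    # Simple waveform statistics: mean intensity and mean echo width
    feats["mean_intensity"] = float(np.mean(intensity))
    feats["mean_pulse_width"] = float(np.mean(pulse_width))
    return feats
```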

The aforementioned results suggest that the intensity of single wavelength (full waveform) ALS is useful for classifying tree species. Moreover, the reflectivity of a tree depends on the laser wavelength. For instance, according to [35], the average intensity values of broadleaf trees recorded by single wavelength ALS (1064 nm) under leaf-on conditions are higher than those of most coniferous tree species. This difference is mainly due to differences in tree structure: broadleaf trees have larger individual leaves, whereas coniferous trees have needles that form a discontinuous leaf surface [36]. Recently, Shi et al. [31] showed that intensity features contribute more to tree species classification in mixed temperate forests than geometric features. Moreover, the bidirectional reflectance and the geometry of the volumetric target surfaces significantly influence the intensity values recorded by an ALS system [6].
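Because recorded intensity depends on acquisition geometry as well as on target reflectance, intensities are commonly normalized before feature extraction. The sketch below shows one such step, range normalization to a reference range; this correction is an assumption here rather than a procedure described in this section, and `range_normalize`, `ref_range`, and `exponent` are hypothetical names. An exponent of 2 applies to extended targets larger than the laser footprint; volumetric canopy targets may call for a different value.

```python
import numpy as np


def range_normalize(intensity, ranges, ref_range, exponent=2.0):
    """Normalize ALS intensities to a common reference range.

    Assumes I_norm = I * (R / R_ref) ** exponent; exponent=2 corresponds to
    extended targets (an assumption; other target types may differ).
    """
    intensity = np.asarray(intensity, dtype=float)
    ranges = np.asarray(ranges, dtype=float)
    # Echoes from shorter ranges are scaled down, longer ranges scaled up
    return intensity * (ranges / ref_range) ** exponent
```

For example, an intensity of 100 recorded at 500 m becomes 25 when normalized to a reference range of 1000 m.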

Recently introduced multispectral ALS technology is promising for improving forest mapping because it can provide denser point clouds and richer spectral information. A few studies have focused on the potential of multispectral ALS point clouds for classifying tree species [24,34,37,38,39,40]. Lindberg et al. [24] generated multispectral ALS data using three different instruments during different flights, with a point density of 20 points/m², to characterize tree species. Using visual interpretation, they showed that combining spectral and geometric information from multi wavelength ALS data yields a better tree species classification than using information from single wavelength ALS data. St-Onge and Budei [37] used intensity-based features extracted from three spectral channels of a Titan multispectral ALS system [41] to classify broadleaf vs. needle-leaf trees in a Canadian boreal forest and achieved a classification accuracy of over 90%. Yu et al. [34] used the same sensor and achieved an overall accuracy of 85.6% for three tree species in southern Finland using intensity-based features. Hopkinson et al. [38] compared terrain and forest canopy attributes extracted from each wavelength of two multispectral ALS datasets (multisensor and single-sensor). By integrating spectral and structural information, they achieved an overall accuracy of 78% for the classification of eight land surface and vegetation classes. Axelsson et al. [39] used the Optech Titan X System to investigate ten tree species in a boreal forest and achieved a cross-validated accuracy of 76.5% using features describing the height and intensity distributions of the tree segments. Budei et al. [40] classified 10 tree species using the Optech Titan system with an overall accuracy of 75%. So far, combinations of various features from multispectral ALS point clouds have mainly been used to examine tree species classification in boreal forests.
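Intensity-based multispectral features are often formed from per-channel means and channel ratios. A minimal sketch, assuming intensities have already been assigned per channel to the points of one tree segment; the channel labels (e.g. `Ch1` to `Ch3`), the function name, and the normalized-difference features are illustrative choices, not the feature set of any cited study.

```python
from itertools import combinations

import numpy as np


def multispectral_segment_features(channel_intensities):
    """Per-segment multispectral intensity features (hypothetical feature set).

    channel_intensities: dict mapping a channel label (e.g. "Ch1") to a 1-D
    array of intensities of the points of one tree segment in that channel.
    """
    means = {ch: float(np.mean(v)) for ch, v in channel_intensities.items()}
    feats = {f"mean_{ch}": m for ch, m in means.items()}
    # Normalized difference of mean intensities for every channel pair,
    # analogous to spectral indices such as NDVI
    for a, b in combinations(sorted(means), 2):
        feats[f"nd_{a}_{b}"] = (means[a] - means[b]) / (means[a] + means[b])
    return feats
```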

Thus far, the detection of individual standing dead trees from ALS point clouds has been of minor research interest. Yao et al. [10] were the first to tackle the detection of dead trees with crowns, using only ALS intensity (single wavelength, 1550 nm) and geometric features such as crown shape and point height distribution. The study reports a classification accuracy between 71% and 73%. Recently, Polewski [42] presented a method that uses 3D single tree segments in combination with multispectral aerial imagery. Based on features generated from the covariance matrix of the three image channels, a two-class classification (dead vs. non-dead tree) achieved an accuracy of around 88%. In Polewski et al. [43], the same author addressed the detection of standing dead trees without crowns (snags). The method used free shape contexts to generate salient features suitable for describing individual snags in a sparse point cloud. After optimizing the high-dimensional feature space with a genetic algorithm, the approach classified 285 objects with an accuracy of 84.2%.
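As an illustration of features derived from the covariance matrix of three image channels, the sketch below returns the six unique entries of the channel covariance matrix computed over the pixels of one tree segment. This feature layout and the name `covariance_features` are assumptions for illustration; the descriptor actually used in [42] may differ.

```python
import numpy as np


def covariance_features(pixels):
    """Feature vector from the covariance matrix of three image channels.

    pixels: (n, 3) array of channel values of the image pixels belonging to
    one tree segment. Returns the 6 unique entries of the symmetric 3x3
    covariance matrix (an assumed feature layout).
    """
    pixels = np.asarray(pixels, dtype=float)
    cov = np.cov(pixels, rowvar=False)  # 3x3 matrix, channels as variables
    iu = np.triu_indices(3)             # upper triangle incl. diagonal
    return cov[iu]
```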

In summary, coniferous and broadleaf trees can be classified with high accuracy using single wavelength (full waveform) ALS data. The classification of multiple tree species has not yet reached a practically useful accuracy. Multispectral ALS has the potential to improve tree species classification accuracy. The combined classification of tree species and standing dead trees with crowns has been of minor research interest. Moreover, because a high-dimensional feature space is expected, techniques are needed to reduce the large number of predictor variables to the most relevant ones.

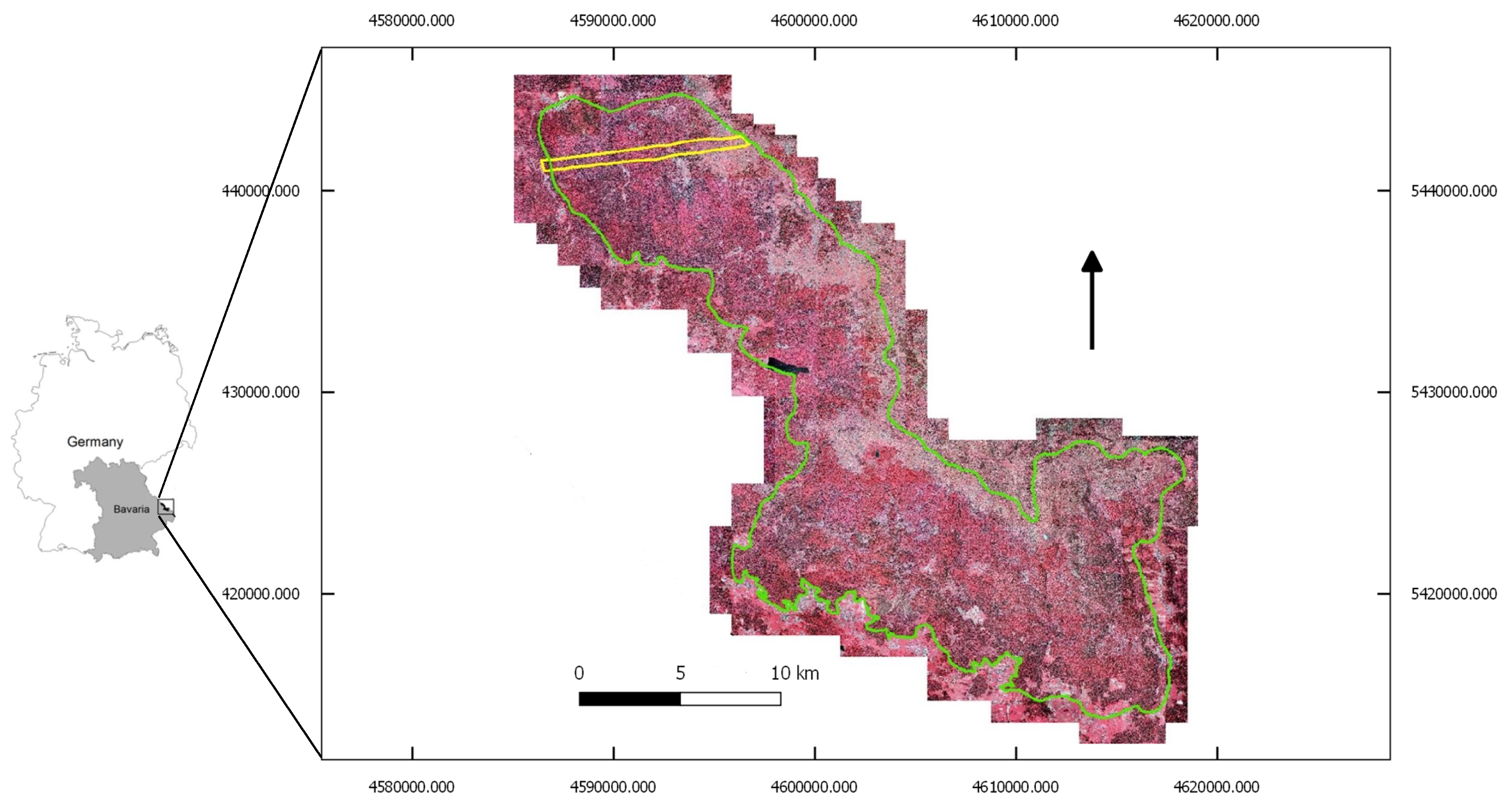

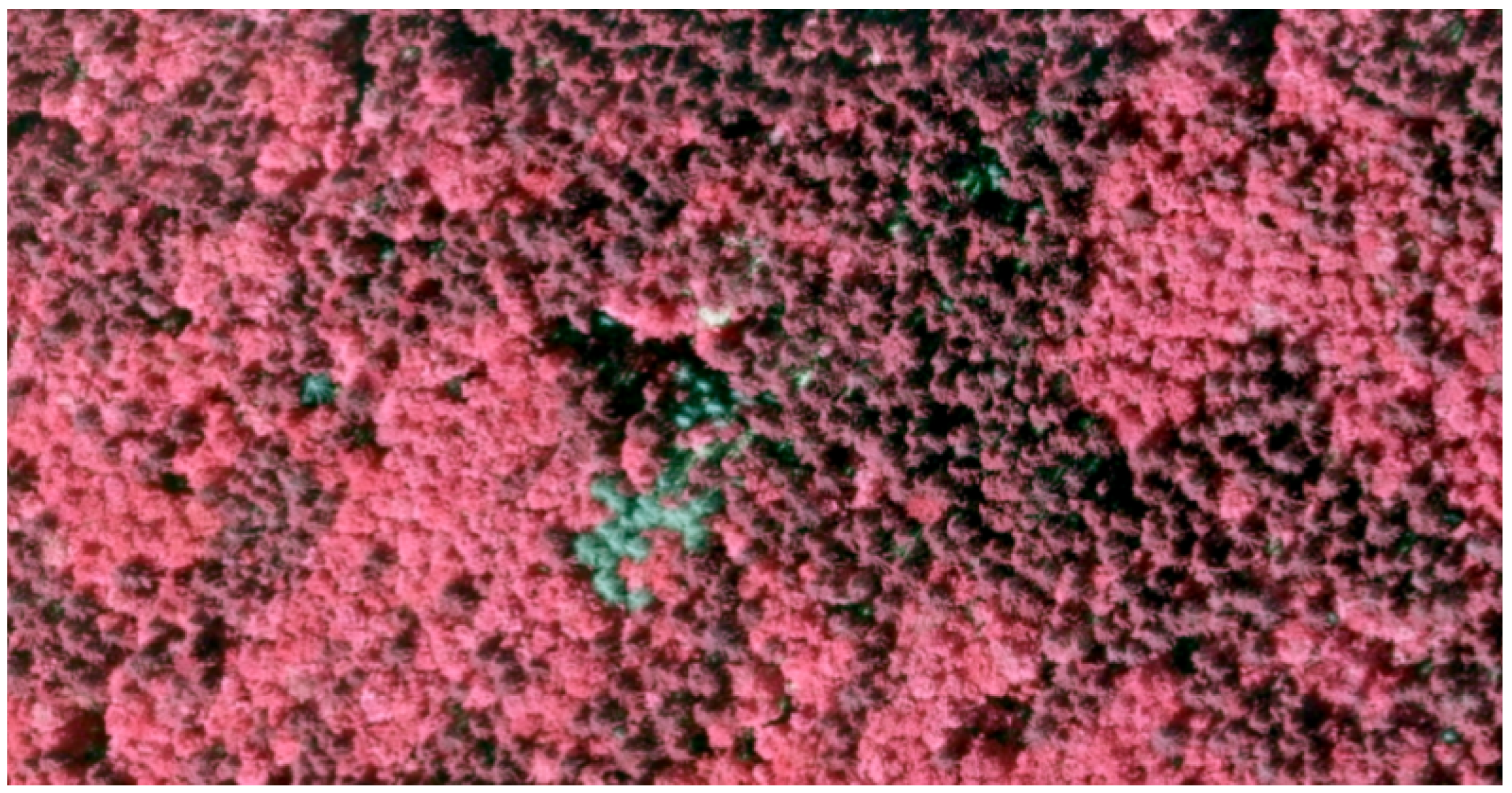

Therefore, the main objectives of this study are to evaluate the accuracy of classifying tree species and standing dead trees in a temperate forest in southeastern Germany using (i) triple wavelength ALS (1550 nm, 1064 nm, and 532 nm) and (ii) single wavelength ALS, and (iii) to identify the most important features with a specific feature selection approach. In doing so, we demonstrate the current potential and limitations of triple wavelength ALS without fusion with optical imagery. We simulate a multispectral ALS sensor by combining the data from three different ALS sensors carried by two aircraft on the same day.

6. Conclusions

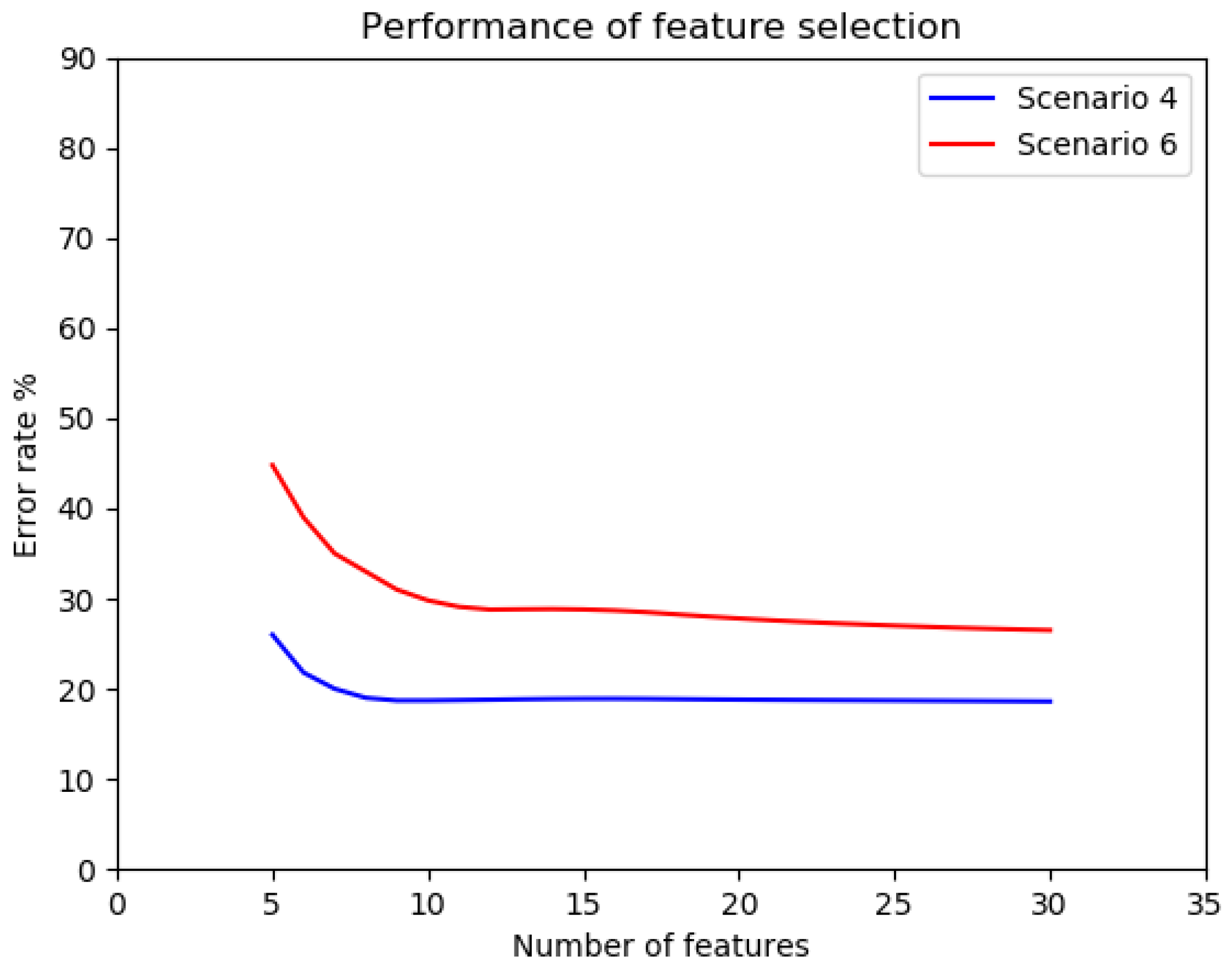

In summary, our experiment illustrated that multi wavelength ALS point clouds improve the characterization of tree species in approaches that work at the single tree level. However, firs and standing dead trees with crowns could only be classified with a reduced completeness of 59% and 73%, respectively. The feature selection step showed that mainly the intensity-related features from spectral channel Ch2 (1064 nm) notably improved the classification rate, yielding an overall accuracy of 82%.

This study performed one of the first experiments examining the applicability of multi wavelength ALS data for the classification of tree species and dead trees in a temperate forest. Instead of using a single instrument, we simulated multispectral ALS data by combining data from three different ALS sensors carried by two aircraft on the same day under stable weather conditions. The flying height and the atmospheric attenuation were the same for two of the sensors and almost the same for the third. Admittedly, this configuration involves different scan angles and different sensor characteristics. All in all, we believe that this setup provided radiometric data comparable to those captured by a single instrument consisting of three non-collinear ALS units, e.g., the Titan sensor from Optech. Clearly, a single multispectral ALS instrument has advantages over multiple sensors: besides simplifying data processing, it provides data acquired from the same flying height and calibrated consistently. However, both approaches share the drawback of non-collinear laser beams, meaning that the backscattered pulses at the different wavelengths do not necessarily originate from the same part of the object.
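Since the laser beams of the three channels are non-collinear, per-point multi wavelength attributes have to be assembled from neighboring echoes. A minimal sketch, assuming nearest-neighbor assignment within a search radius; this is one possible strategy and not necessarily the one used in this study, and `assign_nearest_intensity` and `max_dist` are hypothetical names.

```python
import numpy as np


def assign_nearest_intensity(ref_xyz, other_xyz, other_intensity, max_dist):
    """Attach to each reference point the intensity of the nearest point of
    another channel, or NaN if no such point lies within max_dist.

    Brute force O(n * m) distances; suitable only for small examples.
    """
    ref_xyz = np.asarray(ref_xyz, dtype=float)
    other_xyz = np.asarray(other_xyz, dtype=float)
    other_intensity = np.asarray(other_intensity, dtype=float)
    # Pairwise Euclidean distances, shape (n_ref, n_other)
    d = np.linalg.norm(ref_xyz[:, None, :] - other_xyz[None, :, :], axis=-1)
    nearest = np.argmin(d, axis=1)
    nearest_dist = d[np.arange(len(ref_xyz)), nearest]
    out = other_intensity[nearest]
    # Reject matches that are too far away to describe the same target part
    out[nearest_dist > max_dist] = np.nan
    return out
```

For full point clouds, a spatial index such as `scipy.spatial.cKDTree` would replace the brute-force distance matrix.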

Furthermore, as expected, the feature selection step considerably reduced the high-dimensional feature space, which helped to optimize the classification accuracy. Based on the list of prominent features, the feature selection procedure was able to identify the most discriminative features. Interestingly, the classification accuracy decreased by about 6% when all extracted features were used without the feature selection step.
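The specific feature selection procedure used in this study is not described in this section. As a stand-in, the sketch below shows a simple filter approach: features are ranked by their Fisher score (between-class over within-class variance), and a feature is skipped if its absolute correlation with an already selected feature exceeds a threshold. The names `fisher_scores`, `select_features`, `k`, and `max_corr` are hypothetical, and the sketch assumes that no feature is constant within every class (which would make the within-class variance zero).

```python
import numpy as np


def fisher_scores(X, y):
    """Fisher score per feature: between-class over within-class variance."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y)
    overall_mean = X.mean(axis=0)
    between = np.zeros(X.shape[1])
    within = np.zeros(X.shape[1])
    for c in np.unique(y):
        Xc = X[y == c]
        n_c = len(Xc)
        between += n_c * (Xc.mean(axis=0) - overall_mean) ** 2
        within += n_c * Xc.var(axis=0)
    return between / within


def select_features(X, y, k, max_corr=0.9):
    """Greedy filter: take features in order of decreasing Fisher score,
    skipping any whose absolute Pearson correlation with an already selected
    feature exceeds max_corr, until k features are chosen."""
    scores = fisher_scores(X, y)
    corr = np.abs(np.corrcoef(np.asarray(X, dtype=float), rowvar=False))
    selected = []
    for j in np.argsort(scores)[::-1]:
        if all(corr[j, s] <= max_corr for s in selected):
            selected.append(int(j))
        if len(selected) == k:
            break
    return selected
```

A wrapper method that evaluates a classifier on candidate subsets would be a heavier but often more accurate alternative to this filter.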

From a practical point of view, the achieved accuracy is not yet sufficient and should be at least 90%. Apparently, the radiometric information from the non-collinear multi wavelength ALS data at three distinct wavelengths limited the classification of the four forest objects (three tree species and standing dead trees with crowns) in a temperate forest to this accuracy level. Further research is needed to investigate the relationships between structural tree crown characteristics and multi wavelength ALS point cloud features and to determine their impact on the classification. Furthermore, a collinear multispectral ALS system, whose emitted laser beams strike the same target simultaneously, or the fusion of ALS with hyperspectral imagery may improve the detailed classification of tree species and dead trees.