LAM: Remote Sensing Image Captioning with Label-Attention Mechanism

Abstract

1. Introduction

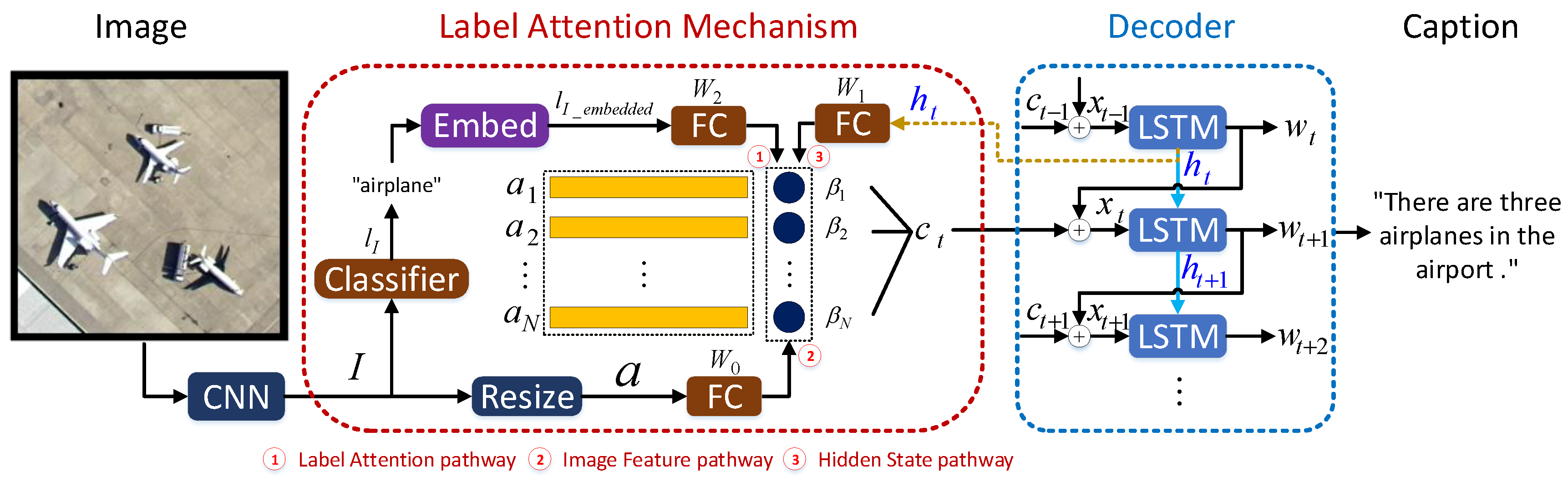

- A novel attention mechanism, the Label-Attention Mechanism (LAM), is proposed, which uses label information to guide the calculation of attention masks in attention models. The label information steers the attention masks toward regions of interest according to the categories of the input images, so the label-guided image features expose more label-related objects and relationships. As a result, more complete descriptions of the input images can be generated.

- The proposed LAM provides more precise label information by using the word embedding vectors of the predicted labels instead of high-level image features. These embedding vectors represent the content of an input image concisely and avoid the redundant information carried by high-level image features, so better sentences, and hence higher scores, can be obtained. Moreover, the label information is introduced into the calculation of the attention masks instead of being directly concatenated with the middle-level image features. This fusion strategy prevents the middle-level image features from diluting the label information and, at the same time, helps guide the calculation of the attention masks.
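The fusion described in these contributions can be illustrated with a minimal NumPy sketch of one decoding step. It assumes additive (Bahdanau-style) soft attention of the kind used by SAT [13], loosely mirroring the label-attention, image-feature and hidden-state pathways named in Section 3.1, and it treats the mean of the predicted labels' word embeddings as the label vector. The weight names (`W_v`, `W_h`, `W_l`, `w_a`), the mean pooling and all shapes are hypothetical choices for illustration, not the paper's exact equations.

```python
import numpy as np


def softmax(x: np.ndarray) -> np.ndarray:
    """Numerically stable softmax over the last axis."""
    z = x - x.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)


def label_attention_step(V, h, label_embs, params):
    """One decoding step of label-guided soft attention (illustrative sketch).

    V          : (L, D) middle-level image features, one row per region
    h          : (H,)   decoder hidden state from the previous step
    label_embs : (K, E) word embeddings of the K predicted labels
    params     : hypothetical weights W_v (D, A), W_h (H, A), W_l (E, A), w_a (A,)
    Returns the attention mask alpha (L,) and the attended feature (D,).
    """
    # Label pathway: summarise the predicted labels (mean pooling is an assumption).
    label_vec = label_embs.mean(axis=0)
    # Image-feature, hidden-state and label pathways are projected into a shared
    # A-dimensional space and summed inside tanh, so the labels shape the mask
    # itself rather than being concatenated with the image features.
    hidden = np.tanh(V @ params["W_v"] + h @ params["W_h"] + label_vec @ params["W_l"])
    scores = hidden @ params["w_a"]   # (L,) one score per region
    alpha = softmax(scores)           # attention mask, sums to 1
    context = alpha @ V               # (D,) label-guided image feature
    return alpha, context


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    L, D, H, E, A, K = 196, 512, 256, 300, 128, 3  # illustrative sizes only
    params = {
        "W_v": rng.normal(scale=0.05, size=(D, A)),
        "W_h": rng.normal(scale=0.05, size=(H, A)),
        "W_l": rng.normal(scale=0.05, size=(E, A)),
        "w_a": rng.normal(scale=0.05, size=A),
    }
    alpha, context = label_attention_step(
        rng.normal(size=(L, D)), rng.normal(size=H), rng.normal(size=(K, E)), params
    )
    print(alpha.shape, context.shape, float(alpha.sum()))
```

Because the label term is added inside the `tanh` before the softmax, changing the predicted labels changes the attention mask `alpha` directly, which is the property the contribution relies on, rather than merely appending label information to the middle-level features.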

2. Related Works

2.1. Natural Image Captioning (NIC)

2.2. Remote Sensing Image Captioning (RSIC)

3. Method

3.1. Label-Attention Mechanism

3.1.1. Label-Attention Pathway

3.1.2. Image Feature Pathway

3.1.3. Hidden State Pathway

3.2. Generating Sentences

3.3. Extracting Image Features

4. Experiments

4.1. Dataset Description

4.1.1. UCM-Captions

4.1.2. Sydney-Captions

4.1.3. RSICD

4.1.4. Diversity Analysis

4.2. Evaluation Metrics

4.3. Implementations

4.3.1. Preprocessing of Datasets

4.3.2. Fine-Tuning

4.3.3. Optimization

4.4. Results

4.4.1. Quantitative Comparison

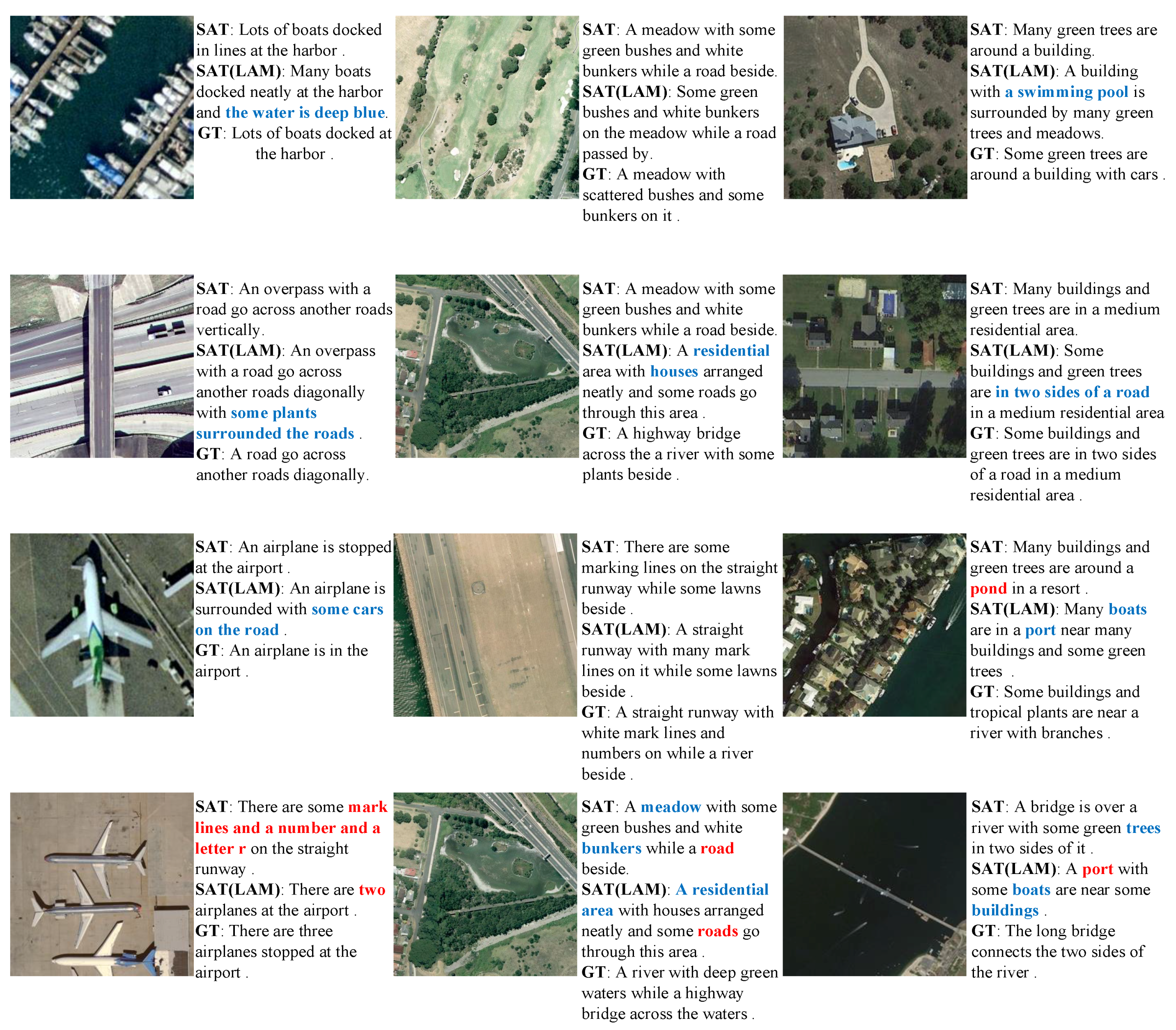

4.4.2. Qualitative Comparison

4.4.3. Parameter Analysis

5. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

- Zhang, C.; Zou, T.; Wang, Z. A Fast Target Detection Algorithm for High Resolution SAR Imagery. J. Remote Sens. 2005, 9, 45–49.

- Wang, S.; Gao, X.; Sun, H.; Zheng, X.; Sun, X. An Aircraft Detection Method Based on Convolutional Neural Networks in High-Resolution SAR Images. J. Radars 2017, 6, 195–203.

- Yan, Z.; Yan, M.; Sun, H.; Fu, K.; Hong, J.; Sun, J.; Zhang, Y.; Sun, X. Cloud and cloud shadow detection using multilevel feature fused segmentation network. IEEE Geosci. Remote Sens. Lett. 2018, 15, 1600–1604.

- Gao, X.; Sun, X.; Zhang, Y.; Yan, M.; Xu, G.; Sun, H.; Jiao, J.; Fu, K. An End-to-End Neural Network for Road Extraction From Remote Sensing Imagery by Multiple Feature Pyramid Network. IEEE Access 2018, 6, 39401–39414.

- Lu, X.; Wang, B.; Zheng, X.; Li, X. Exploring Models and Data for Remote Sensing Image Caption Generation. IEEE Trans. Geosci. Remote Sens. 2018, 56, 2183–2195.

- Ordonez, V.; Kulkarni, G.; Berg, T.L. Im2Text: Describing Images Using 1 Million Captioned Photographs. In Proceedings of the 24th International Conference on Neural Information Processing Systems, Granada, Spain, 12–15 December 2011; pp. 1143–1151.

- Hodosh, M.; Young, P.; Hockenmaier, J. Framing image description as a ranking task: Data, models and evaluation metrics. J. Artif. Intell. Res. 2013, 47, 853–899.

- Sun, C.; Gan, C.; Nevatia, R. Automatic Concept Discovery from Parallel Text and Visual Corpora. In Proceedings of the 2015 IEEE International Conference on Computer Vision, Santiago, Chile, 7–13 December 2015; pp. 2596–2604.

- Gong, Y.; Wang, L.; Hodosh, M.; Hockenmaier, J.; Lazebnik, S. Improving Image-Sentence Embeddings Using Large Weakly Annotated Photo Collections. In Proceedings of the European Conference on Computer Vision, Zurich, Switzerland, 6–12 September 2014; pp. 529–545.

- Ordonez, V.; Han, X.; Kuznetsova, P.; Kulkarni, G.; Mitchell, M.; Yamaguchi, K.; Stratos, K.; Goyal, A.; Dodge, J.; Mensch, A.; et al. Large Scale Retrieval and Generation of Image Descriptions. Int. J. Comput. Vis. 2016, 119, 46–59.

- Farhadi, A.; Hejrati, M.; Sadeghi, M.A.; Young, P.; Rashtchian, C.; Hockenmaier, J.; Forsyth, D.A. Every picture tells a story: Generating sentences from images. In Proceedings of the 11th European Conference on Computer Vision, Heraklion, Greece, 5–11 September 2010; pp. 15–29.

- Kulkarni, G.; Premraj, V.; Dhar, S.; Li, S.; Choi, Y.; Berg, A.C.; Berg, T.L. Baby talk: Understanding and generating simple image descriptions. In Proceedings of the 2011 IEEE Conference on Computer Vision and Pattern Recognition, Colorado Springs, CO, USA, 20–25 June 2011; pp. 1601–1608.

- Xu, K.; Ba, J.; Kiros, R.; Cho, K.; Courville, A.C.; Salakhutdinov, R.; Zemel, R.; Bengio, Y. Show, Attend and Tell: Neural Image Caption Generation with Visual Attention. In Proceedings of the 32nd International Conference on Machine Learning, Lille, France, 6–11 July 2015; pp. 2048–2057.

- Lu, J.; Xiong, C.; Parikh, D.; Socher, R. Knowing When to Look: Adaptive Attention via a Visual Sentinel for Image Captioning. In Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017; pp. 3242–3250.

- Chen, S.; Zhao, Q. Boosted Attention: Leveraging Human Attention for Image Captioning. In Proceedings of the 15th European Conference on Computer Vision (ECCV 2018), Munich, Germany, 8–14 September 2018; pp. 72–88.

- Mao, J.; Xu, W.; Yang, Y.; Wang, J.; Yuille, A. Explain Images with Multimodal Recurrent Neural Networks. arXiv 2014, arXiv:1410.1090.

- Aneja, J.; Deshpande, A.; Schwing, A.G. Convolutional Image Captioning. arXiv 2017, arXiv:1711.09151.

- Zhang, X.; Li, X.; An, J.; Gao, L.; Hou, B.; Li, C. Natural language description of remote sensing images based on deep learning. In Proceedings of the 2017 IEEE International Geoscience and Remote Sensing Symposium, IGARSS 2017, Fort Worth, TX, USA, 23–28 July 2017; pp. 4798–4801.

- Zhang, X.; Wang, X.; Tang, X.; Zhou, H.; Li, C. Description Generation for Remote Sensing Images Using Attribute Attention Mechanism. Remote Sens. 2019, 11, 612.

- Mao, J.; Xu, W.; Yang, Y.; Wang, J.; Huang, Z.; Yuille, A. Deep Captioning with Multimodal Recurrent Neural Networks (m-RNN). arXiv 2015, arXiv:1412.6632.

- Karpathy, A.; Fei-Fei, L. Deep visual-semantic alignments for generating image descriptions. In Proceedings of the 2015 IEEE Conference on Computer Vision and Pattern Recognition, Boston, MA, USA, 7–12 June 2015; pp. 3128–3137.

- Chen, X.; Zitnick, C. Learning a Recurrent Visual Representation for Image Caption Generation. In Proceedings of the 2015 IEEE Conference on Computer Vision and Pattern Recognition, Boston, MA, USA, 7–12 June 2015.

- Fang, H.; Gupta, S.; Iandola, F.N.; Srivastava, R.K.; Deng, L.; Dollar, P.; Gao, J.; He, X.; Mitchell, M.; Platt, J. From captions to visual concepts and back. In Proceedings of the 2015 IEEE Conference on Computer Vision and Pattern Recognition, Boston, MA, USA, 7–12 June 2015; pp. 1473–1482.

- Karpathy, A.; Joulin, A.; Fei-Fei, L. Deep Fragment Embeddings for Bidirectional Image Sentence Mapping. In Proceedings of the 27th International Conference on Neural Information Processing Systems, Montreal, QC, Canada, 8–13 December 2014; pp. 1889–1897.

- Krizhevsky, A.; Sutskever, I.; Hinton, G. ImageNet Classification with Deep Convolutional Neural Networks. In Proceedings of the 25th International Conference on Neural Information Processing Systems, Lake Tahoe, NV, USA, 3–6 December 2012; Volume 141, pp. 1097–1105.

- Simonyan, K.; Zisserman, A. Very Deep Convolutional Networks for Large-Scale Image Recognition. arXiv 2015, arXiv:1409.1556.

- Szegedy, C.; Liu, W.; Jia, Y.; Sermanet, P.; Reed, S.E.; Anguelov, D.; Erhan, D.; Vanhoucke, V.; Rabinovich, A. Going deeper with convolutions. In Proceedings of the 2015 IEEE Conference on Computer Vision and Pattern Recognition, Boston, MA, USA, 7–12 June 2015; pp. 1–9.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep Residual Learning for Image Recognition. In Proceedings of the 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 26 June–1 July 2016; pp. 770–778.

- Vinyals, O.; Toshev, A.; Bengio, S.; Erhan, D. Show and tell: A neural image caption generator. In Proceedings of the 2015 IEEE Conference on Computer Vision and Pattern Recognition, Boston, MA, USA, 7–12 June 2015; pp. 3156–3164.

- Qu, B.; Li, X.; Tao, D.; Lu, X. Deep semantic understanding of high resolution remote sensing image. In Proceedings of the 2016 International Conference on Computer, Information and Telecommunication Systems, Kunming, China, 6–8 July 2016; pp. 1–5.

- Yang, Y.; Newsam, S. Bag-of-visual-words and spatial extensions for land-use classification. In Proceedings of the 18th SIGSPATIAL International Conference on Advances in Geographic Information Systems, San Jose, CA, USA, 2–5 November 2010; pp. 270–279.

- Zhang, F.; Du, B.; Zhang, L. Saliency-Guided Unsupervised Feature Learning for Scene Classification. IEEE Trans. Geosci. Remote Sens. 2015, 53, 2175–2184.

- Li, L.; Tang, S.; Deng, L.; Zhang, Y.; Tian, Q. Image Caption with Global-Local Attention. In Proceedings of the Thirty-First AAAI Conference on Artificial Intelligence, San Francisco, CA, USA, 4–9 February 2017; pp. 4133–4139.

- Yao, T.; Pan, Y.; Li, Y.; Mei, T. Incorporating Copying Mechanism in Image Captioning for Learning Novel Objects. In Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017; pp. 5263–5271.

- Wang, Y.; Lin, Z.; Shen, X.; Cohen, S.; Cottrell, G.W. Skeleton Key: Image Captioning by Skeleton-Attribute Decomposition. In Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017; pp. 7378–7387.

- Russakovsky, O.; Deng, J.; Su, H.; Krause, J.; Satheesh, S.; Ma, S.; Huang, Z.; Karpathy, A.; Khosla, A.; Bernstein, M.S. ImageNet Large Scale Visual Recognition Challenge. Int. J. Comput. Vis. 2015, 115, 211–252.

| Datasets | Categories | Caption Mean Length | Vocab (Before) | Vocab (After) | Count |
|---|---|---|---|---|---|
| UCM-Captions | 21 | 11.5 | 315 | 298 | 2100 |
| Sydney-Captions | 7 | 13.2 | 231 | 179 | 613 |
| RSICD | 30 | 11.4 | 2695 | 1252 | 10,000 |
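
The "Vocab (Before)" and "Vocab (After)" columns can be turned into relative reductions with a few lines of Python. The snippet only does arithmetic on the values in the table; it does not reproduce the vocabulary filtering itself, whose rules are not given in this section.

```python
# Vocabulary sizes (before, after) copied from the table above.
VOCAB = {
    "UCM-Captions": (315, 298),
    "Sydney-Captions": (231, 179),
    "RSICD": (2695, 1252),
}


def reduction(before: int, after: int) -> float:
    """Fraction of the original vocabulary that was removed."""
    return (before - after) / before


for name, (before, after) in VOCAB.items():
    print(f"{name}: {before} -> {after} words ({reduction(before, after):.1%} removed)")
```

This gives about 5.4% for UCM-Captions, 22.5% for Sydney-Captions and 53.5% for RSICD, so RSICD, which has by far the largest original vocabulary, also loses the largest share of it.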

| Methods | B1 | B2 | B3 | B4 | M | R | C | S | |
|---|---|---|---|---|---|---|---|---|---|
| RNNLM [18] | 0.7735 | 0.7119 | 0.6623 | 0.6156 | 0.4198 | 0.7233 | 3.1385 | 0.4677 | 1.0730 |
| SAT [13] | 0.7995 | 0.7365 | 0.6792 | 0.6244 | 0.4171 | 0.7441 | 3.1044 | 0.4951 | 1.0770 |
| FC-Att+LSTM [19] | 0.8102 | 0.7330 | 0.6727 | 0.6188 | 0.4280 | 0.7667 | 3.3700 | 0.4867 | 1.1339 |
| SM-Att+LSTM [19] | 0.8115 | 0.7418 | 0.6814 | 0.6296 | 0.4354 | 0.7793 | 3.386 | 0.4875 | 1.1435 |
| SAT(LAM) | 0.8195 | 0.7764 | 0.7485 | 0.7161 | 0.4837 | 0.7908 | 3.6171 | 0.5024 | 1.2219 |
| SAT(LAM-TL) | 0.8208 | 0.7856 | 0.7525 | 0.7229 | 0.4880 | 0.7933 | 3.7088 | 0.5126 | 1.2450 |
| Adaptive [14] | 0.808 | 0.729 | 0.665 | 0.610 | 0.430 | 0.766 | 3.138 | 0.487 | 1.0848 |
| Adaptive(LAM) | 0.817 | 0.751 | 0.699 | 0.654 | 0.448 | 0.787 | 3.280 | 0.503 | 1.1338 |
| Adaptive(LAM-TL) | 0.857 | 0.812 | 0.775 | 0.743 | 0.510 | 0.826 | 3.758 | 0.535 | 1.2734 |
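
In the result tables, the rightmost, unlabeled score column appears to be the unweighted mean of B4, M, R, C and S (presumably BLEU-4, METEOR, ROUGE_L, CIDEr and SPICE). This is inferred from the numbers rather than stated in this section; the Python check below recomputes that mean for every row of the table above and compares it with the reported value.

```python
from statistics import fmean

# (B4, M, R, C, S, reported rightmost value) copied from the table above.
ROWS = {
    "RNNLM":            (0.6156, 0.4198, 0.7233, 3.1385, 0.4677, 1.0730),
    "SAT":              (0.6244, 0.4171, 0.7441, 3.1044, 0.4951, 1.0770),
    "FC-Att+LSTM":      (0.6188, 0.4280, 0.7667, 3.3700, 0.4867, 1.1339),
    "SM-Att+LSTM":      (0.6296, 0.4354, 0.7793, 3.386, 0.4875, 1.1435),
    "SAT(LAM)":         (0.7161, 0.4837, 0.7908, 3.6171, 0.5024, 1.2219),
    "SAT(LAM-TL)":      (0.7229, 0.4880, 0.7933, 3.7088, 0.5126, 1.2450),
    "Adaptive":         (0.610, 0.430, 0.766, 3.138, 0.487, 1.0848),
    "Adaptive(LAM)":    (0.654, 0.448, 0.787, 3.280, 0.503, 1.1338),
    "Adaptive(LAM-TL)": (0.743, 0.510, 0.826, 3.758, 0.535, 1.2734),
}


def aggregate(b4, m, r, c, s):
    """Hypothesised aggregate score: unweighted mean of five metrics."""
    return fmean((b4, m, r, c, s))


for name, (*metrics, reported) in ROWS.items():
    computed = aggregate(*metrics)
    print(f"{name:18s} computed={computed:.4f} reported={reported:.4f} diff={computed - reported:+.4f}")
```

All nine rows agree to within 0.0015; the largest gap, about 0.0014, occurs for the Adaptive [14] row.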

| Methods | B1 | B2 | B3 | B4 | M | R | C | S | |
|---|---|---|---|---|---|---|---|---|---|
| RNNLM [18] | 0.6861 | 0.6093 | 0.5465 | 0.4917 | 0.3565 | 0.6470 | 2.2129 | 0.3867 | 0.8188 |
| SAT [13] | 0.7391 | 0.6402 | 0.5623 | 0.5248 | 0.3493 | 0.6721 | 2.2015 | 0.3945 | 0.8283 |
| FC-Att+LSTM [19] | 0.7383 | 0.6440 | 0.5701 | 0.5085 | 0.3638 | 0.6689 | 2.2415 | 0.3951 | 0.8355 |
| SM-Att+LSTM [19] | 0.7430 | 0.6535 | 0.5859 | 0.5181 | 0.3641 | 0.6772 | 2.3402 | 0.3976 | 0.8593 |
| SAT(LAM) | 0.7405 | 0.6550 | 0.5904 | 0.5304 | 0.3689 | 0.6814 | 2.3519 | 0.4038 | 0.8671 |
| SAT(LAM-TL) | 0.7425 | 0.6570 | 0.5913 | 0.5369 | 0.3700 | 0.6819 | 2.3563 | 0.4048 | 0.8698 |
| Adaptive [14] | 0.7248 | 0.6301 | 0.5565 | 0.4945 | 0.3609 | 0.6513 | 2.1252 | 0.3973 | 0.8058 |
| Adaptive(LAM) | 0.7323 | 0.6316 | 0.5629 | 0.5074 | 0.3613 | 0.6775 | 2.3455 | 0.4243 | 0.8631 |
| Adaptive(LAM-TL) | 0.7365 | 0.6440 | 0.5835 | 0.5348 | 0.3693 | 0.6827 | 2.3513 | 0.4351 | 0.8746 |

| Methods | B1 | B2 | B3 | B4 | M | R | C | S | |
|---|---|---|---|---|---|---|---|---|---|
| RNNLM [18] | 0.6098 | 0.5078 | 0.4367 | 0.3814 | 0.2936 | 0.5456 | 2.4015 | 0.4259 | 0.8096 |
| SAT [13] | 0.6707 | 0.5438 | 0.4550 | 0.3870 | 0.3203 | 0.5724 | 2.4686 | 0.4539 | 0.8403 |
| FC-Att+LSTM [19] | 0.6671 | 0.5511 | 0.4691 | 0.4059 | 0.3225 | 0.5781 | 2.5763 | 0.4673 | 0.8700 |
| SM-Att+LSTM [19] | 0.6699 | 0.5523 | 0.4703 | 0.4068 | 0.3255 | 0.5802 | 2.5738 | 0.4687 | 0.8710 |
| SAT(LAM) | 0.6753 | 0.5537 | 0.4686 | 0.4026 | 0.3254 | 0.5823 | 2.5850 | 0.4636 | 0.8717 |
| SAT(LAM-TL) | 0.6790 | 0.5616 | 0.4782 | 0.4148 | 0.3298 | 0.5914 | 2.6672 | 0.4707 | 0.8946 |
| Adaptive [14] | 0.6621 | 0.5415 | 0.4667 | 0.4015 | 0.3204 | 0.5823 | 2.5808 | 0.4623 | 0.8694 |
| Adaptive(LAM) | 0.6664 | 0.5486 | 0.4676 | 0.4070 | 0.3230 | 0.5843 | 2.6055 | 0.4673 | 0.8774 |
| Adaptive(LAM-TL) | 0.6756 | 0.5549 | 0.4714 | 0.4077 | 0.3261 | 0.5848 | 2.6285 | 0.4671 | 0.8828 |

| Methods | CNN | B1 | B2 | B3 | B4 | M | R | C | S | |
|---|---|---|---|---|---|---|---|---|---|---|
| SAT [13] | VGG11 | 0.7834 | 0.7248 | 0.6781 | 0.6163 | 0.4190 | 0.7402 | 3.1406 | 0.4728 | 1.0778 |
| | VGG16 | 0.7995 | 0.7365 | 0.6792 | 0.6244 | 0.4171 | 0.7441 | 3.1044 | 0.4951 | 1.0770 |
| | VGG19 | 0.7830 | 0.7172 | 0.6620 | 0.6116 | 0.4109 | 0.7497 | 3.1221 | 0.4684 | 1.0725 |
| SAT(LAM) | VGG11 | 0.7809 | 0.7129 | 0.6598 | 0.6139 | 0.4235 | 0.7424 | 3.2512 | 0.4625 | 1.0987 |
| | VGG16 | 0.8195 | 0.7764 | 0.7485 | 0.7161 | 0.4837 | 0.7908 | 3.6171 | 0.5024 | 1.2219 |
| | VGG19 | 0.7876 | 0.7275 | 0.6785 | 0.6339 | 0.4294 | 0.7424 | 3.2584 | 0.4635 | 1.1055 |

| Methods | CNN | B1 | B2 | B3 | B4 | M | R | C | S | |
|---|---|---|---|---|---|---|---|---|---|---|
| SAT [13] | VGG11 | 0.7226 | 0.6495 | 0.5888 | 0.5329 | 0.3524 | 0.6882 | 2.2567 | 0.4082 | 0.8477 |
| | VGG16 | 0.7391 | 0.6402 | 0.5623 | 0.5248 | 0.3493 | 0.6721 | 2.2015 | 0.3945 | 0.8283 |
| | VGG19 | 0.7170 | 0.6371 | 0.5765 | 0.5268 | 0.3565 | 0.6536 | 2.2477 | 0.4026 | 0.8374 |
| SAT(LAM) | VGG11 | 0.7197 | 0.6394 | 0.5772 | 0.5211 | 0.3626 | 0.6597 | 2.3337 | 0.4021 | 0.8558 |
| | VGG16 | 0.7405 | 0.6550 | 0.5904 | 0.5304 | 0.3689 | 0.6814 | 2.3519 | 0.4038 | 0.8671 |
| | VGG19 | 0.7084 | 0.6249 | 0.5616 | 0.5085 | 0.3663 | 0.6586 | 2.3088 | 0.4123 | 0.8509 |

| Methods | CNN | B1 | B2 | B3 | B4 | M | R | C | S | |
|---|---|---|---|---|---|---|---|---|---|---|
| SAT [13] | VGG11 | 0.6623 | 0.5390 | 0.4576 | 0.3946 | 0.3114 | 0.5711 | 2.4325 | 0.4574 | 0.8334 |
| | VGG16 | 0.6707 | 0.5438 | 0.4550 | 0.3870 | 0.3203 | 0.5724 | 2.4686 | 0.4539 | 0.8403 |
| | VGG19 | 0.6756 | 0.5514 | 0.4605 | 0.3889 | 0.3192 | 0.5687 | 2.4681 | 0.4537 | 0.8397 |
| SAT(LAM) | VGG11 | 0.6681 | 0.5453 | 0.4644 | 0.3931 | 0.3246 | 0.5805 | 2.5801 | 0.4585 | 0.8674 |
| | VGG16 | 0.6753 | 0.5537 | 0.4686 | 0.4026 | 0.3254 | 0.5823 | 2.5850 | 0.4636 | 0.8717 |
| | VGG19 | 0.6780 | 0.5620 | 0.4773 | 0.4118 | 0.3267 | 0.5870 | 2.6662 | 0.4697 | 0.8923 |
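
The three backbone tables compare VGG11, VGG16 and VGG19 feature extractors for SAT and SAT(LAM). The Python snippet below copies their rightmost (aggregate) scores and reports, per table, the best backbone for each method and the gain of SAT(LAM) over SAT. The dataset of each table is not named in the extracted tables, so the tables are keyed by position.

```python
# Rightmost score column of the three backbone tables, in the order they appear.
SCORES = {
    "first table": {
        "SAT": {"VGG11": 1.0778, "VGG16": 1.0770, "VGG19": 1.0725},
        "SAT(LAM)": {"VGG11": 1.0987, "VGG16": 1.2219, "VGG19": 1.1055},
    },
    "second table": {
        "SAT": {"VGG11": 0.8477, "VGG16": 0.8283, "VGG19": 0.8374},
        "SAT(LAM)": {"VGG11": 0.8558, "VGG16": 0.8671, "VGG19": 0.8509},
    },
    "third table": {
        "SAT": {"VGG11": 0.8334, "VGG16": 0.8403, "VGG19": 0.8397},
        "SAT(LAM)": {"VGG11": 0.8674, "VGG16": 0.8717, "VGG19": 0.8923},
    },
}


def best_backbone(per_cnn):
    """Name of the backbone with the highest score."""
    return max(per_cnn, key=per_cnn.get)


def lam_gains(table):
    """SAT(LAM) minus SAT for each backbone, rounded to four decimals."""
    return {cnn: round(table["SAT(LAM)"][cnn] - table["SAT"][cnn], 4) for cnn in table["SAT"]}


for name, table in SCORES.items():
    print(
        f"{name}: best SAT = {best_backbone(table['SAT'])}, "
        f"best SAT(LAM) = {best_backbone(table['SAT(LAM)'])}, "
        f"gains = {lam_gains(table)}"
    )
```

With these values, SAT(LAM) scores higher than SAT for every backbone in all three tables; VGG16 gives the best SAT(LAM) score in the first two tables and VGG19 in the third.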

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Zhang, Z.; Diao, W.; Zhang, W.; Yan, M.; Gao, X.; Sun, X. LAM: Remote Sensing Image Captioning with Label-Attention Mechanism. Remote Sens. 2019, 11, 2349. https://doi.org/10.3390/rs11202349
