RETRACTED: Attention-Based Deep Feature Fusion for the Scene Classification of High-Resolution Remote Sensing Images

Abstract

1. Introduction

- We propose making attention maps derived from the original RGB images an explicit input to end-to-end training, so that the network concentrates on the most salient regions and scene classification accuracy can increase.

- We design a multiplicative fusion of deep features that combines features derived from the attention map with those from the original pixel space, improving performance on scenes with repeated texture.

- We propose a center-based cross-entropy loss function to better distinguish easily confused scene images and to reduce the effect of intra-class diversity on scene representation.

- The proposed ADFF framework is evaluated on three benchmark datasets with limited training data. It achieves the highest accuracy among the compared methods on UC Merced and AID and is second only to Hydra on NWPU-RESISC45. This suggests it can be applied to the land-cover classification of large areas when training data is limited.
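The second and third contributions can be illustrated with a minimal sketch. The equations themselves are not reproduced in this text, so the code below rests on assumptions: fusion is taken to be an element-wise product of two equal-length feature vectors, and the center-based cross-entropy loss is taken to be softmax cross-entropy plus a weighted center-loss term. The function names, the weight `lam`, and the toy class centers are hypothetical and are not taken from the paper.

```python
import math


def multiplicative_fusion(f_cnn, f_sft):
    """Element-wise product of two equal-length feature vectors."""
    if len(f_cnn) != len(f_sft):
        raise ValueError("feature vectors must have the same length")
    return [a * b for a, b in zip(f_cnn, f_sft)]


def cross_entropy(logits, label):
    """Softmax cross-entropy for one sample, computed stably."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[label]


def center_loss(feature, center):
    """Half the squared Euclidean distance between a feature and its class center."""
    return 0.5 * sum((f - c) ** 2 for f, c in zip(feature, center))


def total_loss(logits, label, feature, centers, lam=0.1):
    """Cross-entropy plus a weighted center term (lam is a hypothetical weight)."""
    return cross_entropy(logits, label) + lam * center_loss(feature, centers[label])


# Toy example: two 2-D feature vectors, two classes.
fused = multiplicative_fusion([1.0, 2.0], [0.5, 3.0])  # [0.5, 6.0]
centers = {0: [0.5, 4.0], 1: [0.0, 0.0]}               # hypothetical class centers
loss = total_loss([0.0, 0.0], 0, fused, centers)
print(fused, round(loss, 4))
```

The center term pulls each fused feature toward the center of its own class, which is one way to reduce intra-class spread while the cross-entropy term keeps the classes separable.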

2. Related Work

2.1. Feature Representation

2.2. Attention Mechanism

2.3. Feature Fusion

3. Materials and Methods

3.1. Overall Architecture

| Algorithm 1. The procedure of ADFF | |
|---|---|
| 1 | Step 1: Generate attention maps |
| 2 | Input: The original images and their corresponding labels. |
| 3 | Output: Attention maps. |
| 4 | Fine-tune the ResNet-18 model on the training datasets. |
| 5 | Run forward inference on the full image. |
| 6 | Calculate the weight coefficients from Equation (1). |
| 7 | Obtain the grayscale saliency map from Equation (2). |
| 8 | Return the attention map by upsampling the saliency map to the size of the original image. |
| 9 | Step 2: End-to-end learning |
| 10 | Input: The original images and the attention maps. |
| 11 | Output: Predicted probability P. |
| 12 | While epoch = 1, 2, …, N do |
| 13 | Fuse the features derived from the CNN and SFT streams, which take the original image and the attention map as inputs. |
| 14 | Predict the probability of each image from the fused features. |
| 15 | Calculate the total loss from Equation (6). |
| 16 | Update the parameters by back-propagating the loss from line 15. |
| 17 | End while |
| 18 | Return the predicted probability P. |
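Step 1 of Algorithm 1 can be sketched as follows. Equations (1) and (2) are not reproduced in this text, so the sketch assumes the standard Grad-CAM formulation: each channel weight is the spatial average of the class-score gradient, and the saliency map is the ReLU of the weighted sum of feature maps. The function name `grad_cam`, the `scale` parameter, the normalization to [0, 1], and the nearest-neighbour upsampling are illustrative simplifications, not the authors' implementation.

```python
def grad_cam(activations, gradients, scale=1):
    """Toy Grad-CAM: activations and gradients are K feature maps of size H x W."""
    height, width = len(activations[0]), len(activations[0][0])

    # Channel weights: spatial average of the class-score gradients per feature map.
    weights = [sum(map(sum, g)) / (height * width) for g in gradients]

    # Weighted sum over channels, then ReLU to keep only positive evidence.
    cam = [[max(0.0, sum(w * a[i][j] for w, a in zip(weights, activations)))
            for j in range(width)] for i in range(height)]

    # Rescale to [0, 1] so the map can be read as a grayscale image.
    peak = max(max(row) for row in cam)
    if peak > 0:
        cam = [[v / peak for v in row] for row in cam]

    # Nearest-neighbour upsampling by an integer factor (a simplification).
    return [[cam[i // scale][j // scale] for j in range(width * scale)]
            for i in range(height * scale)]


# Two 2 x 2 feature maps with constant gradients of 1 and -2.
activations = [[[1.0, 2.0], [3.0, 4.0]], [[1.0, 0.0], [0.0, 1.0]]]
gradients = [[[1.0, 1.0], [1.0, 1.0]], [[-2.0, -2.0], [-2.0, -2.0]]]
attention = grad_cam(activations, gradients, scale=2)  # 4 x 4 attention map
for row in attention:
    print([round(v, 2) for v in row])
```

In practice the activations and gradients would come from the last convolutional layer of the fine-tuned network, and a smoother interpolation would usually replace the nearest-neighbour step.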

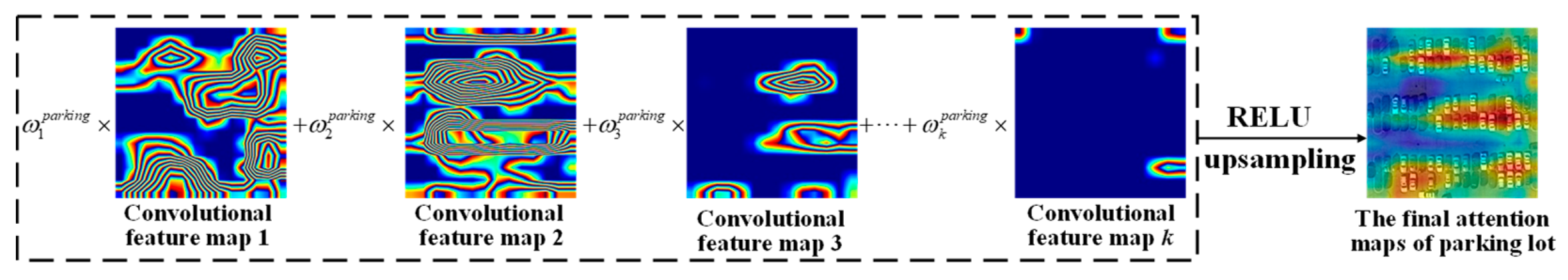

3.2. Attention Maps Generated by Grad-CAM Approach

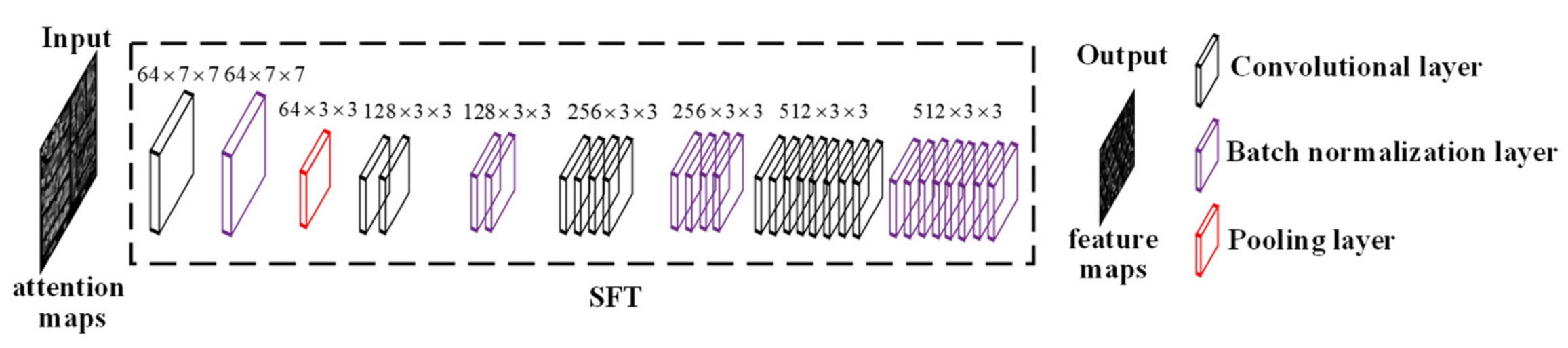

3.3. Multiplicative Fusion of Deep Features Derived from CNN and SFT

3.4. The Center-Based Cross Entropy Loss Function

4. Experimental Results and Setup

4.1. Description of Datasets and Implementation Details

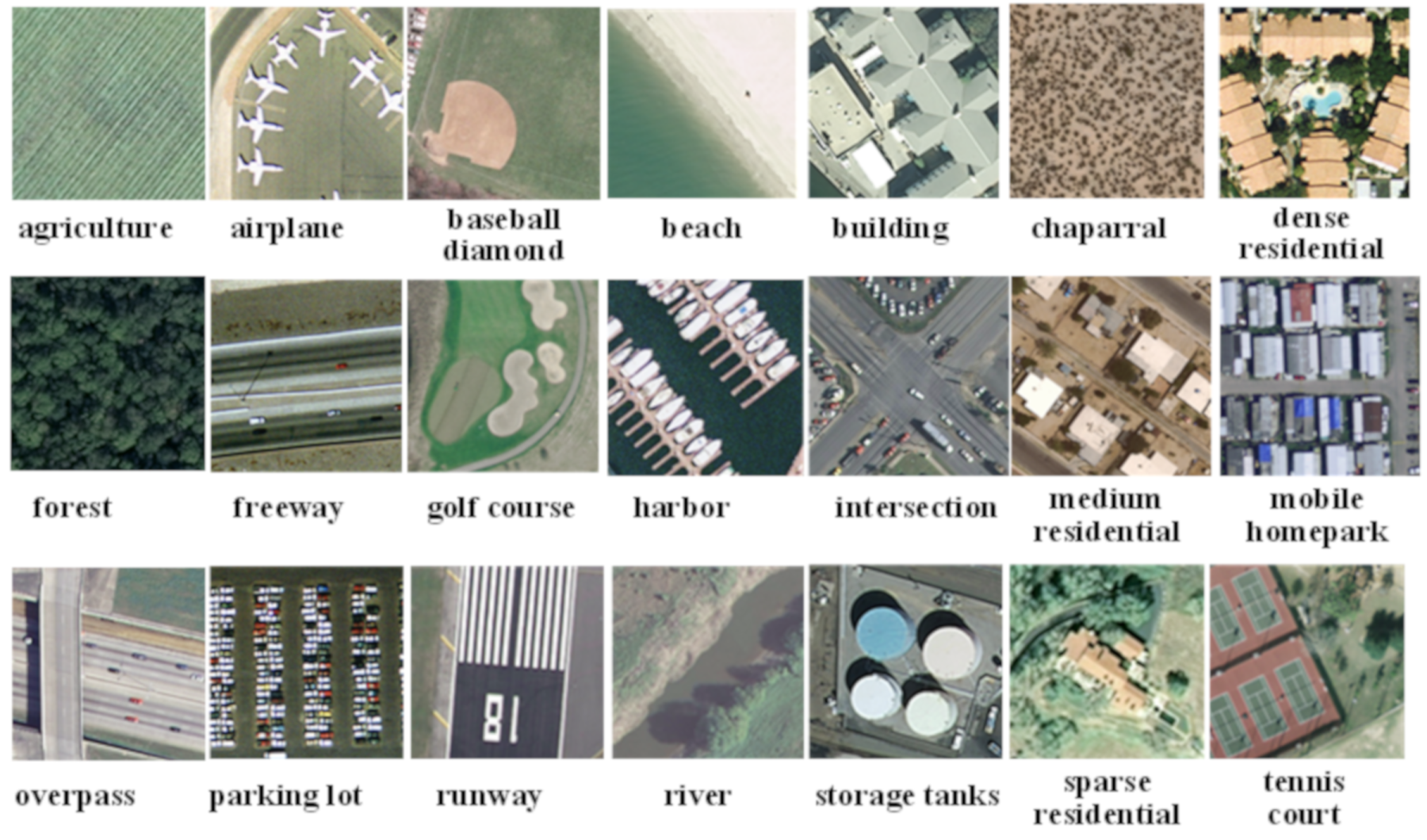

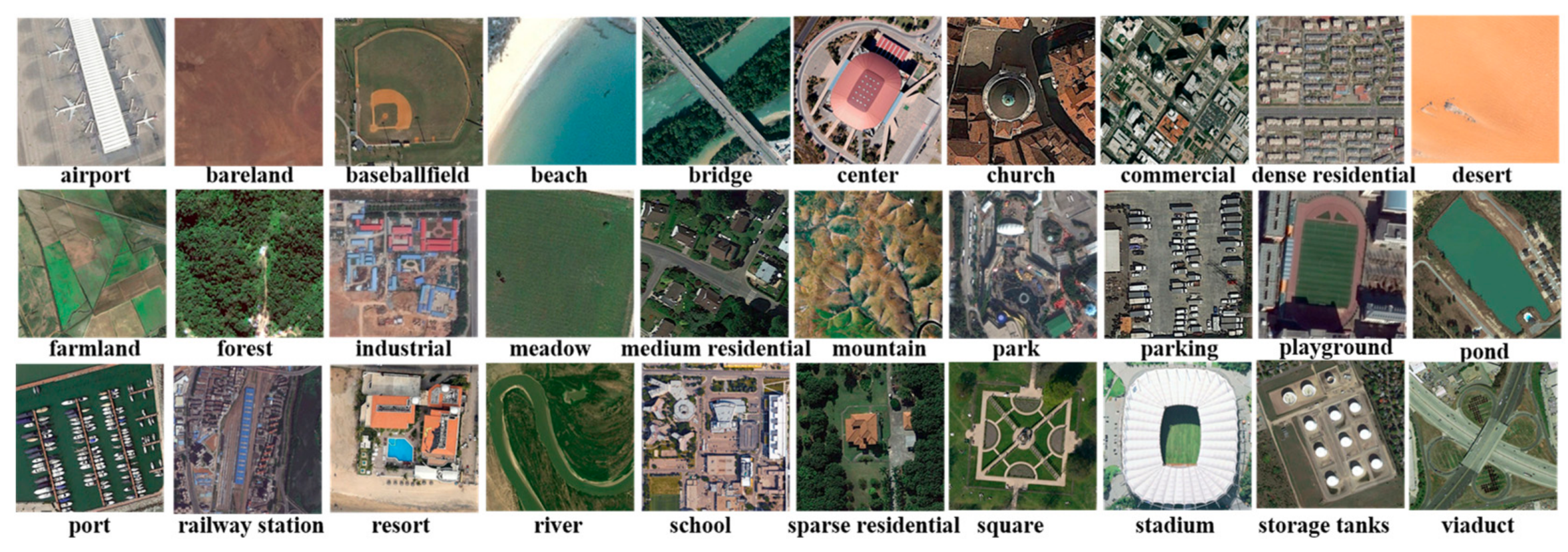

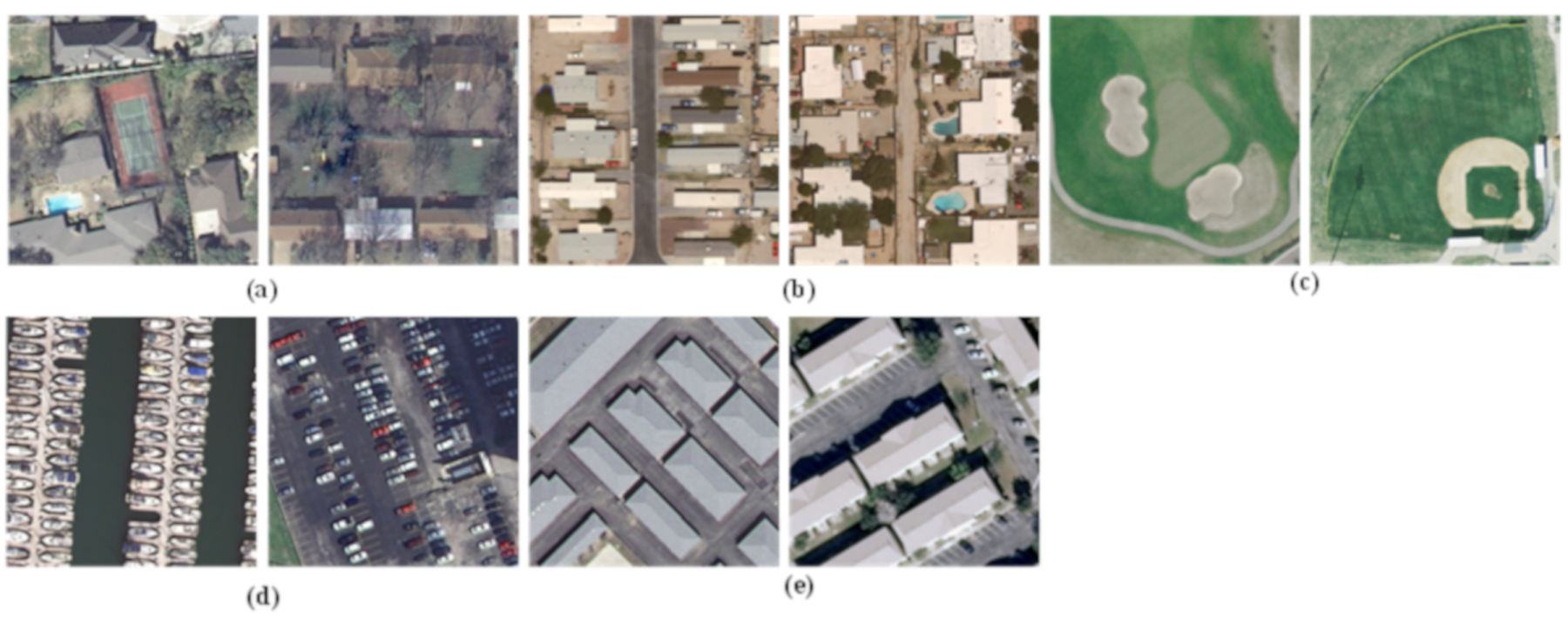

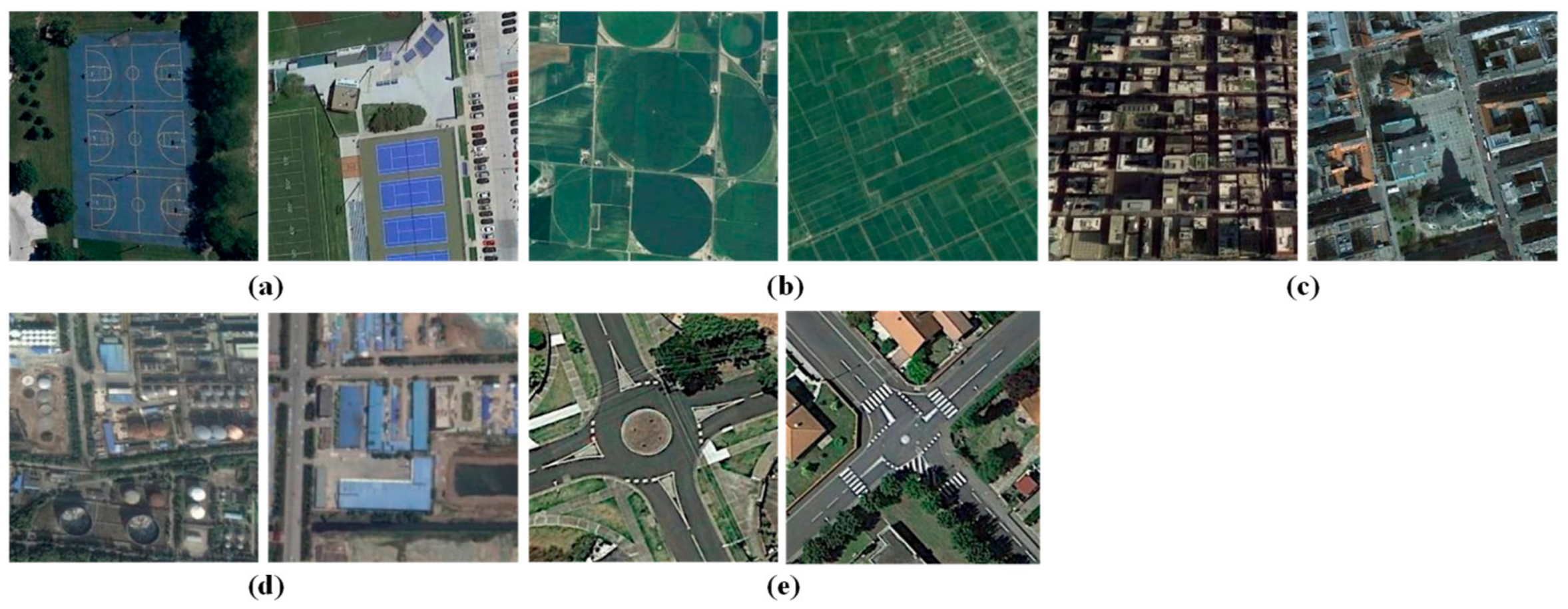

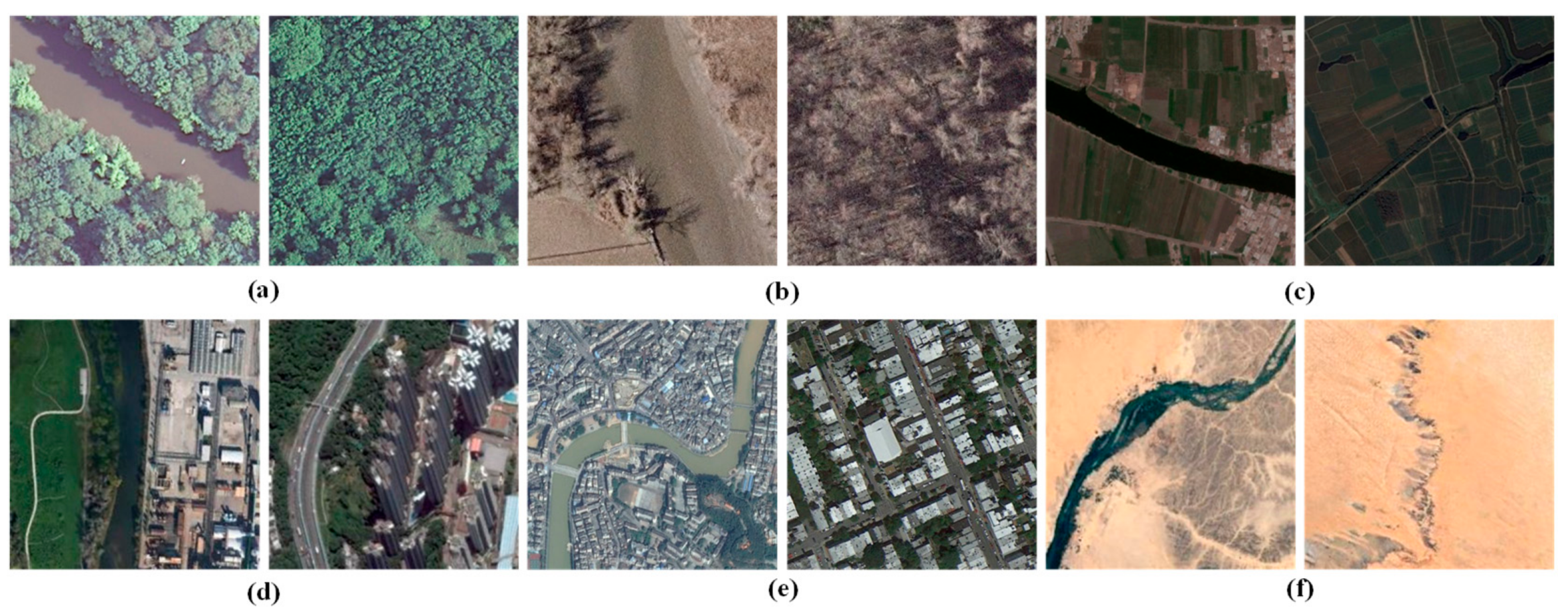

4.1.1. Dataset Description

4.1.2. Compared Approaches and Implementation Details

4.1.3. Evaluation Metrics

4.2. Experimental Results on the UC Merced Dataset

4.3. Experimental Results on the AID Dataset

4.4. Experimental Results on the NWPU-RESISC45 Dataset

5. Discussion

5.1. The Computation Cost of the Proposed ADFF Framework

5.2. Ablation Studies of the Proposed ADFF Framework

5.3. The Influence of Attention Maps on the Proposed ADFF Framework

5.4. The Limits of the Proposed ADFF Framework in Different Types of Images

5.5. The Limits of the Proposed ADFF Framework in Different Environments

6. Conclusions

Author Contributions

Funding

Conflicts of Interest


| Details | UC Merced | AID | NWPU-RESISC45 |
|---|---|---|---|
| Size of each patch (pixels) | 256 × 256 | 600 × 600 | 256 × 256 |
| Spatial resolution | 0.3 m | 0.5–8 m | 0.2–30 m |
| Number of classes | 21 | 30 | 45 |
| Images per class | 100 | 200–400 | 700 |
| Total images | 2100 | 10,000 | 31,500 |

| Methods | Published Year | 50% Training Ratio | 80% Training Ratio |
|---|---|---|---|
| The Proposed ADFF | 2019 | 96.05 ± 0.56 | 97.53 ± 0.63 |
| CaffeNet with DCF | 2018 | 95.26 ± 0.50 | 96.79 ± 0.66 |
| VGG-VD16 with DCF | 2018 | 95.42 ± 0.71 | 97.10 ± 0.85 |
| TEX-Net-LF | 2017 | 95.89 ± 0.37 | 96.62 ± 0.49 |
| CaffeNet | 2017 | 93.98 ± 0.67 | 95.02 ± 0.81 |
| GoogleNet | 2017 | 92.70 ± 0.60 | 94.31 ± 0.89 |
| VGG-16 | 2017 | 94.14 ± 0.69 | 95.21 ± 1.20 |
| Multi-Branch Neural Network | 2018 | 91.33 ± 0.65 | 93.56 ± 0.87 |
| Triplet Network | 2015 | 93.53 ± 0.49 | 95.70 ± 0.60 |
| BOCF | 2018 | 89.31 ± 0.92 | 92.50 ± 1.18 |
| salM3LBP-CLM | 2017 | 91.21 ± 0.75 | 93.75 ± 0.80 |
| Scene Capture | 2018 | 91.11 ± 0.77 | 93.44 ± 0.69 |
| Color-Boosted Saliency-Guided BOW | 2018 | 91.85 ± 0.65 | 93.71 ± 0.57 |

| Methods | Published Year | 50% Training Ratio | 20% Training Ratio |
|---|---|---|---|
| The Proposed ADFF | 2019 | 94.75 ± 0.24 | 93.68 ± 0.29 |
| CaffeNet with DCF | 2018 | 93.10 ± 0.27 | 91.35 ± 0.23 |
| VGG-VD16 with DCF | 2018 | 93.65 ± 0.18 | 91.57 ± 0.10 |
| TEX-Net-LF | 2017 | 92.96 ± 0.18 | 90.87 ± 0.11 |
| CaffeNet | 2017 | 89.53 ± 0.31 | 86.46 ± 0.47 |
| GoogleNet | 2017 | 88.39 ± 0.55 | 85.44 ± 0.40 |
| VGG-16 | 2017 | 89.64 ± 0.36 | 86.59 ± 0.29 |
| Multi-Branch Neural Network | 2018 | 91.46 ± 0.44 | 89.38 ± 0.32 |
| Triplet Network | 2015 | 89.10 ± 0.30 | 86.89 ± 0.22 |
| BOCF | 2018 | 87.63 ± 0.41 | 85.24 ± 0.33 |
| salM3LBP-CLM | 2017 | 89.76 ± 0.45 | 86.92 ± 0.35 |
| Scene Capture | 2018 | 89.43 ± 0.33 | 87.25 ± 0.31 |
| Color-Boosted Saliency-Guided BOW | 2018 | 88.67 ± 0.39 | 86.67 ± 0.38 |

| Methods | Published Year | 20% Training Ratio | 10% Training Ratio |
|---|---|---|---|
| The Proposed ADFF | 2019 | 91.91 ± 0.23 | 90.58 ± 0.19 |
| CaffeNet with DCF | 2018 | 89.20 ± 0.27 | 87.59 ± 0.22 |
| VGG-VD16 with DCF | 2018 | 89.56 ± 0.25 | 87.14 ± 0.19 |
| TEX-Net-LF | 2017 | 88.37 ± 0.32 | 86.05 ± 0.24 |
| CaffeNet | 2017 | 79.85 ± 0.13 | 77.69 ± 0.21 |
| GoogleNet | 2017 | 78.48 ± 0.26 | 77.19 ± 0.38 |
| VGG-16 | 2017 | 79.79 ± 0.15 | 77.47 ± 0.18 |
| Multi-Branch Neural Network | 2018 | 76.38 ± 0.34 | 74.45 ± 0.26 |
| Triplet Network | 2015 | 88.01 ± 0.29 | 86.02 ± 0.25 |
| Hydra | 2019 | 94.51 ± 0.29 | 92.44 ± 0.34 |
| DenseNet | 2017 | 90.96 ± 0.31 | 89.38 ± 0.36 |
| BOCF | 2018 | 85.32 ± 0.17 | 83.65 ± 0.31 |
| salM3LBP-CLM | 2017 | 86.59 ± 0.28 | 85.32 ± 0.17 |
| Scene Capture | 2018 | 86.24 ± 0.36 | 84.84 ± 0.26 |
| Color-Boosted Saliency-Guided BOW | 2018 | 87.05 ± 0.29 | 85.16 ± 0.23 |

| Training Ratio | UC Merced | AID | NWPU-RESISC45 |
|---|---|---|---|
| 10% | - | - | 15,053 s |
| 20% | - | 10,082 s | 28,355 s |
| 50% | 5349 s | 23,274 s | - |
| 80% | 7983 s | - | - |

| Methods | UC Merced | AID | NWPU-RESISC45 |
|---|---|---|---|
| The Proposed ADFF | 97.53 ± 0.63 | 94.75 ± 0.24 | 90.91 ± 0.23 |
| Without Attention Maps | 90.46 ± 0.85 | 86.39 ± 0.45 | 83.44 ± 0.36 |
| Without Multiplicative Fusion of Deep Features | 91.63 ± 0.72 | 88.86 ± 0.40 | 85.16 ± 0.34 |
| Without the Center Loss (Cross-Entropy Loss Only) | 93.79 ± 0.68 | 90.87 ± 0.31 | 87.15 ± 0.30 |
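The ablation table is easier to read as accuracy drops relative to the full framework. The short sketch below does that arithmetic using the mean accuracies exactly as printed in the table; the shortened row labels and variable names are illustrative only.

```python
# Mean overall accuracies (%) copied from the ablation table.
full = {"UC Merced": 97.53, "AID": 94.75, "NWPU-RESISC45": 90.91}
ablations = {
    "Without Attention Maps":
        {"UC Merced": 90.46, "AID": 86.39, "NWPU-RESISC45": 83.44},
    "Without Multiplicative Fusion":
        {"UC Merced": 91.63, "AID": 88.86, "NWPU-RESISC45": 85.16},
    "Without the Center Loss":
        {"UC Merced": 93.79, "AID": 90.87, "NWPU-RESISC45": 87.15},
}

# Drop in percentage points when each component is removed.
drops = {
    name: {ds: round(full[ds] - acc, 2) for ds, acc in row.items()}
    for name, row in ablations.items()
}

for name, row in drops.items():
    print(name, row)
```

On all three datasets the largest drop comes from removing the attention maps, followed by removing the multiplicative fusion and then the center loss.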

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Zhu, R.; Yan, L.; Mo, N.; Liu, Y. RETRACTED: Attention-Based Deep Feature Fusion for the Scene Classification of High-Resolution Remote Sensing Images. Remote Sens. 2019, 11, 1996. https://doi.org/10.3390/rs11171996
