Photogrammetric Solution for Analysis of Out-Of-Plane Movements of a Masonry Structure in a Large-Scale Laboratory Experiment

Abstract

1. Introduction

2. Materials and Methods

2.1. Quasi-Static Cyclic Test

2.2. Photogrammetric Approach

2.2.1. Photographic Sensor

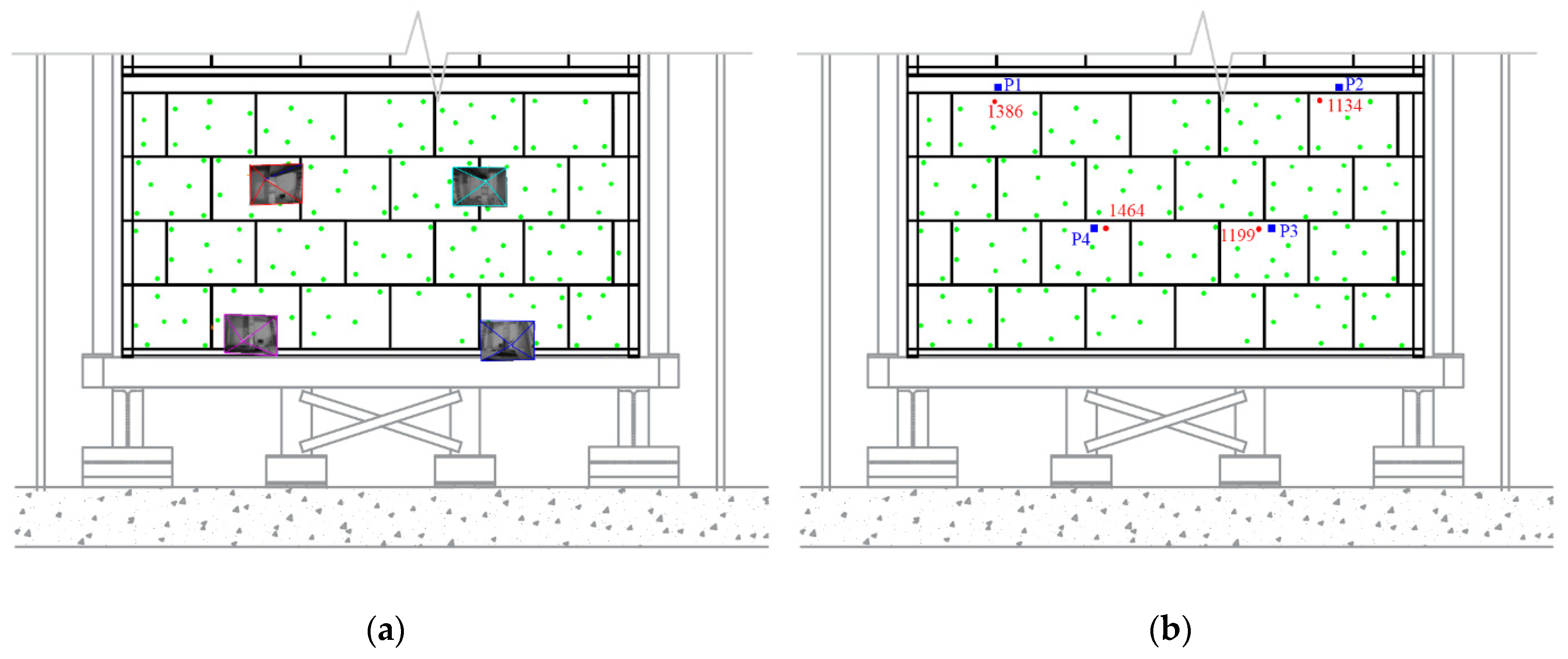

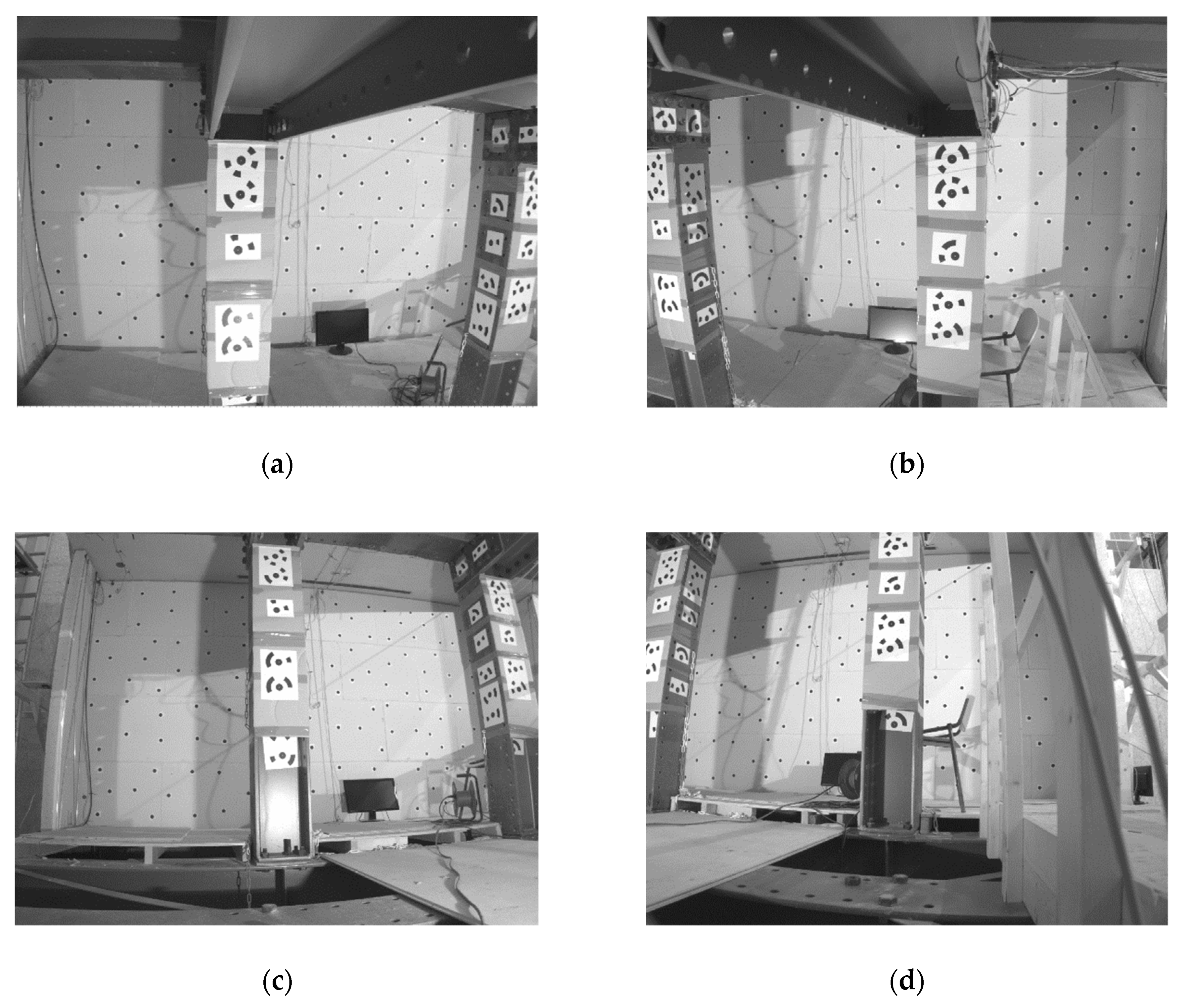

2.2.2. Photogrammetric Network Design

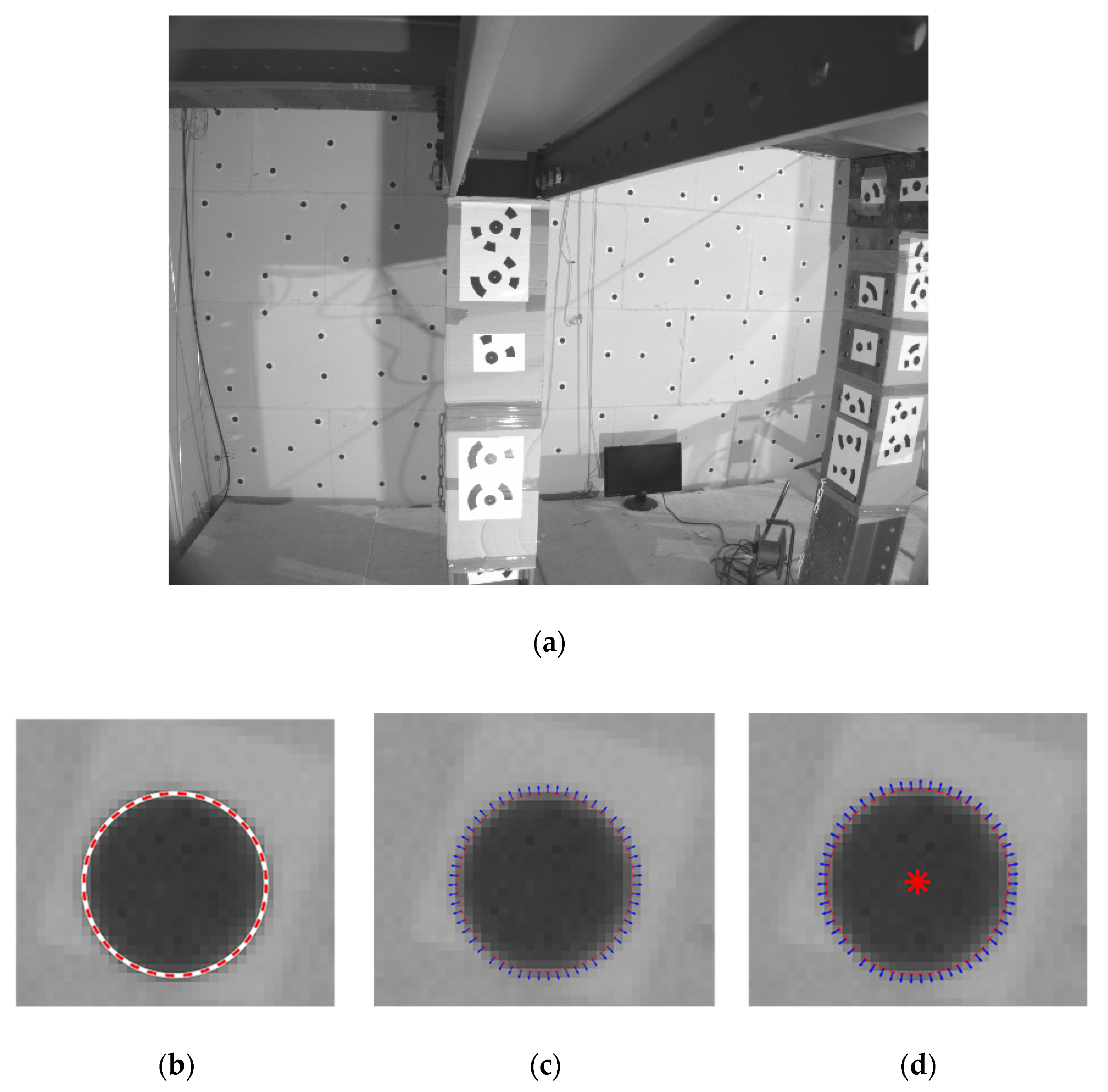

2.2.3. Target Detection and Matching

2.2.4. Photogrammetric Network Adjustment

2.2.5. Target Tracking and Extraction of Out-Of-Plane Movements

- Extraction of the new image coordinates of each tracked target: extracting the new center coordinates first required constraining the search space, i.e., the region of the image in which the target could be found. In the present case study, a search window of fixed size was used, set according to the size of the targets, the camera resolution, and the expected displacement of the target. The coarse-to-fine strategy described in Section 2.2.3 was then applied to detect the new target centers.

- Estimation of the new 3D coordinates: assuming that the exterior orientation of the cameras remained stable throughout the test, the bundle adjustment solution could be used to estimate the new 3D coordinates. To this end, the collinearity condition (Equation (4)) was applied, using the new image coordinates of the target centers as input data.
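
The two tracking steps above can be sketched as follows. This is an illustrative sketch, not the authors' implementation: `refine_center` is a hypothetical intensity-weighted centroid computed inside a fixed square search window around the previous center (a simple stand-in for the coarse-to-fine detector of Section 2.2.3; it assumes bright targets on a darker background, so dark targets would need the image inverted first), and `triangulate` replaces the bundle-adjustment solution of the collinearity equations with a linear (DLT) triangulation from fixed 3 × 4 projection matrices. In practice such a linear estimate would typically serve as the initial value for a nonlinear least-squares refinement. The camera matrices in the usage block are hypothetical.

```python
import numpy as np


def refine_center(image, prev_center, half_window):
    """Intensity-weighted centroid of a bright target inside a fixed square
    search window centred on its previous position (x, y) in pixels.

    Hypothetical stand-in for a coarse-to-fine target detector.
    """
    x0, y0 = int(round(prev_center[0])), int(round(prev_center[1]))
    # Clip the search window to the image borders
    r0, r1 = max(y0 - half_window, 0), min(y0 + half_window + 1, image.shape[0])
    c0, c1 = max(x0 - half_window, 0), min(x0 + half_window + 1, image.shape[1])
    win = image[r0:r1, c0:c1].astype(float)
    win -= win.min()  # remove the background level inside the window
    total = win.sum()
    if total == 0:
        raise ValueError("no target signal inside the search window")
    rows, cols = np.mgrid[r0:r1, c0:c1]
    return (cols * win).sum() / total, (rows * win).sum() / total


def triangulate(projections, image_points):
    """Linear (DLT) triangulation of one target from two or more cameras.

    Each fixed 3x4 projection matrix P (exterior orientation assumed stable)
    and its observation (x, y) give two linear equations derived from the
    homogeneous collinearity relation x ~ P X.
    """
    rows = []
    for P, (x, y) in zip(projections, image_points):
        rows.append(x * P[2] - P[0])
        rows.append(y * P[2] - P[1])
    # Least-squares null vector: right singular vector of the smallest singular value
    _, _, vt = np.linalg.svd(np.asarray(rows))
    X = vt[-1]
    return X[:3] / X[3]


if __name__ == "__main__":
    # Synthetic check with two hypothetical cameras (illustrative values only)
    K = np.array([[1800.0, 0.0, 1296.0], [0.0, 1800.0, 972.0], [0.0, 0.0, 1.0]])
    P_a = K @ np.hstack([np.eye(3), np.zeros((3, 1))])
    P_b = K @ np.hstack([np.eye(3), np.array([[-500.0], [0.0], [0.0]])])
    X_true = np.array([100.0, -50.0, 4000.0, 1.0])
    obs = [(P @ X_true)[:2] / (P @ X_true)[2] for P in (P_a, P_b)]
    print(triangulate([P_a, P_b], obs))
```

The window half-size plays the role of the fixed search window described above: it must exceed the expected inter-epoch displacement in pixels while staying small enough to exclude neighboring targets.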

3. Experimental Results

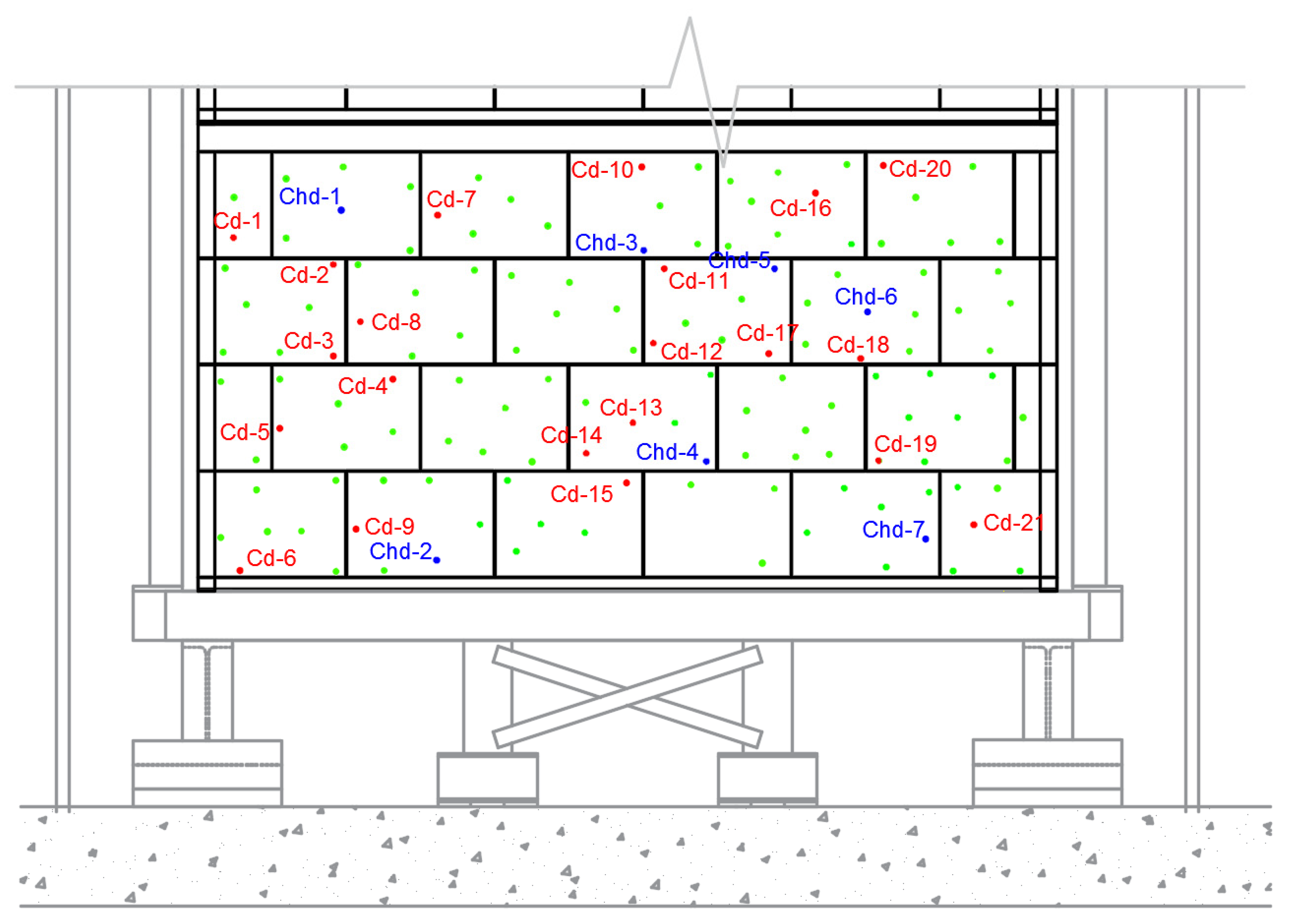

3.1. Network Design and Data Acquisition

3.2. Photogrammetric Network Adjustment

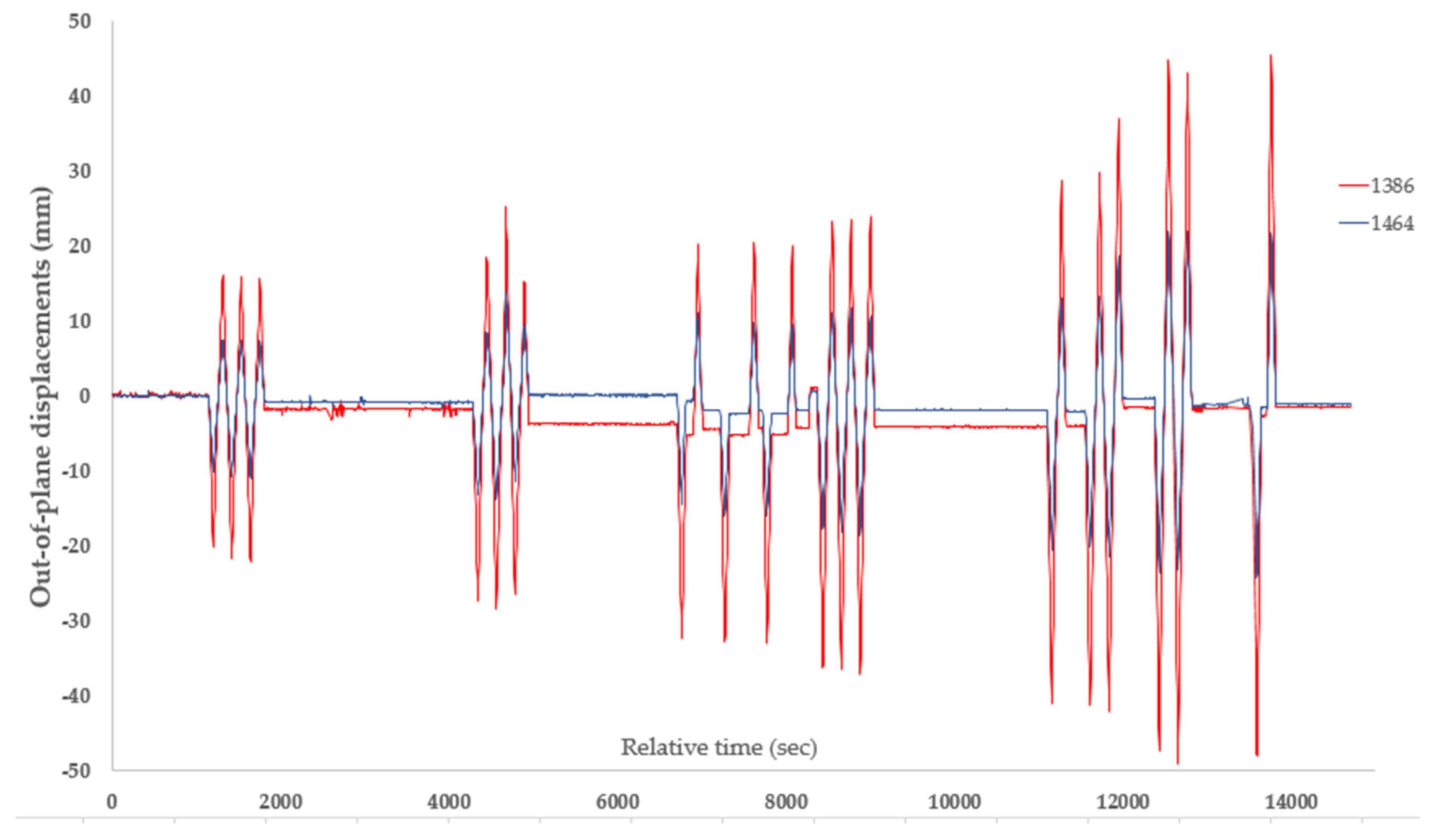

3.3. Analysis of the Out-Of-Plane Displacements Experienced by the Masonry Wall

3.4. Accuracy Assessment of the Photogrammetric Approach

4. Discussion and Conclusions

- In comparison with traditional contact sensors, such as linear variable differential transformers (LVDTs), potentiometers, or displacement gauges, the proposed methodology is contactless and can monitor the whole surface of the specimen with a single photogrammetric network and several tracking targets. In addition, the contact nature of traditional sensors requires placing them at specific positions, with the risk of missing the deformation at other locations, or even the cracks.

- The residual error obtained during the detection of the circular targets, estimated at 0.09 pixels, corroborates the robustness of the proposed approach.

- The proposed method proved efficient, requiring 115 min to process the whole dataset of 9896 images.

- By comparing the measurements obtained with the traditional contact sensors and with the contactless method, it was possible to conclude that the photogrammetric approach detected the out-of-plane movements of the masonry wall with sub-pixel accuracy, estimated at 0.58 pixels (0.99 mm).

- The proposed approach can be applied to specimens subjected to quasi-static cyclic tests, for which laser scanning may not be applicable continuously due to the time needed to scan each epoch. The approach is also low cost, requiring only several digital cameras and printed targets.

- The method also demonstrates its flexibility through its application to texture-less structures, for which digital image correlation and structure from motion cannot be applied properly. These two methods require proper preparation of the specimen surface: a white layer is first applied over the specimen, followed by a pattern of randomly distributed black spots (speckle pattern) [32]. Furthermore, structure from motion requires removing the noise from the point cloud and estimating the normal of each point before a change detection algorithm can be applied, such as a cloud-to-cloud comparison by means of the M3C2 algorithm proposed by Lague et al. [50], or a comparison between the DSMs obtained at different stages, as proposed by Scaioni et al. [17].
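
The processing-time and accuracy figures above can be turned into two derived quantities with simple arithmetic. The sketch below computes the mean processing time per image and the image scale implied by expressing the same accuracy as 0.58 pixels and 0.99 mm; treating both numbers as describing the same error (and therefore their ratio as a mean object-space pixel size) is an assumption, not a value stated by the authors.

```python
PROCESSING_MIN = 115    # total processing time reported above
N_IMAGES = 9896         # number of images in the processed dataset
ACCURACY_PX, ACCURACY_MM = 0.58, 0.99

seconds_per_image = PROCESSING_MIN * 60 / N_IMAGES
images_per_minute = N_IMAGES / PROCESSING_MIN
# Implied mean image scale, assuming both figures describe the same error
mm_per_pixel = ACCURACY_MM / ACCURACY_PX

if __name__ == "__main__":
    print(f"{seconds_per_image:.2f} s per image, {images_per_minute:.0f} images per minute")
    print(f"implied scale: {mm_per_pixel:.2f} mm per pixel")
```

By this calculation, the processing rate is roughly 0.7 s per image, and the implied scale is about 1.7 mm per pixel.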

Author Contributions

Acknowledgments

Conflicts of Interest

References

1. Scaioni, M.; Marsella, M.; Crosetto, M.; Tornatore, V.; Wang, J. Geodetic and remote-sensing sensors for dam deformation monitoring. Sensors 2018, 18, 3682.

2. Alba, M.; Fregonese, L.; Prandi, F.; Scaioni, M.; Valgoi, P. Structural Monitoring of a Large Dam by Terrestrial Laser Scanning. In Proceedings of the ISPRS Commission V Symposium: Image Engineering and Vision Metrology, Dresden, Germany, 25–27 September 2006.

3. González-Aguilera, D.; Gómez-Lahoz, J.; Sánchez, J. A new approach for structural monitoring of large dams with a three-dimensional laser scanner. Sensors 2008, 8, 5866–5883.

4. Jiang, R.; Jáuregui, D.V.; White, K.R. Close-range photogrammetry applications in bridge measurement: Literature review. Measurement 2008, 41, 823–834.

5. Gawronek, P.; Makuch, M. TLS measurement during static load testing of a railway bridge. ISPRS Int. J. Geo-Inf. 2019, 8, 44.

6. Valença, J.; Júlio, E.; Araújo, H. Application of photogrammetry to bridge monitoring. In Proceedings of the 12th Conference, Structural Faults and Repair, Edinburgh, UK, 10–12 June 2008.

7. Yu, C.; Zhang, G.; Liu, X.; Fan, L.; Hai, H. Monitoring bridge dynamic deformation in vibration by digital photography. In Proceedings of the 3rd International Conference on Environmental Science and Material Application (ESMA2017), Chongqing, China, 25–26 November 2018.

8. Lee, H.; Han, D. Deformation measurement of a railroad bridge using a photogrammetric board without control point survey. J. Sens. 2018.

9. Puente, I.; Lindenbergh, R.; Van Natijne, A.; Esposito, R.; Schipper, R. Monitoring of progressive damage in buildings using laser scan data. In Proceedings of the International Archives of the Photogrammetry, Remote Sensing and Spatial Information Sciences-ISPRS Archives, Riva del Garda, Italy, 4–7 June 2018; Remondino, F., Toschi, I., Fuse, T., Eds.; pp. 923–929.

10. Gazić, G.; Dokšanović, T.; Draganić, H. Evaluation of out-of-plane deformation of masonry infill walls due to in-plane loading by digital image correlation. Mater. Today Proc. 2018, 5, 26661–26666.

11. Tung, S.-H.; Shih, M.-H.; Sung, W.-P. Applying the digital-image-correlation technique to measure the deformation of an old building’s column retrofitted with steel plate in an in situ pushover test. Sadhana 2014, 39, 699–711.

12. Salmanpour, A.; Mojsilovic, N. Application of digital image correlation for strain measurements of large masonry walls. In Proceedings of the APCOM & ISCM, Singapore, 11–14 December 2013.

13. Yang, H.; Xu, X.; Neumann, I. Deformation behavior analysis of composite structures under monotonic loads based on terrestrial laser scanning technology. Compos. Struct. 2018, 183, 594–599.

14. Lehman, D.E.; Turgeon, J.A.; Birely, A.C.; Hart, C.R.; Marley, K.P.; Kuchma, D.A.; Lowes, L.N. Seismic behavior of a modern concrete coupled wall. J. Struct. Eng. 2013, 139, 1371–1381.

15. Scaioni, M.; Feng, T.; Barazzetti, L.; Previtali, M.; Roncella, R. Image-based deformation measurement. Appl. Geomat. 2015, 7, 75–90.

16. Fedele, R.; Scaioni, M.; Barazzetti, L.; Rosati, G.; Biolzi, L. Delamination tests on CFRP-reinforced masonry pillars: Optical monitoring and mechanical modeling. Cem. Concr. Compos. 2014, 45, 243–254.

17. Scaioni, M.; Feng, T.; Barazzetti, L.; Previtali, M.; Lu, P.; Qiao, G.; Wu, H.; Chen, W.; Tong, X.; Wang, W. Some applications of 2-D and 3-D photogrammetry during laboratory experiments for hydrogeological risk assessment. Geomat. Nat. Hazards Risk 2015, 6, 473–496.

18. González-Aguilera, D.; Gómez-Lahoz, J.; Muñoz-Nieto, Á.; Herrero-Pascual, J. Monitoring the health of an emblematic monument from terrestrial laser scanner. Nondestruct. Test. Eval. 2008, 23, 301–315.

19. Gordon, S.J.; Lichti, D.D. Modeling terrestrial laser scanner data for precise structural deformation measurement. J. Surv. Eng. 2007, 133, 72–80.

20. Lowes, L.N.; Lehman, D.E.; Birely, A.C.; Kuchma, D.A.; Marley, K.P.; Hart, C.R. Earthquake response of slender planar concrete walls with modern detailing. Eng. Struct. 2012, 43, 31–47.

21. Garcia-Martin, R.; Bautista-De Castro, Á.; Sánchez-Aparicio, L.J.; Fueyo, J.G.; Gonzalez-Aguilera, D. Combining digital image correlation and probabilistic approaches for the reliability analysis of composite pressure vessels. Arch. Civ. Mech. Eng. 2019, 19, 224–239.

22. Sánchez-Aparicio, L.; Villarino, A.; García-Gago, J.; González-Aguilera, D. Photogrammetric, geometrical, and numerical strategies to evaluate initial and current conditions in historical constructions: A test case in the church of San Lorenzo (Zamora, Spain). Remote Sens. 2016, 8, 60.

23. Barazzetti, L.; Scaioni, M. Development and Implementation of Image-based Algorithms for Measurement of Deformations in Material Testing. Sensors 2010, 10, 7469–7495.

24. Tong, X.; Luan, K.; Liu, X.; Liu, S.; Chen, P.; Jin, Y.; Lu, W.; Huang, B. Tri-camera high-speed videogrammetry for three-dimensional measurement of laminated rubber bearings based on the large-scale shaking table. Remote Sens. 2018, 10, 1902.

25. Tong, X.; Gao, S.; Liu, S.; Ye, Z.; Chen, P.; Yan, S.; Zhao, X.; Du, L.; Liu, X.; Luan, K. Monitoring a progressive collapse test of a spherical lattice shell using high-speed videogrammetry. Photogramm. Rec. 2017, 32, 230–254.

26. Liu, X.; Tong, X.; Yin, X.; Gu, X.; Ye, Z. Videogrammetric technique for three-dimensional structural progressive collapse measurement. Measurement 2015, 63, 87–99.

27. Baqersad, J.; Poozesh, P.; Niezrecki, C.; Avitabile, P. Photogrammetry and optical methods in structural dynamics—A review. Mech. Syst. Signal Process. 2017, 86, 17–34.

28. Hallermann, N.; Morgenthal, G.; Rodehorst, V. Unmanned aerial systems (UAS)—Case studies of vision based monitoring of ageing structures. In Proceedings of the Non-Destructive Testing in Civil Engineering, Berlin, Germany, 15–17 September 2015.

29. Teza, G.; Pesci, A.; Ninfo, A. Morphological analysis for architectural applications: Comparison between laser scanning and structure-from-motion photogrammetry. J. Surv. Eng. 2016, 142, 04016004.

30. Byrne, J.; O’Keeffe, E.; Lennon, D.; Laefer, D.F. 3D reconstructions using unstabilized video footage from an unmanned aerial vehicle. J. Imaging 2017, 3, 15.

31. Chen, C.-C.; Wu, W.-H.; Tseng, H.-Z.; Chen, C.-H.; Lai, G. Application of digital photogrammetry techniques in identifying the mode shape ratios of stay cables with multiple camcorders. Measurement 2015, 75, 134–146.

32. Pan, B. Digital image correlation for surface deformation measurement: Historical developments, recent advances and future goals. Meas. Sci. Technol. 2018, 29, 082001.

33. Esposito, R.; Messali, F.; Ravenshorst, G.J.; Schipper, H.R.; Rots, J.G. Seismic assessment of a lab-tested two-storey unreinforced masonry Dutch terraced house. Bull. Earthq. Eng. 2019, 17, 4601–4623.

34. Fraser, C.S. Network design considerations for non-topographic photogrammetry. Photogramm. Eng. Remote Sens. 1984, 50, 1115–1126.

35. Esposito, R.; Jafari, S.; Ravenshorst, G.; Schipper, H.; Rots, J. Influence of the behaviour of calcium silicate brick and element masonry on the lateral capacity of structures. In Proceedings of the 10th Australasian Masonry Conference (AMC), Sydney, Australia, 11–14 February 2018; Masia, M., Alterman, D., Totoev, Y., Page, A., Eds.

36. Trujillo-Pino, A.; Krissian, K.; Alemán-Flores, M.; Santana-Cedrés, D. Accurate subpixel edge location based on partial area effect. Image Vis. Comput. 2013, 31, 72–90.

37. Zhang, Y.; Jiang, J.; Zhang, G.; Lu, Y. High-accuracy location algorithm of planetary centers for spacecraft autonomous optical navigation. Acta Astronaut. 2019, 161, 542–551.

38. Burson-Thomas, C.B.; Wellman, R.; Harvey, T.J.; Wood, R.J. Water droplet erosion of aeroengine fan blades: The importance of form. Wear 2019, 426, 507–517.

39. Li, Y.; Huo, J.; Yang, M.; Zhang, G. Algorithm of locating the sphere center imaging point based on novel edge model and Zernike moments for vision measurement. J. Mod. Opt. 2019, 66, 218–227.

40. Gonzalez-Aguilera, D.; López-Fernández, L.; Rodriguez-Gonzalvez, P.; Hernandez-Lopez, D.; Guerrero, D.; Remondino, F.; Menna, F.; Nocerino, E.; Toschi, I.; Ballabeni, A. Graphos—Open-source software for photogrammetric applications. Photogramm. Rec. 2018, 33, 11–29.

41. Sánchez-Aparicio, L.J.; Del Pozo, S.; Ramos, L.F.; Arce, A.; Fernandes, F.M. Heritage site preservation with combined radiometric and geometric analysis of TLS data. Autom. Constr. 2018, 85, 24–39.

42. Hartley, R.; Zisserman, A. Multiple View Geometry in Computer Vision; Cambridge University Press: Cambridge, UK, 2003.

43. Abdel-Aziz, Y.; Karara, H.; Hauck, M. Direct linear transformation from comparator coordinates into object space coordinates in close-range photogrammetry. Photogramm. Eng. Remote Sens. 2015, 81, 103–107.

44. Kraus, K. Photogrammetry. V. 2, Advanced Methods and Applications; Dümmlers: Berlin, Germany, 1997.

45. Grafarend, E.W.; Sansò, F. Optimization and Design of Geodetic Networks; Springer Science & Business Media: Berlin, Germany, 2012.

46. Ballard, D.H. Generalizing the Hough transform to detect arbitrary shapes. Pattern Recognit. 1981, 13, 111–122.

47. Moré, J.J. The Levenberg-Marquardt algorithm: Implementation and theory. In Proceedings of the Conference on Numerical Analysis, Dundee, UK, 28 June–1 July 1977; pp. 105–116.

48. Liu, C.; Hu, W. Real-time geometric fitting and pose estimation for surface of revolution. Pattern Recognit. 2019, 85, 90–108.

49. Fraser, C.S. Digital camera self-calibration. ISPRS J. Photogramm. Remote Sens. 1997, 52, 149–159.

50. Lague, D.; Brodu, N.; Leroux, J. Accurate 3D comparison of complex topography with terrestrial laser scanner: Application to the Rangitikei canyon (NZ). ISPRS J. Photogramm. Remote Sens. 2013, 82, 10–26.

| Basler acA2500-14gm | |
|---|---|
| Type of sensor | MT9P031 (CMOS) |
| Sensor size | 5.7 × 4.3 mm |
| Pixel size | 2.2 µm |
| Resolution (H × V) | 2592 × 1944 pixels |
| Maximum frame rate | 14 fps |
| Focal length | 4 mm |
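
The camera specifications above are mutually consistent under an ideal pinhole model, and they also fix the object-space size of one pixel at a given distance. The sketch below recomputes the sensor size from the resolution and pixel pitch, the resulting field of view for the 4 mm lens, and the ground sample distance (GSD = distance × pixel size / focal length). Lens distortion is ignored, and the 4 m camera-to-object distance in the usage block is a hypothetical value, not one taken from the experiment.

```python
import math


def sensor_size_mm(width_px, height_px, pixel_mm):
    """Physical sensor dimensions from resolution and pixel pitch."""
    return width_px * pixel_mm, height_px * pixel_mm


def field_of_view_deg(extent_mm, focal_mm):
    """Full angular field of view for one sensor dimension (pinhole model)."""
    return math.degrees(2.0 * math.atan(extent_mm / (2.0 * focal_mm)))


def ground_sample_distance_mm(distance_mm, pixel_mm, focal_mm):
    """Object-space size of one pixel at a given camera-to-object distance."""
    return distance_mm * pixel_mm / focal_mm


if __name__ == "__main__":
    w, h = sensor_size_mm(2592, 1944, 2.2e-3)
    print(f"sensor: {w:.2f} x {h:.2f} mm")
    print(f"FOV: {field_of_view_deg(w, 4.0):.1f} x {field_of_view_deg(h, 4.0):.1f} deg")
    # 4 m is a hypothetical distance, not a value from the experiment
    print(f"GSD at 4 m: {ground_sample_distance_mm(4000.0, 2.2e-3, 4.0):.2f} mm/pixel")
```

The computed 5.70 × 4.28 mm sensor agrees with the rounded 5.7 × 4.3 mm listed in the table.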

| Parameter | | Initial Values (Laboratory Calibration) | Refined Values (Self-Calibration) |
|---|---|---|---|
| Focal length (mm) | | 4.043 ± 0.002 | 4.025 |
| Format size (mm) | Height | 5.661 ± 0.005 | 5.655 |
| | Width | 4.281 ± 0.005 | 4.277 |
| Principal point (mm) | X value | 2.982 ± 1.314 × 10⁻³ | 2.989 |
| | Y value | 2.264 ± 2.673 × 10⁻³ | 2.233 |
| Radial lens distortion | K1 value | 1.502 × 10⁻² ± 2.641 × 10⁻⁵ | 1.151 × 10⁻² |
| | K2 value | 7.084 × 10⁻⁵ ± 3.242 × 10⁻⁶ | 4.422 × 10⁻⁵ |
| | K3 value | 3.447 × 10⁻⁶ ± 1.113 × 10⁻⁸ | 3.443 × 10⁻⁶ |
| Decentering lens distortion | P1 value | 4.851 × 10⁻⁴ ± 1.235 × 10⁻⁵ | 1.334 × 10⁻⁴ |
| | P2 value | 1.461 × 10⁻³ ± 3.471 × 10⁻⁵ | −7.840 × 10⁻⁴ |
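
The distortion coefficients in the table parameterize a radial (K1 to K3) plus decentering (P1, P2) lens model. The sketch below evaluates the correction terms of the Brown model in the form commonly written in close-range photogrammetry, for image coordinates in millimetres already reduced to the principal point. The exact formulation and sign convention used by the authors' software are not stated in this excerpt, so both are assumptions here: depending on the convention, the returned terms are added to or subtracted from the measured coordinates.

```python
def distortion_correction(x, y, k1, k2, k3, p1, p2):
    """Correction (dx, dy), in mm, for image coordinates (x, y) in mm that
    are already reduced to the principal point.

    Radial terms K1..K3 and decentering terms P1, P2 in the Brown form
    (formulation and sign convention are assumptions).
    """
    r2 = x * x + y * y
    radial = k1 * r2 + k2 * r2 ** 2 + k3 * r2 ** 3
    dx = x * radial + p1 * (r2 + 2.0 * x * x) + 2.0 * p2 * x * y
    dy = y * radial + 2.0 * p1 * x * y + p2 * (r2 + 2.0 * y * y)
    return dx, dy


# Refined (self-calibration) values from the table above
REFINED = dict(k1=1.151e-2, k2=4.422e-5, k3=3.443e-6, p1=1.334e-4, p2=-7.840e-4)

if __name__ == "__main__":
    # A hypothetical point 2.0 mm and 1.5 mm away from the principal point
    print(distortion_correction(2.0, 1.5, **REFINED))
```

The correction vanishes at the principal point and grows rapidly toward the image corners, which is expected for a short 4 mm lens.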

| ID | Expected Value (mm) | Obtained Value (mm) | Absolute Error (mm) | Relative Error (%) |
|---|---|---|---|---|
| Chd-1 | 35.00 | 34.42 | 0.58 (0.34) | 1.65 |
| Chd-2 | 35.00 | 34.96 | 0.04 (0.00) | 0.11 |
| Chd-3 | 35.00 | 35.77 | −0.77 (0.45) | −2.20 |
| Chd-4 | 35.00 | 35.25 | −0.25 (0.15) | −0.71 |
| Chd-5 | 35.00 | 34.14 | 0.86 (0.50) | 2.45 |
| Chd-6 | 35.00 | 35.86 | −0.86 (0.50) | −2.45 |
| Chd-7 | 35.00 | 34.74 | 0.26 (0.15) | 0.74 |
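
The error columns of the table above follow directly from the expected and obtained lengths: absolute error = expected − obtained, and relative error = absolute error / expected × 100. The sketch below recomputes them and, as an extra summary not reported in the table, the RMSE over the seven rows (about 0.60 mm by this calculation). Reading the Chd rows as check distances with a nominal length of 35.00 mm is an assumption based on their labels. The listed relative errors appear to be truncated rather than rounded (100 × 0.58 / 35 = 1.657 %, listed as 1.65 %).

```python
import math

EXPECTED_MM = 35.00
OBTAINED_MM = {
    "Chd-1": 34.42, "Chd-2": 34.96, "Chd-3": 35.77, "Chd-4": 35.25,
    "Chd-5": 34.14, "Chd-6": 35.86, "Chd-7": 34.74,
}


def check_errors(expected, obtained):
    """Absolute error (expected - obtained, mm) and relative error (%)."""
    abs_err = expected - obtained
    return abs_err, 100.0 * abs_err / expected


def rmse(values):
    """Root mean square of a sequence of errors."""
    return math.sqrt(sum(v * v for v in values) / len(values))


if __name__ == "__main__":
    abs_errors = []
    for name, value in OBTAINED_MM.items():
        a, r = check_errors(EXPECTED_MM, value)
        abs_errors.append(a)
        print(f"{name}: {a:+.2f} mm ({r:+.3f} %)")
    print(f"RMSE over the check distances: {rmse(abs_errors):.2f} mm")
```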

| Parameters | | Values |
|---|---|---|
| Translation | x (mm) | −336.14 |
| | y (mm) | −467.46 |
| | z (mm) | 3947.02 |
| Euler angles | φ (°) | −4.67 |
| | θ (°) | −11.54 |
| | ψ (°) | 10.04 |
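
The translation and three Euler angles above define a rigid-body transformation, for example between the photogrammetric frame and a frame attached to the specimen. The rotation order and the direction of the transformation are not given in this excerpt, so the sketch below assumes a Z-Y-X order, R = Rz(ψ) · Ry(θ) · Rx(φ), and the form X′ = R·X + t; other conventions would produce a different matrix from the same angles.

```python
import numpy as np


def rotation_zyx(phi_deg, theta_deg, psi_deg):
    """Rotation matrix R = Rz(psi) @ Ry(theta) @ Rx(phi) (assumed Z-Y-X order)."""
    phi, theta, psi = np.radians([phi_deg, theta_deg, psi_deg])
    cf, sf = np.cos(phi), np.sin(phi)
    ct, st = np.cos(theta), np.sin(theta)
    cp, sp = np.cos(psi), np.sin(psi)
    rx = np.array([[1.0, 0.0, 0.0], [0.0, cf, -sf], [0.0, sf, cf]])
    ry = np.array([[ct, 0.0, st], [0.0, 1.0, 0.0], [-st, 0.0, ct]])
    rz = np.array([[cp, -sp, 0.0], [sp, cp, 0.0], [0.0, 0.0, 1.0]])
    return rz @ ry @ rx


def transform(points, R, t):
    """Apply the rigid-body transformation X' = R X + t to an (N, 3) array."""
    return np.asarray(points, dtype=float) @ R.T + t


# Values from the table above (mm and degrees)
T = np.array([-336.14, -467.46, 3947.02])
R = rotation_zyx(-4.67, -11.54, 10.04)

if __name__ == "__main__":
    print(transform([[0.0, 0.0, 0.0], [1000.0, 0.0, 0.0]], R, T))
```

Whatever convention is chosen, R must remain orthonormal with determinant +1, which is a quick sanity check when porting such parameters between software packages.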

| Cycle | Peak Displacement Δ− (mm) | Peak Displacement Δ+ (mm) | Average Velocity (mm/s) | Cycle Duration (s) |
|---|---|---|---|---|
| C17b | −21.38 | 15.95 | 0.007 | 682 |
| C18 | −27.46 | 19.70 | 0.007 | 671 |
| C19 | −32.72 | 20.25 | 0.007 | 684 |
| C20 | −36.68 | 23.64 | 0.007 | 920 |
| C21 | −41.48 | 31.83 | 0.007 | 1450 |
| C22 | −48.19 | 44.51 | 0.007 | 666 |

| Cycle | Peak Displacement Δ− (mm) | Peak Displacement Δ+ (mm) | Average Velocity (mm/s) | Cycle Duration (s) |
|---|---|---|---|---|
| C17b | −21.42 | 16.03 | 0.007 | 682 |
| C18 | −27.03 | 20.02 | 0.007 | 671 |
| C19 | −32.35 | 20.75 | 0.007 | 684 |
| C20 | −37.34 | 24.98 | 0.007 | 920 |
| C21 | −41.10 | 33.18 | 0.007 | 1450 |
| C22 | −48.34 | 45.93 | 0.007 | 666 |

| Cycle | Average Displacement, Potentiometer Δ− (mm) | Average Displacement, Potentiometer Δ+ (mm) | Average Displacement, Photogrammetry Δ− (mm) | Average Displacement, Photogrammetry Δ+ (mm) | Absolute Error Δ− (mm) | Absolute Error Δ+ (mm) | RMSE (mm) |
|---|---|---|---|---|---|---|---|
| C17b | −21.98 | 15.80 | −21.42 | 16.03 | 0.56 | 0.23 | 0.94 |
| C18 | −27.92 | 19.18 | −27.03 | 20.02 | 0.89 | 0.84 | |
| C19 | −33.33 | 19.77 | −32.35 | 20.75 | 0.98 | 0.98 | |
| C20 | −37.46 | 24.09 | −37.34 | 24.98 | 0.12 | 0.89 | |
| C21 | −42.62 | 31.68 | −41.10 | 30.40 | 1.52 | −1.28 | |
| C22 | −47.81 | 44.32 | −48.34 | 43.94 | −0.53 | −0.38 | |

| Cycle | Average Displacement, Potentiometer Δ− (mm) | Average Displacement, Potentiometer Δ+ (mm) | Average Displacement, Photogrammetry Δ− (mm) | Average Displacement, Photogrammetry Δ+ (mm) | Absolute Error Δ− (mm) | Absolute Error Δ+ (mm) | RMSE (mm) |
|---|---|---|---|---|---|---|---|
| C17b | −22.02 | 16.11 | −21.31 | 15.91 | 0.71 | −0.20 | 1.04 |
| C18 | −27.99 | 19.55 | −27.38 | 19.64 | 0.61 | 0.09 | |
| C19 | −33.52 | 19.97 | −32.63 | 20.19 | 0.89 | 0.22 | |
| C20 | −37.67 | 24.19 | −36.57 | 23.57 | 1.10 | −0.62 | |
| C21 | −42.64 | 29.46 | −41.35 | 30.48 | 1.02 | −0.37 | |
| C22 | −47.81 | 44.87 | −48.23 | 44.06 | −0.42 | −0.58 | |

| Cycle | Average Displacement, Potentiometer Δ− (mm) | Average Displacement, Potentiometer Δ+ (mm) | Average Displacement, Photogrammetry Δ− (mm) | Average Displacement, Photogrammetry Δ+ (mm) | Absolute Error Δ− (mm) | Absolute Error Δ+ (mm) | RMSE (mm) |
|---|---|---|---|---|---|---|---|
| C17b | −10.10 | 7.23 | −10.10 | 6.39 | 0.00 | −0.84 | 1.03 |
| C18 | −12.85 | 9.17 | −13.51 | 7.62 | −0.66 | −1.55 | |
| C19 | −15.34 | 9.19 | −15.70 | 8.26 | −0.36 | −0.93 | |
| C20 | −17.34 | 11.21 | −17.62 | 10.02 | −0.28 | −1.19 | |
| C21 | −19.73 | 13.63 | −20.24 | 12.11 | −0.51 | −1.52 | |
| C22 | −21.00 | 22.69 | −19.39 | 23.11 | 1.61 | −1.58 | |

| Cycle | Average Displacement, Potentiometer Δ− (mm) | Average Displacement, Potentiometer Δ+ (mm) | Average Displacement, Photogrammetry Δ− (mm) | Average Displacement, Photogrammetry Δ+ (mm) | Absolute Error Δ− (mm) | Absolute Error Δ+ (mm) | RMSE (mm) |
|---|---|---|---|---|---|---|---|
| C17b | −10.21 | 7.07 | −10.67 | 7.46 | −0.46 | 0.39 | 0.95 |
| C18 | −12.94 | 9.18 | −12.86 | 10.66 | 0.08 | 1.48 | |
| C19 | −15.37 | 9.01 | −15.57 | 10.15 | 0.20 | 1.14 | |
| C20 | −17.24 | 11.02 | −18.20 | 11.16 | −0.96 | 0.14 | |
| C21 | −19.56 | 13.49 | −20.72 | 15.10 | −1.16 | 1.61 | |
| C22 | −22.55 | 20.71 | −21.92 | 21.86 | 0.63 | 1.15 | |
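
In the first accuracy table, every error entry equals the photogrammetric value minus the potentiometer value, and the sketch below checks this sign convention row by row. It also shows that the RMSE of the twelve peak errors in that table is about 0.86 mm, below the reported 0.94 mm, which suggests (an inference, not stated in this excerpt) that the RMSE column was computed over the full recorded displacement histories rather than over the peaks alone. Finally, the mean of the four reported RMSE values is 0.99 mm, which appears to correspond to the 0.99 mm accuracy quoted in the conclusions.

```python
import math


def signed_errors(reference, measured):
    """Per-peak error, photogrammetry minus potentiometer (mm)."""
    return [m - r for r, m in zip(reference, measured)]


def rmse(errors):
    """Root mean square of a sequence of errors."""
    return math.sqrt(sum(e * e for e in errors) / len(errors))


# First accuracy table: peaks for cycles C17b..C22 (mm)
POT_NEG = [-21.98, -27.92, -33.33, -37.46, -42.62, -47.81]
POT_POS = [15.80, 19.18, 19.77, 24.09, 31.68, 44.32]
PHOTO_NEG = [-21.42, -27.03, -32.35, -37.34, -41.10, -48.34]
PHOTO_POS = [16.03, 20.02, 20.75, 24.98, 30.40, 43.94]

# RMSE values reported in the four accuracy tables (mm)
REPORTED_RMSE = [0.94, 1.04, 1.03, 0.95]

if __name__ == "__main__":
    e_neg = signed_errors(POT_NEG, PHOTO_NEG)
    e_pos = signed_errors(POT_POS, PHOTO_POS)
    print("errors, delta-:", [round(e, 2) for e in e_neg])
    print("errors, delta+:", [round(e, 2) for e in e_pos])
    print(f"RMSE of the 12 peak errors: {rmse(e_neg + e_pos):.2f} mm")
    print(f"mean of the reported RMSEs: {sum(REPORTED_RMSE) / len(REPORTED_RMSE):.2f} mm")
```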

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Sánchez-Aparicio, L.J.; Herrero-Huerta, M.; Esposito, R.; Roel Schipper, H.; González-Aguilera, D. Photogrammetric Solution for Analysis of Out-Of-Plane Movements of a Masonry Structure in a Large-Scale Laboratory Experiment. Remote Sens. 2019, 11, 1871. https://doi.org/10.3390/rs11161871
