Combining UAV-Based Vegetation Indices and Image Classification to Estimate Flower Number in Oilseed Rape

Abstract

1. Introduction

2. Materials and Methods

2.1. Field Experimental Design

2.2. Data Collection

2.3. Image Classification

2.3.1. Image Preprocessing and Color Space Conversion

2.3.2. K-Means Clustering and FCA Calculation

2.3.3. Accuracy Estimation

2.4. Vegetation Indices Calculation

2.5. Model Selection and Validation

3. Results

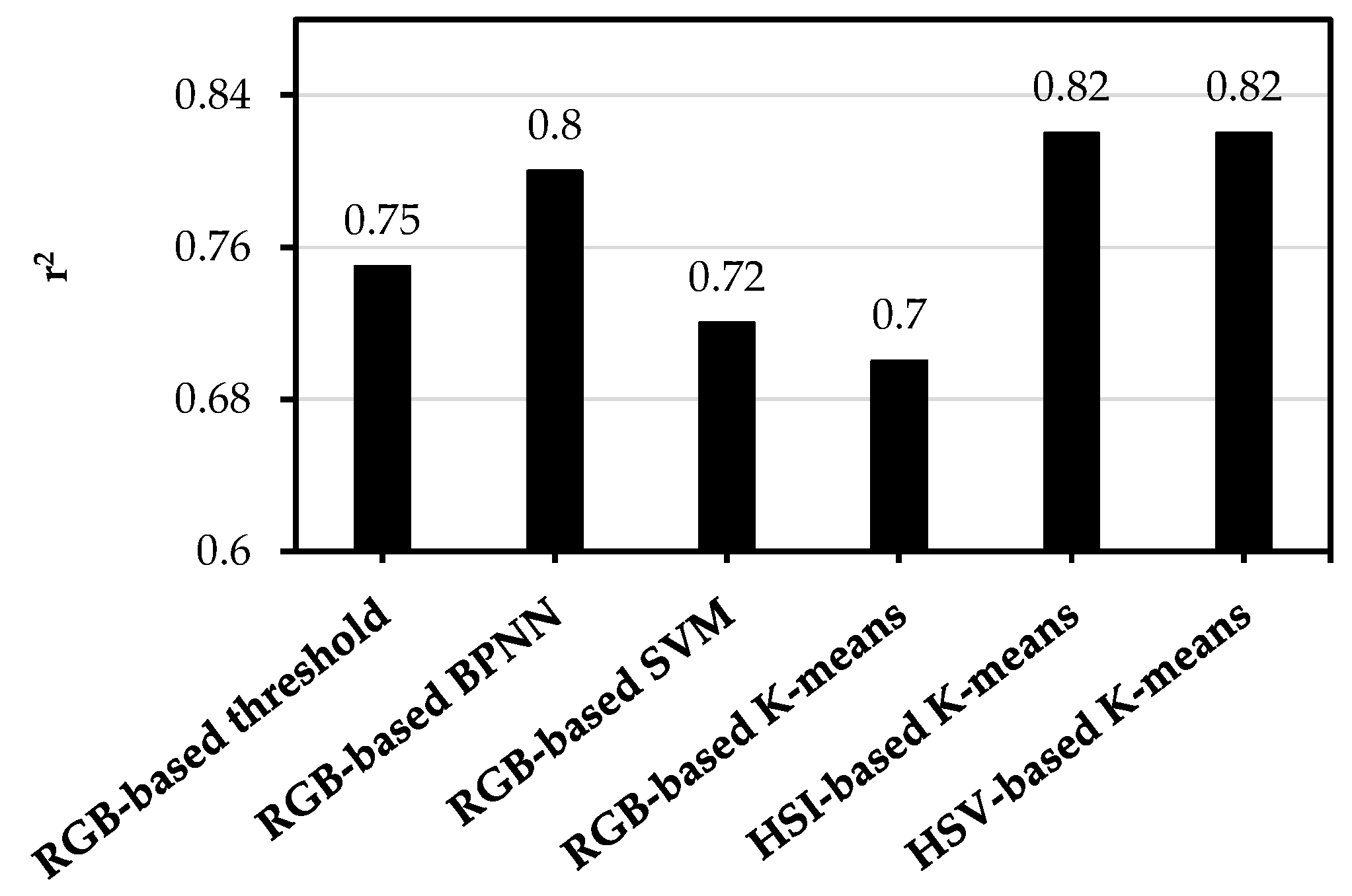

3.1. Image Classification

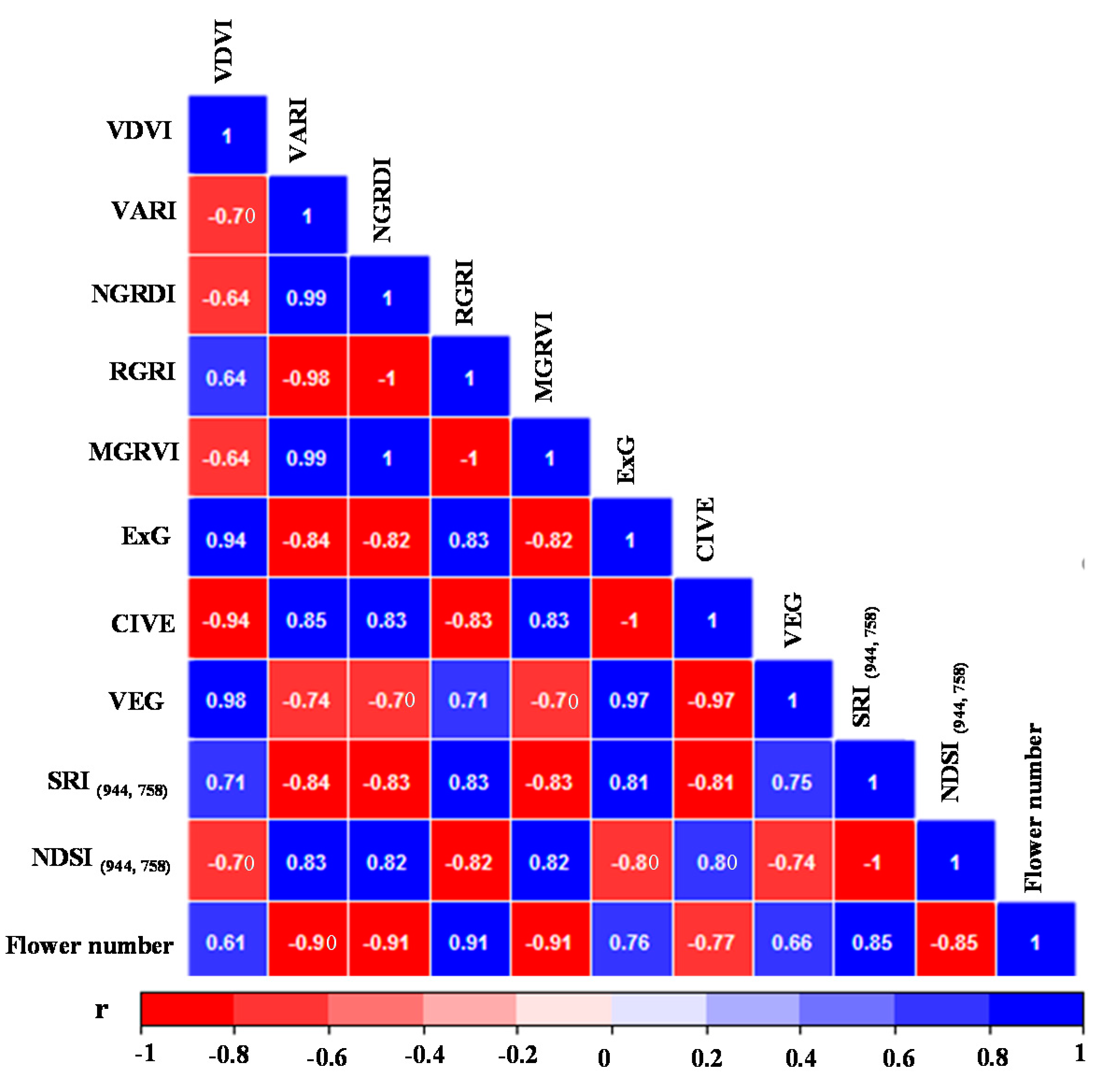

3.2. Correlations for VIs and Flower Number

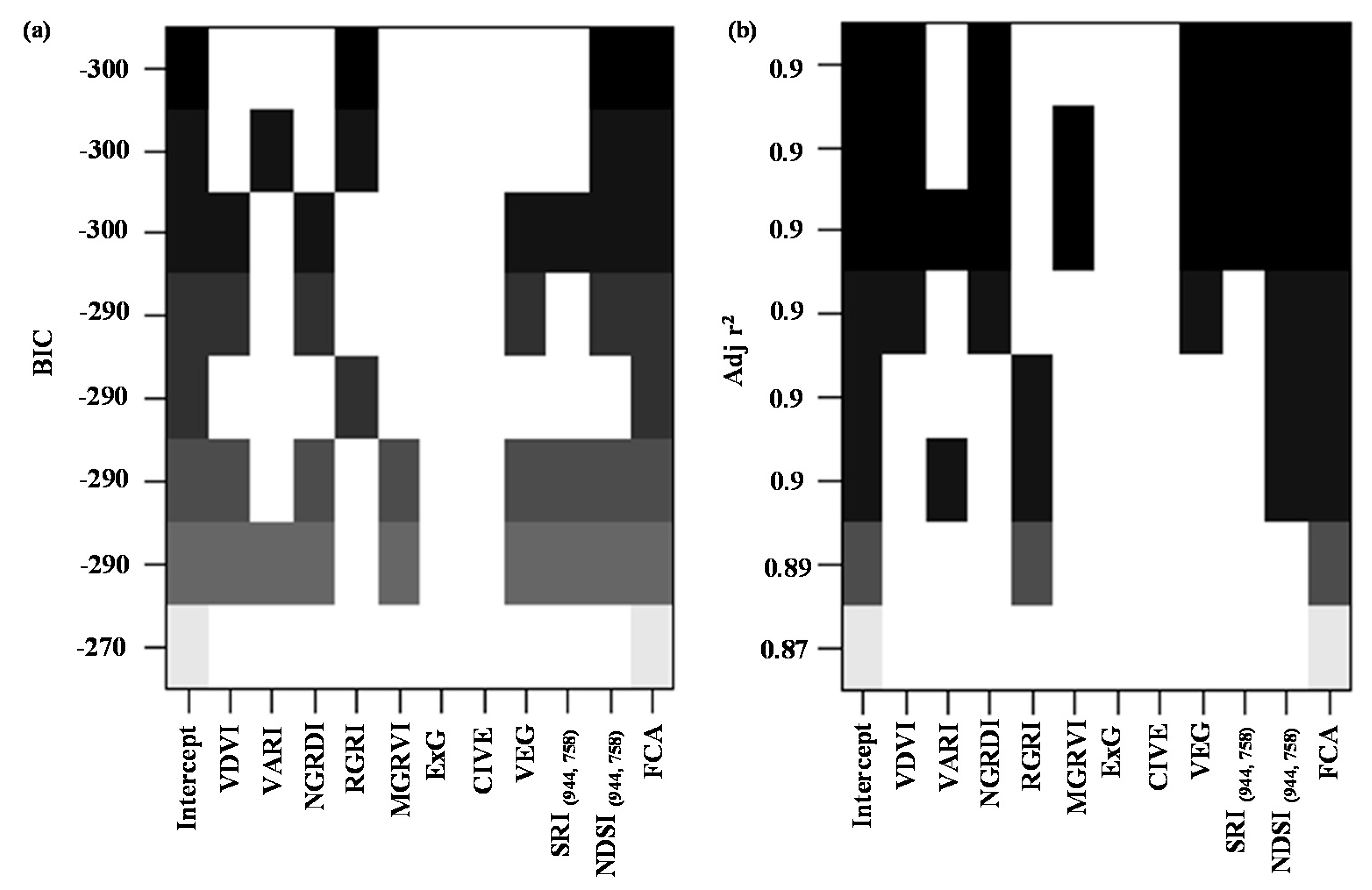

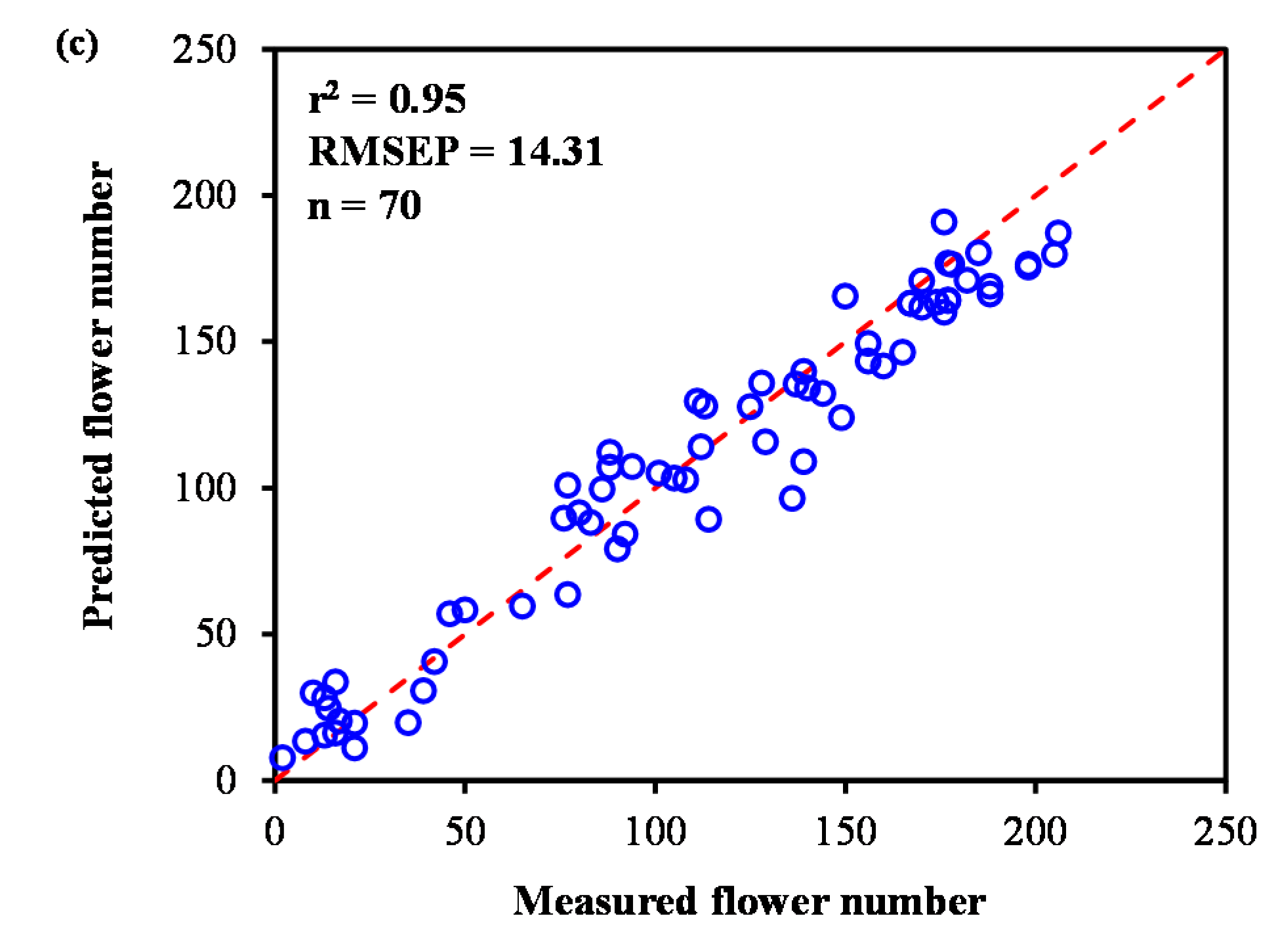

3.3. Model Development and Comparison

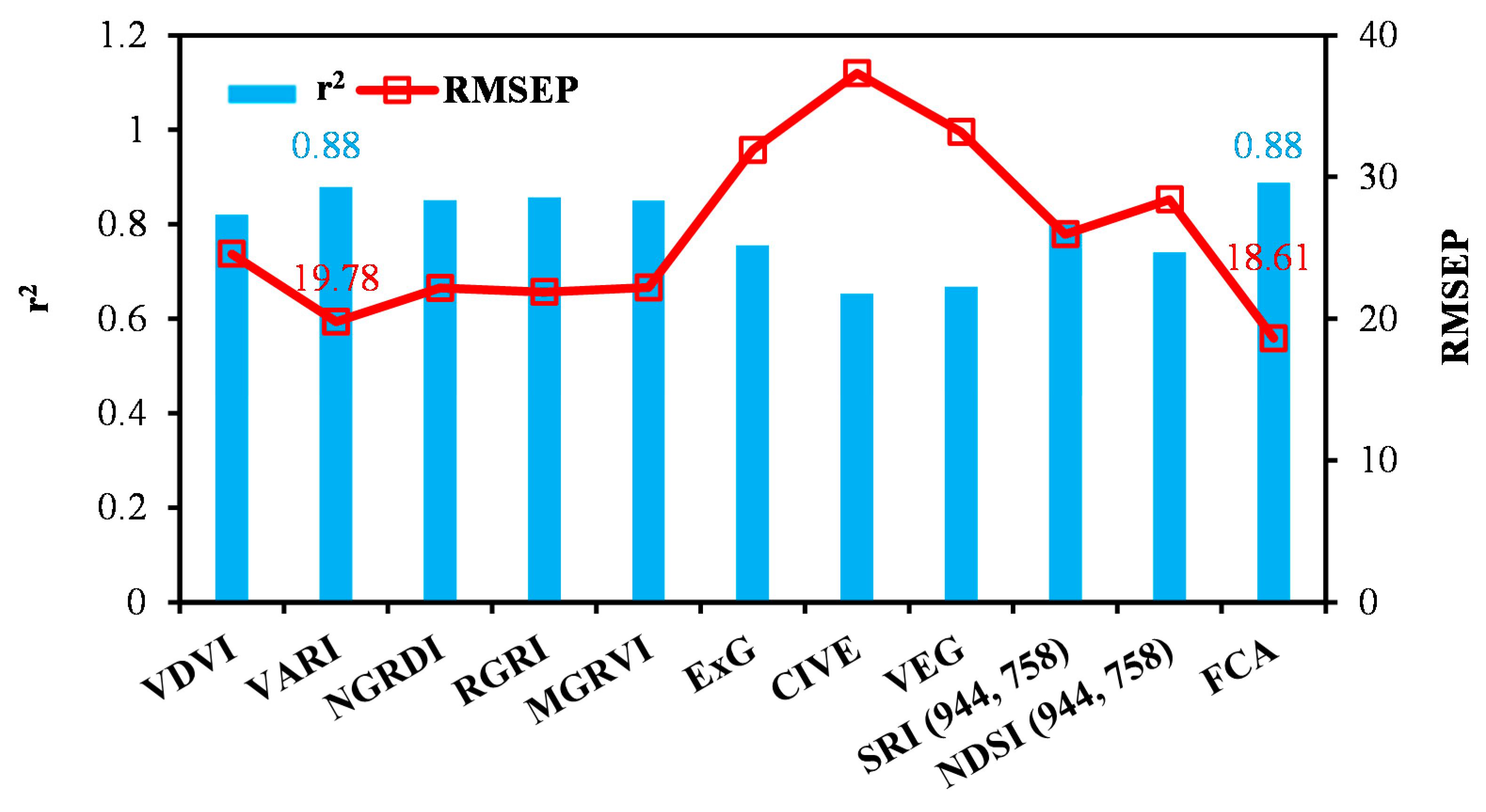

3.3.1. Model Development with Individual UAV Variables

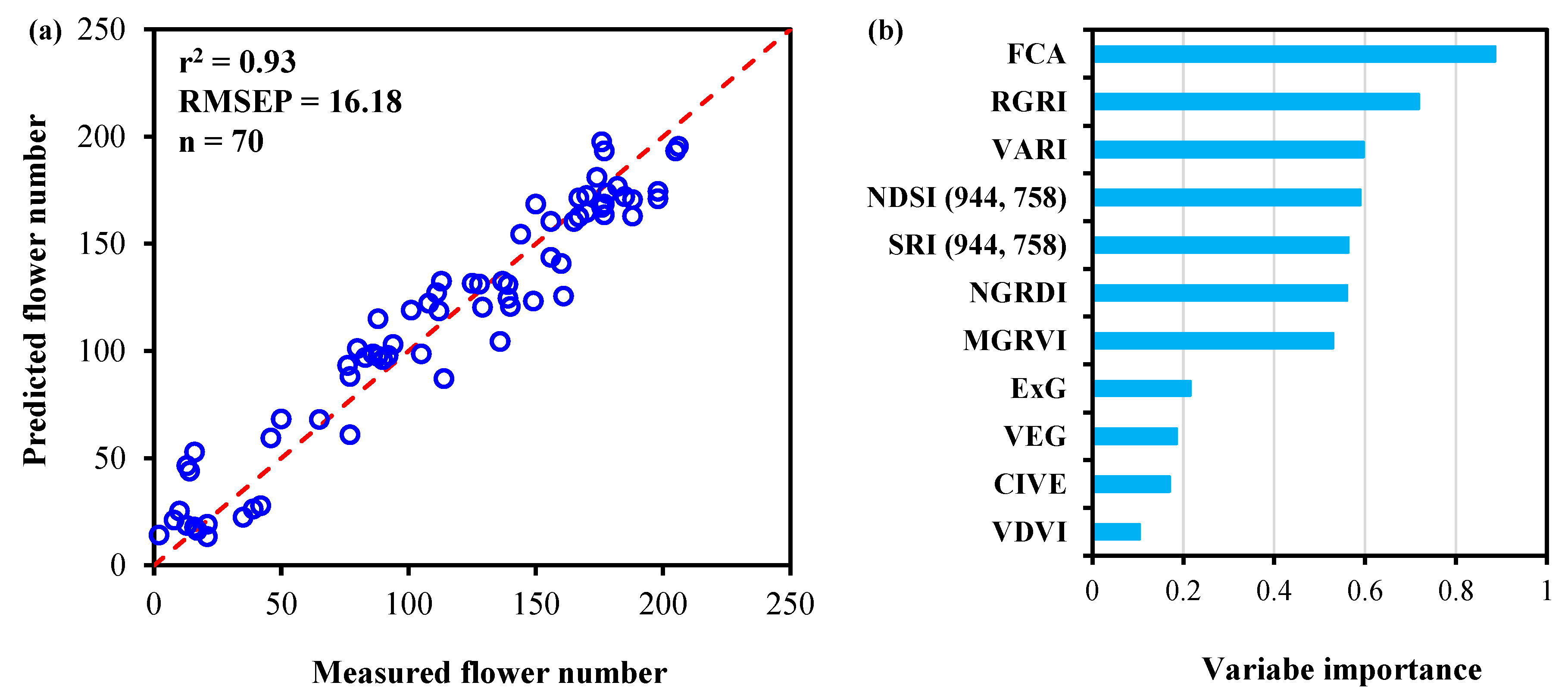

3.3.2. Model Development and Comparison with All UAV Variables

4. Discussion

4.1. Applicability of the Method

4.2. Importance of Variable Rankings

4.3. The Implications and Limitations in This Study

5. Conclusions

Author Contributions

Acknowledgments

Conflicts of Interest

References

1. Fang, S.; Tang, W.; Peng, Y.; Gong, Y.; Dai, C.; Chai, R.; Liu, K. Remote estimation of vegetation fraction and flower fraction in oilseed rape with unmanned aerial vehicle data. Remote Sens. 2016, 8, 416.

2. Blackshaw, R.E.; Johnson, E.N.; Gan, Y.; May, W.E.; McAndrews, D.W.; Barthet, V.; McDonald, T.; Wispinski, D. Alternative oilseed crops for biodiesel feedstock on the Canadian prairies. Can. J. Plant Sci. 2011, 91, 889–896.

3. Faraji, A. Flower formation and pod/flower ratio in canola (Brassica napus L.) affected by assimilates supply around flowering. Int. J. Plant Prod. 2010, 4, 271–280.

4. Faraji, A.; Latifi, N.; Soltani, A.; Rad, A.H.S. Effect of high temperature stress and supplemental irrigation on flower and pod formation in two canola (Brassica napus L.) cultivars at Mediterranean climate. Asian J. Plant Sci. 2008, 7, 343–351.

5. Burton, W.A.; Flood, R.F.; Norton, R.M.; Field, B.; Potts, D.A.; Robertson, M.J.; Salisbury, P.A. Identification of variability in phenological responses in canola-quality brassica juncea for utilisation in Australian breeding programs. Aust. J. Agric. Res. 2008, 59, 874–881.

6. Leflon, M.; Husken, A.; Njontie, C.; Kightley, S.; Pendergrast, D.; Pierre, J.; Renard, M.; Pinochet, X. Stability of the cleistogamous trait during the flowering period of oilseed rape. Plant Breed. 2010, 129, 13–18.

7. Li, W.; Niu, Z.; Chen, H.; Li, D.; Wu, M.; Zhao, W. Remote estimation of canopy height and aboveground biomass of maize using high-resolution stereo images from a low-cost unmanned aerial vehicle system. Ecol. Indic. 2016, 67, 637–648.

8. Atena, H.; Lorena, G.P.; Suchismita, M.; Daljit, S.; Dale, S.; Jessica, R.; Ivan, O.M.; Prakash, S.R.; Douglas, G.; Jesse, P. Application of unmanned aerial systems for high throughput phenotyping of large wheat breeding nurseries. Plant Methods 2016, 12, 1–15.

9. Ines, A.V.M.; Das, N.N.; Hansen, J.W.; Njoku, E.G. Assimilation of remotely sensed soil moisture and vegetation with a crop simulation model for maize yield prediction. Remote Sens. Environ. 2013, 138, 149–164.

10. Huang, J.; Sedano, F.; Huang, Y.; Ma, H.; Li, X.; Liang, S.; Tian, L.; Zhang, X.; Fan, J.; Wu, W. Assimilating a synthetic kalman filter leaf area index series into the wofost model to improve regional winter wheat yield estimation. Agric. For. Meteorol. 2016, 216, 188–202.

11. Clevers, J.G.P.W.; Gitelson, A.A. Remote estimation of crop and grass chlorophyll and nitrogen content using red-edge bands on sentinel-2 and-3. Int. J. Appl. Earth Obs. 2013, 23, 344–351.

12. Moharana, S.; Dutta, S. Spatial variability of chlorophyll and nitrogen content of rice from hyperspectral imagery. ISPRS J. Photogramm. 2016, 122, 17–29.

13. Zhu, Y.; Liu, K.; Liu, L.; Myint, S.; Wang, S.; Liu, H.; He, Z. Exploring the potential of worldview-2 red-edge band-based vegetation indices for estimation of mangrove leaf area index with machine learning algorithms. Remote Sens. 2017, 9, 1060.

14. Castro, A.I.D.; López-Granados, F.; Jurado-Expósito, M. Broad-scale cruciferous weed patch classification in winter wheat using quickbird imagery for in-season site-specific control. Precis. Agric. 2013, 14, 392–413.

15. Martín, M.P.; Barreto, L.; Fernández-Quintanilla, C. Discrimination of sterile oat (Avena sterilis) in winter barley (Hordeum vulgare) using quickbird satellite images. Crop Prot. 2011, 30, 1363–1369.

16. Zhou, X.; Zheng, H.B.; Xu, X.Q.; He, J.Y.; Ge, X.K.; Yao, X.; Cheng, T.; Zhu, Y.; Cao, W.X.; Tian, Y.C. Predicting grain yield in rice using multi-temporal vegetation indices from uav-based multispectral and digital imagery. ISPRS J. Photogramm. 2017, 130, 246–255.

17. Verger, A.; Vigneau, N.; Chéron, C.; Gilliot, J.M.; Comar, A.; Baret, F. Green area index from an unmanned aerial system over wheat and rapeseed crops. Remote Sens. Environ. 2014, 152, 654–664.

18. Gao, J.; Liao, W.; Nuyttens, D.; Lootens, P.; Vangeyte, J.; Pižurica, A.; He, Y.; Pieters, J.G. Fusion of pixel and object-based features for weed mapping using unmanned aerial vehicle imagery. Int. J. Appl. Earth Obs. 2018, 67, 43–53.

19. Yu, N.; Li, L.; Schmitz, N.; Tian, L.F.; Greenberg, J.A.; Diers, B.W. Development of methods to improve soybean yield estimation and predict plant maturity with an unmanned aerial vehicle based platform. Remote Sens. Environ. 2016, 187, 91–101.

20. Park, S.; Ryu, D.; Fuentes, S.; Chung, H.; Hernández-Montes, E.; O’Connell, M. Adaptive estimation of crop water stress in nectarine and peach orchards using high-resolution imagery from an unmanned aerial vehicle (uav). Remote Sens. 2017, 9, 828.

21. Duan, S.-B.; Li, Z.-L.; Wu, H.; Tang, B.-H.; Ma, L.; Zhao, E.; Li, C. Inversion of the prosail model to estimate leaf area index of maize, potato, and sunflower fields from unmanned aerial vehicle hyperspectral data. Int. J. Appl. Earth Obs. 2014, 26, 12–20.

22. Jin, X.; Liu, S.; Baret, F.; Hemerle, M.; Comar, A. Estimates of plant density of wheat crops at emergence from very low altitude uav imagery. Remote Sens. Environ. 2017, 198, 105–114.

23. Yang, M.-D.; Huang, K.-S.; Kuo, Y.-H.; Tsai, H.P.; Lin, L.-M. Spatial and spectral hybrid image classification for rice lodging assessment through uav imagery. Remote Sens. 2017, 9, 583.

24. Du, M.M.; Noguchi, N. Monitoring of wheat growth status and mapping of wheat yield’s within-field spatial variations using color images acquired from uav-camera system. Remote Sens. 2017, 9, 289.

25. Bendig, J.; Yu, K.; Aasen, H.; Bolten, A.; Bennertz, S.; Broscheit, J.; Gnyp, M.L.; Bareth, G. Combining uav-based plant height from crop surface models, visible, and near infrared vegetation indices for biomass monitoring in barley. Int. J. Appl. Earth Obs. 2015, 39, 79–87.

26. Maimaitijiang, M.; Ghulam, A.; Sidike, P.; Hartling, S.; Maimaitiyiming, M.; Peterson, K.; Shavers, E.; Fishman, J.; Peterson, J.; Kadam, S. Unmanned aerial system (uas)-based phenotyping of soybean using multi-sensor data fusion and extreme learning machine. ISPRS J. Photogramm. 2017, 134, 43–58.

27. Liu, T.; Li, R.; Zhong, X.; Jiang, M.; Jin, X.; Zhou, P.; Liu, S.; Sun, C.; Guo, W. Estimates of rice lodging using indices derived from uav visible and thermal infrared images. Agric. For. Meteorol. 2018, 252, 144–154.

28. Sulik, J.J.; Long, D.S. Spectral indices for yellow canola flowers. Int. J. Remote Sens. 2015, 36, 2751–2765.

29. Coy, A.; Rankine, D.; Taylor, M.; Nielsen, D.C.; Cohen, J. Increasing the accuracy and automation of fractional vegetation cover estimation from digital photographs. Remote Sens. 2016, 8, 474.

30. McLaren, K. Development of cie 1976 (lab) uniform color space and color-difference formula. J. Soc. Dyers Colour. 1976, 92, 338–341.

31. Lopez, F.; Valiente, J.M.; Baldrich, R.; Vanrell, M. Fast surface grading using color statistics in the cie lab space. Lect. Notes Comput. Sci. 2005, 3523, 666–673.

32. Lloyd, S. Least squares quantization in PCM. IEEE Trans. Inform. Theory 1982, 28, 129–137.

33. Wang, X.; Wang, M.; Wang, S.; Wu, Y. Extraction of vegetation information from visible unmanned aerial vehicle images. Trans. Chin. Soc. Agric. Eng. 2015, 31, 152–159.

34. Gitelson, A.A.; Kaufman, Y.J.; Stark, R.; Rundquist, D. Novel algorithms for remote estimation of vegetation fraction. Remote Sens. Environ. 2002, 80, 76–87.

35. Verrelst, J.; Schaepman, M.E.; Koetz, B.; Kneubuehler, M. Angular sensitivity analysis of vegetation indices derived from chris/proba data. Remote Sens. Environ. 2008, 112, 2341–2353.

36. Woebbecke, D.M.; Meyer, G.E.; Vonbargen, K.; Mortensen, D.A. Color indexes for weed identification under various soil, residue, and lighting conditions. Trans. ASAE 1995, 38, 259–269.

37. Kataoka, T.; Kaneko, T.; Okamoto, H.; Hata, S. Crop growth estimation system using machine vision. In Proceedings of the 2003 IEEE/ASME International Conference on Advanced Intelligent Mechatronics, Kobe, Japan, 20–24 July 2003; pp. 1079–1083.

38. Hague, T.; Tillett, N.D.; Wheeler, H. Automated crop and weed monitoring in widely spaced cereals. Precis. Agric. 2006, 7, 21–32.

39. Jordan, C.F. Derivation of leaf-area index from quality of light on the forest floor. Ecology 1969, 50, 663–666.

40. Rouse, J.W.; Haas, R.W.; Schell, J.A.; Deering, D.W.; Harlan, J.C. Monitoring the Vernal Advancement and Retrogradation (Green Wave Effect) of Natural Vegetation; NASA Goddard Space Flight Center: Houston, TX, USA, 1974; pp. 1–8. Available online: https://ntrs.nasa.gov/search.jsp?R=19730017588 (accessed on 1 April 1973).

41. Horning, N. Random forests: An algorithm for image classification and generation of continuous fields data sets. In Proceedings of the International Conference on Geoinformatics for Spatial Infrastructure Development in Earth and Allied Sciences, Osaka, Japan, 9–11 December 2010.

42. Breiman, L. Random forests. Mach. Learn. 2001, 45, 5–32.

43. Neath, A.A.; Cavanaugh, J.E. The Bayesian information criterion: Background, derivation, and applications. Comput. Stat. 2012, 4, 199–203.

44. Schwieder, M.; Leitão, P.; Suess, S.; Senf, C.; Hostert, P. Estimating fractional shrub cover using simulated enmap data: A comparison of three machine learning regression techniques. Remote Sens. 2014, 6, 3427–3445.

45. Duan, T.; Chapman, S.C.; Guo, Y.; Zheng, B. Dynamic monitoring of ndvi in wheat agronomy and breeding trials using an unmanned aerial vehicle. Field Crops Res. 2017, 210, 71–80.

46. Yang, G.; Liu, J.; Zhao, C.; Li, Z.; Huang, Y.; Yu, H.; Xu, B.; Yang, X.; Zhu, D.; Zhang, X. Unmanned aerial vehicle remote sensing for field-based crop phenotyping: Current status and perspectives. Front. Plant Sci. 2017, 8, 1111.

47. Fu, Y.; Yang, G.; Wang, J.; Song, X.; Feng, H. Winter wheat biomass estimation based on spectral indices, band depth analysis and partial least squares regression using hyperspectral measurements. Comput. Electron. Agric. 2014, 100, 51–59.

48. Torres-Sanchez, J.; Pena, J.M.; de Castro, A.I.; Lopez-Granados, F. Multi-temporal mapping of the vegetation fraction in early-season wheat fields using images from uav. Comput. Electron. Agric. 2014, 103, 104–113.

49. Rasmussen, J.; Ntakos, G.; Nielsen, J.; Svensgaard, J.; Poulsen, R.N.; Christensen, S. Are vegetation indices derived from consumer-grade cameras mounted on uavs sufficiently reliable for assessing experimental plots? Eur. J. Agron. 2016, 74, 75–92.

50. Lebourgeois, V.; Bégué, A.; Labbé, S.; Houlès, M.; Martiné, J.F. A light-weight multi-spectral aerial imaging system for nitrogen crop monitoring. Precis. Agric. 2012, 13, 525–541.

51. Chianucci, F.; Disperati, L.; Guzzi, D.; Bianchini, D.; Nardino, V.; Lastri, C.; Rindinella, A.; Corona, P. Estimation of canopy attributes in beech forests using true colour digital images from a small fixed-wing uav. Int. J. Appl. Earth Obs. 2016, 47, 60–68.

52. Iersel, W.V.; Straatsma, M.; Addink, E.; Middelkoop, H. Monitoring height and greenness of non-woody floodplain vegetation with uav time series. ISPRS J. Photogramm. 2018, 141, 112–123.

53. Zhang, B.; Liu, C.; Wang, Y.; Yao, X.; Wang, F.; Wu, J.; King, G.J.; Liu, K. Disruption of a carotenoid cleavage dioxygenase 4 gene converts flower colour from white to yellow in brassica species. New Phytol. 2015, 206, 1513–1526.

54. Yates, D.J.; Steven, M.D. Reflexion and absorption of solar radiation by flowering canopies of oil-seed rape (Brassica napus L.). J. Agric. Sci. 1987, 109, 495–502.

| Vegetation Indices | Formula | References |
|---|---|---|
| VIs Calculated from RGB Images | | |
| Visible-band Difference Vegetation Index (VDVI) | (2 × G − R − B)/(2 × G + R + B) | [33] |
| Visible Atmospherically Resistant Index (VARI) | (G − R)/(G + R − B) | [34] |
| Normalized Green-Red Difference Index (NGRDI) | (G − R)/(G + R) | [34] |
| Red-Green Ratio Index (RGRI) | R/G | [35] |
| Modified Green Red Vegetation Index (MGRVI) | (G^2 − R^2)/(G^2 + R^2) | [25] |
| Excess Green Index (ExG) | 2 × G − R − B | [36] |
| Color Index of Vegetation (CIVE) | 0.441 × R − 0.881 × G + 0.385 × B + 18.787 | [37] |
| Vegetative (VEG) | G/(R^a × B^(1 − a)), a = 0.667 | [38] |
| VIs Calculated from Multispectral Images | | |
| Simple Ratio Index (SRI) | R_λ1/R_λ2 | [39] |
| Normalized Difference Spectral Index (NDSI) | (R_λ1 − R_λ2)/(R_λ1 + R_λ2) | [40] |
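
The formulas in the table above can be sketched in a few lines of Python. This is an illustrative sketch, not the authors' implementation: the function names (`rgb_indices`, `sri`, `ndsi`) are hypothetical, the coefficients are copied from the table as listed, and the inputs are assumed to be per-pixel or per-plot mean band values on whatever scale the imagery provides (the table does not say whether R, G and B are raw digital numbers or normalized values). No guard against zero denominators is included.

```python
from typing import Dict

# Exponent "a" from the VEG row of the table.
ALPHA = 0.667


def rgb_indices(r: float, g: float, b: float) -> Dict[str, float]:
    """Compute the RGB-based indices of the table for one set of band values.

    r, g, b are assumed to be positive band values (an assumption; the
    table does not state the scale). Coefficients are taken from the table.
    """
    return {
        "VDVI": (2 * g - r - b) / (2 * g + r + b),
        "VARI": (g - r) / (g + r - b),
        "NGRDI": (g - r) / (g + r),
        "RGRI": r / g,
        "MGRVI": (g ** 2 - r ** 2) / (g ** 2 + r ** 2),
        "ExG": 2 * g - r - b,
        "CIVE": 0.441 * r - 0.881 * g + 0.385 * b + 18.787,
        "VEG": g / (r ** ALPHA * b ** (1 - ALPHA)),
    }


def sri(r_l1: float, r_l2: float) -> float:
    """Simple Ratio Index for reflectances at two wavelengths."""
    return r_l1 / r_l2


def ndsi(r_l1: float, r_l2: float) -> float:
    """Normalized Difference Spectral Index for two wavelengths."""
    return (r_l1 - r_l2) / (r_l1 + r_l2)


if __name__ == "__main__":
    # Hypothetical band values, chosen only to exercise the formulas.
    for name, value in rgb_indices(r=0.2, g=0.5, b=0.1).items():
        print(f"{name}: {value:.4f}")
    print(f"SRI: {sri(0.8, 0.2):.4f}, NDSI: {ndsi(0.8, 0.2):.4f}")
```

Keeping the indices as plain scalar functions makes each row of the table easy to check by hand. For whole images, the same expressions could be applied element-wise to band arrays.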

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Wan, L.; Li, Y.; Cen, H.; Zhu, J.; Yin, W.; Wu, W.; Zhu, H.; Sun, D.; Zhou, W.; He, Y. Combining UAV-Based Vegetation Indices and Image Classification to Estimate Flower Number in Oilseed Rape. Remote Sens. 2018, 10, 1484. https://doi.org/10.3390/rs10091484
