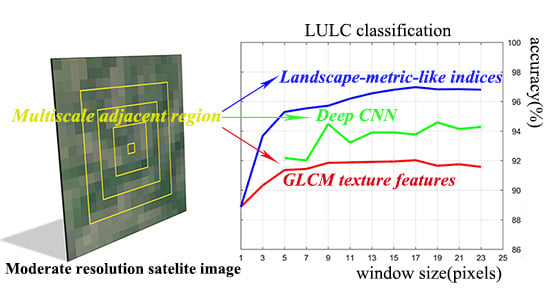

Improvement of Moderate Resolution Land Use and Land Cover Classification by Introducing Adjacent Region Features

Abstract

1. Introduction

2. Methods

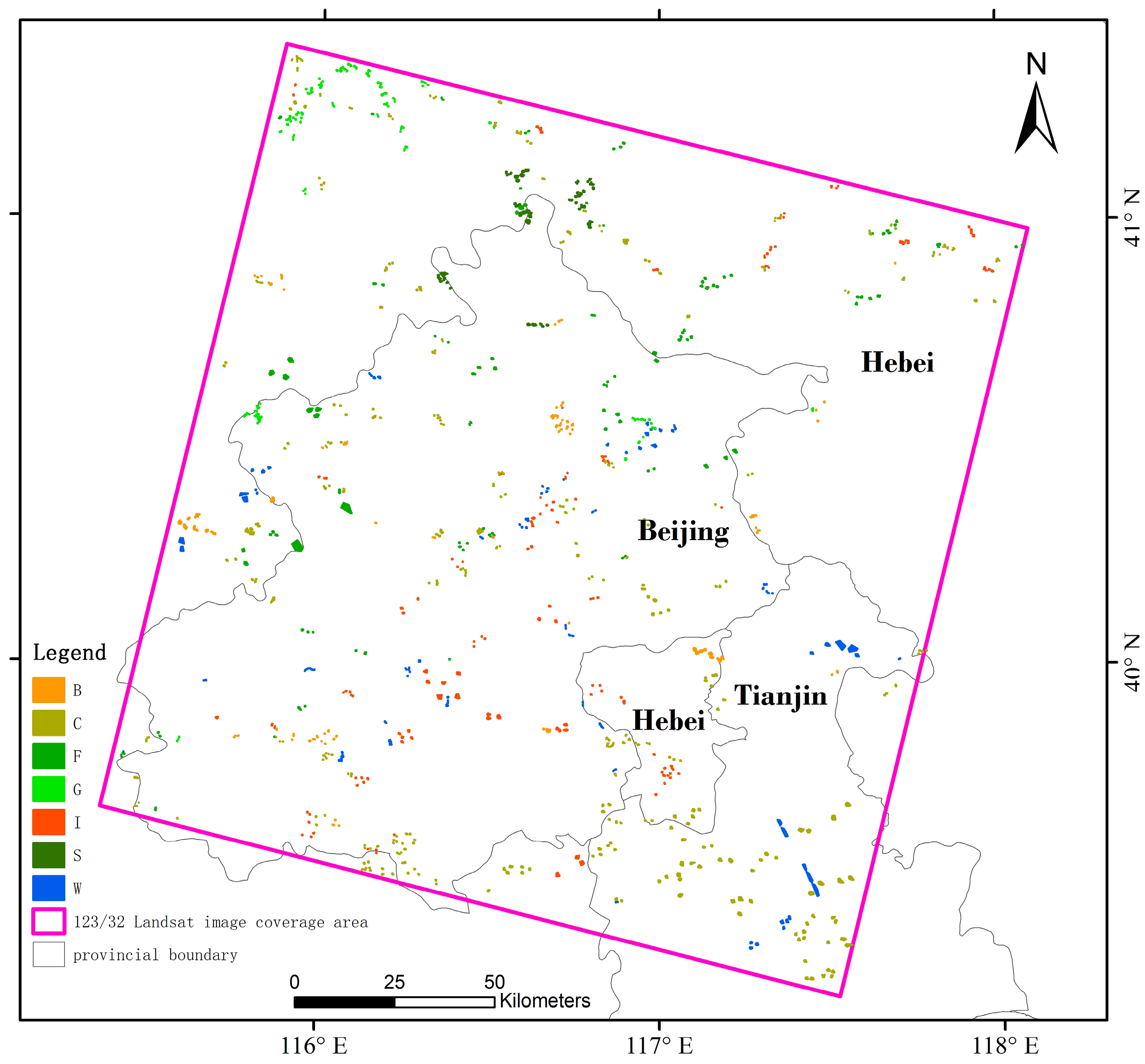

2.1. Study Area

2.2. Dataset

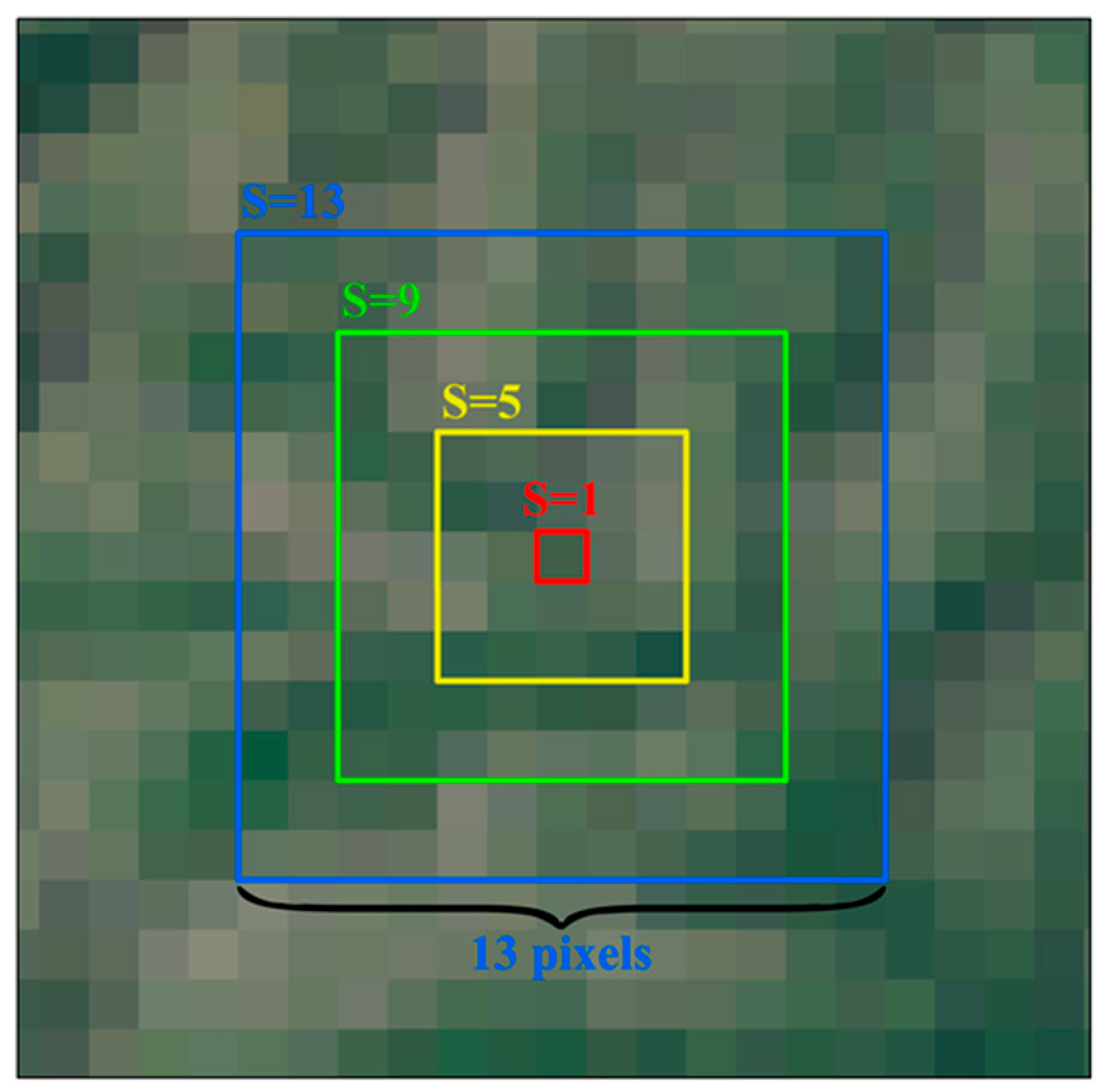

2.3. Adjacent Region Feature Extraction

2.4. Feature Set Configuration and LULC Classification

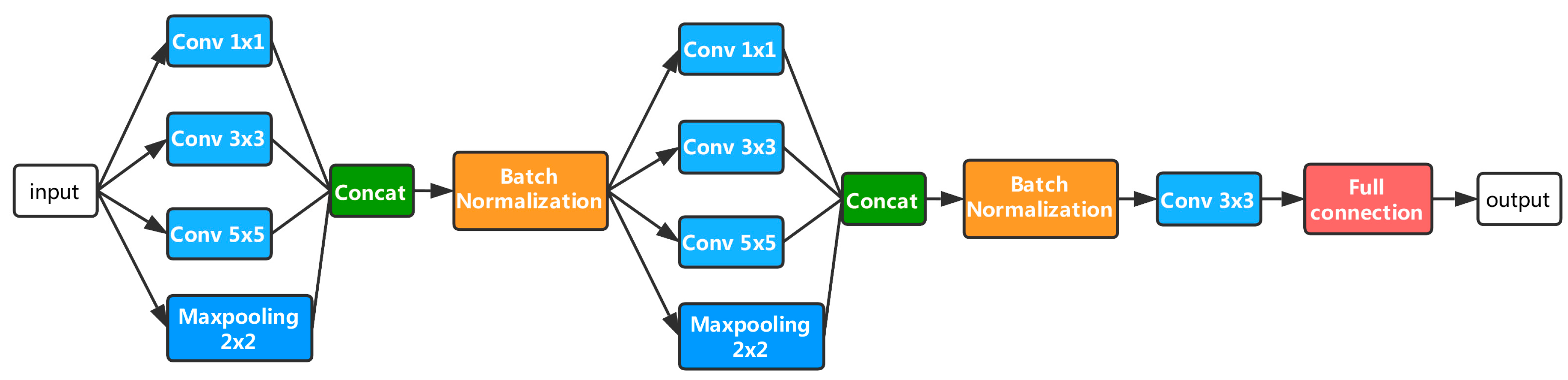

2.5. Comparable Methods

2.6. Accuracy Assessment

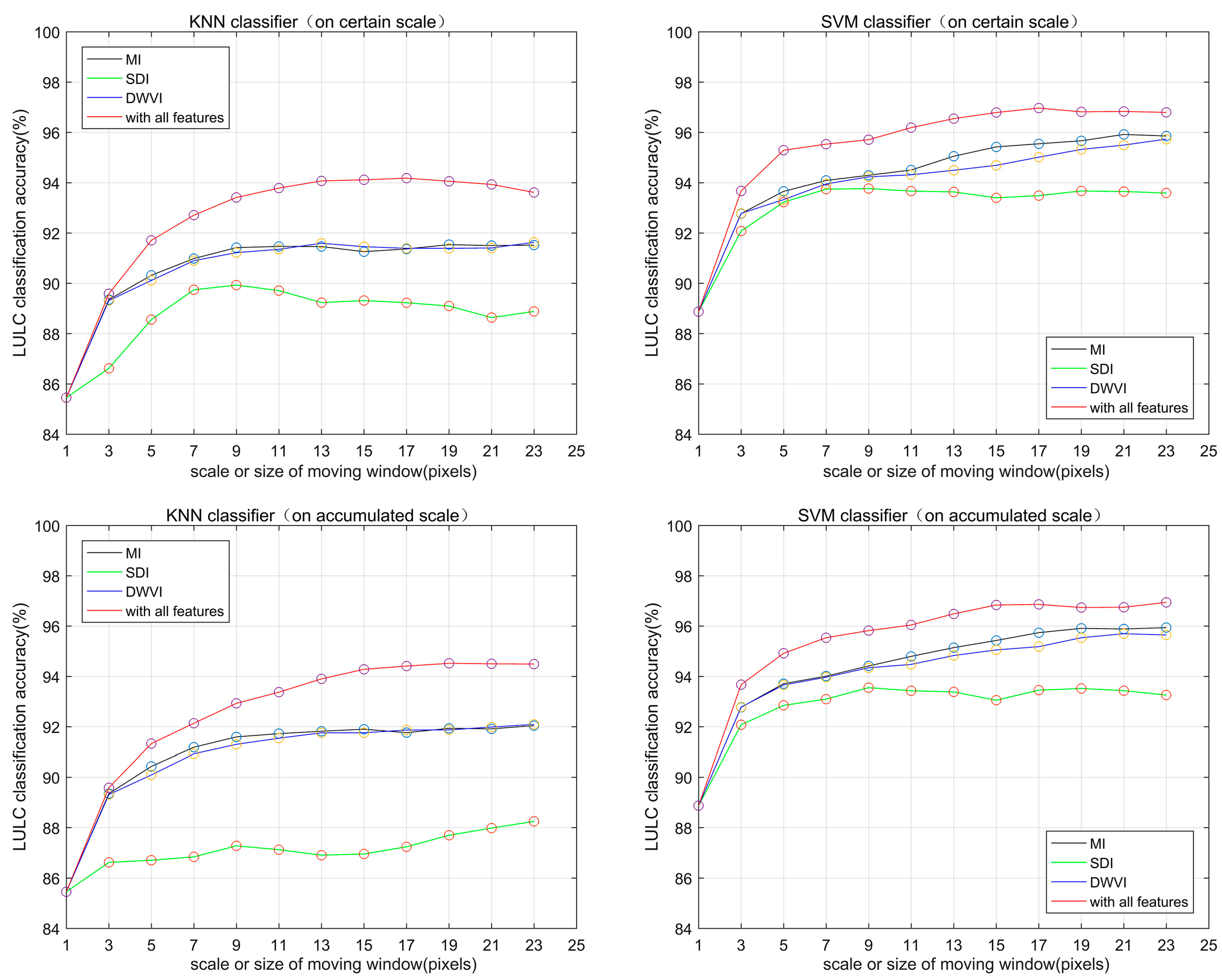

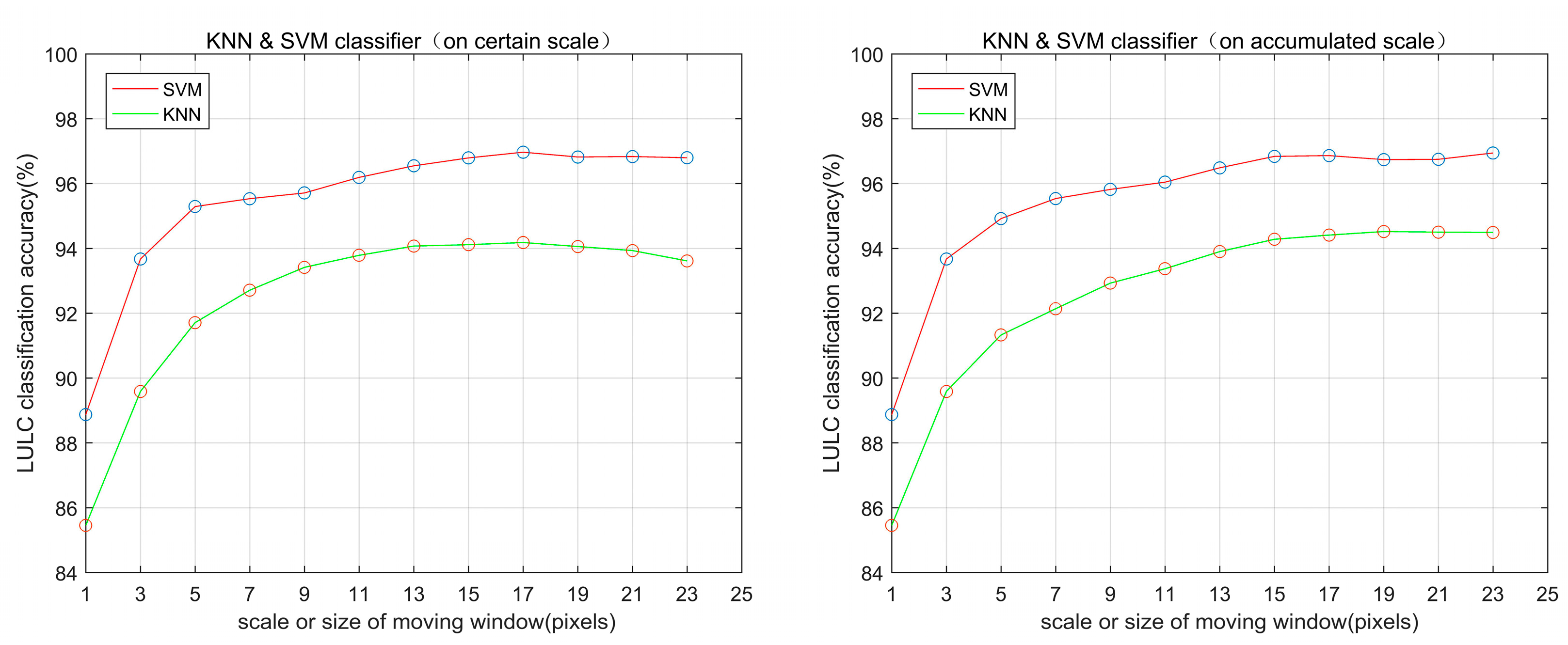

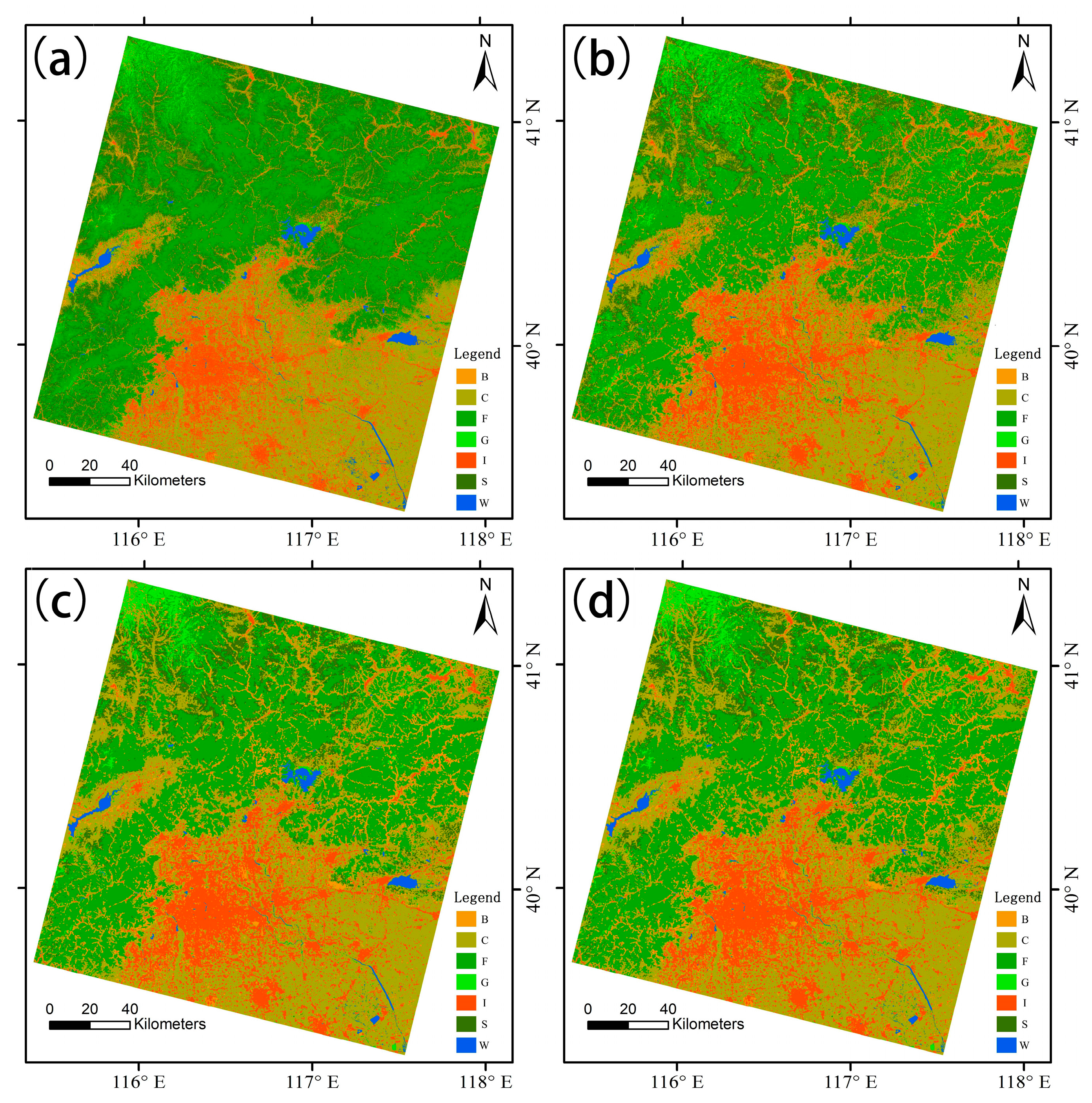

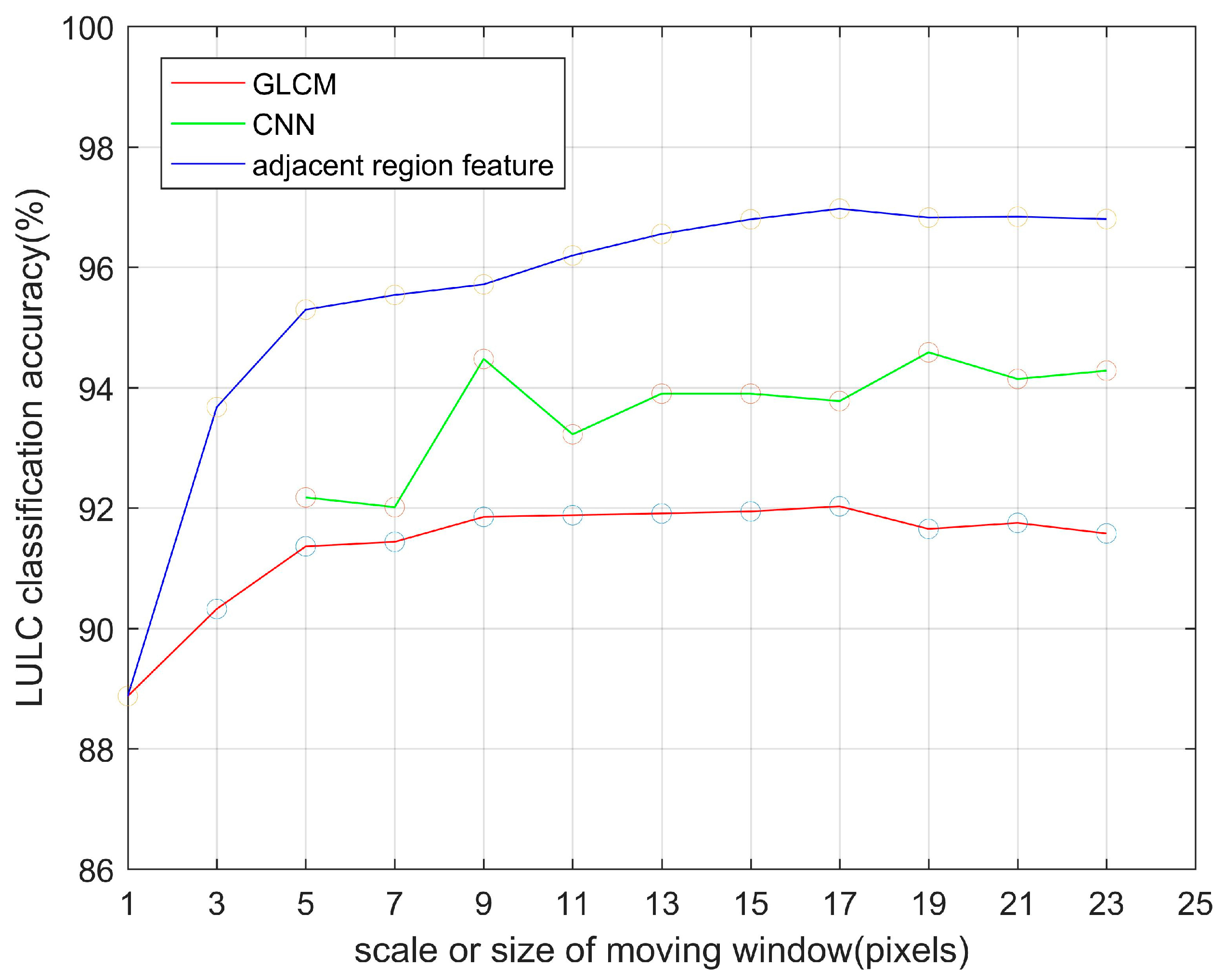

3. Results

4. Discussion

5. Conclusions

Acknowledgments

Author Contributions

Conflicts of Interest


| Experimental Sequences | Feature Dimensions | Feature Set Configurations |
|---|---|---|
| Spectral features only | 6 | 6 spectral features (S = 1) |
| Sequence 1 | 12 | 6 spectral + MI or SDI or DWVI features (S = s) |
| Sequence 2 | 6 + (s + 1) × 3 | 6 spectral + MI or SDI or DWVI features (S ≤ s) |
| Sequence 3 | 24 | 6 spectral + MI & SDI & DWVI features (S = s) |
| Sequence 4 | 6 + (0.5 × (s + 1) − 1) × 18 | 6 spectral + MI & SDI & DWVI features (S ≤ s) |

| Mapped Classes (rows) / Ground Truth (columns), Pixels | B | C | F | G | I | S | W | Total | UA (%) | PA (%) |
|---|---|---|---|---|---|---|---|---|---|---|
| Using Only Spectral Features with KNN | | | | | | | | | | |
| B | 2648 | 141 | 2 | 1 | 139 | 12 | 0 | 2943 | 90.0 | 88.3 |
| C | 151 | 2172 | 21 | 82 | 160 | 200 | 47 | 2833 | 76.7 | 72.4 |
| F | 34 | 49 | 2610 | 17 | 2 | 562 | 1 | 3275 | 79.7 | 87.0 |
| G | 2 | 80 | 34 | 2724 | 2 | 47 | 0 | 2889 | 94.3 | 90.8 |
| I | 126 | 63 | 0 | 0 | 2662 | 1 | 0 | 2852 | 93.3 | 88.7 |
| S | 39 | 419 | 331 | 176 | 16 | 2178 | 0 | 3159 | 69.0 | 72.6 |
| W | 0 | 76 | 2 | 0 | 19 | 0 | 2952 | 3049 | 96.8 | 98.4 |
| Using All Adjacent Region Features (MI+SDI+DWVI) with KNN on Accumulated Scales (s ≤ 19) | | | | | | | | | | |
| B | 2944 | 46 | 0 | 5 | 65 | 0 | 0 | 3060 | 96.2 | 98.1 |
| C | 11 | 2537 | 16 | 48 | 77 | 61 | 23 | 2773 | 92.5 | 84.6 |
| F | 0 | 82 | 2863 | 102 | 0 | 63 | 1 | 3111 | 92.0 | 95.4 |
| G | 0 | 95 | 98 | 2815 | 0 | 4 | 0 | 3012 | 93.5 | 93.8 |
| I | 35 | 18 | 0 | 0 | 2843 | 0 | 0 | 2896 | 98.2 | 94.5 |
| S | 10 | 212 | 23 | 30 | 0 | 2872 | 0 | 3147 | 91.3 | 95.7 |
| W | 0 | 10 | 0 | 0 | 15 | 0 | 2976 | 3001 | 99.2 | 99.2 |
| Total | 3000 | 3000 | 3000 | 3000 | 3000 | 3000 | 3000 | 21,000 | | |
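The UA and PA columns in the confusion matrix above can be recomputed from the pixel counts. The following is a minimal sketch, assuming the standard definitions (user's accuracy = diagonal count divided by the mapped-class row total, producer's accuracy = diagonal count divided by the ground-truth column total); the function name `accuracy_summary` and the variable names are illustrative and not taken from the article. The matrix is the KNN, spectral-features-only block, with rows as mapped classes and columns as ground truth. The overall accuracy it prints is derived here under the same assumption and is not a value shown in the table.

```python
import numpy as np

# Class order used in the table: B, C, F, G, I, S, W.
CLASSES = ["B", "C", "F", "G", "I", "S", "W"]

# Pixel counts copied from the "Using Only Spectral Features with KNN" block.
# Rows are mapped classes, columns are ground-truth classes.
CONFUSION = np.array([
    [2648,  141,    2,    1,  139,   12,    0],
    [ 151, 2172,   21,   82,  160,  200,   47],
    [  34,   49, 2610,   17,    2,  562,    1],
    [   2,   80,   34, 2724,    2,   47,    0],
    [ 126,   63,    0,    0, 2662,    1,    0],
    [  39,  419,  331,  176,   16, 2178,    0],
    [   0,   76,    2,    0,   19,    0, 2952],
])


def accuracy_summary(cm):
    """Return per-class UA and PA (in %) and overall accuracy (in %).

    Assumes rows are mapped classes and columns are ground-truth classes.
    """
    cm = np.asarray(cm, dtype=float)
    diag = np.diag(cm)
    ua = 100.0 * diag / cm.sum(axis=1)   # correct / mapped (row) total
    pa = 100.0 * diag / cm.sum(axis=0)   # correct / ground-truth (column) total
    oa = 100.0 * diag.sum() / cm.sum()   # correct / all validation pixels
    return ua, pa, oa


UA, PA, OA = accuracy_summary(CONFUSION)
for name, u, p in zip(CLASSES, UA, PA):
    print(f"{name}: UA = {u:.1f}%, PA = {p:.1f}%")
print(f"Overall accuracy = {OA:.1f}%")
```

Under these definitions, class B reproduces the tabulated 90.0% UA and 88.3% PA. Swapping in another block of the table only requires replacing the `CONFUSION` array.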

| Mapped Classes (rows) / Ground Truth (columns), Pixels | B | C | F | G | I | S | W | Total | UA (%) | PA (%) |
|---|---|---|---|---|---|---|---|---|---|---|
| Using Only Spectral Features with SVM | | | | | | | | | | |
| B | 2734 | 159 | 6 | 0 | 95 | 12 | 0 | 3006 | 91.0 | 91.1 |
| C | 149 | 2329 | 10 | 92 | 111 | 93 | 38 | 2822 | 79.7 | 77.6 |
| F | 13 | 25 | 2667 | 1 | 0 | 496 | 0 | 2914 | 91.5 | 88.9 |
| G | 1 | 57 | 1 | 2804 | 3 | 20 | 0 | 3202 | 87.6 | 93.5 |
| I | 88 | 50 | 0 | 1 | 2789 | 0 | 0 | 2928 | 95.3 | 93.0 |
| S | 15 | 336 | 496 | 102 | 2 | 2379 | 0 | 3122 | 76.2 | 79.3 |
| W | 0 | 44 | 0 | 0 | 0 | 0 | 2962 | 3006 | 98.5 | 98.7 |
| Using All Adjacent Region Features (MI+SDI+DWVI) with SVM on Certain Scale (s = 17) | | | | | | | | | | |
| B | 2973 | 91 | 0 | 14 | 49 | 0 | 0 | 3127 | 95.1 | 99.1 |
| C | 20 | 2779 | 13 | 37 | 15 | 159 | 18 | 3041 | 91.4 | 92.6 |
| F | 0 | 22 | 2955 | 2 | 0 | 30 | 0 | 3009 | 98.2 | 98.5 |
| G | 0 | 61 | 15 | 2941 | 0 | 11 | 0 | 3028 | 97.1 | 98.0 |
| I | 7 | 2 | 0 | 2 | 2936 | 0 | 2 | 2949 | 99.6 | 97.9 |
| S | 0 | 45 | 17 | 4 | 0 | 2800 | 0 | 2866 | 97.7 | 93.3 |
| W | 0 | 0 | 0 | 0 | 0 | 0 | 2980 | 2980 | 100 | 99.3 |
| Total | 3000 | 3000 | 3000 | 3000 | 3000 | 3000 | 3000 | 21,000 | | |

| Classification Methods | Spectral Features Only | GLCM | CNN | With Adjacent Region Features |
|---|---|---|---|---|
| Spectral features only | - | | | |
| GLCM | 7.76 | - | | |
| CNN | 12.94 | 5.52 | - | |
| With adjacent region features | 13.77 | 8.32 | 4.37 | - |

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Yu, L.; Su, J.; Li, C.; Wang, L.; Luo, Z.; Yan, B. Improvement of Moderate Resolution Land Use and Land Cover Classification by Introducing Adjacent Region Features. Remote Sens. 2018, 10, 414. https://doi.org/10.3390/rs10030414
