A Spectral–Temporal Patch-Based Missing Area Reconstruction for Time-Series Images

Abstract

1. Introduction

- Temporal variation is inconsistent across a diverse landscape. For example, pseudo-invariant features (PIFs), such as buildings and bare land, remain stable over time, whereas vegetation cover, such as forest and cultivated farmland, exhibits distinct seasonal changes. PIFs are therefore expected to be reconstructed more accurately than temporally variant land cover.

- Reconstruction accuracy varies across bands. Each band responds differently to solar radiation, which yields different reconstruction accuracies.

- The reference pixel for the pixel with missing observations is independently selected without any consideration of neighboring pixels; improper reference pixel selection will produce a different reconstruction accuracy.

- The reconstruction residuals, which are induced by the previously mentioned factor, will produce a visual seam line (i.e., false edge) between the reconstructed part and the remaining clear part.

- A multi-temporal image segmentation strategy, which incorporates spectral homogeneity and temporal evolution consistency, is used to extract spectral–temporal patches (STPs).

- The textural information from the clear temporal-adjacent image and the spectral information from the clear part of the same image are used together to reconstruct a missing STP, which suppresses salt-and-pepper noise.

- The seam line follows the actual edges defined by the STPs rather than the original boundary between the missing region and the valid region. As demonstrated by Soille [27], the actual edges between different STPs in the image help conceal the false edge and yield a seamless image.
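The patch-level idea in the list above can be illustrated with a minimal sketch. This is not the estimator of Section 2.3, which is not reproduced here. It assumes a simple additive model in which a missing patch undergoes the same mean temporal change as its reference patch. The function name `fill_patch`, its parameters, and the toy arrays are all hypothetical.

```python
import numpy as np


def fill_patch(target, adjacent, labels, missing, patch_id, ref_id):
    """Fill the missing pixels of one patch in a single-band image.

    Hypothetical helper: the names and the additive model are assumptions
    made for illustration, not the paper's formulation.
    """
    target = np.asarray(target, dtype=float)
    adjacent = np.asarray(adjacent, dtype=float)
    hole = (labels == patch_id) & missing   # pixels to estimate
    ref = (labels == ref_id) & ~missing     # clear pixels of the reference patch
    if not hole.any() or not ref.any():
        raise ValueError("need a non-empty missing patch and a clear reference patch")
    # Mean temporal change of the reference patch between the two dates.
    offset = target[ref].mean() - adjacent[ref].mean()
    filled = target.copy()
    # Texture comes from the adjacent date; spectral level from the reference patch.
    filled[hole] = adjacent[hole] + offset
    return filled


# Toy example: patch 1 is missing in the target image, patch 2 is clear.
labels = np.array([[1, 1, 2, 2]] * 2)
adjacent = np.array([[10.0, 14.0, 30.0, 34.0],
                     [12.0, 16.0, 32.0, 36.0]])
truth = adjacent + 5.0                      # simulated uniform temporal change
missing = labels == 1
target = np.where(missing, np.nan, truth)
filled = fill_patch(target, adjacent, labels, missing, patch_id=1, ref_id=2)
```

Because every pixel in a patch receives the same offset, neighboring pixels cannot be assigned unrelated reference values, which is the intuition behind suppressing salt-and-pepper noise at the patch level.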

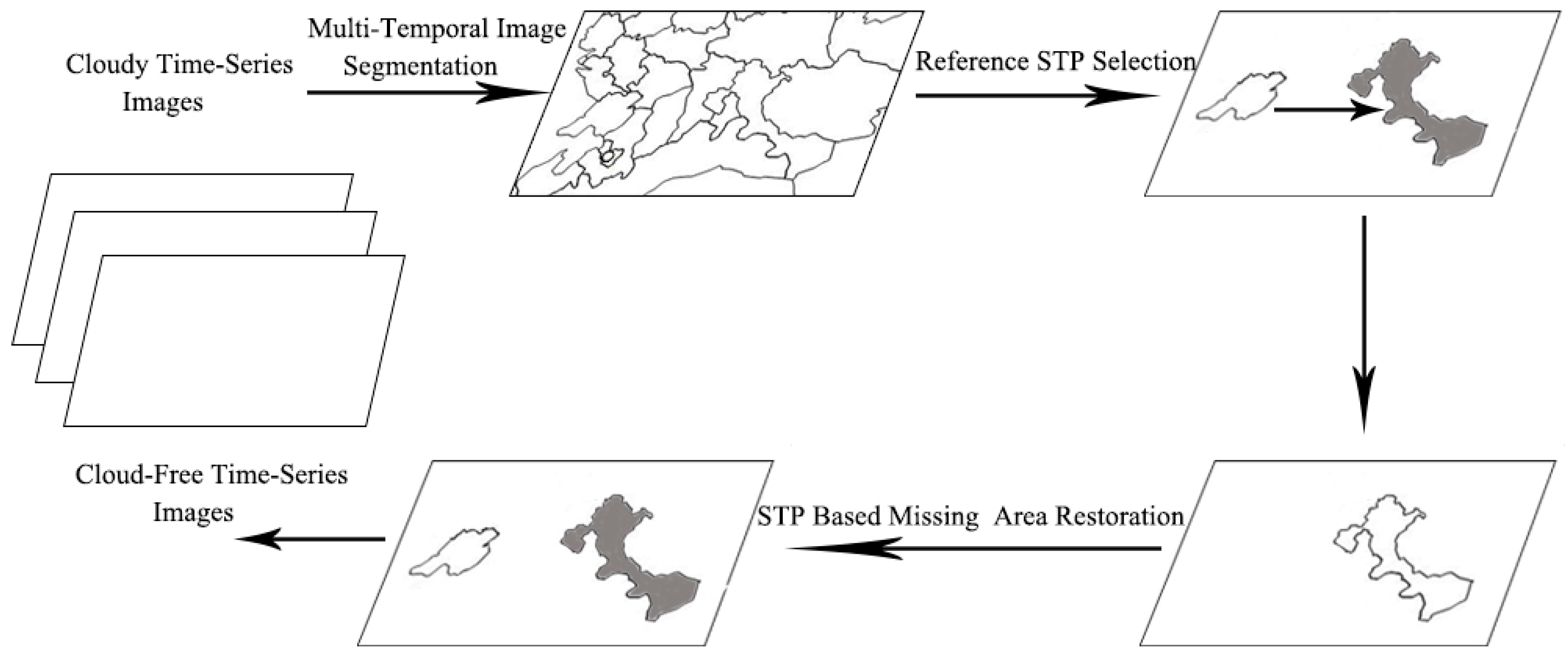

2. Methods

2.1. Multi-Temporal Image Segmentation

2.2. Reference STP Selection for the STPMO

2.3. Missing Value Estimation for STPMO

3. Experiments

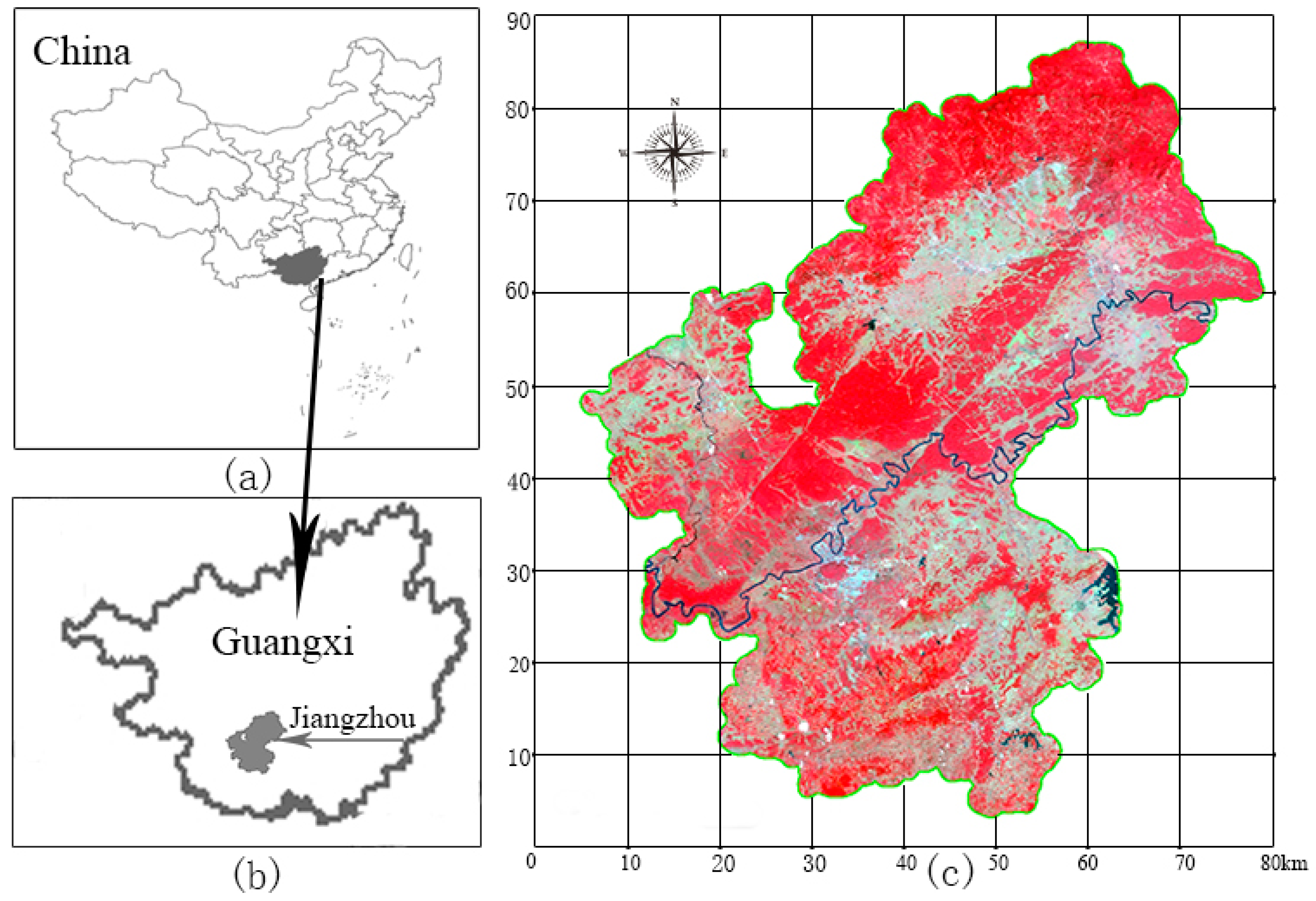

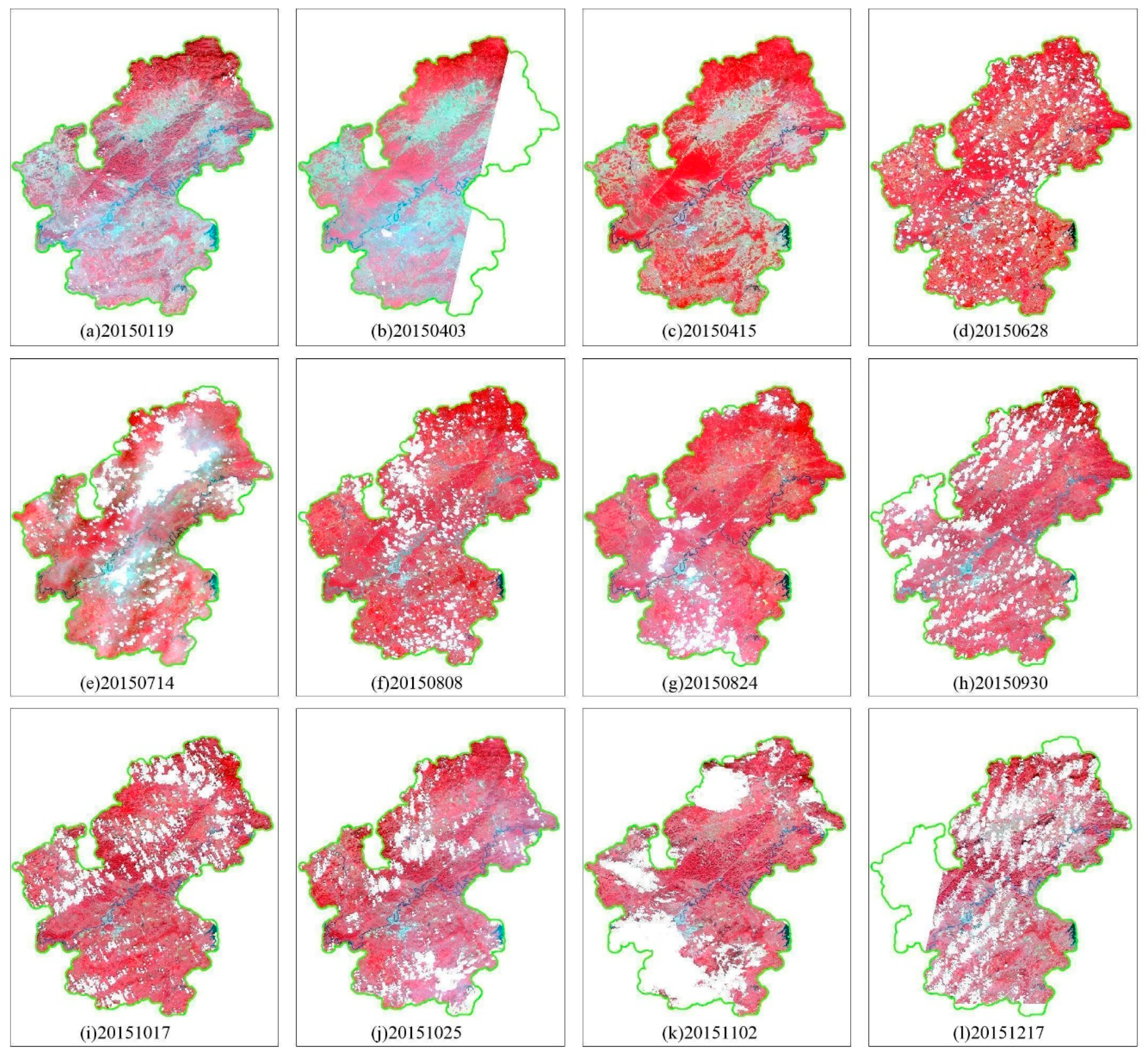

3.1. Study Area and Data

3.2. Experimental Method and Evaluation Method

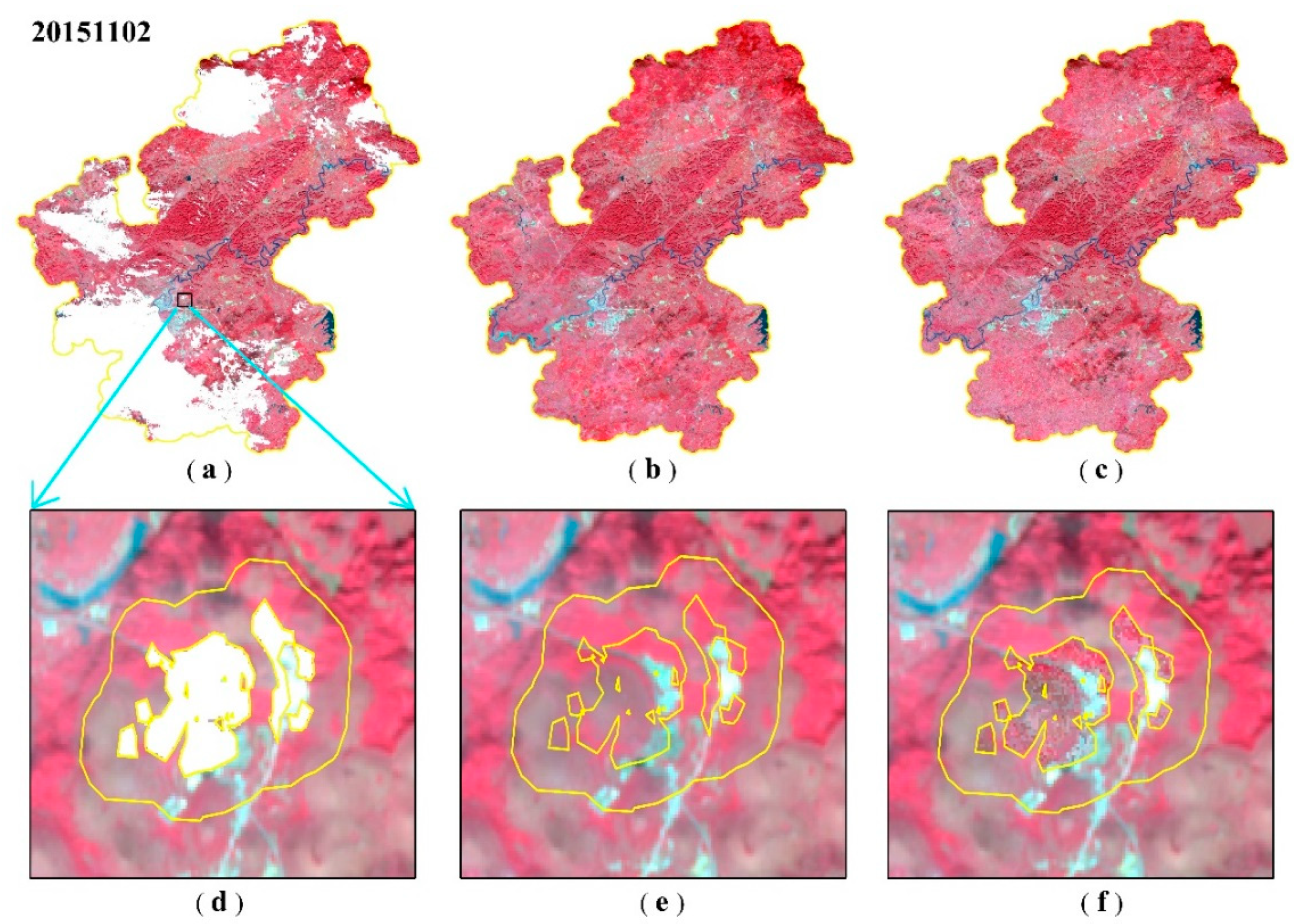

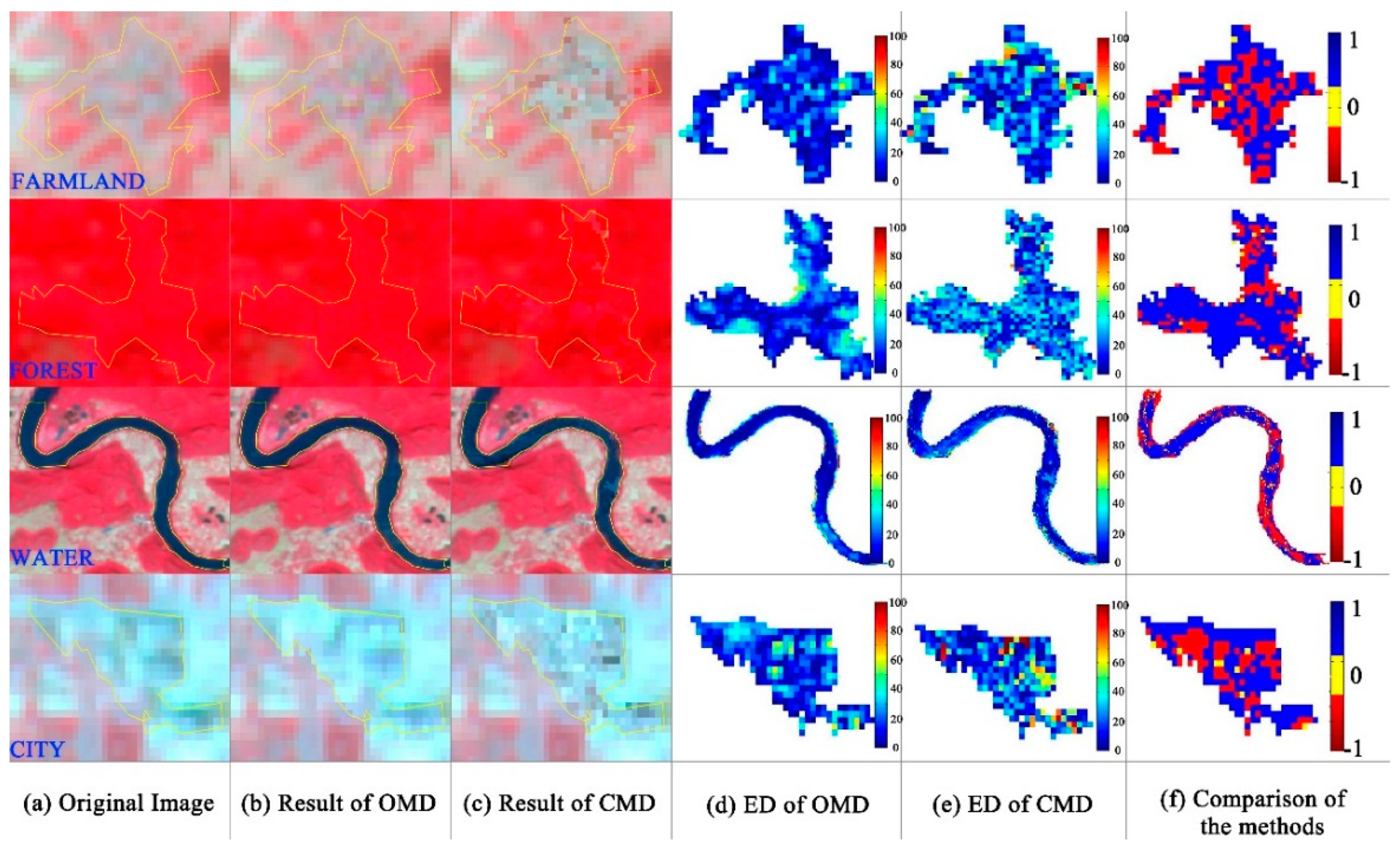

3.3. Experimental Results

4. Discussion

4.1. Factors that Influence the Accuracy of the Algorithm

- For different missing STP types. Figure 8a shows the RMSE with one missing date for four types of STPs (farmland, forest, city, and water). As expected, the highest reconstruction accuracy is obtained for the city, lower accuracy for forest and farmland, and the lowest for water. The spectral characteristics of cities change slowly over time, whereas forest and farmland exhibit relatively high temporal variation and are difficult to reconstruct. The estimation error is largest for water bodies, primarily because water is sensitive to sediment load and chlorophyll content, which do not follow a well-defined evolution process.

- For the date of the missing image. Figure 8a shows the simulated reconstruction accuracy for each date. For vegetation (forest and farmland), accuracy is strongly affected when the missing date falls in the growth period and only weakly affected when it falls in a stable period. For cities, the effect on accuracy is small because their radiation characteristics change little.

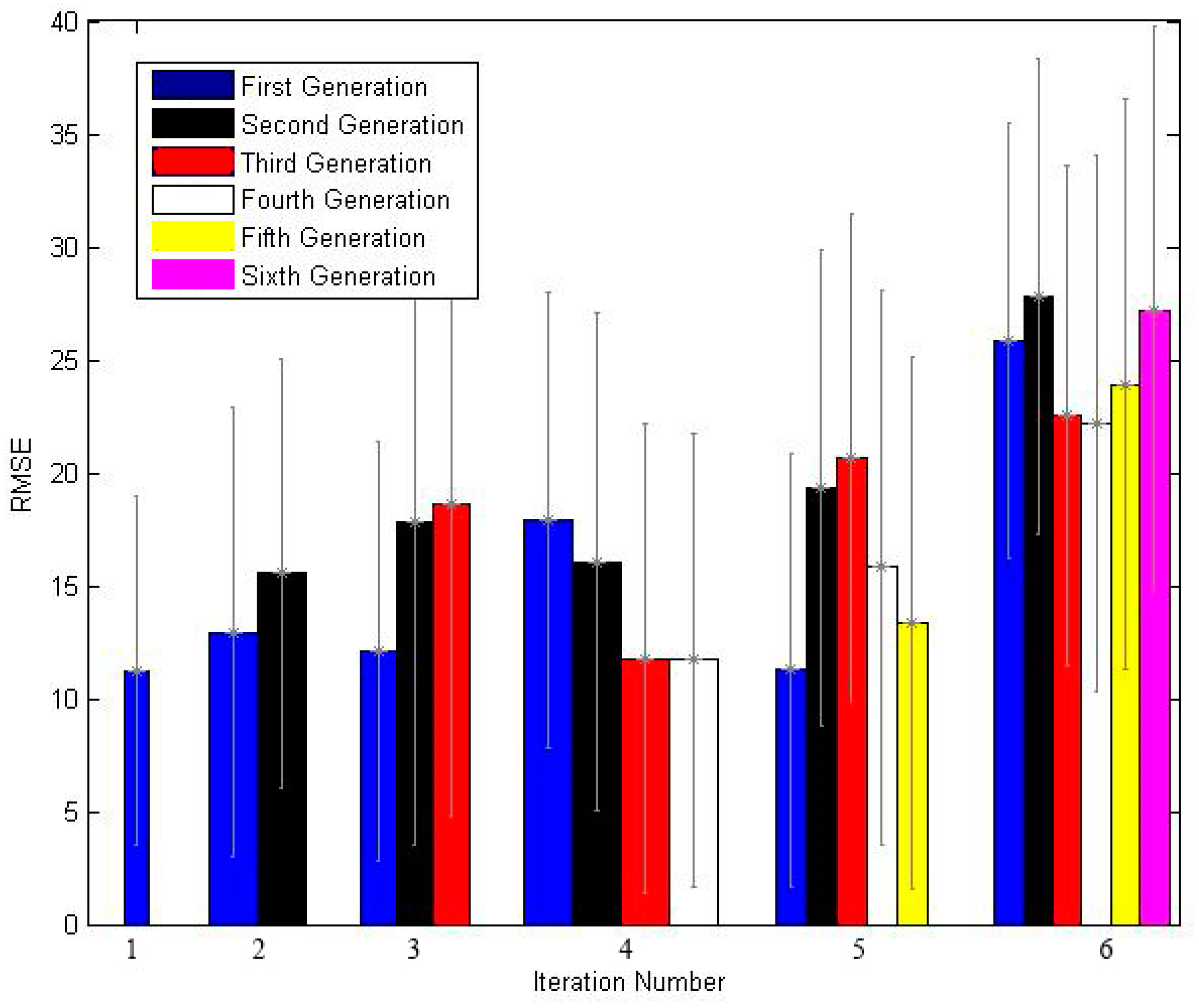

- For the number of missing observations in the time series. Figure 8b shows how the RMSE varies as the number of missing observations increases. The RMSE generally increases with the number of missing observations; thus, the reconstruction accuracy decreases. Because the method in this paper selects an entire STP as the reference STP, the accuracy of the reconstruction results is only slightly affected as long as the reference STP of the missing STP does not change. However, when the selected reference STP changes, the accuracy decreases steeply.
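The per-class comparison discussed above could be computed along the following lines. This is a minimal sketch under stated assumptions: RMSE is the usual root-mean-square error over the simulated missing pixels, CC is taken to be the Pearson correlation coefficient, and SDE is omitted because it is not defined in this section. The function names, the `class_map` array, and the toy values are hypothetical.

```python
import numpy as np


def rmse(reconstructed, reference):
    """Root-mean-square error between reconstructed and reference values."""
    diff = np.asarray(reconstructed, dtype=float) - np.asarray(reference, dtype=float)
    return float(np.sqrt(np.mean(diff ** 2)))


def correlation(reconstructed, reference):
    """Pearson correlation coefficient (assumed meaning of CC)."""
    return float(np.corrcoef(np.ravel(reconstructed), np.ravel(reference))[0, 1])


def metrics_by_class(reconstructed, reference, class_map, simulated_mask):
    """Return {class name: (RMSE, CC)} over the simulated missing pixels."""
    out = {}
    for name in np.unique(class_map[simulated_mask]):
        sel = simulated_mask & (class_map == name)
        out[str(name)] = (rmse(reconstructed[sel], reference[sel]),
                          correlation(reconstructed[sel], reference[sel]))
    return out


# Toy example with two land-cover classes (values are made up).
reference = np.array([[100.0, 110.0], [200.0, 220.0]])
reconstructed = np.array([[103.0, 107.0], [204.0, 216.0]])
class_map = np.array([["city", "city"], ["water", "water"]])
simulated_mask = np.ones_like(reference, dtype=bool)
scores = metrics_by_class(reconstructed, reference, class_map, simulated_mask)
```

Restricting the metrics to `simulated_mask` matters: pixels that were never removed are identical in both images and would otherwise deflate the RMSE.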

4.2. Error Propagation of the Early Reconstructed STP

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

- Kaufman, Y. The effect of subpixel clouds on remote sensing. Int. J. Remote Sens. 1987, 8, 839–857.

- Zhang, Y.; Guindon, B.; Cihlar, J. An image transform to characterize and compensate for spatial variations in thin cloud contamination of Landsat images. Remote Sens. Environ. 2002, 82, 173–187.

- Cai, Y.; Guan, K.; Peng, J.; Wang, S.; Seifert, C.; Wardlow, B.; Li, Z. A high-performance and in-season classification system of field-level crop types using time-series Landsat data and a machine learning approach. Remote Sens. Environ. 2018, 210, 35–47.

- Huang, C.; Goward, S.N.; Masek, J.G.; Thomas, N.; Zhu, Z.; Vogelmann, J.E. An automated approach for reconstructing recent forest disturbance history using dense Landsat time series stacks. Remote Sens. Environ. 2010, 114, 183–198.

- Dara, A.; Baumann, M.; Kuemmerle, T.; Pflugmacher, D.; Rabe, A.; Griffiths, P.; Hölzel, N.; Kamp, J.; Freitag, M.; Hostert, P. Mapping the timing of cropland abandonment and recultivation in northern Kazakhstan using annual Landsat time series. Remote Sens. Environ. 2018, 213, 49–60.

- Yan, L.; Roy, D.P. Improved time series land cover classification by missing-observation-adaptive nonlinear dimensionality reduction. Remote Sens. Environ. 2015, 158, 478–491.

- Holben, B.N. Characteristics of maximum-value composite images from temporal AVHRR data. Int. J. Remote Sens. 1986, 7, 1417–1434.

- Brooks, E.B.; Thomas, V.A.; Wynne, R.H.; Coulston, J.W. Fitting the multitemporal curve: A Fourier series approach to the missing data problem in remote sensing analysis. IEEE Trans. Geosci. Remote Sens. 2012, 50, 3340–3353.

- Vuolo, F.; Ng, W.-T.; Atzberger, C. Smoothing and gap-filling of high resolution multi-spectral time series: Example of Landsat data. Int. J. Appl. Earth Obs. Geoinf. 2017, 57, 202–213.

- Maxwell, S.K.; Schmidt, G.L.; Storey, J.C. A multi-scale segmentation approach to filling gaps in Landsat ETM+ SLC-off images. Int. J. Remote Sens. 2007, 28, 5339–5356.

- Zhang, C.; Li, W.; Travis, D.J. Restoration of clouded pixels in multispectral remotely sensed imagery with cokriging. Int. J. Remote Sens. 2009, 30, 2173–2195.

- Maalouf, A.; Carre, P.; Augereau, B.; Fernandez-Maloigne, C. A Bandelet-Based inpainting technique for clouds removal from remotely sensed images. IEEE Trans. Geosci. Remote Sens. 2009, 47, 2363–2371.

- Helmer, E.H.; Ruefenacht, B. Cloud-free satellite image mosaics with regression trees and histogram matching. Photogramm. Eng. Remote Sens. 2005, 71, 1079–1089.

- Helmer, E.H.; Ruefenacht, B. A comparison of radiometric normalization methods when filling cloud gaps in Landsat imagery. Can. J. Remote Sens. 2007, 33, 325–340.

- Melgani, F. Contextual reconstruction of cloud-contaminated multitemporal multispectral images. IEEE Trans. Geosci. Remote Sens. 2006, 44, 442–455.

- Lin, C.; Tsai, P.; Lai, K.; Chen, J. Cloud removal from multitemporal satellite images using information cloning. IEEE Trans. Geosci. Remote Sens. 2013, 51, 232–241.

- Lorenzi, L.; Melgani, F.; Mercier, G. Missing-Area reconstruction in multispectral images under a compressive sensing perspective. IEEE Trans. Geosci. Remote Sens. 2013, 51, 3998–4008.

- Zhu, X.; Gao, F.; Liu, D.; Chen, J. A modified neighborhood similar pixel interpolator approach for removing thick clouds in Landsat images. IEEE Geosci. Remote Sens. Lett. 2012, 9, 521–525.

- Cheng, Q.; Shen, H.; Zhang, L.; Yuan, Q.; Zeng, C. Cloud removal for remotely sensed images by similar pixel replacement guided with a spatio-temporal MRF model. ISPRS J. Photogramm. Remote Sens. 2014, 92, 54–68.

- Malambo, L.; Heatwole, C.D. A multitemporal profile-based interpolation method for gap filling nonstationary data. IEEE Trans. Geosci. Remote Sens. 2016, 54, 252–261.

- Yan, L.; Roy, D.P. Large-area gap filling of Landsat reflectance time series by spectral-angle-mapper based spatio-temporal similarity (SAMSTS). Remote Sens. 2018, 10, 609.

- Gao, G.; Gu, Y. Multitemporal Landsat missing data recovery based on tempo-spectral angle model. IEEE Trans. Geosci. Remote Sens. 2017, 55, 3656–3668.

- Chen, B.; Huang, B.; Chen, L.; Xu, B. Spatially and temporally weighted regression: A novel method to produce continuous cloud-free Landsat imagery. IEEE Trans. Geosci. Remote Sens. 2017, 55, 27–37.

- Pouliot, D.; Latifovic, R. Reconstruction of Landsat time series in the presence of irregular and sparse observations: Development and assessment in north-eastern Alberta, Canada. Remote Sens. Environ. 2018, 204, 979–996.

- Blaschke, T. Object based image analysis for remote sensing. ISPRS J. Photogramm. Remote Sens. 2010, 65, 2–16.

- Zomet, A.; Levin, A.; Peleg, S.; Weiss, Y. Seamless image stitching by minimizing false edges. IEEE Trans. Image Process. 2006, 15, 969–977.

- Soille, P. Morphological image compositing. IEEE Trans. Pattern Anal. Mach. Intell. 2006, 28, 673–683.

- Shapiro, L.G.; Stockman, G.C. Computer Vision; Prentice-Hall: Upper Saddle River, NJ, USA, 2001.

- Tilton, J.C.; Tarabalka, Y.; Montesano, P.M.; Gofman, E. Best merge region-growing segmentation with integrated nonadjacent region object aggregation. IEEE Trans. Geosci. Remote Sens. 2012, 50, 4454–4467.

- Li, D.; Zhang, G.; Wu, Z.; Yi, L. An edge embedded marker-based watershed algorithm for high spatial resolution remote sensing image segmentation. IEEE Trans. Image Process. 2010, 19, 2781–2787.

- Dutrieux, L.P.; Jakovac, C.C.; Latifah, S.H.; Kooistra, L. Reconstructing land use history from Landsat time-series: Case study of a swidden agriculture system in Brazil. Int. J. Appl. Earth Obs. Geoinf. 2016, 47, 112–124.

- Comaniciu, D.; Meer, P. Mean shift: A robust approach toward feature space analysis. IEEE Trans. Pattern Anal. Mach. Intell. 2002, 24, 603–619.

- Michel, J.; Youssefi, D.; Grizonnet, M. Stable mean-shift algorithm and its application to the segmentation of arbitrarily large remote sensing images. IEEE Trans. Geosci. Remote Sens. 2015, 53, 952–964.

- Desclée, B.; Bogaert, P.; Defourny, P. Forest change detection by statistical object-based method. Remote Sens. Environ. 2006, 102, 1–11.

- Van Niel, T.G.; Mcvicar, T.R. Determining temporal windows for crop discrimination with remote sensing: A case study in south-eastern Australia. Comput. Electron. Agric. 2004, 45, 91–108.

- Leng, W.; Liang, Y.; Lu, B. Climate suitability analysis for sugarcane planting in Chongzuo city. Agric. Environ. Resour. 2015, 3, 229–231. (In Chinese)

- Wang, X.; Ruan, H.; Huang, Z. Analysis on potential productivity of sugarcane in Guangxi Province Based on AEZ Model. Crops 2015, 21, 121–126. (In Chinese)

- Li, B. The present situation, problems and countermeasures of sugarcane cultivation in Guangxi. Chin. J. Trop. Agric. 2018, 38, 119–127. (In Chinese)

- Ming, D.; Li, J.; Wang, J.; Zhang, M. Scale parameter selection by spatial statistics for GeOBIA: Using mean-shift based multi-scale segmentation as an example. ISPRS J. Photogramm. Remote Sens. 2015, 106, 28–41.

| Spectral Band | Name | Band Range (µm) | Spatial Resolution (m) | Field Width (km) | Revisit Time (days) |
|---|---|---|---|---|---|
| B1 | blue | 0.45–0.52 | 16 | 800 | 2 |
| B2 | green | 0.52–0.59 | 16 | 800 | 2 |
| B3 | red | 0.63–0.69 | 16 | 800 | 2 |
| B4 | NIR | 0.77–0.89 | 16 | 800 | 2 |

| No. | Sensor | Image Acquisition Date | Missing Area (%) |
|---|---|---|---|
| 1 | WFV2 | 19 January 2015 | 1.2 |
| 2 | WFV2 | 3 April 2015 | 20 |
| 3 | WFV1 | 15 April 2015 | 0.1 |
| 4 | WFV2 | 28 June 2015 | 16.2 |
| 5 | WFV1 | 14 July 2015 | 21.2 |
| 6 | WFV2 | 8 August 2015 | 16.3 |
| 7 | WFV1 | 24 August 2015 | 9.9 |
| 8 | WFV1 | 30 September 2015 | 24.5 |
| 9 | WFV4 | 17 October 2015 | 20.8 |
| 10 | WFV2 | 25 October 2015 | 16.7 |
| 11 | WFV1 | 2 November 2015 | 31.6 |
| 12 | WFV2 | 17 December 2015 | 38.4 |

| Band | Method | Farmland RMSE | Farmland SDE | Farmland CC | Forest RMSE | Forest SDE | Forest CC | Water RMSE | Water SDE | Water CC | City RMSE | City SDE | City CC |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| NIR | OMD | 20.24 | 60.24 | 0.95 | 32.99 | 46.84 | 0.94 | 40.29 | 36.09 | 0.84 | 17.74 | 30.33 | 0.88 |
| NIR | CMD | 33.35 | 72.61 | 0.91 | 54.58 | 55.15 | 0.87 | 26.85 | 45.74 | 0.81 | 26.43 | 32.29 | 0.80 |
| Red | OMD | 20.85 | 45.29 | 0.86 | 21.34 | 18.48 | 0.85 | 40.34 | 16.31 | 0.72 | 13.26 | 36.56 | 0.97 |
| Red | CMD | 45.29 | 45.19 | 0.87 | 26.75 | 24.01 | 0.67 | 43.61 | 16.53 | 0.83 | 18.84 | 40.66 | 0.88 |
| Green | OMD | 16.81 | 22.9 | 0.69 | 8.70 | 7.39 | 0.58 | 10.5 | 11.8 | 0.61 | 9.90 | 9.13 | 0.94 |
| Green | CMD | 12.9 | 23.39 | 0.68 | 8.29 | 16.06 | 0.48 | 4.78 | 11.78 | 0.78 | 9.10 | 10.22 | 0.86 |

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Wu, W.; Ge, L.; Luo, J.; Huan, R.; Yang, Y. A Spectral–Temporal Patch-Based Missing Area Reconstruction for Time-Series Images. Remote Sens. 2018, 10, 1560. https://doi.org/10.3390/rs10101560
