3.1. Cost Overrun Prediction Model

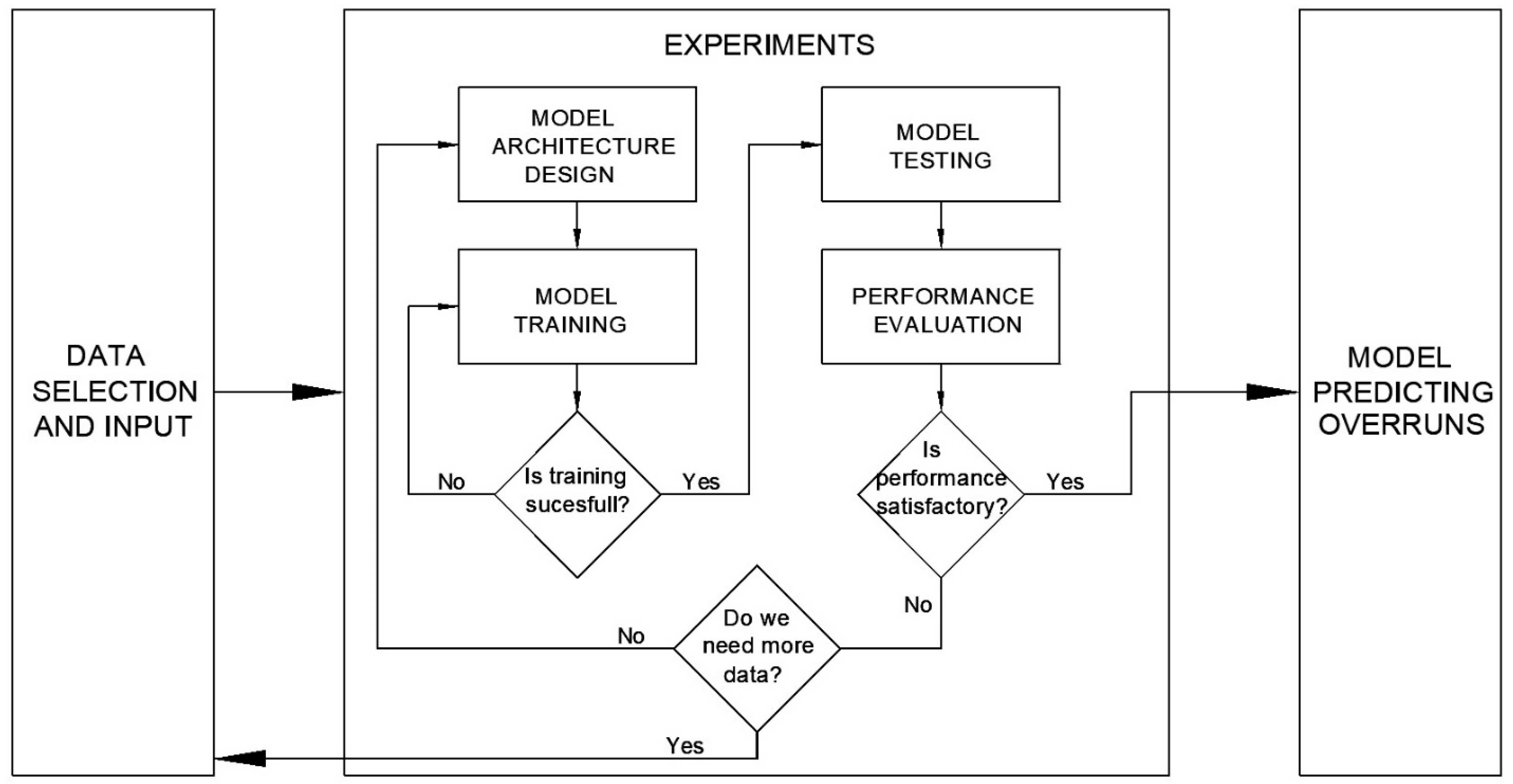

Ninety-one experiments were conducted to determine the optimal model for predicting cost overruns. Various configurations with different numbers of hidden layers and nodes were tested. The initial setup began with a single hidden layer featuring 18 nodes, progressively reducing the number of nodes by one in each subsequent experiment until only 5 nodes remained. Additionally, experiments included configurations with two hidden layers, exploring different combinations of nodes across these layers.
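The search space described above can be enumerated with a short sketch. Reducing a single hidden layer from 18 to 5 nodes gives 14 configurations, which would leave 77 two-layer configurations if these two groups account for all 91 experiments (an inference, not a figure stated in the text). The function names and the two-layer ranges below are hypothetical, since the exact combinations tested are not listed here.

```python
from itertools import product


def single_layer_configs(max_nodes=18, min_nodes=5):
    """One hidden layer, dropping one node per experiment (18 down to 5)."""
    return [(n,) for n in range(max_nodes, min_nodes - 1, -1)]


def two_layer_configs(first_sizes, second_sizes):
    """Every pairing of the supplied layer sizes.

    The sizes are caller-supplied because the text does not list which
    two-layer combinations were actually tested.
    """
    return list(product(first_sizes, second_sizes))


single = single_layer_configs()
# Hypothetical ranges, chosen only to illustrate the enumeration.
two_layer_example = two_layer_configs(range(11, 16), range(5, 11))

print(len(single))             # number of single-layer experiments
print(single[0], single[-1])   # first and last single-layer configuration
print(len(two_layer_example))  # size of the illustrative two-layer grid
```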

Across all 91 experiments, the RMSE values were relatively low, which on its own would suggest reasonably accurate predictions. However, the MAPE values were extremely high in every experiment, reaching up to 523% in some cases, which indicates very low prediction accuracy in relative terms.
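For reference, the three metrics compared in this section can be computed as in the minimal sketch below. It uses the standard textbook definitions of RMSE, MAPE, and the Pearson correlation coefficient, which are assumed (not confirmed by the text) to match the ones used in the experiments. The function names are illustrative.

```python
import math


def rmse(actual, predicted):
    """Root mean squared error, in the units of the target variable."""
    n = len(actual)
    return math.sqrt(sum((a - p) ** 2 for a, p in zip(actual, predicted)) / n)


def mape(actual, predicted):
    """Mean absolute percentage error, in percent.

    Undefined when any actual value is zero, and very large when
    actual values are close to zero.
    """
    n = len(actual)
    return 100.0 * sum(abs((a - p) / a) for a, p in zip(actual, predicted)) / n


def pearson(actual, predicted):
    """Pearson correlation coefficient between actual and predicted values."""
    n = len(actual)
    mean_a = sum(actual) / n
    mean_p = sum(predicted) / n
    cov = sum((a - mean_a) * (p - mean_p) for a, p in zip(actual, predicted))
    var_a = sum((a - mean_a) ** 2 for a in actual)
    var_p = sum((p - mean_p) ** 2 for p in predicted)
    return cov / math.sqrt(var_a * var_p)
```

Because RMSE is measured in absolute units while MAPE divides each error by the actual value, the two metrics can disagree sharply on the same set of predictions.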

Table 5 highlights 10 experiments where the MAPE values are below 180%.

The RMSE values for the 10 most successful experiments, ranging from 0.393 to 0.443, are acceptable but fell short of expectations. Although the correlation values are reasonably good, the MAPE values are too high for the models to be suitable for practical application. According to Table 3, the calculated MAPE values correspond to low prediction accuracy.

Nevertheless, the three best-performing solutions were selected for further detailed analysis and commentary (Table 6). All three models have two hidden layers. Based on the RMSE values, the best model is the first one, which has 15 nodes in the first hidden layer and 5 nodes in the second hidden layer. Based on the MAPE values, the third model, with 11 nodes in the first hidden layer and 9 nodes in the second hidden layer, performs best among the models tested. Even so, its MAPE remains exceptionally high: a value of 163.59% means that, on average, the model’s predictions deviate from the actual values by 163.59%. Such a value indicates significant inaccuracies and substantial variability in the predictions, so the model’s outputs cannot be considered reliable. The correlation metric, on the other hand, is favorable across all models, with the highest correlation observed in the model with 11 nodes in the first hidden layer and 10 nodes in the second hidden layer.

Considering all the presented parameters, the model with 11 nodes in the first hidden layer and 10 nodes in the second hidden layer was selected as the best architecture for the cost overrun prediction model at this stage of the research. Although the RMSE and correlation values suggest that the model could be capable of accurate predictions, the high MAPE indicates that significant adjustments to the model are necessary. These values may indicate that the training dataset was too small to capture sufficient variability.

3.3. Discussion

The time overrun prediction model performs significantly better than the cost overrun prediction model when applied to the same set of input data. For the optimal neural network architecture selected, the model achieves a MAPE of 10.93%, which according to Table 3 is classified as good and approaches the boundary of high accuracy (MAPE ≤ 10%). Furthermore, the correlation of 0.979 is rated as very high. These results support the efficacy of the approach taken and suggest that further refinements could enhance the model’s performance.

In recent years, numerous studies have demonstrated the effectiveness of artificial neural networks (ANNs) for predicting construction project costs and duration.

Han et al. [16] introduced a BIM-integrated cost prediction model based on a Gray BP Neural Network (PGNN) optimized with the Sparrow Search Algorithm. By extracting engineering quantities from BIM models and incorporating material prices and time-series data, their model achieved a maximum relative error of only 2.99%, an RMSE of 0.1358, and an R² of 0.9819. Similarly, Zhang and Mo [17] developed a BIM–Elman Neural Network (ENN) optimized through Particle Swarm Optimization. Their model utilized a combination of geometric, material, and design-change data from BIM and achieved a prediction accuracy exceeding 95%, with R² values above 0.95. Zhang and Zhang [18] proposed the Deep CostNet for Building Engineering Techniques (DCN-BET), a deep learning-based framework designed for real-time construction cost optimization. Leveraging historical cost records and structured project attributes, the model dynamically updated predictions as new project data became available. Liu et al. [19] introduced a hypergraph deep learning approach for predicting actual construction costs, modeling complex interdependencies among project features and economic variables. By capturing high-order relationships that traditional neural networks typically overlook, the model demonstrated a high early-stage prediction accuracy and improved generalization, particularly for multidimensional data inputs.

In addition to cost prediction, several recent studies have also explored the use of neural networks to forecast construction projects’ duration, particularly in the early planning phases. Alsugair et al. [20] proposed a straightforward feedforward ANN model using only three input variables: contract cost, duration, and sector. Despite its simplicity, the model outperformed linear regression, achieving a MAPE of 12.22%. Ji et al. [21] proposed a hybrid model combining K-means clustering, genetic algorithms, and BP neural networks to forecast residential project durations based on structured numeric inputs. Their method demonstrated a strong predictive accuracy and generalization even on a relatively small dataset. Another advanced study by Anaraki et al. [22] integrated feedforward and Long Short-Term Memory (LSTM) neural networks to capture both time-independent and sequential time-dependent variables, significantly improving the prediction quality in dynamic project environments.

All these models rely heavily on structured, quantitative data, such as contract values, floor areas, or predefined schedules, and aim to predict the total planned cost or timeline rather than track or anticipate deviations. The outputs are typically overall cost estimates or duration forecasts, with little to no integration of qualitative or stakeholder-driven project dynamics. In contrast, the models presented in this study are specifically designed to predict time and cost overruns during the execution phase, enabling more proactive risk management. Rather than forecasting the total contract duration, they aim to detect emerging delays based on technical and stakeholder performance indicators, such as dynamic plan adherence, change management, client satisfaction, and incident rates. This study recognizes that successful project delivery involves more than accurate forecasting of total cost or duration. It requires the ability to detect early signs of deviation, particularly those rooted in human factors such as team satisfaction, communication quality, and client expectations. The models were trained on a small but highly detailed dataset comprising five real-world infrastructure projects, each evaluated at three stages of execution.

While the time overrun prediction model achieved strong results (MAPE = 10.93%, RMSE = 0.128, correlation = 0.979), the cost overrun model presented challenges, with a high MAPE (166.76%) but a solid RMSE (0.4179) and correlation (0.936). These seemingly conflicting results are largely attributable to the nature of the target variable, cost overruns expressed as percentages, where very small actual cost deviations can produce disproportionately large percentage errors. This highlights a known limitation of MAPE as a metric and reinforces the importance of considering RMSE and correlation alongside it. Unlike other models that rely primarily on technical inputs, this model integrates both quantitative data and qualitative insights. This allows it to capture dimensions of projects’ performance often ignored in traditional cost models. Rather than predicting total cost or duration, the models focus on identifying potential deviations, which are more relevant during the execution phase for real-time decision-making. Furthermore, by including key performance areas like safety, quality, and team satisfaction, the model supports a more holistic, sustainability-aware view of projects’ success. Most importantly, the model emphasizes real-world relevance. Grounded in stakeholder interviews and actual case documentation, the dataset captures the lived complexity of projects’ execution, something not always reflected in BIM or structured contract data.
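The effect of small actual values on MAPE can be illustrated with a short worked example. The numbers below are hypothetical and are not taken from the study’s dataset. They only show how one sample with a near-zero actual overrun can dominate the percentage error while the absolute errors stay small.

```python
import math

# Hypothetical cost overruns in percent; not taken from the study's dataset.
actual = [0.5, 2.0, 10.0, 25.0]
predicted = [1.5, 2.5, 10.5, 24.5]

# Absolute error per sample, and the same error relative to the actual value.
abs_errors = [abs(a - p) for a, p in zip(actual, predicted)]
pct_errors = [100.0 * e / abs(a) for e, a in zip(abs_errors, actual)]

rmse = math.sqrt(sum(e ** 2 for e in abs_errors) / len(abs_errors))
mape = sum(pct_errors) / len(pct_errors)

for a, e, pe in zip(actual, abs_errors, pct_errors):
    print(f"actual={a:5.1f}  abs_error={e:.1f}  pct_error={pe:6.1f}%")
print(f"RMSE={rmse:.3f}  MAPE={mape:.1f}%")
```

In this example, every absolute error is at most one percentage point and the RMSE is about 0.66, yet the MAPE is 58% because the first sample alone contributes a 200% error.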

In summary, while existing studies have shown excellent predictive accuracy, they typically operate in well-defined, data-rich environments and aim to estimate final cost and duration values. This research, though facing challenges in prediction accuracy (particularly with MAPE in the cost overrun prediction model), offers a more dynamic and context-sensitive model. It reflects the realities of construction projects’ execution and contributes a novel perspective by integrating subjective performance indicators and focusing on overruns rather than static totals. These attributes make it highly relevant for advancing proactive, sustainability-informed construction project management.

Although the current progress in developing cost and time overrun prediction models is promising, the models do not yet achieve the desired predictive accuracy. Since MAPE is crucial for assessing a model’s accuracy, the high MAPE of the cost overrun model (163.59% even in the best-scoring experiment) clearly indicates significant deviations and a lack of reliability in practical applications. Significant improvements are therefore required to enhance practical applicability, namely expanding the dataset and improving the standardization methods to ensure greater stability and accuracy in predictions. The identified optimal models provide a foundation for future research aimed at advancing the accuracy and utility of cost and time predictions in construction projects. It is anticipated that continued research efforts will yield substantially better results.

Given the comparisons with other models in the literature, it is evident that while the developed models show promise, further adjustments and optimizations are required. The current model, based on a dataset of five projects, has offered valuable insights into its performance. However, its scalability to larger datasets and diverse project types remains uncertain. To address this, future research will focus on expanding the dataset within the defined project selection attributes and retraining the model on a broader sample to empirically test its robustness and generalization capacity. Future work may also involve increasing the number of training experiments to better explore the space of possible neural network architectures, parameters, and hyperparameters. Furthermore, the model can be fine-tuned by adjusting input data attributes to examine how reducing the number of attributes impacts the model’s predictive performance. In this context, feature selection techniques will be applied to systematically identify the most relevant input variables, using both algorithmic methods and domain-specific knowledge to retain the most informative and practically significant features. Although ANN was the sole modeling technique explored in this study, future work will consider additional algorithms to determine whether comparable or improved results can be achieved with alternative methods.

While the current models were developed using a relatively small, domain-specific dataset, their underlying architecture is adaptable to larger and more heterogeneous data sources. Scaling to broader datasets would involve fine-tuning of hyperparameters and potentially deeper architectures to capture greater variability. Once the database is expanded, the models could also be explored in the context of transfer learning, where knowledge gained from construction-related data could be adapted to other industries with similar project execution characteristics.

In parallel, one of the key challenges identified during this study relates to models’ evaluation and interpretability. The high MAPE values in the cost overrun model, despite an acceptable RMSE and correlation, limit the model’s immediate applicability in practice. In real-world project environments, such inconsistency in prediction accuracy can undermine key project stakeholders’ trust and reduce the model’s usefulness as a decision-support tool. Until further improvements are made, this model should be used with caution, ideally in combination with expert judgment. Identifying this challenge highlights the importance of carefully selecting performance measures and considering their limitations when applying predictive models in complex construction scenarios. Future research will therefore consider integrating alternative metrics such as Symmetric Mean Absolute Percentage Error (SMAPE), Mean Absolute Error (MAE), and Mean Squared Error (MSE), which can offer a more balanced view of models’ performance.
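The alternative metrics named above could be computed as in the following minimal sketch. SMAPE has several definitions in the literature. The variant used here divides each absolute error by the mean of the absolute actual and predicted values, which bounds the result between 0% and 200%. This choice is an assumption, as are the function names.

```python
def mae(actual, predicted):
    """Mean absolute error, in the units of the target variable."""
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def mse(actual, predicted):
    """Mean squared error, in squared units of the target variable."""
    return sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual)


def smape(actual, predicted):
    """Symmetric MAPE in percent (0 to 200 under this definition).

    A pair where both values are zero contributes no error, which
    avoids a division by zero.
    """
    total = 0.0
    for a, p in zip(actual, predicted):
        denom = (abs(a) + abs(p)) / 2.0
        if denom > 0:
            total += abs(a - p) / denom
    return 100.0 * total / len(actual)
```

Unlike MAPE, this SMAPE variant cannot exceed 200%, so a single near-zero actual value has a bounded effect on the average.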