State Estimation and Remaining Useful Life Prediction of PMSTM Based on a Combination of SIR and HSMM

Abstract

1. Introduction

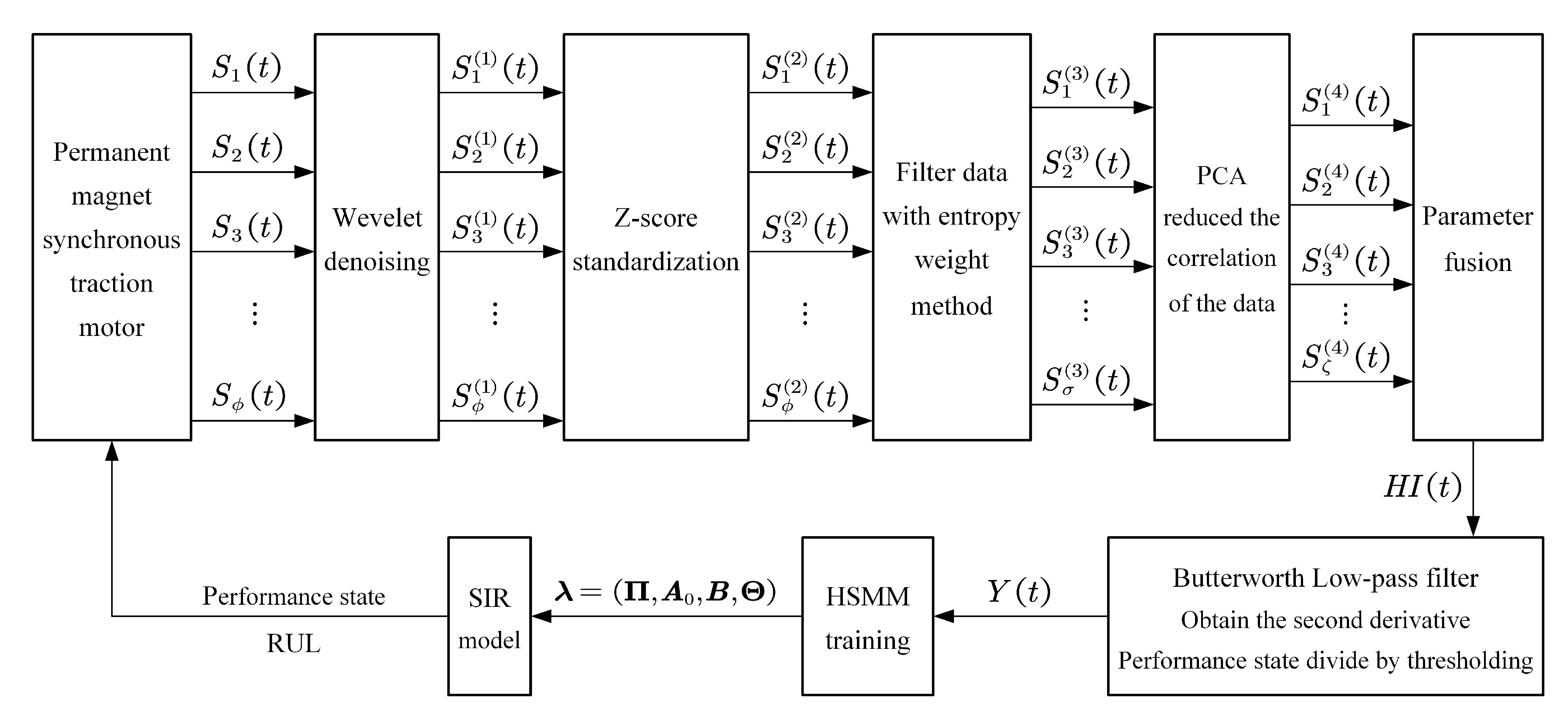

2. MFHI Construction

2.1. Wavelet Denoising

2.2. Filter Data with Entropy Weight Method

2.3. PCA Reduces the Correlation of the Data

- Calculate the covariance matrix of the data.

- Calculate the eigenvalues of the covariance matrix, sort them from largest to smallest, and obtain the corresponding eigenvectors.

- Obtain the principal components by projecting the data onto the eigenvectors.

- Sort the principal components and compute the cumulative contribution rate.
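The PCA steps above can be sketched with NumPy as follows. This is a minimal illustration rather than the authors' implementation: the function name `pca_components`, the centering step, and the 0.85 cumulative contribution threshold are assumptions introduced here, and the cumulative contribution rate is taken in its usual form, the running sum of the sorted eigenvalues divided by their total.

```python
import numpy as np

def pca_components(data, threshold=0.85):
    """Hypothetical helper: data has shape (n_samples, n_features), n_features >= 2.

    Returns the retained principal component scores, the cumulative
    contribution rate of all components, and the number retained.
    """
    data = np.asarray(data, dtype=float)
    centered = data - data.mean(axis=0)           # centering is an assumption
    cov = np.cov(centered, rowvar=False)          # covariance matrix of the data
    eigvals, eigvecs = np.linalg.eigh(cov)        # eigh returns ascending eigenvalues
    order = np.argsort(eigvals)[::-1]             # sort from largest to smallest
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    scores = centered @ eigvecs                   # principal components
    cumulative = np.cumsum(eigvals) / np.sum(eigvals)
    # Smallest number of components whose cumulative rate reaches the threshold.
    k = int(np.searchsorted(cumulative, threshold)) + 1
    k = min(k, len(eigvals))
    return scores[:, :k], cumulative, k
```

Because the eigenvectors of a covariance matrix are orthogonal, the retained score columns are mutually uncorrelated, which is the property the section title refers to.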

2.4. Parameters Fusion

3. State Estimation and RUL Prediction Combining SIR and HSMM

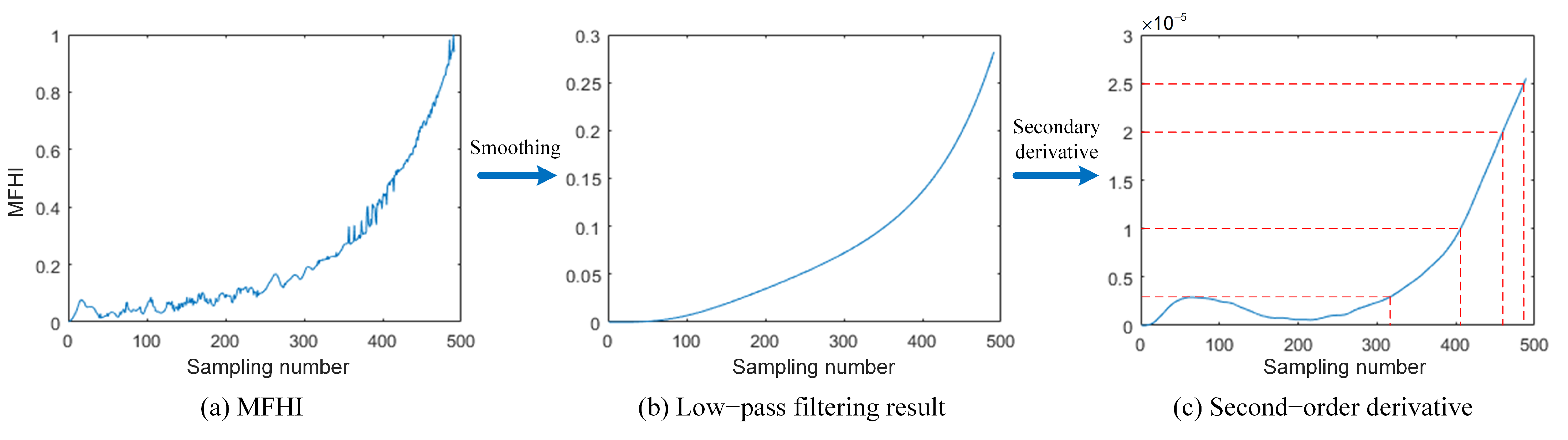

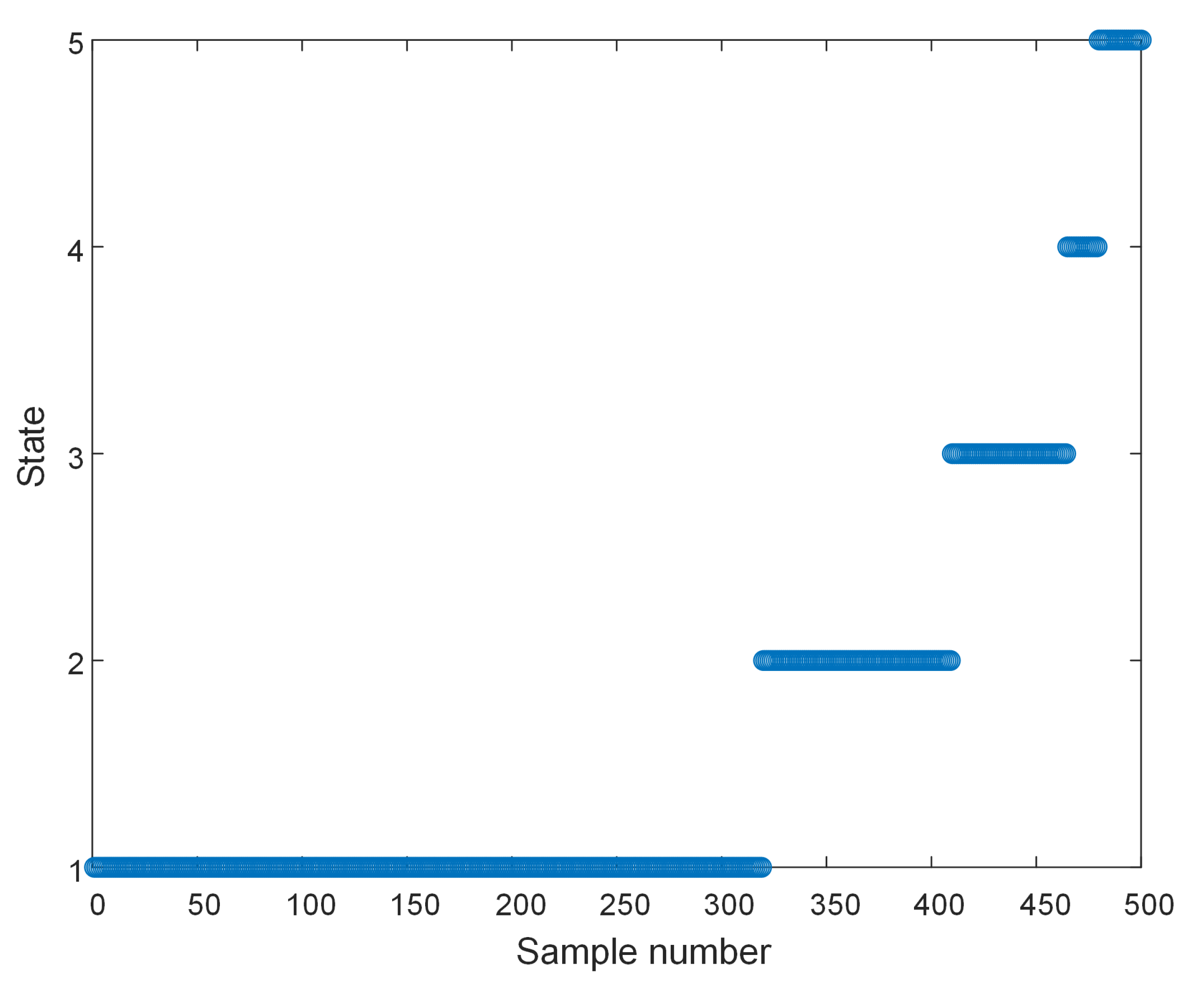

3.1. Observation Sequence Acquisition

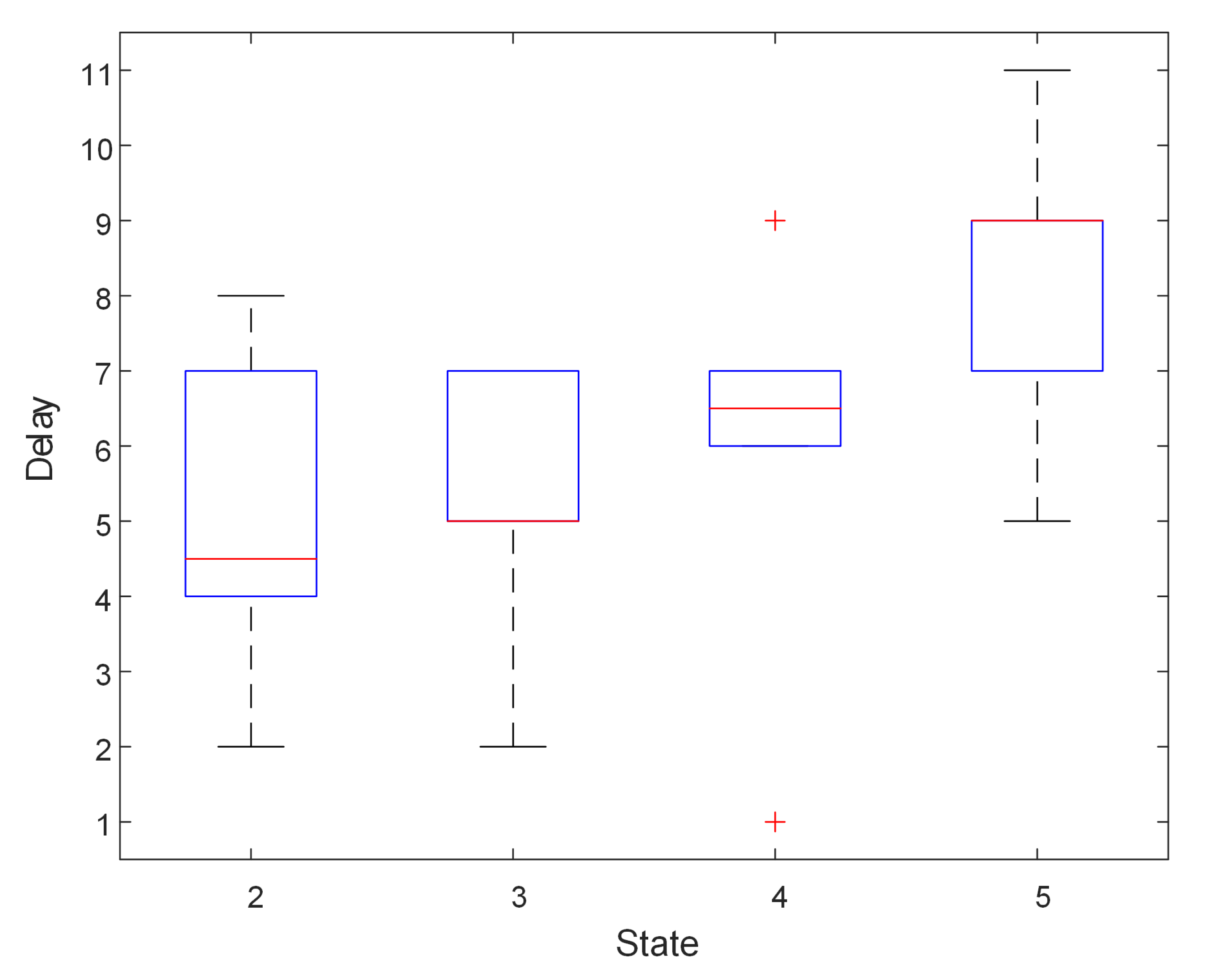

3.2. HSMM Training

- The initial state probability distribution, which gives the probability of each health state of the PMSTM at the initial moment.

- The state transition probability matrix, which represents the probability of transition between states during the operation of the PMSTM. Each entry is the probability that the PMSTM transitions from state i to state j during operation.

- The observed state probability matrix, where Q is the number of observable states of the PMSTM and each entry is the probability of observing the qth observable state given the health state.

- The dwell time distribution for each state, described by the parameters of its probability density function, which include the mean value and the proportion of the different states.

- Using the current model parameters, the conditional expectation needed for the parameter update is obtained by combining the Viterbi algorithm, the forward algorithm and the backward algorithm.

- According to the current observation sequence, the most likely hidden state sequence is obtained by the Viterbi algorithm. Calculate the local state at the initial moment, then recurse over time. The maximum at time t is the probability of the most likely hidden state. An auxiliary variable stores the optimal state of the PMSTM at the previous time step, given that the state at time t is j. Backtracking then yields the sequence of hidden states, from which the dwell time in each state can be obtained.

- Calculate variables using the forward and backward algorithms. Calculate the forward probability of each hidden state at the initial moment and recurse to obtain the forward variable. Calculate the backward variable using the backward algorithm, and obtain the conditional expectation from the forward and backward variables.

- The parameters of the model can be updated by maximizing the expected value, which yields the equations for updating the model parameters.

Algorithm 1: HSMM training procedure.
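The decoding and dwell time extraction used during training can be sketched as follows. This is a simplified illustration under stated assumptions: it implements the standard Viterbi recursion for a discrete-observation model in log space, not an explicit-duration HSMM decoder, and the names `viterbi` and `dwell_times` are hypothetical. States and observations are assumed to be integer-coded from 0.

```python
import numpy as np

def viterbi(pi, A, B, obs):
    """Most likely hidden state path for an integer-coded observation sequence.

    pi: initial state probabilities, A: state transition matrix,
    B: observation probability matrix of shape (n_states, n_observations).
    """
    tiny = 1e-300                                  # avoids log(0)
    log_pi = np.log(np.asarray(pi, dtype=float) + tiny)
    log_A = np.log(np.asarray(A, dtype=float) + tiny)
    log_B = np.log(np.asarray(B, dtype=float) + tiny)
    n_states, T = len(log_pi), len(obs)
    delta = np.empty((T, n_states))                # best log-probability ending in each state
    psi = np.zeros((T, n_states), dtype=int)       # auxiliary variable: best previous state
    delta[0] = log_pi + log_B[:, obs[0]]           # local state at the initial moment
    for t in range(1, T):
        scores = delta[t - 1][:, None] + log_A     # scores[i, j]: arrive in j from i
        psi[t] = np.argmax(scores, axis=0)
        delta[t] = scores[psi[t], np.arange(n_states)] + log_B[:, obs[t]]
    path = np.empty(T, dtype=int)
    path[-1] = int(np.argmax(delta[-1]))
    for t in range(T - 2, -1, -1):                 # backtracking
        path[t] = psi[t + 1, path[t + 1]]
    return path

def dwell_times(path):
    """Run lengths of the decoded path as a list of (state, duration) pairs."""
    runs, start = [], 0
    for t in range(1, len(path) + 1):
        if t == len(path) or path[t] != path[start]:
            runs.append((int(path[start]), t - start))
            start = t
    return runs
```

The run lengths returned by `dwell_times` are the kind of per-state durations from which a dwell time distribution could then be fitted.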

3.3. Recurrent Estimation of Current Health State

- Generate an initial particle set according to the state probability distribution at the initial moment.

- State transition (prediction): According to the particle set obtained at the previous time step, the particle set of the state at time t is obtained through the state transition probability matrix.

- Calculate particle weights (update): According to the observed value at time t and the observed state probability matrix, the weight of each predicted particle is obtained.

- Normalize the calculated weight of each particle so that the weights sum to one.

- State estimation: Calculate the estimated value of the current health state according to the particle set at time t and the weight of each particle.

- Resampling: Calculate the number of effective particles according to the normalized weight of each particle, and resample and update the particle set as the particle set for state estimation at the next moment. The effective particle number is commonly calculated as the reciprocal of the sum of the squared normalized weights.

- State transition probability matrix update: Calculate a new state transition probability matrix according to the residence time of each health state of the PMSTM.
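One recursion of the prediction, update, estimation and resampling steps above can be sketched as follows. This is a minimal sketch under assumptions, not the authors' code: the function name `sir_step`, the resampling threshold of half the particle count, multinomial resampling, and the use of the highest-posterior state as the estimate are all choices made here for illustration. The dwell-time-based update of the transition matrix is not covered.

```python
import numpy as np

def sir_step(particles, weights, A, B, obs, rng, resample_ratio=0.5):
    """One SIR recursion over integer-coded health states (hypothetical helper).

    particles: int array of current states, weights: normalized weights,
    A: state transition matrix, B: observation probability matrix,
    obs: integer-coded observation at time t, rng: numpy Generator.
    """
    A, B = np.asarray(A, dtype=float), np.asarray(B, dtype=float)
    n, n_states = len(particles), A.shape[0]
    # Prediction: draw each particle's next state from its row of A (inverse CDF).
    cum = np.cumsum(A, axis=1)
    u = rng.random(n)
    predicted = (u[:, None] >= cum[particles]).sum(axis=1)
    predicted = np.minimum(predicted, n_states - 1)
    # Update: weight each predicted particle by the observation probability.
    w = weights * B[predicted, obs]
    total = w.sum()
    w = w / total if total > 0 else np.full(n, 1.0 / n)   # normalization
    # Estimation: weighted posterior over states; the estimate is its mode (assumption).
    posterior = np.bincount(predicted, weights=w, minlength=n_states)
    estimate = int(np.argmax(posterior))
    # Resampling: effective particle number, then multinomial resampling if it is low.
    n_eff = 1.0 / np.sum(w ** 2)
    if n_eff < resample_ratio * n:
        idx = rng.choice(n, size=n, p=w)
        predicted, w = predicted[idx], np.full(n, 1.0 / n)
    return predicted, w, posterior, estimate, n_eff
```

Called once per observation, the function returns the particle set and weights to pass into the next call, together with the state posterior, the point estimate, and the effective particle number computed before any resampling.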

3.4. RUL Prediction

- Calculate the remaining time of the current state. An estimate of the remaining time of the PMSTM in this state can be obtained by weighted summation.

- Calculate the remaining time of the subsequent states. Calculate the next state according to the initial state transition probability matrix until the failure state is reached. The state with the highest probability is taken as the state that may appear at the next moment. If the predicted state is the failure state, the PMSTM will fail when the dwell time is reached. Calculate the remaining time in each state.
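The two RUL steps above can be sketched as follows. Several details are assumptions made for illustration and are not confirmed by the text: the weighted summation is taken over per-particle dwell time samples, self-transitions are excluded when picking the next state, each subsequent state contributes its mean dwell time, and failure is taken to occur on entry into the failure state. The names `remaining_in_current_state` and `predict_rul` are hypothetical.

```python
import numpy as np

def remaining_in_current_state(dwell_samples, weights, elapsed):
    """Weighted sum of the time left in the current state (assumed form)."""
    left = np.maximum(np.asarray(dwell_samples, dtype=float) - elapsed, 0.0)
    return float(np.sum(np.asarray(weights, dtype=float) * left))

def predict_rul(current_state, remaining_current, mean_dwell, A, failure_state):
    """Remaining time in the current state plus mean dwell of the states before failure."""
    A = np.asarray(A, dtype=float)
    rul, state, visited = float(remaining_current), current_state, {current_state}
    while state != failure_state:
        probs = A[state].copy()
        probs[state] = 0.0                    # the unit must leave the current state
        nxt = int(np.argmax(probs))           # most probable next state
        if probs[nxt] <= 0.0 or nxt in visited:
            raise ValueError("no forward path to the failure state")
        if nxt == failure_state:              # assumption: failure on entry
            break
        rul += float(mean_dwell[nxt])
        visited.add(nxt)
        state = nxt
    return rul
```

With a left-to-right transition matrix, the loop simply walks through the remaining degradation stages in order and accumulates their expected durations.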

4. Proposed Method

5. Experimental Details and Analysis of Results

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| DCNN | Deep convolutional neural network |
| GMM | Gaussian mixture model |
| HI | Health index |
| HMM | Hidden Markov model |
| HSMM | Hidden Semi-Markov model |
| ISOMAP | Isometric mapping |
| LPP | Lifetime prediction performance |
| MFHI | Multi-parameter fusion health index |
| PCA | Principal component analysis |
| PMSTM | Permanent magnet synchronous traction motor |
| PSO | Particle swarm optimization |
| RMSE | Root mean square error |
| RNN | Recurrent neural network |
| RUL | Remaining useful life |
| SIR | Sample importance resampling |
| SNR | Signal-to-noise ratio |
| SVM | Support vector machine |
| URT | Urban rail transit |


| State | Normal | Mild Degradation | Moderate Degradation | Severe Degradation | Failure |
|---|---|---|---|---|---|
| Label | 1 | 2 | 3 | 4 | 5 |

| Signal Number | Hard Threshold SNR (dB) | Hard Threshold RMSE | Soft Threshold SNR (dB) | Soft Threshold RMSE | Fixed Threshold SNR (dB) | Fixed Threshold RMSE |
|---|---|---|---|---|---|---|
| 1 | 22.6290 | 1.7373 | 22.6290 | 1.7373 | 22.6290 | 1.7373 |
| 2 | 17.7301 | 72.3660 | 17.7301 | 72.3660 | 17.9301 | 72.3662 |
| 3 | 18.7183 | 5.4896 | 18.7183 | 5.4896 | 18.7183 | 5.4899 |
| 4 | 28.8169 | 295.2581 | 28.9579 | 290.4806 | 28.9879 | 289.4699 |
| 5 | 58.7933 | 2.7439 | 58.7933 | 2.7439 | 58.7933 | 2.7437 |
| 6 | 17.5773 | 69.3978 | 17.5773 | 69.3978 | 17.5773 | 69.3973 |
| 7 | 21.9337 | 3.1351 | 21.9337 | 3.1351 | 21.9335 | 3.1351 |
| 8 | 17.7781 | 181.0096 | 18.5190 | 166.2539 | 18.8581 | 159.9033 |

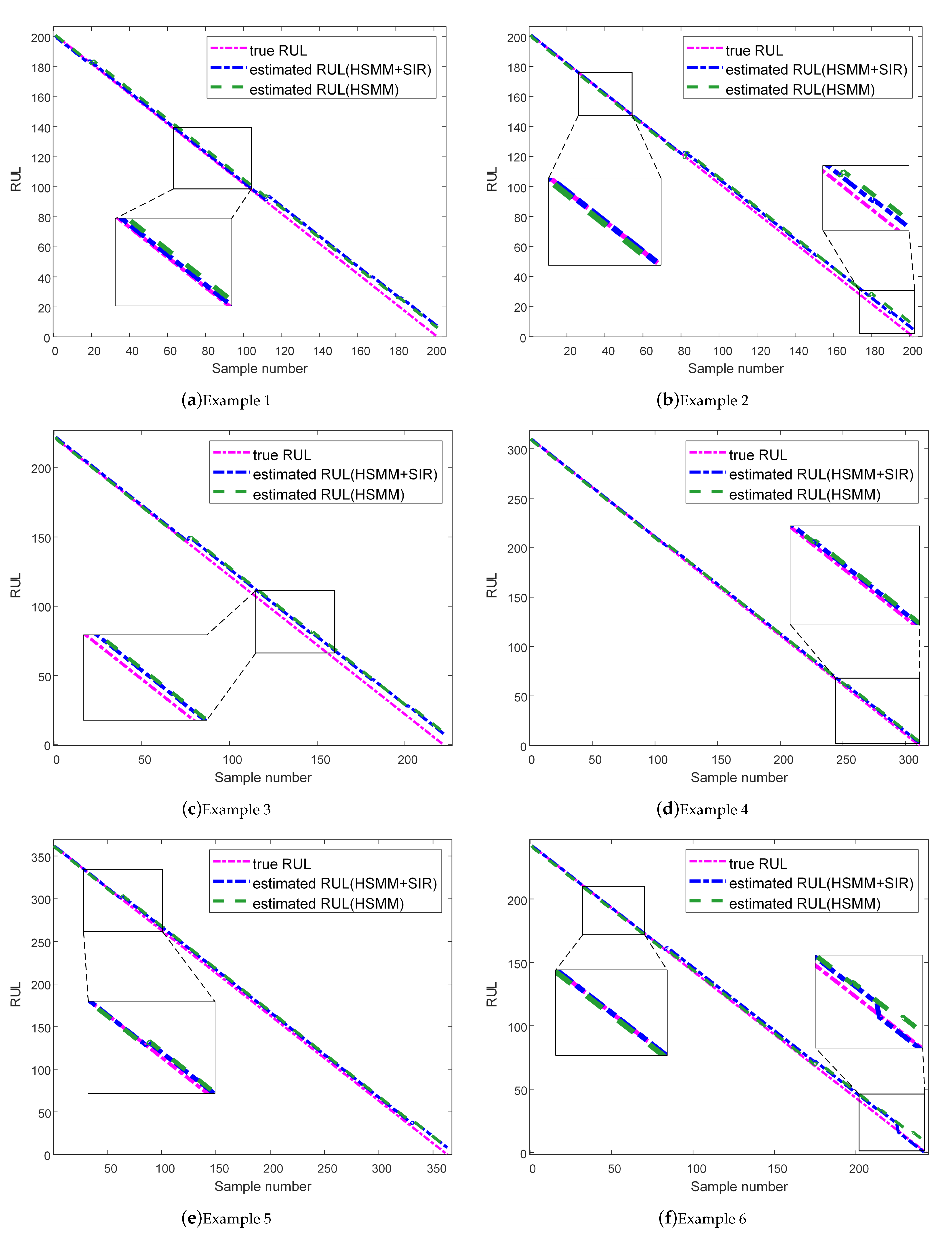

| Method | Index | 1 | 2 | 3 | 4 | 5 | 6 |
|---|---|---|---|---|---|---|---|
| HSMM+SIR | LPP | 95.16% | 93.33% | 90.00% | 96.95% | 95.16% | 96.48% |
| HSMM+SIR | RMSE | 3.2484 | 2.7805 | 4.9477 | 1.8166 | 4.9333 | 2.6907 |
| HSMM | LPP | 95.00% | 92.33% | 90.00% | 95.86% | 95.16% | 91.79% |
| HSMM | RMSE | 3.7523 | 3.7951 | 5.5617 | 2.2050 | 4.1889 | 3.1714 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Tian, G.; Wang, S.; Shi, J.; Qiao, Y. State Estimation and Remaining Useful Life Prediction of PMSTM Based on a Combination of SIR and HSMM. Sustainability 2022, 14, 16810. https://doi.org/10.3390/su142416810