Energy Efficiency of Personal Computers: A Comparative Analysis

Abstract

1. Introduction

- Improvements in the management of systems and data centers aimed at optimizing energy efficiency. For example, as suggested in [15], energy consumption in data centers can be reduced by keeping the number of powered-on servers to a minimum and switching unused servers off or into low-power mode.

- Widespread adoption of more efficient technologies. An example is the change from Hard Disk Drive (HDD) to Solid State Drive (SSD) technology, which has enabled a significant reduction in the energy consumption of mass data storage. More efficient technologies are also sought to reduce the environmental impact of the design and manufacturing phases of ICT resources [16].

- Changes in scale. A clear example is the move from smaller to larger data centers, the so-called hyperscale centers (such as Google Cloud, Amazon Web Services, Microsoft Azure, OVHCloud, or Rackspace Open Cloud). In larger centers, power consumption can be better managed. Air conditioning is among the most important factors in data center energy consumption, so it is cost-effective to site hyperscale centers where climatic conditions are more favorable. For example, one of Google’s largest data centers is located in Finland, where, because of the cold Nordic climate, air conditioning costs are lower than in warmer countries. This center uses the icy seawater of the Gulf of Finland to completely cool all its facilities [17]. This concept also includes proposals for energy-autonomous data centers [18].

2. Materials and Methods

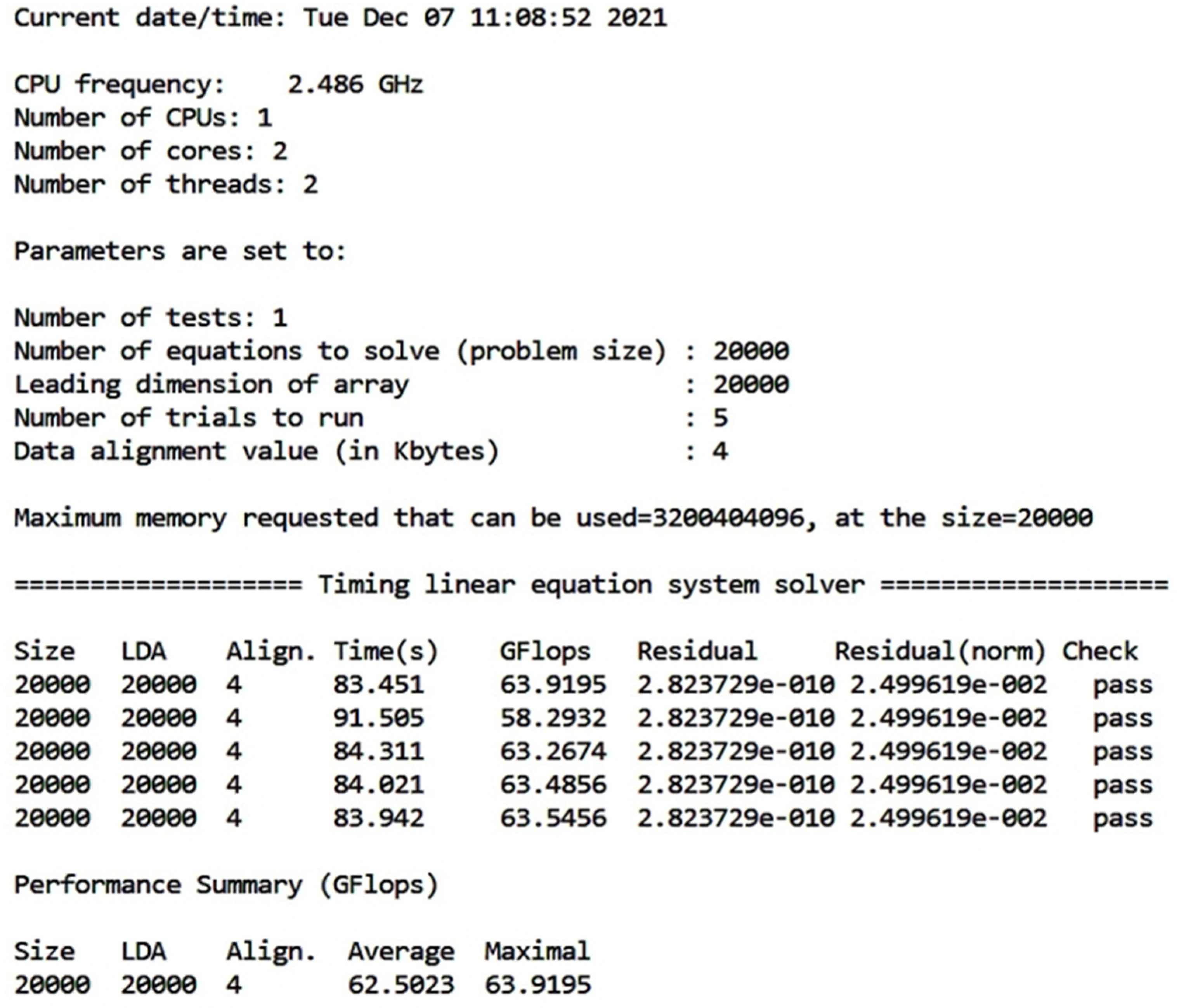

2.1. Reference Application

- N = 15,000 linear equations (Quick, 2 GB benchmark),

- N = 20,000 linear equations (Standard, 4 GB benchmark) or

- N = 32,000 linear equations (Extended, 8 GB benchmark).
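The problem sizes above can be related to memory footprint and run time with a short calculation. The sketch below assumes double-precision (8-byte) matrix elements and the nominal Linpack operation count of 2/3·N³ + 2·N²; whether Linpack Xtreme accounts for operations in exactly this way is an assumption, and the function names are illustrative only.

```python
def linpack_flops(n: int) -> float:
    """Nominal floating-point operation count for solving a dense n x n system."""
    return (2.0 / 3.0) * n**3 + 2.0 * n**2


def matrix_gib(n: int, bytes_per_element: int = 8) -> float:
    """Memory taken by the n x n coefficient matrix, in GiB (double precision assumed)."""
    return n * n * bytes_per_element / 2**30


def expected_seconds(n: int, gflops: float) -> float:
    """Run time implied by a sustained rate given in GFLOPS."""
    return linpack_flops(n) / (gflops * 1e9)


if __name__ == "__main__":
    for n in (15_000, 20_000, 32_000):
        print(f"N={n}: matrix {matrix_gib(n):.2f} GiB, {linpack_flops(n):.3e} operations")
```

Under these assumptions, a sustained rate of about 73 GFLOPS on the N = 20,000 problem corresponds to a trial of roughly 73 s, which is the order of magnitude of the trial times reported in the results tables.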

2.2. Energy Consumption Measurement Tools

- By using external power meters (wattmeters, ammeters, voltmeters) placed in series with the power cable between the system and the wall outlet. These meters provide no granularity, since only the overall power consumption of the system is measured, and are therefore inadequate for a detailed analysis.

- By using separate metering hardware connected inside the system by the user. This additional hardware can include devices such as current sensors, current clamps, data acquisition cards, and microcontrollers. Measurements can be performed, for example, on the different DC supply lines leaving the system power supply. Because these lines feed different parts of the computing system (motherboard, disk, etc.), a certain degree of granularity is obtained, although it is not possible to obtain measurements inside the chip.

- By using counters and hardware registers that are included as utilities or interfaces by the processor manufacturers for thermal and power management. With this type of interface, it is possible, for example, to develop tools to control the operation (ON/OFF) of fans or to monitor power consumption. An example is the RAPL (Running Average Power Limit) interface introduced by Intel in their Sandy Bridge processor architecture.
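As an illustration of the third approach, the sketch below reads a RAPL energy counter twice and converts the difference into average power. It assumes a Linux system exposing the powercap sysfs files `energy_uj` and `max_energy_range_uj` under `/sys/class/powercap/intel-rapl:0`; this is not the tool used in this work (which runs on Windows), and reading these files may require elevated privileges.

```python
import time
from pathlib import Path

# Assumed location of the package-0 RAPL domain in the Linux powercap interface.
RAPL_DIR = Path("/sys/class/powercap/intel-rapl:0")


def read_uj(name: str) -> int:
    """Read one integer value (microjoules) from the assumed RAPL directory."""
    return int((RAPL_DIR / name).read_text())


def average_power_w(e_start_uj: int, e_end_uj: int, dt_s: float, max_range_uj: int) -> float:
    """Average power between two counter readings, tolerating one counter wrap."""
    delta_uj = e_end_uj - e_start_uj
    if delta_uj < 0:  # the counter wrapped around between the two readings
        delta_uj += max_range_uj
    return delta_uj / 1e6 / dt_s


if __name__ == "__main__" and RAPL_DIR.exists():
    try:
        max_range = read_uj("max_energy_range_uj")
        e0, t0 = read_uj("energy_uj"), time.monotonic()
        time.sleep(1.0)
        e1, t1 = read_uj("energy_uj"), time.monotonic()
        print(f"package power: {average_power_w(e0, e1, t1 - t0, max_range):.2f} W")
    except OSError as err:  # e.g., insufficient permissions
        print(f"could not read RAPL counters: {err}")
```

The wrap-around check matters for long sampling intervals, because the energy counter has a finite range and periodically restarts from zero.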

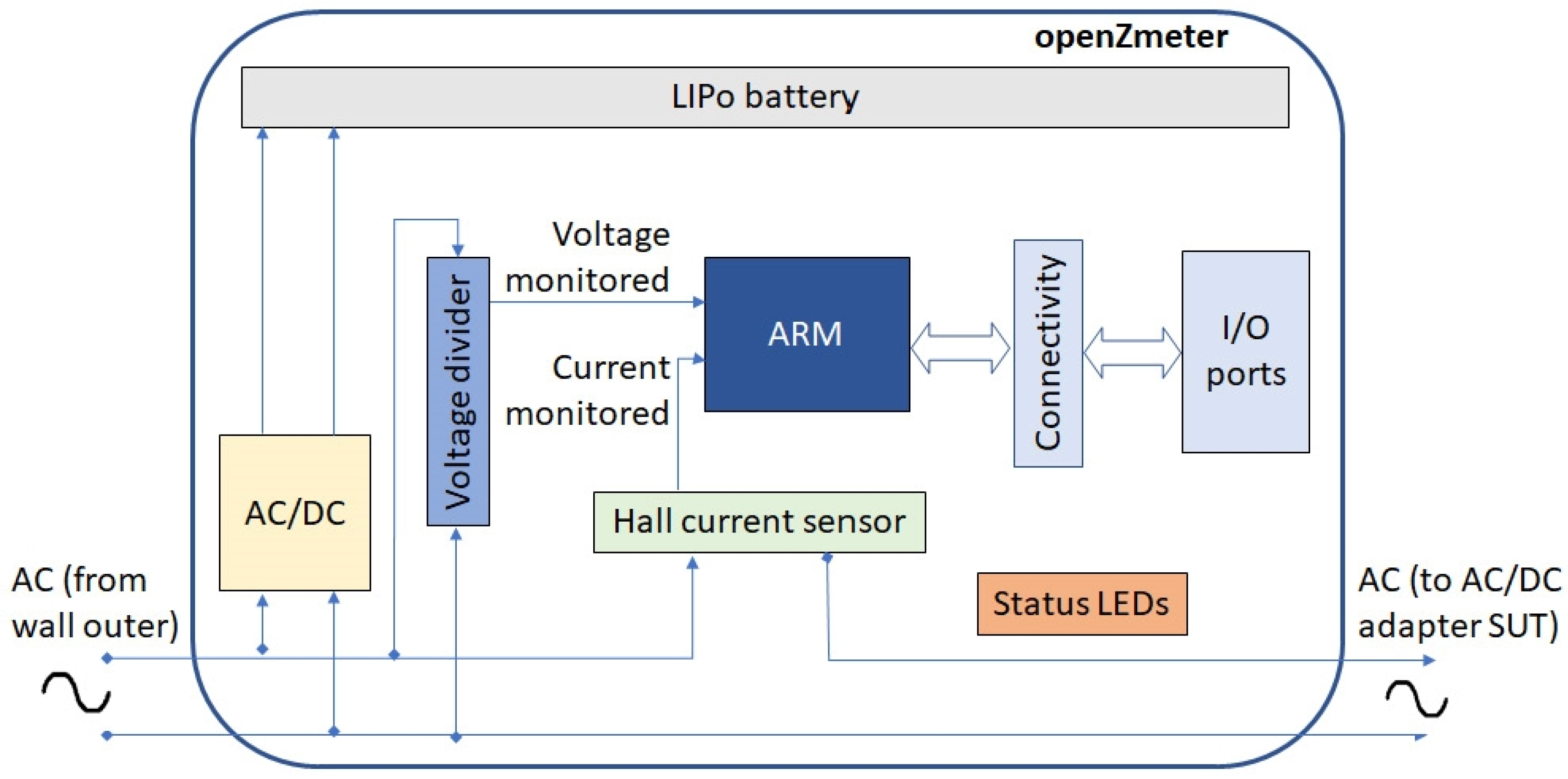

2.2.1. OpenZmeter

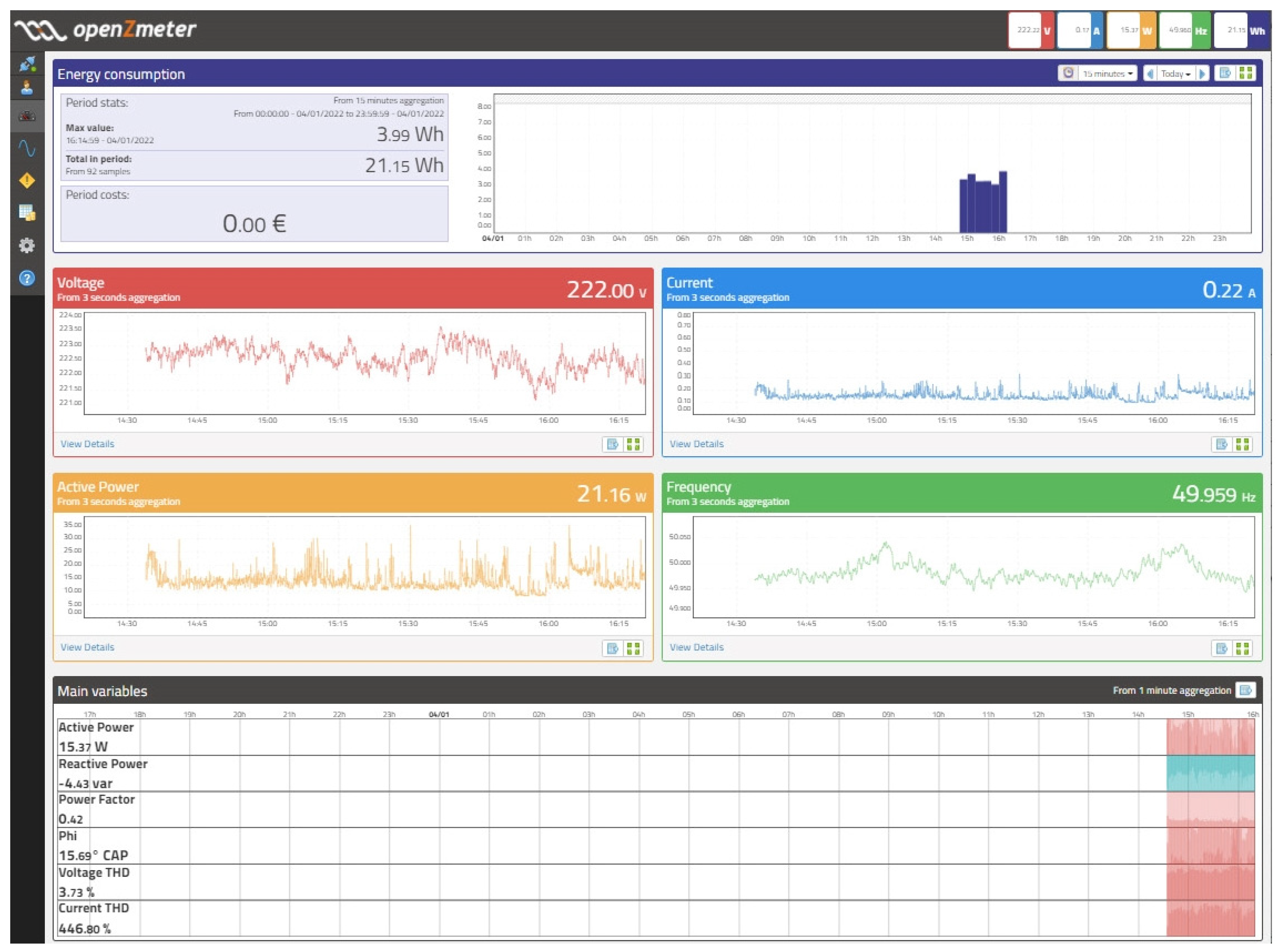

- Electrical measurements: Root Mean Square (RMS) voltage and current values; active, reactive, and apparent power and energy; power factor, harmonics, and frequency in real time through the API and stored in a database.

- Voltage measurement with a precision of 0.1% for RMS values. Frequency measurement with a precision of 10 mHz (in the range 42.5–57.5 Hz for 50 Hz power systems or 55.8–64.2 Hz for 60 Hz power systems). Current measurement up to 35 A RMS (integrated Hall-effect sensor).

- Sampling frequency of 15,625 Hz (64 microseconds between samples).

- Connectivity: USB ports (Wi-Fi dongle, 3G/LTE/4G, etc.), Ethernet port, and Wi-Fi, plus SPI, I2C, UART, and PWM interfaces. Measurements can be visualized, for example, by accessing oZm via Wi-Fi or through the cloud via an MQTT-based synchronization service.

- Free and open system: Open-source software and hardware.
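The electrical quantities listed above can be derived from sampled voltage and current waveforms. The following is a minimal sketch, assuming plain Python lists of simultaneous samples taken at the 15,625 Hz rate quoted above; it is not the openZmeter implementation, and the synthetic test signal (230 V RMS, 1 A RMS, 60° lag, 50 Hz) is purely hypothetical. The quantity labeled `q_var` is computed as the square root of S² − P², which equals the reactive power only for sinusoidal signals.

```python
import math

FS_HZ = 15_625  # sampling frequency quoted in the list above


def electrical_summary(voltage, current):
    """RMS values, active/apparent/non-active power, and power factor from samples."""
    n = len(voltage)
    v_rms = math.sqrt(sum(v * v for v in voltage) / n)
    i_rms = math.sqrt(sum(i * i for i in current) / n)
    p_w = sum(v * i for v, i in zip(voltage, current)) / n  # active power (W)
    s_va = v_rms * i_rms                                    # apparent power (VA)
    q_var = math.sqrt(max(s_va**2 - p_w**2, 0.0))           # non-active power (var)
    pf = p_w / s_va if s_va > 0 else 0.0
    return {"v_rms": v_rms, "i_rms": i_rms, "p_w": p_w,
            "s_va": s_va, "q_var": q_var, "pf": pf}


def synthetic_waveforms(v_rms=230.0, i_rms=1.0, phase_rad=math.pi / 3,
                        f_hz=50.0, n=625):
    """Hypothetical sinusoidal test signals; 625 samples span two 50 Hz cycles."""
    w = 2.0 * math.pi * f_hz
    t = [k / FS_HZ for k in range(n)]
    v = [v_rms * math.sqrt(2) * math.sin(w * tk) for tk in t]
    i = [i_rms * math.sqrt(2) * math.sin(w * tk - phase_rad) for tk in t]
    return v, i


if __name__ == "__main__":
    v, i = synthetic_waveforms()
    for key, value in electrical_summary(v, i).items():
        print(f"{key}: {value:.3f}")
```

Averaging over a whole number of mains cycles, as done here, avoids the bias that a partial cycle would introduce into the RMS and power values.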

- The active energy consumption (kWh) for a fixed or variable time span (see top of Figure 4). Data can be shown in different time periods using aggregations based on nominal values of 3 s, 1 min, 10 min, or 1 h.

- Plots of RMS voltage, RMS current, frequency, and active power for a 3 s aggregation interval.

2.2.2. Intel Power Gadget

- Package (PKG). This domain includes the entire socket, i.e., all cores and also the non-core components (L3 last-level cache, memory controller, and integrated graphics).

- Power Plane 0 (PP0). This domain includes all processor cores on the socket.

- Power Plane 1 (PP1). This domain includes the graphics processor integrated on the socket (if it has one, as for example in desktop models).

- DRAM. Domain of the random-access memory (RAM) attached to the integrated memory controller.

- PSys. Domain available on some Intel architectures, to monitor and control the thermal and power specifications of the entire system on the chip (SoC), instead of just CPU or GPU. It includes the power consumption of the package domain, System Agent, PCH, eDRAM, and a few more domains on a single-socket SoC.

- (1) It largely meets the needs for performing the measurements shown in Section 4,

- (2) It has been developed by the manufacturer of the processors under study,

- (3) It is freeware, and

- (4) It collects data from the RAPL interface.

2.3. Platforms and Processors under Test

2.4. Methodology

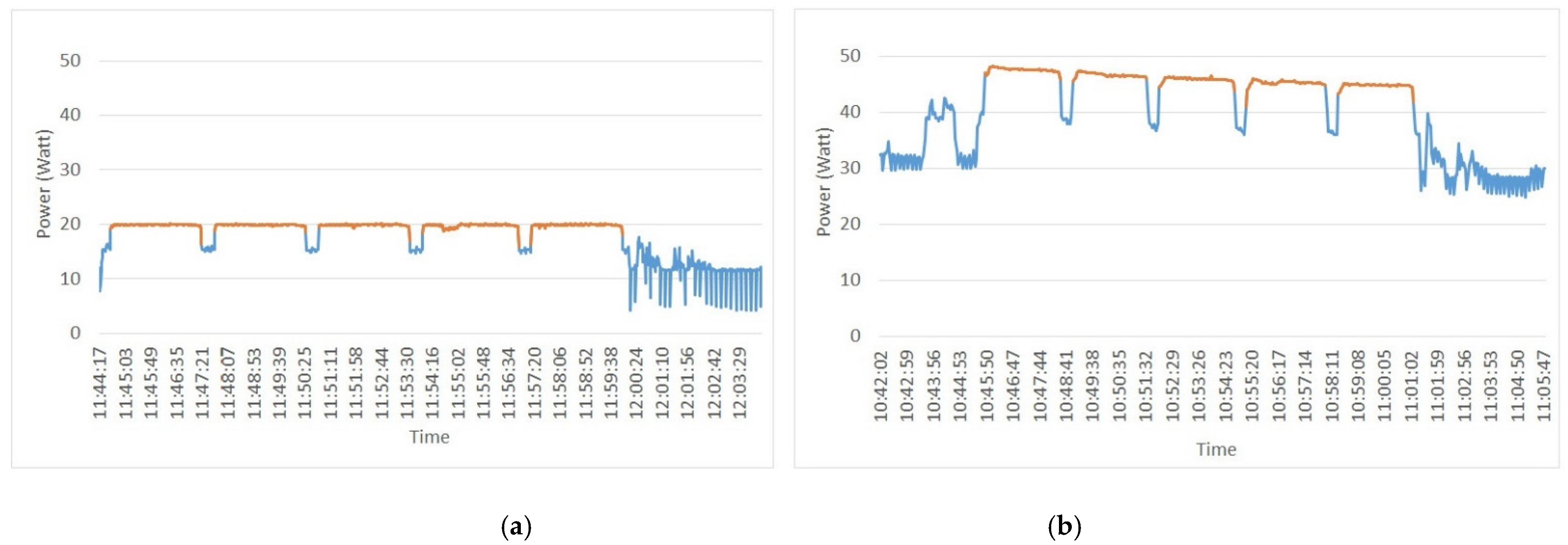

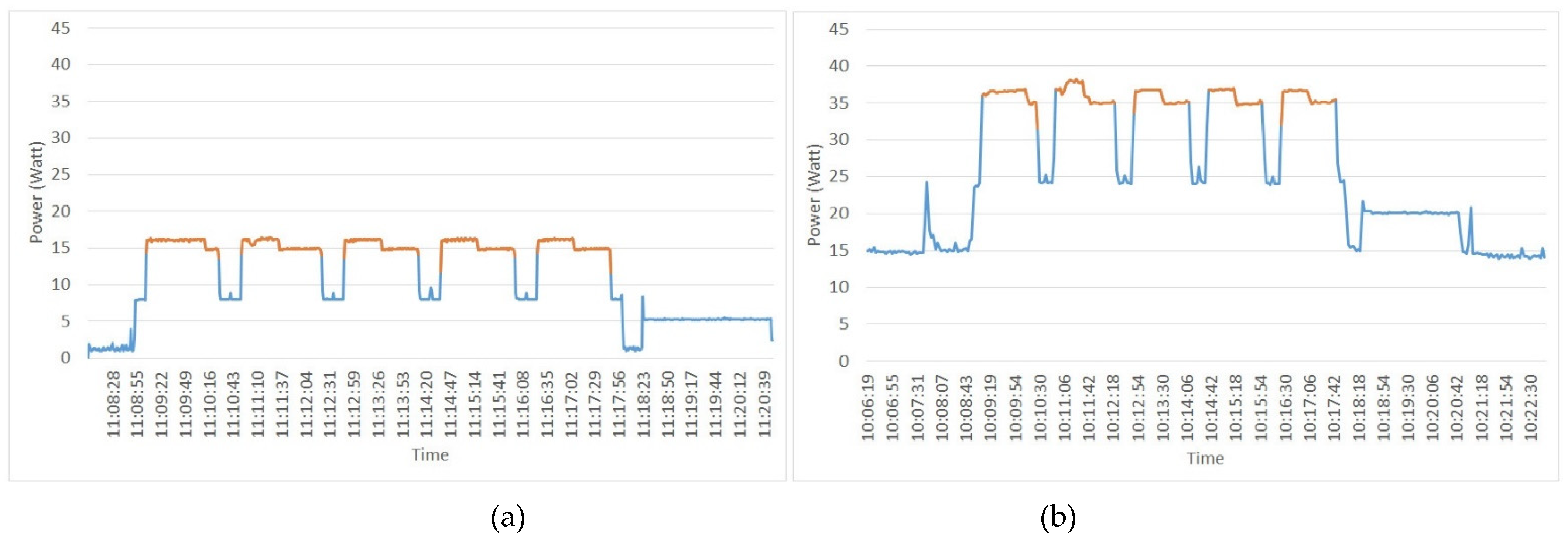

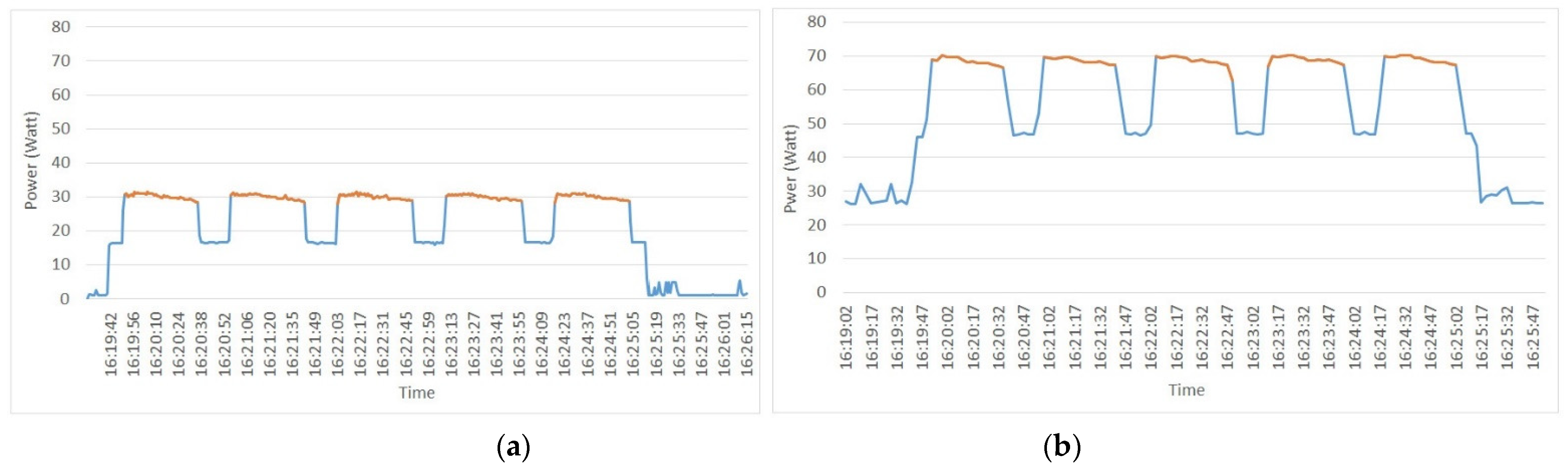

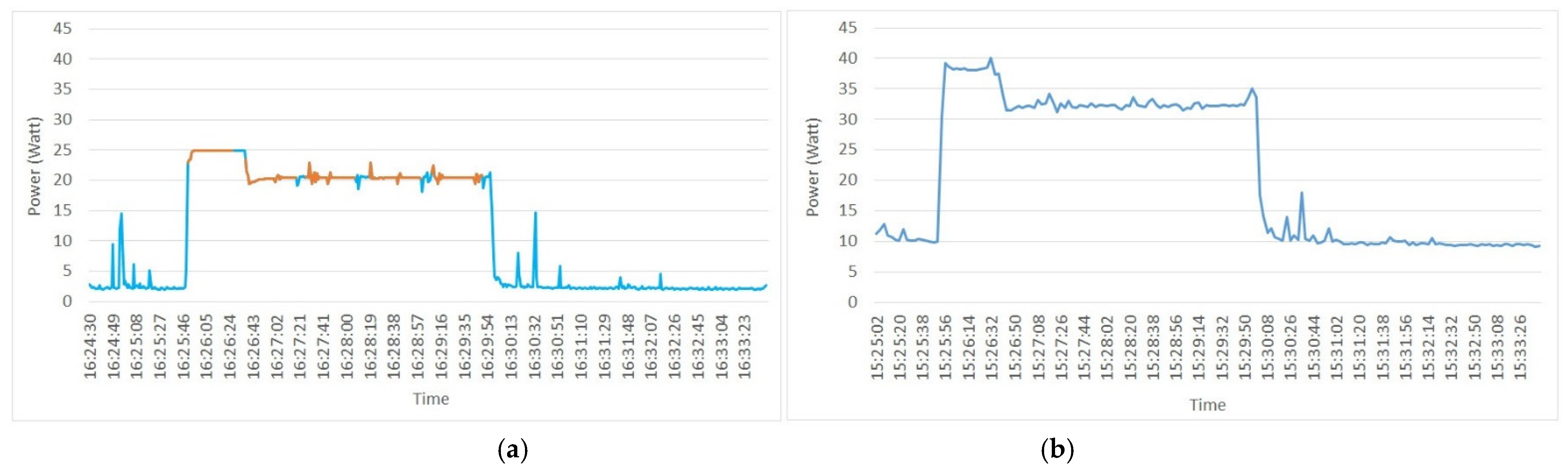

3. Experimental Results

- The processor domain (UC + ALU + FPU + GT + other circuits on the chip)

- The IA domain (UC + ALU + FPU)

- The GT domain, and

- The DRAM domain.

4. Discussion

5. Conclusions and Future Work

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- European Commission, 2030 Climate & Energy Framework. Available online: https://ec.europa.eu/clima/eu-action/climate-strategies-targets/2030-climate-energy-framework_en (accessed on 30 January 2022).

- Roco, M. Nanoscale Science and Engineering at NSF; National Science Foundation: Arlington, VA, USA, 2015. Available online: http://www.nseresearch.org/2015/presentations/NNI_15-1209_Grantees_MRoco_20%20min%20web.pdf (accessed on 26 August 2022).

- Semiconductor Industry Association and the Semiconductor Research Corporation, Rebooting the IT Revolution: A Call to Action. 2015. Available online: https://www.semiconductors.org/wp-content/uploads/2018/06/RITR-WEB-version-FINAL.pdf (accessed on 13 January 2022).

- Zhirnov, V.; Cavin, R.; Gammaitoni, L. Minimum Energy of Computing, Fundamental Considerations. In ICT-Energy-Concepts Towards Zero-Power Information and Communication Technology; IntechOpen: London, UK, 2014.

- Burgess, A.; Brown, T. By 2040, There May Not Be Enough Power for All Our Computers. HENNIK RESEARCH, 17 August 2016. 2021. Available online: https://www.themanufacturer.com/articles/by-2040-there-may-not-be-enough-power-for-all-our-computers/ (accessed on 31 January 2022).

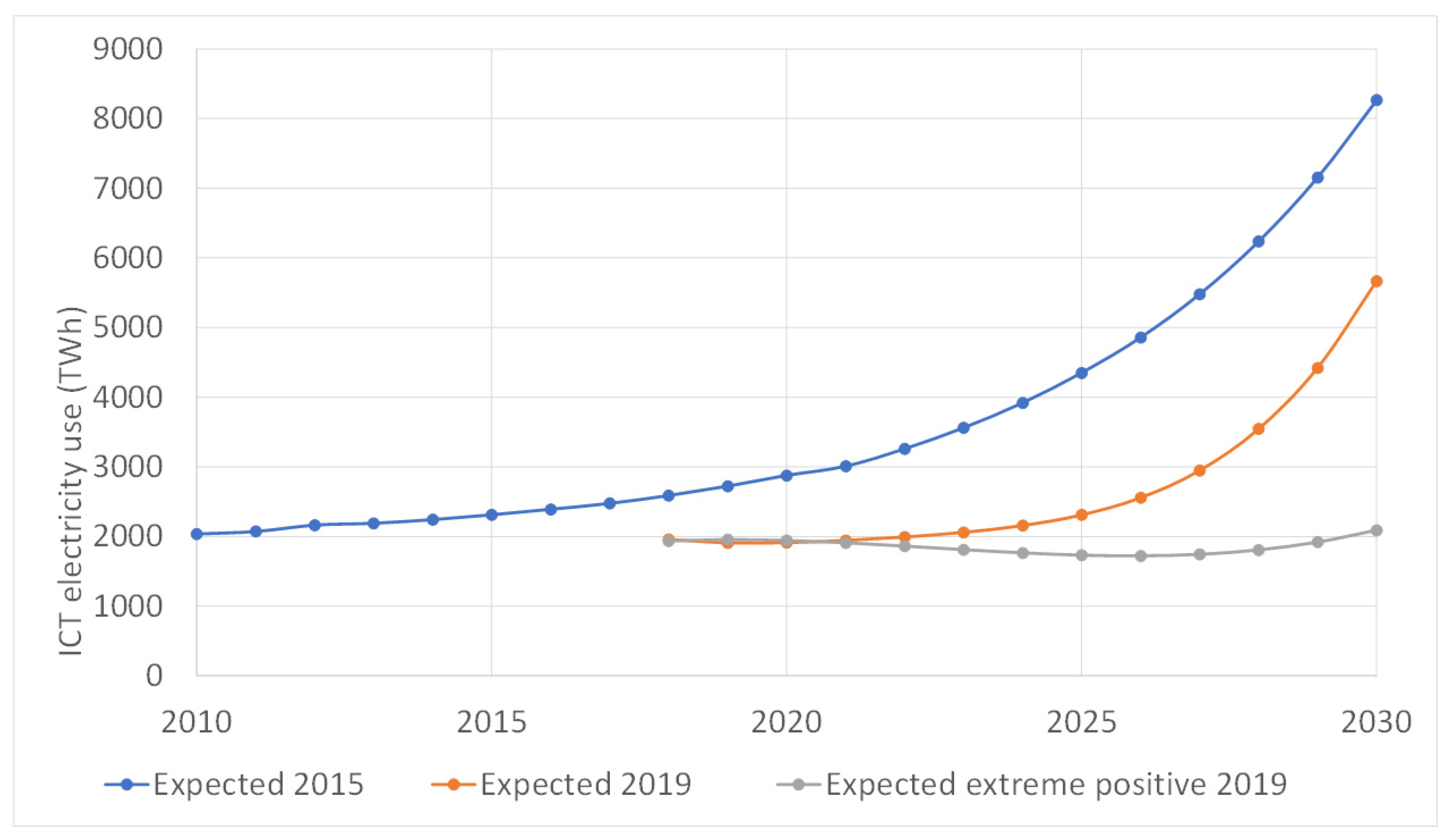

- Andrae, A.S.; Edler, T. On global electricity usage of communication technology: Trends to 2030. Challenges 2015, 6, 117–157.

- Freitag, C.; Berners-Lee, M.; Widdicks, K.; Knowles, B.; Blair, G.; Friday, A. The climate impact of ICT: A review of estimates, trends and regulations. arXiv 2022, arXiv:2102.02622.

- Malmodin, J.; Lundén, D. The energy and carbon footprint of the global ICT and E&M sectors 2010–2015. Sustainability 2018, 10, 3027.

- Federal Ministry for Economic Affairs. Development of ICT-Related Electricity Demand in Germany (Report in German). 2015. Available online: https://www.bmwk.de/Redaktion/EN/Pressemitteilungen/2015/20151210-gabriel-studie-strombedarf-ikt.html (accessed on 31 January 2022).

- Federal Ministry for Economic Affairs and Climate Action. Information and Communication Technologies Consume 15% Less Electricity Due to Improved Energy Efficiency. 2015. Available online: https://www.bmwi.de/Redaktion/EN/Pressemitteilungen/2015/20151210-gabriel-studie-strombedarf-ikt.html (accessed on 31 January 2022).

- Shehabi, A.; Smith, S.J.; Sartor, D.A.; Brown, R.E.; Herrlin, M.; Koomey, J.G.; Masanet, E.R.; Horner, N.; Azevedo, I.L.; Lintner, W. United States Data Center Energy Usage Report. Berkeley Lab. 2016. Available online: https://eta.lbl.gov/publications/united-states-data-center-energy (accessed on 31 January 2022).

- Urban, B.; Shmakova, V.; Lim, B.; Roth, K. Energy Consumption of Consumer Electronics in U.S. Report to the CEA; Fraunhofer USA Center for Sustainable Energy Systems: Boston, MA, USA, 2014; Available online: https://www.ourenergypolicy.org/wp-content/uploads/2014/06/electronics.pdf (accessed on 20 August 2022).

- Andrae, A.S. Comparison of several simplistic high-level approaches for estimating the global energy and electricity use of ICT networks and data centers. Int. J. Green Technol. 2019, 5, 51.

- Manganelli, M.; Soldati, A.; Martirano, L.; Ramakrishna, S. Strategies for Improving the Sustainability of Data Centers via Energy Mix, Energy Conservation, and Circular Energy. Sustainability 2021, 13, 6114.

- Hamdi, N.; Walid, C. A survey on energy aware VM consolidation strategies. Sustain. Comput. Inform. Syst. 2019, 23, 80–87.

- Bordage, F. The Environmental Footprint of the Digital World, GreenIT 20. 2020. Available online: https://www.greenit.fr/wp-content/uploads/2019/11/GREENIT_EENM_etude_EN_accessible.pdf (accessed on 31 January 2022).

- Google Data Centers. Hamina, Finland. A White Surprise. Available online: https://www.google.com/about/datacenters/locations/hamina/ (accessed on 31 January 2022).

- Landré, D.; Nicod, J.-M.; Christophe, V. Optimal standalone data centre renewable power supply using an offline optimization approach. Sustain. Comput. Inform. Syst. 2022, 34, 100627.

- Kumar, K.; Lu, Y.H. Cloud computing for mobile users: Can offloading computation save energy? Computer 2010, 43, 51–56.

- Liu, Q.; Luk, W. Heterogeneous systems for energy efficient scientific computing. In Proceedings of the International Symposium on Applied Reconfigurable Computing, Hong Kong, China, 19–23 March 2012; Springer: Berlin/Heidelberg, Germany, 2012; pp. 64–75.

- Islam, A.; Debnath, A.; Ghose, M.; Chakraborty, S. A survey on task offloading in multi-access edge computing. J. Syst. Archit. 2021, 118, 102225.

- Zhao, M.; Yu, J.J.; Li, W.T.; Liu, D.; Yao, S.; Feng, W.; She, C.; Quek, T.Q. Energy-aware task offloading and resource allocation for time-sensitive services in mobile edge computing systems. IEEE Trans. Veh. Technol. 2021, 70, 10925–10940.

- Maray, M.; Shuja, J. Computation offloading in mobile cloud computing and mobile edge computing: Survey, taxonomy, and open issues. Mob. Inf. Syst. 2022, 2022, 1121822.

- Asadi, A.N.; Azgomi, M.A.; Entezari-Maleki, R. Analytical evaluation of resource allocation algorithms and process migration methods in virtualized systems. Sustain. Comput. Inform. Syst. 2020, 25, 100370.

- ThermoFisher Scientific. StepOne Real-Time PCR System, Laptop. Available online: https://www.thermofisher.com/order/catalog/product/4376373 (accessed on 31 January 2022).

- BioTeke. Ultra-Fast Portable PCR Machine. Available online: https://www.bioteke.cn/4-channel-rapid-POC-Ultra-fast-portable-PCR-machine-pd04043964.html (accessed on 31 January 2022).

- Qureshi, B.; Alwehaibi, S.; Koubaa, A. On Power Consumption Profiles for Data Intensive Workloads in Virtualized Hadoop Clusters. In Proceedings of the 2017 IEEE Conference on Computer Communications Workshops (INFOCOM WKSHPS), Atlanta, GA, USA, 1–4 May 2017; pp. 42–47.

- Mollova, S.; Simionov, R.; Seymenliyski, K. A study of the energy efficiency of a computer cluster. In Proceedings of the Seventh International Conference on Telecommunications and Remote Sensing, Barcelona, Spain, 8–9 October 2018; pp. 51–54.

- Qureshi, B.; Koubaa, A. On Energy Efficiency and Performance Evaluation of Single Board Computer Based Clusters: A Hadoop Case Study. Electronics 2019, 8, 182.

- Warade, M.; Schneider, J.-G.; Lee, K. Measuring the Energy and Performance of Scientific Workflows on Low-Power Clusters. Electronics 2022, 11, 1801.

- Xu, X.; Dou, W.; Zhang, X.; Chen, J. EnReal: An energy-aware resource allocation method for scientific workflow executions in cloud environment. IEEE Trans. Cloud Comput. 2015, 4, 166–179.

- Khaleel, M.; Zhu, M.M. Energy-aware job management approaches for workflow in cloud. In Proceedings of the 2015 IEEE International Conference on Cluster Computing, Chicago, IL, USA, 8–11 September 2015; pp. 506–507.

- Mishra, S.K.; Puthal, D.; Sahoo, B.; Jayaraman, P.P.; Jun, S.; Zomaya, A.Y.; Ranjan, R. Energy-efficient VM-placement in cloud data center. Sustain. Comput. Inform. Syst. 2018, 20, 48–55.

- Escobar, J.J.; Ortega, J.; Díaz, A.F.; González, J.; Damas, M. Time-energy Analysis of Multi-level Parallelism in Heterogeneous Clusters: The Case of EEG Classification in BCI Tasks. J. Supercomput. 2019, 75, 3397–3425.

- Qureshi, B. Profile-based power-aware workflow scheduling framework for energy-efficient data centers. Future Gener. Comput. Syst. 2019, 94, 453–467.

- Ji, K.; Zhang, F.; Chi, C.; Song, P.; Zhou, B.; Marahatta, A.; Liu, Z. A Joint Energy Efficiency Optimization Scheme Based on Marginal Cost and Workload Prediction in Data Centers. Sustain. Comput. Inform. Syst. 2021, 32, 100596.

- Feng, W.C.; Cameron, K. The green500 list: Encouraging sustainable supercomputing. Computer 2007, 40, 50–55.

- Top500, The Linpack Benchmark. Available online: https://www.top500.org/project/linpack/ (accessed on 31 January 2022).

- Linpack Xtreme. TechPowerUp, 31 December 2020 Version. Available online: https://www.techpowerup.com/download/linpack-xtreme/ (accessed on 31 January 2022).

- Viciana, E.; Alcayde, A.; Montoya, F.G.; Baños, R.; Arrabal-Campos, F.M.; Zapata-Sierra, A.; Manzano-Agugliaro, F. OpenZmeter: An efficient low-cost energy smart meter and power quality analyzer. Sustainability 2018, 10, 4038.

- Hernandez, W.; Calderón-Córdova, C.; Brito, E.; Campoverde, E.; González-Posada, V.; Zato, J.G. A method of verifying the statistical performance of electronic circuits designed to analyze the power quality. Measurement 2016, 93, 21–28.

- What Is openZmeter? Available online: https://openzmeter.com/ (accessed on 31 January 2022).

- Weaver, V.M.; Johnson, M.; Kasichayanula, K.; Ralph, J.; Luszczek, P.; Terpstra, D.; Moore, S. Measuring energy and power with PAPI. In Proceedings of the 2012 41st International Conference on Parallel Processing Workshops, Pittsburgh, PA, USA, 10–13 September 2012.

- Intel® 64 and IA-32 Architectures Software Developer’s Manual Volume 3. pp. 14–31 to 14–39. Available online: https://www.intel.com/content/www/us/en/architecture-and-technology/64-ia-32-architectures-software-developer-system-programming-manual-325384.html (accessed on 31 January 2022).

- Khan, K.N.; Hirki, M.; Niemi, T.; Nurminen, J.K.; Ou, Z. RAPL in action: Experiences in using RAPL for power measurements. ACM Trans. Modeling Perform. Eval. Comput. Syst. 2018, 3, 9.

- Intel Processors for All That You Do. Available online: https://www.intel.com/content/www/us/en/products/details/processors.html (accessed on 31 January 2022).

- Meuer, H.; Strohmaier, E.; Dongarra, J.; Simon, H. The Top500 Project. 2010. Available online: https://top500.org/files/TOP500_MS-Industriekunden_16_10_2008.pdf (accessed on 31 January 2022).

- TOP500. The List. Available online: https://www.top500.org/lists/green500/ (accessed on 31 January 2022).

- EEHPC. Energy Efficient High Performance Computing Power Measurement Methodology (version 2.0 RC 1.0). Energy Efficient High Performance Computing Working Group (EEHPC WG). Available online: https://www.top500.org/static/media/uploads/methodology-2.0rc1.pdf (accessed on 26 August 2022).

- IRDS. International Roadmap for Devices and Systems 2022 Edition Executive Summary. 2022, p. 27. Available online: https://irds.ieee.org/images/files/pdf/2022/2022IRDS_ES.pdf (accessed on 26 August 2022).

- Green500 List June 2022. Available online: https://www.top500.org/lists/green500/list/2022/06/ (accessed on 26 August 2022).

| Parameters to be Set |
|---|
| Number of equations to solve |
| Leading array dimension |
| Number of times to run Linpack (which can be performed from 1 to 5 times, successively) |
| Data space alignment value (in Kbytes) |
| Maximum memory to be used |

| Generated Results |
|---|
| CPU frequency (GHz) |
| Number of CPUs |
| Number of cores |
| Number of threads |
| Size |
| LDA (leading array dimension) |
| Data alignment value (Kbytes) |
| Time (s) |
| Residual |
| Residual (norm) |
| Raverage (GFlops) |
| Rmaximal (GFlops), Maximal Linpack performance achieved |

| Time | Active Power | Reactive Power | Power Factor | Phi | Voltage THD | Current THD |

|---|---|---|---|---|---|---|

| 6:53 | 36.768 | –7.407 | 0.448 | 23.068 | 3.61 | 296.687 |

| 6:54 | 27.966 | –5.26 | 0.501 | 10.54 | 3.53 | 344.986 |

| 6:55 | 21.681 | –4.636 | 0.478 | 12.179 | 3.552 | 364.808 |

| 6:56 | 20.624 | –4.542 | 0.474 | 12.676 | 3.579 | 368.612 |

| 6:57 | 19.029 | –4.366 | 0.466 | 13.081 | 3.533 | 377.501 |

| 6:58 | 19.432 | –4.36 | 0.465 | 12.831 | 3.595 | 383.131 |

| 6:59 | 19.214 | –4.136 | 0.462 | 12.33 | 3.676 | 388.315 |

| 7:00 | 18.982 | –4.301 | 0.464 | 12.857 | 3.647 | 384.47 |

| 7:01 | 19.07 | –4.414 | 0.464 | 13.232 | 3.638 | 383.253 |

| 7:02 | 18.992 | –4.368 | 0.466 | 13.126 | 3.673 | 381.309 |

| 7:03 | 19.835 | –4.492 | 0.469 | 12.929 | 3.615 | 376.45 |

| 7:04 | 20.227 | –4.477 | 0.466 | 12.666 | 3.679 | 383.472 |

| 7:05 | 16.921 | –4.239 | 0.449 | 14.662 | 3.642 | 399.679 |

| 7:06 | 16.903 | –4.204 | 0.454 | 14.285 | 3.682 | 394.078 |

| 7:07 | 16.252 | –4.207 | 0.449 | 14.95 | 3.686 | 394.636 |

| 7:08 | 16.644 | –4.348 | 0.454 | 15.116 | 3.609 | 384.211 |
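The active energy over an interval follows from aggregated active power readings such as those in the table above. The sketch below assumes that the Active Power column is expressed in watts and that each row is a 1 min aggregate (both are assumptions, since the table states no units), and it uses a simple rectangle rule; the function name is illustrative.

```python
# Active power readings copied from the table above (assumed to be in watts).
ACTIVE_POWER_W = [
    36.768, 27.966, 21.681, 20.624, 19.029, 19.432, 19.214, 18.982,
    19.070, 18.992, 19.835, 20.227, 16.921, 16.903, 16.252, 16.644,
]


def energy_wh(power_w, interval_s):
    """Rectangle-rule energy: each reading is held constant for one interval."""
    return sum(power_w) * interval_s / 3600.0


if __name__ == "__main__":
    wh = energy_wh(ACTIVE_POWER_W, interval_s=60.0)
    print(f"{wh:.3f} Wh = {wh / 1000.0:.6f} kWh over {len(ACTIVE_POWER_W)} min")
```

With shorter aggregation intervals (for example, 3 s) the same function applies unchanged; only `interval_s` differs.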

| System Time | RDTSC | Elapsed Time (sec) | CPU Utilization (%) | CPU Frequency_0 (MHz) | Processor Power_0 (W) | Cumulative Processor Energy_0 (J) | Cumulative Processor Energy_0 (mWh) |
|---|---|---|---|---|---|---|---|
| 11:44:17:227 | 3.04 × 10^12 | 0.988 | 28 | 2900 | 11.976 | 11.829 | 3.286 |
| 11:44:18:247 | 3.04 × 10^12 | 2.008 | 16 | 1200 | 7.69 | 19.674 | 5.465 |
| 11:44:19:254 | 3.04 × 10^12 | 3.015 | 21 | 2900 | 9.021 | 28.759 | 7.989 |
| 11:44:20:247 | 3.05 × 10^12 | 4.009 | 26 | 2900 | 11.621 | 40.307 | 11.196 |
| 11:44:21:246 | 3.05 × 10^12 | 5.007 | 41 | 2900 | 13.08 | 53.371 | 14.825 |
| Etc. | | | | | | | |

| IA Power_0 (W) | Cumulative IA Energy_0 (J) | Cumulative IA Energy_0 (mWh) | Package Temperature_0 (C) | Package Hot_0 | GT Power_0 (W) | Cumulative GT Energy_0 (J) | Cumulative GT Energy_0 (mWh) |
|---|---|---|---|---|---|---|---|
| 9.514 | 9.398 | 2.61 | 69 | 0 | 0.024 | 0.024 | 0.007 |
| 5.282 | 14.785 | 4.107 | 59 | 0 | 0.04 | 0.064 | 0.018 |
| 6.646 | 21.478 | 5.966 | 66 | 0 | 0.017 | 0.081 | 0.023 |
| 9.226 | 30.646 | 8.513 | 71 | 0 | 0.012 | 0.093 | 0.026 |
| 10.528 | 41.161 | 11.434 | 69 | 0 | 0.023 | 0.116 | 0.032 |
| Etc. | | | | | | | |

| Package PL1_0 (W) | Package PL2_0 (W) | Package PL4_0 (W) | Platform PsysPL1_0 (W) | Platform PsysPL2_0 (W) | GT Frequency (MHz) | GT Utilization (%) |
|---|---|---|---|---|---|---|
| 35 | 44 | 112 | 0 | 0 | 99,999,999 | 0 |
| 35 | 44 | 112 | 0 | 0 | 99,999,999 | 0 |
| 35 | 44 | 112 | 0 | 0 | 99,999,999 | 0 |
| 35 | 44 | 112 | 0 | 0 | 99,999,999 | 0 |
| 35 | 44 | 112 | 0 | 0 | 99,999,999 | 0 |
| Etc. | | | | | | |
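The power and energy columns of the log excerpt above are related by simple arithmetic: the power over an interval is the change in cumulative energy divided by the change in elapsed time, and 1 mWh equals 3.6 J. The sketch below checks this on the (elapsed time, cumulative processor energy) pairs copied from the excerpt; the helper names are illustrative and not part of Intel Power Gadget.

```python
# (elapsed time in s, cumulative processor energy in J) taken from the log excerpt.
SAMPLES = [
    (0.988, 11.829),
    (2.008, 19.674),
    (3.015, 28.759),
    (4.009, 40.307),
    (5.007, 53.371),
]


def interval_power_w(samples):
    """Average power over each interval between consecutive log rows."""
    return [
        (e1 - e0) / (t1 - t0)
        for (t0, e0), (t1, e1) in zip(samples, samples[1:])
    ]


def joules_to_mwh(energy_j):
    """Convert joules to milliwatt-hours (1 mWh = 3.6 J)."""
    return energy_j / 3.6


if __name__ == "__main__":
    for p in interval_power_w(SAMPLES):
        print(f"{p:.3f} W")
    print(f"{joules_to_mwh(SAMPLES[-1][1]):.3f} mWh")
```

The values recovered this way agree with the logged Processor Power_0 column to within about 0.01 W, the small differences being attributable to rounding in the log.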

| Parameter | Observation |

|---|---|

| System Time | Current time (hh:mm:ss). |

| RDTSC | Time Stamp Counter (TSC), number of CPU cycles since its reset. |

| Elapsed Time | In seconds. |

| CPU Utilization | Central processor unit usage percentage (%). |

| CPU Frequency_0 | CPU frequency (MHz). |

| Processor Power_0 | Power consumed by UC + ALU + FPU + integrated graphics + others (W). |

| IA Power_0 | Power consumed by UC + ALU + FPU (W). |

| Package Temperature_0 | Chip temperature (°C). |

| DRAM Power_0 | Power consumed by DRAM attached to the integrated memory controller (W). |

| GT Power_0 | Power consumed by the integrated graphic processor (W). |

| Package PL1_0 | Power limit 1: threshold for the average package power that will not be exceeded (W). |

| Package PL2_0 | Power limit 2: threshold for the average package power that will not be exceeded (W). |

| Package PL4_0 | Power limit 4: threshold for the average package power that will not be exceeded (W). |

| Platform PsysPL1_0 | Power limit 1: threshold for the average platform power that will not be exceeded. The platform is the entire socket (W). |

| Platform PsysPL2_0 | Power limit 2: threshold for the average platform power that will not be exceeded (W). |

| GT Frequency | Frequency of the integrated graphic processor unit (GPU) (MHz). |

| GT Utilization (%) | Usage percentage of the integrated graphic processor unit (GPU) (%). |

| | SUT1 | SUT2 | SUT3 | SUT4 | SUT5 |

|---|---|---|---|---|---|

| Reference: | Sony Vaio SVZ1311C5E | ASUS Notebook X550J | Toshiba Portege Z30-C | HP Pavilion All-in-One K1987LF | ASUS Expertbook B9400CEA |

| Processor: | Core i5-3210M 2.5 GHz | Core i5-4200H 2.8 GHz | Core i7-6500U 2.5 GHz | Core i5-10400T 2 GHz | Core i7-1165G7 2.8 GHz |

| Cores: | 2 cores, 4 threads | 2 cores, 4 threads | 2 cores, 4 threads | 6 cores, 12 threads | 4 cores, 8 threads |

| Memory capacity: | 8 GB | 8 GB | 16 GB | 16 GB | 16 GB |

| Hard disk: | SSD 256 GB | HDD 500 GB | SSD 1 TB | SSD 512 GB | SSD 1 TB |

| Mainboard: | SONY VAIO | ASUS X550J | TOSHIBA | HP 86ED | ASUS Tek |

| Graphics card: | Intel HD Graphics 4000 | NVIDIA GeForce GTX 850M | Intel HD Graphics 520 | UHD Graphics 630 | Intel Iris Xe |

| AC/DC adapter: | Out: 19.5V-33mA. In: 100-240V-1.5A | Out: 19V-6.32 A In: 100-240V-6.32 A | Out: 19.5V-2.37A. In: 100-240V-1.2A | Out: 19.5 V-7.7 A In: 100-240 V-2.5 A | Out: 5-15V 3A. In: 100-240V-1.5A |

| Operating system: | Windows 10 Home | Windows 10 Pro | Windows 10 Home | Windows 10 Home | Windows 10 Pro |

| Other Processor Features: | |||||

| Generation: | 3rd | 4th | 6th | 10th | 11th |

| Lithography: | 22 nm | 22 nm | 14 nm | 14 nm | 10 nm |

| Frequency: | 2.5–3.10 GHz | 2.8–3.4 GHz | 2.5–3.10 GHz | 2–3.6 GHz | 4.7 GHz |

| Cache size: | 3 MB | 3 MB | 4 MB | 12 MB | 12 MB |

| Max. memory size: | 32 GB | 32 GB | 32 GB | 128 GB | 64 GB |

| Processor launch date: | Q2, 2012 | Q4, 2013 | Q3, 2015 | Q2, 2020 | Q3, 2020 |

| TDP: | 35 W | 47 W | 15 W | 25 W | 12 W |

| TJunction: | 105 °C | 100 °C | 100 °C | 100 °C | 100 °C |

| Systems under Test → | SUT1 | SUT2 | SUT3 | SUT4 | SUT5 |

|---|---|---|---|---|---|

| Linpack Extreme Results: | |||||

| Number of CPUs | 1 | 1 | 1 | 1 | 1 |

| Number of cores | 2 | 2 | 2 | 6 | 4 |

| Number of threads used | 2 | 2 | 2 | 6 | 4 |

| Number of trials to run | 5 | 5 | 5 | 5 | 5 |

| Number of equations to solve | 20,000 | 20,000 | 20,000 | 20,000 | 20,000 |

| Data alignment value (KB) | 4 | 4 | 4 | 4 | 4 |

| Time of a trial (s) | 164.29 ± 4.42 | 72.9 ± 1.58 | 85.45 ± 3.40 | 48.19 ± 0.80 | 40.01 ± 2.29 |

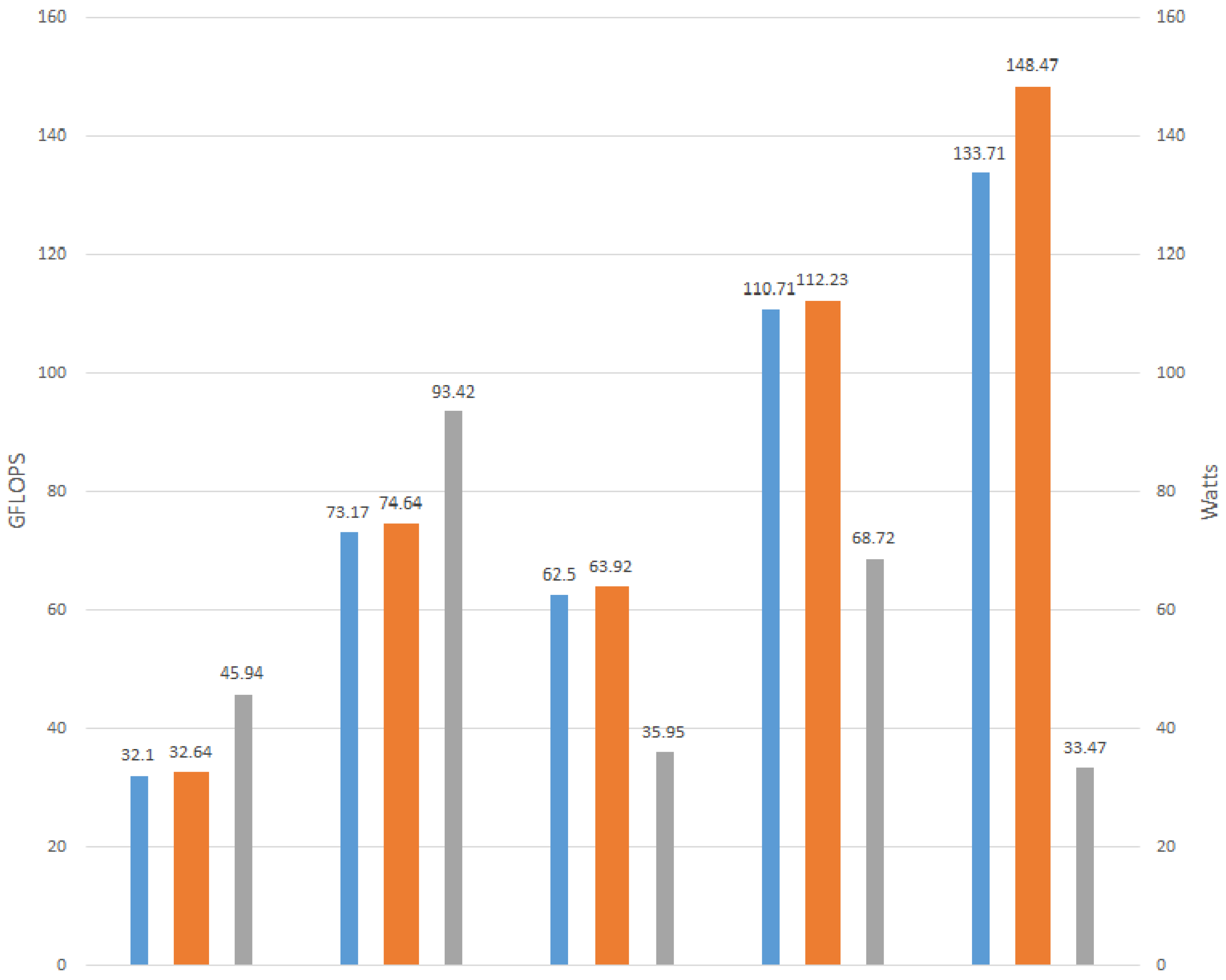

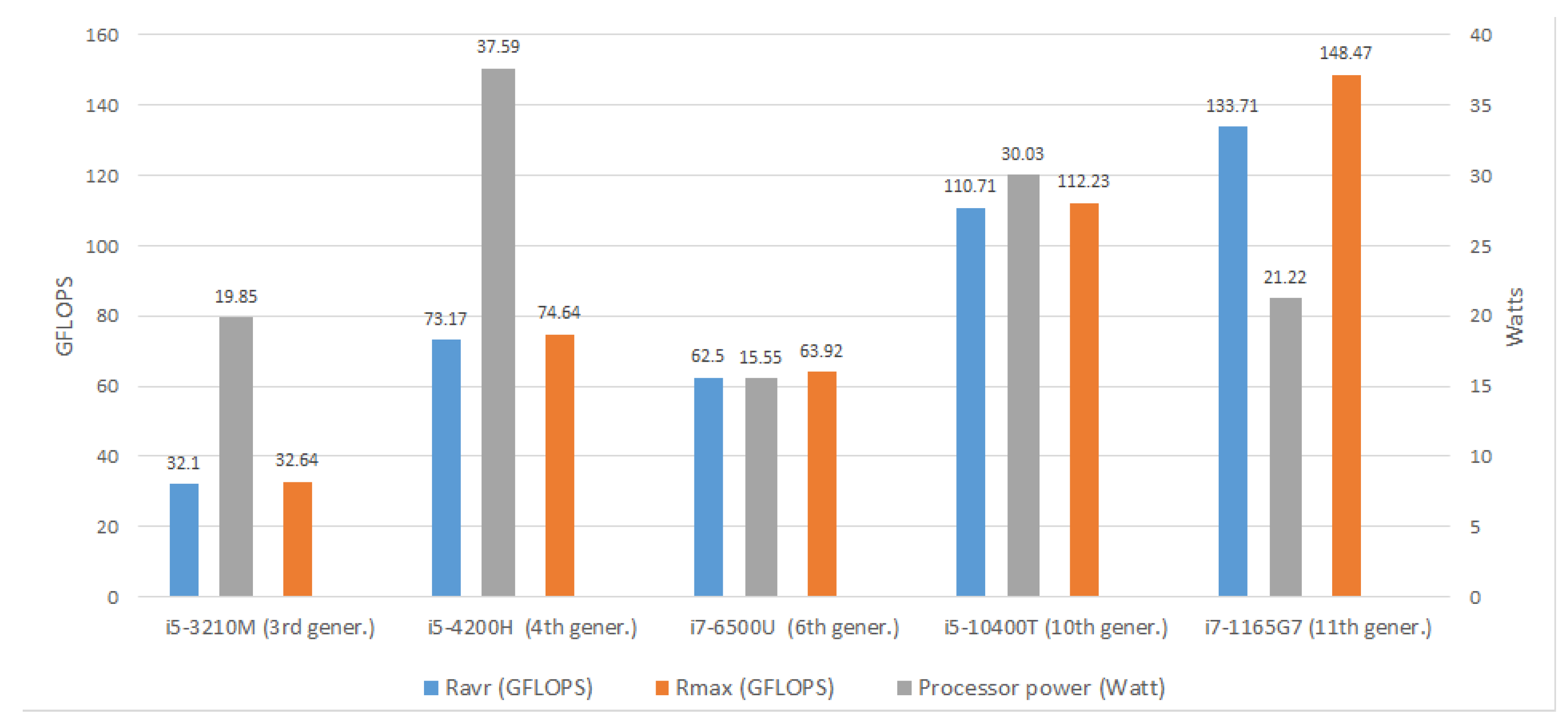

| R average (GFlops) | 32.1 ± 0.83 | 73.17 ± 1.56 | 62.50 ± 2.36 | 110.71 ± 1.80 | 133.71 ± 8.27 |

| R maximal (GFlops) | 32.64 | 74.64 | 63.92 | 112.23 | 148.47 |

| Intel Power Gadget Results: | |||||

| RDTSC (number of cycles) | 1.22 × 10^12 | 1.94 × 10^11 | 2.19 × 10^11 | 9.20 × 10^10 | 1.12 × 10^11 |

| Elapsed Time (s) | 158.01 ± 19.05 | 69.61 ± 2.58 | 84.4 ± 3.3 | 46.17 ± 0.7 | 39.90 ± 2.17 |

| CPU Frequency (GHz) | 2.882 | 3.390 | 2.486 | 3.281 | 4.606 |

| CPU Utilization (%) | 75.15 ± 1.64 | 69.61 ± 2.58 | 57.54 ± 3.08 | 51.12 ± 1.04 | 51.45 ± 0.51 |

| Processor Power (W) | 19.85 ± 0.06 | 37.59 ± 0.25 | 15.55 ± 0.17 | 30.03 ± 0.06 | 21.22 ± 1.94 |

| IA Power (W) | 16.99 ± 0.05 | 27.68 ± 0.35 | 10.31 ± 3.29 | 29.19 ± 0.06 | 16.84 ± 2.02 |

| GT Power (W) | 0.0203 ± 0.0003 | 2.33 ± 0.09 | 0.007 ± 0.002 | 2.33 ± 0.09 | 0.0118 ± 0.0004 |

| DRAM Power (W) | - | 3.353 ± 0.005 | 2.66 ± 0.40 | 2.825 ± 0.007 | 0 |

| Package Temperature (°C) | 82.94 ± 0.49 | 96.13 ± 0.88 | 70.75 ± 6.08 | 64.39 ± 2.45 | 82.05 ± 1.05 |

| oZm Results: | |||||

| Active power (Pavrg) (W) | 45.94 ± 1.12 | 93.42 ± 0.80 | 35.95 ± 1.06 | 68.72 ± 1.54 | 33.42 ± 2.41 |

| Time of trial run (s) | 166.28 ± 4.20 | 72.92 ± 1.58 | 85.45 ± 3.40 | 48.19 ± 0.80 | 39.9 ± 2.17 |

| SUT1 (Sony Vaio) | Measure | Total in Core Phase | Baseline Phases | Attributable to Linpack Execution |
|---|---|---|---|---|
| Intel Power Gadget measures | CPU Utilization (%) | 75.15 ± 1.64 | 29.69 ± 12.42 | 45.46 |
| | Processor Power (Watt) | 19.85 ± 0.06 | 11.90 ± 2.52 | 7.95 |
| | IA Power (Watt) | 16.99 ± 0.05 | 12.52 ± 0.45 | 4.47 |
| | GT Power (Watt) | 0.0203 ± 0.0003 | 0.017 ± 0.002 | 0.0033 |
| | DRAM Power (Watt) | - | - | |
| | Package Temperature (°C) | 82.94 ± 0.49 | 73.41 ± 0.98 | 9.53 |
| openZmeter | Active Power | 45.94 ± 1.12 | 30.37 ± 4.98 | 15.57 |
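The last column of the per-system tables is the difference between the value measured in the core phase and the value measured in the baseline phases. A minimal sketch of that subtraction, using the SUT1 mean values from the table above (the dictionary layout and function name are illustrative assumptions), is:

```python
# (core-phase mean, baseline mean) for SUT1, copied from the table above.
SUT1 = {
    "CPU Utilization (%)": (75.15, 29.69),
    "Processor Power (W)": (19.85, 11.90),
    "IA Power (W)": (16.99, 12.52),
    "Package Temperature (°C)": (82.94, 73.41),
    "openZmeter Active Power (W)": (45.94, 30.37),
}


def attributable(core: float, baseline: float) -> float:
    """Part of a measurement attributed to the Linpack run itself."""
    return core - baseline


if __name__ == "__main__":
    for name, (core, base) in SUT1.items():
        print(f"{name}: {attributable(core, base):.2f}")
```

Subtracting the baseline removes the consumption of the operating system and background activity, so that systems with different idle behavior can be compared on the workload alone.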

| SUT2 (ASUS Notebook) | Measure | Total in Core Phase | Baseline Phases | Attributable to Linpack Execution |
|---|---|---|---|---|
| Intel Power Gadget measures | CPU Utilization (%) | 69.61 ± 2.58 | 15.63 ± 1.61 | 53.98 |
| | Processor Power (Watt) | 37.59 ± 0.25 | 12.19 ± 1.56 | 25.40 |
| | IA Power (Watt) | 27.68 ± 0.35 | 15.05 ± 3.82 | 12.63 |
| | GT Power (Watt) | 2.33 ± 0.09 | 2.7 ± 0.31 | –0.37 |
| | DRAM Power (Watt) | 3.353 ± 0.005 | 1.88 ± 0.10 | 1.473 |
| | Package Temperature (°C) | 96.13 ± 0.88 | 85.37 ± 5.73 | 10.76 |
| openZmeter | Active Power | 93.42 ± 0.80 | 58.53 ± 12.08 | 34.89 |

| SUT3 (Toshiba Portege) | Measure | Total in Core Phase | Baseline Phases | Attributable to Linpack Execution |
|---|---|---|---|---|
| Intel Power Gadget measures | CPU Utilization (%) | 57.54 ± 3.08 | 15.63 ± 1.61 | 53.98 |
| | Processor Power (Watt) | 15.55 ± 0.17 | 1.30 ± 0.38 | 14.25 |
| | IA Power (Watt) | 10.31 ± 3.29 | 5.66 ± 2.23 | 4.65 |
| | GT Power (Watt) | 0.01 ± 0.00 | 0.01 ± 0.00 | 0 |
| | DRAM Power (Watt) | 2.66 ± 0.40 | 1.70 ± 0.10 | 0.96 |
| | Package Temperature (°C) | 70.75 ± 6.08 | 62.59 ± 9.59 | 8.16 |
| openZmeter | Active Power | 35.95 ± 1.06 | 18.37 ± 3.98 | 17.58 |

| SUT4 (HP Pavilion) | Measure | Total in Core Phase | Baseline Phases | Attributable to Linpack Execution |
|---|---|---|---|---|
| Intel Power Gadget measures | CPU Utilization (%) | 51.12 ± 1.04 | 0.85 ± 0.26 | 50.27 |
| | Processor Power (Watt) | 30.03 ± 0.06 | 1.14 ± 0.40 | 28.89 |
| | IA Power (Watt) | 29.19 ± 0.06 | 14.69 ± 3.74 | 14.5 |
| | GT Power (Watt) | 2.33 ± 0.09 | 2.70 ± 0.31 | –0.37 |
| | DRAM Power (Watt) | 2.825 ± 0.007 | 0.89 ± 0.07 | 1.94 |
| | Package Temperature (°C) | 64.39 ± 2.45 | 57.53 ± 5.72 | 6.86 |
| openZmeter | Active Power | 68.72 ± 1.54 | 30.74 ± 6.06 | 37.98 |

| SUT5 (ASUS Expertbook) | Measure | Total in Core Phase | Baseline Phases | Attributable to Linpack Execution |
|---|---|---|---|---|
| Intel Power Gadget measures | CPU Utilization (%) | 51.45 ± 0.51 | 2.54 ± 1.73 | 48.91 |
| | Processor Power (Watt) | 21.22 ± 1.94 | 2.31 ± 0.25 | 18.70 |
| | IA Power (Watt) | 16.84 ± 2.02 | 1.03 ± 0.95 | 15.81 |
| | GT Power (Watt) | 0.0118 ± 0.0004 | 0.025 ± 0.007 | –0.02 |
| | DRAM Power (Watt) | - | - | - |
| | Package Temperature (°C) | 82.05 ± 1.05 | 47.06 ± 4.51 | 34.99 |
| openZmeter | Active Power | 33.42 ± 2.41 | 11.79 ± 3.81 | 21.63 |

| Processor | Processor Power (Watt) | Baseline Phases (Watt) | Attributable to Linpack Execution (Watt) | Rmax (GFLOPS) | Power Efficiency (GFLOPS/Watt) | RSD (Relative Standard Deviation) |

|---|---|---|---|---|---|---|

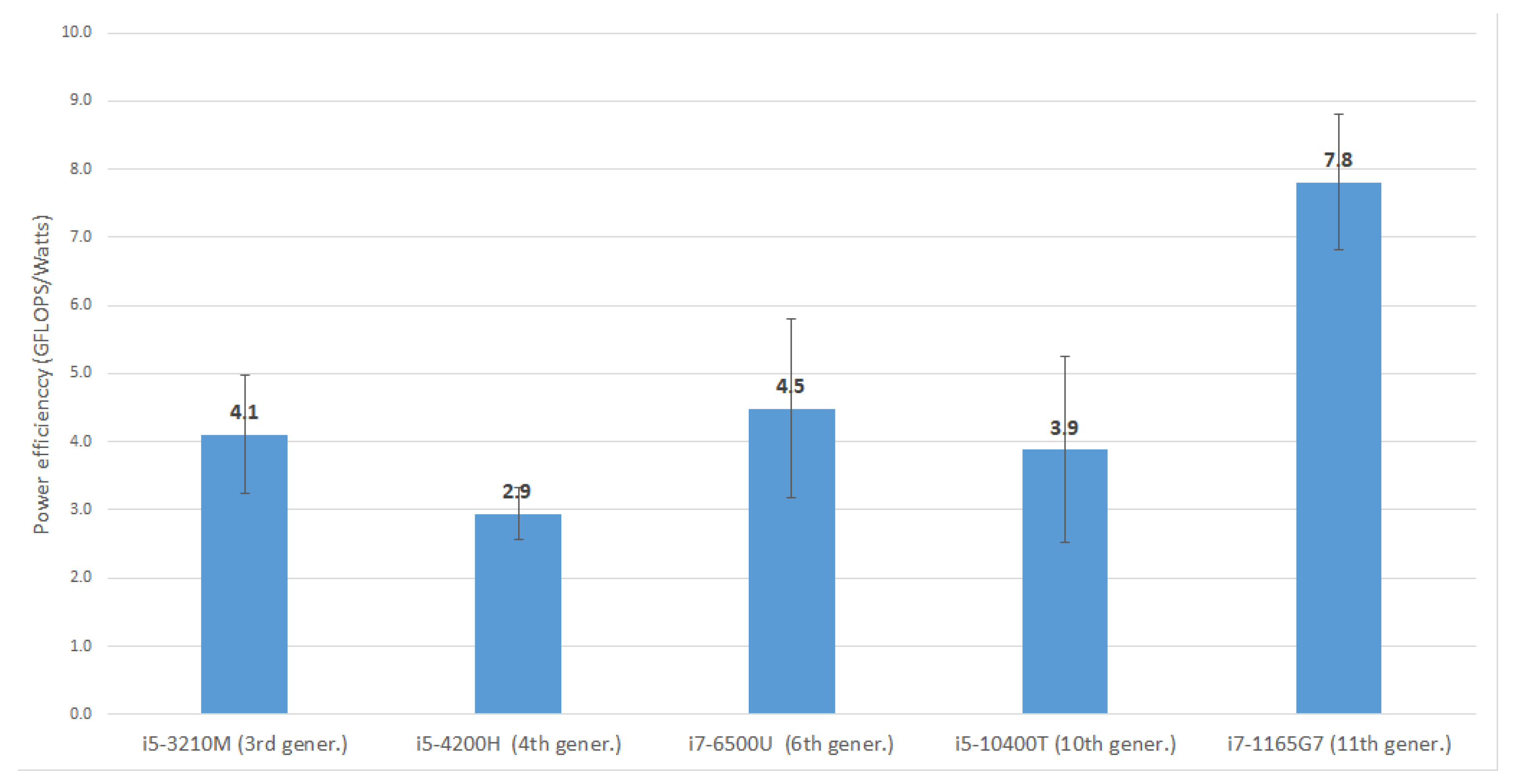

| i5-3210M (3rd gener.) | 19.85 ± 0.06 | 11.90 ± 2.52 | 7.95 ± 1.69 | 32.64 | 4.1 ± 0.87 | 0.21 |

| i5-4200H (4th gener.) | 37.59 ± 0.25 | 12.19 ± 1.56 | 25.40 ± 3.27 | 74.64 | 2.9 ± 0.38 | 0.13 |

| i7-6500U (6th gener.) | 15.55 ± 0.17 | 1.30 ± 0.38 | 14.25 ± 4.18 | 63.92 | 4.5 ± 1.32 | 0.29 |

| i5-10400T (10th gener.) | 30.03 ± 0.06 | 1.14 ± 0.40 | 28.89 ± 10.14 | 112.28 | 3.9 ± 1.36 | 0.36 |

| i7-1165G7 (11th gener.) | 21.22 ± 1.94 | 2.31 ± 0.25 | 19.01 ± 2.40 | 148.47 | 7.8 ± 0.99 | 0.13 |
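The efficiency figures in the table above can be reproduced by dividing Rmax by the processor power attributable to the Linpack run. In the sketch below, the uncertainty is obtained by carrying over the relative standard deviation of the baseline measurement; this rule is an inference from the tabulated numbers, which it reproduces closely, and not a documented statement of the procedure used. The function name is illustrative.

```python
def power_efficiency(rmax_gflops, core_w, baseline_w, baseline_sd_w):
    """Return (GFLOPS/W, its uncertainty, RSD) for one processor.

    Assumption: the relative standard deviation (RSD) of the baseline power
    is applied unchanged to the attributable power and to the efficiency.
    """
    attributable_w = core_w - baseline_w
    rsd = baseline_sd_w / baseline_w
    efficiency = rmax_gflops / attributable_w
    return efficiency, efficiency * rsd, rsd


if __name__ == "__main__":
    # i5-3210M row: Rmax 32.64 GFLOPS, 19.85 W in core phase, 11.90 ± 2.52 W baseline.
    eff, unc, rsd = power_efficiency(32.64, 19.85, 11.90, 2.52)
    print(f"{eff:.1f} ± {unc:.2f} GFLOPS/W (RSD {rsd:.2f})")
```

Because the attributable power is a difference of two measurements, a noisy baseline translates directly into a large relative uncertainty in the efficiency, which is why the RSD column is reported alongside it.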

| Platform | Position in Green500 | Position in TOP500 | Rmax (TFLOP/s) | Power (kW) | Power Efficiency (GFLOPS/Watt) |

|---|---|---|---|---|---|

| Frontier TDS-HPE Cray EX235a, AMD Oak Ridge National Laboratory United States | 1 | 29 | 19,200 | 309 | 62.684 |

| Frontier-HPE Cray EX235a, AMD Oak Ridge National Laboratory United States | 2 | 1 | 1,102,000 | 21,100 | 52.227 |

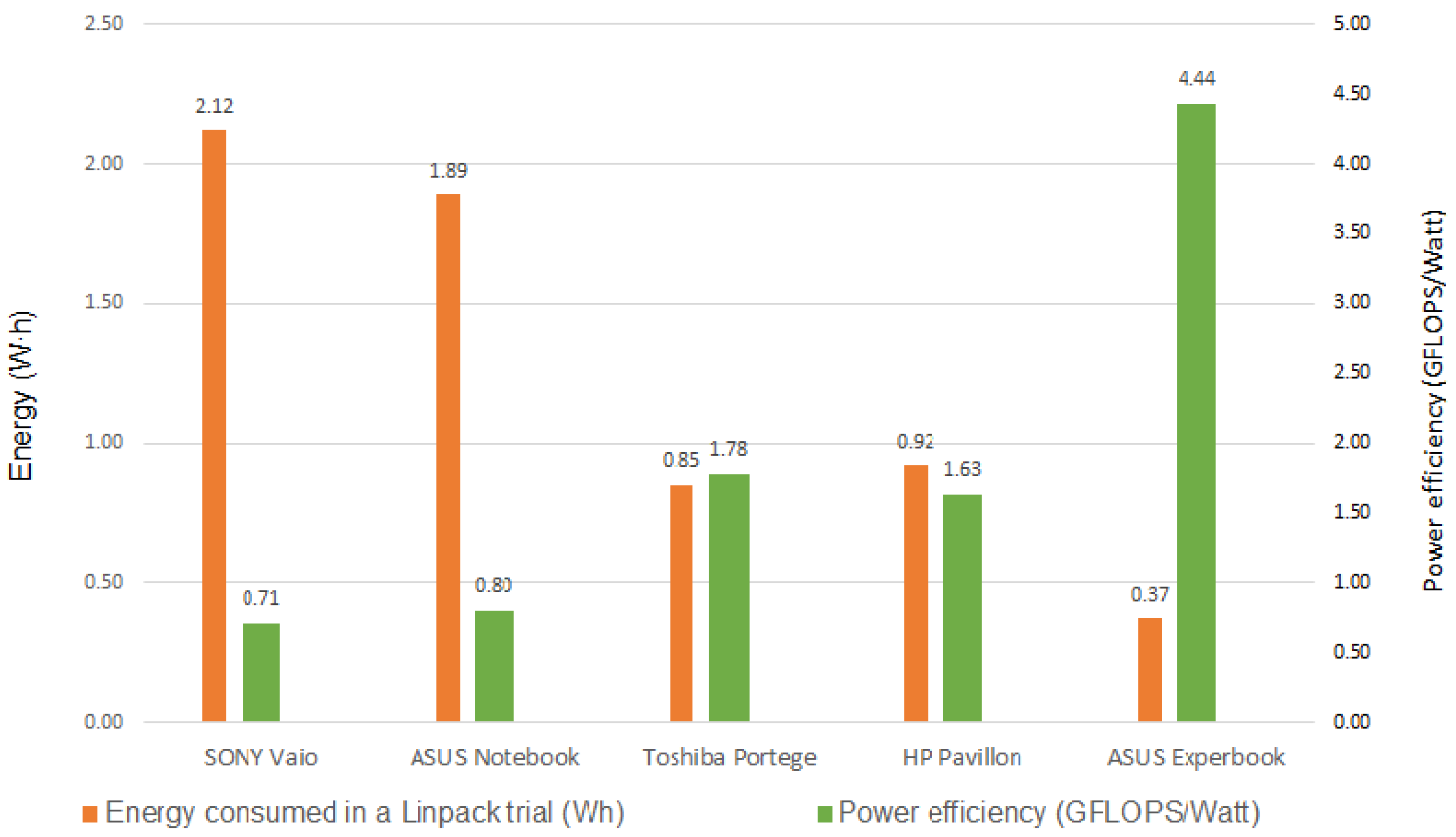

| * SUT5. ASUS 2 | – | – | 0.148 | 0.03347 | 4.436 |

| JOLIOT-CURIE SKL-CEA/TGCC-GENCI, FRANCE | 92 | 124 | 4070 | 917 | 4.434 |

| * SUT3. Toshiba 1 | – | – | 0.064 | 0.0359 | 1.779 |

| occigen2. National de Calcul Intensif-Centre Informatique National de l’Enseignement Supérieur (GENCI-CINES) FRANCE | 167 | 255 | 2490 | 1430 | 1.745 |

| * SUT4. HP | – | – | 0.112 | 0.06872 | 1.633 |

| HKVDPSystem, IT Service Provider, CHINA | 172 | 388 | 1980 | 1216 | 1.627 |

| * SUT2. ASUS | – | – | 0.075 | 0.09342 | 0.7989 |

| * SUT1. SONY | – | – | 0.037 | 0.04594 | 0.7923 |

| Thunder-SGI ICE X, Xeon E5-2699v3/E5-2697 v3, Infiniband FDR, NVIDIA Tesla K40, Intel Xeon Phi 7120P, HPE, Air Force Research Laboratory, United States | 183 | 171 | 3130 | 4820 | 0.649 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Prieto, B.; Escobar, J.J.; Gómez-López, J.C.; Díaz, A.F.; Lampert, T. Energy Efficiency of Personal Computers: A Comparative Analysis. Sustainability 2022, 14, 12829. https://doi.org/10.3390/su141912829
