AISAR: Artificial Intelligence-Based Student Assessment and Recommendation System for E-Learning in Big Data

Abstract

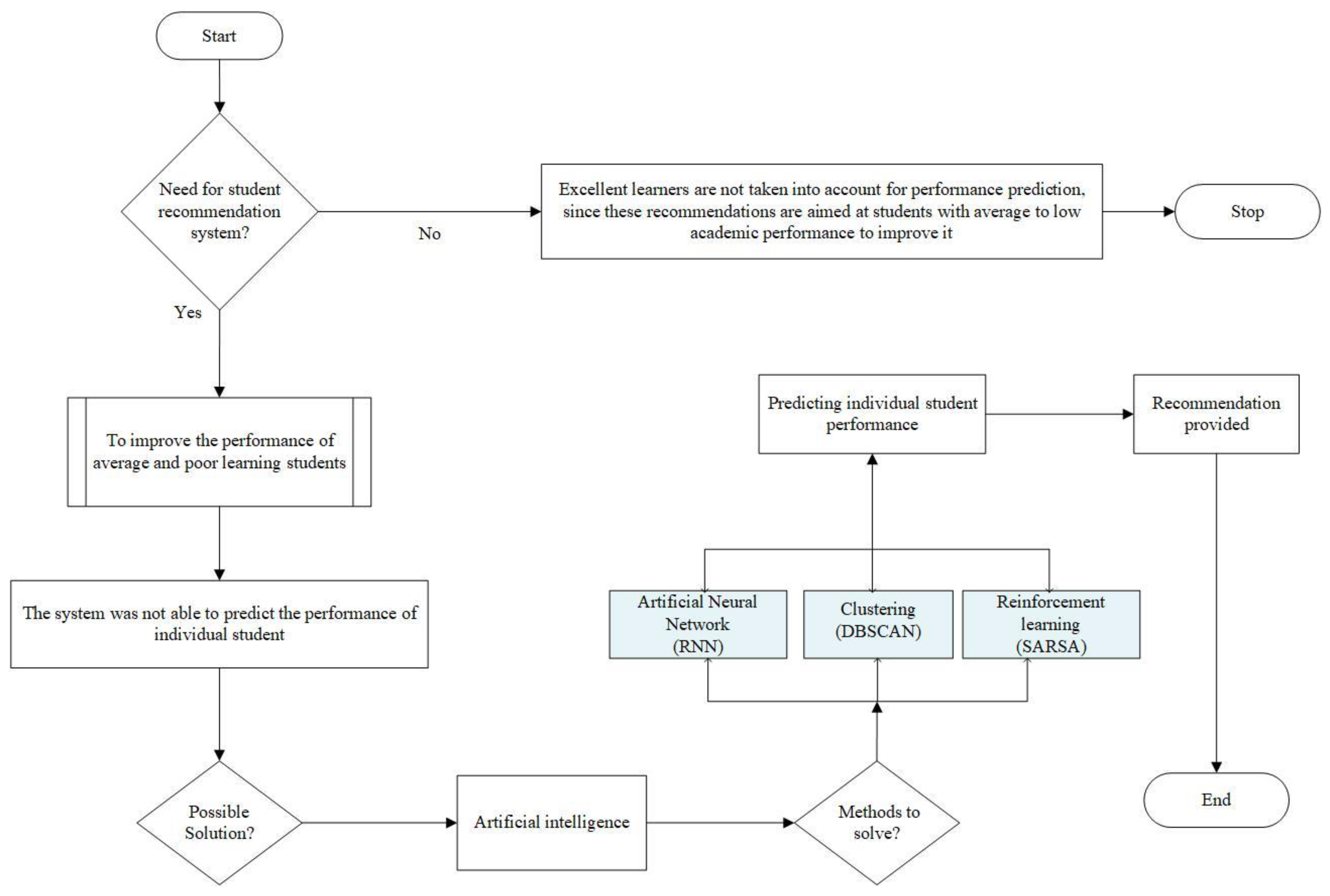

1. Introduction

- The absence of an accurate prediction of the performance of students with certain difficulties leads to a lack of optimal recommendations for them.

- There is a lack of fast-performing algorithms due to the increasing amounts of input data, which affect the exact prediction of student performance [13].

1.1. Motivation

- The system should effectively analyse a student’s current academic performance and activities and provide an accurate recommendation to improve their performance.

- With an increase in the number of students, the system should make faster computations with zero errors, or else student access will be minimised.

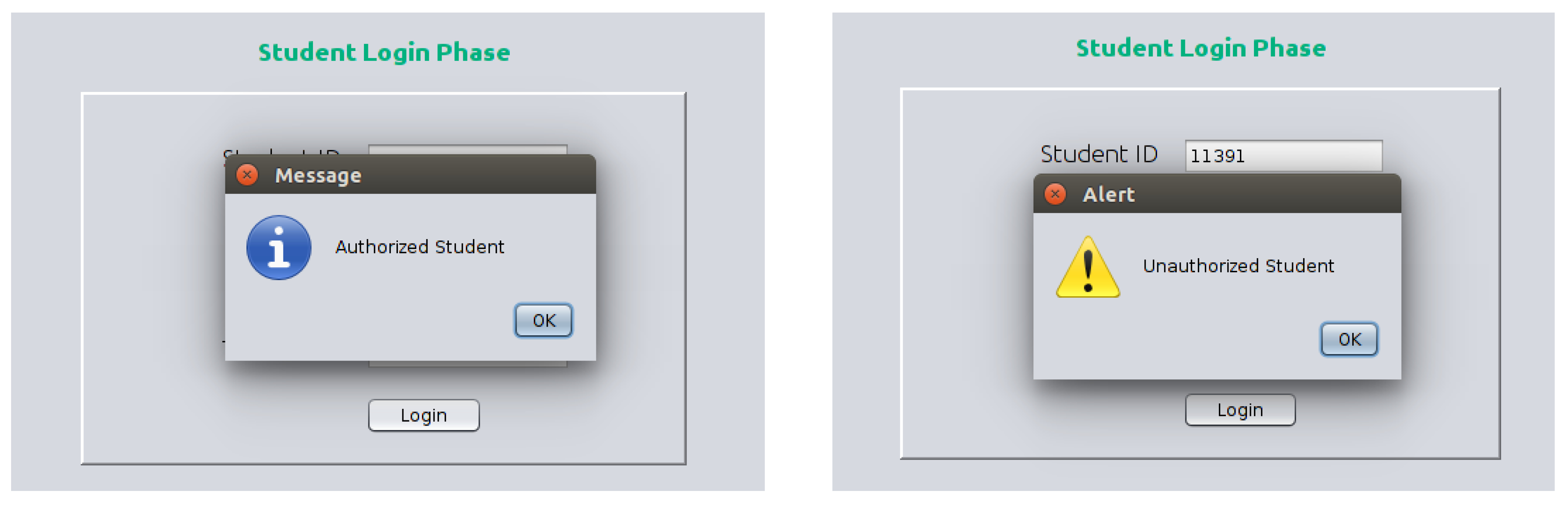

- The system should reduce unauthorised student access, which reduces the number of unnecessary computations that need to be made and connects poor student scores with their better-performing peers.

- In e-learning, how can artificial intelligence techniques be combined with reinforcement learning techniques to achieve faster processing and more accurate results?

- How can we predict the performance of individual students in order to identify students with poor academic performance and enable us to provide appropriate recommendations for them?

1.2. Contribution

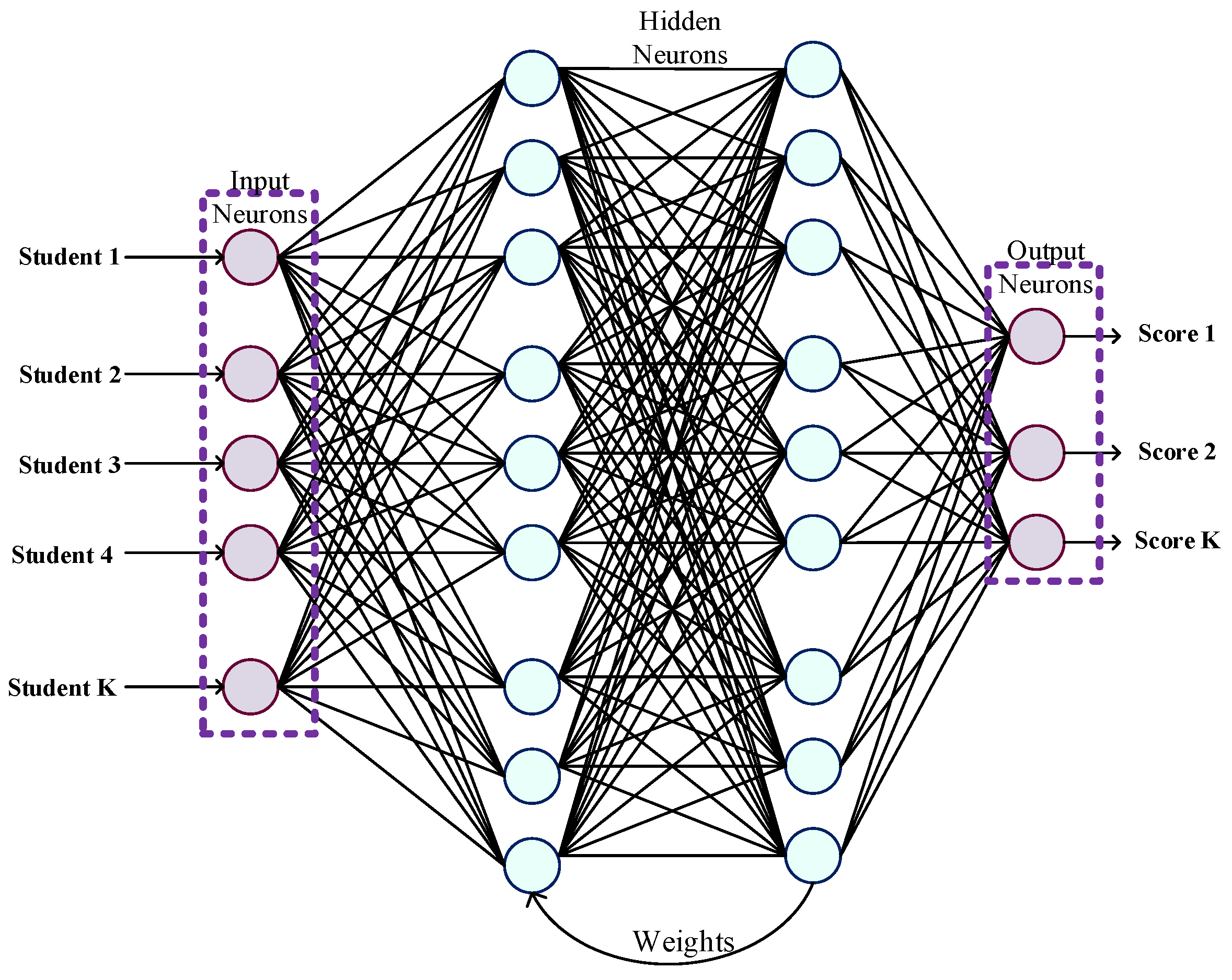

- Students’ scores are estimated using a recurrent neural network (RNN) that considers both examination results and engagement in the classroom.

- Density-based spatial clustering of applications with noise (DBSCAN) is applied based on the Mahalanobis distance to extract and classify student performance as excellent, average, or poor.

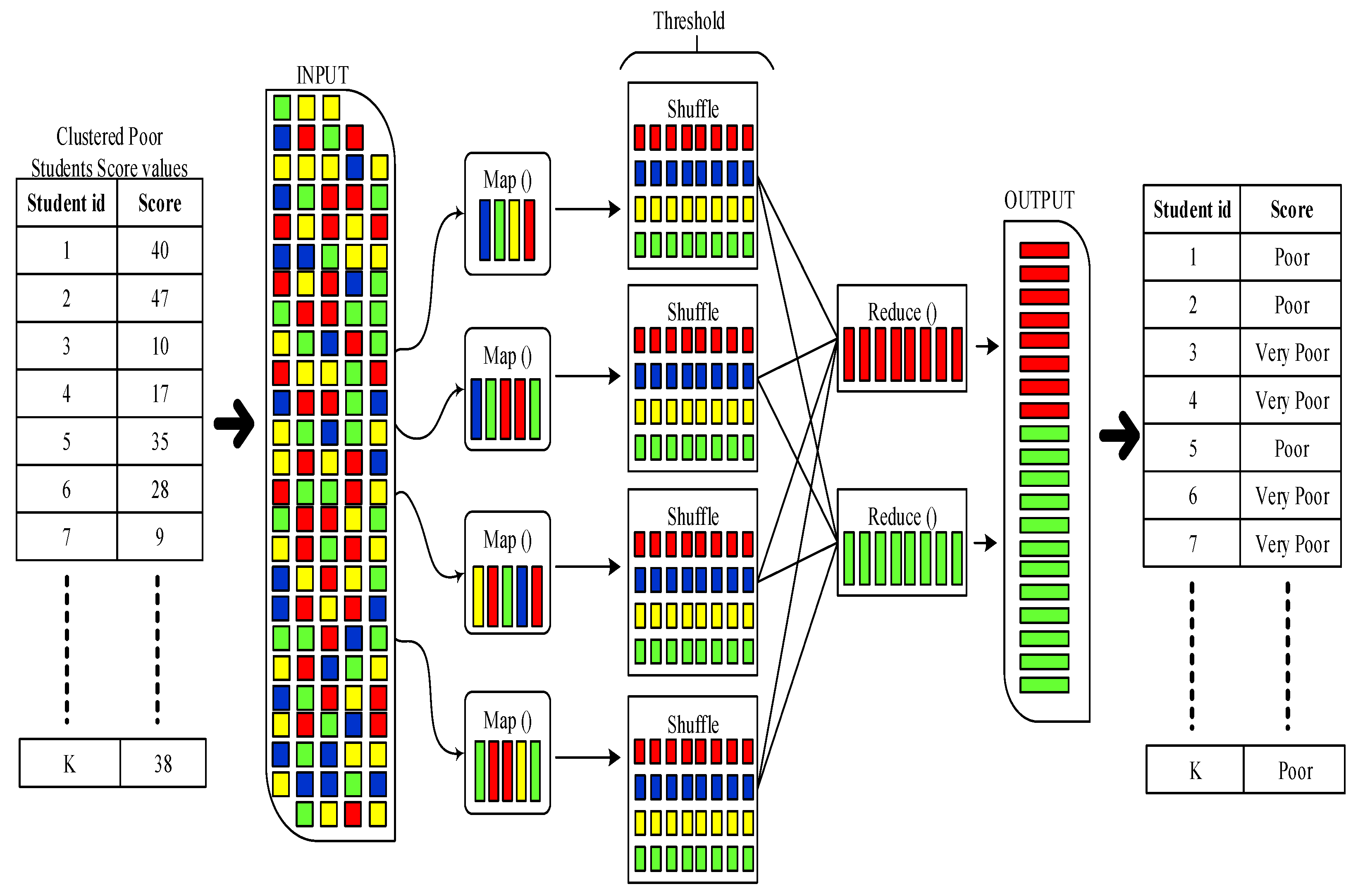

- To predict the performance of individual students, threshold-based MapReduce (TMR) is applied to average and low-scoring students so that recommendations can be made in a more accurate manner.

- Exact recommendations are presented to students by incorporating reinforcement learning algorithms with artificial intelligence. Rule-based state–action–reward–state–action (R-SARSA) delivers recommendations to students autonomously.

1.3. Organisation

2. Related Work

2.1. Student Assessment System

2.2. E-Learning Recommendation System

- Student performance was evaluated without the provision of recommendations, which are helpful for the student to improve their academic scores in future examinations.

- The evaluations of student performance could not accurately identify the score values of individual students.

- Student authentication was not a focus, which allowed unauthorised students to engage in academic malpractices, which affected performance evaluation.

- The increase in the number of students who engaged with the system required results to be obtained faster and for appropriate recommendations to be provided to improve student performance.

3. Problem Statement

- There was an absence of recommendations for the students, which are essential for them to improve their knowledge.

- Grouping students based on their performance (average, good, poor) is not effective in predicting the knowledge level of students individually since the suggestions for instructors will change based on student performance, i.e., not all of the average students require similar recommendations for additional exercises or tests.

- Unauthorised students were present because of a lack of security. This increased the system processing time and led to poor performance predictions.

4. Proposed E-Learning Recommendation System

4.1. Authentication

4.2. Student Score Estimation

4.3. Score-Based Clustering

- Density reachability: Let $p$ be a point that is defined to reach another point, $q$, that lies in the dense region within the distance $\varepsilon$. Put simply, the points $p$ and $q$ are each supposed to have the required number of neighbouring points within $\varepsilon$.

- Density connectivity: Let $X$ be the data points that are to be clustered. Two data points, $p$ and $q$, are linked by the density if there is a point $r$ that has the required number of neighbouring points and both $p$ and $q$ are present within $\varepsilon$ of it. Density connectivity is a chaining process, i.e., $p_1, p_2, \ldots, p_n$, that defines that $p_1$ is a neighbour of $p_2$, that $p_2$ is a neighbour of $p_3$, and so forth. This implies that $p_1$ is linked to $p_n$.

- The effectiveness of the constructed clusters is detected, which enables the system’s accuracy to be improved.

- The risk factors are reduced after completing the entire classification process.

- The goodness, i.e., quality, of the constructed cluster can be measured without merging dissimilar data points into a cluster.

| Algorithm 1: DBSCAN Clustering Based on Mahalanobis Distance |
|---|
| Input: Data points |
| Output: Clusters |
| 1. Begin. |
| 2. Select the $i$th point, $x_i$, from the data points. |
| 3. Mark the data point $x_i$ as visited. |
| 4. Identify all the neighbouring points that lie within the distance $\varepsilon$ of $x_i$. Let this set be denoted as $N$. |
| 5. If $N$ contains at least the required number of neighbouring points { take $x_i$ as the initial point for creating a new cluster; add the data points present within distance $\varepsilon$ to the cluster as members; similarly add members based on the neighbours of each new member } else { set the data point as noise } end if. |
| 6. Repeat steps 2–5 until all the data points are clustered. |
| 7. End. |
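The clustering steps above can be illustrated with a minimal, standard-library sketch. It is not the authors' implementation: the two features (score, engagement), the toy data, and the values of `eps` and `min_pts` are assumptions made only for illustration, and the covariance handling is restricted to two features to keep the example short.

```python
import math

def inverse_covariance_2d(points):
    """Inverse of the 2x2 sample covariance matrix of 2-D points."""
    n = len(points)
    mx = sum(p[0] for p in points) / n
    my = sum(p[1] for p in points) / n
    sxx = sum((p[0] - mx) ** 2 for p in points) / (n - 1)
    syy = sum((p[1] - my) ** 2 for p in points) / (n - 1)
    sxy = sum((p[0] - mx) * (p[1] - my) for p in points) / (n - 1)
    det = sxx * syy - sxy * sxy
    if det == 0:
        raise ValueError("covariance matrix is singular")
    return ((syy / det, -sxy / det), (-sxy / det, sxx / det))

def mahalanobis(a, b, inv_cov):
    """Mahalanobis distance between two 2-D points."""
    dx, dy = a[0] - b[0], a[1] - b[1]
    q = (dx * (inv_cov[0][0] * dx + inv_cov[0][1] * dy)
         + dy * (inv_cov[1][0] * dx + inv_cov[1][1] * dy))
    return math.sqrt(max(q, 0.0))

def dbscan(points, eps, min_pts):
    """Return one label per point: 0, 1, ... for clusters, -1 for noise."""
    inv_cov = inverse_covariance_2d(points)

    def neighbours(i):
        # All points (including i itself) within eps of point i.
        return [j for j in range(len(points))
                if mahalanobis(points[i], points[j], inv_cov) <= eps]

    labels = [None] * len(points)  # None means "not visited yet"
    cluster = -1
    for i in range(len(points)):
        if labels[i] is not None:
            continue
        seeds = neighbours(i)
        if len(seeds) < min_pts:
            labels[i] = -1  # noise, may later become a border point
            continue
        cluster += 1
        labels[i] = cluster
        queue = [j for j in seeds if j != i]
        while queue:
            j = queue.pop()
            if labels[j] == -1:
                labels[j] = cluster  # former noise becomes a border point
            if labels[j] is not None:
                continue
            labels[j] = cluster
            reachable = neighbours(j)
            if len(reachable) >= min_pts:
                queue.extend(reachable)  # j is a core point: keep expanding
    return labels

# Hypothetical (score, engagement) pairs: three tight groups and one outlier.
students = [(80, 80), (81, 80), (80, 81),
            (50, 20), (51, 20), (50, 21),
            (20, 80), (21, 80), (20, 81),
            (50, 60)]
labels = dbscan(students, eps=0.5, min_pts=3)
```

Because the Mahalanobis distance rescales each feature by the covariance of the whole data set, `eps` is expressed in standardised units rather than in raw score points, which is why a value well below 1 separates the toy groups here.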

4.4. Student Performance Prediction

- Map:

- Initially, the input cluster is split into the score values of individual students. The map phase is then executed as the first phase: each split value from the cluster is processed by the mapping function. The mapper function operates on key–value pairs. Let the score value of the student be assigned the key $k_1$ and the student attendance the key $k_2$. Mapping is performed per $k$-value, and the mapper function outputs a list of intermediate key–value pairs.

- Reduce:

- During shuffling, the mapped output values are processed by consolidating the matched records from the mapping phase. Shuffling eliminates duplicate values and then groups the values that share a $k$-value, which results in each key being paired with an array of its values. During shuffling, the threshold values are fed in based on the scoring values. Then, in the reducing phase, the shuffled output is reduced into an output with an exact student performance prediction.

| Algorithm 2: TMR Procedure |
|---|
| Let $k_2$ be the attendance of the student and $k_1$ be the score value of the student. |
| Input: Clusters |
| Output: Student performance prediction |
| 1. Begin. |
| 2. Initialise cluster 1, cluster 2. |
| 3. For each cluster, complete the steps below. |
| 4. Split cluster values. |
| 5. Function (Map) // start mapping function. |
| 6. For each $k$-value. |
| 7. Extract the key–value pair. |
| 8. Return the key–value pair. |
| 9. Repeat for each $k$-value // end mapping function. |
| 10. Function (Reduce) // start reduce function. |
| 11. For each key, do { compute the sum of its values; find where it falls relative to the threshold }. |
| 12. Repeat for each $k$-value. |
| 13. Determine the student performance prediction. |
| 14. Store the prediction // end reducing function. |
| 15. End. |
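The map, shuffle, and reduce phases described above can be sketched in plain Python as follows. This is an illustrative single-process sketch, not the TMR implementation itself: keying the records by a student identifier, averaging the grouped values, and the two threshold values are all assumptions introduced for the example.

```python
from collections import defaultdict

def map_phase(records):
    """Emit one (student_id, (score, attendance)) pair per input record."""
    for student_id, score, attendance in records:
        yield student_id, (score, attendance)

def shuffle_phase(pairs):
    """Group values by key and drop exact duplicate values."""
    grouped = defaultdict(list)
    for key, value in pairs:
        if value not in grouped[key]:
            grouped[key].append(value)
    return grouped

def reduce_phase(grouped, score_threshold, attendance_threshold):
    """Reduce each student's values to means and a threshold-based flag."""
    predictions = {}
    for student_id, values in grouped.items():
        mean_score = sum(score for score, _ in values) / len(values)
        mean_attendance = sum(att for _, att in values) / len(values)
        predictions[student_id] = {
            "mean_score": mean_score,
            "mean_attendance": mean_attendance,
            # Hypothetical rule: flag a student who falls below either threshold.
            "needs_support": (mean_score < score_threshold
                              or mean_attendance < attendance_threshold),
        }
    return predictions

# Hypothetical records: (student_id, score, attendance in percent).
records = [
    ("s1", 62, 90), ("s1", 58, 80), ("s1", 62, 90),  # last record is a duplicate
    ("s2", 45, 60), ("s2", 35, 40),
]
predictions = reduce_phase(shuffle_phase(map_phase(records)),
                           score_threshold=50, attendance_threshold=75)
```

In a real big-data deployment the three phases would run on a distributed framework; the functions here only mirror the data flow so that the per-student output of the reduce step is easy to inspect.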

4.5. Student Recommendation

5. Experiment Result Analysis

5.1. Implementation Environment

5.2. Comparative Analysis

- Traditional machine learning algorithms are subject to critical limitations such as high computational cost, long processing times, and poor performance prediction.

- Clustering is presented as a solution for predicting student performance. Although this was an effective solution, it could only identify the group performance of the students, i.e., it could not determine the individual performance of a student.

- Student recommendations were not optimal for each student participating in the e-learning system.

5.2.1. True-Positive and False-Positive Rate

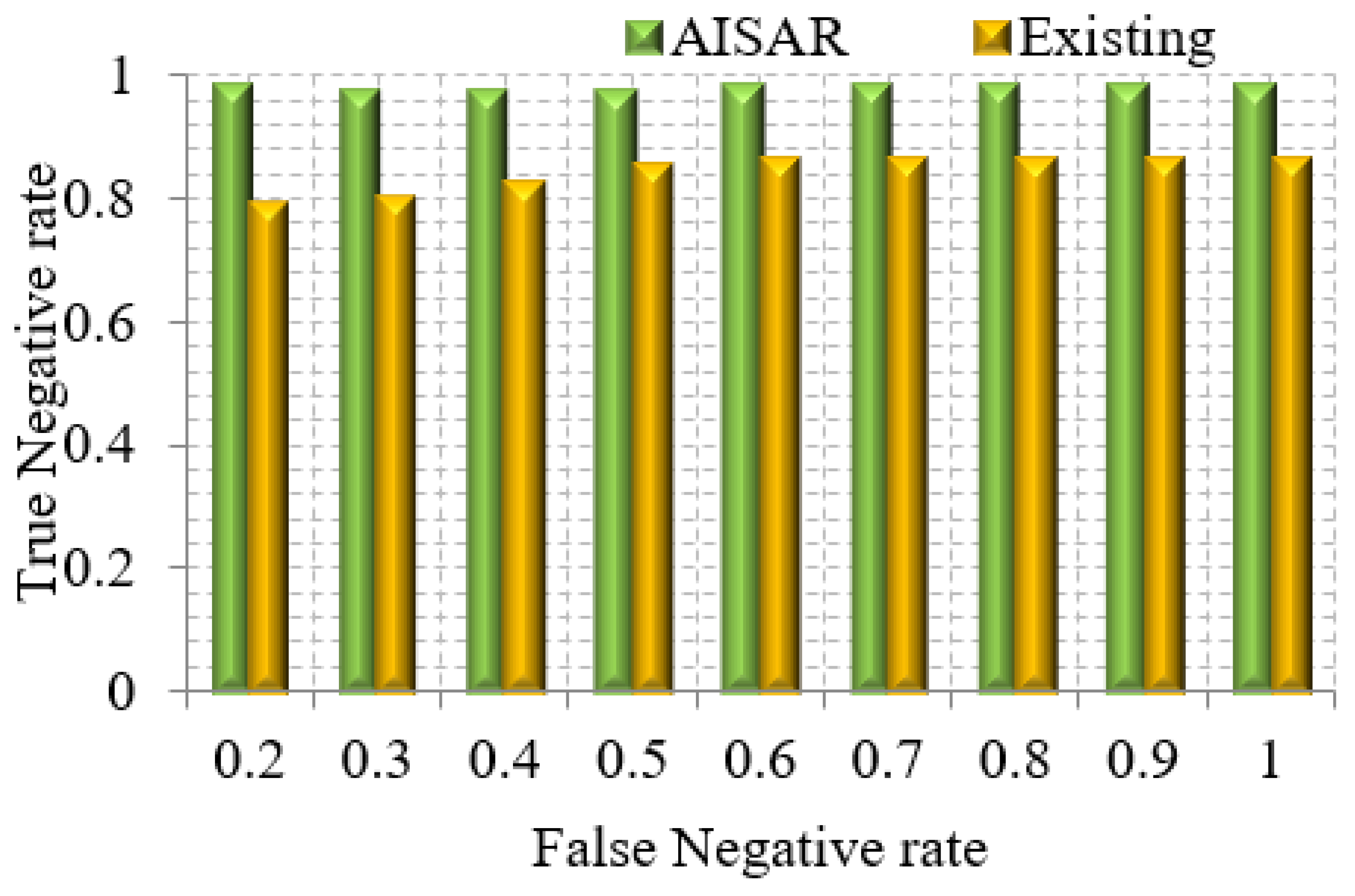

5.2.2. True-Negative and False-Negative Rate

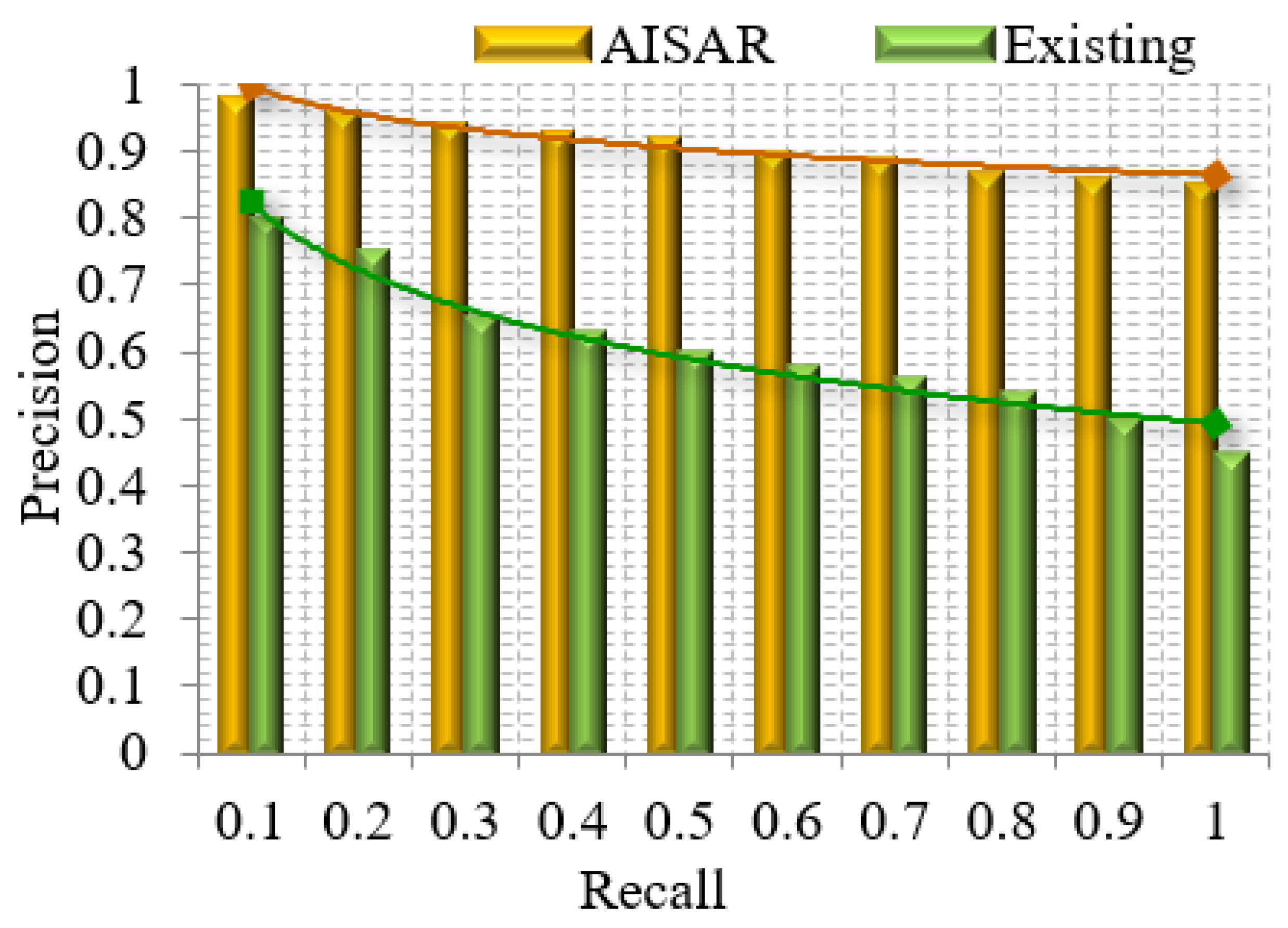

5.2.3. Precision and Recall

5.2.4. Accuracy
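The rates named in Sections 5.2.1 to 5.2.4 all derive from the four confusion-matrix counts. The sketch below uses the standard definitions; the function name and the example counts are hypothetical and are not results from this study.

```python
def classification_metrics(tp, fp, tn, fn):
    """Standard confusion-matrix rates from raw counts."""
    return {
        "tpr": tp / (tp + fn),        # true-positive rate (same as recall)
        "fpr": fp / (fp + tn),        # false-positive rate
        "tnr": tn / (tn + fp),        # true-negative rate
        "fnr": fn / (fn + tp),        # false-negative rate
        "precision": tp / (tp + fp),
        "recall": tp / (tp + fn),
        "accuracy": (tp + tn) / (tp + fp + tn + fn),
    }

# Hypothetical counts, chosen only to make the arithmetic easy to follow.
metrics = classification_metrics(tp=40, fp=10, tn=40, fn=10)
```

Note that the true-positive and false-negative rates sum to one, as do the true-negative and false-positive rates, so each pair of sections reports complementary quantities.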

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

1. Tawafak, R.M.; Romli, A.B.; Arshah, R.B.A. E-learning Model for Students’ Satisfaction in Higher Education Universities. In Proceedings of the International Conference on Fourth Industrial Revolution (ICFIR), Manama, Bahrain, 19–21 February 2019; pp. 1–6.

2. Andersson, C.; Kroisandt, G. Opportunities and Challenges with e-Learning Courses in Statistics for Engineering and Computer Science Students. In Proceedings of the IEEE World Engineering Education Conference (EDUNINE), Buenos Aires, Argentina, 11–14 March 2018; pp. 1–4.

3. Al-Rahmi, W.M.; Yahaya, N.; Aldraiweesh, A.A.; Alamri, M.M.; Aljarboa, N.A.; Alturki, U.; Aljeraiwi, A.A. Integrating Technology Acceptance Model with Innovation Diffusion Theory: An Empirical Investigation on Students’ Intention to Use E-Learning Systems. IEEE Access 2019, 7, 26797–26809.

4. Al-Rahmi, W.M.; Alias, N.; Othman, M.S.; Alzahrani, A.I.; Alfarraj, O.; Saged, A.A.; Rahman, N.S.A. Use of E-Learning by University Students in Malaysian Higher Educational Institutions: A Case in Universiti Teknologi Malaysia. IEEE Access 2018, 6, 14268–14276.

5. Raspopovic, M.; Jankulovic, A. Performance Measurement of E-Learning Using Student Satisfaction Analysis. Inf. Syst. Front. 2017, 19, 869–880.

6. McConnell, D. E-learning in Chinese Higher Education: The View from Inside. High. Educ. 2018, 75, 1031–1045.

7. Kew, S.N.; Petsangsri, S.; Ratanaolarn, T.; Tasir, Z. Examining the Motivation Level of Students in E-Learning in Higher Education Institution in Thailand: A Case Study. Educ. Inf. Technol. 2018, 23, 2947–2967.

8. Ashwin, T.S.; Guddeti, R.M.R. Impact of Inquiry Interventions on Students in E-Learning and Classroom Environments Using Affective Computing Framework. User Modeling User-Adapt. Interact. 2020, 30, 759–801.

9. Fatahi, S. An Experimental Study on an Adaptive E-Learning Environment based on Learner’s Personality and Emotion. Educ. Inf. Technol. 2019, 24, 2225–2241.

10. Gao, H.; Wu, H.; Wu, X. Chances and Challenges: What E-learning Brings to Traditional Teaching. In Proceedings of the 2018 9th International Conference on Information Technology in Medicine and Education (ITME), Hangzhou, China, 19–21 October 2018; pp. 420–422.

11. El Mhouti, A.; Erradi, M.; Nasseh, A. Using Cloud Computing Services in E-Learning Process: Benefits and Challenges. Educ. Inf. Technol. 2018, 23, 893–909.

12. Vershitskaya, E.R.; Mikhaylova, A.V.; Gilmanshina, S.I.; Dorozhkin, E.M.; Epaneshnikov, V.V. Present-Day Management of Universities in Russia: Prospects and Challenges of E-learning. Educ. Inf. Technol. 2020, 25, 611–621.

13. Souabi, S.; Retbi, A.; Idrissi, M.K.I.K.; Bennani, S. Recommendation Systems on E-Learning and Social Learning: A Systematic Review. Electron. J. e-Learn. 2021, 19, 432–451.

14. Li, H.H.; Liao, Y.H.; Wu, Y.T. Artificial Intelligence to Assist E-Learning. In Proceedings of the 14th International Conference on Computer Science & Education (ICCSE), Toronto, ON, Canada, 19–21 August 2019; pp. 653–654.

15. Chanaa, A. Deep Learning for a Smart E-Learning System. In Proceedings of the 4th International Conference on Cloud Computing Technologies and Applications (Cloudtech), Brussels, Belgium, 26–28 November 2018; pp. 1–8.

16. Fok, W.W.; He, Y.S.; Yeung, H.A.; Law, K.Y.; Cheung, K.H.; Ai, Y.Y.; Ho, P. Prediction Model for Students’ Future Development by Deep Learning and TensorFlow Artificial Intelligence Engine. In Proceedings of the 4th International Conference on Information Management (ICIM), Oxford, UK, 25–27 May 2018; pp. 103–106.

17. Khanal, S.S.; Prasad, P.W.C.; Alsadoon, A.; Maag, A. A Systematic Review: Machine Learning Based Recommendation Systems for E-learning. Educ. Inf. Technol. 2020, 25, 2635–2664.

18. Mansur, A.B.F.; Yusof, N.; Basori, A.H. Personalized Learning Model Based on Deep Learning Algorithm for Student Behaviour Analytic. Procedia Comput. Sci. 2019, 163, 125–133.

19. Zhang, K.; Aslan, A.B. AI Technologies for Education: Recent Research & Future Directions. Comput. Educ. Artif. Intell. 2021, 2, 100025.

20. Sousa, M.; Dal Mas, F.; Pesqueira, A.; Lemos, C.; Verde, J.M.; Cobianchi, L. The Potential of AI in Health Higher Education to Increase the Students’ Learning Outcomes. TEM J. 2021, 2, 488–497.

21. Tan, J. Information Analysis of Advanced Mathematics Education-Adaptive Algorithm Based on Big Data. Math. Probl. Eng. 2022, 2022, 7796681.

22. Wan, S.; Niu, Z. A Hybrid E-Learning Recommendation Approach Based on Learners’ Influence Propagation. IEEE Trans. Knowl. Data Eng. 2019, 32, 827–840.

23. Rahman, M.M.; Abdullah, N.A. A Personalized Group-Based Recommendation Approach for Web Search in E-learning. IEEE Access 2018, 6, 34166–34178.

24. Lai, R.; Wang, T.; Chen, Y. Improved Hybrid Recommendation with User Similarity for Adult Learners. J. Eng. 2019, 11, 8193–8197.

25. Hassan, M.A.; Habiba, U.; Khalid, H.; Shoaib, M.; Arshad, S. An Adaptive Feedback System to Improve Student Performance Based on Collaborative Behavior. IEEE Access 2019, 7, 107171–107178.

26. Yağcı, M. Educational Data Mining: Prediction of Students’ Academic Performance Using Machine Learning Algorithms. Smart Learn. Environ. 2022, 9, 1–19.

27. Redondo, J.M. Improving Student Assessment of a Server Administration Course Promoting Flexibility and Competitiveness. IEEE Trans. Educ. 2018, 62, 19–26.

28. Balderas, A.; De-La-Fuente-Valentin, L.; Ortega-Gomez, M.; Dodero, J.M.; Burgos, D. Learning Management Systems Activity Records for Students’ Assessment of Generic Skills. IEEE Access 2018, 6, 15958–15968.

29. Akram, A.; Fu, C.; Li, Y.; Javed, M.Y.; Lin, R.; Jiang, Y.; Tang, Y. Predicting Students’ Academic Procrastination in Blended Learning Course Using Homework Submission Data. IEEE Access 2019, 7, 102487–102498.

30. Sera, L.; McPherson, M.L. Effect of A Study Skills Course on Student Self-Assessment of Learning Skills and Strategies. Curr. Pharm. Teach. Learn. 2019, 11, 664–668.

31. Cerezo, R.; Bogarín, A.; Esteban, M.; Romero, C. Process Mining for Self-Regulated Learning Assessment in E-learning. J. Comput. High. Educ. 2020, 32, 74–88.

32. Garg, R.; Kumar, R.; Garg, S. MADM-Based Parametric Selection and Ranking of E-Learning Websites Using Fuzzy COPRAS. IEEE Trans. Educ. 2019, 62, 11–18.

33. Al-Tarabily, M.M.; Abdel-Kader, R.F.; Azeem, G.A.; Marie, M.I. Optimizing Dynamic Multi-Agent Performance in E-learning Environment. IEEE Access 2018, 6, 35631–35645.

34. Govindasamy, K.; Velmurugan, T. Analysis of Student Academic Performance Using Clustering Techniques. Int. J. Pure Appl. Math. 2018, 119, 309–323.

35. Kausar, S.; Huahu, X.; Hussain, I.; Wenhao, Z.; Zahid, M. Integration of Data Mining Clustering Approach in The Personalized E-Learning System. IEEE Access 2018, 6, 72724–72734.

36. Marras, M.; Boratto, L.; Ramos, G.; Fenu, G. Equality of Learning Opportunity Via Individual Fairness in Personalized Recommendations. Int. J. Artif. Intell. Educ. 2021, 1–49.

37. Elkhateeb, M.; Shehab, A.; El-Bakry, H. Mobile Learning System for Egyptian Higher Education Using Agile-Based Approach. Educ. Res. Int. 2019, 2019, 7531980.

38. Wan, S.; Niu, Z. An E-Learning Recommendation Approach Based on The Self-Organization of Learning Resource. Knowl. Based Syst. 2018, 160, 71–87.

39. Gulzar, Z.; Leema, A.A.; Deepak, G. PCRS: Personalized Course Recommender System Based on Hybrid Approach. Procedia Comput. Sci. 2018, 125, 518–524.

40. Dahdouh, K.; Dakkak, A.; Oughdir, L.; Ibriz, A. Large-Scale E-Learning Recommender System Based on Spark and Hadoop. J. Big Data 2019, 6, 1–23.

41. He, H.; Zhu, Z.; Guo, Q.; Huang, X. A Personalized E-learning Services Recommendation Algorithm Based on User Learning Ability. In Proceedings of the 2019 IEEE 19th International Conference on Advanced Learning Technologies (ICALT), Maceio, Brazil, 5–18 July 2019; Volume 2161, pp. 318–320.

42. Samin, H.; Azim, T. Knowledge Based Recommender System for Academia Using Machine Learning: A Case Study on Higher Education Landscape of Pakistan. IEEE Access 2019, 7, 67081–67093.

43. Okada, A.; Whitelock, D.; Holmes, W.; Edwards, C. e-Authentication for Online Assessment: A Mixed-Method Study. Br. J. Educ. Technol. 2019, 50, 861–875.

44. Smith, A.; Leeman-Munk, S.; Shelton, A.; Mott, B.; Wiebe, E.; Lester, J. A Multimodal Assessment Framework for Integrating Student Writing and Drawing in Elementary Science Learning. IEEE Trans. Learn. Technol. 2019, 12, 3–15.

45. Hussain, M.; Zhu, W.; Zhang, W.; Abidi, S.M.R. Student Engagement Predictions in an E-Learning System and Their Impact on Student Course Assessment Scores. Comput. Intell. Neurosci. 2018, 2018, 6347186.

46. Brahim, B.; Lotfi, A. A Traces-Based System Helping to Assess Knowledge Level in E-Learning System. J. King Saud Univ. Comput. Inf. Sci. 2020, 32, 977–986.

47. Lee, A.; Han, J.Y. Effective User Authentication System in an E-Learning Platform. Int. J. Innov. Creat. Change 2020, 13, 1101–1113.

48. Rodríguez-Hernández, C.F.; Musso, M.; Kyndt, E.; Cascallar, E. Artificial Neural Networks in Academic Performance Prediction: Systematic Implementation and Predictor Evaluation. Comput. Educ. Artif. Intell. 2021, 2, 100018.

49. Zhang, Q.; Lu, J.; Jin, Y. Artificial Intelligence in Recommender Systems. Complex Intell. Syst. 2021, 7, 439–457.

50. Zhai, X.; Chu, X.; Chai, C.S.; Jong, M.S.Y.; Istenic, A.; Spector, M.; Liu, J.B.; Yuan, J.; Li, Y. A Review of Artificial Intelligence (AI) in Education from 2010 to 2020. Complexity 2021, 2021, 8812542.

51. Kuzilek, J.; Hlosta, M.; Zdrahal, Z. Open University Learning Analytics Dataset. Sci. Data 2017, 4, 1–8.

52. Siddique, S.A. Improvement of Online Course Content Using MapReduce Big Data Analytics. Int. Res. J. Eng. Technol. 2020, 07, 50–56.

| Score Values from RNN | Score-Based DBSCAN Clustering |
|---|---|
| Above 80 | Excellent |
| 50–80 | Average |
| Below 50 | Poor |
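The score bands in the table above can be read as a simple labelling rule. The sketch below is one interpretation only: the function name is hypothetical, and treating the boundary scores 50 and 80 as "Average" is an assumption based on the band "50–80".

```python
def performance_label(score):
    """Map an estimated score to the band named in the table."""
    if score > 80:
        return "Excellent"
    if score >= 50:
        return "Average"  # assumed to include both 50 and 80
    return "Poor"

# Hypothetical estimated scores for four students.
bands = [performance_label(s) for s in (92, 80, 50, 35)]
```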

| Rule Number | Input: Mean Score | Input: Mean Engagement | Assessment Value |
|---|---|---|---|
| 1 | | | 0 |
| 2 | | | 0.5 |
| 3 | | | 0.5 |
| 4 | | | 1 |

| State | Action | Reward |
|---|---|---|
| | Practice exercises | |
| | Simple study materials | |
| | Understandable presentations | |
| ⋮ | ⋮ | ⋮ |
| | Sample questions | |
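To make the state, action, and reward columns concrete, the sketch below shows the standard tabular SARSA update, $Q(s,a) \leftarrow Q(s,a) + \alpha\,[r + \gamma\,Q(s',a') - Q(s,a)]$, applied to the recommendation actions listed in the table. It does not reproduce the rule-based part of R-SARSA: the state names, the reward value, and the parameters `alpha`, `gamma`, and `epsilon` are assumptions chosen for illustration.

```python
import random
from collections import defaultdict

# Recommendation actions taken from the table; states are hypothetical.
ACTIONS = ["practice exercises", "simple study materials",
           "understandable presentations", "sample questions"]

def choose_action(q, state, epsilon, rng):
    """Epsilon-greedy choice over the recommendation actions."""
    if rng.random() < epsilon:
        return rng.choice(ACTIONS)
    return max(ACTIONS, key=lambda action: q[(state, action)])

def sarsa_update(q, s, a, r, s_next, a_next, alpha=0.1, gamma=0.9):
    """One on-policy SARSA step; returns the updated Q(s, a)."""
    td_target = r + gamma * q[(s_next, a_next)]
    q[(s, a)] += alpha * (td_target - q[(s, a)])
    return q[(s, a)]

q_table = defaultdict(float)  # unseen (state, action) pairs start at 0.0
rng = random.Random(0)

# A "poor" student receives practice exercises, improves (reward 1.0),
# moves to the "average" state, and is next given sample questions.
first = sarsa_update(q_table, "poor", "practice exercises", 1.0,
                     "average", "sample questions", alpha=0.5, gamma=0.9)
best = choose_action(q_table, "poor", epsilon=0.0, rng=rng)
```

SARSA is on-policy: the update uses the action actually selected in the next state rather than the greedy maximum, so the learned values reflect the recommendations the system really delivers.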

| Data File | Description | Attributes |
|---|---|---|
| Courses | Contains the list of all available modules and their presentations. | |
| Assessments | Contains information about assessments in module presentations. Usually, every presentation has a number of assessments followed by the final exam. | |
| VLE | Contains information about the available materials in the VLE. Students have access to these materials online and their interactions with the materials are recorded. | |
| StudentInfo | Contains demographic information about the students together with their results. | |
| StudentRegistration | Contains information about the time when the student registered for the module presentation. For students who unregistered, the date of unregistration is also recorded. | |
| StudentAssessment | Contains the results of student assessments. If a student does not submit the assessment, no result is recorded. The final exam submissions are missing if the result of the assessments is not stored in the system. | |
| StudentVLE | Contains information about each student’s interactions with the materials in the VLE. | |

| Work | Methods Used | Disadvantages |
|---|---|---|
| Student engagement prediction by machine learning algorithms [45] | Decision tree | |
| | J48 | |
| | CART | |
| | Gradient boosting tree | |
| | Naïve Bayes | |

| Method | Accuracy (%) |
|---|---|
| Decision tree | 85.91 |
| J48 | 88.52 |
| Classification and regression tree | 82.25 |
| Gradient boosting tree | 86.43 |
| Naïve Bayes | 82.93 |
| AISAR system | 97.21 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Bagunaid, W.; Chilamkurti, N.; Veeraraghavan, P. AISAR: Artificial Intelligence-Based Student Assessment and Recommendation System for E-Learning in Big Data. Sustainability 2022, 14, 10551. https://doi.org/10.3390/su141710551
