1. Introduction

The use of supercomputers is increasing, both in science and in the economy. Advances in algorithms and software technologies at all levels are essential for further progress in solving problems with supercomputers. A supercomputer is a high-capacity, high-performance computer architecture capable of processing large amounts of data in a very short time. Such computers are usually not based primarily on the von Neumann architecture; typically, they enable the distribution and parallelization of computing processes. Although today’s desktop computers are more powerful than supercomputers developed just a decade ago, supercomputers of any period share common characteristics compared to other computers of their time: the maximum available processing speed, the maximum possible memory size, the largest physical dimensions, and the highest price.

Supercomputers are used to solve a variety of problems, including intensive calculations [1], e.g., in inventory management [2], military intelligence [3], climate forecasting [4], earthquake modeling [5], transport [6], production [7], human health and safety [8], medical applications [9], i.e., practically in every field of science or business. The role of supercomputers in all these fields is becoming increasingly important, and supercomputers are increasingly influencing future progress. Clustering of European supercomputers was initiated in 2002 by the project Distributed European Infrastructure for Supercomputing (DEISA), when 11 of the largest supercomputing centers from seven European countries were connected to a 10 Gbit/s network [10]. The next frontier in achieving performance for supercomputers is 10^18 instructions per second and is expected to be reached around 2021–2022 [11].

The idea that computing power can be provided to customers as a useful service is not new; it dates back to 1966 [12]. Nevertheless, new technologies and customer demands for high-quality services emerge daily, which leads to constantly renewed competition. In recent decades, the economy and industry have faced a large inflow of new, intensively data-oriented services, including data collection, storage, analysis, and use [13]. Search engines [14], social networks, and business intelligence [15] are just a few examples of such trends [16].

The amount of digital data in our world has grown enormously, following an exponential trend. The size of the digital universe is estimated to grow from 4.4 zettabytes in 2013 to 44 zettabytes by 2020 [17,18]. The vast amount of data being produced, commonly referred to as Big Data [19], provides great potential in the form of undiscovered structures and relationships [15]. For this potential to be exploited, new knowledge acquired, and business value gained, the produced data must be accessed, processed, analyzed, and visualized in the most efficient way. To this end, specialized architectures based on supercomputers, often referred to as high-performance computing (HPC) architectures, become a practical necessity [20,21,22]. This will certainly also mean a rapid transition from small, closed computing and storage architectures to large, open, and service-oriented infrastructures [23].

Since the introduction of early supercomputers in the 1980s, the HPC industry has been striving for continuous performance growth. This can be seen in the list of the top 500 supercomputers [24], which shows an exponential increase in computing power over the last twenty years. Traditionally, the computing power provided by HPC has mainly been used in enterprises, research institutes, and academic organizations, which benefit from the rich offerings of HPC. For example, from the arrangement of complex DNA sequences to complex simulations of meteorological phenomena, HPC has proven to be the primary basis for solving complex problems that require very high computational performance [25,26,27,28].

Science and industry face extensive challenges in coping with very large and complex data sets, all of which need to be processed. A collaborative exchange between scientists and data analysis experts is essential to provide insights and solutions for a specific challenge [29].

HPC providers appreciate cooperation on HPC-related matters with national and foreign research centers, and mainly with national enterprises. They believe that cooperation with industry is important for the future development of their organization and that they can help enterprises meet their needs through HPC. Enterprises, in turn, believe that cooperation with science or industry could foster HPC usage and the development of their organization [30]. HPC intermediaries, which provide consultancy or advisory services in the field of HPC, are important for connecting users of HPC services and HPC centers, as many companies and public research centers lack technical knowledge about HPC. In Europe, there is a strong presence of independent software vendors who are working successfully with research institutions. However, they struggle to expand their businesses because of difficulties in raising financing. Commercial banks are generally engaged in the financing of private and commercially oriented HPC centers [31].

HPC is, according to the European Commission [32], a strategic resource for Europe’s future. Raising students’ awareness of the importance of HPC topics is essential for educating a workforce that will continue work in this area. Students can be introduced to HPC topics through courses, projects, and summer internship programs. According to experiences from India [33], introducing HPC courses into the curriculum of undergraduate computer science and engineering programs can enhance the quality of education and lead to better outcomes.

Taking into account the increased requirements for performing complex data analyses in research and the application of HPC technologies to support those analyses, there is an objective need for an adequate evaluation and selection of an appropriate HPC architecture, which presents a challenge to the engineering community in the field of computing and informatics.

Supercomputer software, algorithms, and hardware are closely coupled. As architectures change, new software solutions are needed. If a selection of architectures is made without considering software and algorithms, the results of such a decision may be unsatisfactory.

Educated and qualified people are an important part of a supercomputing system. Professionals, i.e., multidisciplinary expert teams for supercomputers, need specialized knowledge of the applications they work with, as well as of various supercomputing technologies. This further implies that both forms of support are necessary for a supercomputing system to operate effectively. In addition to the long implementation and the required customizations, one of the problems in selecting an HPC architecture is the possibility of various types of risks. The biggest is financial risk, and with it business risk. This implies that, in addition to the resources allocated for the analysis when selecting an HPC architecture, resources must also be provided for the architecture itself and for the standards the architecture requires in order to work. In addition, a team of experts must be provided to support the implementation of the HPC architecture in order to achieve positive business results, particularly for specialized problems or projects and for creating space for future projects. Different risks can lead to the failure of the HPC architecture implementation, most often when the architecture cannot meet specific requirements or needs.

In order to avoid the risk of failure of implementation and application of some HPC architecture, it is necessary to clearly define all the undertakings in the entire life cycle of the HPC architecture, starting with the analysis of the architecture selection and throughout its lifetime, in order to achieve positive business results.

A typical process for selecting an architecture could be implemented in several steps. The first step is an assessment of the current state, in which it is decided whether architecture changes are needed and, if so, why and under what conditions. The next steps are to analyze plans and budgets, taking into consideration requirements and needs, i.e., assessing the needs for a new solution. Given this information and these constraints, current technologies and their capabilities are explored. The next step is deciding which solution is appropriate for the desired changes. The whole process results in decisions that represent the basis for negotiating and creating contracts for the procurement and implementation of the desired architecture.

In such a process, it is necessary to have as much information as possible before making a decision. When establishing an architecture in an organization, wrong decisions can be made if each criterion and its domain are not given the required attention, and such an approach will not produce the desired results. Failure to achieve the desired results can lead to failure in the implementation and application of the desired HPC architecture. Therefore, it is necessary to provide a multidimensional database model and analysis procedures over such a model, in order to enable decision simulations according to specific criteria.

Despite cost reductions and ease of access to high-performance computing platforms, they are still unavailable to most institutions and companies. Supercomputing systems consume a significant amount of energy, leading to high operating costs, reduced reliability, and waste of natural resources. This fact clearly indicates the sensitivity of a decision-making process regarding the selection and implementation of such an architecture.

3. Input Parameters for the Evaluation of Criteria for the Implementation of HPC in Danube Region Countries

Determining the significance of the criteria relevant to the implementation of high-performance computing (HPC) was carried out in 14 countries. Countries that participated in this research were: Austria (AT), Bosnia and Herzegovina (BA), Bulgaria (BG), Croatia (HR), Czech Republic (CZ), Germany (DE), Hungary (HU), Moldova (MD), Montenegro (ME), Romania (RO), Serbia (RS), Slovakia (SK), Slovenia (SL), and Ukraine (UA). All countries were countries of Central and South-East Europe, forming the so-called Danube region. Challenges of the Danube region are, according to Coscodaru et al. [

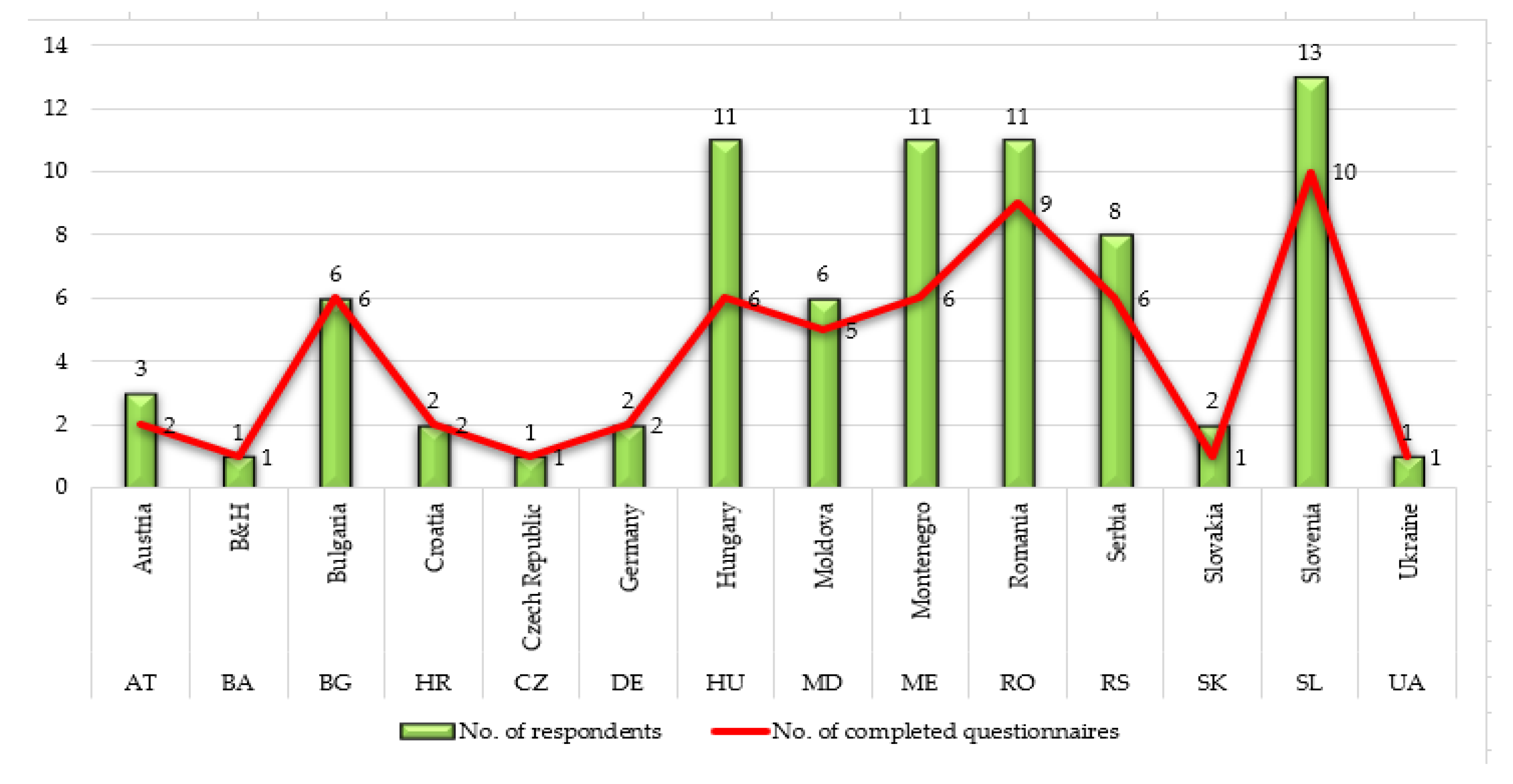

42], its backwardness and substantial disparities between its well-off westernmost parts and the rest of the region. Most HPC infrastructure and knowledge are located in the West of the Danube region. Those countries are either EU member states, pre-accession countries, or EU neighboring countries. Regional nuances were considered in this paper. Countries, as well as the number of respondents and the number of correctly completed questionnaires, are shown in

Figure 1.

The total number of questionnaires sent to the experts for evaluation was 78. Since this is a very complex and still insufficiently researched area, only 58 questionnaires were completed correctly, which represents 74.36% of the questionnaires sent. The largest number of decision-makers to whom the questionnaire was forwarded was from Slovenia (13), followed by Hungary, Montenegro, and Romania (11 each). Fewer questionnaires were forwarded to the other countries, as can be seen in Figure 1. The data for the study were obtained through the online survey for the InnoHPC [43] database, conducted in 2017, and through Lapuh’s postdoctoral research in 2018. Experts who use HPC and are employed in research institutions or by HPC providers took part in the survey. Research institutions were chosen by convenience sampling, based on the literature review, the authors’ expert analysis of the potential usage of HPC in research organizations, and an online search. The experts were identified through the organizations’ websites or through their published or presented scientific works in the field of HPC. Snowball sampling was used in parallel: participants were asked to suggest partners from other institutions dealing with HPC.

Data in this section represent input parameters in the model.

Figure 1 shows the total number of respondents (decision-makers) for each of the 14 Danube region countries. The number of respondents varied, depending on the number of experts in each country (marked with a green bar). Some decision-makers did not complete the survey properly or gave equal assessments for all criteria. Such surveys could not be used in the computations of our methodology, so they were excluded. The number of correctly completed questionnaires is presented in Figure 1 and marked with a red line. For example, in Austria, three respondents were included in the research, but one of them did not complete the questionnaire properly, so two questionnaires were entered into the model.

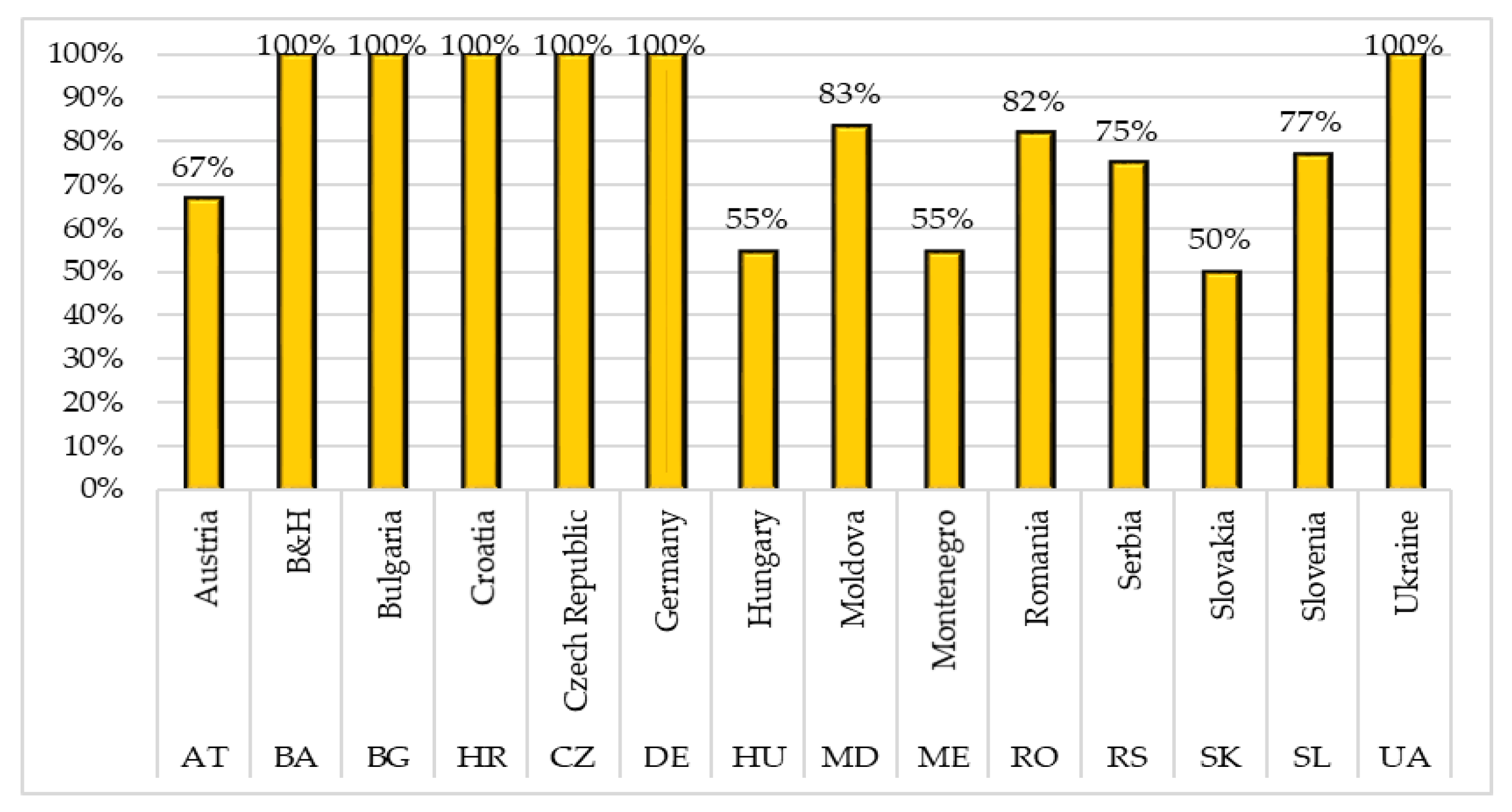

Figure 2 shows that the share of correctly completed questionnaires was 100% in the following countries: Bosnia and Herzegovina, Bulgaria, Croatia, Czech Republic, Germany, and Ukraine. Moldova, Romania, Slovenia, and Serbia had slightly lower percentages: 83%, 82%, 77%, and 75%, respectively. The remaining countries had shares between 50% and 67%: Slovakia (50%), Hungary and Montenegro (55% each), and Austria (67%).
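The percentages above follow directly from the counts of questionnaires sent and correctly completed. The following minimal sketch reproduces the two shares for which the text states both counts (all countries together, and Austria); the function name `completion_rate` is illustrative only.

```python
# Counts stated in the text: (questionnaires sent, correctly completed).
counts = {
    "All countries": (78, 58),
    "Austria": (3, 2),
}


def completion_rate(sent: int, completed: int) -> float:
    """Share of correctly completed questionnaires, in percent."""
    return 100.0 * completed / sent


rates = {name: round(completion_rate(s, c), 2) for name, (s, c) in counts.items()}
for name, rate in rates.items():
    print(f"{name}: {rate:.2f}%")
```

Rounded to whole percentages, the Austrian share corresponds to the 67% reported for Figure 2.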

The criteria, shown in Table 3, were evaluated using fuzzy PIPRECIA and fuzzy PIPRECIA-I.

This study focused on how experts employed in research institutions or by HPC providers perceive the general degree of HPC development in the country in which they work. The HPC experts were asked to evaluate the HPC situation in the individual countries with regard to: the HPC infrastructure; HPC competences; whether curricula include the acquisition of HPC skills; the existence of project calls for funding HPC usage; the general awareness of the advantages of using HPC in the country; whether and how organizations in the country cooperate in relation to HPC; and whether the country encourages HPC usage in its legislative documents or strategies.

4. Results

A detailed calculation procedure performed on two respondents from Austria is shown in Table 4, Table 5, Table 6, Table 7, and Table 8. The respondents’ attitudes, expressed using the linguistic scales (step 2) shown in Table 1 and Table 2, are presented in Table 4. It is important to note that the evaluation for fuzzy PIPRECIA was performed using Equation (7), starting from the second criterion; therefore, cell C1 in Table 4 is empty. The evaluation for the inverse fuzzy PIPRECIA method was performed using Equation (11), starting from the penultimate criterion, C10.

Aggregating the decision-makers’ assessments using the arithmetic mean yielded the values of s_j shown in Table 5. Subsequently, in the third step, the values of k_j were obtained using Equation (8).

In the fourth step, the values of q_j were obtained using Equation (9).

In the fifth step, the values of w_j were obtained using Equation (10), which requires the previously calculated sum of the values of q_j.

The values were then defuzzified, as shown in the penultimate column of Table 5.
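Steps 3 to 5 and the defuzzification can be illustrated with a short sketch. Equations (8)–(10) are not reproduced in this section, so the code assumes the commonly published form of fuzzy PIPRECIA: k_j = 2 − s_j, q_j = q_{j−1} / k_j, w_j = q_j / Σq, with k_1 = q_1 = 1 and the defuzzification rule (l + 4m + u) / 6. The input values are hypothetical and are not taken from Table 5.

```python
from typing import List, Tuple

TFN = Tuple[float, float, float]  # triangular fuzzy number (l, m, u)
ONE: TFN = (1.0, 1.0, 1.0)


def two_minus(s: TFN) -> TFN:
    """Fuzzy 2 - s: the lower and upper bounds swap under subtraction."""
    return (2 - s[2], 2 - s[1], 2 - s[0])


def fuzzy_div(a: TFN, b: TFN) -> TFN:
    """Fuzzy a / b for positive TFNs: (l_a / u_b, m_a / m_b, u_a / l_b)."""
    return (a[0] / b[2], a[1] / b[1], a[2] / b[0])


def defuzzify(t: TFN) -> float:
    """Assumed defuzzification rule: (l + 4m + u) / 6."""
    return (t[0] + 4 * t[1] + t[2]) / 6


def fuzzy_piprecia(s: List[TFN]) -> List[TFN]:
    """s holds the aggregated assessments s_j for criteria 2..n (C1 has none)."""
    k = [ONE] + [two_minus(sj) for sj in s]      # step 3: coefficients k_j
    q = [ONE]                                    # step 4: q_1 = 1
    for kj in k[1:]:
        q.append(fuzzy_div(q[-1], kj))           # q_j = q_{j-1} / k_j
    total = tuple(sum(qj[i] for qj in q) for i in range(3))
    return [fuzzy_div(qj, total) for qj in q]    # step 5: w_j = q_j / sum(q)


# Hypothetical aggregated assessments for three criteria (not the paper's data).
s_example = [(1.10, 1.15, 1.20), (0.50, 0.67, 1.00)]
weights = fuzzy_piprecia(s_example)
crisp = [defuzzify(w) for w in weights]
for j, (w, d) in enumerate(zip(weights, crisp), start=1):
    print(f"C{j}: fuzzy weight = {tuple(round(x, 3) for x in w)}, DF = {d:.3f}")
```

Because the relative weights are themselves fuzzy numbers, the defuzzified values need not sum exactly to one.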

Finally, the ranks for the obtained criterion values are shown (Table 5), which were further used to determine Spearman’s correlation coefficient and the final ranks of the criteria.

Using the methodology of inverse fuzzy PIPRECIA, the values shown in Table 6 were obtained. It is important to note that, in the same way as previously described, these values were obtained by applying Equations (11)–(14). The decision-makers’ assessments from the sixth step were previously shown in Table 4.

Step 7. Determining the coefficient k_j using Equation (12).

Step 8. Determining the fuzzy weight q_j using Equation (13).

In the ninth step, the values of w_j were obtained using Equation (14), which requires the previously calculated sum of the values of q_j.

The values were then defuzzified.
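For orientation, the inverse pass (steps 7 to 9) can be written out as follows. This is a sketch under the assumption that Equations (12)–(14) follow the commonly published form of inverse fuzzy PIPRECIA, in which the recursion starts from the last criterion, n, and runs backwards. The primes are added here only to distinguish the inverse quantities from those of the forward pass; bars denote triangular fuzzy numbers.

```latex
\begin{align*}
\bar{k}_j' &= \begin{cases} \bar{1}, & j = n \\ \bar{2} - \bar{s}_j', & j < n \end{cases} \\
\bar{q}_j' &= \begin{cases} \bar{1}, & j = n \\ \bar{q}_{j+1}' / \bar{k}_j', & j < n \end{cases} \\
\bar{w}_j' &= \frac{\bar{q}_j'}{\sum_{i=1}^{n} \bar{q}_i'}
\end{align*}
```

With n = 11 criteria, the first assessment in this pass is made for the penultimate criterion, C10, relative to C11, which is consistent with the starting point of the evaluation described for Table 4.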

Step 10. In order to determine the final weights of criteria, it was necessary to apply Equation (15). For example:

The columns labeled DF in

Table 5 and

Table 6 contain the defuzzified weights of the criteria. On the basis of these values, the final weight of the criteria was calculated using Equation (15), as shown in

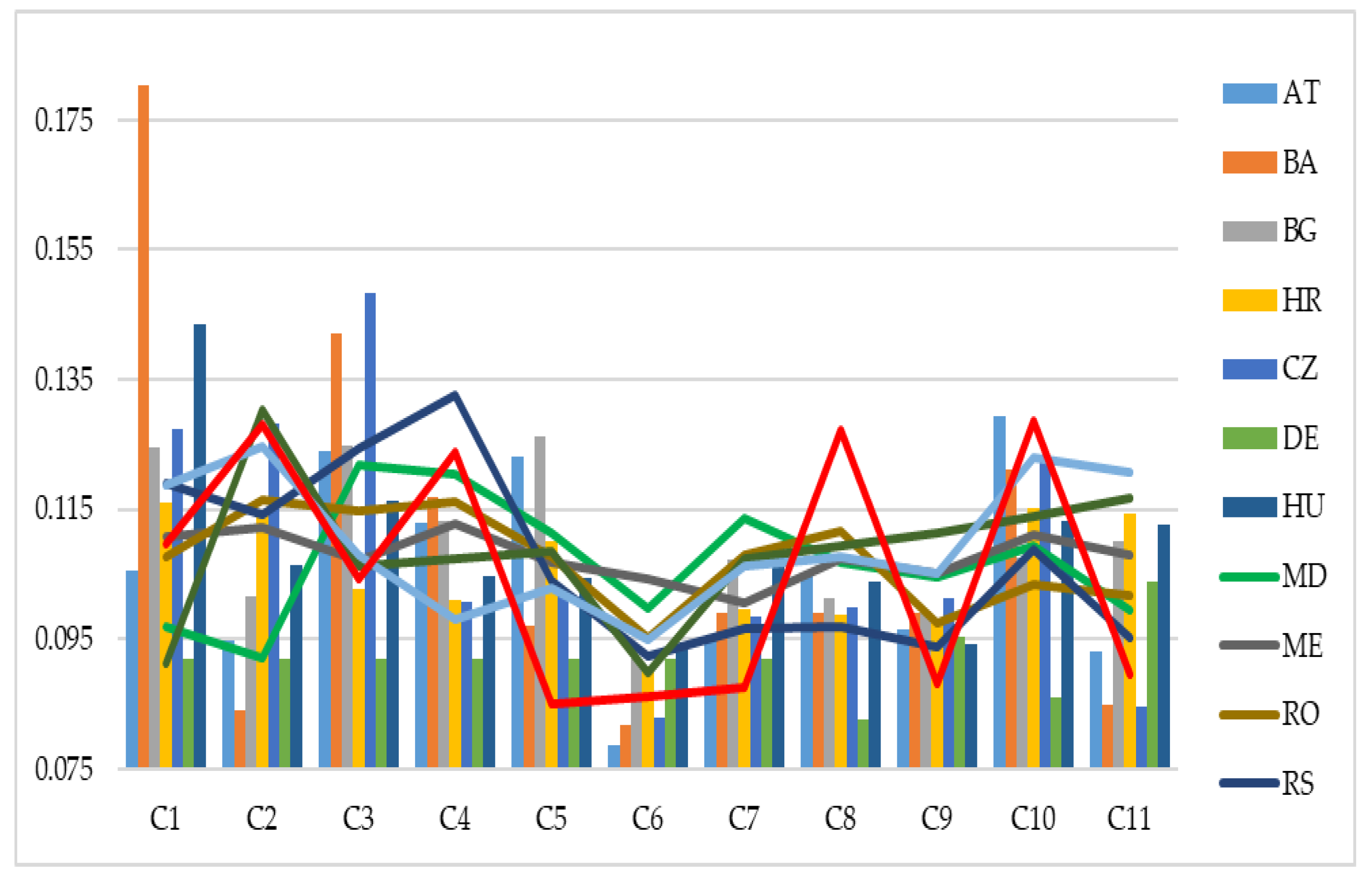

Table 7 and

Figure 3.
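Step 10 can be sketched as follows, assuming that Equation (15), which is not reproduced in this section, is the arithmetic mean of the defuzzified weights from the two passes, as in the commonly published form of the method. The helper names and the input values are hypothetical; only the middle value, 0.123, echoes the weight reported for C5, which was identical under both approaches.

```python
from typing import List


def final_weights(forward: List[float], inverse: List[float]) -> List[float]:
    """Average the defuzzified weights of the two passes (assumed Equation (15))."""
    return [(f + i) / 2 for f, i in zip(forward, inverse)]


def ranks(weights: List[float]) -> List[int]:
    """Rank 1 goes to the largest weight; ties are broken by position."""
    order = sorted(range(len(weights)), key=lambda j: -weights[j])
    result = [0] * len(weights)
    for position, j in enumerate(order, start=1):
        result[j] = position
    return result


# Hypothetical DF columns for three criteria (not the values in Table 5 and Table 6).
df_forward = [0.100, 0.123, 0.140]
df_inverse = [0.110, 0.123, 0.130]
final = final_weights(df_forward, df_inverse)
print([round(w, 3) for w in final], ranks(final))
```

A criterion with identical defuzzified weights in both passes keeps that value as its final weight, so its deviation between the two approaches is zero.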

Spearman’s correlation coefficient was 0.900, while Pearson’s correlation coefficient was 0.957, which is a very high correlation of both the ranks and values of the criteria obtained by fuzzy and inverse fuzzy PIPRECIA.
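The two coefficients can be computed as in the sketch below, which assumes rankings without ties for Spearman’s coefficient. The example ranks are hypothetical: they were chosen only so that the squared rank differences sum to 22, which for 11 criteria gives a coefficient of 0.900. They are not the ranks obtained in the study.

```python
from math import sqrt
from typing import Sequence


def spearman_rho(rank_a: Sequence[int], rank_b: Sequence[int]) -> float:
    """Spearman's coefficient for two rankings without ties."""
    n = len(rank_a)
    d2 = sum((a - b) ** 2 for a, b in zip(rank_a, rank_b))
    return 1 - 6 * d2 / (n * (n * n - 1))


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson's coefficient between two equally long series of values."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


# Hypothetical ranks of 11 criteria under the two approaches (not the paper's ranks).
ranks_forward = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11]
ranks_inverse = [3, 2, 1, 6, 5, 4, 8, 9, 7, 10, 11]
print(f"Spearman: {spearman_rho(ranks_forward, ranks_inverse):.3f}")
```

Spearman’s coefficient is applied to the ranks of the criteria, whereas Pearson’s coefficient is applied to the weight values themselves.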

As can be seen in Figure 3, the tenth criterion (degree of science-public authorities’ cooperation related to HPC) was the most significant, and it also had a minor variation in weights between the two approaches. A slightly lower value was obtained for C3 (availability of skilled human resources), which was in the second position, with a variation of 0.012. The third most significant criterion was C5 (availability of competitive public funding, e.g., direct public funding, grants, awards, baseline funding), with a value of 0.123, which indicates that it had almost the same significance as C3. Its variation or deviation was equal to zero, since it had an identical value under both approaches. In the fourth position was C4 (degree to which universities equip students with the necessary knowledge to work in HPC), with a value of 0.113 and a deviation of 0.09. The fifth most significant criterion was C1 (availability of free HPC infrastructure, e.g., having some sort of public funding), with a value of 0.105. The other criteria were less significant, with values below 0.100.

The criteria weights for the remaining 13 countries were determined in a similar way. The obtained criteria weights by country are shown in Table 8 and Figure 4.

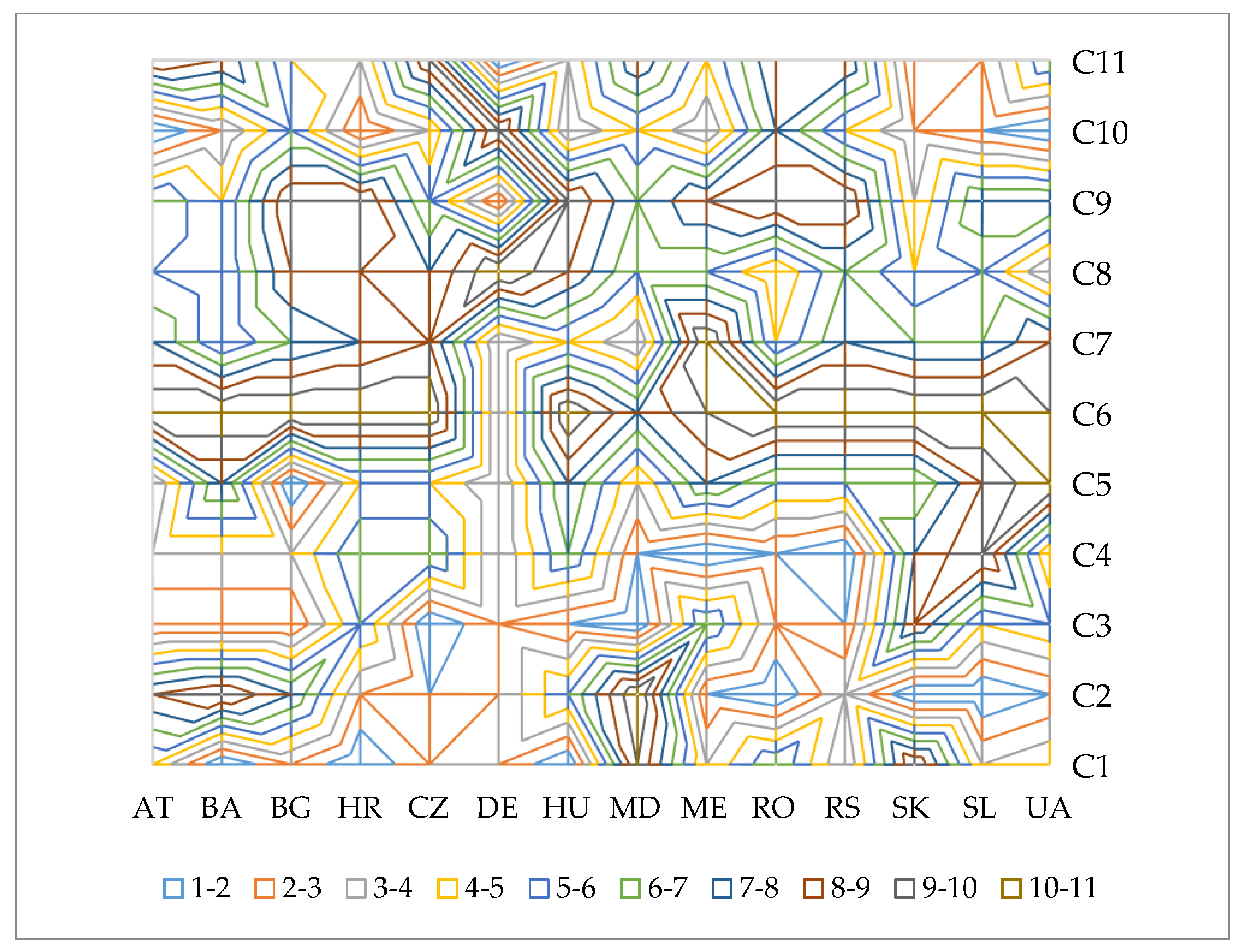

The significance and ranks of the criteria by country are shown in Table 9 and Figure 5.

As can be seen from Table 8 and Table 9, and from Figure 4 and Figure 5, the importance of the criteria differed considerably according to the attitudes of respondents from different countries.

Based on the above, there were noticeable differences in the importance of the criteria across countries. According to the Austrian respondents, four criteria, namely C3, C4, C5, and C10, were the most influential, with criterion C10 (degree of science-public authorities’ cooperation related to HPC) having the highest weight, 0.13. The weights of these criteria were also approximately the same; the difference between the weights of criteria C10 and C4 was very small, at 0.01. According to the respondents from Bosnia and Herzegovina, criteria C1, C3, C4, and C4 were identified as the most influential, with criterion C1 (availability of free HPC infrastructure, e.g., having some sort of public funding) having the highest weight, 0.18. It should be noted that a higher difference between the weight of the most influential criterion and the weight of the least influential criterion was observed in this case, at 0.01. In the case of Bulgaria, five criteria were identified as the most significant, namely C1 (availability of free HPC infrastructure, e.g., having some sort of public funding), C3 (availability of skilled human resources), C4 (degree to which universities equip students with the necessary knowledge to work in HPC), C5 (availability of competitive public funding, e.g., direct public funding, grants, awards, baseline funding), and C11 (HPC prioritization in legislative documents and strategies).

Based on the research conducted in Croatia, the five most influential criteria were C1, C2, C5, C10, and C11. Criteria C1 (availability of free HPC infrastructure, e.g., having some sort of public funding) and C10 (degree of science-public authorities’ cooperation related to HPC) had approximately the same weights, while the weights of criteria C2, C5, and C11 were slightly smaller. In the case of the Czech Republic, criteria C3 (availability of skilled human resources), C2 (availability of commercial HPC infrastructure, where you have to pay for using it), C1 (availability of free HPC infrastructure, e.g., having some sort of public funding), and C10 (degree of science-public authorities’ cooperation related to HPC) were recognized as the most significant ones. In the case of Germany, the difference between the weights of the most and least influential criteria was very low, at only 0.02, which is why all the criteria had approximately the same significance.

According to the respondents from Hungary, almost 50% of the total importance belonged to criteria C1, C3, C10, and C11, while according to the respondents from Moldova, six criteria, namely C3, C4, C5, C7, C8, and C10, were singled out as the most influential. For the respondents from Montenegro, the difference between the weights of the best and worst placed criteria was only 0.01, which is why almost all the criteria had approximately the same significance, and the most significant were criteria C4, C2, C1, C9, and C11, to which more than 60% of the weight belonged. According to the Romanian respondents, the most important criteria were C2 (availability of commercial HPC infrastructure, where you have to pay for using it), C4 (degree to which universities equip students with the necessary knowledge to work in HPC), and C3 (availability of skilled human resources).

In the case of Serbia, the most important criteria were C4, C3, C1, and C2, while in the case of Slovakia, the most important criteria were C2, C11, and C10. According to the opinion of the respondents from Slovenia, the most important criteria were C2, C1, C11, and C1, while according to the opinion of the respondents from Ukraine, the most important were criteria C10, C2, C8, and C4.

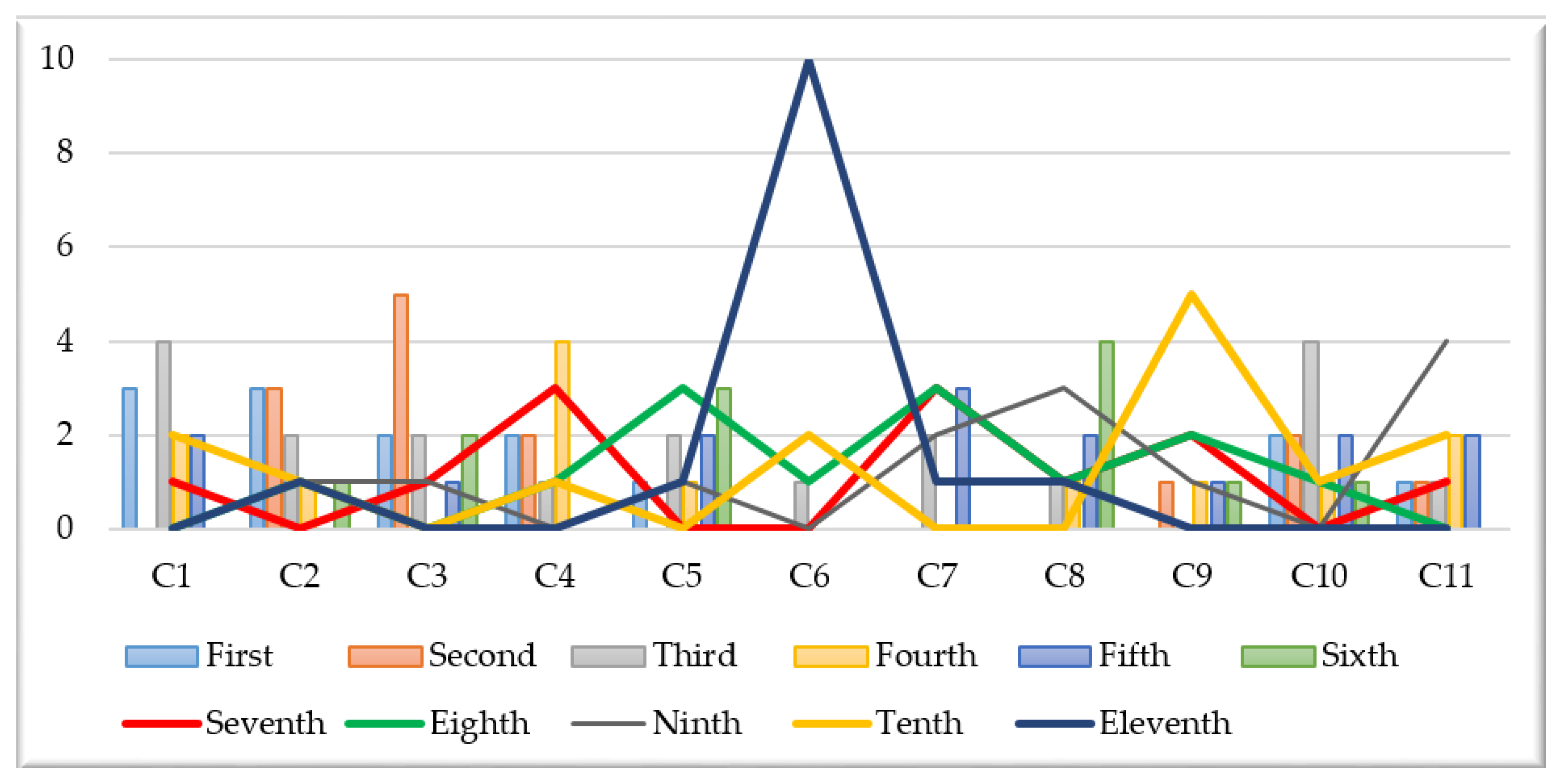

The number of occurrences of each criterion in the first to eleventh positions of the rankings is shown in Figure 6.

As Figure 6 shows, criteria C1 (availability of free HPC infrastructure, e.g., having some sort of public funding), C2 (availability of commercial HPC infrastructure, where you have to pay for using it), C3 (availability of skilled human resources), C4 (degree to which universities equip students with the necessary knowledge to work in HPC), and C10 (degree of science-public authorities’ cooperation related to HPC) were often highly ranked. Their importance was also confirmed by the high mean values of their weights.

Criteria C1 (availability of free HPC infrastructure, e.g., having some sort of public funding) and C2 (availability of commercial HPC infrastructure, where you have to pay for using it) appeared most often in the first position, three times each, while criteria C3 (availability of skilled human resources), C4 (degree to which universities equip students with the necessary knowledge to work in HPC), and C10 (degree of science-public authorities’ cooperation related to HPC) each appeared twice in the first position. Criterion C2 is also interesting because it was once identified as the least important criterion. Criterion C6 (availability of private funding for R&D related to HPC) can be regarded as the least influential, because it was identified as the least important criterion by the respondents from nine countries.