1. Introduction: From Decisional Techniques to Decision-Aid Techniques

As Ernest House [1] (p. 28) notes, all evaluation approaches assume that there is a connection between decision-making and evaluation. How this connection has been interpreted, however, has changed significantly over time. We can broadly distinguish two main views: the traditional view and a more recent one.

Traditionally, evaluation techniques were regarded as "decisional techniques" or "decisional tools". The rough idea was that, once the relevant data had been collected, the technique would by itself indicate the best decision: for instance, the preferable choice among several alternative solutions. This was the assumption behind the first evaluative analyses of an economic character. Evaluations of this kind clearly depended on the more or less implicit adoption of a rational-comprehensive model, which tended to downplay the ethical and political dimensions of decisions while stressing the role of both technique and technicians [2,3]. That approach has been widely criticized since the influential work of Lindblom [4,5]. Radaelli and Dente [6] call this early phase of evaluation research an "age of innocence".

Partly as a result of such criticism, many evaluation techniques are now considered to be not "decisional tools" but forms of "decision aid/support". Among the first methods to appear in this "new attire" were the Planning Balance Sheet Analysis proposed by Nathaniel Lichfield [7,8,9,10] and the Goals-Achievement Matrix introduced by Morris Hill [11]. These techniques were recommended from this new viewpoint precisely because they were seen as a means of overcoming the rigid rationale of certain traditional techniques.

During the subsequent decades, practically all evaluation techniques were developed, and expressly presented, as methods designed to aid decision-making (see e.g., [12,13,14,15,16,17,18,19,20,21]). Today, the terms "(decision) aid" and "(decision) support" are increasingly common in the acronyms denoting the new approaches. Examples include DSS (Decision Support Systems) [22], SDSS (Spatial Decision Support System) [23], CSDSS (stakeholder-driven Collaborative Spatial Decision Support System) [24], and MCDA (a classic acronym that some today reinterpret as Multi-Criteria Decision Aid) [25]. As Mahmassani and Krzysztofowicz [12] (p. 194) observe, the use of properly designed decision aid techniques "can help analysts and decision-makers focus their limited attention, information-processing capabilities, and resources on essential elements of the evaluation, thereby improving the efficiency and effectiveness of the decision-making process" (compare with [26]).

In this new perspective, evaluators are "advisors to decision-makers": they are information generators, processors, and analysts, as well as facilitators of the public dialog [27] (p. 297).

The problem is that the expression "decision aid" is vague and far from unequivocal, for instance when applied to urban decision problems. We believe that there is a gap in research and in the academic literature in this regard. Starting from this conviction, this article critically discusses what being a decision aid might mean for technical evaluation today.

Section 2 identifies four possible ways in which evaluation techniques can “help” decision-making. We will call them filtering, structuring, prioritizing, and involving.

Section 3 shows how certain evaluation techniques (e.g., Problem Structuring Methods, Discounted Cash Flow Analysis, Cost-Benefit Analysis, Multicriteria Decision Analysis) work with regard to these four dimensions.

Section 4 continues the discussion by underscoring how the current redefinition of the boundaries between technical and political responsibilities makes it necessary to specify what (technical) "aid" might mean.

Section 5 concludes by highlighting the main findings and possible further directions for research.

The article is mainly conceptual and is based on an extensive literature review of decision-aiding methods and techniques. The aim is to revisit the very idea of decision aid techniques, providing a theoretical framework within which this issue can be critically rediscussed.

In general terms, our discussion assumes that the focus is on evaluation techniques that support: (i) public decisions (i.e., authoritative decisions that are binding on everyone [28]); (ii) decisions made within the framework of a constitutional democracy (i.e., in an institutional setting where public decision-makers are elected and operate under general constraints and counterweights [29]); and (iii) decisions concerning urban transformations, which have huge social and environmental impacts.

As is well known, urban development is a crucial factor in environmental sustainability. In recent years, the concept of "sustainability" in relation to urban transformations has become increasingly complex. It now encompasses many different aspects: environmental, technical, physical, economic, energy-related, and social. When discussing transformations of the city and the urban region, therefore, attention must be focused on the multidimensional concept of sustainable development, taking into account the entire range of relationships that can influence the urban system and the local communities involved. Every urban transformation project is in fact the outcome of mediation among the needs of the client, institutional restrictions, financial limits, compliance with certain environmental protection parameters, and the response of society. It is therefore necessarily generated by a set of intentions, projects, and concrete actions carried out by a multiplicity of actors whose choices overlap and sometimes contradict each other.

2. Preliminary Conceptualization: Identifying Four Dimensions of Aid

In regard to urban transformations, the participants in the decision-making process often disagree on what priorities to pursue, or even on the true nature of the problem itself.

In these cases, there is usually a plurality of actors who are not subordinate to one another, enjoy a high degree of autonomy, and have their own interests and perspectives that induce them to pursue different objectives (and to identify different elements of the problem as "key factors"). The potential for conflict is further exacerbated by the high level of uncertainty surrounding the decisions [30].

Several aspects characterize decisions about urban transformations, and two in particular suggest the kind of aid that decision-makers may actually need: the temporal dimension and the cause-effect relation. As regards the former, urban transformation operations are often fragmented processes, with different time perspectives for the various operators. Each evaluative approach requires a specific metric to make the results achieved objectively measurable and comparable. Accordingly, the challenge is to coordinate different operations whose potential differs in terms of time, profitability, and values [31]. As regards the latter, the effect of a plan or project cannot be derived linearly from the original scheme: the final state achieved does not always correspond fully to the expected one [32,33,34]. Moreover, the effect of an urban regeneration operation can be something more than the physical transformation of the urban fabric: consider the narratives in newspapers and social media, the interaction with other projects, and so on. None of this can be readily measured or negotiated [35].

Forms of support for the decision-making process regarding urban issues can be grouped into different categories, depending on the type of aid that the evaluation processes can provide to the decision-maker. We suggest distinguishing four main dimensions of aid:

- Filtering information (Fi);
- Structuring the problem (Sp);
- Prioritizing options (Po);
- Involving the public (Ip).

Filtering can be defined as the process of selecting information. It depends on the availability of data and on the input required by the method that will be applied.

Structuring concerns the definition and representation of the decisional problem. Problems are never given. They are not simply out there waiting to be "solved". They are always the result of evaluations (made jointly by the expert and the client) concerning, for instance: the limits or boundaries to be considered (which aspects of the situation are to be included and which are to be excluded); which factors are the most worrying; which objectives are to be achieved; the timing; etc. These decisions, which are not strictly objective, determine the nature of the problem that ultimately needs to be addressed. Only after the problem has been structured is it possible to use formalized models [36].

Prioritizing, in general terms, is the activity of arranging items or activities in order of importance relative to each other. When evaluation techniques are applied, prioritizing can take several forms, ranging from a final ranking of alternatives to situations where the method must be applied several times, once for each alternative scenario, so that the resulting solutions can be compared and ordered.

As regards involving, it should be borne in mind that some methods are inclusive and participative right from the design and processing phases, whereas others consider participation only after the output has been produced.

We will now consider these four dimensions in greater detail.

2.1. First Dimension: Filtering

Many theories of information management and filtering distinguish among data, information, and knowledge. Information is a flow of messages, while knowledge is considered to be information embedded in people and created through a process of social interaction [37].

Ackoff [38] defines a hierarchy of data, information, knowledge, and wisdom. Wisdom stands at the top of the hierarchy, followed by knowledge, information, and data, in that order. Data are considered products of observations and interactions that have no value until they are processed and transformed into information [39] that can be used in the decision-making process. By refining information, we move on to knowledge, which enables us to control a system by assessing it and eliminating errors so that it works properly. Lastly, wisdom is related to the ability to see the long-term consequences of an act [39].

Thus, the basic assertion is that data are used to create information, information is used to create knowledge, and knowledge in turn is used to create wisdom [40]. The transition from raw data to wisdom, that is, the process whereby data are transformed into more complex phenomena, takes place through filtering, reduction, and refinement [39].

Data have no meaning in their raw state. They are products of observation, given as symbols that represent the properties of objects, events, and environments [40]. What makes data useless in themselves is that they lack context and interpretation: they arise from elementary, unprocessed observations that record events, things, activities, and clues that, however, have no specific meaning [40]. Whether they become information hinges on the understanding of the individual who looks at them from a functional standpoint [37].

Information is contained in answers to questions about "who", "what", and "when", and such answers are derived from the data [40]. What turns data into information is the fact that they are related to a context, and that the relationships between the data and this context or situation are analyzed and understood [37] in order to make decision-making easier [41].

The transformation of each element into the next level (i.e., from data to information, from information to knowledge, and from knowledge to wisdom) involves understanding relationships, models, and principles, respectively [40]. Data lack meaning and value in themselves. They provide the basis for information, but they have no elaboration or organization. Hence, they must be converted into information through classification, sorting, aggregation, and selection. The processes that then enable information to be converted into knowledge involve organization and processing, the use of cognitive frameworks, the synthesis of multiple sources of information, and the structuring of experiences [42]. The set of values, experiences, rules, and expert opinions makes it possible to achieve knowledge. Knowledge is thus processed information that increases the ability to identify effective actions.

This transformation entails collecting and organizing data, whose synthesis allows the information to be analyzed and summarized before action is taken. Through this process, the data are contextualized in a way that structures the ensuing action, which is the basis of the decision-making process [37].

A large number of digital technologies and tools have been developed for collecting and organizing data [43], including Decision Support Systems (DSS) and Planning Support Systems (PSS). As the literature has pointed out, however, such technologies are difficult to apply in social processes such as spatial planning [44,45].

2.2. Second Dimension: Structuring

Structuring is considered to be the "artistic" part of decision analysis: an imaginative and creative process aimed at translating an ill-defined problem into a set of well-defined relationships [46].

Structuring decision-making problems, a process that starts with a vague, ill-formulated problem and ends with one that is modeled and analyzable, is the most important and crucial step in decision analysis [47]. Decision-makers generally ask for decision support for five reasons: taking new opportunities into account; the need for growth and expansion; controversies between different stakeholders; confusing, unknown, and sometimes conflicting facts; and, lastly, accountability requirements, which demand that choices be made on the basis of appropriate documentation [48]. Thus, the most common initial condition consists of concerns, needs, or opportunities that may be vague (and for which it is not known how to devise a course of action that does not omit relevant aspects).

Phillips [49] suggests the concept of a "requisite decision model", that is, a model whose form and content are both sufficiently complete to solve a problem. The notion of modeling can be applied to the structure of decision analysis, which requires structural representations that are simple enough to grasp the essence of the problem while still producing solid insights [50]. Thus, structuring the problem emphasizes the formulation of statements by decision-makers about their goals, interests, and concerns, and transforms these statements into clear and transparent representations that can be formalized mathematically [50].

Problem structuring is considered to be not only a creative phase but also the most complex stage in the development of decision support systems [51,52]. Structuring primarily involves identifying problem elements, such as events, values, actors, and decision-making alternatives, and their influence relations [48]. In this way, the analyst tries to identify and take into account both the objective parts of the problem and the subjective ones (such as the values and opinions of the actors involved). After identifying a set of multiple alternatives and objectives [53], the analyst needs to identify the uncertainties about the outcomes of the possible options and the actions that should be taken to reduce these uncertainties.

In order to address all these aspects, the most important decision an analyst needs to make is therefore the choice of an appropriate analytical structure before starting the numerical modeling and analysis [50].

Problem structuring can be summarized in three steps [48]:

Identify the problem. Often, when decision-makers turn to a decision analyst, they have only a general idea of the problem. Hence, at this stage it is necessary to investigate the nature of the problem, the decision-maker's values, which stakeholders are involved in the decision, and what the purpose of the analysis is. This step can take the form of simple lists of alternatives and objectives.

Choose an analytical approach. In this second step, the analyst investigates the uncertainties and the conflicting values involved in the analysis. It is thus necessary to understand which analytical decision approaches can be used (and sometimes creatively combined) to explore in detail the alternatives for solving the problem [54].

Develop a detailed analysis structure. In this step, the focus is on developing a detailed structure for evaluating the problem. For example, hierarchies of priorities among the objectives (which characterize the different projects) will be defined, and criteria will be determined in order to assess how well the projects contribute to accomplishing the overall objective [50].

This three-step structuring process rarely follows a strict sequence.

2.3. Third Dimension: Prioritizing

The future is created on the basis of decisions, and a credible future is based on values and priorities. It is thus necessary to be able to address the variety of factors involved effectively [55]. Many factors influence decisions and the results of decision-making processes, and out of impatience we often think we can reduce these many diverse factors to only a few (i.e., the ones we consider important at a given moment). In reality, many of these factors may not be so crucial and actually have a low priority, while others may be very influential [55].

Evaluation priorities are set in many ways, ranging from a simple list to more sophisticated approaches that combine different parameters, criteria, and evaluation tools [56]. The latter is the case when multiple criteria are used to select and prioritize problem-solving alternatives.

The question that arises is this: how can priorities be assigned to different factors whose importance can vary by many orders of magnitude [57]?

The first concern when making a decision is what to include and where to include it. A widespread approach to this issue is to define a hierarchy, for two reasons: first, it gives an overview of the complex relationships in a situation; second, it helps decision-makers evaluate problems at every level of the hierarchy (thus enabling them to compare accurately homogeneous elements belonging to the same order of magnitude) [58].

If no hierarchy is used, each alternative is evaluated in terms of its performance on every criterion, with all the criteria compared one by one [59]. By introducing a hierarchical structure, the complex problem is broken down into sub-problems, so that fewer criteria need to be compared at a time. The hierarchical structure of an evaluation problem requires that criteria be arranged according to their level of abstraction: the higher elements are more abstract and general and express the main objective, while the lower ones are more concrete and specific and form the basis on which alternatives are actually compared.

The most commonly used scheme is a set of alternatives compared in pairs, according to a hierarchy of criteria and based on the decision-makers' preferences. Pairwise comparison is adopted because it is easier to express a preference between two alternatives than among more than two considered all together [60].

2.4. Fourth Dimension: Involving

An urban development project consists of several steps (e.g., preparation, planning, implementation) through which specific results can be achieved by means of proposals, plans, changes, and recommendations [61]. Urban development includes issues such as transport, land use, infrastructure, housing, economic development, and especially sustainability, which require a wide range of actors to be included in the process [61,62]. The actors that influence or are influenced by urban planning are many and highly fragmented. Hence, the purpose of involving them should be to obtain input on their concerns and priorities regarding the issues that need to be addressed and resolved.

Many multi-sectoral projects do not create a substantial role for citizens, yet in many cases solving these complex problems hinges on direct citizen participation [63].

An urban project is a multi-agent process [64]. It is supervised by strategic agents, such as public authorities and investors, who apply or influence regulations. It is implemented by operational agents, such as planners, who involve stakeholders through participatory processes. The stakeholders include not only public administrations and investors but also citizens, residents, organizations, and businesses.

Stakeholders can participate in one, several, or all phases of project development (preparation, planning, implementation, and evaluation), in many ways, such as public meetings, conferences, focus groups, or workshops [64,65].

In recent decades, a number of approaches have been used to support decision-making by involving stakeholders in the planning process, thereby combining different types of knowledge to enhance the understanding of complex issues [66]. Involving social actors enriches planning processes. Their participation can be described as the engagement of, and interaction among, actors, actions, and decisions [61]. Participation, indeed, is often associated with the concepts of democracy and justice: it is the element that makes it possible to achieve socio-cultural and economic objectives [61,67].

From this perspective, citizens can: (i) help professionals understand and frame the problems in question more accurately; (ii) help judge the ethical or material tradeoffs needed to make a decision; and (iii) provide important information for building solutions and assessing possible intervention scenarios [63].

Considering stakeholder theory in Operational Research, three issues can be emphasized: stakeholder theory can be applied as an instrumental or a moral theory; it can focus on tradeoffs or on avoiding them; and, lastly, it can focus on the decision-making organization or on engaging stakeholders [68]. Accordingly, three different approaches can be taken.

Adopting an "instrumental theory" means that stakeholders are involved in order to obtain some return for the project, whereas adopting a "moral theory" means focusing on stakeholders because doing so is considered the right thing to do. The choice between the two theories has implications for decisions: under an instrumental theory, only those stakeholders who can influence project performance are included, whereas under a moral theory a larger set of stakeholders is taken into account (including those who cannot influence the final performance but may nevertheless be affected by it).
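The practical difference between the two stances can be expressed as an inclusion rule. In this hypothetical Python sketch, each stakeholder is described by two flags (whether it can influence project performance and whether it is affected by the project); the names and flags are illustrative assumptions, and real stakeholder analysis is rarely this binary.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stakeholder:
    name: str
    can_influence: bool  # able to affect project performance
    is_affected: bool    # affected by the project's outcomes


def included(stakeholders, theory):
    """Names of the stakeholders taken into account under each stance."""
    if theory == "instrumental":
        # Only those who can influence performance
        return [s.name for s in stakeholders if s.can_influence]
    if theory == "moral":
        # Also those who are affected but have no power to influence
        return [s.name for s in stakeholders if s.can_influence or s.is_affected]
    raise ValueError(f"unknown theory: {theory!r}")


people = [
    Stakeholder("Investor", can_influence=True, is_affected=True),
    Stakeholder("Regional authority", can_influence=True, is_affected=False),
    Stakeholder("Local residents", can_influence=False, is_affected=True),
    Stakeholder("Future tenants", can_influence=False, is_affected=True),
]

print("Instrumental:", included(people, "instrumental"))
print("Moral:       ", included(people, "moral"))
```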

The second issue relates to the difference between focusing on tradeoffs and avoiding them. In the former case, alternatives are considered as given; in the latter, stakeholders are encouraged to look for new solutions that match the interests of all stakeholders. The choice between these two lines of action is important because, if tradeoffs are sought, the question of which stakeholders have priority over the others arises, whereas avoiding tradeoffs makes it possible to sidestep this issue.

Thirdly, studies on stakeholder theory show that there is a wide gap between the interests that planners and organizers consider important for stakeholders and the stakeholders' real interests [68,69]. This misidentification of interests can lead to problematic decisions when the process is implemented.

To conclude, those who emphasize the aspect of involvement require evaluators to provide "for an equal expression of the participants' points of view and to organize the confrontation of interests"; their role "is to mediate, to facilitate by proposing methods and tools as an aid to negotiation" [70] (p. 354).

3. Rediscussing Evaluative Techniques in Terms of the Four Dimensions of Aid

Evaluation takes place in all phases of decision making about urban transformations, and several techniques and tools are available, depending (i) on the phase in which the evaluation takes place (before, during, or after the completion of an urban transformation), (ii) on the accessible data and, above all, (iii) on the purpose of the evaluation.

To pursue sustainable interventions in urban settlements, monetary and non-monetary evaluations are usually used in the ex-ante phase. A monetary evaluation is characterized by an attempt to measure all effects in monetary units, whereas a non-monetary evaluation utilizes a wide variety of measurement units.

In particular, four types of evaluation analysis are often used in this context: Problem Structuring Methods; Discounted Cash Flow Analysis; Cost-Benefit Analysis; and Multicriteria Decision Analysis. In what follows, these evaluation methods are examined through the lens of the four dimensions of aid introduced above.

3.1. Problem Structuring Methods

Problem Structuring Methods (PSMs) are participative and interactive techniques that focus on structuring problems rather than solving them directly [30]. PSMs were developed to bridge the gap between traditional Operational Research and decision analysis in order to address complex, ill-structured problems better. These problems are called "wicked problems" [71], that is, complex problems for which there is no simple method of solution. Design studies received considerable attention during the 1960s and 1970s, when Horst Rittel proposed the notion of wicked problems, arguing that most designers deal with this kind of problematic situation [72,73]. Indeed, design research and practice normally tackle ill-structured, ill-formulated problems (such as transforming historic urban areas, deciding on a transportation policy, or defining policies to face climate change) [31]. In particular, "the problem for designers is to conceive and plan what does not yet exist" [73]. Designers always try to describe and control what is yet to happen by imagining the implications of choices, the possible consequences of different alternatives, and their potential links and associations [74,75]. Even if the final result and the future are unknown, it is still possible to investigate strategic approaches to managing uncertainty about future events and about the consequences of choices made in the present [76,77,78].

The assumption that led to the development of PSMs was that in real-world situations it is not always possible to find a single uncontested representation of the problem situation under consideration. To deal with such situations, PSMs were designed to represent problems while recognizing multiple perspectives [77]. A representation was needed at an early stage to cover most of the characteristics affecting these systems, using visual rather than analytical models to enable: (i) understanding and discussion of the problem, (ii) increased engagement, and (iii) identification of potential improvements.

PSMs assign low importance to Fi (Figure 1): there are no specific initial data requirements for applying these methods. In fact, data of any kind can be used, quantitative or qualitative, precise or rather vague, in any context. They can include: the costs of an intervention; the geographical location; the architectural aspects of a building (e.g., the parking ramp, the building type, the construction materials, the heating system); the timing of the intervention; energy consumption; management methods; etc. For example, the Strategic Choice Approach (SCA), the PSM most commonly used in urban and architectural fields, recognizes the presence of different kinds of uncertainty in the decision-making process and manages them with the means available to the decision-maker. More precisely, three types of uncertainty are identified, concerning the working environment, the guiding values, and related decisions.

As their name implies, these techniques assign very high importance to Sp, since structuring is their essential purpose. Several steps and specific forms of representation guide those who apply the method in illustrating, also graphically, the fundamental elements of the decision problem. Examples include the "decision graph", the "option graph", and the "decision scheme" in the SCA, and the "rich picture" in Soft Systems Methodology.

Po is assigned medium-low importance because PSMs allow comparison among alternatives, but in qualitative terms and with a certain degree of approximation. Again, in the SCA, the concepts of "comparison area", "relative assessment", and "advantage comparison" are explicitly very general and can be adapted to guide the work of the comparing mode at a variety of levels, ranging from a rough listing of "pros" and "cons" to a very detailed quantification.

PSMs assign very high importance to Ip. Involving is crucial precisely because PSMs first arose as participatory techniques enabling participants to clarify their values, converge on a potentially actionable mutual problem, and agree on commitments that will at least partially resolve it. PSMs were designed to be cognitively accessible to actors with a range of backgrounds and without specialist training, so that the developing representation can inform a participative process of problem structuring [77]. Involving occurs in all three IPO phases (Input, Processing, Output), but mainly in the first two.

3.2. Discounted Cash Flow Analysis

Discounted Cash Flow Analysis (DCFA) is a quantitative economic evaluation technique. It is a form of financial evaluation that analyzes the net present value of an investment in a project [79,80,81].

The DCFA is presented as a matrix whose rows show incoming and outgoing financial flows and whose columns show the periods of time into which the project is divided; the number of periods is set arbitrarily, according to the estimated duration of the project.
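The matrix structure can be sketched in Python as a mapping from each flow (row) to its values per period (column), with outgoing flows entered as negative numbers; summing each column gives the net cash flow of that period. All items, amounts (in thousands of euros), and the five-period horizon are hypothetical.

```python
# Hypothetical DCFA matrix (thousands of euros): one row per flow, one column per period
N_PERIODS = 5
dcfa_matrix = {
    # Outgoing flows are negative, incoming flows positive
    "Land acquisition":       [-2000,     0,     0,    0,    0],
    "Remediation":            [ -300,  -200,     0,    0,    0],
    "Construction":           [    0, -1500, -1500,    0,    0],
    "Design and supervision": [ -150,  -100,  -100,    0,    0],
    "Sales revenues":         [    0,     0,  1800, 2800, 2500],
}


def net_flows(matrix, n_periods):
    """Column sums: net cash flow (revenues minus costs) in each period."""
    if any(len(row) != n_periods for row in matrix.values()):
        raise ValueError("every row must have one value per period")
    return [sum(row[t] for row in matrix.values()) for t in range(n_periods)]


print(net_flows(dcfa_matrix, N_PERIODS))
```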

A project's profitability is evaluated by discounting cash flows. Two synthetic indicators of financial profitability are obtained: the Net Present Value, which is the sum of all discounted cash flows, and the Internal Rate of Return, which is the annualized rate of earnings on invested capital.
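In standard textbook notation (the symbols are ours, not the article's), with $R_t$ and $C_t$ the revenues and costs in period $t$, $T$ the last period, and $r$ the discount rate, the two indicators can be written as follows; the IRR is the discount rate at which the NPV equals zero.

```latex
\mathrm{NPV} = \sum_{t=0}^{T} \frac{R_t - C_t}{(1 + r)^{t}},
\qquad
\sum_{t=0}^{T} \frac{R_t - C_t}{(1 + \mathrm{IRR})^{t}} = 0
```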

Returns are calculated by considering revenues, costs, and the difference between them for each time period. The resulting net flows must be discounted to their present value because differences between revenues and costs occurring in different periods cannot be compared directly. They cannot simply be added up because of compound interest, so an appropriate discount factor is applied to reduce the future financial value of each flow to its current value.
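A minimal Python sketch of these calculations, assuming end-of-period flows and a constant discount rate: `npv` applies the discount factor 1/(1 + r)^t to each period's net flow, and `irr` searches for the rate at which the NPV equals zero. Bisection is our own choice of root-finding method; it assumes a conventional flow profile (outflows followed by inflows), for which a single IRR exists. The net flows are hypothetical.

```python
def npv(rate, net_flows):
    """Net Present Value: each period's net flow times the discount factor 1/(1+rate)**t."""
    return sum(cf / (1.0 + rate) ** t for t, cf in enumerate(net_flows))


def irr(net_flows, low=-0.99, high=1.0, tol=1e-7, max_iter=200):
    """Internal Rate of Return by bisection: the rate at which NPV equals zero.

    Assumes NPV changes sign exactly once between `low` and `high`.
    """
    f_low, f_high = npv(low, net_flows), npv(high, net_flows)
    if f_low * f_high > 0:
        raise ValueError("NPV does not change sign in the search interval")
    for _ in range(max_iter):
        mid = (low + high) / 2.0
        f_mid = npv(mid, net_flows)
        if abs(f_mid) < tol or (high - low) / 2.0 < tol:
            return mid
        # Keep the half-interval where the sign change lies
        if f_low * f_mid < 0:
            high, f_high = mid, f_mid
        else:
            low, f_low = mid, f_mid
    return (low + high) / 2.0


cash_flows = [-2450, -1800, 200, 2800, 2500]  # hypothetical net flows, thousands of euros
print(f"NPV at 5%: {npv(0.05, cash_flows):.1f}")
print(f"IRR: {irr(cash_flows):.2%}")
```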

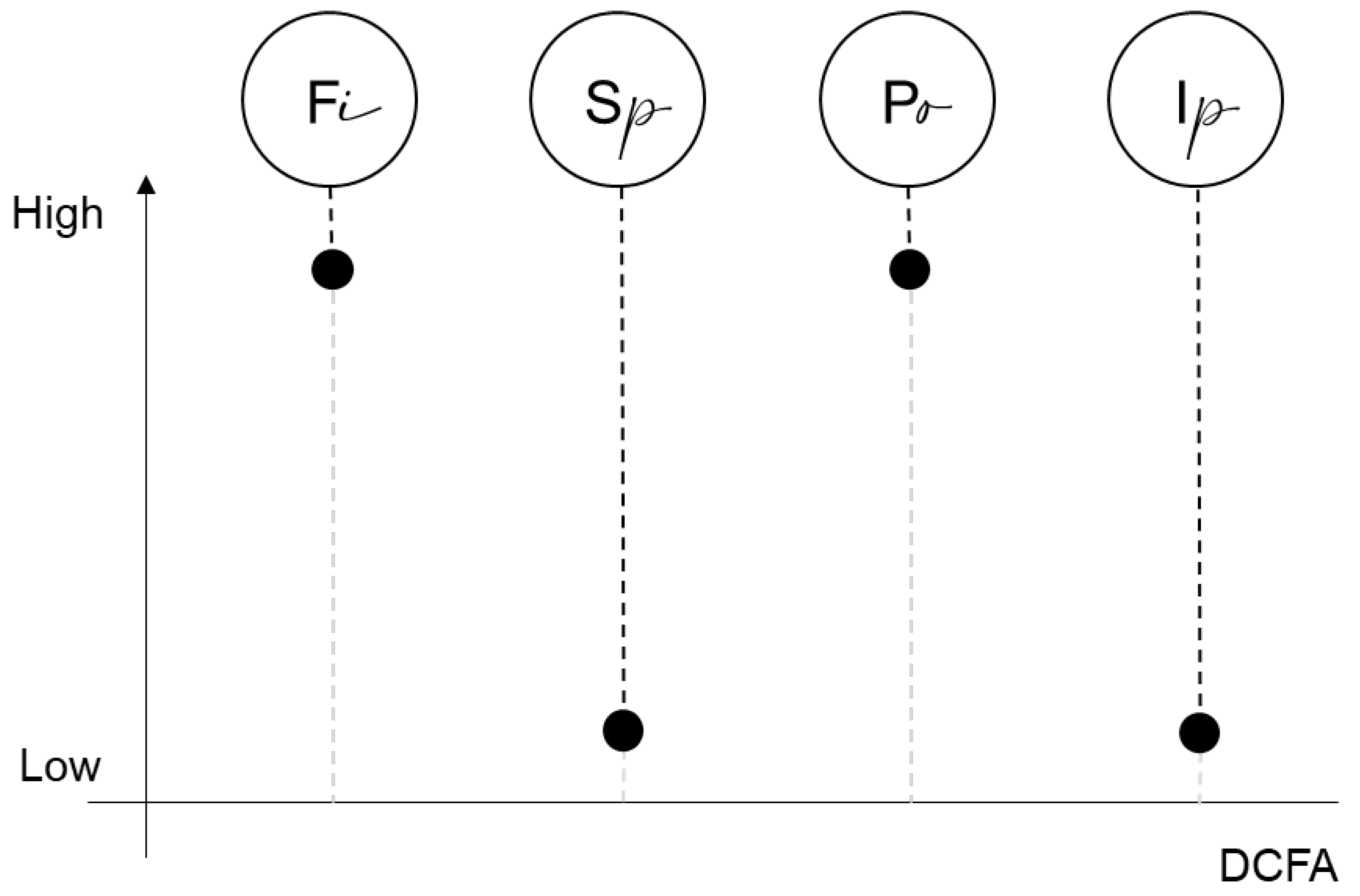

DCFA assigns high importance to Fi (Figure 2): only quantitative monetary data can be used. More specifically, in an urban transformation it is necessary to estimate the costs of acquiring the property (building or land to be converted), remediation, construction, design, supervision of works, etc. Similarly, as regards revenues, it is necessary to appraise possible sale or lease prices, sales times, and quantities sold. Missing data are very difficult to manage. For this reason, accurate market research must be performed to obtain all the data required for the analysis.

Low importance is given to Sp because the problem is already structured in terms of the costs and revenues that the project or plan will generate. The more detailed the urban transformation project is, the more accurate the cost estimate and the more reliable the market analysis will be. The only hypotheses that must be advanced concern the operation's periodization and overall duration.

DCFA assigns high importance to Po, given that the technique clearly indicates whether or not the operation is feasible (and its indicators are all monetary, referring to actual flows). For example, when comparing two alternative projects for transforming an area with the same investment, DCFA suggests choosing the project that generates the higher profitability. The only difficulty is that a separate application is needed for each scenario to be compared.

DCFA assigns low importance to Ip. Once the information has been collected, the evaluator applies the method, and the public can be involved in a participatory discussion of the results only at the output stage.

3.3. Cost-Benefit Analysis

Cost-Benefit Analysis (CBA) is conducted to find, for a given problem, the solution that will achieve the greatest overall societal welfare. CBA is a systematic and analytical process of comparing the benefits and costs of a project, often of a social nature. It is a formal technique for making informed decisions on the use of society’s scarce resources [82].

A CBA should include all the benefits and costs associated with an action, whether the goods concerned are marketed (and therefore have price tags) or lie outside normal market operations (for instance, air quality or climate change). CBA is often used to value environmental assets in scenarios of urban and territorial transformation because it can also take into account the externalities generated by the intervention. Externalities are the effects, advantageous (positive externalities) or disadvantageous (negative externalities), exerted on the production or consumption activity of one individual by the production or consumption activity of another, which are not reflected in the prices paid or received. Positive externalities include, for example, an increase in real estate values in the presence of historical-architectural or landscape resources, or local development driven by the presence of commercial activities. Negative externalities include, for example, damage to historical assets caused by overcrowding and water pollution associated with the use of pesticides in agriculture.

When it is not possible to assign a market value to a given impact, the value is estimated either directly, using stated preference methods that elicit willingness to pay or willingness to accept, or indirectly, using revealed preference methods such as hedonic pricing [83].
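Hedonic pricing, mentioned above as a revealed preference method, regresses observed property prices on property attributes; the coefficient on an amenity is read as its implicit price. The Python sketch below is a hypothetical illustration, not a procedure taken from the cited source: it fits ordinary least squares by solving the normal equations, and its synthetic prices are generated without noise from an assumed model (with a heritage-proximity premium of 30,000), so the fit recovers that premium exactly. Real studies use many more attributes, often a log-price specification, and dedicated statistical software.

```python
"""Hedonic pricing sketch: OLS of dwelling price on attributes (synthetic data)."""


def ols(X, y):
    """Least-squares coefficients b solving (X'X) b = X'y via Gauss-Jordan."""
    k = len(X[0])
    xtx = [[sum(row[i] * row[j] for row in X) for j in range(k)] for i in range(k)]
    xty = [sum(row[i] * yi for row, yi in zip(X, y)) for i in range(k)]
    a = [xtx[i] + [xty[i]] for i in range(k)]  # augmented matrix [X'X | X'y]
    for col in range(k):
        # Partial pivoting: use the row with the largest entry in this column.
        pivot = max(range(col, k), key=lambda r: abs(a[r][col]))
        if abs(a[pivot][col]) < 1e-12:
            raise ValueError("attributes are collinear; X'X is singular")
        a[col], a[pivot] = a[pivot], a[col]
        p = a[col][col]
        a[col] = [v / p for v in a[col]]
        for r in range(k):
            if r != col:
                factor = a[r][col]
                a[r] = [vr - factor * vc for vr, vc in zip(a[r], a[col])]
    return [a[i][k] for i in range(k)]


# Hypothetical sample: floor area (m2) and a dummy for proximity to a heritage site.
areas = [60, 75, 90, 110, 70, 95]
near_heritage = [0, 1, 0, 1, 1, 0]
# Synthetic prices from an ASSUMED model: 50,000 + 2,000 per m2 + 30,000 premium.
prices = [50_000 + 2_000 * a + 30_000 * h for a, h in zip(areas, near_heritage)]

X = [[1.0, a, h] for a, h in zip(areas, near_heritage)]  # constant, area, dummy
intercept, price_per_m2, heritage_premium = ols(X, prices)
print(f"implicit price of heritage proximity: {heritage_premium:,.0f}")
```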

CBA assigns medium importance to Fi (Figure 3) because both monetary and non-monetary data are considered. On the one hand, the spectrum of information that can be included in the analysis is very broad; on the other hand, CBA is more difficult to apply than DCFA, because special procedures must be followed to convert all data into monetary flows. In an urban redevelopment project, for example, the costs to be estimated include direct costs (real estate redevelopment costs), indirect costs (inconvenience to the regular users of that specific urban area), and intangible costs (inconvenience due to noise and, more generally, to the pollution generated by construction sites). The benefits comprise direct benefits (the increase in the value of the buildings after redevelopment), indirect benefits (improved safety in the requalified urban environment), and intangible benefits (a contribution to reducing social degradation).
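The cost and benefit categories listed above can be combined as in the following hedged Python sketch. It assumes that every item has already been monetized (for instance through shadow prices or willingness-to-pay estimates), uses an illustrative 3% social discount rate, and reports the net present value of social benefits and the benefit-cost ratio. All category values and names are hypothetical.

```python
"""CBA sketch: discount monetized cost and benefit streams by category."""

SOCIAL_DISCOUNT_RATE = 0.03  # illustrative assumption, not a recommended value

# Hypothetical monetized streams for periods 0..3 (same currency unit throughout).
costs = {
    "direct": [800.0, 200.0, 0.0, 0.0],     # real estate redevelopment works
    "indirect": [50.0, 50.0, 0.0, 0.0],     # inconvenience to regular users
    "intangible": [30.0, 30.0, 0.0, 0.0],   # construction-site noise and pollution
}
benefits = {
    "direct": [0.0, 0.0, 500.0, 500.0],     # increase in building values
    "indirect": [0.0, 0.0, 150.0, 150.0],   # improved safety
    "intangible": [0.0, 0.0, 100.0, 100.0], # reduced social degradation
}


def present_value(stream, rate):
    """Discount a per-period stream to period 0."""
    return sum(v / (1 + rate) ** t for t, v in enumerate(stream))


def total_present_value(streams, rate):
    """Sum the present values of all categories."""
    return sum(present_value(s, rate) for s in streams.values())


pv_costs = total_present_value(costs, SOCIAL_DISCOUNT_RATE)
pv_benefits = total_present_value(benefits, SOCIAL_DISCOUNT_RATE)
net_social_benefit = pv_benefits - pv_costs
benefit_cost_ratio = pv_benefits / pv_costs
print(f"PV costs {pv_costs:.1f}, PV benefits {pv_benefits:.1f}")
print(f"net social benefit {net_social_benefit:.1f}, B/C ratio {benefit_cost_ratio:.2f}")
```

Under these assumptions, a positive net social benefit (equivalently, a ratio above 1) favors the intervention; the result is only as sound as the monetization behind each figure.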

Sp is assigned medium importance. Although the problem appears to be well structured (as in DCFA), calculating the direct and indirect costs and benefits for which there is no active market (e.g., environmental goods or valuable architectural assets) requires defining their “shadow price” (i.e., an estimate of the probable value that reflects the real scarcity of the asset). This operation is complex and delicate.

CBA assigns high importance to Po because the output is a number that “looks” like currency. However, the weakness of this technique is that, being mainly based on monetization, it can distort the values at stake.

CBA assigns low importance to Ip. If involvement takes place at all, it occurs only after the experts have applied the technique, when the output can be used in discussing the decision tables.

3.4. Multicriteria Decision Analysis

In general, multicriteria evaluation “is primarily regarded as an aid in the process of decision-making and not necessarily as a means of coming to a singular optimal solution” [17] (p. 172). Indeed, it is generally recognized that MCDA can provide useful support in structuring decision processes involving urban transformations, because it enables several aspects of a complex situation to be considered [84,85]. MCDA helps decision-makers consider qualitative and quantitative aspects and different points of view, and integrate different options. However, since there are many kinds of MCDA, careful attention should be paid to selecting the method most suitable for the decision context analyzed [86].

Because MCDA encompasses a large family of techniques, we apply our scheme to two related methods that are widely used, particularly in evaluating sustainable urban and territorial projects: the Analytic Hierarchy Process (AHP) and the Analytic Network Process (ANP).

The AHP [58,87] is an MCDA method based on ratio scales for producing performance scores for the criteria considered and determining their importance. AHP structures the problem hierarchically: the overall goal is at the top of the hierarchy, the alternatives to be chosen among are at the bottom, and the criteria used to evaluate the alternatives are in the middle, between the overall goal and the alternatives themselves. AHP uses a system of pairwise comparisons to weight the criteria and rank the alternatives. The basic idea of the methodology is to transform an objective numerical evaluation for a criterion into a subjective measure of attractiveness.
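The pairwise-comparison step can be illustrated with Saaty’s eigenvector method, the usual way AHP derives weights from a reciprocal comparison matrix. In this minimal Python sketch (an illustration under stated assumptions, not the procedure of the cited works), the priority vector is the principal eigenvector, approximated by power iteration, and the consistency ratio CR = CI/RI, with CI = (λmax − n)/(n − 1), uses Saaty’s commonly tabulated random indices; a CR below about 0.10 is conventionally treated as acceptable. The three criteria and their judgments are hypothetical.

```python
"""AHP sketch: criteria weights from a pairwise comparison matrix."""

# Saaty's random consistency index for matrix orders 1..10.
RANDOM_INDEX = {1: 0.0, 2: 0.0, 3: 0.58, 4: 0.90, 5: 1.12,
                6: 1.24, 7: 1.32, 8: 1.41, 9: 1.45, 10: 1.49}


def mat_vec(matrix, w):
    """Matrix-vector product."""
    return [sum(a * x for a, x in zip(row, w)) for row in matrix]


def priority_vector(matrix, max_iter=1000, tol=1e-12):
    """Principal eigenvector of a positive reciprocal matrix, normalized to sum 1."""
    n = len(matrix)
    w = [1.0 / n] * n
    for _ in range(max_iter):
        v = mat_vec(matrix, w)
        total = sum(v)
        v = [x / total for x in v]
        if max(abs(a - b) for a, b in zip(v, w)) < tol:
            return v
        w = v
    return w


def consistency_ratio(matrix, w):
    """CR = CI / RI, with lambda_max estimated as the mean of (A w)_i / w_i."""
    n = len(matrix)
    if n <= 2:
        return 0.0  # 1x1 and 2x2 reciprocal matrices are always consistent
    aw = mat_vec(matrix, w)
    lambda_max = sum(aw_i / w_i for aw_i, w_i in zip(aw, w)) / n
    ci = (lambda_max - n) / (n - 1)
    return ci / RANDOM_INDEX[n]


# Hypothetical judgments on Saaty's 1-9 scale: entry [i][j] says how much more
# important criterion i is than criterion j; the matrix is reciprocal.
criteria = ["environment", "transport", "architecture"]
A = [[1.0, 3.0, 5.0],
     [1 / 3, 1.0, 3.0],
     [1 / 5, 1 / 3, 1.0]]
weights = priority_vector(A)
print(dict(zip(criteria, (round(x, 3) for x in weights))))
print(f"CR = {consistency_ratio(A, weights):.3f}")
```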

The ANP is a generalization of the AHP. Its basic structure is an influence network of clusters, with nodes contained within the clusters. Priorities are established in the same way as in the AHP, using pairwise comparisons and judgment. Many decision problems cannot be structured hierarchically because they involve interaction between elements and the dependence of higher-level elements in a hierarchy on lower-level ones. In such cases, not only does the importance of the criteria determine the importance of the alternatives, as in a hierarchy, but the importance of the alternatives themselves also determines the importance of the criteria [88].
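In the ANP, feedback between criteria and alternatives is represented in a weighted supermatrix whose columns each sum to 1, and the final priorities are read from the limit supermatrix, whose columns coincide when the network is irreducible. The Python sketch below is a hedged, minimal illustration with a hypothetical network of two criteria and two alternatives. Instead of forming matrix powers explicitly, it averages successive products of the supermatrix with a starting vector (a Cesàro average, which also copes with the cyclic case that pure criteria-alternatives feedback can produce) and then normalizes the priorities within each cluster.

```python
"""ANP sketch: limit priorities of a column-stochastic weighted supermatrix."""

# Order of elements: criteria c1, c2, then alternatives a1, a2 (hypothetical).
labels = ["c1", "c2", "a1", "a2"]
# Column j holds the priorities of all elements with respect to element j.
W = [
    [0.0, 0.0, 0.6, 0.5],  # c1: priority of criterion c1 with respect to a1, a2
    [0.0, 0.0, 0.4, 0.5],  # c2
    [0.7, 0.2, 0.0, 0.0],  # a1: priority of a1 with respect to c1, c2
    [0.3, 0.8, 0.0, 0.0],  # a2
]


def limit_priorities(W, iterations=2000):
    """Cesaro average of W^k x0 for k = 1..iterations, normalized to sum 1."""
    n = len(W)
    for j in range(n):
        if abs(sum(W[i][j] for i in range(n)) - 1.0) > 1e-9:
            raise ValueError("weighted supermatrix must be column-stochastic")
    x = [1.0 / n] * n
    avg = [0.0] * n
    for _ in range(iterations):
        x = [sum(W[i][j] * x[j] for j in range(n)) for i in range(n)]
        avg = [s + v for s, v in zip(avg, x)]
    total = sum(avg)
    return [v / total for v in avg]


def normalize_cluster(priorities, indices):
    """Rescale the priorities of one cluster so that they sum to 1."""
    s = sum(priorities[i] for i in indices)
    return [priorities[i] / s for i in indices]


p = limit_priorities(W)
criteria_weights = normalize_cluster(p, [0, 1])
alternative_scores = normalize_cluster(p, [2, 3])
print(dict(zip(labels[:2], (round(v, 3) for v in criteria_weights))))
print(dict(zip(labels[2:], (round(v, 3) for v in alternative_scores))))
```

With these hypothetical judgments, the sketch gives criteria weights of about 0.547 and 0.453 and alternative scores of about 0.474 for a1 and 0.526 for a2.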

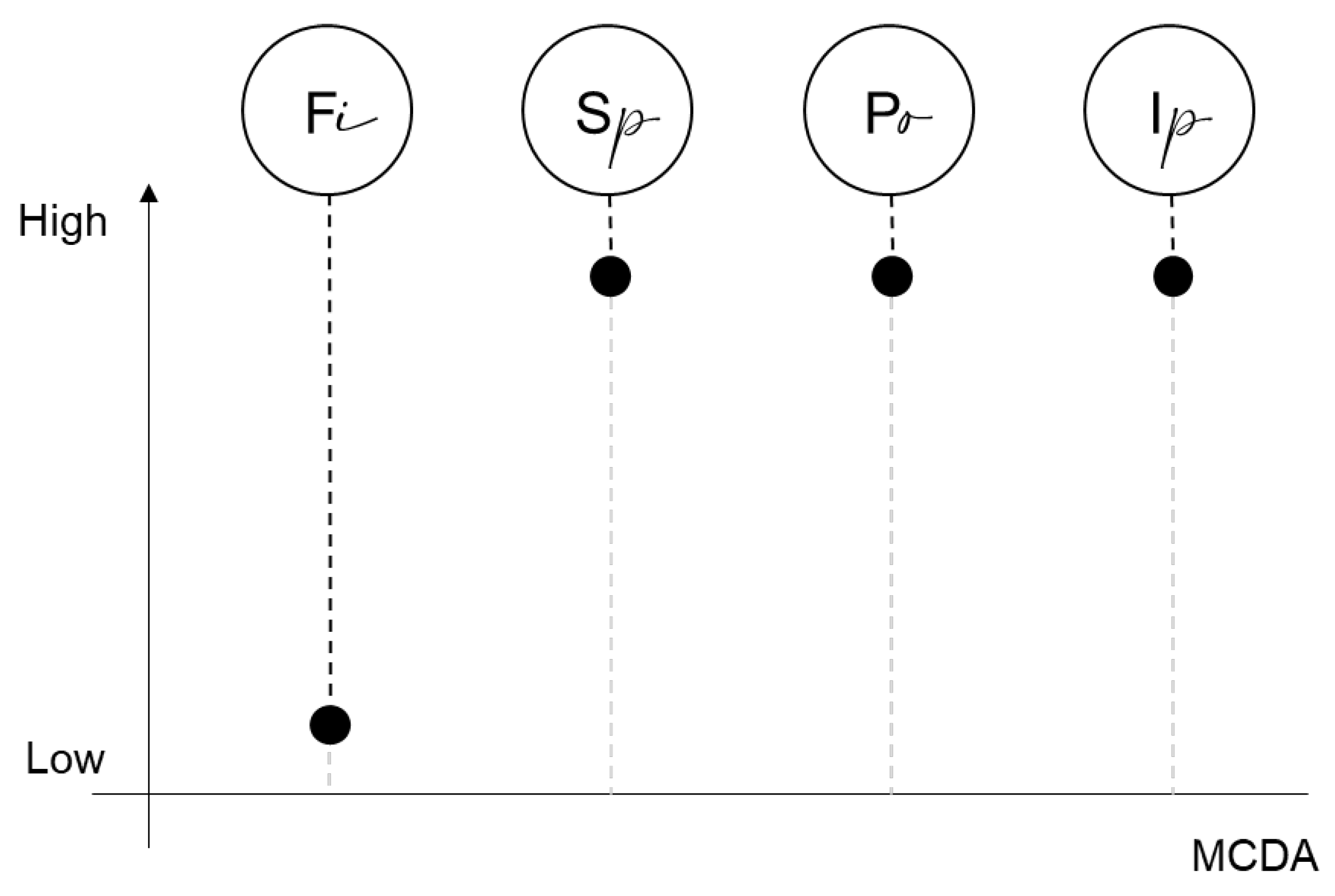

MCDA assigns low importance to Fi because both qualitative and quantitative data can be used (Figure 4). MCDA was developed to overcome exclusive reliance on monetary indicators in evaluating complex projects. In urban areas, it therefore allows each aspect to be considered in its own unit of measurement: transport aspects (e.g., the distance between underground stations, frequency of vehicles), environmental aspects (e.g., type of land reclamation required, impacts on local fauna), architectural aspects (e.g., aesthetics of a building or a bridge, choice of building type), and so on.

Sp is assigned high importance, since structuring is fundamental to the method’s success: MCDA analyzes the objectives by structuring a complex problem. The criteria for selecting alternatives are expressed as specific targets rather than as basic rules. The structure and the model underlying the articulation of objectives play an important role and, moreover, the approach is closely linked to the analysis of decision-makers’ preferences.

MCDA assigns high importance to Po because the output of both AHP and ANP is a ranking of alternative solutions to the problem. Such a ranking, obtained according to the decision-makers’ judgments, is particularly effective because it is easily understood and communicated.
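How AHP turns judgments into such a ranking can be sketched with the standard additive (distributive) synthesis: each alternative’s global priority is the sum, over criteria, of the criterion weight times the alternative’s local priority under that criterion. The weights, local priorities, and project names in this Python example are hypothetical; in practice each set would be derived from pairwise comparison matrices.

```python
"""AHP synthesis sketch: global priorities and ranking of alternatives."""

# Hypothetical criteria weights (summing to 1), e.g. from pairwise comparisons.
criteria_weights = {"transport": 0.5, "environment": 0.3, "architecture": 0.2}

# Hypothetical local priorities of each alternative under each criterion
# (each inner dictionary sums to 1).
local_priorities = {
    "transport": {"Project A": 0.5, "Project B": 0.3, "Project C": 0.2},
    "environment": {"Project A": 0.2, "Project B": 0.5, "Project C": 0.3},
    "architecture": {"Project A": 0.3, "Project B": 0.3, "Project C": 0.4},
}


def global_priorities(weights, local):
    """Weighted sum of local priorities across criteria."""
    alternatives = next(iter(local.values())).keys()
    return {
        alt: sum(weights[c] * local[c][alt] for c in weights)
        for alt in alternatives
    }


scores = global_priorities(criteria_weights, local_priorities)
ranking = sorted(scores, key=scores.get, reverse=True)
for position, alt in enumerate(ranking, start=1):
    print(f"{position}. {alt}: {scores[alt]:.2f}")
```

With these figures, Project A (0.37) narrowly precedes Project B (0.36) and Project C (0.27); a small change in the criteria weights could swap the first two positions.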

MCDA also assigns high importance to Ip because it is a participatory tool by definition. A participatory approach, involving users, planners, and decision-makers at all levels, has contributed to the spread and success of MCDA. Those involved in a decision-making process are often unable to specify, at first, all their requirements and expectations regarding the problem to be assessed; their continuous involvement affords a greater understanding of the problem itself and encourages commitment to a solution.

4. Discussion: Where to Draw the Line between Technical Aid and Political Decisions (and What Kind of Line)

After revisiting certain decision-aid evaluation techniques and their support role, it might be interesting to reverse our basic starting question by asking: in what way does the public decision-maker (in a constitutional democracy) need aid (e.g., to decide about urban issues that have an important social and environmental impact)?

Obviously, any public decision needs to be underpinned by theories and empirical evidence, such as, for instance: “If you do X (e.g., you create a certain urban expansion), Y will happen (e.g., there will be a certain environmental impact)”. But does the decision-maker need something more?

The point is that, today, all evaluation techniques either implicitly or explicitly assume that this is the case and focus on doing and offering more. Specifically, they do not focus simply on providing explanatory theories and empirical evidence about certain phenomena (urban and environmental, for instance), but rather on organizing and structuring elements and aspects of the decision-making process itself.

In other words, the aim is not simply evidence-based decision-making (an idea that has often been applied from an orthodox positivist perspective [89]), but rather appropriately framed and appropriately conducted decision-making [90].

The crucial question is therefore: where can we draw the line between the decisional responsibility (and competence) assigned to public decision-makers and the responsibility (and competence) of technicians in assisting and supporting them? Specifically, how can policy and science/technique be successfully and effectively combined today? Unfortunately, the tragic events of the COVID-19 pandemic have shown that none of this can be taken for granted [91].

This article does not claim to provide a direct answer to this crucial background question; rather, it wishes to suggest that, today, such an answer would require a critical debate and clearer understanding of what “aid” (to decision-making) can actually mean.

As the article has sought to show, the point is not so much the type of relationship between “evaluation” and “decision” (as is often repeated and overgeneralized in the literature on urban and environmental issues), but between “technical evaluation” and “political evaluation (and decision)”. In other words, some type of evaluation is always involved, whether in the technical sphere or in the political one.

Hence, the issue is not to distinguish between the completely neutral role of “technicians” and the intrinsically non-neutral role of “politicians” (for a critical assessment of this traditional view, see [92]). Instead, we need to distinguish between the roles and responsibilities of “non-elected experts” and those of “elected decision-makers” [93]. We also need a better understanding of how the former can actually assist and support the latter without replacing them, which they obviously cannot do, and without giving them excuses to shirk their unavoidable decisional responsibilities [94].

In the end, evaluation cannot substitute for the decision-making process. Consequently, the real critical concern is the actual impact of evaluation on the decision process [6] (p. 64).

5. Conclusions

There is a recurrent question in the field of evaluation techniques that is also crucial when addressing urban sustainability issues: is the role of analysts/evaluators (i) to make the decision themselves by recommending a specific course of action, or (ii) rather to present the problem and its implications? [95] (p. 109).

The first option is the one traditionally adopted by evaluation techniques regarded as “decisional techniques”. As indicated in the Introduction (Section 1), this position is open to criticism, although from this perspective the role of evaluation techniques is easy to specify.

The second option, adopted more recently (and critically presented and discussed in this article, in Sections 2, 3 and 4), holds that evaluation techniques are instead “decision-aid techniques”. This recent, substantial change in the meaning of evaluation techniques has made their role less clear, or at least less intuitively understandable. While certain evaluation techniques have become particularly sophisticated today, their actual role as decision aids has not been explored in detail.

Recognizing this gap, this article has attempted to (i) identify and distinguish four possible dimensions of “aid” (i.e., filtering, structuring, prioritizing, involving), and in this light to (ii) critically re-examine some evaluation techniques (i.e., PSMs, DCFA, CBA, MCDA). As we have seen, different evaluation techniques assign different importance to the various dimensions of aid identified. Hence, the choice of one technique over another depends on the type of aid one expects and can obtain. This is a crucial question in political decisions regarding urban sustainability issues, where the traditional linear “take-make-dispose” economic model, which assumes access to large quantities of resources and energy, is no longer suitable. The most recent debate on the sustainability of the urban environment focuses on the possibility of designing cities on the basis of the circular economy concept. In a complex strategy where the different “linear economies” of the various actors involved have to be combined, it seems essential also to revisit the role of decision support instruments in this light.

This article is conceptual and therefore has the typical limitations of mainly theoretical inquiries. We hope it is nevertheless helpful in critically revisiting a crucial issue, namely what kind of aid evaluation techniques can provide, which also has important practical implications (for instance, in addressing urban environmental problems). Future research could empirically assess the concrete support provided by specific applications of the evaluation techniques discussed. Clearly, the techniques considered here are merely a sample, and the range could be extended to include other techniques. Research could also explore the possibility of revised or new decision-aid techniques.