Enhancing Rural Innovation and Sustainability Through Impact Assessment: A Review of Methods and Tools

Abstract

1. Introduction

2. Developmental Evaluation—A Theoretical Background

3. Materials and Methods

3.1. Step 1: Systematic Literature Search

3.1.1. Data Collection

3.1.2. Analytical Framework

3.2. Step 2: Selection of 18 Articles

4. Results

4.1. RQ 1. Which Methodological Approaches and Impact Areas Have Recently Been Studied in the Literature and Which of Them Are Development-Oriented and Seek Adaptation by Integrating Feedback from Stakeholders?

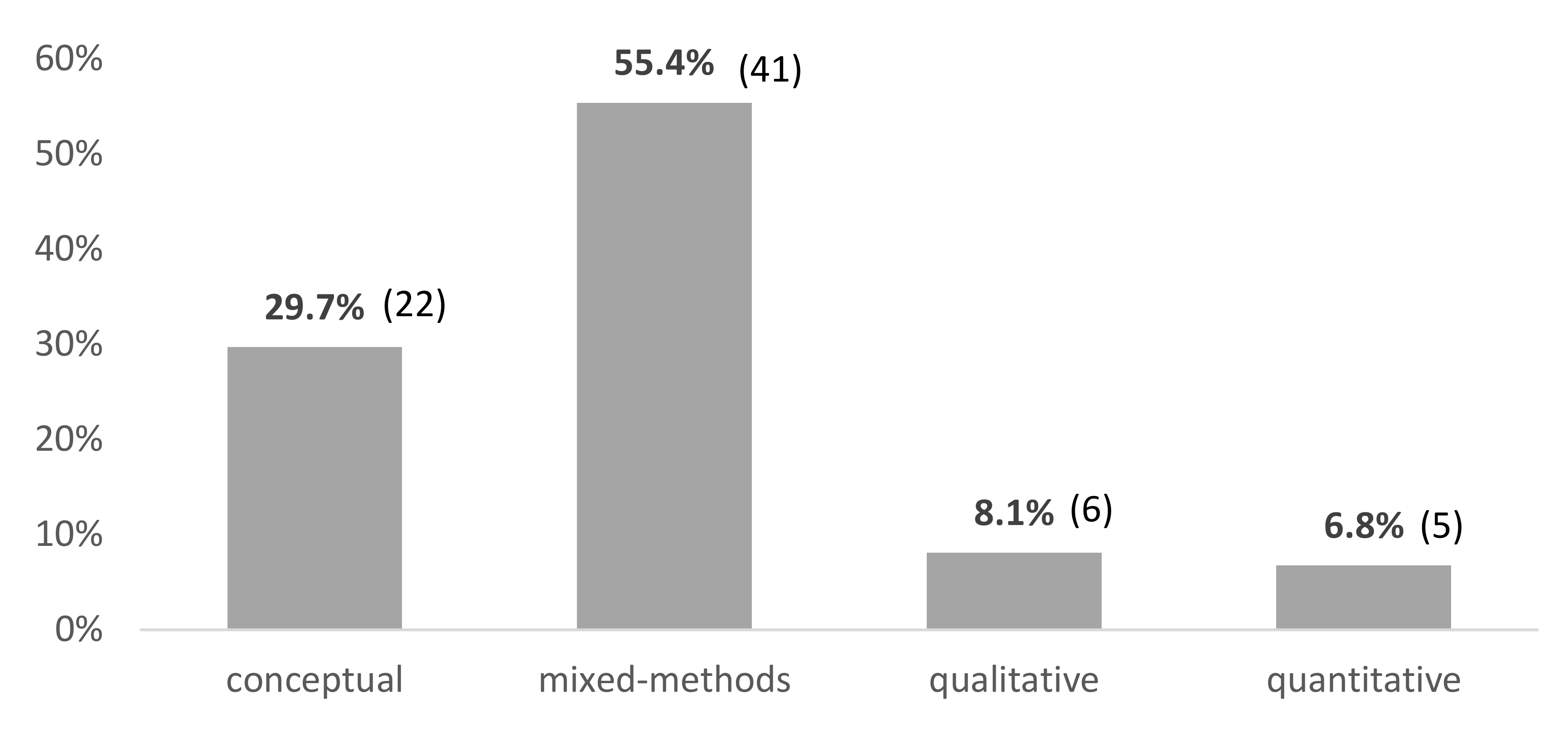

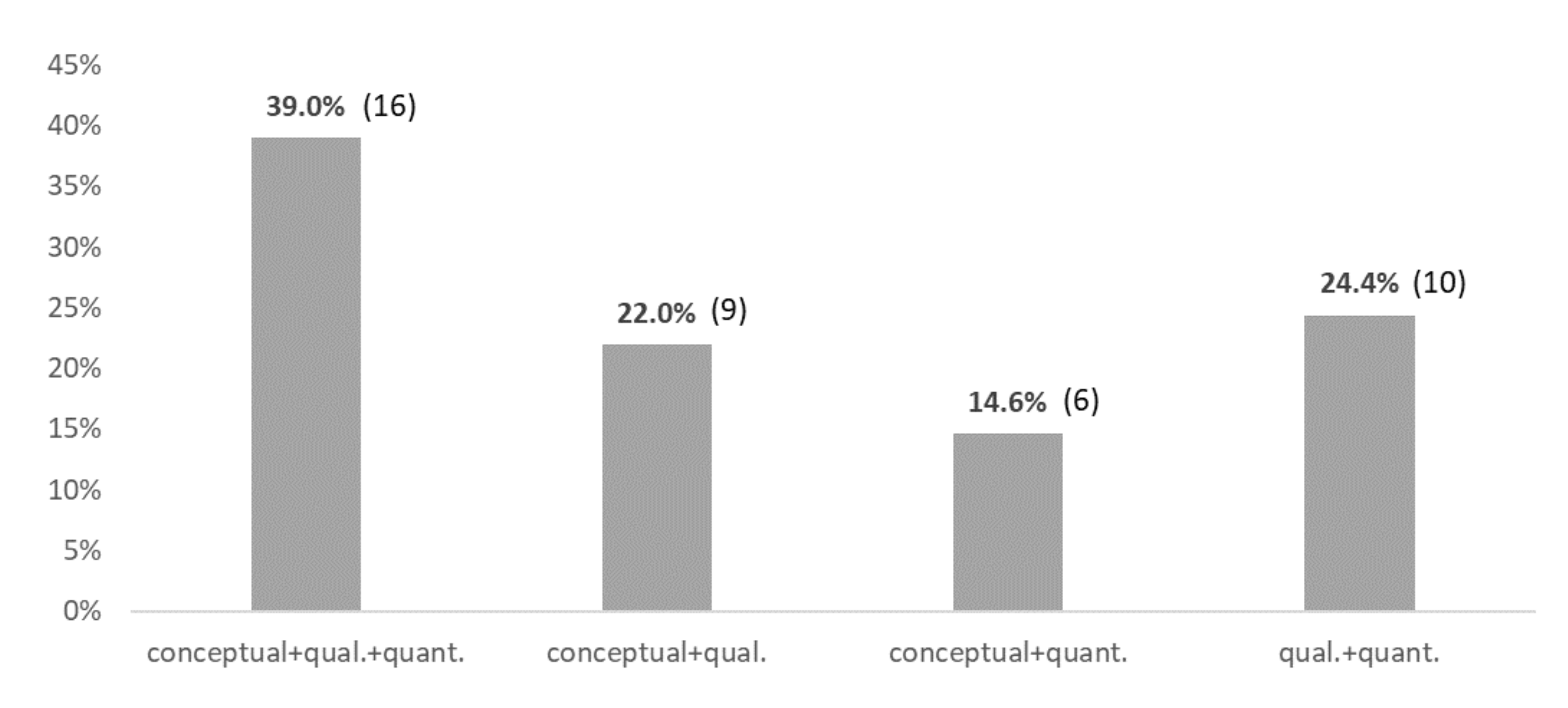

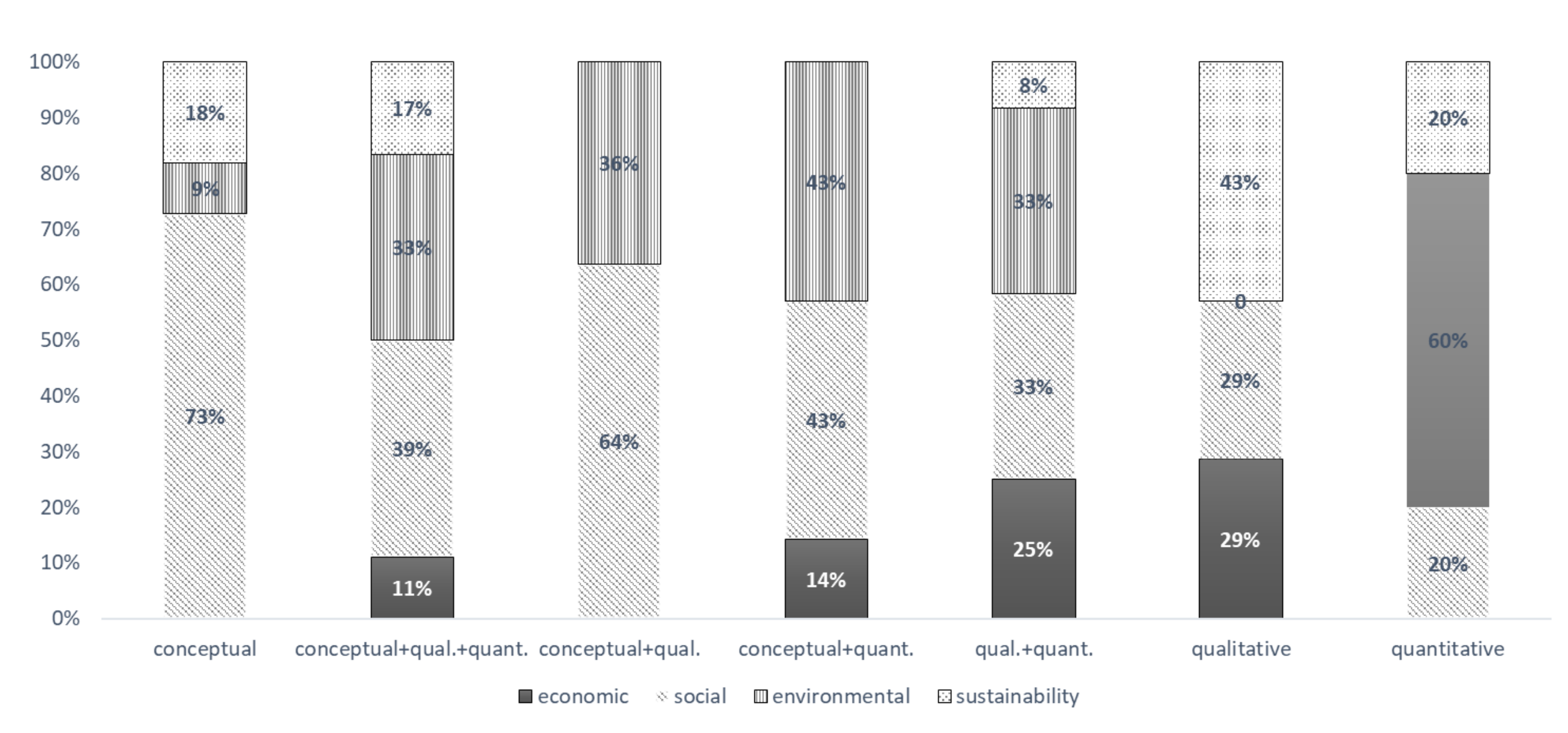

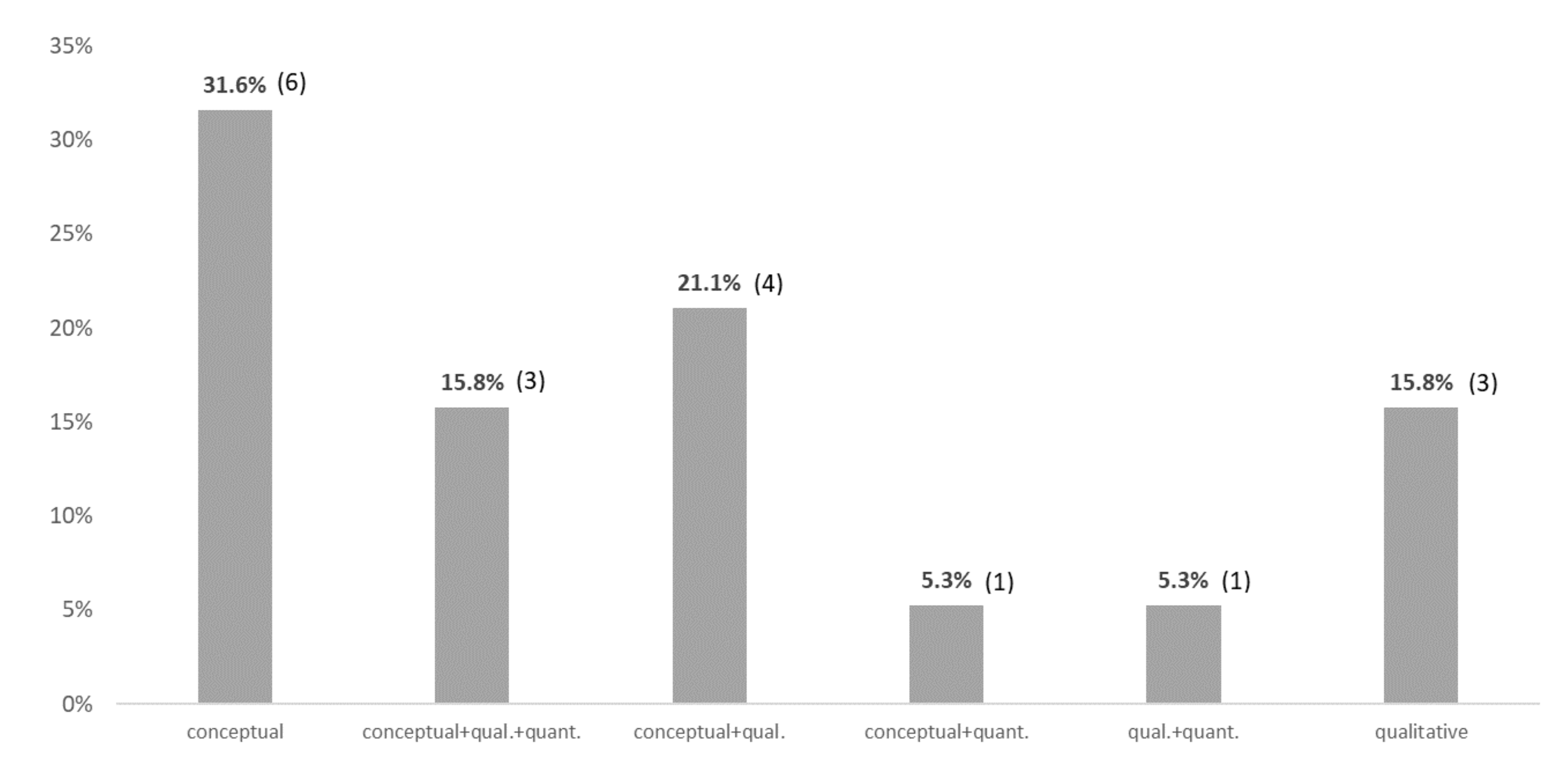

4.1.1. Methodological Approach

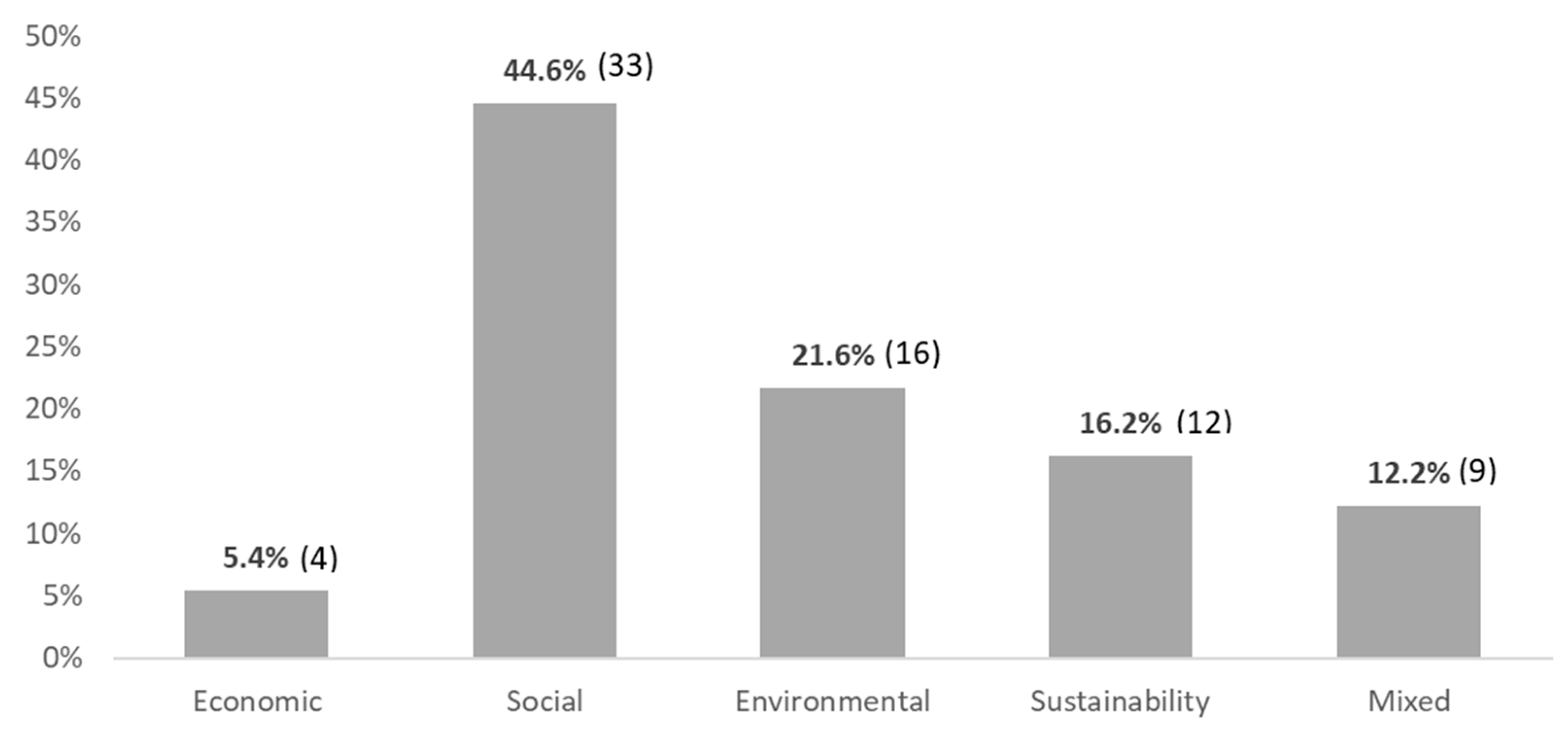

4.1.2. Impact Area

4.2. RQ 2. What Challenges Are Faced in Innovation Processes in the Rural Area?

4.2.1. Identifying a Problem and Being Committed to Change

4.2.2. Co-Creating and Being Context-Dependent

4.2.3. Involving Stakeholders Throughout the Process Through Interaction

4.3. RQ 3. Which of These Challenges Are Supported by Impact Assessment?

4.3.1. Responding to the Needs of Stakeholders and Their Context

4.3.2. Thinking in Terms of Complexity

4.4. RQ 4. What Are the Current Challenges of Impact Assessment?

4.4.1. Co-Creation and Design in Order to Respond to the Context

4.4.2. Developmental Process

4.4.3. Timely Feedback

4.5. RQ 5. What Tools and Methods Are There to Solve the Challenges of Innovation Impact Assessment and Which of the Challenges Remain to Be Solved?

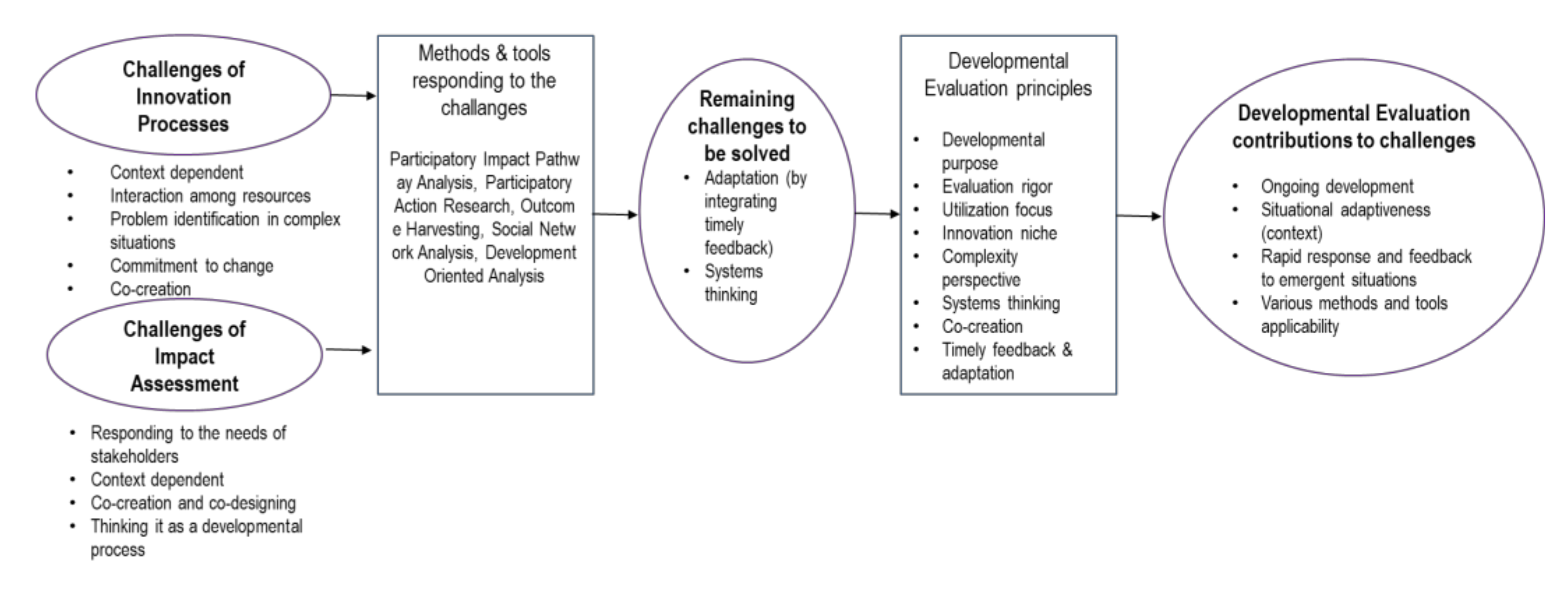

5. Discussion

5.1. Developmental Evaluation Principles

5.2. RQ 6. How Can the Evaluation Approach of Developmental Evaluation Improve the Tools and Methods to Respond to Innovation?

6. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

1. Lyle, G. Understanding the nested, multi-scale, spatial and hierarchical nature of future climate change adaptation decision making in agricultural regions: A narrative literature review. J. Rural Stud. 2015, 37, 38–49.
2. Henzler, K.; Maier, S.D.; Jager, M.; Horn, R. SDG-Based Sustainability Assessment Methodology for Innovations in the Field of Urban Surfaces. Sustainability 2020, 12, 4466.
3. Singh, C.; Dorward, P.; Osbahr, H. Developing a holistic approach to the analysis of farmer decision-making: Implications for adaptation policy and practice in developing countries. Land Use Policy 2016, 59, 329–343.
4. Bopp, C.; Engler, A.; Poortvliet, P.M.; Jara-Rojas, R. The role of farmers' intrinsic motivation in the effectiveness of policy incentives to promote sustainable agricultural practices. J. Environ. Manag. 2019, 244, 320–327.
5. Janker, J.; Mann, S.; Rist, S. Social sustainability in agriculture—A system-based framework. J. Rural Stud. 2019, 65, 32–42.
6. Lora, A.V.; Nel-lo Andreu, M.G. Alternative Metrics for Assessing the Social Impact of Tourism Research. Sustainability 2020, 12, 4299.
7. Wang, J.; Maier, S.D.; Horn, R.; Holländer, R.; Aschemann, R. Development of an Ex-Ante Sustainability Assessment Methodology for Municipal Solid Waste Management Innovations. Sustainability 2018, 10, 3208.
8. Vanclay, F. The Potential Application of Qualitative Evaluation Methods in European Regional Development: Reflections on the Use of Performance Story Reporting in Australian Natural Resource Management. Reg. Stud. 2015, 49, 1326–1339.
9. Paz-Ybarnegaray, R.; Douthwaite, B. Outcome Evidencing: A Method for Enabling and Evaluating Program Intervention in Complex Systems. Am. J. Eval. 2017, 38, 275–293.
10. Patton, M.Q.; McKegg, K.; Wehipeihana, N. Developmental Evaluation Exemplars: Principles in Practice; Guilford Press: New York, NY, USA, 2016; pp. 234–251.
11. Naldi, L.; Nilsson, P.; Westlund, H.; Wixe, S. What is smart rural development? J. Rural Stud. 2015, 40, 90–101.
12. Organization for Economic Cooperation and Development (OECD). Agricultural Innovation Systems: A Framework for Analysing the Role of the Government; OECD Publishing: Paris, France, 2013.
13. Preskill, H.; Gopal, S. Evaluating Complexity. Propositions for Improving Practice. 2014. Available online: http://www.fsg.org/publications/evaluating-complexity (accessed on 29 January 2019).
14. Vilys, M.; Jakubavicius, A.; Zemaitis, E. Public Innovation Support Index for Impact Assessment in the European Economic Area. Entrep. Bus. Econ. Rev. 2015, 3, 123–138.
15. Barrueto, A.K.; Merz, J.; Kohler, T.; Hammer, T. What prompts agricultural innovation in rural Nepal: A Study Using the Example of Macadamia and Walnut Trees as Novel Cash Crops. Agriculture 2018, 8, 21.
16. Hall, A.; Sulaiman, V.R.; Clark, N.; Yogananda, B. From measuring impact to learning institutional lessons: An innovation systems perspective on improving the management of international agricultural research. Agric. Syst. 2003, 78, 213–241.
17. Akpoko, J.G.; Kudi, T.M. Impact assessment of university-based rural youths Agricultural Extension Out-Reach Program in selected villages of Kaduna-State, Nigeria. J. Appl. Sci. 2007, 7, 3292–3296.
18. Del Rio, M.; Hargrove, W.L.; Tomaka, J.; Korc, M. Transportation Matters: A Health Impact Assessment in Rural New Mexico. Int. J. Environ. Res. Public Health 2017, 14, 629.
19. Diwakar, P.G.; Ranganath, B.K.; Gowrisankar, D.; Jayaraman, V. Empowering the rural poor through EO products and services—An impact assessment. Acta Astronaut. 2008, 63, 1–4.
20. Michelsen, O.; Cherubini, F.; Stromman, A.H. Impact Assessment of Biodiversity and Carbon Pools from Land Use and Land Use Changes in Life Cycle Assessment, Exemplified with Forestry Operations in Norway. J. Ind. Ecol. 2012, 16, 231–242.
21. Mutuc, M.E.M.; Rejesus, R.M.; Pan, S.; Yorobe, J.M., Jr. Impact Assessment of Bt Corn Adoption in the Philippines. J. Agric. Appl. Econ. 2012, 44, 117–135.
22. Dargan, L.; Shucksmith, M. LEADER and innovation. Sociol. Rural. 2008, 48, 274–291.
23. Dax, T.; Strahl, W.; Kirwan, J.; Maye, D. The Leader programme 2007–2013: Enabling or disabling social innovation and neo-endogenous development? Insights from Austria and Ireland. Eur. Urban Reg. Stud. 2016, 23, 56–68.
24. Dax, T.; Oedl-Wieser, T. Rural innovation activities as a means for changing development perspectives—An assessment of more than two decades of promoting LEADER initiatives across the European Union. Stud. Agric. Econ. 2016, 118, 30–37.
25. Bonfiglio, A.; Camaioni, B.; Coderoni, S.; Esposti, R.; Pagliacci, F.; Sotte, F. Are rural regions prioritizing knowledge transfer and innovation? Evidence from Rural Development Policy expenditure across the EU space. J. Rural Stud. 2017, 53, 78–87.
26. Turner, J.A.; Klerkx, L.; White, T.; Nelson, T.; Everett-Hincks, J.; Mackay, A.; Botha, N. Unpacking systemic innovation capacity as strategic ambidexterity: How projects dynamically configure capabilities for agricultural innovation. Land Use Policy 2017, 68, 503–523.
27. Giannakis, E.; Bruggeman, A. The highly variable economic performance of European agriculture. Land Use Policy 2015, 45, 26–35.
28. Cofré-Bravo, G.; Klerkx, L.; Engler, A. Combinations of bonding, bridging, and linking social capital for farm innovation: How farmers configure different support networks. J. Rural Stud. 2019, 69, 53–64.
29. Eichler, G.M.; Schwarz, E.J. What Sustainable Development Goals do Social Innovations Address? A Systematic Review and Content Analysis of Social Innovation Literature. Sustainability 2019, 11, 522.
30. Barrientos-Fuentes, J.C.; Berg, E. Impact assessment of agricultural innovations: A review. Agron. Colomb. 2013, 31, 120–130.
31. Mackay, R.; Horton, D. Expanding the use of impact assessment and evaluation in agricultural research and development. Agric. Syst. 2003, 78, 143–165.
32. Moschitz, H.; Home, R. The challenges of innovation for sustainable agriculture and rural development: Integrating local actions into European policies with the Reflective Learning Methodology. Action Res. 2014, 12, 392–409.
33. Patton, M.Q. Developmental Evaluation: Applying Complexity Concepts to Enhance Innovation and Use; Guilford Press: New York, NY, USA, 2010.
34. Scriven, M. The methodology of evaluation. In Perspectives of Curriculum Evaluation; Tyler, R.W., Gagne, R.M., Scriven, M., Eds.; Rand McNally: Chicago, IL, USA, 1967; pp. 39–83.
35. Patton, M.Q. Utilization-Focused Evaluation; SAGE Publishing: Saint Paul, MN, USA, 2008.
36. United States Agency for International Development (USAID). Evaluation: Learning from Experience. USAID Evaluation Policy. 2011. Available online: www.usaid.gov/sites/default/files/documents/1868/USAIDEvaluationPolicy.pdf (accessed on 2 February 2020).
37. Allen, S.; Hunsicker, D.; Kjaer, M.; Krimmel, R.; Plotkin, G.; Skeith, K. Adapted Developmental Evaluation with USAID's People to People Reconciliation Fund Program. In Developmental Evaluation Exemplars: Principles in Practice; Patton, M., McKegg, K., Wehipeihana, N., Eds.; Guilford Press: New York, NY, USA, 2015; pp. 216–233.
38. Van Assche, K.; Beunen, R.; Holm, J.; Lo, M. Social learning and innovation. Ice fishing communities on Lake Mille Lacs. Land Use Policy 2013, 34, 233–242.
39. Gopal, S.; Mack, K.; Kutzli, C. Using Developmental Evaluation to Support College Access and Success. Challenge Scholars. In Developmental Evaluation Exemplars: Principles in Practice; Guilford Publications: New York, NY, USA, 2015.
40. Shea, J.; Taylor, T. Using developmental evaluation as a system of organizational learning. Eval. Program Plan. 2017, 65, 83–93.
41. Imperiale, A.J.; Vanclay, F. Using Social Impact Assessment to Strengthen Community Resilience in Sustainable Rural Development in Mountain Areas. Mt. Res. Dev. 2016, 36, 431–442.
42. Albrecht, T.R.; Crootof, A.; Scott, C.A. The Water-Energy-Food Nexus: A systematic review of methods for nexus assessment. Environ. Res. Lett. 2018, 13, 4.
43. European Commission. Better Regulation “Toolbox”; European Commission: Brussels, Belgium, 2017.
44. Cong, R.G.; Stefaniak, I.; Madsen, B.; Dalgaard, T.; Jensen, J.D.; Nainggolan, D.; Termansen, M. Where to implement local biotech innovations? A framework for multi-scale socio-economic and environmental impact assessment of Green Bio-Refineries. Land Use Policy 2017, 68, 141–151.
45. Quiedeville, S.; Barjolle, D.; Mouret, J.C.; Stolze, M. Ex-post evaluation of the impacts of the science-based research and innovation program: A new method applied in the case of farmers’ transition to organic production in the Camargue. J. Innov. Econ. Manag. 2017, 22, 145–170.
46. Spaapen, J.; van Drooge, L. Introducing ‘productive interactions’ in social impact assessment. Res. Eval. 2011, 20, 211–218.
47. De Francesco, F.; Radaelli, C.M.; Troeger, V.E. Implementing regulatory innovations in Europe: The case of impact assessment. J. Eur. Public Policy 2012, 19, 491–511.
48. Tecco, N.; Baudino, C.; Girgenti, V.; Peano, C. Innovation strategies in a fruit growers association impacts assessment by using combined LCA and s-LCA methodologies. Sci. Total Environ. 2016, 568, 253–262.
49. Graef, F.; Hernandez, L.E.A.; König, H.J.; Uckert, G.; Mnimbo, M.T. Systemising gender integration with rural stakeholders’ sustainability impact assessments: A case study with three low-input upgrading strategies. Environ. Impact Assess. Rev. 2018, 68, 81–89.
50. Pachón-Ariza, F.A.; Bokelmann, W.; Ramírez, C. Participatory Impact Assessment of Public Policies on Rural Development in Colombia and Mexico. Cuad. Desarro. Rural 2016, 13, 143–182.
51. Kumar, R.; Sekar, I.; Punera, B.; Yogi, V.; Bharadwaj, S. Impact Assessment of Decentralized Rainwater Harvesting on Agriculture: A Case Study of Farm Ponds in Semi-arid Areas of Rajasthan. Indian J. Econ. Dev. 2016, 12, 25–31.
52. Cristiano, S.; Proietti, P. Evaluating interactive innovation processes: Towards a developmental-oriented analytical framework. In Proceedings of the 13th European IFSA Symposium on Integrating Science, Technology, Policy and Practice, Chania, Greece, 1–5 July 2018.
53. Douthwaite, B.; Kuby, T.; Van de Fliert, E.; Schulz, S. Impact pathway evaluation: An approach for achieving and attributing impact in complex systems. Agric. Syst. 2003, 78, 243–265.
54. Galan-Diaz, C.; Edwards, P.; Nelson, J.D.; Van der Wal, R. Digital innovation through partnership between nature conservation organisations and academia: A qualitative impact assessment. Ambio 2015, 44, 538–549.
55. Momtaz, S. Institutionalizing social impact assessment in Bangladesh resource management: Limitations and opportunities. Environ. Impact Assess. Rev. 2005, 25, 33–45.
56. Wu, J.; Chang, I.S.; Lam, K.C.; Shi, M. Integration of environmental impact assessment into decision-making process: Practice of urban and rural planning in China. J. Clean. Prod. 2014, 69, 100–108.
57. Stephan, U.; Patterson, M.; Kelly, C.; Mair, J. Organizations Driving Positive Social Change: A review and integrative framework of change processes. J. Manag. 2016, 42, 1250–1281.
58. Swagemakers, P. (LIAISON Workshop-Madrid-Mediterranean Macro-Region Workshop, Madrid, Spain). Personal communication, 2018.
59. Vanclay, F. The potential application of social impact assessment in integrated coastal zone management. Ocean Coast. Manag. 2012, 68, 149–156.
60. Maredia, M.K.; Shankar, B.; Kelley, T.G.; Stevenson, J.R. Impact assessment of agricultural research, institutional innovation, and technology adoption: Introduction to the special section. Food Policy 2014, 44, 214–217.
61. Gamble, J.A.A. A Developmental Evaluation Primer. Canada: The J.W. McConnell Family Foundation. Available online: http://tamarackcommunity.ca/downloads/vc/Developmental_Evaluation_Primer.pdf (accessed on 2 February 2020).
62. Copestake, J. Credible impact evaluation in complex contexts: Confirmatory and exploratory approaches. Evaluation 2014, 20, 412–427.
63. Ton, G. The mixing of methods: A three-step process for improving rigour in impact evaluations. Evaluation 2012, 18, 5–25.
64. Crevoisier, O. The innovative milieus approach: Toward a territorialised understanding of the economy. Econ. Geogr. 2004, 80, 367–369.
65. Pires, A.D.; Pertoldi, M.; Edwards, J.; Hegyi, F.B. Smart Specialisation and Innovation in Rural Areas; S3 Policy Brief Series No. 09/2014; European Commission: Brussels, Belgium, 2014.
66. Douthwaite, B.; Mur, R.; Audouin, S.; Wopereis, M.; Hellin, J.; Moussa, A.; Karbo, N.; Kasten, W.; Bouyer, J. Agricultural Research for Development to Intervene Effectively in Complex Systems and the Implications for Research Organizations; KIT Working Paper: Amsterdam, The Netherlands, 2017.
67. Westley, F.; Zimmerman, B.; Patton, M.Q. Getting to Maybe: How the World Is Changed; Random House Canada: Toronto, ON, Canada, 2006.
68. Milley, P.; Szijarto, B.; Svensson, K.; Cousins, J.B. The evaluation of social innovation: A review and integration of the current empirical knowledge base. Evaluation 2018, 24, 237–258.
69. Schramm, L.L.; Nyirfa, W.; Grismer, K.; Kramers, J. Research and development impact assessment for innovation-enabling organizations. Can. Public Adm. 2011, 54, 567–581.
70. Horton, D.; Mackay, R. Using evaluation to enhance institutional learning and change: Recent experiences with agricultural research and development. Agric. Syst. 2003, 78, 127–142.
71. Kirwan, J.; Ilbery, B.; Maye, D.; Carey, J. Grassroots social innovation and food localisation: An investigation of the Local Food programme in England. Global Environ. Chang. 2013, 23, 830–837.
72. Neumeier, S. Social innovation in rural development: Identifying the key factors of success. Geogr. J. 2017, 183, 34–46.
73. Neumeier, S. Why do social innovations in rural development matter and should they be considered more seriously in rural development research? Proposal for a stronger focus on social innovation in rural development research. Sociol. Rural. 2012, 52, 48–69.
74. Reckwitz, A. Toward a Theory of Social Practices: A development in culturalist theorizing. Eur. J. Soc. Theory 2002, 5, 243–263.
75. Shove, E.; Pantzar, M.; Watson, M. The Dynamics of Social Practice: Everyday Life and How It Changes; Sage Publishing: Los Angeles, CA, USA, 2012.
76. Lilja, N.; Dixon, J. Responding to the Challenges of Impact Assessment of Participatory Research and Gender Analysis. Exp. Agric. 2008, 44, 3–19.
77. Röling, N. Pathways for Impact: Scientists' Different Perspectives on Agricultural Innovation. Int. J. Agric. Sustain. 2009, 7, 83–94.
78. Watts, J.; Horton, D.; Douthwaite, B.; La Rovere, R.; Thiele, G.; Prasad, S.; Staver, C. Transforming Impact Assessment: Beginning the Quiet Revolution of Institutional Learning and Change. Exp. Agric. 2008, 44, 21–35.
79. Utting, K. Assessing the Impact of Fair Trade Coffee: Towards an Integrative Framework. J. Bus. Ethics 2009, 86, 127–149.
80. Byambaa, T.; Janes, C.; Takaro, T.; Corbett, K. Putting Health Impact Assessment into practice through the lenses of diffusion of innovations theory: A review. Environ. Dev. Sustain. 2015, 17, 23–40.
81. Jones, N.; McGinlay, J.; Dimitrakopoulou, P.G. Improving social impact assessment of protected areas: A review of the literature and directions for future research. Environ. Impact Assess. Rev. 2017, 64, 1–7.
82. Khurshid, N. Impact assessment of agricultural training program of AKRP to enhance the socio-economic status of rural women: A case study of northern areas of Pakistan. Pak. J. Life Soc. Sci. 2013, 11, 133–138.
83. Guijt, I.; Kusters, C.S.L.; Lont, H.; Visser, I. Developmental Evaluation: Applying Complexity Concepts to Enhance Innovation and Use: Report from an Expert Seminar with Dr. Michael Quinn Patton; Centre for Development Innovation, Wageningen University and Research Centre: Wageningen, The Netherlands, 2012.
84. Patton, M.Q. What is Essential in Developmental Evaluation? On Integrity, Fidelity, Adultery, Abstinence, Impotence, Long-Term Commitment, Integrity, and Sensitivity in Implementing Evaluation Models. Am. J. Eval. 2016, 37, 2.
85. Bock, B. Rural marginalisation and the role of social innovation: A turn towards nexogenous development and rural reconnection. Sociol. Rural. 2016.

| Methodological Approaches | Methods | Example of Specific Methodologies and Tools |
|---|---|---|
| Conceptual | Review, theory-based | Document analysis, literature review, argumentation |
| | Conceptual framework for innovation impact assessment/innovation evaluation | Framework development based on reviews (for example, conceptual innovation) |
| Qualitative | Public participation | Questionnaire, interview, expert surveys, etc. |
| Quantitative | Survey | Regression analysis, Bayesian probabilistic method |
| | Economic valuation | Econometric analysis, cost-benefit analysis, cost-effectiveness |
| Mixed | Participatory evaluation approaches | Individual rating, group voting, actor mapping, evaluation of assessment tools |
| | Case studies tool | Detailed analysis of individual research programs |

| Methodological Approach | Impact Area | Methods and Tools Used |
|---|---|---|
| Conceptual (22) | Social (17) | Method: Social Impact Assessment (SIA) (Imperiale and Vanclay, 2016) [41]; Contextual Response Analysis (CRA) (Spaapen and van Drooge, 2011) [46]; case study. Tool: Mixed-gendered focus group workshops, literature review |
| | Environmental (2) | Method: Environmental Impact Assessment (EIA) (Cong et al., 2017) [44]; Health Impact Assessment (HIA) (Del Rio et al., 2017) [18] |
| | Sustainability (4) | Tool: Literature review on agricultural innovations, farmer-driven innovations, participatory technology development and innovation systems |
| Mixed-method (41) | Economic (6) | Tool: Calculation of economic indicators, face-to-face interview, static data from international organizations, community survey, Analysis of Variance (ANOVA) |
| | Social (21) | Method: Regulatory Impact Assessment (RIA) (De Francesco et al., 2012) [47]. Tool: Focus groups, face-to-face interview, rapid appraisal workshop, survey, field work, sustainability indicators, public meetings, health impact assessment, observation, household survey |
| | Environmental (17) | Method: CropWatch agroclimatic indicators (CWAIs) (Gommes et al., 2017) [48]. Tool: Indicator species analysis, Mantel test of geographic distance, Bray Curtis coefficient, life cycle environmental impact assessment |
| | Sustainability (4) | Method: Life Cycle Assessment (LCA) (Tecco et al., 2016) [48]; Environmental Impact Assessment (EIA) (Cong et al., 2017) [44]. Tool: Stakeholder questionnaire, focus group interview, rapid rural appraisal, observation, sustainability indicators, scenario analysis |
| Qualitative (6) | Economic (2) | Tool: Email survey, household interviews, data coding, blind interviews |
| | Social (3) | Method: Participatory Impact Pathway Analysis (PIPA), Social Network Analysis (SNA), Outcome Harvesting method (OH) (Quiedeville et al., 2017) [45], Participatory Action Research (PAR) (Graef et al., 2018) [49] |
| | Sustainability (3) | Method: Economic-Environmental Input-Output (EEIO) model (Cong et al., 2017) [44]. Tool: Geographic information system (GIS), discriminant analysis |
| Quantitative (6) | Social (1) | Method: Framework for Participatory Impact Assessment (FoPIA) analysis (Pachón-Ariza et al., 2016) [50] |
| | Environmental (3) | Tool: Water sample analysis, skeletochronology method, life cycle analysis (LCA), land classification |
| | Sustainability (1) | Method: Crop-wise analysis (Kumar et al., 2016) [51]. Tool: Benefit-cost ratio, Net Present Worth (NPW) |

| Author/Year | Impact Area | Tools and Methods | Co-Benefit | Main Findings |
|---|---|---|---|---|
| Copestake (2014) [62] | Economic, Social | Qualitative Impact Protocol (QUIP) | Better reflection of uncertain and insufficiently understood impact pathways; blind interviews to avoid bias and gain explicit answers from key informants. | Balancing exploratory and confirmatory approaches to impact evaluation gave explicit answers to the question. |
| Diwakar et al. (2008) [19] | Social | Earth Observation (EO) | Data monitoring for action that brings transparency to the developmental process of the whole project. | Helps in microlevel plan preparation, concurrent project monitoring and impact assessment in multiple stages throughout the project. |
| Cristiano and Proietti (2018) [52] | Social | Development-Oriented Analysis (DOA) | Focuses on interactive innovation processes and a multi-actor approach. | Integrated framework with participatory and reflexive approaches that support policy and project designs promoting development in innovative capacities. |
| Quiedeville et al. (2017) [45] | Social | Participatory Impact Pathway Analysis (PIPA), Outcome Harvesting (OH), Social Network Analysis (SNA) | PIPA: Participatory approach allowing actors to change and increase interactions within the innovation network. OH: Lets PIPA adapt to requirements of ex-post assessment. SNA: Identifies important actors and their statements. | The critical success factors of innovation were agricultural policies, economic factors, testing conducted independently by farmers and the institutional framework, rather than learning and interactions with farmers. |
| Hall et al. (2003) [16] | Social | In-depth review of case studies of a specific project | Case study to demonstrate the importance of institutional learning. | Institutional learning must be embedded in a new perspective of innovation systems by (i) understanding how the research community operates, (ii) realizing learning as part of the practice of research organizations, (iii) realizing capacity development and behavioral changes, (iv) realizing evaluation as a collective task. |
| Maredia et al. (2014) [60] | Social | Cost-benefit analysis; impact evaluations: decentralized participatory model; ex post assessment: partial equilibrium economic surplus model | Cost-benefit analysis: Assists in making strategic decisions and assesses potential impacts; impact evaluation: Tests the effectiveness of projects and institutional innovations; ex post assessment: Analytical approach to assessing the impact of investments. | Methods took into account the variables (time frame, size of intervention, type of research question and evaluation addressed) during the evaluation process. |
| Tecco et al. (2016) [48] | Sustainability | Environmental and social Life Cycle Assessment (LCA) | Assessment of achieved impacts, trade-offs, appropriateness in the context and scale of adoption. | Dynamic combination of data and information provided by stakeholders improved the decision-making process. |
| Wu et al. (2014) [56] | Social | Plan Environmental Impact Assessment (PEIA) | Plan environmental impact assessment throughout various stages of the project to enhance outcome. | For the framework to become a standardized procedure, decision-makers need to accept PEIA as an internal process and not as an external intervention. |
| Paz-Ybarnegaray and Douthwaite (2017) [9] | Social | Outcome-evidencing method | Identifies outcomes, giving immediate feedback to ongoing project implementation, and makes causal claims to substantiate or challenge the overarching program theory. | Outcome-evidencing allows agents to identify underlying causes and undertake actions along the process; it is a one-off evaluation that answers if, how and in what contexts projects are working. |
| Schramm et al. (2011) [69] | Economic | Smart Science Impact (R&D impact assessment tool) | Relies on ‘voice of customer’ where explicit answers of clients provide valuable input data. | The economic, social and environmental IA tool can be easily adapted for use by government, not-for-profit or private sectors that conduct, fund or contract research and development activities. |
| Milley et al. (2018) [68] | Social | Concept of Social Innovation (SI) | Working with the concept of Social Innovation (SI) may lead to innovation. | To find conceptual clarity on SI and relevant evaluation practices, researchers and practitioners need to move toward a principle-based approach grounded in empirical research that takes the SI context into account. |
| Ton (2012) [63] | Social | Working with the client’s Monitoring and Evaluation Framework | Assessing the effectiveness of projects and programs with interventions over the value-chain development process considering changing conditions. | The structure and systematic process help reduce the tendency toward one-method designs; they enhance critical reflection within the team and allow creativity in finding ways to handle information. |
| Vanclay (2015) [8] | Social | Literature review on qualitative evaluations, explanation of performance story-telling | Qualitative methods collect evidence about performance of a project or program and enable the collection of feedback to assist in modifying the project. | Story-telling approach can be effective if it is coherent, credible, and multi-dimensional where the different components are interconnected and the causal relations between them become clear. |
| Imperiale and Vanclay (2016) [41] | Environment, Social | Social Impact Assessment (SIA) | Helps social practitioners in designing the problem with stakeholders and implementing the project with local communities, thus achieving improved social outcomes. | The framework positively changed the outcomes of sustainable development projects and took on a community-oriented approach that better understands the needs of those affected and conceptualizes the actions needed for better social outcomes. |
| Graef et al. (2018) [49] | Social | Participatory Action Research (PAR) | Context-oriented and collaborative research approach: Local stakeholders and scientists together develop and select research methods, generate data and reflect in cycles on how efforts unfold and what the impacts of intervention are. | Allowed collaborative research across the different perspectives of scientists and stakeholders, and learning for both groups. |
| De Francesco et al. (2012) [47] | Social | Regulatory Impact Assessment (RIA) | Tool for major innovation in the reform agenda in many European countries. | The RIA implementation differs by country in terms of political and economic systems and also during the process of implementation. |
| Vilys et al. (2015) [14] | Economic | Public innovation support assessment | The conceptual framework proposes quantitative indicators to support effectiveness at the national level. | The assessment creates new opportunities and proposes indicators that enable the improvement of public support effectiveness. |
| Cong et al. (2017) [44] | Sustainability | LCA, geographic information system (GIS) analysis, economic-environmental input-output (EEIO) model; interregional input-output module (LINE) model | LCA-GIS-EEIO framework upscales micro analysis to macro analysis to see the effects of local changes, disaster, land use changes. It integrates top-down as well as bottom-up approaches. | The framework brings environmental economic contributions with careful selection of location. |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Lee, S.Y.; Díaz-Puente, J.M.; Vidueira, P. Enhancing Rural Innovation and Sustainability Through Impact Assessment: A Review of Methods and Tools. Sustainability 2020, 12, 6559. https://doi.org/10.3390/su12166559
