Clear Data as a New Data Typology to Enhance Sustainability in Sport

Abstract

1. Introduction

2. Instrument Validation

2.1. Item Development

2.2. Content Validation

2.3. Instrument Reliability

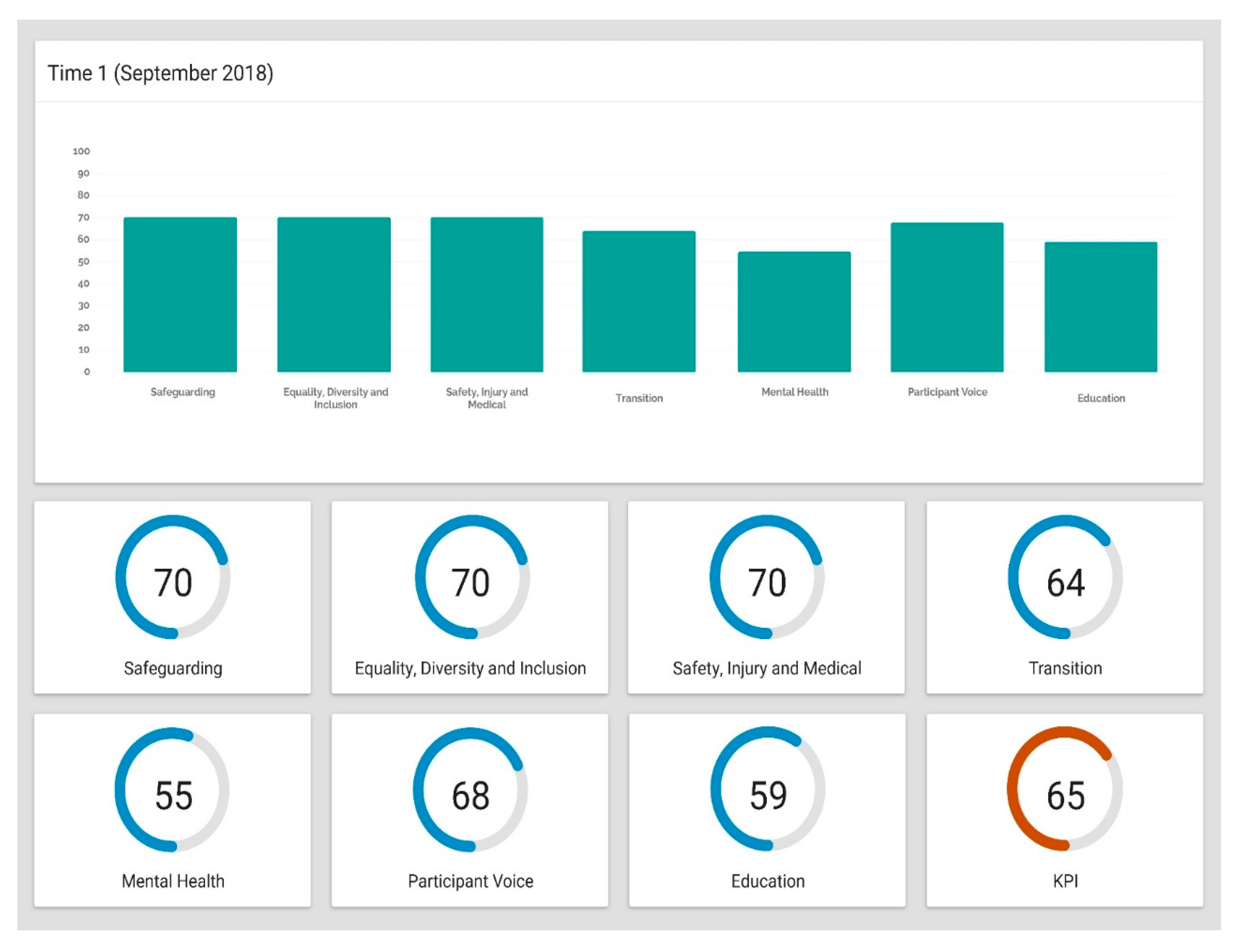

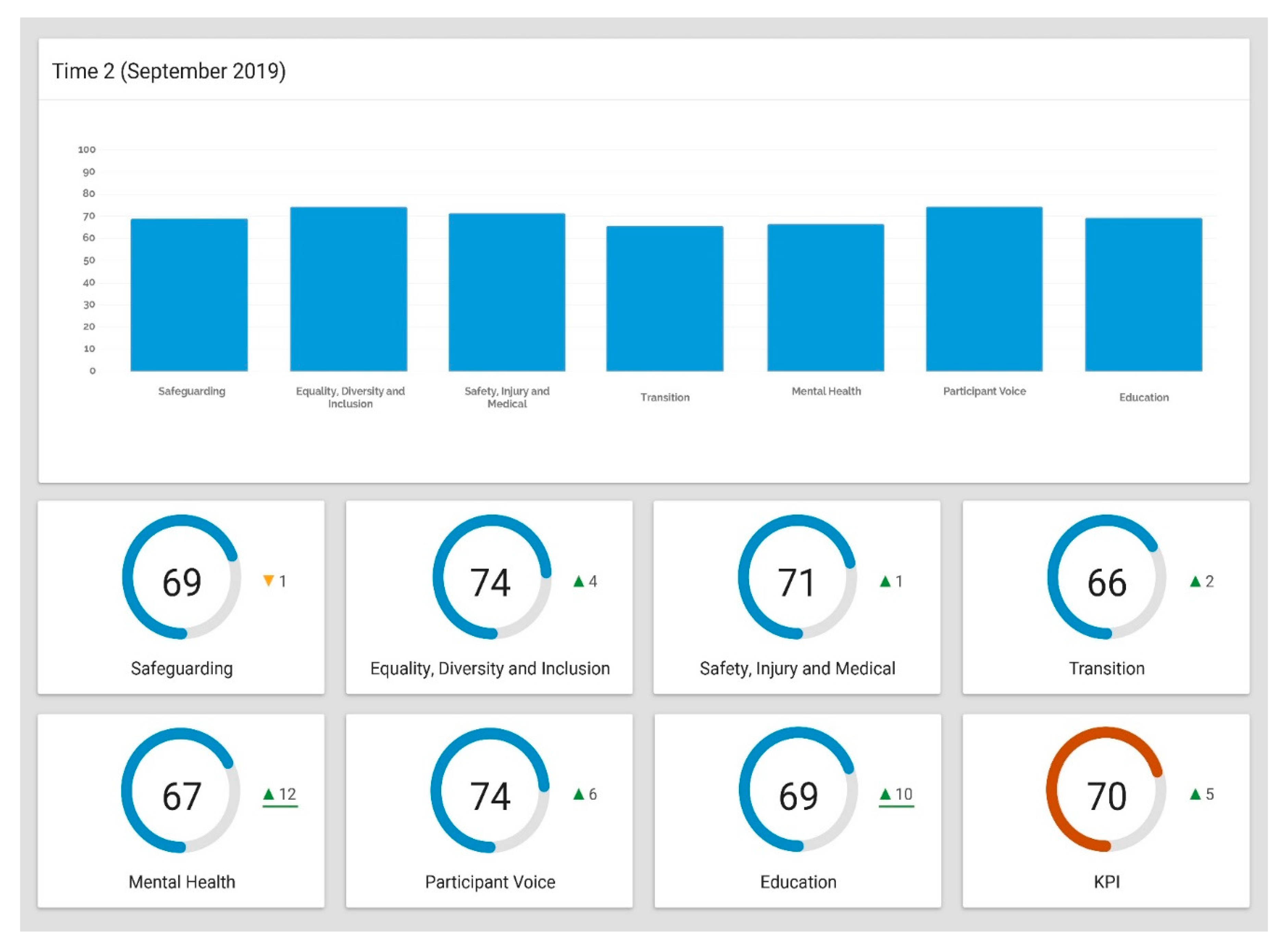

3. Instrument Utility

4. Discussion

Author Contributions

Funding

Conflicts of Interest


| Item | T1 N | T1 Mean (CI) | T2 N | T2 Mean (CI) | ICC |
|---|---|---|---|---|---|
| Safeguarding | | | | | |
| - Perceived Support | 107 | 6.76 (±0.32) | 107 | 6.83 (±0.30) | 0.85 |
| - Support Needed | Yes = 18; No = 89 | | Yes = 17; No = 90 | | 0.99 |
| - Received Support | 18 | 6.44 (±0.49) | 17 | 6.58 (±0.49) | 0.93 |
| Equality, Diversity, and Inclusion | | | | | |
| - Perceived Support | 107 | 6.82 (±0.38) | 107 | 6.79 (±0.37) | 0.89 |
| - Support Needed | Yes = 19; No = 88 | | Yes = 18; No = 89 | | 0.99 |
| - Received Support | 19 | 7.47 (±0.57) | 18 | 7.5 (±0.56) | 0.78 |
| Safety, Injury, and Medical | | | | | |
| - Perceived Support | 107 | 6.73 (±0.35) | 107 | 6.79 (±0.35) | 0.79 |
| - Support Needed | Yes = 31; No = 76 | | Yes = 29; No = 78 | | 0.98 |
| - Received Support | 31 | 7.81 (±0.71) | 29 | 7.83 (±0.69) | 0.91 |
| Transition | | | | | |
| - Perceived Support | 107 | 6.64 (±0.30) | 107 | 6.56 (±0.30) | 0.78 |
| - Support Needed | Yes = 16; No = 91 | | Yes = 15; No = 92 | | 0.99 |
| - Received Support | 16 | 7.81 (±0.55) | 15 | 7.87 (±0.53) | 0.81 |
| Mental Health | | | | | |
| - Perceived Support | 107 | 6.66 (±0.31) | 105 | 6.63 (±0.30) | 0.83 |
| - Support Needed | Yes = 15; No = 92 | | Yes = 16; No = 89 | | 0.98 |
| - Received Support | 15 | 6.53 (±0.44) | 16 | 6.56 (±0.47) | 0.84 |
| Representation of Participant’s Voice | | | | | |
| - Perceived Support | 107 | 6.49 (±0.34) | 103 | 6.45 (±0.35) | 0.79 |
| - Support Needed | Yes = 20; No = 87 | | Yes = 18; No = 85 | | 0.99 |
| - Received Support | 20 | 7.4 (±0.58) | 18 | 7.44 (±0.58) | 0.92 |
| Education | | | | | |
| - Perceived Support | 107 | 6.54 (±0.36) | 102 | 6.59 (±0.37) | 0.84 |
| - Support Needed | Yes = 23; No = 84 | | Yes = 20; No = 82 | | 0.98 |
| - Received Support | 23 | 7.26 (±0.61) | 20 | 7.3 (±0.61) | 0.91 |
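The ICC column reports test-retest agreement between the two administrations (T1 and T2). The text here does not say which ICC form was used, so the sketch below assumes one common choice: the two-way random-effects, absolute-agreement, single-measure coefficient, often written ICC(2,1). The function name `icc_2_1` and the example ratings are hypothetical and are not taken from the study.

```python
from typing import Sequence


def icc_2_1(scores: Sequence[Sequence[float]]) -> float:
    """Two-way random-effects, absolute-agreement, single-measure ICC.

    `scores` holds one row per participant and one column per
    measurement occasion (for test-retest data: [T1, T2]).
    Assumption: this ICC form is illustrative; the source does not
    state which form produced the values in the table.
    """
    n = len(scores)
    k = len(scores[0]) if n else 0
    if n < 2 or k < 2:
        raise ValueError("need at least 2 participants and 2 occasions")
    if any(len(row) != k for row in scores):
        raise ValueError("every participant needs a score on every occasion")

    grand = sum(sum(row) for row in scores) / (n * k)
    row_means = [sum(row) / k for row in scores]
    col_means = [sum(row[j] for row in scores) / n for j in range(k)]

    # Sums of squares from a two-way ANOVA without replication.
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in scores for x in row)
    ss_error = ss_total - ss_rows - ss_cols

    ms_rows = ss_rows / (n - 1)                  # between participants
    ms_cols = ss_cols / (k - 1)                  # between occasions
    ms_error = ss_error / ((n - 1) * (k - 1))    # residual

    return (ms_rows - ms_error) / (
        ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    )


# Hypothetical ratings for five participants at T1 and T2 (not study data).
example = [[7, 7], [6, 7], [8, 8], [5, 6], [7, 6]]
print(round(icc_2_1(example), 2))
```

Participants with a missing T2 response would need to be dropped before calling the function, which is consistent with the smaller T2 N reported for some items.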

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Lavallee, D.; Lowder, J.; Lowder, J. Clear Data as a New Data Typology to Enhance Sustainability in Sport. Sustainability 2020, 12, 4527. https://doi.org/10.3390/su12114527
