A Low-Cost Immersive Virtual Reality System for Teaching Robotic Manipulators Programming

Abstract

1. Introduction

2. Background

- Cost of robotic arms.

- Robots must be shared among students in turns.

- Space required by robots.

- Courses not requiring attendance.

- Different robot models.

- Difficulty evaluating students' programs.

- Safety problems due to inexperienced students.

3. Implementation

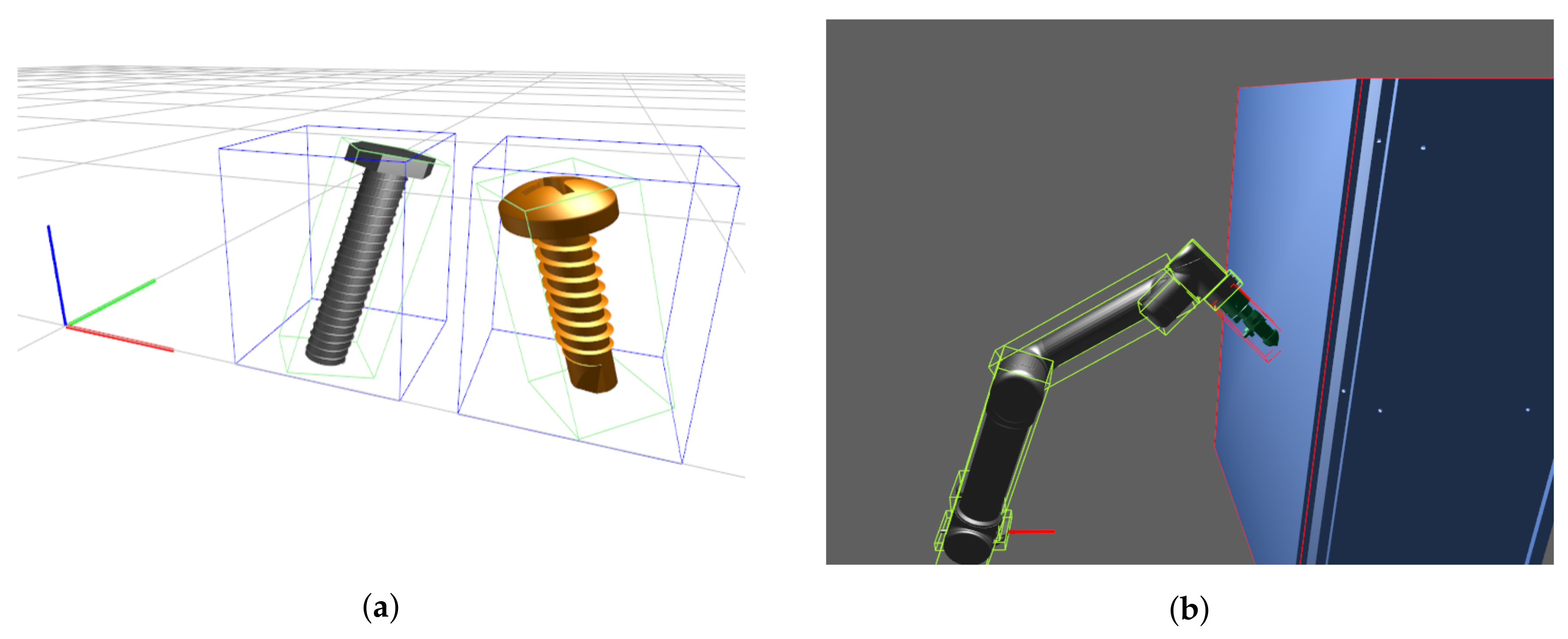

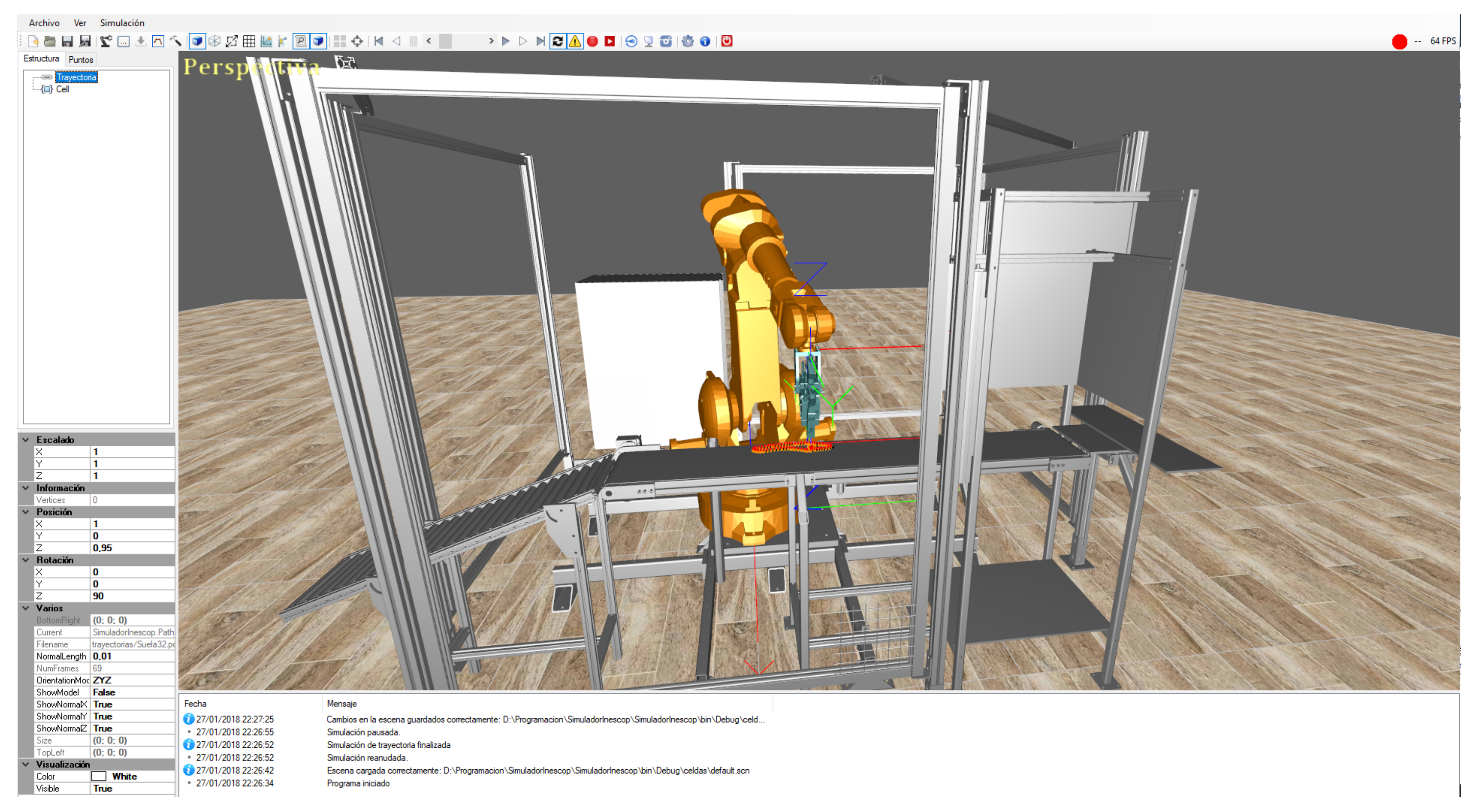

3.1. Simulation Software

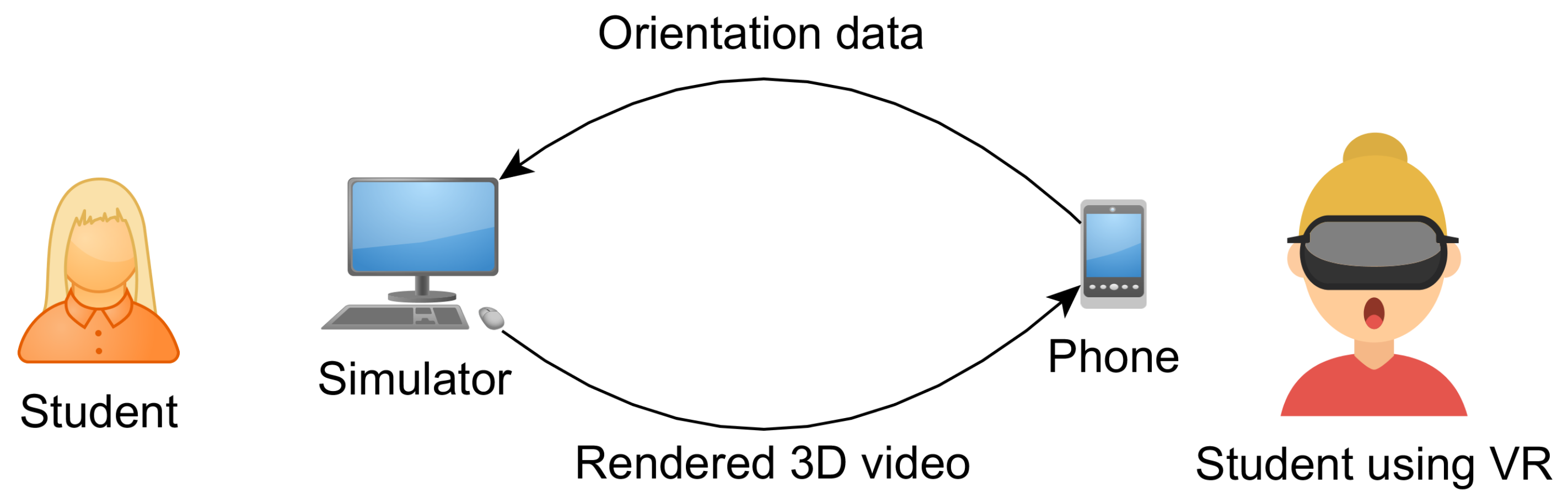

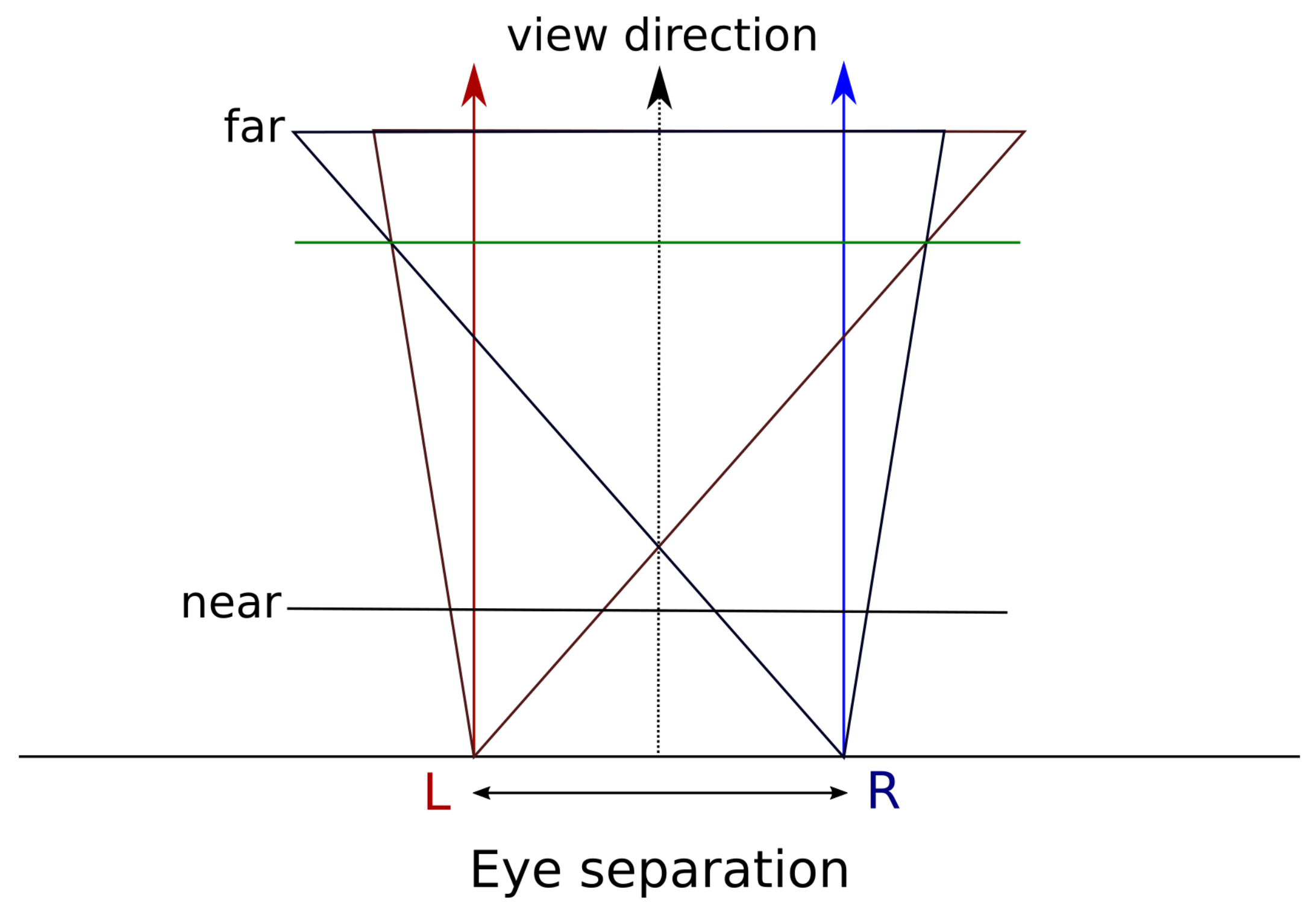

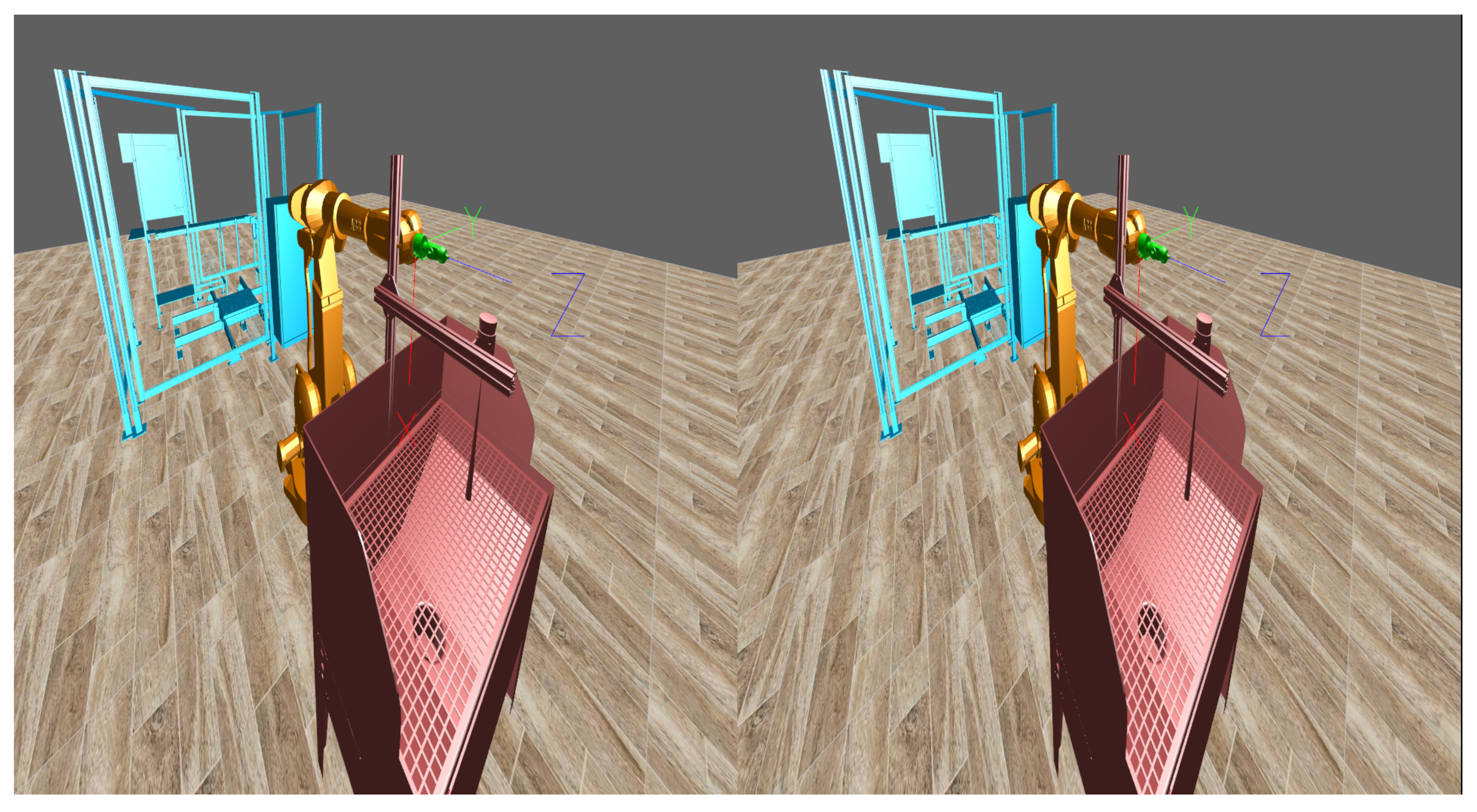

3.2. Virtual Reality

4. Experiment

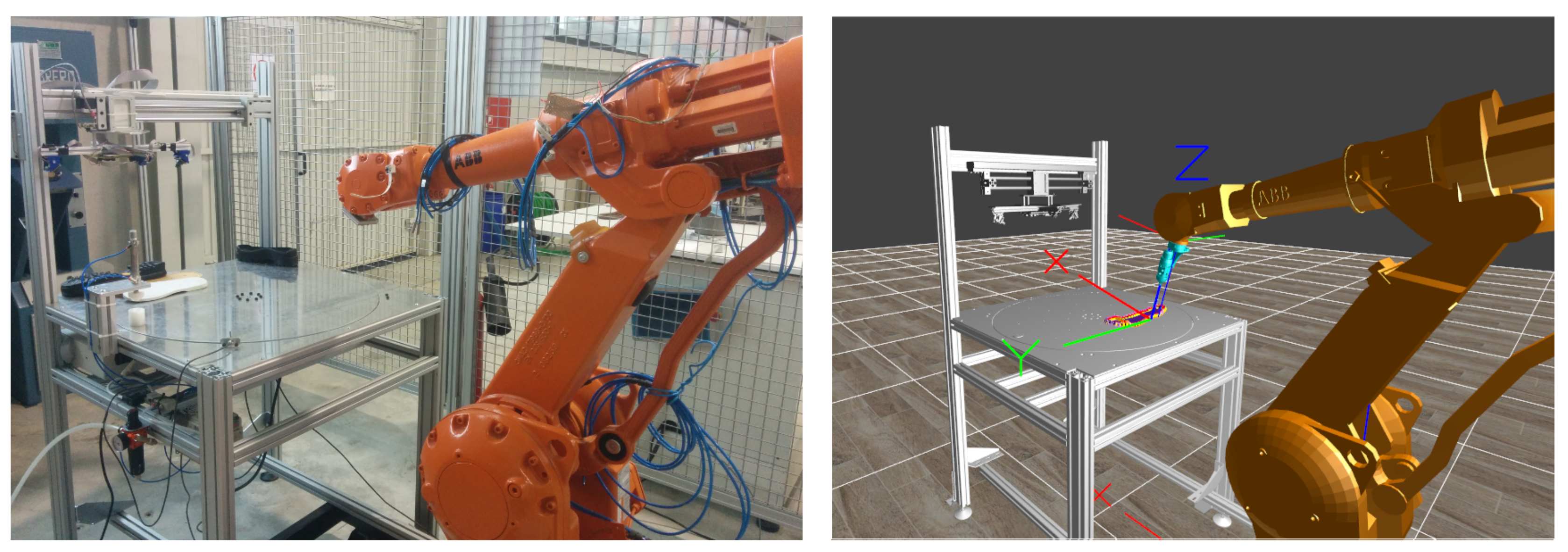

4.1. Prototype Preparation

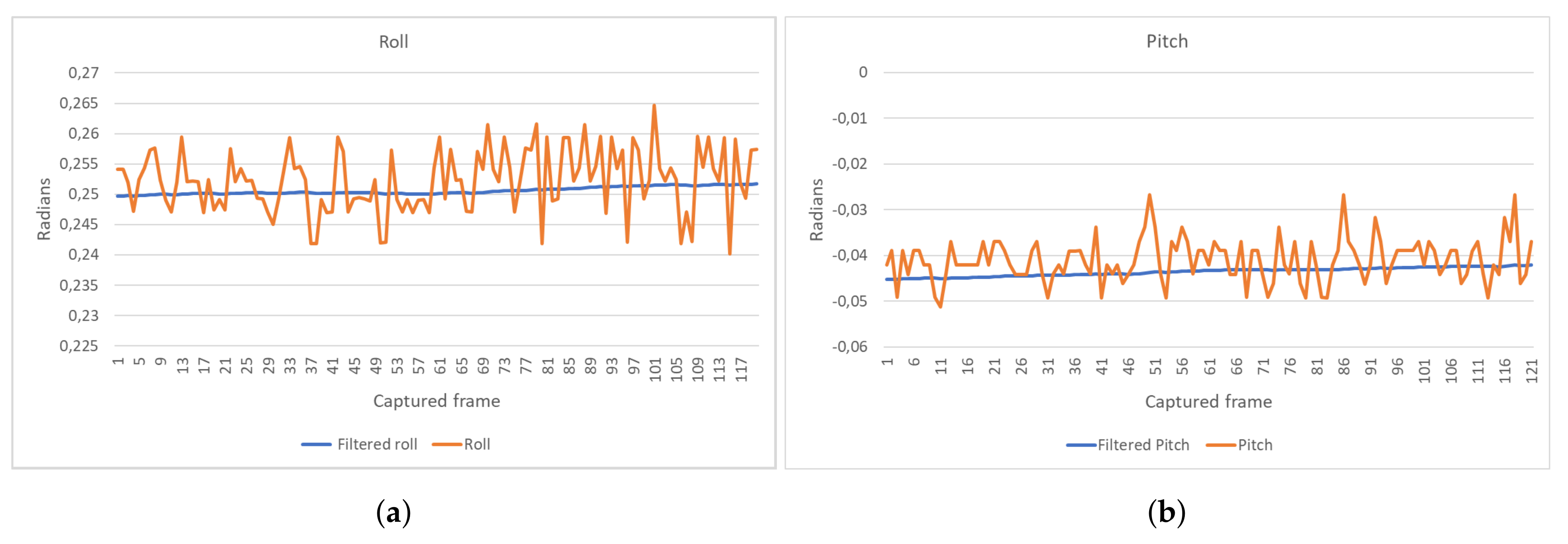

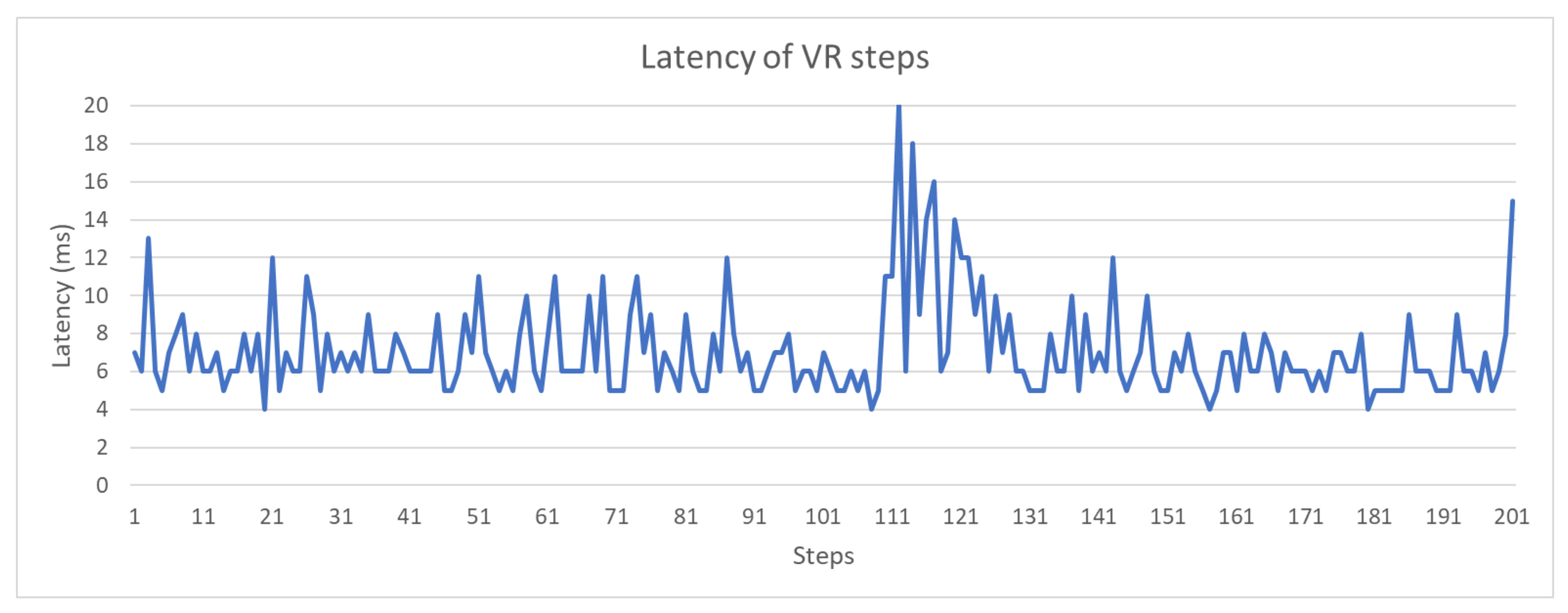

4.2. Measuring Real-Time Capabilities

5. Conclusions

Acknowledgments

Author Contributions

Conflicts of Interest

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Román-Ibáñez, V.; Pujol-López, F.A.; Mora-Mora, H.; Pertegal-Felices, M.L.; Jimeno-Morenilla, A. A Low-Cost Immersive Virtual Reality System for Teaching Robotic Manipulators Programming. Sustainability 2018, 10, 1102. https://doi.org/10.3390/su10041102
