Workload-Aware Edge Node Orchestration and Dynamic Resource Scaling in MEC

Abstract

1. Introduction

- We formulate the edge node orchestration problem as a dynamic variant of the capacitated K-median clustering problem, targeting both the minimization of communication cost and the balancing of load across space and time.

- We extend our previous temporal stability mechanism [9] by integrating it with the SSAA schemes. This combination allows the system to handle spatial shifts in demand, as well as temporal variations.

- We implement a closed-loop controller using EWMA forecasting to drive dynamic resource scaling, minimizing the operational overhead of service migrations and avoiding SLA violations.

- We conduct extensive simulations on a Manhattan Grid topology under uniform and random traffic patterns, as well as on an irregular graph using real-world CPU utilization traces from BitBrains. The results show that our Workload-Aware Edge Node Orchestration framework, integrated with the SSAA schemes, outperforms the other schemes considered in terms of load balancing, latency, and service outages.
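For orientation, the static capacitated K-median problem that the first contribution builds on is commonly written as the integer program below. This is a textbook-style sketch, not the formulation used in this paper: every symbol ($V$ for the set of ANs, $w_i$ for the workload of AN $i$, $d_{ij}$ for the distance between ANs $i$ and $j$, $C$ for the SN capacity, $K$ for the number of SNs, and the binary variables $x_{ij}$ and $y_j$) is an assumption introduced here for illustration. The paper's own formulation additionally covers load balance and the time dimension.

```latex
\begin{align}
\min_{x,\,y}\quad & \sum_{i \in V} \sum_{j \in V} w_i \, d_{ij} \, x_{ij} \\
\text{s.t.}\quad & \sum_{j \in V} x_{ij} = 1 && \forall i \in V \\
& x_{ij} \le y_j && \forall i, j \in V \\
& \sum_{i \in V} w_i \, x_{ij} \le C \, y_j && \forall j \in V \\
& \sum_{j \in V} y_j = K \\
& x_{ij},\, y_j \in \{0, 1\} && \forall i, j \in V
\end{align}
```

Here $y_j = 1$ means that AN $j$ hosts an active SN, and $x_{ij} = 1$ means that the workload of AN $i$ is served by the SN at AN $j$.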

2. Related Work

2.1. Static Placement and Latency Minimization

2.2. Load Balancing and Energy Efficiency

2.3. Predictive, Dynamic, and Resilient Orchestration

3. System Model and Problem Statement

3.1. Network Architecture and Dynamic Context

- The Offered Workload, represented by a non-negative weight, is the computational demand (measured in MIPS) originating from the end-users attached to a given AN. It varies in both time (temporal intensity) and space (spatial location), reflecting a dynamic environment. Our model considers both uniform and non-uniform distributions of the offered workload across the ANs at the network edge.

- The Total Offered Workload is the aggregate computational demand (measured in MIPS) originating from the end-users attached to all ANs in the network.

- The Dynamic Dimensioning is the minimum number of active SNs required at time t, chosen from the set of available ANs (each AN may host an SN), to accommodate the aggregate workload demand, such that .

- The Active Service Node Set is the subset of ANs chosen to host active SNs; its size equals the Dynamic Dimensioning at time t.
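The dimensioning step above can be illustrated with a short sketch. The exact constraint used in the paper is not recoverable from the text, so the rule below is an assumption: the smallest number of SNs whose combined capacity, derated by an upper utilization bound, covers the total offered workload, with at least one SN kept active. The function and parameter names are hypothetical.

```python
import math
from typing import Optional, Sequence


def total_offered_workload(per_an_workload: Sequence[float]) -> float:
    """Aggregate demand (MIPS) over all ANs."""
    return float(sum(per_an_workload))


def min_active_sns(total_mips: float, capacity_mips: float,
                   max_utilization: float = 1.0,
                   num_ans: Optional[int] = None) -> int:
    """Smallest K with total_mips <= K * max_utilization * capacity_mips.

    Assumed sizing rule (not taken from the paper); keeps at least one SN.
    """
    if total_mips < 0 or capacity_mips <= 0 or not 0 < max_utilization <= 1:
        raise ValueError("invalid workload, capacity, or utilization bound")
    k = max(1, math.ceil(total_mips / (max_utilization * capacity_mips)))
    if num_ans is not None and k > num_ans:
        # Each AN may host at most one SN, so K cannot exceed the AN count.
        raise ValueError("demand exceeds what all candidate ANs can host")
    return k


# Hypothetical example: 25 ANs offering 120 MIPS each, 500-MIPS SNs,
# and a 75% upper utilization bound.
workload = [120.0] * 25
print(min_active_sns(total_offered_workload(workload), 500.0, 0.75))  # prints 8
```

With these numbers the total demand is 3000 MIPS and each SN contributes 375 usable MIPS, so eight SNs are needed.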

3.2. Traffic and Communication Costs

3.3. Problem Formulation

- Minimization of Communication Latency

- Minimization of Load Imbalance

- A. Compute-Node Selection Set (CSS) Subproblem

- B. Access Node-to-Compute Node Assignment (ACA) Subproblem

- C. Edge Node Orchestration Module

- Predictive Sensing: Monitoring whether the offered workload conditions have changed significantly, by measuring the global system utilization, forecasting future demand, and filtering out short-lived peaks or dips.

- Temporal Stability: Determining the number of active nodes while applying hysteresis constraints to prevent unnecessary “ping-pong” reconfigurations.

- Spatial Optimization: Solving the CSS and ACA subproblems to activate the most suitable SNs and allocate the remaining ANs to them, or using precomputed (offline) active sets and AN (workload) assignments to accelerate the reconfiguration phases.
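The first two functions of the module, sensing and temporal stability, can be sketched as a small closed-loop controller. This is an illustrative sketch under stated assumptions, not the authors' controller: the EWMA convention (the smoothing factor weights the newest observation), the one-SN-per-reconfiguration step, and the rule that no change is allowed until a full stability window has elapsed are all assumptions. The default values mirror the parameter tables of this article (500 MIPS capacity, 35% and 75% utilization bounds, smoothing factor 0.75, window of 5 epochs); the class and method names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


def ewma(previous: float, observed: float, alpha: float) -> float:
    """One EWMA update; alpha weights the newest observation (assumed convention)."""
    return alpha * observed + (1.0 - alpha) * previous


@dataclass
class ScalingController:
    capacity: float = 500.0          # SN processing capacity (MIPS)
    low: float = 0.35                # lower target utilization bound
    high: float = 0.75               # upper target utilization bound
    alpha: float = 0.75              # EWMA smoothing factor
    window: int = 5                  # hysteresis stability window (epochs)
    active: int = 1                  # current number of active SNs
    forecast: Optional[float] = None
    epochs_since_change: int = 0

    def step(self, observed_total_mips: float) -> int:
        """Process one epoch and return the number of active SNs."""
        # Predictive sensing: smooth the observed total workload.
        if self.forecast is None:
            self.forecast = observed_total_mips
        else:
            self.forecast = ewma(self.forecast, observed_total_mips, self.alpha)
        utilization = self.forecast / (self.active * self.capacity)

        # Temporal stability: hold the configuration inside the window.
        self.epochs_since_change += 1
        if self.epochs_since_change < self.window:
            return self.active

        # Threshold policy (assumed): move by one SN per reconfiguration.
        target = self.active
        if utilization > self.high:
            target += 1
        elif utilization < self.low and self.active > 1:
            target -= 1
        if target != self.active:
            self.active = target
            self.epochs_since_change = 0
        return self.active


controller = ScalingController(window=2)
print([controller.step(w) for w in (450.0, 450.0, 450.0, 450.0)])  # prints [1, 2, 2, 2]
```

In this run the forecast utilization of a single SN is 90%, above the upper bound, but the scale-up is deferred until the two-epoch window has elapsed. A production controller might exempt scale-up from the window to protect the SLA; that choice is left open here.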

4. Proposed Schemes

4.1. Heuristic for CSS Subproblem

- Scoring: Each candidate node is scored by a centrality measure, defined as the weighted distance of all other ANs to that node. Nodes are listed in increasing order of this measure. Intuitively, nodes with low scores are topologically central to the current spatial distribution of the workload, whereas nodes with high scores are peripheral.

- Selection with Dispersion: The algorithm iteratively selects the best candidate from the sorted list, moving from low scores towards higher ones. However, to prevent servers from clustering in heavily loaded central locations, the selection procedure enforces a minimum-distance constraint between selected nodes, which favors the dispersion of the selected servers and thus ensures a wider coverage area.
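The two steps above, scoring and selection with dispersion, can be sketched as follows. This is a minimal illustration, not the authors' implementation: the function name, the `min_sep` parameter, the tie-breaking by node index, and the fallback used when the dispersion constraint leaves fewer than `k` nodes are all assumptions.

```python
from typing import List, Sequence


def select_service_nodes(dist: Sequence[Sequence[float]],
                         weights: Sequence[float],
                         k: int, min_sep: float) -> List[int]:
    """Pick k SN locations by weighted-distance score with a dispersion rule."""
    n = len(weights)
    # Scoring: weighted distance of all other ANs to each candidate j.
    scores = [sum(weights[i] * dist[i][j] for i in range(n) if i != j)
              for j in range(n)]
    order = sorted(range(n), key=lambda j: (scores[j], j))

    # Selection with dispersion: accept a candidate only if it lies at
    # least min_sep away from every node selected so far.
    selected: List[int] = []
    for j in order:
        if len(selected) == k:
            break
        if all(dist[j][s] >= min_sep for s in selected):
            selected.append(j)

    # Assumed fallback: if the constraint is too strict to reach k nodes,
    # fill the remaining slots with the best-scored unselected candidates.
    for j in order:
        if len(selected) == k:
            break
        if j not in selected:
            selected.append(j)
    return selected


# Hypothetical example: five ANs on a line with unit spacing and equal load.
line = [[abs(i - j) for j in range(5)] for i in range(5)]
print(select_service_nodes(line, [1, 1, 1, 1, 1], k=2, min_sep=2))  # prints [2, 0]
```

In the example the middle node scores best; its immediate neighbours are rejected by the two-hop separation rule, so the second SN is placed at an end node.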

4.2. Heuristic for ACA Subproblem

- Determining the visiting order in an RR cycle: First, we compute the normalized distance of every not-yet-assigned AN from every SN, defined as the actual AN-to-SN distance divided by the sum of the distances of this AN to all SNs. A normalized distance above (below) a threshold indicates an AN that is far from (close to) an SN. For every SN, we find the smallest such normalized distance, and we sort the SNs in increasing order of these minimum normalized distances. This is the order in which the SNs are visited in this cycle.

- Assignments during an RR cycle: For each SN visited, if its minimum normalized distance is lower than the threshold, we assign the corresponding AN to this SN and remove the AN from further consideration, provided that the load of the SN does not exceed its capacity. If, however, the minimum normalized distance of the SN is greater than the threshold, this SN and all remaining SNs are skipped to avoid allocating very distant ANs to them.
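The round-robin cycle described above can be sketched as follows. This is an illustrative reading of the two steps, not the authors' code. The threshold value is not recoverable from the text, so it is passed in as a hypothetical `threshold` parameter, and a distance equal to the threshold is treated as acceptable. Further assumptions: each SN serves the workload of its own AN, ties are broken by node index, and ANs that cannot be placed are returned to the caller instead of being handled by a fallback phase.

```python
from typing import Dict, List, Sequence, Tuple


def assign_access_nodes(dist: Sequence[Sequence[float]],
                        weights: Sequence[float],
                        sns: Sequence[int], capacity: float,
                        threshold: float
                        ) -> Tuple[Dict[int, int], Dict[int, float], List[int]]:
    """Return (AN -> SN assignment, SN loads, ANs left unassigned)."""
    assignment = {s: s for s in sns}          # assumed: an SN serves its own AN
    load = {s: weights[s] for s in sns}
    unassigned = [a for a in range(len(weights)) if a not in assignment]

    while unassigned:
        # Normalized distance: AN-to-SN distance over the AN's total distance to all SNs.
        norm = {}
        for a in unassigned:
            total = sum(dist[a][s] for s in sns)
            for s in sns:
                norm[(a, s)] = dist[a][s] / total
        # Closest unassigned AN per SN, then visit SNs by increasing minimum.
        best = {s: min(unassigned, key=lambda a: (norm[(a, s)], a)) for s in sns}
        order = sorted(sns, key=lambda s: (norm[(best[s], s)], s))

        progress = False
        for s in order:
            a = best[s]
            if norm[(a, s)] > threshold:
                break                          # skip this SN and all remaining SNs
            if a in assignment:
                continue                       # AN already taken in this cycle
            if load[s] + weights[a] > capacity:
                continue                       # capacity would be exceeded
            assignment[a] = s
            load[s] += weights[a]
            unassigned.remove(a)
            progress = True
        if not progress:
            break                              # nothing placeable; leave to caller
    return assignment, load, unassigned


# Hypothetical example: five ANs on a line, SNs at nodes 1 and 3.
line = [[abs(i - j) for j in range(5)] for i in range(5)]
print(assign_access_nodes(line, [1, 1, 1, 1, 1], [1, 3], capacity=3, threshold=0.5))
```

In the example the two end nodes are assigned to their neighbouring SNs in the first cycle, and the middle node, equidistant from both SNs, goes to the SN visited first in the second cycle.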

5. Predictive Utilization Monitoring and System Reconfigurations

5.1. Sensing and Forecasting

5.2. Dynamic Scaling and Threshold Policy

5.3. Hysteresis and Stability Control

6. Evaluation: Results, Analysis and Discussion

- Forward Greedy with Load Balancing (FGLB)

- Betweenness Centrality with Load Balancing (BCLB)

6.1. Experimental Setup: Environment, Topology and Workload Modeling

- Uniform Offered Workload Distribution: The aggregate workload is evenly distributed across all ANs during each time interval. This scenario tests the system’s ability to minimize latency when demand is geographically homogeneous.

- Random Offered Workload Distribution: The offered workload over time is stochastically distributed to emulate heterogeneous demand and create a fully dynamic environment. This scenario introduces high spatial variance, forcing the orchestrator to balance the trade-off between proximity and load balancing.
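The two workload scenarios above can be emulated with a few lines of code. This is a sketch under assumptions: the text does not specify the random distribution, so the random case below simply draws independent uniform shares and rescales them to the aggregate workload. The function names and the example total of 3000 MIPS are hypothetical.

```python
import random
from typing import List


def uniform_workload(total_mips: float, num_ans: int) -> List[float]:
    """Split the aggregate workload evenly across all ANs."""
    return [total_mips / num_ans] * num_ans


def random_workload(total_mips: float, num_ans: int,
                    rng: random.Random) -> List[float]:
    """Split the aggregate workload using random shares (assumed distribution)."""
    shares = [rng.random() for _ in range(num_ans)]
    scale = sum(shares)
    if scale == 0.0:
        # Degenerate draw; fall back to the uniform split.
        return uniform_workload(total_mips, num_ans)
    return [total_mips * share / scale for share in shares]


rng = random.Random(42)                  # fixed seed for repeatable experiments
even = uniform_workload(3000.0, 25)      # 120 MIPS at every AN
skewed = random_workload(3000.0, 25, rng)
print(max(even) - min(even), max(skewed) - min(skewed))
```

Both generators preserve the aggregate workload, so differences in the results between the two scenarios stem from the spatial spread of demand and not from its total volume.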

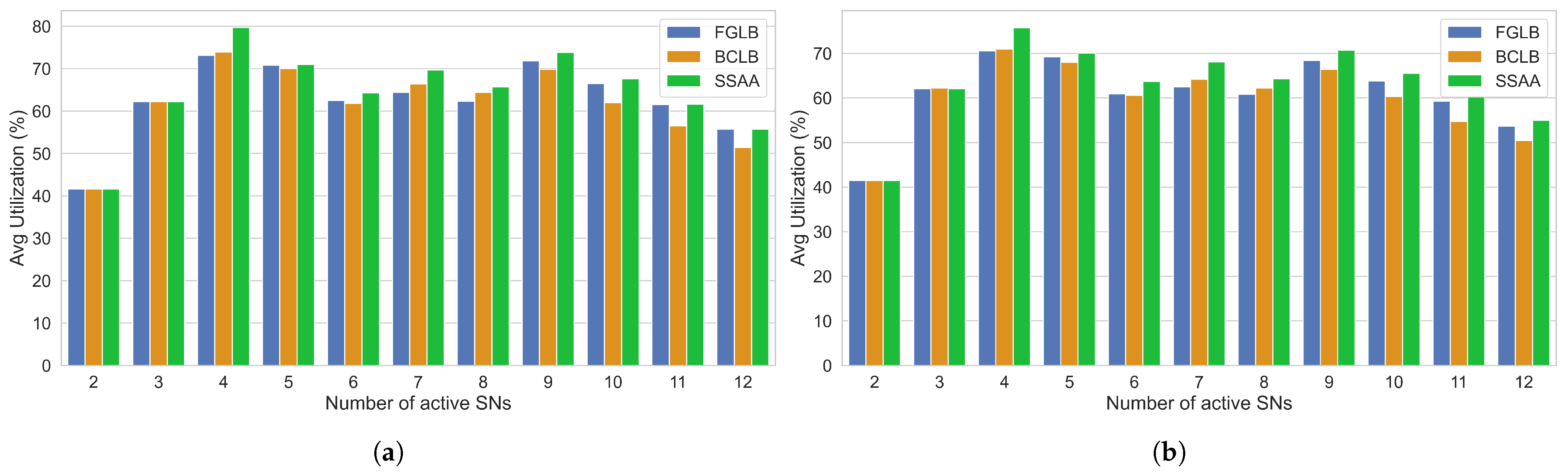

6.2. Resource Utilization Efficiency

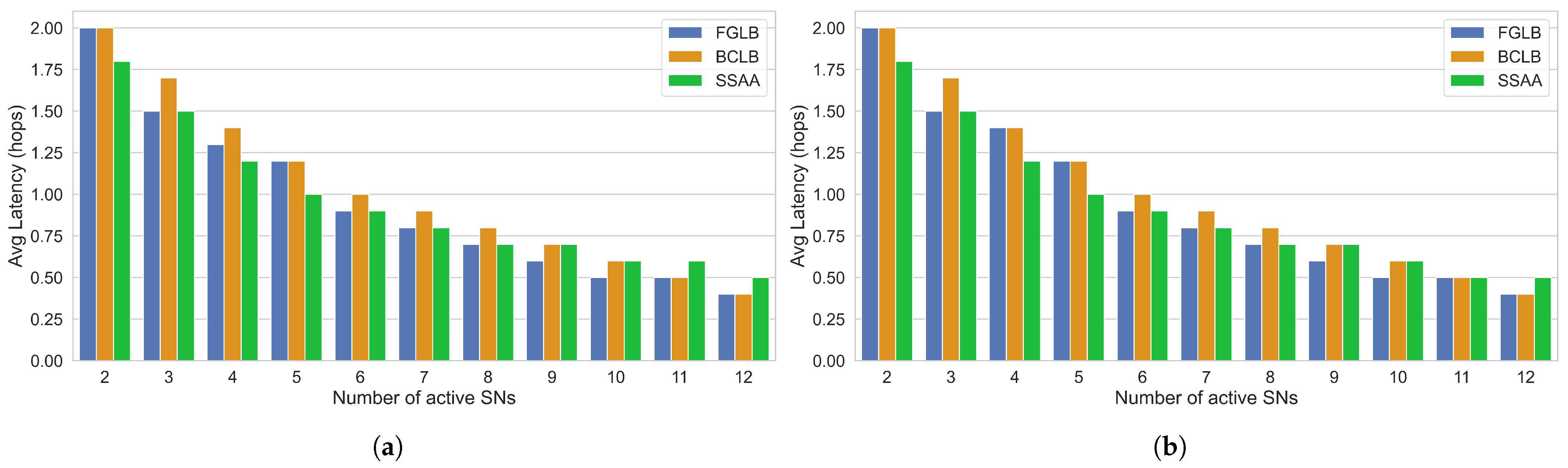

6.3. Latency Performance

6.4. Load Balancing Capability

6.5. Service Reliability

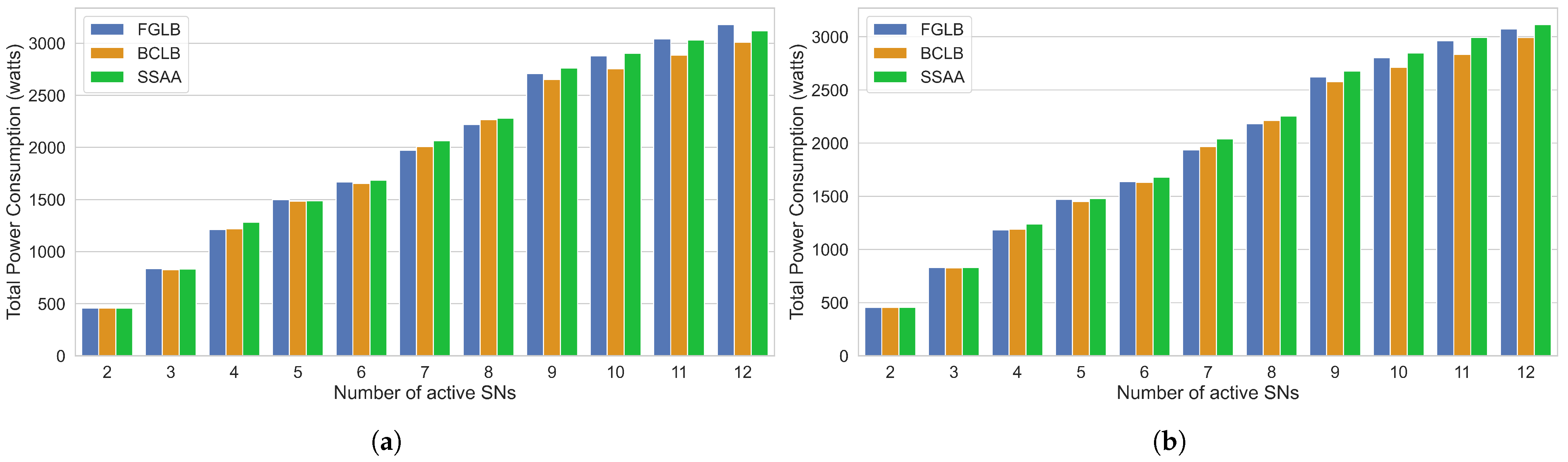

6.6. Energy Efficiency

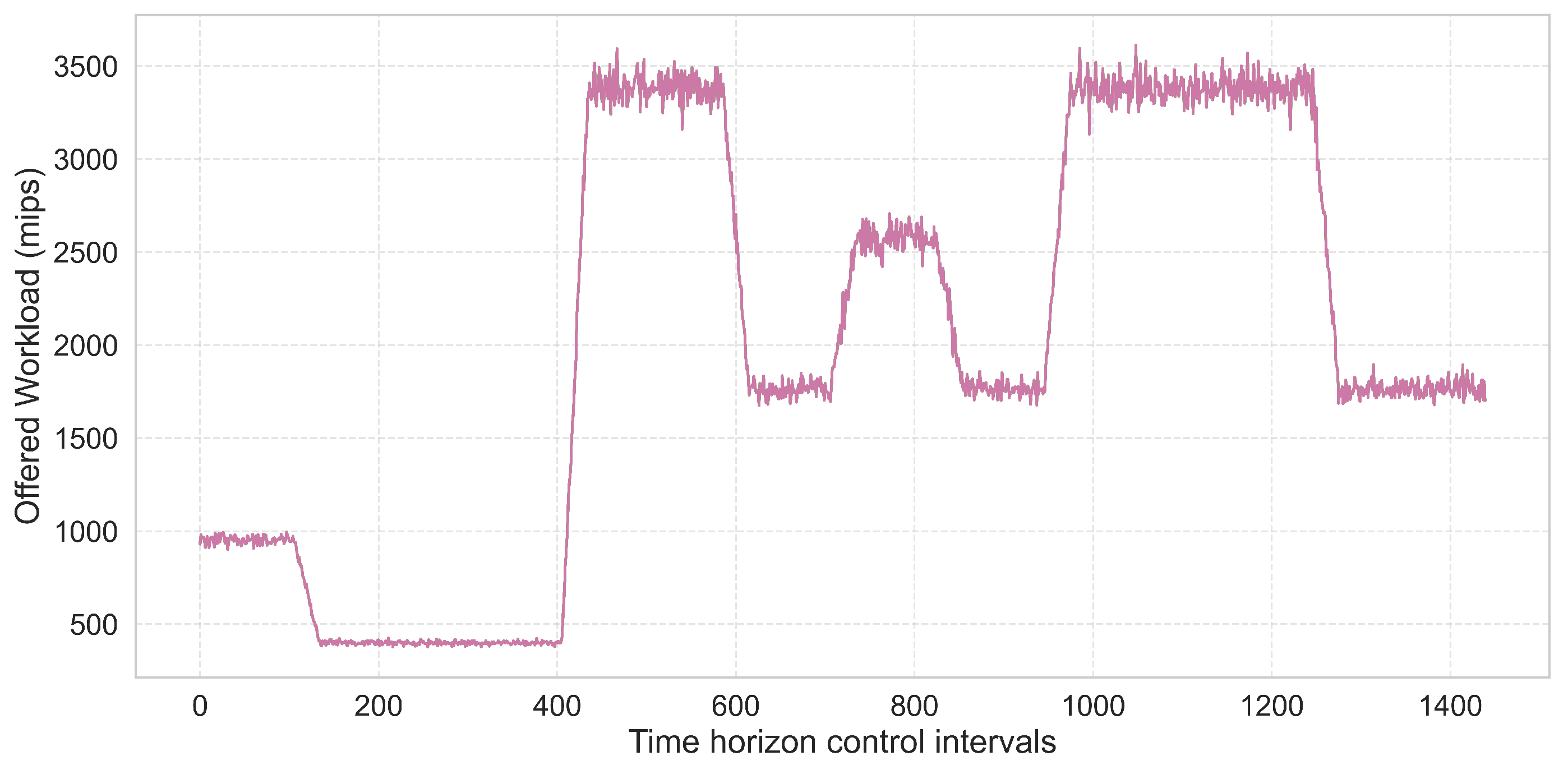

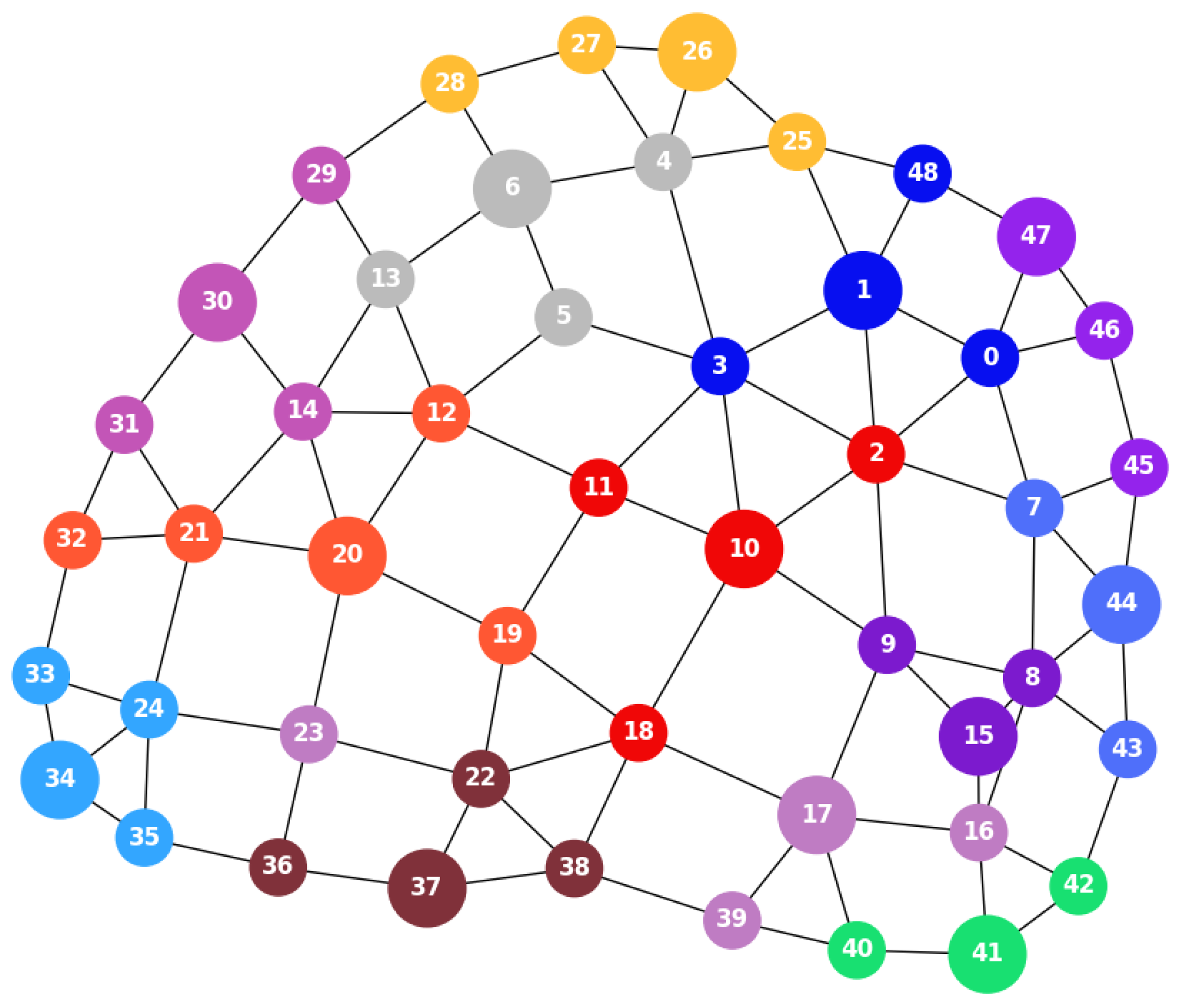

6.7. Performance Evaluation Under Irregular Topology and Real-World Traces

- A. Mean Latency

- B. Load Balancing Performance

- C. Average Resource Utilization

- D. Service Reliability

- E. Hysteresis Stability Window and EWMA Smoothing Factor

7. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| ACA | Access Node-to-Compute Node Assignment |
| AN | Access Node |
| AR | Augmented Reality |
| BCLB | Betweenness Centrality with Load Balancing |
| CSS | Compute-Node Selection Set |
| DL | Deep Learning |
| DRL | Deep Reinforcement Learning |
| ENOM | Edge Node Orchestration Module |
| ESP | Edge Server Placement |
| EWMA | Exponentially Weighted Moving Average |
| FGLB | Forward Greedy with Load Balancing |
| ILP | Integer Linear Programming |
| IoT | Internet of Things |
| LSTM | Long Short-Term Memory |
| MEC | Multi-Access Edge Computing |
| MIPS | Million Instructions Per Second |
| OPEX | Operational Expenditure |
| QoE | Quality of Experience |
| QoS | Quality of Service |
| RAN | Radio Access Network |
| RR | Round Robin |
| SSAA | Service-Node Selection and Access-Node Assignment |
| SLA | Service Level Agreement |
| SN | Service Node |

References

1. Satyanarayanan, M. The Emergence of Edge Computing. Computer 2017, 50, 30–39.

2. Wu, Q.; Wang, W.; Fan, P.; Fan, Q.; Wang, J.; Letaief, K.B. URLLC-Aware Resource Allocation for Heterogeneous Vehicular Edge Computing. IEEE Trans. Veh. Technol. 2024, 73, 11789–11805.

3. Satyanarayanan, M.; Bahl, P.; Caceres, R.; Davies, N. The Case for VM-Based Cloudlets in Mobile Computing. IEEE Pervasive Comput. 2009, 8, 14–23.

4. Hu, Y.C.; Patel, M.; Sabella, D.; Sprecher, N.; Young, V. Mobile Edge Computing: A Key Technology Towards 5G. ETSI White Pap. 2015, 11, 1–16.

5. Cao, K.; Liu, Y.; Meng, G.; Sun, Q. An Overview on Edge Computing Research. IEEE Access 2020, 8, 85714–85728.

6. Li, S. On Uniform Capacitated k-Median Beyond the Natural LP Relaxation. ACM Trans. Algorithms 2017, 13, 1–18.

7. Oikonomou, E.; Rouskas, A. Efficient Schemes for Optimizing Load Balancing and Communication Cost in Edge Computing Networks. Information 2024, 15, 670.

8. Oikonomou, E.; Plastras, S.; Tsoumatidis, D.; Skoutas, D.N.; Rouskas, A. Workload Prediction for Efficient Node Management in Mobile Edge Computing. In Proceedings of the 2024 IFIP Networking Conference (IFIP Networking), Thessaloniki, Greece, 3–6 June 2024; pp. 461–467.

9. Oikonomou, E.; Rouskas, A.; Skoutas, D.N. Adaptive Node Management in Edge Networks Under Time-Varying Workloads. In Proceedings of the 2024 IEEE Virtual Conference on Communications (VCC), NY, USA, 3–5 December 2024; pp. 1–6.

10. Jia, M.; Cao, J.; Liang, W. Optimal Cloudlet Placement and User to Cloudlet Allocation in Wireless Metropolitan Area Networks. IEEE Trans. Cloud Comput. 2017, 5, 725–737.

11. Wang, S.; Zhao, Y.; Xu, J.; Yuan, J.; Hsu, C.-H. Edge server placement in mobile edge computing. J. Parallel Distrib. Comput. 2019, 127, 160–168.

12. Kasi, S.K.; Kasi, M.K.; Ali, K.; Raza, M.; Afzal, H.; Lasebae, A.; Naeem, B.; Islam, S.; Rodrigues, J.J. Heuristic Edge Server Placement in Industrial Internet of Things and Cellular Networks. IEEE Internet Things J. 2021, 8, 10308–10317.

13. Shibata, K.; Miyata, S. Edge Server Placement and Task Allocation for Maximum Delay Reduction. IEEE Open J. Commun. Soc. 2025, 6, 6207–6217.

14. Maqsood, T.; Zaman, S.K.U.; Qayyum, A.; Rehman, F.; Mustafa, S.; Shuja, J. Adaptive thresholds for improved load balancing in mobile edge computing using K-means clustering. Telecommun. Syst. 2024, 86, 519–532.

15. Li, Y.; Wang, S. An Energy-Aware Edge Server Placement Algorithm in Mobile Edge Computing. In Proceedings of the 2018 IEEE International Conference on Edge Computing (EDGE), San Francisco, CA, USA, 2–7 July 2018; pp. 66–73.

16. Fan, Q.; Ansari, N. Towards Workload Balancing in Fog Computing Empowered IoT. IEEE Trans. Netw. Sci. Eng. 2020, 7, 253–262.

17. Xie, Z.; Xia, X.; Hu, B.; Khalil, I.; Wang, Z.; Cui, G.; Xie, G.; Xue, M. Optimizing Energy Efficiency with QoE-Awareness in Multi-Access Edge Computing. In Proceedings of the 2025 IEEE/ACM International Symposium on Quality of Service (IWQoS), Gold Coast, Australia, 2–4 July 2025; pp. 1–10.

18. Tang, Y.; Zhang, H.; Li, Y.; Wang, Z. SDD: Spectral clustering and double deep Q-network based edge server deployment strategy. Front. Comput. Sci. 2025, 7, 1668495.

19. Zeng, Y.; Ye, H.; Wang, S. A Deep Reinforcement Learning-Based Approach to Resilient Service Placement for Mobile Edge Computing. IEEE Access 2025, 13, 159231–159239.

20. Yu, P.; Wang, X.; Zhou, L.; Li, K. Cost-Effective Server Deployment for Multi-Access Edge Networks: A Cooperative Scheme. IEEE Trans. Parallel Distrib. Syst. 2024, 35, 1583–1597.

21. Xu, W. A Survey on Dynamic Heterogeneous Resource Scheduling Optimization in Mobile Edge Computing. Sci. J. Intell. Syst. Res. 2025, 7, 54–62.

22. Tang, L.; Hou, Q.; Wen, W.; Fang, D.; Chen, Q. Digital-Twin-Assisted VNF Migration Through Resource Prediction in SDN/NFV-Enabled IoT Networks. IEEE Internet Things J. 2024, 11, 35445–35464.

23. Nadukuru, S. AI-Based Workload Prediction Models for Optimizing Serverless Resource Allocation. J. Quantum Sci. Technol. 2024, 1, 45–54.

24. Xu, W.; Li, Y.; Chen, M.; Wu, J. Fairness-Aware Budgeted Edge Server Placement for Connected Autonomous Vehicles. IEEE Trans. Mob. Comput. 2025, 24, 4762–4776.

25. Burbano, J.S.; Abdullah, A.; Zhantileuov, E.; Liyanage, M.; Schuster, R. Dynamic Edge Server Selection in Time-Varying Environments: A Reliability-Aware Predictive Approach. arXiv 2025, arXiv:2511.10146.

26. Shi, Y.; Yi, C.; Wang, R.; Wu, Q.; Chen, B.; Cai, J. Service Migration or Task Rerouting: A Two-Timescale Online Resource Optimization for MEC. IEEE Trans. Wirel. Commun. 2024, 23, 1503–1519.

27. Montgomery, D.C.; Jennings, C.L.; Kulahci, M. Introduction to Time Series Analysis and Forecasting, 2nd ed.; John Wiley & Sons: Hoboken, NJ, USA, 2015.

28. Dohan, D.; Karp, S.; Matejek, B. K-Median Algorithms: Theory in Practice. Working Paper, Princeton University. 2015. Available online: https://www.cs.princeton.edu/courses/archive/fall14/cos521/projects/kmedian.pdf (accessed on 26 March 2026).

29. Karaca, O.; Tihanyi, D.; Kamgarpour, M. Performance guarantees of forward and reverse greedy algorithms for minimizing nonsupermodular nonsubmodular functions on a matroid. Oper. Res. Lett. 2021, 49, 855–861.

30. Chernoskutov, M.; Ineichen, Y.; Bekas, C. Heuristic Algorithm for Approximation Betweenness Centrality Using Graph Coarsening. Procedia Comput. Sci. 2015, 66, 83–92.

31. SPECpower_ssj2008. Available online: https://spec.org/power_ssj2008/results/res2024q4/power_ssj2008-20241118-01475.html (accessed on 11 February 2025).

32. BitBrains. GWA-T-12 BitBrains. Available online: https://atlarge-research.com/gwa-t-12 (accessed on 28 February 2026).

| Period | Time Window | Load Intensity | Avg. Load (MIPS) | Load Dispersion |
|---|---|---|---|---|
| P1 | 00:00–02:00 | Medium–High | 215.0 | 90 |
| P2 | 02:00–07:00 | Medium | 145.0 | 90 |
| P3 | 07:00–10:00 | High | 275.0 | 90 |
| P4 | 10:00–12:00 | Medium | 145.0 | 90 |
| P5 | 12:00–14:00 | Medium–Low | 85.0 | 70 |
| P6 | 14:00–16:00 | Low | 30.0 | 60 |
| P7 | 16:00–21:00 | High | 275.0 | 90 |
| P8 | 21:00–24:00 | Medium | 145.0 | 90 |

| Parameter | Value | Definition |
|---|---|---|
| M | 25 | Total number of ANs |
|  |  | Number of Active SNs |
| C | 500 | SN Processing Capacity (MIPS) |
|  | 1 | Manhattan distance between adjacent ANs (hops) |
|  | 35%, 75% | Target Utilization Bounds (Low–High) |
|  | 0.75 | EWMA smoothing factor |
| L | 5 | Hysteresis stability window (time epochs) |

| Parameter | Value | Definition |
|---|---|---|
| M | 49 | Total number of ANs |
|  |  | Number of Active SNs |
| C | 500 | SN Processing Capacity (MIPS) |
|  | 1 | Manhattan distance between adjacent ANs (hops) |
|  | 35%, 75% | Target Utilization Bounds (Low–High) |
|  | 0.5, 0.75, 0.9 | EWMA smoothing factor |
| L | 5, 10, 20 | Hysteresis stability window (time epochs) |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Oikonomou, E.; Rouskas, A. Workload-Aware Edge Node Orchestration and Dynamic Resource Scaling in MEC. Future Internet 2026, 18, 184. https://doi.org/10.3390/fi18040184
