1. Introduction

The widespread adoption of the internet has made communication, trade, and the spread of information much easier. Nevertheless, it has also provided ample grounds for cyberattacks. Among these, malicious URLs remain one of the most common threats and act as gateways for phishing, malware distribution, and website defacement attacks [1,2]. Conventional defense approaches, such as blocklists and heuristic rules, are unable to keep up with fast-changing attacker tactics [3]. As a result, deep-learning methods have been adopted for malicious URL detection, especially transformer-based models that use contextual representations to attain high detection rates [4,5].

Although transformer-based architectures, such as BERT and ELECTRA, have demonstrated strong performance in malicious URL detection, their substantial memory requirements and slow inference make them impractical for real-time detection or deployment on resource-constrained edge computing devices [6,7]. Knowledge distillation (KD) has been introduced as a solution: the knowledge of a large teacher model is transferred to a smaller student model to improve computational efficiency [5,8]. Nonetheless, most existing KD-based methods rely predominantly on classification loss or soft-target guidance. This could limit the models’ ability to learn structured and discriminative feature representations. Consequently, these KD-based approaches may fail to separate malicious and benign URLs that share statistical language patterns, particularly in noisy or imbalanced cases [2,7]. Meanwhile, contrastive learning (CL) has shown great promise in enforcing tighter embedding alignment, although it is currently underutilized for malicious URL detection.

To mitigate these limitations, we propose a lightweight transformer (named Contra-KD) integrating knowledge distillation and contrastive learning to improve computation efficiency and the quality of data representation. Contra-KD demonstrates effective discrimination between malicious and benign URLs, even under imbalanced or adversarial conditions. It attains this by leveraging a teacher-student paradigm enhanced with structured embedding alignment.

The contributions of this work are summarized as follows:

A unified framework that integrates contrastive learning and knowledge distillation to enhance both computational efficiency and discriminative feature learning in a lightweight transformer model.

Design of Contra-KD, an ultra-compact transformer: A 6-layer, 8.8 M parameter model designed for malicious URL detection in resource-constrained environments.

Development of a hybrid loss function: A composite loss that exploits supervised classification loss, Kullback–Leibler-divergence-based distillation and contrastive loss to enhance model generalization under class imbalance and adversarial obfuscation.

2. Related Works

Classic malicious URL detection systems relied primarily on blocklists and heuristic rule-based approaches. Though these methods are computationally efficient, they struggle to cope with fast-evolving obfuscation tactics such as character substitution, smart redirection, and other stealthy tricks [9]. To overcome these limitations, machine learning models such as XGBoost, decision trees, and ensemble classifiers have been proposed. These approaches rely on handcrafted lexical and host-based features and perform well on balanced datasets. Nevertheless, they are vulnerable to adversarial engineering and hidden feature correlations [10,11].

With the widespread use of deep learning, convolutional neural networks (CNNs) and recurrent neural networks (RNNs) have been introduced to analyze and identify malicious URLs. Compared to classical machine learning approaches, deep-learning models eliminate the need for manual feature engineering and automatically derive significant attributes from URL data. Sarkhi et al. proposed lightweight CNNs to detect malicious URL attacks [12]. Transformer-based architectures have recently become state of the art in malicious URL detection due to their capacity to capture long-range and contextual dependencies in URL strings. Models such as BERT, DistilBERT, and ELECTRA achieve promising performance in detecting malicious URLs [13,14]. Rao et al. proposed a hybrid super learner ensemble model for phishing detection on mobile devices [15]. The proposed model, named Phish-Jam, employs a super learner ensemble that aggregates predictions from various machine learning algorithms to distinguish legitimate from phishing websites.

Zaimi et al. introduced BERT-PhishFinder, which uses an optimized DistilBERT to achieve strong results in identifying phishing URLs [16]. Likewise, Zhang et al. and Zheng et al. proposed detectors based on modular lexical and structural webpage data, including ConvBERT-LMS and hybrids using BiLSTM, to further improve detection accuracy [17,18]. These models are generally large and computationally intensive, making them impractical for edge deployment or real-time applications.

Knowledge distillation (KD) is one of the central model compression strategies for alleviating the inefficiency of large transformers [19]. Early works such as DistilBERT and TinyBERT demonstrated that student models can achieve accuracy comparable to that of their teacher models with fewer parameters and lower computational cost [20,21]. More recent developments introduced task-specific and adaptive KD algorithms, such as layer-wise adaptive distillation and autocorrelation matrix distillation, which enhance transformer compression [22,23]. In cybersecurity applications, KD has been employed to develop lightweight models for malicious URL detection, malware identification, and intrusion detection [24]. Its contribution is crucial, especially in resource-constrained environments. Nevertheless, existing KD methods depend predominantly on heuristics to design pretext tasks, which could restrict the generalization capability of the learned representations [25].

On the other hand, contrastive learning (CL) has proven effective in enhancing representation learning by promoting similarity among positive pairs and dissimilarity among negative pairs in the embedding space. Çağatan and Gao et al. discussed key techniques such as SimCLR and SimCSE, which show how contrastive objectives can provide strong sentence and visual representations [26,27]. Knowledge transfer has recently been enhanced by combining CL with KD. For instance, Bao et al. proposed contrast-enhanced representation normalization to improve student model embeddings during distillation [28]. Such hybrid strategies have shown better generalization in NLP and vision tasks, yet there is less work on their use in cybersecurity and URL detection.

The advancement of efficient transformer architectures enables models to be deployed in real-world applications where computational resources are limited. Surveys highlight pruning, quantization, and efficient attention mechanisms as major strategies for reducing transformer complexity [29]. Models such as MobileBERT and Reformer provide compact architectures and memory-efficient attention mechanisms [30,31]. These developments underline the potential of integrating KD and CL within a transformer design to detect malicious URLs.

In summary, transformer-based models have substantially advanced malicious URL detection. Nonetheless, their high computational cost and vulnerability to adversarial obfuscation impede real-world deployment. KD is one route to lightweight models, yet it tends to overlook embedding-level discriminative learning. CL, in contrast, offers powerful representation learning, but its integration with KD for malicious URL detection is still underexplored. This study therefore presents a lightweight transformer model, Contra-KD, which combines KD and CL for efficient malicious URL detection.

3. Methodology

This study presents a lightweight transformer-based approach that integrates contrastive learning with knowledge distillation in the application of malicious URL detection.

Figure 1 illustrates the overview of the proposed Contra-KD framework. The framework consists of five main phases: data collection, data preprocessing, model development, ablation study, and system evaluation. Following data collection and exploratory data analysis, an imbalanced-class subset was constructed. Next, the data samples were preprocessed for the subsequent phases. Model development incorporates knowledge distillation and contrastive loss in a transformer-based model. An ablation study was then performed on the contrastive learning components to evaluate their effects. Finally, the model was trained and evaluated on unseen data samples for performance assessment. Details of each phase are discussed in the following sections.

3.1. Dataset

In this work, a publicly available dataset, named Malicious URL dataset, sourced from Kaggle, is used [32]. The dataset consists of 651,191 URLs in four classes: 428,103 benign, 96,457 defacement, 94,111 phishing, and 32,520 malware URLs. From it, a subset of 40,000 URLs that preserves the original class distribution was drawn: 26,263 benign URLs, 5941 defacement URLs, 5806 phishing URLs, and 1989 malware URLs. This imbalance is expected; legitimate web traffic vastly outnumbers malicious activity, and among malicious types, defacement (often automated) may be more common than targeted phishing or malware distribution. The small proportion of malware URLs suggests they are either harder to detect or less frequently captured in this dataset.

The dataset was then segmented into training (80%), validation (10%), and testing (10%) sets.
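The 80/10/10 partition can be sketched as follows. This is a minimal standard-library illustration that assumes the split is stratified by class, which the text does not state; the function name `stratified_split`, the seed, and the toy data are hypothetical.

```python
import random
from collections import defaultdict


def stratified_split(samples, train=0.8, val=0.1, seed=42):
    """Split (url, label) pairs into train/val/test sets, class by class."""
    by_label = defaultdict(list)
    for url, label in samples:
        by_label[label].append((url, label))

    rng = random.Random(seed)
    parts = {"train": [], "val": [], "test": []}
    for label in sorted(by_label):
        items = by_label[label]
        rng.shuffle(items)
        n_train = int(len(items) * train)
        n_val = int(len(items) * val)
        parts["train"] += items[:n_train]
        parts["val"] += items[n_train:n_train + n_val]
        # Whatever remains (about 10%) becomes the test set.
        parts["test"] += items[n_train + n_val:]
    return parts


# Toy data (hypothetical) with roughly the subset's class proportions.
toy = (
    [(f"benign-{i}", "benign") for i in range(260)]
    + [(f"defacement-{i}", "defacement") for i in range(60)]
    + [(f"phishing-{i}", "phishing") for i in range(60)]
    + [(f"malware-{i}", "malware") for i in range(20)]
)
parts = stratified_split(toy)
print({name: len(rows) for name, rows in parts.items()})
```

Splitting per class keeps the rare malware class represented in the validation and test sets, which matters for the per-class metrics reported later.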

3.2. Dataset Comprehensive Analysis

This dataset is highly imbalanced, with benign URLs comprising 65.66% of the data and malware URLs making up less than 5%. The label counts show the following distribution:

Benign URLs are the most populous, comprising around 66% of the samples.

Defacement URLs follow at about 15%.

Phishing URLs account for about 14.5%.

Malware URLs are the rarest, at about 5%.
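The class shares can be recomputed directly from the subset counts given in Section 3.1. The short sketch below does this; the variable and function names are illustrative only.

```python
# Subset class counts as reported in Section 3.1.
subset_counts = {
    "benign": 26_263,
    "defacement": 5_941,
    "phishing": 5_806,
    "malware": 1_989,
}


def class_shares(counts):
    """Return each class's share of the total, in percent."""
    total = sum(counts.values())
    return {label: 100.0 * n / total for label, n in counts.items()}


shares = class_shares(subset_counts)
for label, pct in shares.items():
    print(f"{label:<11} {pct:5.2f}%")
```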

The length of a URL (number of characters) can be an indicative feature. For this dataset:

Phishing URLs have the shortest median length at 35 characters. This implies that phishing URLs are often quite short, perhaps in an effort to disguise them as harmless or to meet display constraints (e.g., emails, SMS). Link shorteners can also create short URLs and are commonly abused by phishing campaigns.

Defacement URLs have the longest median length (81 characters). Website defacement attacks often target specific pages or inject malicious content, and the longer URLs may contain extensive query parameters or random strings that obscure the attack or target deep links in a site.

The median malware URL length (49 characters) and the median benign URL length (46 characters) are very similar, both in the mid-to-upper 40s. This proximity suggests that typical malware-hosting URLs cannot be distinguished from benign URLs by length, and therefore URL length alone is a weak discriminator between these classes.

Benign URLs, which have a median length of 46 characters, include typical web addresses with directory structures and parameters but are generally shorter than defacement URLs.

The median lengths show a distinct gradient: phishing URLs are the shortest, followed by benign and malware URLs (which are closely grouped), and defacement URLs are the longest. This mirrors the strategy of each class: phishing URLs aim to look simple, malware URLs hide in plain sight, and defacement URLs reach deep into a site. That said, these are only median values. The boxplot in Figure 2 shows the distribution of URL length by type, including variance and outliers, which could provide further insight for detection strategies.

In addition to length, we also extracted several hand-crafted features to capture structural anomalies:

Number of dots (.): Benign URLs typically contain 3–4 dots, while phishing and malware URLs often contain many subdomains or IP-like strings, leading to higher dot counts of 6–8.

Presence of an IP address: Around 12% of phishing URLs include a raw IP address (e.g., http://192.168.1.1/…), a strong hint of deception. Fewer than 1% of benign URLs do.

Number of slashes (/): Malicious URLs contain more embedded path segments; phishing URLs have the highest slash count (median 7) compared with benign URLs (median 4).

Use of HTTPS: While most benign sites now use HTTPS, 23% of phishing URLs also use HTTPS to fool users. Defacement URLs tend to stick to HTTP.

Suspicious TLDs: Specific top-level domains (for example, .tk, .ml, .ga, .cf) are over-represented among phishing and malware URLs. A little over 8% of the malicious URLs use such free TLDs, compared with 0.3% of benign ones.

Use of special characters: Special characters such as @, -, or = are commonly used in malicious URLs. Phishing URLs frequently use the @ symbol to disguise the real domain, and malware URLs use encoded parameters (%3D, %2F).

Top-level domain (TLD) analysis by class:

Benign: Dominated by .com (52%), .org (12%), .net (8%), and country-code TLDs such as .ca and .uk (10%).

Defacement: Similar to benign, but with more .com (60%) and occasional others such as .br, reflecting the sites that are commonly targeted (popular CMS platforms).

Phishing: A wider range of TLDs, including many free or less common ones (.tk, .ml, .ga, .xyz). In addition, many fake URLs resemble a real brand because they place the brand name in subdomains (e.g., paypal.com.secure-login.xyz).

Malware: Often served on .com, .net, and .org, but also on .info, .biz, and .ru. Malware URLs frequently include domain names that are intentionally misspelled or concatenated (e.g., update-account-amazon.com).

A closer look at these results shows clear distinctions between the four URL types. Length, dot count, slash count, presence of IP addresses, and TLD already separate the classes reasonably well. More advanced features (n-grams, word embeddings) would probably improve detection further. The dataset is appropriate for training machine learning models; however, the class imbalance should be addressed with resampling or a cost-sensitive approach. These findings lay the groundwork for building a phishing/malware detection system.
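The hand-crafted features discussed in this section can be computed with a few lines of standard-library code. The sketch below is illustrative only: the function name `lexical_features`, the feature keys, and the IPv4 pattern are assumptions, and the suspicious-TLD set contains only the free TLDs named in the text.

```python
import re
from urllib.parse import urlsplit

SUSPICIOUS_TLDS = {"tk", "ml", "ga", "cf"}  # free TLDs named in the text
IPV4_RE = re.compile(r"^\d{1,3}(\.\d{1,3}){3}$")  # simple dotted-quad check


def lexical_features(url):
    """Extract simple structural features from a URL string."""
    # urlsplit only fills in the host when a scheme or "//" prefix is present.
    parts = urlsplit(url if "://" in url else "//" + url)
    host = (parts.hostname or "").lower()
    is_ip = bool(IPV4_RE.match(host))
    tld = "" if is_ip or "." not in host else host.rsplit(".", 1)[-1]
    return {
        "url_len": len(url),
        "n_dots": url.count("."),
        "n_slashes": url.count("/"),
        "has_ip": is_ip,
        "uses_https": parts.scheme == "https",
        "tld": tld,
        "suspicious_tld": tld in SUSPICIOUS_TLDS,
        "has_at": "@" in url,
    }


print(lexical_features("http://192.168.1.1/login"))
print(lexical_features("http://paypal.com@evil.tk/a"))
```

In the second example the text before `@` is parsed as user information, so the real host is `evil.tk`; this is the disguise described under special characters above.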

In addition, this dataset has been widely used in academic work to benchmark and evaluate phishing detection systems. Notable examples include “Detecting Malicious URLs Using Lexical Analysis” [33] and “Detecting Phishing with Streaming Analytics” [34], which trained on this same dataset, suggesting that it is a representative sample for malicious URL classification. Its use in prior publications supports it as a suitable dataset for building detection algorithms, because it contains a realistic blend of benign and malicious samples covering several attack types (malware, phishing, and defacement). Leveraging the same dataset as previous work means that our findings can be directly compared with prior results and that our models are assessed on a well-defined, real-world corpus.

3.3. Data Preprocessing

In this phase, some preprocessing steps were taken to clean the dataset and then transform it as input to the learning model:

Handling missing values: Rows which had null or blank values in any of the columns were deleted, so that only complete data is used for training.

Feature encoding: A new numerical feature (“url-len”) holding the length of each URL was added. This feature was chosen because URL length is a well-known cue on which benign and malicious URLs (especially phishing or malware URLs) tend to differ [7].

Converting categorical labels to numerical labels: The URL class labels were converted into numerical form to make them compatible with the output layer of the model.

Tokenization: All URLs were tokenized into sub-words using a pretrained tokenizer. This step was performed to parse the long URL strings into manageable pieces so that the model could efficiently learn structural and lexical patterns.

Dynamic padding: For each training batch, dynamic padding was applied to handle variable-length sequences. Each batch was padded to the length of its longest sequence, which preserves the sequence content while reducing computational overhead.
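The label encoding and dynamic padding steps can be illustrated with a small sketch. The label mapping follows step 1.3 of Algorithm 1; the function name `pad_batch`, the padding id of 0, and the toy token ids are assumptions. In a HuggingFace pipeline the same effect is usually obtained with `DataCollatorWithPadding`, although the text does not say which utility was used.

```python
# Label mapping as listed in Algorithm 1 (step 1.3).
LABEL_TO_ID = {"benign": 0, "defacement": 1, "malware": 2, "phishing": 3}


def pad_batch(token_id_lists, pad_id=0, max_len=512):
    """Truncate to max_len, then pad each sequence to the longest in the batch."""
    truncated = [ids[:max_len] for ids in token_id_lists]
    width = max(len(ids) for ids in truncated)
    input_ids = [ids + [pad_id] * (width - len(ids)) for ids in truncated]
    # The mask marks real tokens with 1 and padding with 0.
    attention_mask = [[1] * len(ids) + [0] * (width - len(ids)) for ids in truncated]
    return input_ids, attention_mask


# Hypothetical token ids for two URLs of different length.
batch_ids, batch_mask = pad_batch([[101, 7, 8, 9, 102], [101, 7, 102]])
print(batch_ids)
print(batch_mask)
```

Because the padded width is set per batch rather than fixed at 512, short URLs do not incur the cost of full-length sequences.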

3.4. Model Development and Validation

In this phase, Contra-KD was designed and developed as a lightweight transformer-based architecture for malicious URL detection. Unlike conventional deep-learning models, which are usually computationally intensive, the proposed model combines knowledge distillation with contrastive representation learning to capture discriminative features. Furthermore, the model leverages the generalization ability of a large-scale teacher model for reliable detection.

Figure 3 illustrates the overall architecture of the proposed Contra-KD.

The proposed Contra-KD incorporates a compact 6-layer transformer encoder with four attention heads, yielding approximately 8.8 million parameters. Input URLs are first tokenized using the HuggingFace AutoTokenizer and truncated to a maximum sequence length of 512. Next, the tokens are processed by the transformer encoder to generate contextualized representations. In this study, the ELECTRA model was chosen as the teacher network due to its capacity to capture subtle token-level dependencies in URLs. Knowledge is transferred from this teacher network (about 109 M parameters) to Contra-KD (approximately 8.8 M parameters), which yields a lightweight student model.

The experiments were conducted on Google Colab, a cloud-based environment suited to machine learning tasks. The GPU used was an NVIDIA A100 with 40 GB of memory. The random seed was set to 42, and CUDA deterministic mode was enabled.

In the proposed architecture, AdamW was employed as the optimizer because its decoupled weight decay improves generalization. Specifically, it mitigates overfitting by penalizing large weights without interfering with the momentum updates. The learning rate was set to 2 × 10⁻⁵, and the batch size was fixed at 16. The model was trained for 15 epochs. The hyperparameters are summarized in Table 1.

In order to fully leverage the supervised class labels and teacher model guidance, Contra-KD incorporates multiple components to optimize its performance. Cross-entropy loss (CE) is adopted as an objective function to ensure accurate classification of the input URLs based on ground-truth labels such as benign, defacement, malware, and phishing. Additionally, self-supervised contrastive loss is taken into account to group similar URL embeddings and separate dissimilar URLs to capture meaningful discriminative feature representations. Kullback–Leibler (KL) divergence is adopted in the knowledge distillation loss to transfer the soft probability distributions (logits) from the teacher model to the compact student model. With this, the student model achieves better generalization and greater computational efficiency with fewer parameters. The total loss is formulated as a weighted combination:

where α = 0.5 balances distillation and supervised learning, T = 0.1 is the distillation temperature applied to the logits, β = 0.5 weights the contrastive loss, which uses a temperature of 0.5, P_T and P_s are the teacher and student distributions, and γ is the ground-truth label.
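One form of the weighted combination that is consistent with these definitions can be written as follows. The placement of the weights, in particular the (1 − α) factor on the cross-entropy term, the symbol τ for the contrastive temperature, and the logit symbols z_T and z_s are assumptions made for illustration and are not the authors’ stated formula.

```latex
\mathcal{L}_{\text{total}}
  = (1-\alpha)\,\mathcal{L}_{\text{CE}}\big(\gamma,\ \operatorname{softmax}(z_s)\big)
  + \alpha\,\mathrm{KL}\!\left(P_T \,\|\, P_s\right)
  + \beta\,\mathcal{L}_{\text{CL}}(\tau),
\qquad
P_T = \operatorname{softmax}\!\left(\frac{z_T}{T}\right),\quad
P_s = \operatorname{softmax}\!\left(\frac{z_s}{T}\right)
```

Under this reading, α = 0.5 gives equal weight to the hard-label and teacher-guided terms, while β = 0.5 adds the contrastive term at half weight.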

Below is the detailed pseudocode of the training of the proposed Contra-KD (Algorithm 1):

Algorithm 1: Contra-KD Training Pseudocode

Input:

• Training set of URLs with ground-truth labels

• Pre-trained teacher model (frozen)

• Student model (6-layer ELECTRA-small that also returns embeddings)

• Hyperparameters:

○ α: distillation weight

○ β: contrastive loss weight

○ τ: temperature for contrastive similarity

○ T: temperature for distillation

○ learning rate, batch size, number of epochs

Output: Trained student model

Step 1: Data Preparation

1.1 Remove samples with missing values.

1.2 Add feature url_len (length of each URL).

1.3 Encode labels: benign → 0, defacement → 1, malware → 2, phishing → 3.

1.4 Tokenize all URLs using a pre-trained tokenizer (max length 512, dynamic padding).

1.5 Split into training (80%), validation (10%), and test (10%) sets.

Step 2: Initialization

2.1 Initialize student model with random weights.

2.2 Freeze teacher model and set it to evaluation mode.

Step 3: Training Loop

For each epoch:

Shuffle the training data. For each mini-batch:

a. Forward pass through student

b. Forward pass through teacher (no gradient)

c. Classification loss (cross-entropy)

d. Distillation loss (KL divergence with temperature T)

e. Contrastive loss on L2-normalized student embeddings (cosine similarity scaled by temperature τ)

f. Total loss (weighted combination of c, d, and e using α and β)

g. Backward pass: compute gradients of the total loss with respect to the student parameters

h. Update student using the AdamW optimizer with the given learning rate

After each epoch, evaluate on the validation set and keep the best model.

Step 4: Return the trained student model.
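Steps c to f of the training loop can be sketched numerically. The example below is a plain-Python illustration under stated assumptions rather than the authors’ implementation: positive pairs for the contrastive term are assumed to come from two embedding views of the same URL (for example two dropout passes, as in SimCSE), the cross-entropy term is assumed to be weighted by (1 − α), and all function names and toy values are hypothetical. A practical implementation would use a tensor library.

```python
import math


def softmax(logits, temperature=1.0):
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(student_logits, label):
    return -math.log(softmax(student_logits)[label])


def kd_kl(teacher_logits, student_logits, T):
    """KL(P_T || P_s) on temperature-scaled distributions."""
    p_t = softmax(teacher_logits, T)
    p_s = softmax(student_logits, T)
    return sum(pt * math.log(pt / max(ps, 1e-12))
               for pt, ps in zip(p_t, p_s) if pt > 0)


def l2_normalize(v):
    norm = math.sqrt(sum(x * x for x in v)) or 1.0
    return [x / norm for x in v]


def info_nce(view_a, view_b, tau):
    """InfoNCE: row i of view_a should match row i of view_b."""
    a = [l2_normalize(v) for v in view_a]
    b = [l2_normalize(v) for v in view_b]
    loss = 0.0
    for i, ai in enumerate(a):
        sims = [sum(x * y for x, y in zip(ai, bj)) / tau for bj in b]
        loss += -math.log(softmax(sims)[i])
    return loss / len(a)


def total_loss(student_logits, teacher_logits, labels, view_a, view_b,
               alpha=0.5, beta=0.5, T=0.1, tau=0.5):
    n = len(labels)
    ce = sum(cross_entropy(s, y) for s, y in zip(student_logits, labels)) / n
    kd = sum(kd_kl(t, s, T) for t, s in zip(teacher_logits, student_logits)) / n
    cl = info_nce(view_a, view_b, tau)
    return (1 - alpha) * ce + alpha * kd + beta * cl


# Toy batch of two URLs over the four classes (values are hypothetical).
student = [[1.5, 0.7, 0.2, 0.1], [0.1, 0.2, 0.3, 1.9]]
teacher = [[2.0, 0.5, 0.1, 0.1], [0.0, 0.1, 0.4, 2.2]]
labels = [0, 3]
emb_a = [[1.0, 0.1], [0.1, 1.0]]
emb_b = [[0.9, 0.2], [0.2, 0.9]]
loss_value = total_loss(student, teacher, labels, emb_a, emb_b)
print(f"total loss: {loss_value:.4f}")
```

The default arguments mirror the hyperparameter values reported in the text (α = 0.5, β = 0.5, T = 0.1, contrastive temperature 0.5).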

3.5. System Evaluation

Several performance metrics are used to measure the performance of Contra-KD, including accuracy, precision, recall, F1-score, Area Under the Receiver Operating Characteristic Curve (ROC-AUC), and Matthews Correlation Coefficient (MCC). Accuracy measures the proportion of URLs, malicious or benign, that are correctly classified by the model. Precision is the proportion of URLs flagged as malicious that are truly malicious, and recall is the proportion of malicious URLs in the dataset that are detected. F1-score is the harmonic mean of precision and recall, which yields an integrated measure. ROC-AUC estimates how well the model distinguishes between the URL categories and is computed as the average area under the ROC curve over all classes. Lastly, MCC is used to examine how reliable the classification is, taking into account true and false positives as well as true and false negatives. The calculations of the metrics are shown as follows:
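For reference, the standard definitions of these metrics for a single class, written in terms of true positives (TP), true negatives (TN), false positives (FP), and false negatives (FN), are given below. Applying them per class in a one-vs-rest manner and averaging the results is an assumption here; the averaging scheme is not stated in the text.

```latex
\begin{aligned}
\text{Accuracy}  &= \frac{TP + TN}{TP + TN + FP + FN}, &
\text{Precision} &= \frac{TP}{TP + FP}, \\[4pt]
\text{Recall}    &= \frac{TP}{TP + FN}, &
\text{F1}        &= \frac{2 \cdot \text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}}, \\[4pt]
\text{MCC}       &= \frac{TP \cdot TN - FP \cdot FN}
                         {\sqrt{(TP+FP)(TP+FN)(TN+FP)(TN+FN)}}
\end{aligned}
```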

4. Results and Discussion

A thorough evaluation of the proposed Contra-KD model was performed in this section. First, we conducted an ablation study to investigate the sensitivity of our model toward its hyperparameters (contrastive loss weight and temperature coefficient). In addition, we evaluated how the embedding layer affects the performance of our model. The performance of the model was evaluated based on several performance measures. These metrics are accuracy, precision, recall, F1-score, ROC-AUC, and MCC. Finally, the performance of Contra-KD was compared with other machine learning and deep-learning methods.

4.1. Ablation Study

We conducted extensive ablation experiments on the dataset to evaluate the contribution of each component of Contra-KD. The experiments focus on the effects of hyperparameters and architectural decisions in the context of contrastive learning and knowledge distillation.

4.1.1. Contrastive Loss Weight

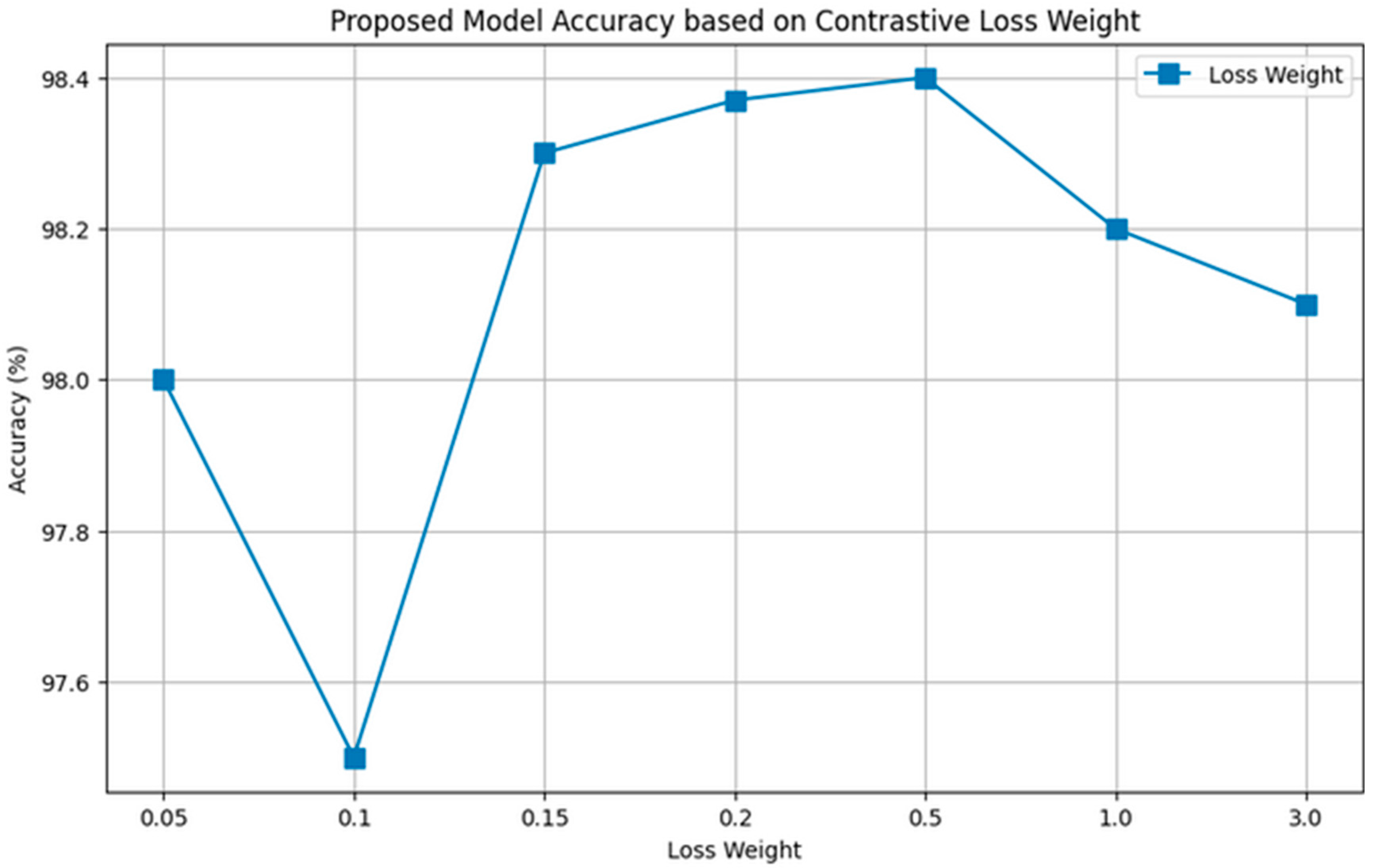

In this experiment, the performance of the model was evaluated while varying the contrastive loss weight α. The following values of α were investigated to identify its influence on the optimization process: 0.05, 0.1, 0.15, 0.2, 0.5, 1.0, and 3.0. The accuracies of Contra-KD across these α values are illustrated in Figure 4.

Based on the findings, the best performance is attained at α = 0.5, with an accuracy of 98.4%. When α was set too low, the performance deteriorated. This could be because the contrastive signal was inadequate to structure an effective embedding space, yielding sub-optimal separation between benign and malicious URLs. On the other hand, excessively high weights (α ≥ 1.0) also degrade the model’s performance. The optimum at α = 0.5 indicates a good trade-off between imitating the teacher (distillation) and learning from the ground truth (classification). This is consistent with the principle that student models learn from both the teacher’s softened distributions (which encapsulate inter-class relationships) and the hard labels (which provide crisp decision boundaries). Hence, a balanced contrastive loss weight is important for shaping an effective embedding space and improving the model’s discrimination capability.

4.1.2. Temperature Coefficient

In contrastive learning, the temperature coefficient, τ, regulates the sharpness of the similarity distribution. In this study, experiments were conducted with multiple τ values of {0.01, 0.03, 0.05, 0.1, 0.3, 0.5, 1.0}.

Figure 5 depicts the accuracy trends of Contra-KD with varied contrastive temperatures. The results show that performance improved consistently as τ increased from 0.01 to 0.5, attaining the highest accuracy of 98.6% at τ = 0.5. Beyond this value, further increases in τ decreased performance, which suggests that an overly smoothed similarity distribution weakens the discriminative power of the contrastive signal. Thus, τ = 0.5 was selected as the optimal setting in Contra-KD for subsequent experiments. A temperature of τ = 0.5 corresponds to an intermediate level of sharpening of the similarity distribution. Lower temperatures (τ < 0.5) produce overly sharp distributions, which also degrade the contrastive signal. The best value, τ = 0.5, strikes the balance described by InfoNCE theory [35], maximizing gradient magnitude for informative pairs.
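The effect of τ on the sharpness of the similarity distribution can be seen in a small numerical sketch. The cosine similarities below are hypothetical, and the entropy of the resulting distribution is used only as a convenient indicator of smoothness.

```python
import math


def softmax(scores, tau):
    scaled = [s / tau for s in scores]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    z = sum(exps)
    return [e / z for e in exps]


def entropy(p):
    return -sum(x * math.log(x) for x in p if x > 0)


# Hypothetical cosine similarities between one anchor and four candidates.
similarities = [0.9, 0.7, 0.2, -0.1]

for tau in (0.01, 0.05, 0.1, 0.5, 1.0):
    p = softmax(similarities, tau)
    print(f"tau={tau:<4}  top prob={p[0]:.3f}  entropy={entropy(p):.3f}")
```

Small τ concentrates nearly all probability on the most similar candidate, whereas large τ spreads it almost evenly, which matches the sharp-versus-smooth behaviour discussed above.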

4.1.3. Embedding Layer

Another ablation study examining the impact of applying contrastive loss at different embedding layers within Contra-KD was conducted. Three configurations were tested: (i) Applying contrastive loss to the third layer, (ii) combining the 3rd, 4th, and 5th layers, and (iii) applying it to the final layer.

Table 2 tabulates the results of the ablation study.

From the results we can see that using the contrastive loss in the final layer performs best, reaching a performance of ~98.6% across all performance measures. This result indicates that richer semantic abstraction represented by higher layers provides more discriminative reference points for contrastive learning.

4.1.4. Training Performance

The training performance of the proposed model is assessed by the training loss over the epochs, shown in Figure 6a, and the validation loss over the epochs, shown in Figure 6b.

The model shows a stable and effective learning process over the 15 training epochs. The training loss gradually decreases from around 0.182 in the first epoch to around 0.019 by epoch 14, suggesting that the model fits the training data progressively better. Although the loss rises slightly to almost 0.029 in the last epoch, overall convergence is strong. The validation loss trends downward in similar fashion, dropping from 0.305 at epoch 1 to 0.060 at epoch 15, indicating good generalization to unseen data. There is a small fluctuation in validation loss around epochs 6 and 9, which is expected given the stochastic training dynamics of deep-learning optimization. Finally, the training and validation losses remain close to each other throughout training, which indicates little overfitting. Overall, these results confirm that the proposed model converges stably and generalizes well.

4.2. Model Performance

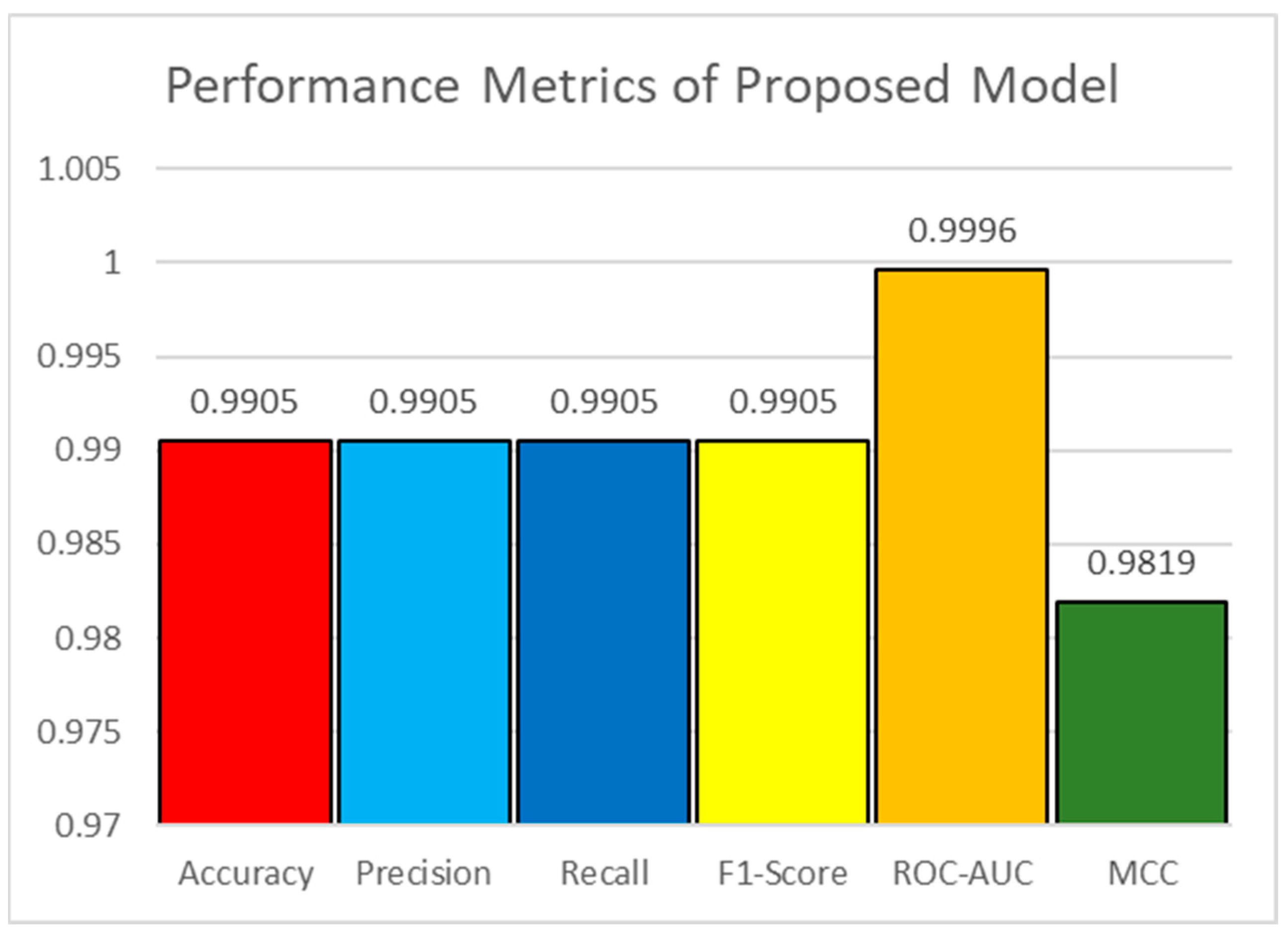

This subsection evaluates the classification performance of Contra-KD, and the results are presented in Figure 7.

According to the results, Contra-KD achieves accuracy, precision, recall, and F1-score of about 99%. This means that Contra-KD performs well in separating malicious and benign URLs. The ROC-AUC of 0.9996 indicates that the model has strong discriminative power in distinguishing benign URLs from malicious ones. In addition, the MCC score of 0.9819 reinforces this conclusion, as a high MCC indicates that Contra-KD provides stable and robust performance on imbalanced data. The confusion matrix is illustrated in Figure 8, and the classification performance is tabulated in Table 3.

From the confusion matrix in Figure 8, it can be seen that the proposed Contra-KD model has a very low misclassification rate across all categories. The benign class has only 11 misclassified samples out of 2606, with the remaining 2595 correctly classified. For the remaining categories, the misclassification rates are 0.16%, 2.89%, and 3.43%, with malware (2.89%) and phishing (3.43%) being somewhat higher.
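For comparison with the percentages quoted for the other classes, the benign counts above can be converted into a rate. The helper name `error_rate` is illustrative.

```python
def error_rate(misclassified, total):
    """Misclassification rate in percent."""
    return 100.0 * misclassified / total


# Benign class: 11 misclassified out of 2606 test samples (from the text).
benign_error = error_rate(11, 2606)
print(f"benign misclassification rate: {benign_error:.2f}%")  # 0.42%
```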

Notably, error analysis of the minority classes shows that the malware class has a misclassification rate of 2.89%, which is attributable to the sophisticated obfuscation used in malicious URLs. These URLs often leverage techniques such as character substitution (e.g., “m” → “rn”), hexadecimal encoding, and multi-hop redirects to mask their actual purpose, which makes effective detection more challenging (as confirmed during manual inspection). The phishing class is misclassified at a rate of 3.43%; these URLs tend to mimic legitimate websites by typosquatting on domain names, including brand names in subdomains (e.g., paypal.security-update.), using misleading TLDs (https://www.secureworks.com), and using words with common grammatical structures. Higher error rates in these minority classes are expected due to the significantly fewer training samples available (4.99% for malware and 14.45% for phishing), their continuous evolution through adversarial techniques that attempt to avoid detection, and the lexical characteristics they share with legitimate URLs (e.g., frequent use of terms like “login” or “secure”). To address these problems, future work could explore targeted data augmentation for the minority classes, ensembles of per-class expert models, and adversarial training.

The results demonstrate the model’s high discriminating ability toward various types of attacks. Moreover, from the class-wise scores shown in Table 3, it can be observed that the proposed method consistently achieves high precision, recall, and F1-scores for all classes. These results also demonstrate the effectiveness of Contra-KD in capturing the fine-grained properties of different types of URLs.

4.3. Performance Comparison with Existing Approaches

The Floating Point Operations (FLOPs) were further calculated per forward pass to evaluate the computational efficiency of models:

The results show that the ELECTRA teacher model requires around 32.5 GFLOPs per forward pass, implying a substantially higher computational cost. In contrast, Contra-KD requires only 2.8 GFLOPs per forward pass, an 11.6× reduction relative to the teacher model. This substantial decrease in computational cost demonstrates that the proposed method yields a compact model that preserves the knowledge learned from the teacher network. Moreover, even compared with KD-ELECTRA-Small, which requires 4.3 GFLOPs, Contra-KD retains a lighter computational footprint, with ∼1.5× fewer operations. The results indicate a good trade-off between efficiency and performance, potentially allowing the proposed Contra-KD framework to be used in more resource-limited environments.

Next, a performance comparison is presented. We compare the proposed Contra-KD against a range of existing models, including traditional machine learning (ML), deep learning (DL), and ensemble learning (EL) models, as well as others (e.g., transformer-based and lightweight models). All models are evaluated on the same dataset for a fair comparison.

Table 4 shows the performance scores (accuracy, precision, recall, F1-score) as well as the parameter count and training time of the models.

Contra-KD performs substantially better than traditional machine learning models such as KNN (0.8896 accuracy) and Decision Tree (0.90 across all metrics). Although the ensemble model XGBoost achieves promising performance with 0.93 across all metrics, it remains lower than Contra-KD’s 0.9905. Among the other transformer-based models, DistilBERT attains a promising accuracy of 0.9604. Nevertheless, Contra-KD achieves a higher recall and F1-score. Higher recall is crucial in the security domain because a 6.25% drop in recall may leave thousands of malicious URLs undetected, which in turn could amplify the spread of harmful content and spam.

Even though ELECTRA achieves comparable accuracy, recall, and F1-score, its large size (109.5 million parameters) and long training time (5309 s) make it impractical to deploy in real-world applications. In contrast, the proposed Contra-KD achieves similar detection performance with roughly 12 times fewer parameters (8.81 million, a reduction of about 92%) and nearly four times less training time (1353 s). Compared to a compact model, i.e., KD-ELECTRA-Small, Contra-KD is approximately 35% more parameter-efficient and 15% faster to train. This efficiency matters in resource-constrained environments, where memory and computational limitations are critical considerations.
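The efficiency comparisons in this subsection can be checked with simple arithmetic. The sketch below recomputes the reduction factors and percentage reductions directly from the GFLOPs, parameter counts, and training times quoted in the text; the helper names `reduction_factor` and `percent_reduction` are illustrative and not part of the original work.

```python
def reduction_factor(baseline: float, compact: float) -> float:
    """How many times smaller the compact value is than the baseline."""
    return baseline / compact


def percent_reduction(baseline: float, compact: float) -> float:
    """Relative saving of the compact value with respect to the baseline, in percent."""
    return 100.0 * (1.0 - compact / baseline)


# (baseline, Contra-KD) pairs as quoted in the text of this subsection.
figures = {
    "GFLOPs vs. ELECTRA teacher": (32.5, 2.8),
    "GFLOPs vs. KD-ELECTRA-Small": (4.3, 2.8),
    "Parameters (millions) vs. ELECTRA": (109.5, 8.81),
    "Training time (s) vs. ELECTRA": (5309.0, 1353.0),
}

for name, (baseline, compact) in figures.items():
    factor = reduction_factor(baseline, compact)
    saving = percent_reduction(baseline, compact)
    print(f"{name}: {factor:.1f}x lower ({saving:.1f}% reduction)")
```

Reporting both the factor and the percentage helps avoid confusing an "N times fewer" claim with an "N% fewer" claim.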

Table 5 shows the summary of the strengths and weaknesses of each state-of-the-art model.

Based on the results summarized in Table 5, the proposed Contra-KD model offers the best trade-off between detection performance and computational efficiency, surpassing traditional deep-learning approaches as well as recent transformer-based architectures. With just 8.8 million parameters, Contra-KD achieves 99.05% accuracy, 99.96% ROC-AUC, and 98.18% MCC on a highly imbalanced malicious URL dataset. It outperforms lightweight models such as DistilBERT (96.04% accuracy) by a wide margin and matches the performance of the significantly larger ELECTRA teacher model (approximately 109 M parameters) while using about 92% fewer parameters and roughly a quarter of the training time. Contra-KD also overcomes significant limitations identified in recent approaches: it is less computationally heavy than TransURL and BERT-based models, it is not restricted to phishing, unlike BERT-PhishFinder, and it requires neither feature engineering, like Phish-Jam, nor complex preprocessing, like CSPPC + BiLSTM. By combining knowledge distillation with contrastive learning, the student model learns structured and discriminative embeddings that yield high overall recall (99.02%) while maintaining strong recall on minority classes such as malware (97%) and phishing (97%). This is an important requirement in security, where failing to detect malicious URLs can have costly repercussions. These findings suggest that Contra-KD is a viable solution for real-world deployment in resource-limited settings without a loss of detection accuracy.

4.4. Adversarial Robustness Analysis

Although the proposed Contra-KD model achieves strong results on clean test data, real-world malicious URLs use complex obfuscation strategies to bypass detection. Attackers can evade common security filters using character-level perturbations (e.g., substitutions, insertions, deletions), typosquatting (e.g., misspelled domain names), and homograph attacks (e.g., Unicode look-alike characters). To assess the model’s robustness against such manipulation, we performed explicit robustness evaluations in which adversarial examples were generated from the held-out test set according to these attack modes.
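To make these attack modes concrete, the following is a minimal sketch of how such perturbations could be generated for a domain string. It is not the procedure used in the experiments: the function names, the `HOMOGLYPHS` look-alike map, the adjacent-swap model of typosquatting, and the single-edit budget are all assumptions chosen for illustration.

```python
import random

# Hypothetical look-alike map: Latin letters -> visually similar Cyrillic letters.
HOMOGLYPHS = {"a": "\u0430", "e": "\u0435", "o": "\u043e", "p": "\u0440", "c": "\u0441"}
ALPHABET = "abcdefghijklmnopqrstuvwxyz0123456789"


def substitute(text: str, rng: random.Random) -> str:
    """Replace one character with a different character from ALPHABET."""
    i = rng.randrange(len(text))
    replacement = rng.choice([c for c in ALPHABET if c != text[i]])
    return text[:i] + replacement + text[i + 1:]


def insert(text: str, rng: random.Random) -> str:
    """Insert one random character at a random position."""
    i = rng.randrange(len(text) + 1)
    return text[:i] + rng.choice(ALPHABET) + text[i:]


def delete(text: str, rng: random.Random) -> str:
    """Delete one character at a random position."""
    i = rng.randrange(len(text))
    return text[:i] + text[i + 1:]


def typosquat(text: str, rng: random.Random) -> str:
    """Swap two adjacent, distinct characters (one simple misspelling pattern)."""
    positions = [i for i in range(len(text) - 1) if text[i] != text[i + 1]]
    if not positions:
        return text
    i = rng.choice(positions)
    return text[:i] + text[i + 1] + text[i] + text[i + 2:]


def homograph(text: str, rng: random.Random) -> str:
    """Replace the first character that has a look-alike in HOMOGLYPHS."""
    for i, ch in enumerate(text):
        if ch in HOMOGLYPHS:
            return text[:i] + HOMOGLYPHS[ch] + text[i + 1:]
    return text


ATTACKS = {
    "substitution": substitute,
    "insertion": insert,
    "deletion": delete,
    "typosquatting": typosquat,
    "homograph": homograph,
}

rng = random.Random(0)  # fixed seed so the generated variants are reproducible
domain = "example.com"
variants = {name: attack(domain, rng) for name, attack in ATTACKS.items()}
for name, variant in variants.items():
    print(f"{name}: {variant!r}")
```

A combined attack could be approximated by chaining several of these functions on the same string, although the exact composition used for the combined scenario is not specified here.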

Table 6 and Table 7 summarize the accuracy of Contra-KD under different attack scenarios, together with the corresponding performance drop relative to the baseline and the robustness ratio. The results suggest that, by clustering semantically similar URLs and separating them from dissimilar ones in the embedding space, the contrastive learning component provides intrinsic robustness to surface-level differences. Even under aggressive combined attacks that mimic real-world obfuscation, the detection accuracy of Contra-KD remains high, supporting its suitability for deployment under adversarial settings.

The adversarial robustness analysis shows that Contra-KD retains an accuracy of more than 94% under most single-attack methods. This robustness is attributed to the contrastive learning component, which clusters semantically similar URLs regardless of surface-level perturbations. Even under aggressive combined attacks that emulate real-world obfuscation techniques, the model achieves 90.2% accuracy, a decline of just 8.85 percentage points from the baseline.

In terms of resilience, the model performs better against character-level perturbations (97.2% accuracy under substitution) than against structural attacks such as typosquatting (94.8%) or homograph attacks (94.1%). This is expected: character-level modifications preserve the underlying semantic structure, which the contrastive encodings can still distinguish, whereas domain-level alterations change the URL’s primary identity.
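The reported accuracies can be turned into performance drops and robustness ratios with a short calculation. In the sketch below, the robustness ratio is assumed to be the accuracy under attack divided by the clean baseline accuracy; this definition and the helper names are assumptions for illustration, since the exact definition behind Table 7 is not restated here. The accuracy values are those quoted in the text.

```python
def accuracy_drop(baseline: float, attacked: float) -> float:
    """Drop in accuracy, in percentage points."""
    return baseline - attacked


def robustness_ratio(baseline: float, attacked: float) -> float:
    """Assumed definition: fraction of clean accuracy retained under attack."""
    return attacked / baseline


baseline_accuracy = 99.05  # clean test accuracy (%) reported for Contra-KD

# Accuracy (%) under attack, as quoted in the text.
attacked_accuracy = {
    "substitution": 97.2,
    "typosquatting": 94.8,
    "homograph": 94.1,
    "combined": 90.2,
}

for attack, acc in attacked_accuracy.items():
    drop = accuracy_drop(baseline_accuracy, acc)
    ratio = robustness_ratio(baseline_accuracy, acc)
    print(f"{attack}: drop = {drop:.2f} pp, robustness ratio = {ratio:.3f}")
```

A ratio close to 1 indicates that the attack barely affects accuracy, which makes attack types easy to compare at a glance.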

Per-class analysis shows that the performance drop under attack is more pronounced for the minority classes (malware, phishing) than for the majority class (benign): the F1-score decreases by 9% for malware and by 3% for benign. This vulnerability stems from the small number of training samples available for these classes, which leaves their embeddings in sparser regions of the embedding space that are more susceptible to adversarial perturbations. This may be mitigated in future work with targeted data augmentation or class-balanced contrastive learning.

These findings indicate that Contra-KD gains adversarial robustness through embedding-space regularization, making it suitable for deployment in security-sensitive applications where attackers may employ obfuscation strategies.

5. Conclusions

This paper proposes a lightweight transformer-based model, named Contra-KD, which combines contrastive learning and knowledge distillation to detect malicious URLs. In contrast to previous approaches that rely predominantly on classification loss or soft-target distillation, Contra-KD leverages embedding-level alignment through a contrastive loss. This allows the student model to learn more discriminative yet compact feature representations. The empirical results show that the proposed Contra-KD achieves encouraging performance, with 99.05% accuracy, 99.1% precision, 99.02% recall, 99.05% F1-score, 99.96% ROC-AUC, and 98.18% MCC, using only 8.8 million parameters. Hence, Contra-KD offers a superior balance between classification performance and computational efficiency.