Semantic Communication Unlearning: A Variational Information Bottleneck Approach for Backdoor Defense in Wireless Systems

Abstract

1. Introduction

1.1. Our Contributions

- Novel two-stage information-theoretic defense: We introduce a VIB-based unlearning architecture that achieves 629.5 ± 191.2% backdoor mitigation (95% CI: [364.1%, 895.0%]) with only 11.5% clean degradation. This represents an 85× improvement over detection-based defenses and substantially outperforms existing unlearning methods, which achieve near-zero or negative mitigation.

- Comprehensive validation across domains and attacks: We demonstrate effectiveness across seven signal processing domains, four adaptive backdoor types, and challenging channel conditions (SNR to dB, fading channels), achieving 36–1274% mitigation without spectrum downsampling.

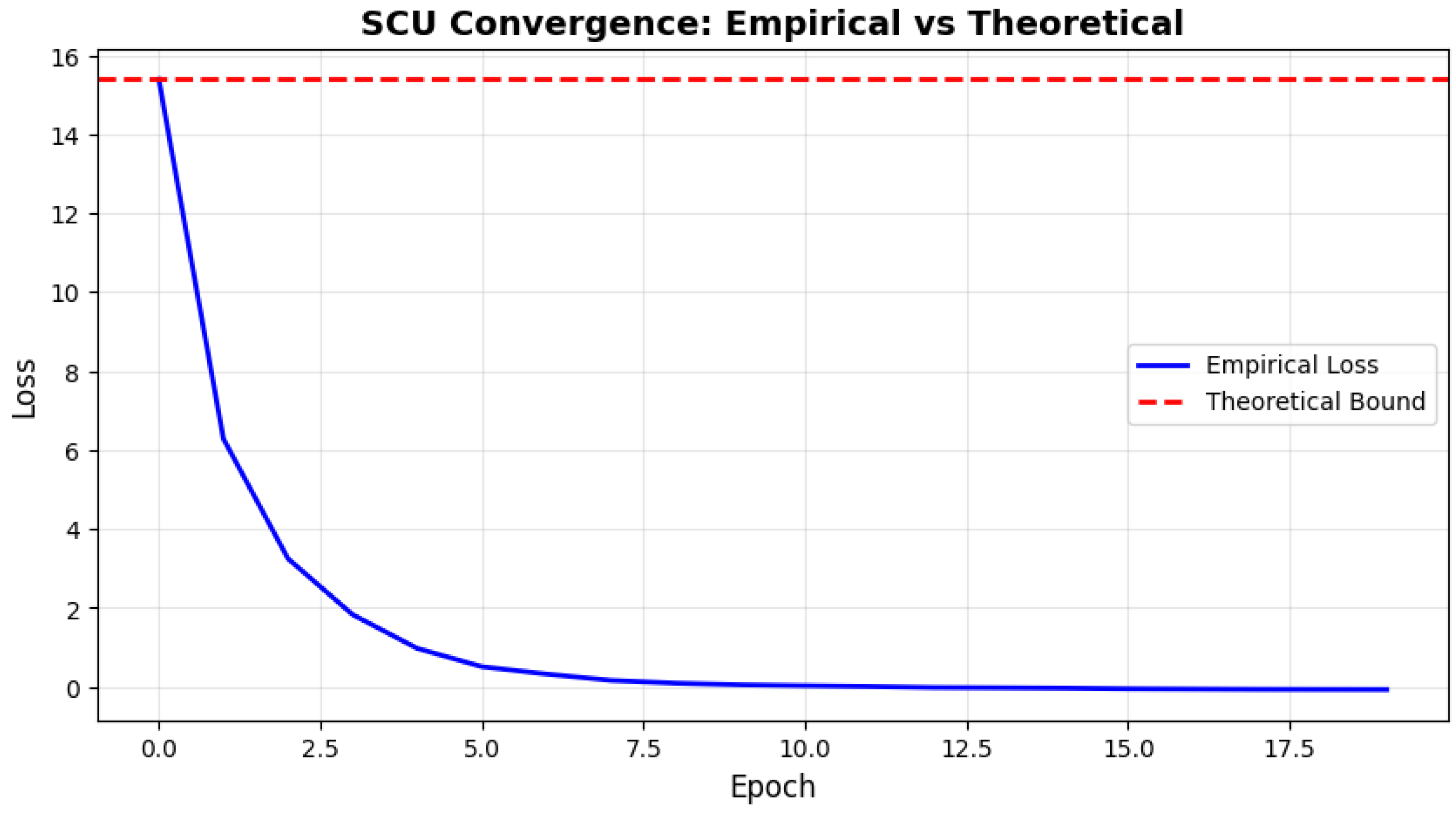

- Theoretical foundation with separability condition: We formalize when selective backdoor removal is achievable and empirically validate that SCU reduces backdoor-semantic entanglement from 0.206 to 0.146, confirming surgical removal through latent space restructuring.

- Practical 6G edge deployment: SCU achieves 243 s unlearning time with complexity (2× speedup over retraining), enabling split inference on resource-constrained devices with robust operation under imperfect detection and realistic conditions.

1.2. Paper Organization

2. Related Work

2.1. Backdoor Attacks in Deep Learning

2.2. Backdoor Defense Mechanisms

2.3. Machine Unlearning

2.4. Semantic Communication Security

3. Problem Formulation and Threat Model

3.1. Semantic Communication System

Algorithm 1. SCU Joint Unlearning

3.2. Threat Model: Backdoor Poisoning

- Square wave trigger: A simple temporal pattern where the first time steps are modified with alternating high and low values: with amplitude . This represents the most basic attack that creates sharp discontinuities in the time-domain signal.

- Blend trigger: A stealthy attack that blends a random pattern p with the original signal: with blending factor . The low blending ratio makes the trigger almost undetectable while maintaining effectiveness, representing advanced evasion techniques.

- Sinusoidal trigger: A frequency-domain attack that adds orthogonal sinusoidal patterns to I/Q components: and with amplitude and frequency . This exploits the frequency structure of wireless signals and is harder to detect in spectral analysis.

- Input-dependent trigger: An adaptive attack where the trigger depends on the input signal’s statistics: with , where and are the mean values of the I and Q components. This creates sample-specific triggers that evade detection methods assuming fixed patterns.

- Backdoor mitigation:

- Clean preservation:
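
The four trigger families listed above can be sketched as follows. The amplitudes, blending factor, frequency, and trigger length are not recoverable from the text here, so every numeric default (`n_steps=8`, `amp`, `alpha=0.1`, `freq=0.05`, `scale=0.1`) and every function name is an illustrative assumption rather than the paper's setting. The input-dependent form in particular is only one plausible reading of a trigger that depends on the mean of the I and Q components.

```python
import numpy as np

# x has shape (2, T): row 0 is the I component, row 1 is the Q component.
# All default parameter values are hypothetical, not the paper's settings.

def square_wave_trigger(x, n_steps=8, amp=0.5):
    """Set the first n_steps samples to alternating +amp / -amp values."""
    out = x.copy()
    pattern = amp * np.where(np.arange(n_steps) % 2 == 0, 1.0, -1.0)
    out[:, :n_steps] = pattern
    return out

def blend_trigger(x, pattern, alpha=0.1):
    """Convex blend of the signal with a fixed random pattern p."""
    return (1.0 - alpha) * x + alpha * pattern

def sinusoidal_trigger(x, amp=0.1, freq=0.05):
    """Add a sine to I and a cosine (its quadrature partner) to Q."""
    t = np.arange(x.shape[1])
    out = x.copy()
    out[0] += amp * np.sin(2 * np.pi * freq * t)
    out[1] += amp * np.cos(2 * np.pi * freq * t)
    return out

def input_dependent_trigger(x, n_steps=8, scale=0.1):
    """Shift the first n_steps samples by an offset tied to the I/Q means."""
    out = x.copy()
    mu = x.mean(axis=1, keepdims=True)  # shape (2, 1): mean of I and of Q
    out[:, :n_steps] += scale * (1.0 + np.abs(mu))
    return out

rng = np.random.default_rng(0)
x = rng.standard_normal((2, 128))  # synthetic stand-in for one I/Q sample
p = rng.standard_normal((2, 128))  # fixed random blend pattern
poisoned = {
    "square": square_wave_trigger(x),
    "blend": blend_trigger(x, p),
    "sinusoidal": sinusoidal_trigger(x),
    "input_dependent": input_dependent_trigger(x),
}
```

Only the input-dependent variant produces a different perturbation for each sample, which is why it is described as evading defenses that assume a fixed pattern.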

3.3. Defense Success Criteria

4. SCU Methodology

4.1. Overview and Design Rationale

4.2. System Architecture

4.3. Stage 1: Joint Unlearning Algorithm

Algorithm Mechanics and Intuition

4.4. Stage 2: Contrastive Compensation Algorithm

Algorithm 2. SCU Contrastive Compensation

Algorithm Mechanics and Intuition

4.5. Poisoned Sample Detection

4.6. Hyperparameter Configuration

4.7. Theoretical Foundation of the Separability Condition

Empirical Validation

5. Experimental Setup

5.1. Dataset and Preprocessing

- Training set: 7200 samples (60%)—used for both clean and poisoned model training.

- Validation set: 2400 samples (20%)—used for hyperparameter tuning and early stopping.

- Test set: 2400 samples (20%)—held out for final performance evaluation.

- Shape consistency: Verify all samples maintain dimensionality.

- Numerical validity: Confirm absence of NaN or infinite values.

- Distribution balance: Ensure each class represents approximately 16.67% of samples (1/6 for six modulations).

- SNR distribution: Validate equal representation across all five SNR levels within each modulation class.
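
A minimal sketch of the 60/20/20 split and the four checks above, run on synthetic data. The `(2, 128)` sample shape, the stratified sampling per (modulation, SNR) cell, and all names are assumptions made for illustration; the text does not state how the split was drawn.

```python
import numpy as np

rng = np.random.default_rng(0)
n_mod, n_snr, per_cell = 6, 5, 400  # 6 * 5 * 400 = 12000 samples
mod = np.repeat(np.arange(n_mod), n_snr * per_cell)
snr = np.tile(np.repeat(np.arange(n_snr), per_cell), n_mod)
X = rng.standard_normal((mod.size, 2, 128))  # synthetic stand-in for I/Q data

# Stratified 60/20/20 split: 240/80/80 samples from each (modulation, SNR) cell.
train, val, test = [], [], []
for c in range(n_mod):
    for k in range(n_snr):
        cell = rng.permutation(np.flatnonzero((mod == c) & (snr == k)))
        train.append(cell[:240])
        val.append(cell[240:320])
        test.append(cell[320:])
splits = {
    "train": np.concatenate(train),
    "val": np.concatenate(val),
    "test": np.concatenate(test),
}

def check_split(idx, expected_shape=(2, 128), tol=1e-9):
    """Run the four sanity checks on one split and return the class fractions."""
    xs, m, s = X[idx], mod[idx], snr[idx]
    assert xs.shape[1:] == expected_shape  # shape consistency
    assert np.isfinite(xs).all()           # no NaN or Inf values
    class_frac = np.bincount(m, minlength=n_mod) / idx.size
    assert np.all(np.abs(class_frac - 1 / n_mod) < tol)  # ~16.67% per class
    for c in range(n_mod):                 # equal SNR share within each class
        snr_frac = np.bincount(s[m == c], minlength=n_snr) / np.sum(m == c)
        assert np.all(np.abs(snr_frac - 1 / n_snr) < tol)
    return class_frac

report = {name: check_split(idx) for name, idx in splits.items()}
```

With a purely random (non-stratified) split, the balance checks would need a looser tolerance, since class and SNR proportions then fluctuate from split to split.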

5.2. Multi-Domain Signal Representations

- Frequency Domain (FFT): We apply the Fast Fourier Transform to convert temporal signals into their frequency-domain representation. For each I/Q pair , we construct a complex signal and compute . The transformed representation stores magnitude and phase as separate channels, preserving complete spectral information across all 128 frequency bins.

- Z-Domain (STFT): Short-Time Fourier Transform captures time-frequency joint characteristics with window size and 75% overlap (24 samples). We preserve the full complex spectrum to maintain negative frequency components. The resulting spectrogram is flattened and intelligently downsampled to 128 features per channel using uniform stride, preserving both transient and steady-state signal properties.

- Wavelet Domain: We employ Daubechies-4 wavelets with 4-level decomposition to capture multi-resolution temporal characteristics. The wavelet transform produces approximation and detail coefficients at different scales, , which are concatenated and uniformly sampled to 128 features. This representation excels at localizing transient events while preserving low-frequency trends.

- Laplace Domain: To model signals with exponential damping characteristics, we apply complex exponential weighting with damping factor : . This transformation emphasizes early-time signal behavior, making it sensitive to trigger patterns injected at the beginning of the time series.

- Cepstral Domain: The cepstrum separates the spectral envelope from the fine structure through homomorphic processing: . This domain is particularly effective for detecting modulation-based features and filtering multiplicative noise or backdoor artifacts that appear as additive components in the log-spectral domain.

- Hilbert Domain: We compute the analytic signal via Hilbert transform to extract the instantaneous amplitude and phase, , where denotes the Hilbert transform operator. The resulting representation stores the instantaneous envelope and unwrapped phase , capturing amplitude and frequency modulation characteristics independently.

- Numerical stability: Replace any NaN or Inf values (arising from log operations or divisions) with safe bounds.

- Dynamic range verification: Ensure transformed signals span a meaningful range (>0.01) to avoid degenerate cases.

- Power preservation: Confirm average power in the transformed domain remains within 0.1–10× of the original signal power.
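
Two of the transforms above and the stability checks can be sketched with NumPy alone. This is an illustration under assumptions: the function names, the choice to apply the Hilbert transform to the I component only, and the `1e6` clipping bound are not taken from the paper.

```python
import numpy as np

def fft_domain(iq):
    """Magnitude and phase of the FFT of s = I + jQ, stored as two channels."""
    spec = np.fft.fft(iq[0] + 1j * iq[1])
    return np.stack([np.abs(spec), np.angle(spec)])

def analytic_signal(x):
    """x + j*H{x} for a real, even-length 1-D signal, via a one-sided spectrum."""
    n = x.size
    gain = np.zeros(n)
    gain[0] = gain[n // 2] = 1.0  # keep DC and Nyquist bins once
    gain[1:n // 2] = 2.0          # double the positive-frequency bins
    return np.fft.ifft(np.fft.fft(x) * gain)

def hilbert_domain(iq):
    """Instantaneous envelope and unwrapped phase of the I component."""
    z = analytic_signal(iq[0])
    return np.stack([np.abs(z), np.unwrap(np.angle(z))])

def sanitize(feat, bound=1e6):
    """Replace NaN/Inf with finite bounds and reject near-constant outputs."""
    feat = np.nan_to_num(feat, nan=0.0, posinf=bound, neginf=-bound)
    if np.ptp(feat) <= 0.01:
        raise ValueError("degenerate transform: dynamic range <= 0.01")
    return feat

# Complex tone at frequency bin 8 as a test input: I = cos, Q = sin.
n = np.arange(128)
iq = np.stack([np.cos(2 * np.pi * 8 * n / 128), np.sin(2 * np.pi * 8 * n / 128)])
fft_feat = sanitize(fft_domain(iq))
hil_feat = sanitize(hilbert_domain(iq))
```

For this tone the FFT magnitude concentrates in bin 8 and the Hilbert envelope is constant, which gives a quick correctness check before the transforms are applied to real I/Q data.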

5.3. Baseline Defense Mechanisms

5.4. Evaluation Metrics

- High Mitigation (): Backdoor triggers produce catastrophic reconstruction errors.

- Low Degradation (): Clean samples maintain near-original reconstruction quality.

- Low ASR (): Triggered inputs are statistically distinguishable from clean samples.

These metrics are evaluated on the held-out test set (2400 samples) across all six modulation types and five SNR levels to ensure statistical significance and generalizability.
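
As a rough illustration, the mitigation and degradation percentages appear to be relative MSE changes. The two definitions below are inferred from the reported table values, which they reproduce to within the rounding of the four-decimal MSEs. They are assumptions, not formulas quoted from the paper.

```python
def mitigation_pct(bd_mse_defended, bd_mse_poisoned):
    """Relative increase in backdoor-input MSE after the defense, in percent."""
    return 100.0 * (bd_mse_defended - bd_mse_poisoned) / bd_mse_poisoned

def degradation_pct(clean_mse_defended, clean_mse_original):
    """Relative increase in clean-input MSE after the defense, in percent."""
    return 100.0 * (clean_mse_defended - clean_mse_original) / clean_mse_original

# Time-domain row: poisoned BD MSE 0.0508, SCU BD MSE 0.6979 (reported 1274.0%).
time_domain = mitigation_pct(0.6979, 0.0508)

# Main comparison: SCU clean MSE 0.0618 vs. original clean MSE 0.0554 (reported 11.5%).
clean_cost = degradation_pct(0.0618, 0.0554)

print(f"{time_domain:.1f}% mitigation, {clean_cost:.1f}% clean degradation")
```

Under this reading, a mitigation above 100% means the defended model reconstructs triggered inputs with more than twice the error of the poisoned model, which is the intended outcome.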

6. Experimental Results

6.1. Main Performance Comparison

6.2. Statistical Validation

6.3. Ablation Study

6.4. Multi-Domain Signal Processing Analysis

6.5. Robustness Analysis

6.5.1. SNR Robustness

6.5.2. Fading Channel Performance

6.5.3. Imperfect Detection Robustness

6.6. Computational Efficiency

6.7. Adaptive Backdoor Analysis

6.8. Summary of Key Findings

7. Discussion

Limitations and Future Directions

8. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Appendix A. Full Detection Robustness Results

| FPR | FNR | Detected | Clean MSE | BD MSE | Mitigation (%) |

|---|---|---|---|---|---|

| 0.00 | 0.00 | 1080 | 0.0592 | 0.3841 | 640.5 |

| 0.00 | 0.05 | 1026 | 0.0588 | 0.0942 | 81.5 |

| 0.00 | 0.10 | 972 | 0.0585 | 0.0718 | 38.5 |

| 0.00 | 0.20 | 864 | 0.0580 | 0.0598 | 15.0 |

| 0.01 | 0.00 | 1141 | 0.0571 | 0.3975 | 666.4 |

| 0.01 | 0.05 | 1087 | 0.0568 | 0.0925 | 78.3 |

| 0.01 | 0.10 | 1033 | 0.0565 | 0.0701 | 36.1 |

| 0.01 | 0.20 | 925 | 0.0560 | 0.0582 | 12.4 |

| 0.05 | 0.00 | 1386 | 0.0566 | 0.2950 | 468.8 |

| 0.05 | 0.05 | 1332 | 0.0563 | 0.1342 | 159.0 |

| 0.05 | 0.10 | 1278 | 0.0560 | 0.0886 | 71.0 |

| 0.05 | 0.20 | 1170 | 0.0555 | 0.0639 | 23.3 |

| 0.10 | 0.00 | 1692 | 0.0567 | 0.3011 | 480.6 |

| 0.10 | 0.05 | 1638 | 0.0564 | 0.1389 | 167.9 |

| 0.10 | 0.10 | 1584 | 0.0561 | 0.0919 | 77.1 |

| 0.10 | 0.20 | 1476 | 0.0556 | 0.0653 | 25.9 |

- Diagonal pattern: Mitigation falls sharply from the top-left (perfect detection) to the bottom-right (FPR = 10%, FNR = 20%) of the grid, although the trend is not strictly monotonic in the FPR (e.g., 640.5% at FPR = 0% vs. 666.4% at FPR = 1%, both at FNR = 0%).

- FNR dominates: Holding the FPR constant at 0% and increasing the FNR from 0% to 20% causes a mitigation loss of more than 95% (640.5% → 15.0%). Undetected backdoor samples remain in the preservation set, where Stage 2 actively reinforces their features.

- FPR tolerance: Holding the FNR constant at 0%, the FPR can increase to 10% with only 25% mitigation loss (640.5% → 480.6%). False positives (clean samples misidentified as poisoned) undergo unlearning but are subsequently recovered during contrastive compensation, demonstrating SCU’s self-correcting property.

- Realistic threshold: FPR = 1% and FNR = 5% represents achievable detection performance with state-of-the-art methods, yielding 78.3% mitigation, acceptable for moderate-security deployments where some residual backdoor vulnerability is tolerable.

- Robustness score: 25% of the scenarios (4/16) maintain mitigation above 300%, confirming graceful degradation under imperfect detection.
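
The "Detected" column is consistent with a simple counting model: 1080 poisoned samples in a 7200-sample training set, with false negatives removed from the poisoned pool and false positives drawn from the 6120 clean samples. This is an inference from the table values, not a procedure stated in the text, and the function names are illustrative.

```python
N_POISONED = 1080            # detected count at FPR = FNR = 0 in the table
N_CLEAN = 7200 - N_POISONED  # assumes the 7200-sample training set

def detected_count(fpr, fnr):
    """Samples flagged as poisoned under the given detector error rates."""
    true_pos = round((1.0 - fnr) * N_POISONED)  # poisoned samples still caught
    false_pos = round(fpr * N_CLEAN)            # clean samples wrongly flagged
    return true_pos + false_pos

def mitigation_retained_pct(mitigation, reference=640.5):
    """Share of the perfect-detection mitigation (640.5%) that is retained."""
    return 100.0 * mitigation / reference

# Rebuild the 4 x 4 grid of detector settings from the table.
grid = {
    (fpr, fnr): detected_count(fpr, fnr)
    for fpr in (0.0, 0.01, 0.05, 0.10)
    for fnr in (0.0, 0.05, 0.10, 0.20)
}
```

For example, `mitigation_retained_pct(480.6)` gives about 75%, matching the 25% loss quoted for FPR = 10% at FNR = 0%.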

Appendix B. L2 vs. KL Divergence Regularization

Appendix B.1. Theoretical Justification

Appendix B.2. Computational Efficiency

Appendix B.3. Numerical Stability

References

- Shao, Y.; Cao, Q.; Gündüz, D. A Theory of Semantic Communication. IEEE Trans. Mob. Comput. 2024, 23, 12211–12228.

- Luo, X.; Chen, H.H.; Guo, Q. Semantic Communications: Overview, Open Issues, and Future Research Directions. IEEE Wirel. Commun. 2022, 29, 210–219.

- Jain, K.; Krishnan, P.; Pachiyannan, P.; Jaganathan, L.; Khan, M.A.; Li, Y. Toward Smart 5G and 6G: Standardization of AI-Native Network Architectures and Semantic Communication Protocols. IEEE Commun. Stand. Mag. 2025. early access.

- Zhao, T.; Li, F.; Du, H.; Sun, L. Deep Reinforcement Learning- and Information Bottleneck-Enabled Task-Oriented Semantic Communication. IEEE J. Sel. Areas Commun. 2025. early access.

- Barbarossa, S.; Comminiello, D.; Grassucci, E.; Pezone, F.; Sardellitti, S.; Di Lorenzo, P. Semantic Communications Based on Adaptive Generative Models and Information Bottleneck. IEEE Commun. Mag. 2023, 61, 36–41.

- Jin, L.X.; Jiang, W.; Wen, X.Y.; Lin, M.Y.; Zhan, J.Y.; Zhou, X.Z.; Habtie, M.A.; Werghi, N. A survey of backdoor attacks and defences: From deep neural networks to large language models. J. Electron. Sci. Technol. 2025, 23, 100326.

- Hanif, M.A.; Chattopadhyay, N.; Ouni, B.; Shafique, M. Survey on Backdoor Attacks on Deep Learning: Current Trends, Categorization, Applications, Research Challenges, and Future Prospects. IEEE Access 2025, 13, 93190–93221.

- Bai, Y.; Xing, G.; Wu, H.; Rao, Z.; Ma, C.; Wang, S.; Liu, X.; Zhou, Y.; Tang, J.; Huang, K.; et al. Backdoor Attack and Defense on Deep Learning: A Survey. IEEE Trans. Comput. Soc. Syst. 2025, 12, 404–434.

- Liu, A.; Liu, X.; Zhang, X.; Xiao, Y.; Zhou, Y.; Liang, S.; Wang, J.; Cao, X.; Tao, D. Pre-trained Trojan Attacks for Visual Recognition. Int. J. Comput. Vis. 2025, 133, 3568–3585.

- Chen, Z.; Liu, S.; Niu, Q. Black-box backdoor attack with everyday physical object in mobile crowdsourcing. Expert Syst. Appl. 2025, 265, 125892.

- Li, Z.; Lan, J.; Yan, Z.; Gelenbe, E. Backdoor attacks and defense mechanisms in federated learning: A survey. Inf. Fusion 2025, 123, 103248.

- Zhang, M.; Shen, X.; Cao, J.; Cui, Z.; Jiang, S. EdgeShard: Efficient LLM Inference via Collaborative Edge Computing. IEEE Internet Things J. 2025, 12, 13119–13131.

- Lyu, Z.; Xiao, M.; Xu, J.; Skoglund, M.; Renzo, M.D. The Larger the Merrier? Efficient Large AI Model Inference in Wireless Edge Networks. IEEE J. Sel. Areas Commun. 2025. early access.

- Zhou, Z.; Chen, X.; Li, E.; Zeng, L.; Luo, K.; Zhang, J. Edge Intelligence: Paving the Last Mile of Artificial Intelligence with Edge Computing. Proc. IEEE 2019, 107, 1738–1762.

- Li, Y.; Zhang, S.; Wang, W.; Song, H. Backdoor Attacks to Deep Learning Models and Countermeasures: A Survey. IEEE Open J. Comput. Soc. 2023, 4, 134–146.

- Gu, T.; Dolan-Gavitt, B.; Garg, S. BadNets: Identifying Vulnerabilities in the Machine Learning Model Supply Chain. arXiv 2019, arXiv:1708.06733.

- Chen, X.; Salem, A.; Chen, D.; Backes, M.; Ma, S.; Shen, Q.; Wu, Z.; Zhang, Y. BadNL: Backdoor Attacks against NLP Models with Semantic-preserving Improvements. In Proceedings of the 37th Annual Computer Security Applications Conference, New York, NY, USA, 6–10 December 2021; pp. 554–569.

- Gu, Z.; Shi, J.; Yang, Y. ANODYNE: Mitigating backdoor attacks in federated learning. Expert Syst. Appl. 2025, 259, 125359.

- Gu, T.; Liu, K.; Dolan-Gavitt, B.; Garg, S. BadNets: Evaluating Backdooring Attacks on Deep Neural Networks. IEEE Access 2019, 7, 47230–47244.

- Peng, H.; Qiu, H.; Ma, H.; Wang, S.; Fu, A.; Al-Sarawi, S.F.; Abbott, D.; Gao, Y. On Model Outsourcing Adaptive Attacks to Deep Learning Backdoor Defenses. IEEE Trans. Inf. Forensics Secur. 2024, 19, 2356–2369.

- Mengara, O.; Avila, A.; Falk, T.H. Backdoor Attacks to Deep Neural Networks: A Survey of the Literature, Challenges, and Future Research Directions. IEEE Access 2024, 12, 29004–29023.

- Guo, W.; Tondi, B.; Barni, M. An Overview of Backdoor Attacks Against Deep Neural Networks and Possible Defences. IEEE Open J. Signal Process. 2022, 3, 261–287.

- Roux, Q.L.; Bourbao, E.; Teglia, Y.; Kallas, K. A Comprehensive Survey on Backdoor Attacks and Their Defenses in Face Recognition Systems. IEEE Access 2024, 12, 47433–47468.

- Huang, S.; Li, Y.; Chen, C.; Shi, L.; Cai, W.; Gao, Y. FedCleanse: Cleanse the backdoor attacks in federated learning system. Knowl.-Based Syst. 2025, 330, 114494.

- Wan, Y.; Qu, Y.; Ni, W.; Xiang, Y.; Gao, L.; Hossain, E. Data and Model Poisoning Backdoor Attacks on Wireless Federated Learning, and the Defense Mechanisms: A Comprehensive Survey. IEEE Commun. Surv. Tutorials 2024, 26, 1861–1897.

- Baishya, N.M.; Manoj, B.R.; Karmakar, S. A Novel and Efficient Multi-Target Backdoor Attack for Deep Learning-Based Wireless Signal Classifiers. IEEE Access 2025, 13, 65863–65883.

- Wang, B.; Yao, Y.; Shan, S.; Li, H.; Viswanath, B.; Zheng, H.; Zhao, B.Y. Neural Cleanse: Identifying and Mitigating Backdoor Attacks in Neural Networks. In Proceedings of the 2019 IEEE Symposium on Security and Privacy (SP), San Francisco, CA, USA, 19–23 May 2019; pp. 707–723.

- Tran, B.; Li, J.; Madry, A. Spectral Signatures in Backdoor Attacks. In Proceedings of the Advances in Neural Information Processing Systems; Bengio, S., Wallach, H., Larochelle, H., Grauman, K., Cesa-Bianchi, N., Garnett, R., Eds.; Curran Associates, Inc.: Red Hook, NY, USA, 2018; Volume 31.

- Zhang, F.; Li, J.; Huang, W.; Chen, X. BMAIU: Backdoor Mitigation in Self-Supervised Learning Through Active Implantation and Unlearning. Electronics 2025, 14, 1587.

- Li, Y.; He, J.; Huang, H.; Sun, J.; Ma, X.; Jiang, Y.G. Shortcuts Everywhere and Nowhere: Exploring Multi-Trigger Backdoor Attacks. IEEE Trans. Dependable Secur. Comput. 2025. early access.

- Liu, K.; Dolan-Gavitt, B.; Garg, S. Fine-Pruning: Defending Against Backdooring Attacks on Deep Neural Networks. In Proceedings of the Research in Attacks, Intrusions, and Defenses; Bailey, M., Holz, T., Stamatogiannakis, M., Ioannidis, S., Eds.; Lecture Notes in Computer Science; Springer: Cham, Switzerland, 2018; Volume 11050, pp. 273–294.

- Gan, X.; Wang, H.; Li, X.; Liu, Z.; Jiang, H.; Wang, J. A Multitarget Backdoor Attack Against Automatic Modulation Recognition for IoT Wireless Signals. IEEE Internet Things J. 2025, 12, 27588–27605.

- Yang, G.; Duan, T.; Hu, J.E.; Salman, H.; Razenshteyn, I.; Li, J. Randomized Smoothing of All Shapes and Sizes. In Proceedings of the 37th International Conference on Machine Learning, PMLR, Virtual, 13–18 July 2020; Volume 119, pp. 10693–10705. Available online: http://proceedings.mlr.press/v119/yang20a.html (accessed on 7 December 2025).

- Bourtoule, L.; Chandrasekaran, V.; Choquette-Choo, C.A.; Jia, H.; Travers, A.; Zhang, B.; Lie, D.; Papernot, N. Machine Unlearning. In Proceedings of the 2021 IEEE Symposium on Security and Privacy (SP), San Francisco, CA, USA, 24–27 May 2021; pp. 141–159.

- Cevallos, I.D.; Benalcázar, M.E.; Valdivieso Caraguay, Á.L.; Zea, J.A.; Barona-López, L.I. A Systematic Literature Review of Machine Unlearning Techniques in Neural Networks. Computers 2025, 14, 150.

- Golatkar, A.; Achille, A.; Soatto, S. Eternal Sunshine of the Spotless Net: Selective Forgetting in Deep Networks. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 13–19 June 2020.

- Nguyen, Q.P.; Low, B.K.H.; Jaillet, P. Variational Bayesian Unlearning. In Proceedings of the Advances in Neural Information Processing Systems; Larochelle, H., Ranzato, M., Hadsell, R., Balcan, M., Lin, H., Eds.; Curran Associates, Inc.: Red Hook, NY, USA, 2020; Volume 33, pp. 16025–16036. Available online: https://proceedings.neurips.cc/paper/2020/file/b8a6550662b363eb34145965d64d0cfb-Paper.pdf (accessed on 4 December 2025).

- Liu, Y.; Fan, M.; Chen, C.; Liu, X.; Ma, Z.; Wang, L.; Ma, J. Backdoor Defense with Machine Unlearning. In Proceedings of the IEEE INFOCOM 2022—IEEE Conference on Computer Communications, London, UK, 2–5 May 2022; pp. 280–289.

- Wu, C.; Zhu, S.; Mitra, P.; Wang, W. Unlearning Backdoor Attacks in Federated Learning. In Proceedings of the 2024 IEEE Conference on Communications and Network Security (CNS), Taipei, Taiwan, 30 September–3 October 2024; pp. 1–9.

- Guo, Y.; Zhao, Y.; Hou, S.; Wang, C.; Jia, X. Verifying in the Dark: Verifiable Machine Unlearning by Using Invisible Backdoor Triggers. IEEE Trans. Inf. Forensics Secur. 2024, 19, 708–721.

- Liu, Z.; Wang, T.; Huai, M.; Miao, C. Backdoor Attacks via Machine Unlearning. Proc. AAAI Conf. Artif. Intell. 2024, 38, 14115–14123.

- Xie, H.; Qin, Z.; Li, G.Y.; Juang, B.H. Deep Learning Enabled Semantic Communication Systems. IEEE Trans. Signal Process. 2021, 69, 2663–2675.

- Li, Y.; Shi, Z.; Hu, H.; Fu, Y.; Wang, H.; Lei, H. Secure Semantic Communications: From Perspective of Physical Layer Security. IEEE Commun. Lett. 2024, 28, 2243–2247.

- Alemi, A.A.; Fischer, I.; Dillon, J.V.; Murphy, K. Deep variational information bottleneck. arXiv 2016, arXiv:1612.00410.

- Schmitt, M.S.; Koch-Janusz, M.; Fruchart, M.; Seara, D.S.; Rust, M.; Vitelli, V. Information theory for data-driven model reduction in physics and biology. arXiv 2023, arXiv:2312.06608.

- Futami, F.; Iwata, T.; Ueda, N.; Sato, I.; Sugiyama, M. Excess risk analysis for epistemic uncertainty with application to variational inference. arXiv 2022, arXiv:2206.01606.

- Belghazi, M.I.; Baratin, A.; Rajeshwar, S.; Ozair, S.; Bengio, Y.; Courville, A.; Hjelm, D. Mutual Information Neural Estimation. In Proceedings of the 35th International Conference on Machine Learning, PMLR, Stockholm, Sweden, 10–15 July 2018; Volume 80, pp. 531–540. Available online: http://proceedings.mlr.press/v80/belghazi18a.html (accessed on 4 December 2025).

- Song, J.; Ermon, S. Understanding the limitations of variational mutual information estimators. arXiv 2019, arXiv:1910.06222.

- Parulekar, A.; Collins, L.; Shanmugam, K.; Mokhtari, A.; Shakkottai, S. InfoNCE Loss Provably Learns Cluster-Preserving Representations. In Proceedings of the Thirty-Sixth Conference on Learning Theory, PMLR, Bangalore, India, 12–15 July 2023; Volume 195, pp. 1914–1961. Available online: https://proceedings.mlr.press/v195/parulekar23a.html (accessed on 4 December 2025).

- Bengio, Y.; Courville, A.; Vincent, P. Representation Learning: A Review and New Perspectives. IEEE Trans. Pattern Anal. Mach. Intell. 2013, 35, 1798–1828.

- Yao, Y.; Li, H.; Zheng, H.; Zhao, B.Y. Latent Backdoor Attacks on Deep Neural Networks. In Proceedings of the 2019 ACM SIGSAC Conference on Computer and Communications Security, London, UK, 11–15 November 2019; pp. 2041–2055.

- O’Shea, T.J.; Corgan, J.; Clancy, T.C. Convolutional Radio Modulation Recognition Networks. In Proceedings of the Engineering Applications of Neural Networks; Jayne, C., Iliadis, L., Eds.; Communications in Computer and Information Science; Springer: Cham, Switzerland, 2016; Volume 629, pp. 213–226.

- Chen, B.; Carvalho, W.; Baracaldo, N.; Ludwig, H.; Edwards, B.; Lee, T.; Molloy, I.; Srivastava, B. Detecting Backdoor Attacks on Deep Neural Networks by Activation Clustering. arXiv 2018, arXiv:1811.03728.

- Karimi, H.; Nutini, J.; Schmidt, M. Linear convergence of gradient and proximal-gradient methods under the Polyak-Łojasiewicz condition. In Proceedings of the Joint European Conference on Machine Learning and Knowledge Discovery in Databases; Springer: Cham, Switzerland, 2016; pp. 795–811.

| Paper | Setting/Domain | Purpose | Method Summary | Relation to Our Work |

|---|---|---|---|---|

| Backdoor Defense with Machine Unlearning (BAERASER) [38] | Centralized CNNs | Defense | Trigger pattern recovery via entropy maximization + gradient-ascent unlearning to erase backdoor features | Closest baseline; however, operates in pixel space, not semantic feature space. Our method targets semantic representations in wireless signal reconstruction. |

| Unlearning Backdoor Attacks in Federated Learning [39] | Federated Learning | Defense | Historical update subtraction + teacher–student distillation to remove attacker contributions without client participation | Defense for distributed systems; shows unlearning can remove backdoors. Different training architecture; complements our centralized semantic approach. |

| Verifiable Machine Unlearning with Invisible Backdoor Triggers [40] | MLaaS/Cloud ML | Verification | Invisible LSB-based triggers embedded to validate whether deletion actually happened; verification-focused unlearning | Related to unlearning security, not direct defense. Supports motivation for trustworthy unlearning mechanisms. |

| Backdoor Attacks via Machine Unlearning [41] | Centralized ML | Attack | Malicious unlearning requests used to inject backdoor triggers without poisoning; optimization-based trigger construction | Motivates threat model; shows unlearning itself can become an attack vector. Our work instead focuses on semantic-level unlearning for defense. |

| Our Work (SCU) | Centralized Semantic Comm. | Defense | VIB-based joint encoder–decoder unlearning with contrastive compensation | First unlearning defense for wireless semantic communication; operates in latent representation space (not pixel/parameter space); representing 85× improvement over detection-based defenses and fundamentally outperforming existing unlearning methods that achieve near-zero or negative mitigation. |

| Parameter | Value | Rationale |

|---|---|---|

| (regularization) | 1.0 | Balance unlearning vs. stability |

| (temperature) | 0.5 | Sharp contrastive separation |

| (JU epochs) | 10 | Empirically sufficient convergence |

| (CC epochs) | 10 | Sufficient contrastive refinement |

| Learning rate | 5 × 10⁻⁴ | Stable gradient descent |

| Batch size | 128 | Computational efficiency |

| Gradient clipping | [−1, 1] | Prevent exploding gradients |

| (VIB) | 1 × 10⁻³ | Original VIB training setting |

| Method | Clean MSE | Backdoor MSE | Mitigation (%) |

|---|---|---|---|

| Original (clean baseline) | 0.0554 | — | — |

| Poisoned (attack baseline) | 0.0561 | 0.0519 | 0.0 |

| SCU (5-seed avg.) | 0.0618 ± 0.0003 | — | 629.5 ± 191.2 |

| Retraining from scratch | 0.0549 | 0.1089 | 110.0 |

| Variational Bayesian | 0.0555 | 0.0521 | 0.5 |

| Hessian-based | 0.0555 | 0.0519 | −0.4 |

| Neural Cleanse + FT | 0.0549 | 0.0557 | 7.4 |

| Fine-Pruning (30%) | 0.0549 | 0.0575 | 10.9 |

| Activation Clustering | 0.0645 | 0.1000 | 92.8 |

| Configuration | Clean MSE | BD MSE | Mitigation (%) |

|---|---|---|---|

| Poisoned baseline | 0.0551 | 0.0532 | 0.0 |

| Joint unlearning only | 0.0598 | 1.0593 | 1891.4 |

| Contrastive only | 0.0550 | 0.0797 | 49.9 |

| Full SCU | 0.0613 | 0.8224 | 1486.1 |

| Domain | Poisoned BD MSE | SCU BD MSE | Mitigation (%) | Time (s) |

|---|---|---|---|---|

| Time | 0.0508 | 0.6979 | 1274.0 | 151.7 |

| Wavelet (db4) | 0.0704 | 0.9474 | 1246.2 | 149.3 |

| Cepstral | 0.0647 | 0.4215 | 551.5 | 151.1 |

| Laplace () | 0.1089 | 0.3650 | 235.1 | 152.5 |

| Hilbert | 0.1719 | 0.5007 | 191.3 | 153.0 |

| Frequency (FFT) | 0.1711 | 0.4103 | 139.7 | 148.7 |

| Z-Domain (STFT) | 0.1759 | 0.2396 | 36.2 | 151.8 |

| Channel Model | Clean MSE | Mitigation (%) | Change vs. AWGN (Clean MSE/Mitigation) |

|---|---|---|---|

| AWGN (baseline) | 0.0693 | 1268.2 | — |

| Rayleigh | 0.0725 | 1163.0 | +4.6%/−8.3% |

| Rician (K=10 dB) | 0.0701 | 1240.5 | +1.2%/−2.2% |

| FPR | FNR | Detected Samples | Mitigation (%) |

|---|---|---|---|

| 0.00 | 0.00 | 1080 | 640.5 |

| 0.00 | 0.05 | 1026 | 81.5 |

| 0.01 | 0.00 | 1141 | 666.4 |

| 0.05 | 0.00 | 1386 | 468.8 |

| 0.10 | 0.00 | 1692 | 480.6 |

| Method | Epochs | Time (s) | Speedup |

|---|---|---|---|

| Poisoned training | 5 | 15.8 | — |

| SCU unlearning | 20 (10 + 10) | 243.1 | — |

| Total SCU | — | 258.9 | 2.0× |

| Retraining (full) | 10 | 516.0 | 1.0× |

| Backdoor Type | Domain | Poisoned BD MSE | SCU BD MSE | Mitigation (%) |

|---|---|---|---|---|

| Blend () | Time | 0.0542 | 0.0549 | 1.3 |

| | Wavelet | 0.0327 | 0.0330 | 1.1 |

| | Cepstral | 0.0478 | 0.0493 | 3.1 |

| Sinusoidal | Time | 0.0579 | 0.0619 | 6.8 |

| | Wavelet | 0.0359 | 0.0387 | 7.6 |

| | Cepstral | 0.0576 | 0.0647 | 12.3 |

| Input-dependent | Time | 0.0653 | 0.0725 | 11.0 |

| | Wavelet | 0.0429 | 0.0492 | 14.6 |

| | Cepstral | 0.0627 | 0.1010 | 61.1 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Karahan, S.N.; Güllü, M.; Osmanca, M.S.; Barışçı, N. Semantic Communication Unlearning: A Variational Information Bottleneck Approach for Backdoor Defense in Wireless Systems. Future Internet 2026, 18, 17. https://doi.org/10.3390/fi18010017
