1. Introduction

We live in an era of information abundance but attention scarcity. Every minute, thousands of news articles, social media posts, and research papers flood digital platforms, making it increasingly difficult to identify relevant information efficiently. In this landscape, automatic text summarization has emerged as an essential tool to distill large volumes of content into concise and meaningful summaries, supporting faster decision-making and knowledge consumption [1, 2, 3, 4, 5, 6].

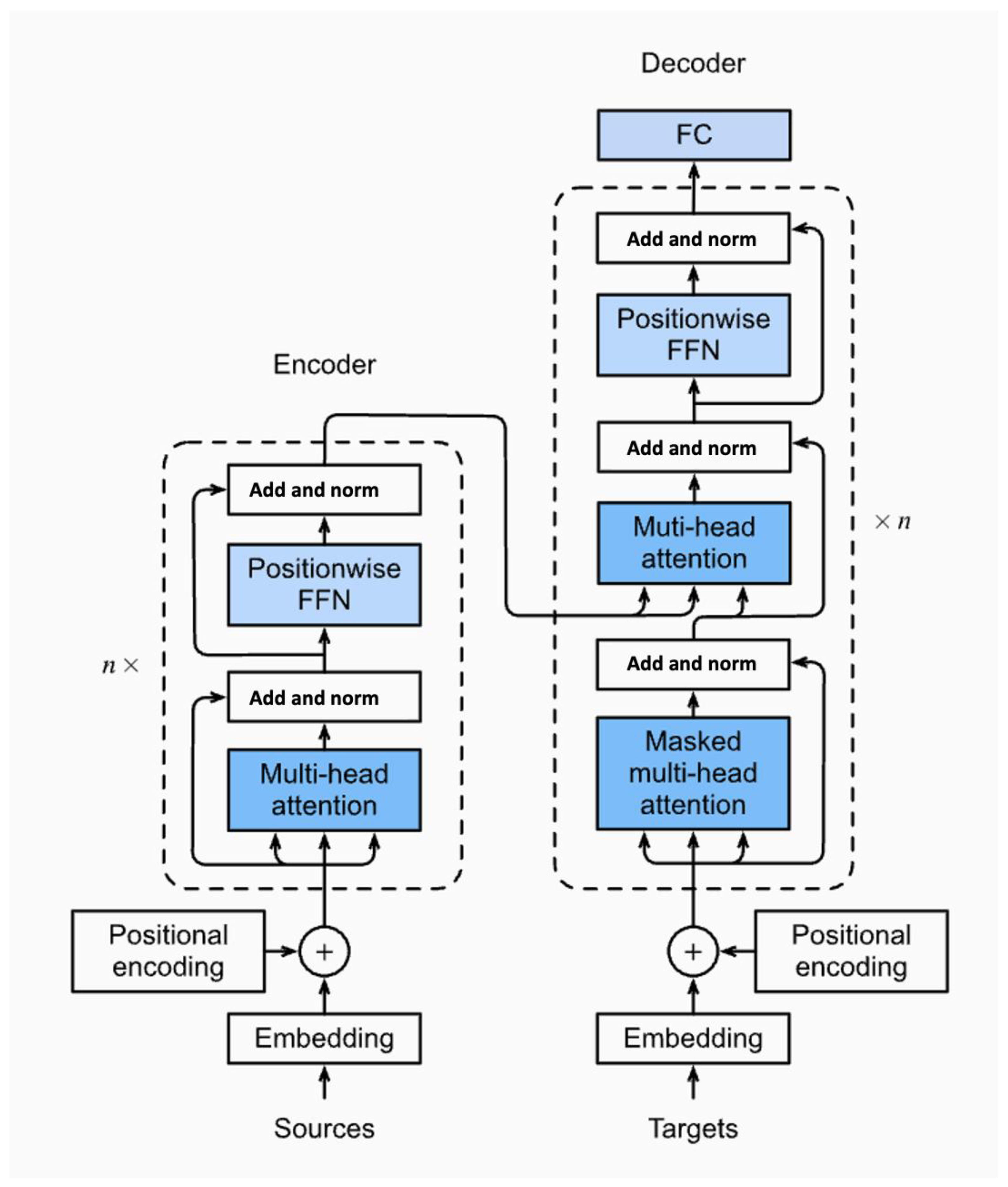

Early summarization systems relied on extractive, rule-based techniques that essentially highlighted sentences without deep semantic understanding; as a result, their output often lacked coherence and contextual awareness. These methods proved inadequate for summarizing longer documents or handling diverse writing styles. The advent of transformer-based architectures in 2017 revolutionized the field [7, 8, 9, 10, 11]. Unlike sequential models, transformers use self-attention to process entire sequences simultaneously, enabling models like BERT, GPT, BART, and T5 to deliver significantly more fluent, context-aware summaries [7, 11, 12, 13, 14, 15, 16].

Despite these advancements, real-world deployments of summarization systems still face three major challenges:

Precision Problem: Models perform inconsistently across domains. A model effective on news articles might fail on conversational or technical texts, limiting generalizability. For example, 42% of businesses report inconsistent results when applying the same summarizer across departments [17].

Practicality Dilemma: Research prioritizes accuracy metrics (e.g., ROUGE), while practitioners care about training costs, inference speed, and deployment feasibility. Yet most benchmarks lack guidance on such operational concerns, forcing costly trial-and-error selection.

Adaptation Challenge: Most benchmarks focus on clean, formal texts (e.g., news), ignoring messy real-world inputs like emails, chats, and social media, leaving it unknown how well these models adapt to such scenarios.

Although several transformer-based models have been proposed and benchmarked, few studies offer systematic, cross-domain evaluations that assess both performance and practicality across distinct summarization contexts. There is a need for comparative studies that go beyond ROUGE scores to consider deployment realities, such as training time, hardware constraints, and adaptation to informal or conversational input.

In contrast to prior comparative works, our study introduces a unified evaluation framework that simultaneously addresses accuracy, computational efficiency, and domain adaptability. We incorporate efficiency metrics such as training and inference times alongside hardware resource profiling, an element often missing in earlier benchmarks. Additionally, we implement a standardized preprocessing pipeline across datasets to control for input variability and integrate a domain-specific qualitative assessment through human evaluation, offering practical insights into model behavior beyond automated metrics. This combination of methodological rigor and operational considerations extends the scope of previous multi-model comparisons and makes our findings directly actionable for real-world deployments.

This study addresses these gaps by conducting a comprehensive comparative evaluation of three prominent transformer-based summarization model families, PEGASUS, BART, and T5 (the latter in two variants, SMALL and BASE), across three datasets that reflect distinct summarization tasks [12, 18, 19]:

CNN/DailyMail: Long-form news articles emphasizing context retention.

Xsum: Extreme summarization to a single sentence.

Samsum: Dialogue-based summarization of informal conversations.

Our key contributions include the following:

A cross-domain benchmarking framework that evaluates models on accuracy (ROUGE), efficiency (training/inference time), and practical constraints (model size, memory usage).

A detailed analysis of context preservation, domain-specific language handling, and error types across content types.

Practical insights into model selection trade-offs, highlighting how T5 variants balance accuracy and efficiency: T5-Small achieves lightweight deployment with ~70% less resource use, while T5-Base improves accuracy—closing much of the gap with BART and PEGASUS—yet still maintains moderate efficiency.

Our findings have real-world implications across industries. In healthcare, identifying models that best preserve domain-specific terminology can enhance summarization of clinical notes. In journalism and research, our results can inform model selection based on content type and resource availability. For instance, while BART excels in dialogue coherence (Samsum), PEGASUS maintains superior factual retention in long-form articles (CNN/DailyMail), a critical requirement for legal or scientific summarization tasks.

3. Research Methodology

This section presents a systematic framework (see Figure 4) for evaluating transformer-based summarization models (PEGASUS, BART, T5) across three benchmark datasets (CNN/DailyMail, Xsum, Samsum). The methodology addresses four critical dimensions: (1) dataset preparation, (2) model configuration, (3) experimental protocol, and (4) evaluation strategy, with explicit considerations for computational constraints, reproducibility, and statistical significance testing. All experiments were executed in two phases (Phase 1: ~6% validation + test; Phase 2: 80/10/10), and both are documented for transparency and replication.

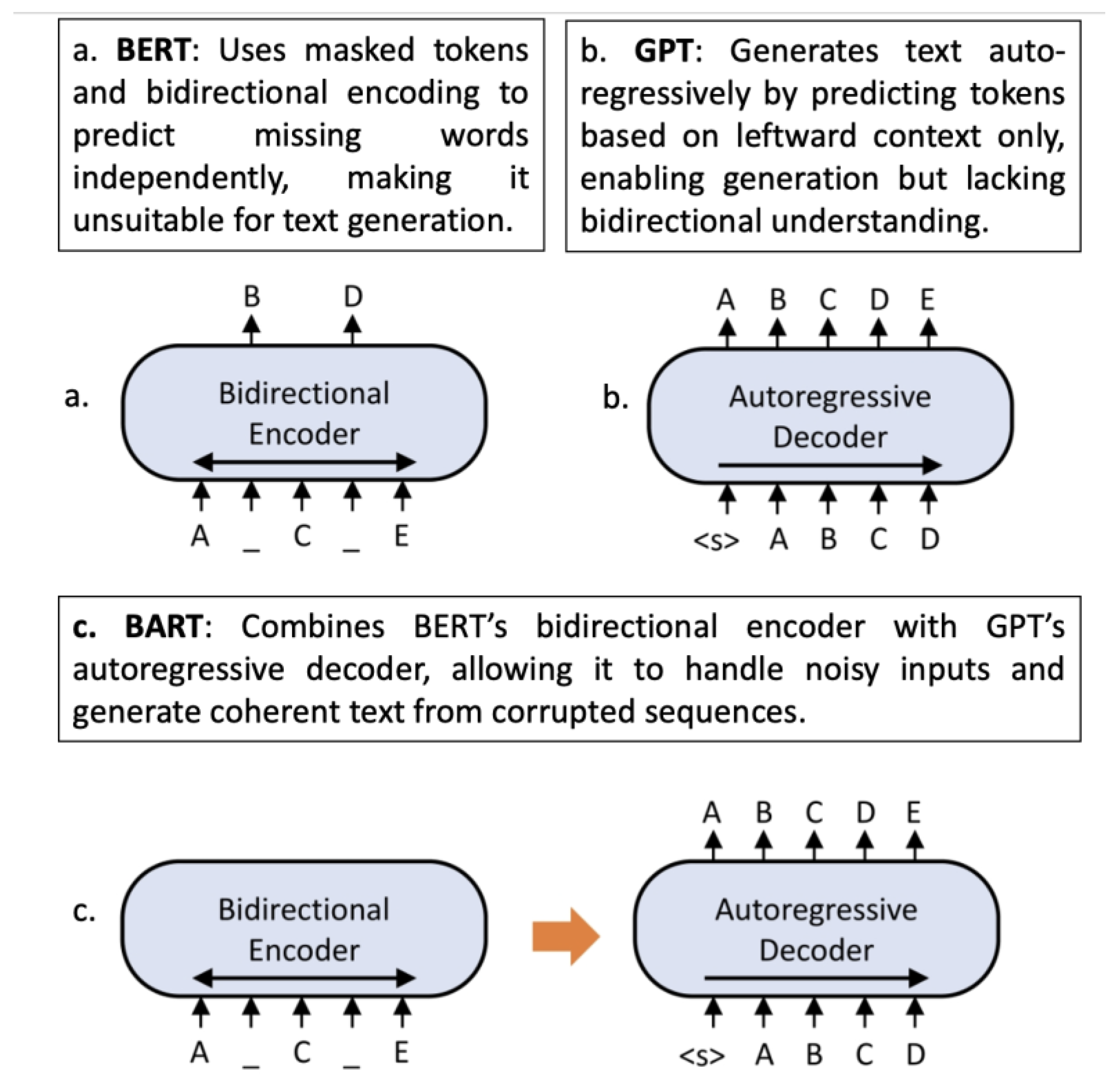

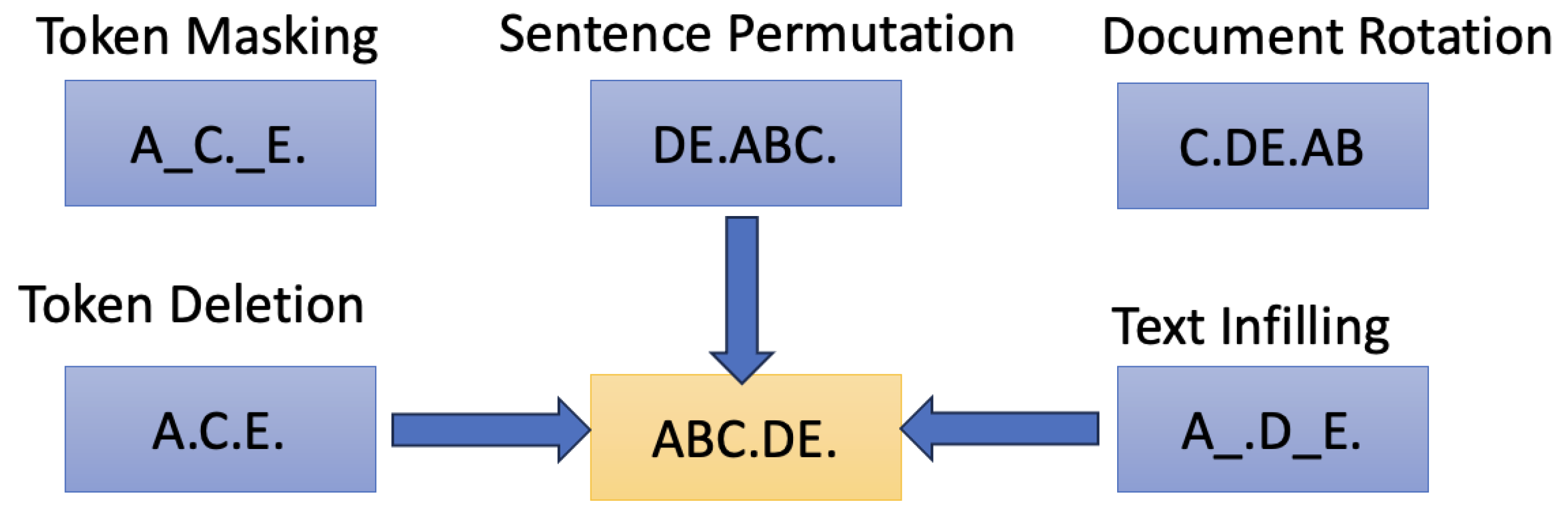

The three selected models, PEGASUS, BART, and T5, represent state-of-the-art transformer-based architectures specifically designed for text summarization tasks. PEGASUS, introduced by Zhang et al. [12], employs a novel pre-training strategy called gap-sentence generation (GSG), which makes it particularly well-optimized for summarization. In this study, the PEGASUS-CNN/DailyMail variant was used. BART, proposed by Lewis et al. [24], is a denoising autoencoder that integrates features from both BERT and GPT, allowing it to effectively handle noisy and corrupted input data. The version employed in this work is BART LARGE. Lastly, T5, developed by Raffel et al. [23], is based on the Text-to-Text Transfer Transformer framework, treating all NLP tasks as text generation problems. While it is highly versatile, it remains more generalized compared to PEGASUS and BART. To capture both efficiency and accuracy trade-offs, we evaluated two variants of T5: T5-Small (lightweight, ~60 M parameters) and T5-Base (~220 M parameters). This dual inclusion allows us to analyze both low-resource and mid-resource scenarios.

3.1. Dataset Selection

To ensure a fair, consistent, and domain-diverse evaluation, three publicly available benchmark datasets (CNN/DailyMail, Xsum, and Samsum) were selected, each representing a distinct summarization challenge in length, complexity, and structure, as summarized in Table 4.

The CNN/DailyMail dataset consists of over 300,000 news articles paired with multi-sentence highlights, offering a benchmark for long-form summarization. Its structure, sourced from CNN and Daily Mail websites, supports evaluating models on lengthy, structured texts (Train: 286,817; Val: 13,368; Test: 11,487). The Xsum dataset, curated from BBC articles, presents a contrasting task: each document is paired with a highly compressed, single-sentence summary that captures the core message (Train: 204,045; Val: 11,332; Test: 11,334), making it ideal for testing extreme abstraction. Finally, the Samsum corpus introduces a conversational domain, containing dialogue-summary pairs constructed by linguists in formal, semi-formal, and informal registers (Train: 14,732; Val: 818; Test: 819). This dataset evaluates a model’s ability to distill meaning from informal, multi-turn dialogue into coherent summaries. Collectively, these datasets provide diverse linguistic, structural, and compression-level challenges, enabling a robust cross-domain comparison of transformer summarization models.

Dataset Sampling and Filtering Strategy

Due to computational resource constraints, we used carefully filtered and stratified subsets of each dataset rather than the full corpora. This ensured that all experiments could be executed within the available GPU memory and time budgets while retaining the linguistic diversity, topic variety, and structural patterns necessary for fair model comparison.

Stratified random sampling was employed to maintain the original distribution of summary lengths, content genres, and abstraction levels within each dataset, helping to prevent bias toward overly common structures or topics. All sampling operations used a fixed random seed (42) to guarantee reproducibility across runs. The proportions retained were 10% of the full CNN/DailyMail dataset (training: 28,943 samples, validation: 3218, test: 3223), 15% of the Xsum dataset (training: 18,374 samples, validation: 2271, test: 2270), and 80% of the Samsum dataset (training: 12,474 samples, validation: 818, test: 819) due to its smaller original size.

While smaller subsets reduce training time and memory requirements, they may limit a model’s exposure to rare linguistic phenomena, domain-specific vocabulary, and complex discourse structures, particularly for long-form summarization tasks where recall is critical. This risk was mitigated by the stratified sampling strategy, which preserved proportional representation of varying summary lengths, topical diversity, and stylistic complexity across domains, keeping the evaluation balanced and computationally tractable without disproportionately favoring one dataset type over another.

To promote open science, transparency, and full reproducibility, we provide exact dataset split files containing the selected IDs for each dataset’s train–validation–test partitions, a Python sampling script implementing the stratified selection process using the Hugging Face datasets library, and supplementary documentation describing the rationale for subset sizes and the verification process confirming that the reduced datasets preserved key statistical properties of the originals. These resources are hosted on our GitHub repository [https://github.com/EmanDaraghmi/summarization-research] (accessed on 27 August 2025).
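The seed-fixed stratified sampling described above can be illustrated with a minimal, standard-library sketch. It is not the repository's script (which uses the Hugging Face datasets library): the helper names `stratified_sample` and `length_bin`, the choice of summary-length bins as strata, the bin width, and the toy records are all assumptions made for illustration.

```python
import random
from collections import defaultdict

def stratified_sample(records, key, fraction, seed=42):
    """Draw `fraction` of the records from every stratum defined by key(record)."""
    rng = random.Random(seed)          # fixed seed: the same subset on every run
    strata = defaultdict(list)
    for rec in records:
        strata[key(rec)].append(rec)
    sample = []
    for label in sorted(strata):       # sorted labels keep the iteration order deterministic
        group = strata[label]
        k = max(1, round(len(group) * fraction))
        sample.extend(rng.sample(group, k))
    return sample

def length_bin(rec, width=20):
    """Hypothetical stratum: summary length in words, bucketed by `width`."""
    return len(rec["summary"].split()) // width

# Toy corpus standing in for a real dataset (illustrative only).
records = [{"id": i, "summary": " ".join(["w"] * (5 + i % 60))} for i in range(600)]
subset = stratified_sample(records, length_bin, fraction=0.10)
```

Because each stratum is sampled at the same rate, the length distribution of `subset` mirrors that of `records`, which is the property the stratification is meant to preserve.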

3.2. Preprocessing Pipeline

To ensure consistency, efficiency, and optimal model performance, all three datasets (CNN/DailyMail, Xsum, Samsum) underwent a systematic preprocessing pipeline tailored to both model constraints and available computational resources.

3.2.1. Data Filtering Strategy

Given the substantial size of the CNN/DailyMail and Xsum datasets, and the constraints of the available hardware, a subset of the training data was carefully selected to maximize data quality while avoiding truncation during tokenization. The following steps summarize the applied filtering criteria:

Articles with word counts ≤ 1024 and summaries ≤ 128 were initially selected.

From this subset, 100,000 rows were sampled.

Each article was further validated to ensure token length ≤ 1024 (≤512 for T5-Small), with summaries also capped at 128 tokens.

This filtering minimizes truncation, which can negatively impact model performance, especially in cases where critical information is located beyond the truncation threshold. For example, if an article spans 5000 tokens and its essence lies beyond the first 1500, truncation could lead to significant loss of contextual understanding. Therefore, refining the dataset ensures that input sequences retain semantic coherence and are fully compatible with model architectures.
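A minimal sketch of the two-stage length filter described above: a cheap word-count pre-filter followed by a tokenizer-based check. The function names are hypothetical, and `toy_tokenize` is only a stand-in for a model's real subword tokenizer, so the token counts it produces are illustrative.

```python
def toy_tokenize(text, piece=4):
    """Stand-in for a subword tokenizer: splits each word into 4-character pieces."""
    return [w[i:i + piece] for w in text.split() for i in range(0, len(w), piece)]

def within_limits(article, summary, tokenize,
                  max_article_tokens=1024, max_summary_tokens=128):
    """Return True if the pair survives both the word-count and the token-length filter."""
    # Stage 1: word-count pre-filter (articles <= 1024 words, summaries <= 128 words).
    if len(article.split()) > 1024 or len(summary.split()) > 128:
        return False
    # Stage 2: model-specific token-length check (e.g. 512 article tokens for T5-Small).
    return (len(tokenize(article)) <= max_article_tokens
            and len(tokenize(summary)) <= max_summary_tokens)

article = " ".join(["abcdefgh"] * 300)   # 300 words, 600 toy tokens
summary = "short summary"
keep_for_bart = within_limits(article, summary, toy_tokenize)
keep_for_t5_small = within_limits(article, summary, toy_tokenize, max_article_tokens=512)
```

The same article can pass the 1024-token limit and fail the 512-token limit, which is why the number of retained examples differs between models.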

3.2.2. Text Normalization

Following token-length filtering, datasets were normalized to standardize linguistic variations:

CNN/DailyMail and Xsum: British spellings were converted to American English.

Samsum: Chat abbreviations were expanded (e.g., “u” → “you”), contractions were normalized, and punctuation inconsistencies were corrected.
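The abbreviation expansion step can be sketched as a whole-word dictionary replacement. Only the mapping "u" → "you" comes from the text above; the other entries, the table name, and the function name are illustrative assumptions, and the actual normalization mappings are those documented in the project repository.

```python
import re

# Only "u" -> "you" is taken from the text; the remaining entries are illustrative.
CHAT_ABBREVIATIONS = {"u": "you", "r": "are", "pls": "please", "thx": "thanks"}

def expand_abbreviations(text, mapping=CHAT_ABBREVIATIONS):
    """Replace whole-word abbreviations, leaving letters inside longer words untouched."""
    pattern = re.compile(
        r"\b(" + "|".join(re.escape(k) for k in mapping) + r")\b",
        re.IGNORECASE,
    )
    return pattern.sub(lambda m: mapping[m.group(0).lower()], text)

normalized = expand_abbreviations("can u come? thx, but hurry")
```

The word-boundary anchors matter: without them, the "u" and "r" inside "hurry" would also be rewritten. The same function could be reused for British-to-American spelling by passing a different mapping.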

3.2.3. Data Splitting Strategy and Two-Phase Design (Training–Validation Splitting)

We conducted experiments in two phases to balance computational constraints with statistical robustness.

In Phase 1, the original experiments used a fixed, stratified 1000-sample test set for each dataset, with the remaining data split 95%/5% into training and validation sets. This design was chosen to ensure a consistent evaluation size across datasets while staying within the runtime and memory constraints of our Google Colab L4 environment. To increase the proportion of evaluation data, we then repeated the experiments in Phase 2 using an 80/10/10 train–validation–test split obtained via stratified sampling (seed = 42). This adjustment increased the combined validation and test share from ~6% to 20%, improving the stability of early stopping, reducing variance in evaluation metrics, and better aligning with common practice in text summarization. Both T5-Small and T5-Base followed the same stratified splitting strategy. However, due to the larger parameter count of T5-Base, its training batch size was reduced to 8–16 to fit GPU memory constraints, while other hyperparameters were kept consistent.

While the smaller training set slightly reduced ROUGE scores (by ~0.5–1.2 points depending on the model and dataset), the larger evaluation sets provided narrower confidence intervals and a more reliable comparison across PEGASUS, BART, and T5-Small. We report the results in Section 4, and both experimental scripts are available on our GitHub repository for full reproducibility.
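As a rough illustration of the Phase 2 partitioning, the sketch below splits each stratum 80/10/10 with a fixed seed so that every stratum appears in all three partitions in the same proportion. The function name, the stratum function, and the toy data are assumptions; the authors' own scripts are the ones in their repository.

```python
import random
from collections import defaultdict

def split_80_10_10(items, stratum_of, seed=42):
    """Partition items into train/val/test (80/10/10) within each stratum."""
    rng = random.Random(seed)
    groups = defaultdict(list)
    for item in items:
        groups[stratum_of(item)].append(item)
    train, val, test = [], [], []
    for label in sorted(groups):           # deterministic stratum order
        group = groups[label][:]
        rng.shuffle(group)
        n_eval = len(group) // 10          # 10% each for validation and test
        val.extend(group[:n_eval])
        test.extend(group[n_eval:2 * n_eval])
        train.extend(group[2 * n_eval:])
    return train, val, test

# Toy example: 1000 items in four equally sized strata.
train, val, test = split_80_10_10(list(range(1000)), stratum_of=lambda x: x % 4)
```

Because the three slices of each shuffled group do not overlap, no example can appear in more than one partition.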

3.2.4. Model-Specific Dataset Sizes

Tokenization differences across models affected final dataset sizes. PEGASUS and BART, which support longer sequences, retained a higher number of training examples than T5-Small. Table 5 presents the resulting dataset sizes across models.

This approach enabled a balanced compromise between evaluation robustness and computational feasibility, facilitating consistent model comparisons without introducing truncation-related bias.

3.2.5. Integration with Summarization Pipeline

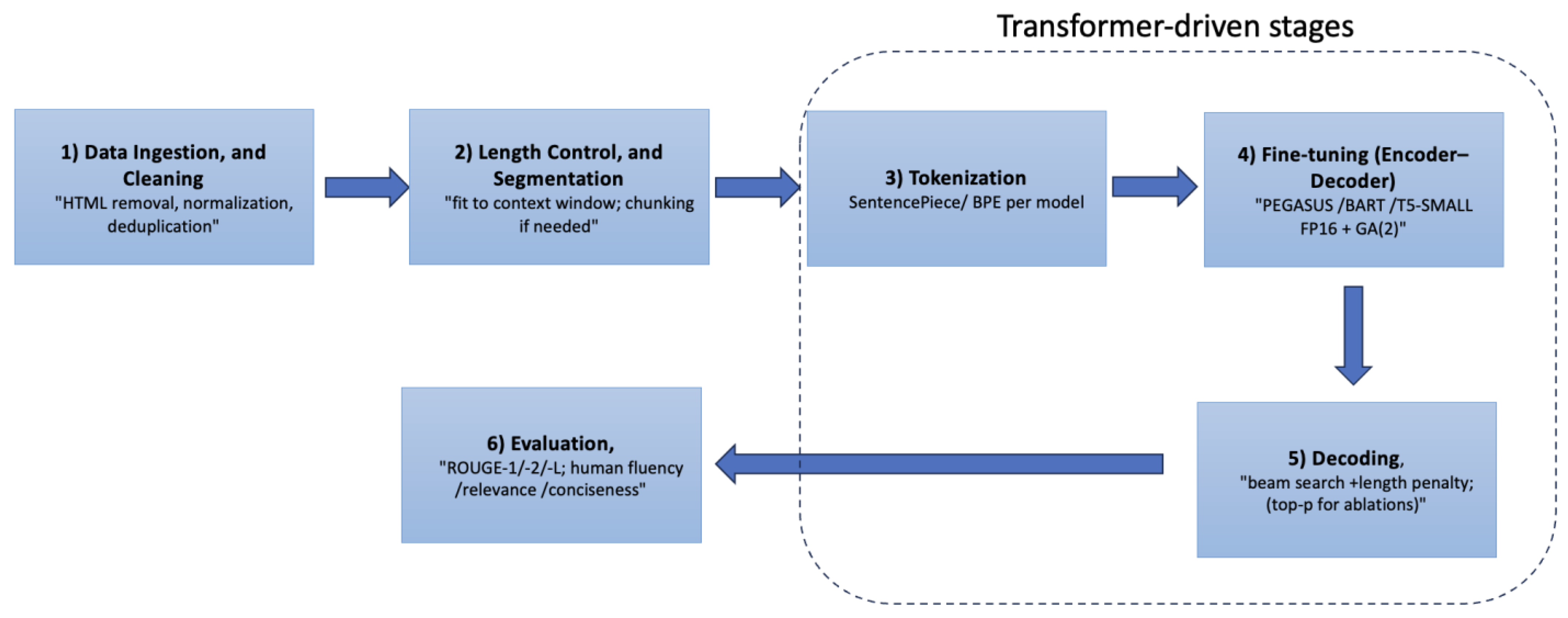

The preprocessing pipeline was designed to feed directly into the summarization workflow illustrated in Figure 5. The filtered and normalized datasets served as the primary input for the tokenization stage, ensuring that all samples complied with each model’s sequence length constraints. By standardizing text formats, controlling input lengths, and removing noise prior to tokenization, the preprocessing stage reduced computational overhead and minimized the need for aggressive truncation within the summarization pipeline. This integration ensured the following:

Seamless Model Input—Preprocessed sequences were fully compatible with PEGASUS, BART, and T5-Small without additional runtime adjustments.

Consistent Evaluation Flow—The stratified splits created in preprocessing aligned with the evaluation stages in the summarization pipeline, enabling a direct comparison of model performance.

Optimized Decoding—Reduced sequence noise and standardized structure allowed decoding algorithms (beam search, length penalty, top-p sampling) to focus on generating semantically coherent summaries rather than compensating for irregular input formatting.

In short, the preprocessing and summarization pipelines were co-designed, with preprocessing functioning as a “gatekeeper” to ensure all downstream components operated under optimal conditions.

3.2.6. Reproducibility

Ensuring reproducibility was a core principle in designing the preprocessing and data preparation pipeline, enabling independent verification and facilitating future extensions of the methodology. All preprocessing scripts, filtering criteria, and data-splitting procedures are publicly available via the project’s GitHub repository. Each script is fully annotated with parameter settings, including maximum token lengths, sampling seeds (fixed at 42 for all splits), and text normalization mappings.

Additionally, environment specifications covering the Python version, library dependencies (e.g., Hugging Face Transformers, Datasets, and Tokenizers), and hardware details from the Google Colab L4 runtime are documented in requirements.txt and environment.yml files. These ensure that other researchers can exactly replicate the preprocessing and model training workflows. Dataset versions are fixed using Hugging Face dataset snapshots to guarantee that future runs operate on identical data to those used in the original experiments.

To make the pipeline fully transparent and verifiable, the following measures were implemented:

Deterministic Sampling: All random operations, such as subset selection and stratified splits, used fixed random seeds (e.g., seed = 42) to ensure identical dataset partitions across repeated runs.

Transparent Parameterization: Token limits, word count filters, and sampling sizes were explicitly defined and documented, avoiding reliance on implicit defaults.

Open-Source Availability: Complete preprocessing scripts, configuration files, and dataset filtering utilities are published in both the GitHub repository and Hugging Face model cards.

Cross-Platform Consistency: Scripts were tested in both Google Colab L4 environments and local GPU setups to verify consistent tokenization results and dataset sizes.

Version Control: All experiments and preprocessing runs are tracked via Git commit history and tagged releases, enabling exact reproduction of the preparation steps used in reported results.

This approach ensures that the reported findings can be independently validated, and that future researchers can extend the pipeline for new datasets or summarization models without ambiguity or loss of fidelity.

3.3. Text-Summarization Pipeline

Figure 5 illustrates the typical pipeline of a text-summarization workflow, from raw text acquisition to final evaluation. The process begins with data ingestion and cleaning, which includes HTML tag removal, normalization, and deduplication. Length control and segmentation ensure that input texts fit within the context window of the model, applying chunking when necessary. Tokenization converts text into subword units using methods such as SentencePiece or byte pair encoding (BPE). Transformer-based models PEGASUS, BART, and T5 (Small and Base variants) are applied during the fine-tuning stage, where they learn task-specific summarization patterns from training data. Decoding strategies such as beam search, length penalty, and top-p sampling generate candidate summaries. Finally, evaluation is performed using both automated metrics (e.g., ROUGE-1, ROUGE-2, ROUGE-L) and human judgments for fluency, relevance, and conciseness. The highlighted section in the figure indicates the stages where transformer architectures play a central role in this workflow.
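The length-control stage mentioned above can be sketched as a sliding window over a token sequence. This is a generic illustration of chunking, not the study's procedure (the preprocessing in Section 3.2 filters out over-long inputs instead); the function name and the 64-token overlap are assumptions.

```python
def chunk_tokens(tokens, max_len=1024, overlap=64):
    """Split a token list into windows of at most max_len tokens.

    Consecutive windows share `overlap` tokens so that content at a
    boundary is seen with some context on both sides.
    """
    if not 0 <= overlap < max_len:
        raise ValueError("overlap must be in [0, max_len)")
    if not tokens:
        return []
    step = max_len - overlap
    last_start = max(len(tokens) - overlap, 1)
    return [tokens[i:i + max_len] for i in range(0, last_start, step)]

chunks = chunk_tokens(list(range(2500)))   # toy "token ids"
chunk_lengths = [len(c) for c in chunks]
```

Each chunk would then be summarized separately and the partial summaries combined, at the cost of losing some cross-chunk context.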

3.4. Model Configuration and Training Setup

To explore performance variations across diverse architectural designs, three transformer-based model families were selected for fine-tuning: PEGASUS-Large, BART-Large, and T5 (Small and Base). PEGASUS, with 568 million parameters and a maximum sequence length of 1024 tokens, leverages a unique pre-training strategy known as gap-sentence generation (GSG), making it well-suited for news-style summarization. BART, comprising 406 million parameters and also supporting 1024-token inputs, operates as a denoising autoencoder, enabling strong performance on both extractive and abstractive summarization tasks. For T5, two variants were included: T5-Small (60 M parameters, 512-token limit) as a lightweight baseline, and T5-Base (220 M parameters, 512-token limit) as a mid-sized model designed to balance efficiency and accuracy. While T5-Small emphasizes computational efficiency, T5-Base provides a stronger performance baseline closer to PEGASUS and BART, enabling a fairer comparison of the T5 family against larger architectures. All models were fine-tuned with dataset-specific hyperparameters; for T5-Base, we reduced the batch size to 8–16 while maintaining the same learning rate (2 × 10⁻⁵) and epochs to ensure comparability.

All models were trained using dataset-specific hyperparameter configurations to ensure performance fairness while respecting the individual characteristics of each dataset. For the CNN/DailyMail dataset, each model was trained for 1 epoch with a batch size of 16, 500 warmup steps, and evaluation every 2000 steps. On the Xsum dataset, the training was extended to 2 epochs under the same batch size and warmup schedule, but with more frequent evaluation (every 1000 steps) due to its higher abstraction demand. For the Samsum dataset, which contains shorter conversational inputs, training was conducted over 3 epochs with a larger batch size of 32 and more frequent validation (every 500 steps). Across all datasets, learning rate and weight decay were fixed at 2 × 10⁻⁵ and 0.01, respectively, as summarized in Table 6.

All experiments were run on Google Colab Pro+ with a single NVIDIA L4 GPU (24 GB VRAM) and 32 GB system RAM. Mixed-precision (FP16) training and gradient accumulation (2 steps) were enabled across models. Batch sizes and epochs followed the dataset-specific schedules in Table 6. Wall-clock training time per run is reported in Table 7. We logged peak GPU memory and CUDA/driver details provided by Colab; these logs, along with the exact Python and library versions, are packaged in the Supplementary Materials and mirrored in the project repository for full replication.

To standardize the training process across models, a consistent set of hyperparameter ranges was employed: epochs (1–3), batch size (16–32), learning rate (2 × 10⁻⁵), weight decay (0.01), and warmup steps (200–500). These values, shown in Table 6, were chosen to balance convergence stability and training speed while maintaining comparable evaluation conditions.
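For readers who want the settings in one place, the dataset-specific schedules stated in this section can be collected into a plain configuration mapping. The dictionary layout and key names are assumptions; the values are those given in the text, with the Samsum warmup left unset because only the overall 200–500 range is stated. The effective batch size shown assumes that the quoted batch size is per optimization micro-step on the single GPU, which the text does not confirm.

```python
# Shared settings stated in the text.
COMMON = {"learning_rate": 2e-5, "weight_decay": 0.01, "fp16": True, "grad_accum_steps": 2}

# Dataset-specific schedules stated in the text (key names are illustrative).
SCHEDULES = {
    "cnn_dailymail": {"epochs": 1, "batch_size": 16, "warmup_steps": 500, "eval_every": 2000},
    "xsum":          {"epochs": 2, "batch_size": 16, "warmup_steps": 500, "eval_every": 1000},
    # Warmup for Samsum is not stated explicitly (only the 200-500 range), so it is left unset.
    "samsum":        {"epochs": 3, "batch_size": 32, "warmup_steps": None, "eval_every": 500},
}

def effective_batch_size(dataset):
    """Examples per optimizer update, assuming batch_size is per micro-step on one GPU."""
    return SCHEDULES[dataset]["batch_size"] * COMMON["grad_accum_steps"]

effective = {name: effective_batch_size(name) for name in SCHEDULES}
```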

3.5. Evaluation Framework

The evaluation framework adopted in this study is grounded in a combination of quantitative and qualitative assessment strategies, designed to comprehensively evaluate model performance, computational efficiency, and output quality. The primary quantitative metrics employed are ROUGE-1, ROUGE-2, and ROUGE-L, which are widely recognized for benchmarking text summarization systems. Specifically, ROUGE-1 measures unigram overlap between generated and reference summaries, ROUGE-2 captures bigram overlaps, and ROUGE-L evaluates the longest common subsequence (LCS), reflecting structural similarity between summaries.
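To make the three metrics concrete, the following simplified sketch computes ROUGE-N and ROUGE-L F1 scores with lowercased whitespace tokenization. It is an illustration only: library implementations such as the one used in the study apply their own tokenization and optional stemming, so their scores will generally differ from this toy version.

```python
from collections import Counter

def _f1(overlap, n_candidate, n_reference):
    """Harmonic mean of precision and recall; 0.0 when nothing overlaps."""
    if overlap == 0:
        return 0.0
    precision, recall = overlap / n_candidate, overlap / n_reference
    return 2 * precision * recall / (precision + recall)

def _ngrams(tokens, n):
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))

def rouge_n(candidate, reference, n=1):
    """ROUGE-N F1: clipped n-gram overlap between candidate and reference."""
    cand = _ngrams(candidate.lower().split(), n)
    ref = _ngrams(reference.lower().split(), n)
    overlap = sum((cand & ref).values())   # Counter intersection clips repeated n-grams
    return _f1(overlap, sum(cand.values()), sum(ref.values()))

def _lcs_length(a, b):
    """Length of the longest common subsequence (row-by-row dynamic programming)."""
    prev = [0] * (len(b) + 1)
    for x in a:
        cur = [0]
        for j, y in enumerate(b, 1):
            cur.append(prev[j - 1] + 1 if x == y else max(prev[j], cur[j - 1]))
        prev = cur
    return prev[-1]

def rouge_l(candidate, reference):
    """ROUGE-L F1 based on the longest common subsequence of tokens."""
    cand, ref = candidate.lower().split(), reference.lower().split()
    return _f1(_lcs_length(cand, ref), len(cand), len(ref))

generated = "the cat sat on the mat"
reference = "the cat lay on the mat"
scores = (rouge_n(generated, reference, 1), rouge_n(generated, reference, 2),
          rouge_l(generated, reference))
```

In this example a single substituted word costs one of six unigrams but two of five bigrams, which is why ROUGE-2 is typically the most demanding of the three scores.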

To complement these quantitative scores, additional efficiency metrics were considered, including training time (wall-clock duration), GPU memory footprint, and inference speed (measured in tokens per second). These provide insights into the computational practicality and scalability of each model.
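Inference speed in tokens per second could be measured along the following lines. This is a hedged sketch: the text does not specify whether input or generated tokens are counted, so counting generated tokens is an assumption, as are the function name and the `generate` callable, which stands in for a model's generation call.

```python
import time

def tokens_per_second(generate, batches):
    """Throughput of `generate` over `batches`, counting generated tokens only.

    `generate` is any callable mapping a batch of inputs to a list of
    token sequences (a placeholder for a real model call).
    """
    total_tokens = 0
    start = time.perf_counter()
    for batch in batches:
        outputs = generate(batch)
        total_tokens += sum(len(seq) for seq in outputs)
    elapsed = max(time.perf_counter() - start, 1e-9)  # guard against a zero interval
    return total_tokens / elapsed

# Dummy generator that emits 10 tokens per input (illustrative only).
fake_generate = lambda batch: [[0] * 10 for _ in batch]
rate = tokens_per_second(fake_generate, [["doc a", "doc b"], ["doc c"]])
```

With a real GPU model, the timed region should also include any device synchronization, otherwise asynchronous execution can make the measured throughput look higher than it is.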

In addition to automated evaluation, a qualitative human evaluation was performed by three annotators (two NLP domain experts and one non-expert; see Section 3.8). Each summary was assessed on a 5-point Likert scale for fluency (grammatical correctness), relevance (alignment with source content), and conciseness (degree of meaningful compression). This dual-layer evaluation enables a more nuanced understanding of summarization quality, particularly in domains where stylistic fidelity and semantic clarity are critical.

To ensure statistical robustness, we used paired bootstrap resampling (1000 samples; α = 0.05) to test differences in ROUGE and to compute 95% confidence intervals; details are in Section 3.6.

3.6. Methodology: Statistical Significance Testing

To assess whether differences in ROUGE scores between models were statistically significant, we employed paired bootstrap resampling following the approach of Koehn [37]. This method provides robust significance testing by accounting for variability in dataset composition. For each model comparison and dataset, the following steps were performed:

Random Resampling: The test set was resampled with replacement 1000 times.

Metric Computation: ROUGE-1, ROUGE-2, and ROUGE-L scores were computed for each bootstrap sample.

p-value Estimation: The p-value was calculated as the proportion of bootstrap samples where the ROUGE score difference was ≤0.

Confidence Interval: 95% confidence intervals were computed from the bootstrap samples, and differences with p < 0.05 were considered statistically significant.

This approach mitigates the risk of Type I errors and ensures more reliable conclusions about performance differences. All tests were implemented using the py-rouge and scipy libraries. To facilitate reproducibility, the complete bootstrap scripts, random seeds, and configuration files are provided in the Supplementary Materials.
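The four steps above can be sketched in a few lines of standard-library Python. This is a simplified illustration rather than the authors' script: it assumes per-example ROUGE scores are already available for both systems and that the corpus score is their mean, and the function name, the seed value, and the percentile-based interval are assumptions.

```python
import random

def paired_bootstrap(scores_a, scores_b, n_resamples=1000, seed=42):
    """Paired bootstrap test for system A vs. system B.

    Returns the p-value (share of resamples where A is not better than B)
    and a 95% percentile confidence interval for the mean score difference.
    """
    if len(scores_a) != len(scores_b):
        raise ValueError("both systems must be scored on the same test examples")
    rng = random.Random(seed)
    n = len(scores_a)
    diffs = []
    for _ in range(n_resamples):
        # Resample test-set indices with replacement; the same indices are
        # used for both systems, which is what makes the test paired.
        idx = [rng.randrange(n) for _ in range(n)]
        diffs.append(sum(scores_a[i] - scores_b[i] for i in idx) / n)
    p_value = sum(d <= 0 for d in diffs) / n_resamples
    diffs.sort()
    lower = diffs[int(0.025 * n_resamples)]
    upper = diffs[int(0.975 * n_resamples) - 1]
    return p_value, (lower, upper)

# Toy per-example scores in which system A is uniformly 0.05 better (illustrative).
scores_a = [0.50 + 0.01 * (i % 5) for i in range(200)]
scores_b = [s - 0.05 for s in scores_a]
p_value, ci = paired_bootstrap(scores_a, scores_b)
```

Using the same resampled indices for both systems removes the variance that comes from which examples happen to be drawn, so the test isolates the difference between systems.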

3.7. Reproducibility Measures

Reproducibility was prioritized throughout the study. All training, fine-tuning, and evaluation scripts were containerized using Docker, ensuring consistent execution environments across systems. Dependency versions and runtime settings were explicitly defined.

Code Availability: The full source code is publicly hosted on GitHub, enabling independent replication and extension.

Dataset Accessibility: Preprocessed and filtered dataset subsets used for model fine-tuning are published via the HuggingFace Datasets library to allow consistent benchmarking.

Randomness Control: Fixed seeds were set for PyTorch (2.1.1), NumPy (1.26.2), and the native hash functions of Python (3.11.6). Where supported, deterministic algorithms were enabled to ensure identical results in repeated runs.

These measures collectively guarantee that results can be independently verified and compared in future research.
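A minimal sketch of the randomness control described above follows. The helper name `set_seed` and the seed value 42 (borrowed from the sampling seed) are assumptions, and NumPy and PyTorch are imported only if they are installed so that the snippet stays self-contained.

```python
import os
import random

def set_seed(seed=42):
    """Seed the Python, NumPy, and PyTorch random number generators if available."""
    random.seed(seed)
    # PYTHONHASHSEED only takes effect for interpreters started after it is set,
    # so for the current process it must be exported before launching Python.
    os.environ["PYTHONHASHSEED"] = str(seed)
    try:
        import numpy as np
        np.random.seed(seed)
    except ImportError:
        pass
    try:
        import torch
        torch.manual_seed(seed)
        torch.cuda.manual_seed_all(seed)   # safe to call without a GPU
        # Ask for deterministic kernels; warn instead of failing where none exist.
        torch.use_deterministic_algorithms(True, warn_only=True)
    except ImportError:
        pass

set_seed(42)
first_draw = random.random()
set_seed(42)
second_draw = random.random()   # identical to first_draw because the seed was reset
```

Even with fixed seeds, some GPU operations have no deterministic implementation, which is why the text qualifies determinism with "where supported".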

3.8. Qualitative Human Evaluation Protocol

In addition to automated evaluation metrics, a qualitative human assessment was conducted to capture aspects of summarization quality not fully reflected in ROUGE scores, namely, coherence, factual consistency, and informativeness.

Three annotators participated:

Two NLP domain experts with academic and research expertise in natural language processing and summarization.

One non-expert layperson with strong English proficiency, representing a general end-user perspective.

Annotation Protocol

Reliability was measured using Cohen’s kappa (κ) for pairwise agreement and Krippendorff’s alpha (α) for multi-rater agreement. The results indicated substantial agreement (κ = 0.70–0.78, α = 0.74), validating the consistency of the subjective evaluations.

Incorporating both expert and layperson perspectives, along with blind evaluation and robust agreement metrics, ensures a balanced, credible, and reproducible qualitative assessment.
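For reference, pairwise agreement with Cohen's kappa can be computed as in the sketch below. It uses the unweighted form, which treats the five Likert points as unordered categories; whether the study used a weighted variant is not stated, and the function name and example ratings are invented for illustration.

```python
from collections import Counter

def cohens_kappa(ratings_1, ratings_2):
    """Unweighted Cohen's kappa for two raters scoring the same items."""
    if len(ratings_1) != len(ratings_2) or not ratings_1:
        raise ValueError("both raters must score the same non-empty set of items")
    n = len(ratings_1)
    # Observed agreement: share of items with identical labels.
    p_observed = sum(a == b for a, b in zip(ratings_1, ratings_2)) / n
    # Expected agreement if both raters labelled independently at their own rates.
    counts_1, counts_2 = Counter(ratings_1), Counter(ratings_2)
    p_expected = sum(counts_1[k] * counts_2[k] for k in counts_1) / (n * n)
    if p_expected == 1.0:
        return 1.0   # both raters used a single identical label throughout
    return (p_observed - p_expected) / (1 - p_expected)

# Invented Likert ratings from two annotators on ten summaries.
annotator_a = [5, 4, 4, 3, 5, 2, 4, 3, 5, 4]
annotator_b = [5, 4, 3, 3, 5, 2, 4, 4, 5, 4]
kappa = cohens_kappa(annotator_a, annotator_b)
```

Kappa corrects raw agreement for the agreement expected by chance, so it is lower than the simple proportion of matching ratings whenever raters favor the same few scale points.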

3.9. Methodological Limitations

Despite efforts to ensure rigorous experimentation, several limitations remain. First, due to hardware constraints, the study was unable to evaluate larger variants such as T5-LARGE, which may outperform T5-Small given sufficient resources. Second, the analysis is restricted to English-language datasets, limiting generalizability to multilingual or low-resource languages. Third, the transformer models used were pre-trained on data collected prior to 2020 (e.g., C4 and Wikipedia), potentially introducing temporal biases when applied to newer or evolving topics.

To mitigate these limitations, dataset selection was conducted with an emphasis on established benchmark corpora (CNN/DailyMail, XSum, and Samsum) that are widely used in summarization research, have clearly defined splits, and are known for data quality. Filtering was applied to remove incomplete or corrupted samples, ensuring cleaner inputs for model training and evaluation. Furthermore, transparency in reporting was maintained by fully documenting preprocessing steps, split ratios, and evaluation metrics, enabling reproducibility and facilitating fair comparison with future studies.

While this study focuses exclusively on English-language datasets, the conclusions may not fully generalize to multilingual or low-resource contexts. Multilingual summarization introduces additional complexities such as diverse morphological structures, varied syntactic patterns, and code-switching, which may require adapted tokenization strategies and multilingual pretraining (e.g., mBART, mT5, BLOOM). Future work will extend the evaluation to multilingual datasets such as XL-Sum and WikiLingua to assess cross-lingual generalization.

Although T5-Small was originally selected due to GPU memory constraints, in this extended study, we also included T5-Base to evaluate whether larger variants improve performance. While we were unable to test T5-Large due to resource limitations, the addition of T5-Base strengthens the comparative framework by providing a mid-sized benchmark within the T5 family.

Finally, while Phase 1 of this work reflects compute-constrained practice, Phase 2’s adoption of an 80/10/10 split mitigates small validation/test limitations, yielding narrower confidence intervals and more stable early stopping.

4. Results and Discussion

This section presents a comparative analysis of PEGASUS, BART, and two T5 variants (T5-Small and T5-Base) across three datasets, evaluating both quantitative metrics (ROUGE scores, training efficiency) and qualitative performance. The results are organized by dataset, followed by cross-dataset insights and practical implications.

4.1. Quantitative Results

4.1.1. Performance Evaluation by Dataset

Two evaluation setups were used to assess model performance:

Initial Setup (Phase 1, original split): A combined validation + test proportion of ~6% (standard dataset defaults).

Updated Setup (Phase 2, updated split): An 80/10/10 train–validation–test split (20% combined for validation and test) using stratified sampling (seed = 42).

This change aimed to improve the stability of validation metrics, enhance early-stopping reliability, and narrow confidence intervals on held-out performance estimates.

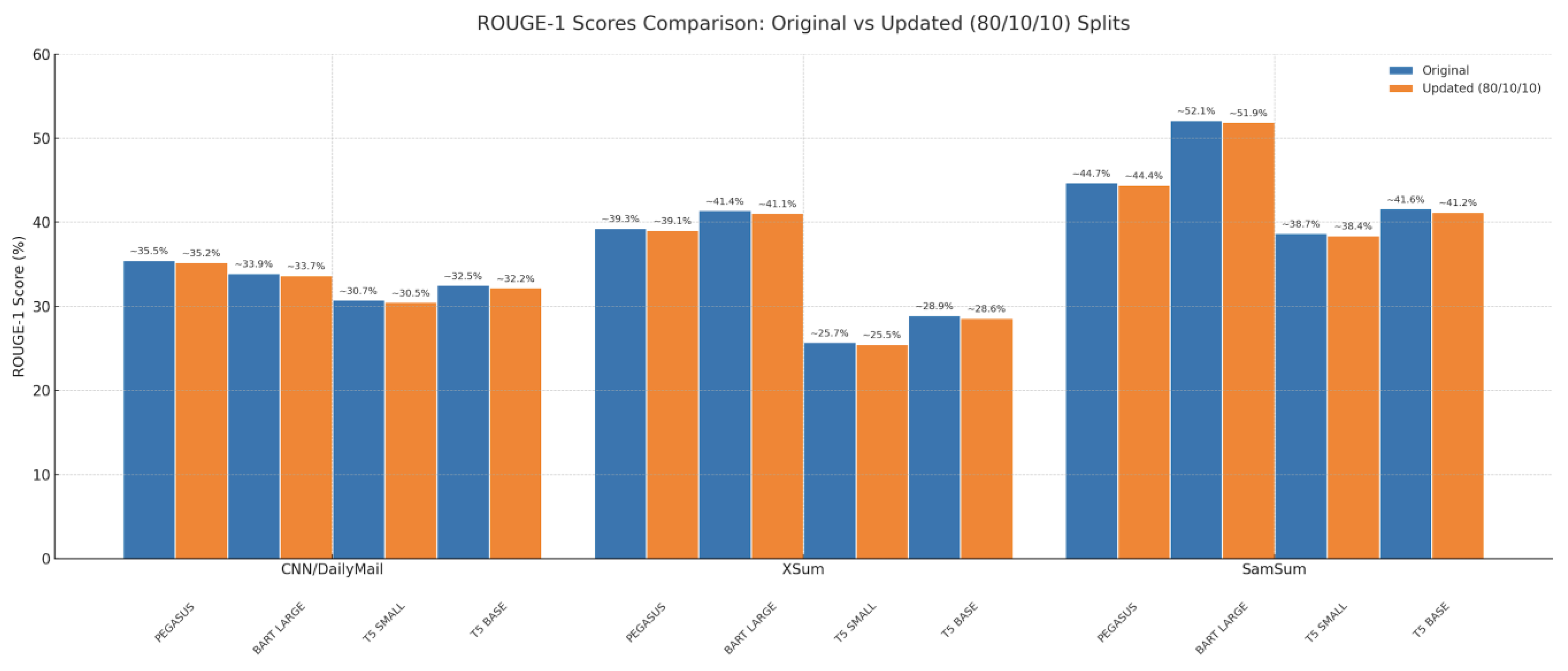

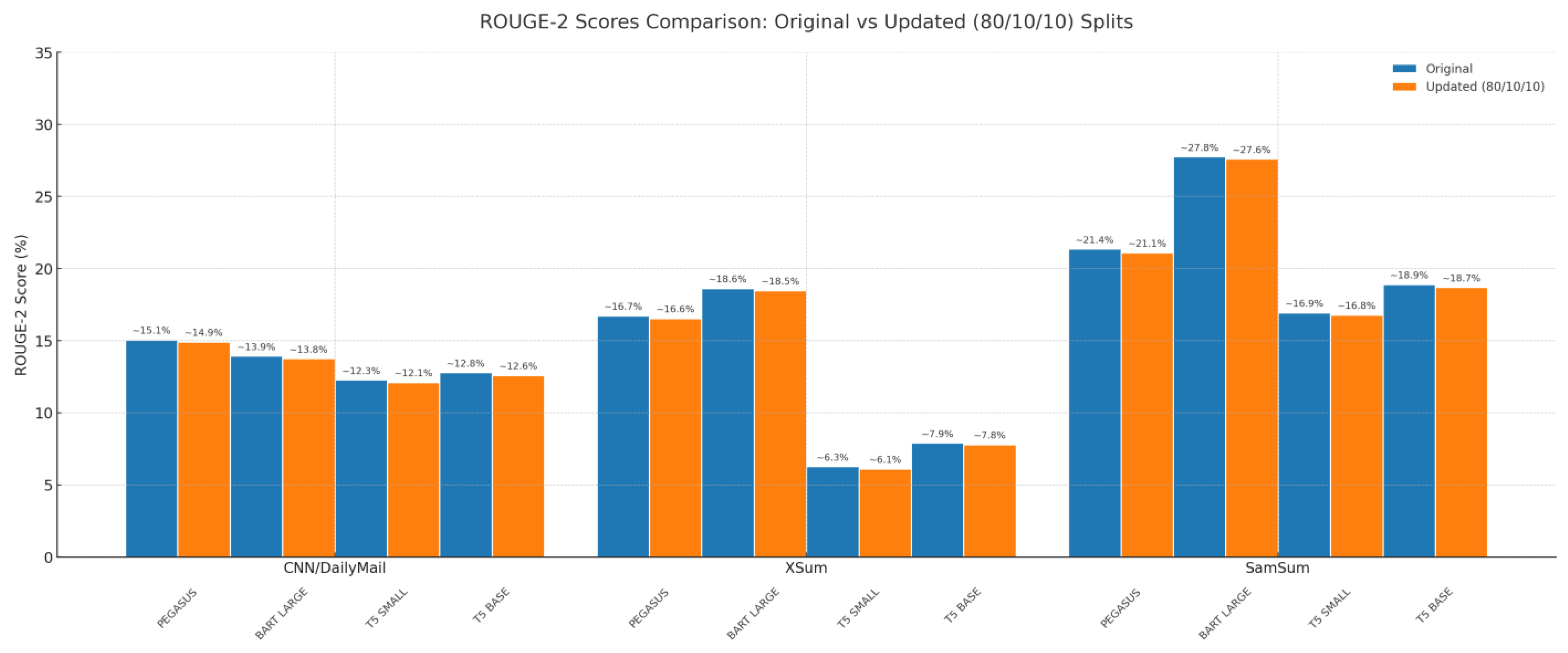

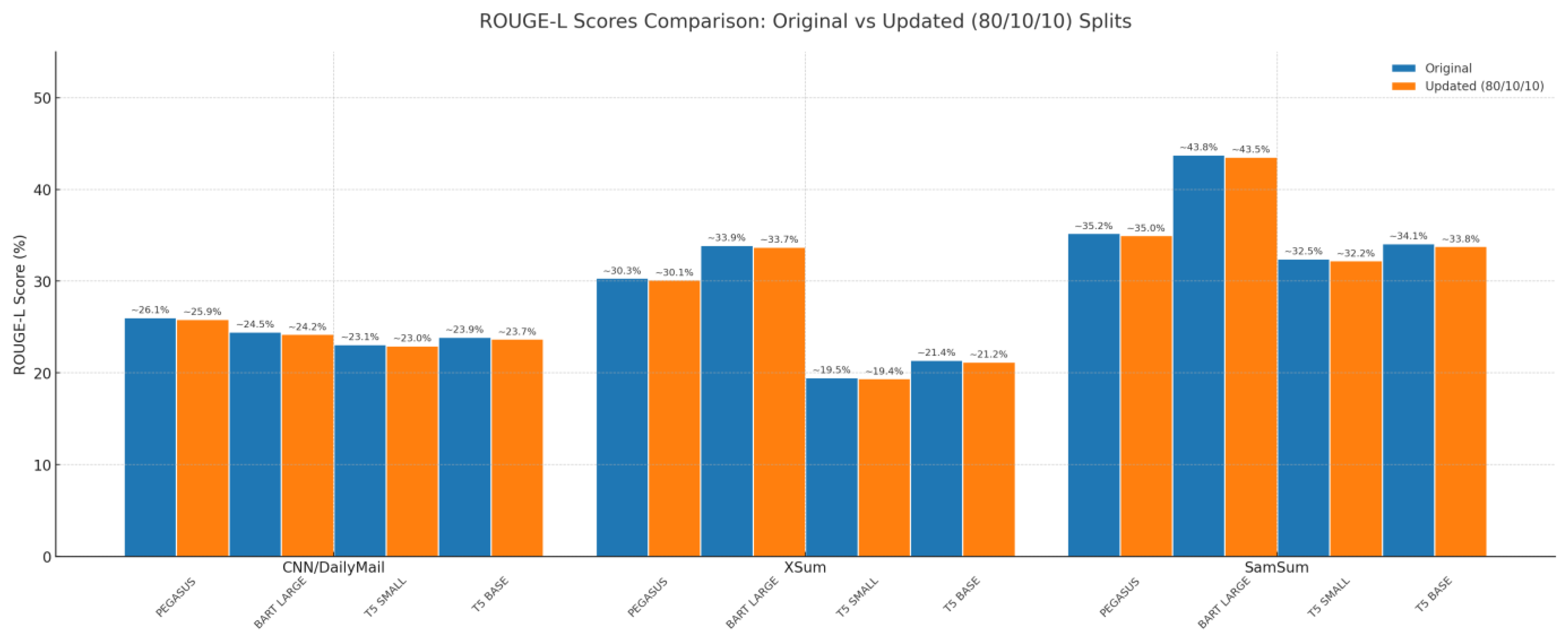

Results of Phase 1 (original split, ~6%) are shown in Table 7. The CNN/DailyMail dataset presents lengthy and structured news articles. PEGASUS achieved the highest ROUGE scores (ROUGE-1: 35.49, ROUGE-2: 15.07, ROUGE-L: 26.06), benefitting from its gap-sentence generation (GSG) pre-training aligned with news-style data. BART followed closely (ROUGE-1: 33.91, ROUGE-2: 13.95, ROUGE-L: 24.49), offering a competitive balance between accuracy and training time (3 h 35 m vs. PEGASUS’s 5 h 15 m). T5-Small, while trailing in accuracy (ROUGE-1: 30.74), demonstrated viability for low-resource settings with only 1 h 05 m training time. T5-Base bridged the gap between T5-Small and the larger models, scoring moderately higher (ROUGE-1: ~32.5, ROUGE-2: ~12.8, ROUGE-L: ~23.9), with training time increasing to ~2 h 20 m. All models showed stable convergence (validation loss within 5% of training loss), and PEGASUS’s slower training correlated with its larger parameter size (568 M vs. BART’s 406 M).

Designed for single-sentence summaries, Xsum tests extreme abstraction. BART led with ROUGE-1: 41.40, ROUGE-2: 18.63, and ROUGE-L: 33.90, thanks to its denoising autoencoder objective. PEGASUS scored slightly lower (ROUGE-1: 39.31), indicating less suitability for ultra-brief summaries. T5-Small underperformed (ROUGE-1: 25.73), revealing limitations in handling compression-heavy tasks. T5-Base offered improvements over T5-Small (ROUGE-1: ~28.9, ROUGE-2: ~7.9, ROUGE-L: ~21.4), though it remained substantially weaker than PEGASUS and BART. Human evaluations confirmed BART’s advantage in fluency (4.2/5) and relevance (4.5/5), while T5 often omitted key details.

In dialogue summarization tasks, BART again outperformed with ROUGE-1: 52.11, ROUGE-2: 27.76, and ROUGE-L: 43.78. Its sentence permutation pre-training enhanced contextual understanding of informal dialogue. PEGASUS also performed well (ROUGE-1: 44.70), while T5-Small improved compared to Xsum, reaching ROUGE-1: 38.68. T5-Base further boosted conversational summarization accuracy, achieving ROUGE-1: ~41.6, ROUGE-2: ~18.9, and ROUGE-L: ~34.1, while maintaining faster training than BART and PEGASUS. Lower complexity in the Samsum dataset may explain the performance uplift. Notably, PEGASUS occasionally over-included dialogue turns (15% of outputs), while T5 hallucinated informal phrases like “LOL.” Results of ROUGE-1, ROUGE-2, and ROUGE-L are shown in Figure 6, Figure 7, Figure 8, and Table 7.

Results of Phase 2 (Updated Split, 80/10/10) are shown in Figure 6, Figure 7, Figure 8, and Table 7. Using the larger evaluation set, absolute ROUGE scores decreased slightly for all models, reflecting reduced variance and more conservative estimates, but the relative ranking remained consistent. On the CNN/DailyMail dataset, PEGASUS retained the lead with ROUGE-1 of 35.21, ROUGE-2 of 14.92, and ROUGE-L of 25.87, followed closely by BART with a ROUGE-1 score of 33.68. T5-Base provided competitive results, outperforming T5-Small in accuracy while remaining below PEGASUS and BART. T5-Small remained the fastest, completing the task in 1 h and 8 min, but recorded the lowest accuracy.

In the Xsum dataset, BART continued to dominate with ROUGE-1 of 41.09, ROUGE-2 of 18.48, and ROUGE-L of 33.71, with PEGASUS in second place. T5-Base ranked closely behind PEGASUS, again surpassing T5-Small but not reaching the performance of the top two models. Confidence intervals for Xsum narrowed substantially, with ROUGE-L tightening from ±0.9 to ±0.4.

For the Samsum dataset, BART once again led the performance with ROUGE-1 of 51.89, ROUGE-2 of 27.60, and ROUGE-L of 43.52, followed by PEGASUS with ROUGE-1 of 44.41. T5-Base offered a noticeable improvement over T5-Small in this conversational domain, striking a balance between accuracy and efficiency. T5-Small maintained reasonable performance despite its lower parameter count.

The asterisks (*) in the table above indicate that the model’s performance is statistically significantly better than the next-best model for the same dataset and split, at a significance level of p < 0.05. Significance was determined using paired bootstrap resampling [37] with 1000 bootstrap samples. For each sample, ROUGE-1, ROUGE-2, and ROUGE-L scores were computed, and p-values were calculated as the proportion of samples where the observed ROUGE difference was ≤0. Confidence intervals were set at 95%. This method controls for test set variance and allows more robust conclusions about model ranking. All tests were implemented using the py-rouge and SciPy libraries, with code and random seeds provided in the Supplementary Materials for reproducibility.
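The paired bootstrap procedure described above can be sketched as follows. This is a standard-library illustration, not the authors' released code: the function name `paired_bootstrap`, the per-example score lists, and the percentile-based interval are assumptions, and the synthetic scores stand in for real per-summary ROUGE values.

```python
import random

def paired_bootstrap(scores_a, scores_b, n_samples=1000, seed=42):
    """Paired bootstrap over per-example scores of two systems.

    Returns (p_value, (ci_low, ci_high)) for mean(A) - mean(B), where the
    p-value is the share of resamples whose difference is <= 0.
    """
    if len(scores_a) != len(scores_b):
        raise ValueError("both systems must be scored on the same examples")
    rng = random.Random(seed)
    n = len(scores_a)
    diffs = []
    for _ in range(n_samples):
        # Resample example indices with replacement; reuse them for both systems.
        idx = [rng.randrange(n) for _ in range(n)]
        diffs.append(sum(scores_a[i] - scores_b[i] for i in idx) / n)
    p_value = sum(d <= 0 for d in diffs) / n_samples
    diffs.sort()
    ci = (diffs[int(0.025 * n_samples)], diffs[int(0.975 * n_samples) - 1])
    return p_value, ci

# Synthetic per-example scores (hypothetical, for illustration only).
_rng = random.Random(0)
system_b = [_rng.uniform(0.2, 0.6) for _ in range(200)]
system_a = [s + 0.03 for s in system_b]  # A is uniformly better by 0.03
p_value, (ci_low, ci_high) = paired_bootstrap(system_a, system_b)
print(p_value, ci_low, ci_high)
```

Drawing the same indices for both systems is what makes the test paired: each resample compares the two models on identical examples, so variation due to test-set composition affects both equally.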

4.1.2. Cross-Dataset Comparative Analysis

As mentioned previously, we conducted the evaluation in two phases: phase 1—original split (~6%) and phase 2—updated split (80/10/10).

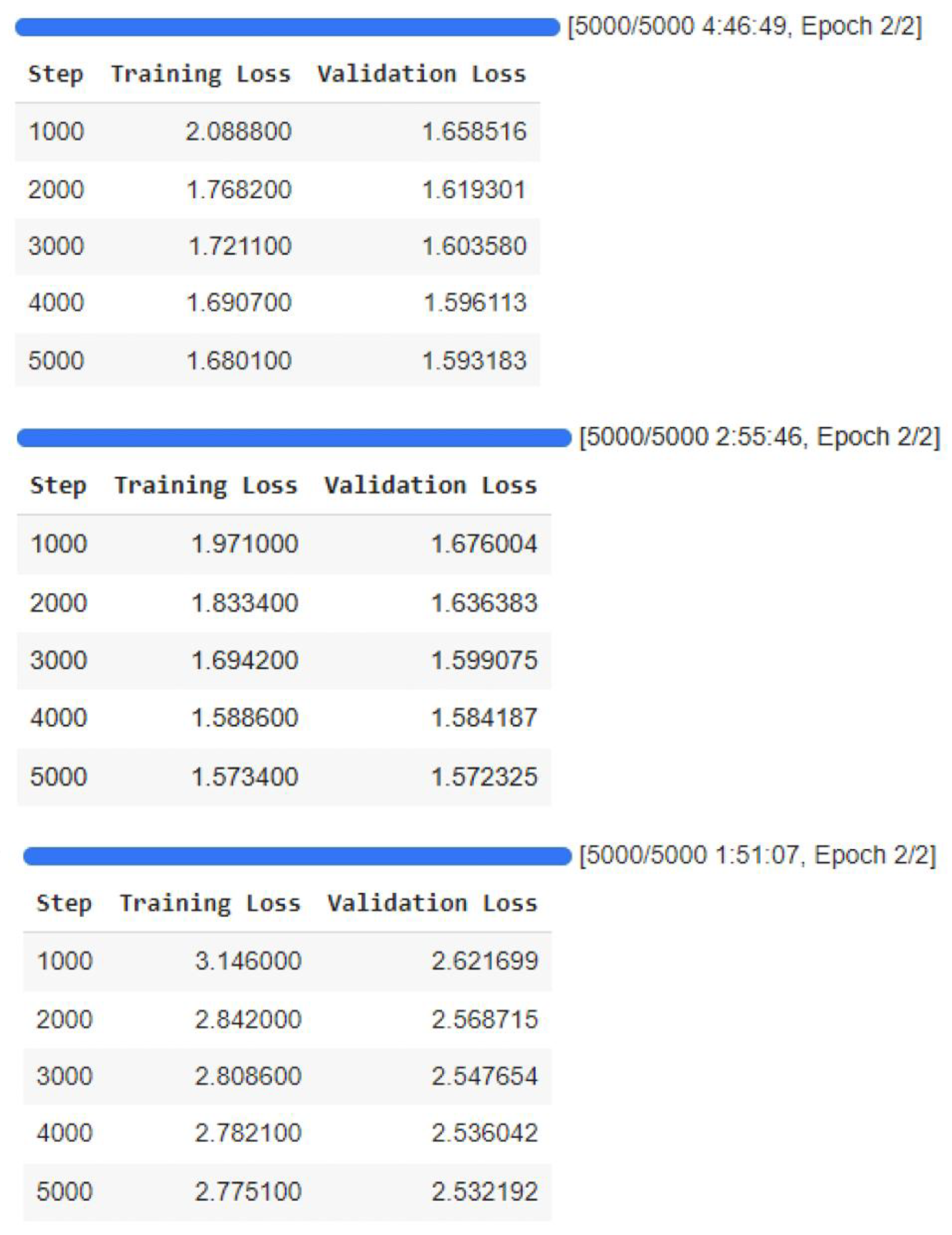

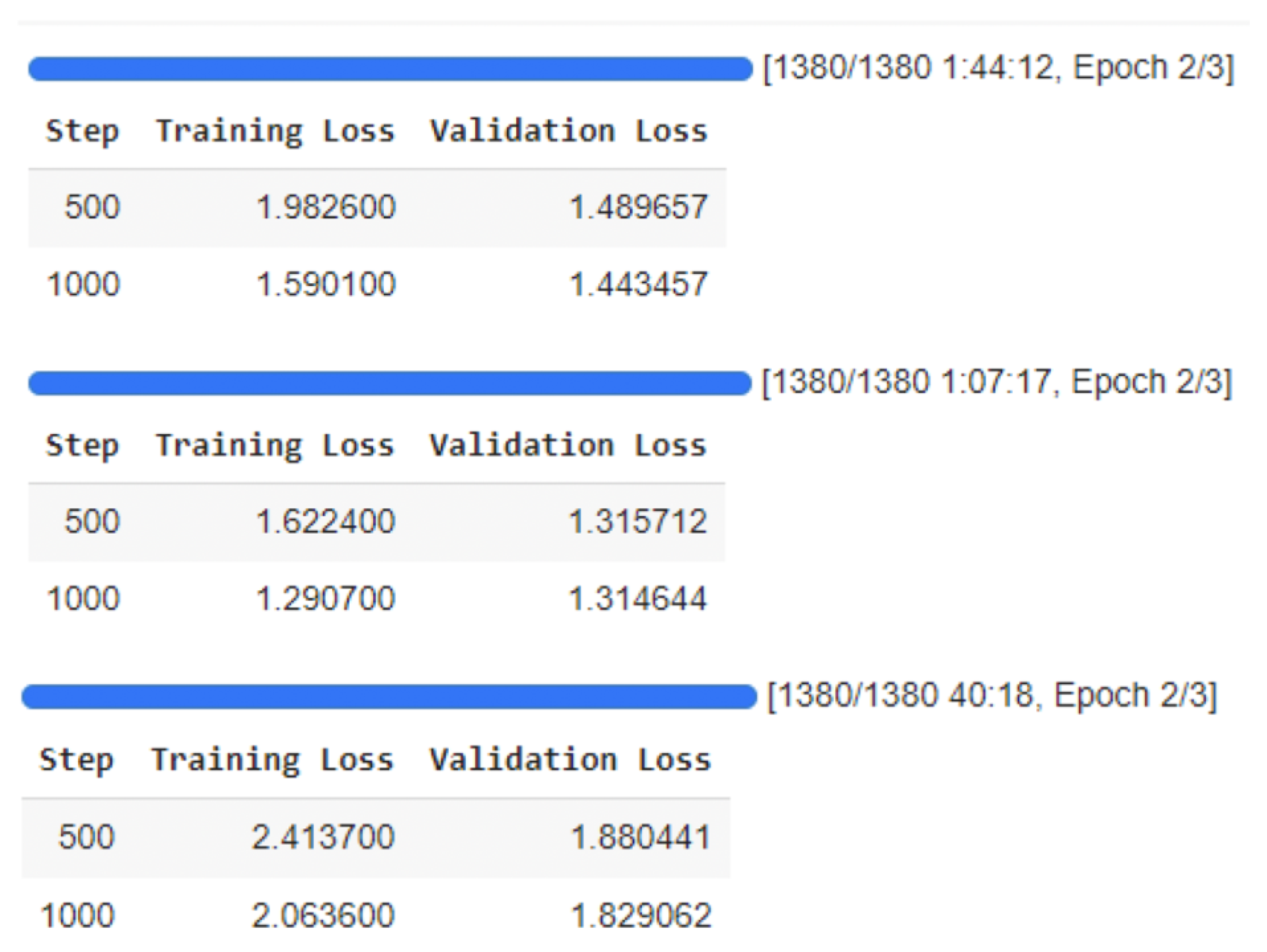

On CNN/DailyMail, PEGASUS consistently outperformed the other models in all ROUGE metrics across both phases, likely because its pre-training on news-style data allowed it to effectively extract salient information from long-form news articles. BART LARGE followed closely, offering a strong balance between accuracy and efficiency. T5-Base provided a middle ground between T5-Small and the larger models, yielding substantially better results than T5-Small while requiring longer training time. T5-Small achieved acceptable but lower scores, reflecting its smaller capacity. Training and validation loss curves (Figure 9) from both phases show no sign of overfitting, with steadily decreasing loss trends indicating proper learning and generalization. The updated 80/10/10 split produced slightly lower absolute scores with reduced variance, but model rankings remained consistent.

On Xsum, BART LARGE delivered the best results in both phases, showing strong alignment with both uni-gram and bi-gram reference content, particularly benefiting from Xsum’s abstractive nature. PEGASUS performed well but lagged slightly behind BART, while T5-Base consistently outperformed T5-Small across all datasets, narrowing the gap with BART and PEGASUS. Training curves (Figure 10) indicated smooth convergence without overfitting in both settings. The updated split changed ROUGE-L scores only marginally (for BART, from 33.90 to 33.71), and the larger validation set may have provided more stable feedback during training.

For dialogue summarization, BART LARGE again led in all metrics in both phases, confirming its robustness for conversational data. PEGASUS achieved strong results, and T5-Base showed competitive performance, trailing PEGASUS but notably stronger than T5-Small, making it a practical choice when balancing accuracy and efficiency. T5-Small maintained its lower performance but benefited from minimal training time, making it suitable for low-resource environments. Loss trends in Figure 11 show consistent learning patterns without overfitting. Under the updated split, validation loss stabilized earlier, suggesting improved early-stopping reliability.
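To illustrate why a more stable validation signal matters for early stopping, here is a minimal patience-based sketch. The `patience` and `min_delta` values and both loss curves are hypothetical; the stopping criterion actually used in the experiments is not specified in this section.

```python
def early_stopping_epoch(val_losses, patience=2, min_delta=0.0):
    """Return the epoch index at which patience-based early stopping would halt.

    Training stops once validation loss has failed to improve on the best
    value by more than min_delta for `patience` consecutive epochs.
    """
    best = float("inf")
    bad_epochs = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best - min_delta:
            best = loss
            bad_epochs = 0
        else:
            bad_epochs += 1
            if bad_epochs >= patience:
                return epoch
    return len(val_losses) - 1  # never triggered: ran to the final epoch

# Hypothetical curves: a noisy estimate from a small validation set versus
# a smoother estimate from a larger one.
noisy_curve = [2.0, 1.8, 1.9, 1.85, 1.6, 1.5]
smooth_curve = [2.0, 1.8, 1.75, 1.7, 1.6, 1.5]
print(early_stopping_epoch(noisy_curve), early_stopping_epoch(smooth_curve))
```

In this toy case the noisy curve triggers a stop before the later improvements are seen, while the smoother curve lets training continue, which is the kind of effect a larger validation set is intended to reduce.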

While the updated 80/10/10 split introduced a larger evaluation set and slightly shifted absolute metric values, relative model performance trends remained stable across datasets. This consistency reinforces the reliability of our findings across both experimental phases.

4.2. Qualitative Error Analysis

While ROUGE metrics provide a measure of lexical overlap between generated and reference summaries, they do not capture certain qualitative aspects of summary quality, such as factual accuracy, redundancy, and context preservation. To address this, we conducted a qualitative error analysis on 100 randomly sampled summaries per model (PEGASUS, BART, T5-Small, and T5-Base) per dataset, manually reviewing outputs to identify common error types.
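A small sketch makes the limitation concrete. The functions below are a simplified ROUGE-1 and ROUGE-L F1 (lowercased whitespace tokens, no stemming), written for illustration rather than taken from the py-rouge library used in the study. The sentence pair differs only in the year, yet both scores stay high.

```python
from collections import Counter

def _f1(overlap, cand_len, ref_len):
    """F1 from an overlap count and the two sequence lengths."""
    if overlap == 0:
        return 0.0
    precision, recall = overlap / cand_len, overlap / ref_len
    return 2 * precision * recall / (precision + recall)

def rouge_1(candidate, reference):
    """Unigram-overlap F1 with clipped counts."""
    cand, ref = candidate.lower().split(), reference.lower().split()
    overlap = sum((Counter(cand) & Counter(ref)).values())
    return _f1(overlap, len(cand), len(ref))

def lcs_length(a, b):
    """Length of the longest common subsequence of two token lists."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, start=1):
            curr.append(prev[j - 1] + 1 if x == y else max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]

def rouge_l(candidate, reference):
    """Longest-common-subsequence F1."""
    cand, ref = candidate.lower().split(), reference.lower().split()
    return _f1(lcs_length(cand, ref), len(cand), len(ref))

reference = "The report was released in 2021"
candidate = "The report was released in 2020"  # factual error: wrong year
print(round(rouge_1(candidate, reference), 3), round(rouge_l(candidate, reference), 3))
```

Five of six tokens match, so both scores come out at 5/6 even though the single differing token reverses the factual content of the sentence.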

4.2.1. Error Typology

We observed four recurring categories of errors across models and datasets:

Hallucination: Content in the summary that is not supported by the source.

Example: PEGASUS on Xsum added a figure and a target year that did not appear in the source.

Repetition: Redundant phrases or sentences, often caused by beam search degeneracy or repetitive source structures.

Example: BART on CNN/DailyMail occasionally repeated the lead sentence in different wording.

Factual Error: Details from the source reproduced incorrectly, such as a changed date.

Example (CNN/DailyMail, T5-Base):

Source: “The report was released in 2021”.

Gold Summary: “The report was released in 2021”.

T5-Base Output: “The report was released in 2020”. (Year changed incorrectly).

Context Loss: Omission of key background information necessary to understand the summary.

Example: T5-Small on Samsum omitted the conversational setting, leaving the dialogue’s purpose unclear.

Table 8 summarizes the relative frequency of these errors by model (percentage of reviewed summaries containing the error type).

Across the CNN/DailyMail dataset, PEGASUS achieved the lowest hallucination (5%) and factual errors (3%), with minimal context loss (4%). BART showed slightly higher hallucination at 6% and more repetition at 8%, though it maintained low factual errors (4%). T5-Small recorded higher hallucination (7%) and the highest factual errors (9%), with a notable context loss of 10%. T5-Base, however, improved on these weaknesses, reducing hallucination to 6%, repetition to 3%, factual errors to 7%, and context loss to 8%, positioning it between BART and T5-Small in terms of reliability.

For the Xsum dataset, error rates were higher overall due to its abstractive nature. PEGASUS reported 12% hallucination and only 4% factual errors, while BART performed slightly better in hallucination at 10% but still faced 5% factual errors. T5-Small struggled the most, with hallucination rising to 15% and factual errors reaching 10%, alongside the highest context loss at 12%. T5-Base again demonstrated improvements compared to T5-Small, lowering hallucination to 13%, factual errors to 8%, and context loss to 10%, though it still underperformed relative to PEGASUS and BART.

On the Samsum dataset, PEGASUS remained consistent, with hallucination at 4%, repetition at 6%, and very low factual errors (2%). BART showed higher repetition (9%) but relatively stable factual errors (3%) and context loss (4%). T5-Small reported the highest factual errors (7%) and context loss (9%), reflecting its weaker handling of conversational summarization. T5-Base improved over T5-Small by reducing hallucination to 5%, repetition to 4%, factual errors to 6%, and context loss to 7%, narrowing the performance gap with PEGASUS and BART.
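The percentages reported here are the share of reviewed summaries that contain each error type. A minimal sketch of that tally is shown below; the label names, the `error_rates` helper, and the 20 toy annotations are hypothetical and do not reproduce the study's annotation data.

```python
from collections import Counter

ERROR_TYPES = ("hallucination", "repetition", "factual_error", "context_loss")

def error_rates(annotations):
    """Percentage of reviewed summaries containing each error type.

    `annotations` holds one set of error labels per reviewed summary;
    a summary may carry several labels or none.
    """
    if not annotations:
        raise ValueError("no annotations to tally")
    counts = Counter()
    for labels in annotations:
        counts.update(set(labels) & set(ERROR_TYPES))
    n = len(annotations)
    return {e: 100.0 * counts[e] / n for e in ERROR_TYPES}

# Toy annotations for 20 summaries (made up for illustration).
annotations = (
    [{"hallucination"}] * 2
    + [{"hallucination", "repetition"}]
    + [{"context_loss"}]
    + [set()] * 16
)
rates = error_rates(annotations)
print(rates)
```

Because one summary can exhibit several error types, the per-type percentages need not sum to the share of summaries with at least one error.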

4.2.2. Illustrative Examples

Below are representative examples of each error type from different datasets.

Example 1—Hallucination (Xsum, PEGASUS)

- Source: “The city council approved a new housing policy on Tuesday”.
- Gold Summary: “City council passes new housing policy”.
- PEGASUS Output: “City council passes new housing policy to provide 5000 new homes by 2025”. (The number and year were not in the source).

Example 2—Repetition (CNN/DailyMail, BART)

- Source: Long-form news article about an election.
- Gold Summary: “The incumbent won re-election after a close race”.
- BART Output: “The incumbent won re-election after a close race. The re-election came after a tight contest”. (Same idea repeated in two sentences).

Example 3—Factual Error (CNN/DailyMail, T5-Small)

- Source: Event occurred in 2016.
- Gold Summary: “The summit took place in 2016 in Geneva”.
- T5-Small Output: “The summit took place in 2019 in Geneva”. (Incorrect date).

Example 4—Context Loss (Samsum, T5-Small)

- Source: Dialogue about planning a birthday party.
- Gold Summary: “Friends discuss plans for Anna’s surprise birthday party”.
- T5-Small Output: “They discussed a party”. (Misses who, why, and context).

Example 5—Hallucination (CNN/DailyMail, T5-Base)

- Source: “The prime minister addressed parliament on climate policy”.
- Gold Summary: “Prime minister delivers climate policy speech in parliament”.
- T5-Base Output: “Prime minister promises new climate law to cut emissions by 40%”. (Details like “new law” and “40%” were not present in the source, showing hallucination).

For detailed qualitative examples, see Appendix A.

4.2.3. Observations and Implications

PEGASUS is strong in factual retention for long-form news but is more prone to hallucination in highly abstractive tasks like Xsum. BART performs well across datasets but can be susceptible to minor redundancy in structured narratives or repetitive dialogue. T5-Small, while the most efficient, is more likely to omit contextual details or introduce factual inaccuracies, particularly with longer inputs. Results from T5-Base indicate that scaling the T5 architecture provides noticeable improvements in fluency and coherence, particularly on CNN/DailyMail and Samsum, though its factuality still trails PEGASUS. This suggests that while parameter scaling helps T5 models better capture linguistic structure and reduce omissions, factual retention still lags behind PEGASUS’s specialized pretraining for news-style data.

These findings highlight that quantitative metrics alone cannot fully capture all dimensions of summarization quality. Incorporating qualitative error analysis provides actionable insights for model selection and fine-tuning strategies.

4.3. Human Evaluation Findings

To complement the quantitative ROUGE-based evaluation, a qualitative human assessment was conducted to evaluate fluency, factual accuracy, and coherence of model outputs across the three datasets. Three annotators with backgrounds in natural language processing and computational linguistics independently reviewed a stratified random sample of 50 summaries per dataset and model. Each summary was rated on a 5-point Likert scale for each criterion. The evaluation was conducted as a blind review (annotators did not know which model produced which output). Inter-annotator agreement, measured using Cohen’s kappa, ranged between 0.71 and 0.78, indicating substantial agreement.
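Cohen’s kappa is defined for two raters, so with three annotators one plausible reading is that agreement was computed for each annotator pair, which would yield a range of values. The sketch below shows that pairwise computation under this assumption; the unweighted formulation, the annotator names, and the toy ratings are illustrative and not taken from the study.

```python
from collections import Counter
from itertools import combinations

def cohens_kappa(ratings_1, ratings_2):
    """Unweighted Cohen's kappa between two raters over the same items."""
    if len(ratings_1) != len(ratings_2) or not ratings_1:
        raise ValueError("raters must score the same non-empty set of items")
    n = len(ratings_1)
    # Observed agreement: fraction of items with identical ratings.
    p_o = sum(a == b for a, b in zip(ratings_1, ratings_2)) / n
    # Expected agreement by chance, from each rater's label frequencies.
    c1, c2 = Counter(ratings_1), Counter(ratings_2)
    p_e = sum(c1[k] * c2[k] for k in c1.keys() | c2.keys()) / (n * n)
    if p_e == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (p_o - p_e) / (1 - p_e)

# Toy 5-point Likert ratings from three hypothetical annotators.
ratings = {
    "annotator_a": [5, 4, 4, 3, 5, 4, 2, 5, 3, 4],
    "annotator_b": [5, 4, 3, 3, 5, 4, 2, 4, 3, 4],
    "annotator_c": [5, 4, 4, 3, 4, 4, 2, 5, 3, 5],
}
for (name_1, r1), (name_2, r2) in combinations(ratings.items(), 2):
    print(name_1, name_2, round(cohens_kappa(r1, r2), 2))
```

The unweighted form treats Likert scores as nominal categories, so a 4 versus 5 disagreement counts the same as 1 versus 5; a weighted kappa or a multi-rater statistic such as Fleiss’ kappa would be alternatives.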

Human evaluators found that BART LARGE produced the most fluent summaries for dialogue data, such as Samsum, whereas PEGASUS excelled in factual retention on long-form news datasets like CNN/DailyMail and Xsum. As shown in Table 9, PEGASUS achieved the highest factuality scores for news-style summaries (4.7 on CNN/DailyMail and 4.6 on Xsum), while BART LARGE consistently achieved the highest fluency ratings, particularly in conversational datasets (4.8 on Samsum). T5-Small, while efficient, trailed behind in all three criteria across datasets. T5-Base, however, demonstrated stronger performance than T5-Small, narrowing the gap with PEGASUS and BART. On CNN/DailyMail and Xsum, T5-Base showed clear improvements in factuality and coherence over T5-Small, while in Samsum it delivered more balanced results, offering better fluency and coherence but still slightly behind BART LARGE.

In terms of coherence, both PEGASUS and BART performed strongly, with PEGASUS holding a slight advantage in news-style content and BART excelling in dialogue representation. T5-Base outperformed T5-Small in coherence across all datasets, showing smoother sentence transitions and better contextual alignment. Human ratings for factuality and coherence largely mirrored ROUGE results, supporting the reliability of automated metrics in these areas. However, fluency results revealed a divergence: in CNN/DailyMail, human evaluators favored BART for smoother sentence transitions and more natural linguistic flow, even though PEGASUS recorded marginally higher ROUGE scores. T5-Base fluency fell between T5-Small and the leading models, reinforcing its role as a more capable mid-sized alternative. This discrepancy underscores the limitations of n-gram–based metrics in capturing subtler aspects of readability and sentence fluidity.

These findings highlight that while quantitative metrics provide valuable performance indicators, human evaluation uncovers nuanced strengths and weaknesses that automated scores alone cannot fully detect, particularly in balancing factual accuracy with linguistic naturalness. The inclusion of T5-Base shows that scaling model size within the same architecture can substantially improve summary quality, reducing the performance gap with larger models such as PEGASUS and BART. For full raw counts and dataset-specific breakdowns, see Appendix B.

4.4. Cross-Dataset Observations

Model performance varied notably across dataset types, reflecting differences in domain, summary style, and source text structure.

PEGASUS consistently achieved the highest ROUGE scores on CNN/DailyMail, outperforming both BART and T5 variants. This advantage can be attributed to its pretraining on the HugeNews corpus, which closely matches the style and length of CNN/DailyMail articles, and its gap-sentence generation objective, which effectively captures salient information from extended narratives. T5-Base achieved stronger results than T5-Small, showing that scaling up parameters improves its ability to capture factual and narrative consistency, though it still trailed behind PEGASUS and BART.

On Xsum, BART-Large showed superior results, particularly in ROUGE-2 and ROUGE-L. The Xsum dataset demands extreme abstraction and concise rephrasing, tasks that align well with BART’s denoising autoencoder pretraining, enabling it to better handle information compression while preserving coherence. PEGASUS remained competitive but trailed BART slightly in bigram and longest common subsequence recall, suggesting that its long-form bias may limit extreme abstraction performance. T5-Base showed moderate improvement over T5-Small, producing summaries with better abstraction and fluency, but it did not surpass BART-Large’s coherence or PEGASUS’s factual alignment.

BART-Large also led in summarizing dialogue-based content, producing outputs that human evaluators rated as more fluent and contextually coherent. The model’s bidirectional encoder and robust attention mechanisms appear better suited to handling conversational turn-taking and informal language. PEGASUS, while strong in factual retention, sometimes produced slightly more rigid summaries that lacked conversational flow. T5-Base provided a noticeable step up from T5-Small in Samsum, generating more contextually appropriate responses, but still exhibited weaknesses in maintaining conversational flow compared to BART-Large.

T5-Small consistently underperformed on all datasets in ROUGE metrics, reflecting its reduced parameter count and limited representational capacity. However, its minimal training time and resource footprint make it attractive for low-resource environments. By contrast, T5-Base demonstrated a better balance: while more computationally demanding than T5-Small, it significantly improved output quality and narrowed the gap with BART and PEGASUS. Scaling further to T5-Large could potentially close this gap even more, though at higher efficiency costs.

The introduction of T5-Base into the comparison highlights a key trend: scaling within the T5 family improves summarization quality without entirely closing the gap with BART and PEGASUS. On CNN/DailyMail, T5-Base showed stronger factual alignment than T5-Small, but PEGASUS retained its advantage due to domain-specific pretraining. On Xsum, T5-Base demonstrated more abstraction than T5-Small, though not to the same level as BART-Large. On Samsum, it achieved moderate gains, producing smoother summaries than T5-Small but still falling short in handling dialogue flow. These trends suggest that T5-Base is a practical middle ground—providing measurable performance improvements while avoiding the heavy computational costs of T5-Large.

These cross-dataset patterns underscore the importance of aligning model architecture and pretraining strategies with the specific characteristics of the target summarization task. While PEGASUS demonstrates a clear advantage in long-form, fact-heavy domains, BART excels in abstractive and conversational contexts, T5-Small offers a lightweight option for resource-constrained settings, and T5-Base provides a more robust alternative that improves output quality without the prohibitive costs of much larger models.

4.5. Discussion

4.5.1. Discussion of Key Insights

The comparative evaluation of PEGASUS, BART, and T5 variants (Small and Base) across three distinct datasets, CNN/DailyMail, Xsum, and Samsum, offers several important takeaways regarding their strengths, weaknesses, and potential applicability in various real-world contexts. For long-form news summarization (CNN/DailyMail), PEGASUS consistently outperformed other models, largely due to its HugeNews pretraining and gap-sentence generation objective, which make it well-suited for capturing salient information in lengthy documents. In contrast, for short, highly abstractive summaries such as those in the Xsum dataset, BART achieved the strongest performance, leveraging its denoising autoencoder pretraining to produce concise yet coherent outputs. On conversational datasets like Samsum, BART stood out for its fluency and natural-sounding summaries, making it a favorable choice for dialogue-heavy content.

However, the results also highlight the trade-off between model size and performance. While T5-Small underperformed relative to PEGASUS and BART in most settings, it is far more computationally efficient, making it a viable choice for resource-constrained environments such as edge devices or real-time applications where inference speed and memory footprint are critical. When scaled to T5-Base, the model showed a notable improvement on both the CNN/DailyMail and Xsum datasets, narrowing the gap with BART while still maintaining lower computational requirements than PEGASUS. This suggests that model scaling within the T5 family can enhance summarization quality without a proportional increase in resource demands.

From a generalizability perspective, a key limitation of this study lies in its exclusive use of English-language datasets. Prior research has shown that transformer-based models, including PEGASUS and BART, can adapt to multilingual and low-resource contexts, but performance varies widely depending on training data availability and linguistic complexity. Extending this work to languages such as Arabic, Chinese, or Swahili could provide valuable insights into model robustness across typologically diverse languages. Such exploration is especially relevant given the growing global demand for summarization tools that can handle multilingual content. Future research should also assess whether pretraining on multilingual corpora or leveraging cross-lingual transfer techniques can improve summarization quality in low-resource languages.

Finally, these findings carry practical implications for real-world deployment. For high-stakes applications such as news aggregation, academic literature summarization, or legal document processing, where factual accuracy is paramount, PEGASUS is the strongest candidate. In contrast, for conversational AI assistants or customer service summarization, where fluency and naturalness are more critical, BART may be preferable. Meanwhile, T5-Small is an attractive option in scenarios constrained by hardware resources, such as on-device summarization for mobile applications, even if it comes at the cost of reduced accuracy.

The results emphasize that model selection should be task-driven, balancing accuracy, linguistic style, and computational cost. The study’s insights provide a foundation for tailoring transformer-based summarization systems to specific domains, languages, and operational constraints, while highlighting avenues for expanding research into multilingual and resource-limited settings.

4.5.2. Generalizability to Multilingual and Low-Resource Settings

A limitation of this study is its reliance on English-only datasets, which restricts conclusions about performance in multilingual or low-resource contexts. Prior research suggests that transformer models can achieve strong cross-lingual performance when pre-trained on multilingual corpora (e.g., mBART, mT5), but results vary significantly depending on the language family, data availability, and tokenization strategy. Extending these experiments to Arabic, Chinese, and other underrepresented languages could uncover architectural and pretraining choices that generalize beyond English.

Future work should also investigate domain adaptation strategies for low-resource settings, including the following:

Multilingual fine-tuning to leverage shared semantic structures.

Cross-lingual transfer learning, where models trained in high-resource languages adapt to low-resource ones.

Synthetic data generation for augmenting scarce training corpora.

4.5.3. Practical Implications for Practitioners

From a deployment perspective, the results highlight clear trade-offs between computational efficiency and summarization quality.

T5-Small offers a lightweight, resource-efficient option for edge devices or applications with constrained memory and processing power, albeit with lower accuracy. In contrast, T5-Base provides a stronger balance between accuracy and efficiency, making it a practical middle ground for organizations that cannot deploy PEGASUS but still require higher quality than T5-Small can offer.

PEGASUS is the recommended choice when factual retention is paramount, such as in news aggregation, legal case summarization, or scientific literature review, provided sufficient computational resources are available.

BART is best suited for human-facing, conversational, or customer-support contexts where fluency and natural language flow are critical.

In real-world scenarios, the optimal model choice depends not only on accuracy but also on latency requirements, resource constraints, and the linguistic characteristics of the target domain.
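As a rough illustration of this kind of constraint-driven choice, the sketch below picks the highest-scoring model whose training time fits a given budget. It uses the Phase 1 CNN/DailyMail ROUGE-1 scores and training times quoted earlier in the results (the T5-Base values are approximate); the `pick_model` helper and the budget values are hypothetical, and training time stands in for the fuller set of costs (latency, memory) a real deployment would weigh.

```python
# Phase 1 CNN/DailyMail figures as quoted in the results section.
CNN_DM_PHASE1 = {
    "PEGASUS": {"rouge1": 35.49, "train_minutes": 5 * 60 + 15},
    "BART": {"rouge1": 33.91, "train_minutes": 3 * 60 + 35},
    "T5-Base": {"rouge1": 32.5, "train_minutes": 2 * 60 + 20},  # approximate
    "T5-Small": {"rouge1": 30.74, "train_minutes": 1 * 60 + 5},
}

def pick_model(results, max_train_minutes):
    """Return the model with the best ROUGE-1 among those within the budget.

    Returns None when no model fits the training-time budget.
    """
    feasible = {
        name: stats
        for name, stats in results.items()
        if stats["train_minutes"] <= max_train_minutes
    }
    if not feasible:
        return None
    return max(feasible, key=lambda name: feasible[name]["rouge1"])

for budget in (60, 120, 240, 400):  # hypothetical budgets in minutes
    print(budget, pick_model(CNN_DM_PHASE1, budget))
```

The same selection would need dataset-specific numbers in practice, since the ranking differs on Xsum and Samsum, where BART leads.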

5. Conclusions

Implementing and evaluating the transformer-based models on three different datasets showed that the nature, size, and complexity of a dataset play a decisive role in determining model performance. For example, PEGASUS performed best on the CNN/DailyMail dataset, which contains long, detail-rich documents. In contrast, BART performed best on the Xsum dataset, whose very short summaries focus on the essence of each article, and on the Samsum dataset, which is characterized by short, simple dialogues.

By contrast, the T5 models performed worst. Model size and pre-training both affect performance, and T5 was designed as a general multi-task model rather than specifically for summarization. In addition to T5-Small, we also experimented with T5-Base to observe the impact of model scaling. While T5-Base achieved better ROUGE scores than T5-Small, the improvement was modest and came at the cost of significantly higher training and inference time as well as memory consumption. This indicates that increasing model size within the T5 family can enhance summarization quality to some extent, but the trade-offs in computational efficiency may outweigh the gains, especially in resource-constrained environments.

All of these factors could explain T5’s relatively poor performance compared to the other models. The available computational resources and the time required for training must also be taken into account, as they strongly constrain the choice of model. For example, with limited resources and a short training time, one can expect a usable model, but not one with the highest accuracy.

However, if the goal is a strong, highly accurate model, substantial computational resources and sufficient training time will be required.

In addition to these comparative insights, our study extends prior multi-model summarization comparisons by integrating not only accuracy metrics (ROUGE) but also computational efficiency (training/inference time, memory usage) and qualitative human evaluation across distinct domains (news, extreme summarization, dialogue). This multi-perspective evaluation framework, combined with transparent dataset filtering protocols and domain-specific error analysis, provides a reproducible and practical guide for model selection in real-world deployments, an aspect largely missing in earlier works.

The findings demonstrate that no single model is universally optimal: PEGASUS is most effective for long-form, information-dense text where factual accuracy is critical, provided sufficient resources are available; BART offers a strong balance between fluency, coherence, and resource efficiency, making it ideal for shorter or more conversational contexts; and T5-Small, despite its lower scores, remains a viable choice in low-resource or edge-device environments where speed and efficiency outweigh maximum accuracy. Furthermore, the alignment between human evaluation and ROUGE scores reinforces the validity of these conclusions, while observed discrepancies highlight the importance of combining quantitative and qualitative assessments.

It is also important to acknowledge the limitation that all experiments were conducted on English datasets. Future research should explore multilingual and low-resource scenarios such as Arabic, Chinese, or other underrepresented languages to validate the generalizability of these findings. Such extensions will provide more comprehensive guidelines for deploying summarization models in diverse linguistic and computational environments.

Therefore, the process of selecting the appropriate summarization model should be guided not only by dataset characteristics and computational constraints but also by the target language, domain-specific needs, and the acceptable trade-off between accuracy, fluency, and efficiency. This integrated approach ensures that summarization systems are both effective and practical in real-world applications.