1. Introduction

The Internet has steadily grown in popularity since its inception, evolving through various stages until reaching the widely adopted Web2.0 era, while Web3.0 technologies continue to emerge and evolve [1]. Each evolutionary step has expanded the digital ecosystem and, with it, the attack surface that cybercriminals seek to exploit. As online services have become more integral to daily life, the prevalence and sophistication of cyberattacks, particularly malware, have continued to rise [2]. Malware operations today benefit from low entry barriers, widespread distribution channels, and increasingly advanced evasion techniques [3], underscoring the need for robust, scalable detection mechanisms [4].

To address these evolving threats, modern cybersecurity research increasingly relies on machine learning (ML) and deep learning (DL) to complement traditional static and dynamic analysis techniques [5]. While static analysis is efficient for known malware, it struggles against obfuscation; dynamic analysis can detect new variants but is resource-intensive and difficult to scale [6]. Visual malware detection offers an alternative by converting malware binaries into grayscale or RGB images (Figure 1) that can be processed by DL architectures, such as convolutional neural networks (CNNs), enabling them to learn texture and structural patterns resilient to certain obfuscation techniques. Nataraj et al. [6] first established this transformation process, which has since become foundational to DL-based visual malware analysis.
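As a rough illustration of the byte-to-pixel mapping described above, the following Python sketch lays a byte stream out as rows of grayscale pixels. The function name `bytes_to_grayscale`, the fixed default width of 256, and the zero-padding of the last row are assumptions made for this example, not details taken from [6].

```python
import math


def bytes_to_grayscale(data: bytes, width: int = 256) -> list[list[int]]:
    """Map each byte (0-255) to one grayscale pixel, row by row.

    Assumption: a fixed row width and zero-padding of the final row.
    """
    if width <= 0:
        raise ValueError("width must be positive")
    height = math.ceil(len(data) / width)
    # Pad with zero bytes so the last row is complete.
    padded = data + bytes(height * width - len(data))
    return [list(padded[r * width:(r + 1) * width]) for r in range(height)]


# Ten bytes laid out four pixels per row give three rows, the last one padded.
image = bytes_to_grayscale(bytes(range(10)), width=4)
```

Each inner list is one image row, so the result can be handed to any imaging library that accepts 8-bit grayscale data.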

Building on the techniques of representing malware as images, DL has become a powerful approach for learning discriminative patterns from high-dimensional data. Numerous architectures, including deep neural networks (DNNs), CNNs, and recurrent neural networks (RNNs), have been successfully applied to malware detection tasks [7]. Although these models differ in structure and capabilities, they share a critical dependency: their performance is directly tied to the quality of the dataset used for training. Poor-quality images, inconsistent preprocessing, noise, distortions, or insufficient diversity can degrade learning, reduce generalizability, and undermine evaluation results. Consequently, curating high-quality, consistent, and up-to-date visual malware datasets is essential for advancing detection research.

This challenge brings us to the field of image quality assessment (IQA). IQA provides objective mechanisms to quantify image clarity, distortion, noise, and naturalness, factors that directly influence the effectiveness of DL models trained on visual representations. IQA has been widely applied in multimedia systems and content distribution pipelines, where image quality strongly affects performance and user experience [8]. In this domain, three types of IQA algorithms are commonly used:

Full-reference algorithms: Compare a distorted image with an original pristine reference.

Reduced-reference algorithms: Compare extracted features from pristine and distorted images to estimate quality.

No-reference algorithms: Operate without any reference image, evaluating quality solely from the provided pixels or bitstream. These methods are among the most challenging due to the absence of ground truth [9].

Despite the increasing reliance on visual malware datasets, no standardized methodology currently exists to assess their image quality or guide consistent dataset creation. High-entropy malware images differ significantly from natural images, making it unclear which IQA algorithms are reliable for this domain. This gap motivates the need to investigate IQA, particularly no-reference approaches, as a means to evaluate, compare, and ultimately improve visual malware datasets used in DL-based detection systems.

Building upon these research directions, this work proposes a framework to standardize the creation of new visual malware datasets and evaluate current ones. The main contributions of this paper are summarized as follows:

Bridging the gap between IQA and visual malware detection: We integrate IQA techniques with visual malware detection, enhancing the quality and robustness of malware image datasets against obfuscation and zero-day threats.

Investigation of no-reference IQA (NR-IQA) algorithms: We apply NR-IQA algorithms to evaluate the quality of visual malware datasets without needing a pristine reference image. This helps measure image quality and detect issues like blur and noise, crucial for maintaining dataset integrity.

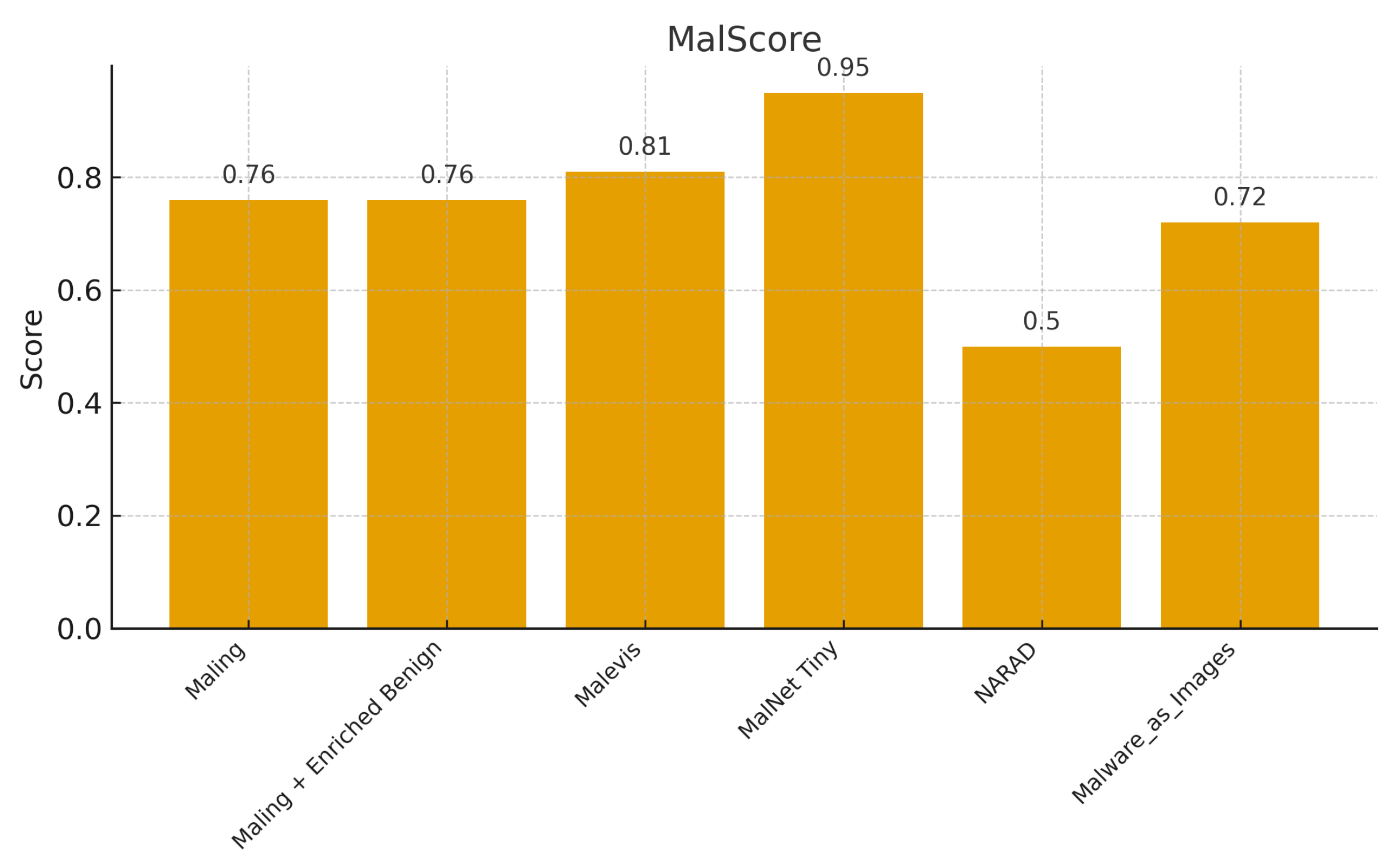

MalScore formula and framework: We propose the MalScore framework, which provides a standardized method to evaluate and compare visual malware datasets using metrics such as BRISQUE score, labeling clarity, dataset size, content diversity, data recency, and preprocessing needs. This formula helps identify areas for improvement and guides the creation of high-quality, diverse, and up-to-date datasets, improving DL model performance in malware detection.

The remaining sections of this paper are outlined as follows:

Section 2 discusses the current state of research regarding visual malware detection and IQA.

Section 3 summarizes the experiment methodology and provides a discussion.

Section 4 describes the proposed framework to evaluate existing datasets and guide the creation of new ones.

Section 5 describes the limitations faced and offers direction for future work.

Section 6 presents concluding remarks.

2. Related Work

There has been considerable attention in the literature on visual malware detection and IQA. However, further research is needed to explore the application of IQA in enhancing the development of visual malware detection.

Nataraj et al. [6] streamlined the process of converting a malicious program's bit stream into a visual representation, demonstrating that detection based on grayscale images and their textures was resilient to obfuscation techniques. Using GIST texture features with a k-nearest-neighbor classifier, they achieved a classification accuracy of 98%. Building on this, the next logical step was to investigate using RGB images instead of grayscale. He and Kim [10] explored this by mapping every three consecutive bytes to the red, green, and blue channels, respectively. Researchers soon adopted a DL approach to the visual malware classification problem, paving the way for neural network methodologies. Aslan and Yilma [7] discussed various DL architectures such as CNNs and hybrid deep neural networks. Additionally, other practical DL approaches, such as autoencoders, have been showcased in the work by Xing et al. [11].
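The three-bytes-per-pixel idea attributed to He and Kim [10] above can be sketched in a few lines of Python. The function name `bytes_to_rgb_pixels` and the zero-padding of an incomplete final triplet are assumptions made for this illustration; the cited work may handle trailing bytes differently.

```python
def bytes_to_rgb_pixels(data: bytes) -> list[tuple[int, int, int]]:
    """Group consecutive bytes into (red, green, blue) triplets.

    Assumption: an incomplete final triplet is padded with zero bytes.
    """
    remainder = len(data) % 3
    if remainder:
        data += bytes(3 - remainder)
    return [(data[i], data[i + 1], data[i + 2]) for i in range(0, len(data), 3)]


# Four input bytes yield two pixels; the second is padded with zeros.
pixels = bytes_to_rgb_pixels(b"\x10\x20\x30\x40")
```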

The issue of limited visual malware datasets is frequently highlighted in many studies. Datasets often lack the desired variety or suffer from class imbalance. Additionally, some organizations are hesitant to distribute malware samples due to their sensitive nature, further complicating dataset curation. The use of conditional generative adversarial networks (cGANs) to generate synthetic malware samples has been examined in several studies [12,13]. These studies demonstrated that cGANs are effective in mitigating class imbalance issues and can produce a diverse range of malware samples, including synthetically obfuscated malware images.

Malware obfuscation techniques continue to challenge researchers, regardless of the detection method used. Although Nataraj et al. [6] showed resistance to obfuscation techniques using an ML method, further research is needed. Notably, Phan et al. [14] demonstrated that reinforcement learning (RL) paired with GANs can generate samples that effectively bypass malware classifiers.

The cited literature demonstrates that dataset curation is a complex task requiring a wide variety of malware samples. A diverse range of malware image samples can make a model more resistant to adversarial attacks and better at detecting unseen samples and zero-day threats. Currently, there is no standard model for dataset creation. Studies such as [6,10,15] provide some guidance on the methodology of converting malicious code bit streams into grayscale and RGB image datasets. However, while these processes are explained, more detail is needed regarding image resolution and quality standards.

To address the dataset quality issue, image-specific concepts must be considered. Ying et al. [9] differentiated between aesthetic quality and perceptual quality, noting that an image may be visually pleasing but not necessarily of excellent picture quality. Additionally, images may have the same level of degradation yet differ in perceived quality. These challenges fall within the scope of the NR-IQA research problem, leading to the development of specific algorithms optimized for various tasks. Zhai and Min [16] introduced several NR-IQA algorithms and discussed human opinion scoring and the purpose behind these algorithms. For instance, they introduced BRISQUE as a spatial quality evaluator, which is considered a general-purpose IQA measure. Shahid et al. [8] generalized common issues affecting photos, such as blurring, blockiness, ringing, and noise, which pixel-based NR-IQA algorithms measure to produce a score. Additionally, NR-IQA algorithms can be tuned to detect the “naturalness” of an image.

Unlike existing studies that primarily focus on either the technical aspects of malware detection or the quality assessment of general images, our paper bridges these two fields by applying IQA techniques specifically to the domain of visual malware detection. We propose the MalScore framework, which uses no-reference IQA algorithms to evaluate and enhance the quality of malware image datasets, thereby improving the robustness and effectiveness of malware detection models.

3. Experimental Methodology and Discussion

This section outlines the experimental methodology and discusses the implementation and evaluation of our proposed techniques for enhancing visual malware detection.

3.1. Benchmark Datasets

Experiments were performed on five datasets: Malimg, Malevis, MalNet, NARAD, and Malware as Images.

3.1.1. Malimg Dataset [17]

It contains 9339 visual malware samples spanning 25 families. For CNN training and blur assessment, the dataset was enriched with an additional 981 benign grayscale samples obtained from [18]. During framework calculations, the original Malimg dataset was used.

3.1.2. Malevis Dataset [19]

It contains 9100 training samples with an additional 5126 validation RGB samples, all corresponding to 25 malware families.

3.1.3. MalNet Dataset [20]

It is a massive, 80 GB database of Android malware image representations and graph data. It contains 1,262,024 images spanning 696 families and 47 different types of malware. To keep the experiments manageable, the MalNet-Tiny dataset was used, which contains 61,201 training images, 8742 validation images, and 17,486 test images spanning 43 types of malware.

3.1.4. The NARAD Dataset [21]

It contains 48,240 binary visualization images generated using fuzzy logic. The “Malicious images” subfolder was used containing 11,919 files.

3.1.5. Malware as Images [22]

This dataset consists of image conversions of portable executable (PE) files from both malicious and benign software. The malicious files were taken from the Zoo [23] malware repository, and the benign files were chosen from PC Magazine’s The Best Free Software of 2020. Each malicious folder contains 75 files processed with 8 different methods. Each benign folder contains 61 files, also processed with 8 different methods. All original dataset images included X- and Y-axis borders that are unsuitable for CNN input, so the images were manually cropped to remove them. This manual cropping could lead to minuscule amounts of data loss. To make the overall testing process faster, the Lanczos_1200 processed images were taken and downsampled by 25% using the Lanczos interpolation algorithm.

Table 1 summarizes these known and miscellaneous datasets.

3.2. Dataset Degradation

Various levels of Gaussian blur were applied to the original datasets. This blur was intentionally introduced to degrade neural network classification and IQA performance. Because the blur was applied at predefined percentages, statistical insight into the sensitivity of each IQA algorithm could be gained, and a correlation between IQA scores and neural network performance could be investigated. After the blur application, three degraded versions of each dataset were derived: 3% blur, 10% blur, and 25% blur. In summary, four versions of each dataset were investigated, including the unmodified original with 0% blur.

Figure 2 illustrates the varying levels of Gaussian blur applied to a sample from the Malevis set.

To provide full reproducibility and clarify the underlying implementation, the Gaussian blur used in this study was applied using a parameterization that scales with image resolution. Specifically, for each image of width $W$ and height $H$, the standard deviation $\sigma$ of the Gaussian kernel was computed as

$$\sigma = \alpha \sqrt{W^2 + H^2},$$

where $\alpha \in \{0.03, 0.10, 0.25\}$ corresponds to the 3%, 10%, and 25% blur levels, respectively. This formulation ensures that blur severity is proportional to overall image size and therefore consistent across datasets with different dimensions. The Gaussian kernel applied was

$$G(x, y) = \frac{1}{2\pi\sigma^2} \exp\left(-\frac{x^2 + y^2}{2\sigma^2}\right),$$

which is a widely used low-pass filter in image processing and IQA research.

The following pseudocode summarizes the blur-generation procedure used throughout all experiments:

procedure APPLY_GAUSSIAN_BLUR(image, alpha):
    W, H ← image dimensions
    sigma ← alpha * sqrt(W^2 + H^2)
    blurred ← GaussianFilter(image, sigma)
    return blurred
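For readers who want to trace the procedure step by step, the pseudocode can be approximated with the dependency-free Python sketch below. It is an illustration rather than the code used in the experiments: the helper names, the kernel truncation at three standard deviations, and the edge-replication border handling are all assumptions made for this example.

```python
import math


def blur_sigma(width: int, height: int, alpha: float) -> float:
    """sigma = alpha * sqrt(W^2 + H^2), as in the pseudocode."""
    return alpha * math.sqrt(width ** 2 + height ** 2)


def gaussian_kernel_1d(sigma: float, truncate: float = 3.0) -> list[float]:
    """Normalized 1D Gaussian weights (assumption: truncated at 3 sigma)."""
    radius = max(1, int(truncate * sigma + 0.5))
    weights = [math.exp(-(x * x) / (2.0 * sigma * sigma))
               for x in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def _convolve_rows(image, kernel):
    """Convolve every row with the kernel, replicating edge pixels."""
    radius = len(kernel) // 2
    out = []
    for row in image:
        last = len(row) - 1
        out.append([
            sum(k * row[min(max(x + i - radius, 0), last)]
                for i, k in enumerate(kernel))
            for x in range(len(row))
        ])
    return out


def apply_gaussian_blur(image, alpha):
    """Separable Gaussian blur of a 2D list of pixel values."""
    height, width = len(image), len(image[0])
    sigma = blur_sigma(width, height, alpha)
    if sigma <= 0:
        return [list(row) for row in image]  # 0% blur: return a copy
    kernel = gaussian_kernel_1d(sigma)
    # Blur along rows, then transpose and blur along the former columns.
    horizontal = _convolve_rows(image, kernel)
    transposed = [list(col) for col in zip(*horizontal)]
    vertical = _convolve_rows(transposed, kernel)
    return [list(col) for col in zip(*vertical)]
```

Because the 2D Gaussian is separable, two 1D passes give the same result as one 2D convolution at lower cost, which is why the sketch blurs rows and columns in turn.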

It is important to note that Gaussian blur is not intended to mimic any specific real-world degradation mechanism in malware image pipelines. Instead, it serves as a controlled, monotonic, and mathematically well-defined distortion that allows for systematic evaluation of IQA sensitivity and CNN robustness. Although real degradation in visual malware datasets typically arises from processes such as downsampling, interpolation, compression, or preprocessing inconsistencies rather than optical blur, these artifacts share low-pass characteristics that influence texture and entropy in comparable ways.

3.3. IQA Procedure

The IQA model experiments were facilitated by utilizing calibrated and configured algorithms. As shown in Algorithm 1, six NR-IQA algorithms were run on each original and degraded dataset:

BRISQUE [24]: BRISQUE evaluates the spatial quality and does not take into account ringing, blur, or blocking. Rather, it analyzes scene statistics to quantify a loss of “naturalness”.

CLIPIQA, CLIPIQA+, CLIPIQA+_rn50_512 [25]: CLIP operates under the notion that quantification of issues such as blur and noise does not directly correlate with human preferences. CLIP aims to assess the quality perception and abstract perception of an image. The different versions of the implementation operate on different underlying infrastructures.

CNNIQA [26]: CNNIQA takes image patches as input and works in the spatial domain without traditional feature extraction methods. It utilizes one convolutional layer with max and min pooling preceding two fully connected layers with an output node. It is additionally capable of producing local quality estimations.

DBCNN [27]: The DBCNN implementation consists of two CNNs, each trained for a different distortion scenario. The model has been fine-tuned on human-evaluated image databases.

Each of the six algorithms evaluates image quality differently. BRISQUE is the only one for which lower scores indicate better quality; for all other IQA algorithms, higher scores indicate better quality.

Algorithm 1. IQA model experiments.

    procedure RunIQAAlgorithms(datasets)
        for dataset in datasets do
            originalDataset ← dataset.original
            degradedDataset ← dataset.degraded
            scoreBRISQUEOriginal ← RunBRISQUE(originalDataset)
            scoreBRISQUEDegraded ← RunBRISQUE(degradedDataset)
            scoreCLIPIQAOriginal ← RunCLIPIQA(originalDataset, 'CLIPIQA')
            scoreCLIPIQADegraded ← RunCLIPIQA(degradedDataset, 'CLIPIQA')
            scoreCLIPIQAPlusOriginal ← RunCLIPIQA(originalDataset, 'CLIPIQA+')
            scoreCLIPIQAPlusDegraded ← RunCLIPIQA(degradedDataset, 'CLIPIQA+')
            scoreCLIPIQAPlusRN50Original ← RunCLIPIQA(originalDataset, 'CLIPIQA+_rn50_512')
            scoreCLIPIQAPlusRN50Degraded ← RunCLIPIQA(degradedDataset, 'CLIPIQA+_rn50_512')
            scoreCNNIQAOriginal ← RunCNNIQA(originalDataset)
            scoreCNNIQADegraded ← RunCNNIQA(degradedDataset)
            scoreDBCNNOriginal ← RunDBCNN(originalDataset)
            scoreDBCNNDegraded ← RunDBCNN(degradedDataset)
        end for
    end procedure

    function RunBRISQUE(dataset)
        /* BRISQUE evaluates the spatial quality */
        score ← ExtractSceneStatistics(dataset)
        naturalnessScore ← QuantifyLossOfNaturalness(score)
        return naturalnessScore
    end function

    function RunCLIPIQA(dataset, version)
        /* CLIPIQA evaluates quality perception */
        if version == 'CLIPIQA' then
            score ← AssessQualityPerception(dataset)
        else if version == 'CLIPIQA+' then
            score ← AssessQualityPerceptionPlus(dataset)
        else if version == 'CLIPIQA+_rn50_512' then
            score ← AssessQualityPerceptionRN50(dataset)
        end if
        return score
    end function

    function RunCNNIQA(dataset)
        /* CNNIQA works in the spatial domain */
        patches ← ExtractImagePatches(dataset)
        convolutionLayer ← ApplyConvolutionLayer(patches)
        pooledFeatures ← ApplyMaxMinPooling(convolutionLayer)
        flattenedFeatures ← Flatten(pooledFeatures)
        outputScore ← FullyConnectedLayers(flattenedFeatures)
        return outputScore
    end function

    function RunDBCNN(dataset)
        /* DBCNN uses two CNNs trained for different distortion scenarios */
        cnn1 ← LoadTrainedCNN('distortion1')
        cnn2 ← LoadTrainedCNN('distortion2')
        score1 ← cnn1.evaluate(dataset)
        score2 ← cnn2.evaluate(dataset)
        combinedScore ← CombineScores(score1, score2)
        return combinedScore
    end function
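One way to read the outputs of Algorithm 1 is to check, per metric, whether the score worsens at every blur step once the metric's direction is taken into account. The Python sketch below does this; the function name `tracks_degradation` and every numeric score in it are hypothetical placeholders for illustration, not results from this study.

```python
def tracks_degradation(scores_by_blur: list[float], lower_is_better: bool) -> bool:
    """Return True if quality, as read by the metric, worsens at every step.

    scores_by_blur must be ordered by increasing blur (e.g., 0%, 3%, 10%, 25%).
    """
    # Flip higher-is-better metrics so that "worse" always means "larger".
    signed = scores_by_blur if lower_is_better else [-s for s in scores_by_blur]
    return all(a < b for a, b in zip(signed, signed[1:]))


# Hypothetical scores for illustration only.
brisque_like = [20.0, 35.0, 60.0, 90.0]      # lower is better, rises with blur
clipiqa_like = [0.40, 0.42, 0.45, 0.55]      # higher is better, rises with blur

brisque_tracks = tracks_degradation(brisque_like, lower_is_better=True)
clipiqa_tracks = tracks_degradation(clipiqa_like, lower_is_better=False)
```

A metric that fails this check is rewarding, or at least not penalizing, the added blur, which is the situation a dataset curator would want to detect before relying on it.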

3.4. CNN Training and Experimental Configuration

To correlate IQA algorithm scores with neural network performance, a simple ResNet-50 [28] CNN was implemented using TensorFlow. The objective was not to achieve high accuracy but rather to obtain a metric that could be correlated with blur levels and IQA scores. A model was trained on each dataset version for ten epochs as a proof of concept, using only the Malevis set. The unmodified original dataset’s folders were combined and then split using scikit-learn’s train_test_split. For the datasets with applied blur, the folders were combined to form the training set, while the val folder within the original Malevis set was used as the test set. Model validation accuracy was recorded and charted.

To ensure full reproducibility and satisfy best-practice standards in DL experimentation, we report the complete training configuration used in all CNN experiments. All models were trained on a workstation equipped with an NVIDIA RTX 4090 GPU (24 GB VRAM), an Intel Core i9–12900K CPU, and 64 GB RAM, running Ubuntu 22.04 LTS, CUDA 12.1, and cuDNN 8.9. The implementation was conducted in TensorFlow 2.14 with Keras high-level APIs and supporting libraries including NumPy 1.26, SciPy 1.11, scikit-learn 1.3 for dataset partitioning, and OpenCV 4.8 for blur operations. All images were resized to a unified resolution of 224 × 224 and normalized to the

floating-point range. A batch size of 32 was used for all runs. Optimization was performed using the Adam optimizer with a learning rate of

,

,

, and no weight decay; all other hyperparameters remained at TensorFlow defaults. Training proceeded for 10 epochs, consistent with the study’s proof-of-concept objective. No data augmentation was applied beyond the Gaussian blur described in

Section 3.2.

The ResNet-50 backbone was initialized with ImageNet-pretrained weights, and only the final classification layers were fine-tuned; the remaining layers were frozen to preserve their pretrained representations. The train/validation split used for the Malevis set followed scikit-learn’s train_test_split with an 80/20 ratio and a fixed random_state=42 to ensure deterministic reproducibility. During evaluation, accuracy was computed on the unaltered Malevis validation partition. All code used in these experiments is publicly available in the referenced GitHub repository [29], ensuring that all architectural, preprocessing, and parameter choices can be fully reproduced.

3.5. Results and Discussion

Each IQA algorithm generated a numerical score to evaluate the image quality. Surprisingly, most algorithms did not exhibit a linear correlation between the IQA scores and increasing blur levels. In some instances, such as with CLIPIQA+_rn50_512, images with 25% blur consistently received higher average scores than the unblurred images. This outcome suggests that the algorithm does not penalize blur in this domain and does not perform well with “unnatural” images. Similarly, other algorithms also proved unsuitable for dataset curation for analogous reasons. The field of IQA primarily focuses on images depicting “real” objects with artifacts, which may explain these results.

One hypothesis for the higher IQA scores for blurred images is that the blur reduced pixel-to-pixel variations, leading to a smoother appearance. Malicious software images typically contain high entropy, as each pixel corresponds directly to the bit stream. In contrast, “natural” images usually exhibit lower entropy, with fewer drastic pixel changes in the absence of artifacts. The applied blur likely smoothed the inherent noise and entropy of the dataset, resulting in higher “naturalness” scores from the IQA algorithms. Another hypothesis is that the blur simplified the dataset, making it easier for the algorithms to process. This simplification might have made the images appear more aesthetically pleasing to a human viewer.
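The smoothing hypothesis can be illustrated on synthetic data. The Python sketch below generates a pseudo-random byte sequence as a stand-in for a high-entropy malware image row, smooths it, and compares two simple statistics before and after. The three-tap box filter (used here in place of a Gaussian), the helper names, and the synthetic data are all assumptions made for this example; no dataset from this study is involved.

```python
import math
import random
from collections import Counter


def shannon_entropy(values: list[int]) -> float:
    """Shannon entropy (bits) of the empirical value histogram."""
    n = len(values)
    return -sum(c / n * math.log2(c / n) for c in Counter(values).values())


def mean_adjacent_difference(values: list[int]) -> float:
    """Average absolute difference between neighboring pixels."""
    return sum(abs(a - b) for a, b in zip(values, values[1:])) / (len(values) - 1)


def box_smooth(values: list[int], radius: int = 1) -> list[int]:
    """Box filter with edge replication (a simple stand-in for Gaussian blur)."""
    last = len(values) - 1
    window = 2 * radius + 1
    return [
        sum(values[min(max(i + o, 0), last)] for o in range(-radius, radius + 1)) // window
        for i in range(len(values))
    ]


rng = random.Random(0)  # fixed seed so the example is repeatable
raw = [rng.randrange(256) for _ in range(4096)]
smoothed = box_smooth(raw)

variation_drops = mean_adjacent_difference(smoothed) < mean_adjacent_difference(raw)
entropy_drops = shannon_entropy(smoothed) < shannon_entropy(raw)
```

Both comparisons come out in the direction the hypothesis predicts for this synthetic input: averaging neighbors reduces pixel-to-pixel variation and concentrates the histogram toward mid-range values.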

Of all the IQA algorithms tested, only BRISQUE demonstrated sensitivity to increasing blur levels, exhibiting a strong linear correlation. As anticipated, greater blur resulted in higher BRISQUE scores, indicating progressively poorer image quality. Unfortunately, BRISQUE could not be run on the blurred Malimg dataset due to an issue with the PyTorch implementation, which produced NaN values and therefore N/A in the output for those subsets.

When selecting datasets for CNN testing, Malevis emerged as the most suitable candidate. The NARAD dataset lacked clear labeling of malicious and benign classes, making supervised learning infeasible. The Malware as Images dataset contained too few images (only 136 files) for effective CNN training. Additionally, BRISQUE was unable to compute scores on the blurred Malimg datasets, leaving no data points for comparison. Importing MalNet images into a neural network would have required significant logistical effort. Therefore, Malevis was chosen as a preliminary proof of concept, paving the way for future research directions.

To assess the relationship between image quality and CNN performance, the resulting validation accuracies were compared with the corresponding BRISQUE scores using Pearson and Spearman correlation coefficients. The Pearson correlation was 0.287 with a high p-value of 0.714, and the Spearman correlation was 0.6 with a p-value of 0.4. While the numerical coefficients indicate a directional trend, the large p-values clearly show that no statistically significant correlation can be claimed under conventional thresholds (e.g., 0.05 or 0.1). These results therefore reflect only a qualitative indication of a possible association rather than statistical evidence. The limited number of available data points restricts the statistical power of the analysis, reinforcing the need for larger-scale future experiments to more rigorously evaluate the relationship between BRISQUE scores and CNN performance.
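The effect of a very small sample on these p-values can be made concrete. Assuming the p-values come from the usual t-test on a correlation coefficient with n − 2 degrees of freedom, and assuming n = 4 data points (one per blur level, which is our reading of the setup rather than a stated fact), the two-sided p-value reduces to 1 − |r|. The reported pairs (0.287, 0.714) and (0.6, 0.4) are consistent with that relation up to rounding. The Python sketch below implements both coefficients from scratch; the function names and sample values are illustrative only.

```python
import math


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson product-moment correlation coefficient."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


def ranks(values: list[float]) -> list[float]:
    """1-based ranks, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=values.__getitem__)
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result


def spearman(xs: list[float], ys: list[float]) -> float:
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(ranks(xs), ranks(ys))


def two_sided_p_four_points(r: float) -> float:
    """t-test p-value for a correlation from n = 4 points (2 d.o.f.)."""
    return 1.0 - abs(r)


# Hypothetical data: four blur levels and made-up accuracies.
rho = spearman([1.0, 2.0, 3.0, 4.0], [2.0, 1.0, 4.0, 3.0])
p_value = two_sided_p_four_points(rho)
```

Under these assumptions, a correlation computed from four points would need |r| above 0.95 to reach the 0.05 threshold, which illustrates why more data points are required before any significance claim can be made.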

4. Proposed Dataset Framework

This framework provides a series of metrics and weights considering IQA, essential dataset organization, and the needs of visual malware detection. These metrics and weights are summarized in a formula that can be used to compare and contrast datasets. Additionally, it can be used to identify areas that are lacking and need improvement. Normalizing relevant data and outputting a score can bring more awareness to the quality of malware image representations.

4.1. Framework Metrics

A series of six metrics are chosen to best represent the goals of MalScore. Each metric has an associated weight to introduce fair dataset classification. The following metrics constitute the MalScore formula.

4.1.1. Inverted and Normalized BRISQUE Score, 35%

The BRISQUE module outputs a numerical score relating to the quality of a provided image. The lower the score, the higher the quality BRISQUE assigns to the image. Theoretically, the lower bound of this output is 0, meaning that the image is perfect; however, the scoring system has no upper bound. This makes score normalization across datasets challenging, so relative normalization was used. The normalized quality score, $Q$, is expressed as:

$$Q = 1 - \frac{B}{B_{\max}} \qquad (1)$$

where

$Q$ is the normalized quality score.

$B$ is the BRISQUE score of the current image.

$B_{\max}$ is the highest BRISQUE score observed in the dataset.

By inverting the score, Equation (1) transforms lower BRISQUE scores (which indicate higher quality) into higher numerical values for further use within the framework. Normalization aims to standardize scores across a common scale to allow for fair comparisons across various ranges of dataset performance. The weight of this metric was set to 35% to place heavy emphasis on quality image generation.
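A minimal Python sketch of this normalization follows, assuming the inverted form Q = 1 − B / B_max implied by the definitions above. The function name `inverted_normalized_brisque` and the example values are illustrative and not part of the original framework code.

```python
def inverted_normalized_brisque(score: float, max_score: float) -> float:
    """Map a BRISQUE score to [0, 1], where higher means better quality.

    Assumes relative normalization against the highest observed score.
    """
    if max_score <= 0:
        raise ValueError("max_score must be positive")
    return 1.0 - score / max_score


# A score one quarter of the way to the observed maximum keeps 75% quality.
q = inverted_normalized_brisque(50.0, 200.0)
```

The worst image in the comparison always maps to 0 under this scheme, so the resulting values are only meaningful relative to the set of datasets being compared.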

4.1.2. Clear Labeling Scheme, 30%

The objective of incorporating datasets into supervised learning methods is to effectively distinguish samples according to a defined labeling scheme. This paper does not address the application of unsupervised techniques. It is imperative to utilize clear and precise labeling schemes to ensure the efficient and accurate classification of malicious samples. Consequently, this metric is assigned a weight of 30% to significantly penalize datasets that lack clear labeling. The input for this metric is a binary score: datasets with clear and complete labeling receive a score of 1, while those without receive a score of 0.

The use of a binary indicator for labeling clarity reflects the categorical nature of this property in visual malware datasets. A dataset either provides a complete, usable labeling scheme or it does not. As highlighted in Section 3.5, datasets such as NARAD lack sufficiently clear labels, making supervised learning infeasible rather than merely degraded. Because partial or ambiguous label structures cannot support CNN-based classification, a continuous scoring scale would not yield a meaningful distinction. The binary formulation therefore aligns with the practical usability requirements of supervised DL workflows.

4.1.3. Normalized Dataset Size, 25%

Accurate classification of samples using AI necessitates a sufficient quantity of data for effective learning. Inadequate data results in diminished AI performance. Therefore, a substantial weight of 25% is applied to emphasize the importance of curating large datasets. The scoring system relies on the normalization of datasets across compared samples. Given the absence of an upper bound, relative normalization is utilized. A normalized dataset score is derived as:

$$D_{\text{norm}} = \frac{\log(D)}{\log(D_{\max})}$$

where

$D_{\text{norm}}$ is the normalized dataset score.

$D$ is the size of the current dataset.

$D_{\max}$ is the size of the largest observed dataset.

To facilitate the comparison of datasets of varying sizes, a logarithmic transformation was applied. This transformation mitigates the disproportionate influence of very large datasets, whose raw sizes would otherwise dominate the score. The denominator, representing the size of the largest dataset, serves to incentivize the creation of substantial datasets: although the impact of dataset size is moderated by the logarithm, it remains a factor in the score. This approach ensures that large, quality datasets are promoted while being subjected to the same rigorous quality assessment as smaller datasets.
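The effect of the logarithm can be seen by comparing it with a plain size ratio. The Python sketch below assumes the normalization takes the form log(D) / log(D_max), which is our reading of the description above; the function name `normalized_size` is illustrative. The sizes used are the Malimg sample count and the MalNet-Tiny total reported in this paper.

```python
import math


def normalized_size(size: int, max_size: int) -> float:
    """Log-scaled dataset size relative to the largest observed dataset.

    Assumption: ratio of logarithms; the base cancels out.
    """
    if size < 1 or max_size < 2:
        raise ValueError("size must be >= 1 and max_size must be >= 2")
    return math.log(size) / math.log(max_size)


malimg_log = normalized_size(9339, 87430)   # roughly 0.80
malimg_raw = 9339 / 87430                   # roughly 0.11
```

With the raw ratio, a dataset about nine times smaller than the largest would receive barely a tenth of the size credit; with log scaling it retains about four fifths, which is the moderation the text describes.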

4.1.4. Content Diversity, 4%

In visual malware detection, the primary concerns are detecting variants across different malware families and identifying obfuscated samples. These aspects are crucial for ensuring that a model is robust enough to detect previously unknown samples and potentially zero-day threats. As malware detection capabilities advance, malware evasion techniques evolve in parallel. Therefore, it is essential to include a diverse range of malware samples from multiple malware families and obfuscated samples in the dataset. To incentivize this diversity, a weight of 4% is applied. The scoring for this metric is binary: a score of 1 is assigned if more than 20 malware families are represented, and a score of 0 is given otherwise.

The threshold-based binary scoring for content diversity is designed to distinguish datasets that support generalizable malware classification from those that do not. Widely used benchmarks such as Malimg, Malevis, and MalNet-Tiny all contain more than 20 families, whereas smaller or imbalanced datasets cannot reliably support robustness or zero-day evaluation. Because family diversity in this context determines whether a dataset meets the minimum conditions for meaningful DL experimentation, the metric is treated as a categorical condition rather than a continuous variable. This prevents misleading partial-credit effects and ensures fair comparison across heterogeneous datasets.

4.1.5. Recency of Data, 3%

In addition to including a diverse range of malware families, it is equally important to incorporate recent developments in malware. New malware often exploits newly discovered vulnerabilities, strives to bypass detection mechanisms, and evades analysis in sandbox environments. Including recent samples is essential to counteracting contemporary anti-virus evasion techniques and addressing new malware trends. A weight of 3% is assigned to emphasize the importance of incorporating recent developments while maintaining a balanced consideration of other impactful metrics. The dates considered for this score are based on the year of the published dataset paper. The scoring system is designed to favor more recent datasets. Datasets published within the past 0–5 years score a 1 in this category. Those published between 6 and 10 years ago score 0.8, datasets from 11 to 15 years ago score 0.6, and datasets from 16 to 20 years ago score 0.4.
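The recency tiers above translate directly into a small lookup. In the Python sketch below, the function name `recency_score` is illustrative, and the value returned for datasets older than 20 years is an assumption, since the text does not specify that case.

```python
def recency_score(publication_year: int, current_year: int) -> float:
    """Score a dataset by the age of its published dataset paper."""
    age = current_year - publication_year
    if age < 0:
        raise ValueError("publication_year is in the future")
    if age <= 5:
        return 1.0
    if age <= 10:
        return 0.8
    if age <= 15:
        return 0.6
    if age <= 20:
        return 0.4
    # Assumption: the source defines no score beyond 20 years; 0.0 is used here.
    return 0.0


# A dataset paper from 2011 evaluated in 2024 is 13 years old.
score_2011 = recency_score(2011, 2024)
```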

4.1.6. Need for Processing, 3%

Preprocessing datasets in data science can often be more labor-intensive than running experiments and developing models. Consequently, visual malware datasets should be produced as concisely as possible to enhance research efficiency. The scoring system for this criterion is binary: a score of 1 indicates that little or no preprocessing is required, whereas a score of 0 signifies significant preprocessing requirements to implement the dataset in AI architectures. For example, a dataset that is not inherently visual and requires conversion to a binary image representation should score a 0 due to the extensive preprocessing needed. A weight of 3% is assigned to underscore the importance of concise dataset creation while acknowledging the impact of other, more significant metrics.

Table 2 summarizes the metrics used alongside their assigned weights. The algorithm that informs the framework is now formally defined. Given a set of datasets $D$, for each dataset $d \in D$, we define the following:

$B_d$: Average BRISQUE score of the dataset $d$, where a lower score indicates higher image quality.

$B'_d$: Inverted and normalized BRISQUE score, defined as $B'_d = 1 - B_d / B_{\max}$, where $B_{\max}$ is the highest observed BRISQUE score and higher values of $B'_d$ reflect better quality.

$L_d$: Binary indicator for clear labels, where $L_d = 1$ indicates clear labels, and $L_d = 0$ otherwise.

$S_d$: Size of the dataset $d$, measured as the number of samples in the dataset.

$S'_d$: Normalized dataset size, calculated as $S'_d = \log(S_d) / \log(S_{\max})$, where $S_{\max}$ is the size of the largest dataset, applying a logarithmic scaling to size.

$C_d$: Content diversity score, with $C_d = 1$ indicating sufficient diversity, and $C_d = 0$ otherwise.

$R_d$: Recency of data score, reflecting the timeliness of the dataset.

$P_d$: Preprocessing indicator, where $P_d = 1$ indicates no preprocessing is needed, and $P_d = 0$ otherwise.

The overall MalScore for dataset $d$ is then computed as a weighted sum of these metrics as follows:

$$
\text{MalScore}(d) = w_1 B'_d + w_2 L_d + w_3 S'_d + w_4 C_d + w_5 R_d + w_6 P_d
$$

where $w_1$, $w_2$, $w_3$, $w_4$, $w_5$, and $w_6$ are the weights attributed to the inverted BRISQUE score, label clarity, normalized dataset size, content diversity, recency of data, and preprocessing need, respectively. These weights are defined as: $w_1 = 0.35$, $w_2 = 0.30$, $w_3 = 0.25$, $w_4 = 0.04$, $w_5 = 0.03$, and $w_6 = 0.03$.

The final MalScore is expressed as a percentage, offering a comparative measure of dataset quality and providing a high-level overview of key features. Improving MalScore performance can contribute to the creation of more meaningful datasets, thereby advancing research in the field of malware detection.
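The weighted sum can be sketched in a few lines of Python. This is an illustrative sketch under stated assumptions rather than the authors' code: the names `DatasetMetrics` and `malscore` are hypothetical, and the normalization forms (one minus the ratio to the highest observed BRISQUE score, and a ratio of logarithms for size) are assumptions chosen because they reproduce the normalized values reported for the datasets in Section 4.2.

```python
import math
from dataclasses import dataclass

# Weights as stated in the text: BRISQUE, labels, size, diversity, recency, preprocessing.
W_BRISQUE, W_LABELS, W_SIZE = 0.35, 0.30, 0.25
W_DIVERSITY, W_RECENCY, W_PREPROCESSING = 0.04, 0.03, 0.03


@dataclass
class DatasetMetrics:
    brisque: float          # average BRISQUE score (lower is better)
    clear_labels: int       # 1 if labels are clear, else 0
    size: int               # number of samples
    diversity: int          # 1 if content diversity is sufficient, else 0
    recency: float          # recency score in [0, 1]
    no_preprocessing: int   # 1 if little or no preprocessing is needed, else 0


def malscore(m: DatasetMetrics, brisque_max: float, size_max: int) -> float:
    """Return the MalScore in [0, 1]; multiply by 100 for a percentage."""
    # Assumed inversion: 1 - B / B_max, so lower raw BRISQUE gives a higher score.
    brisque_inverted = 1.0 - m.brisque / brisque_max
    # Assumed logarithmic size scaling: log(S) / log(S_max).
    size_normalized = math.log(m.size) / math.log(size_max)
    return (
        W_BRISQUE * brisque_inverted
        + W_LABELS * m.clear_labels
        + W_SIZE * size_normalized
        + W_DIVERSITY * m.diversity
        + W_RECENCY * m.recency
        + W_PREPROCESSING * m.no_preprocessing
    )


# Values reported for Malimg in Section 4.2.1.
malimg = DatasetMetrics(106.54, 1, 9339, 1, 0.6, 1)
print(round(100 * malscore(malimg, brisque_max=203.8536377, size_max=87430)))  # 76
```

Passing the reference maxima explicitly keeps the relative nature of the score visible: the same dataset receives a different MalScore if the comparison set changes.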

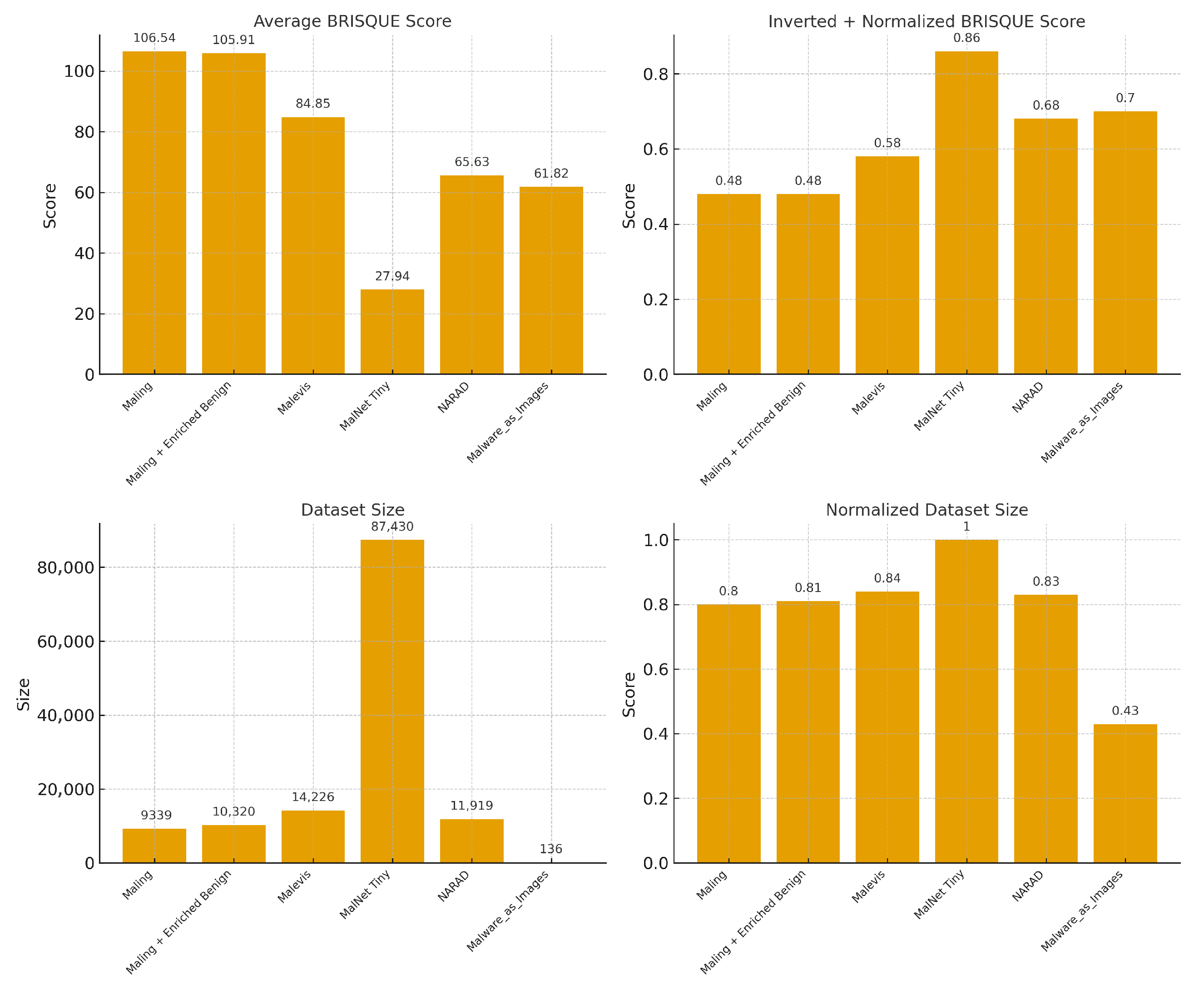

Figure 3 underscores the necessity of score normalization within the MalScore algorithm. As illustrated in Figure 3, certain datasets would have gained an undue advantage if raw BRISQUE and dataset size scores were utilized.

4.2. Framework Dataset Application

Following the description of the dataset framework, it is applied to a set of known visual malware datasets. Overall, the highest observed BRISQUE score was 203.8536377, and the largest dataset analyzed was MalNet Tiny with 87,430 samples. These two values serve as the normalization references for the inverted BRISQUE score and the dataset size score.

4.2.1. Malimg

The Malimg dataset achieved an average BRISQUE score of 106.54. After inversion and normalization, this score translates to 0.48. The dataset comprises 9339 samples, yielding a normalized dataset size score of 0.80. It received full scores in the categories of clear labels, content diversity, and minimal preprocessing requirements. However, Malimg scored 0.6 in the recency of data category, as the associated dataset paper was published in 2011, making the dataset 13 years old at the time of this writing. The computed MalScore for the dataset is 76%.
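As a worked check of the Malimg figures, the arithmetic can be written out as follows. The two normalization forms are assumptions (they are not spelled out in this section), chosen because they reproduce the reported values of 0.48 and 0.80 from the highest observed BRISQUE score and the largest dataset size.

```latex
\begin{align*}
B' &= 1 - \frac{106.54}{203.85} \approx 0.48, \qquad
S' = \frac{\ln 9339}{\ln 87430} \approx 0.80 \\[4pt]
\text{MalScore} &= 0.35(0.48) + 0.30(1) + 0.25(0.80) + 0.04(1) + 0.03(0.6) + 0.03(1) \\
&= 0.168 + 0.300 + 0.200 + 0.040 + 0.018 + 0.030 \\
&= 0.756 \approx 76\%
\end{align*}
```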

4.2.2. Enriched Malimg (With Benign Class)

The enriched Malimg dataset, which includes an additional benign class, achieved an average BRISQUE score of 105.91. This indicates higher quality benign image samples, leading to an improved IQA average. After inversion and normalization, the IQA score is 0.48. The dataset comprises 10,320 samples, resulting in a normalized dataset size score of 0.81. As with the base Malimg dataset, it received full scores in the categories of clear labels, content diversity, and minimal preprocessing requirements. Because the associated paper is the same as that of the Malimg dataset, it likewise scores 0.6 in the recency of data metric. The calculated MalScore for this dataset is also 76%.

4.2.3. Malevis

The Malevis dataset features an improved average BRISQUE score of 84.85, which, after inversion and normalization, results in a score of 0.58. The dataset comprises 14,226 samples, yielding a normalized dataset size score of 0.84. It receives full scores in the categories of clear labeling, content diversity, recency of data, and minimal preprocessing requirements. The overall MalScore for the dataset is 81%. This score represents an improvement over the Malimg dataset, attributable to the inclusion of a greater number of higher quality samples.

4.2.4. MalNet Tiny

The MalNet Tiny subset achieved the best average BRISQUE score of 27.94, resulting in an inverted and normalized BRISQUE score of 0.86. It is the largest and best-performing dataset used, with 87,429 samples, translating to a fully normalized dataset size score of 1.0. This dataset excels in all defined metrics, achieving the highest MalScore of 95%.

4.2.5. NARAD “Malicious Images” Subfolder

The NARAD dataset has an average BRISQUE score of 65.63, resulting in an inverted and normalized score of 0.68. The dataset includes 11,919 samples, normalized to a dataset size score of 0.83. Unlike the other datasets, NARAD lacks clear labeling schemes, making classification challenging. It therefore scores 0 in the clear labels metric and, because the diversity of samples cannot be determined without labels, 0 in the content diversity metric as well. The dataset receives full scores in the data recency and minimal preprocessing requirements metrics. It achieves the lowest MalScore of 50%, indicating significant room for improvement.

4.2.6. Malware as Images Dataset Evaluation

This smaller dataset has an average BRISQUE score of 61.82, resulting in an inverted and normalized score of 0.70. Due to the presence of duplicate samples processed at different resolutions, only one processing method was considered. The subset includes 136 samples, yielding a significantly smaller normalized dataset size score of 0.43. Despite its small size, it maintains clear labeling schemes, features content diversity, and was developed within the past five years. The dataset incurs a penalty in the need for processing metric, as significant cropping is required to prepare the dataset for input into DL architectures. Its final MalScore is 72%.

It is important to note that due to the nature of BRISQUE results having no upper limit, relative normalization must be used. Consequently, the MalScore formula cannot be applied to an individual dataset in isolation. The provided framework inherently compares datasets and requires at least two inputs for calculation.
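The dependence on a reference maximum can be illustrated with a short sketch. It assumes the inversion takes the form of one minus the ratio to the reference maximum, which reproduces the normalized values reported above; the function name `inverted_brisque` and its `reference_max` parameter are hypothetical.

```python
from typing import Dict, Optional


def inverted_brisque(
    mean_scores: Dict[str, float], reference_max: Optional[float] = None
) -> Dict[str, float]:
    """Invert and normalize average BRISQUE scores relative to a reference maximum."""
    if reference_max is None:
        # Without an external reference, the maximum must come from the set itself,
        # which is only meaningful when at least two datasets are compared.
        if len(mean_scores) < 2:
            raise ValueError("relative normalization needs at least two datasets")
        reference_max = max(mean_scores.values())
    return {name: 1.0 - score / reference_max for name, score in mean_scores.items()}


means = {"Malimg": 106.54, "MalNet Tiny": 27.94}

# Reference taken from the highest observed BRISQUE score reported in Section 4.2.
with_observed_max = inverted_brisque(means, reference_max=203.8536377)
# Reference taken from the set itself: the worst dataset collapses to 0.
with_set_max = inverted_brisque(means)

print(round(with_observed_max["Malimg"], 2))  # 0.48
print(round(with_set_max["Malimg"], 2))       # 0.0
```

The same raw average yields different normalized scores depending on the reference, which is why a MalScore is only comparable within the set of datasets it was computed against.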

Figure 4 presents the final results of MalScore applied across the five described datasets.

5. Discussion

Table 3 provides a detailed overview of the key areas of future work in visual malware detection research. The table highlights the main focus areas, including Dataset Curation, IQA, and DL models, with specific tasks outlined for each area to guide future research and development.

5.1. Advancing IQA and Model Development for Future MalScore Applications

This study serves as a preliminary bridge between the fields of IQA and visual malware detection. By incorporating IQA algorithm scores during dataset curation, more accurate models can be trained. Although a baseline association has been established, further research is necessary to enhance the statistical significance of the findings. Firstly, additional blur levels should be applied to existing datasets to introduce more data points within the calculations. This increased data would facilitate a more detailed statistical assessment of the relationship between IQA scores and blur levels, thereby determining the blur sensitivity of the IQA algorithm at a finer granularity.

Moreover, a broader range of datasets should be introduced. For instance, Microsoft BIG [30] is a well-known dataset in the visual malware detection literature, containing nearly half a terabyte of disassembly and bytecode for over 20,000 malware samples. While this dataset is an excellent resource, it lacks image representations of malware, necessitating manual conversion. Future research could involve representing this dataset as images with various DPI settings to investigate the relationship between DPI and IQA algorithm scores.

Further work with CNNs can also be conducted. As more suitable datasets for AI applications become available, the application of CNNs and other DL architectures can generate additional statistical data. These data points would help to further confirm the linear relationship between BRISQUE scores and CNN performance. This study focused on validation accuracy at the end of each epoch. Future studies should include additional metrics such as precision, recall, and F1 score. The inclusion of these metrics would provide more data points, which could influence the p-values of the Pearson and Spearman correlation metrics. As more data are collected with increasingly significant p-values, the findings will become more statistically robust.
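The two correlation coefficients mentioned above can be computed as in the following dependency-free sketch. The BRISQUE and accuracy values are placeholders invented purely for illustration and are not results from this study. The helper names are hypothetical, and p-values are omitted because they require a statistics library.

```python
import math
from typing import List


def pearson(x: List[float], y: List[float]) -> float:
    """Pearson correlation coefficient of two equal-length samples."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (spread_x * spread_y)


def ranks(values: List[float]) -> List[float]:
    """1-based ranks, with tied values receiving the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result


def spearman(x: List[float], y: List[float]) -> float:
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))


# Placeholder values for illustration only (NOT measurements from this study).
brisque_scores = [30.0, 60.0, 85.0, 105.0]
validation_accuracy = [0.95, 0.91, 0.88, 0.80]

print(pearson(brisque_scores, validation_accuracy))
print(spearman(brisque_scores, validation_accuracy))
```

With precision, recall, and F1 score recorded alongside accuracy, the same functions could be applied to each metric in turn, yielding one coefficient pair per metric.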

Additional IQA algorithms should also be investigated or specifically developed for dataset curation purposes. Current algorithms focus on quantifying the “naturalness” of an image, a feature not typically present in the visual representation of malware. While BRISQUE performed well during experiments as a general-purpose algorithm, other general-purpose algorithms should be evaluated for their applicability. Further research in IQA can be directed towards assessing the image quality of non-traditional images, such as those found in cartoon scenes, and understanding their implications in the context of malware images. This research could potentially lead to the development of specialized IQA algorithms tailored for specific image or video types.

Lastly, a specific algorithm designed to identify the issues present in an image could be developed. Identifying issues such as blur, ringing, or blocking within a dataset could guide the creation of a visual malware IQA algorithm. This issue detector would need to be calibrated in conjunction with other IQA algorithms to accurately interpret how computer vision models respond to these datasets.

5.2. Positioning MalScore for Evolving Visual Malware Benchmarks

In addition to the widely used visual malware datasets examined in this study, including Malimg, Malevis, MalNet-Tiny, NARAD, and the 2023 Malware as Images corpus, several newer image-based malware datasets have recently emerged. For example, Bruzzese [31] introduced a 2024 dataset constructed from VirusShare binaries and converted into visual representations for modern ML baselines such as CoAtNet. More recently, Makkawy et al. proposed MalVis, a large-scale Android visualization dataset released in 2025 that transforms APK and bytecode artifacts into consistent RGB malware images [32]. These datasets represent promising additions to the visual malware literature; however, they appeared after the completion of our experimental phase and are not yet broadly adopted or standardized. Because many newly released collections still require substantial preprocessing to generate consistent image representations, we identify them as natural candidates for future applications of the MalScore framework. Our methodology is intentionally designed to generalize to such emerging datasets as they mature and become more widely integrated into DL-based malware detection research.

5.3. Limitations of Gaussian Blur and Future Real-World Distortion Modeling

Although Gaussian blur provides a clean and mathematically controlled way to introduce monotonic degradation for analyzing IQA sensitivity, it does not fully capture the types of distortions naturally found in visual malware datasets. Real-world degradation more commonly arises from preprocessing artifacts such as interpolation effects, aggressive downsampling, compression noise, and inconsistencies in image conversion pipelines. Therefore, the use of Gaussian blur should be viewed as a methodological probe rather than a literal simulation of operational conditions. Future work will incorporate distortions directly observed in practical malware image corpora to better approximate real-world quality issues and to evaluate how IQA algorithms respond to these domain-specific artifacts.
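The contrast between the two kinds of degradation can be sketched without external libraries. The example below is illustrative only and is not the pipeline used in this study: it applies a separable Gaussian blur at a chosen `sigma` to a grayscale image stored as a list of rows. As a stand-in for one preprocessing artifact, it also performs a nearest-neighbour downsample followed by an upsample. All function names are hypothetical.

```python
import math
from typing import List

Image = List[List[float]]


def gaussian_kernel(sigma: float) -> List[float]:
    """Normalized 1-D Gaussian kernel truncated at three standard deviations."""
    radius = max(1, math.ceil(3 * sigma))
    weights = [math.exp(-(i * i) / (2 * sigma * sigma)) for i in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def convolve_rows(image: Image, kernel: List[float]) -> Image:
    """Convolve every row with the kernel, replicating edge pixels at the borders."""
    radius = len(kernel) // 2
    out = []
    for row in image:
        last = len(row) - 1
        out.append([
            sum(w * row[min(max(x + k - radius, 0), last)] for k, w in enumerate(kernel))
            for x in range(len(row))
        ])
    return out


def transpose(image: Image) -> Image:
    return [list(column) for column in zip(*image)]


def gaussian_blur(image: Image, sigma: float) -> Image:
    """Separable Gaussian blur: filter the rows, then the columns."""
    kernel = gaussian_kernel(sigma)
    horizontal = convolve_rows(image, kernel)
    return transpose(convolve_rows(transpose(horizontal), kernel))


def downsample_upsample(image: Image, factor: int) -> Image:
    """Nearest-neighbour downsample by `factor`, then upsample back to the original size."""
    height, width = len(image), len(image[0])
    return [
        [image[(y // factor) * factor][(x // factor) * factor] for x in range(width)]
        for y in range(height)
    ]


def variance(image: Image) -> float:
    """Pixel variance, used here as a crude proxy for remaining texture."""
    values = [v for row in image for v in row]
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values) / len(values)


# A checkerboard as a toy high-frequency texture.
checkerboard = [[255.0 if (x + y) % 2 else 0.0 for x in range(8)] for y in range(8)]
for sigma in (0.5, 1.0, 2.0):
    print(sigma, variance(gaussian_blur(checkerboard, sigma)))
print("resampled", variance(downsample_upsample(checkerboard, 2)))
```

The blur degrades texture smoothly as `sigma` grows, whereas the resampling step replaces whole blocks of pixels at once. This is one reason a blur sweep alone may not represent the artifacts found in practical corpora.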

5.4. MalScore Weighting Strategy and Path Toward Optimization

It is important to note that these weights were not empirically optimized. Rather, they reflect deliberate, design-driven choices appropriate for introducing MalScore. The primary objective of this study is to formalize the structure of the metric and demonstrate its feasibility across representative datasets, not to provide a fully calibrated scoring model. The larger weights assigned to image quality, label clarity, and dataset size follow established practices in visual malware detection, where these factors have a dominant impact on model effectiveness. Conversely, diversity, recency, and preprocessing requirements are incorporated as secondary yet meaningful considerations. A rigorous, data-driven calibration of the weights would require a substantially larger and more standardized collection of visual malware datasets than currently exists; thus, weight optimization is identified as future work. The framework is intentionally designed to be extensible so that the research community may refine or empirically tune these weights as additional datasets become available.