A Hybrid CNN-LSTM Model for SMS Spam Detection in Arabic and English Messages

Abstract

1. Introduction

- Collection of an Arabic SMS dataset labeled as spam or not-spam, which can support further studies on Arabic SMS spam.

- Proposal of a model for detecting SMS spam in a mixed mobile environment that supports both Arabic and English, based on a hybrid deep learning architecture that combines CNN and LSTM networks.

- Comparison of the proposed model with other machine learning algorithms.

- The proposed model outperforms the traditional machine learning algorithms, achieving an accuracy of 98.37%.

2. Related Work

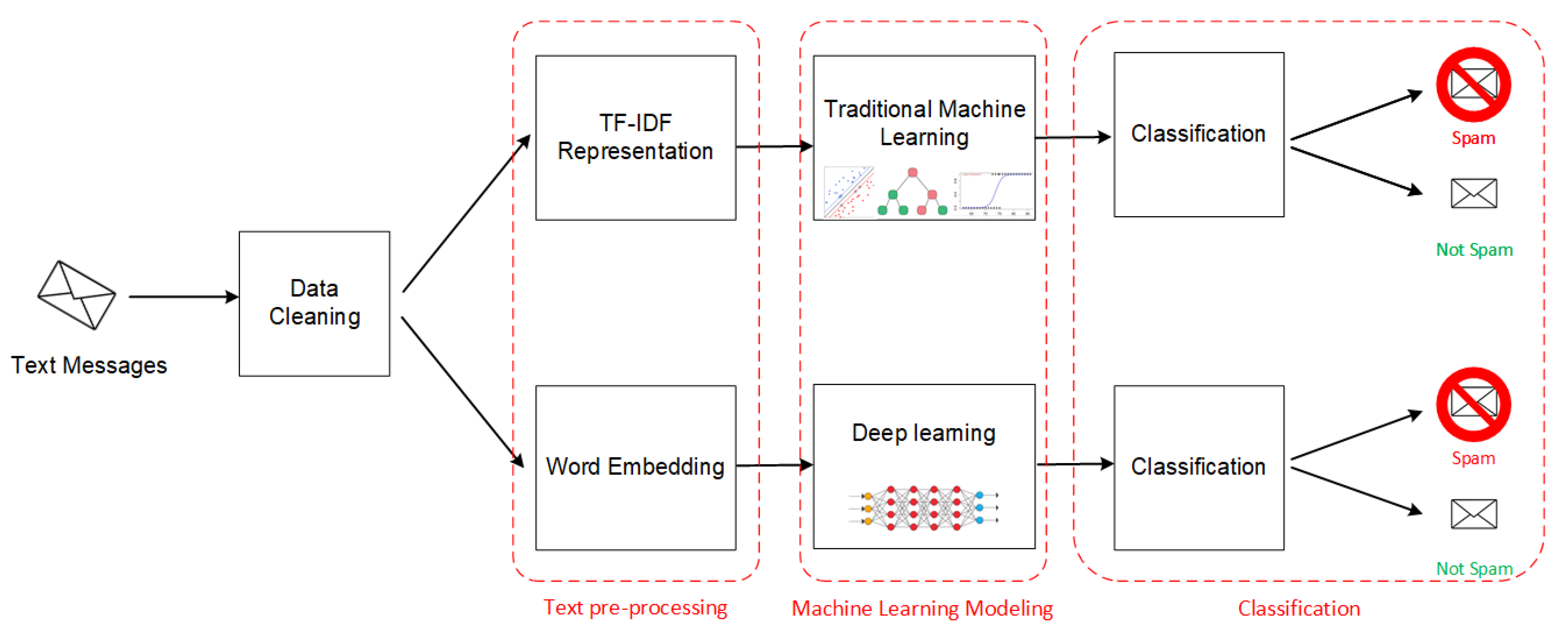

3. The Proposed Model

3.1. Data Cleaning

- Punctuation: remove unnecessary punctuation marks and symbols from the text.

- Capitalization: convert all words to lower case so that, for example, “FREE” and “free” are treated as the same token (this affects only the English text, since Arabic script has no letter case).

- Arabic and English stop-words: stop-words are the most frequent words in a language, and they carry little information for understanding the text. Examples include “the”, “is”, “a”, “which”, and “on” in English and “في” (in), “من” (from), and “إلى” (to) in Arabic. In NLP tasks, these words are often removed before training in order to reduce noise.
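The three cleaning steps above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' code:

- The stop-word sets are tiny hypothetical samples taken from the examples in the text. A real pipeline would load complete English and Arabic lists.
- The punctuation set is assumed to be ASCII punctuation plus the Arabic comma (،), semicolon (؛) and question mark (؟).

```python
import re
import string

# Hypothetical sample stop-word sets (only the examples given in the text).
EN_STOPWORDS = {"the", "is", "a", "which", "on"}
AR_STOPWORDS = {"في", "من", "إلى"}
STOPWORDS = EN_STOPWORDS | AR_STOPWORDS

# Assumed punctuation set: ASCII punctuation plus Arabic comma, semicolon, question mark.
PUNCTUATION = string.punctuation + "،؛؟"
PUNCT_RE = re.compile("[" + re.escape(PUNCTUATION) + "]")


def clean_sms(text: str) -> list[str]:
    """Remove punctuation, lower-case, and drop stop-words; return the tokens."""
    text = PUNCT_RE.sub(" ", text)  # replace punctuation with a space so words stay separated
    text = text.lower()             # changes Latin letters only; Arabic has no case
    return [tok for tok in text.split() if tok not in STOPWORDS]


print(clean_sms("WIN a FREE prize, which is waiting on www.example.com!"))
# ['win', 'free', 'prize', 'waiting', 'www', 'example', 'com']
print(clean_sms("ربحت جائزة من الشركة، اتصل الآن؟"))
# ['ربحت', 'جائزة', 'الشركة', 'اتصل', 'الآن']
```

Replacing punctuation with a space rather than deleting it keeps text such as "prize,which" from fusing into one token. As a side effect, URLs are split into fragments such as `www`, `example` and `com`.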

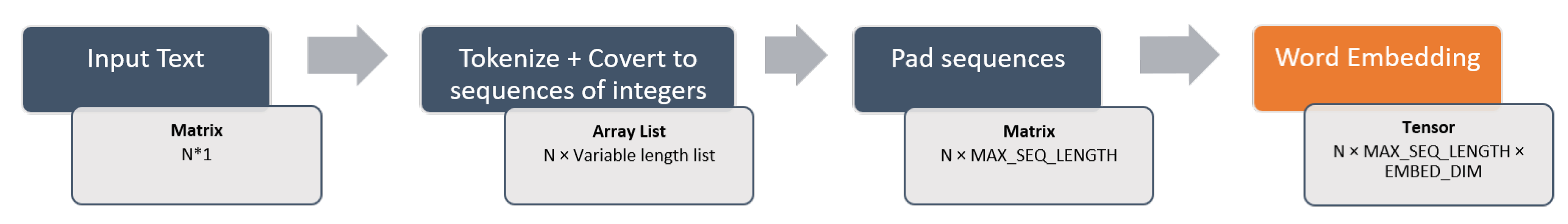

3.2. Text Pre-Processing

3.2.1. TF-IDF Representation
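As a minimal sketch of TF-IDF weighting, the function below assumes the textbook form tf(t, d) × log(N / df(t)), with tf normalized by message length. Library implementations differ in details. For example, scikit-learn's `TfidfVectorizer` uses raw counts, a smoothed idf and L2-normalized rows by default. The exact variant behind the reported results may therefore differ from this one.

```python
import math
from collections import Counter


def tf_idf(docs: list[list[str]]) -> list[dict[str, float]]:
    """Return one {term: weight} dict per tokenized message.

    tf(t, d) = count of t in d / number of tokens in d
    idf(t)   = log(N / df(t)), where df(t) = number of messages containing t
    """
    n_docs = len(docs)
    df = Counter(term for doc in docs for term in set(doc))  # document frequency
    weights = []
    for doc in docs:
        counts = Counter(doc)
        total = len(doc)
        weights.append({t: (c / total) * math.log(n_docs / df[t]) for t, c in counts.items()})
    return weights


docs = [["free", "prize", "call"], ["call", "me", "later"], ["free", "free", "entry"]]
weights = tf_idf(docs)
print(round(weights[2]["free"], 4))  # 0.2703
```

A term that occurs in every message gets idf = log(1) = 0 and therefore weight 0. This is how TF-IDF down-weights words that do not discriminate between messages.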

3.2.2. Word Embedding
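Before an embedding layer can be applied, each cleaned message has to be mapped to a fixed-length sequence of integer ids. The sketch below shows one common way to do this. The following choices are all assumptions made for illustration:

- the reserved ids (0 for padding, 1 for unknown words);
- padding on the right;
- the sequence length;
- the randomly initialized table.

In a trained model the table is learned together with the network or initialized from pre-trained vectors such as word2vec or GloVe.

```python
import random


def build_vocab(token_lists: list[list[str]]) -> dict[str, int]:
    """Map each token to an integer id; 0 is reserved for padding, 1 for unknown words."""
    vocab = {"<pad>": 0, "<unk>": 1}
    for tokens in token_lists:
        for tok in tokens:
            vocab.setdefault(tok, len(vocab))
    return vocab


def encode(tokens: list[str], vocab: dict[str, int], max_len: int) -> list[int]:
    """Convert tokens to ids, truncate to max_len, and pad on the right with 0."""
    ids = [vocab.get(tok, vocab["<unk>"]) for tok in tokens][:max_len]
    return ids + [0] * (max_len - len(ids))


def init_embeddings(vocab_size: int, dim: int, seed: int = 0) -> list[list[float]]:
    """Randomly initialized embedding matrix with one row per vocabulary id."""
    rng = random.Random(seed)
    return [[rng.uniform(-0.05, 0.05) for _ in range(dim)] for _ in range(vocab_size)]


def embed(ids: list[int], table: list[list[float]]) -> list[list[float]]:
    """Embedding lookup: replace each id with its row vector."""
    return [table[i] for i in ids]


corpus = [["free", "prize", "call", "now"], ["see", "you", "later"]]
vocab = build_vocab(corpus)
ids = encode(["call", "now", "unknown"], vocab, max_len=5)
print(ids)  # [4, 5, 1, 0, 0]
table = init_embeddings(len(vocab), dim=8)
vectors = embed(ids, table)  # 5 vectors of length 8, ready for a Conv1D layer
```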

3.3. Machine Learning Modeling

3.3.1. Traditional Machine Learning

- Support Vector Machine

- K-Nearest Neighbors

- Multinomial Naive Bayes

- Decision Tree

- Logistic Regression

- Random Forest

- AdaBoost

- Bagging classifier

- Extra Trees
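To make one of the listed classifiers concrete, the sketch below implements multinomial Naive Bayes from scratch on token lists, with additive (Laplace) smoothing. It uses made-up toy messages and is not the configuration used in the experiments. In practice these classifiers are usually taken from a library such as scikit-learn.

```python
import math
from collections import Counter


class MultinomialNB:
    """Multinomial Naive Bayes over token counts with additive (Laplace) smoothing."""

    def __init__(self, alpha: float = 1.0):
        self.alpha = alpha  # alpha = 1.0 gives add-one (Laplace) smoothing

    def fit(self, docs: list[list[str]], labels: list[int]) -> "MultinomialNB":
        self.classes = sorted(set(labels))
        self.vocab = {tok for doc in docs for tok in doc}
        self.log_prior: dict[int, float] = {}
        self.log_lik: dict[int, dict[str, float]] = {}
        for c in self.classes:
            class_docs = [d for d, y in zip(docs, labels) if y == c]
            # log P(c): fraction of training messages in class c
            self.log_prior[c] = math.log(len(class_docs) / len(docs))
            # log P(t | c): smoothed relative frequency of token t in class c
            counts = Counter(tok for d in class_docs for tok in d)
            denom = sum(counts.values()) + self.alpha * len(self.vocab)
            self.log_lik[c] = {t: math.log((counts[t] + self.alpha) / denom) for t in self.vocab}
        return self

    def predict(self, doc: list[str]) -> int:
        """Return the class with the highest log posterior; unseen tokens are ignored."""
        scores = {
            c: self.log_prior[c] + sum(self.log_lik[c][t] for t in doc if t in self.vocab)
            for c in self.classes
        }
        return max(scores, key=scores.get)


train_docs = [["free", "prize", "call"], ["win", "free", "cash"],
              ["see", "you", "later"], ["call", "me", "later"]]
labels = [1, 1, 0, 0]  # 1 = Spam, 0 = Not-Spam
model = MultinomialNB().fit(train_docs, labels)
print(model.predict(["free", "cash", "now"]))  # 1
print(model.predict(["see", "you", "later"]))  # 0
```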

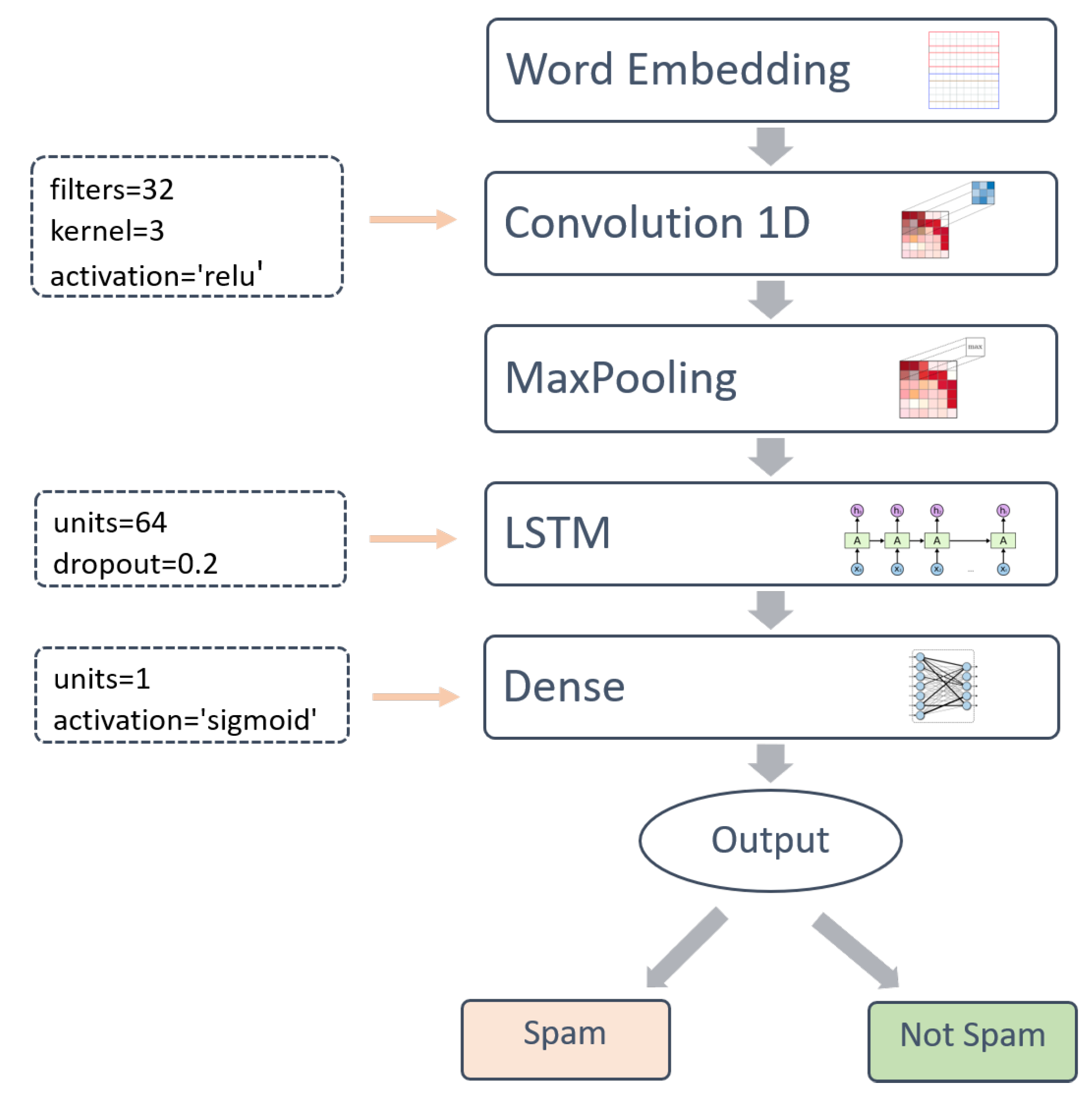

3.3.2. Deep Learning

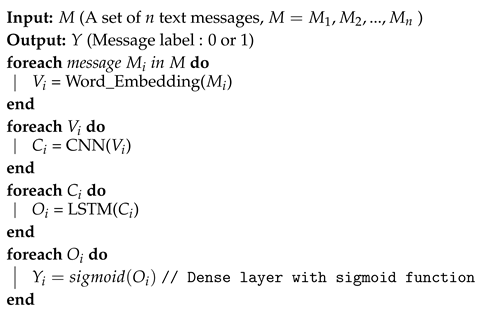

Algorithm 1: Convolutional Neural Network (CNN)-Long Short-Term Memory (LSTM) model

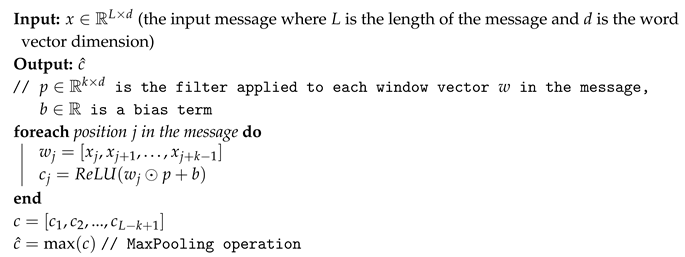

- Convolution 1D

Algorithm 2: CNN
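The core operation of the Convolution 1D layer can be written out directly. This sketch assumes:

- "valid" padding, so a length-L input gives L - K + 1 outputs;
- stride 1;
- a ReLU activation.

Each filter spans the full embedding dimension, so it acts as a detector for a K-word pattern. The filter count, kernel size and activation used in the experiments may differ from these illustrative values.

```python
def relu(x: float) -> float:
    return max(0.0, x)


def conv1d(seq, kernels, biases):
    """'Valid' 1D convolution (stride 1) over a sequence of embedding vectors.

    seq:     L vectors of length D (one embedded message)
    kernels: F filters, each a list of K vectors of length D
    biases:  F floats
    returns: L - K + 1 vectors of length F (one ReLU activation per filter)
    """
    k = len(kernels[0])
    out = []
    for start in range(len(seq) - k + 1):
        window = seq[start:start + k]  # K consecutive word vectors
        row = []
        for kernel, b in zip(kernels, biases):
            s = b + sum(w * x for w_vec, x_vec in zip(kernel, window)
                        for w, x in zip(w_vec, x_vec))
            row.append(relu(s))
        out.append(row)
    return out


seq = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.0, 0.0]]  # L = 4, D = 2
kernels = [[[1.0, 0.0], [0.0, 1.0]],                    # filter 1, K = 2
           [[-1.0, 0.0], [0.0, 0.0]]]                   # filter 2
print(conv1d(seq, kernels, biases=[0.0, 0.5]))
# [[2.0, 0.0], [1.0, 0.5], [1.0, 0.0]]
```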

- MaxPooling
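Max pooling then shortens the feature sequence by keeping only the strongest response of each filter within each window. The sketch assumes non-overlapping windows (stride equal to the pool size), which is the default behavior of Keras's `MaxPooling1D`. The pool size itself is an illustrative parameter.

```python
def max_pool1d(seq, pool_size):
    """Non-overlapping max pooling along the time axis (stride = pool_size).

    Each output step keeps, per channel, the maximum over pool_size consecutive
    input steps; a trailing incomplete window is dropped ('valid' pooling).
    """
    out = []
    for start in range(0, len(seq) - pool_size + 1, pool_size):
        window = seq[start:start + pool_size]
        out.append([max(step[c] for step in window) for c in range(len(window[0]))])
    return out


features = [[1.0, 5.0], [3.0, 2.0], [0.0, 4.0], [7.0, 1.0], [9.0, 9.0]]  # 5 steps, 2 filters
print(max_pool1d(features, pool_size=2))  # [[3.0, 5.0], [7.0, 4.0]]
```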

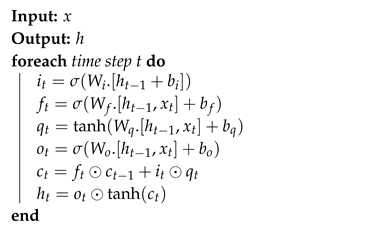

- LSTM

Algorithm 3: LSTM
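The LSTM layer can be sketched with the standard gate equations:

- an input gate, a forget gate and an output gate;
- a candidate cell state;
- the updates c_t = f_t * c_{t-1} + i_t * g_t and h_t = o_t * tanh(c_t).

This is a minimal single-layer forward pass in pure Python. The parameter layout and the toy weights are assumptions for illustration. As with Keras's `LSTM` default (`return_sequences=False`), only the last hidden state is returned and passed on to the next layer.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def matvec(m: list[list[float]], v: list[float]) -> list[float]:
    """Matrix-vector product for a matrix stored as a list of rows."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def lstm_forward(seq, params, units):
    """Run one LSTM layer over seq (a list of input vectors) and return the final h_T.

    params maps each of "i" (input gate), "f" (forget gate), "o" (output gate) and
    "g" (candidate cell state) to a tuple (W, U, b): W is units x input_dim,
    U is units x units, b has length units.
    """
    h = [0.0] * units  # hidden state h_0
    c = [0.0] * units  # cell state c_0
    for x in seq:
        # Pre-activations W x_t + U h_{t-1} + b for every gate.
        pre = {name: [wx + uh + bb for wx, uh, bb in zip(matvec(w, x), matvec(u, h), b)]
               for name, (w, u, b) in params.items()}
        i = [sigmoid(z) for z in pre["i"]]
        f = [sigmoid(z) for z in pre["f"]]
        o = [sigmoid(z) for z in pre["o"]]
        g = [math.tanh(z) for z in pre["g"]]
        c = [ft * ct + it * gt for ft, ct, it, gt in zip(f, c, i, g)]  # c_t = f*c_{t-1} + i*g
        h = [ot * math.tanh(ct) for ot, ct in zip(o, c)]               # h_t = o*tanh(c_t)
    return h


zero = ([[0.0]], [[0.0]], [0.0])  # toy gate parameters: all weights and bias zero
params = {"i": zero, "f": zero, "o": zero, "g": ([[1.0]], [[0.0]], [0.0])}
h1 = lstm_forward([[1.0]], params, units=1)
h2 = lstm_forward([[1.0], [0.0]], params, units=1)
```

The forget gate lets the cell state carry information from earlier words forward. In the toy example, the second (zero) input keeps half of the stored cell value, so `h2` is still non-zero even though the second input is 0.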

- Dense
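For binary Spam/Not-Spam output, a common design is a final dense layer with a single sigmoid unit whose output is read as the probability of Spam. That output is then thresholded to give the label. The single unit, the sigmoid activation and the 0.5 threshold are assumptions in this sketch, and the weights are toy values.

```python
import math


def dense_sigmoid(h: list[float], weights: list[float], bias: float) -> float:
    """One-unit dense layer with sigmoid activation, read as P(Spam | message)."""
    z = sum(w * x for w, x in zip(weights, h)) + bias
    return 1.0 / (1.0 + math.exp(-z))


def classify(prob: float, threshold: float = 0.5) -> int:
    """Threshold the probability: 1 = Spam, 0 = Not-Spam."""
    return 1 if prob >= threshold else 0


p = dense_sigmoid([0.2, -0.4], weights=[3.0, 1.0], bias=0.0)
print(round(p, 3), classify(p))  # 0.55 1
```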

3.4. Classification

4. Experimental Evaluation

4.1. Dataset Description

4.2. Evaluation Measures

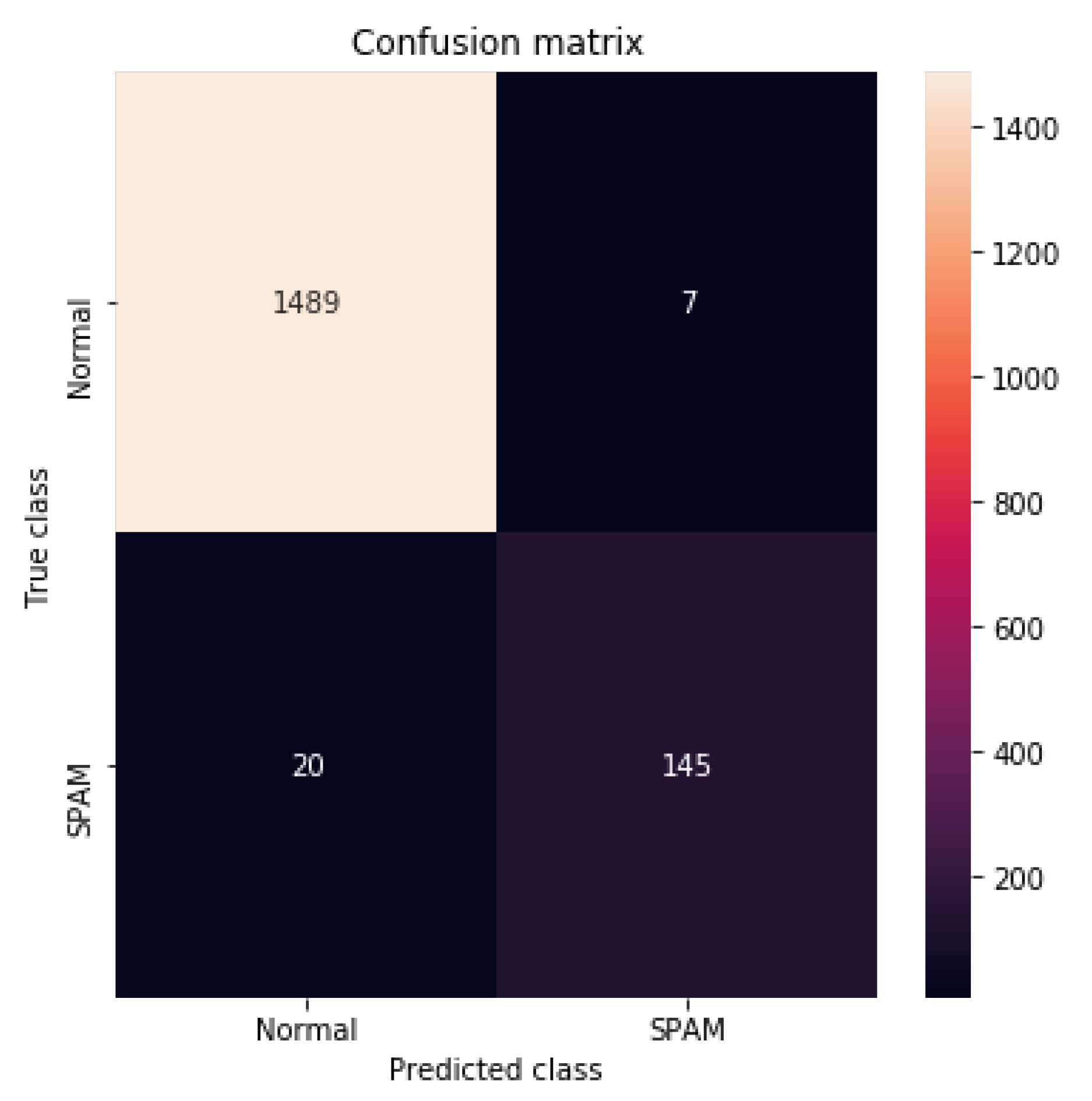

- The confusion matrix is a table that reports the following counts:
  - True Positives (TP): messages whose actual class is 1 (Spam) and whose predicted class is also 1 (Spam).
  - True Negatives (TN): messages whose actual class is 0 (Not-Spam) and whose predicted class is also 0 (Not-Spam).
  - False Positives (FP): messages whose actual class is 0 (Not-Spam) but whose predicted class is 1 (Spam).
  - False Negatives (FN): messages whose actual class is 1 (Spam) but whose predicted class is 0 (Not-Spam).

- Accuracy: the number of correctly predicted messages divided by the total number of messages, (TP + TN) / (TP + TN + FP + FN).

- Precision: the proportion of Spam predictions that are actually Spam, TP / (TP + FP).

- Recall: the proportion of actual Spam messages that are correctly classified as Spam, TP / (TP + FN).

- F1-Score: the harmonic mean of precision and recall, 2 × Precision × Recall / (Precision + Recall).
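The four definitions translate directly into code. The counts in the example are not taken from the text:

- TP = 145, FP = 7, FN = 20, TN = 1489, i.e. 1661 test messages of which 165 are Spam.
- They were back-calculated from the ratios in the CNN-LSTM row of the results table, which they reproduce to six decimal places.

Defining precision as 0 when nothing is predicted as Spam matches the 0.000000 reported for K-Nearest Neighbors.

```python
def classification_metrics(tp: int, fp: int, fn: int, tn: int) -> dict[str, float]:
    """Accuracy, precision, recall and F1 from confusion-matrix counts (Spam = positive)."""
    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total
    precision = tp / (tp + fp) if tp + fp else 0.0  # no Spam predicted: define as 0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


m = classification_metrics(tp=145, fp=7, fn=20, tn=1489)
print({k: round(v, 6) for k, v in m.items()})
# {'accuracy': 0.983745, 'precision': 0.953947, 'recall': 0.878788, 'f1': 0.914826}
```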

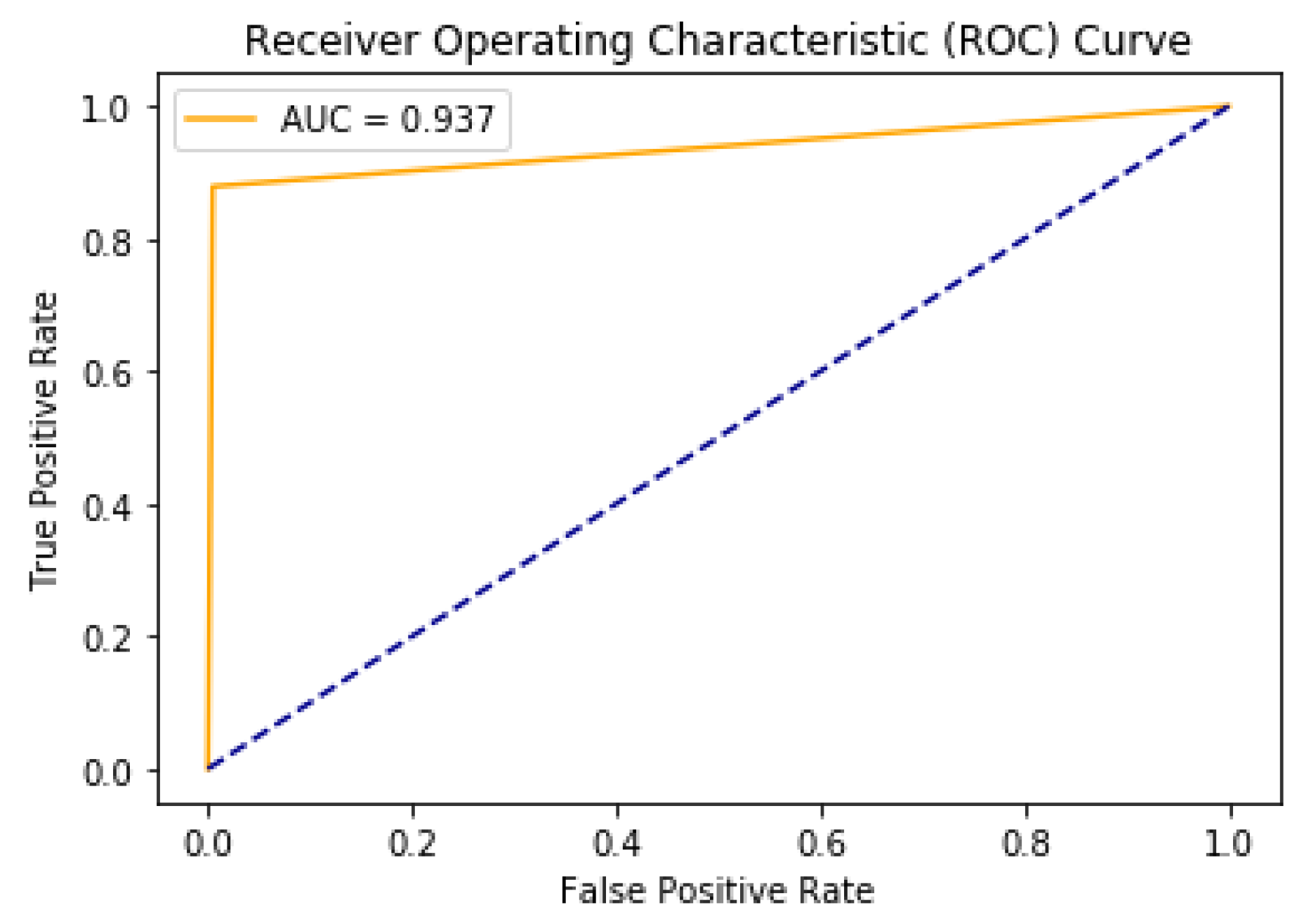

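- AUC (Area Under the ROC Curve): an aggregate measure of performance across all possible classification thresholds. Equivalently, it is the probability that a randomly chosen Spam message receives a higher score than a randomly chosen Not-Spam message.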
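The probabilistic reading of AUC gives a compact way to compute it, sketched below for illustration. Library routines such as scikit-learn's `roc_auc_score` compute the same quantity more efficiently.

The second example uses counts inferred from the CNN-LSTM row of the results table (TP = 145, FN = 20, FP = 7, TN = 1489). These counts are back-calculated from the reported ratios, not given in the text.

```python
def roc_auc(labels: list[int], scores: list[float]) -> float:
    """ROC AUC computed as the probability that a randomly chosen Spam message
    (label 1) scores higher than a randomly chosen Not-Spam message (label 0);
    ties count one half. This pairwise form is O(P * N) and meant for illustration."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


print(roc_auc([1, 1, 0, 0], [0.9, 0.4, 0.6, 0.1]))  # 0.75

# With hard 0/1 predictions as scores, the AUC reduces to (TPR + TNR) / 2.
labels = [1] * 165 + [0] * 1496
preds = [1] * 145 + [0] * 20 + [1] * 7 + [0] * 1489
print(round(roc_auc(labels, preds), 6))  # 0.937054
```

The ROC AUC column of the results table agrees with (TPR + TNR) / 2 for the rows checked:

- 0.500000 for K-Nearest Neighbors, which predicts no Spam;
- 0.937054 for CNN-LSTM.

This suggests, though the text does not say so, that the reported AUC values were computed from predicted labels rather than from predicted probabilities.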

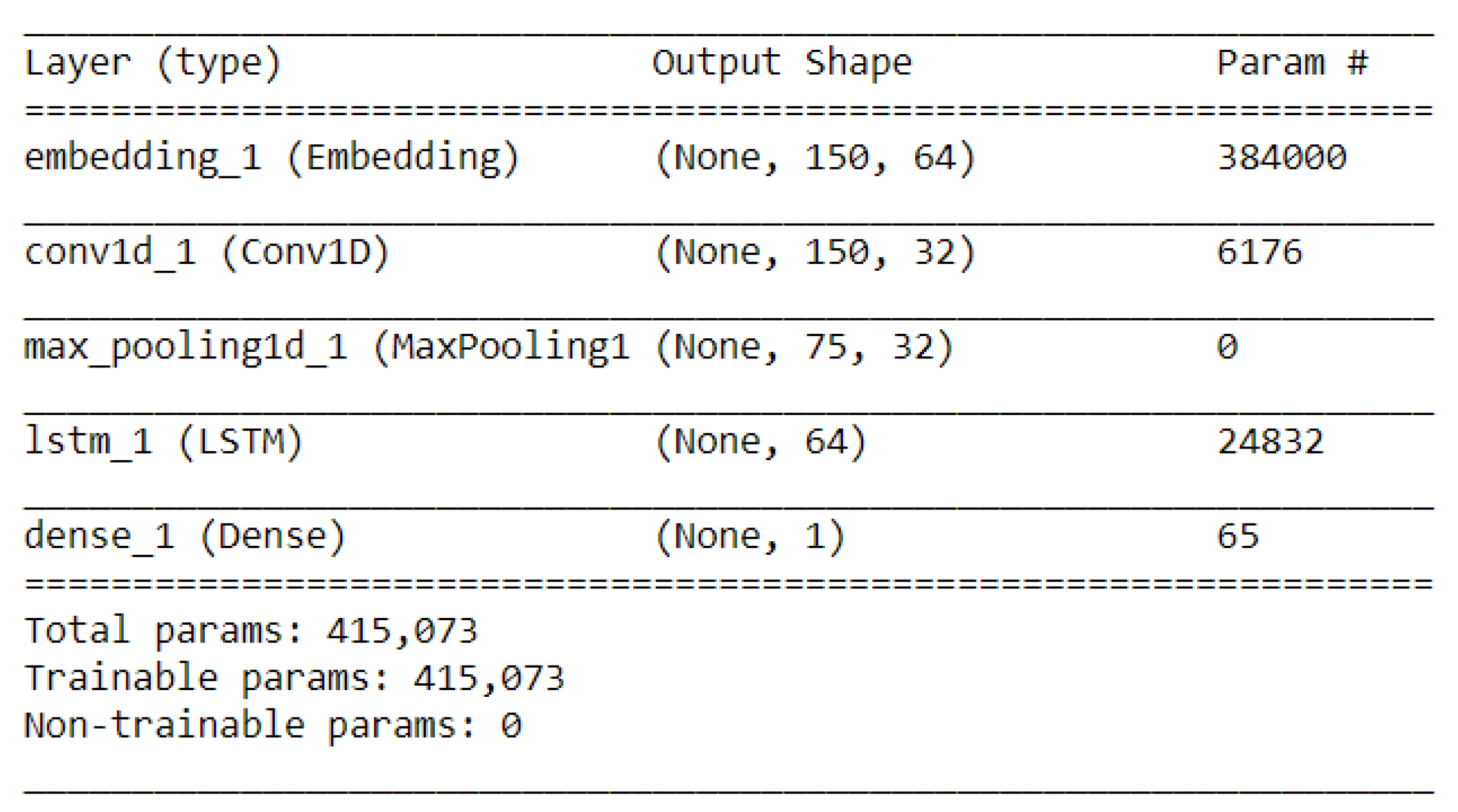

4.3. Parameters of the Deep Learning Layers

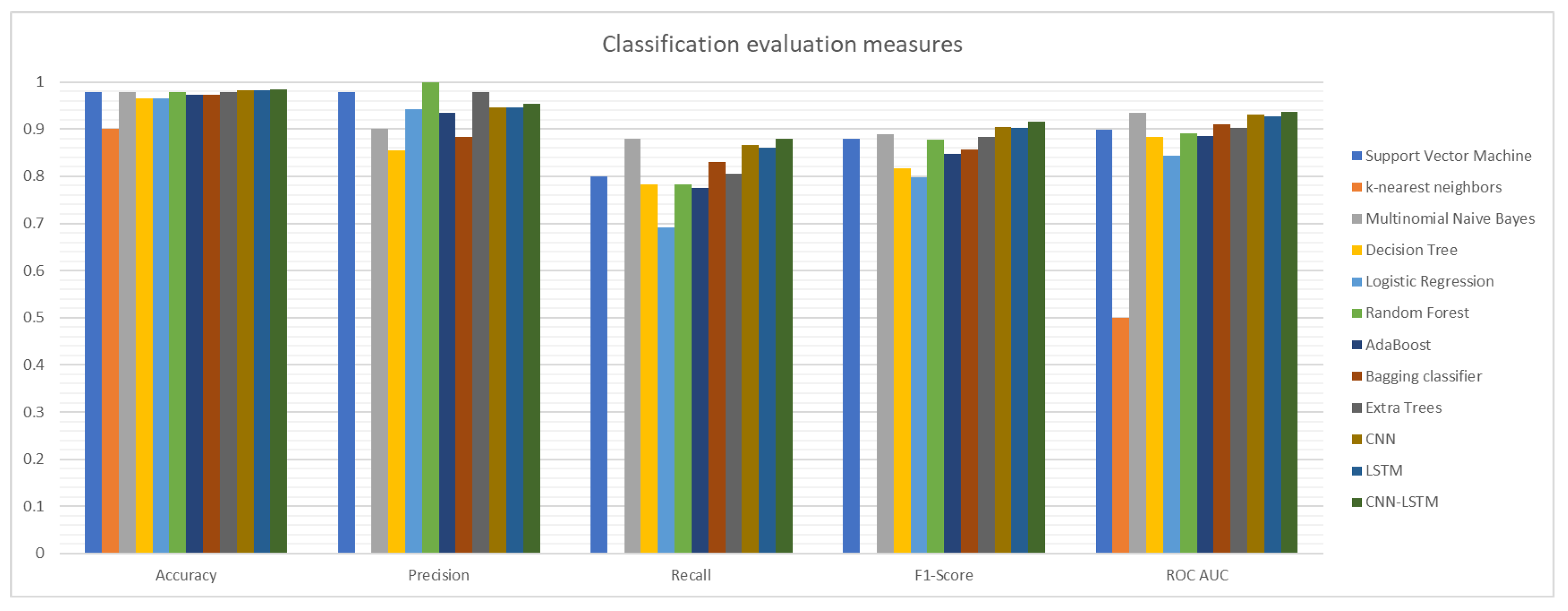
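The actual layer settings are those given in the figure above. The values in the sketch below are hypothetical placeholders, chosen only to show how output shapes and trainable-parameter counts follow from them. The stack is assumed to be Embedding → Conv1D → MaxPooling1D → LSTM → Dense(1), and the counting formulas follow Keras's conventions for these layers.

```python
def cnn_lstm_summary(seq_len, vocab_size, embed_dim, filters, kernel_size, pool_size, lstm_units):
    """Return (layer, output shape, trainable parameters) for a hypothetical
    Embedding -> Conv1D -> MaxPooling1D -> LSTM -> Dense(1) stack, assuming
    'valid' convolution with stride 1, non-overlapping pooling and biases everywhere."""
    conv_len = seq_len - kernel_size + 1
    pooled_len = conv_len // pool_size
    return [
        # one embed_dim-dimensional vector per vocabulary entry
        ("Embedding", (seq_len, embed_dim), vocab_size * embed_dim),
        # each filter has kernel_size x embed_dim weights plus one bias
        ("Conv1D", (conv_len, filters), kernel_size * embed_dim * filters + filters),
        ("MaxPooling1D", (pooled_len, filters), 0),
        # four gates, each with input weights, recurrent weights and a bias
        ("LSTM", (lstm_units,), 4 * (lstm_units * (filters + lstm_units) + lstm_units)),
        ("Dense", (1,), lstm_units + 1),
    ]


layers = cnn_lstm_summary(seq_len=100, vocab_size=10000, embed_dim=32,
                          filters=64, kernel_size=3, pool_size=2, lstm_units=50)
for name, shape, n_params in layers:
    print(f"{name:<13}{str(shape):<12}{n_params}")
print("Total", sum(p for _, _, p in layers))  # Total 349259
```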

4.4. Experimental Results

5. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

- [1] Morreale, M. Daily SMS Mobile Usage Statistics. 2017. Available online: https://www.smseagle.eu/2017/03/06/daily-sms-mobile-statistics/ (accessed on 15 June 2020).

- [2] Roy, P.K.; Singh, J.P.; Banerjee, S. Deep learning to filter SMS Spam. Future Gener. Comput. Syst. 2020, 102, 524–533.

- [3] Tatango. Text Message Spam Infographic. 2011. Available online: https://www.tatango.com/blog/text-message-spam-infographic/ (accessed on 15 June 2020).

- [4] Goel, D.; Jain, A. Smishing-Classifier: A Novel Framework for Detection of Smishing Attack in Mobile Environment. In Proceedings of the Smart and Innovative Trends in Next Generation Computing Technologies (NGCT 2017), Dehradun, India, 30–31 October 2017; pp. 502–512.

- [5] Goel, D.; Jain, A.K. Mobile phishing attacks and defence mechanisms: State of art and open research challenges. Comput. Secur. 2018, 73, 519–544.

- [6] Jain, A.K.; Yadav, S.K.; Choudhary, N. A Novel Approach to Detect Spam and Smishing SMS using Machine Learning Techniques. IJESMA 2020, 12, 21–38.

- [7] Mishra, S.; Soni, D. Smishing Detector: A security model to detect smishing through SMS content analysis and URL behavior analysis. Future Gener. Comput. Syst. 2020, 108, 803–815.

- [8] Graves, A. Offline Arabic Handwriting Recognition with Multidimensional Recurrent Neural Networks. In Guide to OCR for Arabic Scripts; Springer: London, UK, 2012; pp. 297–313.

- [9] Chherawala, Y.; Roy, P.P.; Cheriet, M. Feature Set Evaluation for Offline Handwriting Recognition Systems: Application to the Recurrent Neural Network Model. IEEE Trans. Cybern. 2016, 46, 2825–2836.

- [10] Elleuch, M.; Kherallah, M. An Improved Arabic Handwritten Recognition System Using Deep Support Vector Machines. Int. J. Multimed. Data Eng. Manag. 2016, 7, 1–20.

- [11] Yousfi, S.; Berrani, S.A.; Garcia, C. Contribution of recurrent connectionist language models in improving LSTM-based Arabic text recognition in videos. Pattern Recognit. 2017, 64, 245–254.

- [12] El-Desoky Mousa, A.; Kuo, H.J.; Mangu, L.; Soltau, H. Morpheme-based feature-rich language models using Deep Neural Networks for LVCSR of Egyptian Arabic. In Proceedings of the 2013 IEEE International Conference on Acoustics, Speech and Signal Processing, Vancouver, BC, Canada, 26–31 May 2013; pp. 8435–8439.

- [13] Deselaers, T.; Hasan, S.; Bender, O.; Ney, H. A Deep Learning Approach to Machine Transliteration. In Proceedings of the Fourth Workshop on Statistical Machine Translation, Athens, Greece, 30–31 March 2009; StatMT ’09. Association for Computational Linguistics: Stroudsburg, PA, USA, 2009; pp. 233–241.

- [14] Guzmán, F.; Bouamor, H.; Baly, R.; Habash, N. Machine Translation Evaluation for Arabic using Morphologically-enriched Embeddings. In Proceedings of the COLING 2016, the 26th International Conference on Computational Linguistics: Technical Papers, Osaka, Japan, 11–16 December 2016; The COLING 2016 Organizing Committee: Osaka, Japan, 2016; pp. 1398–1408.

- [15] Jindal, V. A Personalized Markov Clustering and Deep Learning Approach for Arabic Text Categorization. In Proceedings of the ACL 2016 Student Research Workshop, Berlin, Germany, 7–12 August 2016.

- [16] Dahou, A.; Xiong, S.; Zhou, J.; Haddoud, M.H.; Duan, P. Word Embeddings and Convolutional Neural Network for Arabic Sentiment Classification. In Proceedings of the COLING 2016, the 26th International Conference on Computational Linguistics: Technical Papers, Osaka, Japan, 11–16 December 2016; The COLING 2016 Organizing Committee: Osaka, Japan, 2016; pp. 2418–2427.

- [17] Al-Sallab, A.; Baly, R.; Hajj, H.; Shaban, K.B.; El-Hajj, W.; Badaro, G. AROMA: A Recursive Deep Learning Model for Opinion Mining in Arabic as a Low Resource Language. ACM Trans. Asian Low-Resour. Lang. Inf. Process. 2017, 16, 1–20.

- [18] Al-Smadi, M.; Qawasmeh, O.; Al-Ayyoub, M.; Jararweh, Y.; Gupta, B. Deep Recurrent neural network vs. support vector machine for aspect-based sentiment analysis of Arabic hotels’ reviews. J. Comput. Sci. 2018, 27, 386–393.

- [19] Zhou, C.; Sun, C.; Liu, Z.; Lau, F.C.M. A C-LSTM Neural Network for Text Classification. arXiv 2015, arXiv:cs.CL/1511.08630.

- [20] Joo, J.W.; Moon, S.Y.; Singh, S.; Park, J.H. S-Detector: An enhanced security model for detecting Smishing attack for mobile computing. Telecommun. Syst. 2017, 66, 29–38.

- [21] Delvia Arifin, D.; Shaufiah; Bijaksana, M.A. Enhancing spam detection on mobile phone Short Message Service (SMS) performance using FP-growth and Naive Bayes Classifier. In Proceedings of the 2016 IEEE Asia Pacific Conference on Wireless and Mobile (APWiMob), Bandung, Indonesia, 13–15 September 2016; pp. 80–84.

- [22] Sonowal, G.; Kuppusamy, K.S. SmiDCA: An Anti-Smishing Model with Machine Learning Approach. Comput. J. 2018, 61, 1143–1157.

- [23] Jain, A.K.; Gupta, B.B. Feature Based Approach for Detection of Smishing Messages in the Mobile Environment. J. Inf. Technol. Res. 2019, 12, 17–35.

- [24] Jain, A.K.; Gupta, B. Rule-Based Framework for Detection of Smishing Messages in Mobile Environment. Procedia Comput. Sci. 2018, 125, 617–623.

- [25] Almeida, T.A.; Silva, T.P.; Santos, I.; Hidalgo, J.M.G. Text normalization and semantic indexing to enhance Instant Messaging and SMS spam filtering. Knowl. Based Syst. 2016, 108, 25–32.

- [26] Yadav, K.; Kumaraguru, P.; Goyal, A.; Gupta, A.; Naik, V. SMSAssassin: Crowdsourcing Driven Mobile-Based System for SMS Spam Filtering. In Proceedings of the 12th Workshop on Mobile Computing Systems and Applications, Phoenix, AZ, USA, 1–2 March 2011; HotMobile ’11. Association for Computing Machinery: New York, NY, USA, 2011; pp. 1–6.

- [27] Agarwal, S.; Kaur, S.; Garhwal, S. SMS spam detection for Indian messages. In Proceedings of the 2015 1st International Conference on Next Generation Computing Technologies (NGCT), Dehradun, India, 4–5 September 2015; pp. 634–638.

- [28] Almeida, T.A.; Hidalgo, J.M.G.; Yamakami, A. Contributions to the Study of SMS Spam Filtering: New Collection and Results. In Proceedings of the 11th ACM Symposium on Document Engineering, Mountain View, CA, USA, 19–22 September 2011; DocEng ’11. Association for Computing Machinery: New York, NY, USA, 2011; pp. 259–262.

- [29] Chen, T.; Kan, M.Y. Creating a live, public short message service corpus: The NUS SMS corpus. Lang. Resour. Eval. 2013, 47, 299–355.

- [30] Zhang, W.; Yoshida, T.; Tang, X. A comparative study of TF*IDF, LSI and multi-words for text classification. Expert Syst. Appl. 2011, 38, 2758–2765.

- [31] Mikolov, T.; Chen, K.; Corrado, G.; Dean, J. Efficient Estimation of Word Representations in Vector Space. In Proceedings of the 1st International Conference on Learning Representations, ICLR 2013, Scottsdale, AZ, USA, 2–4 May 2013.

- [32] Pennington, J.; Socher, R.; Manning, C. GloVe: Global Vectors for Word Representation. In Proceedings of the 2014 Conference on Empirical Methods in Natural Language Processing (EMNLP), Doha, Qatar, 25–29 October 2014; Association for Computational Linguistics: Doha, Qatar, 2014; pp. 1532–1543.

| Paper Reference | Approach Objective | Used Methods | Dataset Type |
|---|---|---|---|
| [2] | Classify SMS and identify spam messages | CNN and LSTM | |
| [7] | Identify spam messages and inspect included URLs | Naive Bayes | |
| [20] | Classify SMS and identify spam messages | Naive Bayes | |
| [24] | Classify SMS and identify spam messages | Rule-based classification | |
| [22] | Classify SMS and identify spam messages | Random Forest, Decision Tree, Support Vector Machine and AdaBoost | |
| [23] | Identify spam messages and inspect included URLs | Feature-based technique, Random Forest, Naive Bayes, Support Vector Machine and Neural Network | |
| [25] | Normalize and expand text messages to improve classification performance | Lexicography, semantic dictionaries, and semantic analysis and disambiguation techniques | |
| [21] | Classify SMS and identify spam messages | FP-growth and Naive Bayes | |
| [26] | Classify SMS and identify spam messages | Bayesian learning and Support Vector Machine | |
| [27] | Classify SMS and identify spam messages | Multinomial Naive Bayes, Support Vector Machine, Random Forest and AdaBoost | |

| Class | Number of Messages | Training Size | Testing Size |
|---|---|---|---|
| Spam | 785 | | |
| Not-Spam | 7519 | | |
| Total | 8304 | 80% | 20% |
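The 80% / 20% division can be reproduced with a simple shuffled hold-out split. The sketch below is hypothetical in two respects:

- The random seed is arbitrary.
- It does not stratify by class, since the text does not say whether the split preserved the Spam/Not-Spam ratio.

Rounding the test size up mirrors scikit-learn's `train_test_split`. For the 8304 messages in the table this gives 1661 test and 6643 training messages.

```python
import math
import random


def train_test_split_indices(n: int, test_fraction: float = 0.2, seed: int = 42):
    """Shuffle message indices and hold out ceil(test_fraction * n) of them for testing."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)  # hypothetical seed, for reproducibility only
    n_test = math.ceil(test_fraction * n)
    return idx[n_test:], idx[:n_test]  # (train indices, test indices)


train, test = train_test_split_indices(8304)
print(len(train), len(test))  # 6643 1661
```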

| Model | Accuracy | Precision | Recall | F1-Score | ROC AUC |
|---|---|---|---|---|---|
| Support Vector Machine | 0.978326 | 0.977778 | 0.800000 | 0.880000 | 0.898997 |
| K-Nearest Neighbors | 0.900662 | 0.000000 | 0.000000 | 0.000000 | 0.500000 |
| Multinomial Naive Bayes | 0.978326 | 0.900621 | 0.878788 | 0.889571 | 0.934046 |
| Decision Tree | 0.965081 | 0.854305 | 0.781818 | 0.816456 | 0.883556 |
| Logistic Regression | 0.965081 | 0.942149 | 0.690909 | 0.797203 | 0.843115 |
| Random Forest | 0.978326 | 1.000000 | 0.781818 | 0.877551 | 0.890909 |
| AdaBoost | 0.972306 | 0.934307 | 0.775758 | 0.847682 | 0.884871 |
| Bagging classifier | 0.972306 | 0.883871 | 0.830303 | 0.856250 | 0.909135 |
| Extra Trees | 0.978928 | 0.977941 | 0.806061 | 0.883721 | 0.902028 |
| CNN | 0.981939 | 0.947020 | 0.866667 | 0.905063 | 0.930660 |
| LSTM | 0.981337 | 0.946667 | 0.860606 | 0.901587 | 0.927629 |
| CNN-LSTM | 0.983745 | 0.953947 | 0.878788 | 0.914826 | 0.937054 |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Ghourabi, A.; Mahmood, M.A.; Alzubi, Q.M. A Hybrid CNN-LSTM Model for SMS Spam Detection in Arabic and English Messages. Future Internet 2020, 12, 156. https://doi.org/10.3390/fi12090156
