Word Sense Disambiguation Using Cosine Similarity Collaborates with Word2vec and WordNet

Abstract

:1. Introduction

2. Related Works

2.1. WordNet

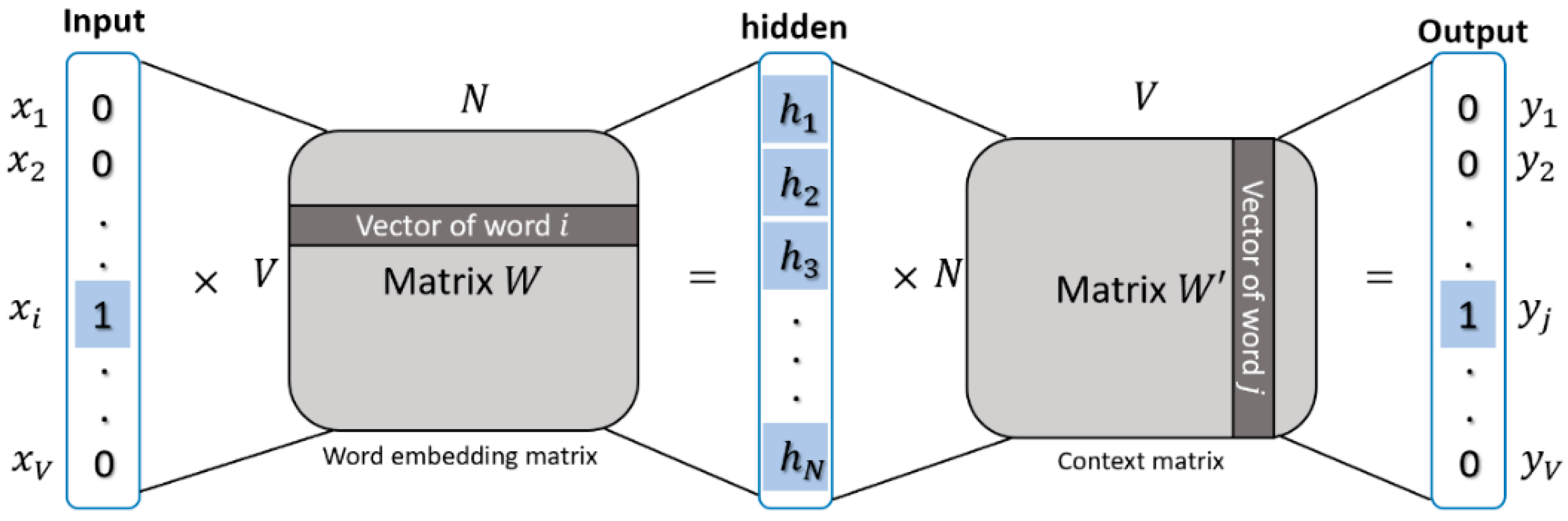

2.2. Word2vec

3. Proposed Method

3.1. Constructing the Sentence Vector

3.2. Cosine Similarity

3.3. The Probability of Sense Distribution

3.4. Putting It Together

| Algorithm 1 Pseudocode of proposed method, wsdw2v |

| Input: a sentence S, a target word w, a context size c, a similarity threshold α, a number of best synsets n_best Output: n_best disambiguate senses (synsets) of the target word w 1: W←Lemmatized(RemoveStopwords(POStag(Tokenized(S)))) 2: wi←GetTargetWordIndex(W, w) 3: C←GetContext(W, wi, c) 4: VC←Word2Vectorizer(C) 5: si←GetSynsetsFromWordNet(wi, GetPOStag(wi)) 6: Result←EmptyDict() 7: For each synset si,j in si : 8: Score←0 9: Sig←GetDefinition(si,j)+GetDefinitionFromRelations(si,j, “hypernym, hyponym, meronym, holonym, entailment”) 10: Sig←Lemmatized(RemoveStopwords(Tokenized(Sig))) |

| 11: VSig←Word2Vectorizer(Sig) 12: Score←Cosim(VC, VSig) 13: If Score < α : 14: Score←P(si,j|wi) 15: Result←Result + {si,j : Score} 16: Return n_best synsets from Result |

4. Experiments and Results

4.1. Training Word-Embedding Vectors

4.2. Determining Similarity Threshold

4.3. Experiment on Sense Relations and Sense Distribution

4.4. Experiment on Context Size

4.5. Experiment on Constructing a Sentence Vector

4.6. Comparing with Other Systems

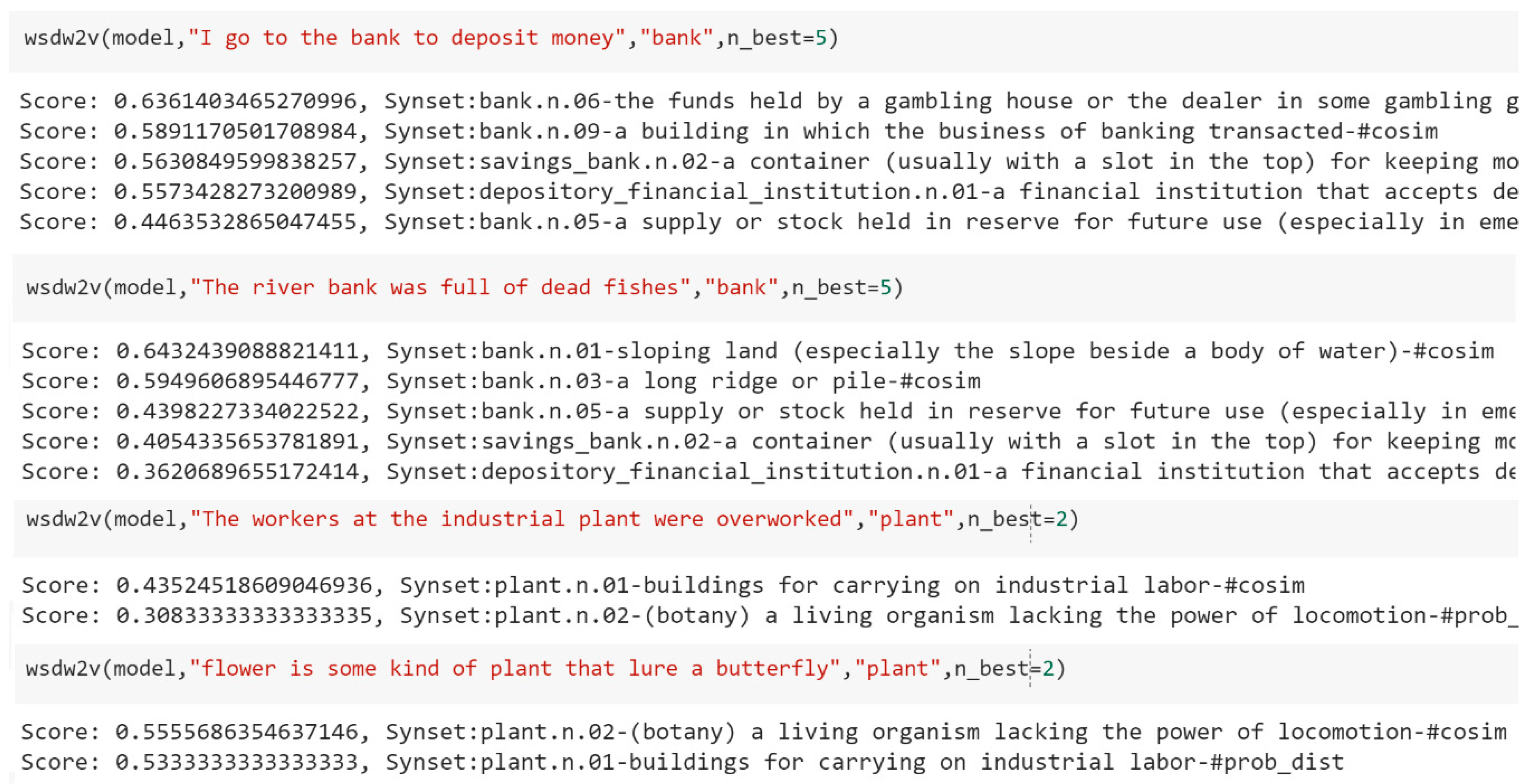

5. Example of Applications

6. Conclusions and Future Works

Author Contributions

Funding

Conflicts of Interest

References

- Zhong, Z.; Ng, H.T. It Makes Sense: A Wide-Coverage Word Sense Disambiguation System for Free Text. In Proceedings of the ACL 2010 System Demonstrations, Uppsala, Sweden, 13 July 2010; pp. 78–83. [Google Scholar]

- Lesk, M. Automatic Sense Disambiguation Using Machine Readable Dictionaries: How to Tell a Pine Cone from an Ice Cream Cone. In Proceedings of the 5th Annual International Conference on Systems Documentation, Toronto, ON, Canada, January 1986; pp. 24–26. [Google Scholar]

- Cowie, J.; Guthrie, J.; Guthrie, L. Lexical Disambiguation Using Simulated Annealing. In Proceedings of the 14th conference on Computational linguistics-Volume 1, Nantes, France, 23–28 August 1992; Association for Computational Linguistics: Stroudsburg, PA, USA, 1992; pp. 359–365. [Google Scholar]

- Kilgarriff, A.; Rosenzweig, J. Framework and Results for English SENSEVAL. Comput. Humanit. 2000, 34, 15–48. [Google Scholar] [CrossRef]

- Banerjee, S.; Pedersen, T. An Adapted Lesk Algorithm for Word Sense Disambiguation Using WordNet. In Proceedings of the International Conference on Intelligent Text Processing and Computational Linguistics, Mexico City, Mexico, 17–23 February 2002; pp. 136–145. [Google Scholar]

- Basile, P.; Caputo, A.; Semeraro, G. An Enhanced Lesk Word Sense Disambiguation Algorithm through a Distributional Semantic Model. In Proceedings of the COLING 2014, 25th International Conference on Computational Linguistics: Technical Papers, Dublin, Ireland, 23–29 August 2014; pp. 1591–1600. [Google Scholar]

- Yarowsky, D. Unsupervised Word Sense Disambiguation Rivaling Supervised Methods. In Proceedings of the 33rd Annual Meeting on Association for Computational Linguistics, Cambridge, MA, USA, 26–30 June 1995; Association for Computational Linguistics, 1995; pp. 189–196. [Google Scholar]

- Miller, G. WordNet: An Electronic Lexical Database; MIT press: Cambridge, MA, USA, 1998. [Google Scholar]

- Landauer, T.K.; Foltz, P.W.; Laham, D. An Introduction to Latent Semantic Analysis. Discourse Process. 1998, 25, 259–284. [Google Scholar] [CrossRef]

- Mikolov, T.; Sutskever, I.; Chen, K.; Corrado, G.S.; Dean, J. Distributed Representations of Words and Phrases and Their Compositionality. In Proceedings of the Advances in Neural Information Processing Systems (NIPS 2013), Lake Tahoe, NV, USA, 5–8 December 2013; pp. 3111–3119. [Google Scholar]

- Baroni, M.; Dinu, G.; Kruszewski, G. Don’t Count, Predict! A Systematic Comparison of Context-Counting vs. Context-Predicting Semantic Vectors. In Proceedings of the 52nd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), Baltimore, MD, USA, 23–25 June 2014; Volume 1, pp. 238–247. [Google Scholar]

- Altszyler, E.; Sigman, M.; Ribeiro, S.; Slezak, D.F. Comparative Study of LSA vs Word2vec Embeddings in Small Corpora: A Case Study in Dreams Database. arXiv 2016, arXiv:1610.01520. [Google Scholar]

- Mikolov, T.; Chen, K.; Corrado, G.; Dean, J. Efficient Estimation of Word Representations in Vector Space. arXiv 2013, arXiv:1301.3781. [Google Scholar]

- Mihalcea, R.; (University of Michigan, Michigan, USA). Semcor Semantically Tagged Corpus. Unpublished manuscript. 1998. [Google Scholar]

- Mihalcea, R.; Chklovski, T.; Kilgarriff, A. The Senseval-3 English Lexical Sample Task. In Proceedings of the SENSEVAL-3, the Third International Workshop on the Evaluation of Systems for the Semantic Analysis of Text, Barcelona, Spain, 25–26 July 2004. [Google Scholar]

- Vasilescu, F.; Langlais, P.; Lapalme, G. Evaluating Variants of the Lesk Approach for Disambiguating Words. In Proceedings of the Fourth International Conference on Language Resources and Evaluation (Lrec’04), Lisbon, Portugal, 26–28 May 2004. [Google Scholar]

- Edmonds, P.; Cotton, S. SENSEVAL-2: Overview. In Proceedings of the Second International Workshop on Evaluating Word Sense Disambiguation Systems, SENSEVAL ’01, Toulouse, France, 5–6 July 2001; Association for Computational Linguistics: Toulouse, France, 2001; pp. 1–5. [Google Scholar]

- Mihalcea, R.; Tarau, P.; Figa, E. PageRank on Semantic Networks, with Application to Word Sense Disambiguation. In Proceedings of the 20th International Conference on Computational Linguistics, Geneva, Switzerland, 23–27 August 2004; Association for Computational Linguistics, 2004; p. 1126. [Google Scholar]

- Navigli, R.; Ponzetto, S.P. BabelNet: The Automatic Construction, Evaluation and Application of a Wide-Coverage Multilingual Semantic Network. Artif. Intell. 2012, 193, 217–250. [Google Scholar] [CrossRef]

- Navigli, R.; Jurgens, D.; Vannella, D. Semeval-2013 Task 12: Multilingual Word Sense Disambiguation. In Proceedings of the Second Joint Conference on Lexical and Computational Semantics (* SEM), Volume 2 and the Seventh International Workshop on Semantic Evaluation (SemEval 2013), Atlanta, GA, USA, 14–15 June 2013; Volume 2, pp. 222–231. [Google Scholar]

- Amari, S. Backpropagation and Stochastic Gradient Descent Method. Neurocomputing 1993, 5, 185–196. [Google Scholar] [CrossRef]

- Gutmann, M.; Hyvärinen, A. Noise-Contrastive Estimation of Unnormalized Statistical Models, with Applications to Natural Image Statistics. J. Mach. Learn. Res. 2012, 13, 307–361. [Google Scholar]

- Mnih, A.; Whye Teh, Y. A Fast and Simple Algorithm for Training Neural Probabilistic Language Models. In Proceedings of the 29th International Conference on Machine Learning, ICML 2012, Edinburgh, UK, 26 June–1 July 2012. [Google Scholar]

- Faruqui, M.; Dodge, J.; Kumar Jauhar, S.; Dyer, C.; Hovy, E.; Smith, N.A. Retrofitting Word Vectors to Semantic Lexicons. In Proceedings of the Human Language Technologies: The 2015 Annual Conference of the North American Chapter of the ACL, Denver, CO, USA, 31 May–5 June 2014; pp. 1606–1615. [Google Scholar]

- Yu, L.; Moritz Hermann, K.; Blunsom, P.; Pulman, S. Deep Learning for Answer Sentence Selection. In Proceedings of the Deep Learning and Representation Learning Workshop: NIPS-2014, Montréal, QC, Canada, 8–13 December 2014. [Google Scholar]

- Kenter, T.; de Rijke, M. Short Text Similarity with Word Embeddings. In Proceedings of the 24th ACM International on Conference on Information and Knowledge Management, Melbourne, Australia, 18–23 October 2015; pp. 1411–1420. [Google Scholar]

- Singhal, A.; Google, I. Modern Information Retrieval: A Brief Overview. IEEE Data Eng. Bull. 2001, 24, 35–43. [Google Scholar]

- Manning, C.D.; Raghavan, P.; Schütze, H. Introduction to Information Retrieval; Cambridge University Press: New York, NY, USA, 2008. [Google Scholar]

- SENSEVAL 3 Home Page. Available online: http://web.eecs.umich.edu/~mihalcea/senseval/senseval3/data.html (accessed on 10 November 2018).

- Resnik, P.; Yarowsky, D. A Perspective on Word Sense Disambiguation Methods and Their Evaluation. In Tagging Text with Lexical Semantics: Why, What, and How? Washington, WA, USA, 4–5 April 1997, pp. 79–86.

- Proposal for Senseval Scoring Scheme. Available online: http://web.eecs.umich.edu/~mihalcea/senseval/senseval3/scoring/scorescheme.txt (accessed on 15 November 2018).

- Wagner, W. Steven Bird, Ewan Klein and Edward Loper: Natural Language Processing with Python, Analyzing Text with the Natural Language Toolkit. Lang. Resour. Eval. 2010, 44, 421–424. [Google Scholar] [CrossRef]

- Pedregosa, F.; Varoquaux, G.; Gramfort, A.; Michel, V.; Thirion, B.; Grisel, O.; Blondel, M.; Prettenhofer, P.; Weiss, R.; Dubourg, V.; et al. Scikit-Learn: Machine Learning in Python. J. Mach. Learn. Res. 2011, 12, 2825–2830. [Google Scholar]

- Řehůřek, R.; Sojka, P. Software Framework for Topic Modelling with Large Corpora. In Proceedings of the LREC 2010 Workshop on New Challenges for NLP Frameworks, Valletta, Malta, 22 May 2010; ELRA: Valletta, Malta, 2010; pp. 45–50. [Google Scholar]

- Google Code Archive: word2vec. Available online: https://code.google.com/archive/p/word2vec/ (accessed on 9 October 2018).

- Word Analogies Test for word2vec. Available online: https://raw.githubusercontent.com/RaRe-Technologies/gensim/develop/gensim/test/test_data/questions-words.txt (accessed on 9 October 2018).

- Han, L. UMBC Webbase Corpus. Available online: https://ebiquity.umbc.edu/resource/html/id/351 (accessed on 21 October 2018).

- Han, L.; Kashyap, A.L.; Finin, T.; Mayfield, J.; Weese, J. UMBC_EBIQUITY-CORE: Semantic Textual Similarity Systems. In Proceedings of the Second Joint Conference on Lexical and Computational Semantics, Atlanta, Georgia, USA, 13–14 June 2013; Association for Computational Linguistics, 2013. [Google Scholar]

- Hilbe, J.M. Logistic Regression Models; Chapman and Hall/CRC: Boca Raton, FL, USA, 2009. [Google Scholar]

- Magnini, B.; Strapparava, C. User Modelling for News Web Sites with Word Sense Based Techniques. User Model. User-Adapt. Interact. 2004, 14, 239–257. [Google Scholar] [CrossRef]

- Sidorov, G.; Gelbukh, E.; Pinto, D. Soft Similarity and Soft Cosine Measure: Similarity of Features in Vector Space Model. Computación y Sistemas 2014, 18, 491–504. [Google Scholar] [CrossRef]

- Le, Q.; Mikolov, T. Distributed Representations of Sentences and Documents. In Proceedings of the 31st International Conference on Machine Learning, Beijing, China, 22–24 June 2014. [Google Scholar]

- Pennington, J.; Socher, R.; Manning, C.D. Glove: Global Vectors for Word Representation. In Proceedings of the 2014 Conference on Empirical Methods in Natural Language Processing (EMNLP), Doha, Qatar, 25–29 October 2014; pp. 1532–1543. [Google Scholar]

- Bojanowski, P.; Grave, E.; Joulin, A.; Mikolov, T. Enriching Word Vectors with Subword Information. arXiv 2016, arXiv:1607.04606. [Google Scholar] [CrossRef]

- Yu, H.; Hatzivassiloglou, V. Towards Answering Opinion Questions: Separating Facts from Opinions and Identifying the Polarity of Opinion Sentences. In Proceedings of the 2003 Conference on Empirical Methods in Natural Language Processing, Sapporo, Japan, 11–12 July 2003; Association for Computational Linguistics, 2003; pp. 129–136. [Google Scholar]

- Kim, S.-M.; Hovy, E. Determining the Sentiment of Opinions. In Proceedings of the 20th international conference on Computational Linguistics, Geneva, Switzerland, 23–27 August 2004; Association for Computational Linguistics, 2004; p. 1367. [Google Scholar]

- Baccianella, S.; Esuli, A.; Sebastiani, F. Sentiwordnet 3.0: An Enhanced Lexical Resource for Sentiment Analysis and Opinion Mining. In Proceedings of the Seventh conference on International Language Resources and Evaluation (LREC’10), Valletta, Malta, 17–20 May 2010; Volume 10, pp. 2200–2204. [Google Scholar]

- Musto, C.; Semeraro, G.; Polignano, M. A Comparison of Lexicon-Based Approaches for Sentiment Analysis of Microblog Posts. In Proceedings of the 8th International Workshop on Information Filtering and Retrieval, Pisa, Italy, 10 December 2014; pp. 59–69. [Google Scholar]

- Batrinca, B.; Treleaven, P.C. Social Media Analytics: A Survey of Techniques, Tools and Platforms. AI & Soc. 2015, 30, 89–116. [Google Scholar]

- Chamlertwat, W.; Bhattarakosol, P.; Rungkasiri, T.; Haruechaiyasak, C. Discovering Consumer Insight from Twitter via Sentiment Analysis. J. UCS 2012, 18, 973–992. [Google Scholar]

- Hamzehei, A.; Ebrahimi, M.; Shafiee, E.; Wong, R.K.; Chen, F. Scalable Sentiment Analysis for Microblogs Based on Semantic Scoring. In Proceedings of the 2015 IEEE International Conference on Services Computing, New York, NY, USA, 27 June–2 July 2015; pp. 271–278. [Google Scholar]

- Preoţiuc-Pietro, D.; Liu, Y.; Hopkins, D.; Ungar, L. Beyond Binary Labels: Political Ideology Prediction of Twitter Users. In Proceedings of the 55th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), Vancouver, BC, Canada, 30 July–4 August 2017; Association for Computational Linguistics: Vancouver, BC, Canada, 2017; pp. 729–740. [Google Scholar] [CrossRef]

- Liu, Y.; Zhang, L.; Nie, L.; Chen, Y.; Rosenblum, D.S. Fortune Teller: Predicting Your Career Path. In Proceedings of the Thirtieth AAAI Conference on Artificial Intelligence (AAAI’16), Phoenix, AZ, USA, 12–17 February 2016; pp. 201–207. [Google Scholar]

| Synset | Synonyms | Definition & Example |

|---|---|---|

| cake.n.01 | cake, bar | a block of solid substance (such as soap or wax), “a bar of chocolate.” |

| patty.n.01 | patty, cake | small flat mass of chopped food. |

| cake.n.03 | cake | baked goods made from or based on a mixture of flour, sugar, eggs, and fat. |

| coat.v.03 | coat, cake | form a coat over, “Dirt had coated her face.” |

| Word | Definition |

|---|---|

| Pine | 1. Kinds of evergreen trees with needle-shaped leaves. 2. Waste away through sorrow or illness. |

| Cone | 1. Solid body which narrows to a point. 2. Something of this shape whether solid or hollow. 3. Fruit of certain evergreen trees. |

| Configurations | Sense Distribution | Precision | Recall |

|---|---|---|---|

| Sense definition | × | 0.421 | 0.403 |

| Sense definition + sense relations | × | 0.487 | 0.466 |

| Sense definition | √ | 0.480 | 0.459 |

| Sense definition + sense relations | √ | 0.509 | 0.488 |

| Configurations | Precision | Recall |

|---|---|---|

| Context size 2 | 0.446 | 0.427 |

| Context size 6 | 0.455 | 0.436 |

| Context size 10 | 0.487 | 0.466 |

| Context size 14 | 0.471 | 0.451 |

| Configurations | Precision | Recall |

|---|---|---|

| Summing word-embedding vectors | 0.417 | 0.399 |

| Average word-embedding vectors | 0.417 | 0.399 |

| Average word-embedding vectors + idf | 0.416 | 0.398 |

| System/Team | Precision | Recall |

|---|---|---|

| wsdiit/IIT Bombay (Ramakrishnan et al.) | 0.661 | 0.657 |

| Cymfony/(Niu) | 0.563 | 0.563 |

| the “most frequent sense” heuristic-baseline | 0.552 | 0.552 |

| Prob0/Cambridge U. (Preiss) | 0.547 | 0.547 |

| wsdw2v with sense distribution (our method) | 0.509 | 0.488 |

| wsdw2v without sense distribution (our method) | 0.487 | 0.466 |

| clr04-ls/CL Research (Litkowski) | 0.450 | 0.450 |

| CIAOSENSO/U. Genova (Buscaldi) | 0.501 | 0.417 |

| LSA Lesk | 0.408 | 0.408 |

| KUNLP/Korea U. (Seo) | 0.404 | 0.404 |

| Duluth-SenseRelate/U.Minnesota (Pedersen) | 0.403 | 0.385 |

| Simplified Lesk (Kilgarriff and Rosenzweig) | 0.311 | 0.298 |

| Adapted Lesk (Banerjee and Pederson) | 0.247 | 0.236 |

| DFA-LS-Unsup/UNED (Fernandez) | 0.234 | 0.234 |

| DLSI-UA-LS-NOSU/U.Alicante (Vazquez) | 0.197 | 0.117 |

| Original Lesk (M. Lesk) | 0.097 | 0.053 |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Orkphol, K.; Yang, W. Word Sense Disambiguation Using Cosine Similarity Collaborates with Word2vec and WordNet. Future Internet 2019, 11, 114. https://doi.org/10.3390/fi11050114

Orkphol K, Yang W. Word Sense Disambiguation Using Cosine Similarity Collaborates with Word2vec and WordNet. Future Internet. 2019; 11(5):114. https://doi.org/10.3390/fi11050114

Chicago/Turabian StyleOrkphol, Korawit, and Wu Yang. 2019. "Word Sense Disambiguation Using Cosine Similarity Collaborates with Word2vec and WordNet" Future Internet 11, no. 5: 114. https://doi.org/10.3390/fi11050114

APA StyleOrkphol, K., & Yang, W. (2019). Word Sense Disambiguation Using Cosine Similarity Collaborates with Word2vec and WordNet. Future Internet, 11(5), 114. https://doi.org/10.3390/fi11050114