Alternating Direction Method of Multipliers for Generalized Low-Rank Tensor Recovery

Abstract

:1. Introduction

2. Notations and Preliminaries

3. Related Works

4. Generalized Low-Rank Tensor Recovery

4.1. Model of GLRTR

4.2. Optimization Algorithm to GLRTR

| Algorithm 1. Solving GLRTR by ADMM |

| Input: Data tensor , sampling index set Ω, regularization parameters λ and τ. |

| Initialize: and ρ. |

| Output: and . |

While not converged do

|

| End while |

5. Convergence Analysis

6. Experimental Results

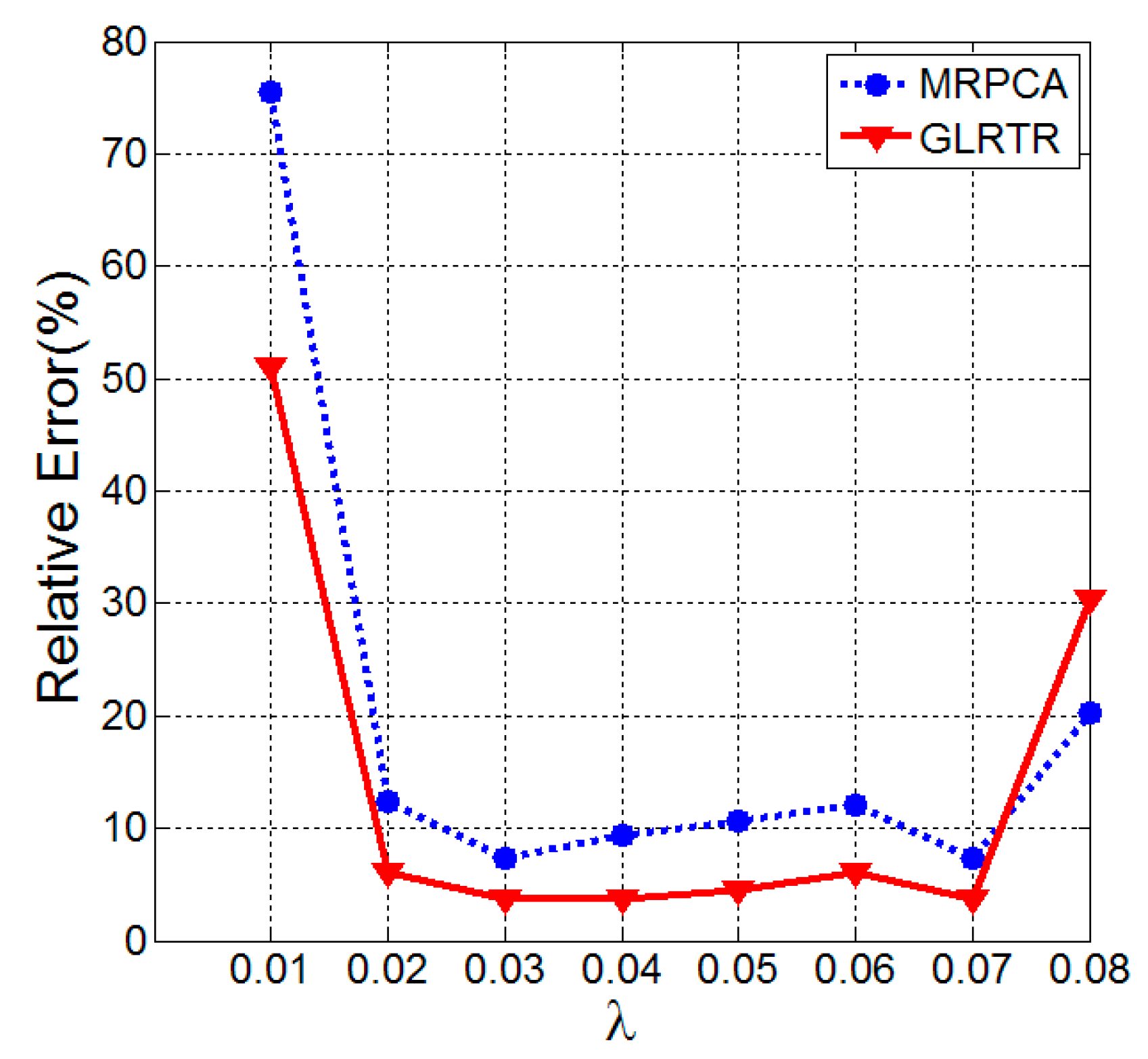

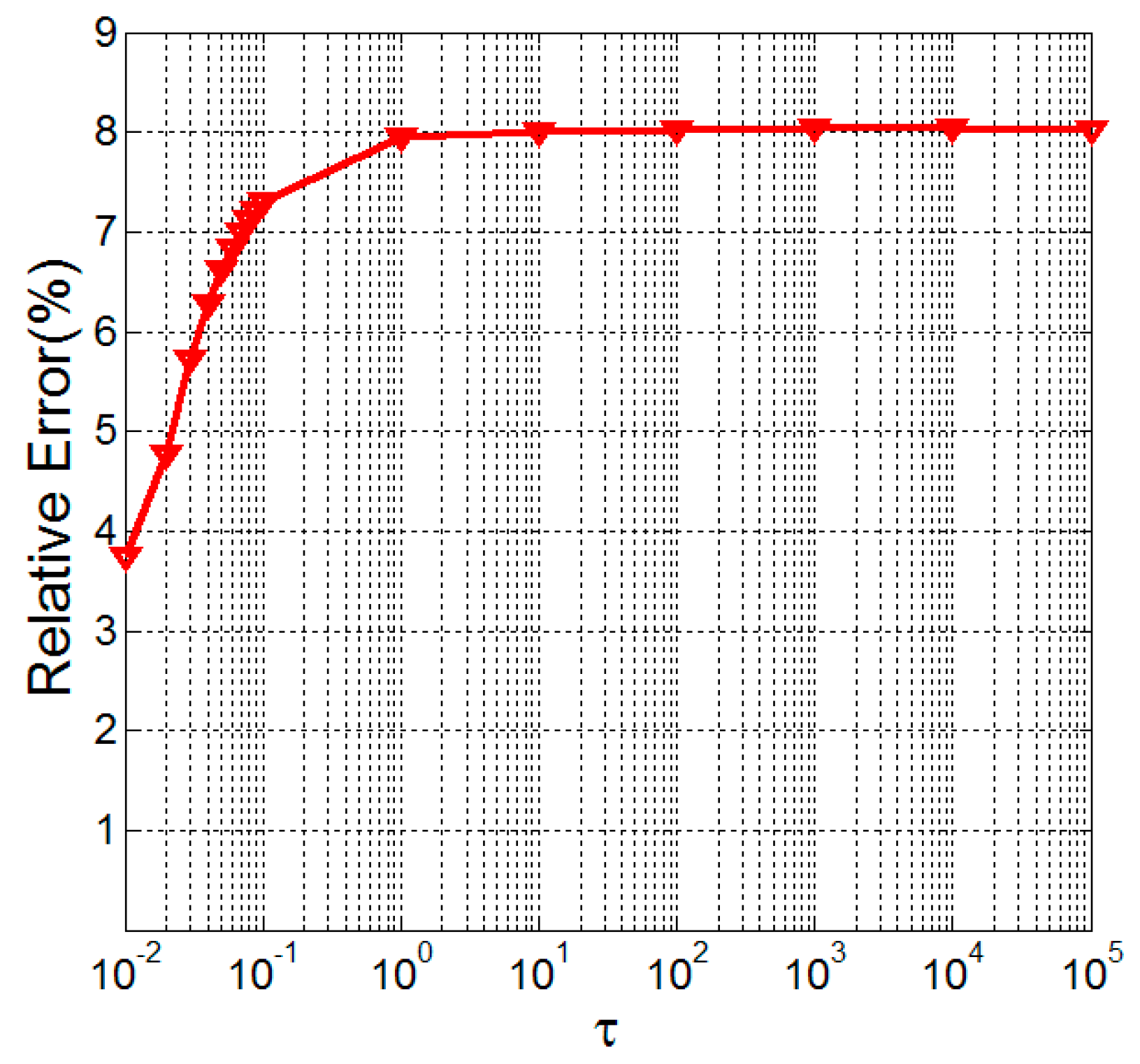

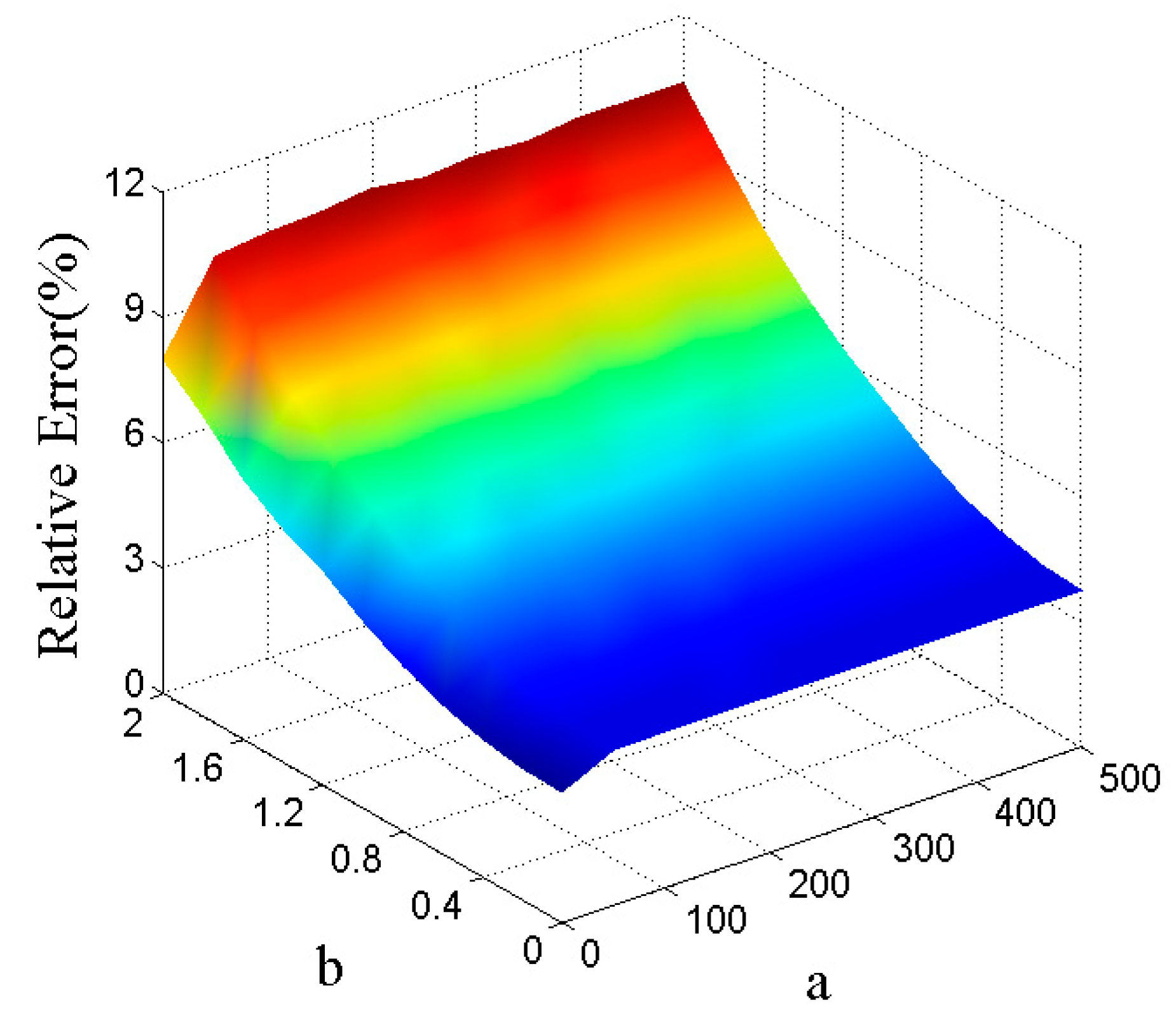

6.1. Synthetic Data

6.2. Influence of Noise and Sampling Rate on the Relative Error

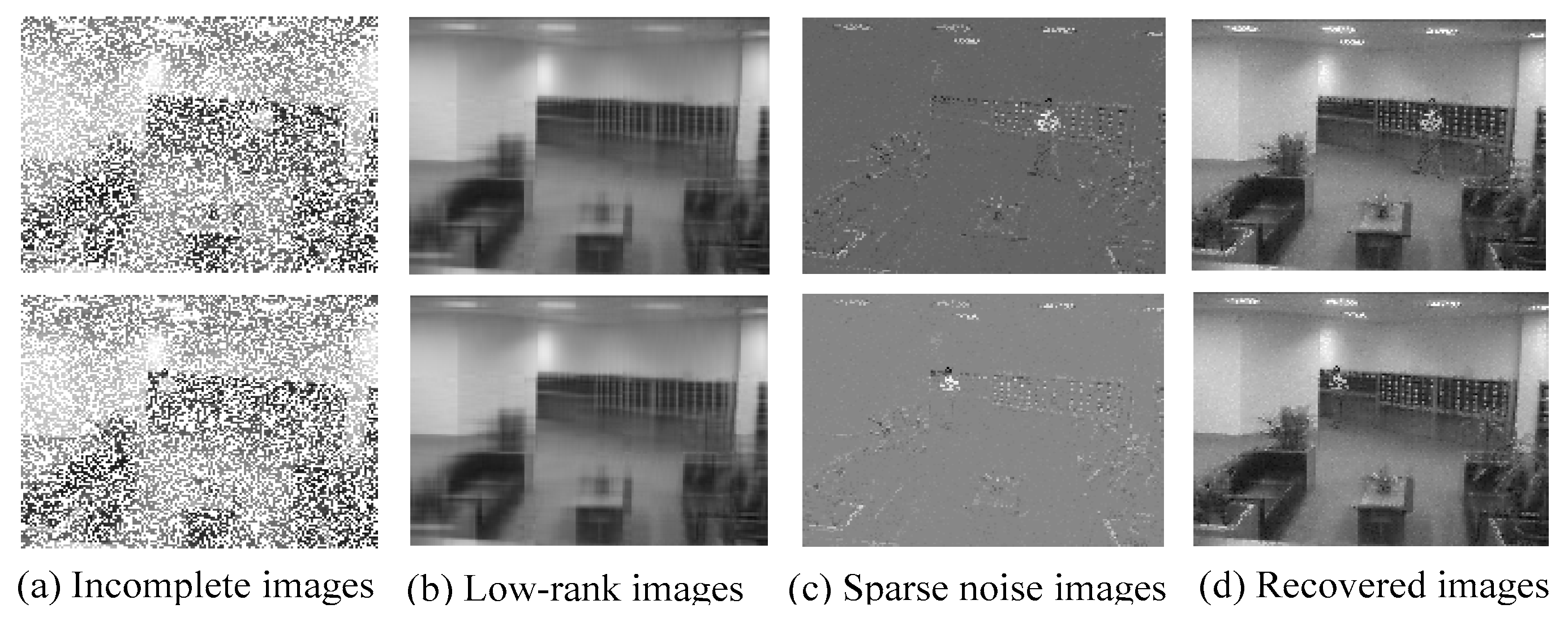

6.3. Applications in Background Modeling

7. Conclusions

Acknowledgments

Author Contributions

Conflicts of Interest

References

- Candès, E.J.; Recht, B. Exact matrix completion via convex optimization. Found. Comput. Math. 2009, 9, 717–772. [Google Scholar] [CrossRef]

- Candès, E.J.; Li, X.; Ma, Y.; Wright, J. Robust principal component analysis? J. ACM. 2011, 58, 37. [Google Scholar] [CrossRef]

- Liu, G.; Lin, Z.; Yan, S.; Sun, J.; Yu, Y.; Ma, Y. Robust recovery of subspace structures by low-rank representation. IEEE Trans. Pattern Anal. Mach. Intell. 2013, 35, 171–184. [Google Scholar] [CrossRef] [PubMed]

- Candès, E.J.; Plan, Y. Matrix completion with noise. P. IEEE. 2010, 98, 925–936. [Google Scholar] [CrossRef]

- Zhou, Z.; Li, X.; Wright, J.; Candès, E.J.; Ma, Y. Stable principal component pursuit. In Proceedings of the 2010 IEEE International Symposium on Information Theory Proceedings (ISIT), Austin, TX, USA, 13–18 June 2010.

- Xu, H.; Caramanis, C.; Sanghavi, S. Robust PCA via outlier pursuit. IEEE Trans. Inf. Theory. 2012, 58, 3047–3064. [Google Scholar] [CrossRef]

- Shi, J.; Zheng, X.; Yong, L. Incomplete robust principal component analysis. ICIC Express Letters, Part B Appl. 2014, 5, 1531–1538. [Google Scholar]

- Shi, J.; Yang, W.; Yong, L.; Zheng, X. Low-rank representation for incomplete data. Math. Probl. Eng. 2014, 10. [Google Scholar] [CrossRef]

- Liu, Y.; Jiao, L.C.; Shang, F.; Yin, F.; Liu, F. An efficient matrix bi-factorization alternative optimization method for low-rank matrix recovery and completion. Neural Netw. 2013, 48, 8–18. [Google Scholar] [CrossRef] [PubMed]

- Liu, Y.; Jiao, L.C.; Shang, F. A fast tri-factorization method for low-rank matrix recovery and completion. Pattern Recogn. 2013, 46, 163–173. [Google Scholar] [CrossRef]

- De Lathauwer, L.; Castaing, J. Tensor-based techniques for the blind separation of DS–CDMA signals. Signal Process. 2007, 87, 322–336. [Google Scholar] [CrossRef]

- Kolda, T.G.; Bader, B.W. Tensor decompositions and applications. SIAM Rev. 2009, 51, 455–500. [Google Scholar] [CrossRef]

- Liu, J.; Musialski, P.; Wonka, P.; Ye, J. Tensor completion for estimating missing values in visual data. IEEE Trans. Patt. Anal. Mach. Intel. 2013, 35, 208–220. [Google Scholar] [CrossRef] [PubMed]

- Shi, J.; Zhou, S.; Zheng, X. Multilinear robust principal component analysis. Acta Electronica Sinica. 2014, 42, 1480–1486. [Google Scholar]

- Tomasi, G.; Bro, R. PARAFAC and missing values. Chemom. Intell. Lab. Syst. 2005, 75, 163–180. [Google Scholar] [CrossRef]

- Shi, J.; Jiao, L.C.; Shang, F. Tensor completion algorithm and its applications in face recognition. Pattern Recognit. Artif. Intell. 2011, 24, 255–261. [Google Scholar]

- Kressner, D.; Steinlechner, M.; Vandereycken, B. Low-rank tensor completion by Riemannian optimization. BIT Numer. Math. 2014, 54, 447–468. [Google Scholar] [CrossRef]

- Shi, J.; Yang, W.; Yong, L.; Zheng, X. Low-rank tensor completion via Tucker decompositions. J. Comput. Inf. Syst. 2015, 11, 3759–3768. [Google Scholar]

- Gandy, S.; Recht, B.; Yamada, I. Tensor completion and low-n-rank tensor recovery via convex optimization. Inv. Probl. 2011, 27, 19. [Google Scholar] [CrossRef]

- Liu, Y.; Shang, F. An efficient matrix factorization method for tensor completion. IEEE Signal Process. Lett. 2013, 20, 307–310. [Google Scholar] [CrossRef]

- Tan, H.; Cheng, B.; Wang, W.; Zhang, Y.J.; Ran, B. Tensor completion via a multi-linear low-n-rank factorization model. Neurocomputing. 2014, 133, 161–169. [Google Scholar] [CrossRef]

- Goldfarb, D.; Qin, Z. Robust low-rank tensor recovery: Models and algorithms. SIAM J. Matrix Anal. Appl. 2014, 35, 225–253. [Google Scholar] [CrossRef]

- Cai, J.F.; Candès, E.J.; Shen, Z. A singular value thresholding algorithm for matrix completion. SIAM J. Optimiz. 2010, 20, 1956–1982. [Google Scholar] [CrossRef]

- Boyd, S.; Parikh, N.; Chu, E.; Peleato, B.; Eckstein, J. Distributed optimization and statistical learning via the alternating direction method of multipliers. Found. Trends Mach. Learn. 2011, 3, 1–122. [Google Scholar] [CrossRef]

- Rockafellar, R.T. Monotone operators and the proximal point algorithm. SIAM J. Control Optim. 1976, 14, 877–898. [Google Scholar] [CrossRef]

- Databases of Lobby and Bootstrap. Available online: http://perception.i2r.a-star.edu.sg/bk_ model/bk_index.html (accessed on 1 November 2015).

| (r, p′) | (%) | (%) | RE of MRPCA (%) | RE of GLRTR (%) |

|---|---|---|---|---|

| (3, 5) | 1253 | 18.33 | 9.71 ± 0.98 | 6.60 ± 0.69 |

| (3, 10) | 1769 | 19.20 | 8.04 ± 0.84 | 7.99 ± 0.84 |

| (3, 15) | 2218 | 20.05 | 9.84 ± 1.97 | 8.75 ± 1.87 |

| (5, 5) | 569 | 8.79 | 7.39 ± 0.79 | 3.74 ± 0.42 |

| (5, 10) | 855 | 9.40 | 8.55 ± 0.97 | 4.47 ± 0.52 |

| (5, 15) | 1040 | 9.16 | 9.13 ± 0.52 | 5.05 ± 0.31 |

| (r, p′) | (%) | (%) | RE of MRPCA (%) | RE of GLRTR (%) |

|---|---|---|---|---|

| (2, 5) | 1722 | 24.05 | 29.24 ± 7.95 | 13.90 ± 4.68 |

| (2, 10) | 2823 | 37.72 | 48.07 ± 18.47 | 23.98 ± 9.66 |

| (2, 15) | 3052 | 27.17 | 44.71 ± 13.66 | 23.10 ± 8.52 |

| (4, 5) | 448 | 6.97 | 12.00 ± 1.60 | 8.96 ± 1.15 |

| (4, 10) | 563 | 6.04 | 15.12 ± 1.98 | 6.26 ± 0.87 |

| (4, 15) | 758 | 6.58 | 24.22 ± 2.61 | 12.16 ± 2.90 |

| (p, λ) | (30,0.07) | (50, 0.05) | (70, 0.03) | (90,0.03) |

|---|---|---|---|---|

| RE of MRPCA (%) | 87.81 ± 0.27 | 77.02 ± 0.46 | 60.64 ± 1.77 | 11.49 ± 1.73 |

| RE of GLRTR (%) | 11.58 ± 1.72 | 6.62 ± 0.82 | 5.51 ± 0.83 | 4.27 ± 0.47 |

| (p, λ) | (30, 0.03) | (50, 0.035) | (70, 0.03) | (90, 0.03) |

|---|---|---|---|---|

| RE of MRPCA (%) | 88.38 ± 0.25 | 78.94 ± 0.34 | 65.06 ± 0.59 | 40.03 ± 1.34 |

| RE of GLRTR (%) | 62.51 ± 1.72 | 20.09 ± 2.63 | 9.14 ± 0.12 | 8.58 ± 1.11 |

© 2016 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC-BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Shi, J.; Yin, Q.; Zheng, X.; Yang, W. Alternating Direction Method of Multipliers for Generalized Low-Rank Tensor Recovery. Algorithms 2016, 9, 28. https://doi.org/10.3390/a9020028

Shi J, Yin Q, Zheng X, Yang W. Alternating Direction Method of Multipliers for Generalized Low-Rank Tensor Recovery. Algorithms. 2016; 9(2):28. https://doi.org/10.3390/a9020028

Chicago/Turabian StyleShi, Jiarong, Qingyan Yin, Xiuyun Zheng, and Wei Yang. 2016. "Alternating Direction Method of Multipliers for Generalized Low-Rank Tensor Recovery" Algorithms 9, no. 2: 28. https://doi.org/10.3390/a9020028

APA StyleShi, J., Yin, Q., Zheng, X., & Yang, W. (2016). Alternating Direction Method of Multipliers for Generalized Low-Rank Tensor Recovery. Algorithms, 9(2), 28. https://doi.org/10.3390/a9020028