1. Introduction

Guaranteeing sparsity, modeling accuracy, and efficient optimization of a reduced number of hyperparameters when constructing robust regression models remains one of the critical unresolved issues in machine learning. Practitioners in fields ranging from theoretical research to engineering applications hesitate to adopt machine learning solutions because the process of constructing predictive regression models with better generalization performance is not transparent. Support Vector Regression (SVR) [1,2] is a well-known non-parametric supervised learning model that integrates the control of structural risk into machine learning. Risk minimization theory allows a balance between empirical risk and structural risk, and SVR therefore overcomes a significant shortcoming of other nonlinear modeling methods, namely over-fitting [3]. Since its introduction, SVR has been applied in numerous areas, ranging from fault detection [4,5,6], pattern recognition [7,8], and predictive control [9] to brain–computer interfaces [10].

SVR is viewed as one of the most effective and commonly applied techniques in predictive modeling, and improvements in its predictive performance and generalization capacity rely heavily on the adjustment of its hyperparameters. Although SVR achieves generalization capability in regression by introducing the $\varepsilon$-insensitive margin, which provides new insight into controlling the structural complexity of models, it requires the selection of three hyperparameters (the regularization factor, the kernel parameter, and $\varepsilon$), which makes the regression modeling method more complex [11,12,13,14,15,16,17]. As noted in [18], training SVR is time-consuming on large-scale datasets, especially when hyperparameter optimization is involved. Finding the optimal hyperparameters of machine learning models efficiently without sacrificing prediction accuracy has been reported to pose major hurdles [19]. Prevailing techniques such as grid search and random search with cross-validation require considerable time in large search spaces, and they do not guarantee excellent predictive results. Parameters can be categorized into two groups: learnable parameters and hyperparameters. Learnable parameters are obtained from training data using optimization techniques such as quadratic programming (QP), linear programming (LP), or gradient descent. Hyperparameters are not obtained from the data; instead, they must be chosen before the learning process starts, and their optimization involves identifying the combination that either reduces prediction error or enhances prediction accuracy. As a result, hyperparameter optimization plays a vital role in developing powerful and efficient machine learning models.

Various methods exist for hyperparameter optimization in machine learning models, including grid search, probabilistic model-based optimization, random search [20,21], gradient descent algorithms, and nature-inspired optimization methods. Grid search methods incorporating a performance metric [22,23] or a weighted error function [19] aim to find the optimal combination of hyperparameters through iterative updates. From the standpoint of Bayesian methods, probabilistic model-based optimization [24,25] employs a Bayesian model to assess the performance of various hyperparameter configurations and chooses the most promising ones for evaluation. Gradient descent-based approaches are a widely used class of conventional optimization techniques that adjust real-valued hyperparameters by computing their gradients. Nature-inspired optimization employs evolution-based techniques, among them genetic algorithms [26] and particle swarm optimization [27], to explore the range of potential hyperparameters. Additionally, ref. [28] proposed an effective warm-start technique as a practical tool for parameter selection in linear SVR, and the approach has been made publicly available. The aforementioned optimization methods aim to find the selection that guarantees better generalization ability by exploring the possible combinations in the hyperparameter space. For instance, SVR has three hyperparameters; if each has 100 candidate values, the search space contains a total of $100^3 = 10^6$ combinations. However, if the number of hyperparameters is reduced to two, the number of possible combinations decreases from one million to ten thousand. Thus, the hyperparameter optimization process for SVR will be greatly simplified and improved in terms of both time and spatial scale.
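The size reduction quoted above can be checked with a few lines of Python. This is only an illustrative sketch: the helper name `grid_size` is hypothetical, and the figure of 100 candidate values per hyperparameter is taken from the example in the text.

```python
from itertools import product


def grid_size(n_hyperparameters: int, candidates_per_axis: int) -> int:
    """Number of points in a full grid with the same number of candidates per axis."""
    return candidates_per_axis ** n_hyperparameters


three_param_grid = grid_size(3, 100)   # three hyperparameters, as in SVR
two_param_grid = grid_size(2, 100)     # two hyperparameters, as in LSSVR / LI-SVR
reduction_factor = three_param_grid // two_param_grid

# Sanity check on a tiny grid by explicit enumeration (4 candidates, 3 axes).
small_grid_points = len(list(product(range(4), repeat=3)))
```

Removing one hyperparameter therefore divides the number of grid evaluations by the number of candidates on that axis, before any cross-validation folds are counted.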

Significant efforts have been dedicated to reducing the redundancy in the hyperparameter optimization of SVR. In this regard, LSSVR, a variant of SVR first introduced in [29,30], has emerged as a prominent technique in machine learning, particularly for classification and regression problems. In the LSSVR framework, rather than addressing a quadratic programming problem with inequality constraints as in conventional SVR, equality constraints and an $L_2$-loss function are employed. This results in an optimization problem whose dual solution is found by solving a system of linear equations. More importantly, compared to SVR, LSSVR requires the optimization of only two hyperparameters, namely the kernel width and the regularization factor, which significantly simplifies the optimization process. As a result, LSSVR has seen widespread application and research in recent years, with over 15,000 citations. For instance, it has been successfully applied in short-term traffic flow prediction [31], optimal control [32], interval-censored data [33], and model identification [34]. Unfortunately, since the emergence of LSSVR, researchers have found that the method lacks model sparsity, meaning that all or most of the training samples contribute to the regression model, which can easily lead to poor generalization performance. Addressing model sparsity has become a current research hotspot. An early sparse approximation method [35,36] proposed by Johan Suykens performs pruning based on the physical interpretation of the ordered support values, whose magnitudes indicate the contribution of each sample. Follow-up works have sought to address these challenges by introducing an adaptive pruning algorithm [37,38], the Nyström approximation method with prototype vectors (PVs) [39], a gradient descent technique [40], and a non-iterative sparse scheme with global representative point selection [41].

However, these methods remain fundamentally limited in achieving true sparsity. Most approaches, such as pruning or global representative point selection, are inherently a posteriori approximations or filtering processes that artificially induce sparsity by discarding samples from the dense solution, inevitably leading to information loss and performance trade-offs. Moreover, variants employing re-weighted or $\varepsilon$-insensitive loss functions, while introducing sparsity-inducing mechanisms, still rely on heuristic thresholds or complex regularization without fundamentally altering the underlying structure of the least squares problem, resulting in suboptimal, incomplete, or heavily parameter-dependent sparse solutions. Model sparsity, as described in SVR, refers to the fact that when the absolute value of a Lagrange multiplier falls below a small threshold, the corresponding training sample is not a support vector (SV) and can be ignored. From the perspective of practical applications and current software tools, the core ideas of traditional SVR and LSSVR still dominate the mainstream, including in the widely used MATLAB 2025a software. To the best of our knowledge, the following issues remain to be investigated: (1) The optimization of the three-hyperparameter combination in SVR is complex. (2) The introduction of slack variables within SVR results in additional inequality constraints, making the parameter estimation more challenging. (3) The expected sparsity of LSSVR has essentially not been achieved; the improvements proposed so far still come at the cost of model accuracy.

It is worth noting that the use of the $L_\infty$-norm, min–max formulations, and Chebyshev-type approximation has been extensively studied in the optimization and robust regression literature. Therefore, this work does not aim to introduce a fundamentally new norm-based regression theory. Instead, this study focuses on embedding an $L_\infty$-norm constraint into the SVR framework, with particular attention to kernel-based modeling, sparsity, and computational efficiency.

Unlike classical Chebyshev regression or generic $L_\infty$-norm minimization approaches, the proposed LI-SVR is derived within the primal–dual structure of SVR and LSSVR. By reformulating the approximation error using a single global variable $\lambda$, the resulting optimization problem remains convex and requires only two hyperparameters, namely the regularization parameter and the kernel width. Moreover, the number of inequality constraints is reduced to half of that required by standard $\varepsilon$-SVR formulations.

More importantly, the proposed formulation naturally restores model sparsity through the Karush–Kuhn–Tucker (KKT) conditions. Only samples whose prediction errors attain the maximum bound actively constrain the solution and become support vectors, while the remaining samples are strictly inactive. This sparsity mechanism differs fundamentally from post hoc pruning strategies or explicit sparsity-inducing regularizers, and leads to a compact kernel regression model without sacrificing convexity or introducing additional tuning parameters.

From this perspective, the proposed LI-SVR should be viewed as a structurally simplified and sparsity-enhanced kernel regression framework inspired by $L_\infty$-norm optimization, rather than as a novel norm definition or a replacement of existing robust regression theories.

Inspired by the core ideas of classical SVR and LSSVR, the main contributions of this work are as follows.

By integrating the concept of the infinity norm into our research, we investigate the lack of sparsity in LSSVR and the hyperparameter optimization burden of SVR, exploring a more efficient and generalizable regression prediction method that ensures both model sparsity and modeling accuracy;

We propose a novel least infinity-norm Support Vector Regression (LI-SVR) method, distinct from classical SVR and LSSVR, to explore the optimal balance between the complex hyperparameter optimization process and sparsity, overcoming the limitations of traditional regression modeling methods;

We demonstrate the sparsity of the proposed method and explain why it does not require optimization of the $\varepsilon$-insensitive parameter of SVR. Furthermore, we show that the maximum approximation error is computed adaptively by the proposed method to reflect model accuracy, thereby avoiding the complicated optimization of $\varepsilon$.

The rest of this article is structured as follows. Section 2 introduces the theory underlying the algorithms, and the proposed LI-SVR is detailed in Section 3. Section 4 presents the experimental analysis of the results. Finally, conclusions are drawn in Section 5.

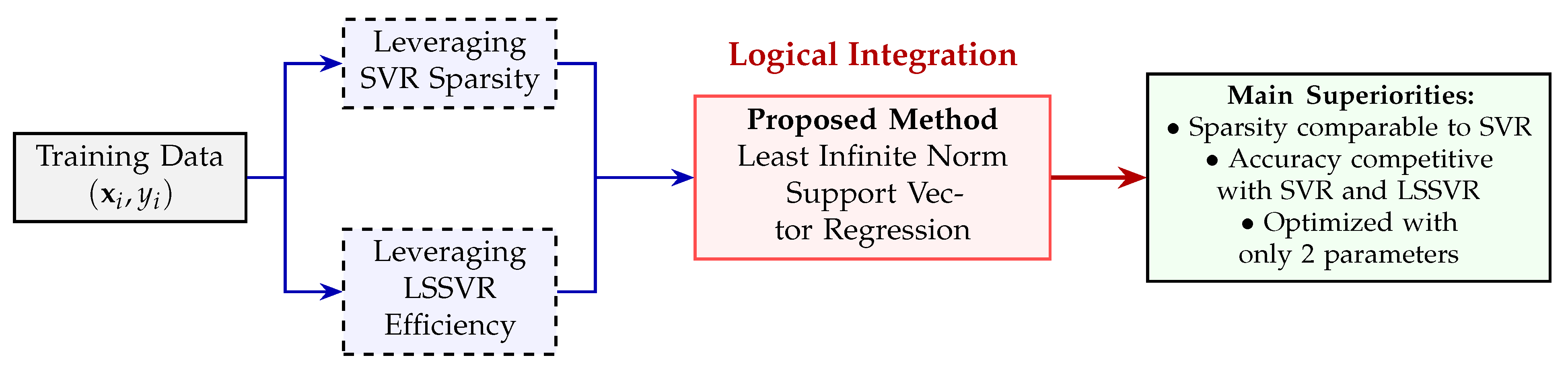

3. SVR and LSSVR-Inspired Least $L_\infty$-Norm Support Vector Regression

Based on the comparative analysis in Section 2, it is evident that SVR achieves sparsity at the cost of increased hyperparameter complexity, whereas LSSVR simplifies optimization but loses sparsity due to its $L_2$-norm error formulation. Section 3 builds directly upon this observation by reformulating the LSSVR objective using an $L_\infty$-norm error criterion, with the aim of restoring intrinsic sparsity while preserving convexity and limiting the number of tunable hyperparameters to two.

Model sparsity is determined by whether the absolute values of the solved Lagrange multipliers are sufficiently small. Because LSSVR uses the $L_2$-norm of the error to assess modeling accuracy, it inherently lacks model sparsity. In contrast, SVR obtains sparsity by introducing $\varepsilon$, but at the cost of optimizing an additional hyperparameter. To address these issues, we propose the least infinity ($L_\infty$)-norm Support Vector Regression (LI-SVR) method, as shown in Figure 2.

3.1. The $L_\infty$-Norm on Approximation Error

The $L_\infty$-norm (also known as the maximum norm) of a vector is the largest absolute value among its elements. In contrast, the $L_2$-norm is the square root of the sum of the squares of all elements, which measures the length of the vector in Euclidean space.
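The difference between the two norms can be illustrated numerically. The following is a minimal sketch using NumPy; the residual vector `e` is a made-up example and not data from this study.

```python
import numpy as np

# Hypothetical residual (deviation) vector, for illustration only.
e = np.array([0.3, -1.2, 0.5, 0.1])

linf = float(np.max(np.abs(e)))       # maximum norm: largest absolute element
l2 = float(np.sqrt(np.sum(e ** 2)))   # Euclidean norm: root of the sum of squares

# The same quantities via NumPy's built-in routine.
linf_np = float(np.linalg.norm(e, ord=np.inf))
l2_np = float(np.linalg.norm(e, ord=2))

# Zeroing a non-maximal entry changes the L2-norm but not the L-infinity norm:
# only the worst-case element matters for the latter.
e_reduced = e.copy()
e_reduced[2] = 0.0
linf_reduced = float(np.linalg.norm(e_reduced, ord=np.inf))
l2_reduced = float(np.linalg.norm(e_reduced, ord=2))
```

The last comparison is the property exploited later in this section: under the maximum norm, elements below the worst-case deviation do not affect the value of the norm.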

Assume that the obtained input–output data and the regression model to be solved are represented as in (1) and (2), respectively, and that the measured output satisfies the following nonlinear system,

According to statistical theory, the measurement model $g$ can be approximated to arbitrary precision by the nonlinear regression model $f$ described in Equation (2). As the required approximation precision decreases, fewer SVs are needed in SVR; conversely, higher precision requires more SVs. Hence, for any given real continuous function $g$ and any $\varepsilon > 0$, a regression model $f$ can be found,

In traditional LSSVR, the following deviation is defined,

and its optimization problem employs the $L_2$-norm of the deviations, implying that all deviations in Formula (20) contribute to the solution of the Lagrange multipliers, which is the primary reason for the loss of sparsity.

Now, we introduce the $L_\infty$-norm on the approximation error to replace the $L_2$-norm and solve for the regression model $f$ satisfying condition (19). First, we search for the maximum absolute deviation in Formula (20), which is defined as the $L_\infty$-norm of the approximation error. Next, minimizing this maximum deviation can be transformed into the following optimization problem, which yields intrinsic sparsity,

where

denotes the finite training set. In SVR, sparsity means that input samples lying within the $\varepsilon$-tube make no contribution to the regression model. The intrinsic sparsity proposed in this paper borrows from this idea. That is to say, when the deviation

satisfies the condition of

, the contribution of the corresponding sample

will be negligible in (

21).

Lemma 1.

The optimization problem (21) can be addressed through linear programming,

where the weight vector and the bias $b$ are the parameters to be determined, and $\lambda$ represents the maximum approximation error.

Proof. Define

as follows,

and the following inequality can be directly derived,

By applying the absolute value operation, the system of inequalities (24) can be rewritten in the form of (22). This completes the proof of Lemma 1. □

The sparsity-promoting property of the $L_\infty$-norm formulation can be intuitively understood from its min–max structure. As shown in (22), the optimization seeks to minimize a single scalar variable $\lambda$, which represents the maximum approximation error shared by all training samples. Unlike $L_2$-based formulations that accumulate errors over all samples, the objective here is governed solely by the worst-case deviation. As a result, only those samples whose residuals attain the maximum bound $\lambda$ actively constrain the solution, while samples with smaller errors remain strictly inactive. Consequently, the regression model is effectively determined by a limited subset of critical samples, which naturally leads to a sparse representation. Next, this lemma will be used to establish a sparse regression model in our approach.
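The min–max mechanism can be illustrated on a toy problem. The sketch below is not the kernel-based LI-SVR: it assumes a plain linear model $f(x) = wx + b$ without regularization, made-up data $y = x^2$, and SciPy's `linprog` as the LP solver. It minimizes the single variable `lam` subject to $|y_k - f(x_k)| \le \lambda$ and then lists the samples whose residual attains the bound.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical toy data: approximate y = x^2 by a straight line f(x) = w*x + b.
x = np.linspace(-1.0, 1.0, 21)
y = x ** 2
n = x.size

# Decision vector z = [w, b, lam]; the objective is lam alone.
c = np.array([0.0, 0.0, 1.0])

# |y_k - (w*x_k + b)| <= lam, written as two linear inequalities per sample:
#    y_k - w*x_k - b <= lam   ->  -w*x_k - b - lam <= -y_k
#    w*x_k + b - y_k <= lam   ->   w*x_k + b - lam <=  y_k
ones = np.ones(n)
A_ub = np.vstack([
    np.column_stack([-x, -ones, -ones]),
    np.column_stack([x, ones, -ones]),
])
b_ub = np.concatenate([-y, y])

# w and b are free variables; lam is non-negative.
bounds = [(None, None), (None, None), (0.0, None)]
res = linprog(c, A_ub=A_ub, b_ub=b_ub, bounds=bounds, method="highs")

w, b, lam = res.x
residuals = y - (w * x + b)

# Samples whose absolute residual attains lam are the active ("critical") ones.
active = np.flatnonzero(np.isclose(np.abs(residuals), lam, atol=1e-7))
```

In this toy case only the two end points and the midpoint attain the bound, so 3 of the 21 samples determine the fit, while all other constraints are slack. The full method adds the kernel mapping and a regularization term, which this sketch deliberately omits.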

3.2. Establishment of Sparse Kernel Regression Model

In fact, early studies [42,43] on classification methods in machine learning already discussed how model sparsity could be enhanced by leveraging the concept of the $L_\infty$-norm. A good regression model $f$ should embody both model sparsity and modeling accuracy, as exemplified by SVR and LSSVR. However, SVR's optimization problem has two drawbacks compared to LSSVR: (1) with $N$ training samples, the introduction of slack variables adds inequality constraints whose number grows with $N$; (2) it requires the selection of three hyperparameters, namely the kernel parameter, the regularization factor, and the insensitivity margin $\varepsilon$, while LSSVR has only the first two. In light of this and of [42,43], while maintaining the same number of hyperparameters as LSSVR, we propose the least $L_\infty$-norm SVR (LI-SVR). Drawing inspiration from LSSVR, we substitute the $L_2$-norm optimization with problem (22), which yields a new optimization problem,

where the first term in (25) controls the model structure and is minimized to favor a smoother model; the second term primarily focuses on improving model accuracy; and the regularization factor balances the weights between model structure and modeling accuracy. It should be emphasized that in inequality constraint (26) we only consider $\lambda > 0$, because $\lambda$ represents the maximum of all absolute deviations. If $\lambda = 0$, every deviation vanishes, indicating that the regression model fits the actual outputs $y$ exactly, which is highly likely to trigger severe overfitting and degrade the model's generalization performance. Note also that $\lambda$ is an optimization variable rather than a hyperparameter. Unlike the $\varepsilon$ parameter in SVR, which must be specified manually prior to training, $\lambda$ is determined automatically during optimization as the maximum approximation error, as shown in Equation (29). Therefore, the proposed method involves only two hyperparameters: the regularization coefficient and the kernel width.
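The displayed problem (25)–(26) is not reproduced in this text. One form consistent with the description above (a structural term, an accuracy term in $\lambda$ weighted by a regularization factor, and one-sided constraints bounding every deviation by $\lambda$) is sketched below. The symbols $w$, $\varphi$, and $\gamma$, as well as the quadratic penalty on $\lambda$, are assumptions made for illustration; the quadratic penalty is chosen only because it leads to a quadratic program in which $\lambda$ follows from the multipliers, as the text states.

```latex
\begin{aligned}
\min_{w,\,b,\,\lambda}\quad & \frac{1}{2}\, w^{\top} w + \frac{\gamma}{2}\,\lambda^{2} \\
\text{s.t.}\quad & y_k - w^{\top}\varphi(x_k) - b \le \lambda, \\
& w^{\top}\varphi(x_k) + b - y_k \le \lambda, \qquad k = 1,\dots,N .
\end{aligned}
```

Under these assumptions, introducing multipliers $\alpha_k \ge 0$ and $\alpha_k^{*} \ge 0$ for the two constraint families and setting the derivatives of the Lagrangian to zero gives $w = \sum_k (\alpha_k - \alpha_k^{*})\,\varphi(x_k)$, $\sum_k (\alpha_k - \alpha_k^{*}) = 0$, and $\gamma\lambda = \sum_k (\alpha_k + \alpha_k^{*})$, so that $\lambda$ is obtained from the multipliers rather than chosen in advance.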

To solve for the weight vector and the bias term $b$, we introduce the Lagrange function with two sets of Lagrange multipliers, which converts the constrained problem into an unconstrained one,

To obtain the optimal solution, the partial derivatives of the Lagrange function with respect to the primal variables are computed and set to zero according to the Karush–Kuhn–Tucker (KKT) conditions,

Here, the weight vector can be viewed as a linear expansion of the nonlinear feature mapping with coefficients determined by the Lagrange multipliers.

From Equation (28), it can be observed that $\lambda$ is calculated automatically from the Lagrange multipliers, eliminating the need for prior determination.

where Equations (30) and (31) will be employed for analyzing the sparsity properties and determining whether the $k$-th sample qualifies as an SV.

Now, substituting Formula (28) into (27), we obtain,

where the constraints on the Lagrange multipliers in (32) must be met. Maximizing Formula (27) is equivalent to maximizing (32), which in turn can be reformulated as its dual problem,

Theorem 1.

Optimization problem (33) can be solved through quadratic programming, and the resulting solution for the Lagrange multipliers is globally optimal.

Proof. We only need to transform optimization (33) into vector form, as seen in Equation (34), and the theorem is proven. □

Here, the all-ones matrix has dimension $n \times n$, the all-ones vector is a column vector, and the entries of the kernel matrix are calculated using a kernel function; commonly used choices include polynomial, radial basis, and sigmoid kernels. The radial basis kernel, also known as the Gaussian kernel, is reported to exhibit superior performance over other kernel functions in handling nonlinear regression problems. Therefore, the Gaussian kernel function with a kernel width parameter is adopted in this study,
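The kernel equation itself is not reproduced in this text. As a minimal sketch, the kernel matrix could be computed as follows, assuming the common parameterization $K(x, z) = \exp(-\lVert x - z\rVert^2 / (2\sigma^2))$; the symbol $\sigma$, the factor 2 in the denominator, and the function name `gaussian_kernel_matrix` are assumptions, and some toolboxes use $\sigma^2$ alone in the denominator.

```python
import numpy as np


def gaussian_kernel_matrix(X: np.ndarray, Z: np.ndarray, sigma: float) -> np.ndarray:
    """Return K with K[i, j] = exp(-||X[i] - Z[j]||^2 / (2 * sigma^2)).

    The parameterization is an assumption made for this sketch.
    """
    sq_dists = (
        np.sum(X ** 2, axis=1)[:, None]
        + np.sum(Z ** 2, axis=1)[None, :]
        - 2.0 * X @ Z.T
    )
    # Guard against tiny negative values caused by floating-point cancellation.
    sq_dists = np.maximum(sq_dists, 0.0)
    return np.exp(-sq_dists / (2.0 * sigma ** 2))


# Hypothetical one-dimensional inputs, one sample per row.
X = np.array([[0.0], [1.0], [3.0]])
K = gaussian_kernel_matrix(X, X, sigma=1.0)
```

The resulting matrix is symmetric with a unit diagonal, and a larger kernel width makes distant samples appear more similar, which is why the width acts as one of the two hyperparameters.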

Because the optimization (32) is a convex quadratic programming problem, the solution obtained is globally optimal, thus avoiding local optima. After solving (32), we find that the magnitudes of most Lagrange multipliers are sufficiently small to be negligible. The maximum approximation error $\lambda$ can then be computed from (28). Subsequently, the bias term $b$ is derived from Equation (30),

or (31),

Here, the two counts denote the numbers of components of the two Lagrange multiplier vectors that differ significantly from zero. Given the total number of SVs and the corresponding samples, we can derive an intrinsically sparse regression model, namely LI-SVR, comparable to SVR and LSSVR,

Section 3.3 further shows that the number of SVs is substantially smaller than $n$. In practice, the quadratic programming problem in Theorem 1 is solved using standard interior-point solvers. Numerical stability is ensured by kernel matrix regularization and normalization of input features. All experiments employ identical solver tolerances to ensure fair comparisons.

3.3. Support Vectors Analysis for Sparse LI-SVR

The number of SVs plays a crucial role in determining the model complexity of SVR. Traditionally, LSSVR lacks sparsity, meaning that all samples contribute to the model construction. This not only increases the structural complexity of the model, leading to potential overfitting issues, but also deteriorates its generalization performance. In contrast, the method proposed in this paper intrinsically produces a sparse regression model after solving the optimization problem (33). The degree of sparsity depends on the number of SVs: more SVs indicate poorer sparsity, while fewer SVs imply better sparsity. Below, we analyze how the SVs arise in the proposed sparse LI-SVR.

First, we define the deviation between the actual output and the predicted value as follows,

where

. After solving the convex quadratic programming problem (33), the maximum approximation error $\lambda$ can be computed from Equation (28). According to conditions (23) and (30), we further obtain,

It is worth noting that the difference between

and

is that Equation (

39) includes both

and

, whereas Equation (

40) contains only those subject to condition of

. Considering that we derive Equation (

40) by computing the partial derivative of the Lagrangian function with respect to

, the sparse solution of

is dominated by Equation (

40). Logically, the

k-th sample

is selected as an SV only when

strictly, and the contribution of the corresponding

for sparse LI-SVR should not be overlooked. That is to say, only a small fraction of all deviations of (

39) satisfies the condition of

. Similarly, we can conduct an analysis on the sparsity of the Lagrange multiplier

. From Equations (23) and (30), we have,

It should be noted that from Equation (

30),

denotes the maximum value of all negative deviations at this point. Equation (

41) allows us to ascertain an index,

k, of all SVs in which its negative deviations

equal exactly

. It is evident that the numbers

and

of the SVs are markedly smaller than the total sample size

n. Finally, the total number of SVs used in constructing the sparse LI-SVR is computed as

(namely,

, where the size of coefficient

corresponding to these SVs is not equal to zero. The essence of the obtained sparse LI-SVR can be reflected through Theorem 2.

Theorem 2.

For each sample, at most one of its two Lagrange multipliers is non-zero, and one of the following three conditions is always satisfied:

Proof. According to the original constraints (26), one of Equations (42)–(44) always holds,

From the Karush–Kuhn–Tucker (KKT) conditions, we have,

where

,

and

.

Equation (

45) is evidently true when

, whereas Equation (

42) must hold when

. Substituting it into Equation (

46) results in

, implying

. Condition

subjected to both

and

in Theorem 2 is proven. In addition, Equation (

46) also holds true when

. If

, then from Equations (

44) and (

45), it follows that

, which leads to

. This further confirms the condition

of Theorem 2. Similarly, when Equation (

43) holds, both

and

must be met from Equations (

45) and (

46). We derive the third condition

of Theorem 2 again.

However, it is impossible for both

and

to occur simultaneously. If

and

are both met, then we have,

and it is inferred that

is contradictory to the known fact that

. This concludes the proof of Theorem 2. □
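The contradiction used in the last step of the proof can be written out explicitly. The notation here is an assumption, since the displayed conditions are not reproduced in this text: $\alpha_k$ and $\alpha_k^{*}$ denote the multipliers of the upper and lower one-sided constraints of sample $k$, and $f(x_k)$ denotes the model output.

```latex
\begin{aligned}
&\text{Complementary slackness:}
  && \alpha_k\,\bigl(y_k - f(x_k) - \lambda\bigr) = 0,
  \qquad \alpha_k^{*}\,\bigl(f(x_k) - y_k - \lambda\bigr) = 0, \\
&\text{If } \alpha_k > 0 \text{ and } \alpha_k^{*} > 0:
  && y_k - f(x_k) = \lambda \ \text{ and } \ f(x_k) - y_k = \lambda
  \;\Longrightarrow\; 2\lambda = 0 .
\end{aligned}
```

Since $\lambda > 0$, both multipliers of the same sample cannot be non-zero simultaneously, which is the statement of Theorem 2 under this notation.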

Although $\lambda$ is influenced by the largest residual, the regularization term jointly constrains the model complexity and prevents overreaction to individual samples. This trade-off limits the sensitivity of $\lambda$ to moderate outliers in practice, as confirmed by the experimental results.

In conclusion, Theorem 2 further clarifies how sparse solutions are obtained with the proposed method. We also observe that the number of samples whose two multipliers both vanish is much higher than the number satisfying either of the other two conditions. Therefore, the proposed sparse LI-SVR method represents a substantial advance over the traditional LSSVR presented by Johan Suykens and Joos Vandewalle in enhancing model sparsity and generalization ability.

Further, the proposed LI-SVR formulation explicitly minimizes a regularized upper bound of the prediction error, which inherently limits the influence of noise on the regression function. As further demonstrated in the experimental section, the model exhibits stable performance across different noise realizations and noise distributions, indicating robustness against stochastic disturbances.

3.4. Relationship Between the Global Variable $\lambda$ and the $\varepsilon$-Insensitive Loss

In classical $\varepsilon$-SVR, sparsity is induced by the $\varepsilon$-insensitive loss, where prediction errors within a predefined tolerance band do not contribute to the objective function. In contrast, the proposed LI-SVR replaces the fixed $\varepsilon$-tube with a single global envelope variable $\lambda$, which is jointly optimized with the regression function. This subsection provides a formal discussion on the relationship between $\lambda$ and $\varepsilon$.

For a fixed regression function $f$, the $\varepsilon$-SVR constraint enforces

where $\varepsilon$ is user-specified and the remaining terms are slack variables. In LI-SVR, the corresponding constraint can be written as

where $\lambda$ is an optimization variable shared by all samples.
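For reference, the two constraint sets can be placed side by side. The $\varepsilon$-SVR constraints below are the standard textbook form; the slack symbols $\xi_k$, $\xi_k^{*}$ and the indexing by $k$ are assumed notation, since the displayed equations are not reproduced in this text.

```latex
\begin{aligned}
\varepsilon\text{-SVR:}\quad
  & y_k - f(x_k) \le \varepsilon + \xi_k, \qquad
    f(x_k) - y_k \le \varepsilon + \xi_k^{*}, \qquad
    \xi_k,\ \xi_k^{*} \ge 0, \\
\text{LI-SVR:}\quad
  & y_k - f(x_k) \le \lambda, \qquad
    f(x_k) - y_k \le \lambda, \qquad
    \text{i.e. } \lvert y_k - f(x_k) \rvert \le \lambda .
\end{aligned}
```

Under this notation, any $\lambda \ge \varepsilon + \max_k \max(\xi_k, \xi_k^{*})$ makes an $\varepsilon$-SVR-feasible $f$ feasible for LI-SVR, and taking $\varepsilon = \lambda$ with all slack variables at zero makes an LI-SVR-feasible $f$ feasible for $\varepsilon$-SVR.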

It can be observed that, for any feasible solution of $\varepsilon$-SVR, a suitable choice of $\lambda$ yields a feasible solution for LI-SVR. Conversely, for a given LI-SVR solution, a matching choice of $\varepsilon$ leads to an $\varepsilon$-SVR feasible set that contains the LI-SVR solution as a special case. Therefore, LI-SVR can be interpreted as optimizing an upper bound of the $\varepsilon$-insensitive loss, where the tolerance parameter is no longer fixed a priori but is determined adaptively from the data.

More formally, the LI-SVR objective can be viewed as minimizing a regularized upper bound of the maximum absolute deviation, while $\varepsilon$-SVR minimizes the sum of deviations exceeding a fixed threshold. When the optimal solution of $\varepsilon$-SVR is such that all active errors lie exactly on the boundary of the $\varepsilon$-tube, the two formulations coincide with $\lambda = \varepsilon$. In general, LI-SVR yields a conservative envelope that guarantees bounded worst-case errors, whereas $\varepsilon$-SVR allows a trade-off between sparsity and tolerance through a predefined margin.

This analysis clarifies that $\lambda$ should not be regarded as a direct replacement of $\varepsilon$, but rather as an adaptive envelope parameter that implicitly controls the insensitivity region through optimization.

In addition, we emphasize that interpreting $\lambda$ as an “adaptive $\varepsilon$” is not intended to imply a strict theoretical equivalence. Instead, this interpretation arises naturally from the optimality conditions of the proposed formulation.

From the Karush–Kuhn–Tucker (KKT) conditions of the LI-SVR optimization problem, it follows that only samples satisfying

$$|y_k - f(x_k)| = \lambda$$

are associated with nonzero dual variables and thus act as support vectors. All remaining samples strictly satisfy

$$|y_k - f(x_k)| < \lambda$$

and are inactive at the optimum. This property closely parallels the role of the $\varepsilon$-insensitive region in classical $\varepsilon$-SVR, where samples inside the $\varepsilon$-tube do not contribute to the solution.

However, a key distinction is that $\lambda$ is not predefined but is jointly optimized together with the regression function. As a result, the effective insensitivity region is determined adaptively by the data distribution and regularization trade-off, rather than by user specification. From an optimization viewpoint, $\lambda$ represents the smallest envelope that simultaneously satisfies all constraints while minimizing a regularized worst-case error.

4. Experimental Analysis

In comparison with LSSVR and SVR, regression prediction using our approach has three significant characteristics: (1) unlike LSSVR, the proposed method achieves inherent sparsity, and it also outperforms SVR in this respect; (2) while SVR requires the optimization of three hyperparameters, the proposed method optimizes only two; (3) by introducing the infinity norm of the error, the proposed method adaptively computes the maximum error, which plays a role similar to the insensitive zone in SVR. Based on this, the following three metrics are introduced to demonstrate the effectiveness of the proposed method: the percentage of support vectors, the root mean square error (RMSE), and the maximum absolute error (MAE). The first metric evaluates the complexity of the model structure, while the latter two assess the accuracy of the model. Clearly, these objectives conflict, and the evaluation of regression prediction should strike a balance between them. The three metrics are defined, respectively, as

here,

denotes the total number of SVs, which is calculated by applying the conditions of

,

$N$ represents the total number of training samples, and the two remaining quantities are the actual and predicted outputs, respectively. MAE is reported to reflect the worst-case performance, which is particularly relevant for the proposed $L_\infty$-norm formulation. To demonstrate the rationality and superiority of the proposed method, we compare its performance with that of classical SVR and LSSVR on several benchmark datasets, including static nonlinear functions and linear/nonlinear dynamic systems. All experimental simulations were conducted in MATLAB 2022b, with the hyperparameters of SVR and LSSVR optimized using the built-in MATLAB packages and the LS-SVMlab Toolbox, respectively. Hyperparameter selection is not the primary focus of this study. In the experiments, the regularization parameter and kernel width are selected using grid search with cross-validation to ensure fair and stable performance across different datasets.
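The defining equations of the three metrics are not reproduced in this text. A minimal sketch of how they could be computed is given below, assuming the usual definitions: the percentage of SVs as the SV count divided by the number of training samples, RMSE as the root of the mean squared deviation, and MAE as the largest absolute deviation (as stated above, MAE here means the maximum, not the mean, absolute error). The function names are hypothetical.

```python
import numpy as np


def sv_percentage(n_sv: int, n_train: int) -> float:
    """Share of training samples retained as support vectors, in percent."""
    return 100.0 * n_sv / n_train


def rmse(y_true, y_pred) -> float:
    """Root mean square error between actual and predicted outputs."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))


def max_abs_error(y_true, y_pred) -> float:
    """Maximum absolute error, i.e. the L-infinity norm of the deviations."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(np.max(np.abs(y_true - y_pred)))


# 13 support vectors out of 500 training samples, the case reported in Section 4.2.
pct = sv_percentage(13, 500)

# Made-up outputs, used only to exercise the two accuracy metrics.
example_rmse = rmse([0.0, 0.0], [3.0, 4.0])
example_mae = max_abs_error([0.0, 0.0], [3.0, 4.0])
```

With 13 SVs out of 500 samples, `sv_percentage` returns 2.6, which matches the 2.60% quoted for the sinc experiment.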

4.1. Computational Complexity and Scalability

The proposed LI-SVR is formulated as a convex quadratic programming problem with inequality constraints, which is structurally simpler than the standard $\varepsilon$-SVR formulation with its slack-variable constraints. In terms of computational complexity, standard SVR requires solving a quadratic programming (QP) problem with inequality constraints, resulting in a worst-case training complexity between $O(N^2)$ and $O(N^3)$. LSSVR reduces this burden by solving a linear system of size $N$, leading to a complexity of $O(N^3)$. The proposed LI-SVR also involves a convex QP problem, but without slack variables and with only non-negativity constraints. In practice, the effective number of active constraints is significantly smaller due to sparsity, yielding training times comparable to or lower than those of standard SVR. In addition, LI-SVR requires only two hyperparameters, namely the kernel width and the regularization parameter, whereas classical $\varepsilon$-SVR introduces an additional $\varepsilon$-insensitive parameter. This reduction in hyperparameter dimensionality significantly alleviates the computational burden associated with hyperparameter optimization, especially when grid search or cross-validation is employed.

In terms of convergence behavior, LI-SVR can be efficiently solved using standard quadratic programming solvers and exhibits stable convergence due to its convex objective and reduced constraint set. Since the kernel matrix structure is identical to that of LSSVR and SVR, the memory footprint scales quadratically with the number of training samples, which is common to kernel-based methods.

Regarding scalability, LI-SVR shares the limitations and advantages of other kernel-based SVR methods. While the current work focuses on moderate-scale datasets, the proposed formulation is compatible with existing large-scale extensions, such as decomposition methods or approximate kernel techniques, which can be incorporated in future work.

4.2. Static Nonlinear Functions

First, we evaluate the performance of our method on a simple regression task, the sinc function [44], which is defined as:

where

U denotes a random variable with a uniform distribution in the interval

, and

v is Gaussian noise with a mean of 0 and a variance of

. To mitigate potential bias in the regression prediction experiments, the mean value derived from ten independent runs for each dataset is used as the evaluation metric. For training, 500 samples are generated from Equation (55) to establish the regression prediction model using the LI-SVR method. Likewise, 10 independent test datasets are generated from Equation (55), each consisting of 500 samples. The results obtained for classical SVR, LSSVR, and LI-SVR (our approach) are listed in Table 1. In terms of training RMSE, our method performs slightly worse than both SVR and LSSVR, while its testing RMSE lies between the two. However, our method is far sparser than both. The proportion of SVs in our method is 2.60%, indicating that only 13 of the 500 training samples (as shown in Figure 3) were used to construct the regression prediction model, compared to 445 and 500 samples required by SVR and LSSVR, respectively. Figure 4 gives the indices of these SVs obtained by our method, along with the corresponding magnitudes. Clearly, while maintaining modeling accuracy, the proposed LI-SVR yields a much sparser model than classical SVR and LSSVR.
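The two quantities compared in this experiment, RMSE and the proportion of SVs, can be computed as in the following Python sketch. The tolerance used to decide whether a coefficient counts as nonzero and the placeholder coefficient vector are assumptions for illustration; the source does not state how SVs were identified numerically.

```python
import math


def rmse(y_true, y_pred):
    """Root-mean-square error between targets and predictions."""
    n = len(y_true)
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / n)


def support_vector_percentage(coefficients, tol=1e-8):
    """Percentage of training samples with a non-negligible coefficient.

    Assumption: a sample is counted as an SV when |coefficient| > tol.
    """
    n_sv = sum(1 for c in coefficients if abs(c) > tol)
    return 100.0 * n_sv / len(coefficients)


# Hypothetical coefficient vector: 13 nonzero entries among 500 samples,
# matching the counts reported for the sinc experiment (values are made up).
coeffs = [0.0] * 500
for idx in range(0, 500, 40):  # indices 0, 40, ..., 480
    coeffs[idx] = 0.5

print(support_vector_percentage(coeffs))
print(rmse([1.0, 2.0, 3.0], [1.0, 2.0, 5.0]))
```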

4.3. Linear Dynamic System

Consider a linear dynamic system represented by the following transfer function,

with a sampling time

, a total of

samples, and input/output delays both set to 2. The input–output pairs of

for constructing LI-SVR are given as follows,

where

is a random signal defined as

in our MATLAB simulation.
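For readers unfamiliar with how a dynamic system is turned into a regression dataset, the Python sketch below builds input–output pairs from delayed samples with both delays set to 2. The exact regressor layout of the original equation is not reproduced here; the ordering `[y(k-1), y(k-2), u(k-1), u(k-2)]` and the function name `build_regressors` are assumptions following the common NARX-style convention.

```python
def build_regressors(u, y, n_u=2, n_y=2):
    """Return (X, t) with X[i] = [y(k-1..k-n_y), u(k-1..k-n_u)] and t[i] = y(k).

    Assumed layout: past outputs first, then past inputs, most recent first.
    """
    start = max(n_u, n_y)
    X, t = [], []
    for k in range(start, len(y)):
        past_y = [y[k - i] for i in range(1, n_y + 1)]
        past_u = [u[k - i] for i in range(1, n_u + 1)]
        X.append(past_y + past_u)
        t.append(y[k])
    return X, t


# Tiny made-up sequences, only to show the resulting shapes.
u = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [0.1, 0.2, 0.3, 0.4, 0.5]
X, t = build_regressors(u, y)
print(X[0], t[0])
```

Each row of `X` is one training input for the regression model, so a record of N samples with delays of 2 yields N - 2 training pairs.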

Figure 5 shows the training output of LI-SVR with hyperparameter set

, where the blue solid circles represent the SVs. The corresponding magnitudes of the LI-SVR model coefficients for these SVs are given in Figure 6; the SVs account for 10.08% of the total sample size, compared to 66.47% for SVR and 100% for LSSVR. The detailed training/testing RMSEs are presented in Table 2. Although LSSVR achieves the best modeling accuracy, its model structure is significantly more complex, reaching a magnitude of

as indicated by the y-axis in Figure 7. Further, test data were generated based on Equation (57), and LI-SVR achieved favorable predicted outputs, as shown in Figure 8.

4.4. Nonlinear Dynamic System

We consider a nonlinear dynamic system [

44],

with a sampling time

, and a total of

samples. The input–output pairs of

for constructing LI-SVR are the same as in Equation (57). When the hyperparameter set

is chosen as

, the predicted output and SVs are shown in Figure 9, and the indices of the SVs, along with the corresponding model coefficients, are illustrated in Figure 10. The predictive outputs derived from the test data, as governed by Equation (58), are presented in Figure 11. As shown in Table 3, the modeling precision of LI-SVR is intermediate between that of SVR and LSSVR, yet it demonstrates the most favorable sparsity. In particular, the proposed method constructs a regression model using only 13 SVs out of 200 data points (6.5%).