BIF-RCNN: Fusing Background Information for Rotated Object Detection

Abstract

1. Introduction

- We propose a novel framework, BIF-RCNN, which explicitly incorporates background context cues into the overall detection architecture.

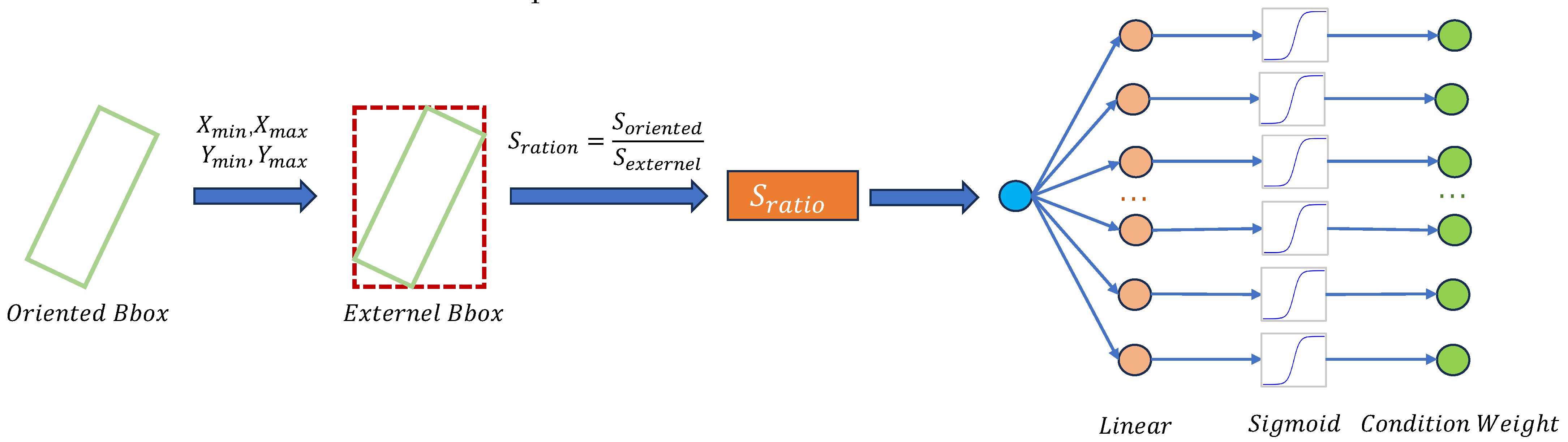

- We introduce a Dual-Level Rotated-Horizontal Feature Fusion Module (DFM) that explicitly couples the features of an oriented proposal with those of its tightest horizontal bounding box. This allows background context outside the rotated region to be distilled into the object representation, yielding a richer, context-aware embedding that improves localization and classification accuracy in cluttered scenes.

- We formulate a joint optimization loss that couples prediction discrepancy with an entropy constraint to disentangle the highly similar features of rotated instances. Built upon standard cross-entropy, the loss penalizes both the mutual information between ambiguous predictions and the entropy of individual logits, driving the model to yield low-uncertainty decisions on hard, rotation-sensitive samples.
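As a rough illustration of the entropy constraint named in the last contribution, the sketch below adds an entropy penalty to standard cross-entropy for a single sample. It is not the paper's PDE Loss: the prediction-difference term is omitted because its exact form is not given in this section, and the weight `lam` and all function names are assumptions made for the example.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def entropy(probs, eps=1e-12):
    """Shannon entropy (in nats) of a probability vector."""
    return -sum(p * math.log(p + eps) for p in probs)

def entropy_regularized_ce(logits, target, lam=0.1):
    """Cross-entropy plus lam * entropy of the predicted distribution.

    `lam` is a hypothetical weight; the paper's actual weighting is not
    stated in this section.
    """
    probs = softmax(logits)
    ce = -math.log(probs[target] + 1e-12)
    return ce + lam * entropy(probs)

# A confident prediction is penalized less than an ambiguous one.
confident = entropy_regularized_ce([5.0, 0.0, 0.0], target=0)
ambiguous = entropy_regularized_ce([1.0, 0.9, 0.0], target=0)
print(confident < ambiguous)
```

With `lam = 0` the function reduces to plain cross-entropy. Increasing `lam` pushes the model toward lower-uncertainty outputs on samples whose class scores are close together.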

2. Related Work

2.1. Feature Extraction Design for Rotated Object Detection

2.2. Loss Function Design for Rotated Object Detection

3. Method

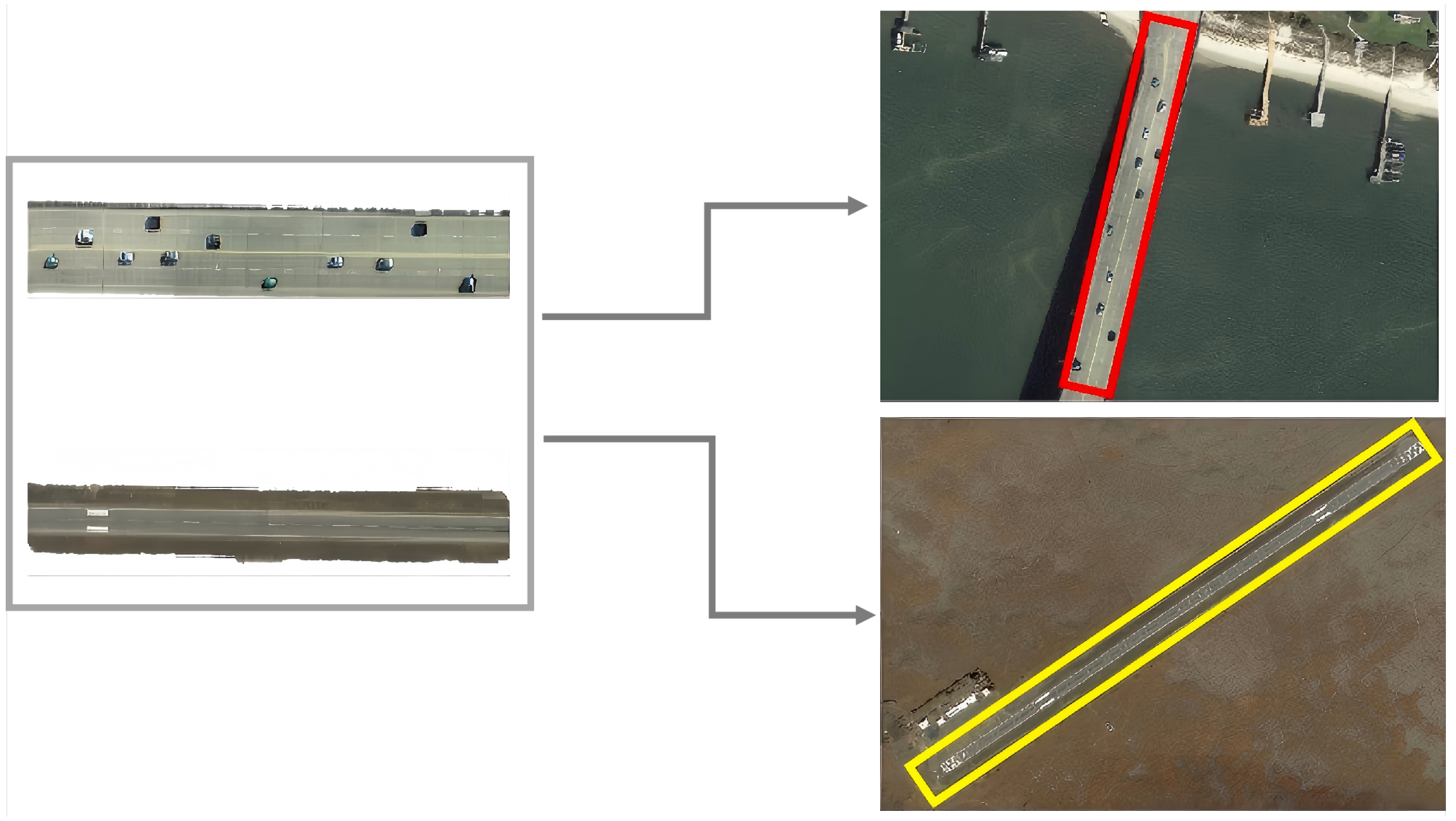

3.1. Dual-Level Rotated-Horizontal Feature Fusion Module (DFM)
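The DFM pairs each oriented proposal with its tightest horizontal bounding box. The sketch below shows only that geometric step, under the assumption that a rotated box is parameterized as center, width, height, and angle in radians. The function names are illustrative, and the feature fusion itself is not reproduced here.

```python
import math

def enclosing_hbox(cx, cy, w, h, theta):
    """Tightest axis-aligned box (x_min, y_min, x_max, y_max) around a
    rotated box with center (cx, cy), size (w, h), and angle theta (radians)."""
    cos_t, sin_t = abs(math.cos(theta)), abs(math.sin(theta))
    half_w = 0.5 * (w * cos_t + h * sin_t)  # half extent along x
    half_h = 0.5 * (w * sin_t + h * cos_t)  # half extent along y
    return (cx - half_w, cy - half_h, cx + half_w, cy + half_h)

def background_fraction(w, h, theta):
    """Share of the horizontal box area that lies outside the rotated box."""
    x0, y0, x1, y1 = enclosing_hbox(0.0, 0.0, w, h, theta)
    hbox_area = (x1 - x0) * (y1 - y0)
    return 1.0 - (w * h) / hbox_area

# An elongated object at 45 degrees: most of the horizontal box is background.
print(round(background_fraction(4.0, 1.0, math.pi / 4), 2))  # 0.68
```

For an axis-aligned box the background fraction is zero. It grows for elongated objects at oblique angles, which is where the horizontal box contributes the most surrounding context.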

3.2. PDE Loss

3.2.1. Prediction Difference

3.2.2. Entropy Constraint

3.3. Training Loss

3.3.1. Classification Loss

3.3.2. Regression Loss

4. Experiment

4.1. Setting

4.1.1. Dataset and Implementation Details

4.1.2. Baseline

4.2. Main Results

4.3. Ablation Study

4.3.1. Ablation on Training Strategies

4.3.2. Ablation on the Joint Optimization Loss

4.3.3. Component-Wise Ablation

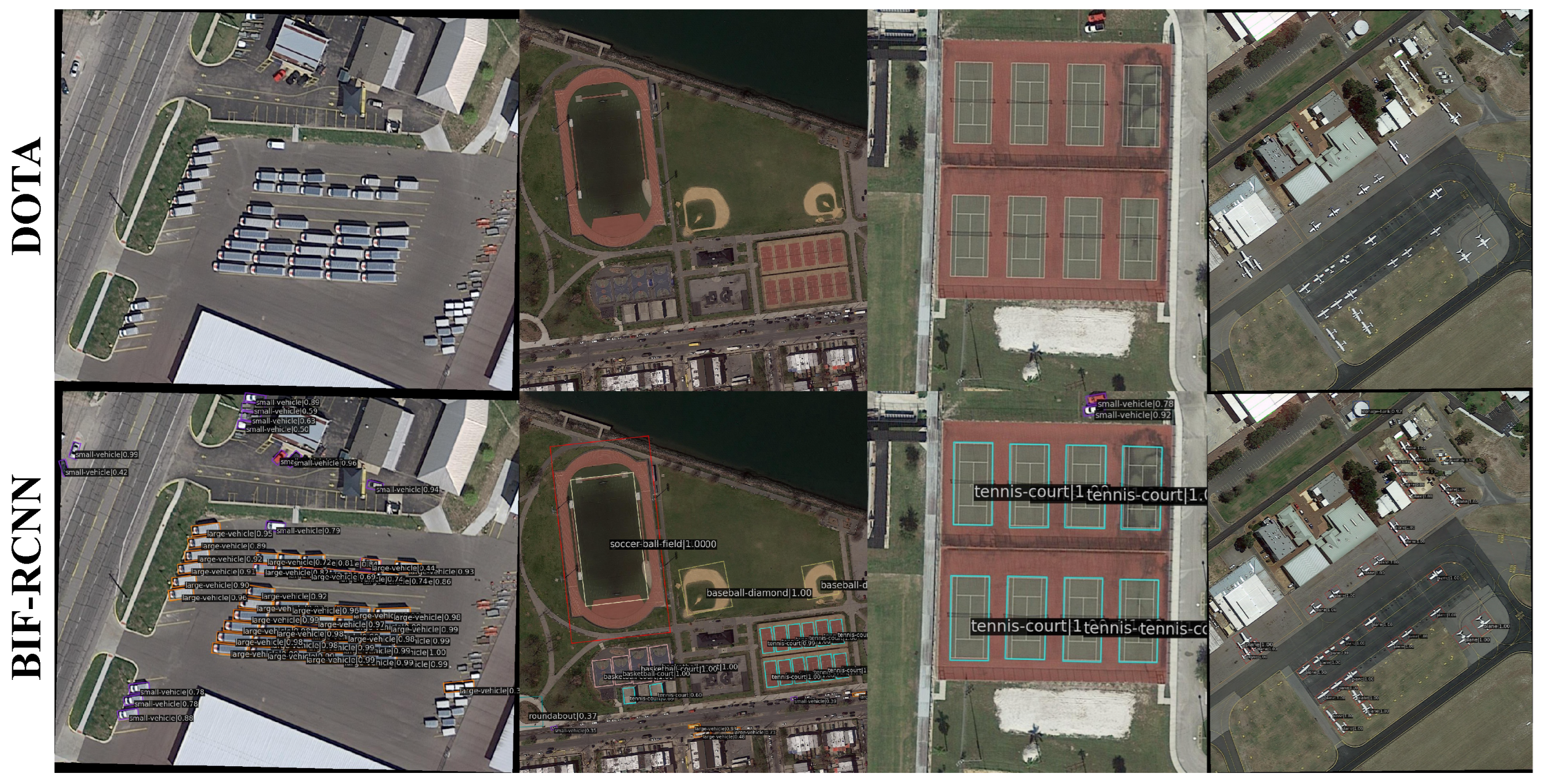

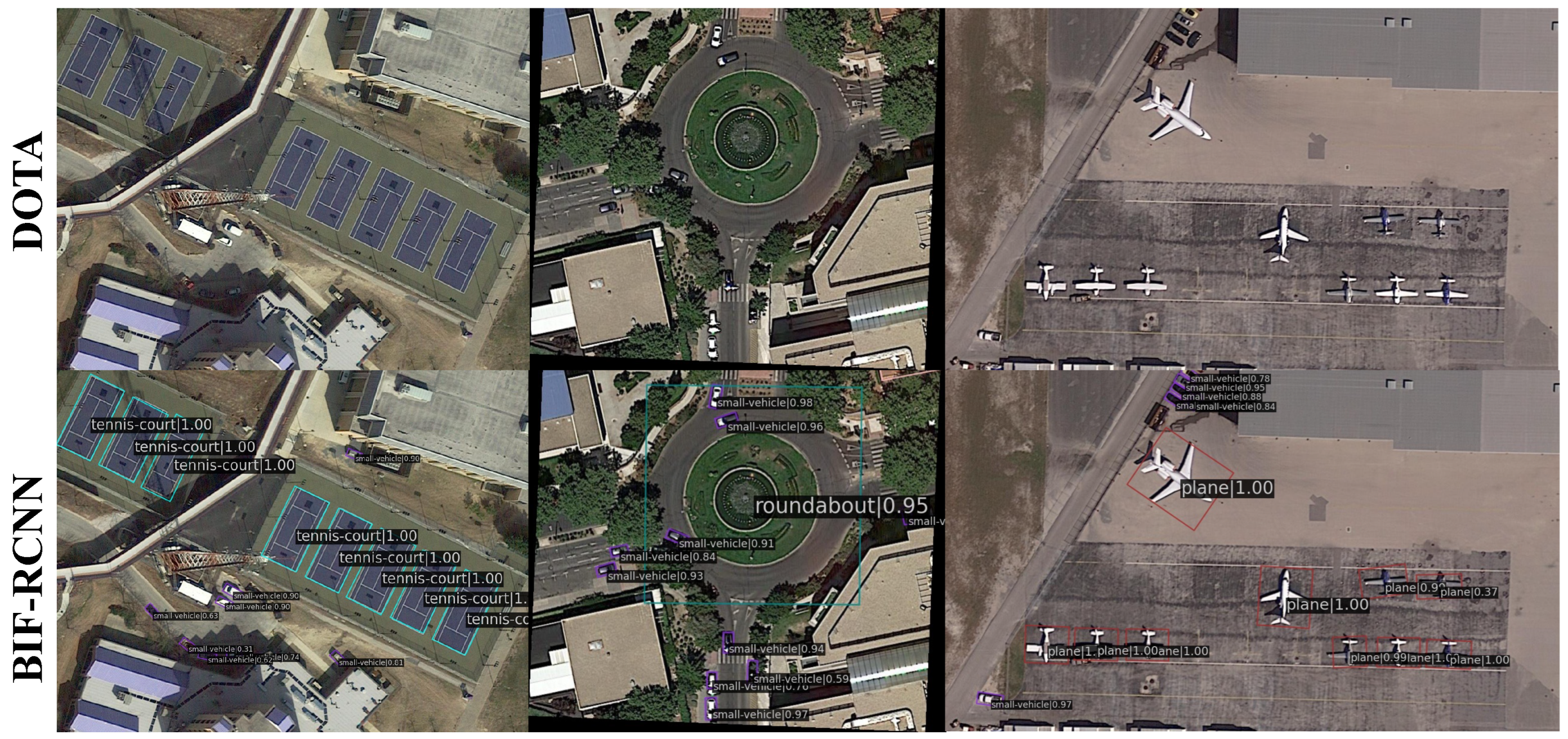

4.4. Visualization of Detection Results

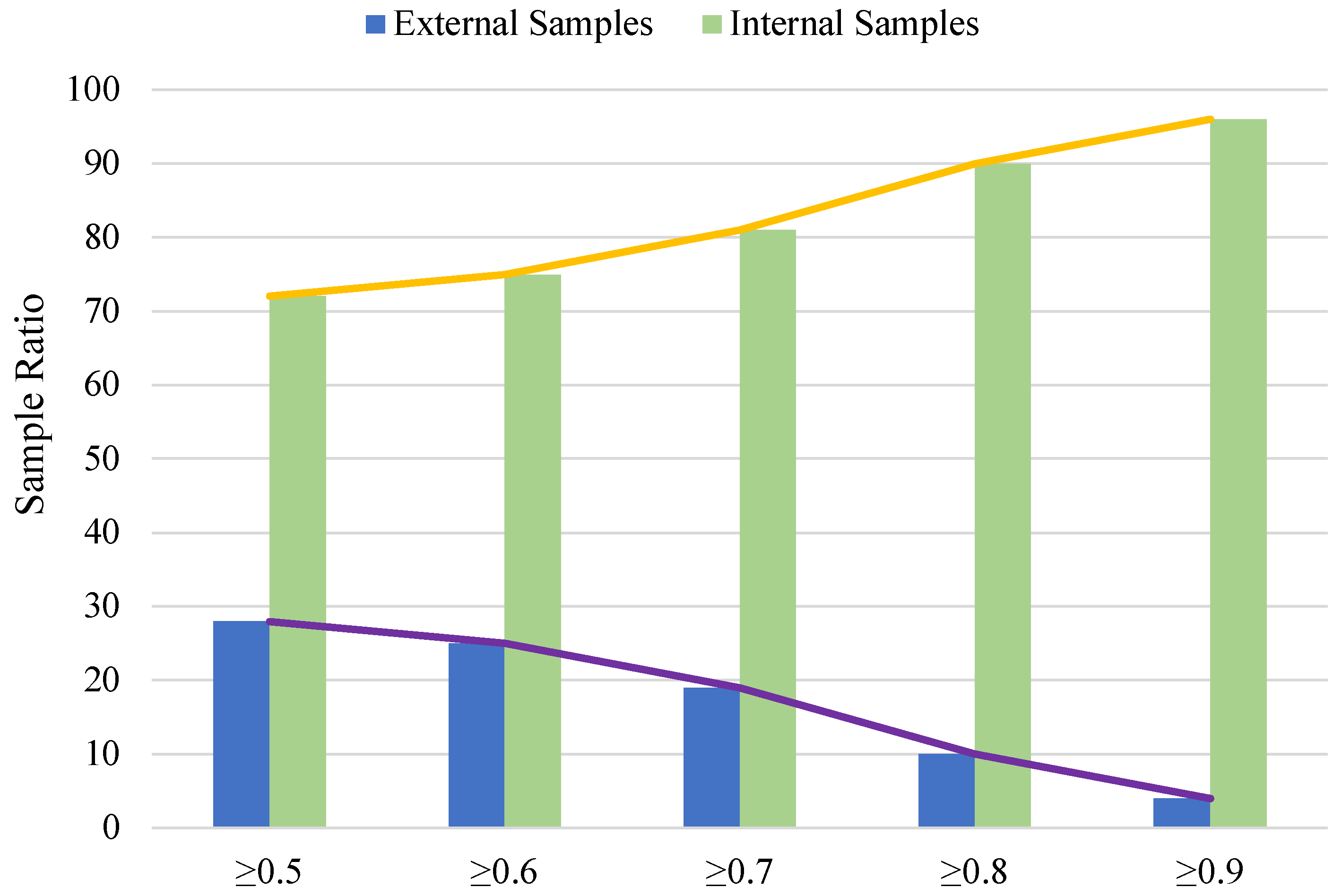

4.5. Effectiveness and Limitations

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| DFM | Dual-Level Rotated-Horizontal Feature Fusion Module |

| PDE Loss | Prediction Difference and Entropy-Constrained Loss |

| BBox | Bounding Box |

| RPN | Region Proposal Network |

| MLP | Multi-Layer Perceptron |

| IoU | Intersection over Union |

| RoIs | Regions of Interest |

| CW | Condition Weight |

| FPN | Feature Pyramid Network |

Appendix A

More Visualizations

References

- Ren, S.; He, K.; Girshick, R.; Sun, J. Faster R-CNN: Towards real-time object detection with region proposal networks. IEEE Trans. Pattern Anal. Mach. Intell. 2016, 39, 1137–1149.

- Redmon, J.; Divvala, S.; Girshick, R.; Farhadi, A. You only look once: Unified, real-time object detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; IEEE: New York, NY, USA, 2016; pp. 779–788.

- Zhang, G.; Ou, Z.; Xue, K.; Sun, J.; Zhu, Y.; Yao, S.; Shen, Y.; Song, M. DGFSD: Bridging the Gap between Dense and Sparse for Fully Sparse 3D Object Detection. In Proceedings of the 33rd ACM International Conference on Multimedia, Dublin, Ireland, 27–31 October 2025; Association for Computing Machinery: New York, NY, USA, 2025; pp. 4669–4678.

- Zhang, G.; Song, Z.; Liu, L.; Ou, Z. FGU3R: Fine-Grained Fusion via Unified 3D Representation for Multimodal 3D Object Detection. In Proceedings of the IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Hyderabad, India, 6–11 April 2025; IEEE: New York, NY, USA, 2025; pp. 1–5.

- Luo, G.; Sun, J.; Jin, L.; Zhou, Y.; Xu, Q.; Fu, R.; Sun, X.; Ji, R. Domain incremental learning for object detection. Pattern Recognit. 2026, 170, 111882.

- Sapkota, R.; Karkee, M. Object detection with multimodal large vision-language models: An in-depth review. Inf. Fusion 2026, 126, 103575.

- Liu, C.; Ma, X.; Yang, X.; Zhang, Y.; Dong, Y. COMO: Cross-mamba interaction and offset-guided fusion for multimodal object detection. Inf. Fusion 2026, 125, 103414.

- Zahid, F.; Rajput, S.; Ali, S.S.A.; Aromoye, I.A. Challenges and Innovations in 3D Object Recognition: The Integration of LiDAR and Camera Sensors for Autonomous Applications. Transp. Res. Procedia 2025, 84, 618–624.

- Xie, X.; Cheng, G.; Wang, J.; Yao, X.; Han, J. Oriented R-CNN for object detection. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Montreal, QC, Canada, 10–17 October 2021; IEEE: New York, NY, USA, 2021; pp. 3520–3529.

- Ding, J.; Xue, N.; Long, Y.; Xia, G.S.; Lu, Q. Learning RoI transformer for oriented object detection in aerial images. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, CA, USA, 15–20 June 2019; IEEE: New York, NY, USA, 2019; pp. 2849–2858.

- Xu, Y.; Fu, M.; Wang, Q.; Wang, Y.; Chen, K.; Xia, G.S.; Bai, X. Gliding vertex on the horizontal bounding box for multi-oriented object detection. IEEE Trans. Pattern Anal. Mach. Intell. 2020, 43, 1452–1459.

- Lin, T.Y.; Goyal, P.; Girshick, R.; He, K.; Dollár, P. Focal loss for dense object detection. In Proceedings of the IEEE International Conference on Computer Vision (ICCV), Venice, Italy, 22–29 October 2017; IEEE: New York, NY, USA, 2017; pp. 2980–2988.

- Han, J.; Ding, J.; Li, J.; Xia, G.S. Align deep features for oriented object detection. IEEE Trans. Geosci. Remote Sens. 2021, 60, 5602511.

- Zeng, Y.; Chen, Y.; Yang, X.; Li, Q.; Yan, J. ARS-DETR: Aspect ratio-sensitive detection transformer for aerial oriented object detection. IEEE Trans. Geosci. Remote Sens. 2024, 62, 5610315.

- Li, Y.; Hou, Q.; Zheng, Z.; Cheng, M.M.; Yang, J.; Li, X. Large selective kernel network for remote sensing object detection. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Paris, France, 1–6 October 2023; IEEE: New York, NY, USA, 2023; pp. 16794–16805.

- Han, J.; Ding, J.; Xue, N.; Xia, G.S. Redet: A rotation-equivariant detector for aerial object detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Nashville, TN, USA, 20–25 June 2021; IEEE: New York, NY, USA, 2021; pp. 2786–2795.

- Su, W.; Jing, D. DDL R-CNN: Dynamic direction learning R-CNN for rotated object detection. Algorithms 2025, 18, 21.

- Ming, Q.; Zhou, Z.; Miao, L.; Zhang, H.; Li, L. Dynamic anchor learning for arbitrary-oriented object detection. arXiv 2020, arXiv:2012.04150.

- Ou, Z.; Chen, Z.; Shen, S.; Fan, L.; Yao, S.; Song, M.; Hui, P. Free3Net: Gliding Free, Orientation Free, and Anchor Free Network for Oriented Object Detection. IEEE Trans. Multimed. 2022, 25, 7089–7100.

- Wang, K.; Liu, J.; Lin, Y.; Wang, T.; Zhang, Z.; Qi, W.; Han, X.; Wen, R. ASL-OOD: Hierarchical Contextual Feature Fusion with Angle-Sensitive Loss for Oriented Object Detection. Comput. Mater. Contin. 2025, 82, 1879.

- Wang, W.; Cai, Y.; Luo, Z.; Liu, W.; Wang, T.; Li, Z. SA3Det: Detecting Rotated Objects via Pixel-Level Attention and Adaptive Labels Assignment. Remote Sens. 2024, 16, 2496.

- Dang, M.; Liu, G.; Kong, A.W.K.; Zheng, Z.; Luo, N.; Pan, R. RO2-DETR: Rotation-equivariant oriented object detection transformer with 1D rotated convolution kernel. ISPRS J. Photogramm. Remote Sens. 2025, 228, 166–178.

- Wang, S.; Jiang, H.; Yang, J.; Ma, X.; Chen, J. AMFEF-DETR: An End-to-End Adaptive Multi-Scale Feature Extraction and Fusion Object Detection Network Based on UAV Aerial Images. Drones 2024, 8, 523.

- Li, S.; Yan, F.; Liu, Y.; Shen, Y.; Liu, L.; Wang, K. A multi-scale rotated ship targets detection network for remote sensing images in complex scenarios. Sci. Rep. 2025, 15, 2510.

- Zhou, W.; Liu, X.; Zheng, Y.; Zhang, D.; Xiang, H. AFPN Based YOLOX for Rotation Object Detection in Remote Sensing Image. In Proceedings of the 2024 China Automation Congress (CAC), Qingdao, China, 1–3 November 2024; IEEE: New York, NY, USA, 2024; pp. 5841–5846.

- Thai, C.; Trang, M.X.; Ninh, H.; Ly, H.H.; Le, A.S. Enhancing rotated object detection via anisotropic Gaussian bounding box and Bhattacharyya distance. Neurocomputing 2025, 623, 129432.

- Ma, S.; Xu, Y. FPDIoU Loss: A loss function for efficient bounding box regression of rotated object detection. Image Vis. Comput. 2025, 154, 105381.

- Li, W.; Shang, R.; Ju, Z.; Feng, J.; Xu, S.; Zhang, W. Ellipse IoU Loss: Better Learning for Rotated Bounding Box Regression. IEEE Geosci. Remote Sens. Lett. 2024, 21, 6001705.

- Xia, G.S.; Bai, X.; Ding, J.; Zhu, Z.; Belongie, S.; Luo, J.; Datcu, M.; Pelillo, M.; Zhang, L. DOTA: A large-scale dataset for object detection in aerial images. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–23 June 2018; IEEE: New York, NY, USA, 2018; pp. 3974–3983.

- Everingham, M.; Van Gool, L.; Williams, C.K.; Winn, J.; Zisserman, A. The pascal visual object classes (voc) challenge. Int. J. Comput. Vis. 2010, 88, 303–338.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; IEEE: New York, NY, USA, 2016; pp. 770–778.

- Lin, T.Y.; Dollár, P.; Girshick, R.; He, K.; Hariharan, B.; Belongie, S. Feature pyramid networks for object detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; IEEE: New York, NY, USA, 2017; pp. 2117–2125.

- Yi, J.; Wu, P.; Liu, B.; Huang, Q.; Qu, H.; Metaxas, D. Oriented object detection in aerial images with box boundary-aware vectors. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision (WACV), Virtual, 5–9 January 2021; IEEE: New York, NY, USA, 2021; pp. 2150–2159.

- Yang, X.; Liu, Q.; Yan, J.; Li, A.; Zhang, Z.; Yu, G. R3det: Refined single-stage detector with feature refinement for rotating object. arXiv 2019, arXiv:1908.05612.

- Lin, Y.; Feng, P.; Guan, J.; Wang, W.; Chambers, J. IENet: Interacting embranchment one stage anchor free detector for orientation aerial object detection. arXiv 2019, arXiv:1912.00969.

- Yang, X.; Zhou, Y.; Zhang, G.; Yang, J.; Wang, W.; Yan, J.; Zhang, X.; Tian, Q. The KFIoU loss for rotated object detection. arXiv 2022, arXiv:2201.12558.

- Chen, Z.; Chen, K.; Lin, W.; See, J.; Yu, H.; Ke, Y.; Yang, C. Piou loss: Towards accurate oriented object detection in complex environments. In Proceedings of the European Conference on Computer Vision (ECCV); Springer: Cham, Switzerland, 2020; pp. 195–211.

- Pan, X.; Ren, Y.; Sheng, K.; Dong, W.; Yuan, H.; Guo, X.; Ma, C.; Xu, C. Dynamic refinement network for oriented and densely packed object detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 13–19 June 2020; IEEE: New York, NY, USA, 2020; pp. 11207–11216.

- Qian, W.; Yang, X.; Peng, S.; Yan, J.; Guo, Y. Learning modulated loss for rotated object detection. In Proceedings of the AAAI Conference on Artificial Intelligence, Virtual, 19–21 May 2021; AAAI Press: Palo Alto, CA, USA, 2021; Volume 35, pp. 2458–2466.

- Ming, Q.; Miao, L.; Zhou, Z.; Dong, Y. CFC-Net: A critical feature capturing network for arbitrary-oriented object detection in remote-sensing images. IEEE Trans. Geosci. Remote Sens. 2021, 60, 5605814.

- Qian, W.; Yang, X.; Peng, S.; Zhang, X.; Yan, J. RSDet++: Point-based modulated loss for more accurate rotated object detection. IEEE Trans. Circuits Syst. Video Technol. 2022, 32, 7869–7879.

- Guo, Z.; Liu, C.; Zhang, X.; Jiao, J.; Ji, X.; Ye, Q. Beyond bounding-box: Convex-hull feature adaptation for oriented and densely packed object detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Nashville, TN, USA, 20–25 June 2021; IEEE: New York, NY, USA, 2021; pp. 8792–8801.

- Xie, X.; Cheng, G.; Rao, C.; Lang, C.; Han, J. Oriented object detection via contextual dependence mining and penalty-incentive allocation. IEEE Trans. Geosci. Remote Sens. 2024, 62, 5618010.

- Jiang, Y.; Zhu, X.; Wang, X.; Yang, S.; Li, W.; Wang, H.; Fu, P.; Luo, Z. R2CNN: Rotational region CNN for orientation robust scene text detection. arXiv 2017, arXiv:1706.09579.

- Yang, X.; Yang, J.; Yan, J.; Zhang, Y.; Zhang, T.; Guo, Z.; Sun, X.; Fu, K. Scrdet: Towards more robust detection for small, cluttered and rotated objects. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Seoul, Republic of Korea, 27 October–2 November 2019; IEEE: New York, NY, USA, 2019; pp. 8232–8241.

- Cheng, G.; Wang, J.; Li, K.; Xie, X.; Lang, C.; Yao, Y.; Han, J. Anchor-free oriented proposal generator for object detection. IEEE Trans. Geosci. Remote Sens. 2022, 60, 5625411.

| Category | Representative Methods | Key Mechanism | Main Limitations |

|---|---|---|---|

| Feature Extraction Design | ASL-OOD [20] | Swin Transformer + Context Fusion | Focus predominantly on internal object features or suppressing background noise, neglecting the potential discriminative value of external background context for confusing targets. |

| | SA3Det [21] | Pixel-level Self-Attention | |

| | RO2-DETR [22] | Rotation-Equivariant Attention | |

| | AMFEF-DETR [23] | Multi-scale Feature Interaction | |

| Loss Function Design | KLD Loss [25] | Kullback–Leibler Divergence | Prioritize geometric alignment precision (e.g., IoU or Gaussian distribution), but lack explicit modeling of classification uncertainty for hard-to-distinguish samples. |

| | Bhattacharyya [26] | Anisotropic Gaussian Distribution | |

| | FPDIoU Loss [27] | Point-based IoU Approximation | |

| | Ellipse IoU [28] | Inscribed Ellipse IoU | |

| Methods | PL | BD | BR | GTF | SV | LV | SH | TC | BC | ST | SBF | RA | HA | SP | HC | mAP |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| one-stage | | | | | | | | | | | | | | | | |

| IENet | 80.20 | 64.54 | 39.82 | 32.07 | 49.71 | 65.01 | 52.58 | 81.45 | 44.66 | 78.51 | 46.54 | 56.73 | 64.40 | 64.24 | 36.75 | 57.10 |

| KFIoU | 88.83 | 77.51 | 47.79 | 74.28 | 71.27 | 62.72 | 74.75 | 90.72 | 82.34 | 81.61 | 58.44 | 64.23 | 64.39 | 67.87 | 44.07 | 70.05 |

| PIoU | 80.90 | 69.70 | 24.10 | 60.20 | 38.30 | 64.40 | 64.80 | 90.90 | 77.20 | 70.40 | 46.50 | 37.10 | 57.10 | 61.90 | 64.00 | 60.50 |

| DRN | 88.91 | 80.22 | 43.52 | 63.35 | 73.48 | 70.69 | 84.94 | 90.14 | 83.85 | 84.11 | 50.12 | 58.41 | 67.62 | 68.60 | 52.50 | 70.70 |

| BBAVectors | 88.35 | 79.96 | 50.69 | 62.18 | 78.43 | 78.98 | 87.94 | 90.85 | 83.58 | 84.35 | 54.13 | 60.24 | 65.22 | 64.28 | 55.70 | 72.32 |

| RSDet | 89.80 | 82.90 | 48.60 | 65.20 | 69.50 | 70.10 | 70.20 | 90.50 | 85.60 | 83.40 | 62.50 | 63.90 | 65.60 | 67.20 | 68.00 | 72.20 |

| CFC-Net | 89.08 | 80.41 | 52.41 | 70.02 | 76.28 | 78.11 | 87.21 | 90.89 | 84.47 | 85.64 | 60.51 | 61.52 | 67.82 | 68.02 | 50.09 | 73.50 |

| R3Det | 88.76 | 83.09 | 50.91 | 67.27 | 76.23 | 80.39 | 86.72 | 90.78 | 84.68 | 83.24 | 61.98 | 61.35 | 66.91 | 70.63 | 53.94 | 73.79 |

| S2A-Net | 89.11 | 82.84 | 48.37 | 71.11 | 78.11 | 78.39 | 87.25 | 90.83 | 84.90 | 85.64 | 60.36 | 62.60 | 65.26 | 69.13 | 57.94 | 74.12 |

| RSDet++ | 86.80 | 82.70 | 54.60 | 71.70 | 76.60 | 71.20 | 83.50 | 87.40 | 83.40 | 85.30 | 72.40 | 62.90 | 70.90 | 72.30 | 70.40 | 75.40 |

| CFA | 88.07 | 74.57 | 49.25 | 74.43 | 79.02 | 74.14 | 86.76 | 90.87 | 80.39 | 86.03 | 48.49 | 58.89 | 64.38 | 66.87 | 22.51 | 69.63 |

| DFDet | 88.92 | 79.25 | 48.40 | 70.00 | 80.22 | 78.85 | 87.21 | 90.90 | 83.13 | 83.98 | 60.07 | 66.49 | 68.27 | 76.78 | 58.11 | 74.71 |

| two-stage | | | | | | | | | | | | | | | | |

| R2CNN | 80.94 | 65.67 | 35.34 | 67.44 | 59.92 | 50.91 | 55.81 | 90.67 | 66.92 | 72.39 | 55.06 | 52.23 | 55.14 | 53.35 | 48.22 | 60.60 |

| RoI-Trans | 88.64 | 78.52 | 43.44 | 75.92 | 68.81 | 73.60 | 83.59 | 90.74 | 77.27 | 81.46 | 58.39 | 53.54 | 62.83 | 58.93 | 47.67 | 69.56 |

| SCRDet | 89.41 | 78.83 | 50.02 | 65.59 | 69.96 | 57.63 | 72.26 | 90.73 | 81.41 | 84.39 | 52.76 | 63.62 | 62.01 | 67.62 | 61.16 | 69.83 |

| AOPG | 89.27 | 83.49 | 52.50 | 69.97 | 73.51 | 82.31 | 87.95 | 90.89 | 87.64 | 84.71 | 60.01 | 66.12 | 74.19 | 68.30 | 57.80 | 75.24 |

| BIF-RCNN (Ours) | 89.28 | 83.03 | 53.37 | 72.64 | 79.01 | 82.07 | 87.97 | 90.89 | 87.18 | 84.91 | 62.03 | 66.37 | 73.84 | 68.48 | 56.30 | 75.82 |
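The mAP column is the arithmetic mean of the 15 per-category AP values in each row. As a quick consistency check, the snippet below recomputes it for two rows copied from the table. The variable and function names are illustrative only.

```python
# Per-category AP values copied from the table, in the order PL ... HC.
AOPG = [89.27, 83.49, 52.50, 69.97, 73.51, 82.31, 87.95, 90.89,
        87.64, 84.71, 60.01, 66.12, 74.19, 68.30, 57.80]
BIF_RCNN = [89.28, 83.03, 53.37, 72.64, 79.01, 82.07, 87.97, 90.89,
            87.18, 84.91, 62.03, 66.37, 73.84, 68.48, 56.30]

def mean_ap(per_class_ap):
    """Mean average precision as the unweighted mean over categories."""
    return sum(per_class_ap) / len(per_class_ap)

print(round(mean_ap(AOPG), 2))      # 75.24
print(round(mean_ap(BIF_RCNN), 2))  # 75.82
```

Both values match the reported mAP column, so the 0.58-point margin over AOPG follows directly from the per-category numbers.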

| Strategy ID | Description |

|---|---|

| 1 | Jointly train both the horizontal and rotated detection heads, along with the dynamic weighting parameters, for 12 epochs. |

| 2 | Train the horizontal and rotated heads separately for 12 epochs each. During the training of one head, the parameters of the other head are frozen. After both heads are trained, freeze their parameters and train only the dynamic weighting parameters for 4 additional epochs. The dynamic fusion is only activated when the score difference between the top two predicted categories of the rotated head is less than 0.05. |

| 3 | Train the horizontal and rotated heads separately for 12 epochs each, with the parameters of the other head frozen during each phase. Then, jointly fine-tune both heads along with the dynamic weighting parameters for an additional 4 epochs. |
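The activation rule in Strategy 2 can be sketched as a margin test on the rotated head's class scores. The 0.05 threshold comes from the table above. The convex combination used for fusion and the `weight` parameter are assumptions for illustration, since the form of the dynamic weighting is not specified in this section.

```python
def top2_margin(scores):
    """Difference between the highest and second-highest class scores."""
    ordered = sorted(scores, reverse=True)
    return ordered[0] - ordered[1]

def fuse_if_ambiguous(rot_scores, hor_scores, weight=0.5, margin=0.05):
    """Return rotated-head scores unless its top-two margin is below `margin`.

    When the rotated head is ambiguous, blend in the horizontal-head scores.
    The blend below is a hypothetical stand-in for the learned dynamic weights.
    """
    if top2_margin(rot_scores) >= margin:
        return list(rot_scores)
    return [weight * r + (1.0 - weight) * h
            for r, h in zip(rot_scores, hor_scores)]

# Confident rotated head: fusion is skipped.
print(fuse_if_ambiguous([0.90, 0.05, 0.05], [0.10, 0.80, 0.10]))
# Ambiguous rotated head (margin 0.03): horizontal scores are blended in.
print(fuse_if_ambiguous([0.48, 0.45, 0.07], [0.20, 0.70, 0.10]))
```

Gating on the margin leaves confident rotated-head predictions untouched, so the horizontal branch only influences samples where the two leading categories are nearly tied.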

| Methods | PL | BD | BR | GTF | SV | LV | SH | TC | BC | ST | SBF | RA | HA | SP | HC | mAP |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Baseline (heads = 0) | 89.46 | 82.12 | 54.78 | 70.86 | 78.93 | 83.00 | 88.20 | 90.90 | 87.50 | 84.68 | 63.97 | 67.69 | 74.94 | 68.84 | 52.28 | 75.87 |

| Strategy-1 | 88.95 | 74.04 | 45.41 | 67.12 | 73.40 | 77.79 | 87.37 | 90.86 | 83.14 | 83.76 | 45.99 | 57.63 | 65.52 | 62.73 | 41.61 | 69.69 |

| Strategy-2 | 88.35 | 82.98 | 51.90 | 71.12 | 77.61 | 77.58 | 87.72 | 90.90 | 85.99 | 83.51 | 59.13 | 63.20 | 66.78 | 71.47 | 60.69 | 74.66 |

| Strategy-3 | 89.39 | 82.66 | 52.03 | 70.60 | 77.37 | 77.38 | 87.72 | 90.90 | 85.90 | 83.62 | 59.02 | 61.30 | 66.63 | 71.02 | 60.65 | 74.41 |

| Num of Heads | PL | BD | BR | GTF | SV | LV | SH | TC | BC | ST | SBF | RA | HA | SP | HC | mAP |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Baseline (heads = 0) | 89.46 | 82.12 | 54.78 | 70.86 | 78.93 | 83.00 | 88.20 | 90.90 | 87.50 | 84.68 | 63.97 | 67.69 | 74.94 | 68.84 | 52.28 | 75.87 |

| heads = 1 | 89.29 | 75.82 | 50.55 | 68.61 | 77.83 | 77.46 | 87.50 | 90.89 | 85.97 | 84.84 | 60.21 | 62.42 | 67.17 | 68.27 | 50.37 | 73.15 |

| heads = 2 | 89.51 | 75.95 | 49.63 | 65.35 | 78.29 | 77.78 | 87.47 | 90.90 | 84.19 | 83.60 | 56.26 | 62.65 | 66.71 | 70.08 | 56.11 | 72.97 |

| heads = 4 | 89.30 | 81.58 | 52.63 | 70.93 | 77.87 | 77.79 | 87.67 | 90.89 | 84.94 | 84.75 | 60.69 | 65.36 | 67.34 | 66.19 | 55.94 | 74.26 |

| heads = 8 | 89.33 | 83.24 | 52.81 | 70.37 | 78.57 | 77.84 | 87.71 | 90.89 | 83.77 | 84.20 | 59.91 | 66.83 | 67.39 | 68.09 | 48.40 | 73.96 |

| Accuracy Change | Specific Categories |

|---|---|

| Significant Increase (>+1% mAP) | BD, SP, HC |

| No Significant Change (within ±1% mAP) | PL, BR, GTF, SV, SH, TC, ST, RA |

| Significant Decrease (<−1% mAP) | LV, BC, SBF, HA |

| Num of Heads | PL | BD | BR | GTF | SV | LV | SH | TC | BC | ST | SBF | RA | HA | SP | HC | mAP |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Baseline (epoch = 12) | 89.46 | 82.12 | 54.78 | 70.86 | 78.93 | 83.00 | 88.20 | 90.90 | 87.50 | 84.68 | 63.97 | 67.69 | 74.94 | 68.84 | 52.28 | 75.87 |

| heads = 4 (epoch = 12) | 89.40 | 80.81 | 52.20 | 70.51 | 78.19 | 77.30 | 87.51 | 90.90 | 84.96 | 85.06 | 59.17 | 63.39 | 67.32 | 66.41 | 52.11 | 73.68 |

| heads = 4 (epoch = 16) | 89.33 | 80.81 | 52.92 | 70.01 | 78.04 | 77.60 | 87.60 | 90.87 | 85.62 | 85.07 | 61.55 | 66.62 | 66.71 | 67.36 | 52.52 | 74.23 |

| heads = 8 (epoch = 12) | 89.37 | 75.63 | 51.95 | 71.96 | 77.66 | 81.34 | 87.59 | 90.88 | 85.82 | 84.33 | 57.87 | 68.91 | 67.21 | 68.16 | 53.57 | 74.15 |

| heads = 8 (epoch = 16) | 89.27 | 80.74 | 53.01 | 71.61 | 77.71 | 77.46 | 87.50 | 90.86 | 85.91 | 84.49 | 61.43 | 66.79 | 67.49 | 68.25 | 53.98 | 74.43 |

| Methods | PL | BD | BR | GTF | SV | LV | SH | TC | BC | ST | SBF | RA | HA | SP | HC | mAP |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Baseline | 89.46 | 82.12 | 54.78 | 70.86 | 78.93 | 83.00 | 88.20 | 90.90 | 87.50 | 84.68 | 63.97 | 67.69 | 74.94 | 68.84 | 52.28 | 75.87 |

| Baseline + DFM | 89.27 | 82.30 | 53.24 | 72.14 | 78.67 | 82.62 | 87.94 | 90.90 | 85.89 | 84.57 | 62.04 | 64.73 | 74.56 | 68.87 | 57.19 | 75.66 |

| | | mAP |

|---|---|---|

| 0.6 | 0.4 | 75.25 |

| 0.7 | 0.3 | 75.69 |

| 0.8 | 0.2 | 75.82 |

| | mAP |

|---|---|

| 0.4 | 75.79 |

| 0.6 | 75.11 |

| 0.8 | 70.90 |

| Methods | PL | BD | BR | GTF | SV | LV | SH | TC | BC | ST | SBF | RA | HA | SP | HC | mAP |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Baseline | 89.46 | 82.12 | 54.78 | 70.86 | 78.93 | 83.00 | 88.20 | 90.90 | 87.50 | 84.68 | 63.97 | 67.69 | 74.94 | 68.84 | 52.28 | 75.87 |

| Baseline + DFM | 89.27 | 82.30 | 53.24 | 72.14 | 78.67 | 82.62 | 87.94 | 90.90 | 85.89 | 84.57 | 62.04 | 64.73 | 74.56 | 68.87 | 57.19 | 75.66 |

| Baseline + PDE | 89.34 | 81.93 | 52.49 | 73.12 | 79.00 | 81.68 | 88.08 | 90.90 | 86.05 | 84.58 | 60.58 | 64.03 | 67.94 | 68.65 | 56.96 | 75.02 |

| Baseline + DFM + PDE | 89.28 | 83.03 | 53.37 | 72.64 | 79.01 | 82.07 | 87.97 | 90.89 | 87.18 | 84.91 | 62.03 | 66.37 | 73.84 | 68.48 | 56.30 | 75.82 |
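To read the component-wise table per category, one can subtract the baseline row from the full-model row and flag changes larger than one AP point. The snippet below does this for the Baseline and Baseline + DFM + PDE rows. The ±1 threshold and the helper name are choices made for this example.

```python
CATEGORIES = ["PL", "BD", "BR", "GTF", "SV", "LV", "SH", "TC",
              "BC", "ST", "SBF", "RA", "HA", "SP", "HC"]
# Rows copied from the table above.
BASELINE = [89.46, 82.12, 54.78, 70.86, 78.93, 83.00, 88.20, 90.90,
            87.50, 84.68, 63.97, 67.69, 74.94, 68.84, 52.28]
DFM_PDE = [89.28, 83.03, 53.37, 72.64, 79.01, 82.07, 87.97, 90.89,
           87.18, 84.91, 62.03, 66.37, 73.84, 68.48, 56.30]

def split_by_change(ref, new, names, threshold=1.0):
    """Return (gains, drops): categories whose AP moved by more than threshold."""
    gains, drops = [], []
    for name, r, n in zip(names, ref, new):
        delta = n - r
        if delta > threshold:
            gains.append(name)
        elif delta < -threshold:
            drops.append(name)
    return gains, drops

gains, drops = split_by_change(BASELINE, DFM_PDE, CATEGORIES)
print(gains)  # ['GTF', 'HC']
print(drops)  # ['BR', 'SBF', 'RA', 'HA']
```

The largest gain is on HC (52.28 to 56.30), while the overall mAP stays within 0.05 points of the baseline (75.87 versus 75.82).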

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Zhao, J.; Xu, X.; Wang, S.; Zhang, P.; Shen, S.; Zeng, H.; Bu, X.; Shen, Y.; Xue, K.; Zong, P.; et al. BIF-RCNN: Fusing Background Information for Rotated Object Detection. Algorithms 2026, 19, 139. https://doi.org/10.3390/a19020139


Chicago/Turabian Style: Zhao, Jianbin, Xing Xu, Shaoying Wang, Pengfei Zhang, Shengyi Shen, Hui Zeng, Xiangshuai Bu, Yiran Shen, Kaiwen Xue, Ping Zong, et al. 2026. "BIF-RCNN: Fusing Background Information for Rotated Object Detection." Algorithms 19, no. 2: 139. https://doi.org/10.3390/a19020139

APA Style: Zhao, J., Xu, X., Wang, S., Zhang, P., Shen, S., Zeng, H., Bu, X., Shen, Y., Xue, K., Zong, P., Zhang, G., Ou, Z., Song, M., & Zhu, Y. (2026). BIF-RCNN: Fusing Background Information for Rotated Object Detection. Algorithms, 19(2), 139. https://doi.org/10.3390/a19020139