An In-Depth Review of Speech Enhancement Algorithms: Classifications, Underlying Principles, Challenges, and Emerging Trends

Abstract

1. Introduction

2. Speech Enhancement Algorithms (SEAs)

2.1. Mathematical Formulation of Speech Enhancement Problem

2.2. Single-Channel Speech Enhancement Algorithms (SC-SEA)

2.2.1. Traditional Speech Enhancement Algorithms

- Methods Based on Transform Domain

These methods operate in a transform domain: the signal is mapped into another domain that exposes properties hidden in the waveform, which are then exploited in the desired processing. Many transforms are applied in SE, such as the STFT, DCT, or wavelet transforms. The most significant SEAs applied in the transform domain are listed below:

- Spectral Subtraction (SS)

Spectral subtraction (SS) is historically one of the first algorithms proposed for noise reduction [27]. It rests on a simple principle: if the noise is assumed to be additive, an estimate of the clean speech spectrum can be obtained by subtracting the estimated noise spectrum from the noisy spectrum. To do this, the noise spectrum is estimated (and updated) during periods when no speech is present [4]. The noise power spectrum can also be estimated statistically by tracking the minimum of a smoothed power spectrum of the noisy speech signal. Spectral-subtractive algorithms have been evaluated for a wide range of noise types at varying levels using a single-channel speech source, and they are suitable for voice communication systems [28].
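The subtraction principle described above can be sketched for a single frame as follows. This is a minimal illustration and not the implementation of [27] or [28]: the function name `spectral_subtraction_frame`, the `floor` parameter, the power-domain subtraction, and the reuse of the noisy phase are all assumptions made here for illustration.

```python
import numpy as np

def spectral_subtraction_frame(noisy_frame, noise_power, floor=0.01):
    """Power spectral subtraction on one (already windowed) frame.

    noise_power is an estimate of the noise power spectrum with
    len(noisy_frame) // 2 + 1 bins, e.g. averaged over speech-free frames.
    """
    noisy_frame = np.asarray(noisy_frame, dtype=float)
    spectrum = np.fft.rfft(noisy_frame)
    noisy_power = np.abs(spectrum) ** 2
    # Subtract the noise estimate; a small spectral floor (assumed here)
    # prevents negative power values.
    clean_power = np.maximum(noisy_power - noise_power, floor * noisy_power)
    gain = np.sqrt(clean_power / np.maximum(noisy_power, 1e-12))
    # The noisy phase is reused: only the magnitude is modified.
    return np.fft.irfft(gain * spectrum, n=len(noisy_frame))
```

In a complete system, the frames would be windowed, processed one by one, and recombined by overlap-add, with `noise_power` updated whenever no speech is detected.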

- Statistical Methods

Statistical speech enhancement methods are a major subclass of transform domain single-channel enhancement algorithms. They rely on statistical models of clean speech and noise in the spectral domain (such as the STFT or DCT domain) and estimate the clean speech spectrum with optimal estimators derived from Bayesian estimation theory, MMSE theory, or MAP-based inference. These methods are the backbone of classical speech enhancement techniques [4] and include the following:

STSA-MMSE

The Short-Time Spectral Amplitude Minimum Mean Square Error (STSA-MMSE) estimator treats speech enhancement as a statistical estimation problem in the time–frequency domain. In the STFT domain, the noisy signal is modeled as the sum of clean speech and additive noise. The noise is commonly assumed to follow a Gaussian distribution, while the speech spectral amplitude is modeled using an appropriate statistical prior. Under these assumptions, a closed-form optimal gain function was derived that minimizes the expected squared error between the actual and estimated spectral amplitudes [29]. A key advantage of the STSA-MMSE estimator is that it suppresses noise without introducing the musical noise artifacts that affect spectral subtraction algorithms. It achieves this by combining the a priori and a posteriori SNRs. The estimator was shown to provide significantly better perceptual quality than traditional spectral subtraction methods.

The STSA-MMSE estimator is the basis for numerous widely used statistical speech enhancement techniques, such as the Minimum Mean Square Error Log-Spectral Amplitude (MMSE-LSA) estimator [29] and the Optimally Modified LSA estimator [30]. It remains one of the most important methods for single-channel speech enhancement and is regarded as a cornerstone of noise suppression based on statistical models.
Mojtaba Bahrami and Neda Faraji [31] presented a modern extension of the STSA-MMSE algorithm that models the amplitude of the speech spectrum using the Weibull distribution and incorporates speech presence uncertainty, which improves the performance of the STSA-MMSE algorithm compared to traditional statistical models.

Maximum A Posteriori (MAP)

The MAP estimator is a statistical model-based speech enhancement technique that estimates the clean speech spectral components by maximizing the posterior probability given the noisy observation. Unlike the MMSE estimator, which computes the mean of the posterior distribution, the MAP estimator selects its most probable value. Assuming Gaussian noise and a statistical model for speech, the MAP estimator yields gain functions that depend on the a priori and a posteriori SNRs. When the posterior distribution is symmetric and unimodal, the MAP and MMSE estimators produce identical results; otherwise, MAP offers a practical alternative with slightly stronger suppression at low SNR conditions [4]. Thomas Lotter and Peter Vary [32] presented two speech spectral amplitude estimators based on MAP estimation using super-Gaussian statistical modeling of the speech Fourier coefficient amplitudes. The model assumes that the speech spectral amplitudes follow distributions such as the Laplace or gamma distribution rather than the traditional Gaussian distribution. The results show that the proposed estimators achieve better speech quality than traditional algorithms such as STSA-MMSE and MMSE-LSA, which assume Gaussian speech and noise models. Raziyeh Ranjbaryan and Hamid Reza Abutalebi [33] proposed a multi-frame MAP algorithm that improves speech quality with a single microphone by exploiting the temporal correlation between speech coefficients in the STFT domain.
This is realized by using the current frame and a limited number of preceding frames at each time–frequency unit. A complex factor expresses the temporal relationship between the speech coefficients in adjacent frames, and multi-frame MAP estimators are then derived to enhance speech and reduce noise. The results show that this method outperforms conventional MAP estimators and Wiener filters in noise reduction.

Wiener Filtering (WF)

The Wiener filter is the statistically optimal estimator in the MMSE sense when speech and noise are modeled as Gaussian [34]. It acts as an "optimal" filter that relies on statistical assumptions and prior information to estimate the clean speech from a noisy input. The filter minimizes the mean square error (MSE) between the spectrum of the estimated speech signal and that of the clean signal; that is, it makes the filtered output as close as possible to the actual clean signal. The main limitation of the Wiener filter is that it needs accurate estimates of both the clean signal and the noise spectra, which are not always easy to obtain in practice, especially when the noise is non-stationary or rapidly changing [35]. Over the years, different modifications of this filter have been used for the enhancement task [6]. Anil Garg [36] proposed a speech enhancement model based on a Long Short-Term Memory (LSTM) network that sets the tuning factor of an adaptive Wiener filter according to the signal characteristics. The system was trained on a combination of Non-Negative Matrix Factorization (NMF), Empirical Mean Decomposition (EMD), and Bark frequency features, achieving better performance than traditional models in terms of PESQ and SNR across several noise environments. Rahul Kumar Jaiswal et al. [37] introduced an implicit WF that adaptively estimates noise using a recursive equation, enabling robust single-channel speech enhancement in both stationary and non-stationary noise conditions. The algorithm was tested on noise from sources such as drones, helicopters, and airplanes, and it was implemented on a Raspberry Pi 4. This method surpasses the spectral approach in terms of the intelligibility and naturalness of the speech.
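To make the role of the a priori and a posteriori SNRs in these gain functions concrete, the sketch below computes a Wiener gain per frequency bin. It is an illustration under stated assumptions, not the estimator of any cited work: the a priori SNR is obtained with the widely used decision-directed rule, and the function name, argument names, and the smoothing constant `alpha` are chosen here for illustration.

```python
import numpy as np

def wiener_gain_decision_directed(noisy_power, noise_power,
                                  prev_clean_power, alpha=0.98):
    """Wiener gain per bin from a decision-directed a priori SNR estimate.

    noisy_power:      |Y|^2 of the current frame
    noise_power:      estimated noise power per bin
    prev_clean_power: |S_hat|^2 estimated for the previous frame
    alpha:            smoothing constant (assumed value)
    """
    noisy_power = np.asarray(noisy_power, dtype=float)
    noise_power = np.asarray(noise_power, dtype=float)
    prev_clean_power = np.asarray(prev_clean_power, dtype=float)
    # A posteriori SNR: noisy power relative to the noise power.
    gamma = noisy_power / noise_power
    # A priori SNR: weighted mix of the previous clean estimate and the
    # instantaneous estimate max(gamma - 1, 0).
    xi = (alpha * prev_clean_power / noise_power
          + (1.0 - alpha) * np.maximum(gamma - 1.0, 0.0))
    gain = xi / (1.0 + xi)  # Wiener gain, always in [0, 1)
    return gain, xi
```

The enhanced spectrum for the frame is then the noisy spectrum multiplied by `gain`; low a priori SNR drives the gain toward zero, while high a priori SNR leaves the bin almost untouched.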

- Signal Subspace Algorithms (SSA)

The signal subspace approach (SSA) provides dimensionality reduction and, with proper tuning of parameters such as the window size and matrix rank, a better trade-off between residual noise and signal distortion in the output than other existing techniques. These algorithms are all founded on the idea that the vector space of the noisy signal can be decomposed into “signal” and “noise” subspaces. The decomposition can be achieved using either the singular value decomposition (SVD) of a Toeplitz-structured data matrix or the eigen-decomposition of a covariance matrix [4].

Chengli Sun and Junsheng Mu [38] proposed a subspace-based speech enhancement algorithm that relies on eigenvalue filtering. The method first performs a generalized eigenvalue decomposition (GEVD) of the covariance matrices of clean speech and noise, and then removes the less significant components with negative eigenvalues, as these primarily represent noise. Because the filtering process makes the eigenvector matrix non-invertible, the generalized inverse is used to reconstruct the speech signal. The experimental results show that the proposed method outperforms conventional approaches, particularly under high-noise conditions, in terms of minimizing speech distortion and reducing residual noise. Chengli Sun et al. [39] presented an improved subspace speech enhancement method based on Joint Low-Rank and Sparse Matrix Decomposition (JLSMD) to address the weakness of traditional methods at low SNR values. The noisy signal is represented as a Toeplitz matrix, where the low-rank part represents clean speech and the sparse part represents noise. The experimental results demonstrate the superiority of the proposed method in preserving speech quality and reducing residual noise compared to traditional methods, especially under high-noise conditions.
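The signal/noise subspace split via the SVD can be illustrated with a short sketch. It is a simplified example rather than the method of [38] or [39]: it builds a Hankel trajectory matrix (the row-reversed counterpart of a Toeplitz data matrix), keeps the `rank` largest singular components as the signal subspace, and averages the anti-diagonals to return to a waveform. The function name and the parameters `rows` and `rank` are assumptions made here.

```python
import numpy as np

def subspace_denoise(frame, rows, rank):
    """Rank-truncated SVD of a Hankel data matrix built from one frame."""
    frame = np.asarray(frame, dtype=float)
    n = len(frame)
    cols = n - rows + 1
    # Trajectory matrix: data[i, j] = frame[i + j]
    idx = np.arange(rows)[:, None] + np.arange(cols)[None, :]
    data = frame[idx]
    u, s, vt = np.linalg.svd(data, full_matrices=False)
    # Keep the dominant components (signal subspace), drop the rest.
    low_rank = (u[:, :rank] * s[:rank]) @ vt[:rank]
    # Average all matrix entries that map to the same time sample.
    out = np.zeros(n)
    counts = np.zeros(n)
    np.add.at(out, idx, low_rank)
    np.add.at(counts, idx, 1.0)
    return out / counts
```

A single real sinusoid occupies a rank-2 subspace, so `rank=2` recovers it almost exactly; for speech, the window size and rank must be tuned, which is the trade-off between residual noise and distortion noted above.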

- Methods Based on Time Domain

In these methods, the operations are performed directly on the speech signal, so the information is observed and extracted from the time samples of the waveform. Several methods have been proposed to perform SE in the time domain [40]. Some of the well-known ones are listed below:

- Optimal Filtering

In general, the clean signal is estimated from a noisy signal degraded by additive noise by applying a filter that suppresses the noise. The filter attempts to remove noise from the speech with the least possible error or distortion [41]. Several important filters work in the time domain and reduce the noise level picked up in the microphone signal. For example, the Minimum Variance Distortionless Response (MVDR) filter was introduced by Capon [42]. It is a linear filter that minimizes the variance at its output while preserving a distortionless response to a specific input vector [43,44]. Modifications of this filter have been proposed, such as the Orthogonal Decomposition-Based Minimum Variance Distortionless Response (ODMVDR) filter [43]. Other filters that work in the time domain include the Linearly Constrained Minimum Variance (LCMV) filter [45] and the Harmonic Decomposition Linearly Constrained Minimum Variance (HDLCMV) filter [46]. Each filter has its own limitations [43], which depend on the nature of the signal and the noise type.

The time domain Wiener filter (WF), which achieves the minimum mean square error without a spectral transformation, is another example in this category. The clean signal is reconstructed by convolving the WF coefficients with the observed signal [4]. The WF is named after the mathematician Norbert Wiener, who formulated and solved the WF problem [47]. Other types of optimum filters can be found in [48].
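The MVDR criterion (minimum output variance subject to a distortionless response to a given vector) has the well-known closed-form solution $w = R^{-1}d / (d^{H}R^{-1}d)$, which the following sketch evaluates. The names `mvdr_weights`, `noise_cov`, and `steering` are illustrative assumptions; how the covariance matrix and the constraint vector are obtained differs between the cited filters and is not shown here.

```python
import numpy as np

def mvdr_weights(noise_cov, steering):
    """MVDR weights w = R^{-1} d / (d^H R^{-1} d).

    noise_cov: Hermitian covariance matrix R (assumed invertible)
    steering:  constraint vector d that must pass undistorted (w^H d = 1)
    """
    noise_cov = np.asarray(noise_cov)
    steering = np.asarray(steering)
    # Solve R z = d instead of forming the explicit inverse.
    r_inv_d = np.linalg.solve(noise_cov, steering)
    return r_inv_d / (steering.conj() @ r_inv_d)
```

With an identity covariance matrix the solution reduces to a simple average over the entries of `steering`; a non-trivial covariance shifts weight away from the directions in which the noise is strongest while keeping `w^H d = 1`.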

- Comb Filtering

Comb filtering is one of the earliest and simplest methods used to enhance noisy signals in the time domain [49]. Its principle exploits the quasi-periodic nature of the speech signal. A Finite Impulse Response (FIR) filter is used as the basic building block, with coefficients adapted to the fundamental period of the noisy waveform. The input is the noisy signal, so a voiced/unvoiced decision is made first. For unvoiced speech (and for silent periods), a scaling factor is applied to suppress the noise, while for voiced speech the FIR filter is applied. The output is the weighted sum of successive samples separated by a constant pitch period of the desired signal. Consequently, successive periods of the voiced signal add up coherently, whereas unvoiced sounds and noise do not. The output of this filter is given [50] by Equation (5):

$$y(n) = \sum_{k=-L}^{L} c(k)\, x(n - kT) \quad (5)$$

where $x(n)$ is the noisy signal, $y(n)$ is the processed signal, $c(k)$ represents the $(2L+1)$-point comb coefficients, and $T$ is the pitch period. In practice, this filter is not very effective: removing more noise requires more coefficients, but a longer filter performs poorly when the speech characteristics change quickly. In addition, when the fundamental frequency varies, the impulse response of the comb filter no longer matches the signal. Therefore, this filter is no longer considered competitive, and other methods, such as adaptive filters, are used instead [49].
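The weighted sum of pitch-spaced samples, $y(n) = \sum_{k=-L}^{L} c(k)\,x(n - kT)$, can be sketched directly. The example below is a minimal illustration for a voiced segment with a known, constant pitch period; the function name and the zero treatment of samples outside the segment are assumptions, and the voiced/unvoiced decision is not included.

```python
def comb_filter(x, pitch_period, coeffs):
    """FIR comb filter: weighted sum of samples one pitch period apart.

    x:            noisy samples of a voiced segment
    pitch_period: pitch period T in samples (assumed known and constant)
    coeffs:       2L + 1 comb coefficients c(-L), ..., c(L)
    """
    half = (len(coeffs) - 1) // 2
    y = [0.0] * len(x)
    for n in range(len(x)):
        acc = 0.0
        for k in range(-half, half + 1):
            m = n - k * pitch_period
            # Samples outside the segment are treated as zero.
            if 0 <= m < len(x):
                acc += coeffs[k + half] * x[m]
        y[n] = acc
    return y
```

For a perfectly periodic input and coefficients that sum to one, interior samples pass unchanged, while uncorrelated noise is averaged over the `2L + 1` taps. This also shows the limitation noted above: more taps average more noise but assume the pitch stays constant over a longer span.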

- ANN and HMM Filtering

Artificial neural networks (ANNs) and Hidden Markov Models (HMMs) are nonlinear modeling techniques built on hidden layers and hidden states, respectively, and they perform well in the speech enhancement (SE) process. HMMs were first applied in the field of SE in [51] to model speech and noise statistics. The general structure is shown in Figure 3. HMM-based methods model speech and noise statistics using composite probabilistic structures and typically estimate clean speech in the MMSE sense through weighted Wiener filtering.

Different aspects of HMM-based models can be found in [52,53]. Although these approaches perform well under matched noise conditions, they often suffer from speech distortion and residual noise, particularly in voiced segments, due to inaccurate state estimation in non-stationary environments.

On the other hand, ANN-based speech enhancement methods learn a direct mapping from noisy to clean speech representations through supervised training. The enhanced speech signal is obtained when the NN is trained correctly; however, the training process is very time consuming. In addition, the training is carried out for a specific noise type and SNR level, so the network cannot be used for another type of noise. This noise-dependent training restricts the generalization capability of the network. Consequently, ANN-based approaches require retraining or prior knowledge of the noise characteristics, making them less suitable for real-time speech enhancement systems. For instance, the NN in [54] was trained only for white noise and a specific SNR. An ANN has also been integrated into a cochlear implant (CI) [55]. This system decomposes the degraded signal and then extracts a set of features that are fed to the NN to make the estimation. However, a minor decrease in performance was noticed, and the work was evaluated with CI vocoder simulations rather than with CI users.
Basically, statistical learning-based SEAs achieve good performance only when they operate in the same noise conditions as the training set [56].

2.2.2. Machine Learning Speech Enhancement Techniques

- Traditional Machine Learning Approach

Traditional machine learning approaches to single-channel speech enhancement are methods that depend on shallow machine learning models, in which the feature extraction and speech enhancement phases are usually separated. They rely on specific mathematical or statistical assumptions for modeling speech and noise, and they have a limited number of parameters compared to deep learning methods. Depending on the underlying modeling strategy, the most well-known machine learning approaches used for speech enhancement are SVM-based, GMM-based [58], and NMF-based methods, which are discussed below.

- Support Vector Machine (SVM)

The SVM is a supervised machine learning algorithm used for classification and regression tasks. It attempts to find the best boundary, known as the hyperplane, that separates the different classes in the data. It is particularly useful for binary classification and has been widely applied in various signal processing and speech-related applications. Derived from statistical learning theory, it has grown in popularity due to its ability to address challenging classification problems in a wide range of applications. SVM models are often used in text classification problems because of their capacity to handle very large numbers of features. The main idea of the SVM in speech enhancement is to implicitly map the input features into a higher-dimensional feature space by using a kernel function, where the classes are easier to separate with linear decision surfaces. The SVM can also be extended to multi-class classification problems using strategies such as one-vs-one [59] or one-vs-all. SVMs have proved to be successful classifiers on several classical speech recognition problems.

In the SEA field, different works have used the SVM as an important tool. For example, Yuto Kinoshita et al. [60] relied on the SVM algorithm to discriminate between the natural voice of the passengers and the sound of the advertisement produced by the speakers, using two spectral features: spectral centroid and spectral roll-off. The SVM creates a decision boundary that separates the two categories in a binary manner, allowing the system to keep only the advertisement and remove unwanted sounds. The classifier achieved a high accuracy of 96%, demonstrating the efficiency of the SVM in separating useful speech in a noisy environment. In another method, Ying-Yee Kong et al. [61] used the SVM algorithm to classify speech segments with high accuracy based on spectral features such as MFCC, gammatone, and spectral moments, within a very short time window of 8. After training the SVM on clean and noise-contaminated samples, the model classifies each segment into its correct type, enabling improved speech quality within hearing aids through dedicated processing for each sound category. The accuracy of this classification makes the SVM a crucial step in improving speech clarity for the hearing impaired.

- Gaussian Mixture Model (GMM)

The GMM assumes that a signal (such as noise or speech) does not follow a single, simple distribution, but rather consists of several Gaussian distributions, each representing a different part of the signal. Each Gaussian represents a specific spectral pattern in the noise or speech. By combining several Gaussian distributions, the model can accurately describe complex spectral patterns. A GMM for a mixture signal can be composed of K Gaussians [62], and is given by Equation (6):

$$p(\mathbf{y}_{f}) = \sum_{k=1}^{K} w_{k}\, \mathcal{N}\left(\mathbf{y}_{f};\, \boldsymbol{\mu}_{k,f},\, \boldsymbol{\Sigma}_{k,f}\right) \quad (6)$$

where $p(\mathbf{y}_{f})$ denotes the probability density function (PDF) of the mixture signal when both noise and speech signals exist, $w_{k}$ is the contribution of the $k$th GMM component in the mixture recording, and $\mathcal{N}(\mathbf{y}_{f}; \boldsymbol{\mu}_{k,f}, \boldsymbol{\Sigma}_{k,f})$ is the Gaussian density of the mixture recording for the $k$th GMM component and $f$th frequency. Also, $\boldsymbol{\mu}_{k,f}$ and $\boldsymbol{\Sigma}_{k,f}$ denote the mean and covariance matrix of the mixture for the $k$th GMM component and $f$th frequency, respectively.

Wageesha Manamperi et al. [62] presented a new single-channel speech enhancement approach that uses a GMM with a multi-stage parametric Wiener filtering method at low SNR conditions. The proposed method simplifies dynamic noise adaptation and improves the speech quality while retaining the speech intelligibility in a non-stationary noise environment with a simple multi-stage parametric Wiener filter. On the other hand, Soundarya M. et al. [63] used the GMM as the core model to improve speech accuracy by representing the distribution of voice characteristics in a flexible, probabilistic way. The GMM models the variability of the audio signal, such as pitch, speed, and accent, by combining several Gaussian distributions, allowing speech sounds to be distinguished accurately even in the presence of noise. Overall, the use of the GMM improves the quality of the input signal to the ASR system and makes speech processing more accurate and reliable compared to traditional methods.
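The mixture density itself is simple to evaluate. The sketch below is a deliberately reduced illustration: it handles a scalar observation (for example, one feature at one frequency bin) with per-component variances instead of full covariance matrices, and the function and argument names are assumptions rather than the notation of [62].

```python
import math

def gaussian_pdf(x, mean, var):
    """Density of a scalar Gaussian with the given mean and variance."""
    return math.exp(-0.5 * (x - mean) ** 2 / var) / math.sqrt(2.0 * math.pi * var)

def gmm_pdf(x, weights, means, variances):
    """Weighted sum of K Gaussian densities; weights are assumed to sum to 1."""
    return sum(w * gaussian_pdf(x, m, v)
               for w, m, v in zip(weights, means, variances))
```

For instance, `gmm_pdf(0.0, [0.5, 0.5], [-1.0, 1.0], [1.0, 1.0])` evaluates a symmetric two-component mixture at its center. In practice the weights, means, and variances are fitted to training data, typically with the expectation–maximization algorithm.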

- Non-Negative Matrix Factorization (NMF)

NMF is a dimensionality reduction technique that approximates a non-negative data matrix (a matrix with non-negative entries) as a product of two non-negative matrices of lower rank than the initial data matrix. This approach relies only on the amplitude information, while completely discarding the phases of the short-time Fourier transforms (STFTs) [64]. The concept is simply that any non-negative signal (or matrix) can be represented as a sum of simple, non-negative components. In the case of sound, the signal does not contain negative energy or intensity values; therefore, this type of analysis is realistic and consistent with the nature of the audio signal. NMF algorithms were proposed by Lee and Seung [65]. Given a non-negative matrix $V$, the goal is to find non-negative matrix factors $W$ and $H$ such that, as given in Equation (7):

$$V \approx WH \quad (7)$$

NMF can be applied to the statistical analysis of multivariate data in the following manner. Given a set of multivariate $n$-dimensional data vectors, the vectors are placed in the columns of an $n \times m$ matrix $V$, where $m$ is the number of examples in the dataset. This matrix is then approximately factorized into an $n \times r$ matrix $W$ and an $r \times m$ matrix $H$. Usually, $r$ is chosen to be smaller than $n$ or $m$, so that $W$ and $H$ are smaller than the original matrix $V$. This results in a compressed version of the original data matrix. The decomposition is obtained by minimizing the reconstruction error between the observation matrix and the model while constraining the factor matrices to be non-negative. This approach has been used in numerous unsupervised learning tasks and also in the analysis of music signals, where the non-negativity constraints alone are adequate for the separation of sound sources [65,66].
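The factorization $V \approx WH$ can be computed with the multiplicative update rules for the squared Euclidean reconstruction error. The sketch below is a minimal version under stated assumptions: random initialization, a fixed number of iterations, and a small constant `eps` added to the denominators to avoid division by zero. The function name and its parameters are illustrative.

```python
import numpy as np

def nmf(V, rank, n_iter=200, eps=1e-9, seed=0):
    """Approximate a non-negative (n x m) matrix V as W @ H.

    W is (n x rank) and H is (rank x m); both stay non-negative because
    every update multiplies by a ratio of non-negative quantities.
    """
    V = np.asarray(V, dtype=float)
    rng = np.random.default_rng(seed)
    n, m = V.shape
    W = rng.random((n, rank)) + eps
    H = rng.random((rank, m)) + eps
    for _ in range(n_iter):
        # Multiplicative updates for the squared Euclidean error.
        H *= (W.T @ V) / (W.T @ W @ H + eps)
        W *= (V @ H.T) / (W @ H @ H.T + eps)
    return W, H
```

In speech enhancement, `V` is typically a magnitude or power spectrogram, the columns of `W` act as spectral basis vectors, and the rows of `H` are their activations over time.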

- Deep Learning Approaches

Recent advances in deep learning methods have strongly supported progress in the SE research field. As acoustic environments become more complex and non-stationary noise becomes more common, traditional methods generalize less well and require careful feature design and manual parameter adjustment. This has led to the emergence of deep learning techniques, based on multi-layered neural networks, that are able to automatically learn speech representations from data without the need for manual feature extraction [21].

Advances in computing power, including the availability of graphics processing units (GPUs), enable the efficient training of large-scale neural networks through parallel computation. Additionally, the accessibility of large datasets has accelerated the adoption of deep learning in speech enhancement. Consequently, deep models such as DNNs, CNNs, GANs, and VAEs have been shown to outperform traditional methods, particularly in complex, noisy environments that are unseen during training, making deep learning the main trend in modern speech enhancement research [67]. Deep learning approaches can be classified into three classes according to the learning strategy adopted during training: supervised, unsupervised, and semi-supervised [21], which are discussed below.

- Supervised Single-Channel Speech Enhancement Algorithms (SSC-SEA)

Supervised Single-Channel Speech Enhancement Algorithms (SSC-SEA) build separate models for the speech and noise signals using labeled data and then bring these models together during the enhancement process. The parameters of the models are obtained by training on signal samples (speech and noise), and the combined model is defined by combining the individual models. Because of this, supervised methods depend on the prior supervision and classification of the speech and noise signals [6,22,58]. Supervised methods outperform unsupervised ones under matched conditions and with adequate paired data. They include a wide variety of types, such as DNN, GMM, and SVM.

Deep Neural Network (DNN)

A DNN is an artificial neural network (ANN) containing multiple hidden layers between the input and output layers. DNNs can model complex nonlinear relationships, and they represent the input data as a layered composition of features. The additional layers combine the features from the lower layers and can model complex input data with fewer units than shallow networks; however, they need a large dataset. In the case of small datasets, DNNs are unlikely to perform better than other competing methods. The emergence of DNNs has marked a turning point in this field. By learning complex nonlinear mappings between noisy and clean speech from huge datasets, DNN-based models have demonstrated strong capabilities in preserving speech quality and intelligibility. This evolution from statistical modeling to data-driven deep learning approaches has enabled modern speech enhancement techniques that perform robustly in challenging acoustic environments [10].

Some of the work in recent years is reviewed as follows. Binh Thien Nguyen et al. [68] proposed a new algorithm to improve enhanced speech by directly estimating the phase using a DNN, rather than depending on a clean amplitude spectrum as traditional methods do. The system is designed using a real-time convolutional recurrent network (CRN), where the model learns the phase–amplitude relationships from the contaminated signal only. The results showed that the proposed method outperformed traditional phase reconstruction algorithms in terms of the speech quality measure (PESQ) and the intelligibility measure (STOI) across various signal-to-noise ratios. On the other hand, Yuxuan Ke et al. [69] analyzed the residual noise generated by DNN-based SEAs. They found that this noise is inconsistent over time, perceptually annoying, and cannot be efficiently reduced using traditional post-processing methods. Three post-processing strategies were proposed based on estimating the noise power spectral density using the MMSE criterion, while at the same time improving the speech presence probability estimation. Both objective and subjective results confirmed the effectiveness of the proposed methods in reducing residual noise and improving the perceptual quality of speech.

Generative Adversarial Network (GAN)

Generative adversarial networks (GANs) have received significant attention and demonstrated promising results in the field of speech enhancement. A GAN consists of a generator network and a discriminator network. In other words, GANs are generative models that train two DNN models simultaneously: a generator that captures the distribution of the training data, and a discriminator that estimates the probability that a sample came from the training data and not from the generator [70]. GANs are generally built from convolutional layers or fully connected layers. Speech enhancement based on GAN training was first introduced by Pascual et al. [71].
The generator network learns to map features of the noisy speech to the clean speech, while the discriminator network acts as a binary classifier that evaluates whether the samples come from the clean speech (real) or the enhanced speech (fake). The two networks are trained in an adversarial manner [67]. Sanberk Serb et al. [72] proposed a two-stage system for real-time speech enhancement that combines a predictive filter (DeepFilterNet2) for noise removal and a GAN to regenerate lost audio details. The experiments show that the system improves over the first-stage predictive model in terms of speech quality. Shrishti Saha Shetu et al. [73] proposed DisCoGAN, which is a time–frequency domain generative adversarial network conditioned on the hidden features of a discriminative model pre-trained for speech enhancement in low SNR scenarios. DisCoGAN outperforms other methods in adverse SNR conditions and also generalizes well to high SNR scenarios.

Convolutional Neural Networks (CNNs)

Convolutional neural networks (CNNs) are widely used in speech enhancement because they can effectively extract local patterns in time–frequency representations such as spectrograms. CNNs depend on convolutional kernels and weight sharing [74], which reduces computational complexity and increases the robustness of the model against non-stationary noise. Gautam S. Bhat et al. [75] proposed a speech enhancement algorithm based on CNNs and a multi-objective learning approach, where the estimation of the clean speech spectrum is improved using additional features such as Mel-Frequency Spectral Coefficients (MFSCs). The proposed method is computationally efficient, allowing real-time implementation on smartphones with low latency. Objective and subjective tests have confirmed its effectiveness and practical applicability in noisy environments and in applications that support hearing-impaired users.
Ashutosh Pandey and DeLiang Wang [76] presented the temporal convolutional neural network (TCNN) model, which is based on an encoder–decoder architecture with a temporal convolutional module that captures temporal dependencies using causal and dilated convolutions. The encoder extracts a low-dimensional representation of the distorted signal, while the decoder uses this representation to reconstruct the enhanced speech in the time domain. This purely convolutional architecture enables real-time enhancement with low latency and fewer parameters compared to recurrent models.
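The causal and dilated convolutions mentioned above can be illustrated with a single-channel sketch. It is not the TCNN of [76], which uses learned multi-channel layers; here the kernel is fixed, and the function name and arguments are assumptions made for illustration. The property of interest is that each output sample depends only on the current and past input samples, spaced `dilation` samples apart.

```python
import numpy as np

def causal_dilated_conv1d(x, kernel, dilation=1):
    """1-D causal convolution with dilation.

    y[t] = sum_i kernel[i] * x[t - (K - 1 - i) * dilation], so the last
    kernel tap multiplies the current sample and no future sample is used.
    """
    x = np.asarray(x, dtype=float)
    taps = len(kernel)
    pad = (taps - 1) * dilation
    # Left-pad with zeros so the output has the same length as the input.
    padded = np.concatenate([np.zeros(pad), x])
    y = np.zeros(len(x))
    for i, w in enumerate(kernel):
        offset = i * dilation
        y += w * padded[offset:offset + len(x)]
    return y
```

Stacking such layers with dilations 1, 2, 4, and so on grows the receptive field exponentially with depth while keeping the processing causal, which is what makes low-latency operation possible.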

- Unsupervised Single-Channel Speech Enhancement Algorithms (USSC-SEA)

Unsupervised speech enhancement methods have recently been developed to improve the performance of models in the SE field. These models do not use matched clean–noisy data for training. Instead, they use unmatched noisy–clean data (i.e., the clean and noisy samples do not correspond), clean data only, or noisy data only. In other words, they enable speech enhancement without the need for paired clean and noisy data. USSC-SEA can be further divided into noise-agnostic (NA) and noise-dependent (ND) methods. NA methods use only clean speech signals for training. In contrast, ND methods use noise or noisy speech training samples to learn some noise characteristics [77].

Variational Autoencoder (VAE)-based speech enhancement is the clearest and most widely known example of unsupervised deep learning for single-channel speech enhancement [78]. This algorithm relies on a dynamical variational autoencoder (DVAE) model pre-trained on clean speech signals to learn the dynamics of speech over time. During enhancement, this model is used as a speech prior to distinguish speech from noise in a single-channel signal without the need for noise training data. The noise is modeled adaptively using non-negative matrix factorization (NMF), and speech is separated from noise by a variational expectation–maximization (VEM) algorithm that alternately updates the speech and noise estimates. Although the DVAE-based approach is trained in an unsupervised and noise-agnostic manner using only clean speech signals, it becomes noise dependent during the enhancement phase by explicitly modeling the noise with NMF. This framework combines unsupervised learning of the speech structure with temporal modeling, resulting in higher performance, especially for noise that was not seen during training.

- Semi-supervised Single-Channel Speech Enhancement Algorithms

Semi-supervised learning combines two classical learning paradigms: supervised and unsupervised. This approach uses both labeled and unlabeled data to improve the quality of single-channel speech signals [79]. The goal is to overcome the limitations of fully supervised algorithms by using large amounts of unlabeled data to build robust models that generalize better to unseen noise, as encountered in real-life situations. Traditional algorithms, including noise reduction methods designed for non-stationary noise, do not adequately suppress transient interference that distorts the speech signal. Therefore, semi-supervised single-channel noise suppression has been proposed to reduce such noise with minimal speech distortion [80].

Adaptation learning algorithms are a main example of semi-supervised speech enhancement; they involve three stages of training. In the first stage, a speech enhancement model is trained using a limited amount of manually labeled target data. Then, the initially trained model is used to enhance unlabeled data and generate estimated labels for clean speech. In the final stage, a high-quality subset of this enhanced data is selected to retrain the model, thus improving its performance and adaptability to the target testing environment [81]. Cui et al. [79] proposed an estimator that measures speech purity. For utterances judged to be clean, supervised learning is used to train a deep learning speech enhancement model, while for noisy utterances the model is updated in an unsupervised manner. The training loss combines the supervised and unsupervised losses, which yields a semi-supervised SEA. In another semi-supervised approach, Bando et al. [82] proposed VAE-NMF, in which speech is represented by a deep generative VAE model pre-trained on clean speech signals, while noise is modeled using NMF during processing. The method is reported to outperform conventional supervised methods, especially under unseen noise. It uses the probabilistic speech prior to estimate clean speech from the noisy signal by sampling from the posterior distribution, while the noise model adapts to unknown environments.
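The three-stage adaptation procedure described above can be sketched with a toy model. This is a minimal illustration under stated assumptions, not the method of [81]: a ridge-regression linear map stands in for the deep enhancement model, and the selection score `pseudo_snr_db` is a hypothetical quality proxy, since the selection criterion is not specified here. All function names and the `keep_fraction` parameter are likewise illustrative.

```python
import numpy as np


def fit_linear_enhancer(noisy, clean, ridge=1e-3):
    """Ridge-regression map from noisy frames to clean frames (toy stand-in for a DNN)."""
    dim = noisy.shape[1]
    gram = noisy.T @ noisy + ridge * np.eye(dim)
    return np.linalg.solve(gram, noisy.T @ clean)  # weights of shape (dim, dim)


def enhance(weights, noisy):
    """Apply the linear enhancer to a batch of noisy frames."""
    return noisy @ weights


def pseudo_snr_db(noisy, enhanced, eps=1e-12):
    """Hypothetical quality proxy: estimate energy over removed-residual energy, per frame."""
    signal = np.sum(enhanced ** 2, axis=1)
    residual = np.sum((noisy - enhanced) ** 2, axis=1)
    return 10.0 * np.log10((signal + eps) / (residual + eps))


def self_training(labeled_noisy, labeled_clean, unlabeled_noisy, keep_fraction=0.5):
    # Stage 1: train on the small labeled set.
    w0 = fit_linear_enhancer(labeled_noisy, labeled_clean)
    # Stage 2: enhance unlabeled data to obtain estimated clean labels.
    pseudo_clean = enhance(w0, unlabeled_noisy)
    # Stage 3: keep the highest-scoring pseudo-labeled frames and retrain.
    scores = pseudo_snr_db(unlabeled_noisy, pseudo_clean)
    n_keep = max(1, int(keep_fraction * len(scores)))
    keep = np.argsort(scores)[-n_keep:]
    train_x = np.vstack([labeled_noisy, unlabeled_noisy[keep]])
    train_y = np.vstack([labeled_clean, pseudo_clean[keep]])
    w1 = fit_linear_enhancer(train_x, train_y)
    return w0, w1, keep
```

The sketch only shows the data flow between the stages; whether retraining actually helps depends on the model and on how reliable the selection score is, which a linear toy cannot demonstrate.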

2.3. Multi-Channel Speech Enhancement Algorithms

2.3.1. Blind Source Separation (BSS)

2.3.2. Beamforming

2.3.3. Multi-Channel Non-Negative Matrix Factorization (NMF)

2.3.4. Deep Learning-Based Multi-Channel Methods

3. Noise: Definition and Classification

4. Different Types of Datasets That Are Used in SEAs

5. Performance Enhancement Strategies for Quality and Intelligibility Assessment Measure for SEA

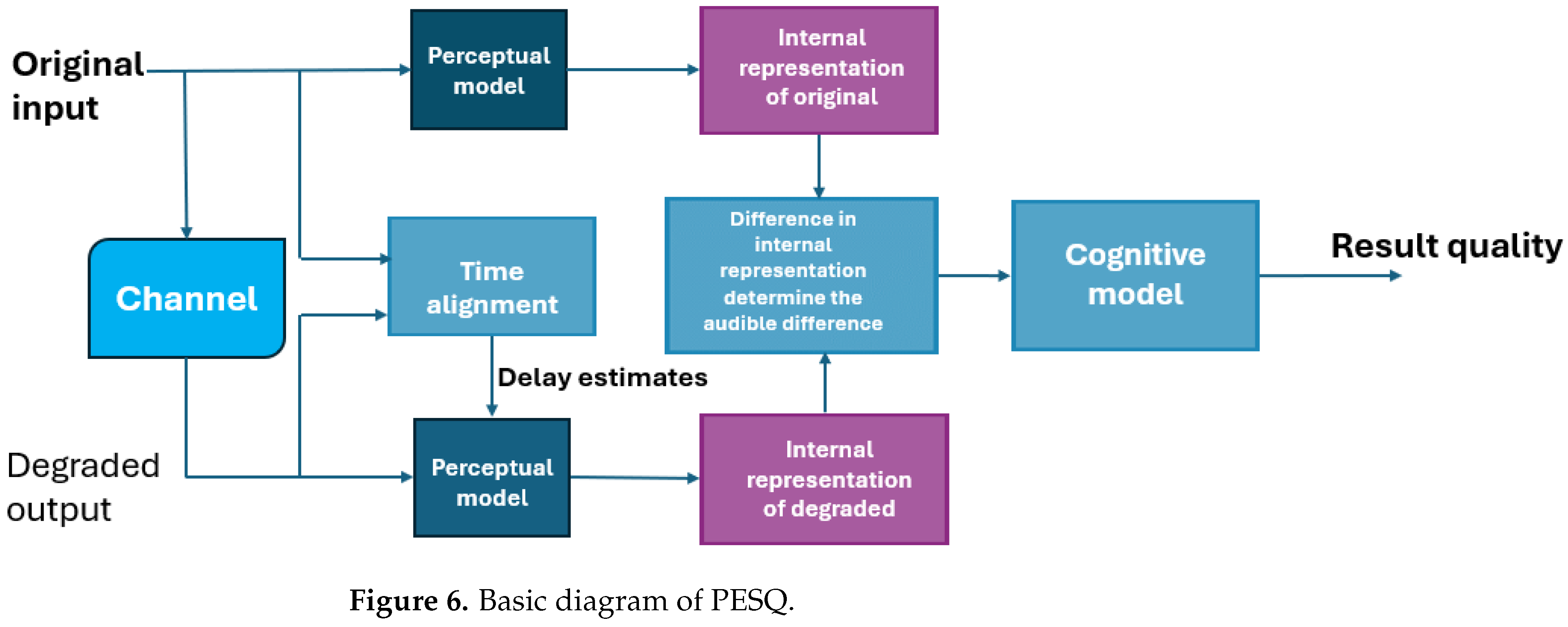

5.1. Perceptual Evaluation of Speech Quality (PESQ)

5.2. Frequency-Weighted Segmental SNR Measures (FWSSNR)

5.3. The Coherence Speech Intelligibility Index Measure (CSII)

5.4. The Short-Time Objective Intelligibility Measure (STOI)

5.5. Weighted Spectral Slope (WSS)

5.6. The Composite Measures

5.7. Scale-Invariant Signal-to-Distortion Ratio (SI-SDR)

5.8. Log-Likelihood Ratio (LLR)

6. Key Applications for Speech Enhancement Technologies

6.1. Smart Hearing Aids Application

6.2. Hands-Free Communication Systems

6.3. Speech Recognition Technologies

6.4. Speech-Based Biometric System

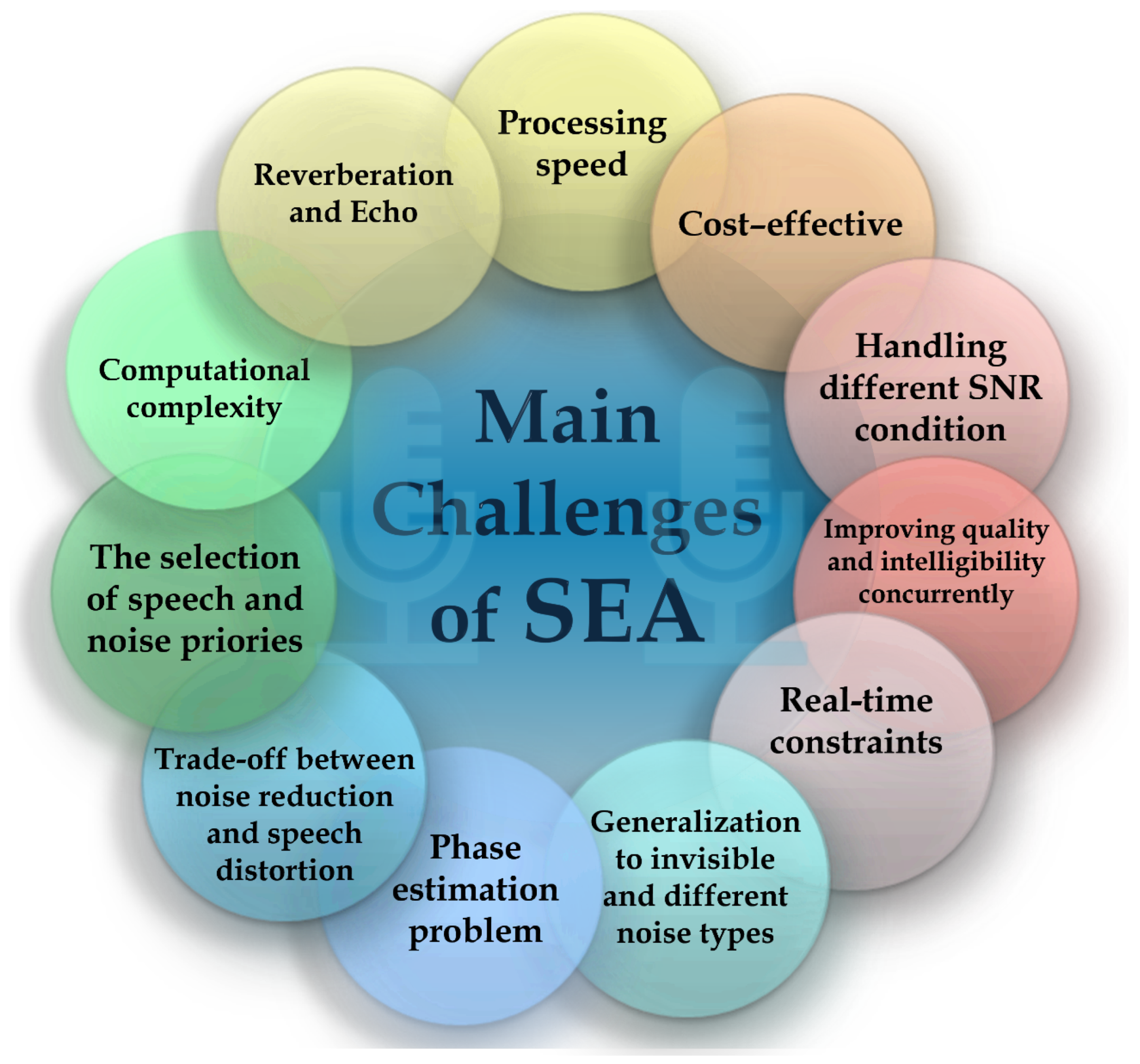

7. Limitations and Challenges of SEA

7.1. Generalization to Unseen Noise Types

7.2. Trade-Off Between Noise Reduction and Speech Distortion

7.3. Phase Estimation Problem

7.4. Computational Complexity and Real-Time Constraints

7.5. Reverberation and Echo

8. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| ANN | Artificial Neural Network |
| ASR | Automatic Speech Recognition |
| BSS | Blind Source Separation |
| CNN | Convolutional Neural Network |
| CRN | Convolutional Recurrent Network |
| CSII | Coherence Speech Intelligibility Index |
| DCCRN | Deep Complex Convolutional Recurrent Network |
| DCHT | Deep Complex Hybrid Transformer |
| DCT | Discrete Cosine Transform |
| DFT | Discrete Fourier Transform |
| DKTT | Discrete Krawtchouk–Tchebichef Transform |
| DNN | Deep Neural Network |
| DSP | Digital Signal Processing |
| DVAE | Dynamical Variational Autoencoder |
| DWT | Discrete Wavelet Transform |
| EMD | Empirical Mode Decomposition |
| FFT | Fast Fourier Transform |
| FTB | Frequency Transformation Block |
| FWSSNR | Frequency-Weighted Segmental SNR |
| GAN | Generative Adversarial Network |
| GEVD | Generalized Eigenvalue Decomposition |
| GMM | Gaussian Mixture Model |
| GPU | Graphics Processing Unit |
| HDLCMV | Harmonic Decomposition Linearly Constrained Minimum Variance |
| HMM | Hidden Markov Model |
| ICA | Independent Component Analysis |
| JLSMD | Joint Low-Rank and Sparse Matrix Decomposition |
| LCMV | Linearly Constrained Minimum Variance |
| LLR | Log-Likelihood Ratio |
| LSTM | Long Short-Term Memory |
| MAP | Maximum A Posteriori |
| MFSC | Mel-Frequency Spectral Coefficient |
| MMSE | Minimum Mean Square Error |
| MMSE-LSA | Minimum Mean Square Error Log-Spectral Amplitude |
| MNMF | Multi-Channel Non-Negative Matrix Factorization |
| MOS | Mean Opinion Score |
| MVDR | Minimum Variance Distortionless Response |
| NMF | Non-Negative Matrix Factorization |
| ODMVDR | Orthogonal Decomposition-Based Minimum Variance Distortionless Response |
| PDF | Probability Density Function |
| PESQ | Perceptual Evaluation of Speech Quality |
| SC-SEA | Single-Channel Speech Enhancement Algorithm |
| SEA | Speech Enhancement Algorithm |
| SID | Speaker Identification |
| SI-SDR | Scale-Invariant Signal-to-Distortion Ratio |
| SNR | Signal-to-Noise Ratio |
| SS | Spectral Subtraction |
| SSA | Signal Subspace Algorithm |
| SSC-SEA | Supervised Single-Channel Speech Enhancement Algorithm |
| STFT | Short-Time Fourier Transform |
| STOI | Short-Time Objective Intelligibility |
| STSA-MMSE | Short-Time Spectral Amplitude Minimum Mean Square Error Estimator |
| SV | Speaker Verification |
| SVD | Singular Value Decomposition |
| SVM | Support Vector Machine |
| TCNN | Temporal Convolutional Neural Network |
| UNET | U-shaped Network |
| USSC-SEA | Unsupervised Single-Channel Speech Enhancement Algorithm |
| VAE | Variational Autoencoder |
| VEM | Variational Expectation Maximization |
| WF | Wiener Filtering |
| WSST | Wavelet Synchro-Squeezing Transform |

References

- Oruh, J.; Olaniyi, M. Deep learning approach for automatic speech recognition in the presence of noise. South Fla. J. Dev. 2024, 5, e4099.

- Naik, D.C.; Murthy, A.S.; Nuthakki, R. A literature survey on single channel speech enhancement techniques. Int. J. Sci. Technol. Res. 2020, 9, 5082–5091.

- Hussein, H.A.; Hameed, S.M.; Mahmmod, B.M.; Abdulhussain, S.H.; Hussain, A.J. Dual stages of speech enhancement algorithm based on super gaussian speech models. J. Eng. 2023, 29, 1–13.

- Loizou, P.C. Speech Enhancement: Theory and Practice; CRC Press: Boca Raton, FL, USA, 2007.

- Nasir, R.J.; Abdulmohsin, H.A. Noise Reduction Techniques for Enhancing Speech. Iraqi J. Sci. 2024, 65, 5798–5818.

- Saleem, N.; Khattak, M.I.; Verdú, E. On improvement of speech intelligibility and quality: A survey of unsupervised single channel speech enhancement algorithms. Int. J. Interact. Multimed. Artif. Intell. 2020, 6, 78–89.

- Alam, M.J.; O’Shaughnessy, D. Perceptual improvement of Wiener filtering employing a post-filter. Digit. Signal Process. 2011, 21, 54–65.

- Mahmmod, B.M.; Abdulhussain, S.H.; Al-Haddad, S.A.R.; Jassim, W.A. Low-distortion MMSE speech enhancement estimator based on Laplacian prior. IEEE Access 2017, 5, 9866–9881.

- Ephraim, Y.; Van Trees, H.L. A signal subspace approach for speech enhancement. IEEE Trans. Speech Audio Process. 1995, 3, 251–266.

- Xu, Y.; Du, J.; Dai, L.R.; Lee, C.H. A regression approach to speech enhancement based on deep neural networks. IEEE/ACM Trans. Audio Speech Lang. Process. 2014, 23, 7–19.

- Diehl, P.U.; Zilly, H.; Sattler, F.; Singer, Y.; Kepp, K.; Berry, M.; Hasemann, H.; Zippel, M.; Kaya, M.; Meyer-Rachner, P.; et al. Deep learning-based denoising streamed from mobile phones improves speech-in-noise understanding for hearing aid users. Front. Med. Eng. 2023, 1, 1281904.

- Wang, Z.Q.; Wichern, G.; Watanabe, S.; Le Roux, J. STFT-domain neural speech enhancement with very low algorithmic latency. IEEE/ACM Trans. Audio Speech Lang. Process. 2022, 31, 397–410.

- Muhammed Shifas, P.V.; Adiga, N.; Tsiaras, V.; Stylianou, Y. A non-causal FFTNet architecture for speech enhancement. In Proceedings of the Annual Conference of the International Speech Communication Association (Interspeech), Graz, Austria, 15–19 September 2019.

- Martin, R.; Breithaupt, C. Speech enhancement in the DFT domain using Laplacian speech priors. In Proceedings of the International Workshop on Acoustic Echo and Noise Control (IWAENC), Kyoto, Japan, 8–11 September 2003; pp. 87–90.

- Geng, C.; Wang, L. End-to-end speech enhancement based on discrete cosine transform. In Proceedings of the 2020 IEEE International Conference on Artificial Intelligence and Computer Applications (ICAICA), Dalian, China, 27–29 June 2020; pp. 379–383.

- Lee, S.; Wang, S.S.; Tsao, Y.; Hung, J. Speech enhancement based on reducing the detail portion of speech spectrograms in modulation domain via discrete wavelet transform. In Proceedings of the 2018 11th International Symposium on Chinese Spoken Language Processing (ISCSLP), Taipei City, Taiwan, 26–29 November 2018; pp. 16–20.

- Matsubara, T.; Kakutani, A.; Iwai, K.; Kurokawa, T. Improvement of wavelet-synchrosqueezing transform with time shifted angular frequency. J. Adv. Simul. Sci. Eng. 2023, 10, 53–63.

- Mahmmod, B.M. A Speech Enhancement Framework Using Discrete Krawtchouk-Tchebichef Transform. Ph.D. Thesis, Universiti Putra Malaysia, Seri Kembangan, Malaysia, 2018.

- Koduri, S.K.; T., K.K. Hybrid Transform Based Speech Band Width Enhancement Using Data Hiding. Trait. Du Signal 2022, 39, 969.

- Li, J.; Li, J.; Wang, P.; Zhang, Y. DCHT: Deep complex hybrid transformer for speech enhancement. In Proceedings of the 2023 Third International Conference on Digital Data Processing (DDP), Luton, UK, 27–29 November 2023; pp. 117–122.

- Mehrish, A.; Majumder, N.; Bharadwaj, R.; Mihalcea, R.; Poria, S. A review of deep learning techniques for speech processing. Inf. Fusion 2023, 99, 101869.

- Saleem, N.; Khattak, M.I. A review of supervised learning algorithms for single channel speech enhancement. Int. J. Speech Technol. 2019, 22, 1051–1075.

- Yousif, S.T.; Mahmmod, B.M. Speech Enhancement Algorithms: A Systematic Literature Review. Algorithms 2025, 18, 272.

- Weninger, F.; Erdogan, H.; Watanabe, S.; Vincent, E.; Le Roux, J.; Hershey, J.R.; Schuller, B. Speech enhancement with LSTM recurrent neural networks and its application to noise-robust ASR. In Proceedings of the International Conference on Latent Variable Analysis and Signal Separation, Liberec, Czech Republic, 25–28 August 2015; pp. 91–99.

- Wang, Z.Q.; Wang, D. All-Neural Multi-Channel Speech Enhancement. In Proceedings of the 19th Annual Conference of the International Speech Communication Association (Interspeech), Hyderabad, India, 2–6 September 2018; pp. 3234–3238.

- Benesty, J.; Chen, J.; Huang, Y.; Cohen, I. Noise Reduction in Speech Processing; Springer: Berlin/Heidelberg, Germany, 2009.

- Boll, S. A spectral subtraction algorithm for suppression of acoustic noise in speech. In Proceedings of the ICASSP’79 IEEE International Conference on Acoustics, Speech, and Signal Processing, Washington, DC, USA, 2–4 April 1979; pp. 200–203.

- Gupta, M.; Singh, R.K.; Singh, S. Analysis of optimized spectral subtraction method for single channel speech enhancement. Wirel. Pers. Commun. 2023, 128, 2203–2215.

- Ephraim, Y.; Malah, D. Speech enhancement using a minimum-mean square error short-time spectral amplitude estimator. IEEE Trans. Acoust. Speech Signal Process. 1984, 32, 1109–1121.

- Cohen, I. Noise spectrum estimation in adverse environments: Improved minima controlled recursive averaging. IEEE Trans. Speech Audio Process. 2003, 11, 466–475.

- Bahrami, M.; Faraji, N. Minimum mean square error estimator for speech enhancement in additive noise assuming Weibull speech priors and speech presence uncertainty. Int. J. Speech Technol. 2021, 24, 97–108.

- Lotter, T.; Vary, P. Speech enhancement by MAP spectral amplitude estimation using a super-Gaussian speech model. EURASIP J. Adv. Signal Process. 2005, 2005, 354850.

- Ranjbaryan, R.; Abutalebi, H.R. Multiframe maximum a posteriori estimators for single-microphone speech enhancement. IET Signal Process. 2021, 15, 467–481.

- Lim, J.S.; Oppenheim, A.V. Enhancement and bandwidth compression of noisy speech. Proc. IEEE 1979, 67, 1586–1604.

- Dwivedi, S.; Khunteta, A. Performance Comparison among Different Wiener Filter Algorithms for Speech Enhancement. Int. J. Microsystems IoT 2024, 2, 505–514.

- Garg, A. Speech enhancement using long short term memory with trained speech features and adaptive Wiener filter. Multimed. Tools Appl. 2023, 82, 3647–3675.

- Jaiswal, R.K.; Yeduri, S.R.; Cenkeramaddi, L.R. Single-channel speech enhancement using implicit Wiener filter for high-quality speech communication. Int. J. Speech Technol. 2022, 25, 745–758.

- Sun, C.; Mu, J. An eigenvalue filtering based subspace approach for speech enhancement. Noise Control Eng. J. 2015, 63, 36–48.

- Sun, C.; Xie, J.; Leng, Y. A signal subspace speech enhancement approach based on joint low-rank and sparse matrix decomposition. Arch. Acoust. 2016, 41, 245–254.

- Benesty, J.; Chen, J.; Huang, Y. Speech Enhancement in the Karhunen-Loève Expansion Domain; Morgan & Claypool Publishers: San Rafael, CA, USA, 2011.

- Widrow, B.; Glover, J.R.; McCool, J.M.; Kaunitz, J.; Williams, C.S.; Hearn, R.H.; Zeidler, J.R.; Dong, J.E.; Goodlin, R.C. Adaptive noise cancelling: Principles and applications. Proc. IEEE 1975, 63, 1692–1716.

- Capon, J. Investigation of long-period noise at the large aperture seismic array. J. Geophys. Res. 1969, 74, 3182–3194.

- Benesty, J.; Chen, J.; Habets, E.A.P. Speech Enhancement in the STFT Domain; Springer Science & Business Media: Heidelberg, Germany, 2011.

- Pados, D.A.; Karystinos, G.N. An iterative algorithm for the computation of the MVDR filter. IEEE Trans. Signal Process. 2001, 49, 290–300.

- Frost, O.L. An Algorithm for Linearly Constrained Adaptive Array Processing. Proc. IEEE 1972, 60, 926–935.

- Christensen, M.G.; Jakobsson, A. Optimal filter designs for separating and enhancing periodic signals. IEEE Trans. Signal Process. 2010, 58, 5969–5983.

- Wiener, F.M. The diffraction of sound by rigid disks and rigid square plates. J. Acoust. Soc. Am. 1949, 21, 334–347.

- Hadei, S.; Lotfizad, M. A family of adaptive filter algorithms in noise cancellation for speech enhancement. arXiv 2011, arXiv:1106.0846.

- Frazier, R.; Samsam, S.; Braida, L.; Oppenheim, A. Enhancement of speech by adaptive filtering. In Proceedings of the ICASSP’76 IEEE International Conference on Acoustics, Speech, and Signal Processing, Philadelphia, PA, USA, 12–14 April 1976; pp. 251–253.

- Lim, J.; Oppenheim, A. All-pole modeling of degraded speech. IEEE Trans. Acoust. Speech Signal Process. 1978, 26, 197–210.

- Ephraim, Y. A Bayesian estimation approach for speech enhancement using hidden Markov models. IEEE Trans. Signal Process. 1992, 40, 725–735.

- Xiang, Y.; Shi, L.; Højvang, J.L.; Rasmussen, M.H.; Christensen, M.G. A novel NMF-HMM speech enhancement algorithm based on Poisson mixture model. In Proceedings of the ICASSP 2021 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Toronto, ON, Canada, 6–11 June 2021; pp. 721–725.

- Xiang, Y.; Shi, L.; Højvang, J.L.; Rasmussen, M.H.; Christensen, M.G. A speech enhancement algorithm based on a non-negative hidden Markov model and Kullback-Leibler divergence. EURASIP J. Audio Speech Music Process. 2022, 2022, 22.

- Scalart, P.; Filho, J.V. Speech enhancement based on a priori signal to noise estimation. In Proceedings of the 1996 IEEE International Conference on Acoustics, Speech, and Signal Processing Conference Proceedings, Atlanta, GA, USA, 7–10 May 1996; pp. 629–632.

- Bolner, F.; Goehring, T.; Monaghan, J.; Van Dijk, B.; Wouters, J.; Bleeck, S. Speech enhancement based on neural networks applied to cochlear implant coding strategies. In Proceedings of the 2016 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Shanghai, China, 20–25 March 2016; pp. 6520–6524.

- Xia, B.; Bao, C. Wiener filtering based speech enhancement with weighted denoising auto-encoder and noise classification. Speech Commun. 2014, 60, 13–29.

- Mitchell, T.M. Does machine learning really work? AI Mag. 1997, 18, 11–19.

- Wang, D.; Chen, J. Supervised speech separation based on deep learning: An overview. IEEE/ACM Trans. Audio Speech Lang. Process. 2018, 26, 1702–1725.

- Yuan, W.; Xia, B. A speech enhancement approach based on noise classification. Appl. Acoust. 2015, 96, 11–19.

- Kinoshita, Y.; Hirakawa, R.; Kawano, H.; Nakashi, K.; Nakatoh, Y. Speech enhancement system using SVM for train announcement. In Proceedings of the 2021 IEEE International Conference on Consumer Electronics (ICCE), Las Vegas, NV, USA, 10–12 January 2021; pp. 1–3.

- Kong, Y.Y.; Mullangi, A.; Kokkinakis, K. Classification of fricative consonants for speech enhancement in hearing devices. PLoS ONE 2014, 9, e95001.

- Manamperi, W.; Samarasinghe, P.N.; Abhayapala, T.D.; Zhang, J. GMM based multi-stage Wiener filtering for low SNR speech enhancement. In Proceedings of the 2022 International Workshop on Acoustic Signal Enhancement (IWAENC), Bamberg, Germany, 5–8 September 2022; pp. 1–5.

- Soundarya, M.; Anusuya, S.; Narayanan, L.K. Enhancing Automatic Speech Recognition Accuracy Using a Gaussian Mixture Model (GMM). In Proceedings of the 3rd International Conference on Optimization Techniques in the Field of Engineering (ICOFE-2024), Sambalpur, India, 22–24 January 2024.

- Subramani, K.; Smaragdis, P.; Higuchi, T.; Souden, M. Rethinking Non-Negative Matrix Factorization with Implicit Neural Representations. arXiv 2024, arXiv:2404.04439.

- Lee, D.; Seung, H.S. Algorithms for non-negative matrix factorization. In Proceedings of the Advances in Neural Information Processing Systems, Denver, CO, USA, 1 January 2000; Volume 13.

- Virtanen, T. Monaural sound source separation by nonnegative matrix factorization with temporal continuity and sparseness criteria. IEEE Trans. Audio Speech Lang. Process. 2007, 15, 1066–1074.

- Yuliani, A.R.; Amri, M.F.; Suryawati, E.; Ramdan, A.; Pardede, H.F. Speech enhancement using deep learning methods: A review. J. Elektron. Dan Telekomun. 2021, 21, 19–26.

- Nguyen, B.T.; Wakabayashi, Y.; Geng, Y.; Iwai, K.; Nishiura, T. DNN-based phase estimation for online speech enhancement. Acoust. Sci. Technol. 2025, 46, 186–190.

- Ke, Y.; Li, A.; Zheng, C.; Peng, R.; Li, X. Low-complexity artificial noise suppression methods for deep learning-based speech enhancement algorithms. EURASIP J. Audio Speech Music Process. 2021, 2021, 17.

- Wu, J.; Hua, Y.; Yang, S.; Qin, H.; Qin, H. Speech enhancement using generative adversarial network by distilling knowledge from statistical method. Appl. Sci. 2019, 9, 3396.

- Pascual, S.; Bonafonte, A.; Serra, J. SEGAN: Speech enhancement generative adversarial network. arXiv 2017, arXiv:1703.09452.

- Serbest, S.; Stojkovic, T.; Cernak, M.; Harper, A. DeepFilterGAN: A Full-band Real-time Speech Enhancement System with GAN-based Stochastic Regeneration. arXiv 2025, arXiv:2505.23515.

- Shetu, S.S.; Habets, E.A.P.; Brendel, A. GAN-based speech enhancement for low SNR using latent feature conditioning. In Proceedings of the ICASSP 2025 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Hyderabad, India, 6–11 April 2025; pp. 1–5.

- Gu, J.; Wang, Z.; Kuen, J.; Ma, L.; Shahroudy, A.; Shuai, B.; Liu, T.; Wang, X.; Wang, G.; Cai, J.; et al. Recent advances in convolutional neural networks. Pattern Recognit. 2018, 77, 354–377.

- Bhat, G.S.; Shankar, N.; Reddy, C.K.A.; Panahi, I.M.S. A real-time convolutional neural network based speech enhancement for hearing impaired listeners using smartphone. IEEE Access 2019, 7, 78421–78433.

- Pandey, A.; Wang, D. TCNN: Temporal convolutional neural network for real-time speech enhancement in the time domain. In Proceedings of the ICASSP 2019 IEEE International Conference on Acoustics, Speech, and Signal Processing (ICASSP), Brighton, UK, 12–17 May 2019; pp. 6875–6879.

- Lin, X.; Leglaive, S.; Girin, L.; Alameda-Pineda, X. Unsupervised speech enhancement with deep dynamical generative speech and noise models. arXiv 2023, arXiv:2306.07820.

- Bie, X.; Leglaive, S.; Alameda-Pineda, X.; Girin, L. Unsupervised speech enhancement using dynamical variational autoencoders. IEEE/ACM Trans. Audio Speech Lang. Process. 2022, 30, 2993–3007.

- Cui, Z.; Zhang, S.; Chen, Y.; Gao, Y.; Deng, C.; Feng, J. Semi-Supervised Speech Enhancement Based On Speech Purity. In Proceedings of the ICASSP 2023 IEEE International Conference on Acoustics, Speech, and Signal Processing (ICASSP), Rhodos, Greece, 4–10 June 2023; pp. 1–5.

- Ullah, R.; Islam, M.S.; Ye, Z.; Asif, M. Semi-supervised transient noise suppression using OMLSA and SNMF algorithms. Appl. Acoust. 2020, 170, 107533.

- Purushotham, U.; Chethan, K.; Manasa, S.; Meghana, U. Speech enhancement using semi-supervised learning. In Proceedings of the 2020 International Conference on Intelligent Engineering and Management (ICIEM), London, UK, 17–19 June 2020; IEEE: Piscataway, NJ, USA, 2020; pp. 381–385.

- Bando, Y.; Mimura, M.; Itoyama, K.; Yoshii, K.; Kawahara, T. Statistical speech enhancement based on probabilistic integration of variational autoencoder and non-negative matrix factorization. In Proceedings of the 2018 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Calgary, AB, Canada, 15–20 April 2018; pp. 716–720.

- Doclo, S.; Kellermann, W.; Makino, S.; Nordholm, S.E. Multichannel signal enhancement algorithms for assisted listening devices: Exploiting spatial diversity using multiple microphones. IEEE Signal Process. Mag. 2015, 32, 18–30.

- Zaland, Z.; Mustafa, M.B.; Kiah, M.L.M.; Ting, H.N.; Yusoof, M.A.M.; Don, Z.M.; Muthaiyah, S. Multichannel speech enhancement for automatic speech recognition: A literature review. PeerJ Comput. Sci. 2025, 11, e2772.

- Xie, Y.; Zou, T.; Sun, W.; Xie, S. Blind extraction-based multichannel speech enhancement in noisy and reverberation environments. IEEE Sens. Lett. 2025, 9, 7001104.

- Kajala, M. A Multi-Microphone Beamforming Algorithm with Adjustable Filter Characteristics. Ph.D. Thesis, Tampere University, Tampere, Finland, 2021.

- Sawada, H.; Kameoka, H.; Araki, S.; Ueda, N. New formulations and efficient algorithms for multichannel NMF. In Proceedings of the 2011 IEEE Workshop on Applications of Signal Processing to Audio and Acoustics (WASPAA), New Paltz, NY, USA, 16–19 October 2011; pp. 153–156.

- Pandey, A.; Wang, D. On cross-corpus generalization of deep learning based speech enhancement. IEEE/ACM Trans. Audio Speech Lang. Process. 2020, 28, 2489–2499.

- Nossier, S.A.; Wall, J.; Moniri, M.; Glackin, C.; Cannings, N. An experimental analysis of deep learning architectures for supervised speech enhancement. Electronics 2020, 10, 17.

- Cohen, I.; Berdugo, B. Speech enhancement for non-stationary noise environments. Signal Process. 2001, 81, 2403–2418.

- Zhao, Y.; Wang, D. Noisy-Reverberant Speech Enhancement Using DenseUNet with Time-Frequency Attention. In Proceedings of the 21st Annual Conference of the International Speech Communication Association (Interspeech), Shanghai, China, 25–29 October 2020; pp. 3261–3265.

- Reddy, C.K.A.; Beyrami, E.; Pool, J.; Cutler, R.; Srinivasan, S.; Gehrke, J. A scalable noisy speech dataset and online subjective test framework. arXiv 2019, arXiv:1909.08050.

- Richter, J.; Wu, Y.; Krenn, S.; Welker, S.; Lay, B.; Watanabe, S.; Richard, A.; Gerkmann, T. EARS: An anechoic fullband speech dataset benchmarked for speech enhancement and dereverberation. arXiv 2024, arXiv:2406.06185.

- Garofolo, J.S.; Lamel, L.F.; Fisher, W.M.; Fiscus, J.G.; Pallett, D.S.; Dahlgren, N.L.; Zue, V. TIMIT Acoustic-Phonetic Continuous Speech Corpus; Linguistic Data Consortium: Philadelphia, PA, USA, 1993.

- Botinhao, C.V.; Wang, X.; Takaki, S.; Yamagishi, J. Speech enhancement for a noise-robust text-to-speech synthesis system using deep recurrent neural networks. In Proceedings of the 17th Annual Conference of the International Speech Communication Association (Interspeech), San Francisco, CA, USA, 8–12 September 2016; pp. 352–356.

- Barker, J.P.; Marxer, R.; Vincent, E.; Watanabe, S. The CHiME challenges: Robust speech recognition in everyday environments. In New Era for Robust Speech Recognition: Exploiting Deep Learning; Springer: Berlin/Heidelberg, Germany, 2017; pp. 327–344.

- Watanabe, S.; Mandel, M.; Barker, J.; Vincent, E.; Arora, A.; Chang, X.; Khudanpur, S.; Manohar, V.; Povey, D.; Raj, D.; et al. CHiME-6 challenge: Tackling multispeaker speech recognition for unsegmented recordings. arXiv 2020, arXiv:2004.09249.

- Reddy, C.K.A.; Gopal, V.; Cutler, R.; Beyrami, E.; Cheng, R.; Dubey, H.; Matusevych, S.; Aichner, R.; Aazami, A.; Braun, S.; et al. The Interspeech 2020 deep noise suppression challenge: Datasets, subjective testing framework, and challenge results. arXiv 2020, arXiv:2005.13981.

- Kong, Q.; Liu, H.; Du, X.; Chen, L.; Xia, R.; Wang, Y. Speech enhancement with weakly labelled data from AudioSet. arXiv 2021, arXiv:2102.09971.

- Li, H.; Yamagishi, J. DDS: A new device-degraded speech dataset for speech enhancement. arXiv 2021, arXiv:2109.07931.

- Mitra, V.; Wang, W.; Franco, H.; Lei, Y.; Bartels, C.; Graciarena, M. Evaluating robust features on deep neural networks for speech recognition in noisy and channel mismatched conditions. In Proceedings of the 15th Annual Conference of the International Speech Communication Association (Interspeech), Singapore, 14–18 September 2014; pp. 895–899.

- Kinoshita, K.; Delcroix, M.; Yoshioka, T.; Nakatani, T.; Habets, E.; Haeb-Umbach, R.; Leutnant, V.; Sehr, A.; Kellermann, W.; Maas, R.; et al. The REVERB challenge: A common evaluation framework for dereverberation and recognition of reverberant speech. In Proceedings of the 2013 IEEE Workshop on Applications of Signal Processing to Audio and Acoustics, New Paltz, NY, USA, 20–23 October 2013; pp. 1–4.

- Maciejewski, M.; Wichern, G.; McQuinn, E.; Le Roux, J. WHAMR!: Noisy and reverberant single-channel speech separation. In Proceedings of the ICASSP 2020 IEEE International Conference on Acoustics, Speech, and Signal Processing (ICASSP), Barcelona, Spain, 4–8 May 2020; pp. 696–700.

- Mahmmod, B.M.; Baker, T.; Al-Obeidat, F.; Abdulhussain, S.H.; Jassim, W.A. Speech enhancement algorithm based on super-Gaussian modeling and orthogonal polynomials. IEEE Access 2019, 7, 103485–103504.

- Hu, Y.; Loizou, P.C. Subjective comparison and evaluation of speech enhancement algorithms. Speech Commun. 2007, 49, 588–601.

- Thiemann, J.; Ito, N.; Vincent, E. The Diverse Environments Multi-Channel Acoustic Noise Database (DEMAND): A Database of Multichannel Environmental Noise Recordings. In Proceedings of Meetings on Acoustics; Acoustical Society of America: Melville, NY, USA, 2013; Available online: https://cir.nii.ac.jp/crid/1881709542901868672 (accessed on 5 January 2026).

- Vincent, E.; Watanabe, S.; Nugraha, A.A.; Barker, J.; Marxer, R. An analysis of environment, microphone and data simulation mismatches in robust speech recognition. Comput. Speech Lang. 2017, 46, 535–557.

- Gemmeke, J.F.; Ellis, D.P.; Freedman, D.; Jansen, A.; Lawrence, W.; Moore, R.C.; Plakal, M.; Ritter, M. Audio Set: An ontology and human-labeled dataset for audio events. In Proceedings of the 2017 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), New Orleans, LA, USA, 5–9 March 2017; pp. 776–780.

- Qian, Y.; Bi, M.; Tan, T.; Yu, K. Very deep convolutional neural networks for noise robust speech recognition. IEEE/ACM Trans. Audio Speech Lang. Process. 2016, 24, 2263–2276.

- Ribas, D.; Llombart, J.; Miguel, A.; Vicente, L. Deep speech enhancement for reverberated and noisy signals using wide residual networks. arXiv 2019, arXiv:1901.00660.

- Loizou, P.C. Speech quality assessment. In Multimedia Analysis, Processing and Communications; Springer: Berlin/Heidelberg, Germany, 2011; pp. 623–654.

- Hu, Y.; Loizou, P.C. Evaluation of objective quality measures for speech enhancement. IEEE Trans. Audio Speech Lang. Process. 2007, 16, 229–238.

- Mahmmod, B.M.; Abdulhussain, S.H.; Ali, T.M.; Alsabah, M.; Hussain, A.; Al-Jumeily, D. Speech Enhancement: A Review of Various Approaches, Trends, and Challenges. In Proceedings of the 2024 17th International Conference on Development in eSystem Engineering (DeSE), Khorfakkan, United Arab Emirates, 6–8 November 2024; pp. 31–36.

- Beerends, J.G.; Hekstra, A.P.; Rix, A.W.; Hollier, M.P. Perceptual evaluation of speech quality (PESQ): The new ITU standard for end-to-end speech quality assessment part II-psychoacoustic model. J. Audio Eng. Soc. 2002, 50, 765–778.

- de Oliveira, D.; Welker, S.; Richter, J.; Gerkmann, T. The PESQetarian: On the Relevance of Goodhart’s Law for Speech Enhancement. arXiv 2024, arXiv:2406.03460.

- Grundlehner, B.; Lecocq, J.; Balan, R.; Rosca, J. Performance assessment method for speech enhancement systems. In Proceedings of the 1st Annual IEEE BENELUX/DSP Valley Signal Processing Symposium (SPS-DARTS 2005), Antwerp, Belgium, 19–20 April 2005; pp. 1–4.

- Hou, M.; Kodrasi, I. Influence of Clean Speech Characteristics on Speech Enhancement Performance. arXiv 2025, arXiv:2509.18885.

- Kates, J.M.; Arehart, K.H. Coherence and the speech intelligibility index. J. Acoust. Soc. Am. 2005, 117, 2224–2237.

- Taal, C.H.; Hendriks, R.C.; Heusdens, R.; Jensen, J. An algorithm for intelligibility prediction of time–frequency weighted noisy speech. IEEE Trans. Audio Speech Lang. Process. 2011, 19, 2125–2136.

- Medani, M.; Saleem, N.; Fkih, F.; Alohali, M.A.; Elmannai, H.; Bourouis, S. End-to-end feature fusion for jointly optimized speech enhancement and automatic speech recognition. Sci. Rep. 2025, 15, 22892.

- Hwang, S.; Park, S.W.; Park, Y. Design of a Dual-Path Speech Enhancement Model. Appl. Sci. 2025, 15, 6358.

- Jassim, W.A.; Paramesran, R.; Zilany, M.S.A. Enhancing noisy speech signals using orthogonal moments. IET Signal Process. 2014, 8, 891–905.

- Ding, H.; Lee, T.; Soon, Y.; Yeo, C.K.; Dai, P.; Dan, G. Objective measures for quality assessment of noise-suppressed speech. Speech Commun. 2015, 71, 62–73.

- Rosenbaum, T.; Winebrand, E.; Cohen, O.; Cohen, I. Deep-learning framework for efficient real-time speech enhancement and dereverberation. Sensors 2025, 25, 630.

- Jepsen, S.D.; Christensen, M.G.; Jensen, J.R. A study of the scale invariant signal to distortion ratio in speech separation with noisy references. arXiv 2025, arXiv:2508.14623.

- Zhang, H.; Yu, M.; Wu, Y.; Yu, T.; Yu, D. Hybrid AHS: A hybrid of Kalman filter and deep learning for acoustic howling suppression. arXiv 2023, arXiv:2305.02583.

- Priyanka, S.S. A review on adaptive beamforming techniques for speech enhancement. In Proceedings of the 2017 Innovations in Power and Advanced Computing Technologies, Vellore, India, 21–22 April 2017; pp. 1–6.

- Sakhnov, K.; Verteletskaya, E.; Simak, B. Dynamical energy-based speech/silence detector for speech enhancement applications. In Proceedings of the World Congress on Engineering, London, UK, 1–3 July 2009; Volume 2.

- Nossier, S.A.; Rizk, M.R.M.; Moussa, N.D.; El Shehaby, S. Enhanced smart hearing aid using deep neural networks. Alex. Eng. J. 2019, 58, 539–550.

- Grace, N.V.A.; Sumithra, M.G. Speech enhancement in hands free communication. In Proceedings of the 4th WSEAS International Conference on Electronic, Signal Processing and Control, Rio de Janeiro, Brazil, 25–27 April 2007; pp. 1–6.

- Alvarez, D.A. Speech Enhancement Algorithms for Audiological Applications. Ph.D. Thesis, Universidad de Alcalá, Alcalá de Henares, Spain, 2013.

- Kalamani, M.; Valarmathy, S.; Poonkuzhali, C.; JN, C. Feature selection algorithms for automatic speech recognition. In Proceedings of the 2014 International Conference on Computer Communication and Informatics, Udaipur Rajasthan, India, 14–16 November 2014; IEEE: Piscataway, NJ, USA, 2014; pp. 1–7.

- Das, N.; Chakraborty, S.; Chaki, J.; Padhy, N.; Dey, N. Fundamentals, present and future perspectives of speech enhancement. Int. J. Speech Technol. 2021, 24, 883–901.

- Dey, S.; Barman, S.; Bhukya, R.K.; Das, R.K.; Haris, B.C.; Prasanna, S.M.; Sinha, R. Speech biometric based attendance system. In Proceedings of the 2014 Twentieth National Conference on Communications (NCC), Kanpur, India, 28 February–2 March 2014; pp. 1–6.

- Natarajan, S.; Al-Haddad, S.A.R.; Ahmad, F.A.; Kamil, R.; Hassan, M.K.; Azrad, S.; Macleans, J.F.; Abdulhussain, S.H.; Mahmmod, B.M.; Saparkhojayev, N.; et al. Deep neural networks for speech enhancement and speech recognition: A systematic review. Ain Shams Eng. J. 2025, 16, 103405.

- Rehr, R.; Gerkmann, T. Normalized features for improving the generalization of DNN based speech enhancement. arXiv 2017, arXiv:1709.02175.

- Brons, I.; Dreschler, W.A.; Houben, R. Detection threshold for sound distortion resulting from noise reduction in normal-hearing and hearing-impaired listeners. J. Acoust. Soc. Am. 2014, 136, 1375–1384.

- Lu, Y.X.; Ai, Y.; Ling, Z.H. Explicit estimation of magnitude and phase spectra in parallel for high-quality speech enhancement. Neural Netw. 2025, 189, 107562.

- Choi, H.S.; Kim, J.H.; Huh, J.; Kim, A.; Ha, J.W.; Lee, K. Phase-aware speech enhancement with deep complex U-Net. In Proceedings of the International Conference on Learning Representations, Vancouver, BC, Canada, 30 April–3 May 2018.

- Sinha, R.; Rollwage, C.; Doclo, S. Low-complexity Real-time Single-channel Speech Enhancement Based on Skip-GRUs. In Proceedings of the Speech Communication, 15th ITG Conference, Aachen, Germany, 20–22 September 2023; pp. 181–185.

- Valentini-Botinhao, C.; Yamagishi, J. Speech enhancement of noisy and reverberant speech for text-to-speech. IEEE/ACM Trans. Audio Speech Lang. Process. 2018, 26, 1420–1433.

- Li, A.; Liu, W.; Luo, X.; Yu, G.; Zheng, C.; Li, X. A simultaneous denoising and dereverberation framework with target decoupling. arXiv 2021, arXiv:2106.12743.

- Song, S.; Cheng, L.; Luan, S.; Yao, D.; Li, J.; Yan, Y. An integrated multi-channel approach for joint noise reduction and dereverberation. Appl. Acoust. 2021, 171, 107526.

| Method Type | Performance Trade-Offs | Computational Complexity (Low/Medium/High) | Real-Time Applicability | Strengths | Limitations |
|---|---|---|---|---|---|
| Spectral Subtraction [27] (Single Channel) | Speech quality may be affected if noise estimation is inaccurate. | Low | Available | Simple, fast, and no training required; effective with stationary noise. | Musical noise artifacts and poor performance in non-stationary noise. |
| STSA-MMSE [29] (Single Channel) | Improves speech quality with reduced musical noise. | Medium | Available | Suitable for stationary and quasi-stationary noise. | Limited performance in highly non-stationary noise; relies on statistical assumptions. |
| Wiener Filtering (WF) [34] (Single Channel) | Balances noise reduction while maintaining speech clarity. | Medium | Available | Clear and understandable statistical framework. | Depends on accurate SNR estimation; ineffective in highly non-stationary noise and complex environments. |
| Signal Subspace Algorithms (SSA) [38] (Single Channel) | Good speech–noise separation when subspaces are well estimated. | Medium–High | Limited | Reduces distortion; suitable for signals with clear structure. | High computational cost and poor robustness to non-stationary noise. |
| Traditional Machine Learning (SVM [59], GMM [62], NMF [64]) (Single Channel) | Better than spectral methods but weaker than deep learning in complex scenarios; highly dependent on feature quality. | Medium | Available | Works well with limited data; effective for noise classification and structured noise. | Requires handcrafted features; weaker performance in complex and unseen noise compared to deep learning. |
| Deep Learning Approaches (Supervised [6], Semi-Supervised [79], Unsupervised [77]) (Single Channel) | Best performance in audio quality and intelligibility, but highly dependent on training strategy and data diversity. | High | Limited | Strong performance in non-stationary noise; no manual feature design. | Requires large datasets; limited interpretability; deployment challenges in real-time and low-resource systems. |
| Blind Source Separation [85] (Multi-Channel) | Highly effective when multiple microphones are available and sources are spatially independent. | Medium–High | Limited | Exploits spatial information; effective for multi-source scenarios. | Sensitive to reverberation; limited robustness in complex environments. |
| Beamforming [86] (Multi-Channel) | Strong performance when the speech source direction is known or estimable. | Medium | Available | Exploits spatial information; improves SNR; suitable as a pre-processing stage. | Limited performance in highly reverberant or spatially overlapping noise. |
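
The contrast between the spectral subtraction and Wiener filtering rows above can be illustrated for a single time–frequency bin. The following is a minimal sketch, assuming the noise power in the bin is already known; the function names, the `floor` parameter, and the maximum-likelihood SNR estimate used in the demo are illustrative choices and are not taken from the cited works.

```python
import math


def spectral_subtraction_gain(noisy_power: float, noise_power: float,
                              floor: float = 0.01) -> float:
    """Amplitude gain of power spectral subtraction for one bin.

    The clean power is estimated as noisy_power - noise_power and clamped
    to floor * noisy_power (a spectral floor, assumed here) so that it
    never becomes negative.
    """
    if noisy_power <= 0.0:
        return 0.0
    clean_power = max(noisy_power - noise_power, floor * noisy_power)
    return math.sqrt(clean_power / noisy_power)


def wiener_gain(a_priori_snr: float) -> float:
    """Wiener gain xi / (1 + xi) for a linear-scale a priori SNR xi."""
    return a_priori_snr / (1.0 + a_priori_snr)


if __name__ == "__main__":
    noise_power = 1.0  # assumed known for this illustration
    for noisy_power in (0.5, 2.0, 11.0):
        g_ss = spectral_subtraction_gain(noisy_power, noise_power)
        # Maximum-likelihood a priori SNR estimate: max(gamma - 1, 0),
        # where gamma = noisy_power / noise_power is the a posteriori SNR.
        xi = max(noisy_power / noise_power - 1.0, 0.0)
        g_wf = wiener_gain(xi)
        print(f"noisy power {noisy_power:5.1f}: "
              f"SS gain {g_ss:.3f}, Wiener gain {g_wf:.3f}")
```

Both gains are applied to the noisy spectral amplitude, so each needs a reliable noise (or SNR) estimate, which matches the limitations listed in the table. Bins whose noisy power falls below the noise estimate are clamped to the floor in the subtraction rule; this kind of hard clamping is commonly associated with the isolated residual peaks perceived as musical noise.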

| Dataset Name | Type of Data | Description | Reference | Purpose |
|---|---|---|---|---|
| EARS | Clean Speech | Contains approximately 100 h of clean speech recordings at a sampling rate of 48 kHz from 107 speakers with diverse backgrounds. | [93] | Speech enhancement and dereverberation |
| TIMIT | Clean Speech | Includes 630 speakers across eight different American English accents. Each speaker records ten sentences using a high-quality microphone in a quiet room. The sampling rate is 16 kHz. | [94,104] | Speech recognition |
| NOIZEUS | Clean and Noisy Speech | Consists of 30 spoken sentences from six speakers (three male and three female). Clean speech is corrupted with realistic noises such as babble, car, train, street, airport, and restaurant at SNR levels of 0, 5, 10, and 15 dB. The sampling rate is 8 kHz. | [4,105] | Evaluation of single-channel speech enhancement algorithms |
| DEMAND | Real Environmental Noise | A database of 16-channel environmental noise recordings captured from real (non-simulated) environments using multiple microphones. The sampling rate is 48 kHz. | [95,106] | Testing algorithms using real-world noise in diverse environments |
| CHiME-4/CHiME-6 | Real-world Noisy Speech | CHiME-4 includes real and simulated multi-channel recordings based on WSJ0 sentences. CHiME-6 was recorded in real homes using multiple microphone arrays in different rooms. | [96,97,107] | CHiME-4: Evaluation of speech enhancement and multi-microphone ASR systems. CHiME-6: Speech recognition in everyday home environments |
| DNS Challenge Dataset | Real-world Noise with Simulated Noisy Speech | Contains thousands of hours of clean speech and real environmental noises, including office, street, car, restaurant, factory, storm, and household noises. | [98] | Training and evaluation of real-time deep noise suppression models |
| AudioSet | Weakly labeled Noisy Audio | A large-scale dataset containing approximately 5000 h of 10 s audio clips collected from YouTube, covering over 500 sound categories. | [99,108] | Training and evaluation of audio event classification systems |
| DDS Dataset | Noisy–Clean Speech | Includes studio-quality clean speech and corresponding distorted versions, totaling 1944 h of recordings collected under 27 realistic conditions using three microphones and nine acoustic environments. | [100] | Training and evaluation of speech enhancement models and adaptation for ASR systems |
| AURORA-4 | Noisy Speech | Contains 16 kHz speech data derived from the WSJ0 corpus with additive noises at SNRs between 10 and 20 dB. The training set includes 7138 utterances from 83 speakers. | [101,109] | Evaluation of speech enhancement and speech recognition systems |
| REVERB Challenge Dataset | Reverberant Noisy Speech | A database of speech affected by reverberation and noise, including real recordings and simulated far-field microphone data at 16 kHz. | [102,110] | Evaluation of robust speech recognition algorithms |
| WHAMR! | Clean, Noisy, and Reverberant Speech | Includes clean speech, two-speaker mixtures, real environmental noise, reverberation, and full mixtures combining speech, noise, and reverberation with random gains. | [103] | Evaluation of speech enhancement, echo cancellation, and speaker separation algorithms |
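
Several of the corpora above pair clean speech with noise at fixed SNR levels (for example 0, 5, 10, and 15 dB). The following is a minimal sketch of how a noise recording can be scaled so that a mixture reaches a target SNR, assuming SNR is defined on the average power of the whole signal. The helper names `mix_at_snr` and `measured_snr_db` are hypothetical, the signals are synthetic, and this is not the procedure used to build any of the listed datasets.

```python
import math
import random


def mix_at_snr(clean, noise, snr_db):
    """Scale `noise` so the clean-to-noise power ratio equals `snr_db` (dB),
    then add it to `clean`. Returns (noisy, scaled_noise)."""
    if len(clean) != len(noise):
        raise ValueError("clean and noise must have the same length")
    clean_power = sum(x * x for x in clean) / len(clean)
    noise_power = sum(n * n for n in noise) / len(noise)
    if clean_power == 0.0 or noise_power == 0.0:
        raise ValueError("signals must have non-zero power")
    # Target noise power follows from SNR = 10 * log10(P_clean / P_noise).
    target_noise_power = clean_power / (10.0 ** (snr_db / 10.0))
    scale = math.sqrt(target_noise_power / noise_power)
    scaled_noise = [scale * n for n in noise]
    noisy = [x + n for x, n in zip(clean, scaled_noise)]
    return noisy, scaled_noise


def measured_snr_db(clean, noise):
    """SNR in dB computed from the average power of each signal."""
    clean_power = sum(x * x for x in clean) / len(clean)
    noise_power = sum(n * n for n in noise) / len(noise)
    return 10.0 * math.log10(clean_power / noise_power)


if __name__ == "__main__":
    sample_rate = 8000  # Hz, chosen only for this synthetic example
    rng = random.Random(0)
    # A 440 Hz tone stands in for clean speech; Gaussian samples for noise.
    clean = [math.sin(2.0 * math.pi * 440.0 * t / sample_rate)
             for t in range(sample_rate // 10)]
    noise = [rng.gauss(0.0, 1.0) for _ in clean]
    for target in (0, 5, 10, 15):
        noisy, scaled = mix_at_snr(clean, noise, target)
        print(f"target {target:2d} dB, "
              f"measured {measured_snr_db(clean, scaled):.2f} dB")
```

Real corpora often measure speech level only over active speech segments rather than the whole file, so SNR values produced by this whole-signal definition may differ from those reported for a given dataset.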

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Abdulhusein, N.T.; Mahmmod, B.M. An In-Depth Review of Speech Enhancement Algorithms: Classifications, Underlying Principles, Challenges, and Emerging Trends. Algorithms 2026, 19, 134. https://doi.org/10.3390/a19020134
