A Multi-Agent Cooperative Group Game Model Based on Intention-Strategy Optimization

Abstract

1. Introduction

2. Fundamental Theory

2.1. Multi-Agent Reinforcement Learning Theory

2.2. Intention Recognition Theory

2.2.1. Bayesian Intention Inference

2.2.2. Recursive Reasoning Model

2.3. Cooperative Game Theory

2.3.1. Coalition Game Fundamentals

2.3.2. Shapley Value

2.3.3. Core Solution

3. Intention-Strategy Optimization Based Multi-Agent Cooperative Group Game Model

3.1. Overall Framework Design

3.2. Group Attention-Based Intention Recognition Network

3.2.1. Dynamic Graph Construction

3.2.2. Multi-Head Attention Mechanism

3.2.3. Intention Prediction

3.2.4. Intention Label Generation

- (1) Rule-Based Labeling for Structured Scenarios

  - Navigate: Agent’s velocity vector points toward its target with cosine similarity > 0.8.

  - Avoid: Agent deviates from shortest path while another agent is within d_safe = 2.0 units.

  - Wait: Agent remains stationary (speed < 0.1) for more than 3 consecutive timesteps.

  - Replan: Agent changes movement direction by more than 90° within 5 timesteps.

- (2) Trajectory Clustering for Complex Scenarios

- (3) Label Quality Assurance
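
The rule-based labels for structured scenarios can be sketched in a few lines. This is a minimal illustration and not the authors' labeling code: the function name `label_intention`, the 2-D tuple representation, the rule priority (Wait, then Replan, then Avoid, then Navigate), and the reading of "deviates from shortest path" as "heading cosine toward the target is not above 0.8" are all assumptions. Only the thresholds come from the rules above.

```python
import math

# Thresholds quoted in the labeling rules above.
COS_NAVIGATE = 0.8   # cosine similarity between velocity and target direction
D_SAFE = 2.0         # proximity radius used by the Avoid rule
SPEED_WAIT = 0.1     # speed below which an agent counts as stationary
WAIT_STEPS = 3       # must be stationary for MORE than this many steps
REPLAN_WINDOW = 5    # timesteps inspected for a heading change above 90 degrees


def _cos(u, v):
    """Cosine similarity of two 2-D vectors; 0.0 if either is (near) zero."""
    nu, nv = math.hypot(*u), math.hypot(*v)
    if nu < 1e-9 or nv < 1e-9:
        return 0.0
    return (u[0] * v[0] + u[1] * v[1]) / (nu * nv)


def label_intention(positions, velocities, target, others):
    """Label the latest timestep of one agent's trajectory.

    positions, velocities: lists of (x, y) tuples, oldest first.
    target: (x, y) goal of this agent.
    others: current (x, y) positions of the other agents.
    Returns a label string, or None if no rule fires.
    """
    pos, vel = positions[-1], velocities[-1]

    # Wait: stationary for more than WAIT_STEPS consecutive timesteps.
    recent = velocities[-(WAIT_STEPS + 1):]
    if len(recent) > WAIT_STEPS and all(math.hypot(*v) < SPEED_WAIT for v in recent):
        return "Wait"

    # Replan: a heading change above 90 degrees means a negative cosine
    # between the current velocity and an earlier one inside the window.
    if math.hypot(*vel) >= SPEED_WAIT:
        for old in velocities[-(REPLAN_WINDOW + 1):-1]:
            if math.hypot(*old) >= SPEED_WAIT and _cos(old, vel) < 0.0:
                return "Replan"

    to_target = (target[0] - pos[0], target[1] - pos[1])
    heading_ok = _cos(vel, to_target) > COS_NAVIGATE

    # Avoid (assumed reading): off the direct heading while a neighbour is within D_SAFE.
    if not heading_ok and any(math.dist(pos, o) <= D_SAFE for o in others):
        return "Avoid"

    return "Navigate" if heading_ok else None
```

A different priority order would change the labels of timesteps that satisfy several rules at once, so the ordering should be treated as a tunable design choice.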

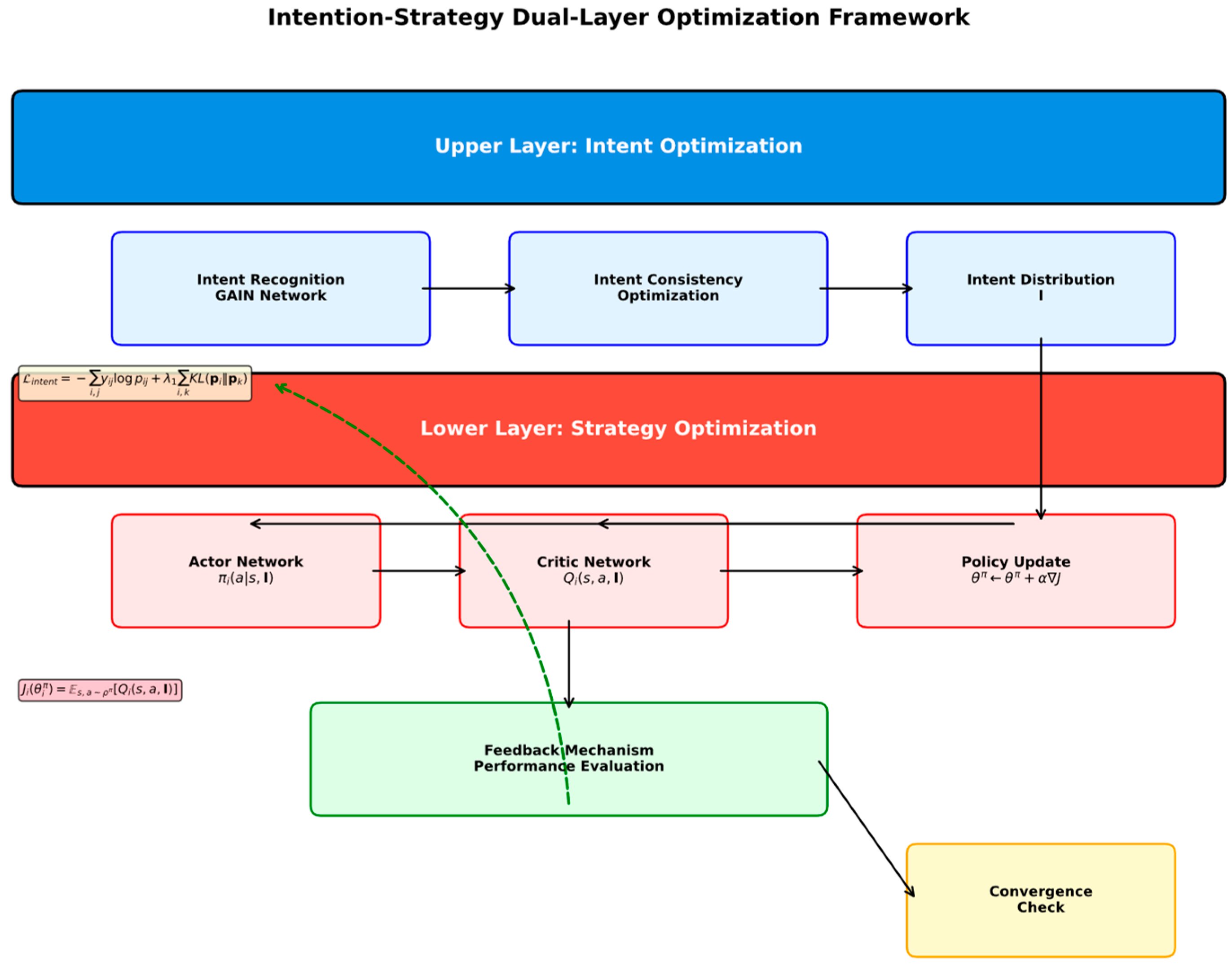

3.3. Intention-Strategy Bi-Level Optimization Framework

3.3.1. Upper-Level Intention Optimization

3.3.2. Lower-Level Strategy Optimization

3.3.3. Bi-Level Collaborative Optimization

3.4. Adaptive Group Game Equilibrium Algorithm

3.4.1. Dynamic Weight Allocation Mechanism

3.4.2. Distributed Nash Equilibrium Solving

3.4.3. Convergence Guarantee

3.5. Complete Algorithm Flow

| Algorithm 1 ISO-MAGCG |
|---|
| Input: Environment $E$, agent set $N$, episodes $T$, learning rates $\alpha_\pi$, $\alpha_Q$, $\alpha_{\text{intent}}$ |
| Output: Optimized cooperative strategies $\pi^*$ |
| Initialize GAIN, Actor, Critic networks and replay buffer $D$ |
| for episode = 1 to $T$ do |
| Reset environment, get initial state $s_0$ |
| for step $t$ = 0 to max_steps do |
| // Upper layer: Intent recognition |
| Construct dynamic graph $G_t$ and compute node features $H_t$ |
| Apply multi-head attention: $H_{t+1} = \text{MultiHeadAttention}(H_t)$ |
| Predict intent distributions: $I_t = \text{Softmax}(W_{\text{intent}} H_{t+1})$ |
| // Lower layer: Strategy optimization |
| for agent $i$ = 1 to $n$ do |
| Select action: $a_t^i = \pi_i(s_t, I_t) + \varepsilon_t$ |
| end for |
| Execute actions, observe rewards and next state |
| Store experience $(s_t, a_t, r_t, s_{t+1}, I_t)$ to buffer $D$ |
| if $\lvert D \rvert >$ batch_size then |
| Sample batch from $D$ |
| // Update networks |
| Update Critic: $\theta_Q \leftarrow \theta_Q - \alpha_Q \nabla L_Q$ |
| Update Actor: $\theta_{\pi_i} \leftarrow \theta_{\pi_i} + \alpha_\pi \nabla J_i$ |
| Update Intent: $\theta_{\text{intent}} \leftarrow \theta_{\text{intent}} - \alpha_{\text{intent}} \nabla L_{\text{intent}}$ |
| // AGEA equilibrium solving |
| Compute contributions: $c_i = \text{ContributionCalculation}()$ |
| Update weights: $w_i = \exp(\beta c_i) / \sum_j \exp(\beta c_j)$ |
| Update strategy estimations and compute best responses |
| Soft update target networks |
| end if |
| end for |
| Check convergence, break if converged |
| end for |
| return $\pi^* = \{\pi_1^*, \pi_2^*, \ldots, \pi_n^*\}$ |
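
Two bookkeeping steps of Algorithm 1 are easy to get wrong: storing the intent alongside each transition, and gating updates on the buffer size. The sketch below illustrates both, plus the soft target update. The class and function names are hypothetical, parameters are represented as plain lists of floats, and the Polyak-averaging form of the soft update is an assumption (the algorithm only says "soft update"). The default coefficient 0.005 is the value listed in the network parameter table.

```python
import random
from collections import deque


class IntentReplayBuffer:
    """Replay buffer D holding (s_t, a_t, r_t, s_{t+1}, I_t) tuples."""

    def __init__(self, capacity):
        # A bounded deque evicts the oldest experience once capacity is reached.
        self._data = deque(maxlen=capacity)

    def __len__(self):
        return len(self._data)

    def store(self, state, actions, rewards, next_state, intents):
        # The predicted intent I_t is stored with the transition so that the
        # intent network can be trained from the same sampled batch.
        self._data.append((state, actions, rewards, next_state, intents))

    def ready(self, batch_size):
        # Mirrors the "if |D| > batch_size" gate in Algorithm 1.
        return len(self._data) > batch_size

    def sample(self, batch_size, rng=random):
        # Uniform sampling without replacement.
        return rng.sample(list(self._data), batch_size)


def soft_update(target, online, tau=0.005):
    """Assumed Polyak form: target <- tau * online + (1 - tau) * target."""
    return [tau * w + (1.0 - tau) * t for t, w in zip(target, online)]
```

With a deep learning framework the same update would be applied tensor by tensor; the list version only shows the arithmetic.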

3.6. Algorithm Complexity Analysis

3.6.1. Time Complexity

3.6.2. Space Complexity

- GAIN network parameters: $O(K \cdot d^2 + n \cdot d)$;

- Actor-Critic network parameters: $O(n \cdot (d_s \cdot d_a + d_a \cdot d_\pi))$;

- Dynamic graph storage: $O(n^2)$;

- Experience replay buffer: $O(B \cdot (d_s + d_a + d_Q))$.
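
To see which of the four terms dominates for a given configuration, the leading-order counts can be evaluated directly. The sketch below is illustrative only. It treats the symbols $d_s$, $d_a$, $d_\pi$, and $d_Q$ as plain integers because their definitions are not given in this excerpt. In the example, K = 4 and d = 128 come from the network parameter table, while n, the remaining dimensions, and the buffer size B are made-up values.

```python
def space_terms(n, K, d, d_s, d_a, d_pi, d_Q, B):
    """Leading-order storage counts (numbers of stored values, not bytes)."""
    return {
        "gain_params": K * d * d + n * d,
        "actor_critic_params": n * (d_s * d_a + d_a * d_pi),
        "dynamic_graph": n * n,
        "replay_buffer": B * (d_s + d_a + d_Q),
    }


# Hypothetical configuration: only K and d are taken from the paper's tables.
terms = space_terms(n=8, K=4, d=128, d_s=32, d_a=5, d_pi=64, d_Q=1, B=100_000)
dominant = max(terms, key=terms.get)
```

Under these assumed values the replay buffer dominates, and the $n^2$ graph term only becomes significant for much larger agent counts.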

4. Experimental Design and Results Analysis

4.1. Experimental Environment Setup

4.1.1. Experimental Platform Configuration

4.1.2. Experimental Scenario Design

4.1.3. Network Architecture Parameters

4.2. Baseline Method Comparison

4.2.1. Baseline Algorithm Selection

4.2.2. Evaluation Metrics

4.3. Comparative Experimental Results

4.3.1. Overall Performance Comparison

- MAT’s attention mechanism operates on the observation-action space without explicit intention modeling, yielding implicit coordination patterns but lacking the interpretability and goal-directedness afforded by intention recognition. In contrast, ISO-MAGCG’s GAIN module explicitly predicts agent intentions to guide strategy optimization.

- ISO-MAGCG’s bi-level optimization framework enables co-evolution of intention recognition and strategy learning, whereas MAT frames coordination as a single-level sequence prediction task; the mutual feedback between ISO-MAGCG’s intention and strategy modules fosters more coherent cooperative behaviors.

4.3.2. Convergence Analysis

4.4. Ablation Study

4.4.1. Component Effectiveness Validation

4.4.2. Hyperparameter Sensitivity Analysis

- (1) Under-differentiation (τ < 0.5): Low temperatures produce near-uniform weights regardless of contribution, failing to leverage agent heterogeneity. Performance degrades 8–12%.

- (2) Optimal range (0.5 ≤ τ ≤ 2.0): Balanced weights appropriately reward high contributors while maintaining collective optimization. Performance remains within 2% of optimal.

- (3) Over-concentration (τ > 5.0): Excessive focus on top contributors neglects supporting agents, causing coordination failures and 10–15% degradation.
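
The three regimes can be reproduced with the softmax weight update of Algorithm 1. The sketch below assumes that the swept parameter multiplies the contribution scores, which is the role β plays in Algorithm 1 and is consistent with small values giving near-uniform weights. The function name and the contribution values are hypothetical.

```python
import math


def contribution_weights(contributions, beta):
    """Softmax weights w_i = exp(beta * c_i) / sum_j exp(beta * c_j), beta >= 0."""
    # Subtracting the maximum keeps every exponent non-positive (for beta >= 0)
    # and leaves the normalized weights unchanged.
    m = max(contributions)
    exps = [math.exp(beta * (c - m)) for c in contributions]
    total = sum(exps)
    return [e / total for e in exps]


scores = [1.0, 0.5, 0.2]                      # made-up contribution scores
flat = contribution_weights(scores, 0.1)      # small scale: nearly uniform weights
balanced = contribution_weights(scores, 1.0)  # moderate differentiation
peaked = contribution_weights(scores, 10.0)   # almost all weight on the top agent
```

With these scores, the small scale leaves all three weights close to one third, while the large scale assigns more than 99% of the weight to the top contributor.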

4.5. Scalability Analysis

4.5.1. Agent Number Scalability

4.6. Experimental Results Discussion

4.6.1. Performance Advantage Analysis

- Explicit vs. Implicit Coordination: Unlike MAT, which learns implicit coordination patterns from rewards via transformer attention, ISO-MAGCG’s explicit intention modeling enables faster convergence (via structured intermediate supervision) and interpretable predictions—critical for safety-critical applications (e.g., autonomous driving, medical robotics) requiring action explainability.

- Dynamic Intentions vs. Static Roles: ROMA’s capability-based static role clustering is limited in dynamic scenarios, where agents need to switch behavioral modes (e.g., Navigate → Avoid → Cooperate) within episodes. ISO-MAGCG’s dynamic intention recognition adapts to such temporal shifts, overcoming ROMA’s rigidity.

- Task-Specific Attention Design: While both MAT and GAIN utilize attention mechanisms, MAT focuses on observation–action pairs for sequence-based action prediction, whereas GAIN’s graph attention targets agent interaction graphs for intention inference—enabling more relevant feature extraction for cooperative tasks.

4.6.2. Limitations and Improvement Directions

- Computational Complexity and Large-Scale Scalability. The $O(n^2)$ complexity poses challenges for systems with more than 100 agents. Three approximation strategies are proposed:

  - Hierarchical Attention: Partition agents into $k$ groups, then compute intra-group attention, $O((n/k)^2)$ per group, and inter-group attention, $O(k^2)$. Overall complexity reduces to $O(n^2/k + k^2)$, which is $O(n^{1.5})$ when $k = \sqrt{n}$. Preliminary tests with k = 4 groups on 16 agents showed only 3.1% degradation.

  - Sparse Attention: Sample edges based on spatial proximity within a threshold $d_{\text{connect}}$. For uniformly distributed agents, the expected number of edges is correspondingly smaller, significantly reducing computation in sparse scenarios.

  - Low-Rank Factorization: Approximate the $n \times n$ attention matrix using a rank-$r$ decomposition, reducing complexity to $O(nr)$.

- Intention Space Design. Current intention spaces are predefined. Future work will explore unsupervised intention discovery using VAE architectures.

- Environmental Adaptability. Adaptability in highly dynamic environments needs further validation through domain randomization and curriculum learning.

- Communication Overhead. Distributed design requires information exchange that may be affected in bandwidth-constrained scenarios. Federated learning approaches warrant investigation.

- Simulation-to-Real Transfer. All experiments were conducted in simulation. Deploying to physical systems (UAV swarms, robotic teams) faces challenges:

  - (a) Observation Noise: Preliminary robustness tests with Gaussian noise (σ = 0.1) showed only 3.2% degradation, suggesting reasonable tolerance. Systematic evaluation under realistic sensor models is needed.

  - (b) Communication Constraints: Limited bandwidth may necessitate fully decentralized variants with local intention estimation.

  - (c) Actuation Dynamics: Continuous actions must respect physical limits not captured in kinematic simulations.

  - (d) Domain Shift: Real-world intention distributions may differ, requiring online adaptation capabilities.

- (a) UAV swarm coordination for search and rescue;

- (b) Warehouse multi-robot picking systems;

- (c) Autonomous vehicle platoons for highway driving.
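
The cost estimate for the hierarchical attention strategy in Section 4.6.2 can be checked by counting attention pairs. This is a rough sketch: the function names are hypothetical, groups are assumed to be of equal size (rounded up), and constant factors are ignored.

```python
import math


def full_attention_pairs(n):
    """Pairwise attention scores for n agents without grouping."""
    return n * n


def hierarchical_attention_pairs(n, k):
    """k groups of ceil(n / k) agents: intra-group pairs plus k * k inter-group pairs."""
    size = math.ceil(n / k)
    return k * size * size + k * k


small_full = full_attention_pairs(16)             # 16 agents, no grouping
small_hier = hierarchical_attention_pairs(16, 4)  # the k = 4, n = 16 setting
large_hier = hierarchical_attention_pairs(10_000, 100)  # k = sqrt(n)
```

For 16 agents in 4 groups the count drops from 256 to 80. For n = 10,000 and k = 100 it is 1,010,000, on the order of $n^{1.5}$ = 1,000,000, so choosing $k = \sqrt{n}$ gives a sub-quadratic but still super-linear cost.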

5. Conclusions and Future Work

5.1. Research Summary

- Theoretical contributions: Established an intention-strategy bi-level optimization theoretical framework, integrating intention recognition and strategy optimization into a unified framework for collaborative evolution; proposed group intention recognition theory based on graph attention, capturing complex intention dependencies through dynamic graph modeling and multi-head attention mechanisms; constructed an intention-aware multi-agent group game model, extending traditional game theory applications by using intention states as prior information for game strategies.

- Methodological contributions: Designed the Graph Attention Intention Network (GAIN) achieving 94.3% intention recognition accuracy; developed the Adaptive Group Game Equilibrium Algorithm (AGEA) combining global search and local optimization for efficient joint optimization; proposed a distributed training architecture supporting parallel training of large-scale multi-agent systems.

- Experimental validation: Constructed a multi-scenario evaluation system covering navigation, resource collection, and defense cooperation. Compared with seven mainstream baseline algorithms, ISO-MAGCG significantly outperforms existing methods in task success rate, cumulative reward, cooperation efficiency, and other metrics, with average task success rate improvement of 8.4%, cooperation efficiency improvement of approximately 12%, and convergence speed improvement of 30%, demonstrating excellent performance advantages and scalability.

5.2. Future Research Directions

- Theoretical aspects: Research adaptive intention space learning methods to reduce dependence on expert knowledge, automatically discovering intention patterns based on unsupervised learning; extend convergence theory under non-stationary environments, considering the impact of environmental dynamics, observation noise, and other practical factors; develop multi-level intention modeling theory, establishing relationships between different abstraction levels such as short-term intentions and long-term goals; investigate continuous and latent intention space learning using VAE architectures while maintaining interpretability.

- Methodological aspects: Develop efficient approximation algorithms based on sampling, compression, and other techniques to reduce $O(n^2)$ computational complexity; research federated learning architectures to reduce communication overhead while protecting agent privacy; explore multi-modal intention recognition methods, integrating multiple information sources such as behavioral trajectories, linguistic communication, and visual information to improve recognition accuracy.

- Application aspects: Validate method effectiveness in real scenarios such as UAV swarms, robot cooperation, and intelligent transportation; research human–robot collaboration systems, considering human behavioral uncertainty and subjectivity; address challenges of system scale, heterogeneity, and dynamics for large-scale distributed systems like smart cities and IoT.

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest


| Scenario | Intention Space | Size | Labeling Method |
|---|---|---|---|
| Multi-Agent Navigation | {Navigate, Avoid, Wait, Replan} | 4 | Rule-based: velocity direction toward target (Navigate), proximity deviation (Avoid), stationary > 3 steps (Wait), direction change > 90° (Replan) |
| Resource Collection | {Collect, Transport, Cooperate, Explore} | 4 | Rule-based + proximity: agents within d_coop = 3.0 moving toward same resource (Cooperate) |
| Defense Cooperation | {Intercept, Patrol, Support, Retreat, Hold} | 5 | DTW trajectory clustering + manual semantic labeling |

| Scenario | Total Labels | Distribution | Auto-Check Pass | Manual Accuracy | Agreement |
|---|---|---|---|---|---|
| Navigation | 48,000 | Navigate 42%, Avoid 28%, Wait 18%, Replan 12% | 97.3% | 96.8% | 97.2% |
| Resource Collection | 52,000 | Collect 35%, Transport 25%, Cooperate 24%, Explore 16% | 96.1% | 95.4% | 96.1% |
| Defense Cooperation | 45,000 | Intercept 22%, Patrol 28%, Support 20%, Retreat 15%, Hold 15% | 94.8% | 95.1% | 95.7% |

| Configuration Type | Specific Configuration |
|---|---|
| Hardware Configuration | |
| CPU | Intel Core i9-12900K |
| GPU | NVIDIA RTX 4090 (24 GB VRAM) |
| Memory | 64 GB DDR4 |
| Software Environment | |
| Operating System | Ubuntu 20.04 LTS |
| Programming Language | Python 3.9 |
| Deep Learning Framework | PyTorch 1.12.0 |
| GPU Acceleration | CUDA 11.6 |
| Simulation Environment | OpenAI Gym + PettingZoo |

| Scenario | Environment Scale | Agent Number | State Space | Action Space | Reward Design |
|---|---|---|---|---|---|
| Multi-Agent Navigation | 20 × 20 grid | n ∈ {4, 6, 8, 10} | $\mathbb{R}^{4n}$ | {0, 1, 2, 3, 4} | Reach target +10, collision −5, time −0.1 |
| Resource Collection | 15 × 15 grid | n ∈ {6, 8, 10, 12} | $\mathbb{R}^{6n+25}$ | {0, 1, 2, 3, 4, 5} | Collection +5, cooperation +2, efficiency reward |
| Defense Cooperation | 25 × 25 continuous | n ∈ {8, 10, 12, 15} | $\mathbb{R}^{8n+4m}$ | $[-1, 1]^2$ | Defense +8, cooperation +3, area control |
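
As an illustration of the reward design column, the navigation reward can be written as a per-step function. This is a sketch under the assumption that the three terms are additive and that the time penalty applies on every step. The function and argument names are hypothetical; only the three magnitudes come from the table.

```python
def navigation_reward(reached_target, collided,
                      r_goal=10.0, r_collision=-5.0, r_time=-0.1):
    """Per-step reward for one agent in the navigation scenario."""
    reward = r_time              # time penalty, paid on every step
    if reached_target:
        reward += r_goal         # bonus for reaching the target
    if collided:
        reward += r_collision    # penalty for a collision on this step
    return reward
```

Keeping the magnitudes as keyword arguments makes it easy to test other reward scales without touching the environment loop.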

| Network Module | Parameter | Value |
|---|---|---|
| GAIN Network | Hidden layer dimension | 128 |
| | Number of attention heads | 4 |
| | Number of network layers | 3 |
| Actor Network | Hidden layer dimensions | [256, 128, 64] |
| | Activation function | ReLU |
| | Output layer activation | Tanh |
| Critic Network | Hidden layer dimensions | [256, 128, 64] |
| | Activation function | ReLU |
| Training Parameters | Actor learning rate | $3 \times 10^{-4}$ |
| | Critic learning rate | $1 \times 10^{-3}$ |
| | Intent learning rate | $5 \times 10^{-4}$ |
| | Discount factor | 0.99 |
| | Soft update coefficient | 0.005 |
| | Batch size | 256 |
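
For reproduction, the values in this table can be collected in a single configuration object. The sketch below is one possible layout. The class and field names are hypothetical, and the divisibility check reflects a common requirement of multi-head attention implementations that split the hidden dimension across heads, not something stated in the paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ISOMAGCGConfig:
    # GAIN network
    gain_hidden_dim: int = 128
    gain_heads: int = 4
    gain_layers: int = 3
    # Actor and Critic networks (ReLU hidden activations, Tanh actor output)
    actor_hidden: tuple = (256, 128, 64)
    critic_hidden: tuple = (256, 128, 64)
    # Training parameters
    actor_lr: float = 3e-4
    critic_lr: float = 1e-3
    intent_lr: float = 5e-4
    gamma: float = 0.99
    soft_update_tau: float = 0.005
    batch_size: int = 256

    def __post_init__(self):
        # Assumed constraint: each attention head gets an equal slice of the hidden dim.
        if self.gain_hidden_dim % self.gain_heads != 0:
            raise ValueError("gain_hidden_dim must be divisible by gain_heads")


cfg = ISOMAGCGConfig()
```

Freezing the dataclass prevents accidental mutation of hyperparameters during a run; variations for a sweep can be created with `dataclasses.replace`.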

| Algorithm | Type | Core Features | Main Parameters | Year |
|---|---|---|---|---|
| QMIX | Value decomposition | Monotonicity assumption, centralized training distributed execution | Mixing network [64, 32], learning rate $5 \times 10^{-4}$ | 2018 |
| MADDPG | Policy gradient | Centralized Critic, continuous actions | Actor/Critic [400, 300], α = $1 \times 10^{-2}$ | 2017 |
| COMA | Actor-Critic | Counterfactual baseline, credit assignment | GRU hidden 64, entropy coefficient $1 \times 10^{-3}$ | 2018 |
| QTRAN | Value decomposition | Relaxed monotonicity constraint | Transform network [64, 64], λ = $5 \times 10^{-3}$ | 2019 |
| MAPPO | Policy optimization | Parameter sharing, centralized value | Network [64, 64], ε = 0.2, λ = 0.95 | 2021 |
| MAT | Transformer-based | Multi-agent transformer, sequential modeling | d_model = 128, heads = 4, layers = 2 | 2022 |
| ROMA | Role-based | Emergent role discovery, role encoder | Role dim = 64, n_roles = 4, α = $5 \times 10^{-4}$ | 2020 |

| Algorithm | Navigation TSR (%) | Navigation ACR | Navigation CE (%) | Resource Collection TSR (%) | Resource Collection ACR | Resource Collection CE (%) | Defense Cooperation TSR (%) | Defense Cooperation ACR | Defense Cooperation CE (%) |
|---|---|---|---|---|---|---|---|---|---|
| QMIX | 72.3 ± 2.1 | 145.2 ± 8.3 | 23.4 ± 3.2 | 68.5 ± 2.8 | 89.7 ± 5.1 | 18.7 ± 2.9 | 65.8 ± 3.1 | 112.4 ± 6.7 | 21.2 ± 3.5 |
| MADDPG | 75.8 ± 1.9 | 152.6 ± 7.1 | 28.1 ± 2.8 | 71.2 ± 2.3 | 95.3 ± 4.8 | 22.4 ± 2.1 | 69.4 ± 2.7 | 118.9 ± 5.9 | 25.3 ± 2.8 |
| COMA | 78.1 ± 2.3 | 158.9 ± 6.8 | 31.7 ± 3.1 | 73.6 ± 2.6 | 98.1 ± 4.2 | 25.8 ± 2.7 | 71.7 ± 2.9 | 125.3 ± 6.2 | 28.6 ± 3.2 |
| QTRAN | 76.4 ± 2.0 | 149.8 ± 7.5 | 26.9 ± 2.9 | 70.9 ± 2.4 | 92.6 ± 4.9 | 20.3 ± 2.5 | 68.2 ± 3.0 | 115.7 ± 6.4 | 23.1 ± 2.9 |
| MAPPO | 81.2 ± 1.7 | 165.4 ± 5.9 | 35.2 ± 2.4 | 76.8 ± 2.1 | 103.2 ± 3.8 | 29.6 ± 2.3 | 74.5 ± 2.5 | 131.8 ± 5.1 | 32.4 ± 2.6 |
| MAT | 83.5 ± 1.6 | 172.8 ± 5.4 | 38.6 ± 2.2 | 79.1 ± 1.9 | 108.5 ± 3.5 | 33.2 ± 2.1 | 76.8 ± 2.3 | 138.4 ± 4.8 | 35.7 ± 2.4 |
| ROMA | 82.1 ± 1.8 | 168.3 ± 5.7 | 36.4 ± 2.5 | 77.5 ± 2.0 | 105.1 ± 3.9 | 31.1 ± 2.4 | 75.2 ± 2.6 | 134.2 ± 5.3 | 33.8 ± 2.7 |
| ISO-MAGCG | 89.6 ± 1.4 * | 187.3 ± 4.2 * | 47.8 ± 1.9 * | 84.2 ± 1.8 * | 125.6 ± 3.1 * | 42.3 ± 2.0 * | 82.1 ± 2.0 * | 156.2 ± 4.6 * | 45.1 ± 2.2 * |

| Algorithm | Convergence Episodes | Final Performance (%) | Training Stability | Computation Time (min) | p-Value * |
|---|---|---|---|---|---|
| QMIX | 1850 ± 120 | 68.9 ± 2.8 | 0.78 ± 0.05 | 8.7 ± 0.6 | <0.001 |
| MADDPG | 1720 ± 95 | 72.1 ± 2.3 | 0.82 ± 0.04 | 12.4 ± 0.8 | <0.001 |
| COMA | 1680 ± 110 | 74.5 ± 2.6 | 0.85 ± 0.03 | 15.6 ± 1.1 | <0.001 |
| QTRAN | 1780 ± 105 | 71.8 ± 2.4 | 0.80 ± 0.04 | 9.8 ± 0.7 | <0.001 |
| MAPPO | 1520 ± 85 | 77.5 ± 2.1 | 0.89 ± 0.02 | 10.3 ± 0.5 | <0.001 |
| MAT | 1380 ± 75 | 80.2 ± 1.9 | 0.91 ± 0.02 | 14.8 ± 0.6 | <0.001 |
| ROMA | 1450 ± 80 | 78.6 ± 2.0 | 0.88 ± 0.03 | 11.2 ± 0.5 | <0.001 |
| ISO-MAGCG | 1180 ± 65 | 85.3 ± 1.8 | 0.94 ± 0.02 | 13.2 ± 0.4 | - |

| Model | Navigation | Resource Collection | Defense Cooperation | Average | Decrease |
|---|---|---|---|---|---|
| Complete | 89.6 ± 1.4 | 84.2 ± 1.8 | 82.1 ± 2.0 | 85.3% | - |
| w/o GAIN | 78.4 ± 2.3 | 72.6 ± 2.7 | 69.8 ± 3.2 | 73.6% | −11.7% |
| w/o Attention (GAIN w/MLP) | 82.1 ± 2.0 | 76.8 ± 2.3 | 74.2 ± 2.7 | 77.7% | −7.6% |
| w/o ISO | 81.2 ± 2.1 | 76.1 ± 2.4 | 73.5 ± 2.8 | 76.9% | −8.4% |
| w/o AGEA | 83.7 ± 1.9 | 78.9 ± 2.2 | 75.2 ± 2.6 | 79.3% | −6.0% |
| w/o Multi-Head (K = 1) | 85.1 ± 1.8 | 80.3 ± 2.0 | 77.6 ± 2.4 | 81.0% | −4.3% |
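
The Average and Decrease columns follow from the three per-scenario means: the average is their unweighted mean, and the decrease is the difference from the complete model in percentage points. A short check, using only the table's mean values (the function name is hypothetical):

```python
# Per-scenario mean success rates (Navigation, Resource Collection, Defense Cooperation).
ABLATION = {
    "Complete": (89.6, 84.2, 82.1),
    "w/o GAIN": (78.4, 72.6, 69.8),
    "w/o Attention (GAIN w/MLP)": (82.1, 76.8, 74.2),
    "w/o ISO": (81.2, 76.1, 73.5),
    "w/o AGEA": (83.7, 78.9, 75.2),
    "w/o Multi-Head (K = 1)": (85.1, 80.3, 77.6),
}


def summarize(results, reference="Complete"):
    """Return {variant: (average, difference from the reference average)}."""
    averages = {name: sum(vals) / len(vals) for name, vals in results.items()}
    base = averages[reference]
    return {name: (round(avg, 1), round(avg - base, 1))
            for name, avg in averages.items()}


summary = summarize(ABLATION)
```

The recomputed values match the table, which confirms that the Decrease column is expressed in percentage points, not as a relative change.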

| Hyperparameter | Test Range | Optimal Value | Performance Range | Sensitivity |
|---|---|---|---|---|
| Learning rate | [$1 \times 10^{-5}$, $1 \times 10^{-2}$] | $3 \times 10^{-4}$ | [76.2%, 89.6%] | Medium |
| Number of attention heads | [1, 8] | 4 | [81.3%, 89.6%] | Low |
| Weight parameter | [0.01, 1.0] | 0.1 | [82.7%, 89.6%] | Low |
| Batch size | [64, 512] | 256 | [85.1%, 89.6%] | Very low |
| Temperature | [0.1, 10] | 1.0 | [77.2%, 89.6%] | Medium-High |
| Discount factor | [0.9, 0.999] | 0.99 | [84.8%, 89.6%] | Low |

| Agent Number | ISO-MAGCG | MAPPO | QMIX | Performance Retention Rate | Computation Time (min) |
|---|---|---|---|---|---|
| 4 | 92.1 ± 1.2 | 85.3 ± 1.8 | 78.6 ± 2.1 | 100% | 2.1 |
| 8 | 89.6 ± 1.4 | 81.2 ± 1.7 | 72.3 ± 2.3 | 97.3% | 8.5 |
| 12 | 85.2 ± 1.8 | 76.1 ± 2.1 | 65.8 ± 2.8 | 92.5% | 19.1 |
| 16 | 82.6 ± 2.0 | 72.8 ± 2.4 | 61.4 ± 3.1 | 89.7% | 35.2 |
| 20 | 79.4 ± 2.3 | 68.5 ± 2.7 | 56.9 ± 3.4 | 86.2% | 58.7 |
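
The Performance Retention Rate column is the ISO-MAGCG success rate at each agent count divided by its value at 4 agents. A short check against the table (the function name is hypothetical):

```python
# ISO-MAGCG mean success rate (%) by number of agents, from the table.
ISO_MAGCG_TSR = {4: 92.1, 8: 89.6, 12: 85.2, 16: 82.6, 20: 79.4}


def retention_rates(tsr_by_n, base_n=4):
    """Success rate at each agent count as a percentage of the base_n value."""
    base = tsr_by_n[base_n]
    return {n: round(100.0 * value / base, 1) for n, value in tsr_by_n.items()}


rates = retention_rates(ISO_MAGCG_TSR)
```

The recomputed rates agree with the table to one decimal place.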


© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Tang, M.; Chen, R.; Zhu, J. A Multi-Agent Cooperative Group Game Model Based on Intention-Strategy Optimization. Algorithms 2026, 19, 22. https://doi.org/10.3390/a19010022
