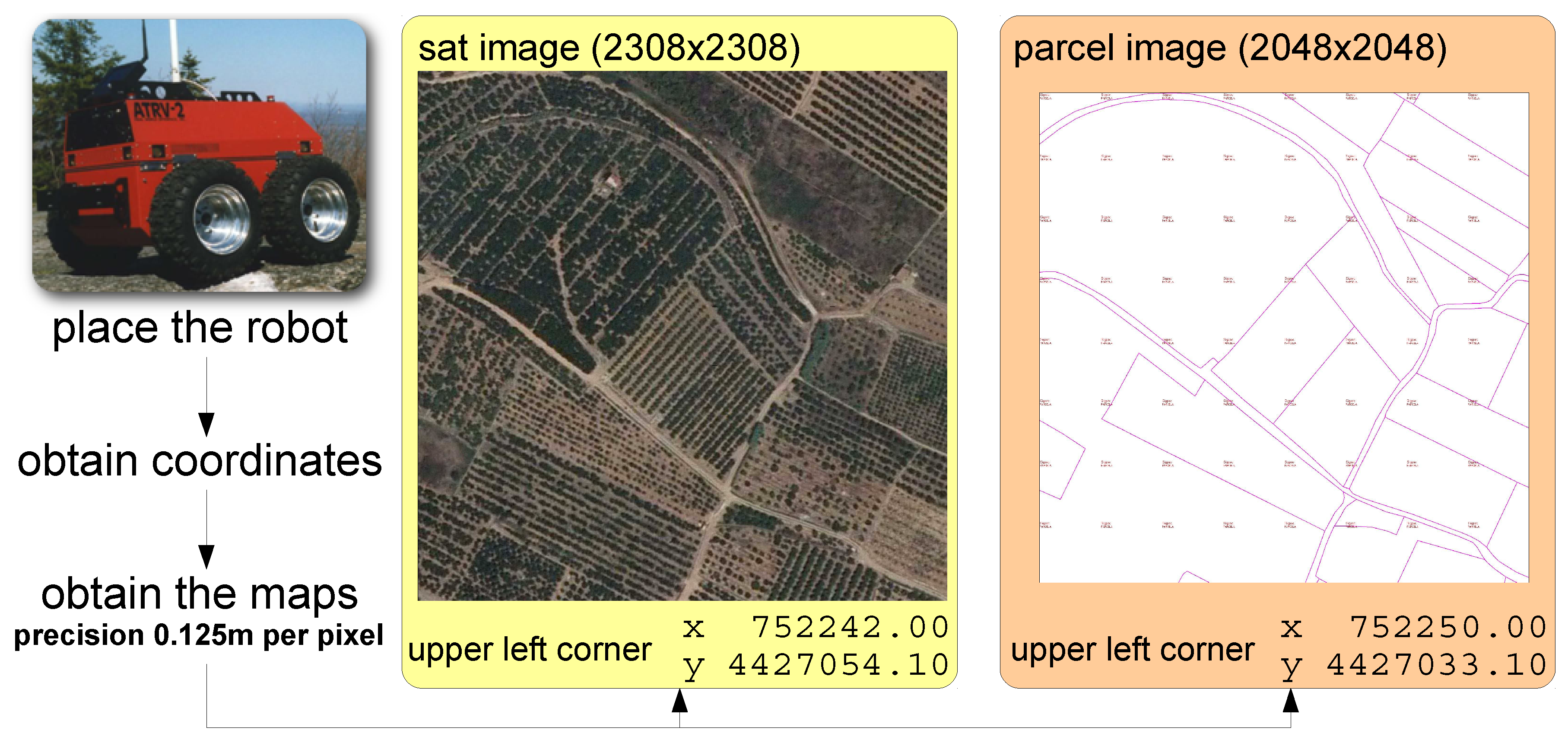

This section introduces the steps necessary to obtain the path planning for an autonomous robot (or team of robots) using the current location of the robots, satellite imagery, and cadastral information. For this purpose, we identified two main stages.

3.1. First Stage: Development of the Autonomous Mapping System

The proposed autonomous mapping module is composed of two main sub-modules:

Acquisition sub-module: This sub-module is in charge of acquiring the images from online databases to generate the final map of the parcel. Concretely, the system will download the images from a public administrative database,

SIGPAC, using the information provided by the

GPS sensor included in the robots. In other related experiments, we installed a submeter precision GPS in the autonomous robots (GPS Pathfinder ProXT Receiver) [

22]. The location accuracy obtained in those cases encourages us to integrate that GPS in the current system. However, the GPS integration is out of this paper’s scope, since the main objective of this paper is to introduce the algorithms to combine satellite and cadastral images and to show a proof-of-concept. This sub-module provides two images to the “map creation module”: the satellite image of the parcel and the image with parcel borders.

Map creation sub-module: This sub-module will be in charge of extracting all the information from the images: paths, orange trees, borders, and obstacles. With the extracted information, the map (path planning) which the robots should follow will be generated. The initial strategy to cover efficiently all the parcels with the robots will be done in this sub-module. The maps and satellite imagery resolution are set to 0.125 m per pixel. This resolution was easily reached with both services (satellite imagery and cadastral imagery) and was low enough to represent the robot dimensions (around 50 by 80 cm), and the computation burden was not so demanding. With the new Galileo services, higher resolution images can be obtained (with lower cm per pixel rates). Although more precise paths could be obtained, the computational cost of fast marching to generate the paths would also considerably increase.

3.1.1. Acquisition Sub-Module

This sub-module is in charge of obtaining the two images which are needed to create the map of the parcel from the current position of the autonomous system. Its description is detailed in Algorithm 1 where represents the current position of the robotic system, is the resolution of the acquired imagery, and stands for the radius of the area.

| Algorithm 1 ObtainImages{,,} |

= ObstainSatImage{,,} = ObstainBorders{,,} Return:,

|

The output generated in this sub-module includes the full remote image of the area, , and the borders of the parcels, , which will be processed in the “Map Creation module”.

3.1.2. Map Creation Sub-Module

This sub-module is in charge of creating the map of the parcel from the two images obtained in the previous sub-module. The map contains information about the parcel: trees rows, paths, and other elements where the robot cannot navigate through (i.e., obstacles). Its description can be found in Algorithm 2.

| Algorithm 2 GenerateMap{,,} |

= GenerateParcelMask{,} [,] = CropImagery{,} [,,] = ExtractMasks{,,}

|

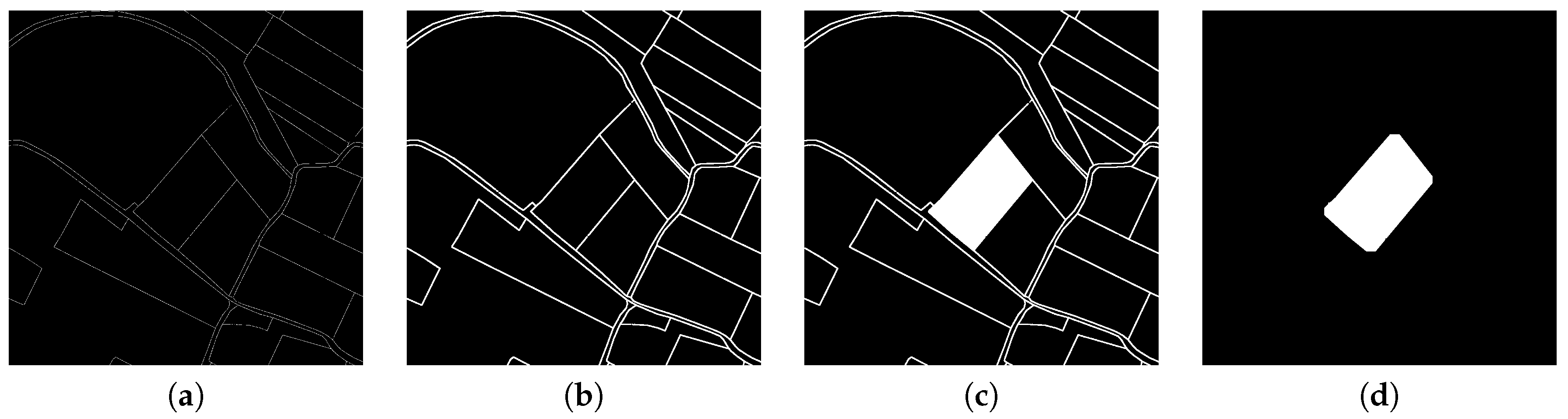

The first step consists of determining the full parcel mask from the borders previously acquired. With the robot coordinates and the image containing the borders, this procedure generates a binary mask that represents the current parcel where the robot is located. This procedure is described in Algorithm 3.

| Algorithm 3 GenerateParcelMask{,} |

Determine position in : and Convert to grayscale: Binarize : Dilate : Flood-fill in : Erode : Dilate : Return:

|

Once the parcel mask is determined from the parcel image, it is time to remove those areas outside the parcel in the satellite image and the parcel mask itself. To avoid underrepresentation of the parcels, a small offset was added to the mask. This step is performed to reduce imagery size for further treatment with advanced techniques. This procedure is detailed in Algorithm 4.

| Algorithm 4 CropImagery{,} |

Determine real position of left-upper corner of Determine real position of left-upper corner of Determine offset in pixels with respect Determine parcel’s bounding box: Determine first and last row of that is in parcel Determine first and last column of that is in parcel Crop directly: Crop according to offset values: Return: and

|

Since the location of the pixels in the real world is known using the WGS84 with UTM zone 30N system (EPSG:32630), the offset between the satellite image and the parcel mask can be directly computed. Then, it is only necessary to determine the bounding box surrounding the parcel in the parcel mask to crop both images.

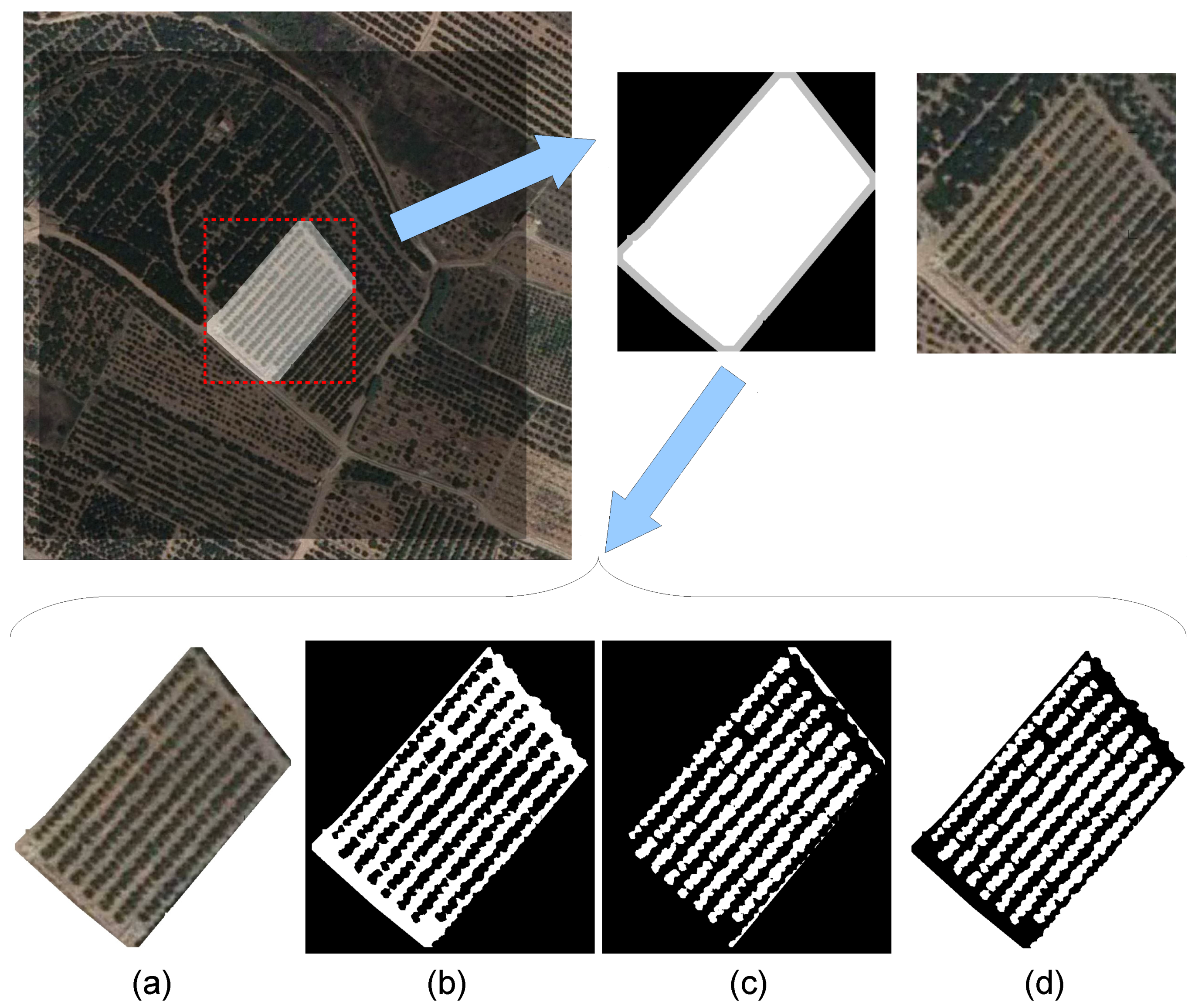

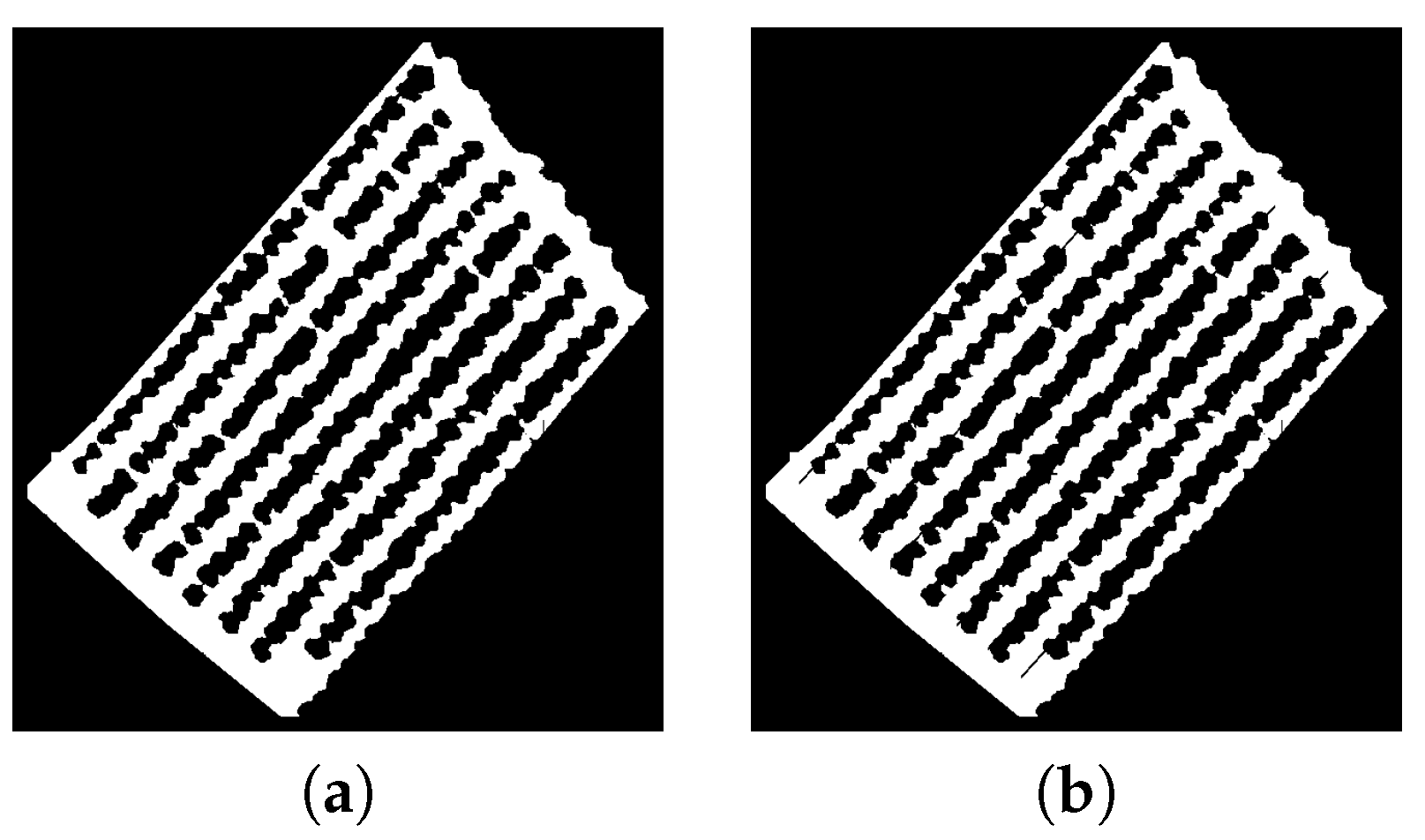

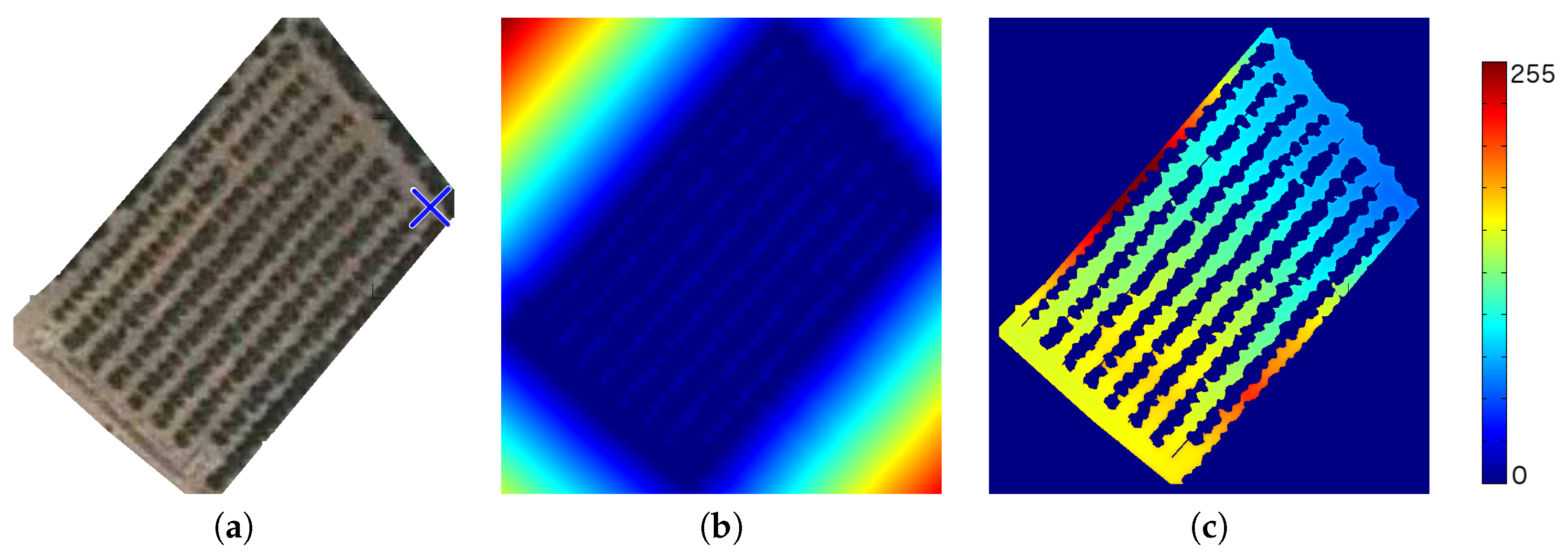

At this point, the reduced satellite map needs to be processed to distinguish between the path and obstacles. We analyzed different satellite images from groves located in the province of Castellón in Spain, and the images were clear with little presence of shadows. Therefore, we set the binarization threshold to 112 in our work. Although we applied a manual threshold, a dynamic thresholding binarization could be applied to have a more robust method to distinguish the different elements in the satellite image, in case shadows were present. Algorithm 5 has been used to process the reduced satellite map andto obtain the following three masks or binary images:

—a binary mask that contains the path where the robot can navigate;

—a binary mask that contains the area assigned to orange trees;

—a binary mask that contains those pixels which are considered obstacles: this mask corresponds to the inverse of the path.

| Algorithm 5 GenerateMasks{ and } |

Convert to gray scale: Extract and values of the parcel pixels in Normalize values: Generate B&W Masks: Remove path pixels not connected to in Generate Mask: Return: and

|

3.2. Second Stage: Path Planning

This module is used to determine the final path that the robotic system or team of robots should follow. The principal work for path planning in autonomous robotic systems is to search for a collision-free path and to cover all the groves. This section is devoted to describing all the steps necessary to generate the final path (see Algorithm 6) using as input the three masks provided by Algorithm 5.

To perform the main navigation task, the navigation module has been divided into five different sub-modules:

Determining the tree lines

Determining the reference points

Determining the intermediate points where the robot should navigate

Path planning through the intermediate points

Real navigation through the desired path

First of all, the tree lines are determined. From these lines and the Path binary map, the reference points of the grove are determined. Later, the intermediate points are selected from the reference points. With the intermediate points, a fast path free of collisions is generated to cover all the groves. Finally, the robotic systems perform real navigation using the generated path.

| Algorithm 6 PathPlanning{,,} |

= DetectLines{,} [,] = ReferencePoints{,} = CalculatePath{,,, Return:

|

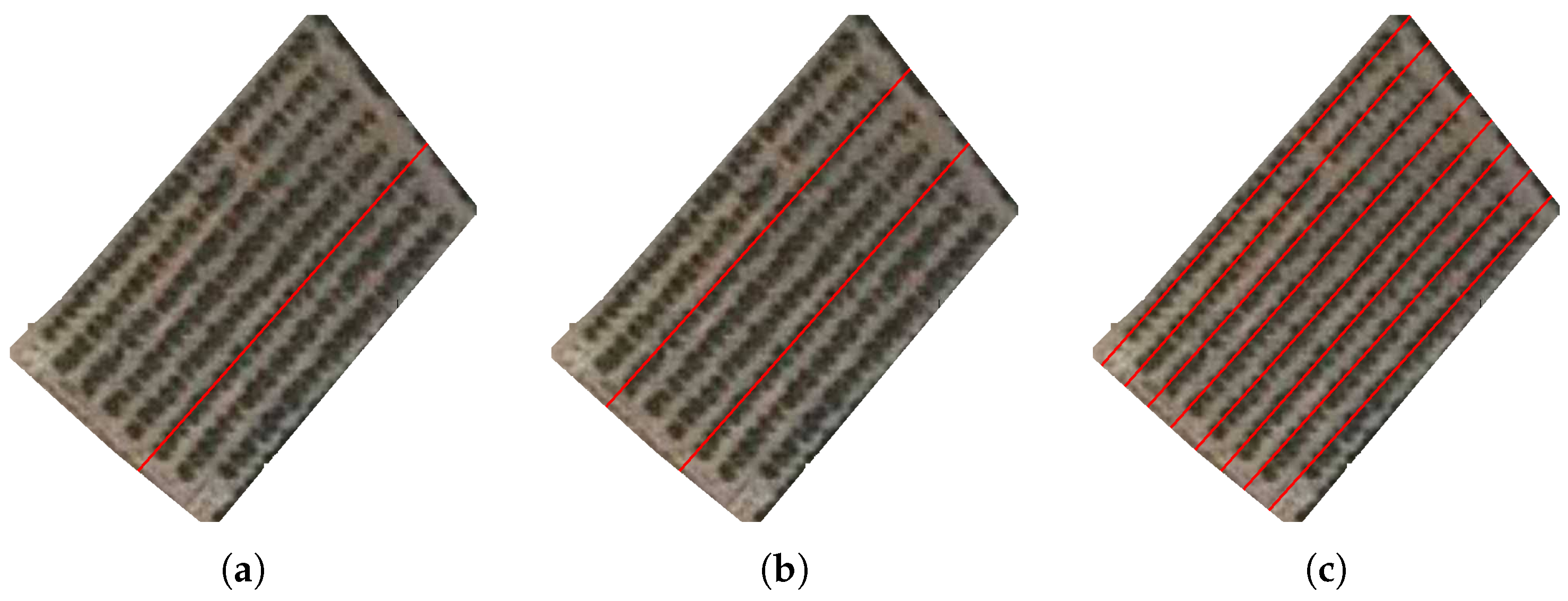

3.2.1. Detecting Tree Lines

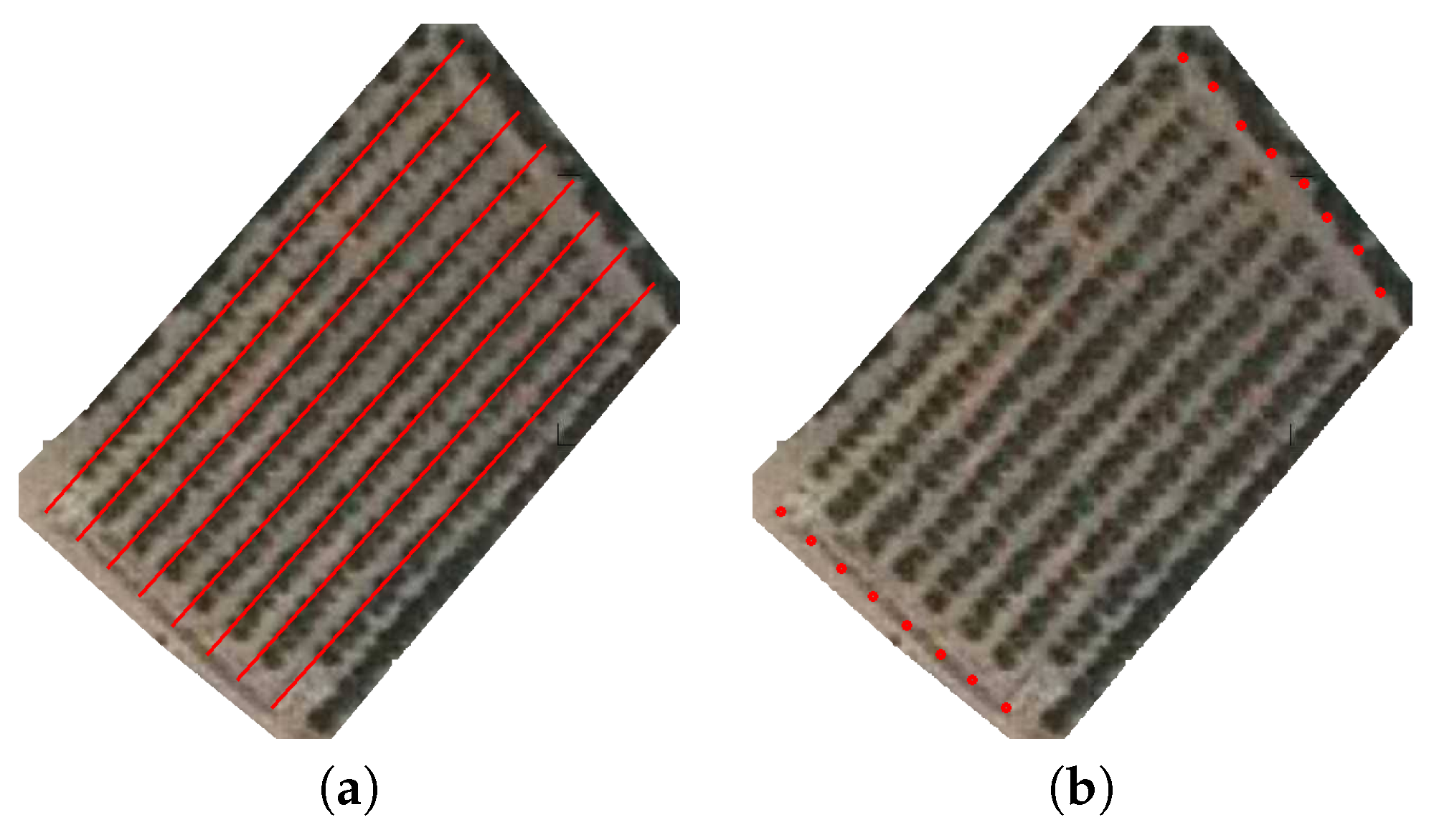

The organization of citric groves is well defined as it can be seen in the artificial composition depicted in

Figure 2; the trees are organized in rows separated by a relatively wide path to optimize treatment and fruit collection. The first step of the navigation module consists of detecting the tree lines.

The

Obstacles binary map (

in Algorithm 5) contains the pixels located inside the parcel that correspond to trees. For this reason, the rows containing trees are extracted by using the Hough Transform method (see Reference [

24]). Moreover, some constraints to this technique have been added to avoid selecting the same tree line several times. In this procedure, the

Hesse standard form (Equation (

1)) is used to represent lines with two parameters:

(angle) and

(displacement). Each element of the resulting matrix of the Hough Transform contains some votes which depend on the number of pixels which corresponds to the trees that are on the line.

According to the structure of the parcels, the main tree rows can be represented with lines. We applied Algorithm 7 to detect the main tree rows (rows of citric trees). First, the most important lines, the ones with the highest number of votes, are extracted. The first extracted line (the line with the highest number of votes) is considered the “main” line, and its parameters (, and ) are stored. Then, those lines that have at least and of which the angle is are also extracted. This first threshold () has been used to filter the small lines that do not represent full orange rows, whereas the second threshold () has been used to select those orange rows parallel to the main line. We have determined the two threshold values according to the characteristics of the analyzed groves: the target groves have parallel orange rows with similar length.

| Algorithm 7 DetectLines{,} |

Apply to : Extract line with highest value from H: ,) Set Treshold value: Limit H to angles close the main angle (): lines = true Store main line parameters in : while lines do Extract candidate line with highest value from : ,) Remove value in the matrix: if () then Calculate that distance to previous extracted lines is lower than threshold: if distance between candidate and stored lines is never lower than threshold then Store line parameters in : end if else lines = false end if end while Sort stored lines: Return:

|

Furthermore, to avoid detecting several times the same tree row, each extracted line cannot be close to any previous extracted line. We used Equation (

2) to denote the distance between a candidate line (

c) and a previous extracted line (

i) since the angles are similar (

). If this distance is higher than a threshold value (

), the candidate line is extracted. We use Equation (

3) to calculate the threshold value according to the difference of angles of the two lines.

Hough Transform has been commonly used in the literature related to detection tasks in agricultural environments. Recent research shows that it is useful to process local imagery [

25,

26] and remote imagery [

27,

28].

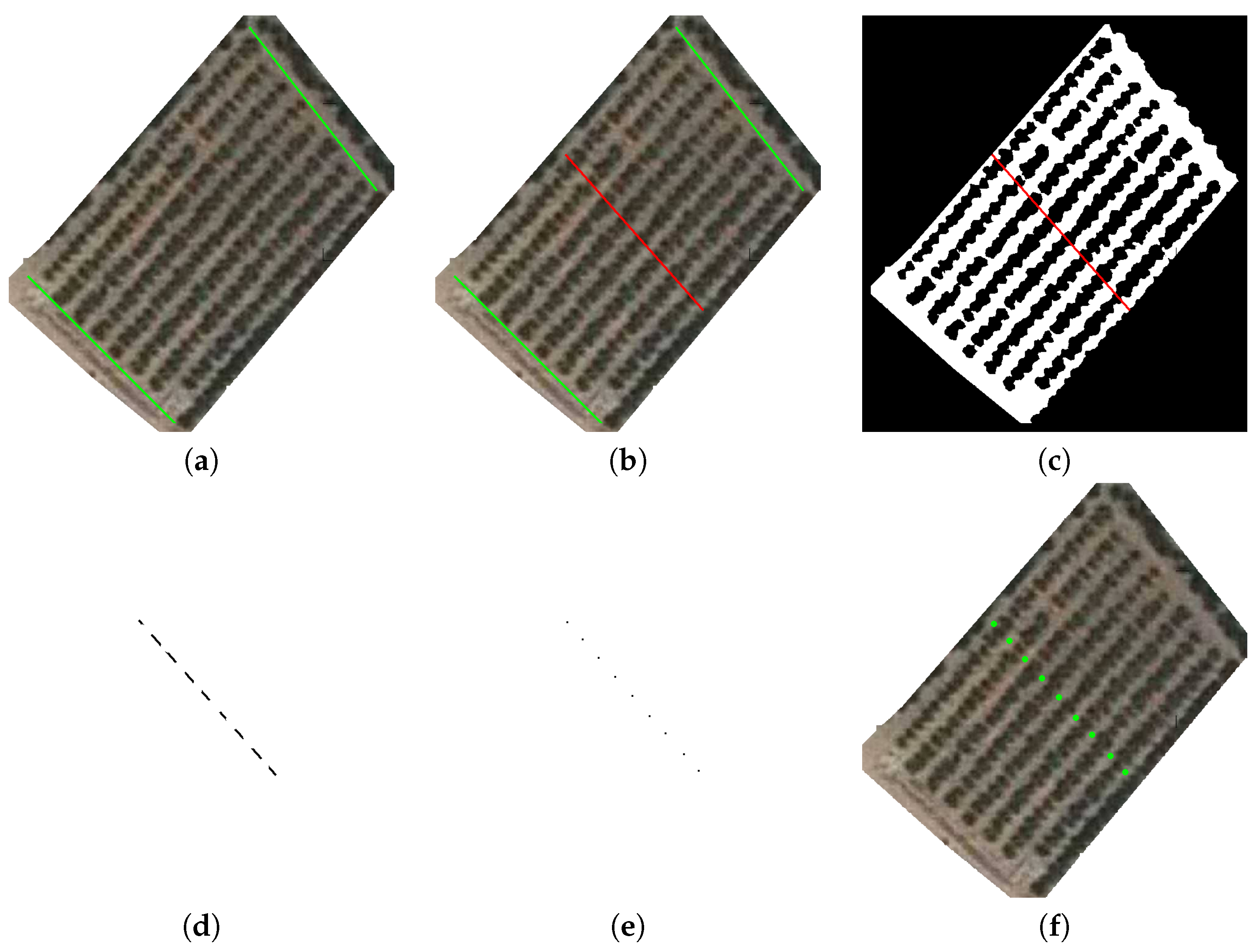

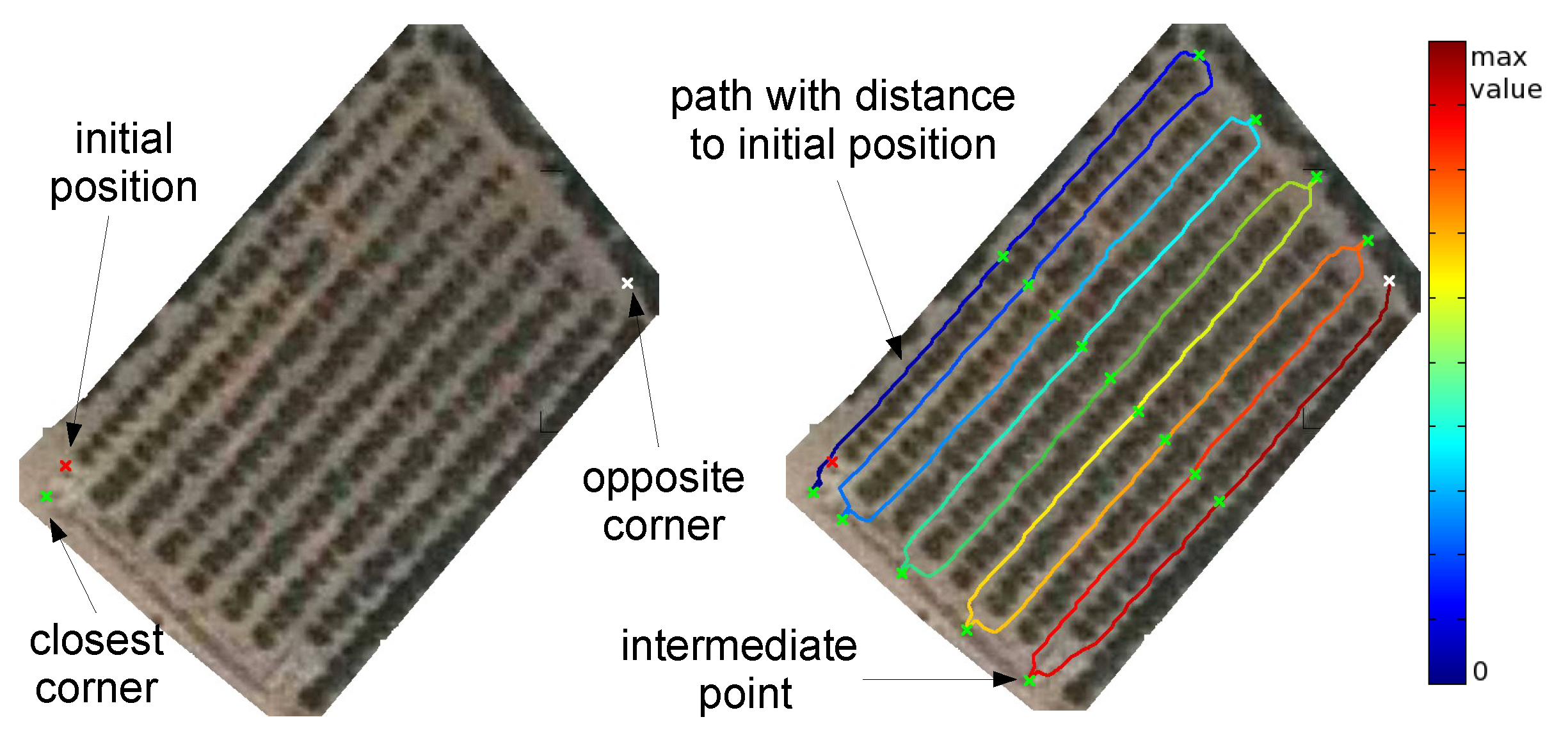

3.2.2. Determining the Reference Points

Reference or control points contain well-known points and parts of the grove, e.g., the vertex of the orange tree lines or the middle of paths where farmers walk. A set of reference points will be used as intermediate points in the entire path that a robotic system should follow.

Firstly, the vertexes of the orange tree rows are extracted. For each extracted line, the vertexes correspond to the first and last points that belongs to the path according to the Path binary map .

Then, Hough transform is applied again to detect the two border paths perpendicular to the orange rows. With these two lines, their bisection is used to obtain additional reference points. These last points correspond to the centre of each path row (the path between two orange rows). Concretely, the intersection between the bisection line and the Path binary map is used to calculate the points. After obtaining the intersection, the skeleton algorithm is applied to obtain the precise coordinates of the new reference points.

However, under certain conditions, two central points have been detected for the same row, e.g., if obstacles are present at the centre of the path, the skeleton procedure can provide two (or more) middle points between two orange tree rows. In those cases, only the first middle point is selected for each path row.

Finally, all the reference points can be considered basic features of the orange grove since a subset of them will be used as intermediate points of the collision-free path that a robotic system should follow to efficiently navigate through the orange grove. For that purpose, we applied Algorithm 8 to extract all the possible middle points in a parcel.

| Algorithm 8 ReferencePoints{ } |

= Extract reference points from = Extract reference points that belong to middle of path rows Return: and

|

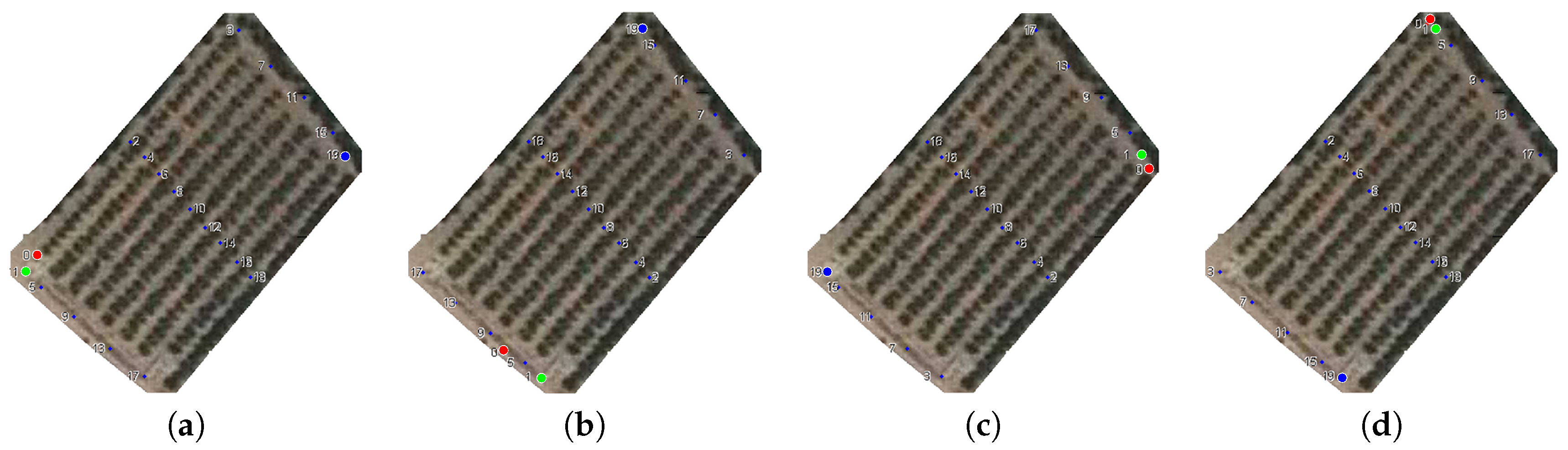

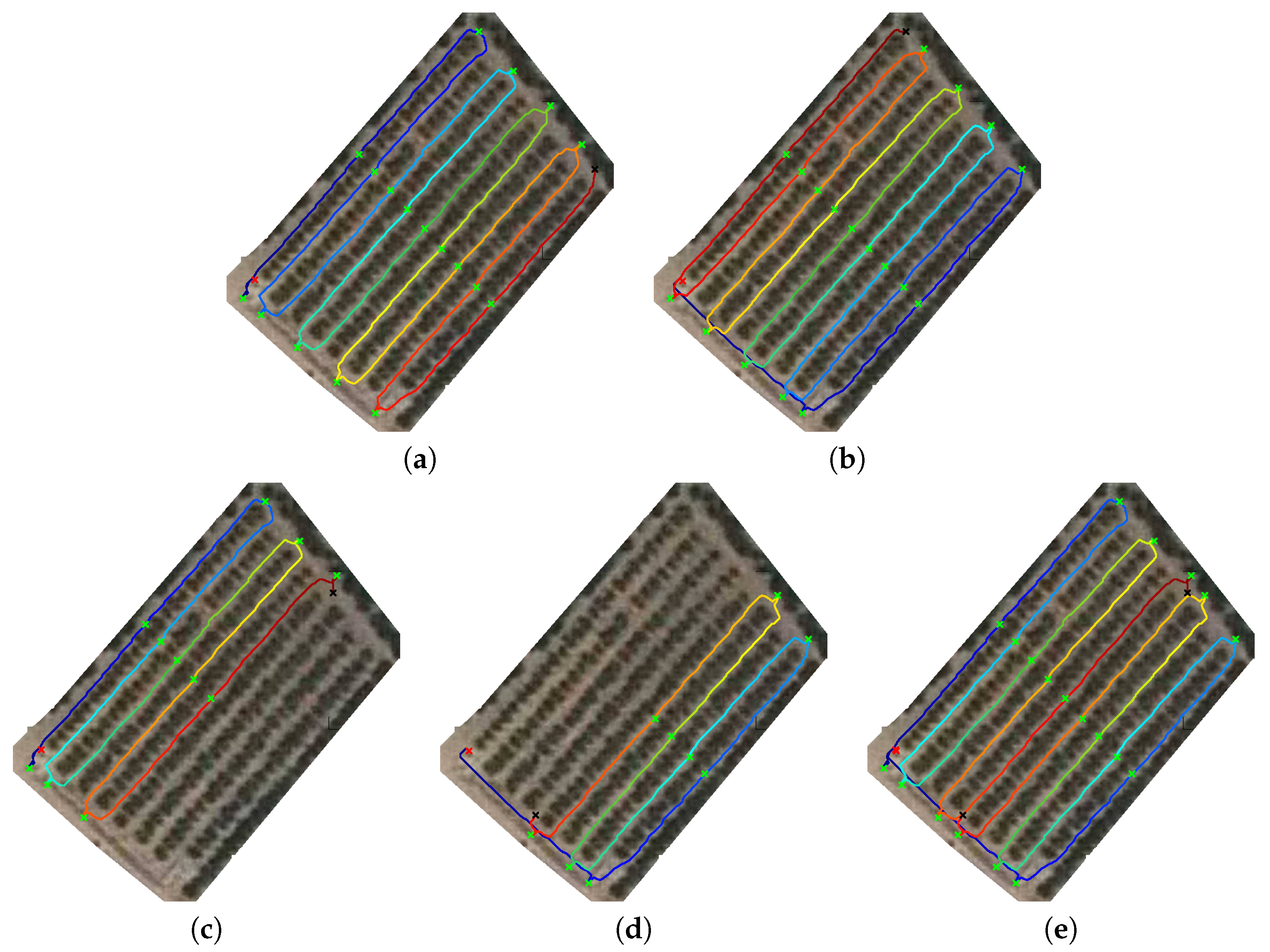

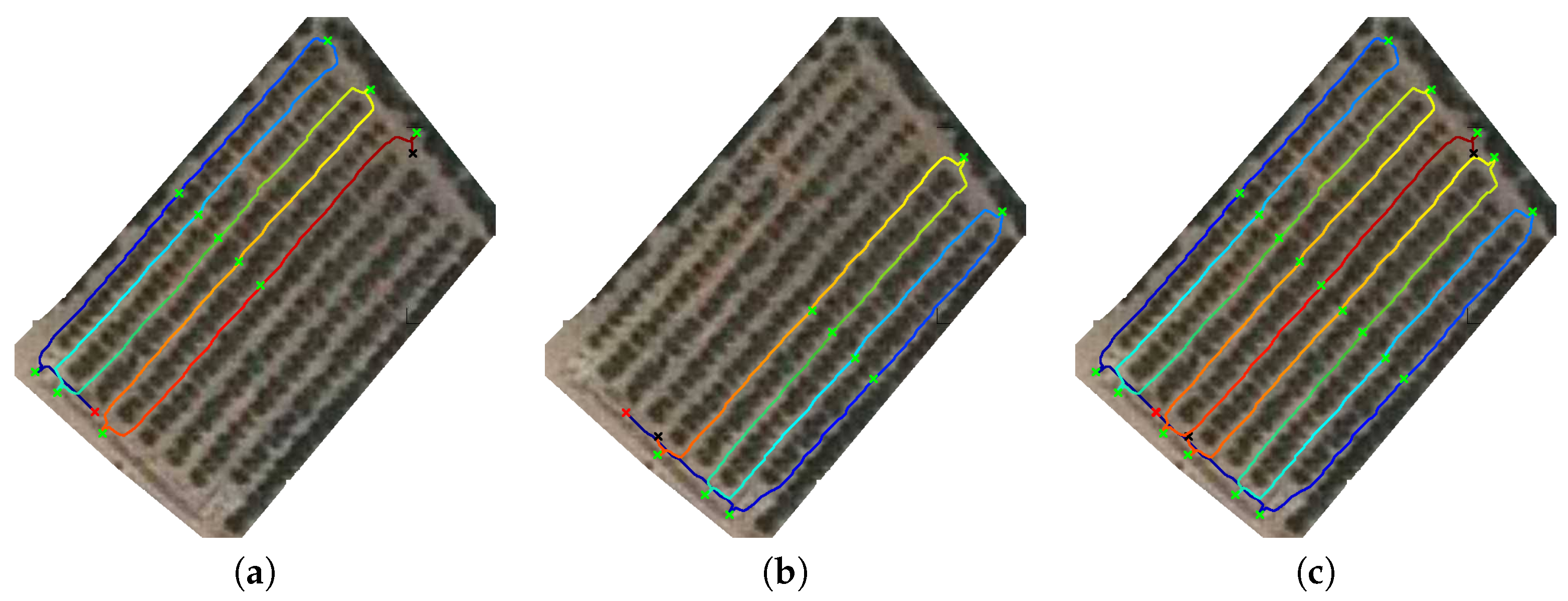

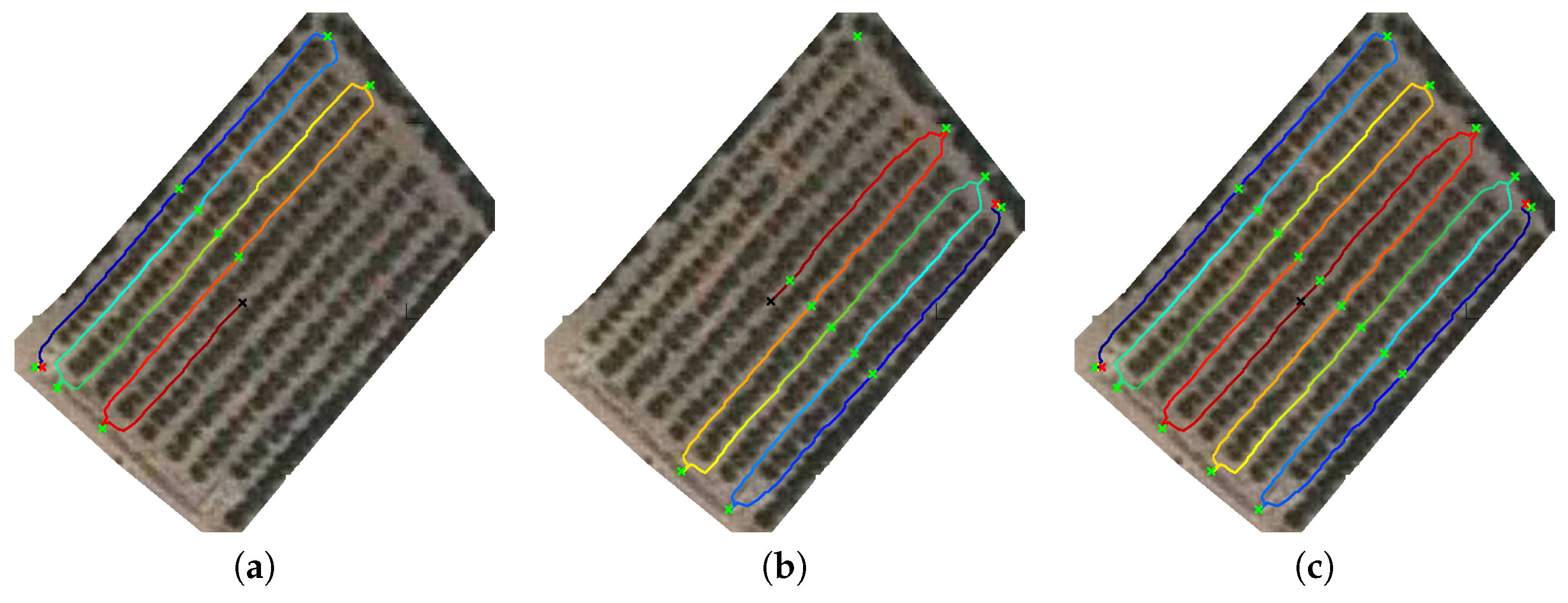

3.2.3. Determine Navigation Route from Reference Points

In this stage, the set with the intermediate points is extracted from the reference points. They correspond to the points where the robot should navigate. The main idea is first to move the robot to the closest corner, and then, the robot navigates through the grove (path row by path row), alternating the direction of navigation (i.e., a zig-zag movement).

Firstly, the closest corner of the parcel is determined by the coordinates provided by the robotic system using the Euclidean distance. The corners are the vertexes of the lines extracted from the first and last rows containing trees previously detected. The idea is to move the robot from one side of the parcel to the opposite side through the row paths. The sequence of intermediate points is generated according to the first point (corner closest to the initial position of the robot) being the opposite corner the destination. Intermediate points are calculated using Algorithm 9.

Finally, this stage is not directly performed by path planning but Algorithm 10) will be used to determine the sequence of individual points. Those points will be the basis for the next stage where the paths for any robotic system that composes the team are determined.

| Algorithm 9 IntermediatePoints{,,} |

Extract corners from : = [,,,] Detect closest corner to : Add to Add to Return:

|

| Algorithm 10 SequencePoints{,,} |

Let be the number of points in if is then for i = 1 to (-1) do Add MiddlePaths(i) to Add to end for Add MiddlePaths() to Add Vertexes() to end if if is then for i = 1 to (-1) do Add MiddlePaths(i) to Add to end for Add MiddlePaths() to Add Vertexes() to end if if is then for i = 1 to (-1) do Add MiddlePaths(j) to Add to end for Add MiddlePaths(1) to Add Vertexes(2) to end if if is then for i = 1 to (-1) do Add MiddlePaths(j) to Add - module(i+1,2)) to end for Add MiddlePaths(1) to Add Vertexes(1) to end if Return:

|

3.2.4. Path Planning Using Processed Satellite Imagery

Once the initial and intermediate points and their sequence are known, the path planning map is determined. This means calculating the full path that the robotic system should follow.

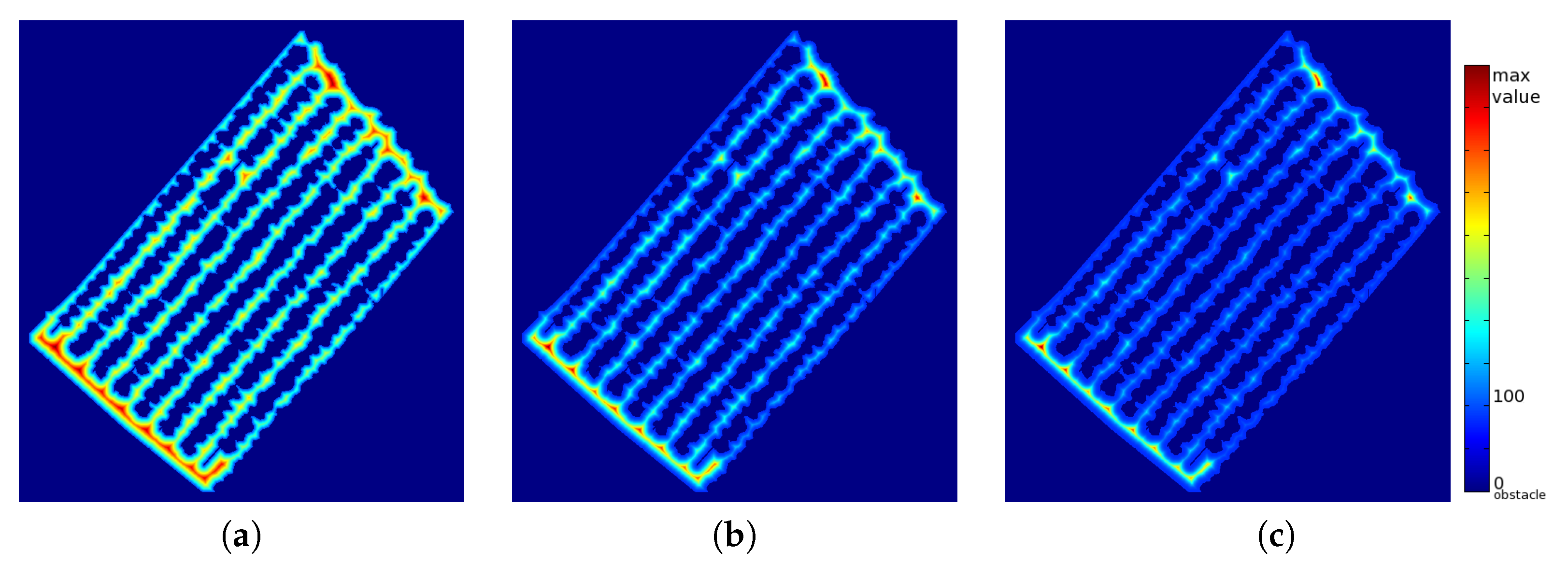

Firstly, the

map is calculated with Equation (

4). This map is related to the distance to the obstacles (where the robot cannot navigate). The novel equation introduces a parameter,

, to penalize those parts of the path close to obstacles and to give more priority to those areas which are far from obstacles. Theoretically, the robotic system will navigate faster in those areas far from obstacles due to the low probability of collision with the obstacles. Therefore, the equation has been chosen to penalize the robotic system for navigating close to obstacles.

where

refers to the pixel-distance of point

in the image to the closest obstacle. The final

value is set to value 1 when the pixel corresponds to an obstacle, whereas it has a value higher than 100 in those pixels which correspond to the class “path”.

The final procedure carried out to calculate the image is detailed in Algorithm 11.

| Algorithm 11 = CalculateSpeed{, } |

Add detected tree lines to the path binary mask Select Non-Path points with at least one neighbor which belong to path: Set initial values: ∀ for x=1: do for y=1: do if PathMask(x,y) belongs to Path then Calculate Euclidean distance to all ObstaclePoints Select the lowest distance value: end if end for end for Return:

|

Then, the Fast Marching algorithm is used to calculate the matrix. It contains the “distance” to a destination for any point of the satellite image. The values are calculated using the values and the target/destination coordinates in the satellite image. The result of this procedure is a matrix of which the normalization can be directly represented as an image. This distance does not correspond to the Euclidean distance; it corresponds to a distance measurement based on the expected time required to reach the destination.

The Fast Marching algorithm, introduced by Sethian (1996), is a numerical algorithm that is able to catch the viscosity solution of the Eikonal equation. According to the literature (see References [

29,

30,

31,

32,

33]), it is a good indicator of obstacle danger. The result is a distance image, and if the speed is constant, it can be seen as the distance function to a set of starting points. For this reason, Fast Marching is applied to all the intermediate points using the previously calculated distances as speed values. The aim is to obtain one distance image for each intermediate point; this image provides information to reach the corresponding intermediate point from any other point of the image.

Fast Marching is used to reach the fastest path to a destination, not the closest one. Although it is a time-consuming procedure, an implementation based on MultiStencils Fast Marching [

34] has been used to reduce computational costs and to use it in real-time environments.

The final matrix obtained is used to reach the destination point from any other position of the image with an iterative procedure. The procedure carried out to navigate from any point to another determined point is given by Algorithm 12. First, the initial position (position where the robotic system is currently placed) is assigned to the path; then, the algorithm seeks iteratively for the neighbour with lowest distance value until the destination is reached ( value equal to 0). This algorithm will provide the path as a sequence of consecutive points (coordinates of the distance image). Since the geometry of the satellite imagery is well known, the list of image coordinates can be directly translated to real-world coordinates. This information will be used by the low-level navigation system to move the system according to the calculated path.

| Algorithm 12 = NavigationPt2Pt{,,} |

whiledo Search the neighbor with lowest Distance Value: Add to end while Return:

|

Finally, the final full path that the robotic system should follow consists of reaching sequentially all the intermediate points previously determined. This strategy is fully described in Algorithm 13, but it is only valid for the case of using only one single robotic system in the grove. For more than one robot present in the grove, an advanced algorithm is needed. Algorithm 14 introduces the strategy for two robots when they are close to each other.

| Algorithm 13 CalculatePath{,,,} |

= for i = 1 to NumberOfIntermediatePoints do ,,} Add to end for Return:

|

| Algorithm 14 CalculatePath{,,,} |

Extract current position for robot 1: Extract current position for robot 2: Extract corners from : = [,,,] Detect closest corner and the distance for the two robots ifthen Add to Add to Detect opposite corner to closest to robot 2: Add to Add to else Add to Add to Detect opposite corner to closest to robot 1: Add to Add to end if = for i = 1 to NumberOfIntermediatePoints do f ,,} Add to end for = for i = 1 to NumberOfIntermediatePoints do ,,} Add to end for Store

|