Whereas there are many methods to create surrogate models, we focus on response surface methods that treat the simulation code as a black box. This is one reason the surrogate modeling techniques we explore are feasible for use with high-fidelity codes. Two of the surrogate models are based on expansions of the physical model evaluations in Legendre polynomials and in radial basis functions. We employ discrete projection, which interpolates the data, to construct one Legendre polynomial surrogate model. For comparison, we construct the radial basis function surrogate models using regression and a second Legendre polynomial surrogate model using sparsity-controlled regression. In addition, we consider a surrogate model based on the multivariate adaptive regression splines (MARS) algorithm. Finally, we demonstrate Gaussian processes and artificial neural networks for surrogate model construction.

For all but neural networks, we can express surrogate models as

where

are coefficients and

are basis functions that define the surrogate model. The trend functions

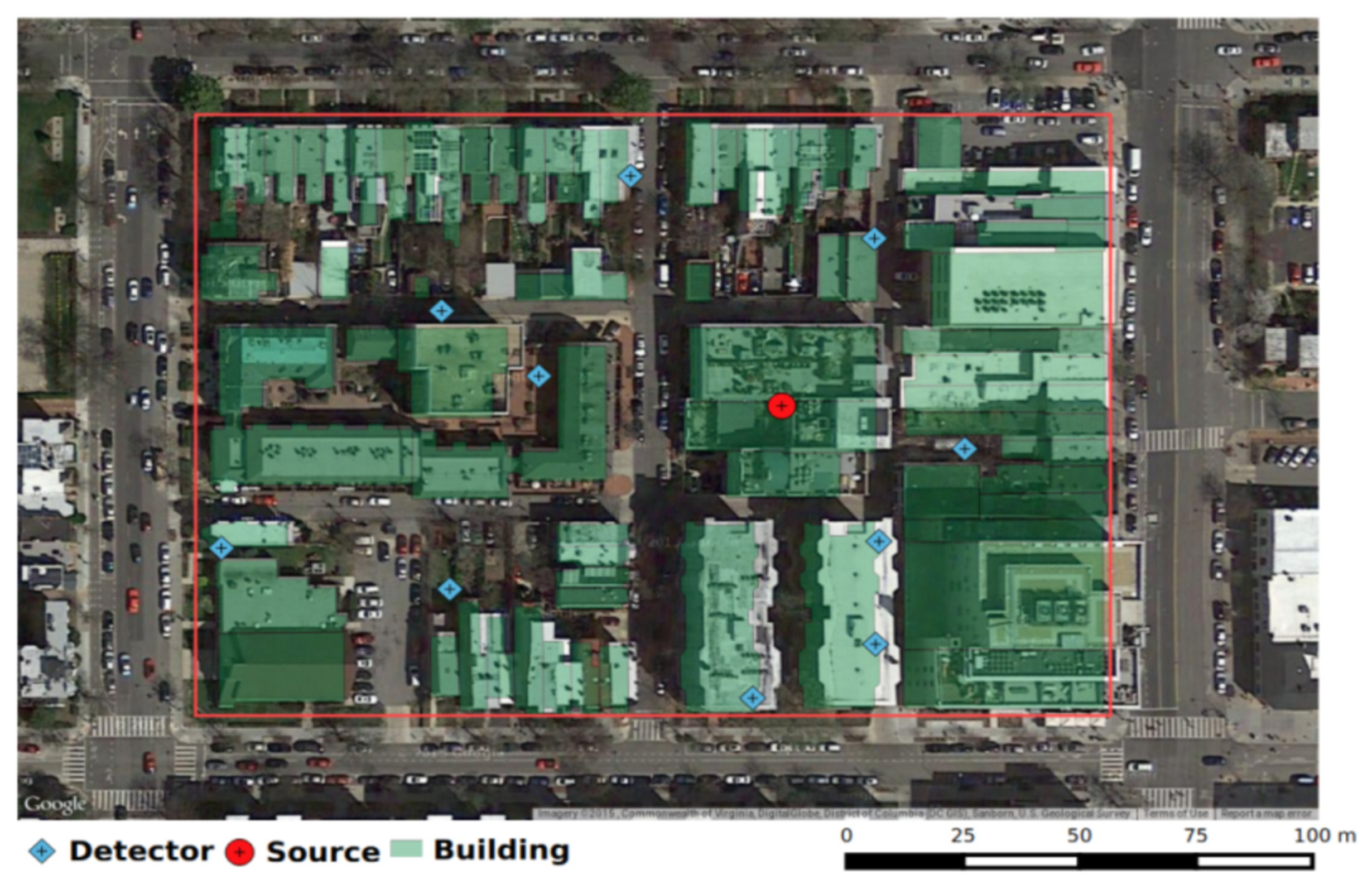

quantify global trends exhibited by the model. To demonstrate the goal of surrogate model construction, we plot a Gaussian process-based surrogate response surface versus the training points for a fixed intensity in Figure 2. We note that the detector response increases as the source is moved closer to the detector location and that the response surface accurately captures the behavior of the training data. Additionally, we note that the surrogate model has smoothed the surface represented by the training data, most notably near the singularity at the detector location caused by the

term in the denominator of Equation (3).

To verify each of the

d models, we compare the surrogate and physical models at

randomly generated test points

. The physical model takes 13.63 min to compute detector responses for the test points. We quantify errors by computing the relative root mean square error (

)

at each detector. Since the detector responses (in counts per second) vary over orders of magnitude, we compute the surrogate model responses based on the natural logarithm of the ray-tracing solution. For each surrogate model, we report

and plot the surrogate and physical model responses at the first 50 test points in Section 5. Although the surrogate and physical model responses at these test points are discrete, we connect the points with continuous lines for clarity. Additionally, we plot the

of each surrogate model for

for the

detectors. We note that performance metrics such as proper scoring rules [18] or the Mahalanobis distance can be employed to assess the uncertainty in the surrogate model, but we leave this as future work.
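To make the error metric concrete, the following minimal sketch computes a relative root mean square error for one detector. The definition used here (root mean square of the pointwise relative errors) is an assumption, since it is one common convention and may differ in normalization from Equation (10). The function name `relative_rmse` and the sample values are hypothetical.

```python
import numpy as np

def relative_rmse(surrogate, physical):
    """Root mean square of pointwise relative errors (assumed definition)."""
    surrogate = np.asarray(surrogate, dtype=float)
    physical = np.asarray(physical, dtype=float)
    rel = (surrogate - physical) / physical
    return float(np.sqrt(np.mean(rel ** 2)))

# Hypothetical log-responses at four test points for a single detector.
log_physical = np.array([2.0, 4.0, 5.0, 8.0])
log_surrogate = np.array([2.2, 3.8, 5.0, 8.4])
rrmse = relative_rmse(log_surrogate, log_physical)
```

Working with log-responses, as in this sketch, keeps detectors whose count rates differ by orders of magnitude on a comparable scale.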

3.1. Legendre Polynomials

For polynomial interpolation and regression, we assume that the physical model responses can be expressed as

which we approximate to obtain the surrogate model

We take

to be multivariate Legendre polynomials—i.e., products of univariate Legendre polynomials—which are defined as the solution to the differential equation

The first three univariate Legendre polynomials are

for

. These polynomials are orthogonal on the interval

with respect to the density

. We note that the roots of the univariate Legendre polynomials provide the Gauss–Legendre training points,

, that we use to construct the surrogate models.
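As a brief illustration of these properties, the sketch below evaluates low-order univariate Legendre polynomials with NumPy, checks that the Gauss–Legendre nodes are roots of the corresponding polynomial, and verifies orthogonality numerically. It assumes the interval [-1, 1] and the uniform density 1/2, which is the standard setting but is stated here as an assumption; the helper `legendre_p` is hypothetical.

```python
import numpy as np
from numpy.polynomial import legendre as L

def legendre_p(n, x):
    """Evaluate the univariate Legendre polynomial P_n at x."""
    coeffs = np.zeros(n + 1)
    coeffs[n] = 1.0
    return L.legval(x, coeffs)

x = np.linspace(-1.0, 1.0, 5)
p2 = legendre_p(2, x)  # should match (3 x^2 - 1) / 2

# Gauss-Legendre nodes for an R-point rule are the roots of P_R.
R = 4
nodes, weights = L.leggauss(R)
residual = np.max(np.abs(legendre_p(R, nodes)))

# Gram matrix of P_0, P_1, P_2 under the assumed density 1/2 on [-1, 1].
gram = np.array([
    [np.sum(0.5 * weights * legendre_p(i, nodes) * legendre_p(j, nodes))
     for j in range(3)]
    for i in range(3)
])
```

Under these assumptions the Gram matrix is diagonal with entries 1/(2n + 1), which are the normalization constants that appear in the projection formulas.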

An important feature of polynomial approximations is that their accuracy is improved for functions with more regularity. We have spectral convergence for Legendre polynomial expansions of functions on

with error bounds

Here,

is a constant that may depend on

k and

, where

is a Sobolev space of

functions with weak derivatives of all orders up to

k in

. We should observe the convergence rate

, since our model is continuous, but has non-continuous derivatives [

19]. We observe a convergence rate of

, where

means the relative root mean squared error defined in Equation (10) of the Legendre-polynomial-based surrogate model employing N basis functions. This is not the convergence rate of

that we expected to observe, since

However, this rate is closer to

for certain values of

N, as can be seen in the convergence results in

of the Legendre polynomials that we compile in Table 1. We also bound N from above to avoid overfitting the noise in the physical model response.

We consider two methods to solve for the coefficients

. The first is based on discrete projection, which exploits the orthogonality of the polynomials

to interpolate the function of interest at

; i.e., the physical model simulations defined in Equation (3). Multiplying both sides of Equation (13) by

and integrating over

, yields

by exploiting the orthogonality relation

Here, the orthogonality relation involves a normalization constant and the density associated with the domain of integration.

We approximate this integral by a quadrature rule, such as Gauss–Legendre quadrature, to obtain the approximate relation

for the coefficients of the surrogate model. Here,

are the sampled inputs,

are the quadrature weights, and

, where

solves Equation (3) and we have dropped the dependence on w. We employ Gaussian quadrature, since with only

training points, polynomials of degree less than or equal to

can be integrated exactly. We set

and

, where

p is the number of parameters (three in our case) and K is the maximum degree of the multivariate Legendre polynomials [17].
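The discrete projection described above can be sketched in one dimension as follows. This is an illustrative example under stated assumptions rather than the implementation used for the results: it assumes the uniform density 1/2 on [-1, 1], uses the exponential function as a stand-in for the physical model, and the function name `discrete_projection` is hypothetical.

```python
import numpy as np
from numpy.polynomial import legendre as L

def discrete_projection(f, K, R):
    """Approximate Legendre coefficients of f up to degree K with an R-point rule."""
    nodes, weights = L.leggauss(R)
    weights = 0.5 * weights                    # assumed density 1/2 on [-1, 1]
    V = L.legvander(nodes, K)                  # V[r, k] = P_k(node_r)
    gamma = 1.0 / (2.0 * np.arange(K + 1) + 1.0)  # normalization constants
    return (V.T @ (weights * f(nodes))) / gamma

# Stand-in "physical model": a smooth function of one parameter.
coeffs = discrete_projection(np.exp, K=6, R=10)
x_test = np.linspace(-1.0, 1.0, 201)
max_err = np.max(np.abs(L.legval(x_test, coeffs) - np.exp(x_test)))

# A quadratic is recovered exactly: 3 x^2 = P_0 + 2 P_2.
quad_coeffs = discrete_projection(lambda x: 3.0 * x**2, K=2, R=3)
```

In several dimensions the same computation applies with tensor-product nodes, weights, and basis functions, at the cost of a number of model evaluations that grows exponentially with the number of parameters.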

We construct the multivariate Legendre polynomials as tensor products of univariate Legendre polynomials. As discussed in Ref. [20], the surrogate model error decreases roughly exponentially with polynomial degree, provided that a sufficiently high-order quadrature rule is used; e.g., large R. However, results with high-degree polynomials (large K) diverge because of large fluctuations in the function

between the quadrature points produced by overfitting; i.e.,

begins fitting observation errors.

To determine the correct degree

K, we compute the sum of squares (SSq) error of the surrogate model evaluated at the test points

and compute the likelihood

for the surrogate model

with maximum polynomial degree

. We plot the results in Figure 3, where the results for the

surrogate models are plotted with the mean value overlaid in a thicker line. We see that the mean sum of squares error has a distinct minimum and the mean likelihood has a maximum at

. Hence, we use this value when computing the surrogate model and employ

total basis functions. The Akaike information criterion (AIC), the Bayesian information criterion (BIC), or cross-validation techniques can also be employed to determine the value of K that balances accuracy against overfitting of the surrogate model. Cross-validation procedures are discussed further in Refs. [21,22]. An alternative to using such a high-degree polynomial for this surrogate model is to employ splines, which we consider in Section 3.2.

We note that these surrogate models, excluding the Gaussian process surrogate model, are all parametric models. Whereas there are multiple definitions of parametric models, we define a parametric model as one where the class of basis functions is defined prior to construction of the model. Since we employ the class of Legendre polynomials to construct this surrogate model, we classify it as a parametric surrogate model. We classify Gaussian processes as semi-parametric under this definition, as is discussed in Section 3.4.

An alternate method to obtain the coefficients

is to perform a least absolute shrinkage and selection operator (LASSO) regression [23]. Borrowing from compressive sensing (CS), we bound the

norm of the coefficients to enforce sparsity. Therefore, we can formulate the problem as the optimization problem

where

is a matrix of the Legendre basis functions,

is the vector of observations obtained from the statistical model defined in Equation (12), and

is the vector of coefficients. To solve this optimization problem, we use the MATLAB SPGL1 solver, which is detailed in Ref. [23].

We tested multiple values of

, but use

to obtain the results discussed in Section 4 and Section 5. We determine this value of

by computing the 1-norm of the coefficients obtained via discrete projection. We find that these coefficients have a 1-norm between 32 and 38, and, when we decrease

below 32, significant error is introduced. When we increase

to greater than 38, the 1-norm of the coefficients is less than

, meaning that the constraint has no effect on the problem. When comparing the coefficients between these two methods, we see that they are similar but not identical, even when

. Further analysis on determining optimal values of

is discussed in Ref. [23].
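To illustrate the constrained formulation, the sketch below minimizes the least-squares misfit subject to a bound on the 1-norm of the coefficients using plain projected gradient descent. This is not the SPGL1 algorithm, which uses a spectral projected gradient method; it is a simplified stand-in under that stated substitution. The random matrix, the sparse coefficient vector, and the function names are hypothetical.

```python
import numpy as np

def project_l1_ball(v, tau):
    """Euclidean projection of v onto {x : ||x||_1 <= tau}, tau > 0 (sort-based)."""
    if np.sum(np.abs(v)) <= tau:
        return v.copy()
    u = np.sort(np.abs(v))[::-1]
    css = np.cumsum(u)
    k = np.arange(1, len(u) + 1)
    rho = np.nonzero(u * k > css - tau)[0][-1]
    theta = (css[rho] - tau) / (rho + 1.0)
    return np.sign(v) * np.maximum(np.abs(v) - theta, 0.0)

def lasso_constrained(A, b, tau, iters=2000):
    """Minimize ||A x - b||_2 subject to ||x||_1 <= tau by projected gradient."""
    step = 1.0 / np.linalg.norm(A, 2) ** 2   # inverse Lipschitz constant of the gradient
    x = np.zeros(A.shape[1])
    for _ in range(iters):
        x = project_l1_ball(x - step * (A.T @ (A @ x - b)), tau)
    return x

# Hypothetical stand-in for the Legendre basis matrix and observations.
rng = np.random.default_rng(0)
A = rng.normal(size=(40, 10))
x_true = np.zeros(10)
x_true[[1, 4, 7]] = [2.0, -1.0, 0.5]
b = A @ x_true
tau = np.sum(np.abs(x_true))                 # bound equal to the true 1-norm
x_hat = lasso_constrained(A, b, tau)
```

When the bound exceeds the 1-norm of the unconstrained least-squares solution, the projection is never active and the iteration reduces to ordinary gradient descent, which is consistent with the observation that a sufficiently large bound has no effect on the problem.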

3.2. Multivariate Adaptive Regression Splines

Multivariate Adaptive Regression Splines (MARS) were first proposed in Ref. [24] as a procedure for performing adaptive nonlinear regression using piecewise linear (spline) basis functions. MARS follows from recursive partitioning regression, which is also outlined in Ref. [24]. The MARS linear basis functions, often termed hinge functions, are introduced in pairs on either side of a “knot” t, where there is an inflection point in a particular parametric direction; e.g.,

are introduced concurrently. Here,

denotes the ith component of q, where

in our model, since

. In this way, the domain is divided so that

is zero for

and

is zero for

.
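A pair of hinge functions on either side of a knot can be sketched as follows. The function name `hinge_pair`, the knot value, and the sample points are illustrative assumptions.

```python
import numpy as np

def hinge_pair(x, t):
    """Return the pair ((x - t)_+, (t - x)_+) for a knot at t."""
    x = np.asarray(x, dtype=float)
    return np.maximum(x - t, 0.0), np.maximum(t - x, 0.0)

x = np.array([0.0, 1.0, 2.0, 3.0])
right, left = hinge_pair(x, t=1.5)
# right is zero to the left of the knot; left is zero to the right of it.
```

Because each member of the pair vanishes on one side of the knot, a fitted coefficient on one hinge only affects the model on its own side.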

We employ an adaptive regression algorithm to generate knot locations using data

from the statistical model defined in Equation (12) for the 10 detectors. We take the knot locations to be

. An additive MARS model would take the basis functions to be

, but we consider products of the linear basis functions, thus we take the basis functions to be

Here,

labels the component of the parameter vector or “predictor variable”

,

is the level of interaction between the linear basis functions, and

is the kth knot employed by the jth basis function. Furthermore, the positive subscript denotes the maximum of zero and the argument, as in Equation (27), and we take

and

. For simplicity, we consider only piecewise linear basis functions

, although this algorithm can also be performed with other piecewise basis functions, such as cubic. Additionally, we consider up to second-order interactions of the linear basis functions

; i.e.,

, and first-order interactions of the linear basis functions that depend on the same variable. We found that employing second-degree interactions (i.e., products of linear basis functions of the same variable) did not significantly improve the performance of the surrogate models.

The surrogate model is

where

and the coefficients

are estimated using least-squares regression. Note that this is the same form as Equation (11), for

and

. The construction of the MARS model takes place in two steps. In the forward step, basis functions are added to the model to reduce a “lack of fit” value, which we take to be the least-squares error of the surrogate model relative to the physical model evaluated at the training points. This is performed until a user-defined maximum number of terms is reached. The maximum number of terms we use in the forward step of the MARS algorithm to construct this surrogate model is

.

The MARS algorithm purposefully overfits the model to the data in the forward step and then, in the second step, performs backward deletion to remove basis functions that no longer contribute sufficiently to the accuracy of the model fit. This is performed via a model selection procedure employing Generalized Cross-Validation (GCV) to compare models with subsets of the basis functions. The GCV equation,

is a goodness of fit test that uses the parameter

to penalize large numbers of basis functions

N [25]. We use a default penalty parameter of

and a threshold value of

, which is used as a stopping criterion for the backward phase. The suggested threshold value is

and should be reduced for noise-free data.
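For illustration, the GCV criterion can be sketched in a commonly used form: the training mean squared error divided by a factor that shrinks as the effective number of parameters approaches the number of observations. The specific expression for the effective number of parameters below is an assumption based on common MARS implementations and may differ in detail from the equation above. The function name `gcv` and the sample values are hypothetical.

```python
import numpy as np

def gcv(y, y_hat, n_basis, penalty):
    """GCV score: mean squared error inflated by a model-complexity penalty."""
    y = np.asarray(y, dtype=float)
    y_hat = np.asarray(y_hat, dtype=float)
    m = y.size
    # Assumed effective number of parameters: basis functions plus a knot penalty.
    effective = n_basis + penalty * (n_basis - 1) / 2.0
    if effective >= m:
        return np.inf
    mse = np.mean((y - y_hat) ** 2)
    return mse / (1.0 - effective / m) ** 2

y = np.arange(1.0, 11.0)          # ten hypothetical observations
y_hat = y + 0.1                   # hypothetical surrogate predictions
score = gcv(y, y_hat, n_basis=3, penalty=2.0)
```

During backward deletion, candidate models with fewer basis functions are compared through this score, so a term is removed when the reduction in the complexity penalty outweighs the increase in the misfit.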

To better understand the MARS model structure, consider the problem of approximating the data depicted in Figure 4. We employ the MARS model

to approximate the data. The knot locations at

and

delimit the regions where different linear relationships are identified. By considering the products of these linear basis functions, we can quantify nonlinear behaviors of the model.
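A small one-dimensional example of this structure is sketched below. The data, knot locations, and coefficients are synthetic and hypothetical, and they are not the data of Figure 4. The knots are fixed by hand here, whereas the MARS algorithm selects them adaptively.

```python
import numpy as np

def hinge(z):
    """Positive-part function (z)_+ applied elementwise."""
    return np.maximum(z, 0.0)

# Synthetic piecewise-linear data with slope changes at x = 2 and x = 5.
x = np.linspace(0.0, 8.0, 81)
y = 2.0 + 2.5 * hinge(x - 2.0) - 0.5 * hinge(2.0 - x) - 3.0 * hinge(x - 5.0)

# Basis matrix: constant term plus hinge functions at the chosen knots.
B = np.column_stack([
    np.ones_like(x),
    hinge(x - 2.0),
    hinge(2.0 - x),
    hinge(x - 5.0),
])

# Least-squares estimate of the coefficients.
coef, *_ = np.linalg.lstsq(B, y, rcond=None)
```

With noise-free data and the correct knots, the least-squares fit recovers the generating coefficients, and each knot marks the boundary between two regions with different slopes.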

To motivate error analysis of the MARS surrogate model, we consider the full MARS model

where

is the coefficient of the constant basis function. The sum is over the basis functions

where we now consider higher-order products of basis functions; i.e.,

. Here,

is the set of predictor variable component labels for the

jth basis function

. Whereas this representation of the model does not provide insight into the model development, it allows us to rearrange the model in a way that reveals the predictive behavior of the model. By collecting basis functions that involve identical predictor variable sets, we obtain the representation

These sums represent the

level interactions, if present, between the variables within the model. By adding the univariate contributions to the bivariate contributions, we obtain the representation

This provides a bivariate tensor product spline approximation representing the joint bivariate contributions of

and

to the model [24]. Similar rearranging can be performed by employing this representation combined with the trivariate functions to obtain the joint contributions of

,

, and

. Since Equation (35) is similar to the analysis of variance decomposition [17], we refer to this as the ANOVA decomposition of the MARS model. The optimal additive approximation corresponds to the first-order terms in the ANOVA expansion; thus, if the higher-order indices are small, then the function can be approximated well by an additive model; i.e.,

. Note that, for our purposes, we consider a model with second-order interactions, thus we truncate Equation (35) at the second-order interactions. Unfortunately, the adaptivity of MARS makes it difficult to bound the error in the manner of spectral approaches that bound the error in terms of the coefficients

. However, cross-validation procedures, such as those detailed in Refs. [21,22], can be used to provide an error estimate for the MARS model.

The MARS algorithm can be cast in a Bayesian framework in which case the number of basis functions

N, their coefficients

, and their form—knot points

, sign indicators

, and the level of interaction

—are considered to be random [26]. Since any MARS model can be uniquely defined by these values, the Bayesian MARS model sets up a probability distribution over the space of possible MARS structures. The data are then used to infer these hyperparameters by employing a reversible jump Markov chain Monte Carlo (MCMC) algorithm [27]. Currently, a form of this algorithm has been implemented in the R package BASS (Bayesian adaptive spline surface), but no such package exists for MATLAB. Hence, a comparison of BASS with these surrogate models is deferred to future research.

The MARS surrogate model takes advantage of the local low dimensionality of the function of interest, even if that function is strongly dependent on a large number of variables; i.e., large p. We employ the third-party ARESLab toolbox for MATLAB [28], which implements an algorithm similar to MARS [24]. Following the backward deletion step performed by this toolbox, the surrogate models discussed in Section 4 and Section 5 for each of the 10 detectors employ between

and

basis functions.

3.3. Radial Basis Functions

Here, we consider expansions

with radial basis functions

defined in terms of the Euclidean distance

We remind the reader that

for our problem. A common choice of

is

Here, the hyperparameter

is a scale factor, which is typically inferred when constructing the surrogate model. Details regarding the manner in which

affects conditioning and stability are provided in Ref. [29]. Gaussian radial basis functions defined in Equation (40) have the advantage of physical interpretation and super-spectral convergence; i.e., errors decrease as

. Additionally, multiquadric, inverse multiquadric, and thin plate splines are described in Table 2.
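For reference, the four families of radial basis functions can be sketched as functions of the distance r. The expressions below are the standard textbook forms with a scale factor `eps`; the exact conventions of Table 2 (for instance, where the scale factor enters) are not reproduced here and may differ, so these forms are stated as assumptions.

```python
import numpy as np

def gaussian(r, eps):
    """Gaussian radial basis function, exp(-(eps r)^2)."""
    return np.exp(-(eps * r) ** 2)

def multiquadric(r, eps):
    """Multiquadric, sqrt(1 + (eps r)^2)."""
    return np.sqrt(1.0 + (eps * r) ** 2)

def inverse_multiquadric(r, eps):
    """Inverse multiquadric, 1 / sqrt(1 + (eps r)^2)."""
    return 1.0 / np.sqrt(1.0 + (eps * r) ** 2)

def thin_plate_spline(r):
    """Thin plate spline, r^2 log(r), with the limiting value 0 at r = 0."""
    r = np.asarray(r, dtype=float)
    out = np.zeros_like(r)
    mask = r > 0
    out[mask] = r[mask] ** 2 * np.log(r[mask])
    return out

r = np.array([0.0, 1.0, np.e])
tps = thin_plate_spline(r)
```

The Gaussian and inverse multiquadric functions decay with distance, whereas the multiquadric and thin plate spline functions grow, which affects both the conditioning of the regression matrix and the locality of each basis function.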

To compute the coefficients

, we formulate Equation (37), with observations given by Equation (39) as the matrix system

where

,

, and

are the errors from Equation (12). For

, the least squares estimate is given by

where

is the pseudo-inverse of A. To compute w, we use the MATLAB backslash command, which employs a QR factorization. Other solution techniques are discussed in Refs. [29,30]. For the purpose of comparing with Legendre surrogate models, we employ the same number of basis functions

by randomly selecting center points

for the basis functions

from the training data, such that

.
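The regression procedure above can be sketched as follows: center points are drawn at random from the training inputs, the matrix of basis function evaluations is assembled, and the coefficients are obtained by least squares. The test function, sample sizes, and scale factor are hypothetical stand-ins, and `numpy.linalg.lstsq` (which is SVD-based) is used in place of the QR-based MATLAB backslash command.

```python
import numpy as np

def imq(r, eps):
    """Inverse multiquadric basis function (standard form, assumed)."""
    return 1.0 / np.sqrt(1.0 + (eps * r) ** 2)

def rbf_matrix(X, centers, eps):
    """Matrix with entries phi(||x_m - c_n||) for inputs x_m and centers c_n."""
    r = np.linalg.norm(X[:, None, :] - centers[None, :, :], axis=2)
    return imq(r, eps)

def model(X):
    """Hypothetical smooth stand-in for the physical model with p = 3 inputs."""
    return np.sin(X[:, 0]) + X[:, 1] * X[:, 2]

rng = np.random.default_rng(0)
X_train = rng.uniform(-1.0, 1.0, size=(200, 3))
y_train = model(X_train)

# Randomly select N center points from the training inputs.
N = 40
centers = X_train[rng.choice(len(X_train), size=N, replace=False)]

A = rbf_matrix(X_train, centers, eps=1.0)
w, *_ = np.linalg.lstsq(A, y_train, rcond=None)

X_test = rng.uniform(-1.0, 1.0, size=(50, 3))
rmse = np.sqrt(np.mean((rbf_matrix(X_test, centers, 1.0) @ w - model(X_test)) ** 2))
```

Because the centers are resampled for each construction, repeated fits with a fixed scale factor give slightly different surrogates, which is one reason tuning the scale factor is harder with random centers than with a fixed set.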

In Table 2, we observe that the Gaussian radial basis function surrogate model does not perform as well as the inverse multiquadric and thin-plate spline radial basis function surrogate models. This decrease in performance is likely due to the smoothness of the Gaussian radial basis functions as compared with the other basis functions. The inverse multiquadric function provides the best accuracy at low computational cost in comparison with the other basis functions. Therefore, we employ inverse multiquadric radial basis functions to develop the surrogate models that we compare with the other surrogate modeling methods in Section 4 and Section 5. We note that radial basis functions are often employed as the activation functions of neural network nodes, which is discussed further in Section 3.5.

Whereas the shape parameter

can be treated as a hyperparameter to be optimized for each problem, we set

. We chose this by testing multiple values of

and choosing the value that provided the smallest

, defined in Equation (10). Because we randomly sample the basis function center points, optimizing the value of

is difficult, whereas, if we used all or a constant set of the training points as center points for the basis functions, we could optimize this hyperparameter. The value of

can affect the conditioning of the problem and, if too large, cause the Runge phenomenon to introduce large errors, as discussed in Ref. [29]. However, decreasing the value of

improves conditioning but decreases accuracy, so these two effects must be balanced when setting this hyperparameter.

3.4. Gaussian Process Regression

In Gaussian process- or kriging-based surrogate models, one treats the high-fidelity simulations as realizations of a Gaussian process

The mean and covariance functions are constructed to reflect the trends and correlation structure of the physical model u.

We employ a constant mean

, where

is a hyperparameter that we infer and

is an

vector of ones, since we employ observations for

M parameter values

to compute the surrogate model. Whereas universal kriging employs a polynomial trend function

, we limit our analysis to ordinary kriging by employing a constant mean

. Additionally, we employ covariance functions of the form

where

denotes the model variance and

is a parametric correlation function or kernel. By the definition of parametric given in Section 3.1, Gaussian processes are semi-parametric. This is because the class of basis functions employed is not fully determined but follows from the predefined parametric correlation function. A fully nonparametric Gaussian process model is discussed in Ref. [31]. A common choice for

is the squared exponential function

which can be expressed as

with

defined in Equation (39) if we consider

. Comparison with Equation (40) illustrates that this is comparable to employing radial basis functions as a correlation function. We note that this kernel is isotropic in the sense that the length scale ℓ is the same for each of the

scaled components of the parameter

.

For inputs

, the associated covariance and correlation matrices have entries

and

. For the statistical model defined in Equation (12), it follows that

where

. Note that

serves as a nugget, which results in an emulator that does not interpolate the data and attaches a non-zero uncertainty bound around the data.
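The effect of the nugget can be seen in a minimal sketch of Gaussian process prediction with a constant mean. The squared exponential form exp(-r^2 / (2 l^2)), the fixed hyperparameter values, and the function names are assumptions for illustration, since in practice the hyperparameters are inferred from the data.

```python
import numpy as np

def sq_exp_corr(X1, X2, length):
    """Squared exponential correlation with a single (isotropic) length scale."""
    d2 = np.sum((X1[:, None, :] - X2[None, :, :]) ** 2, axis=2)
    return np.exp(-d2 / (2.0 * length ** 2))

def gp_predict(X, y, X_new, beta, sigma2, length, nugget):
    """Predictive mean and latent variance for a constant-mean Gaussian process."""
    K = sigma2 * sq_exp_corr(X, X, length) + nugget * np.eye(len(X))
    k_star = sigma2 * sq_exp_corr(X_new, X, length)
    mean = beta + k_star @ np.linalg.solve(K, y - beta)
    v = np.linalg.solve(K, k_star.T)
    var = sigma2 - np.sum(k_star * v.T, axis=1)
    return mean, var

X = np.array([[0.0], [1.0], [2.0]])
y = np.array([0.0, 1.0, 0.0])

# Near-zero nugget: the emulator interpolates the training data.
mean_interp, _ = gp_predict(X, y, X, beta=0.0, sigma2=1.0, length=1.0, nugget=1e-10)

# Non-zero nugget: the emulator smooths the data and retains uncertainty there.
mean_smooth, var_smooth = gp_predict(X, y, X, beta=0.0, sigma2=1.0, length=1.0, nugget=0.1)
```

With the nugget included, the predictive mean no longer passes through the observations and the variance at the training inputs stays positive, which matches the behavior described above.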

Whereas use of the correlation function from Equation (45) facilitates comparison with radial basis function surrogate models, it is overly smooth for urban source applications with discontinuous derivatives. This motivates consideration of the less smooth Matern correlation functions [32,33]. As summarized in Table 3, we consider three isotropic correlation functions

and Equation (45), where

denotes the Euclidean distance between samples

and

and the subscripts denote half integer choices with explicit representations. We also consider three anisotropic kernel functions where the characteristic length scale

is allowed to vary for each component of

. As detailed in Ref. [33], this yields the anisotropic Automatic Relevance Determination (ARD) correlation functions

where

Note that the anisotropic distance reduces to the isotropic distance when all of the length scales are equal.
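The half-integer Matern correlation functions and the ARD scaled distance can be sketched as follows. These are the standard closed forms for the Matern parameters 3/2 and 5/2, written as functions of a scaled distance; the notation of Table 3 may differ, and the function names and sample inputs are hypothetical.

```python
import numpy as np

def ard_distance(X1, X2, lengths):
    """Scaled distance with one length scale per input component."""
    diff = (X1[:, None, :] - X2[None, :, :]) / np.asarray(lengths, dtype=float)
    return np.sqrt(np.sum(diff ** 2, axis=2))

def matern32(r):
    """Matern 3/2 correlation as a function of the scaled distance r."""
    return (1.0 + np.sqrt(3.0) * r) * np.exp(-np.sqrt(3.0) * r)

def matern52(r):
    """Matern 5/2 correlation as a function of the scaled distance r."""
    return (1.0 + np.sqrt(5.0) * r + 5.0 * r ** 2 / 3.0) * np.exp(-np.sqrt(5.0) * r)

rng = np.random.default_rng(1)
X = rng.uniform(size=(6, 3))

# Anisotropic correlation matrix: a different length scale in each direction.
R_ard = matern32(ard_distance(X, X, lengths=[0.5, 1.0, 4.0]))

# With equal length scales the ARD distance is the Euclidean distance over l.
r_equal = ard_distance(X, X, lengths=[2.0, 2.0, 2.0])
r_euclid = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2) / 2.0
```

A large length scale in one direction makes the correlation nearly insensitive to that component, which is how ARD kernels adapt to parameters with very different ranges of influence.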

Since the ARD Matern kernel with Matern parameter value 3/2 outperforms the other kernel functions, we consider this kernel for the rest of our analysis in Section 4 and Section 5. The ARD Matern 3/2 kernel performs better than the ARD Matern 5/2 kernel because it is less smooth (the Matern 3/2 kernel has one continuous derivative, whereas the Matern 5/2 kernel has two) and hence can more accurately capture the non-smooth behavior of the physical model. Anisotropic kernels are important for this application of surrogate models, since the domain differs greatly in each parametric direction [33]. We additionally note that metrics such as those discussed in Ref. [34] can be employed to test the performance of these and other surrogate models. These metrics are able to account for the correlation between the validation data, but we leave their evaluation to future work.

We optimize the hyperparameters using a quasi-Newton algorithm that employs a dense, symmetric rank-1-based approximation to the Hessian. We set the initial values of the length scales and the model standard deviation, respectively, as the standard deviation of the predictors and the standard deviation of the responses divided by the square root of two. We employ the MATLAB function fitrgp, which is both robust and relatively easy to use.

3.5. Neural Networks

The final surrogate model is based on neural networks, which were originally developed to solve problems in a way that emulates the brain. They are typically organized in layers of their core structures, called neurons or nodes, each of which has an associated activation function. Sets of coefficients act on the connections between nodes; these are tuned by a learning algorithm, which makes the networks capable of accurately approximating nonlinear functions [35]. For our model, the input nodes of the neural network are the parameter components

associated with the location and intensity of a nuclear source. The output node is the neural network surrogate model approximation to the ray-tracing solution defined in Equation (3) for a detector's response

. Here, we develop a feed-forward artificial neural network, meaning that the information is only passed forward through the hidden layer. This is unlike a feedback network, which allows for information transfer in both directions and consequently loops within the network. We note that while we employ supervised machine learning methods, unsupervised learning methods might potentially be employed for future work.

The construction of the neural network surrogate model is divided into two steps: choosing a network structure and training the network. To define the network architecture, we must define the number of hidden layers, the number of neurons in each layer, the activation functions associated with each neuron, and the performance function used to evaluate the accuracy of the network during training. There have been many advances made in the development of deep learning algorithms [36], but for simplicity we consider a single hidden layer for this model. We set the hidden layer of the fitting network to a size of 35, which we obtained by testing a range of hidden layer sizes for this problem. We note that the use of a large number of hidden layers or hidden layer neurons can lead to overfitting and increased computational time. Hence, these measures are normally employed only for highly complex problems.

Each neuron performs a linear transformation,

for the

p components

, where

are the neural network coefficients. Therefore, there are

coefficients to train for this model, where

is the number of neurons in the model and one is the dimension of the output. This transformation is followed by a nonlinear operation defined by the symmetric sigmoid activation function

We employ the MATLAB neural network toolbox to evaluate the surrogate model. We apply the mean squared error performance function, since this performance function provides a good balance between accuracy and computation time during surrogate model construction when compared with other performance functions provided by the MATLAB neural network toolbox.
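The forward evaluation of such a single-hidden-layer network can be sketched as follows. The sketch assumes a bias term in each neuron and in the output node, a linear output layer, and the symmetric sigmoid 2 / (1 + exp(-2n)) - 1, which is mathematically equal to the hyperbolic tangent. The random coefficients are placeholders for trained values and all names are hypothetical.

```python
import numpy as np

def tansig(n):
    """Symmetric sigmoid activation, equal to tanh(n)."""
    return 2.0 / (1.0 + np.exp(-2.0 * n)) - 1.0

def forward(q, W1, b1, W2, b2):
    """Feed-forward pass: linear map, symmetric sigmoid, linear output."""
    hidden = tansig(q @ W1.T + b1)
    return hidden @ W2.T + b2

p, n_neurons = 3, 35              # three inputs, 35 hidden neurons

rng = np.random.default_rng(0)
W1 = rng.normal(size=(n_neurons, p))   # hidden-layer weights (placeholders)
b1 = rng.normal(size=n_neurons)        # hidden-layer biases
W2 = rng.normal(size=(1, n_neurons))   # output-layer weights
b2 = rng.normal(size=1)                # output bias

# Under the bias assumptions above: (p + 1) * n_neurons + (n_neurons + 1).
n_coeffs = W1.size + b1.size + W2.size + b2.size

q = rng.uniform(size=(5, p))           # five hypothetical parameter vectors
out = forward(q, W1, b1, W2, b2)
```

Training then amounts to adjusting these coefficients so that the network output matches the training responses in the least-squares sense.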

To train the network, we employ a nonlinear least-squares regression to compute the coefficients using the training data

. We employ the Levenberg–Marquardt back-propagation training function, since this outperforms other built-in training functions in terms of error. The only exception is Bayesian regularization back-propagation, which requires approximately four times the computational time for an improvement of only 0.01 in the surrogate model

. This is due in part to the fact that the Levenberg–Marquardt algorithm does not require the computation of the Hessian matrix, unlike many of the other MATLAB built-in training functions. We set the training parameters associated with this training function to their default values and compare the performance of this surrogate model with the other models in Section 4 and Section 5.